Some TCP Knowledge You May Not Know

TCP_OPEN Recall

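- Slow channel creation: the three-way handshake (SYN, SYN-ACK, ACK) costs one full round trip between client and server before the client can send any data.

- The handshake exists for reliability and security control: both sides synchronize sequence numbers, and the server confirms that the client's address is reachable before it commits to sending data.

- Applications that care less about transport-level security, or that enforce security at the application level, can pay a high price for this design. Examples: instant messaging, web servers.

- Slow setup of each TCP channel can reduce the availability of systems that open many connections, such as a high-concurrency web server behind a firewall or load balancer, or an instant messaging service.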
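To put a number on the setup cost, here is a rough sketch of the latency until the first response byte arrives on a fresh connection. It assumes an idealized exchange (one round trip for the handshake, one for request and response, no processing time or packet loss); the function name and parameters are made up for illustration.

```python
def time_to_first_response_ms(rtt_ms, handshake_round_trips=1):
    """Idealized latency until the first response byte on a new connection.

    Assumes the handshake costs `handshake_round_trips` round trips and the
    request/response exchange costs exactly one more. Server processing
    time and packet loss are ignored.
    """
    request_response_round_trips = 1
    return (handshake_round_trips + request_response_round_trips) * rtt_ms


if __name__ == "__main__":
    for rtt in (1, 50, 200):
        print(f"RTT {rtt} ms -> first response after "
              f"{time_to_first_response_ms(rtt)} ms")
```

On a 200 ms link, half of the 400 ms wait is pure connection setup, which is why short-lived connections suffer the most.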

TCP_FAST_OPEN

- Wikipedia: https://en.wikipedia.org/wiki/TCP_Fast_Open

- With TCP_FAST_OPEN (TFO), a client that already holds a valid TFO cookie from the server can carry data in its SYN. The server validates the cookie, hands the data to the application, and can start sending its response without waiting for the client's final ACK of the handshake.

- Warning: the TFO cookie may raise security issues quite similar to those of HTTP cookies.

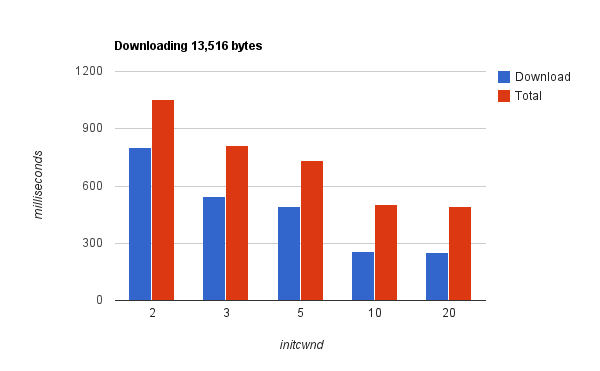

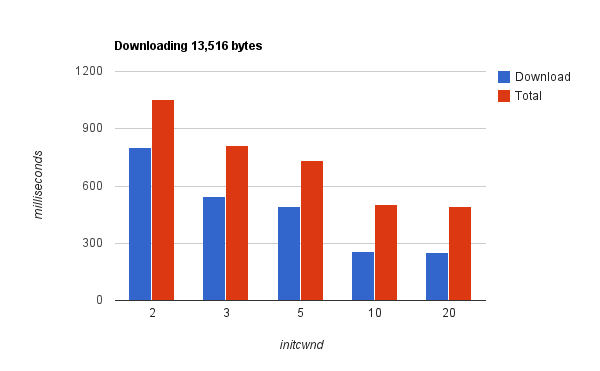
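A minimal sketch of turning on TFO for a listening socket from Python. It assumes a platform where the `socket` module exposes `TCP_FASTOPEN` (Linux does); on Linux the kernel must also allow server-side TFO through the `net.ipv4.tcp_fastopen` sysctl. The helper name, the queue length of 16, and the backlog of 128 are illustrative choices, not recommendations.

```python
import socket


def enable_tfo_listener(host="127.0.0.1", port=0, queue_len=16):
    """Create a listening TCP socket and try to enable TCP Fast Open.

    Returns (sock, tfo_enabled). tfo_enabled is False when the platform
    does not expose TCP_FASTOPEN or rejects the option.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.bind((host, port))

    tfo_enabled = False
    tfo_opt = getattr(socket, "TCP_FASTOPEN", None)  # missing on some platforms
    if tfo_opt is not None:
        try:
            # On Linux the value is the maximum number of pending TFO requests.
            sock.setsockopt(socket.IPPROTO_TCP, tfo_opt, queue_len)
            tfo_enabled = True
        except OSError:
            # Option rejected: fall back to a regular three-way handshake.
            pass

    sock.listen(128)
    return sock, tfo_enabled


if __name__ == "__main__":
    listener, enabled = enable_tfo_listener()
    print("listening on", listener.getsockname(), "TFO enabled:", enabled)
    listener.close()
```

Clients that do not use TFO still connect normally, so enabling the option on the server side is backward compatible.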

TCP Congestion window

- The congestion window is hard to explain clearly in a sentence or two, so here is a link for reference: http://www.cdnplanet.com/blog/tune-tcp-initcwnd-for-optimum-performance/

- The basic idea: if you are confident about the congestion state of your network, you can raise the initial congestion window (initcwnd) to 10 segments instead of the older, smaller defaults (historically 1, later 2 to 4 segments). TCP can then deliver more data in the first round trips, which can improve performance significantly, especially for short transfers.

- Warning: test it in your own network first, then tune it.
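The effect of a larger initial window can be estimated with a small model. The sketch below assumes idealized slow start: the window doubles every round trip, nothing is lost, and the receiver window never limits the sender. The function name and the MSS of 1460 bytes are assumptions for illustration.

```python
import math


def round_trips_to_send(total_bytes, initcwnd, mss=1460):
    """Round trips needed to deliver total_bytes under idealized slow start.

    The sender transmits `cwnd` segments per round trip and doubles cwnd
    after each one. Loss, delayed ACKs, and the receive window are ignored.
    """
    segments = math.ceil(total_bytes / mss)
    cwnd = initcwnd
    sent = 0
    rtts = 0
    while sent < segments:
        sent += cwnd   # one window's worth of segments per round trip
        cwnd *= 2      # slow start: window doubles each round trip
        rtts += 1
    return rtts


if __name__ == "__main__":
    for size in (14600, 65536):
        for iw in (1, 3, 10):
            print(f"{size} bytes, initcwnd={iw}: "
                  f"{round_trips_to_send(size, iw)} round trips")
```

In this model a 64 KB response needs 6 round trips with initcwnd 1, 4 with initcwnd 3, and 3 with initcwnd 10. Real networks will differ, which is why testing first matters.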

Lower is better (x-axis: time, y-axis: window size)

TCP_NODELAY

- TCP was originally designed for slow networks, where using the available bandwidth as efficiently as possible was a key design goal. Nagle's algorithm was created for that purpose: it accumulates small writes and sends them together in fewer, larger segments.

- That design can add significant latency to small-packet transmission over TCP.

- Network bandwidth is far more plentiful now, so if your application sends many small, latency-sensitive packets (much smaller than the MSS), you can set TCP_NODELAY to turn off Nagle's algorithm. If you would rather batch small writes explicitly, use TCP_CORK (Linux) instead.

- Wikipedia: Nagle's algorithm
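For reference, a minimal sketch of disabling Nagle's algorithm on a socket from Python. Setting `TCP_NODELAY` to 1 turns Nagle off; the helper name is made up for illustration.

```python
import socket


def make_low_latency_socket():
    """Create a TCP socket with Nagle's algorithm disabled."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # TCP_NODELAY = 1 sends small writes immediately instead of
    # waiting to coalesce them with later data.
    sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
    return sock


if __name__ == "__main__":
    s = make_low_latency_socket()
    print("TCP_NODELAY:", s.getsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY))
    s.close()
```

With Nagle off, every small write can become its own packet, so it usually pays to assemble a full message in a buffer and send it with a single call.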

Ugly TIME_WAIT

- Many web servers handle huge numbers of connections, and each connection (especially for HTTP) ends in the TIME_WAIT state on the side that closes first, which is often the server. TIME_WAIT lasts 2 times the MSL (Maximum Segment Lifetime).

- TCP uses this wait to ensure that delayed packets from one connection are not accepted by a later connection between the same hosts.

- But sockets lingering in TIME_WAIT hold resources and keep their address and port pair from being reused, which can block new requests until TIME_WAIT expires, especially on servers with many concurrent requests.
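A back-of-the-envelope estimate shows how quickly TIME_WAIT can become the bottleneck between one client address (for example a proxy or load balancer) and one server address and port. The sketch assumes Linux's default ephemeral port range (32768 to 60999) and a 60 second TIME_WAIT, and it assumes that a port pair cannot be reused at all until TIME_WAIT ends. The function name is made up for illustration.

```python
def max_new_connections_per_second(port_low=32768, port_high=60999,
                                   time_wait_seconds=60):
    """Upper bound on the sustained rate of new connections between one
    source IP and one destination IP:port when every closed connection
    occupies its source port for the whole TIME_WAIT period.

    Defaults are assumptions modeled on common Linux settings.
    """
    usable_ports = port_high - port_low + 1
    return usable_ports / time_wait_seconds


if __name__ == "__main__":
    rate = max_new_connections_per_second()
    print(f"about {rate:.0f} new connections per second")
    faster = max_new_connections_per_second(time_wait_seconds=30)
    print(f"about {faster:.0f} per second with a 30 second TIME_WAIT")
```

Under these assumptions the ceiling is a few hundred new connections per second per address pair, which a busy proxy can easily exceed.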

Attacking Ugly TCP TIME_WAIT

- Set the MSL to a smaller value.

- http://tools.ietf.org/html/draft-faber-time-wait-avoidance-00

- On the server side, remember to set the SO_REUSEADDR socket option.
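In the sockets API the option is spelled `SO_REUSEADDR`. A minimal sketch of a listener that can rebind its port while old connections are still in TIME_WAIT; the helper name and the backlog of 128 are illustrative.

```python
import socket


def make_restartable_listener(host="127.0.0.1", port=0):
    """Create a listening socket that sets SO_REUSEADDR before bind(),
    so a restarted server can rebind a port that still has connections
    in TIME_WAIT."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # Must be set before bind() to have any effect on the bind.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind((host, port))
    sock.listen(128)
    return sock


if __name__ == "__main__":
    listener = make_restartable_listener()
    print("listening on", listener.getsockname())
    listener.close()
```

This helps a server restart cleanly. It does not shorten TIME_WAIT itself or free the individual connections that are waiting.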

Resources

- http://googlecode.blogspot.com/2012/01/lets-make-tcp-faster.html

- http://www.techrepublic.com/article/tcpip-options-for-high-performance-data-transmission/1050878

- http://www.cdnplanet.com/blog/tune-tcp-initcwnd-for-optimum-performance/

- https://developers.google.com/speed/protocols/tcpm-IW10
