My First Go Project

(seven years later)

Thomas Bradford

Who are you, and why are you famous?

- From the USA (sorry)

- I used to write database internals, as a job

- Now I design languages and compilers, as a hobby

- About seven years ago, I decided to learn Go

- And they still haven't fixed error handling

I'm Thomas Bradford

So Why Go?

(we all have our reasons)

What are mine?

- I'm old, and therefore old-school

- Syntax confuses me

- I like code that's fast, but doesn't cost much

- Not the biggest fan of threads

- I suffer from rhabdophobia

Rhabdophobia is a fear of

Hello, Go!

(that first project)

It didn't look like this

package main

import "fmt"

func main() {
	fmt.Println("Hello, World!")
}

Or even like this

package main

import "fmt"

func main() {
	h := make(chan string)
	go func() {
		h <- "World"
	}()
	fmt.Printf("Hello, %s!\n", <-h)
}

It started out like this *

// lexer holds the state of the scanner.
type lexer struct {
	name  string    // used only for error reports.
	input string    // the string being scanned.
	start int       // start position of this item.
	pos   int       // current position in the input.
	width int       // width of last rune read.
	items chan item // channel of scanned items.
}

// item represents a token returned from the scanner.
type item struct {
	typ itemType // Type, such as itemNumber.
	val string   // Value, such as "23.2".
}

// itemType identifies the type of lex items.
type itemType int

const (
	itemError itemType = iota // error occurred; value is text of error
	itemDot                   // the cursor, spelled "."

...and ended up like this:

$ cloc .

-------------------------------------------------------------------------------
Language                  files           blank         comment            code
-------------------------------------------------------------------------------
Go                          179            1911             610            9868
Markdown                     87             282               0            1342
Scheme                       14             139              17             844
XML                          14               0               0             667
JSON                          1               0               0              24
YAML                          3               4               0              18
make                          1               6               0              17
-------------------------------------------------------------------------------
SUM:                        299            2342             627           12780
-------------------------------------------------------------------------------

Whaaaaa?!?

OMG, did you build a Scheme compiler?

Yes I did! And you should, too!

Scheme

(in two minutes)

Scheme is a Lisp dialect

(oh, crap!)

Lisp is a symbolic programming language

(double crap!)

Symbolic programming languages are capable of manipulating code as effortlessly as data

(in Lisp, code is data)

Lisp was invented in 1958 by John McCarthy at MIT

(so it's prehistoric)

Scheme was invented in 1975 by Guy Steele and Gerald Sussman at MIT

(so within my lifetime)

And in case you didn't know what Lisp or Scheme look like...

They look a bit like this

(define nth!
  (let-rec [scan
            (lambda (coll pos missing)
              (if (seq coll)
                  (if (> pos 0)
                      (scan (rest coll) (dec pos) missing)
                      (first coll))
                  (missing)))]
    (lambda-rec nth!
      [(coll pos)
       (if (indexed? coll)
           (nth coll pos)
           (scan coll pos (lambda () (raise "index out of bounds"))))]
      [(coll pos default)
       (if (indexed? coll)
           (nth coll pos default)
           (scan coll pos (lambda () default)))])))

If that seems like too many parens, just get yourself a 1970s Lisp Keyboard

Problem solved!

So Why Lisp?

(we all have our reasons)

What are mine?

- I'm old, and therefore old-school

- Syntax confuses me

- I suffer from rhabdophobia

- I really missed the FP bus


Lisp is the originator of many things 'functional'

Perfect candidate for an incremental approach to developing a compiler

The Problem

(from lexer to tail-calls)

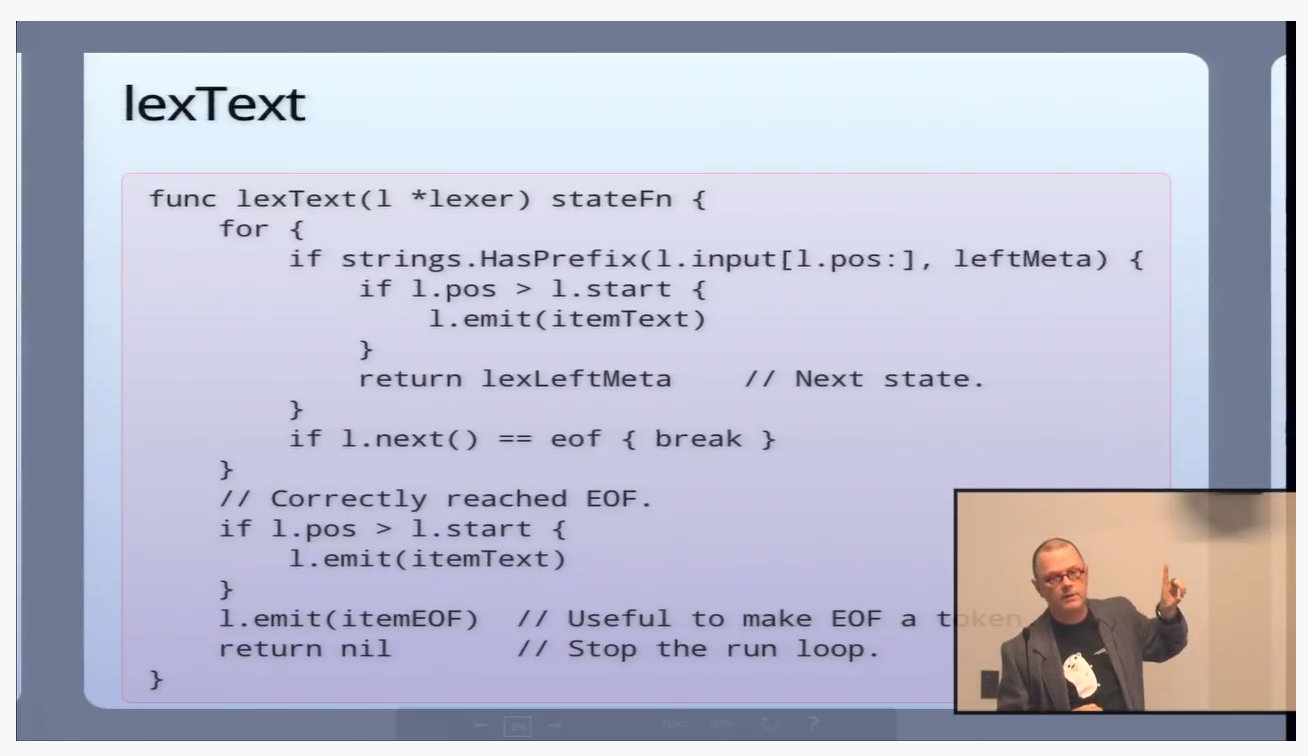

It all began with Rob Pike and his channel lexer

And now it resembles that lexer in absolutely no way
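For anyone who hasn't seen the channel lexer idea, here is a minimal sketch of it: a goroutine walks a chain of state functions and sends tokens down a channel. The names (token, stateFn, lexAny, lexAtom, lex) are made up for illustration, it only handles ASCII parens and atoms, and it is neither Pike's code nor what the project ended up with.

```go
package main

import (
	"fmt"
	"strings"
)

// token is a hypothetical, simplified stand-in for a scanned item.
type token struct {
	kind string // "(", ")", or "atom"
	text string
}

// lexer holds the input, the scan position, and the output channel.
type lexer struct {
	input  string
	pos    int
	tokens chan token
}

// stateFn is one state of the scanner; it returns the next state,
// or nil when the input is exhausted.
type stateFn func(*lexer) stateFn

// lexAny skips whitespace and dispatches on the next byte (ASCII only).
func lexAny(l *lexer) stateFn {
	for l.pos < len(l.input) && strings.ContainsRune(" \t\n", rune(l.input[l.pos])) {
		l.pos++
	}
	if l.pos >= len(l.input) {
		return nil
	}
	switch c := l.input[l.pos]; c {
	case '(', ')':
		l.pos++
		l.tokens <- token{kind: string(c), text: string(c)}
		return lexAny
	default:
		return lexAtom
	}
}

// lexAtom consumes bytes up to the next whitespace or paren.
func lexAtom(l *lexer) stateFn {
	start := l.pos
	for l.pos < len(l.input) && !strings.ContainsRune(" \t\n()", rune(l.input[l.pos])) {
		l.pos++
	}
	l.tokens <- token{kind: "atom", text: l.input[start:l.pos]}
	return lexAny
}

// lex runs the state machine in its own goroutine and returns the
// channel that tokens arrive on. The channel is closed at end of input.
func lex(input string) <-chan token {
	l := &lexer{input: input, tokens: make(chan token)}
	go func() {
		defer close(l.tokens)
		for state := stateFn(lexAny); state != nil; {
			state = state(l)
		}
	}()
	return l.tokens
}

func main() {
	for t := range lex("(display hello)") {
		fmt.Printf("%s %q\n", t.kind, t.text)
	}
}
```

The consumer just ranges over the channel, so the scanner and whatever parses its output run concurrently without sharing state.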

Because "Compiler"

- Lexical Analysis: Scan for the individual tokens of the language (numbers, ids, braces, etc.)

- Syntax Analysis: Ensure those tokens appear in adherence to the language's grammar

- Semantic Analysis: Ensure that tokens are semantically valid (in scope, correct type, etc.)

- Intermediate Code Generator: Describe an abstract working program that the optimizer can rewrite

- Code Optimizer: Perform local and global rewriting of the abstract representation

- Machine Code Generator: Emit a final set of instructions that a machine can execute directly

Complicating that is Lisp

It breaks the pipeline into two distinct steps:

- reading takes a string of characters and performs lexical and syntax analysis

- evaluating performs the rest of the steps, including semantic analysis and execution

How Lisp reads

- The lexical and syntax analyzers are a first-class element of the language and runtime

- Together they are a function called read

- They are available to the programmer!

- That means they must produce data that a Lisp function can consume
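To make "read produces data" concrete, here is a rough sketch of a reader in Go. Symbol, List, tokenize, parse, and Read are hypothetical names chosen for this example; it treats every atom as a symbol, ignores strings and comments, and stops after the first complete form.

```go
package main

import (
	"fmt"
	"strings"
)

// Symbol and List are hypothetical stand-ins for a Lisp's data types.
type Symbol string
type List []any

// tokenize splits source text into parens and atoms.
func tokenize(src string) []string {
	src = strings.ReplaceAll(src, "(", " ( ")
	src = strings.ReplaceAll(src, ")", " ) ")
	return strings.Fields(src)
}

// parse reads one value starting at pos and returns it along with the
// position of the token that follows it.
func parse(tokens []string, pos int) (any, int, error) {
	if pos >= len(tokens) {
		return nil, pos, fmt.Errorf("unexpected end of input")
	}
	switch tok := tokens[pos]; tok {
	case "(":
		list := List{}
		pos++
		for pos < len(tokens) && tokens[pos] != ")" {
			var elem any
			var err error
			elem, pos, err = parse(tokens, pos)
			if err != nil {
				return nil, pos, err
			}
			list = append(list, elem)
		}
		if pos >= len(tokens) {
			return nil, pos, fmt.Errorf("missing closing paren")
		}
		return list, pos + 1, nil
	case ")":
		return nil, pos, fmt.Errorf("unexpected closing paren")
	default:
		// Every atom becomes a Symbol here; a real reader would
		// also recognize numbers, strings, and so on.
		return Symbol(tok), pos + 1, nil
	}
}

// Read performs lexical and syntax analysis and returns plain data
// that ordinary functions can inspect.
func Read(src string) (any, error) {
	v, _, err := parse(tokenize(src), 0)
	return v, err
}

func main() {
	v, err := Read("(define x (add 1 2))")
	if err != nil {
		panic(err)
	}
	fmt.Printf("%#v\n", v)
}
```

The result is just nested slices and symbols, which is the point: the parser's output is the same kind of value the language already knows how to manipulate.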

Reading in Scheme

(read
  (open-input-string
    "(display \"hello\")"))

This evaluates to

(display "hello")

;; A List with two elements: a Symbol named
;; "display" and a String containing "hello"

How Lisp evaluates

- Semantic analysis, code generation, and execution are also a first-class element in the language and runtime

- Together these are a function called eval

- Also available to the programmer!

- Running Lisp code is generally a two step process of reading and then evaluating

- But you don't need to read to create the data needed for evaluation
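A small sketch of that last point in Go: the evaluator below only ever sees data, so the program can be built by hand with no reader involved. Symbol, List, Func, Env, Eval, and add are hypothetical names for this example, and the evaluator knows nothing about special forms, lambdas, or macros.

```go
package main

import (
	"errors"
	"fmt"
)

type Symbol string
type List []any

// Func is a hypothetical built-in that receives evaluated arguments.
type Func func(args []any) (any, error)

// Env maps symbols to values; a real implementation would nest scopes.
type Env map[Symbol]any

// Eval resolves symbols, applies non-empty lists as calls, and returns
// every other value unchanged. All checks happen at run time.
func Eval(env Env, v any) (any, error) {
	switch v := v.(type) {
	case Symbol:
		val, ok := env[v]
		if !ok {
			return nil, fmt.Errorf("unbound symbol: %s", v)
		}
		return val, nil
	case List:
		if len(v) == 0 {
			return v, nil
		}
		head, err := Eval(env, v[0])
		if err != nil {
			return nil, err
		}
		fn, ok := head.(Func)
		if !ok {
			return nil, errors.New("first element is not callable")
		}
		args := make([]any, 0, len(v)-1)
		for _, elem := range v[1:] {
			arg, err := Eval(env, elem)
			if err != nil {
				return nil, err
			}
			args = append(args, arg)
		}
		return fn(args)
	default:
		return v, nil // numbers, strings, and so on evaluate to themselves
	}
}

// add sums integer arguments and rejects anything else.
func add(args []any) (any, error) {
	sum := 0
	for _, a := range args {
		n, ok := a.(int)
		if !ok {
			return nil, fmt.Errorf("not an integer: %v", a)
		}
		sum += n
	}
	return sum, nil
}

func main() {
	env := Env{"add": Func(add)}
	// The program is synthesized as data; nothing was parsed.
	program := List{Symbol("add"), 1, List{Symbol("add"), 2, 3}}
	result, err := Eval(env, program)
	fmt.Println(result, err)
}
```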

Evaluating in Scheme

(eval (read
        (open-input-string
          "(display \"hello\")")))

This displays

hello

You can also evaluate a synthesized list

(eval (cons 'display (cons "hello" '())))

(eval '(display "hello"))

On to the Implementation

Slicing Up The Problem

A Modest Approach

- No grand up-front vision or design. I sliced it up and worked toward an MVP

- That MVP was a pure interpreter (and a super slow one at that)

- Lexical Analysis: Scan for the individual tokens of the language (numbers, ids, braces, etc.)

- Syntax Analysis: Ensure those tokens appear in adherence to the language's grammar

- Semantic Analysis: Ensure that tokens are semantically valid (in scope, correct type, etc.)

- Intermediate Code Generator: Describe an abstract working program that the optimizer can rewrite

- Code Optimizer: Perform local and global rewriting of the abstract representation

- Machine Code Generator: Emit a final set of instructions that a machine can execute directly

- Lexical Analysis: Scan for the individual tokens of the language (numbers, ids, braces, etc.)

- Syntax Analysis: Ensure those tokens appear in adherence to the language's grammar

- Interpreter: Take the syntax tree that was created and attempt to brute force a result by performing a lot of run-time checks

A Modest Approach

- The initial cut of the runtime was written in pure Go

- Once Lambdas and Macros were introduced, the Go code started to disappear

A Modest Approach

- I eventually wrote an abstract machine and a proper compiler for it

- I topped that off with a code optimizer

- And hacked together a tail call optimizer
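Go itself doesn't eliminate tail calls, so a runtime written in Go has to arrange for them somehow. One common way to do that is a trampoline, sketched below with hypothetical names (thunk, toZero, trampoline). The talk doesn't say how the project's tail call optimizer actually works, so read this as an illustration of the general idea only.

```go
package main

import "fmt"

// thunk is a hypothetical deferred call. It returns either a final
// result (done == true) or the next call to make.
type thunk func() (result int, next thunk, done bool)

// toZero counts down to zero. Instead of calling itself, it returns a
// thunk that describes the tail call.
func toZero(x int) thunk {
	return func() (int, thunk, bool) {
		if x > 0 {
			return 0, toZero(x - 1), false
		}
		return 0, nil, true
	}
}

// trampoline runs thunks in a loop, so the Go stack stays at a constant
// depth no matter how many tail calls are chained. It also reports how
// many tail calls were made.
func trampoline(t thunk) (result int, bounces int) {
	for {
		res, next, done := t()
		if done {
			return res, bounces
		}
		t = next
		bounces++
	}
}

func main() {
	result, bounces := trampoline(toZero(9999))
	fmt.Println(result, bounces)
}
```

Each "call" returns to the loop before the next one starts, which is why the stack never grows.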

[Diagram: two call stacks, each drawn from Base (First Function Called) to Top (Stack Pointer). The first holds eight stack frames, the second holds four.]

(define (to-zero x)
  (cond
    [(> x 1000) (to-zero (- x 1))]
    [(> x 0) (to-zero (- x 1))]
    [:else 0]))

(to-zero 9999)

Mistakes Were Made

- Creating type hierarchies instead of duck-typing with interfaces

- Failing to properly compose interfaces and structs

- Completely misusing pointers
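As a rough illustration of the first two bullets, here is what small, composed interfaces look like in Go. Counted, Indexed, CountedIndexed, Vector, and last are names invented for this sketch, not necessarily the project's own.

```go
package main

import "fmt"

// Small, single-purpose interfaces (hypothetical names).
type Counted interface {
	Count() int
}

type Indexed interface {
	ElementAt(i int) (any, bool)
}

// CountedIndexed composes the two by embedding them.
type CountedIndexed interface {
	Counted
	Indexed
}

// Vector satisfies all three implicitly: there is no "implements"
// declaration and no base class to inherit from.
type Vector []any

func (v Vector) Count() int { return len(v) }

func (v Vector) ElementAt(i int) (any, bool) {
	if i < 0 || i >= len(v) {
		return nil, false
	}
	return v[i], true
}

// last asks only for the behavior it needs, not for a concrete type.
func last(c CountedIndexed) (any, bool) {
	return c.ElementAt(c.Count() - 1)
}

func main() {
	v, ok := last(Vector{"a", "b", "c"})
	fmt.Println(v, ok)
}
```

Any other type with those two methods works with last as well, without knowing that Vector or CountedIndexed exist.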

At first, I didn't fully understand the beauty of Go's type system

unnecessarily boxing primitives

type Number interface {
	Cmp(Number) Comparison
	Add(Number) Number
	Sub(Number) Number
	//...
}

type Integer struct {
	value int64
}

// Cmp compares this Integer to another Number
func (l *Integer) Cmp(r Number) Comparison {
	if ri, ok := r.(*Integer); ok {
		if l.value > ri.value {
			return GreaterThan
		}
		...

type Number interface {
	Cmp(Number) Comparison
	Add(Number) Number
	Sub(Number) Number
	//...
}

type Integer int64

// Cmp compares this Integer to another Number
func (l Integer) Cmp(r Number) Comparison {
	if ri, ok := r.(Integer); ok {
		if l > ri {
			return GreaterThan
		}
		...

I loved to

panic and recover
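Go's built-ins for this are panic and recover. The sketch below contrasts the two styles using made-up functions (dividePanicky, safely, divide); it isn't code from the project, just the shape of the habit and of the more conventional alternative.

```go
package main

import (
	"errors"
	"fmt"
)

var errDivideByZero = errors.New("divide by zero")

// dividePanicky signals failure by panicking, which forces callers to
// recover somewhere up the stack.
func dividePanicky(a, b int) int {
	if b == 0 {
		panic(errDivideByZero)
	}
	return a / b
}

// safely converts a panic carrying an error back into a returned error.
// Anything that is not an error value is re-panicked.
func safely(fn func() int) (result int, err error) {
	defer func() {
		if r := recover(); r != nil {
			if e, ok := r.(error); ok {
				err = e
				return
			}
			panic(r)
		}
	}()
	return fn(), nil
}

// divide is the more conventional form: failure is an ordinary
// return value that the caller checks.
func divide(a, b int) (int, error) {
	if b == 0 {
		return 0, errDivideByZero
	}
	return a / b, nil
}

func main() {
	_, err := safely(func() int { return dividePanicky(1, 0) })
	fmt.Println("recovered:", err)

	_, err = divide(1, 0)
	fmt.Println("returned:", err)
}
```

Both paths end with the same error value; the second one gets there without unwinding the stack.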

and I stuttered a lot

import "github.com/kode4food/ale/internal/sequence"

...

v := sequence.SequenceToVector(s)

// Why am I saying "sequence" over and over?

import "github.com/kode4food/ale/internal/sequence"

...

v := sequence.ToVector(s)

// "sequence to vector" makes WAY more sense than

// sequence sequence to vector.

I took the idiomatic guidelines on local variable names way too seriously

func evalBuffer(ns env.Namespace, src []byte) data.Value {
	r := read.FromString(data.String(src))
	return eval.Block(ns, r)
}

func evalBuffer(ns env.Namespace, src []byte) data.Value {
	foo := read.FromString(data.String(src))
	return eval.Block(ns, foo)
}

I used unqualified imports more than I should have

import "github.com/kode4food/ale/internal/sequence"

...

v := sequence.SequenceToVector(s)

import . "github.com/kode4food/ale/internal/sequence"

...

v := SequenceToVector(s)

import "github.com/kode4food/ale/internal/sequence"

...

v := sequence.ToVector(s)

What's Next?

- Proper type system

- Performance improvements

- Generics for collections

- Or maybe none of the above