Text Similarity

Stakes for the company

Measure the similarity between texts on a large scale

Which similarity metrics are relevant to our case ?

Is similarity on its own enough to explain / get a better understanding of Google (de)indexation ?

Can we build a mathematical model that would "predict" the indexation (or indexation chances) for a given text ?

A glance at NLP / Text Similarity

NLP acts on 3 levels :

- Syntax (syntactic processing : what is grammatical ?)

- Semantic (semantic processing : what does it mean ?)

- Pragmatism (pragmatic processing : what is the goal ?)

So does similarity.

A glance at NLP / Text Similarity

Similarity computing for us, is interesting on :

- Syntax : What makes two texts syntaxically / grammatically similars ? How to measure syntactic similarity ?

- Semantic : What makes two texts semantically similars ? How to measure semantic similarity ?

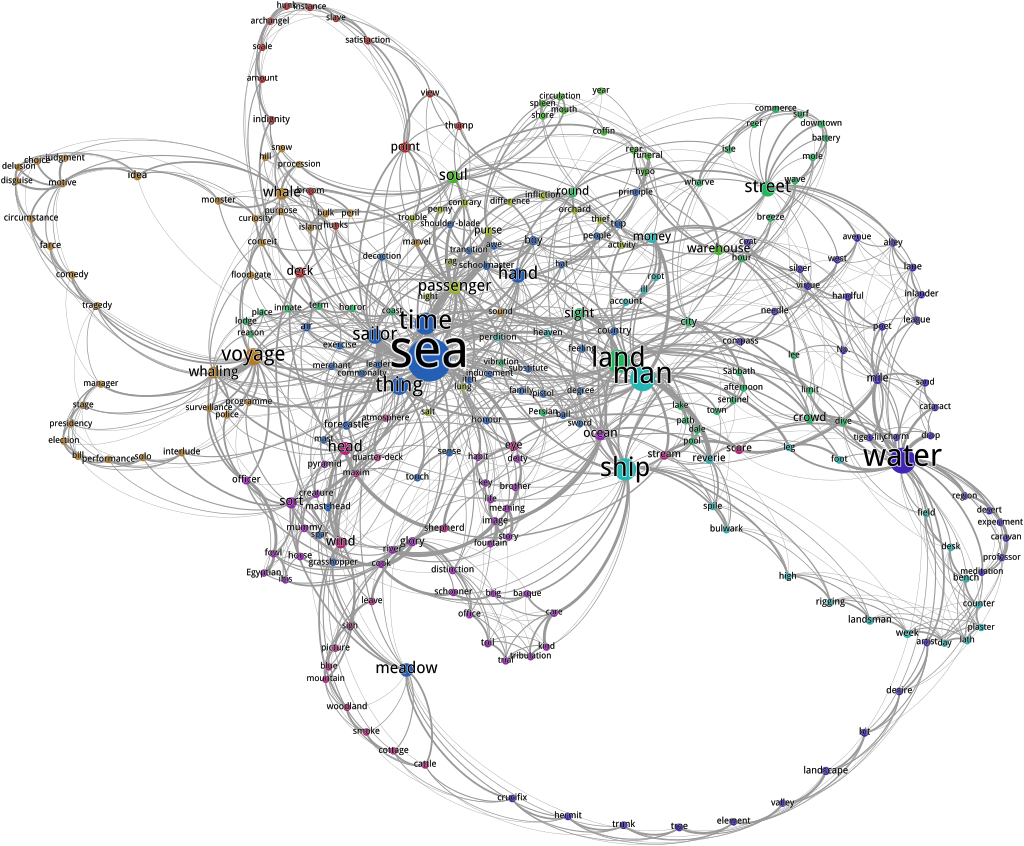

Language under mathematics

How to represent language (text) with mathematics ?