Robotics at Google

PaLM-SayCan Demo

Language as Robot Middleware

Andy Zeng, Fei Xia

CoRL Workshop on Language and Robot Learning

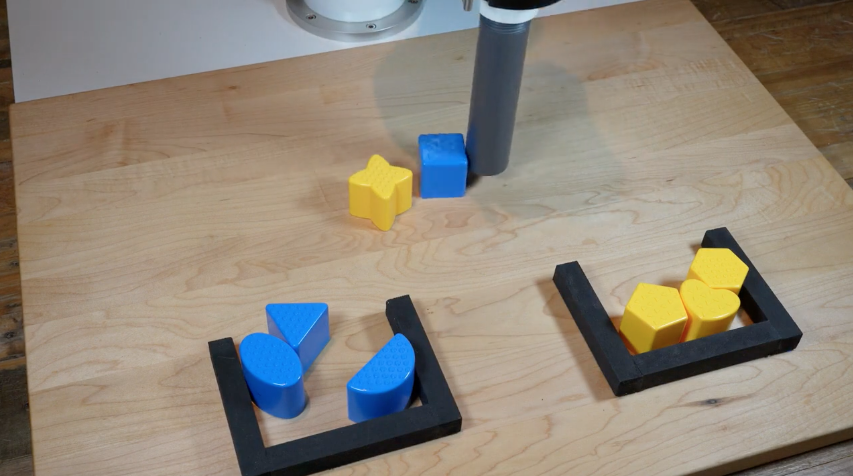

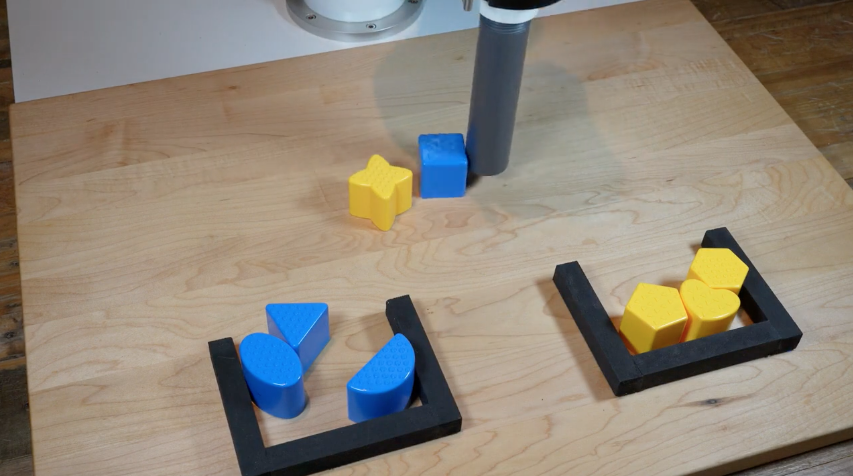

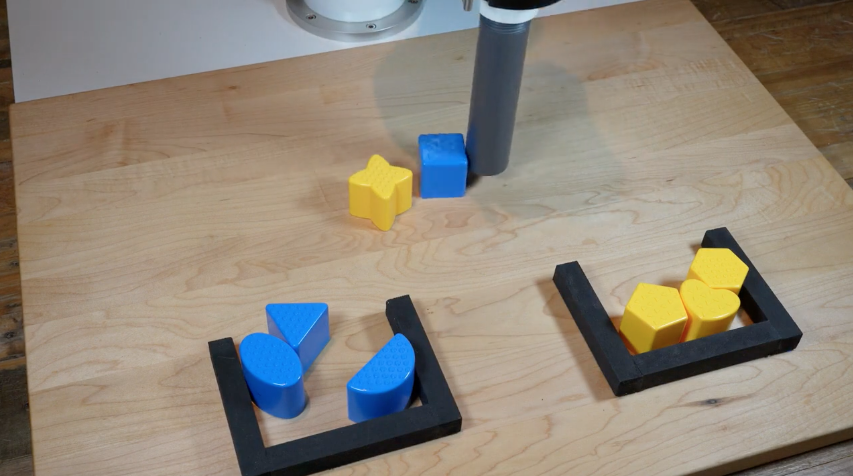

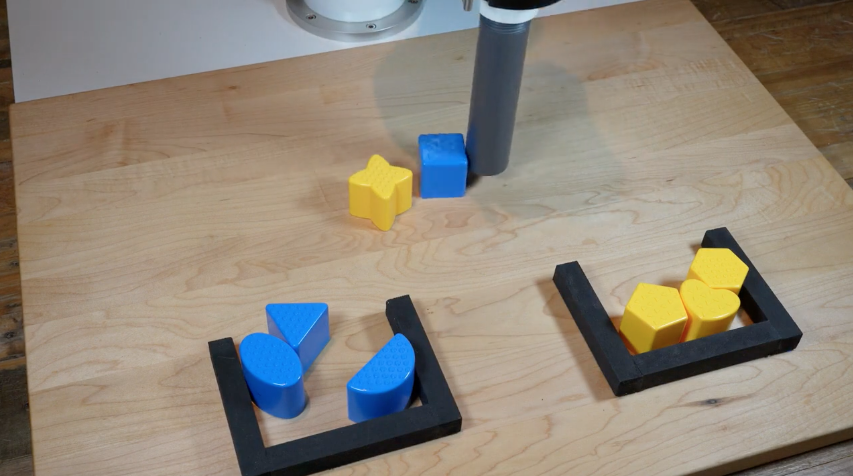

Manipulation

TossingBot

Interact with the physical world to learn bottom-up commonsense

Transporter Nets

Implicit Behavior Cloning

w/ machine learning

i.e. "how the world works"

On the quest for shared priors

Interact with the physical world to learn bottom-up commonsense

w/ machine learning

i.e. "how the world works"

# Tasks

Data

Expectation

Reality

Complexity in environment, embodiment, contact, etc.

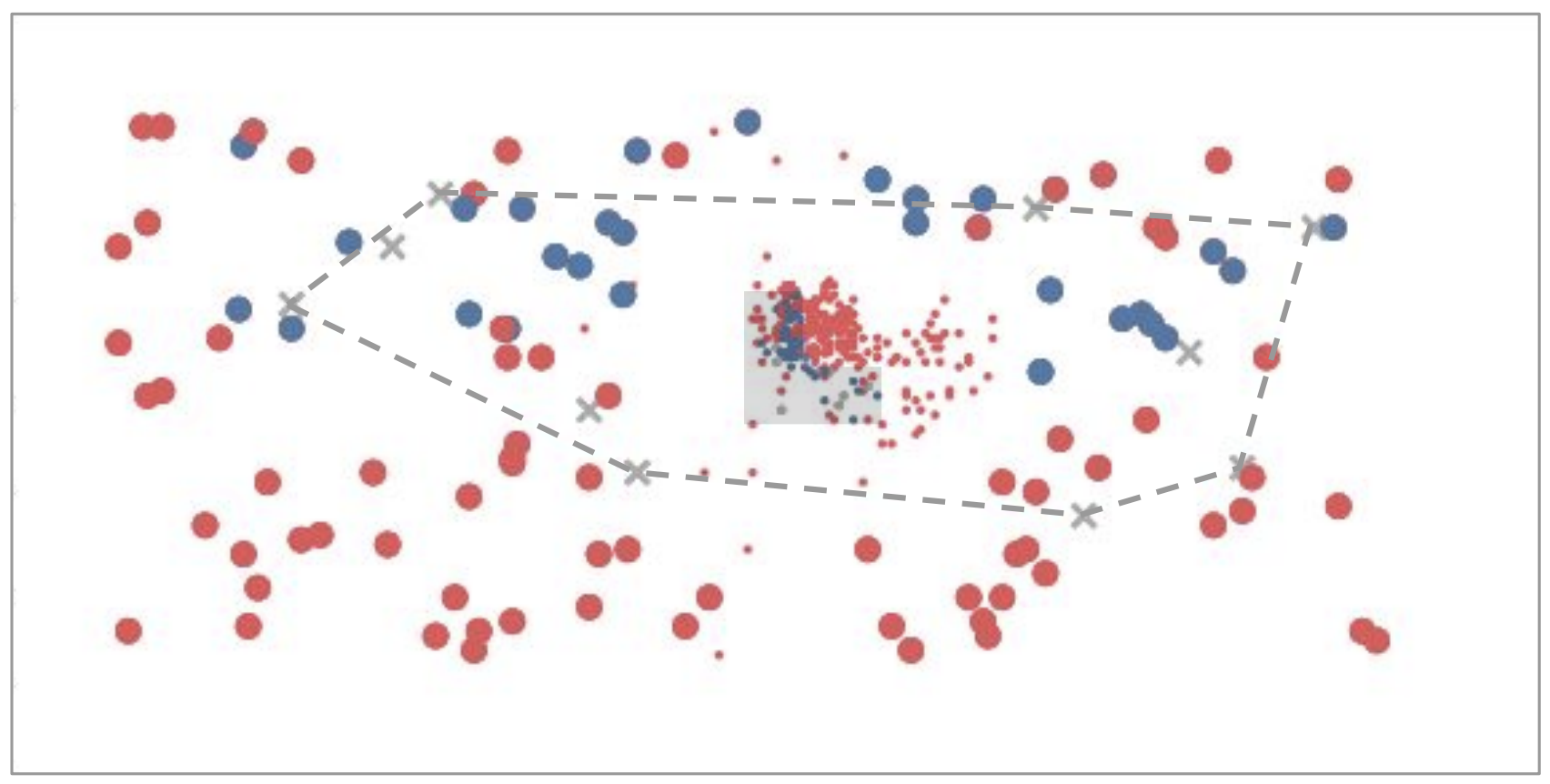

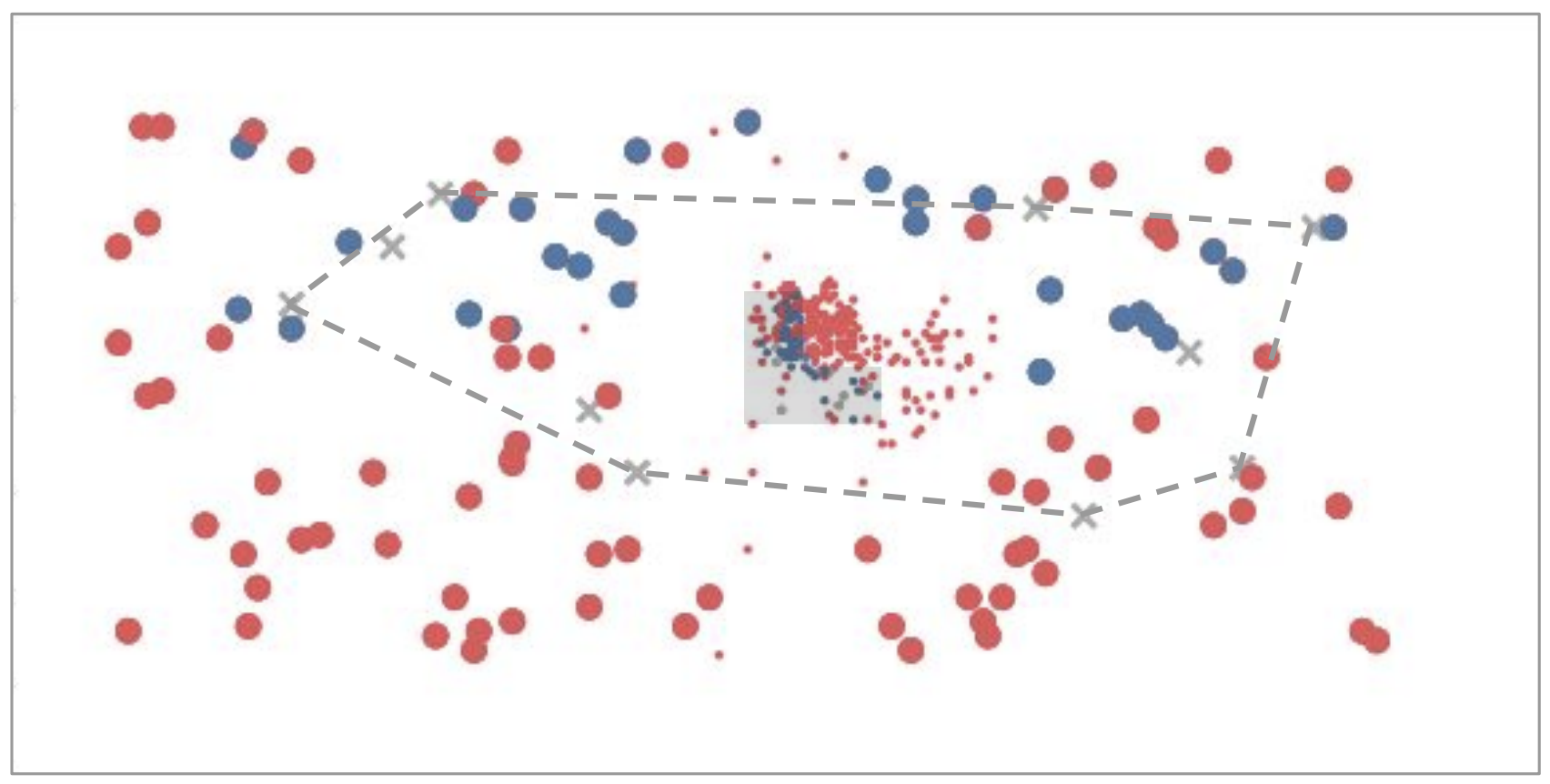

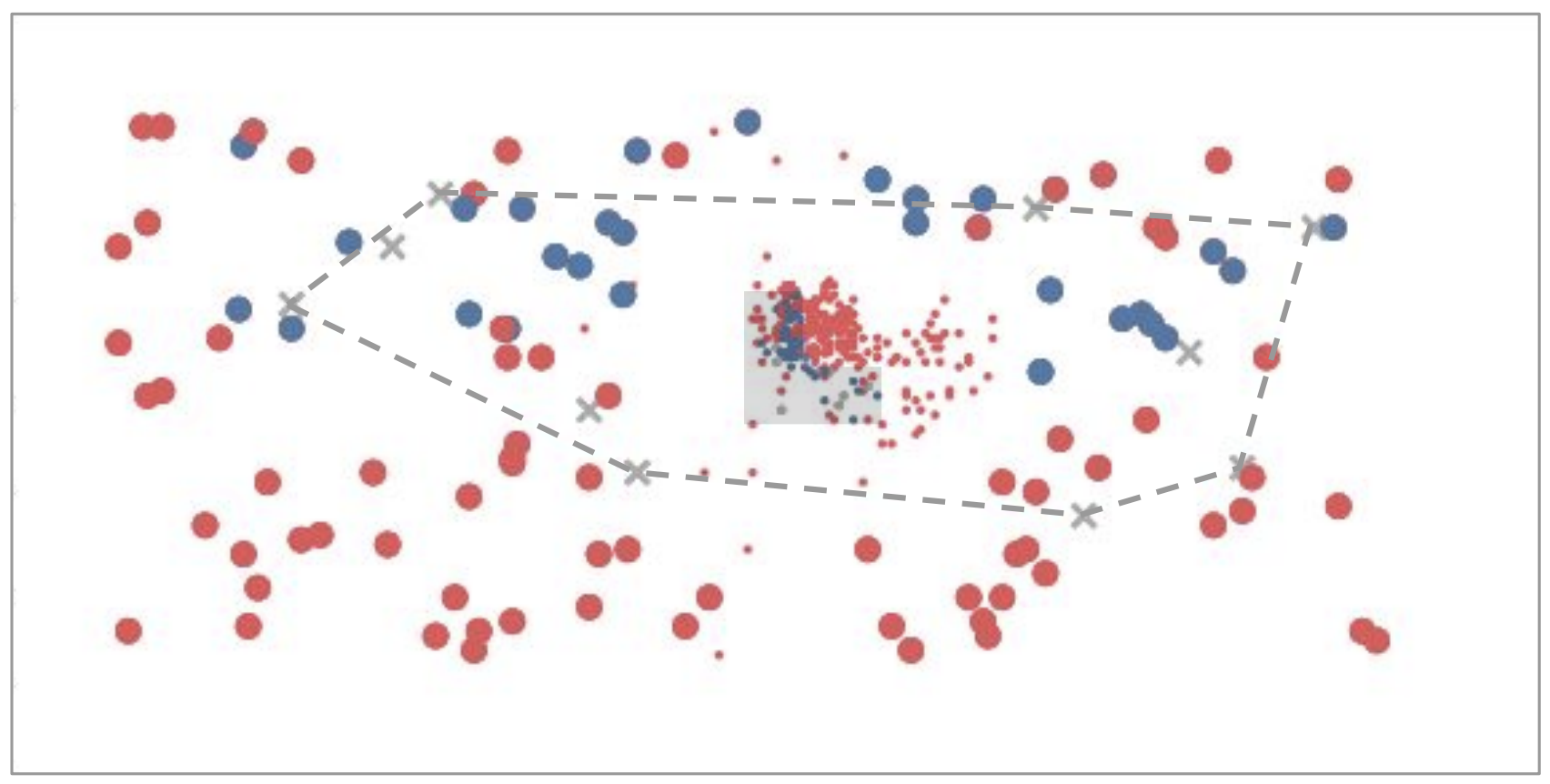

Deep Learning is a Box

Interpolation

Extrapolation

adapted from Tomás Lozano-Pérez

Roboticist

Vision

NLP

Internet

Meanwhile in NLP...

Large Language Models

Books

Recipes

Code

News

Articles

Dialogue

Demo

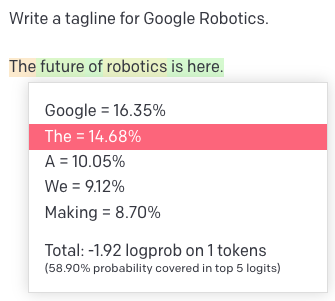

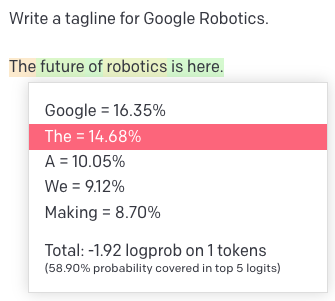

Quick Primer on Language Models

Tokens (inputs & outputs)

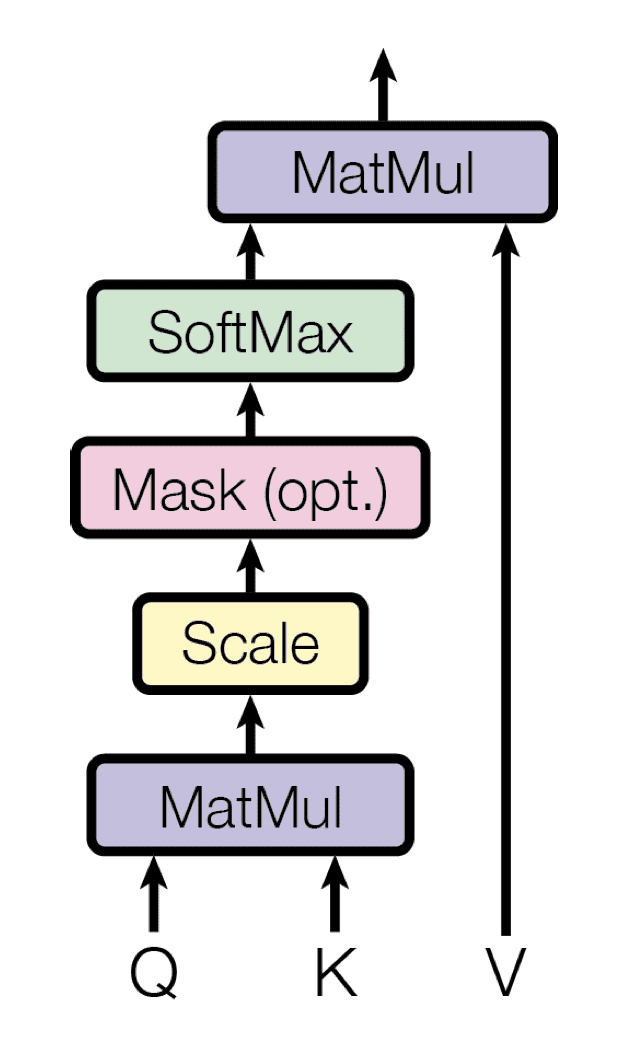

Transformers (models)

Attention Is All You Need, NeurIPS 2017

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, Illia Polosukhin

Pieces of words (BPE encoding)

- per word: big, bigger, biggest, small, smaller, smallest
- per token: big, er, est, small
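To make the per-token idea concrete, here is a minimal sketch of applying byte-pair-encoding merges to a word. The merge table `MERGES` is hypothetical and hand-picked for this example; real BPE tokenizers learn tens of thousands of merges from a corpus. Each step merges the adjacent pair with the best (lowest) rank until no listed merge applies.

```python
from typing import Dict, List, Tuple

# Hypothetical merge rules, in priority order (earlier = learned first).
MERGES: List[Tuple[str, str]] = [
    ("s", "m"), ("sm", "a"), ("sma", "l"), ("smal", "l"),
    ("b", "i"), ("bi", "g"),
    ("e", "r"), ("e", "s"), ("es", "t"),
]
RANKS: Dict[Tuple[str, str], int] = {pair: rank for rank, pair in enumerate(MERGES)}


def bpe_encode(word: str) -> List[str]:
    """Split a word into characters, then repeatedly apply the best-ranked merge."""
    symbols = list(word)
    while len(symbols) > 1:
        # Rank every adjacent pair; pairs without a merge rule rank as infinity.
        candidates = [
            (RANKS.get((a, b), float("inf")), i)
            for i, (a, b) in enumerate(zip(symbols, symbols[1:]))
        ]
        best_rank, i = min(candidates)
        if best_rank == float("inf"):
            break  # no applicable merge left
        symbols[i : i + 2] = [symbols[i] + symbols[i + 1]]
    return symbols


for w in ["big", "small", "smaller", "smallest", "biggest"]:
    print(w, bpe_encode(w))
```

With this toy table, `biggest` comes out as `big` + `g` + `est`: the slide's split glosses over the doubled consonant, which an actual tokenizer would need (or learn) a merge to cover.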

Self-Attention
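Self-attention, the core operation of the Transformer from Vaswani et al., computes softmax(Q K^T / sqrt(d_k)) V, so each token's output is a weighted mix of every token's value vector. Below is a dependency-free, single-head sketch in plain Python (no masking, no multi-head split); the helper names and toy inputs are illustrative choices, not from the talk.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: Matrix, w_q: Matrix, w_k: Matrix, w_v: Matrix) -> Tuple[Matrix, Matrix]:
    """Single-head scaled dot-product self-attention over token embeddings x."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(w_k[0])
    scores = matmul(q, transpose(k))  # token-to-token similarity
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 tokens, embedding dim 2
identity = [[1.0, 0.0], [0.0, 1.0]]
out, attn = self_attention(x, identity, identity, identity)
for row in attn:
    print([round(w, 3) for w in row])
```

Each row of `attn` sums to 1; here token 0 attends equally to itself and to token 2, which shares its first feature.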

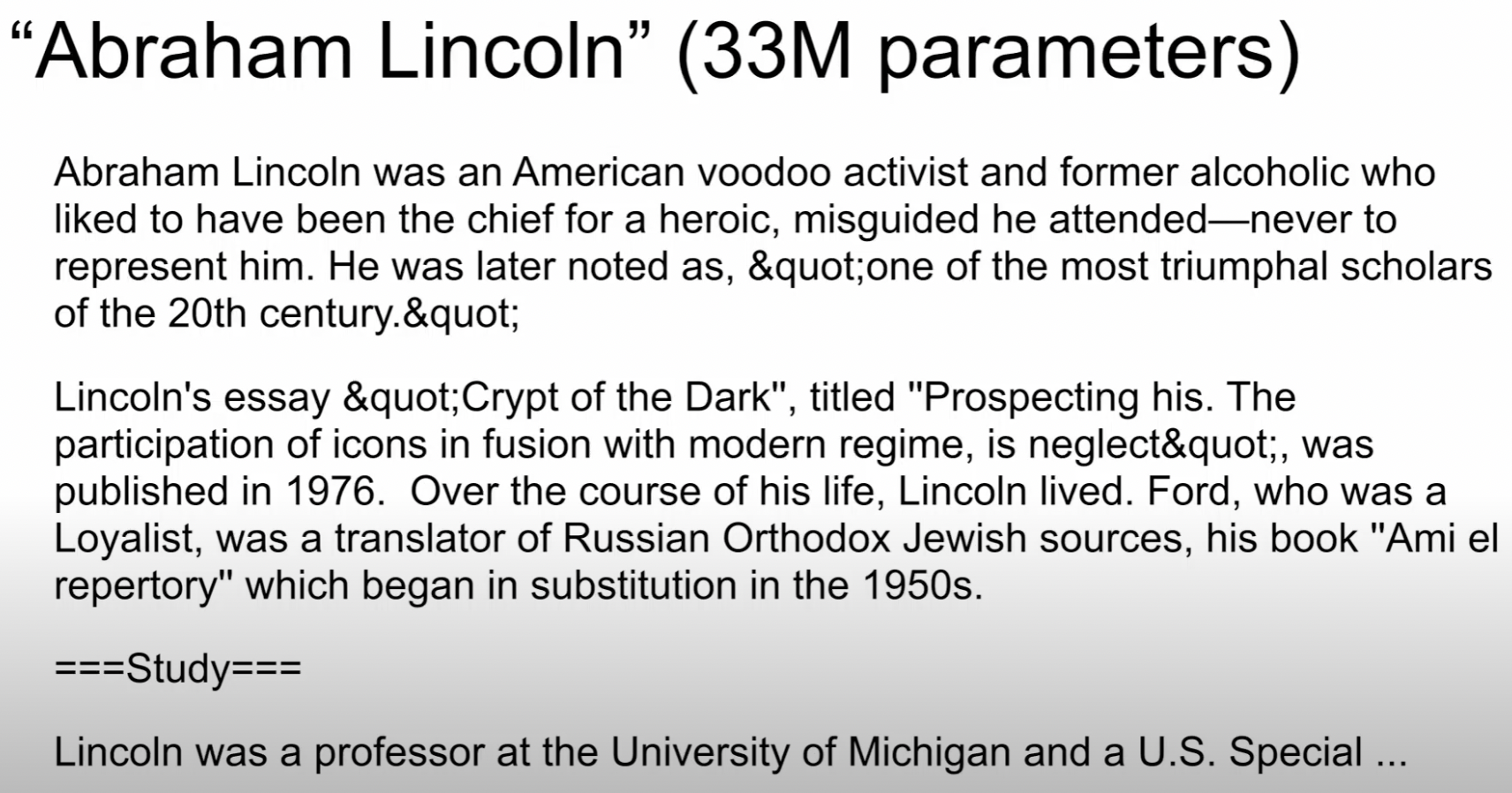

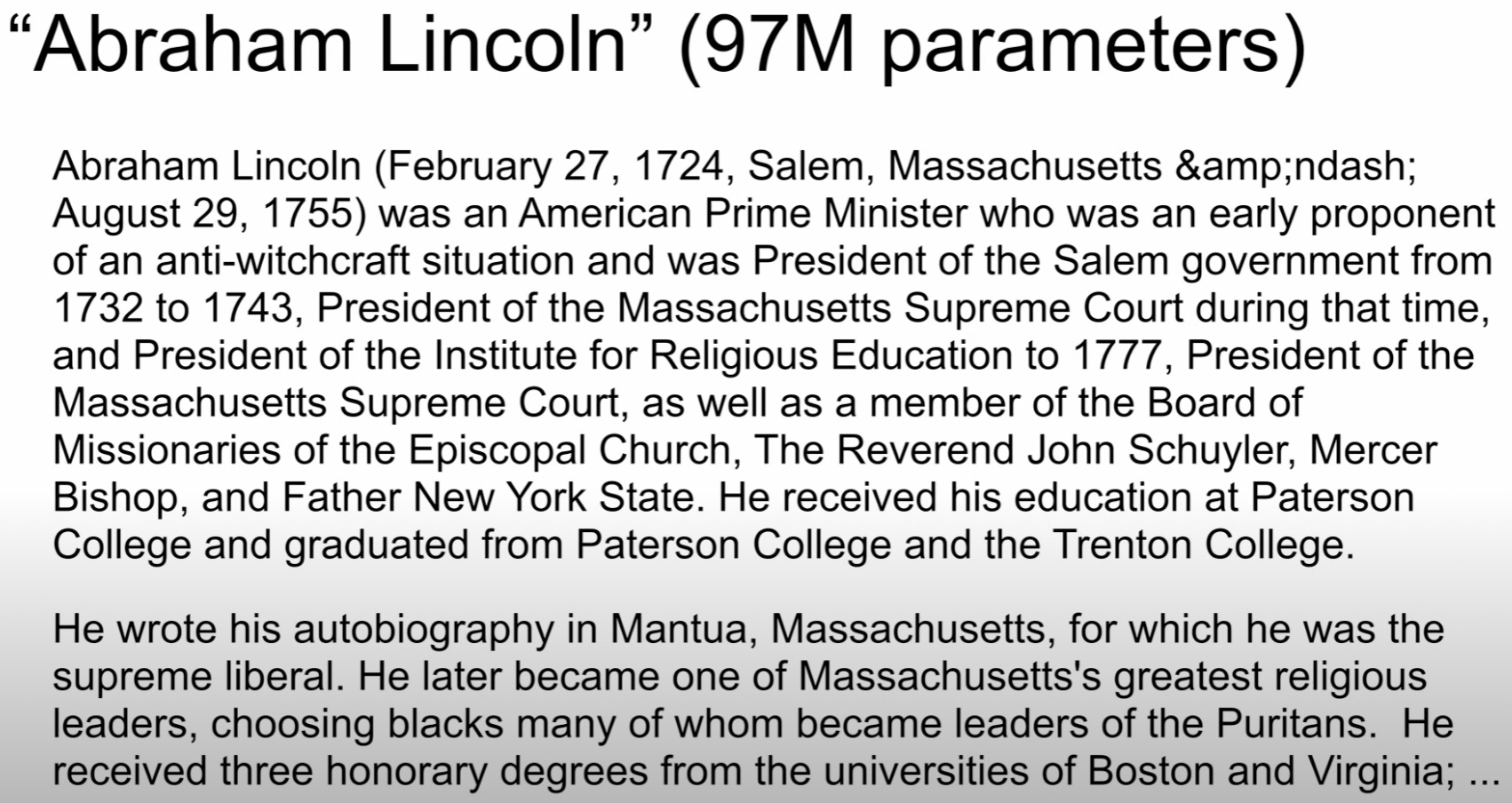

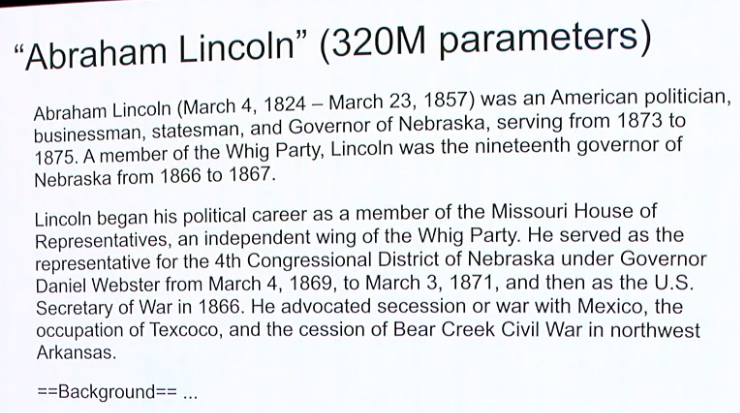

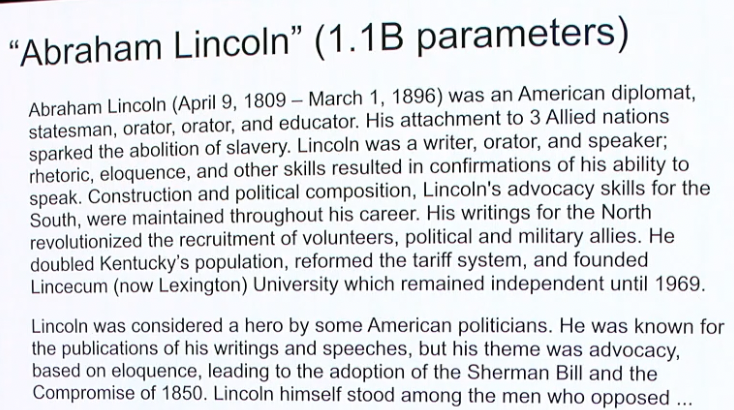

Bigger is Better

Neural Language Models: Bigger is Better, WeCNLP 2018

Noam Shazeer

Robot Planning

Visual Commonsense

Robot Programming

Socratic Models

Code as Policies

PaLM-SayCan

Demo

Lives somewhere in the space of interpolation

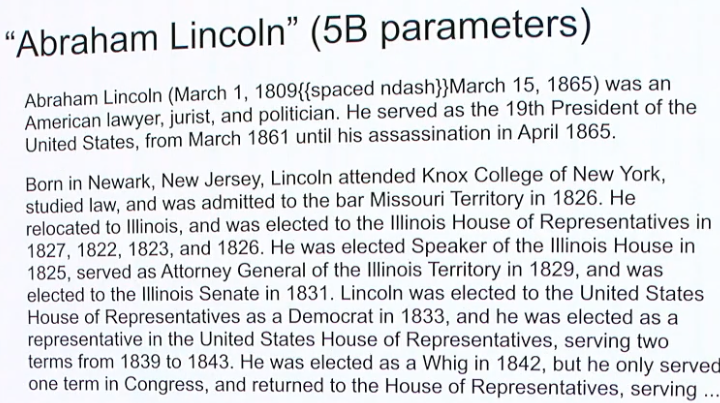

PaLM-SayCan

Live Demo

Fei Xia

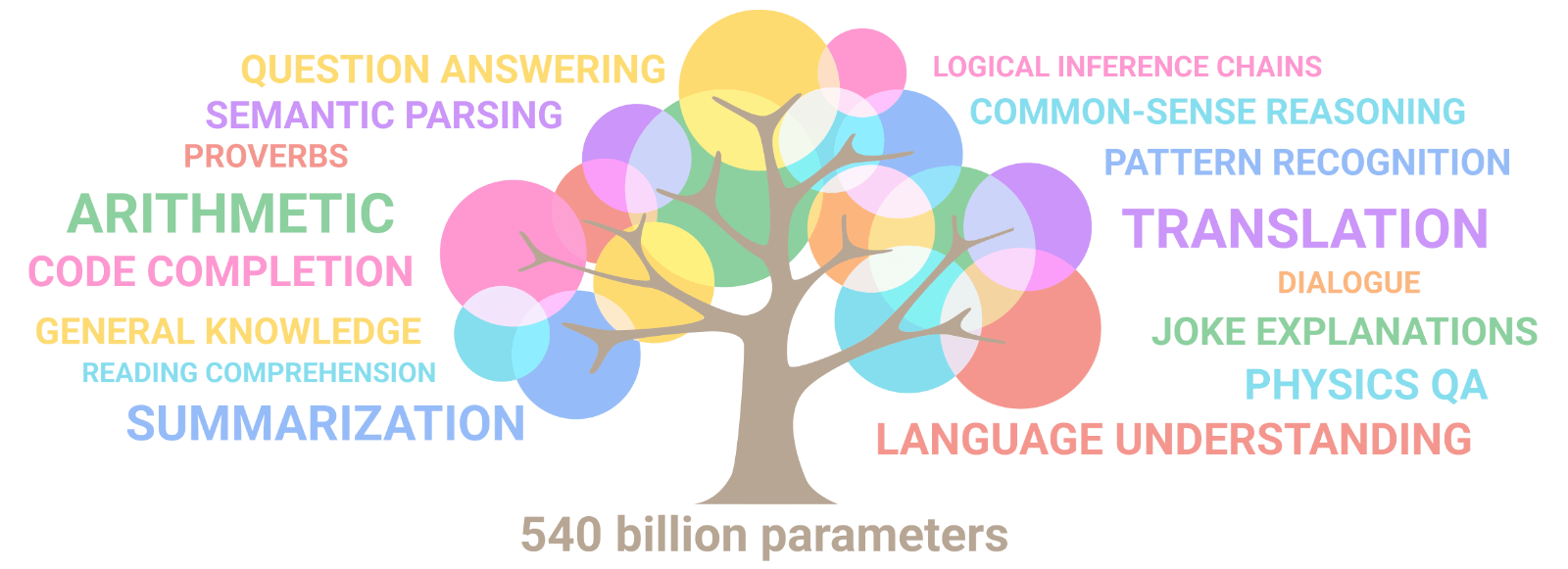

LLM planning on robots! Open research problem, but here's one way to do it

Do As I Can, Not As I Say: Grounding Language in Robotic Affordances

https://say-can.github.io

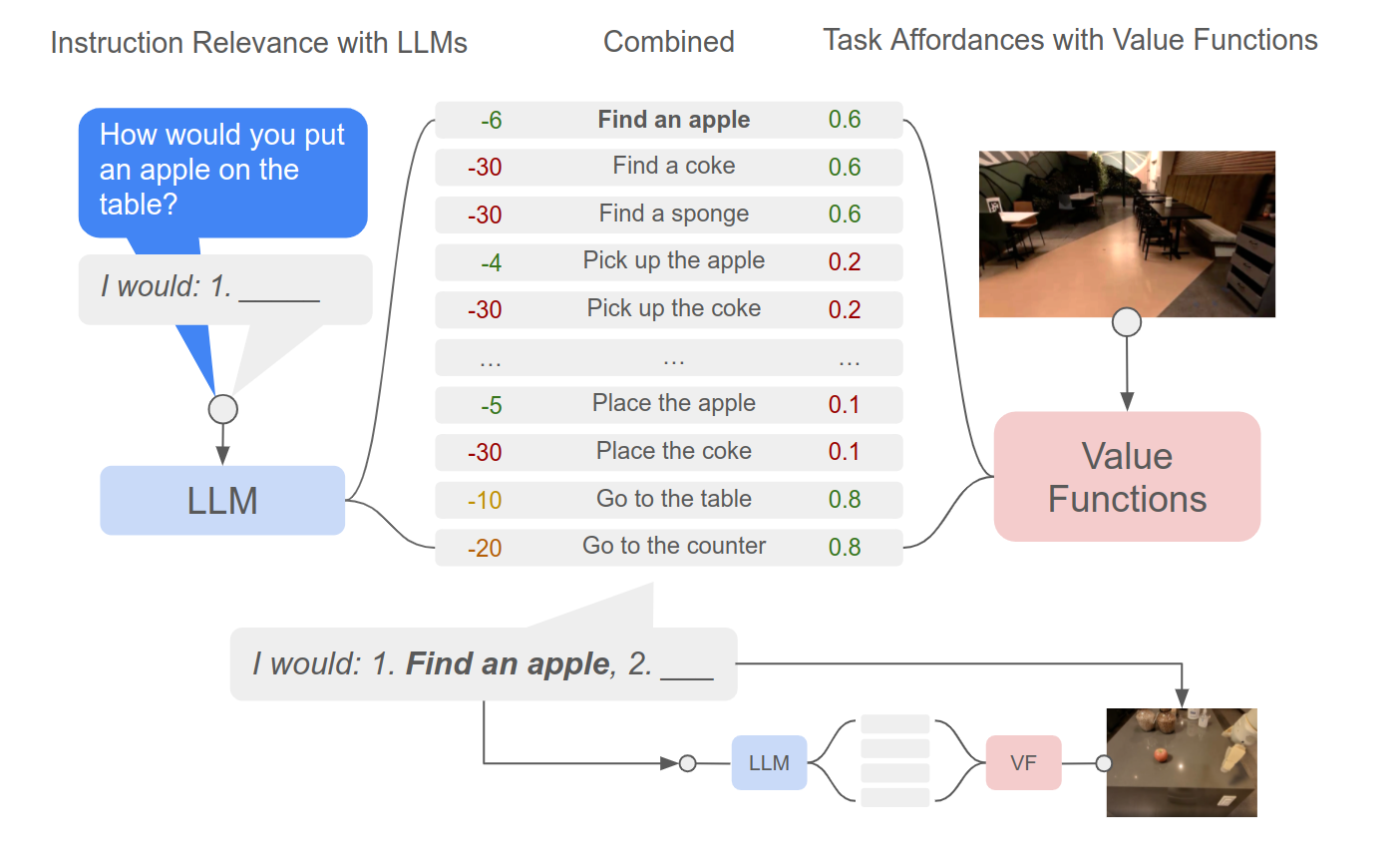

PaLM

Code is available
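SayCan's selection rule scores each candidate skill by the product of the language model's probability that the skill is a useful next step ("Say") and a learned value, or affordance, estimating whether the robot can actually execute it from the current state ("Can"). A minimal sketch of one selection step, assuming made-up probabilities and affordances in place of PaLM and the learned value functions; the function and variable names are hypothetical, not the released code's API.

```python
from typing import Callable, Dict, List


def saycan_select(
    skills: List[str],
    llm_score: Callable[[str], float],
    affordance: Callable[[str], float],
) -> str:
    """Pick the skill maximizing p_LLM(skill | instruction) * affordance(skill | state)."""
    combined: Dict[str, float] = {s: llm_score(s) * affordance(s) for s in skills}
    return max(combined, key=combined.__getitem__)


# Toy numbers (made up for illustration) for the instruction "bring me a sponge".
LLM_PROB = {"find a sponge": 0.4, "pick up the sponge": 0.5, "done": 0.1}
# The robot is not next to a sponge yet, so grasping has low value.
AFFORDANCE = {"find a sponge": 0.9, "pick up the sponge": 0.1, "done": 0.2}

choice = saycan_select(list(LLM_PROB), LLM_PROB.__getitem__, AFFORDANCE.__getitem__)
print(choice)  # find a sponge
```

The language model alone would prefer `pick up the sponge` (0.5), but its low affordance vetoes it, so the combined score picks `find a sponge` (0.9 × 0.4 = 0.36). In the full system this step repeats, appending each chosen skill to the prompt, until a termination skill is selected.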

Limits of language as information bottleneck?

- Loses spatial precision

- Highly multimodal (in the distributional sense)

- Not as information-rich as, e.g., images

- Only for the high level? What about control?

Perception

Planning

Control

Socratic Models

Inner Monologue

SayCan

Wenlong Huang et al., 2022

Imitation? RL?

Engineered?
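Inner Monologue (Wenlong Huang et al., 2022) keeps language in the loop by feeding feedback, such as success detection, back to the planner as text. A minimal sketch of that closed loop, assuming a hypothetical rule-based `toy_planner` in place of the LLM and a hypothetical `toy_execute` whose first grasp fails; all names and the transcript format are illustrative.

```python
from typing import Callable, Dict, List


def inner_monologue(
    instruction: str,
    propose_step: Callable[[str], str],
    execute: Callable[[str], bool],
    max_steps: int = 10,
) -> List[str]:
    """Closed-loop planning: textual feedback is appended to the planner's prompt."""
    transcript = [f"Human: {instruction}"]
    for _ in range(max_steps):
        step = propose_step("\n".join(transcript))
        transcript.append(f"Robot: {step}")
        if step == "done":
            break
        # Success-detector feedback, rendered as text for the planner.
        transcript.append(f"Success: {execute(step)}")
    return transcript


PLAN = ["pick up the coke can", "put it in the trash", "done"]
attempts: Dict[str, int] = {}


def toy_planner(prompt: str) -> str:
    # Hypothetical stand-in for an LLM: advance one step per reported success.
    return PLAN[min(prompt.count("Success: True"), len(PLAN) - 1)]


def toy_execute(step: str) -> bool:
    # Hypothetical skill plus success detector: the first grasp attempt slips.
    attempts[step] = attempts.get(step, 0) + 1
    return not (step == PLAN[0] and attempts[step] == 1)


transcript = inner_monologue("throw away the coke can", toy_planner, toy_execute)
print("\n".join(transcript))
```

Because the failure shows up in the prompt as `Success: False`, the planner retries the grasp instead of moving on.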

Scaling robot learning (today) is hard

Robot Learning: not a lot of robot data

- 50 expert demos
- 500 expert demos
- 5000 expert demos
- PaLM-sized robot dataset = 100 robots for 24 yrs, collecting (mostly) diverse data

Language Models: lots of Internet data

- 256K token vocab w/ word embedding dim = 18,432

Ways forward for robot learning:

- Finding other sources of data (sim, YouTube)
- Improve data efficiency with prior knowledge

Use language to help close the gap!
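The dataset figures on this slide can be put in perspective with some quick arithmetic. This sketch only multiplies the quoted numbers; it assumes "256K" means 256,000 and, for the robot-hours figure, hypothetically assumes round-the-clock operation. It does not reproduce how the talk mapped PaLM's training data to robot-years.

```python
# Numbers quoted on the slide.
VOCAB_SIZE = 256_000  # "256K token vocab" (assumption: read as 256,000)
EMBED_DIM = 18_432    # word embedding dimension
ROBOTS, YEARS = 100, 24

# The input embedding table alone is a (vocab x dim) matrix.
embedding_params = VOCAB_SIZE * EMBED_DIM
print(f"{embedding_params:,}")  # 4,718,592,000 (about 4.7B parameters)

# Robot experience implied by "100 robots for 24 yrs",
# assuming (hypothetically) 24/7 operation, 365 days a year.
robot_years = ROBOTS * YEARS
robot_hours = robot_years * 365 * 24
print(robot_years, f"{robot_hours:,}")  # 2400 21,024,000
```

Even the word-embedding table alone is larger than many complete robot policies, which is one way to see the gap between the two columns.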

2 Final Thoughts

adapted from Tomás Lozano-Pérez

Machine learning is a box

... but robotics is a line

Embrace compositionality

1. build boxes
2. compose them autonomously

"Language" as the glue for intelligent machines

Language

Perception

Planning

Control

Socratic Models: Composing Zero-Shot Multimodal Reasoning with Language

https://socraticmodels.github.io
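The Socratic Models idea of composing pretrained modules through language can be sketched as a pipeline in which every interface is a string. In this minimal sketch, `perceive`, `plan`, and `control` are hypothetical rule-based stand-ins for a vision-language model, a large language model, and a language-conditioned policy respectively; only the text-in, text-out wiring is the point.

```python
from typing import List, Tuple


def perceive(detections: List[str]) -> str:
    # Hypothetical stand-in for a vision-language model: describe the scene in text.
    return "Objects: " + ", ".join(detections)


def plan(instruction: str, scene: str) -> List[str]:
    # Hypothetical stand-in for an LLM planner; only understands
    # instructions of the form "put the <kind>s in the <container>".
    words = instruction.split()
    kind, container = words[2].rstrip("s"), words[-1]
    objects = scene.split(": ", 1)[1].split(", ")
    target = next(o for o in objects if o.endswith(container))
    items = [o for o in objects if o.endswith(kind)]
    return [f"pick up the {item} and place it in the {target}" for item in items]


def control(step: str) -> Tuple[str, str, str]:
    # Hypothetical stand-in for a language-conditioned policy:
    # parse a text step into a pick-and-place primitive.
    obj, target = step[len("pick up the "):].split(" and place it in the ")
    return ("pick_place", obj, target)


scene = perceive(["red block", "green bowl", "blue block"])
steps = plan("put the blocks in the bowl", scene)
actions = [control(s) for s in steps]
print(scene)
print(actions)
```

Because each module only sees text, any one of them can be swapped (a different detector, a different LLM) without retraining the others, which is the compositionality argument of the previous slide.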

We have some reason to believe that "the structure of language is the structure of generalization"

To understand language is to understand generalization

https://evjang.com/2021/12/17/lang-generalization.html

Sapir–Whorf hypothesis

Towards grounding everything in language

Language

Control

Vision

Tactile

Socratic Models: Composing Zero-Shot Multimodal Reasoning with Language

https://socraticmodels.github.io

Lots of data

Less data

Less data

Thank you!

Pete Florence

Adrian Wong

Johnny Lee

Vikas Sindhwani

Stefan Welker

Vincent Vanhoucke

Kevin Zakka

Michael Ryoo

Maria Attarian

Brian Ichter

Krzysztof Choromanski

Federico Tombari

Jacky Liang

Aveek Purohit

Wenlong Huang

Fei Xia

Peng Xu

Karol Hausman

and many others!