Bridging Barriers

This slide deck has been co-created using generative AI for demonstrative and educational purposes.

Human-Machine Collaboration for

Better Access to Special Collections

by

Annika Rockenberger, PhD

University of Oslo Library

Digital Research Methods in the Humanities,

Social Sciences, Pedagogy, and Theology

Human-Machine Collaboration

Enhancing Access to Special Collections

The omnipresence of generative AI

Experiment Overview

- Original letters are digitised

- Metadata is added to the letters by a librarian together with a domain expert

- Letters are transcribed using high-performance HTR models in Transkribus (Swedish Lion, Text Titan I ter)

- Domain experts with paleographic and linguistic skills correct the machine transcription (1x correction) and tag names and places

- Corrected transcriptions are uploaded to NotebookLM

- Specific instructions (prompts) for creating the summaries are provided

- The output is evaluated for correctness by domain experts

- The summaries are supplied on the ALVIN platform

The Letters

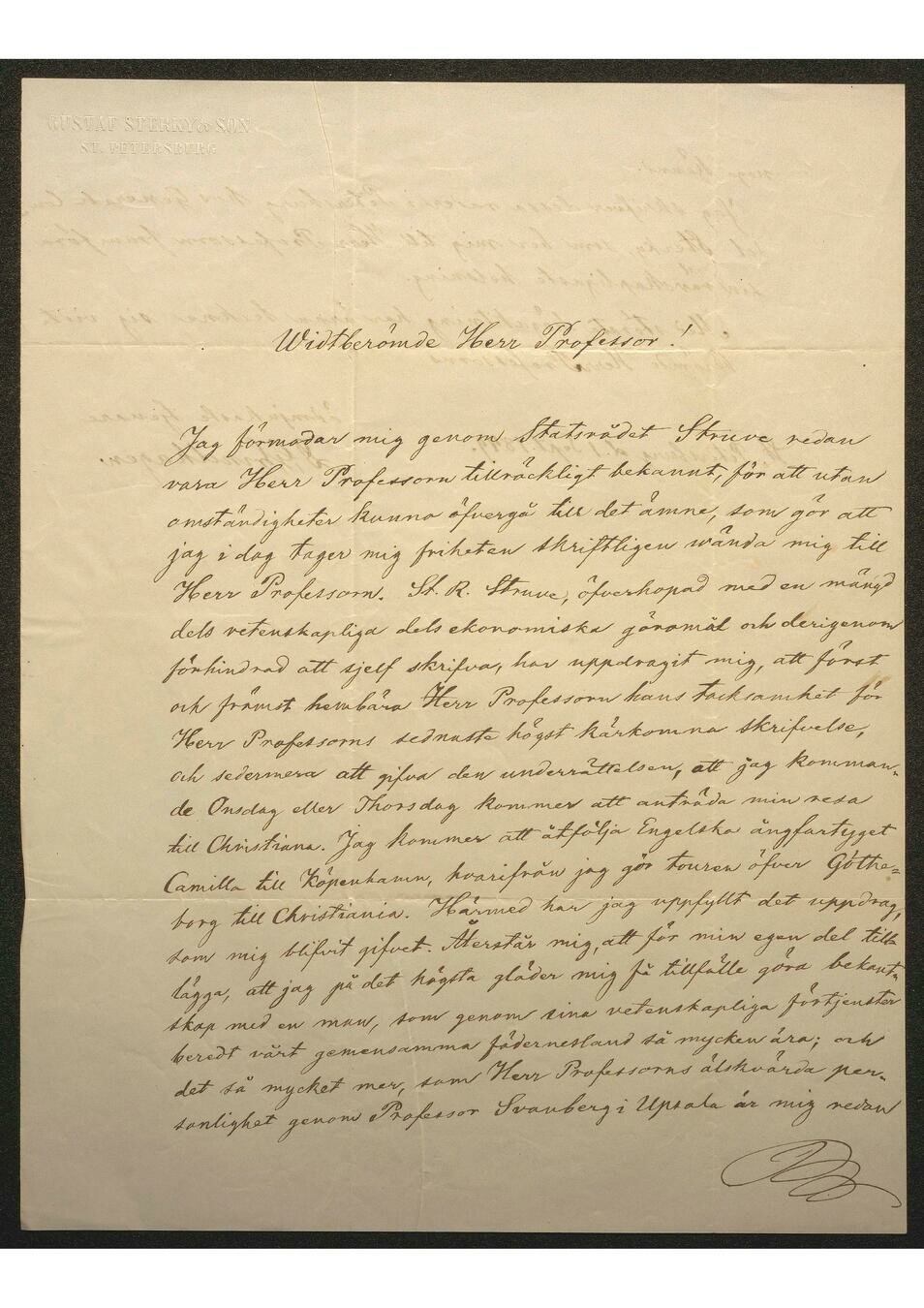

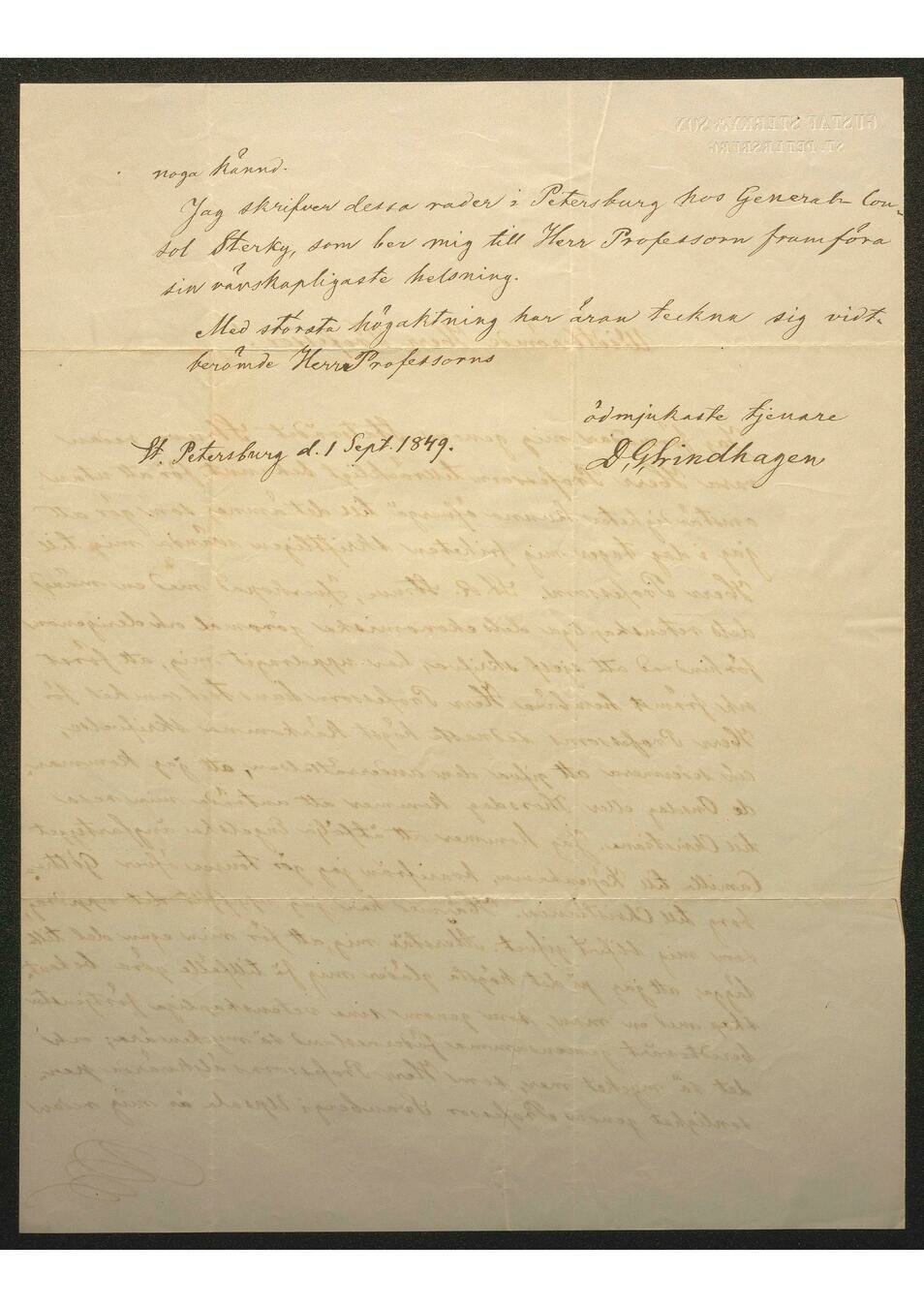

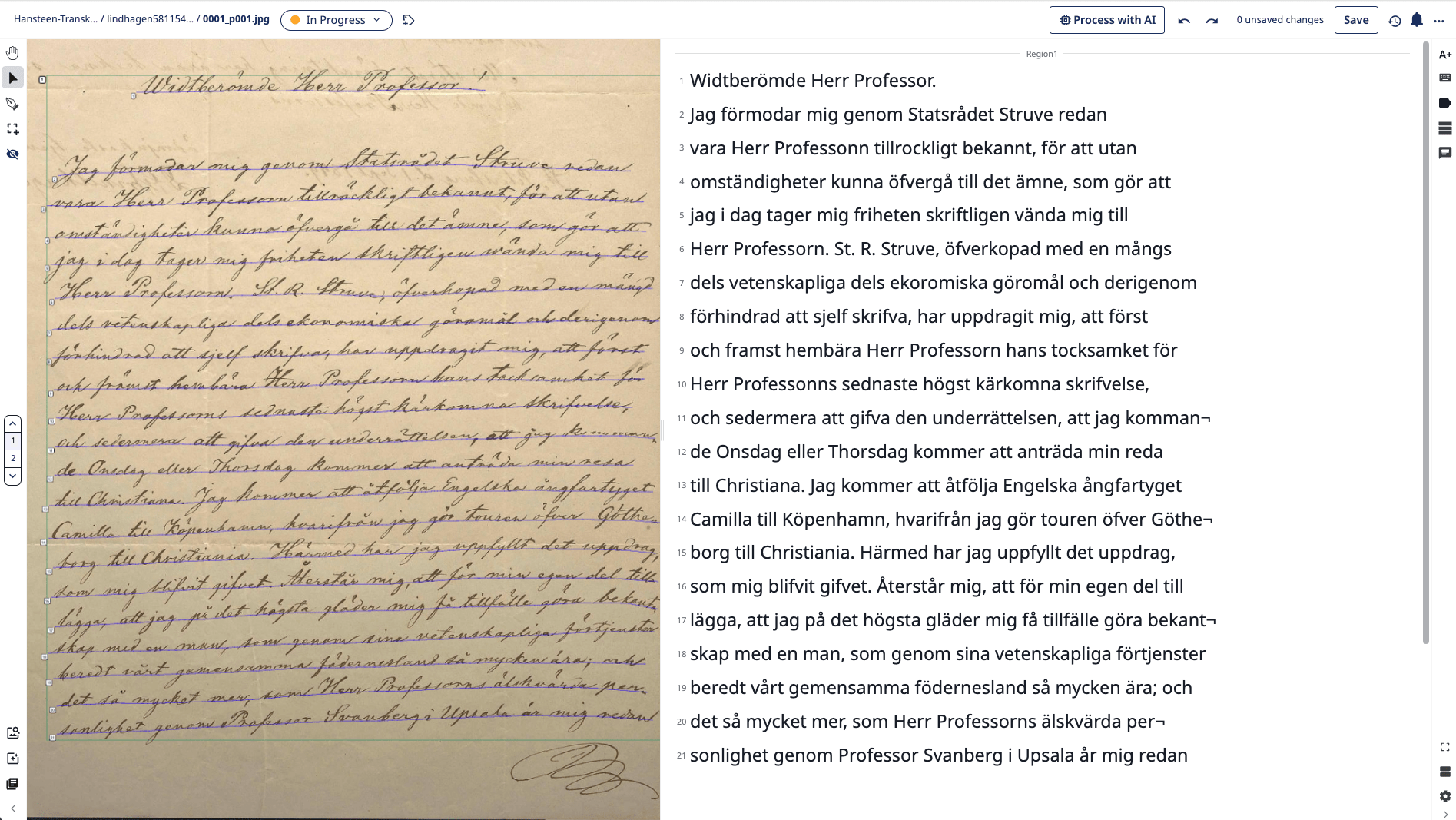

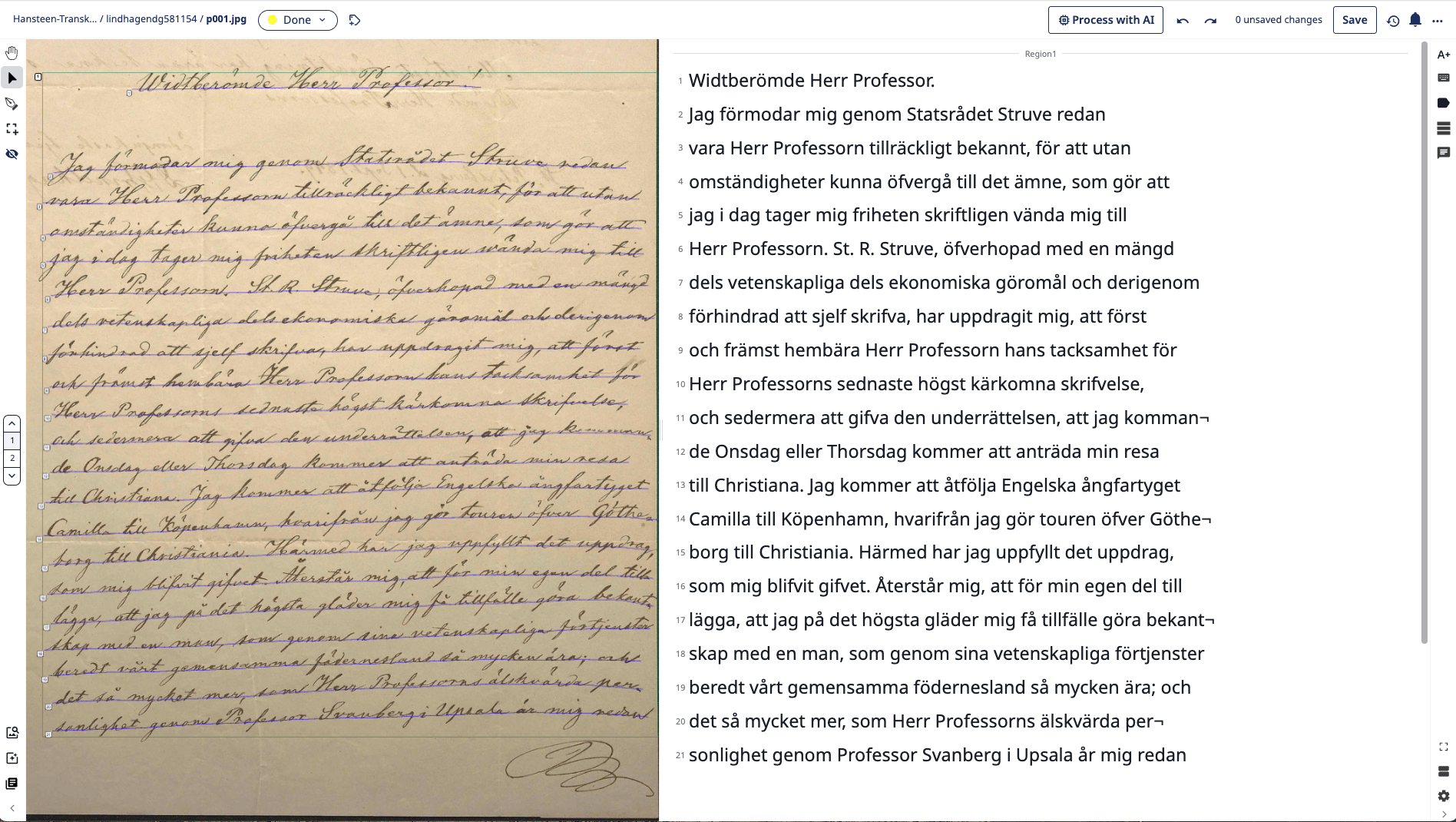

Daniel Georg Lindhagen to Christopher Hansteen. St. Petersburg, 1st September 1849

Digitised letter (left) – Record with metadata on the ALVIN platform, without images (right)

Our Challenge

The academic correspondence of the Norwegian Observatory:

Letters to Christopher Hansteen in the Collection of the Museum

of University History (MUV)

- Multilingual (German, French, Swedish, English, Russian)

- Several hands, few letters per hand

- Highly specific content (astronomical instruments, technical specifications, mathematical symbols and formulas)

- Early 19th-century handwriting

Manual Labor: Metadata

ALVIN platform and metadata, scanning and digitisation

Experiment Overview

Aim: Increase readability and searchability of individual letters

Situation: no time or money for expert manual transcription

Approach: use machine learning for text recognition, then correct the result by hand until it is publishable
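One way to put a number on how much hand correction a machine transcription needed is the character error rate (CER): the edit distance between the machine output and the corrected text, divided by the length of the corrected text. The sketch below is a minimal, dependency-free illustration and not part of the workflow described in this deck; the function names and the sample line are invented for the example.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance with unit cost for insertion, deletion, and substitution."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute or keep
        prev = curr
    return prev[-1]


def character_error_rate(machine: str, corrected: str) -> float:
    """Edits needed to reach the corrected text, relative to its length."""
    if not corrected:
        raise ValueError("corrected transcription must not be empty")
    return levenshtein(machine, corrected) / len(corrected)


# Invented sample line (not taken from the letters): one misread character.
machine_line = "Jag hor icke"
corrected_line = "Jag har icke"
print(f"{character_error_rate(machine_line, corrected_line):.3f}")  # prints 0.083
```

A low CER does not by itself mean a text is publishable: a single wrong name or number can matter more than several harmless slips, which is why the expert correction step remains.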

Transcription Process

AI = Machine Learning

Handwritten Text Recognition (HTR)

Pattern recognition based on lines

Manual creation of Ground Truth (25+ pages)

Community project with materials from archives, libraries, museums, and research projects

Ground Truth: human-created, human-quality checked

AI = Large Language Model (LLM)

Language generation based on probability

trained on Ground Truth documents in the Transkribus collection

combined with HTR

Language/language group specific

Source bias (genre, medium, social group, type of document)

Training bias (good at recognising the known/most probable based on training set)

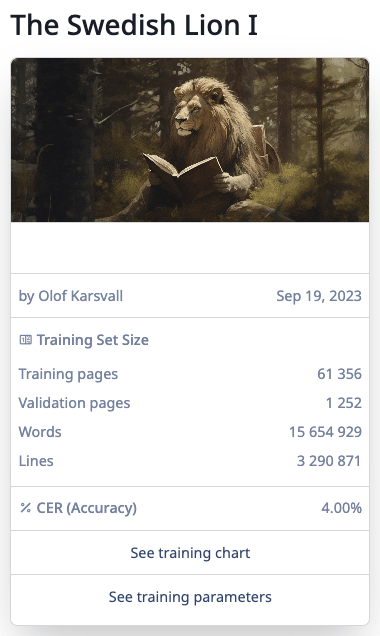

Swedish Letters

HTR model based on machine learning only

trained on 15 million words / 3 million lines

running texts from 17th-19th century

Archival collections mainly

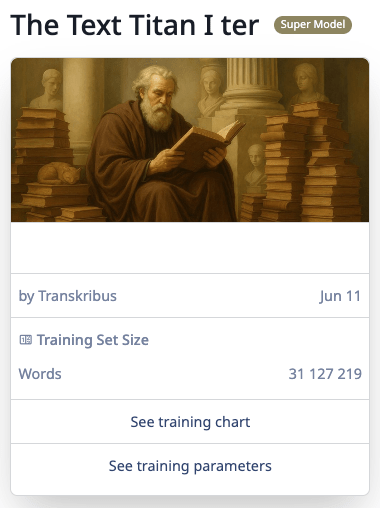

German Letters

LLM-enhanced HTR model

trained on 31 million words

multilingual, heterogeneous, balanced training set

historical and modern documents

???

Swedish Letters: Plain HTR

High-quality automatic transcription

Some characters are misread (r/n, a/o, k/h)
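Because these misreadings follow a small set of confusable pairs, a proofreader could pre-flag likely slips automatically. The sketch below is an assumption-laden illustration, not a Transkribus feature: it takes a word list (`lexicon`, hypothetical here) and suggests in-lexicon words that differ from an unknown token by exactly one r/n, a/o, or k/h swap. The toy tokens are invented.

```python
# Confusable pairs named on the slide, in both directions.
CONFUSABLE = {"r": "n", "n": "r", "a": "o", "o": "a", "k": "h", "h": "k"}


def variants(word: str) -> set[str]:
    """All strings obtained by swapping exactly one confusable character."""
    out = set()
    for i, ch in enumerate(word):
        swap = CONFUSABLE.get(ch)
        if swap is not None:
            out.add(word[:i] + swap + word[i + 1:])
    return out


def suggest(tokens: list[str], lexicon: set[str]) -> dict[str, list[str]]:
    """Map each out-of-lexicon token to its in-lexicon single-swap variants."""
    suggestions = {}
    for token in tokens:
        low = token.lower()
        if low in lexicon:
            continue  # known word, nothing to flag
        hits = sorted(variants(low) & lexicon)
        if hits:
            suggestions[token] = hits
    return suggestions


# Toy lexicon and tokens, invented for illustration only.
lexicon = {"jag", "har", "och", "brev"}
tokens = ["jag", "hor", "ach", "brev"]
print(suggest(tokens, lexicon))  # prints {'hor': ['har'], 'ach': ['och']}
```

Such a check only proposes candidates. Historical spelling varies, so a human still decides whether the flagged token is a misreading or a legitimate form.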

Swedish Letters – corrected

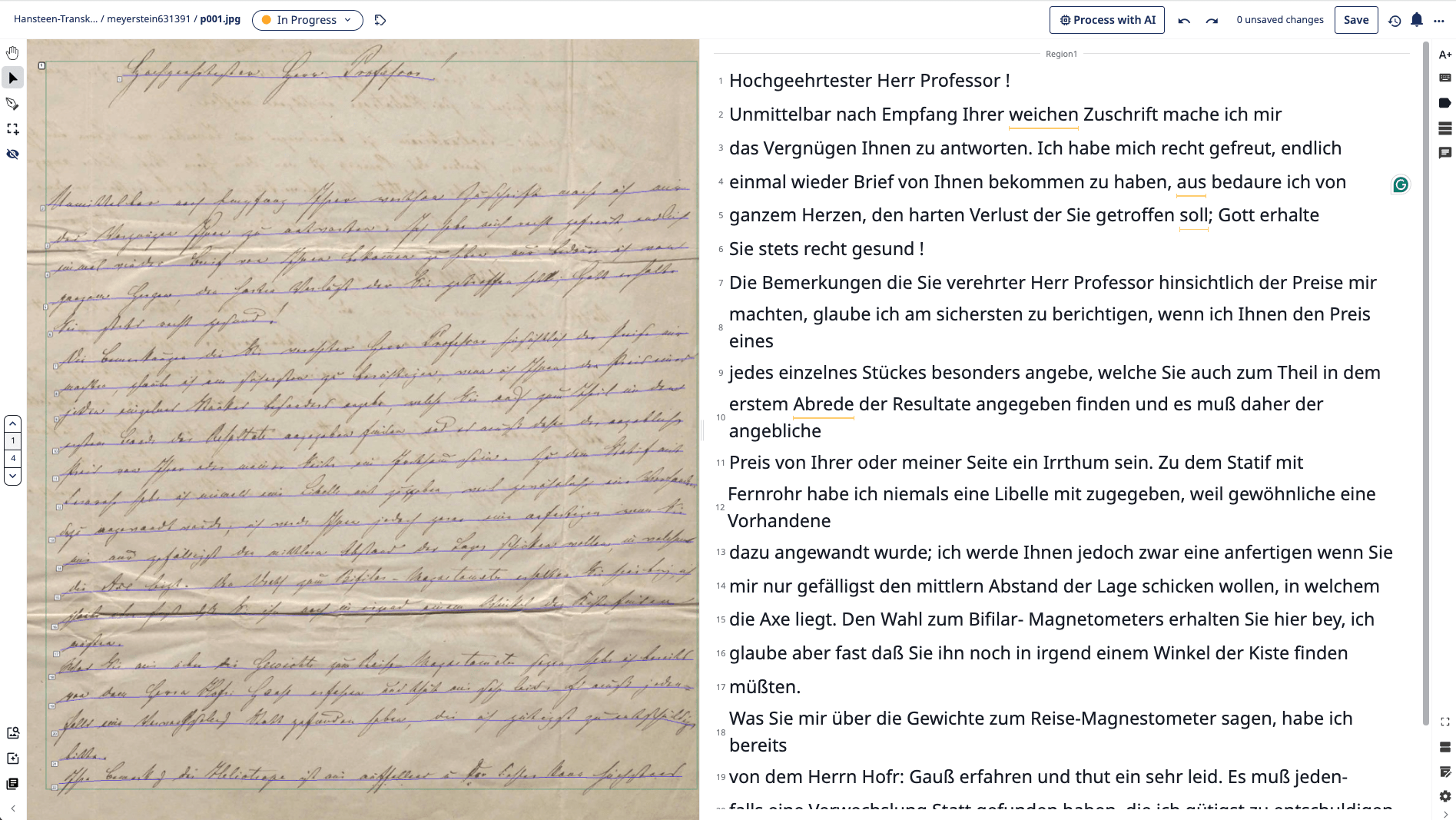

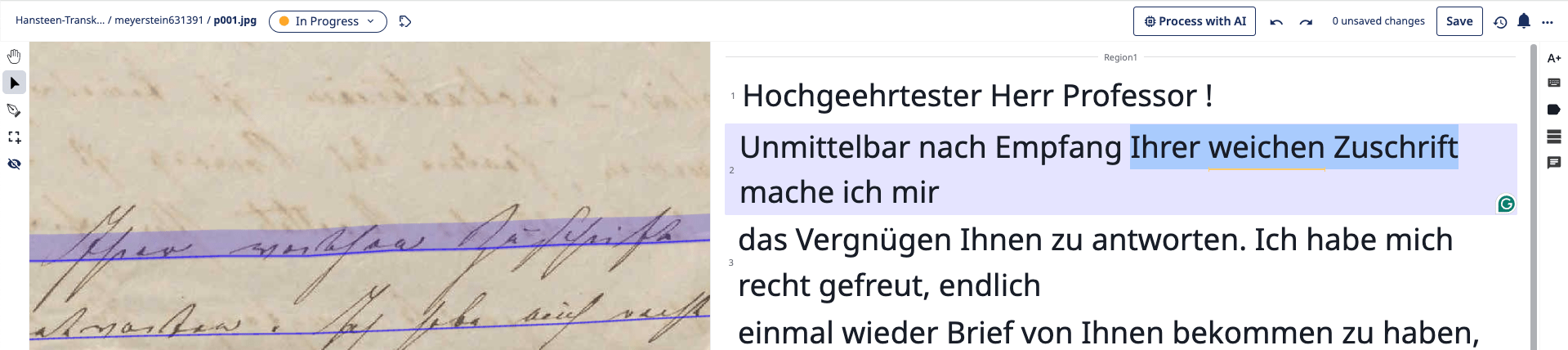

German Letters – LLM-enhanced HTR

High-quality text recognition, but:

too eager to produce probable text!

The Five Stages of … Proofreading LLM-enhanced HTR

- Excitement

- Attention

- Irritation (Believable Bullshit)

- Frustration

- Disenchantment

Utilizing Generative AI

Create concise "Regesta" (summaries) that bring out the essential content of the letters.

AI Hallucination

In the field of artificial intelligence, a hallucination, or artificial hallucination (also called bullshitting, confabulation, or delusion), is a response generated by an AI that presents false or misleading information as fact. (Wikipedia)

One example of many: HTR models with built-in LLMs tend to confabulate
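A confabulated reading tends to look different from an ordinary misreading: instead of one wrong character, a whole plausible word replaces what is on the page. The sketch below is one possible heuristic, not the method used in this project. It compares the machine text with the corrected text word by word using Python's `difflib` and lists replaced spans whose character similarity falls below a threshold. The threshold of 0.5 and the function names are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher


def replaced_spans(machine: str, corrected: str):
    """Yield (machine_span, corrected_span, similarity) for each replaced run of words."""
    m_words, c_words = machine.split(), corrected.split()
    matcher = SequenceMatcher(a=m_words, b=c_words, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "replace":
            continue
        m_span = " ".join(m_words[i1:i2])
        c_span = " ".join(c_words[j1:j2])
        # Character-level similarity of the two spans, between 0.0 and 1.0.
        similarity = SequenceMatcher(a=m_span, b=c_span, autojunk=False).ratio()
        yield m_span, c_span, similarity


def suspicious(machine: str, corrected: str, threshold: float = 0.5):
    """Replacements that share few characters: candidates for confabulation."""
    return [(m, c) for m, c, s in replaced_spans(machine, corrected) if s < threshold]


# Invented toy lines: "hor" is a one-character slip, "cat" a whole-word substitution.
print(suspicious("jag hor brev", "jag har brev"))
print(suspicious("jag cat brev", "jag dog brev"))
```

The first call reports nothing because the replaced word differs by a single character; the second flags the pair because the machine word shares no characters with the corrected one.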

Broader Access and Understanding

The ALVIN platform makes digital cultural heritage more accessible.