Notes

IMAGES

Shirley Cards

Guest Lecture!

AI Alignment

Cahootery

https://cahootery.herokuapp.com/section/5fcea466558eb10004f28682

TL;DR

- AI benefits are real.
  - a respectable living standard for all humans?
  - hundreds of trillions of dollars per year
- AI risks are real.
  - fairness, bias, error
  - existential threat to human race
- AI ethics and AI safety are not about being "for" or "against," but about solving real (technical and social organizational) problems.

HOW ABOUT A GUEST LECTURE?

R. Miles (2021) does a nice job summarizing the issues that come under the heading "AI Safety" in about 20 minutes.

Artificial General Intelligence (AGI)

Machine intelligence with the capacity to learn any task that a human being can

What if we succeed?

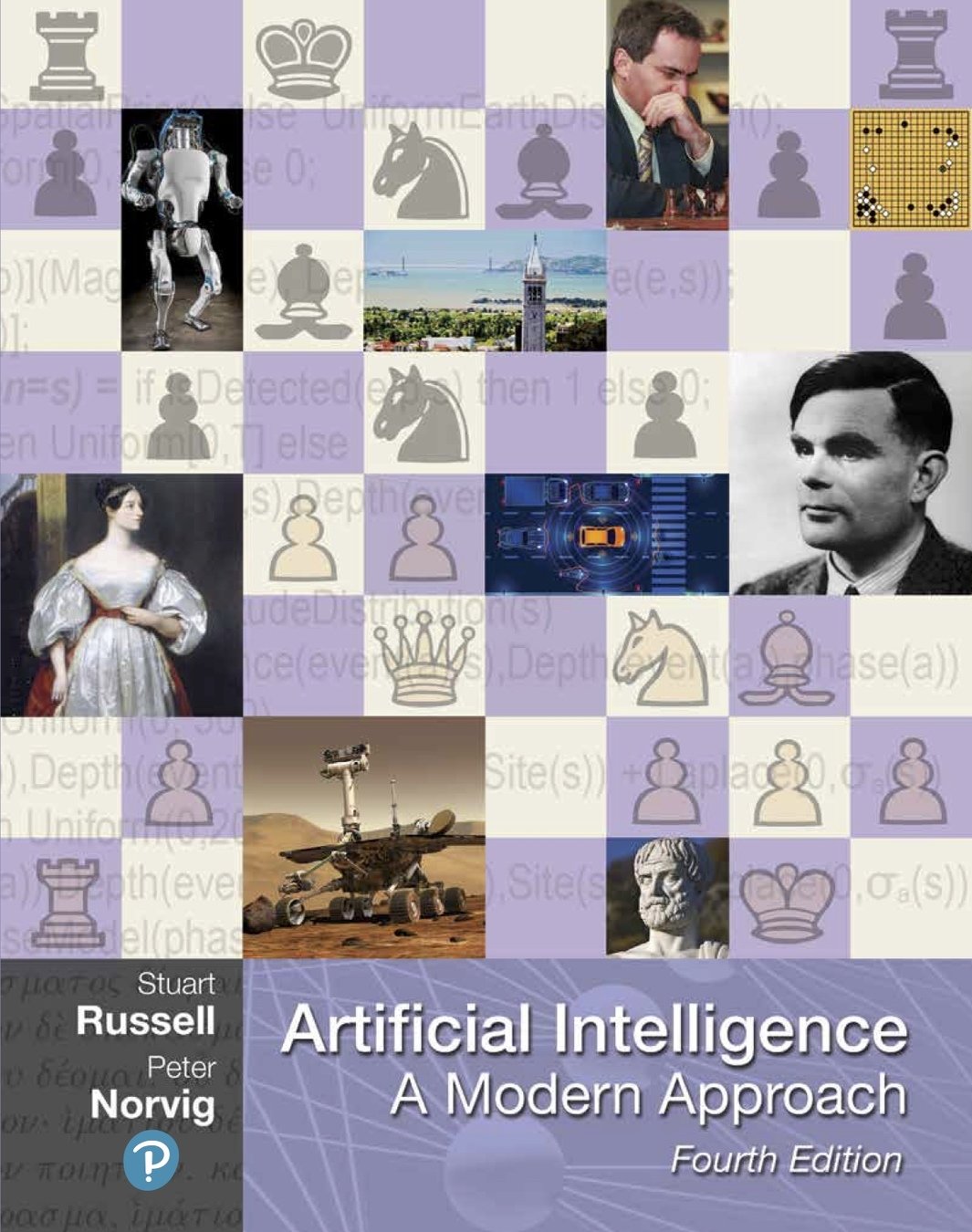

AGI References

Intelligence Explosion

If humans can build an AGI, then that AGI could improve upon itself even more quickly than humans could improve it.

What if we succeed?

THE ALIGNMENT PROBLEM

How do we ensure that superintelligent machines will share our values?

The problem: we don't know how to map "what we care about" (our "values") to the objective function that we give to an algorithm.

Imagine a machine that will do perfectly what it is told to do. Alignment is being able to tell it what we want it to do.

Game Change

A machine can see the whole thing at once. A big enough machine could base its next move on all the books ever printed.

What is a "long way" away?

As Alfred North Whitehead wrote in 1911, “Civilization advances by extending the number of important operations which we can perform without thinking about them.”

"The main missing piece of the puzzle is a method for constructing the hierarchy of abstract actions in the first place. For example, is it possible to start from scratch with a robot that knows only that it can send various electric currents to various motors and have it discover for itself the action of standing up?"

"What we want is for the robot to discover for itself that standing up is a thing—a useful abstract action, one that achieves the precondition (being upright) for walking or running or shaking hands or seeing over a wall and so forms part of many abstract plans for all kinds of goals."

"I believe this capability is the most important step needed to reach human-level AI."

Russell p 87ff

A final limitation...

"...of machines is that they are not human. This puts them at an intrinsic disadvantage when trying to model and predict one particular class of objects: humans."

..."acquiring a human-level or superhuman understanding of humans will take them longer than most other capabilities."

Russell p 97ff

The Risk

- Automated secret police

- Blackmail machines

- Personalized propaganda

- Deepfakes - nothing is true anymore

- People as reinforcement learning algorithms, training them to optimize the objective set by the state

- Robots put us out of work

The Promise

- a respectable living standard for all humans?

- hundreds of trillions of dollars per year

The King Midas Theorem

A system that is optimizing a function of n variables, where the objective depends on a subset of size k<n, will often set the remaining unconstrained variables to extreme values; if one of those unconstrained variables is actually something we care about, the solution found may be highly undesirable. This is essentially the old story of the genie in the lamp, or the sorcerer’s apprentice, or King Midas: you get exactly what you ask for, not what you want.

- Stuart Russell
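The effect Russell describes can be seen in a toy optimizer. The sketch below is illustrative only: the variable names (`production`, `safety_margin`), the shared budget, and the exhaustive search are all assumptions made for this example, not part of Russell's statement. The objective depends on one of two variables, and the optimizer drives the other to an extreme.

```python
from itertools import product

BUDGET = 10  # hypothetical shared resource that both variables draw on


def objective(production, safety_margin):
    """What we asked for: the objective only mentions production (k=1 of n=2)."""
    return production  # safety_margin is ignored, so it is unconstrained


# Every feasible allocation of the budget between the two variables.
candidates = [
    (p, s)
    for p, s in product(range(BUDGET + 1), repeat=2)
    if p + s <= BUDGET
]

# Exhaustive search stands in for "a system that is optimizing".
best_production, best_safety = max(candidates, key=lambda ps: objective(*ps))

print(best_production, best_safety)
```

The search returns maximum production with a safety margin of zero: exactly what was asked for, and not what anyone wanted.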

The Alignment Problem as a Reward Function Design Problem

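Recall how thermostats work

[Figure: TEMP plotted against TIME, showing SET TEMP, ACTUAL TEMP, and the GAP between them. Annotations: "Furnace ON"; "Furnace switches off but some heat continues to flow".]

[Figure: floor plan with labels T, H, H, bedroom 1, bedroom 2. Caption: cold Canadian house in winter.]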

And even if you'd realized this, you'd have a hard time figuring out what set temperature to select if you wanted to keep your door closed and have a reasonable temperature for sleeping.

WHY?

Even in something as simple as a thermostat, designing the control mechanism to take into account varied operating environments is really hard.
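A small simulation makes the difficulty concrete. This is a rough sketch under invented assumptions: the heat gain, the loss rate, the outside temperature, and the "residual heat" model are all made up for illustration and do not describe any real furnace. It shows an on/off thermostat overshooting its set point because heat keeps flowing after the furnace switches off.

```python
def simulate_thermostat(set_temp=20.0, start_temp=15.0, outside_temp=5.0, steps=60):
    """On/off control with residual heat; all constants are illustrative."""
    temp = start_temp
    residual = 0.0  # heat stored in the system that has not reached the room yet
    history = []
    for _ in range(steps):
        furnace_on = temp < set_temp      # the entire control rule
        if furnace_on:
            residual += 3.0               # furnace adds heat to the system
        delivered = 0.5 * residual        # half of the stored heat reaches the room
        residual -= delivered
        loss = 0.1 * (temp - outside_temp)  # room leaks heat to the outside
        temp += delivered - loss
        history.append((temp, furnace_on, delivered))
    return history


history = simulate_thermostat()
temps = [t for t, _, _ in history]
print(f"highest temperature reached: {max(temps):.2f}")
```

Even this two-line control rule produces overshoot and oscillation around the set point, and changing the environment (a colder night, a closed door) would change the behavior without any change to the controller.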

In reinforcement learning, we design a reward function to coax the agent to learn a policy that benefits humans.

Reward Functions

domain: states × actions (4 × 4 = 16)

range: rewards and punishments, costs and benefits

| Current State | Action A1 | Action A2 | Action A3 | Action A4 |
|---|---|---|---|---|
| S1 | S1 | S1 | S2 | S4 |
| S2 | S2 | S1 | S2 | S4 |
| S3 | S4 | S2 | S1 | S2 |
| S4 | S2 | S4 | S2 | S1 |

Reward Functions

domain: states × actions × nextStates (4 × 4 × 4 = 64)

range: rewards and punishments, costs and benefits

Grid layout:

| 1 | 2 |
| 3 | 4 |

Each cell lists the probability of each next state: 0.85 for the intended move, 0.05 for each of the other three states.

| Current State | Action U | Action D | Action L | Action R |
|---|---|---|---|---|
| S1 | 0.85 S1, 0.05 S2, 0.05 S3, 0.05 S4 | 0.85 S3, 0.05 S1, 0.05 S2, 0.05 S4 | 0.85 S1, 0.05 S2, 0.05 S3, 0.05 S4 | 0.85 S2, 0.05 S1, 0.05 S3, 0.05 S4 |
| S2 | 0.85 S2, 0.05 S1, 0.05 S3, 0.05 S4 | 0.85 S4, 0.05 S1, 0.05 S2, 0.05 S3 | 0.85 S1, 0.05 S2, 0.05 S3, 0.05 S4 | 0.85 S2, 0.05 S1, 0.05 S3, 0.05 S4 |
| S3 | 0.85 S1, 0.05 S2, 0.05 S3, 0.05 S4 | 0.85 S3, 0.05 S1, 0.05 S2, 0.05 S4 | 0.85 S3, 0.05 S1, 0.05 S2, 0.05 S4 | 0.85 S4, 0.05 S1, 0.05 S2, 0.05 S3 |
| S4 | 0.85 S2, 0.05 S1, 0.05 S3, 0.05 S4 | 0.85 S4, 0.05 S1, 0.05 S2, 0.05 S3 | 0.85 S3, 0.05 S1, 0.05 S2, 0.05 S4 | 0.85 S4, 0.05 S1, 0.05 S2, 0.05 S3 |

I am in state X, should I do action Y? The likely result is Z - what are the expected rewards in life trajectories following from there? But I also have to consider that action Y could lead to states Z', Z'', etc.
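That question can be written down directly. The sketch below is one possible way to do it, assuming the 2×2 grid and the 0.85/0.05 transition model from the table above. The reward values, the discount factor `GAMMA`, and the number of sweeps are assumptions added for illustration; value iteration is a standard method chosen here, not something the slides specify.

```python
STATES = ["S1", "S2", "S3", "S4"]
ACTIONS = ["U", "D", "L", "R"]

# Intended next state for each move on the 2x2 grid (walls keep you in place).
INTENDED = {
    "S1": {"U": "S1", "D": "S3", "L": "S1", "R": "S2"},
    "S2": {"U": "S2", "D": "S4", "L": "S1", "R": "S2"},
    "S3": {"U": "S1", "D": "S3", "L": "S3", "R": "S4"},
    "S4": {"U": "S2", "D": "S4", "L": "S3", "R": "S4"},
}

REWARD = {"S1": 0.0, "S2": 0.0, "S3": 0.0, "S4": 1.0}  # assumption: S4 is the goal
GAMMA = 0.9                                            # assumption: discount factor


def transition_probs(state, action):
    """0.85 for the intended next state (Z), 0.05 for each other state (Z', Z'')."""
    target = INTENDED[state][action]
    return {s: (0.85 if s == target else 0.05) for s in STATES}


def q_value(state, action, values):
    """Expected reward of doing `action` in `state`, over all possible outcomes."""
    return sum(
        prob * (REWARD[nxt] + GAMMA * values[nxt])
        for nxt, prob in transition_probs(state, action).items()
    )


def value_iteration(sweeps=100):
    """Repeatedly back up expected rewards along the trajectories that follow."""
    values = {s: 0.0 for s in STATES}
    for _ in range(sweeps):
        values = {s: max(q_value(s, a, values) for a in ACTIONS) for s in STATES}
    return values


values = value_iteration()
policy = {s: max(ACTIONS, key=lambda a: q_value(s, a, values)) for s in STATES}
print(policy)
```

With these assumed rewards, the learned policy heads for S4: down from S2 and right from S3.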

The Alignment Problem

AI Safety

AI Ethics

AI Fairness

Human Compatible AI

Humanly Beneficial AI

Responsible AI

Not New for Humans

- principal/agent problems

- bounded rationality

- incomplete contracts

- bureaucracy

- intercultural communication

- education

- etiquette

- common sense

Not New for Computer Science

“It seems probable that once the machine thinking method had started, it would not take long to outstrip our feeble powers.... They would be able to converse with each other to sharpen their wits. At some stage therefore, we should have to expect the machines to take control.”

Alan Turing, 1951.

“We had better be quite sure that the purpose put into the machine is the purpose which we really desire.” Norbert Wiener, 1960

The Gorilla Problem

Direct Normativity

- First Law: A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- Second Law: A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

- Third Law: A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

Asimov, 1942

Indirect Normativity

- The machine's only objective is to maximize the realization of human preferences.

- The machine is initially uncertain about what those preferences are.

- The ultimate source of information about human preferences is human behavior. Preferences here "are all-encompassing; they cover everything you might care about, arbitrarily far into the future."

Russell 2020
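The second and third principles can be sketched as a belief update. This is a minimal illustration, not Russell's formulation: the two preference hypotheses, the coffee/tea options, and the noisy-choice model with its `rationality` parameter are all invented for the example. The machine starts uncertain and revises its belief as it observes human behavior.

```python
import math

# Hypothetical preference profiles: utility the human assigns to each option.
HYPOTHESES = {
    "prefers_coffee": {"coffee": 1.0, "tea": 0.0},
    "prefers_tea": {"coffee": 0.0, "tea": 1.0},
}


def choice_probability(utilities, choice, rationality=2.0):
    """Assumed behavior model: better options are chosen more often, not always."""
    weights = {option: math.exp(rationality * u) for option, u in utilities.items()}
    return weights[choice] / sum(weights.values())


def update(belief, observed_choice):
    """Bayes' rule: reweight each hypothesis by how well it explains the choice."""
    unnormalized = {
        h: belief[h] * choice_probability(HYPOTHESES[h], observed_choice)
        for h in belief
    }
    total = sum(unnormalized.values())
    return {h: v / total for h, v in unnormalized.items()}


belief = {"prefers_coffee": 0.5, "prefers_tea": 0.5}  # initially uncertain
for choice in ["tea", "tea", "coffee", "tea"]:        # observed human behavior
    belief = update(belief, choice)

print(belief)
```

After four observations the machine strongly favors "prefers_tea" but never becomes fully certain, which is the property that leaves room for human correction.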

What is to be done?

Read Some Books!

Podcasts

References

Watch some curated lecture videos

A. Geramifard (2018) explains RL in a way that builds nicely on material from our "How Machines Learn" lecture. Longish, but well done.

Get Involved

There is a lot of important work to be done to develop tools that solve the problem of control. It turns out that we might not really want machines that we can simply turn on and then have them run without human intervention.

So far, we have talked a lot about bias and fairness but we haven't figured out how to maintain control and safety.

Get Involved

We need to study how to plan and make decisions with uncertain information about human preferences. And how should AI systems decide for groups? We need updates to economic theory, social theory, law, and policy.

Are you available?

FINIS

One More Thing...

How to Learn

[Figure: state-transition diagram. States: Haven't Even Started; 1 page read, low comprehension; 1 page read, high comprehension; 2 pages read, low comprehension; 2 pages read, mixed comprehension; 2 pages read, high comprehension; Poor Exam; So-so Exam; Excellent Exam. Actions labeling the transitions: Play Video Game; Read; Read and Take Notes; Clean Bedroom; Sit Exam.]

Warning: The video that follows is a dramatization of violent and disturbing events.

Slaughterbots I

Slaughterbots II

Everybody's Got AI Principles

AI Governance

What Do Principles Look Like?

Integrate AI: The Ethics of Artificial Intelligence (2000)

1. If you want the future to look different from the past, you need to design systems with that in mind.

2. Be clear about what proxies do and don’t optimize.

3. When you deal with abstractions and groupings, you run the risk of treating humans unethically.

4. Beware of correlations that mask sensitive data behind benign proxies.

5. Context is key for explainability and transparency.

6. Privacy is about appropriate data flows that conform to social norms and expectations.

7. 'Govern the optimizations. Patrol the results.'

8. Ask communities and customers what matters to them.

AI at Google: Our Principles (2018)

1. Be socially beneficial.

2. Avoid creating or reinforcing unfair bias.

3. Be built and tested for safety.

4. Be accountable to people.

5. Incorporate privacy design principles.

6. Uphold high standards of scientific excellence.

7. Be made available for uses that accord with these principles.

Summaries from https://aiethicslab.com/big-picture/

Google's principles also have a section "AI applications we will not pursue":

In addition to the above objectives, we will not design or deploy AI in the following application areas:

- Technologies that cause or are likely to cause overall harm. Where there is a material risk of harm, we will proceed only where we believe that the benefits substantially outweigh the risks, and will incorporate appropriate safety constraints.

- Weapons or other technologies whose principal purpose or implementation is to cause or directly facilitate injury to people.

- Technologies that gather or use information for surveillance violating internationally accepted norms.

- Technologies whose purpose contravenes widely accepted principles of international law and human rights.

As our experience in this space deepens, this list may evolve.

So, What Is To Be Done?

Specification Gaming - Gaming the Specs

Research Directions

- Inverse Reinforcement Learning: algorithm infers reward function by observing behavior or policy of an agent

- Cooperative Inverse Reinforcement Learning: agents can learn their human teachers' utility functions by observing and interpreting reward signals in their environments

- Training by debate: training by means of debate between AI systems, with the winner judged by humans

- Reward modeling: reinforcement learning in which an agent receives rewards from another model, one that is trained to predict human feedback

[Figure: diagram with labels ERROR, SET, OUTPUT]

Up Until Now

The What If We Succeed Problem

"As Norbert Wiener put it in his 1964 book God and Golem,

In the past, a partial and inadequate view of human purpose has been relatively innocuous only because it has been accompanied by technical limitations. . . . This is only one of the many places where human impotence has shielded us from the full destructive impact of human folly."

Outtakes

My New Wonder Tool

Concepts (from other fields)

Accuracy

Validity

Precision

Reliability

Bias

Fairness

Bounded Rationality

"That's awesome news. We need unbiased police forces."

What Is Bias I?

when a machine learning model used to inform decisions affects people differently on the basis of categories that are not acceptable as reasons for differential treatment in a given community

Three Examples

COMPAS: Race & Recidivism prediction

- courts use estimate to inform bail, sentencing

- accurate prediction but bias in false positives

- national samples vs. local & temporal variation
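The second bullet is easy to misread, so here is a worked example. The records below are invented for illustration and are not COMPAS data; the group labels and counts are assumptions chosen so that overall accuracy is identical across groups while the false positive rate is not.

```python
# Each record: (group, actually_reoffended, predicted_high_risk). Invented data.
RECORDS = (
    [("A", True, True)] * 3 + [("A", False, True)] * 3
    + [("A", False, False)] * 3 + [("A", True, False)] * 1
    + [("B", True, True)] * 3 + [("B", False, True)] * 1
    + [("B", False, False)] * 3 + [("B", True, False)] * 3
)


def rates_by_group(records):
    """Accuracy and false positive rate, computed separately for each group."""
    results = {}
    for group in sorted({g for g, _, _ in records}):
        rows = [(actual, pred) for g, actual, pred in records if g == group]
        correct = sum(actual == pred for actual, pred in rows)
        false_pos = sum((not actual) and pred for actual, pred in rows)
        negatives = sum(not actual for actual, _ in rows)
        results[group] = {
            "accuracy": correct / len(rows),
            "false_positive_rate": false_pos / negatives,
        }
    return results


rates = rates_by_group(RECORDS)
for group, r in rates.items():
    print(group, r)
```

Both groups are predicted with 60% accuracy, yet people in group A who did not reoffend are flagged as high risk twice as often as those in group B.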

Word Embeddings reproduce race/gender stereotypes

- Word embeddings (WE) put words into spatial proximity based on meaning

NIST Idemia: ID matching

- software mismatched white women’s faces 1 in 10,000, black women’s faces 1 in 1,000

What Is Bias II?

Bias is the tendency of a statistic to overestimate or underestimate a parameter
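A standard textbook illustration of this statistical sense of bias is the variance estimator that divides by n instead of n - 1. The sketch below is an example added for illustration; the sample size, number of trials, and seed are arbitrary assumptions. It draws many small samples from a population whose true variance is 1 and averages each estimator.

```python
import random
import statistics


def average_variance_estimates(sample_size=5, trials=10_000, seed=0):
    """Average the divide-by-n and divide-by-(n-1) estimators over many samples."""
    rng = random.Random(seed)
    biased, unbiased = [], []
    for _ in range(trials):
        sample = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]  # true variance = 1
        biased.append(statistics.pvariance(sample))   # divides by n
        unbiased.append(statistics.variance(sample))  # divides by n - 1
    return statistics.mean(biased), statistics.mean(unbiased)


biased_mean, unbiased_mean = average_variance_estimates()
print(f"divide by n:     {biased_mean:.3f}")
print(f"divide by n - 1: {unbiased_mean:.3f}")
```

The divide-by-n estimator systematically underestimates the parameter (its average settles near 0.8 for samples of five), while the other averages close to the true value of 1.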

Fighting Bias

HR Example

Sampling Error

Many sources of error are subtypes of "sampling error": cases where the data we collect do not actually "represent" the universe we intend to study.

Garbage In/Garbage Out

(GIGO)

- Algorithm cannot make sense of nonsense

- If social processes create the data, an algorithm will learn the social process, even things that "good" humans would be able to ignore

AI

https://achievement.org/achiever/marvin-minsky-ph-d/#gallery

Genesis

(A) Definition

The capacity of a system to interpret and learn from its environment and to use those learnings to achieve goals and carry out tasks.

Penultimate Chapter

27.3 The Ethics of AI ... 986

27.3.1 Lethal autonomous weapons ... 987

27.3.2 Surveillance, security, and privacy ... 990

27.3.3 Fairness and bias ... 992

27.3.4 Trust and transparency ... 996

27.3.5 The future of work ... 998

27.3.6 Robot rights ... 1000

27.3.7 AI Safety ... 1001

Russell & Norvig 4th ed 2020

Reward Function

"Reward functions describe how the agent ought to behave. In other words, they have normative content, stipulating what you want"

In "gridworld"

RF: S -> R

RF: S x A -> R

etc.
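These signatures can be written as types. The sketch below is only one way to express them; the type alias names and the three example functions (including the goal state "S4" and the step cost of 0.1) are hypothetical and chosen for illustration.

```python
from typing import Callable

State = str
Action = str

RewardOnState = Callable[[State], float]                  # RF: S -> R
RewardOnStateAction = Callable[[State, Action], float]    # RF: S x A -> R
RewardOnTransition = Callable[[State, Action, State], float]  # RF: S x A x S -> R


def goal_reward(state: State) -> float:
    """Reward depends only on where the agent is."""
    return 1.0 if state == "S4" else 0.0


def step_cost_reward(state: State, action: Action) -> float:
    """Reward also charges a small (assumed) cost for every action taken."""
    return goal_reward(state) - 0.1


def transition_reward(state: State, action: Action, next_state: State) -> float:
    """Reward depends on where the action actually led."""
    return goal_reward(next_state) - 0.1


# The annotations document which signature each function is meant to satisfy.
on_state: RewardOnState = goal_reward
on_state_action: RewardOnStateAction = step_cost_reward
on_transition: RewardOnTransition = transition_reward
```

The richer the domain, the more of the designer's intent the reward function can encode, and the more entries there are to get wrong.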

Artificial General Intelligence (AGI)

from the NYT article

"For privacy advocates like Mr. Stanley of the A.C.L.U., the concern is that increasingly powerful technology will be used to target parts of the community — or strictly enforce laws that are out of step with social norms."

Alignment is Not Yet Understood

Getting to Alignment

- Direct normativity

- Indirect normativity

- Deference to observed human behavior

- Cooperative Inverse Reinforcement Learning

- Training by debate

- Reward modeling

Direct normativity

First Law

A robot may not injure a human being or, through inaction, allow a human being to come to harm.

Second Law

A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

Third Law

A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

Indirect normativity

Eliezer Yudkowsky proposed "coherent extrapolated volition" (CEV), where the AI's meta-goal would be something like "achieve that which we would have wished the AI to achieve if we had thought about the matter long and hard."

Indirect Normativity References

Deference to observed human behavior

Altruistic

Uncertain

Watch All of Us

Cooperative Inverse Reinforcement Learning

Training by debate

Problem: training an agent on human values and preferences is hard

Option: a coach during training that labels good and bad behavior. Expensive. Difficult for complex tasks. Errors when bad behavior looks good.

Alternative: training by means of debate between AI systems, with the winner judged by humans.

Training by Debate

Reward modeling

In RL, the environment "contains" rewards and the agent learns a policy to maximize its reward.

To deliberately train an agent, a human has to formulate those rewards.

A "reward function" maps states x actions to rewards.

A human observes snippets of the agent's behavior and offers critique.

The critique is data for an algorithm that learns the unknown reward function.

Then the agent uses RL, with the learned reward function, to learn a behavioral policy.
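The step where critique becomes data can be sketched as follows. This is a toy illustration under stated assumptions, not the method of any particular paper: snippets are reduced to two made-up features, the human critique is expressed as pairwise preferences, and the reward model is a linear function fit with a logistic (Bradley-Terry style) update.

```python
import math

# Hypothetical snippets, each summarized by two features:
# (task progress, damage caused).
SNIPPETS = {"A": (1.0, 0.0), "B": (0.0, 1.0), "C": (1.0, 1.0), "D": (0.0, 0.0)}

# Human critique as (preferred, rejected) pairs of snippets. Invented data.
PREFERENCES = [("A", "B"), ("A", "C"), ("A", "D"), ("C", "B"), ("D", "B")]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_reward_model(preferences, learning_rate=0.5, epochs=200):
    """Learn weights so preferred snippets score higher than rejected ones."""
    weights = [0.0, 0.0]
    for _ in range(epochs):
        for better, worse in preferences:
            diff = [b - w for b, w in zip(SNIPPETS[better], SNIPPETS[worse])]
            # Model's current probability that the human prefers `better`.
            p = sigmoid(sum(wt * d for wt, d in zip(weights, diff)))
            # Gradient ascent on the log-likelihood of the observed preference.
            weights = [wt + learning_rate * (1.0 - p) * d for wt, d in zip(weights, diff)]
    return weights


def predicted_reward(weights, name):
    return sum(wt * f for wt, f in zip(weights, SNIPPETS[name]))


weights = fit_reward_model(PREFERENCES)
print(weights)
```

The learned weights reward progress and penalize damage, so the model can now score behavior the human never saw; an RL agent would then be trained against `predicted_reward` instead of a hand-written reward function.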