Part One: Systems That Get Stuff Done

Part Two: How Do Systems Do Stuff?

Part Three: How Do Systems Learn to Get Stuff Done?

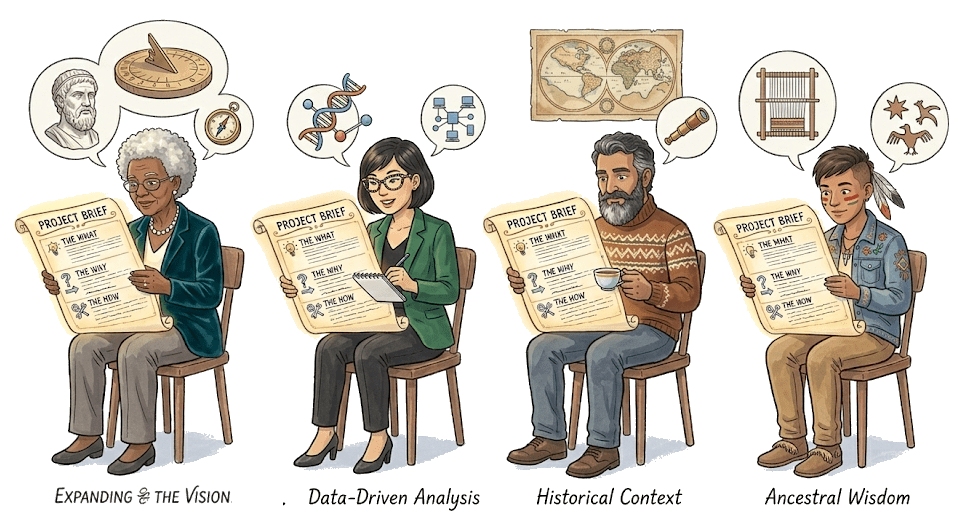

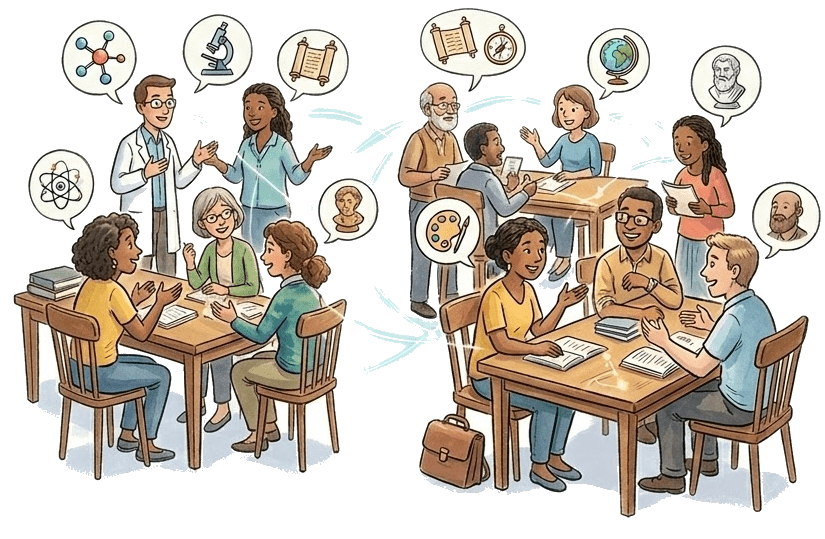

A just-so story about how intellectual work gets done.

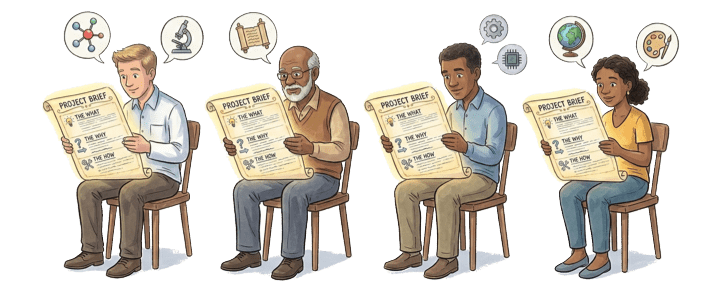

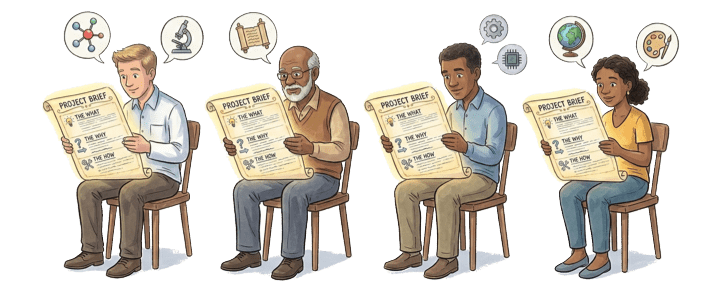

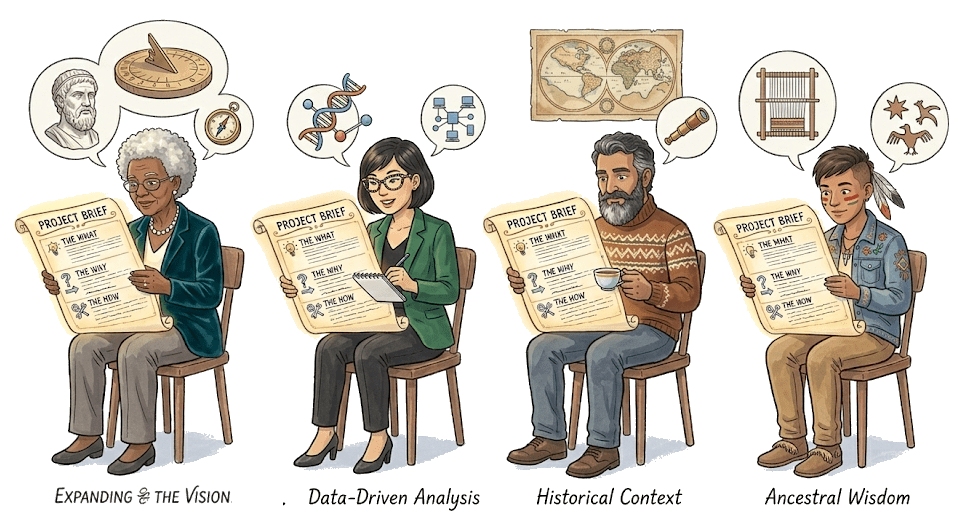

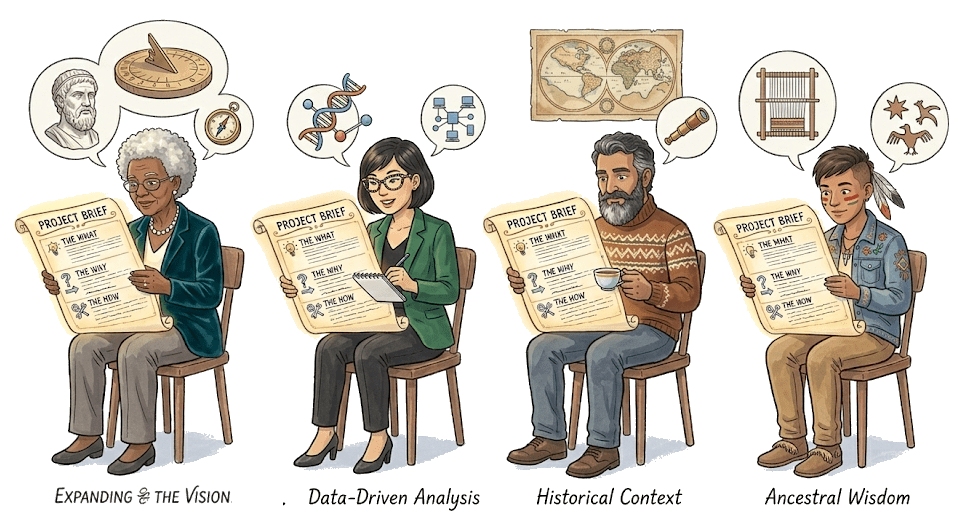

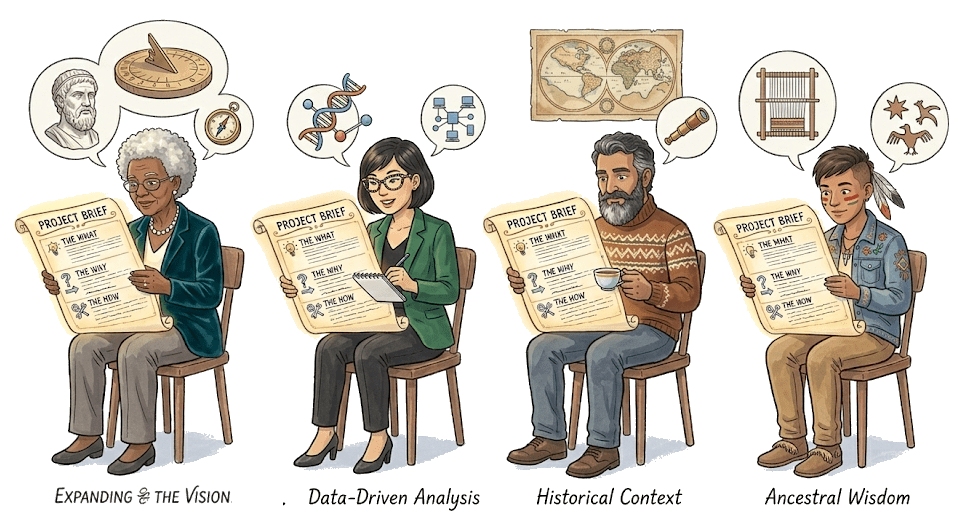

Let's imagine there is a community of collaborative scholars, each with their own specialty and expertise. One member, the principal investigator (PI), conjures up a project and writes a brief document describing the what and the why and the how.

The PI solicits four member

The Calculus of Blame

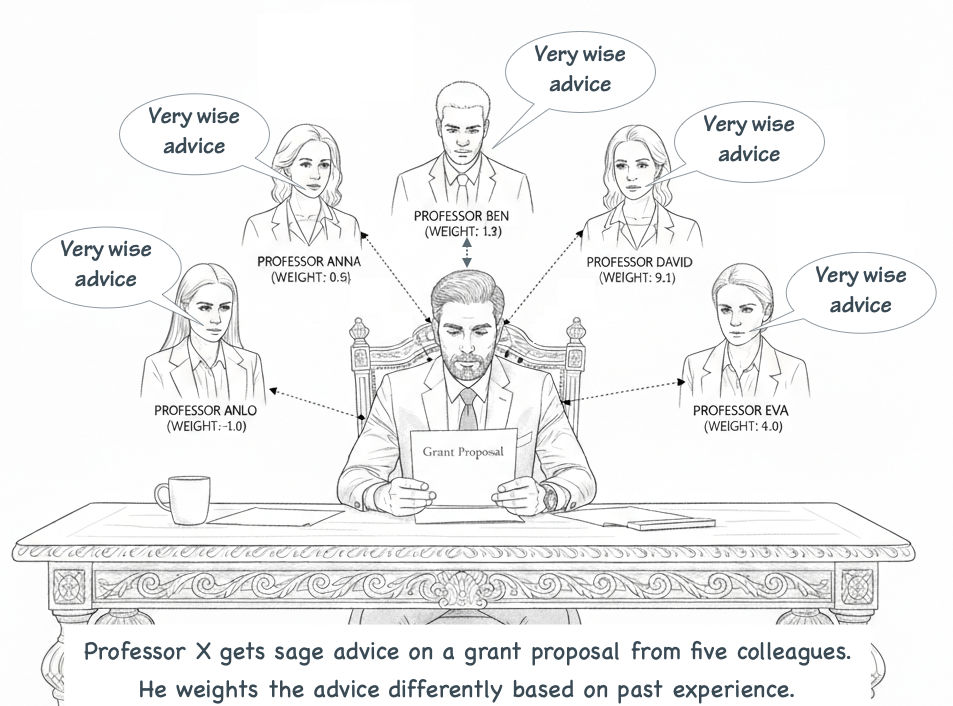

As our acknowledgements always say, a researcher depends on colleagues. I share my draft grant proposal with trusted colleagues. They offer advice. I value the advice of some colleagues more than others.

The grant I submit is a sort of weighted average of the advice I got.
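The weighted average can be made concrete with a small sketch. The names, advice values, and trust weights below are hypothetical, chosen only to illustrate the idea; nothing here is measured data.

```python
# Hypothetical advice: each colleague suggests how ambitious the proposal
# should be, on a 0-to-1 scale. Names and numbers are made up for illustration.
advice = {"Eva": 0.9, "Ben": 0.4, "Ana": 0.7}

# How much I value each colleague's advice (again, illustrative values).
trust = {"Eva": 0.2, "Ben": 0.5, "Ana": 0.3}


def weighted_average(advice, trust):
    """Blend the advice, counting each colleague in proportion to my trust."""
    total_trust = sum(trust.values())
    return sum(trust[name] * advice[name] for name in advice) / total_trust


# The proposal I submit sits closest to the advice I trusted most.
proposal = weighted_average(advice, trust)
print(round(proposal, 2))
```

Changing a single entry in `trust` and rerunning shows how the submitted proposal drifts toward or away from that colleague's advice.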

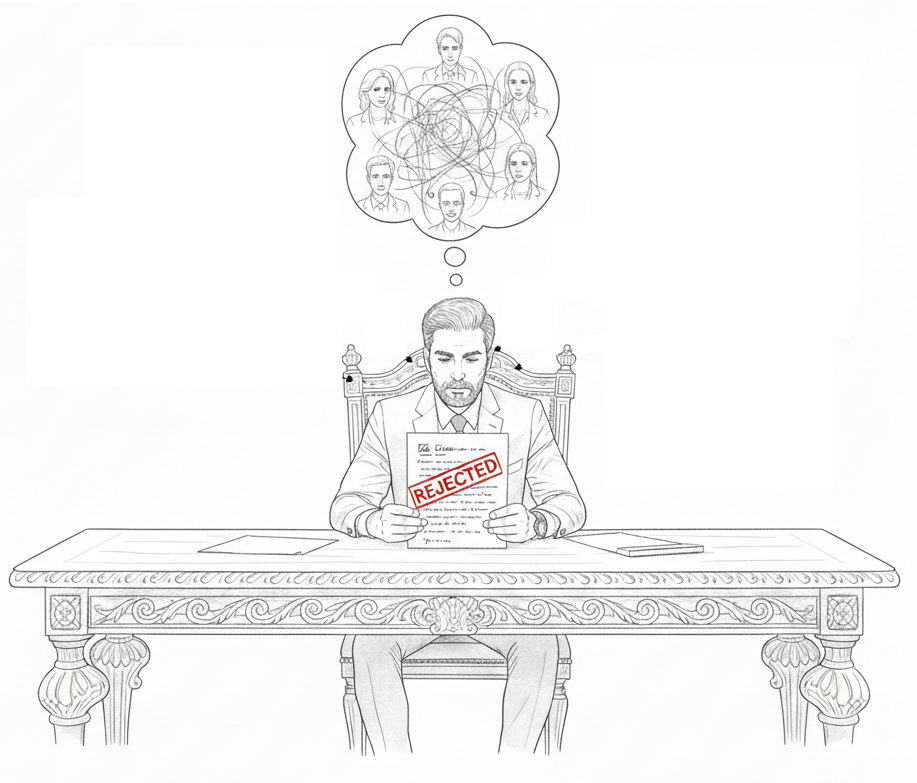

The Calculus of Blame. When the grant is rejected, I read the referees' evaluations and scores and think about the advice I got. I ask myself how the score might have been different if I'd taken Eva's advice a bit more to heart, or if I had relied less on Ben's suggestions. I intuitively compute how much the outcome might have changed with small changes in how I weighed the advice of each of my colleagues.

And I make a mental note to adjust my weights next time.
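The "what if I had listened a bit more, or a bit less" reasoning can be sketched as nudging one weight at a time and watching the error move. This is only an illustration under stated assumptions: the advice values, the weights, the `target` the referees wanted, the squared-error measure, and the `step` size are all hypothetical.

```python
advice = {"Eva": 0.9, "Ben": 0.4}    # hypothetical advice on a 0-to-1 scale
weights = {"Eva": 0.3, "Ben": 0.7}   # how heavily I leaned on each colleague
target = 0.8                         # what the referees, in hindsight, wanted


def error(weights):
    """Squared gap between the blended proposal and what was wanted."""
    proposal = sum(weights[name] * advice[name] for name in advice)
    return (proposal - target) ** 2


def blame(weights, nudge=1e-4):
    """Estimate how much the error changes per unit change in each weight."""
    base = error(weights)
    result = {}
    for name in weights:
        nudged = dict(weights)
        nudged[name] += nudge          # listen to this colleague a bit more
        result[name] = (error(nudged) - base) / nudge
    return result


sensitivities = blame(weights)

# Mental note for next time: shift each weight a little against its blame.
step = 0.5
new_weights = {name: weights[name] - step * sensitivities[name] for name in weights}

print(sensitivities)
print(error(weights), error(new_weights))
```

In this toy setup both sensitivities are negative, meaning that leaning more on either colleague would have reduced the error, and Eva's is the larger in magnitude, so her weight grows the most.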

The Derivative of Blame

- The Concept: Determining who contributed most to the mistake.
- The Scenario: You look back at the committee. You ask: "If I had listened to Professor Smith just a tiny bit less, would our total error have gone down?"
- The Calculus: This is the partial derivative ($\frac{\partial Loss}{\partial \omega}$). It tells you how sensitive the total error is to that specific person's weight $\omega$.
- The Wisdom of Crowds: You aren't "teaching" the colleagues; you are calculating the "gradient" for the entire group's organization, and stepping against it is the direction of steepest improvement.
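As a worked illustration, suppose (purely as an assumption, since the slides do not fix a formula) that the committee's verdict $\hat{y}$ is a weighted sum of each member's opinion $a_i$, and that the error is the squared gap to the right answer $y$:

$$\hat{y} = \sum_i \omega_i a_i, \qquad Loss = (\hat{y} - y)^2$$

Under those assumptions, the blame assigned to member $i$ is

$$\frac{\partial Loss}{\partial \omega_i} = 2\,(\hat{y} - y)\,a_i$$

and the adjustment for next time is a small step against it, with an assumed step size $\eta > 0$:

$$\omega_i \leftarrow \omega_i - \eta\,\frac{\partial Loss}{\partial \omega_i}$$

A member whose opinion $a_i$ was large when the verdict overshot gets their weight reduced the most; a member who said little gets almost no blame.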

Coding Compliance vs Coding Learning


def evaluate_grant(impact_score, feasibility_score, budget_alignment):

# The human defines the rules and the logic flow

if impact_score > 0.8:

if feasibility_score > 0.7:

return "Approved"

elif budget_alignment == "High":

return "Waitlist"

else:

return "Rejected"

else:

return "Rejected"

# Logic is rigid; if the world changes, the code must be manually rewritten.import keras # or torch

# The human defines the architecture (the "committee")

model = Sequential([

Input(shape=(3,)), # 3 inputs: Impact, Feasibility, Budget

Dense(50, activation='relu'), # 50 "colleagues" processing the data

Dense(20, activation='relu'), # A sub-committee refining the views

Dense(1, activation='sigmoid') # The final decision (0 to 1)

])

# The human defines the learning process

model.compile(

optimizer='adam', # The strategy for adjusting weights

loss='binary_crossentropy', # How we measure the "error" or "blame"

metrics=['accuracy']

)

# The human provides data, not rules

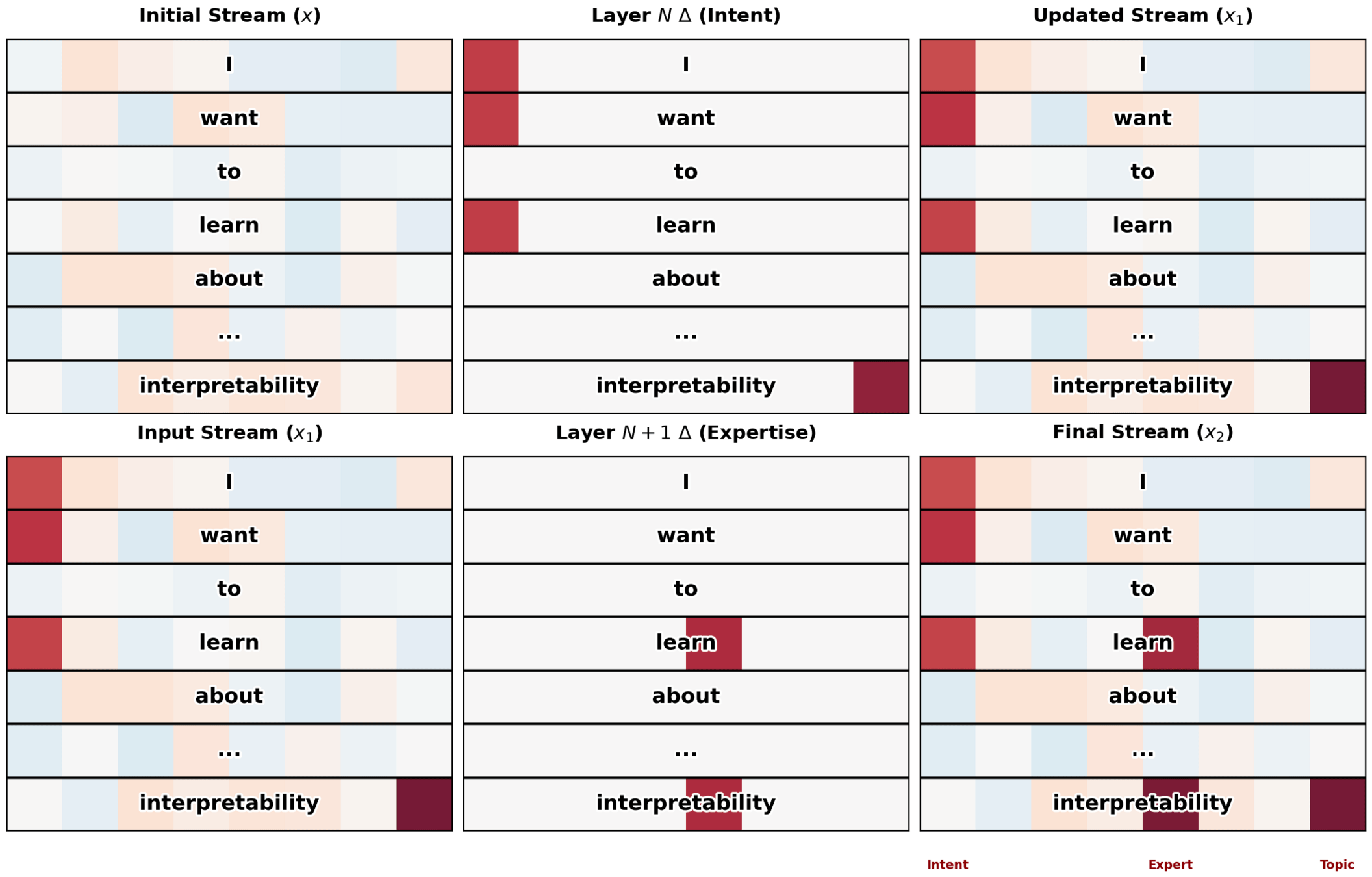

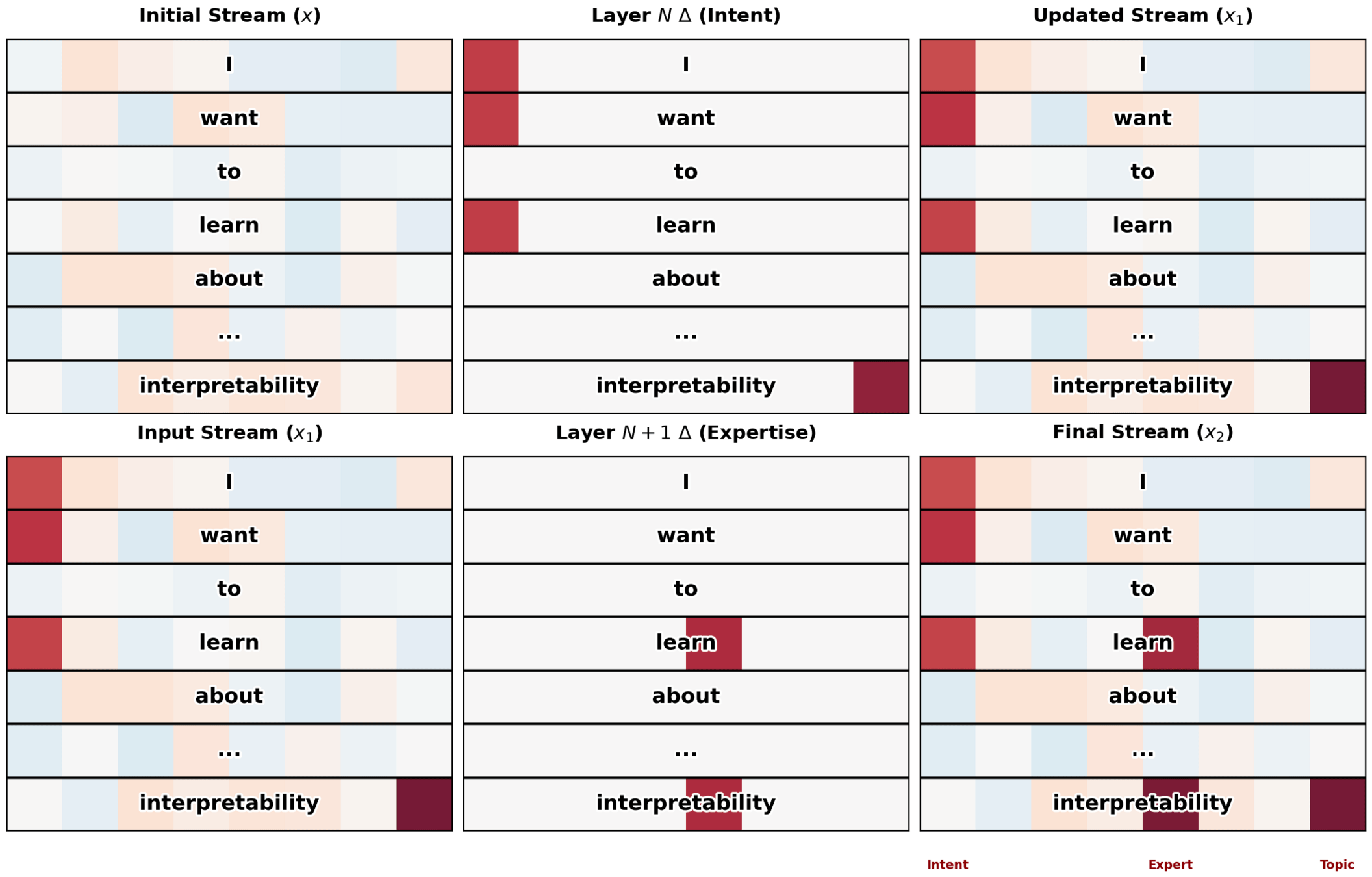

model.fit(past_grant_data, outcomes, epochs=100)Interpretability = What's Going On Inside

or

How is the LLM doing that?!

[Diagram labels: prompt "I want to learn about ... interpretability"; X0, X1, ..., Xn; Specialist Annotations; BEST NEXT WORD; =<STOP>? (YES: RESPOND, NO: APPEND); response "Absolutely, I'd love to be your conceptual tutor..."]
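The loop in the diagram (pick the best next word, check whether it is `<STOP>`, append it if not, respond if so) can be sketched as below. The lookup table `NEXT` is a hypothetical toy standing in for the model; a real LLM would compute the next word from the whole context rather than from the last word alone.

```python
STOP = "<STOP>"

# Toy stand-in for the model: maps the last word seen to the next word.
# Purely illustrative; the entries are invented to reproduce the slide's reply.
NEXT = {
    "interpretability": "Absolutely,",
    "Absolutely,": "I'd",
    "I'd": "love",
    "love": "to",
    "to": "be",
    "be": "your",
    "your": "conceptual",
    "conceptual": "tutor",
    "tutor": STOP,
}


def best_next_word(words):
    """Return the toy model's choice of next word for the text so far."""
    return NEXT.get(words[-1], STOP)


def respond(prompt_words, max_words=50):
    """Keep appending the best next word until the model emits <STOP>."""
    response = []
    for _ in range(max_words):
        word = best_next_word(prompt_words + response)
        if word == STOP:          # =<STOP>? YES: respond with what we have
            break
        response.append(word)     # =<STOP>? NO: append and go around again
    return " ".join(response)


reply = respond("I want to learn about interpretability".split())
print(reply)
```

The `max_words` cap is a design choice for the sketch: it guarantees the loop ends even if the stand-in model never produces `<STOP>`.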

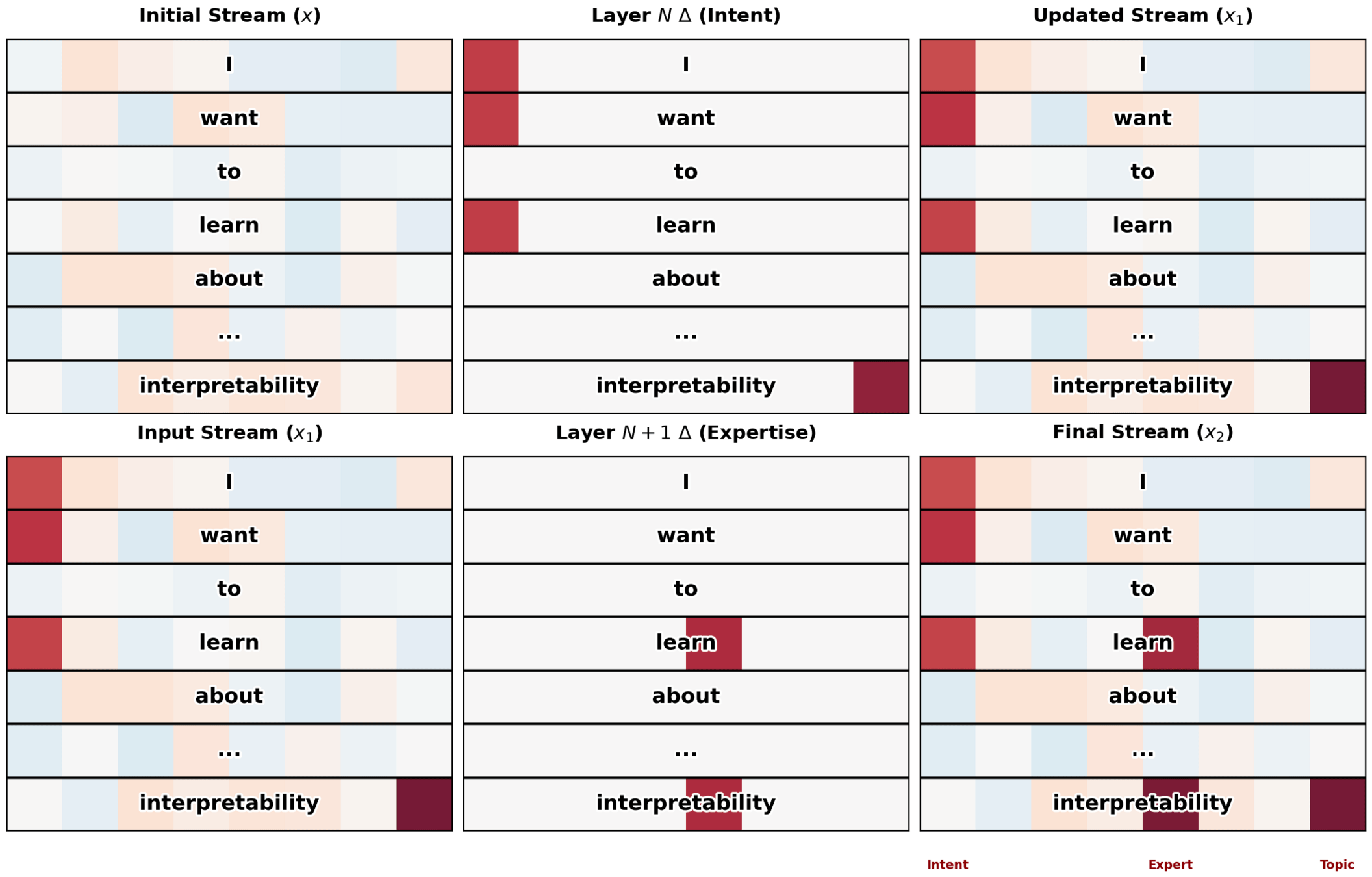

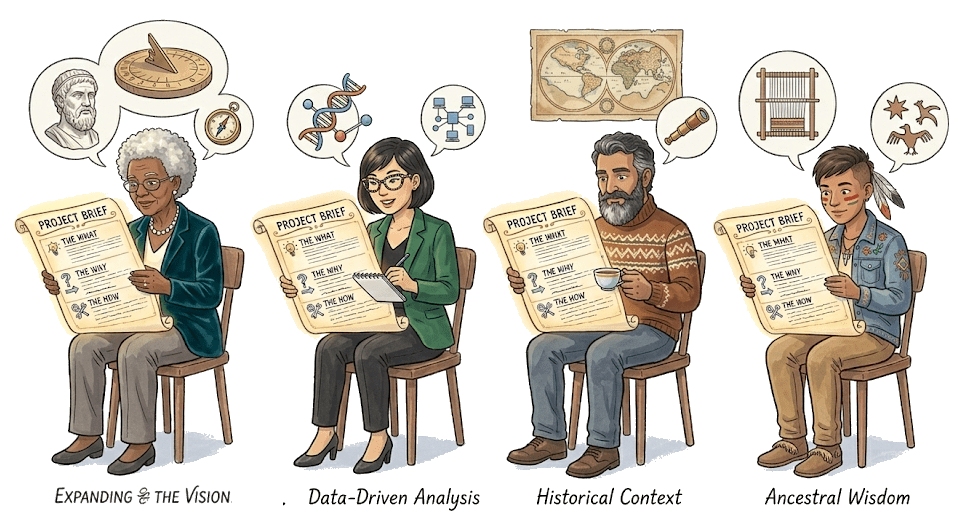

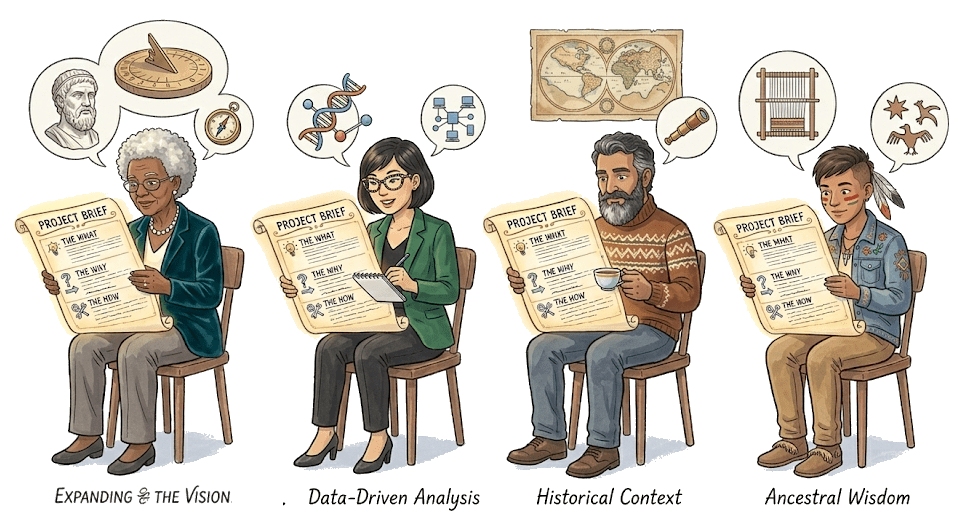

"I want to investigate whether ancient agricultural practices contain embedded knowledge about soil ecology that modern science has overlooked."

Physicist notes "complexity, emergence; distributed knowledge over generations"

Legal expert: not much to say

Historian notes "revisionist approach to history of science; methodological debates"

Humanist notes "tension in the framing; overlooked suggests deficit narrative; ancient/modern binary"

"I want to investigate* whether ancient* agricultural practices* contain embedded* knowledge* about soil ecology* that modern* science has overlooked*."

BEST NEXT WORD

ETC.

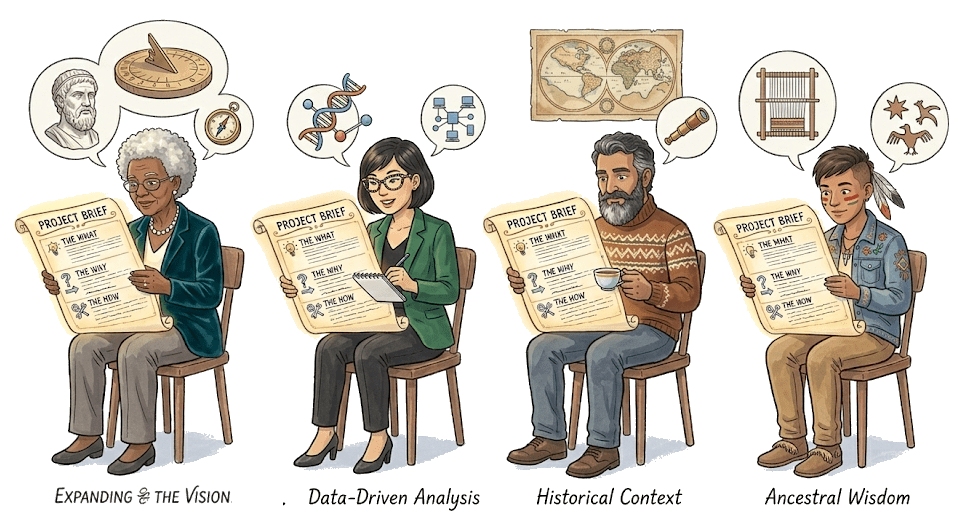

Philosopher of science notes "emergence" and "revisionism" and thinks "Kuhn, Polanyi"

Historian: "revisionist" plus "binary" suggests "post-colonial studies"

Biologist likes "distributed knowledge" and "soil networks" and thinks "mycorrhizal networks!"

Ancestral wisdom expert thinks "embedded knowledge needs unpacking"

"I want to investigate* whether ancient* agricultural practices* contain embedded* knowledge* about soil ecology* that modern* science has overlooked*."

BEST NEXT WORD

"I want to investigate whether ancient agricultural practices contain embedded knowledge about soil ecology that modern science has overlooked."

Biologist likes "distributed knowledge" and "soil networks" and adds hints of "mycorrhizal networks!"

"I want to investigate* whether ancient* agricultural practices* contain embedded* knowledge* about soil ecology* that modern* science has overlooked*."

Physicist adds accents of "complexity, emergence; distributed knowledge over generations"

The two specialists form a circuit: abstract claim → concrete grounding
