How do LLMs Predict?

Jason


Review Transformers:

[Diagram: Phrase 1, Phrase 2, Phrase 3, ..., Phrase n → Token 1, Token 2, Token 3, ..., Token n → Transformer → Logits 1, Logits 2, Logits 3, ..., Logits m]

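For simplicity, we shall treat the Transformer as a "black box": it takes a sequence of vectors (tokens) and outputs a new sequence of vectors of the same length. In a typical language model, the output vector at the last position is then mapped to the logits used to predict what comes next.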

Now, Logits...?

- the number of logits ("m") matches the vocabulary size of the model

- each logit scores how likely a specific phrase is to appear in the next position

- the greater the logit value, the more likely that phrase is to appear in the next position

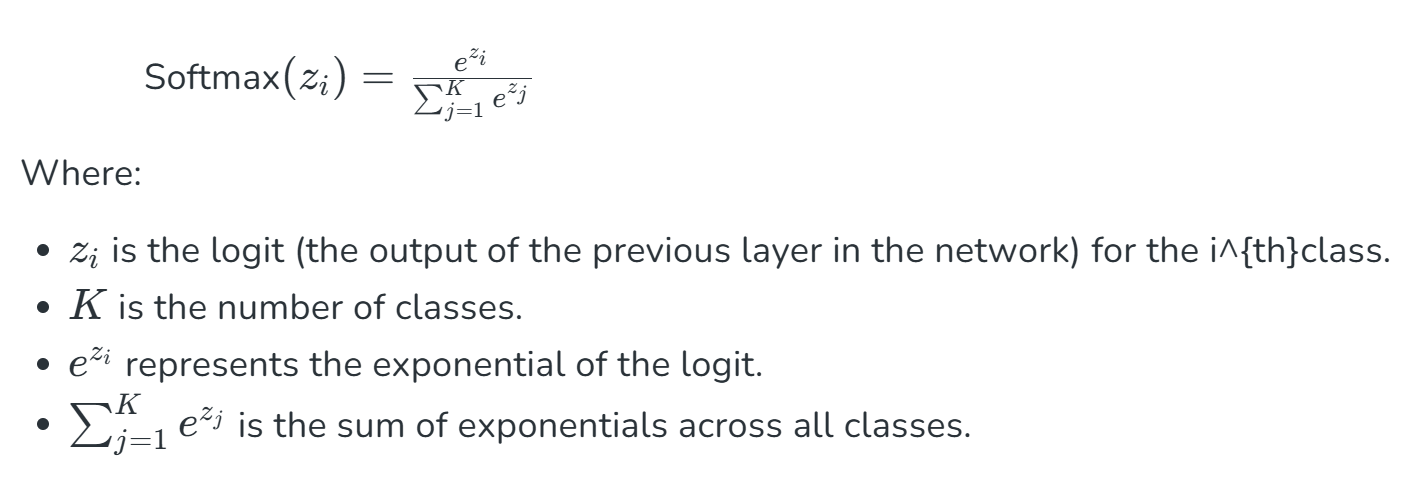

The SoftMax Function

source: https://www.geeksforgeeks.org/deep-learning/the-role-of-softmax-in-neural-networks-detailed-explanation-and-applications/

- normalizes the logits to values between 0 and 1 that add up to 1, each representing the probability that a specific vocabulary entry comes next

- brings logits to the same scale for comparison and analysis

- ensures positive values for later manipulation and optimization (cross-entropy loss, for example)
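The three properties above can be checked on a small example. What follows is a minimal sketch in plain Python, not the code of any particular model: the function name `softmax`, the variable names, and the toy logits for a vocabulary of m = 4 are all assumptions made for illustration.

```python
import math

def softmax(logits):
    """Turn a list of logits into probabilities that add up to 1."""
    # Subtracting the largest logit does not change the result,
    # but it keeps exp() from overflowing on large inputs.
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for a toy vocabulary of m = 4 phrases.
toy_logits = [2.0, 1.0, 0.1, -1.0]
toy_probs = softmax(toy_logits)

for logit, prob in zip(toy_logits, toy_probs):
    print(f"logit {logit:5.1f} -> probability {prob:.3f}")
```

Note that the negative logit still maps to a positive probability, and the largest logit still gets the largest probability.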

The Complete Transformer Architecture:

[Diagram: Phrase 1, ..., Phrase n → Token 1, ..., Token n → Transformer → Logits 1, ..., Logits m → SoftMax → Prob* 1, Prob 2, Prob 3, ..., Prob m]

*Prob as in "Probability"

max(Prob 1, Prob 2, Prob 3, ..., Prob m) = Prob x

Token x will be the next token!

Phrase x will be the next phrase of the sentence!
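Picking "Prob x" amounts to finding the index of the largest probability and looking up the matching vocabulary entry. A minimal sketch, assuming a made-up four-phrase vocabulary and made-up probabilities (the helper name `pick_next_phrase` is hypothetical):

```python
def pick_next_phrase(probs, vocabulary):
    """Return the phrase with the highest probability, and that probability."""
    best_index = max(range(len(probs)), key=lambda i: probs[i])
    return vocabulary[best_index], probs[best_index]

# Made-up vocabulary and probabilities, for illustration only.
toy_vocabulary = ["cat", "dog", "sat", "mat"]
toy_probs = [0.10, 0.05, 0.70, 0.15]

next_phrase, next_prob = pick_next_phrase(toy_probs, toy_vocabulary)
print(next_phrase, next_prob)
```

Always taking the maximum is the simplest selection rule. Real systems often sample from the probabilities instead, which this sketch does not cover.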

Yes, we have the next word...

but what about the word after that?

Autoregression: What makes transformers strong

The model makes the next prediction based on the outcome of the previous one

① N * Phrases → (transformer) → the new predicted phrase

② N * Phrases += new predicted phrase, giving (N+1) * Phrases

③ (N+1) * Phrases → (transformer) → another new predicted phrase

source: https://www.vectorstock.com/royalty-free-vector/cartoon-fight-scene-vector-42129877

The autoregression goes on and on, predicting one new phrase at a time, and...

Voilà! We have predicted an entire sentence!
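The whole loop can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `toy_transformer` is a hypothetical stand-in that only looks at the last phrase (a real Transformer reads the whole sequence), the five-entry vocabulary is made up, and the `<end>` marker and `max_new_phrases` limit are one possible way to decide when to stop.

```python
import math

VOCABULARY = ["the", "cat", "sat", "down", "<end>"]

# Hypothetical stand-in for the black box: each phrase has one favoured successor.
NEXT_INDEX = {"the": 1, "cat": 2, "sat": 3, "down": 4, "<end>": 4}

def toy_transformer(phrases):
    """Return one logit per vocabulary entry (m = 5 here)."""
    logits = [0.0] * len(VOCABULARY)
    logits[NEXT_INDEX[phrases[-1]]] = 5.0
    return logits

def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def generate(phrases, max_new_phrases=10):
    """Repeatedly predict one phrase and append it to the input."""
    phrases = list(phrases)  # copy so the caller's list is untouched
    for _ in range(max_new_phrases):
        probs = softmax(toy_transformer(phrases))
        best = max(range(len(probs)), key=lambda i: probs[i])
        next_phrase = VOCABULARY[best]
        if next_phrase == "<end>":  # assumed stop marker
            break
        phrases.append(next_phrase)  # N phrases become N + 1
    return phrases

sentence = generate(["the"])
print(" ".join(sentence))
```

Each pass through the loop corresponds to steps ① to ③: run the model, append the prediction, and run the model again on the longer sequence.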