Do Androids Dream of Exploding Stars and Receding Galaxies?

University of Delaware

Department of Physics and Astronomy

Biden School of Public Policy and Administration

Data Science Institute

federica b. bianco

she/her

https://app.sli.do/event/98bGw3kwqEeca4sGb882RS

when did the first review of neural networks in astronomy come out?

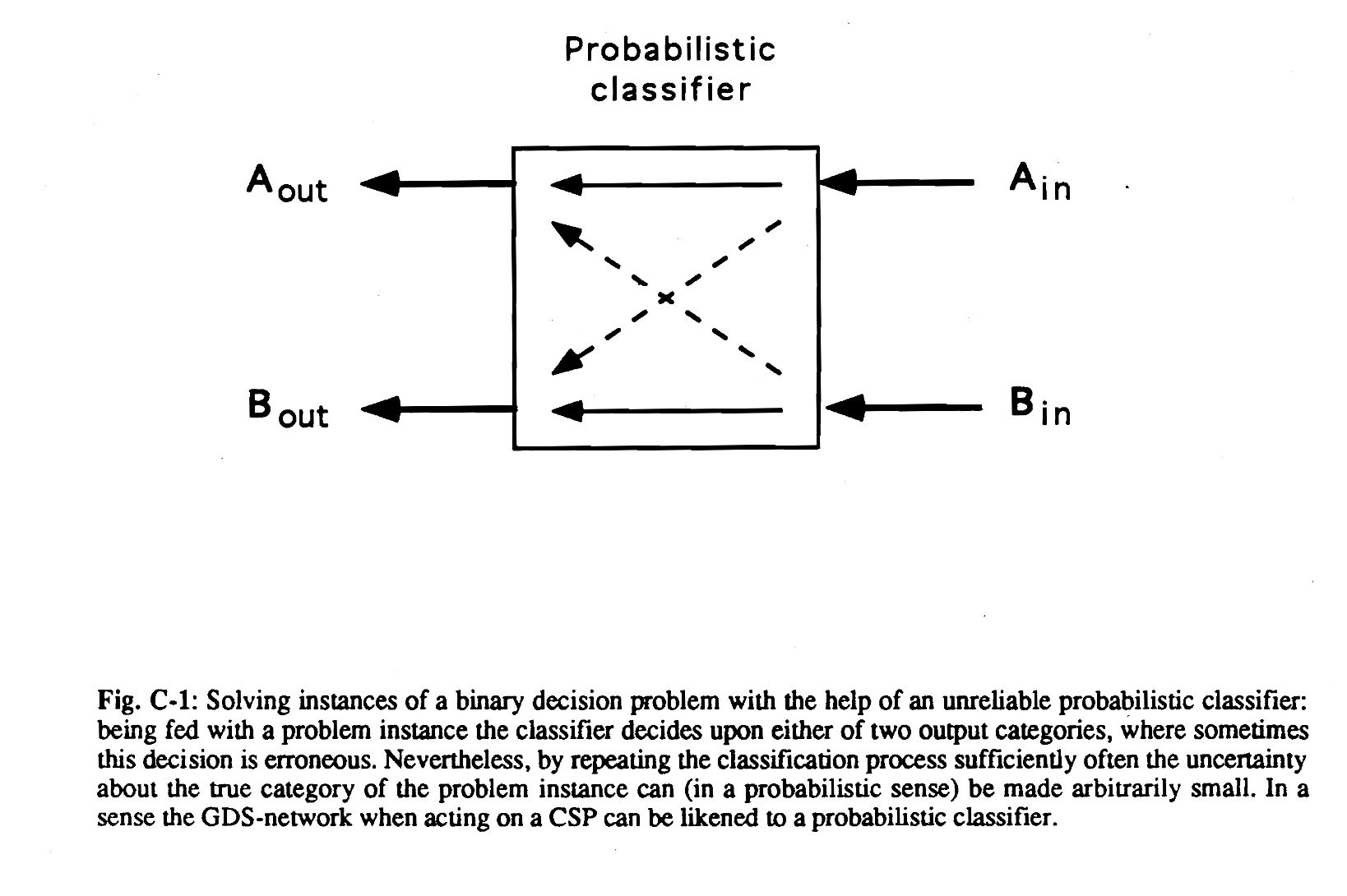

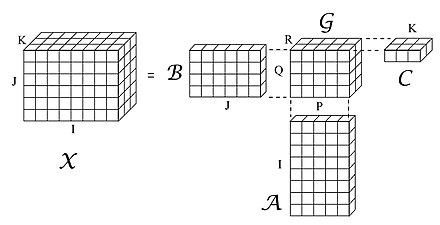

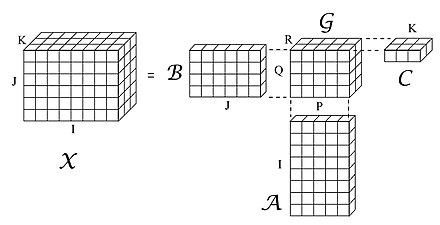

CSP: Constraint Satisfaction Problems

Opportunity

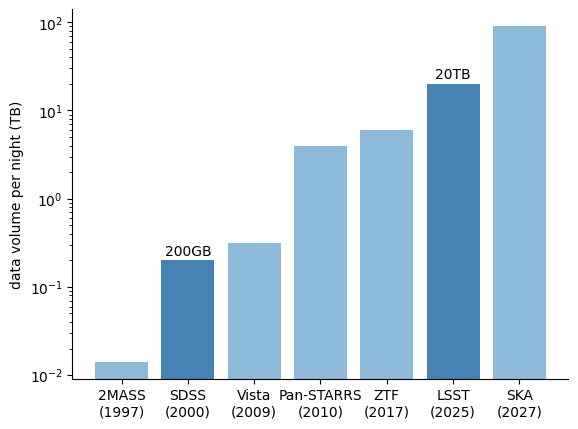

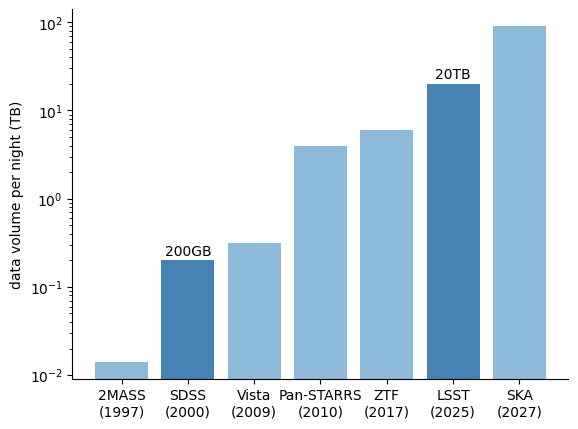

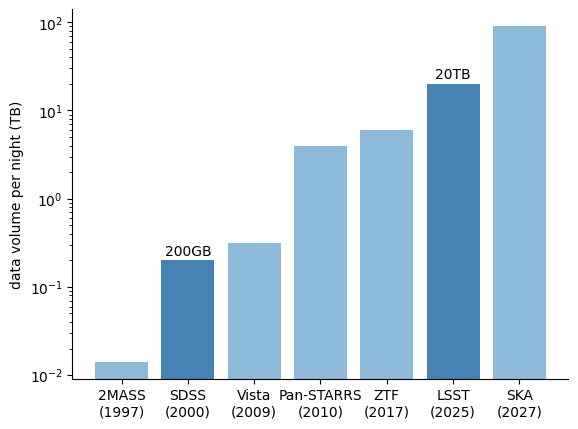

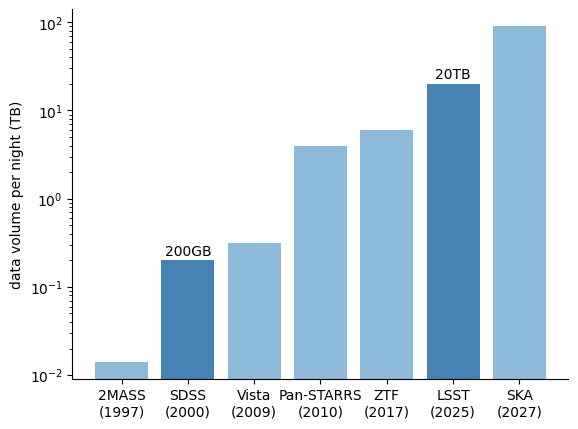

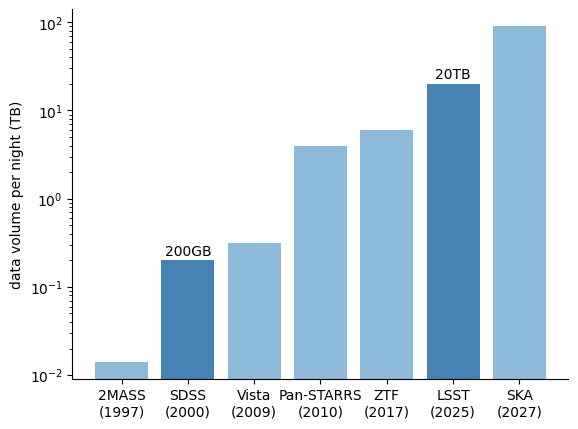

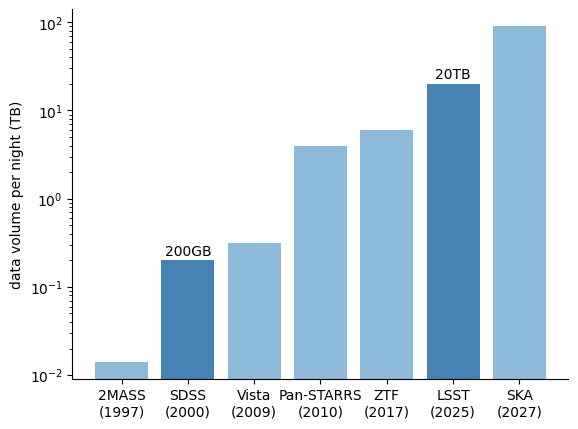

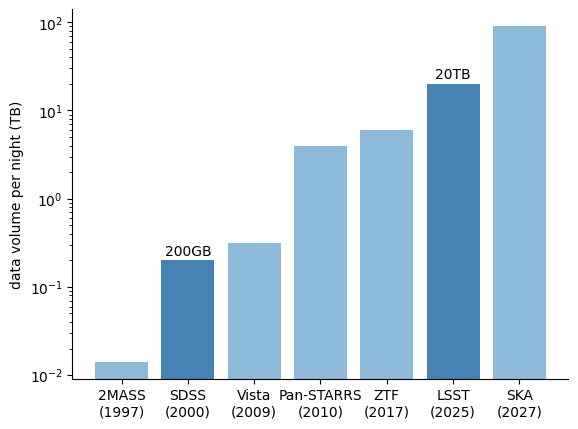

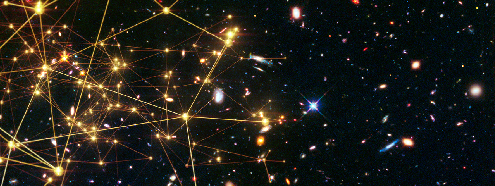

big data in astronomy

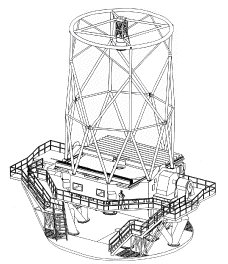

Rubin Observatory

Site: Cerro Pachon, Chile

Funding: US NSF + DOE

Building an unprecedented catalog of Solar System Objects

LSST Science Drivers

Mapping the Milky Way and Local Volume

Probing Dark Energy and Dark Matter

Exploring the Transient Optical Sky

To accomplish this, we need:

1) a large telescope mirror to be sensitive - 8m (6.7m effective aperture)

2) a large field-of-view for sky-scanning speed - 10 deg²

3) high spatial resolution, high quality images - 0.2''/pixel

4) real-time and offline image processing to produce 10M nightly alerts and catalogs of all 37B objects

>=18000 sq degrees

~800 visits per field

2 visits per night (within ~30 min for asteroids)

+ 5 x 10 sq deg Deep Drilling Fields with ~8000 visits
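
The numbers above can be cross-checked with a little arithmetic. The sketch below is a back-of-the-envelope calculation that uses only the rounded values quoted on these slides (3.2 Gigapixel, 0.2''/pixel, 10 deg², 18000 sq degrees, ~800 visits); the variable names are made up for illustration, and the tiling assumes non-overlapping pointings, which a real survey does not have.

```python
# Back-of-the-envelope check of the survey numbers quoted on the slides.
# All inputs are rounded slide values, not official Rubin figures.

ARCSEC_PER_DEG = 3600.0

n_pixels = 3.2e9            # camera size: 3.2 Gigapixel
pixel_scale_arcsec = 0.2    # 0.2''/pixel
fov_deg2 = 10.0             # quoted field of view
survey_area_deg2 = 18000.0  # ">=18000 sq degrees"
visits_per_field = 800      # "~800 visits per field"

# Sky area covered by the detector if every pixel sees 0.2'' x 0.2''.
pixel_area_deg2 = (pixel_scale_arcsec / ARCSEC_PER_DEG) ** 2
camera_area_deg2 = n_pixels * pixel_area_deg2

# Pointings needed to tile the survey area once (assuming no overlap),
# and the implied number of visits over the whole survey.
n_fields = survey_area_deg2 / fov_deg2
total_visits = n_fields * visits_per_field

print(f"camera footprint: {camera_area_deg2:.1f} deg^2")
print(f"fields to tile the survey: {n_fields:.0f}")
print(f"total visits: {total_visits:.2e}")
```

The pixel count and pixel scale alone give a footprint just under 10 deg², consistent with the quoted field of view.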

Objective: to provide a science-ready dataset to transform the 4 key science areas

The DOE LSST Camera - 3.2 Gigapixel

3024 science raft amplifier channels

Camera and Cryostat integration completed at SLAC in May 2022

Shutter and filter auto-changer integrated into camera body

LSSTCam undergoing final stages of testing at SLAC

HELL YEAH!

2025

edge computing

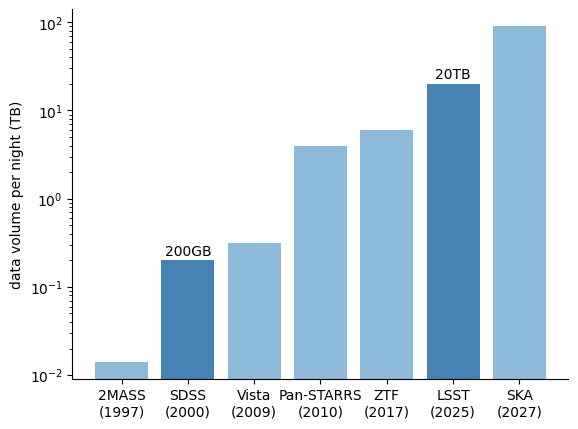

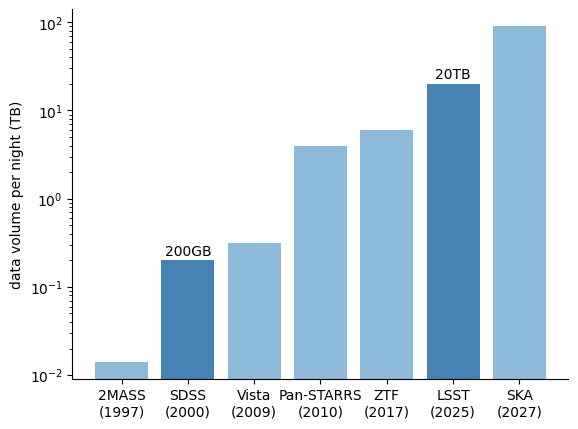

Will we get more data???

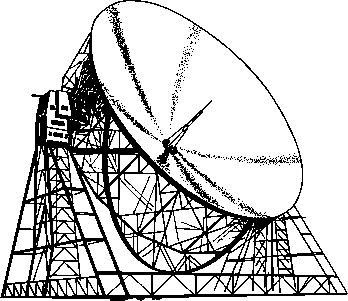

SKA

(2025)

edge computing

Rubin LSST Transients by the numbers

17B stars (x10) Ivezic+19

~10 million QSO (x10) Mary Loli+21

~50k Tidal Disruption Events (from ~150) Bricman+ 2020

~10k SuperLuminous Supernovae (from ~200) Villar+ 2018

~400 strongly lensed SN Ia (from 10) Arendse+24

~50 kilonovae (from 2) Setzer+19, Andreoni+19 (+ ToO)

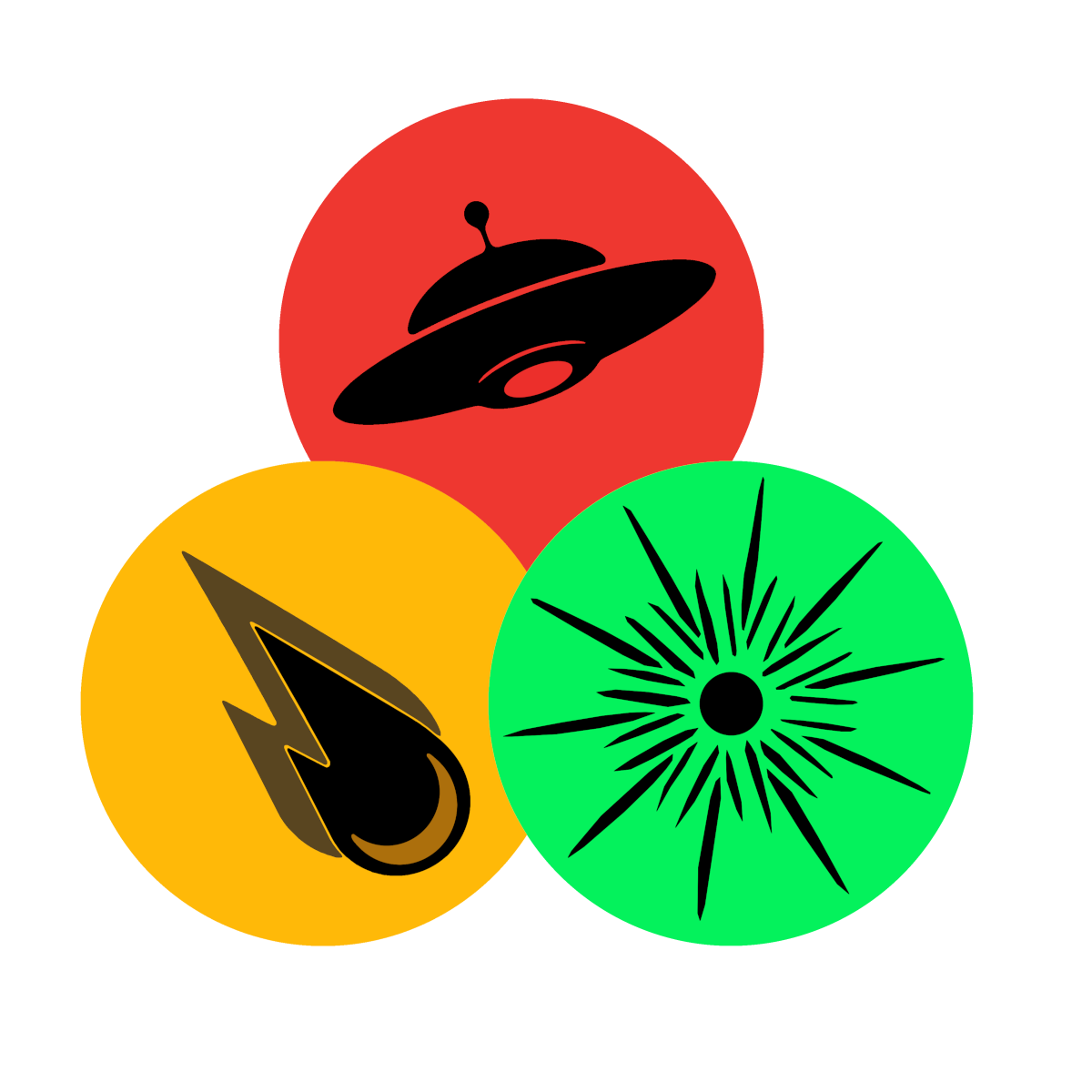

> 10 Interstellar Objects (from 2.... ?)

True Novelties!

"BUT BIG DATA DOES NOT MEAN BIG SCIENCE"

Yang Huang,

University of Chinese Academy of Sciences

SpecCLIP talk

survey optimization

Challenge

Introducing Rolling Cadence

Current plan: rolling 8 out of the 10 years

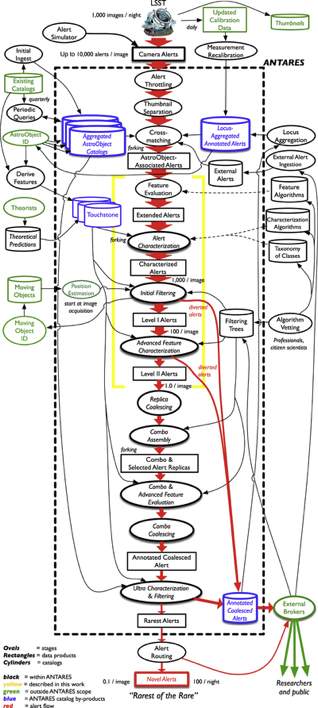

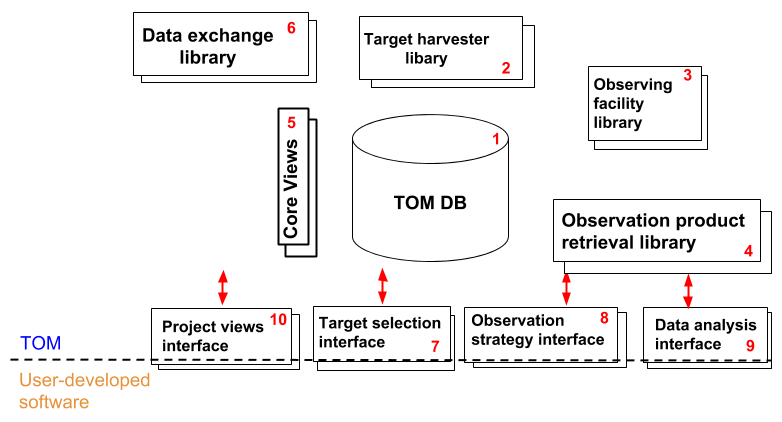

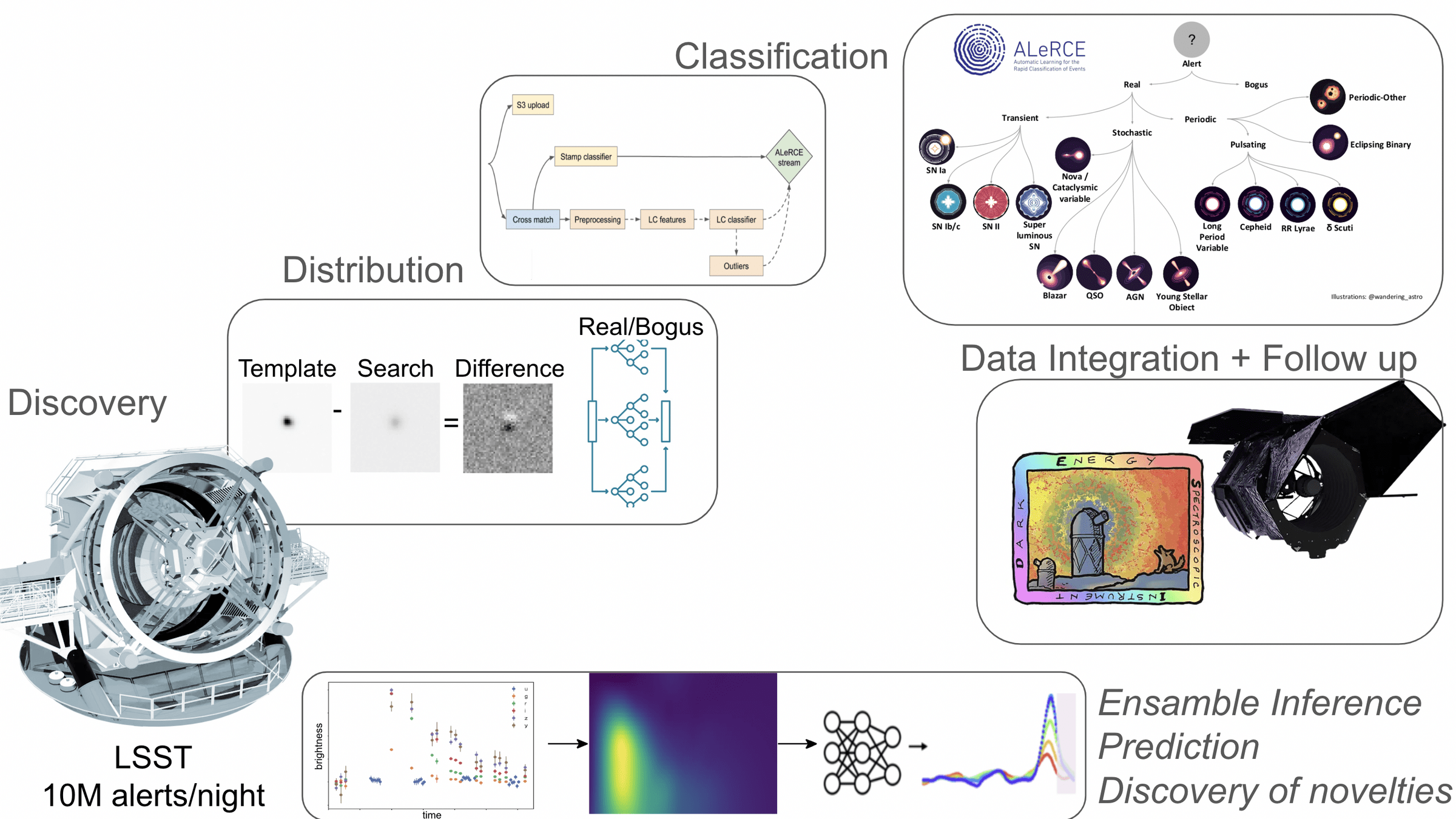

Discovery Engine

10M alerts/night

Community Brokers

target observation managers

Pitt-Google

Broker

BABAMUL

federica bianco - fbianco@udel.edu

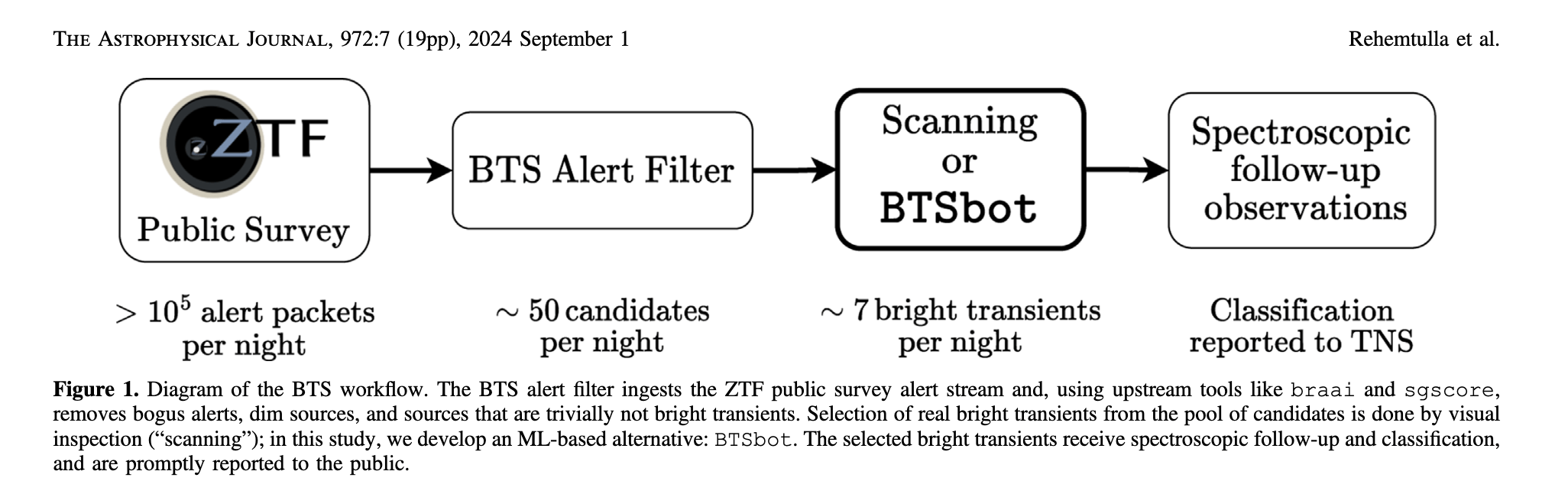

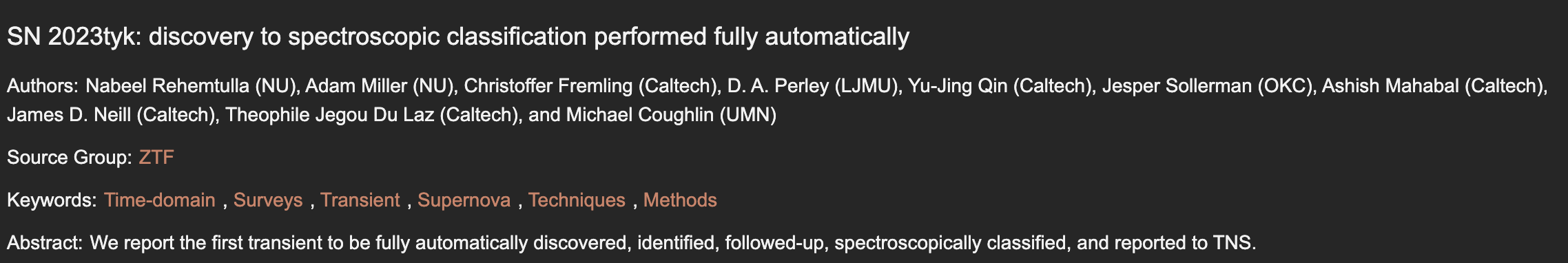

the new astronomy discovery chain

AI for survey design

subverting the TDA process

Challenge

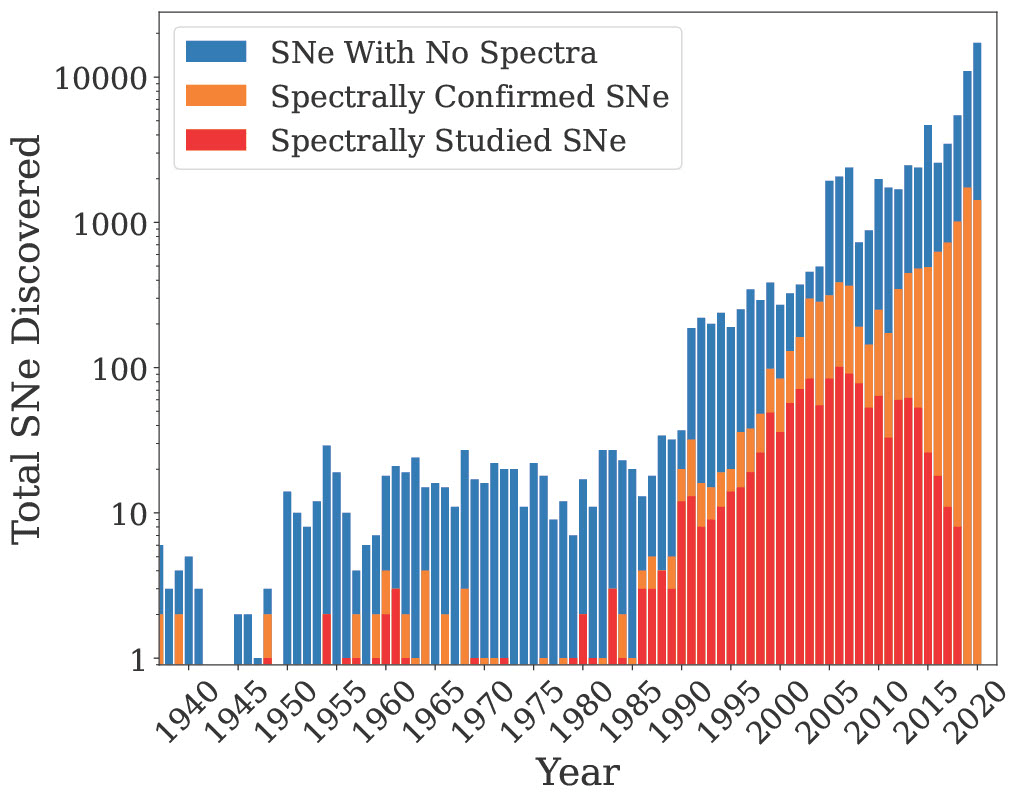

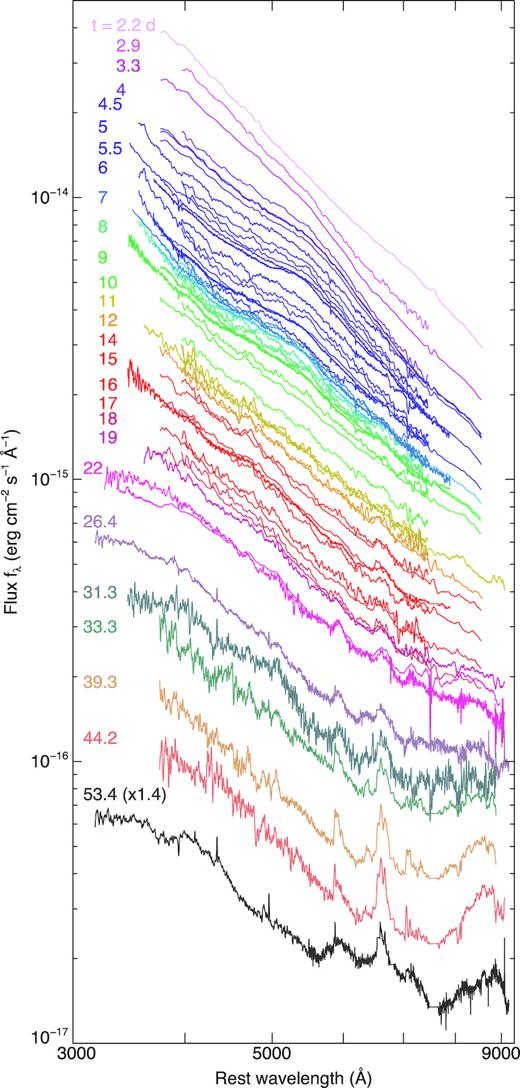

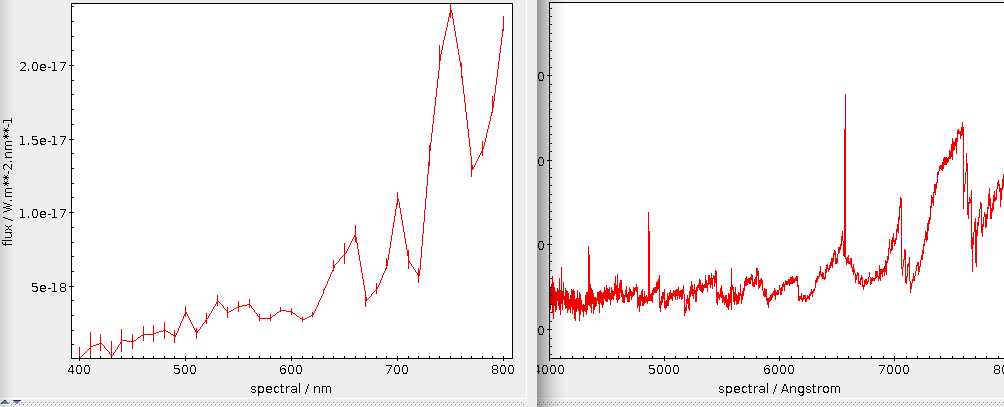

To this day, transient astronomy heavily relies on spectra

Rubin will see ~1000 SN every night!

Credit: Alex Gagliano IAIFI fellow MIT/CfA

Challenge

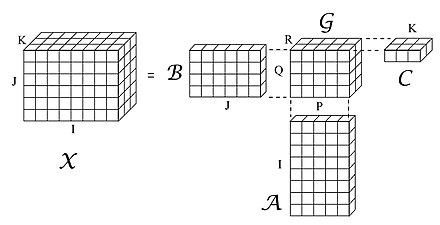

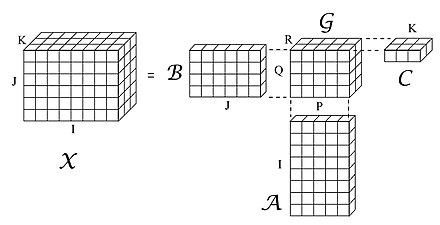

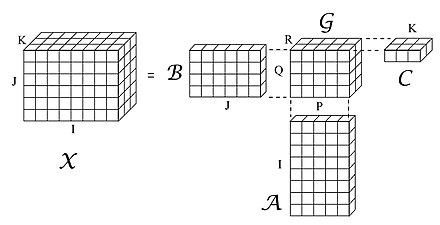

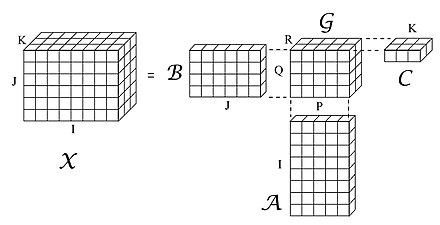

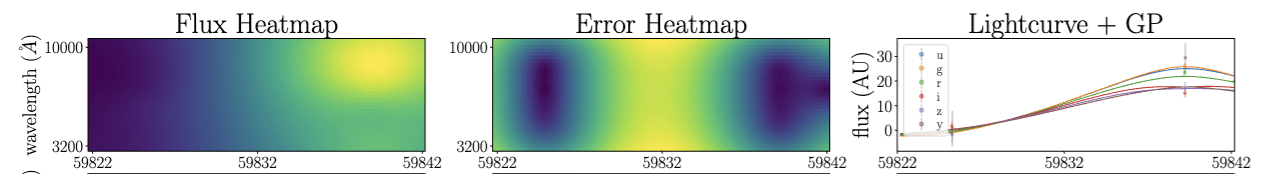

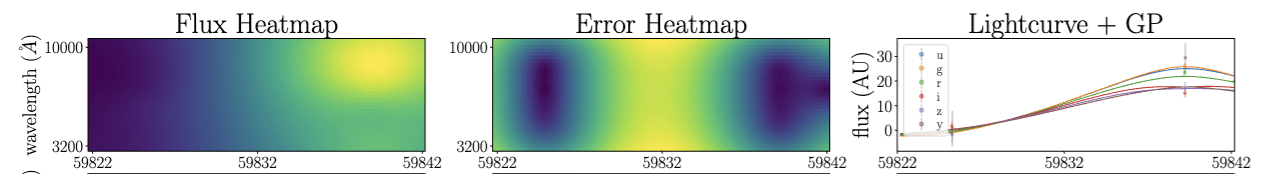

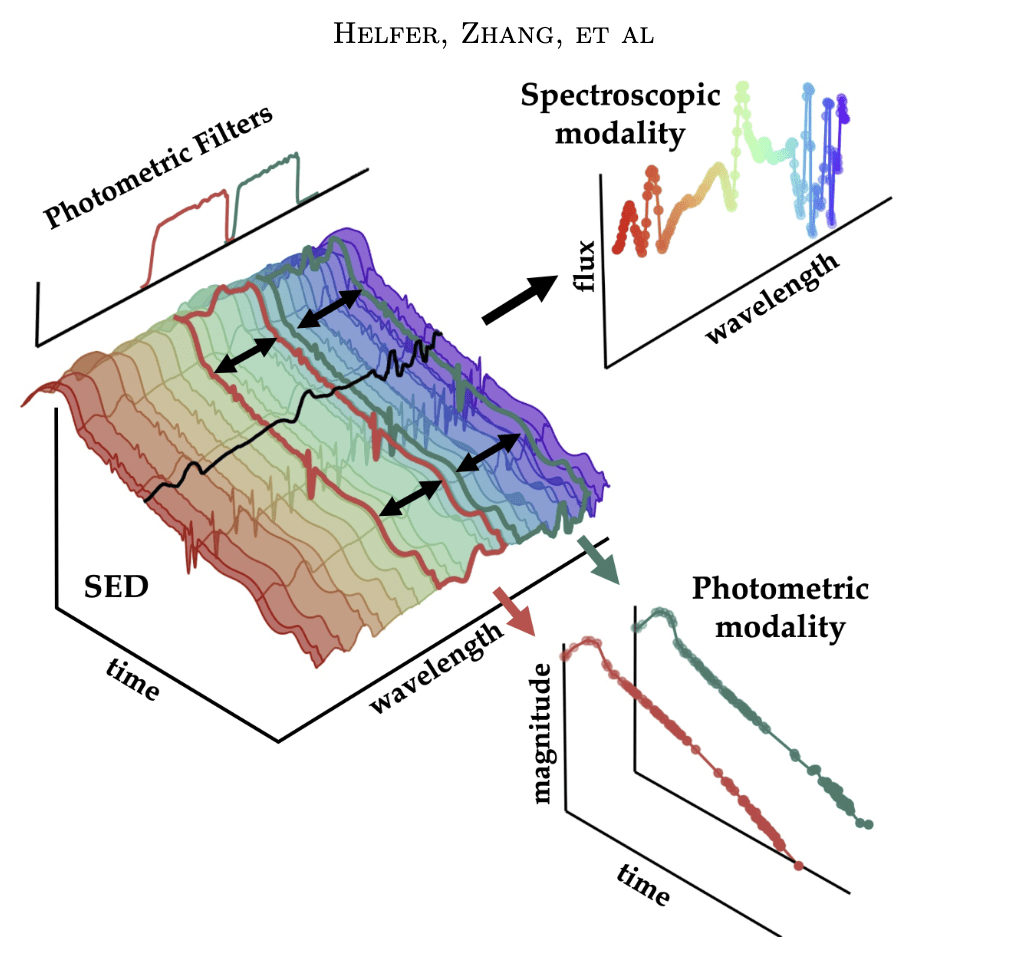

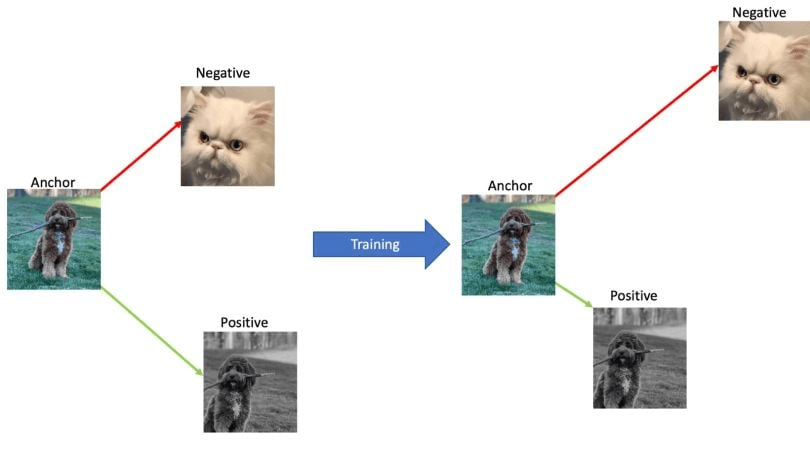

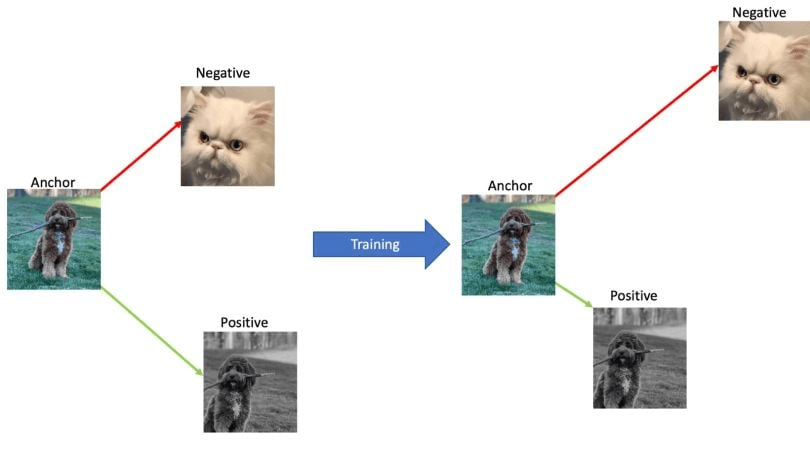

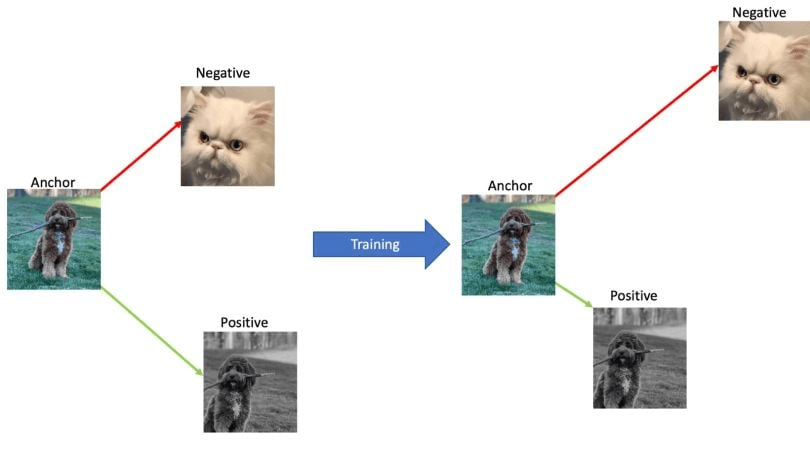

data encoding

well... it depends

2025

(2026)

edge computing

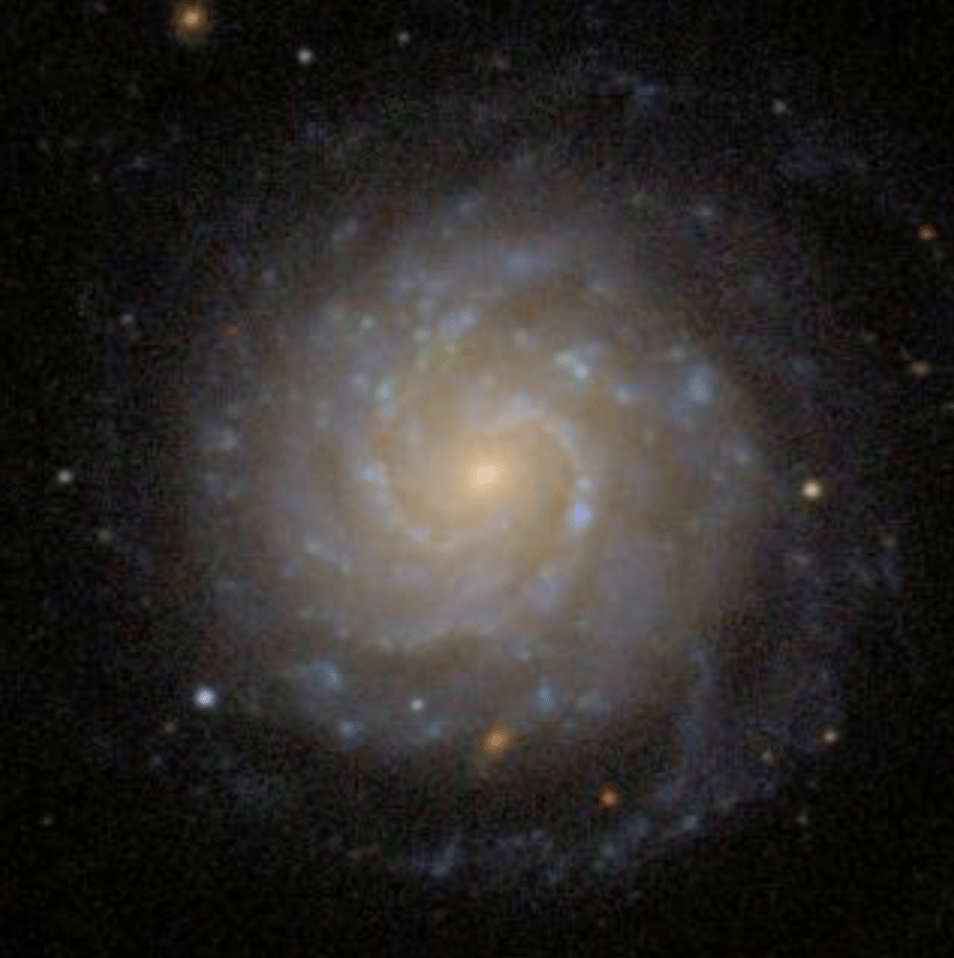

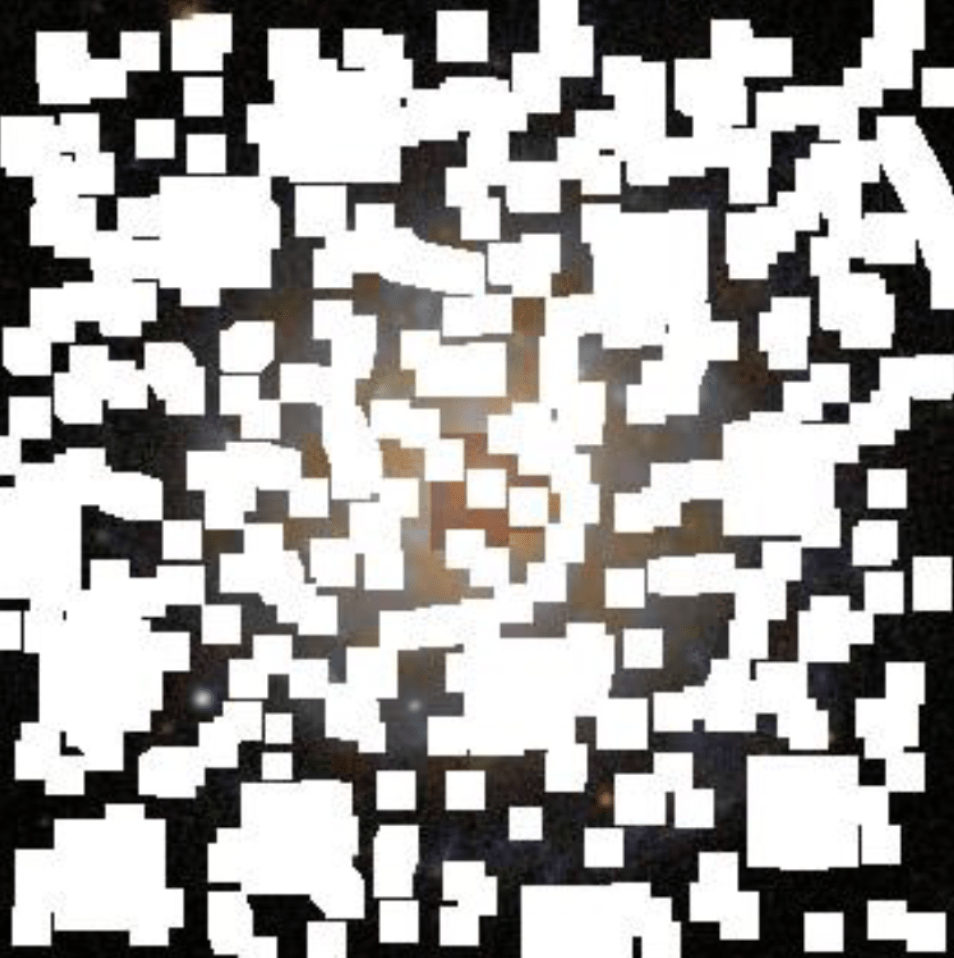

Is the data gonna also be better?

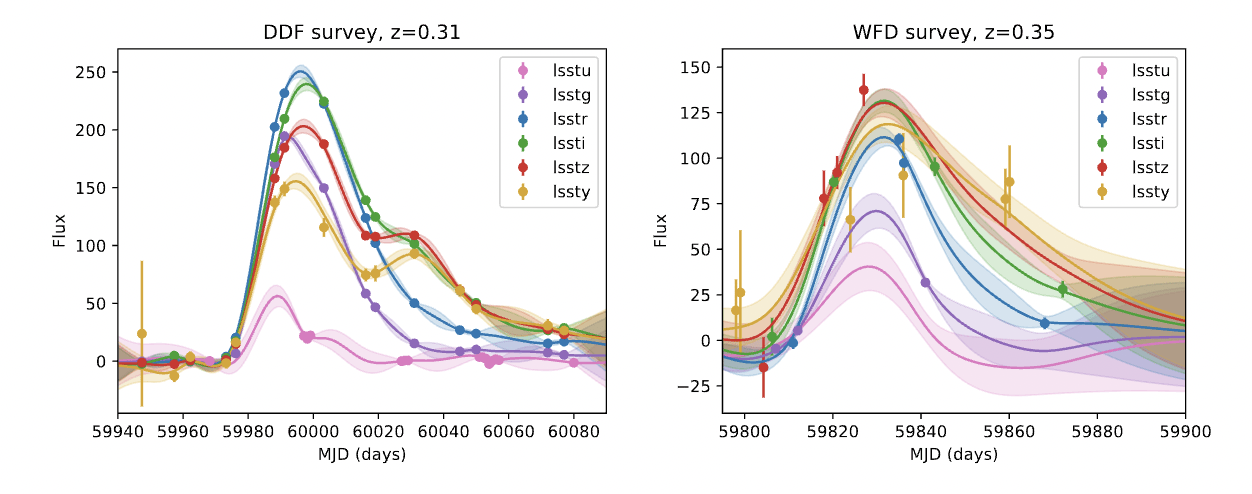

visualization and concept credit: Alex Razim

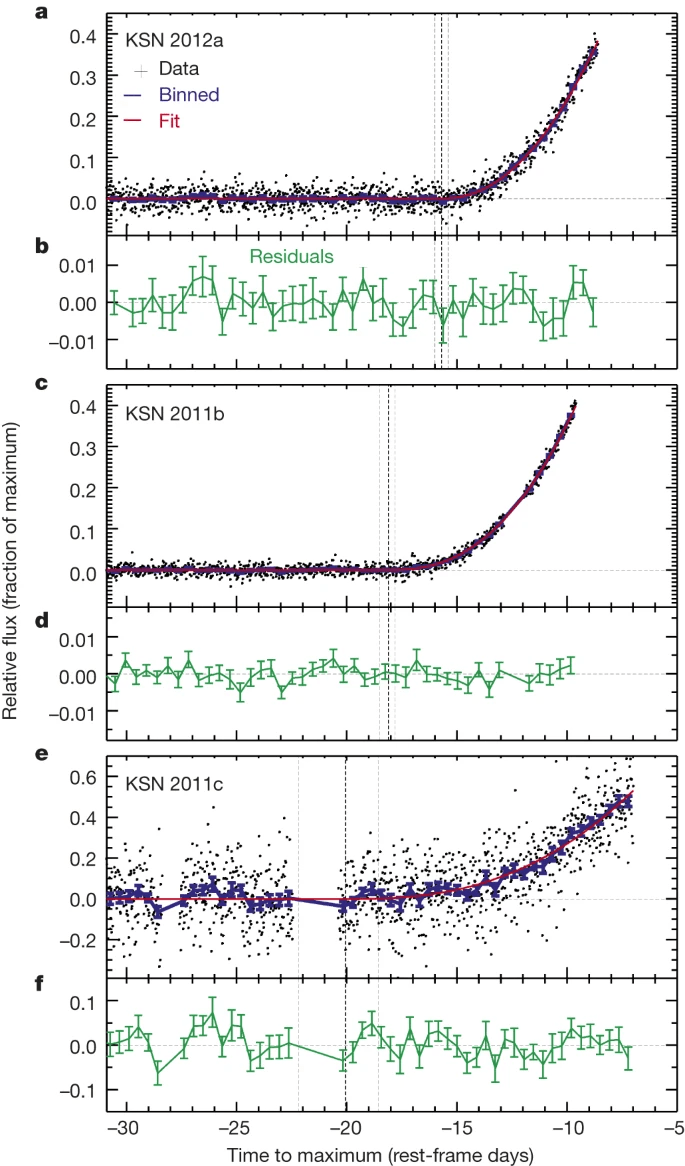

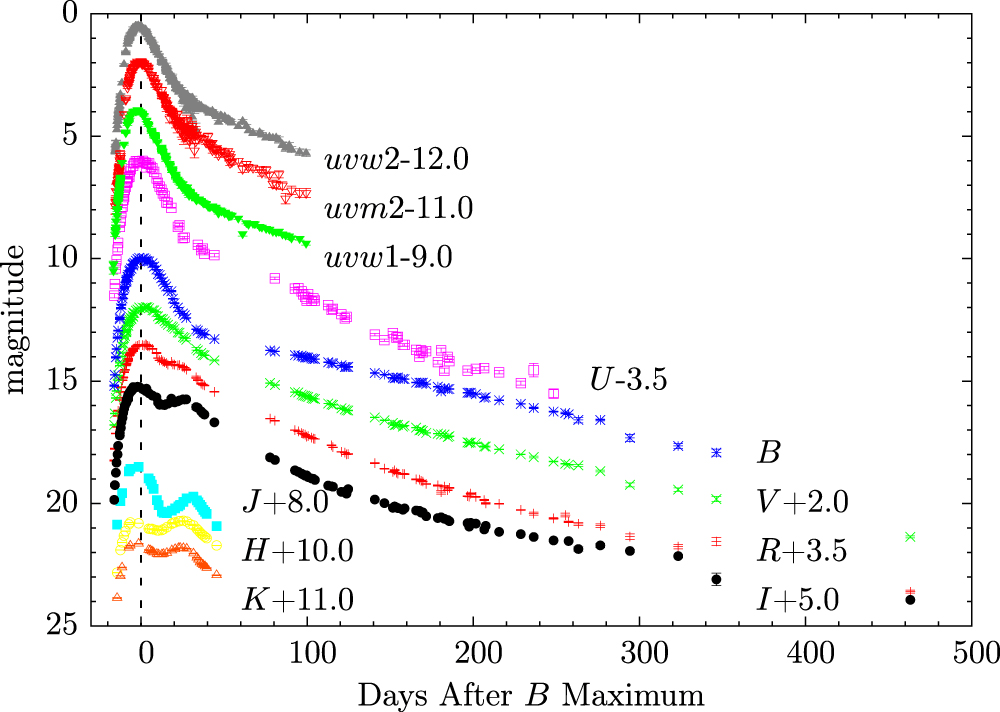

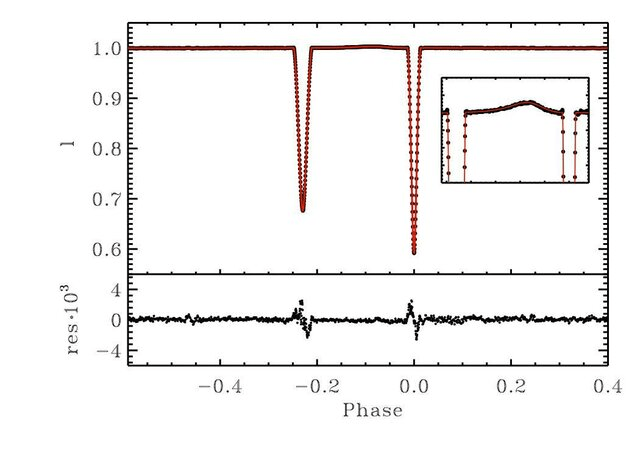

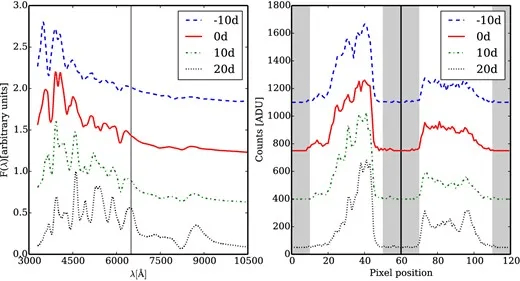

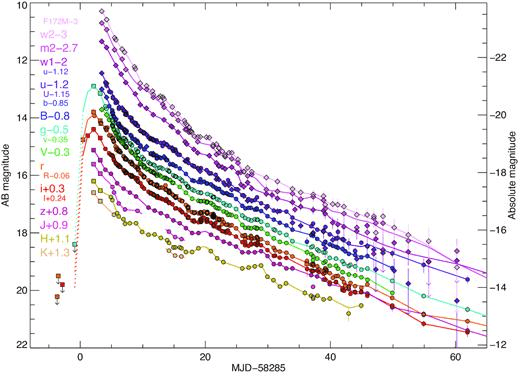

Kaicheng Zhang et al 2016 ApJ 820 67

SN 2011fe

deSoto+2024

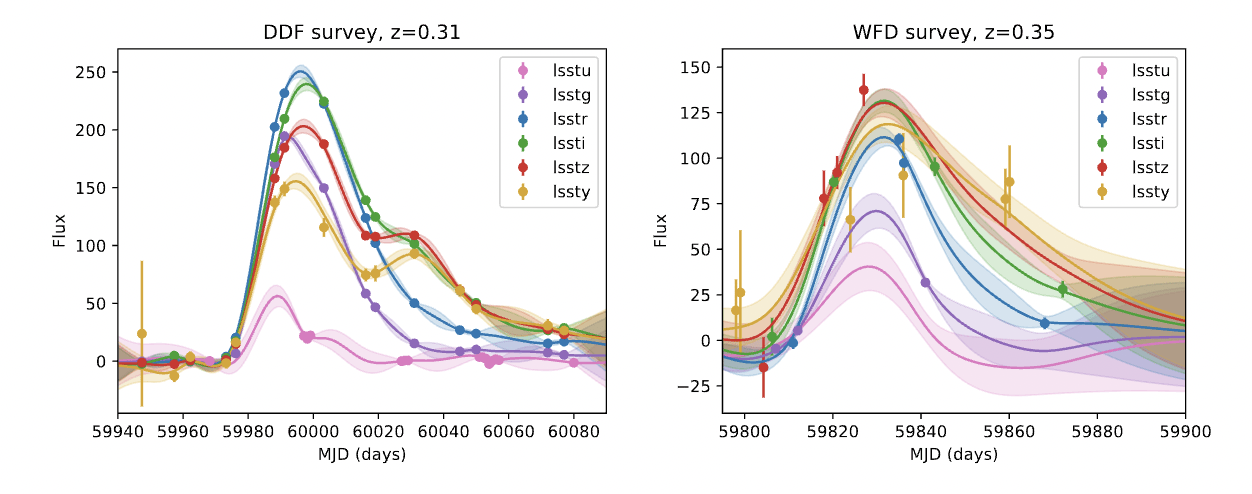

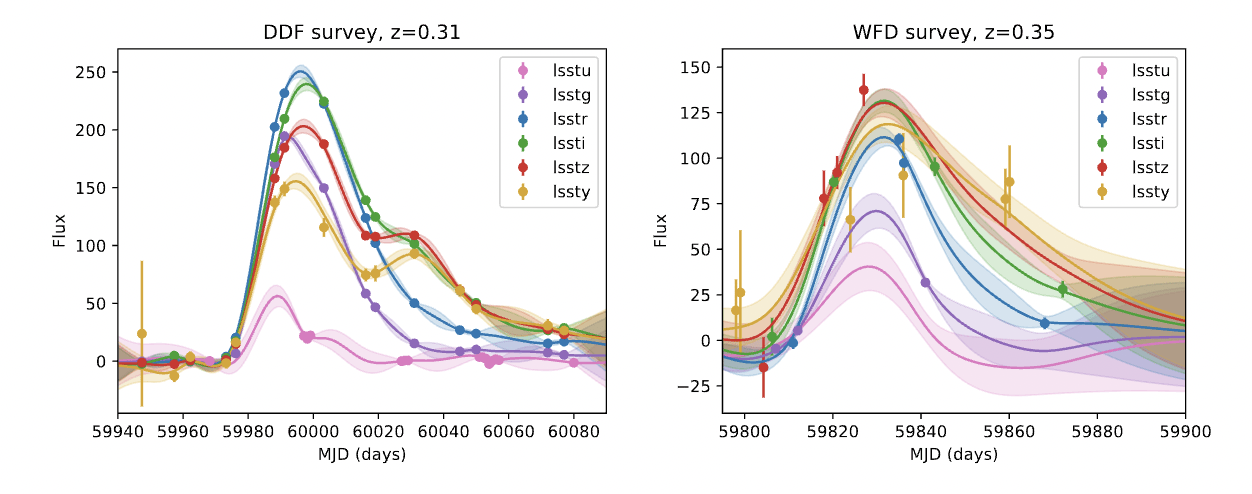

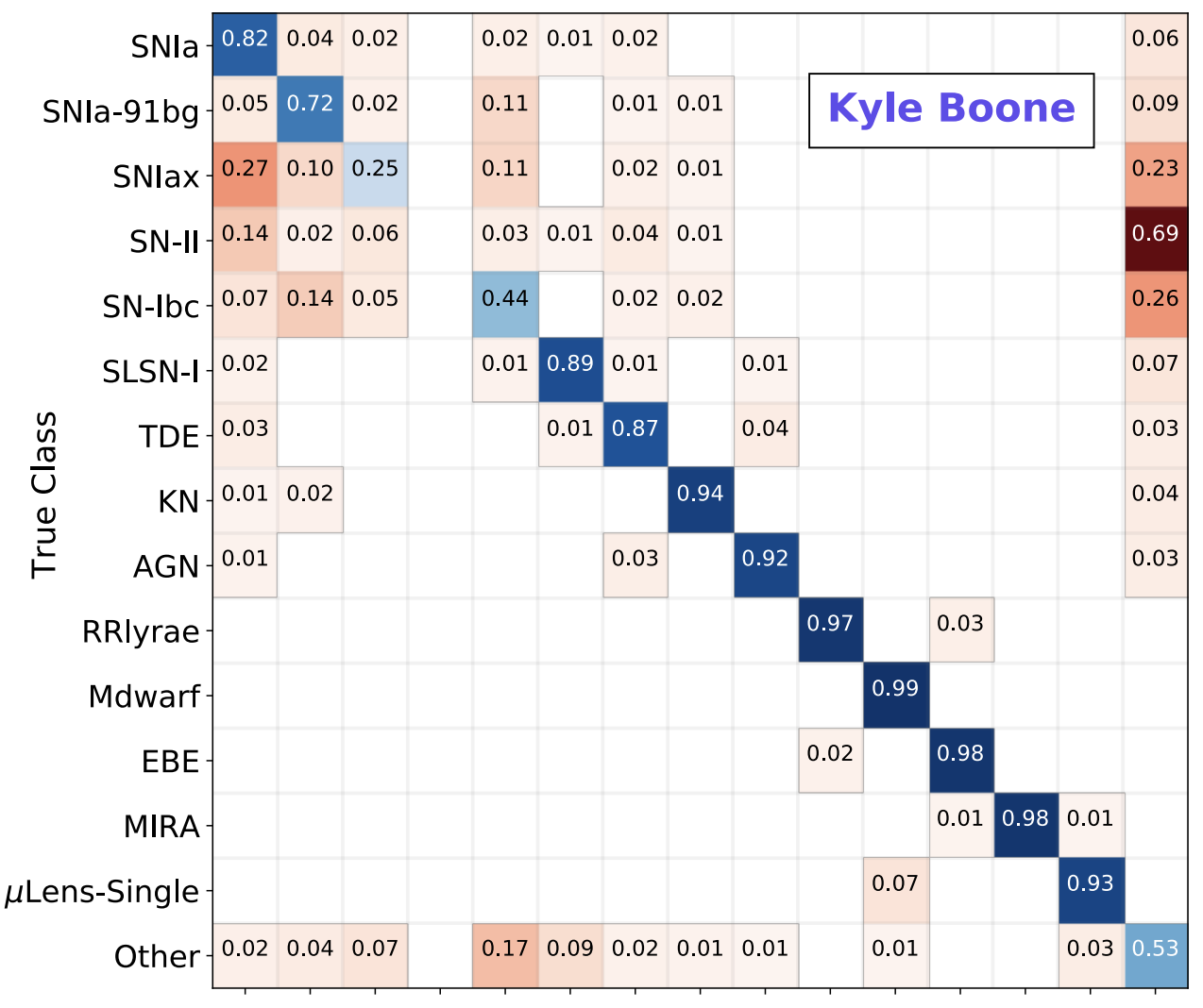

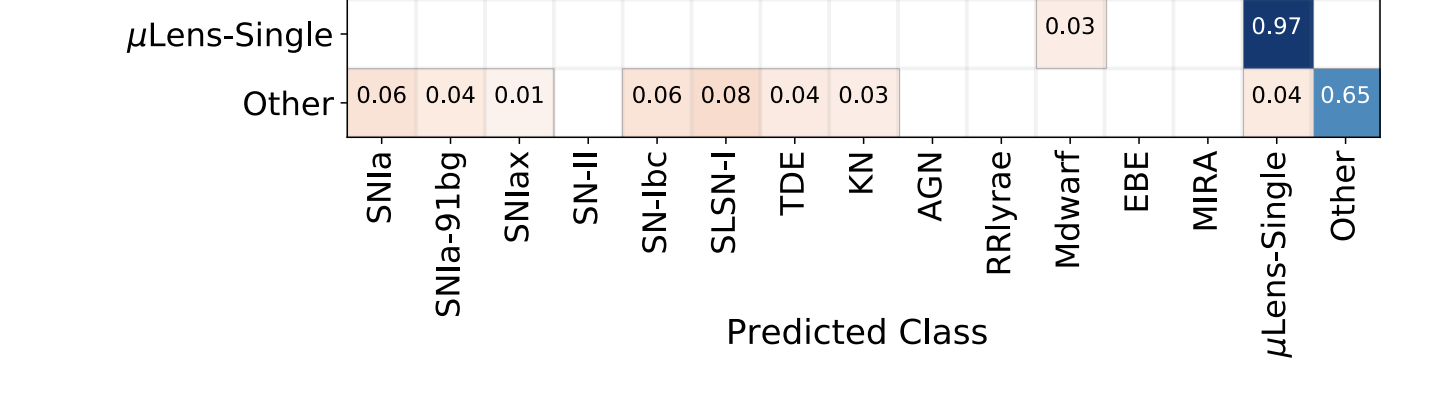

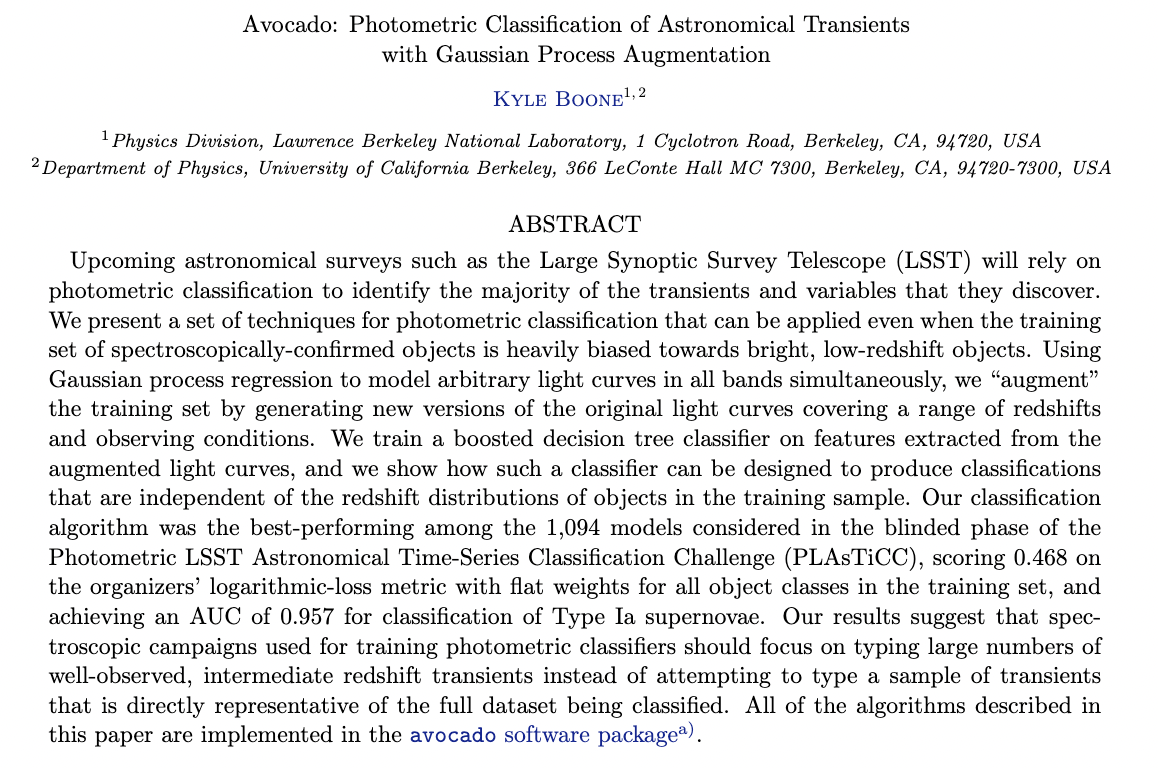

Boone 2017

7% of LSST data

The rest

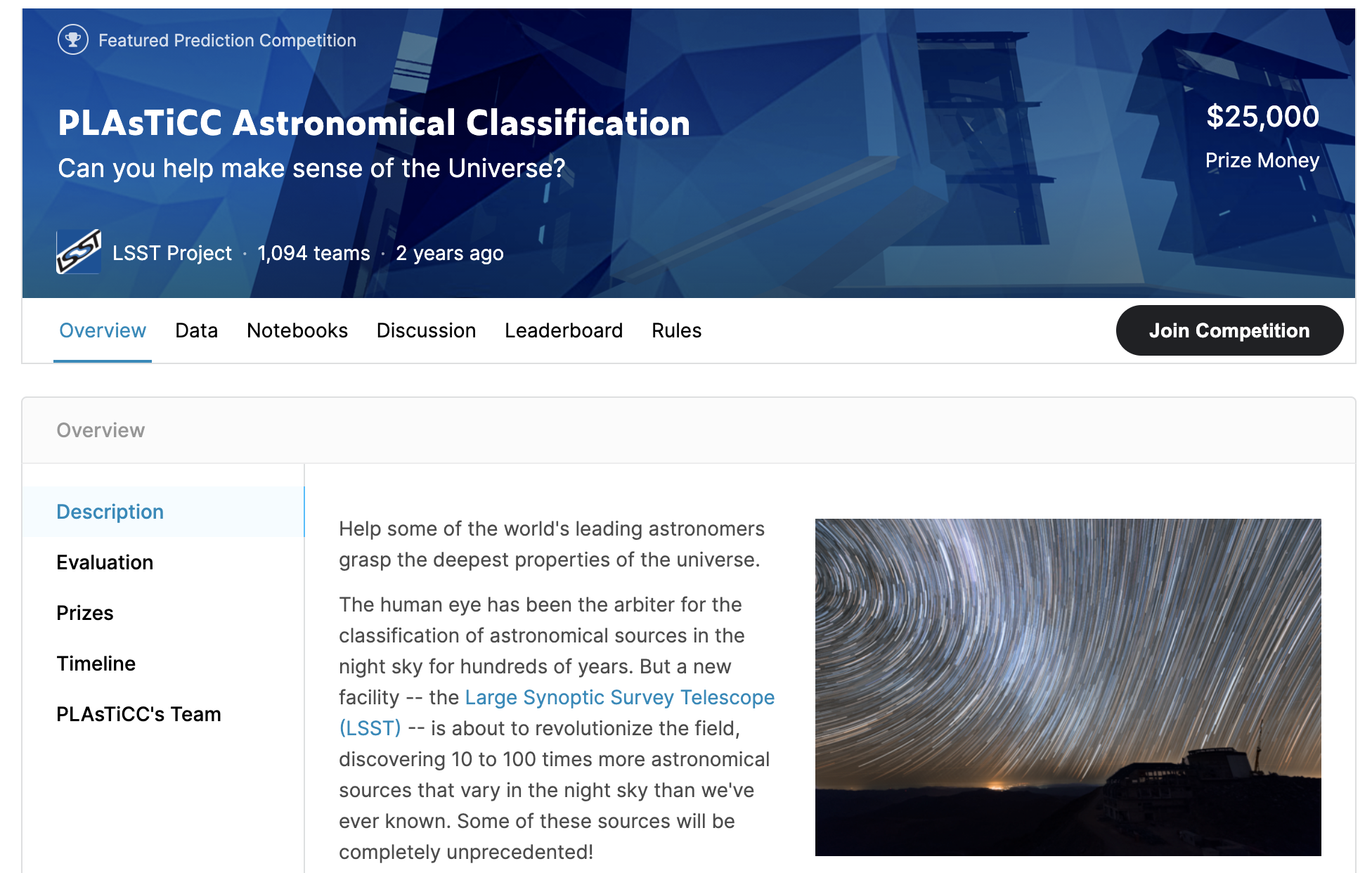

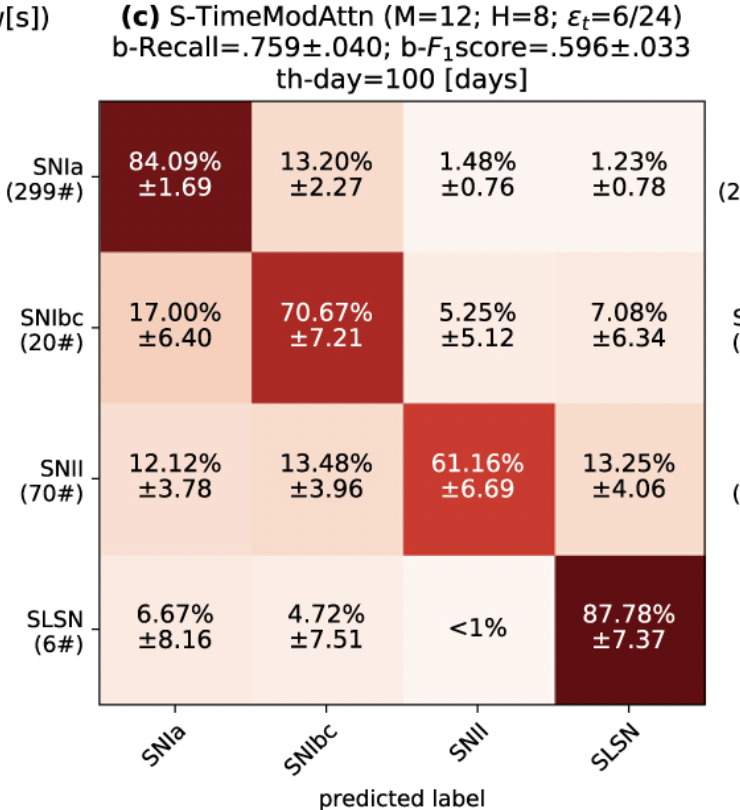

Photometric Classification of transients

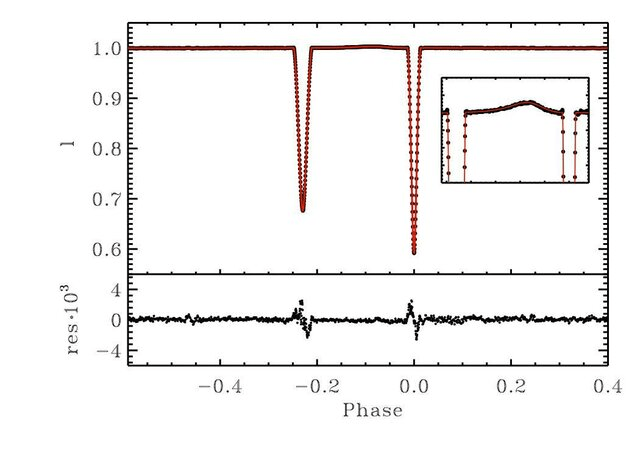

Kepler satellite EB

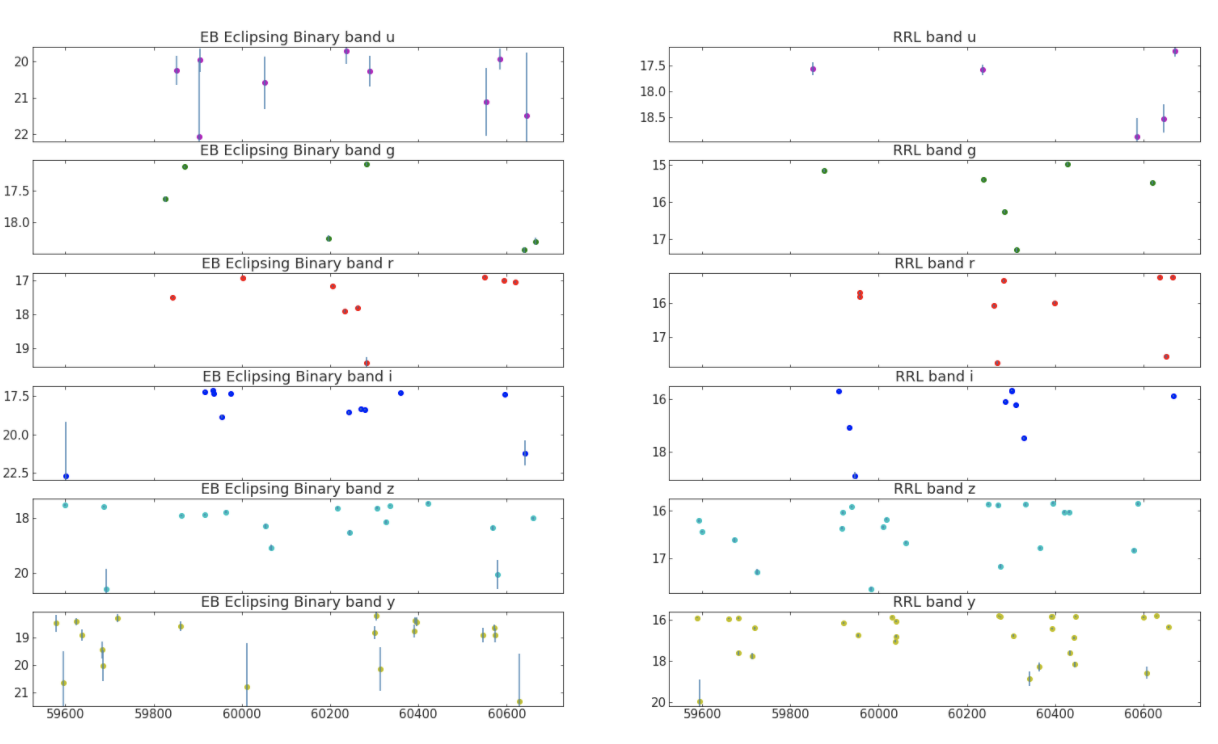

LSST (simulated) EB

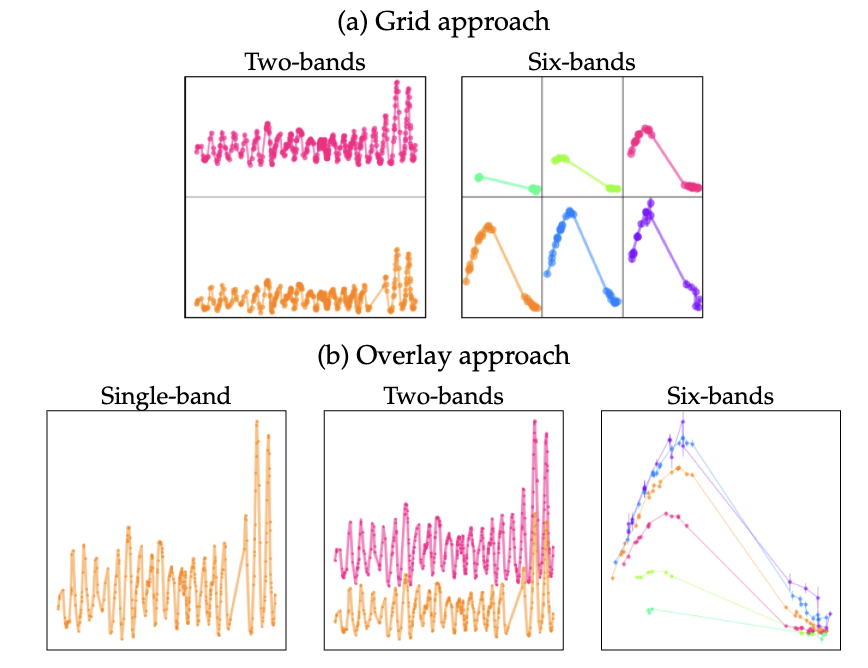

lightcurves make really bad tensors

is transient data AI ready?

- Variable sizes of data vectors

- Uneven sampling

- Different sampling at different wavelengths

- Phase gaps can be months long over ~1 year

- Multiple relevant time scales

- Aleatory and Epistemic Heteroscedastic uncertainties
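
The first few items on this list can be made concrete with a small sketch. It assumes each lightcurve is stored as an array with columns (time, flux, flux error); the function name `pad_lightcurves` and the toy data are hypothetical. Padding to a common length plus a boolean mask is one common workaround, not necessarily the one used in the work shown here.

```python
import numpy as np

def pad_lightcurves(lightcurves, pad_value=0.0):
    """Stack variable-length lightcurves into one (n_objects, max_len, 3) array.

    Each lightcurve has shape (n_obs, 3) with columns (time, flux, flux_err).
    Returns the padded array and a boolean mask that is True where a real
    observation is present.
    """
    max_len = max(len(lc) for lc in lightcurves)
    batch = np.full((len(lightcurves), max_len, 3), pad_value, dtype=float)
    mask = np.zeros((len(lightcurves), max_len), dtype=bool)
    for i, lc in enumerate(lightcurves):
        lc = np.asarray(lc, dtype=float)
        batch[i, : len(lc)] = lc
        mask[i, : len(lc)] = True
    return batch, mask

# Toy data: three objects with different numbers of unevenly spaced epochs
# and a different (heteroscedastic) uncertainty on every point.
rng = np.random.default_rng(0)
lightcurves = []
for n_obs in (5, 12, 8):
    t = np.sort(rng.uniform(0, 100, n_obs))
    err = rng.uniform(0.05, 0.3, n_obs)
    flux = np.exp(-0.5 * ((t - 50) / 15) ** 2) + rng.normal(0, err)
    lightcurves.append(np.column_stack([t, flux, err]))

batch, mask = pad_lightcurves(lightcurves)

# Any statistic must use the mask, or the padding leaks into the result.
mean_flux = (batch[..., 1] * mask).sum(axis=1) / mask.sum(axis=1)
print(batch.shape, mask.sum(axis=1), mean_flux)
```

The padded tensor has a regular shape, but the time stamps are still irregular and the uncertainties still differ point by point, so padding addresses only the first item on the list.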

Willow Fox Fortino

UDelaware

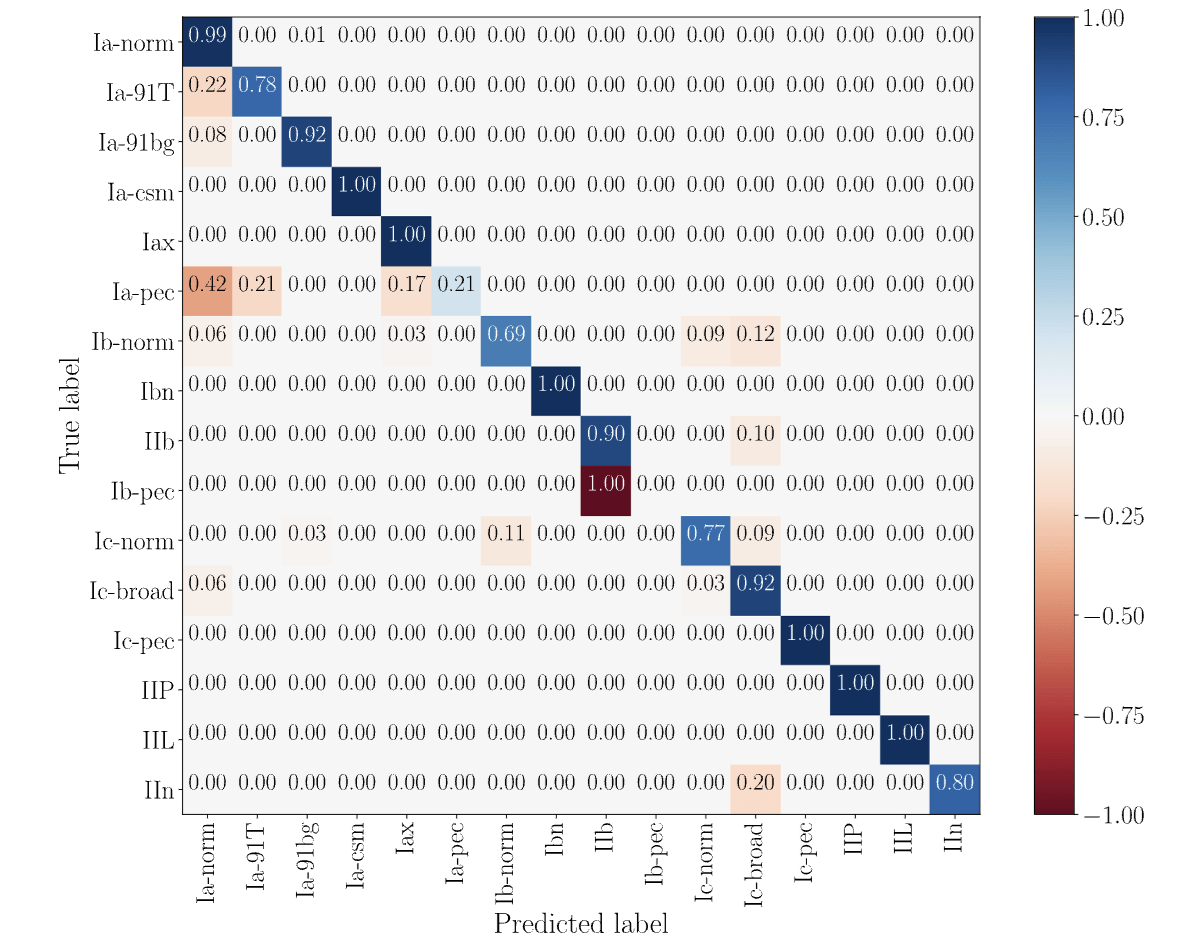

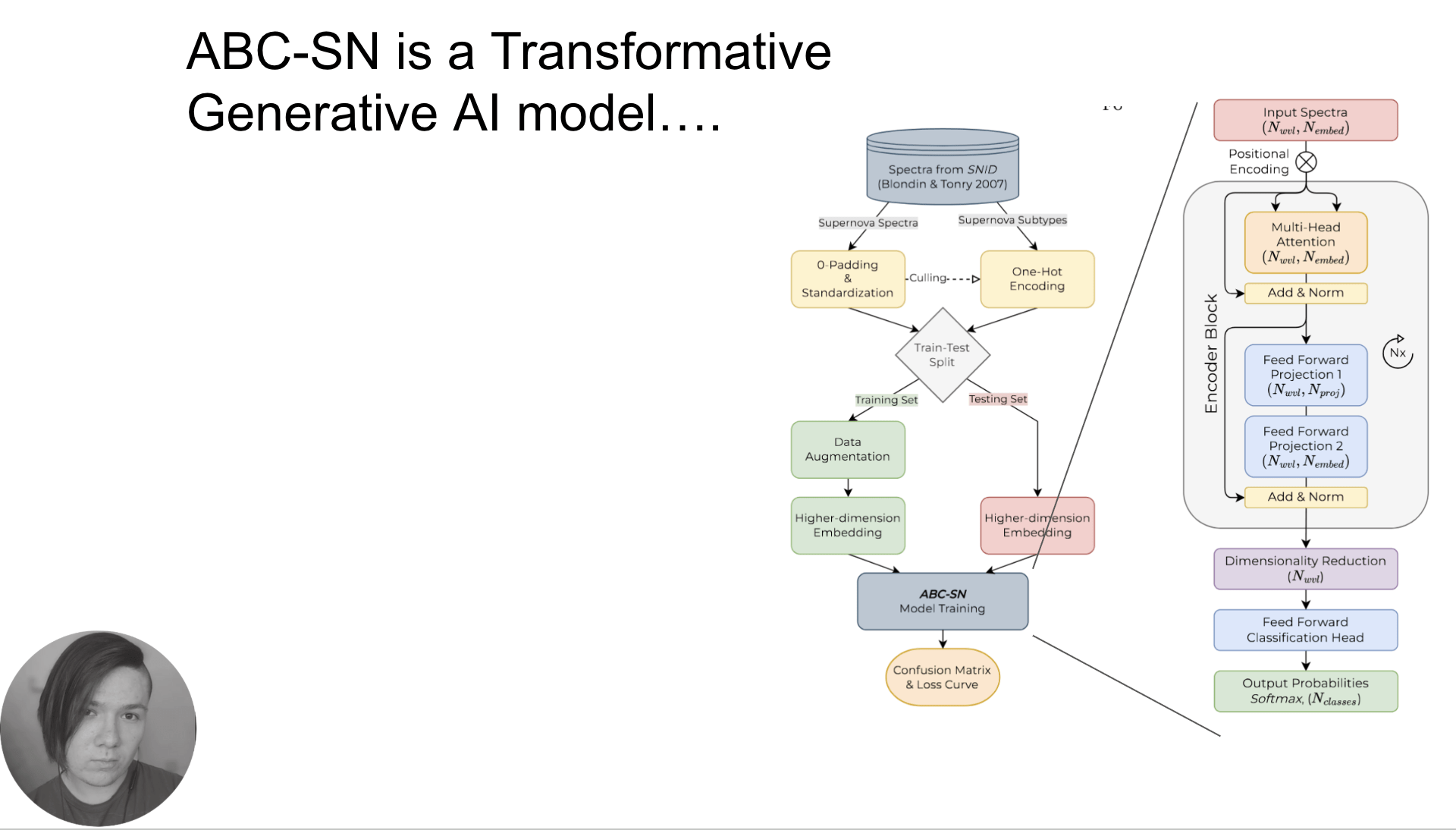

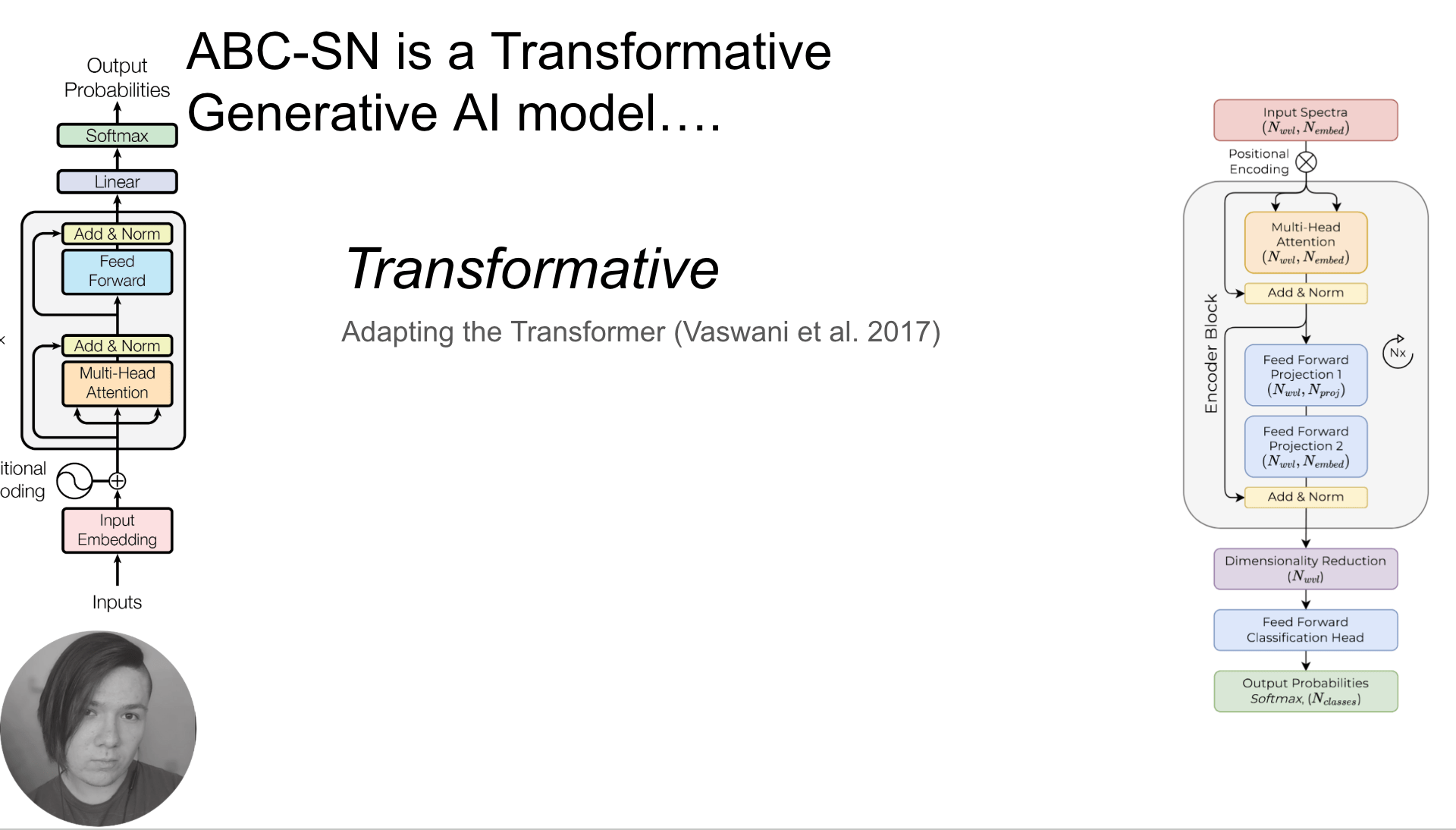

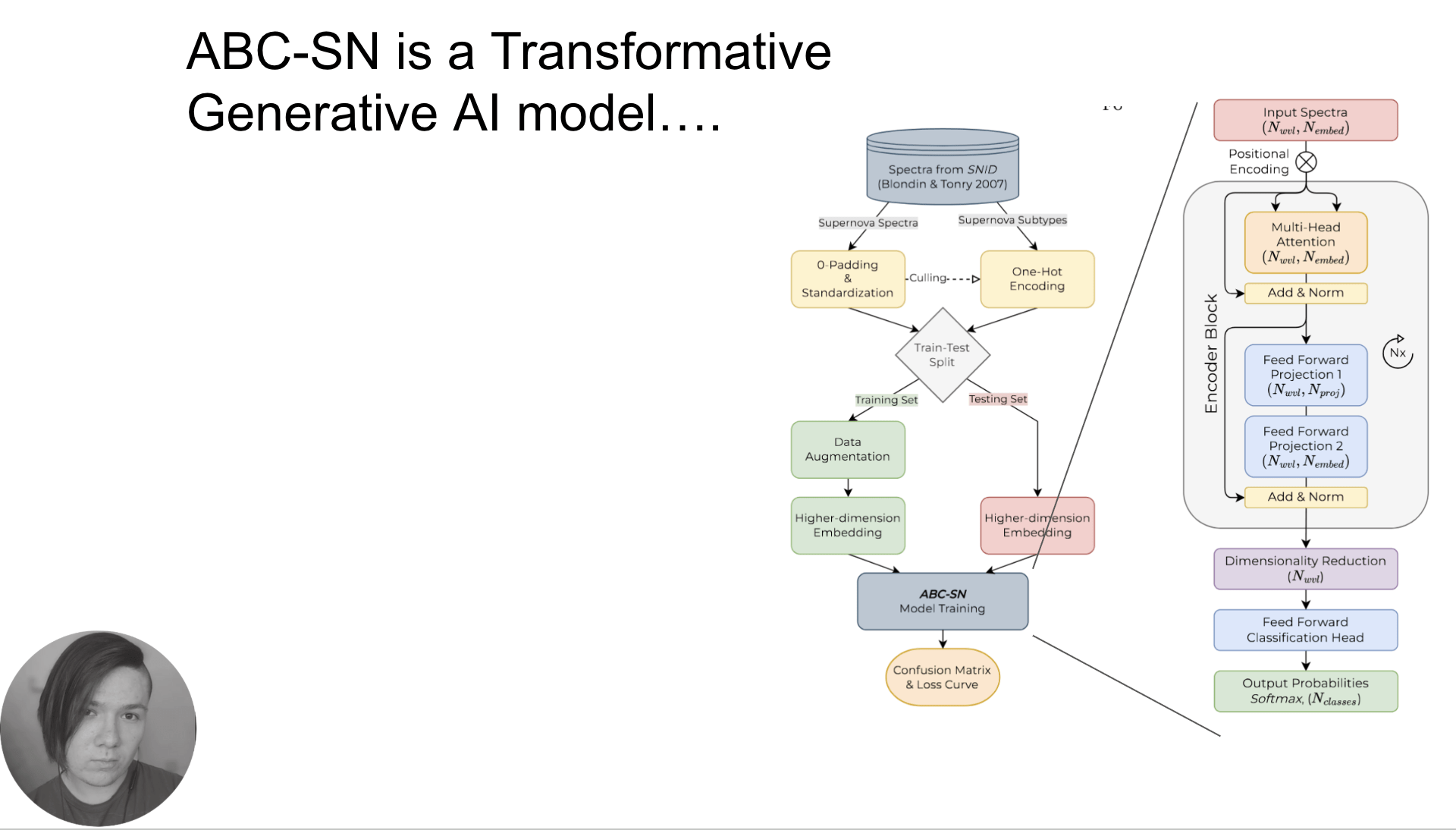

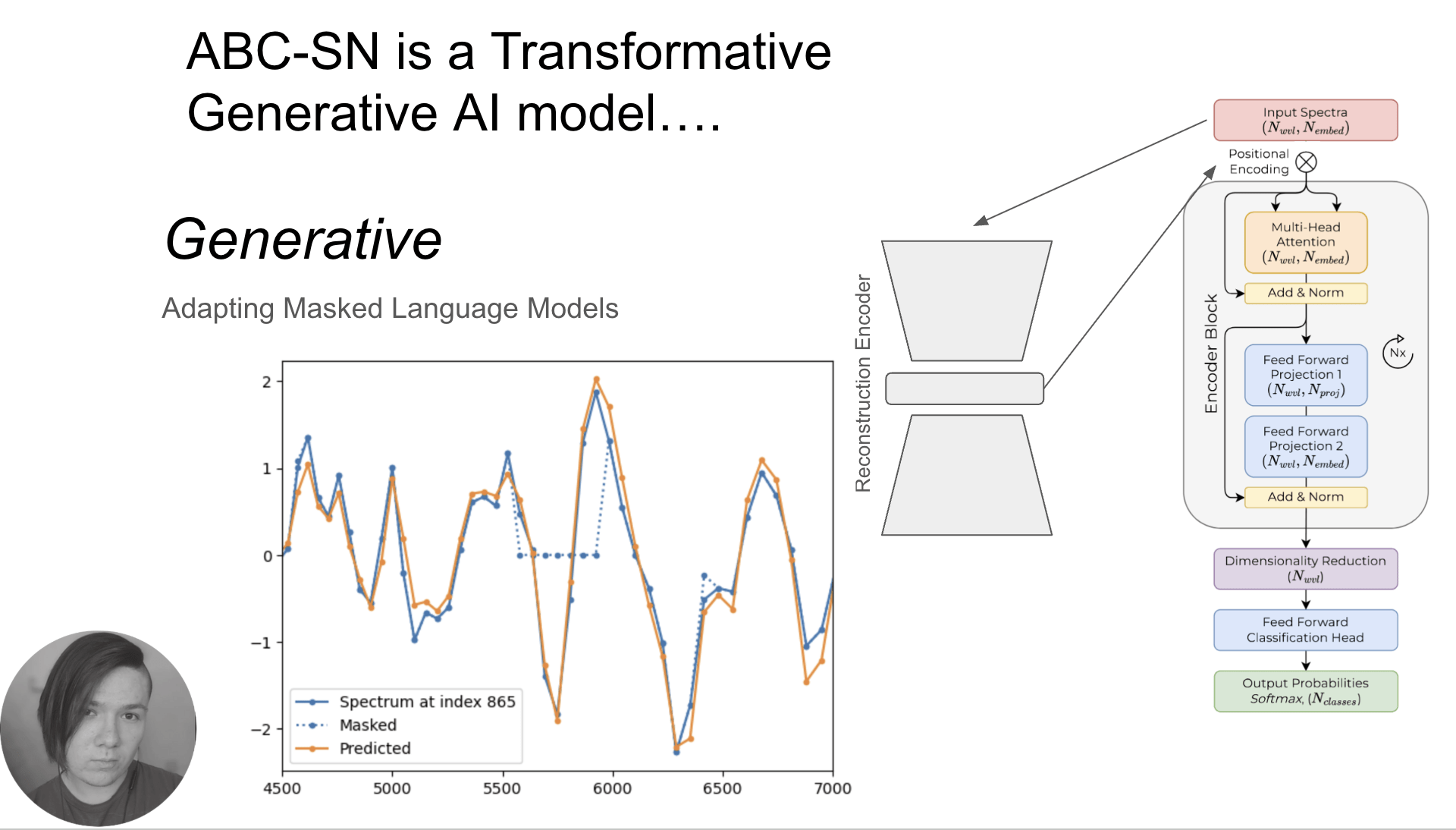

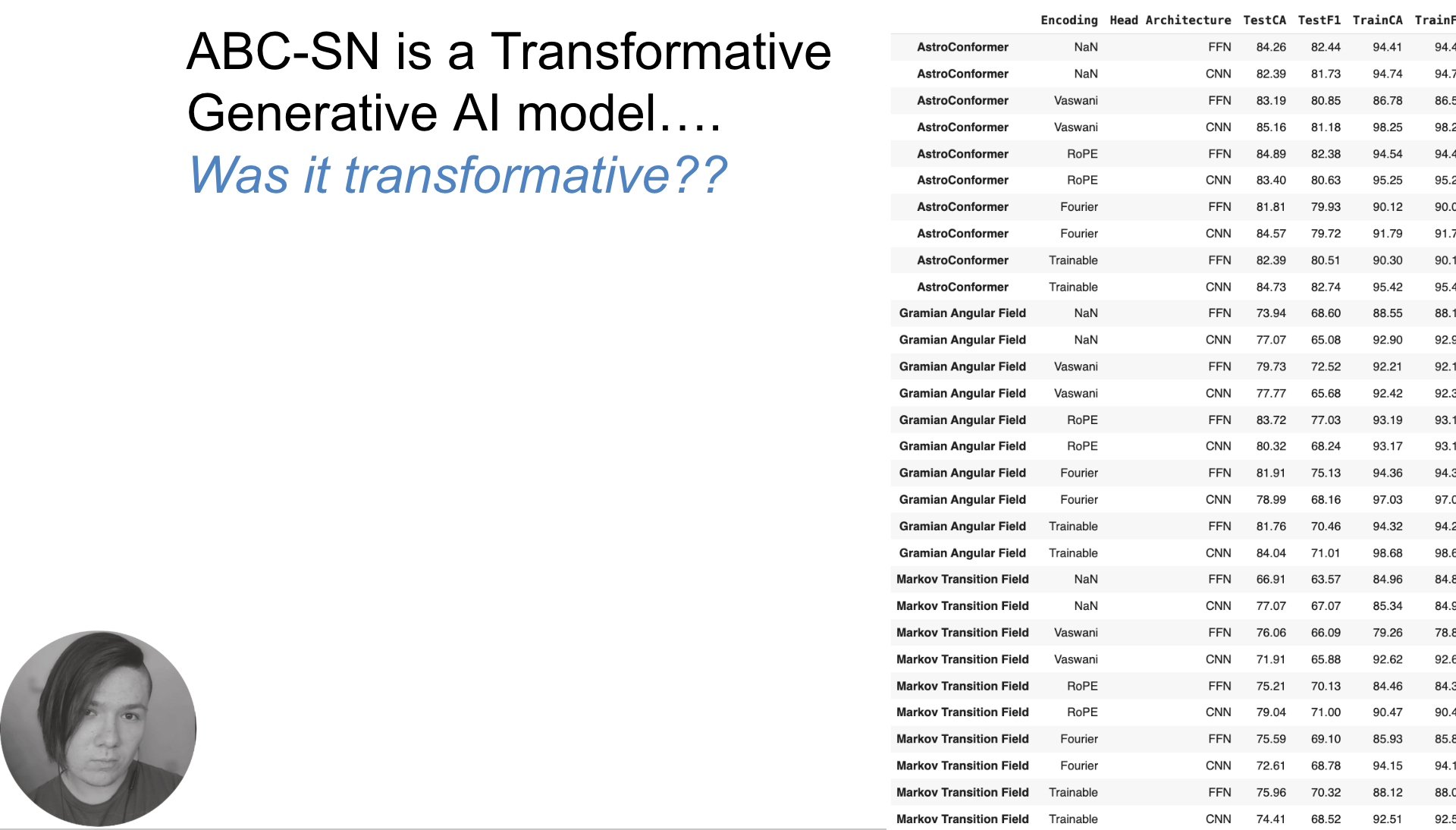

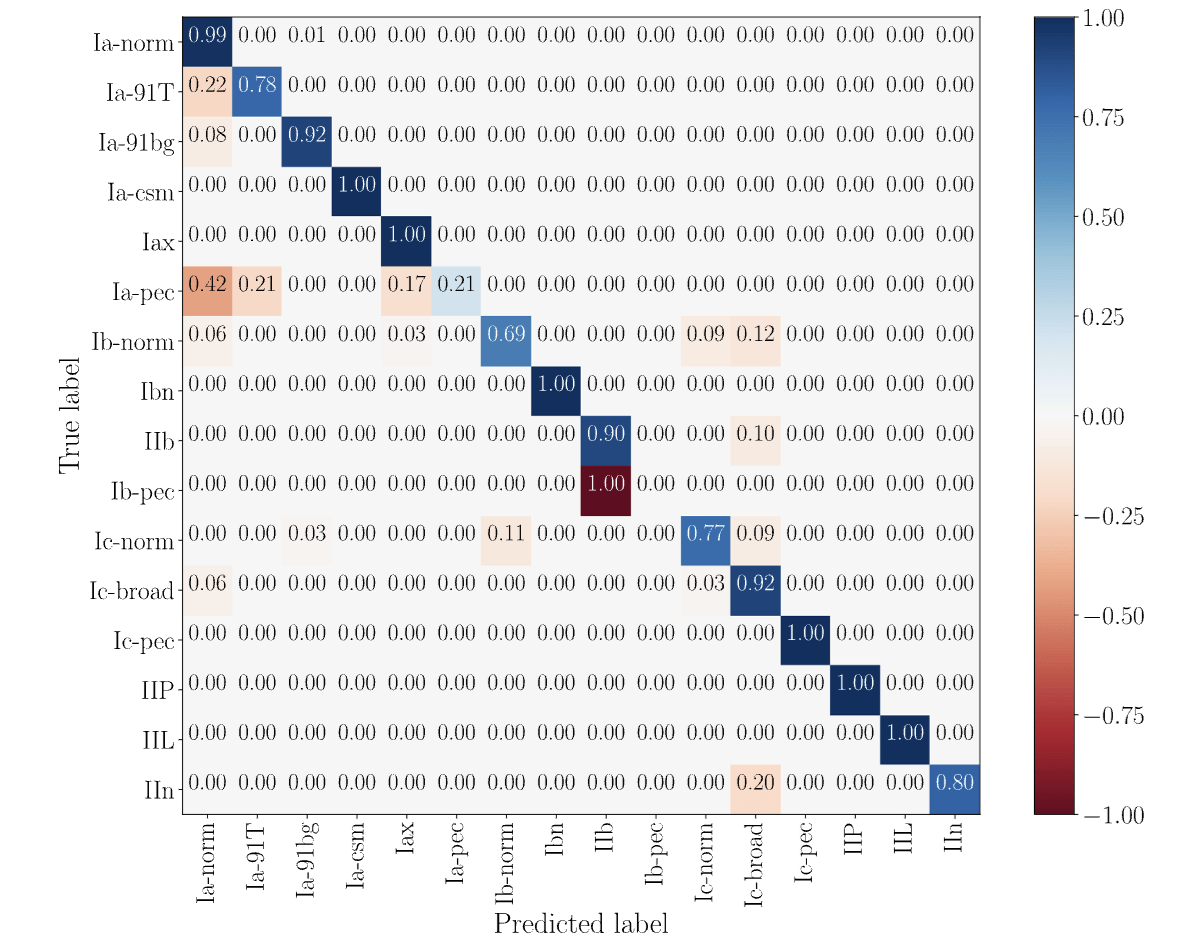

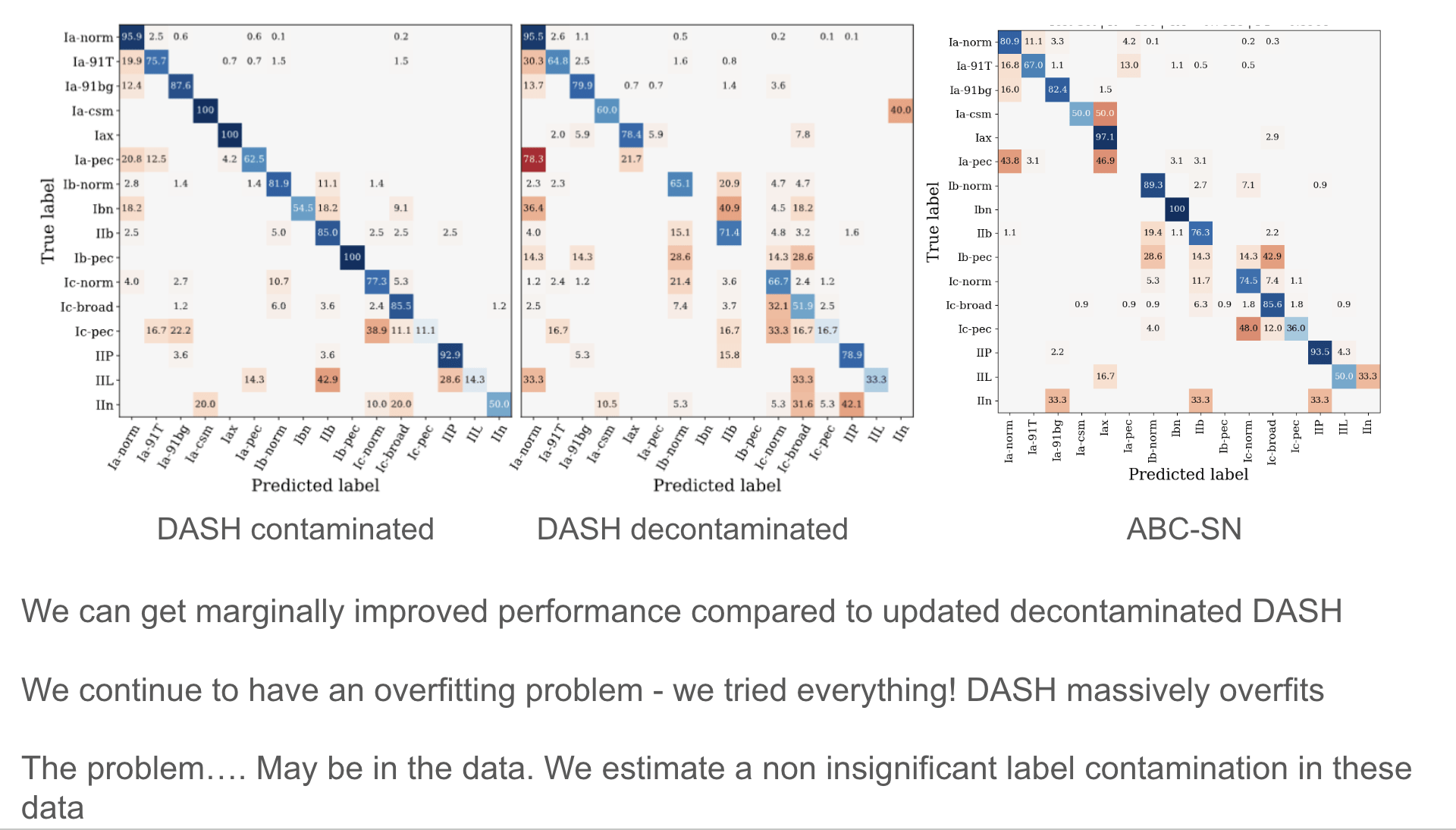

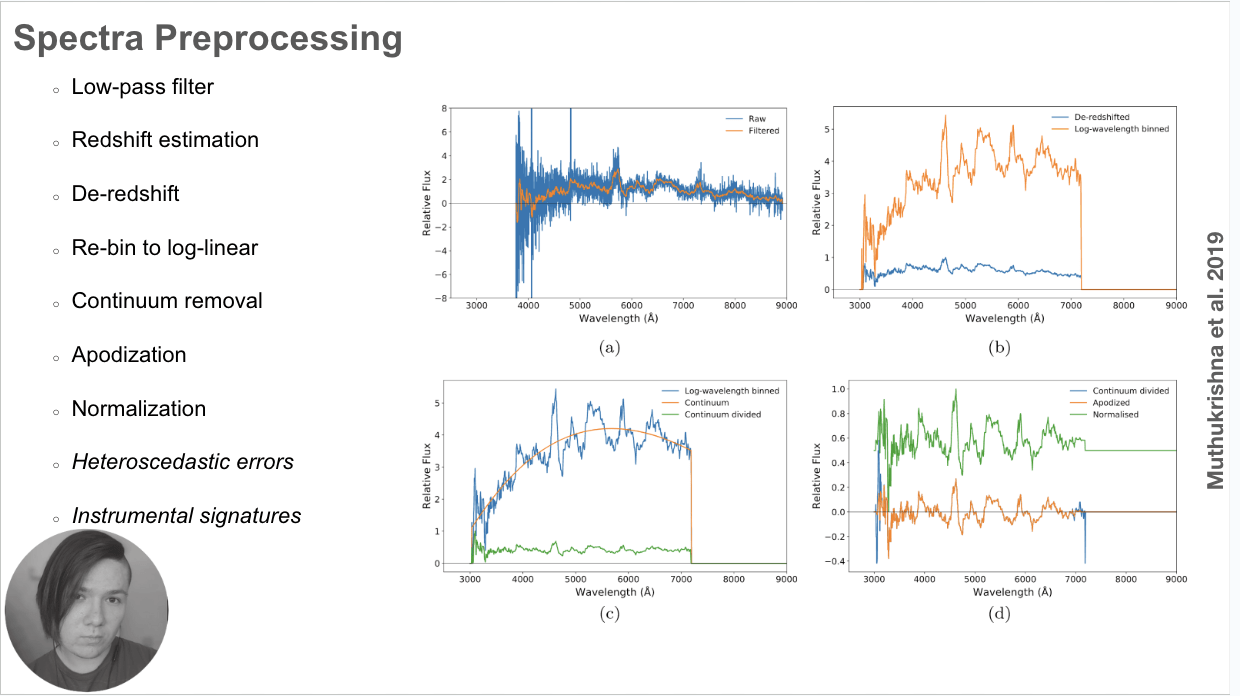

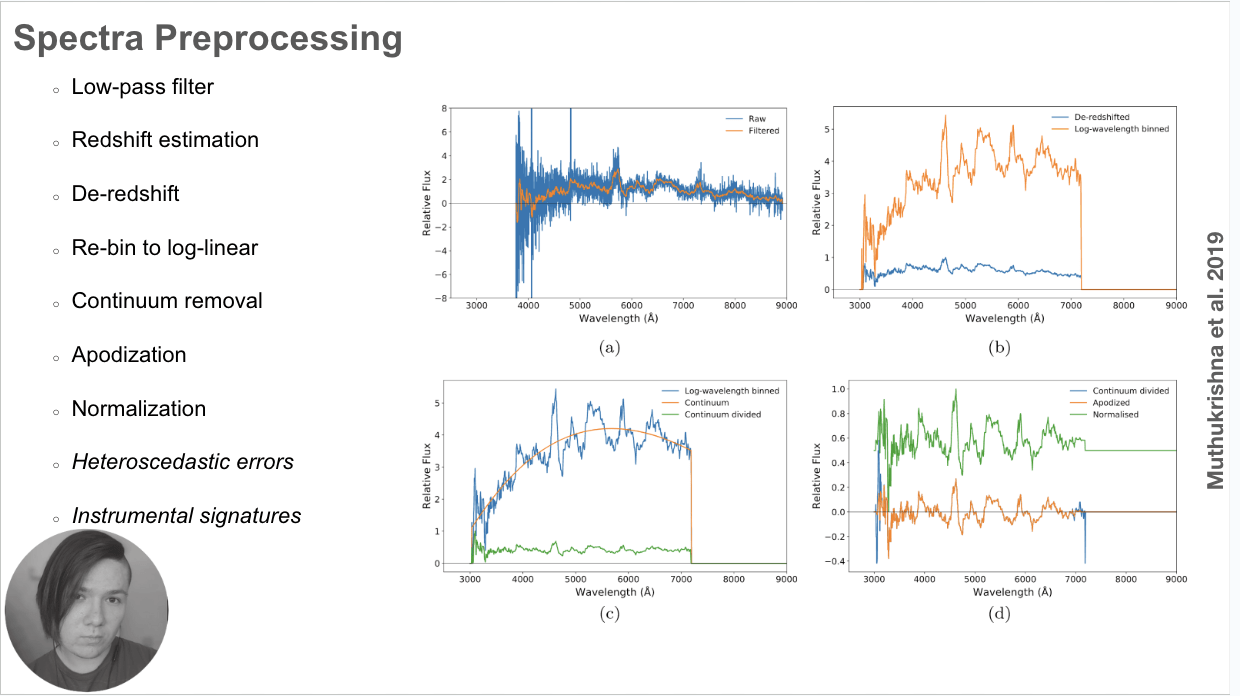

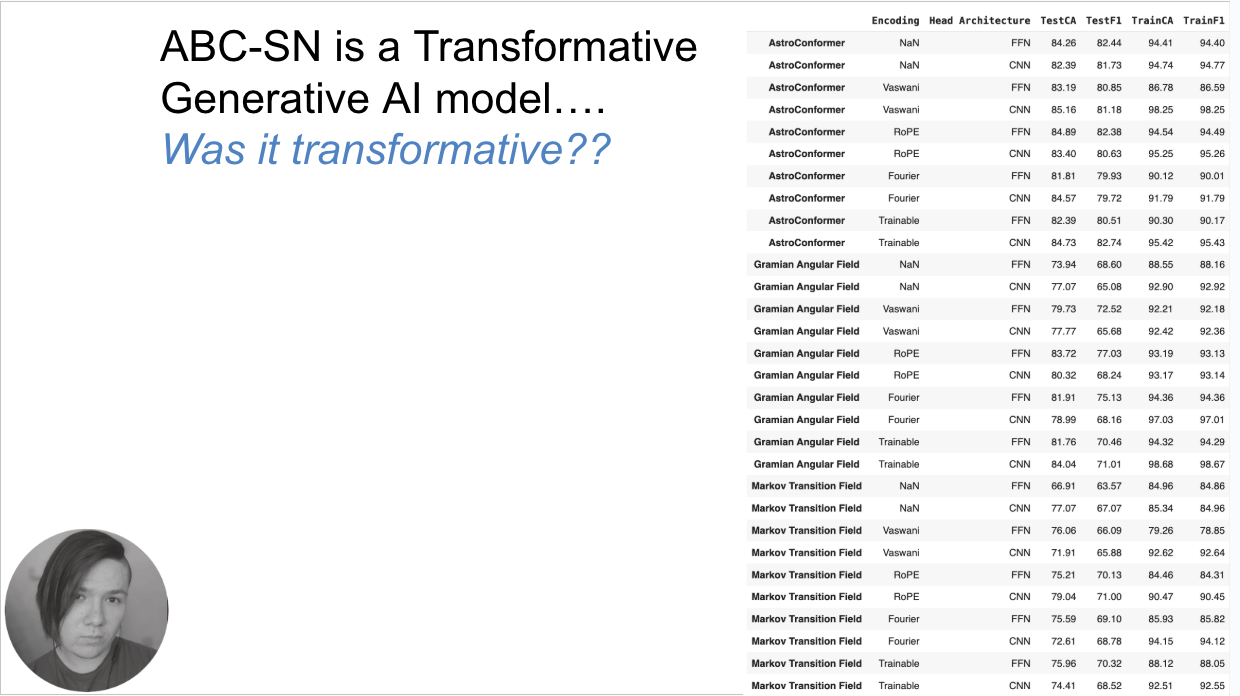

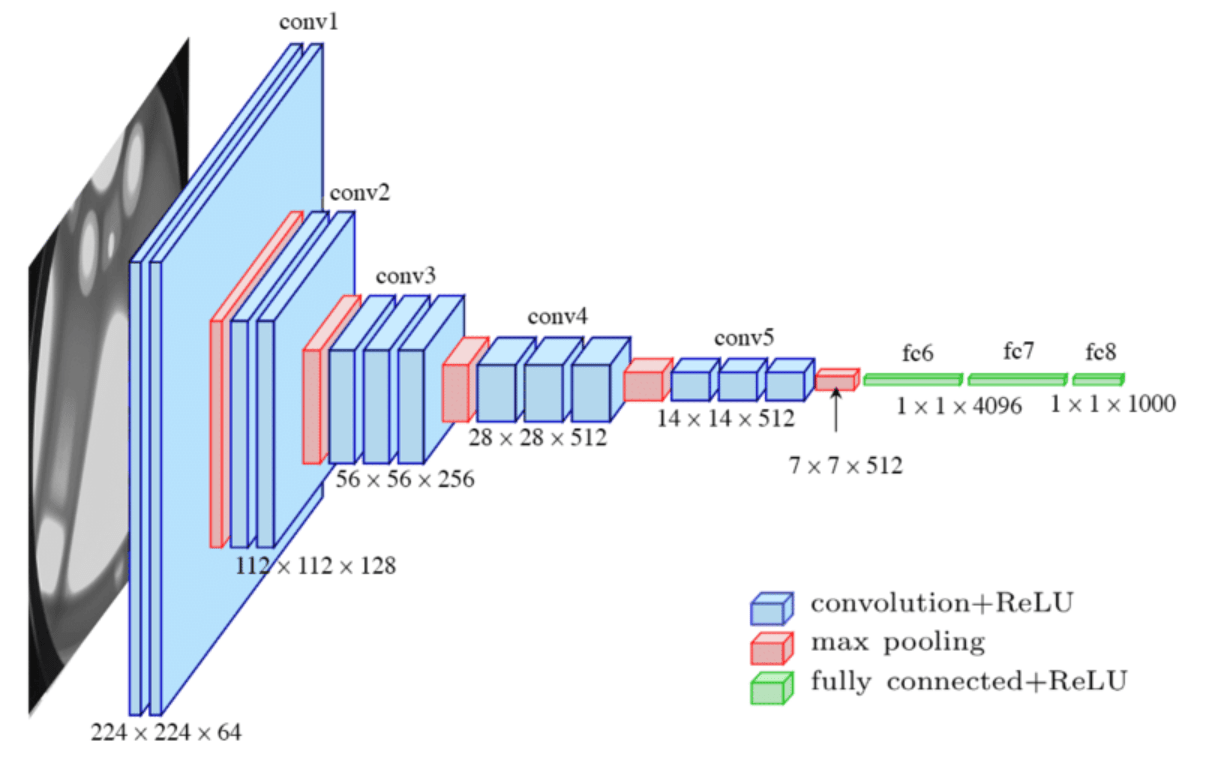

Optimal deep learning architectures for transients' spectral classification

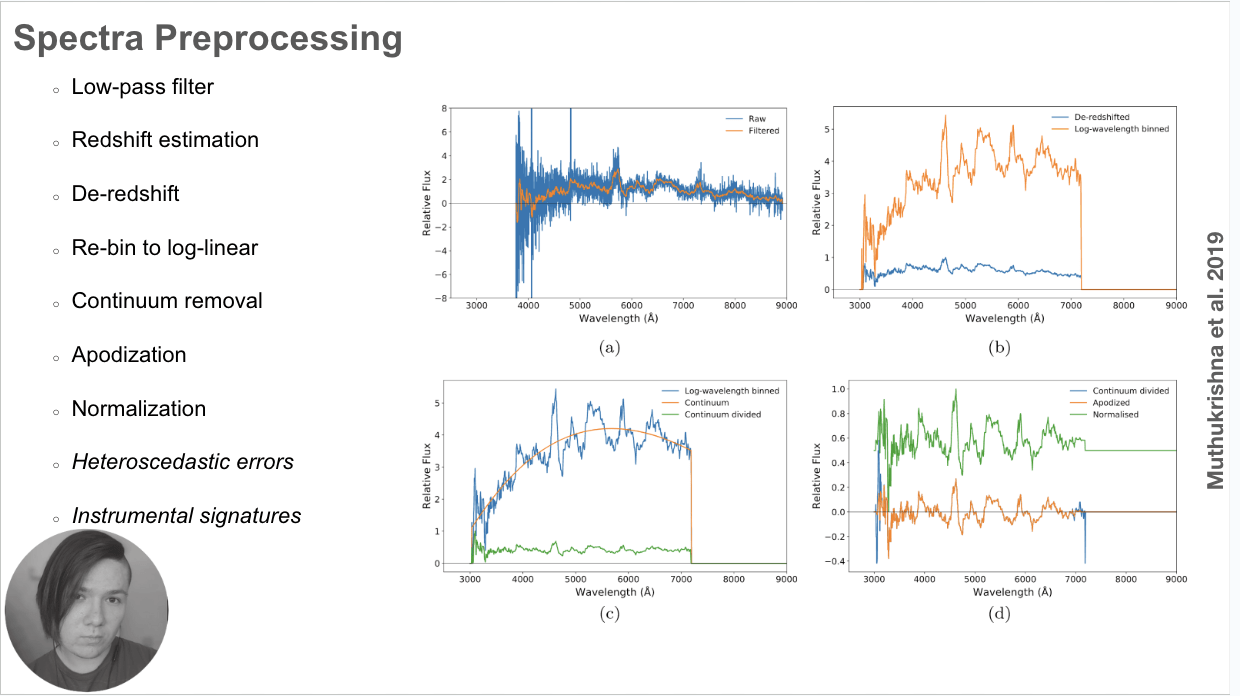

As seen in Muthukrishna+2019

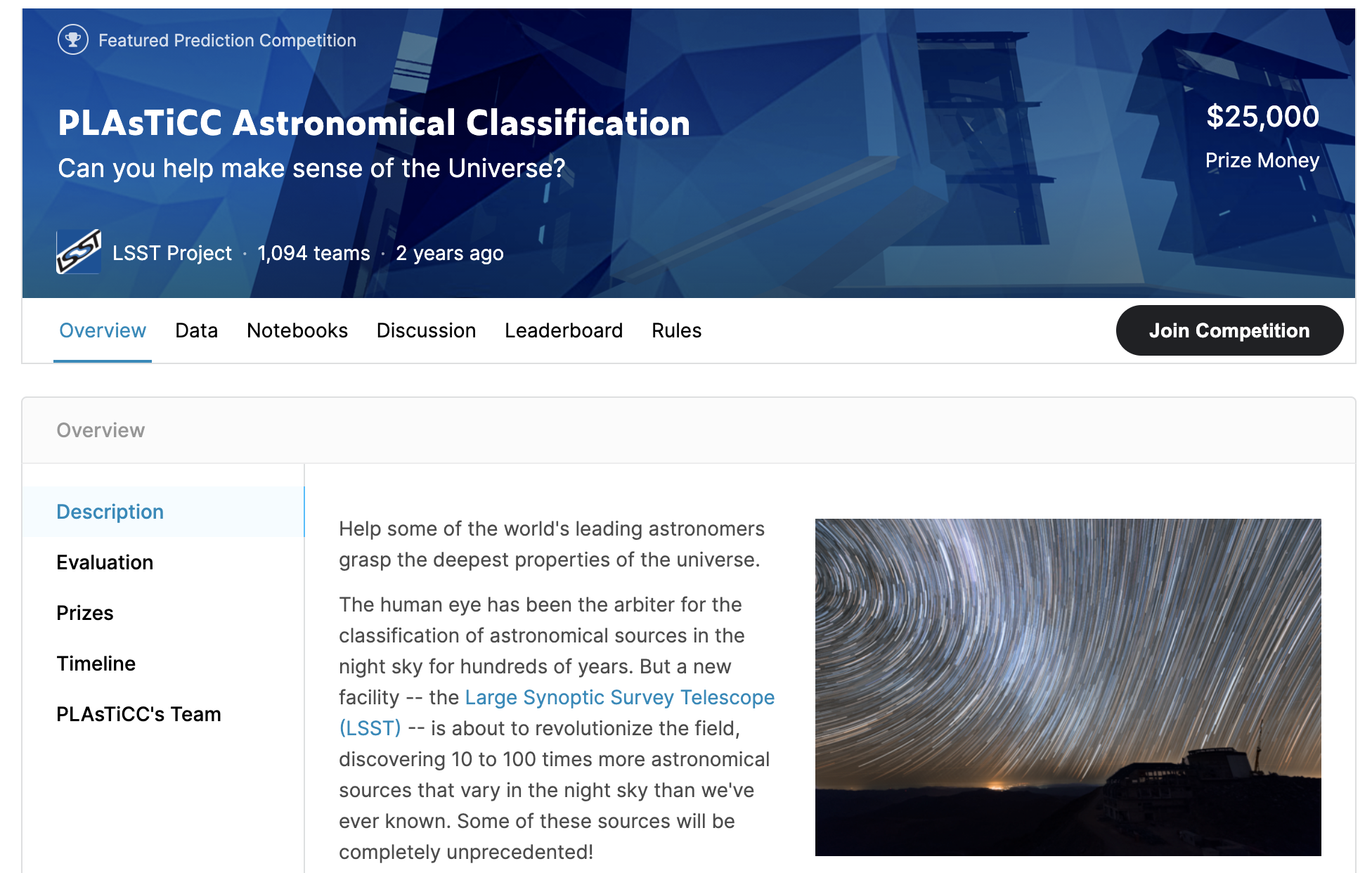

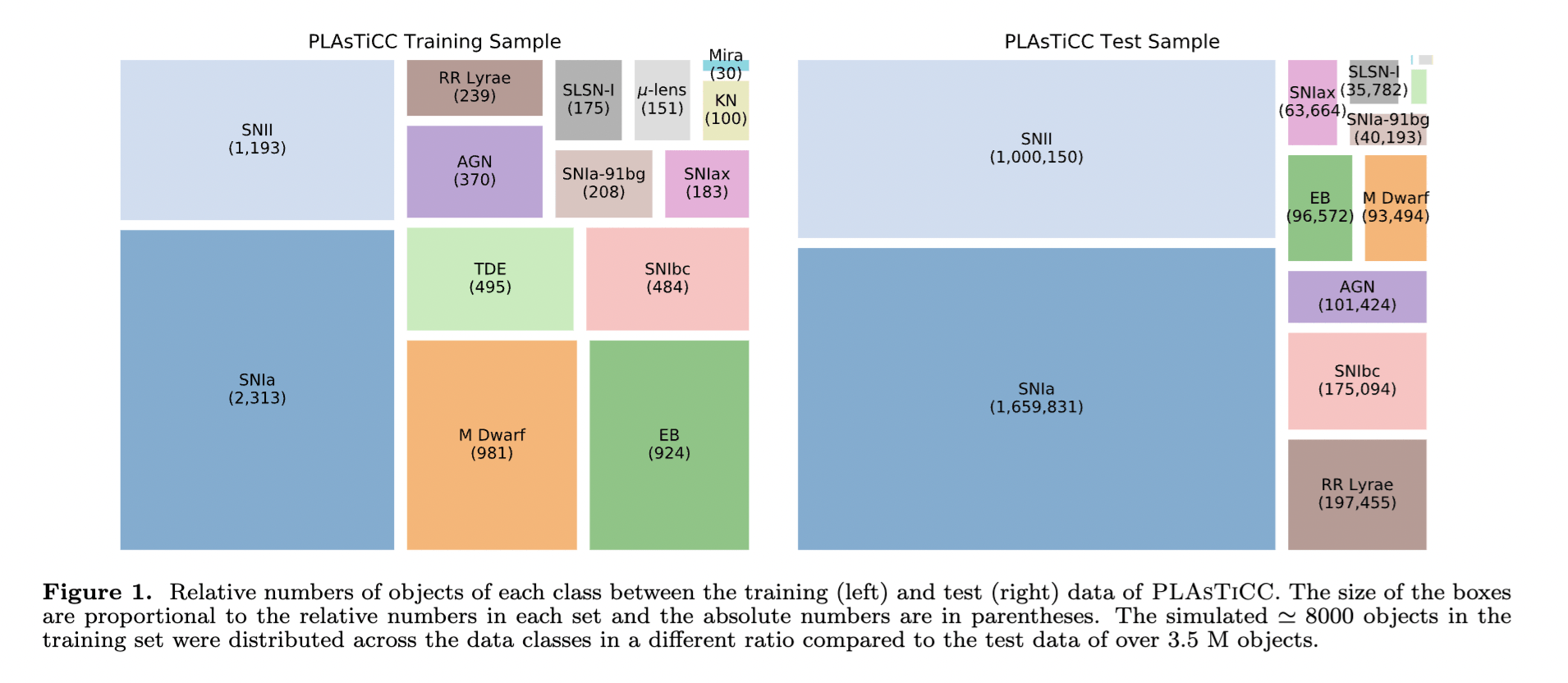

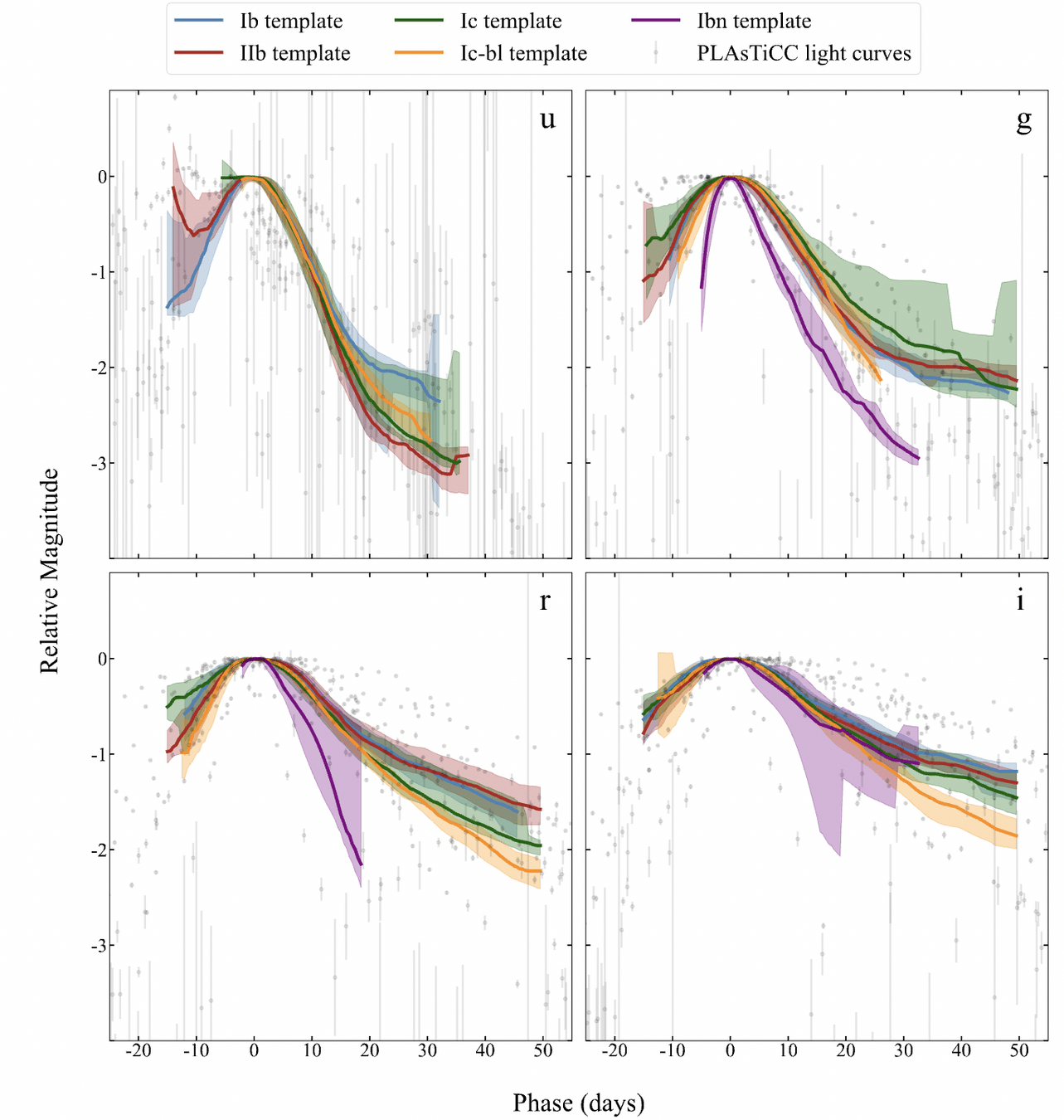

The PLAsTiCC challenge winner, Kyle Boone, was a grad student at Berkeley, and did not use a Neural Network!

Hlozek et al, 2020

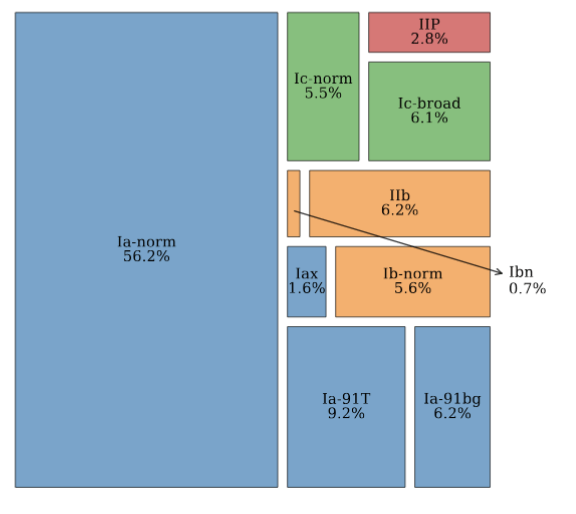

DATA CURATION IS THE BOTTLENECK

models contributed by the community

- came in different formats (spectra, lightcurves, theoretical, data-driven)

- the people who contributed the models were included in 1 paper at best

- incompleteness

- systematics

- imbalance

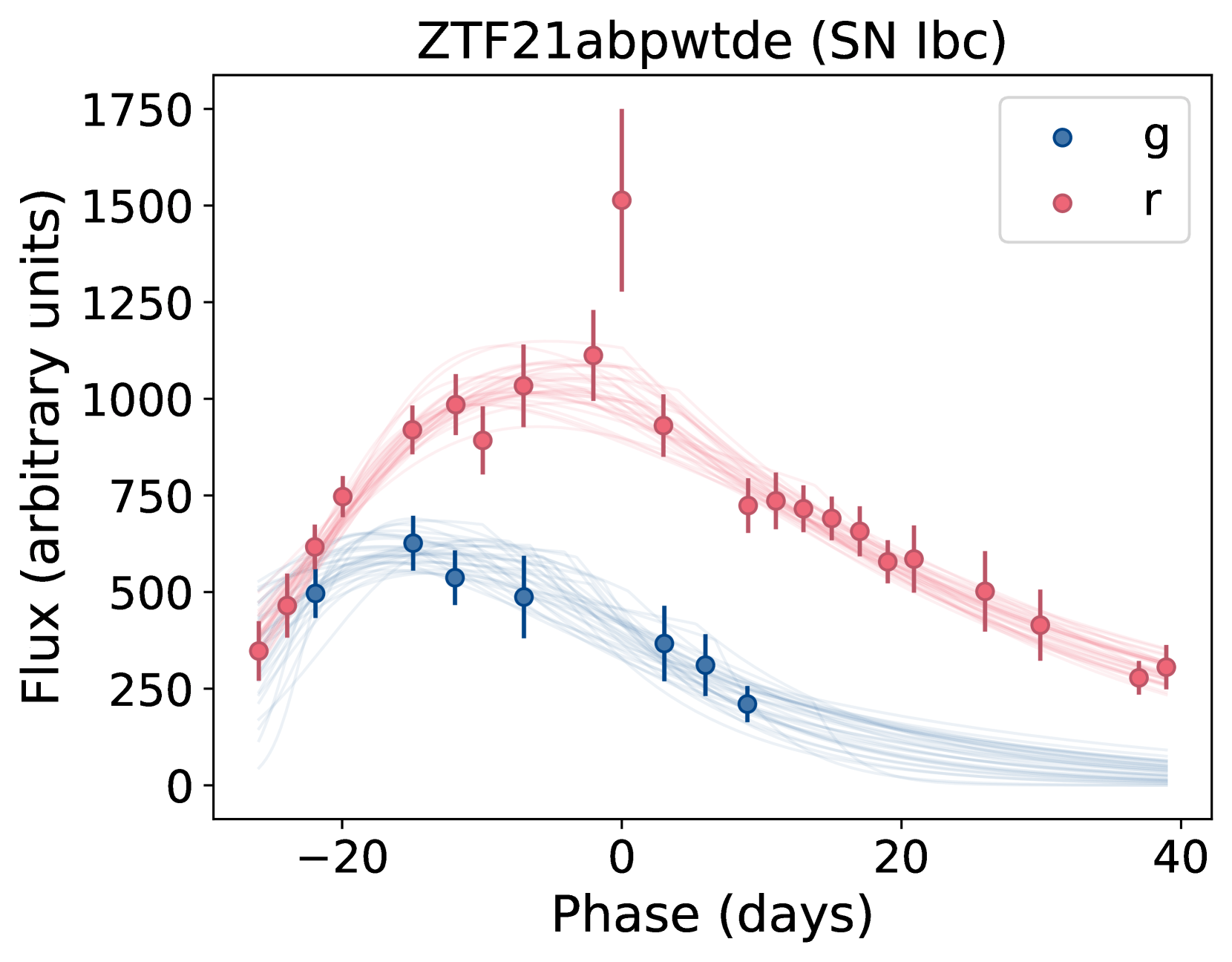

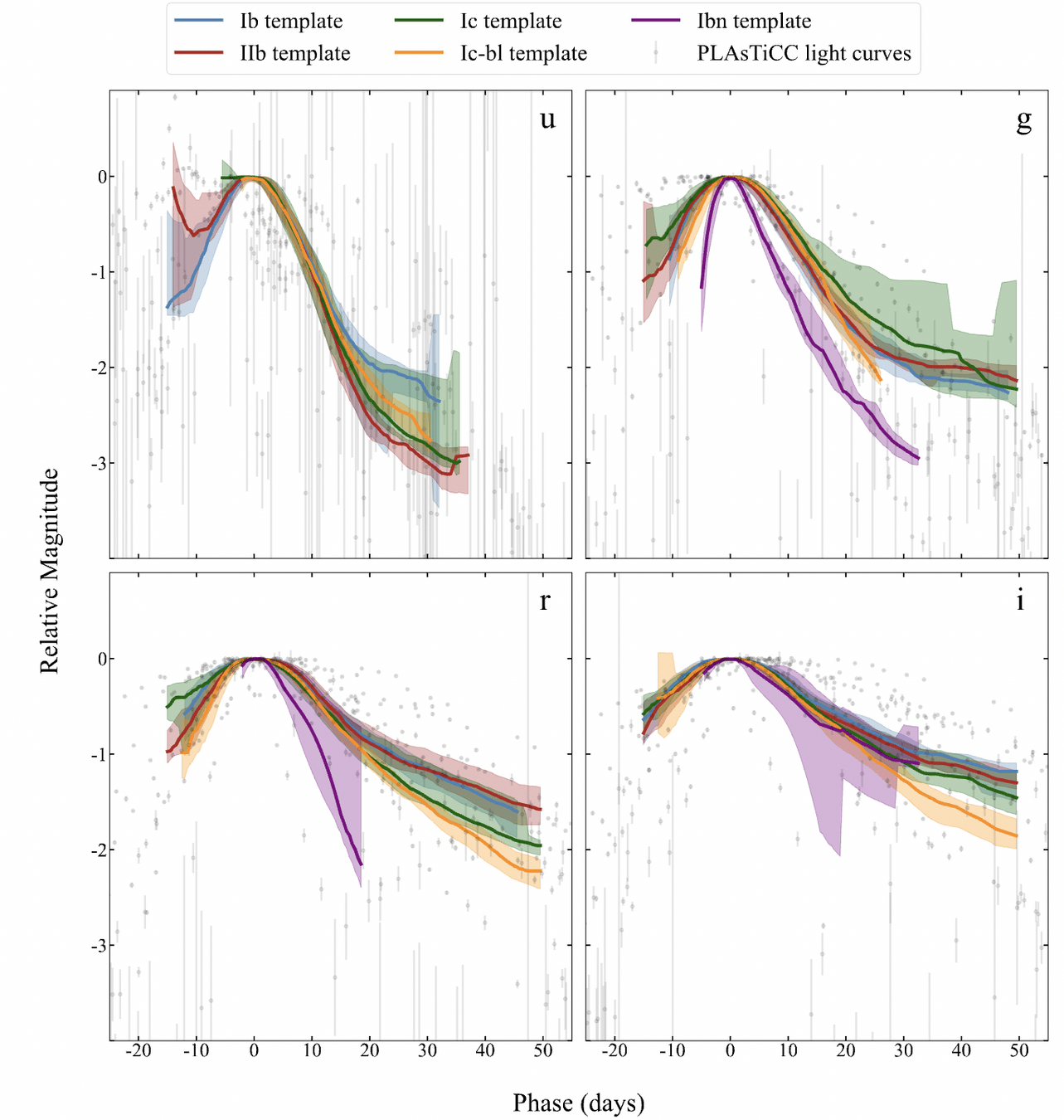

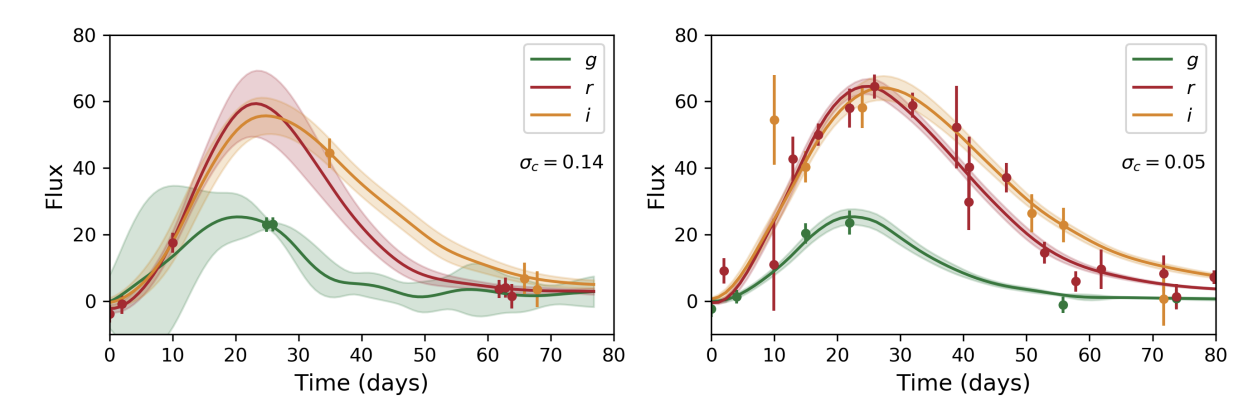

Khakpash+ 2024 showed that the models were biased for SN Ibc

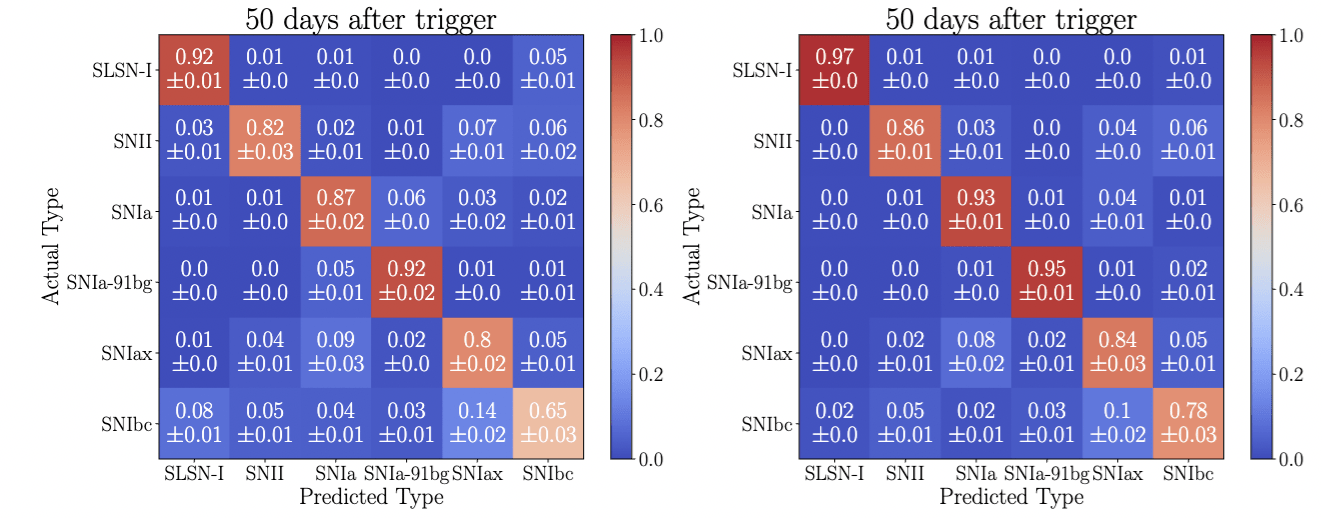

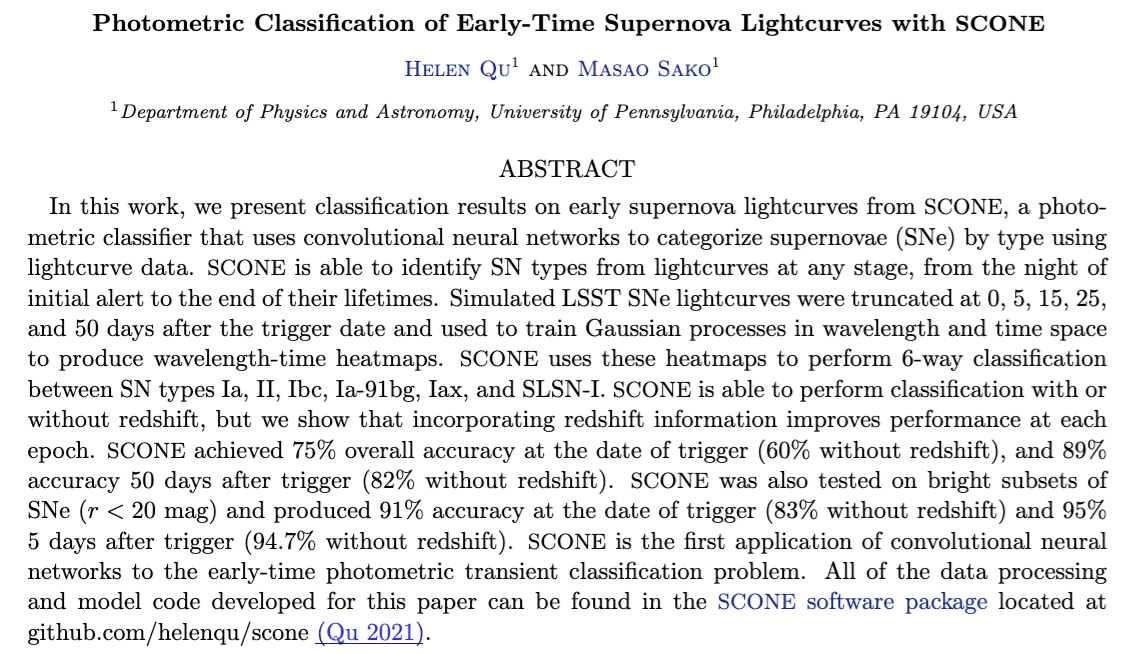

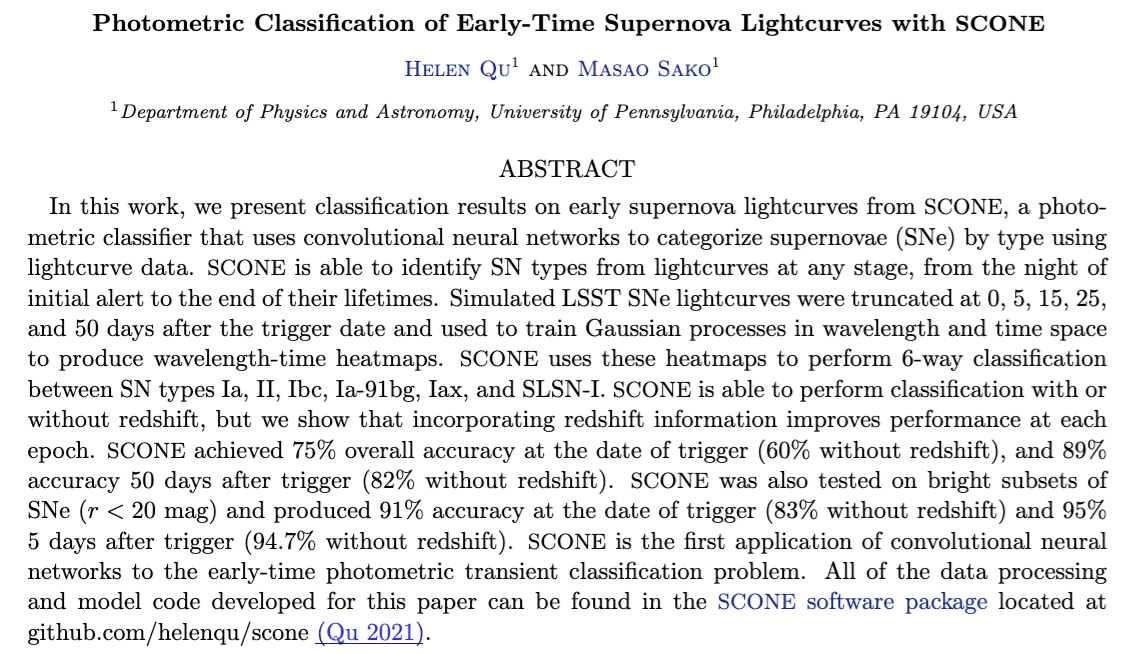

AVOCADO, SCONE: all these models were trained on a biased dataset and are currently being used for classification

Ibc data-driven templates vs PLAsTiCC

Dr. Somayeh Khakpash

LSSTC Catalyst Fellow, Rutgers

on the job market!

Lochner et al 2018

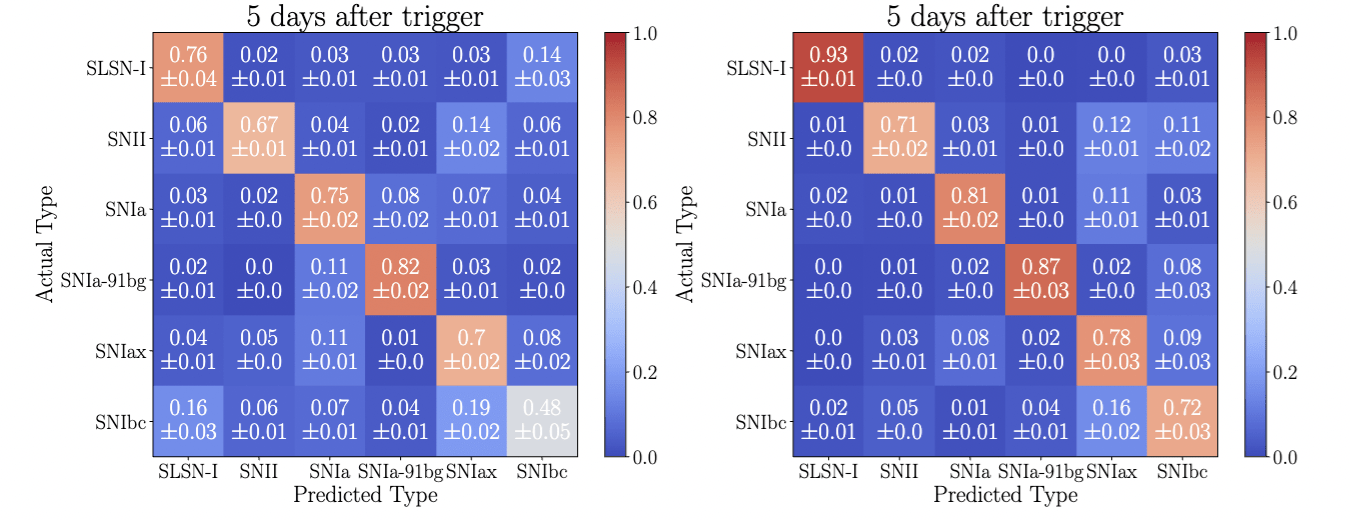

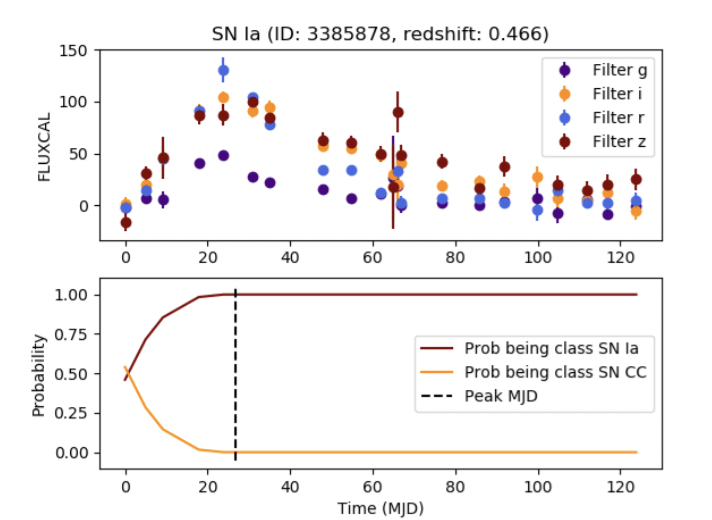

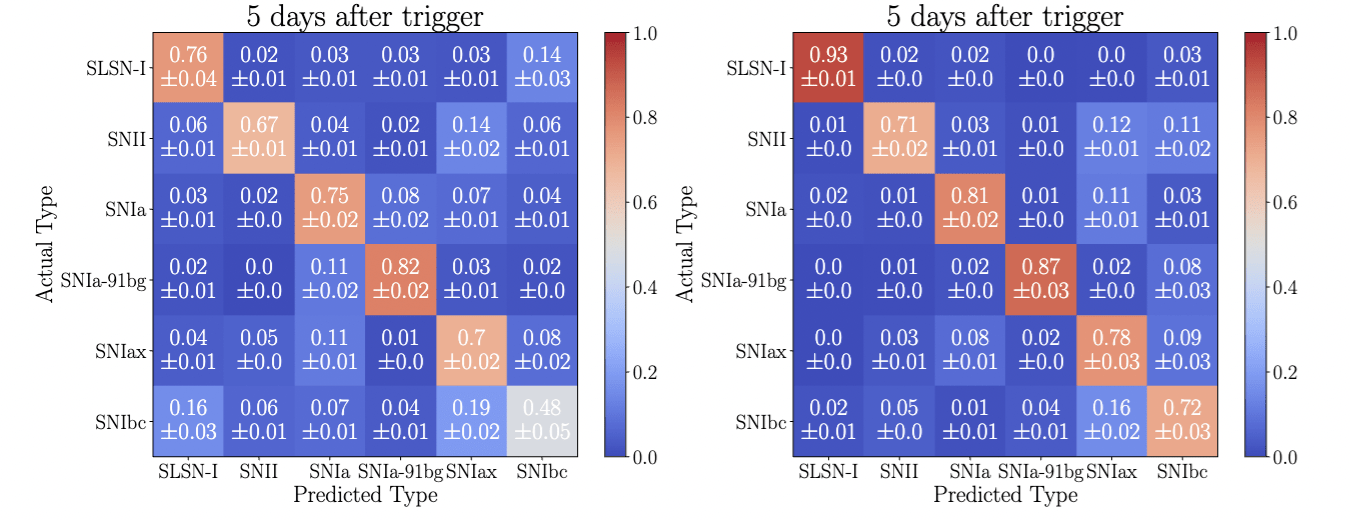

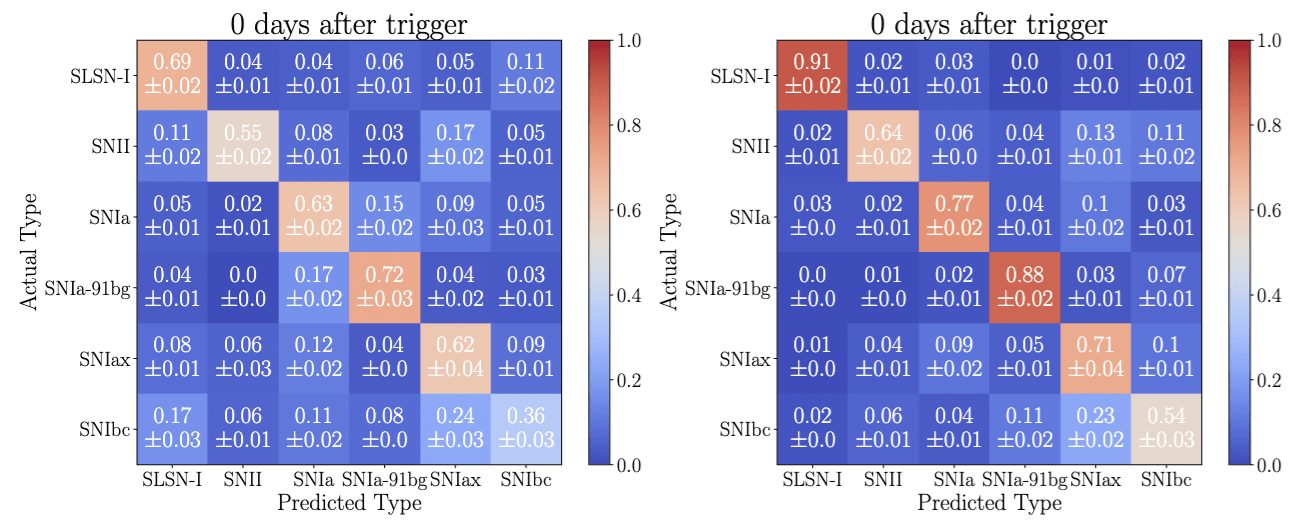

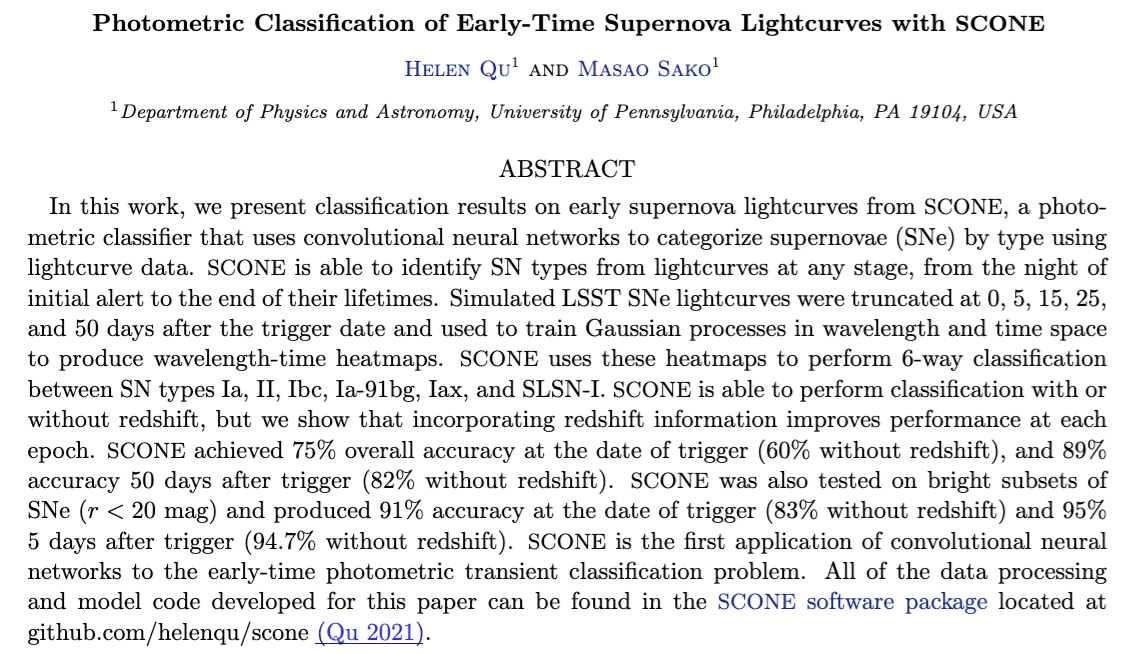

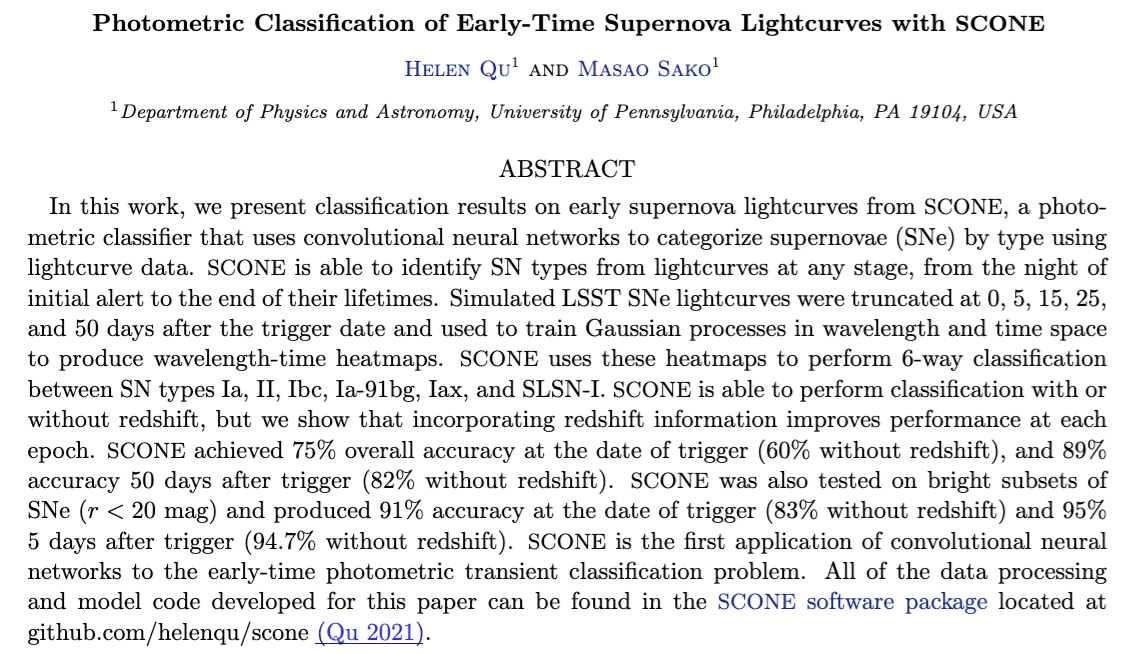

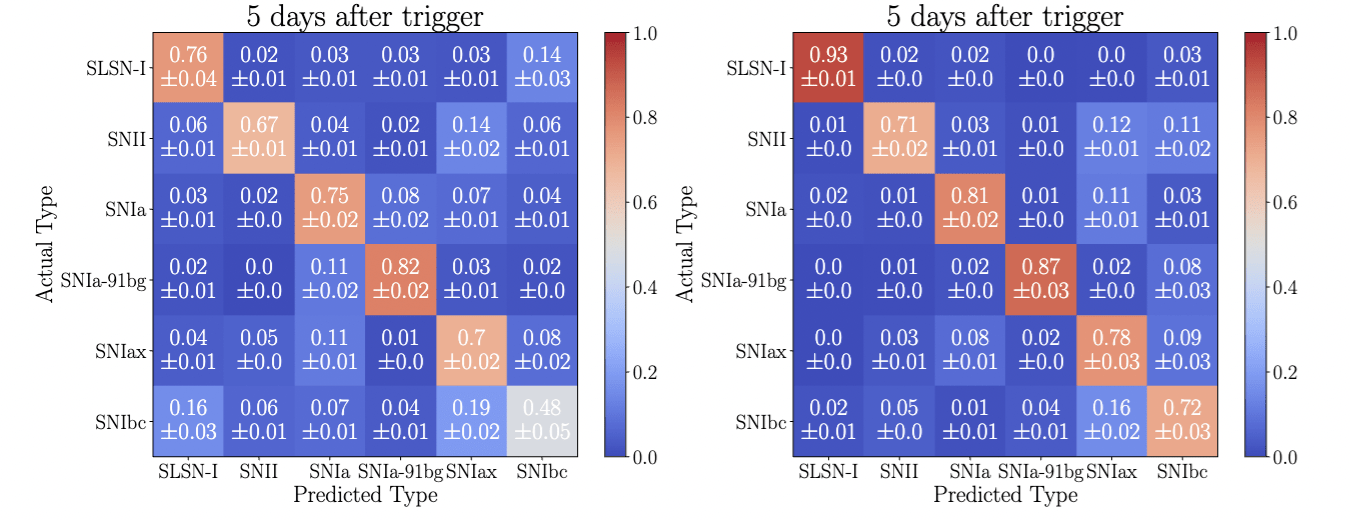

Classification from sparse data: Lightcurves

without redshift

with redshift

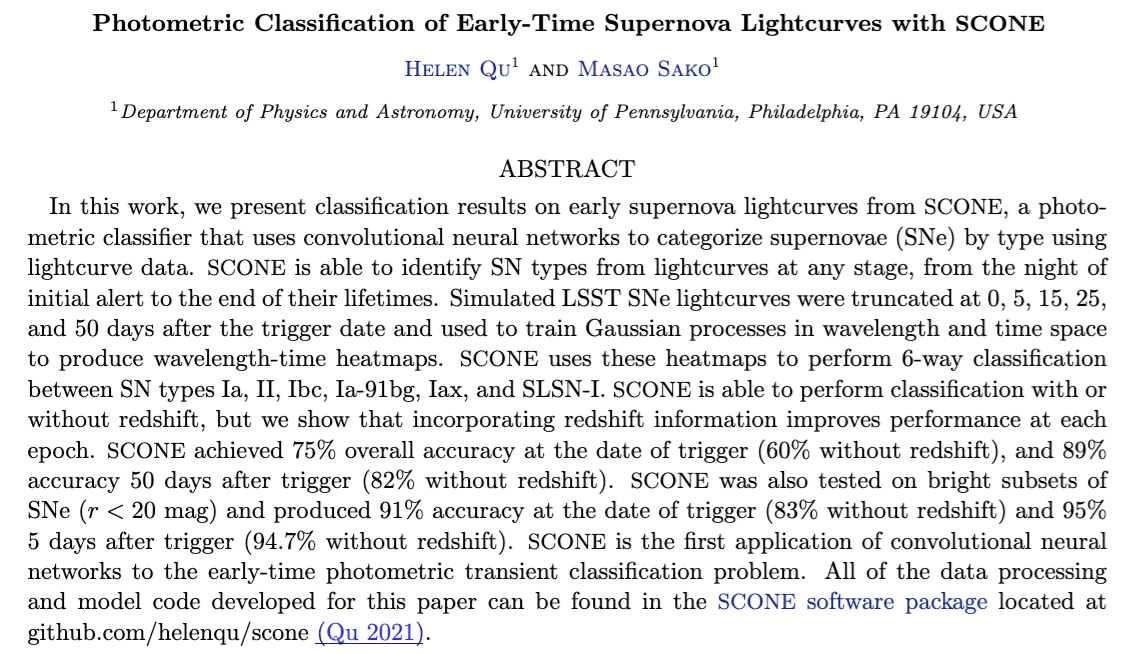

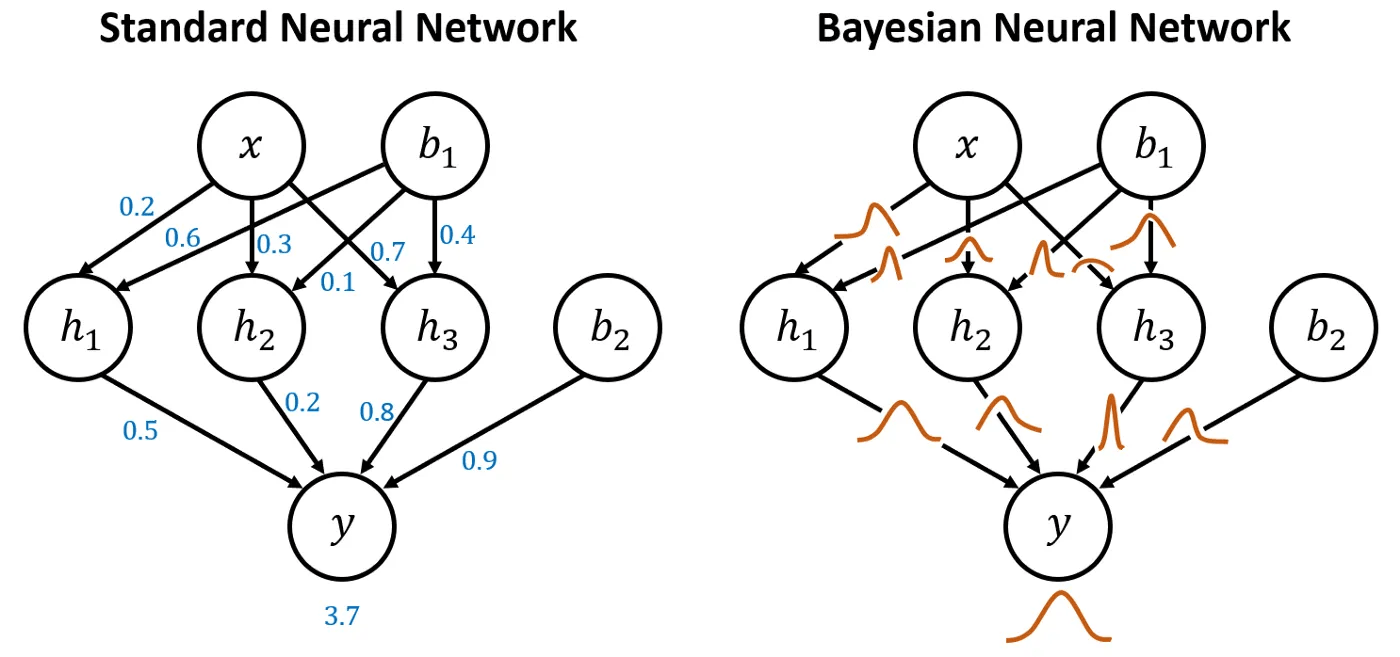

Methodological issues with these approaches

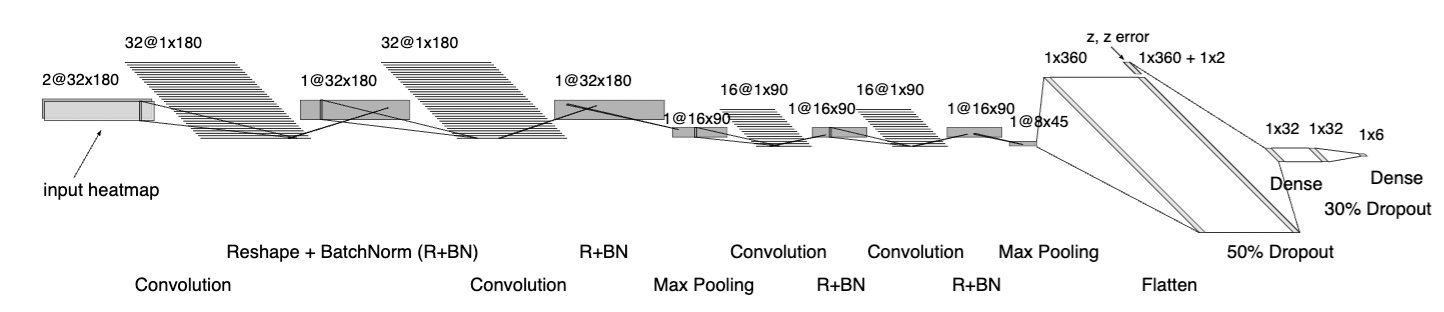

CNNs are not designed to ingest uncertainties. Passing them as an image layer "works", but it is not clear why, since the convolutions on the flux and error spaces are averaged after the first layer.
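
A minimal sketch of why this is murky, assuming the flux and its uncertainty are stacked as two input channels of a 1D convolution (the array names and filter weights are made up for illustration): a convolutional filter adds up the per-channel responses, so after the first layer the flux and error contributions are mixed into a single feature map.

```python
import numpy as np

def conv_layer(x, w):
    """One output filter of a 1D conv layer (no bias, no activation).

    x: (n_channels, n_samples) input, w: (n_channels, kernel_size) weights.
    Each output sample is the dot product of the weights with a window
    that spans ALL input channels at once.
    """
    n_ch, n = x.shape
    k = w.shape[1]
    return np.array([np.sum(x[:, i:i + k] * w) for i in range(n - k + 1)])

rng = np.random.default_rng(1)
flux = rng.normal(1.0, 0.2, size=30)
flux_err = rng.uniform(0.05, 0.3, size=30)
x = np.stack([flux, flux_err])   # channel 0: flux, channel 1: uncertainty
w = rng.normal(size=(2, 3))      # one filter, kernel size 3

mixed = conv_layer(x, w)

# The same output, written as the sum of two independent per-channel filters.
flux_part = np.correlate(flux, w[0], mode="valid")
err_part = np.correlate(flux_err, w[1], mode="valid")

print(np.allclose(mixed, flux_part + err_part))
```

Nothing in this operation treats the second channel as an uncertainty on the first: the network sees a linear combination of the two and has to learn any error-aware behavior implicitly.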

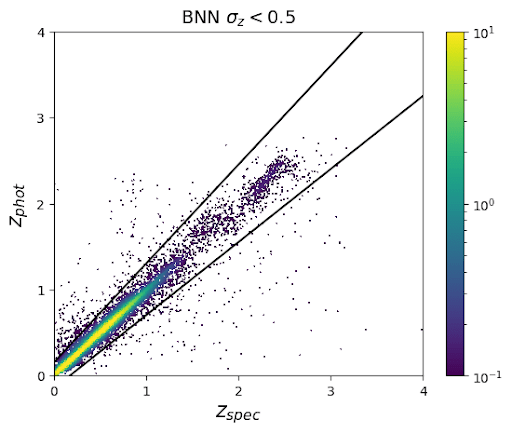

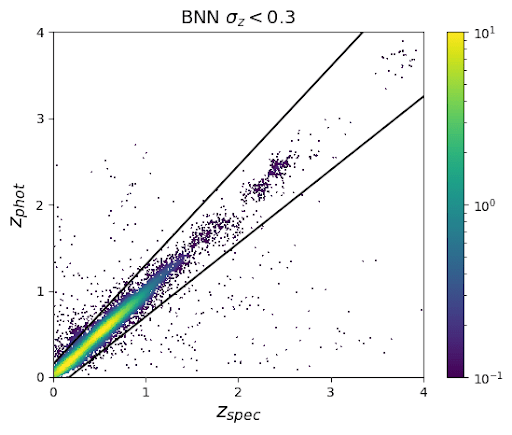

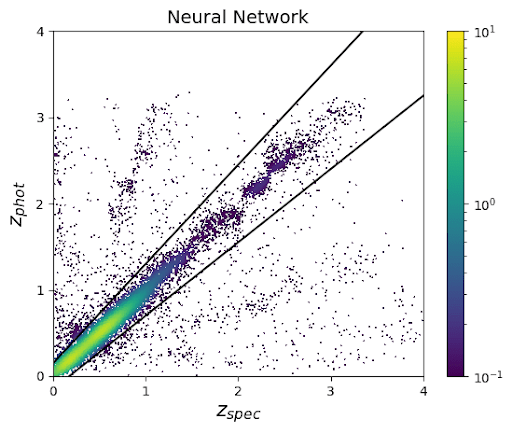

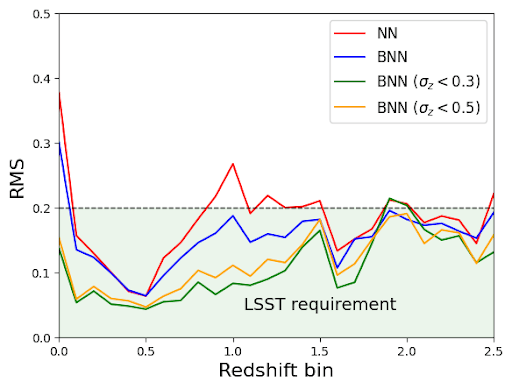

Photo-z

Lochner et al 2018

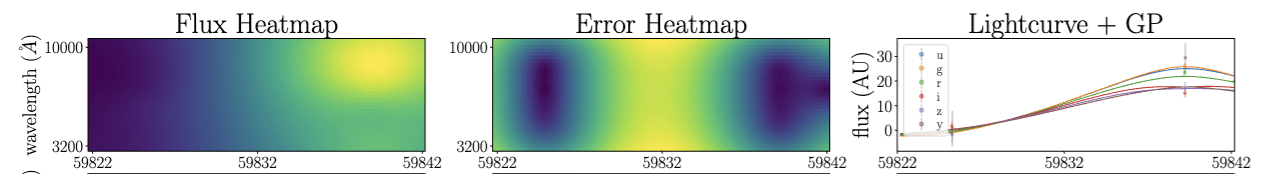

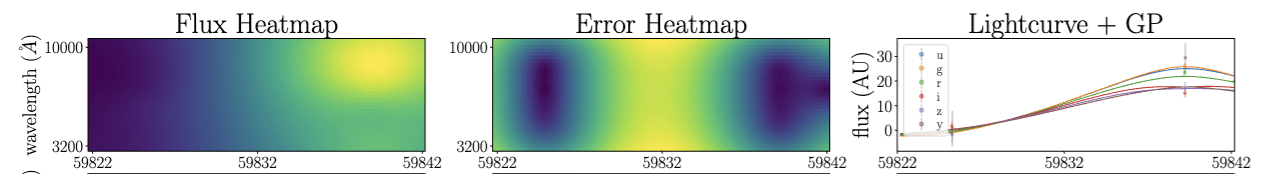

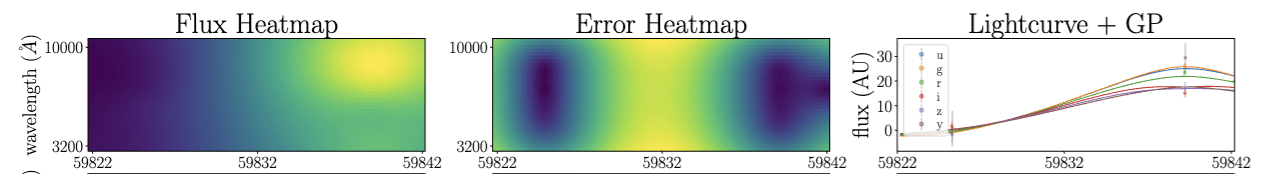

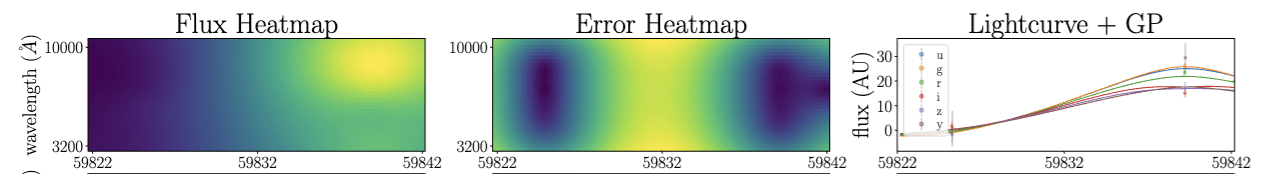

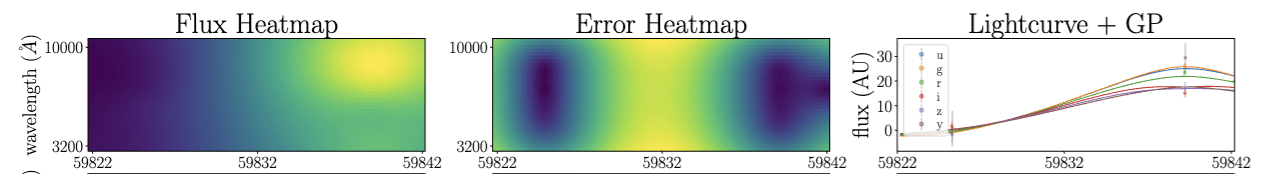

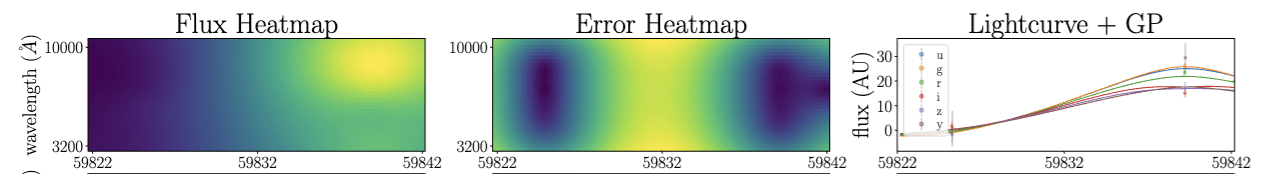

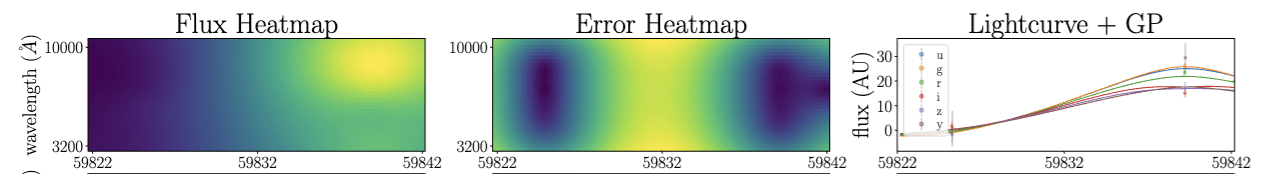

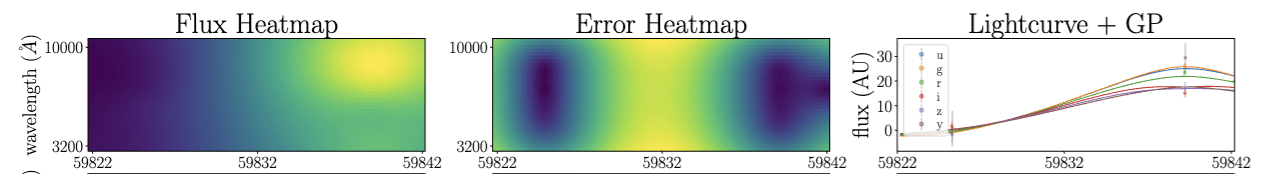

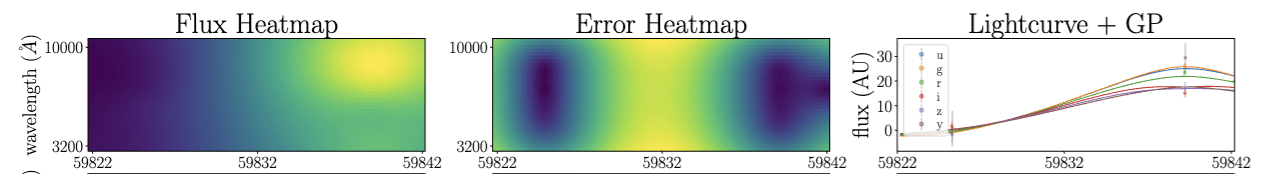

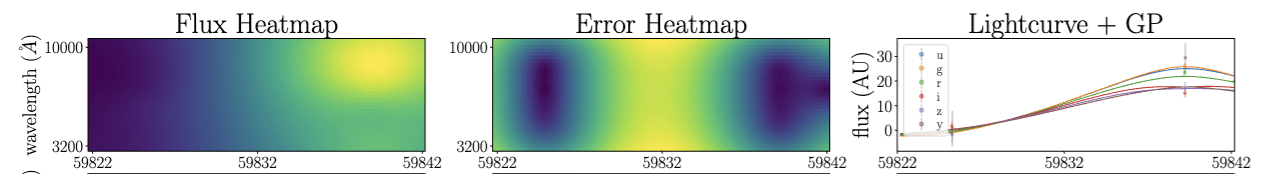

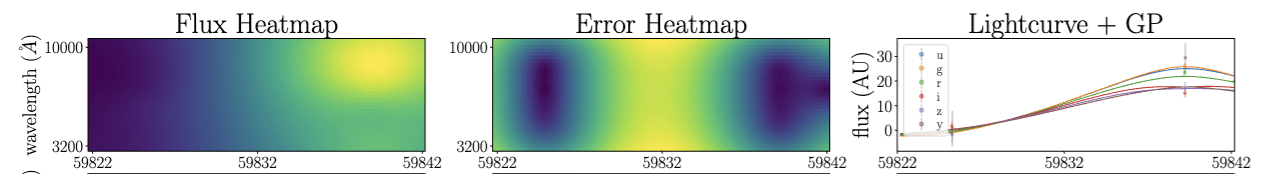

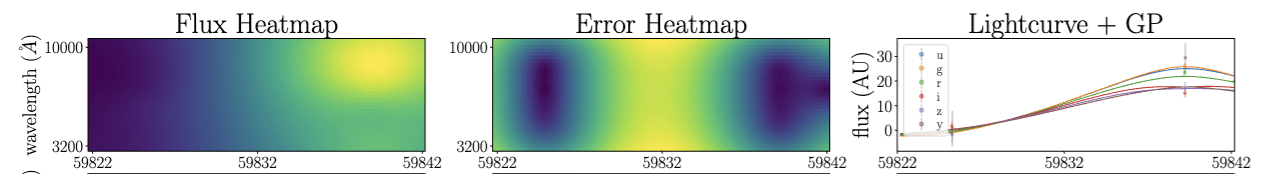

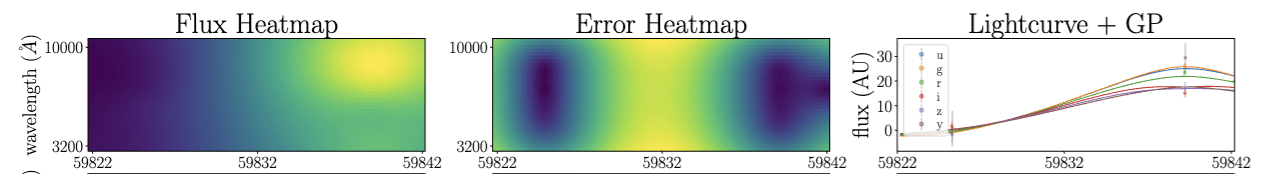

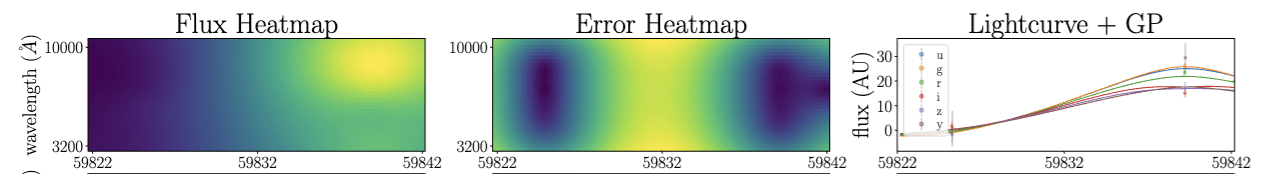

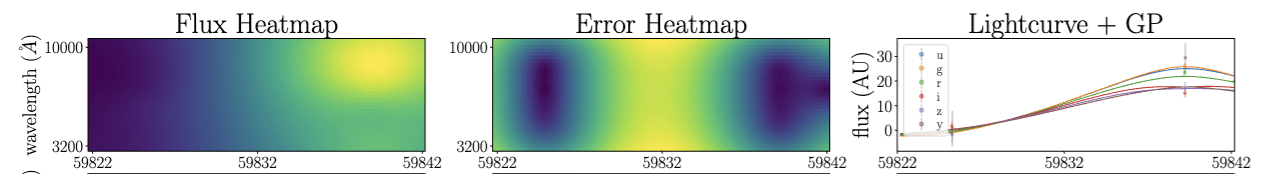

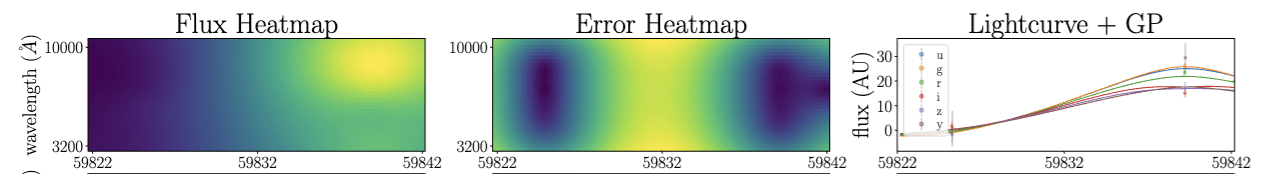

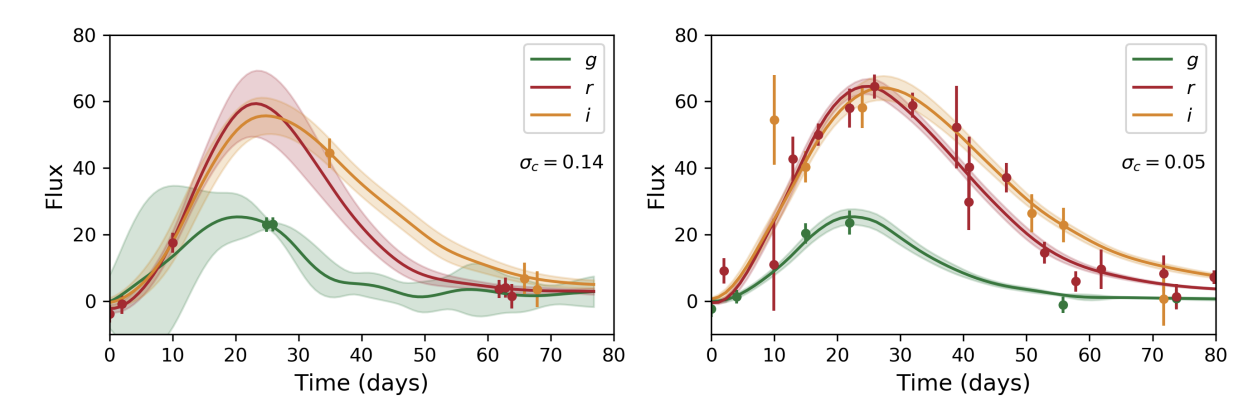

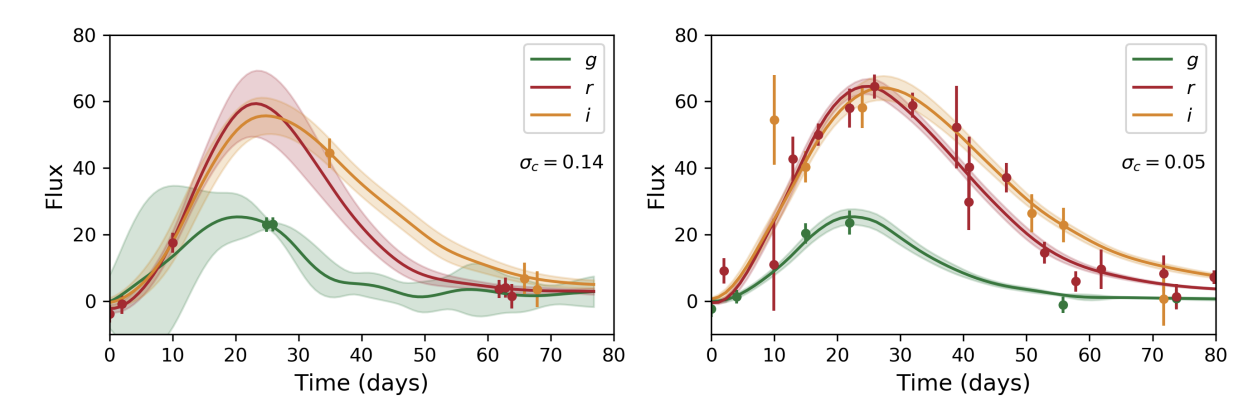

Addressing sparsity

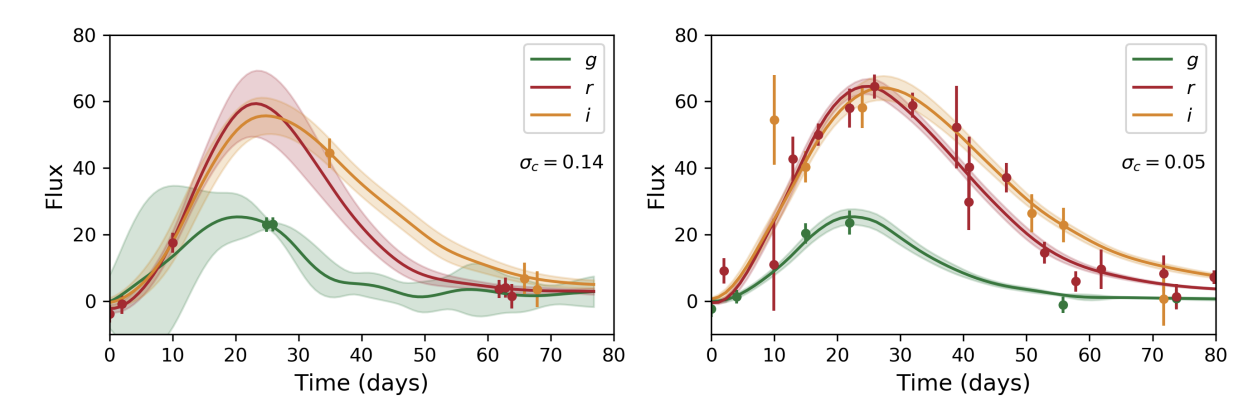

Boone19

Qu22

Gaussian processes work by imposing a kernel that represents the covariance in the data (how data depend on time or time/wavelength). Imposing the same kernel for different time-domain phenomena is incorrect in principle

=> bias toward known classes
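
A toy illustration of the kernel problem, assuming a squared-exponential kernel with a fixed length scale (chosen here for simplicity; it is not necessarily the kernel used in the works cited on this slide). The function names and the simulated fast transient are hypothetical.

```python
import numpy as np

def rbf_kernel(t1, t2, length_scale, amplitude=1.0):
    """Squared-exponential covariance between two sets of times."""
    dt = t1[:, None] - t2[None, :]
    return amplitude**2 * np.exp(-0.5 * (dt / length_scale) ** 2)

def gp_predict(t_obs, y_obs, y_err, t_new, length_scale):
    """Posterior mean of a zero-mean GP with a fixed, imposed kernel."""
    K = rbf_kernel(t_obs, t_obs, length_scale) + np.diag(y_err**2)
    K_s = rbf_kernel(t_new, t_obs, length_scale)
    return K_s @ np.linalg.solve(K, y_obs)

# A fast transient (3-day width) observed every 3 days.
t_obs = np.arange(0.0, 60.0, 3.0)
y_obs = np.exp(-0.5 * ((t_obs - 30.0) / 3.0) ** 2)
y_err = np.full_like(t_obs, 0.05)
t_peak = np.array([30.0])

# Same data, two imposed time scales: one matched to the event,
# one appropriate for slowly evolving objects.
peak_short = gp_predict(t_obs, y_obs, y_err, t_peak, length_scale=3.0)[0]
peak_long = gp_predict(t_obs, y_obs, y_err, t_peak, length_scale=30.0)[0]

print(f"peak flux, matched kernel: {peak_short:.2f}")
print(f"peak flux, slow kernel:    {peak_long:.2f}")
```

With the long length scale the interpolated lightcurve is pulled toward the shape the kernel expects and the peak is smoothed away, which is one way a fixed kernel can bias the features of unfamiliar objects toward those of known classes.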

Methodological issues with these approaches

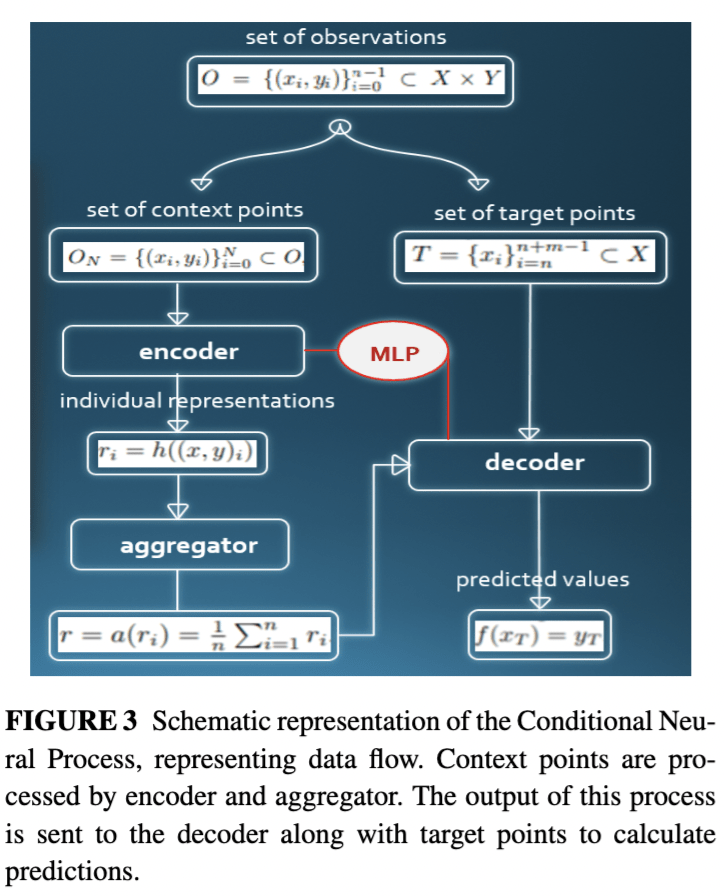

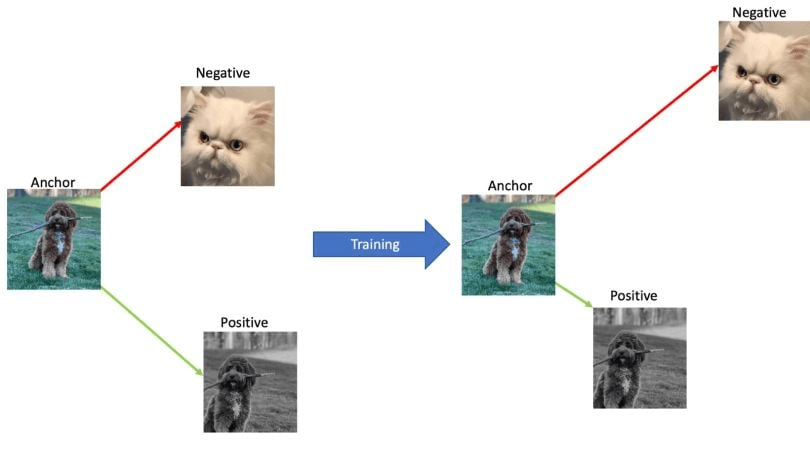

Neural processes replace the imposed kernel with a learned one

Hajdinjak+2021

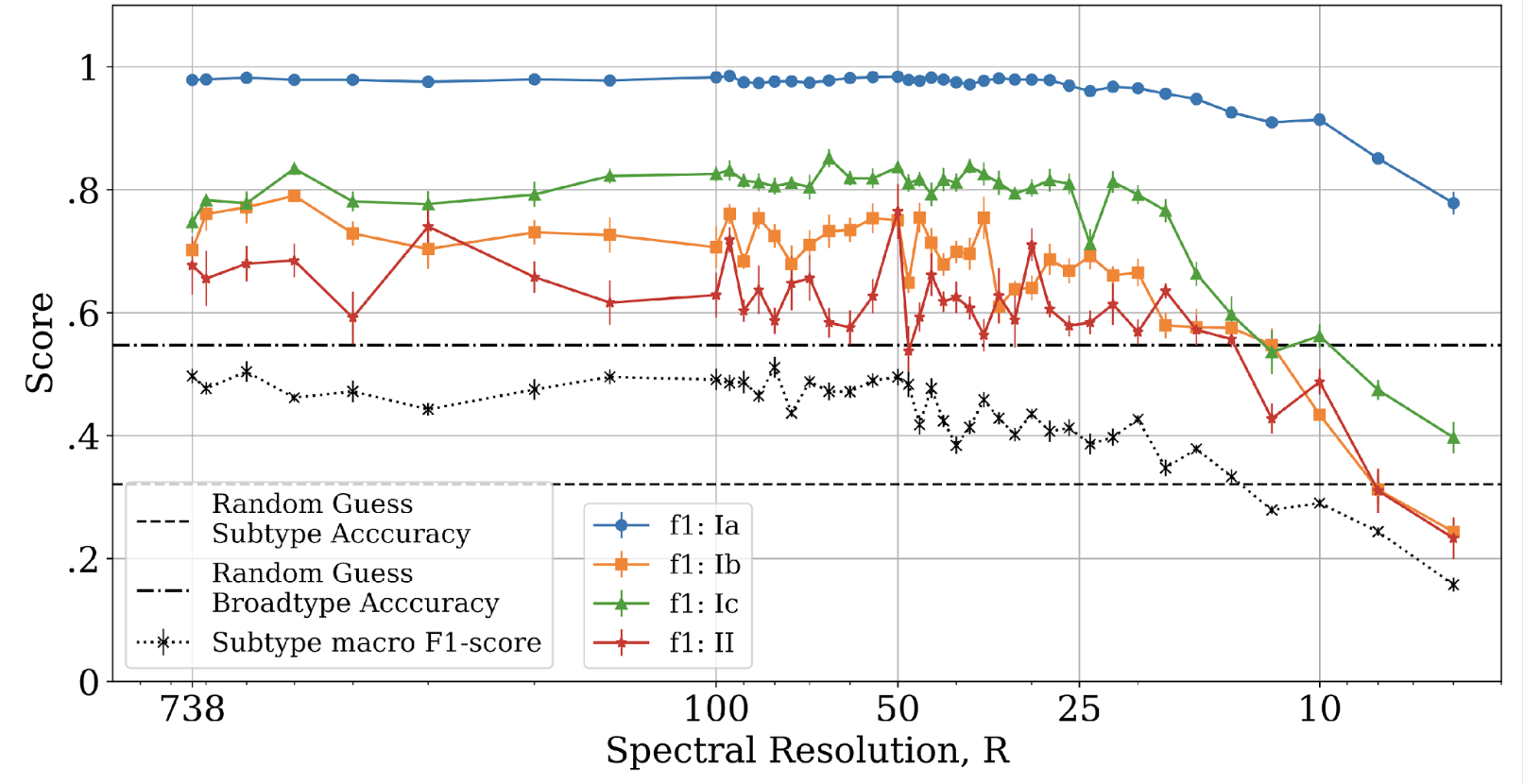

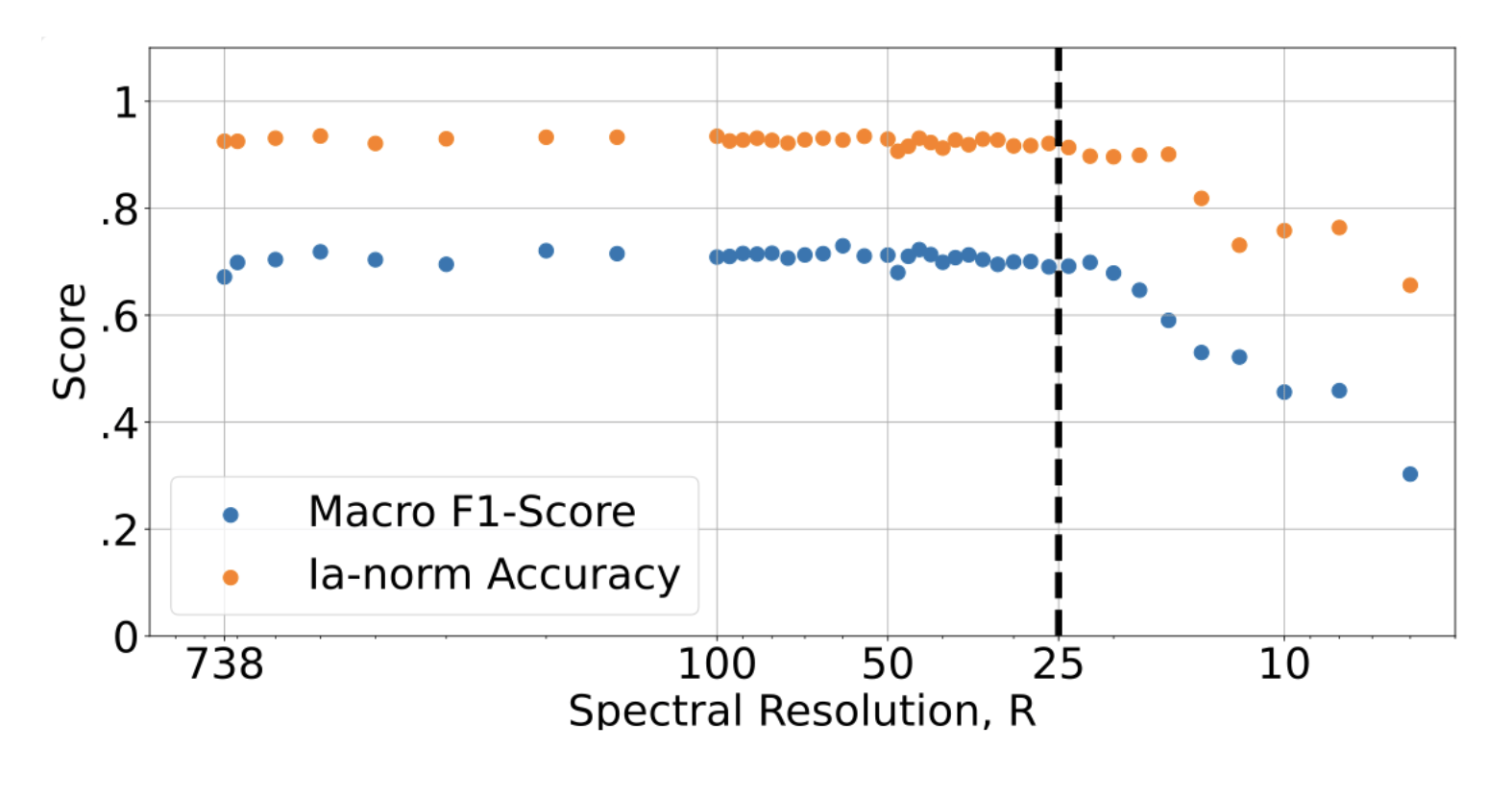

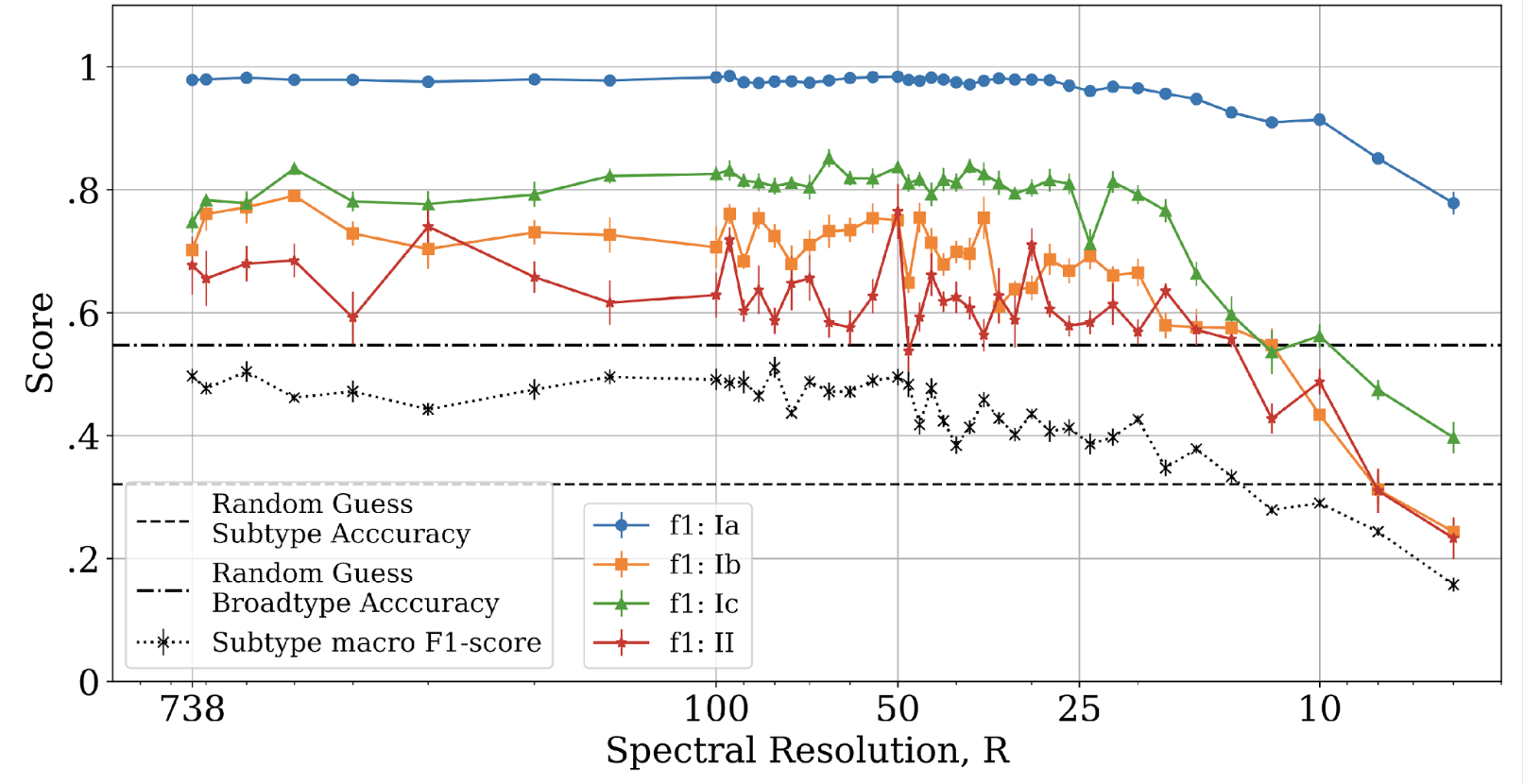

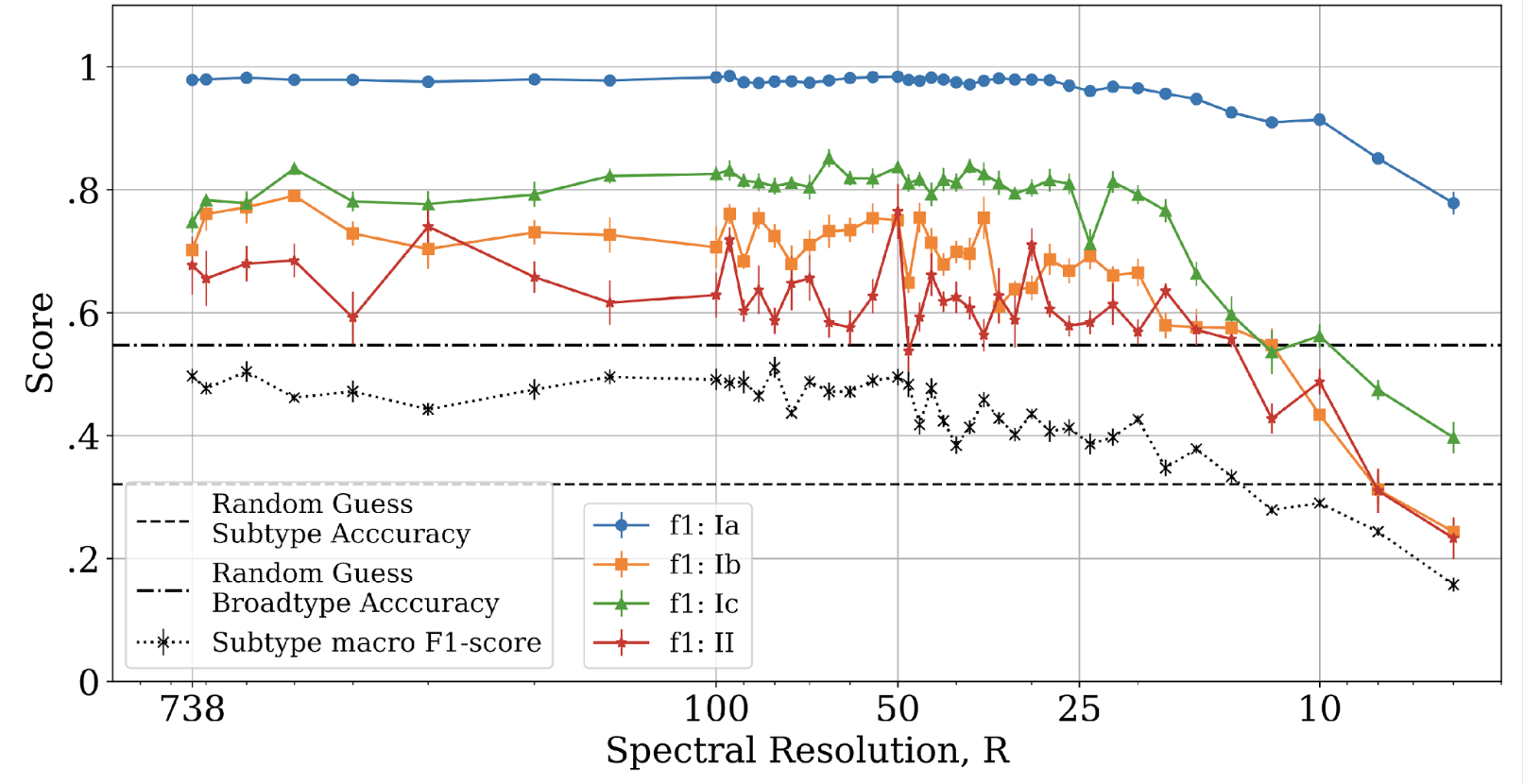

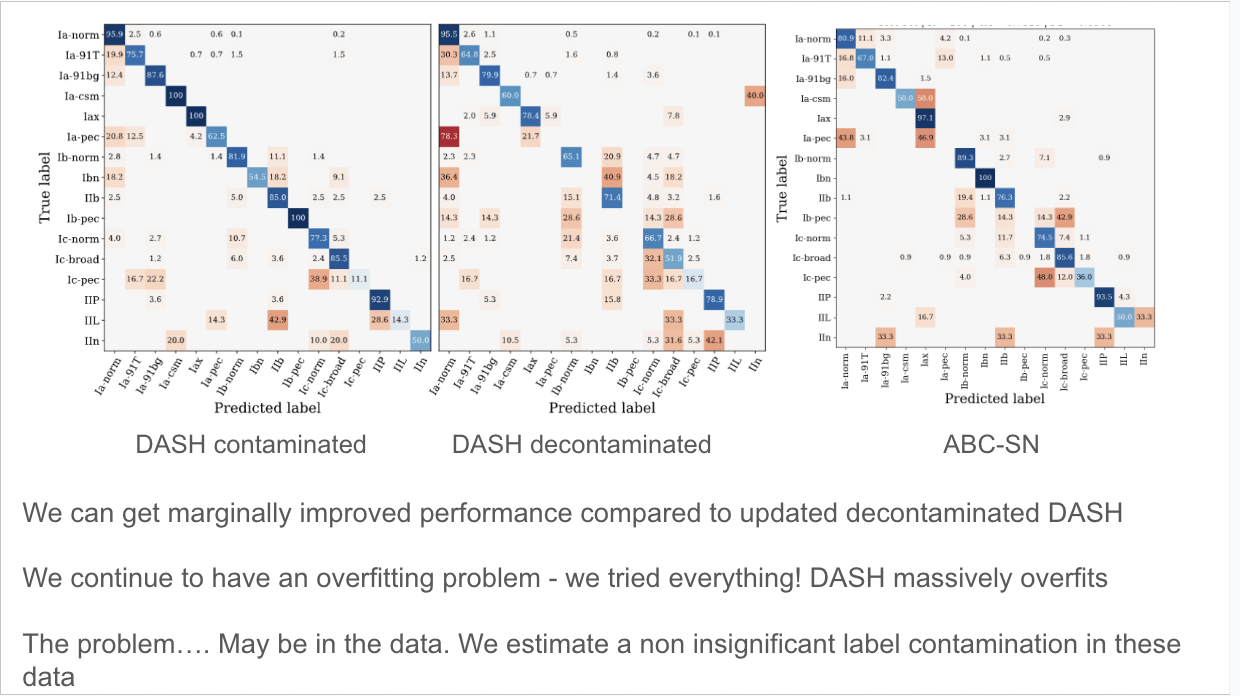

When they go high, we go low... spectra classification at low resolution

Astrophysical spectra require the capture of enough photons at each wavelength:

- large telescopes

- long exposure times

- bright objects

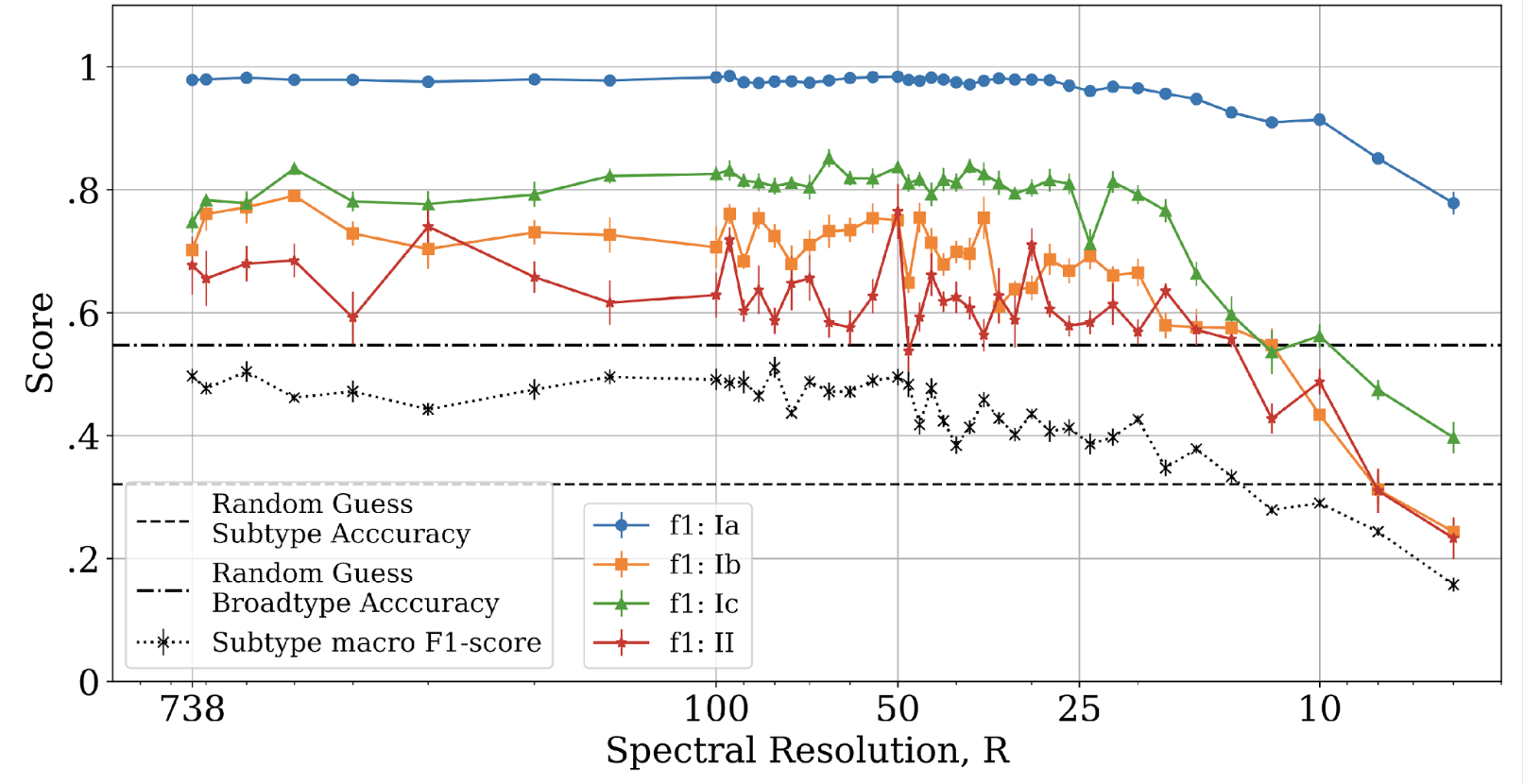

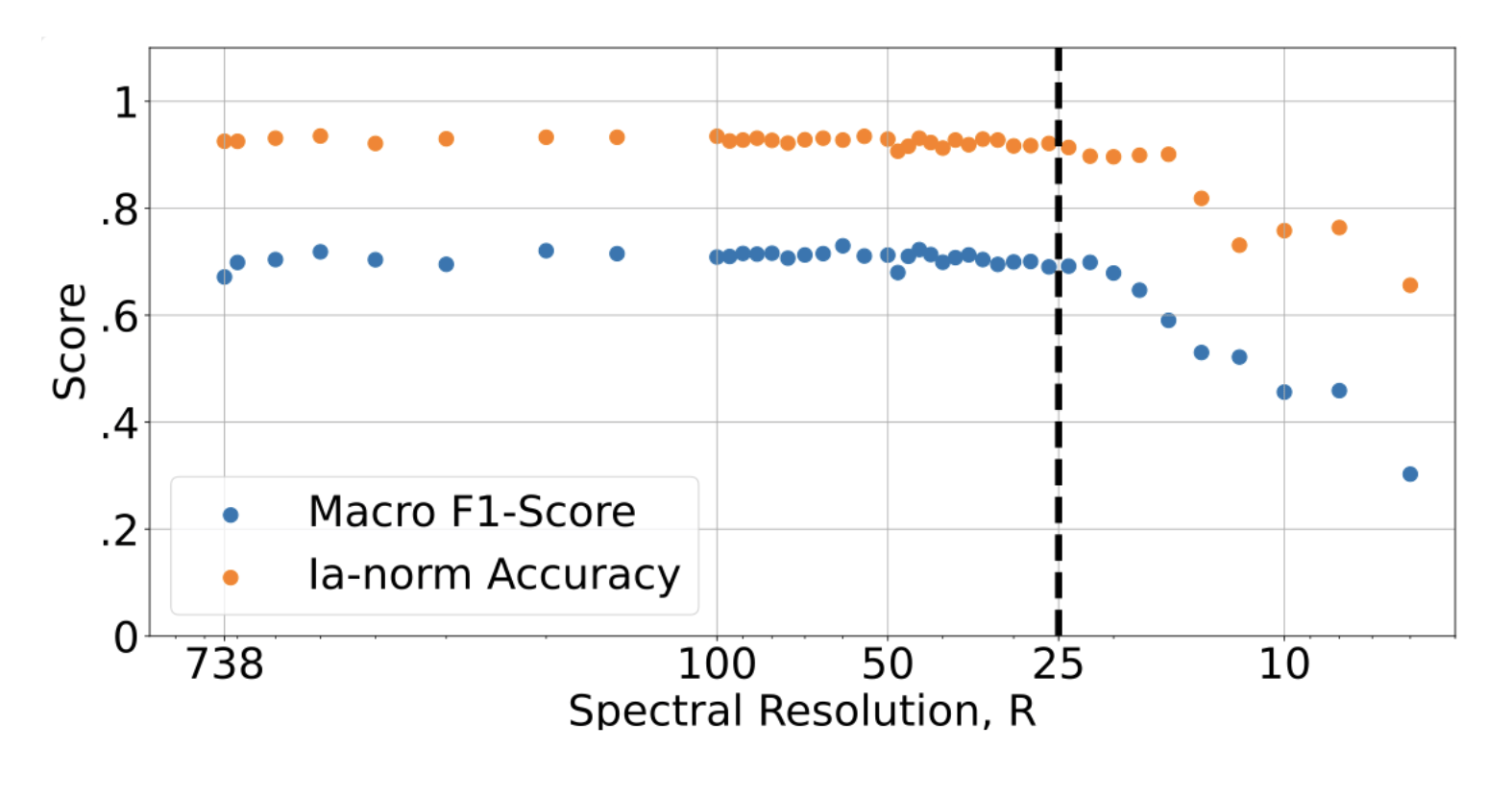

Willow Fox Fortino

UDelaware

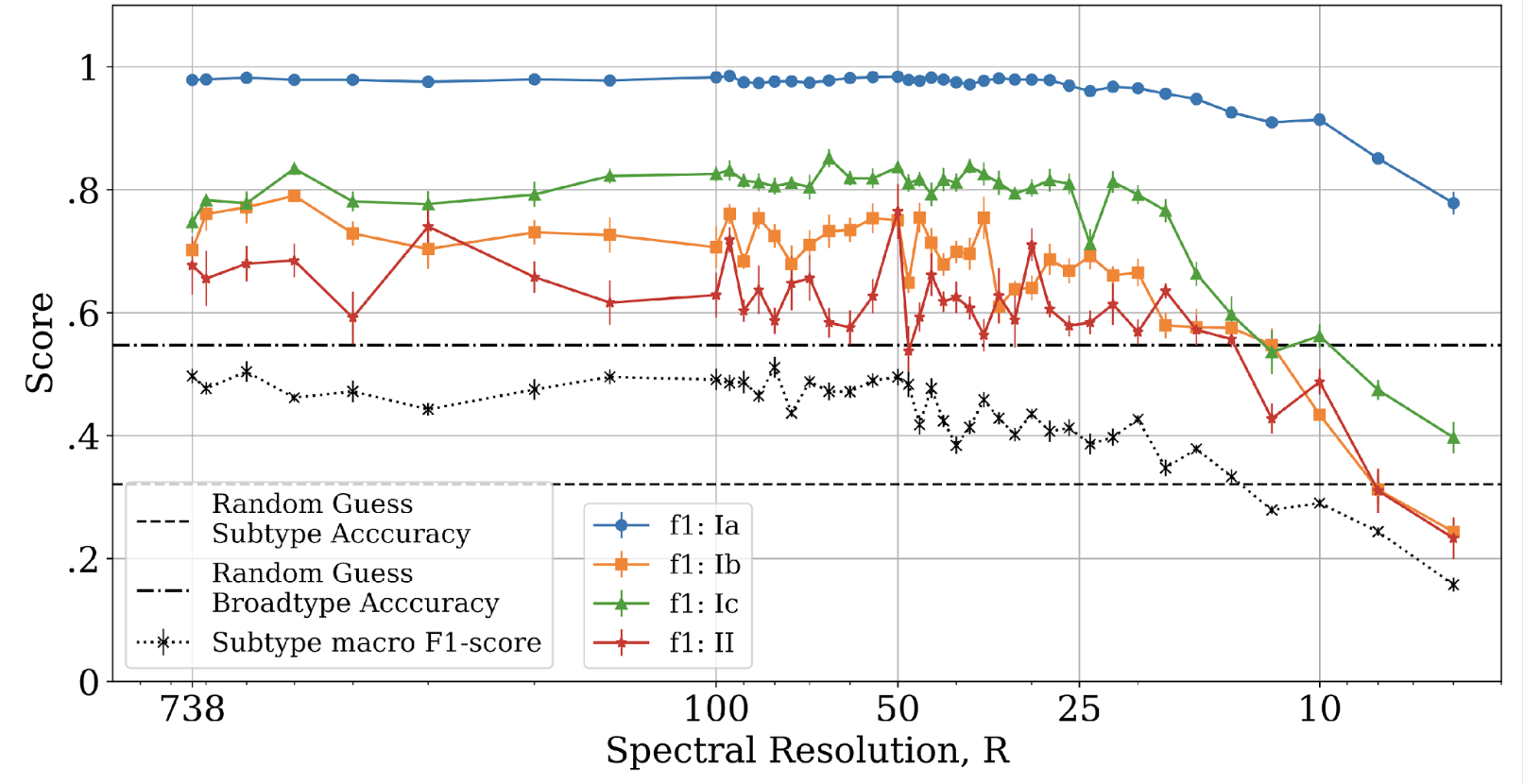

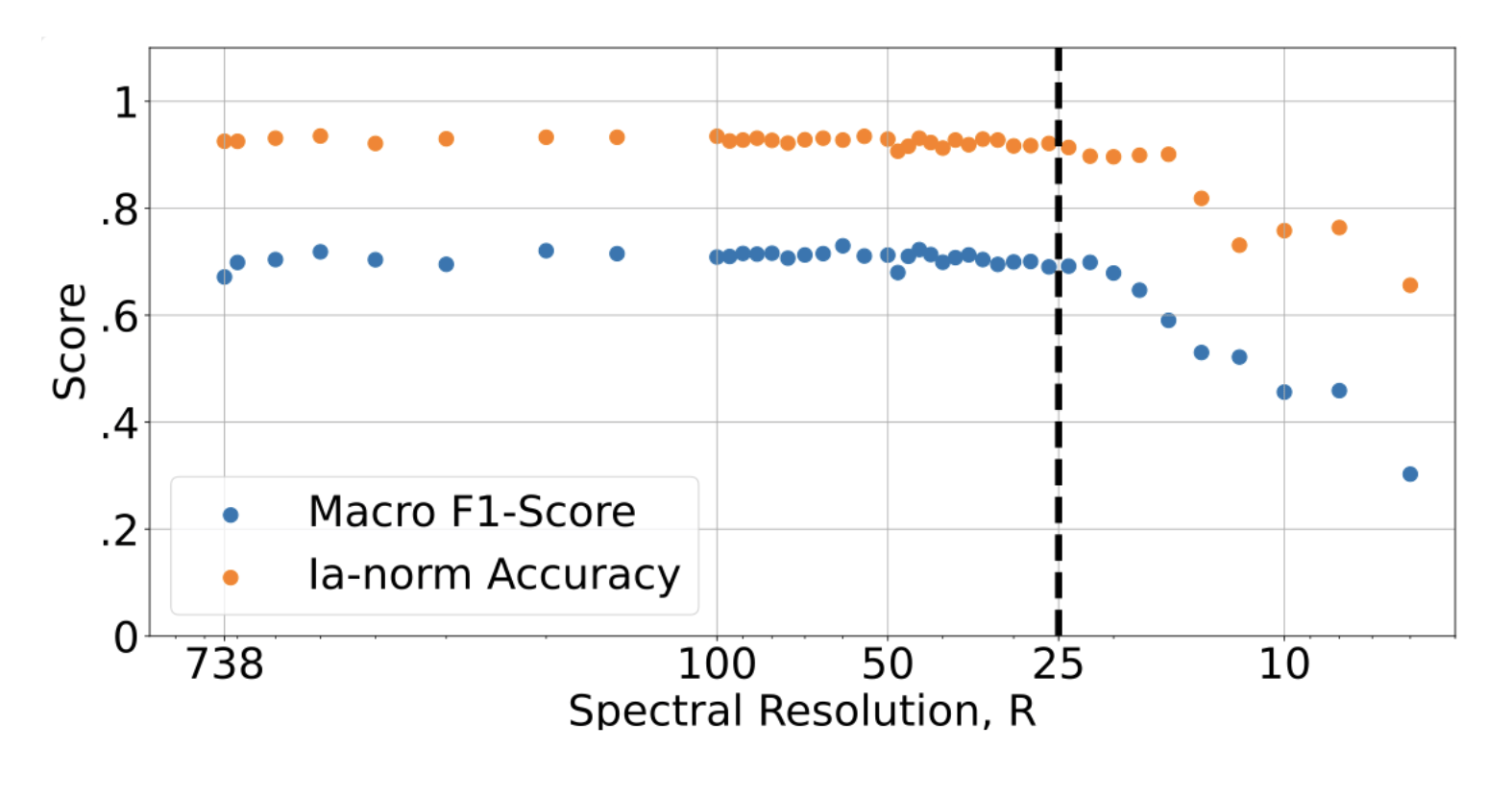

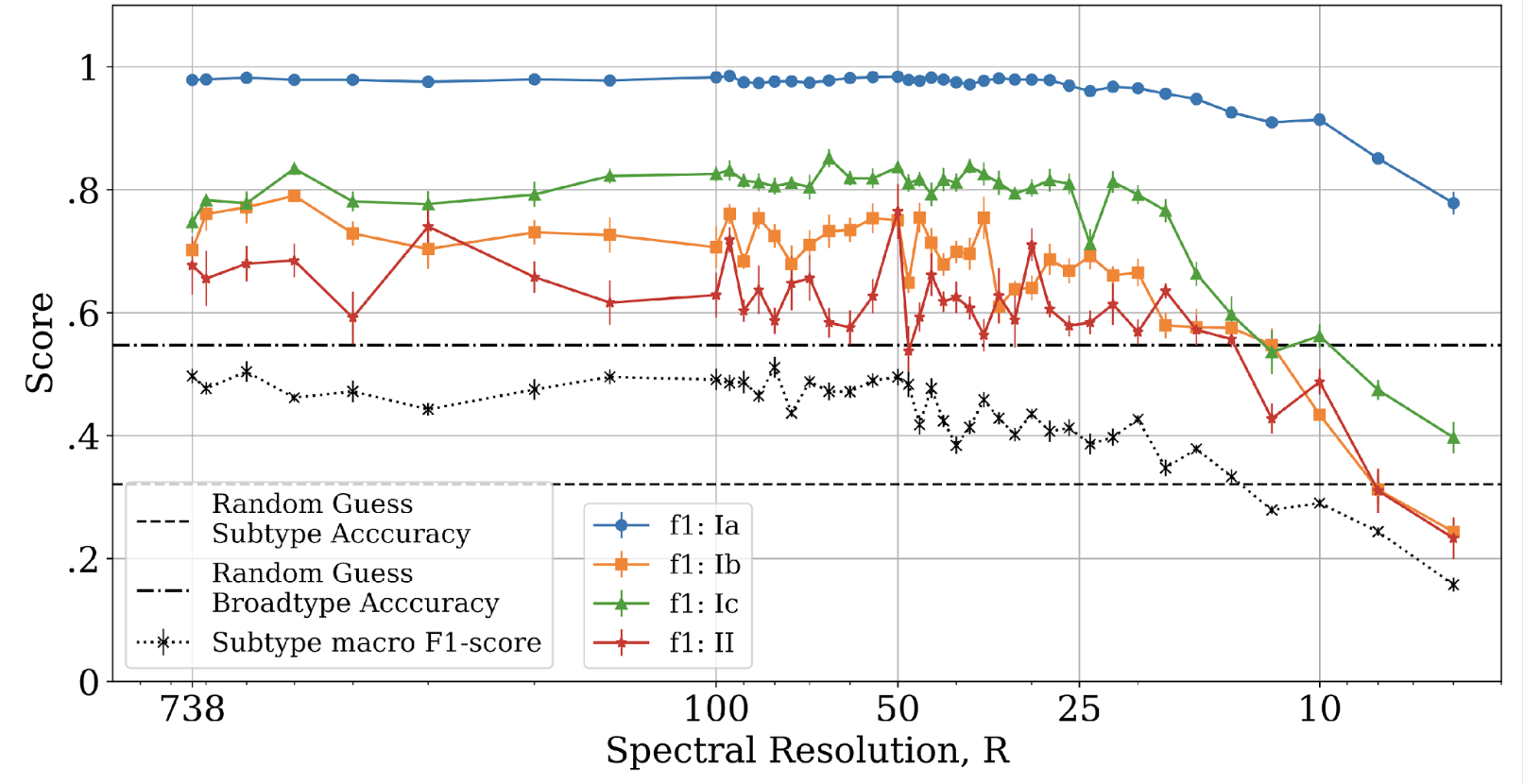

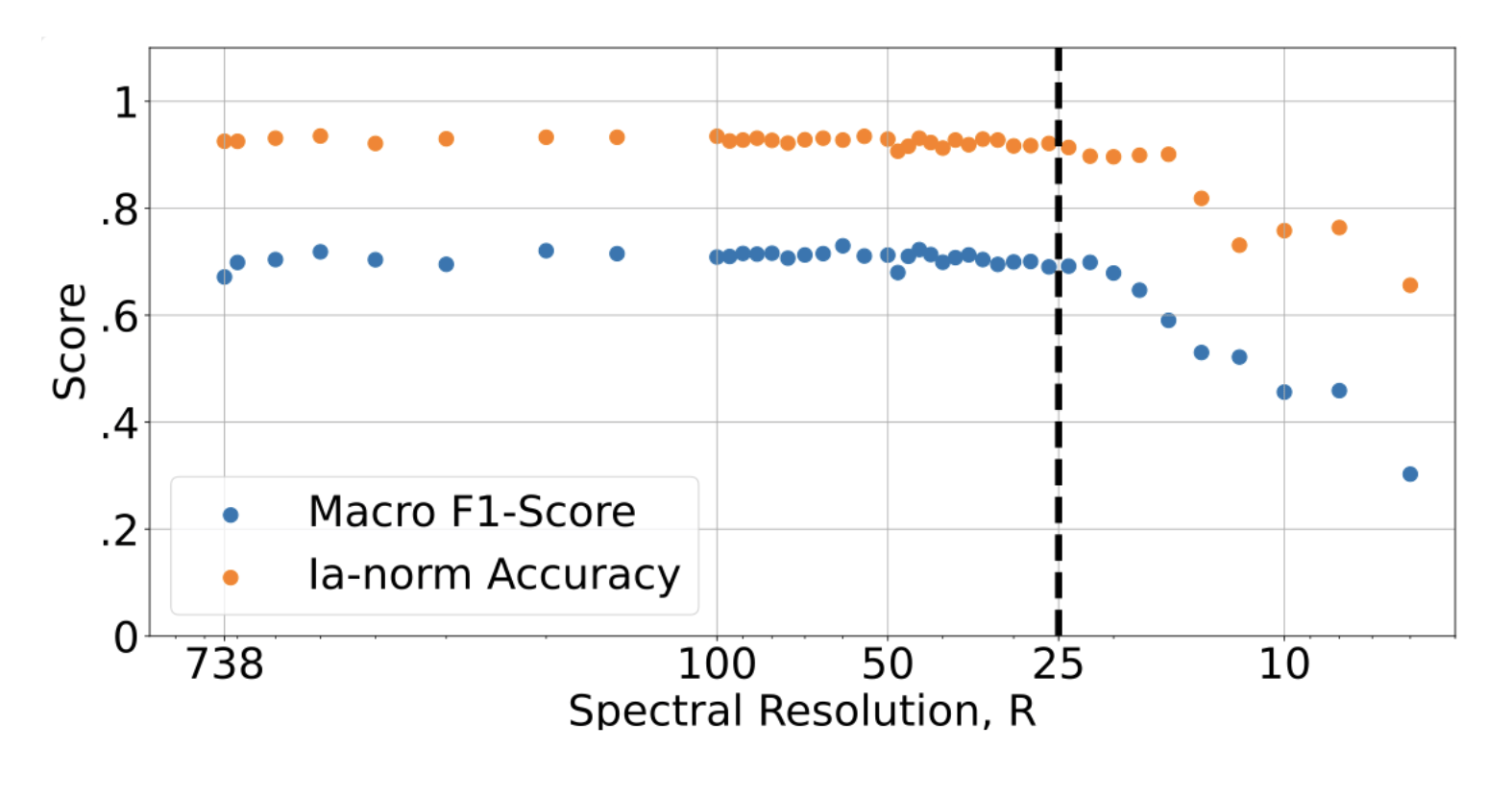

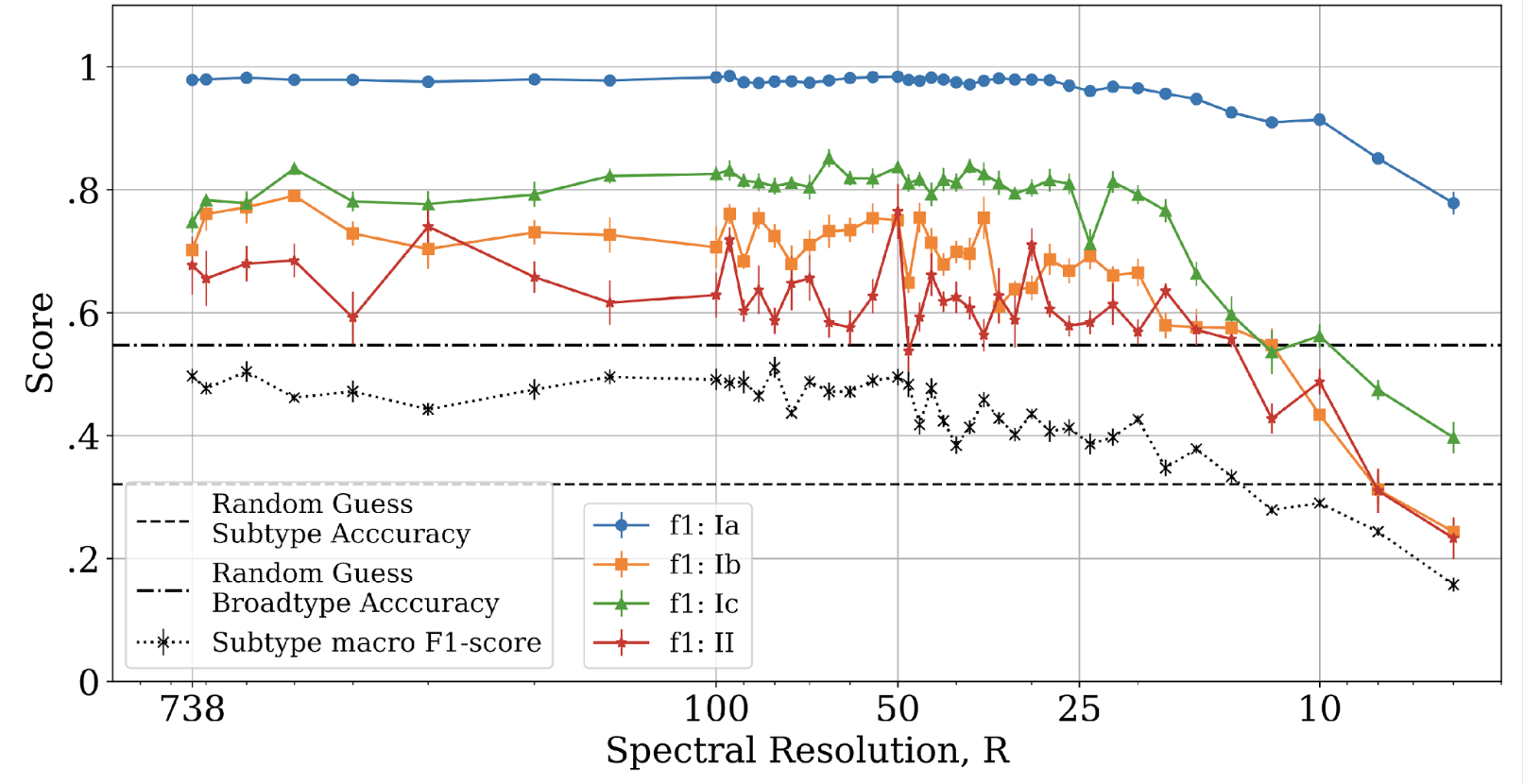

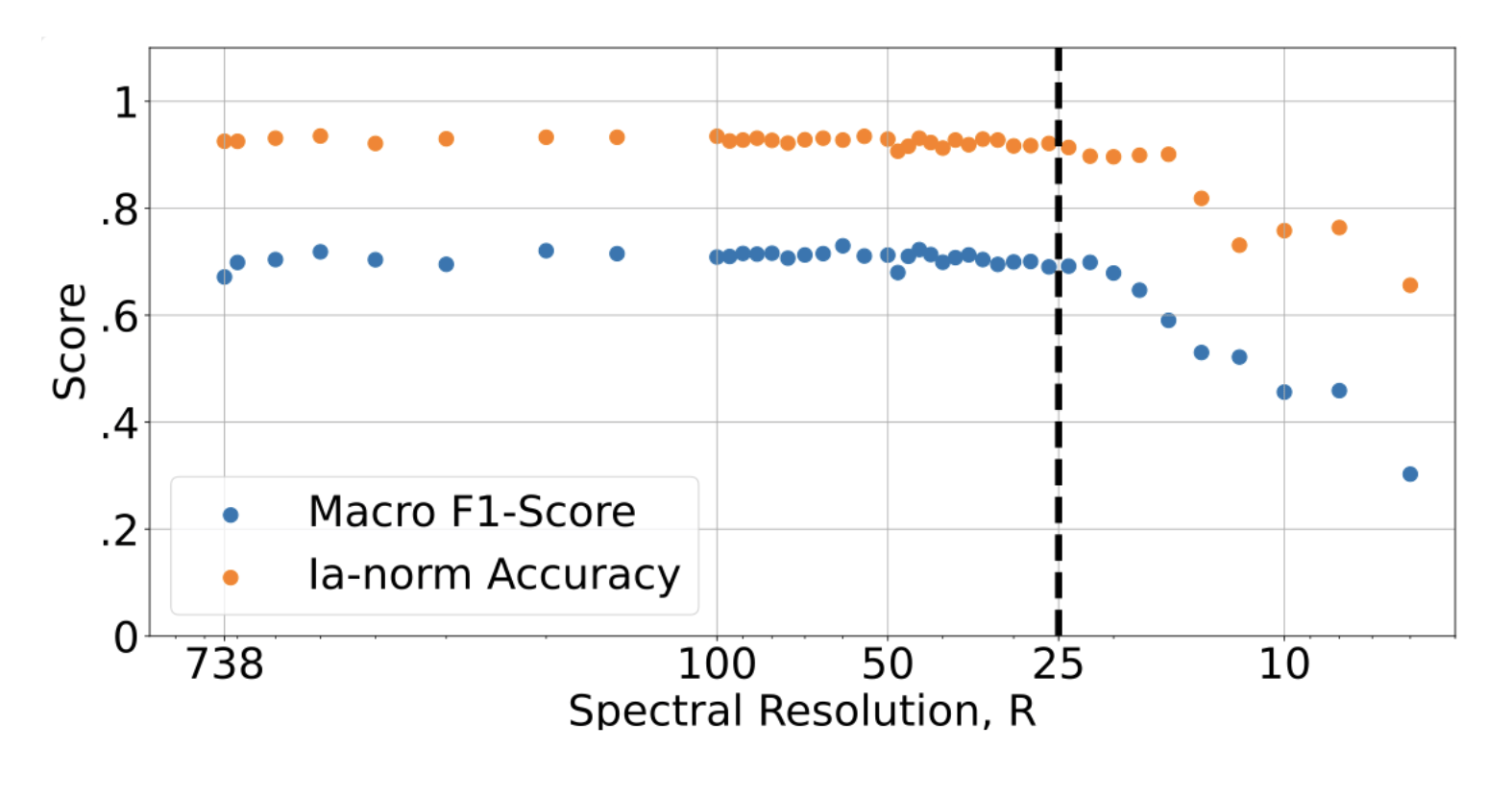

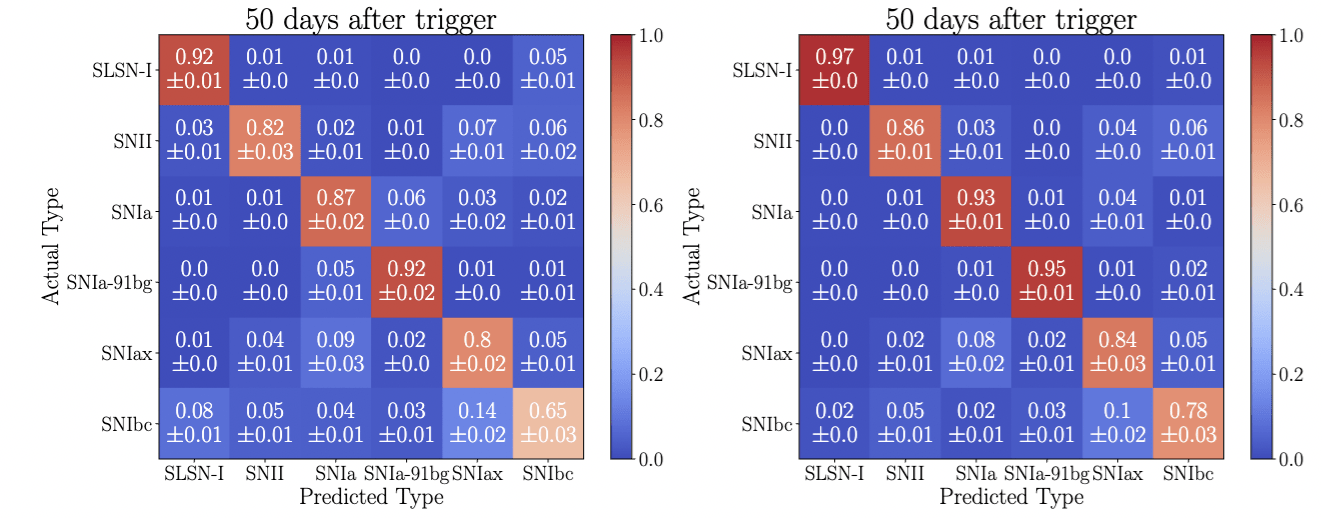

Classification power vs spectral resolution for SNe subtypes

FASTlab Flash highlight
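
To give a feel for what "going low" means, here is a sketch that degrades a synthetic spectrum to a chosen resolving power R = λ/Δλ by Gaussian smoothing on a log-wavelength grid. The function `degrade_resolution`, the wavelength grid and the toy absorption line are assumptions for illustration, not the pipeline behind the results shown here.

```python
import numpy as np

def degrade_resolution(wave, flux, R):
    """Smooth a spectrum to resolving power R = lambda / FWHM.

    Assumes `wave` is uniformly spaced in log(lambda), so a Gaussian of
    fixed width in pixels corresponds to the same R at every wavelength.
    """
    dlog = np.log(wave[1] / wave[0])                # pixel size in ln(lambda)
    fwhm_to_sigma = 2.0 * np.sqrt(2.0 * np.log(2.0))
    sigma_pix = 1.0 / (R * fwhm_to_sigma * dlog)    # FWHM = 1/R in ln(lambda)
    half = int(np.ceil(4 * sigma_pix))
    x = np.arange(-half, half + 1)
    kernel = np.exp(-0.5 * (x / sigma_pix) ** 2)
    kernel /= kernel.sum()
    # Pad with edge values so the ends of the spectrum are not darkened.
    padded = np.pad(flux, half, mode="edge")
    return np.convolve(padded, kernel, mode="valid")

# Toy spectrum: flat continuum with one broad absorption feature.
wave = np.geomspace(3500.0, 9000.0, 2000)   # Angstrom, log-spaced
flux = 1.0 - 0.5 * np.exp(-0.5 * ((wave - 6355.0) / 50.0) ** 2)

flux_r100 = degrade_resolution(wave, flux, R=100)
flux_r10 = degrade_resolution(wave, flux, R=10)

depth_r100 = 1.0 - flux_r100.min()
depth_r10 = 1.0 - flux_r10.min()
print(f"line depth at R=100: {depth_r100:.2f}, at R=10: {depth_r10:.2f}")
```

The feature gets shallower and broader as R drops; the question on this slide is how far R can be lowered before a classifier can no longer tell SN subtypes apart.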

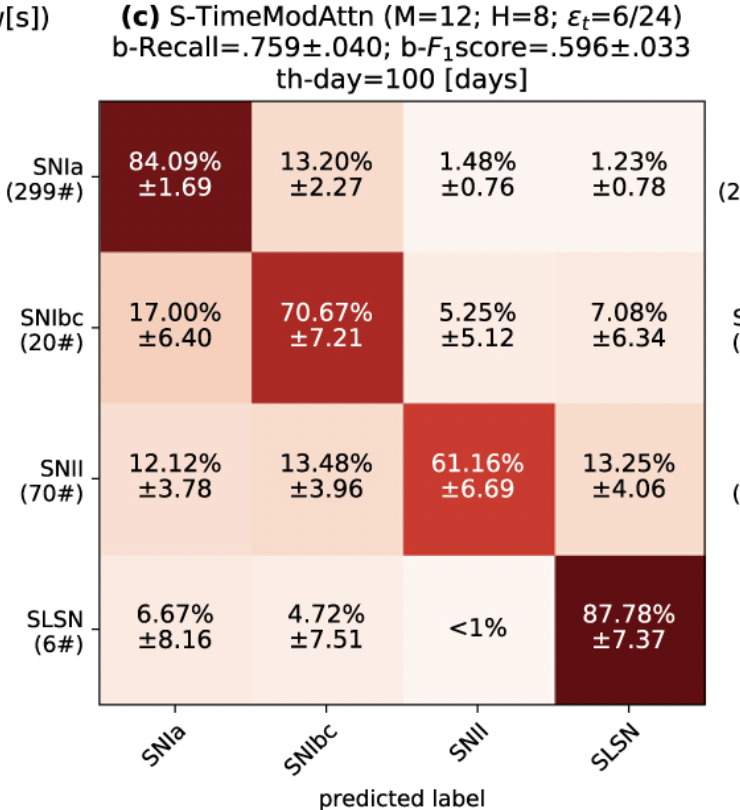

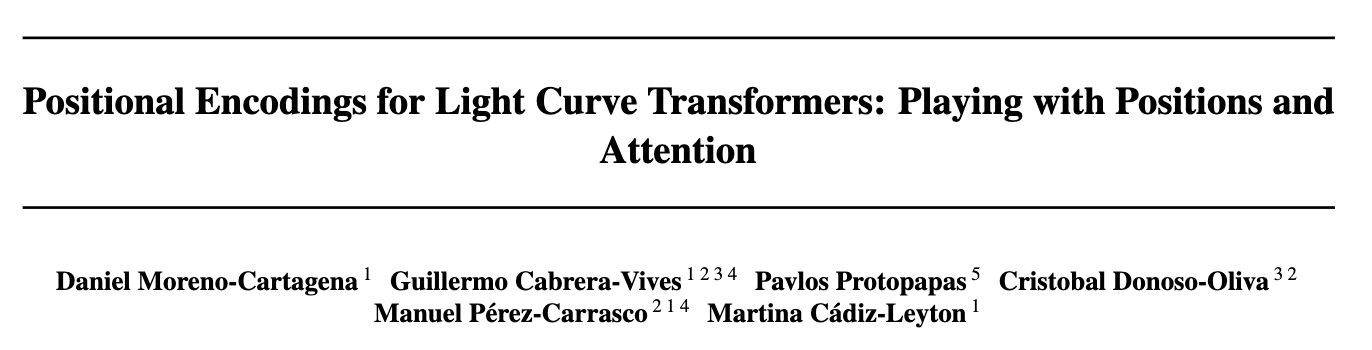

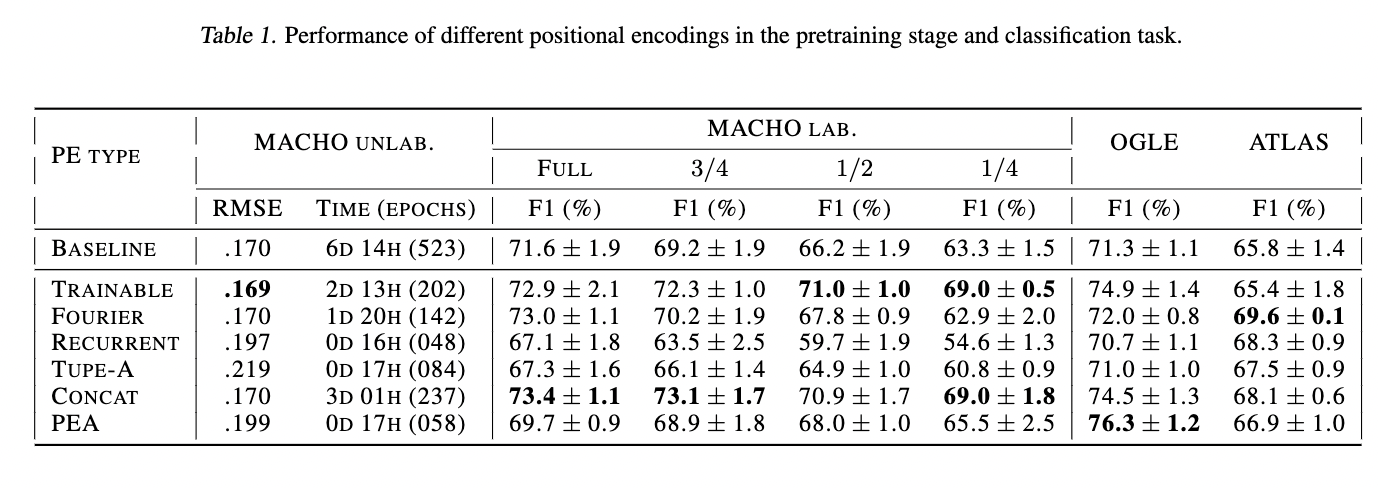

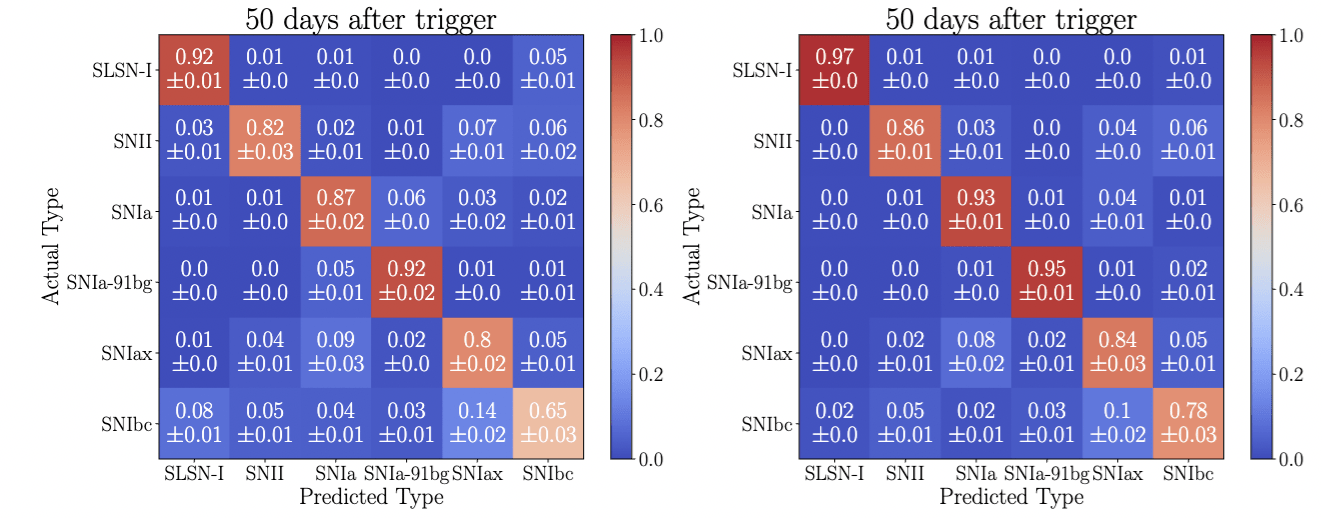

Classification from sparse data: Lightcurves

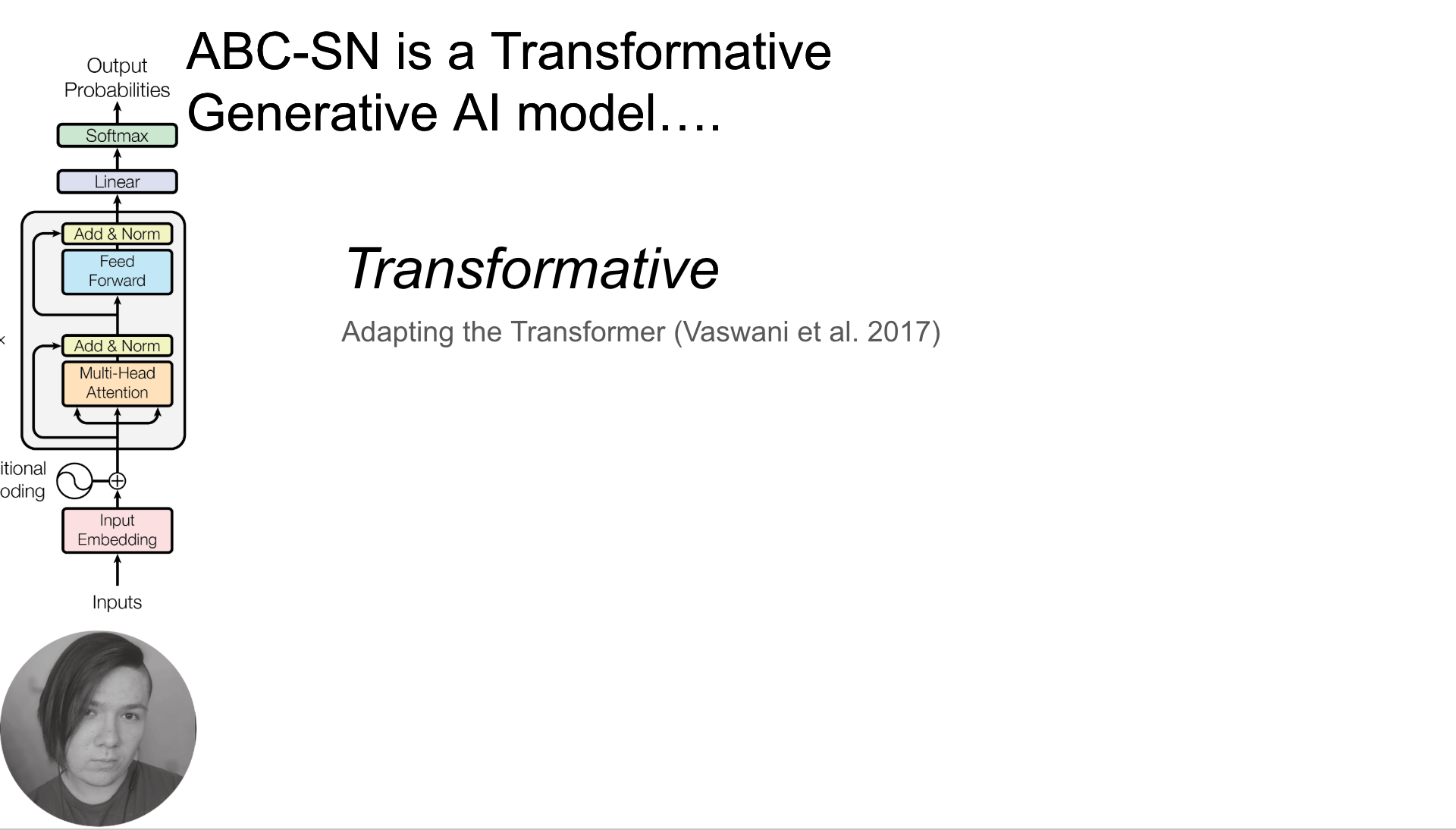

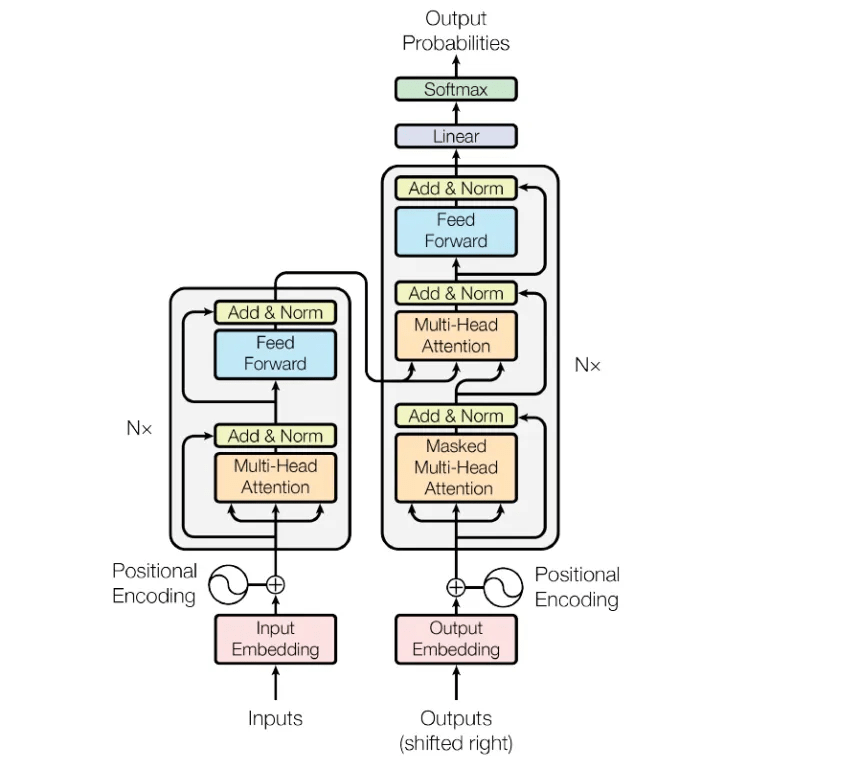

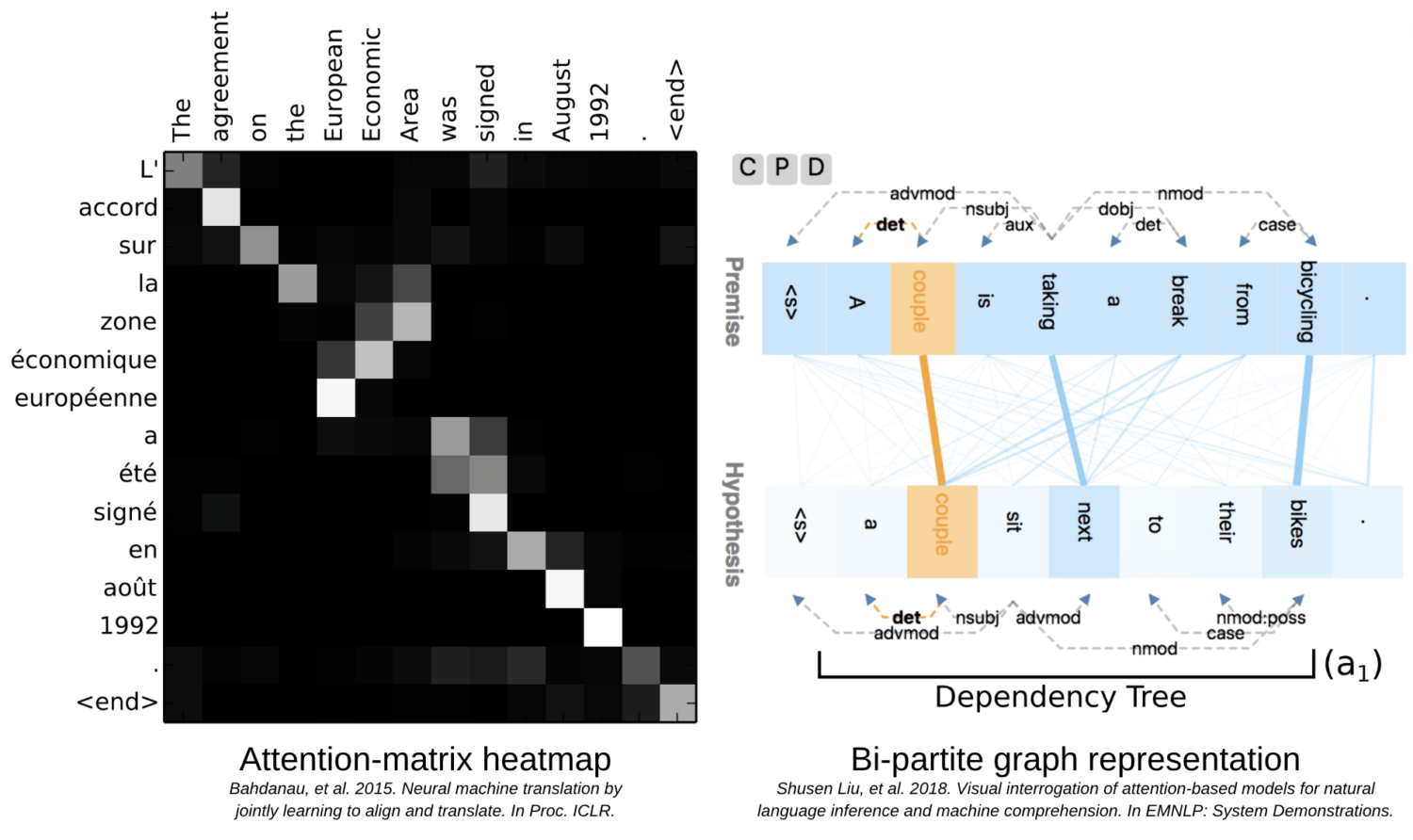

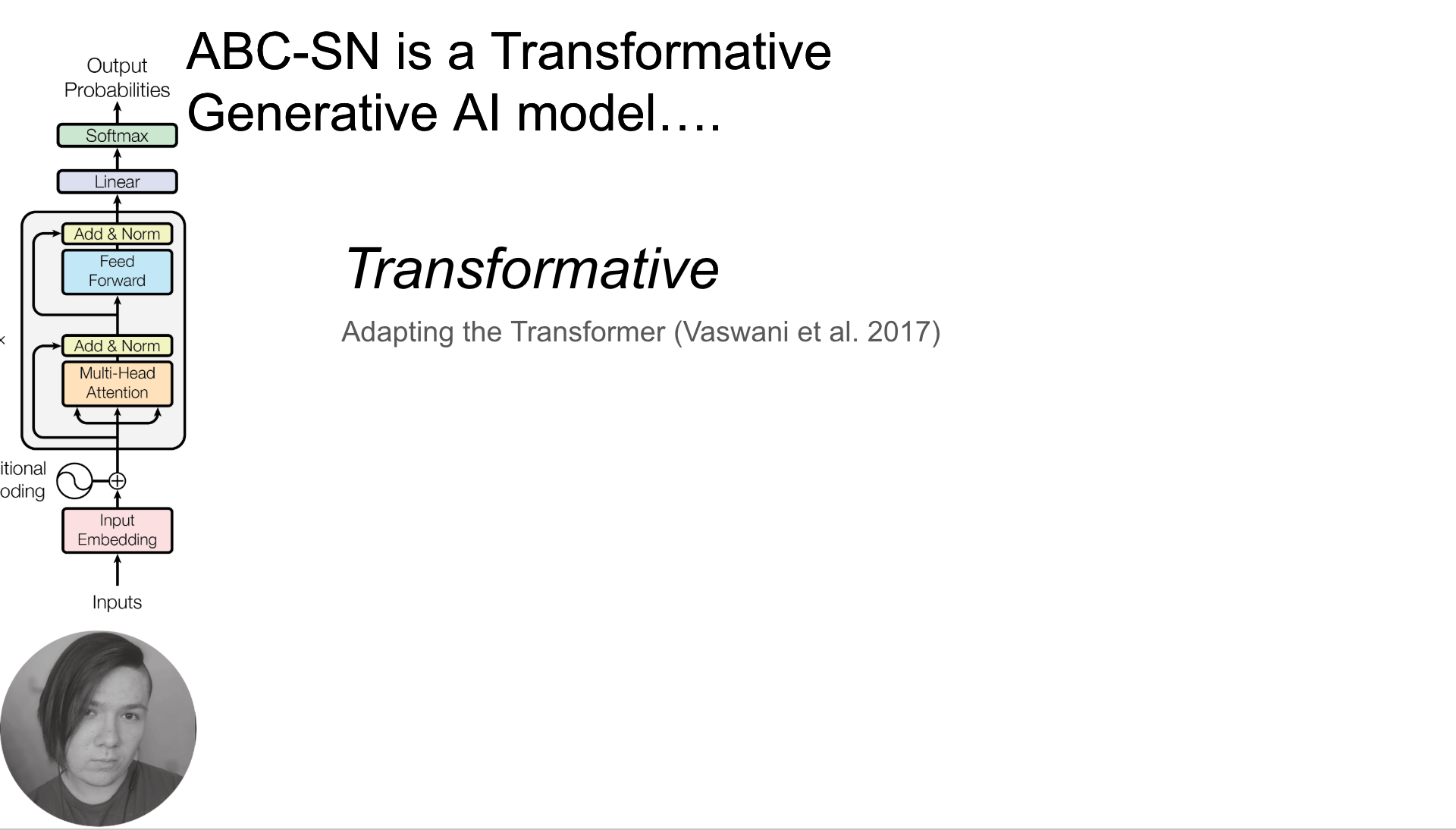

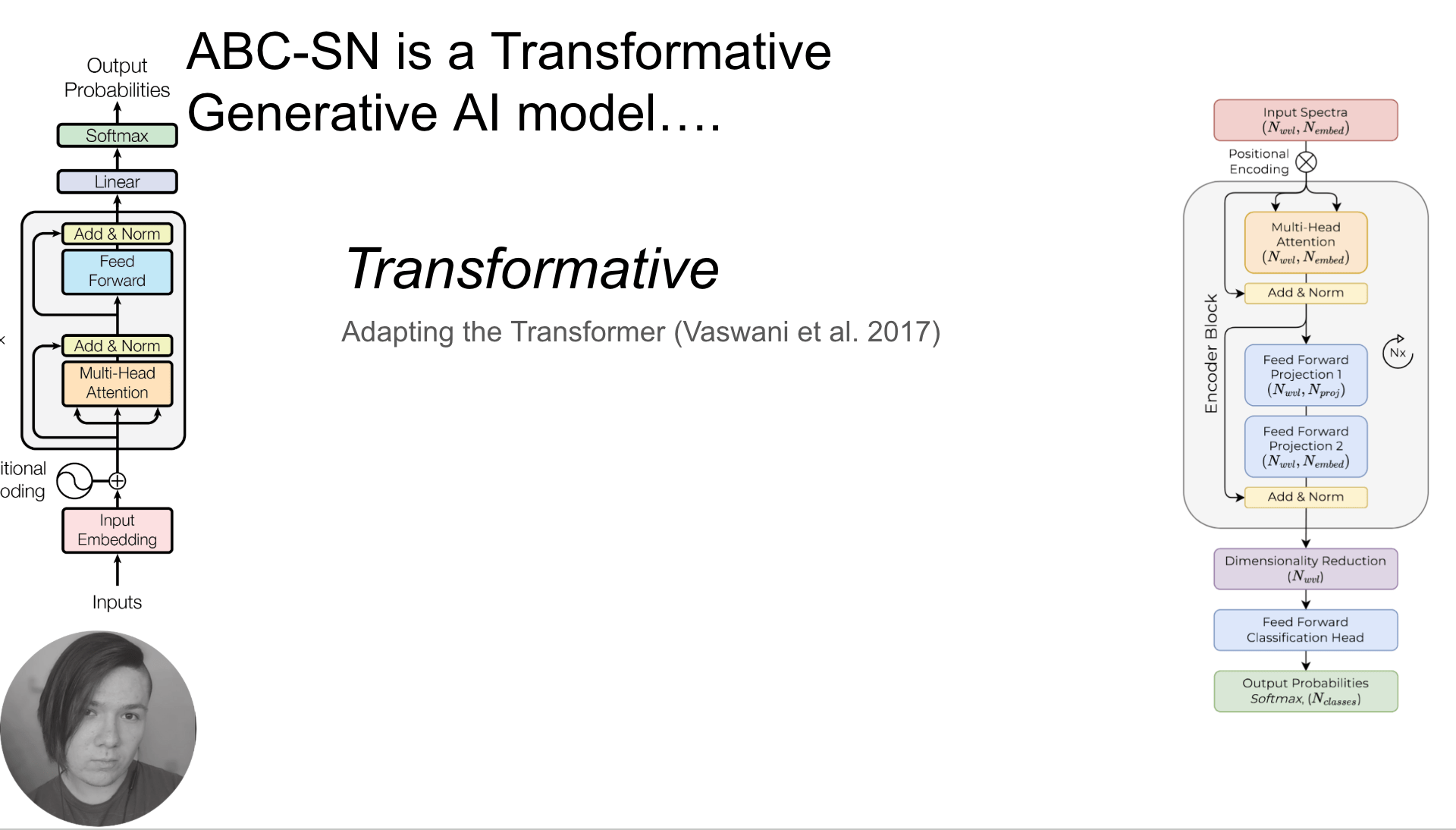

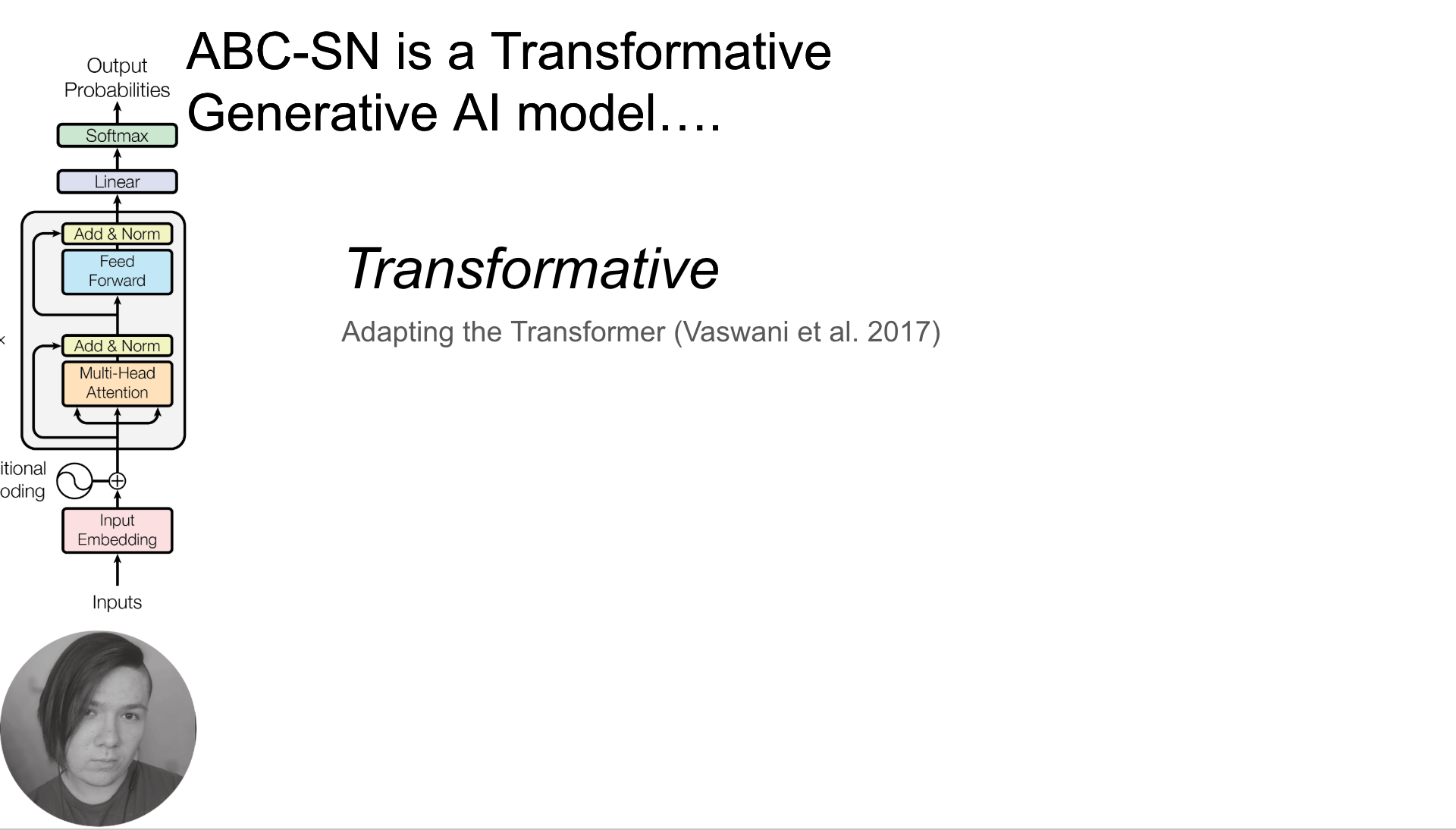

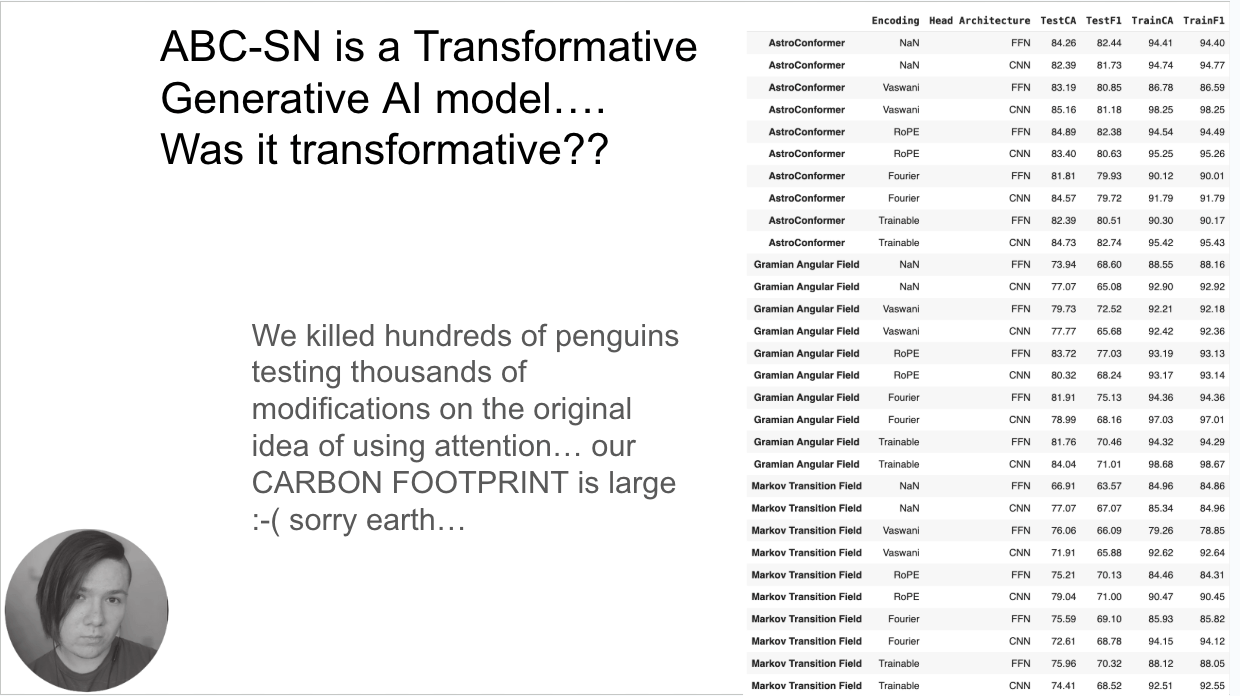

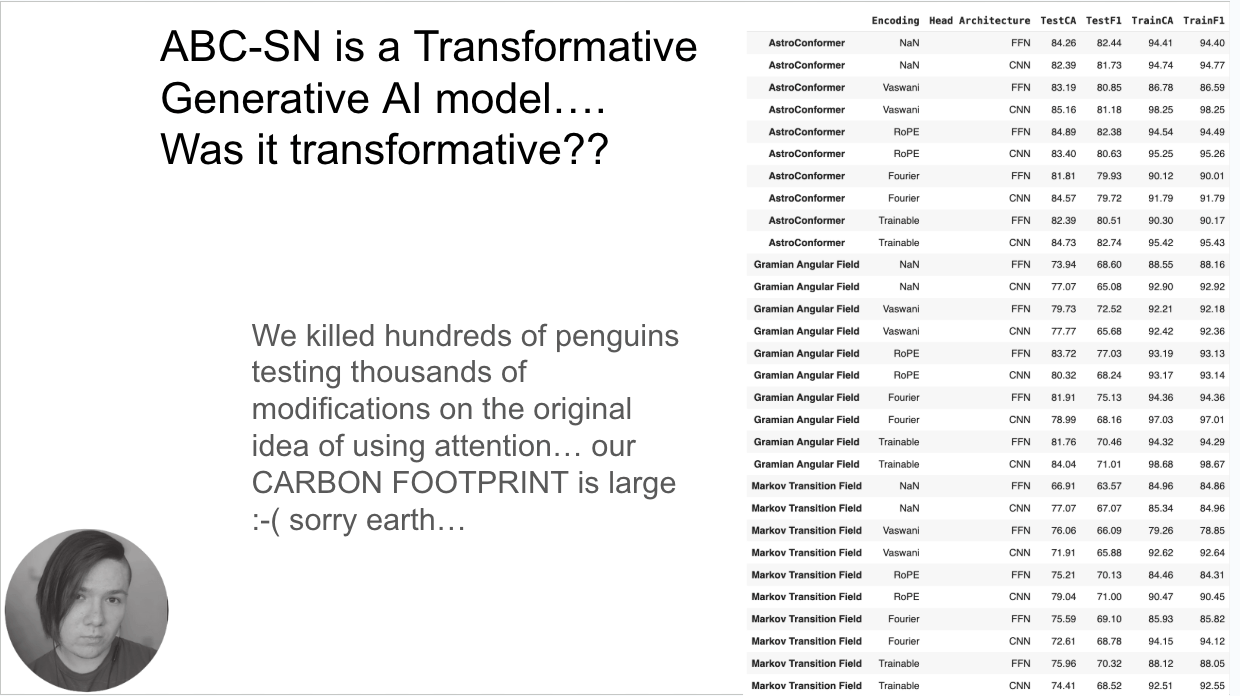

Vaswani+ 2017, Attention Is All You Need

AI was transformed in 2017 by this paper

AI

ISN'T FREE

A new AI-based classifier for SN spectra at low resolution

we badly need better benchmark datasets

Opportunity

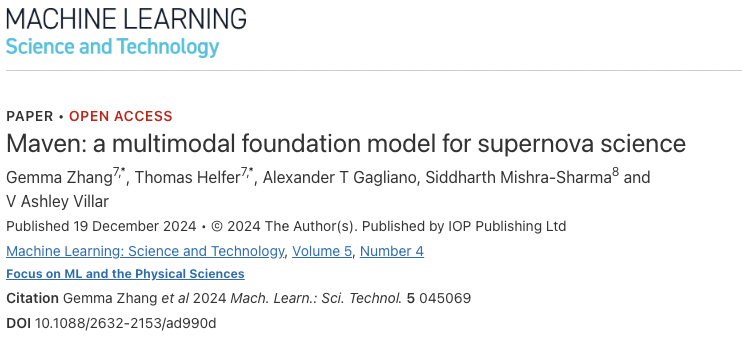

foundational models

why not images too?

lightcurve latent space rep

image

latent space rep

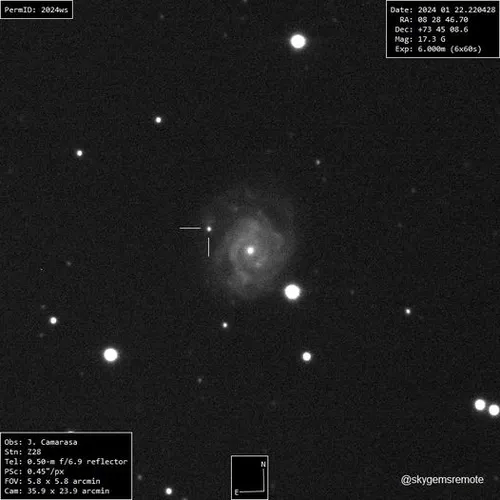

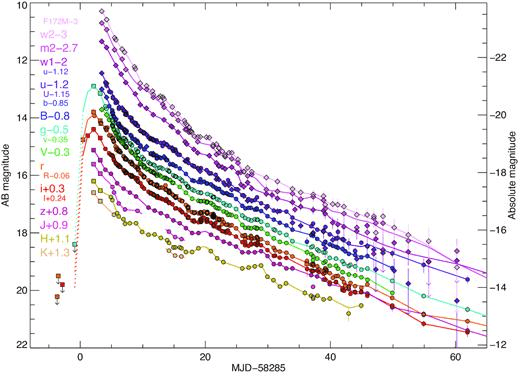

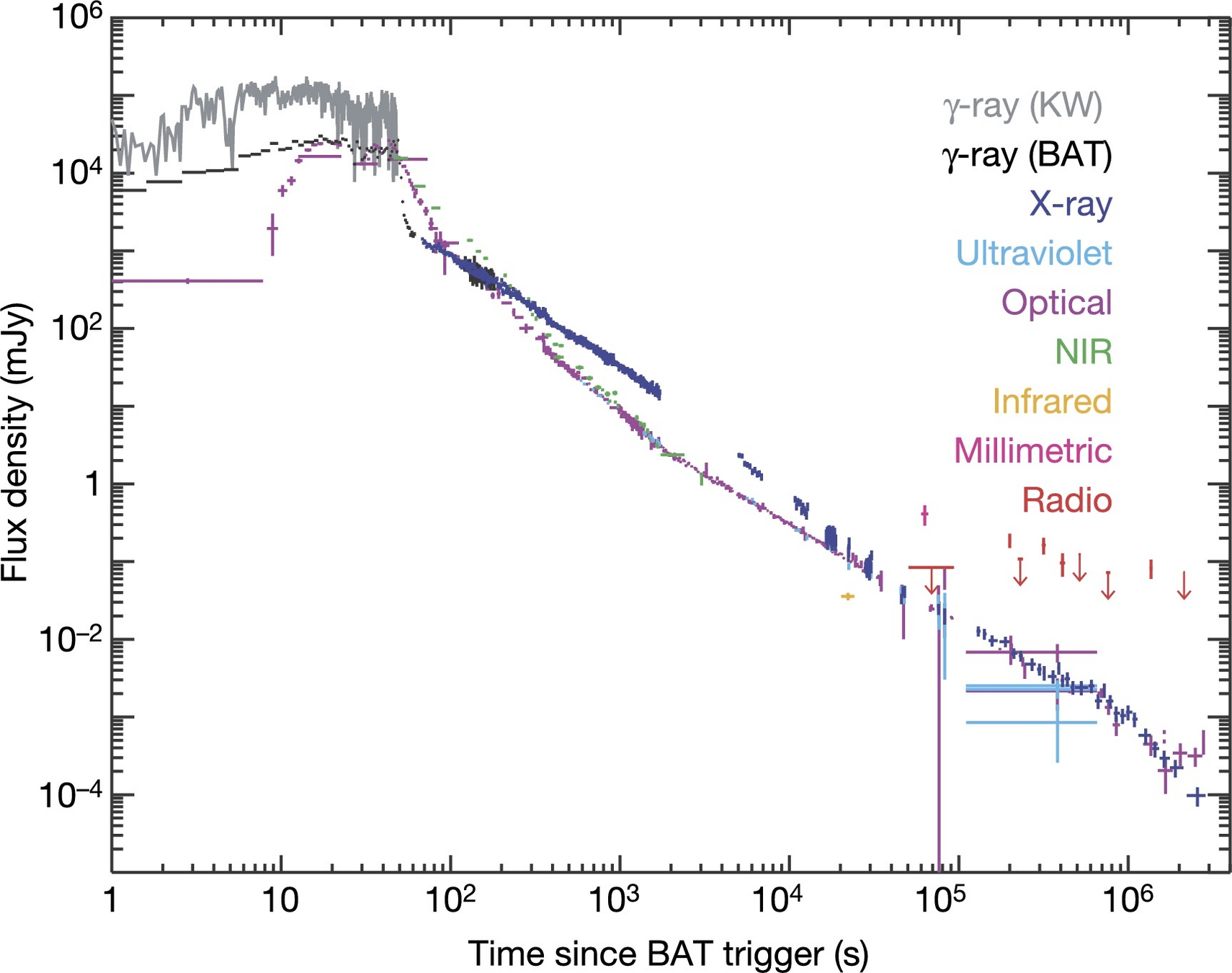

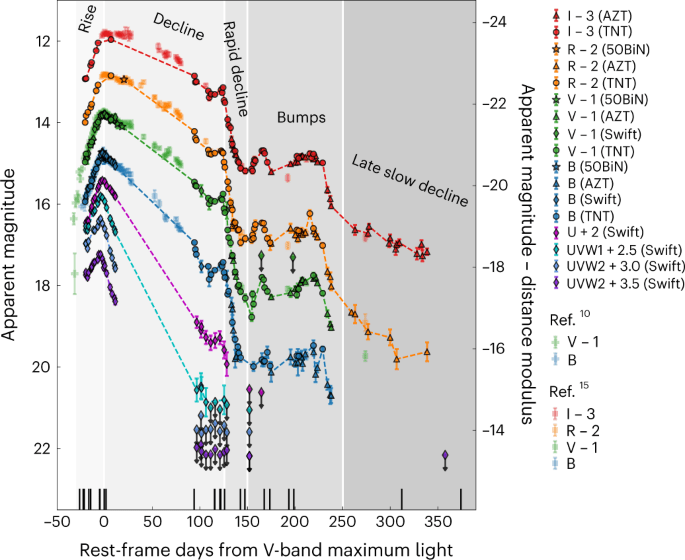

SN 2018cow

Perley+2018

ethics of AI

Challenge + Opportunity

Knowledge is power

- Astrophysical data is a sandbox. It has no social value, no privacy risk. We can safely learn how bias builds into algorithms and how to correct it

- Ethics of AI is a critical element of the education of a technologist

With great power comes great responsibility

"Sharing is caring"

- AI is a transferable skill - use it for good!

the butterfly effect

We use astrophysics as a neutral and safe sandbox to learn how to develop and apply powerful tools.

Deploying these tools in the real world can do harm.

Ethics of AI is essential training that all data scientists should receive.

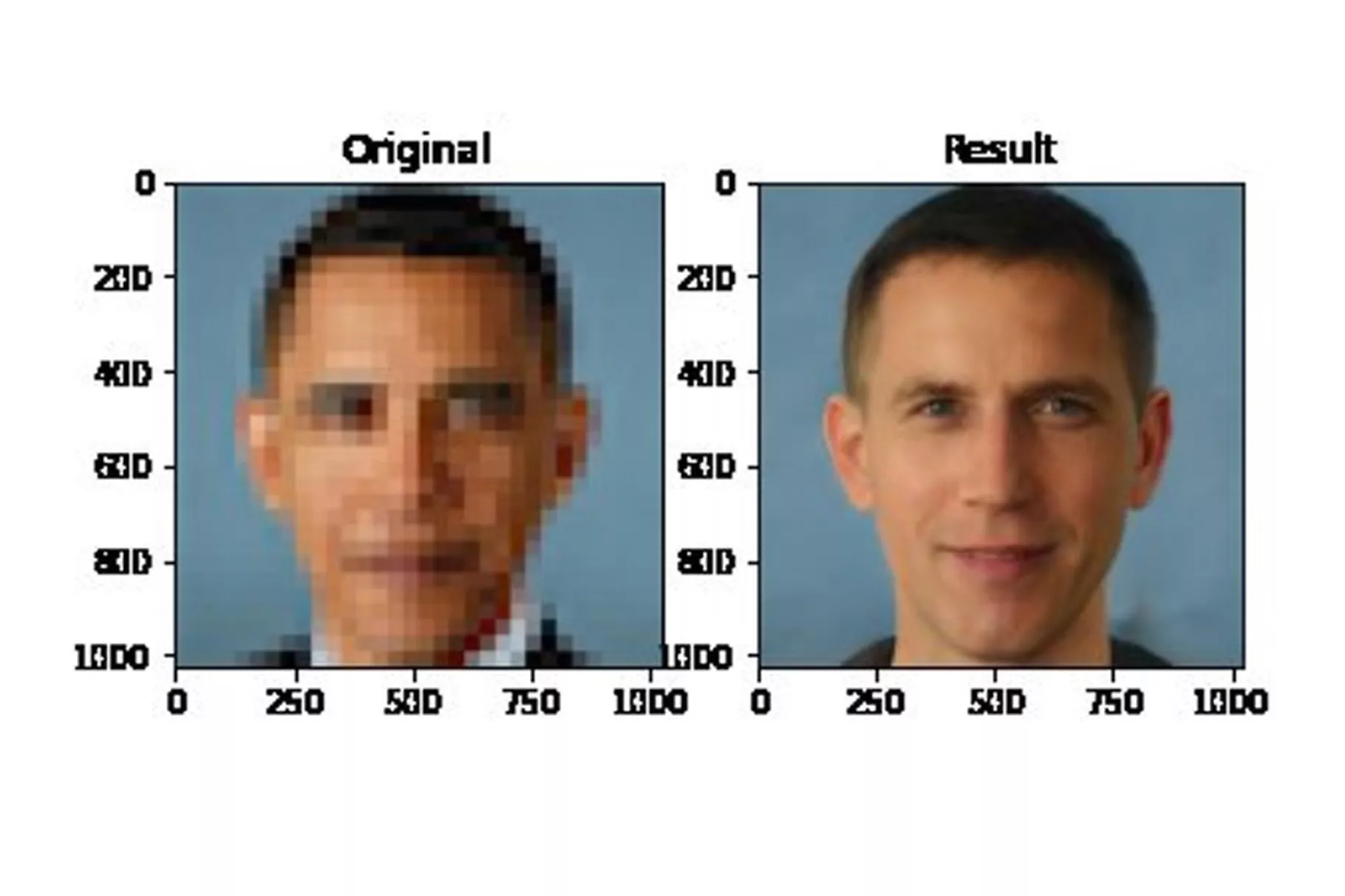

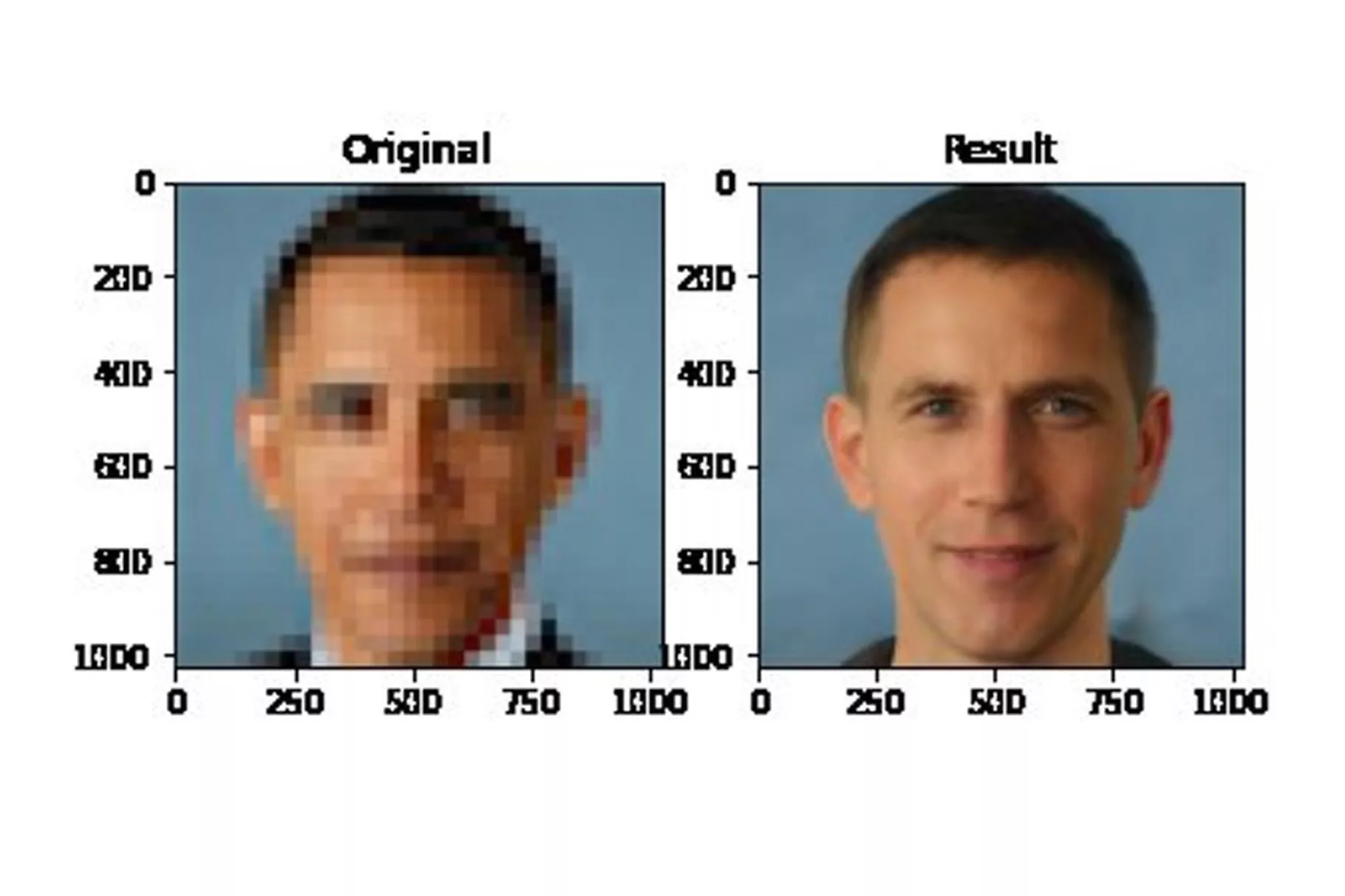

Why does this AI model whiten Obama's face?

Simple answer: the data is biased. The algorithm is fed more images of white people

But really, would the opposite have been acceptable? The bias is in society

models are neutral, the bias is in the data (or is it?)

Joy Buolamwini

thank you!

University of Delaware

Department of Physics and Astronomy

Biden School of Public Policy and Administration

Data Science Institute

federica bianco

fbianco@udel.edu

bit.ly/biancouai25

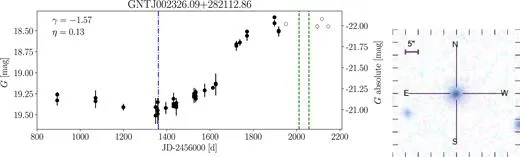

Time series + low resolution spectrophotometry (R~3)

Precision photometry: broad optical bands (G, BP, and RP) with space-based precision but a bright magnitude limit (g~21)

Challenge

benchmark datasets

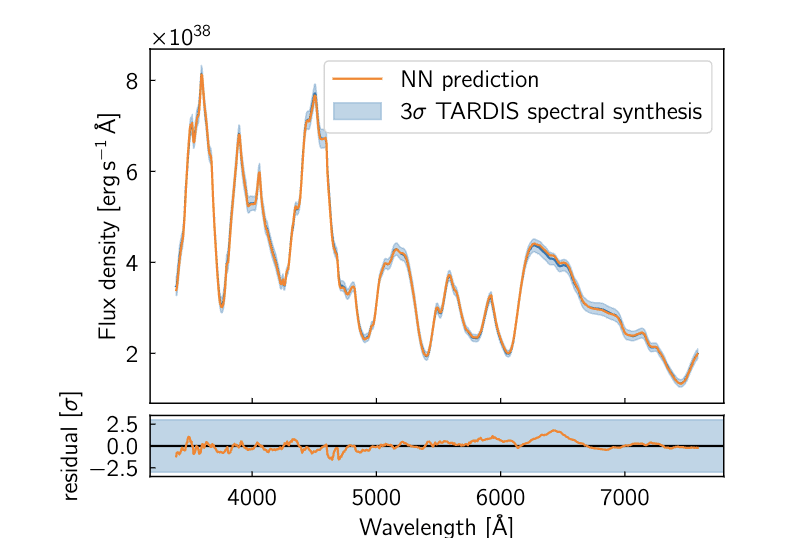

AI assisted modelling

Tardis uses a neural network to replace the radiative transfer model

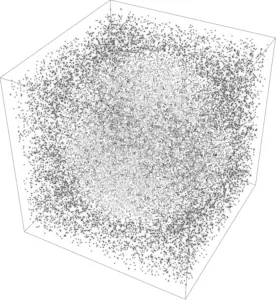

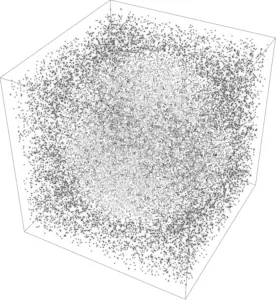

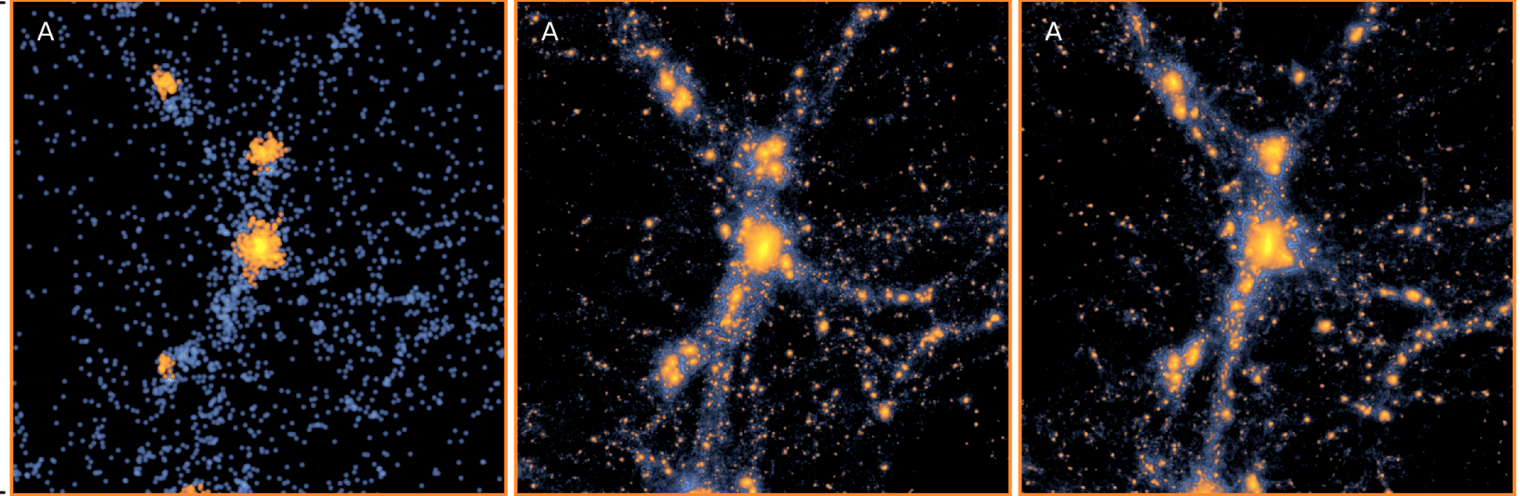

AI-assisted superresolution cosmological simulations

Yin Li+2021

LOW RES SIM

HIGH RES SIM

AI-AIDED HIGH RES

Challenge

ecological AI

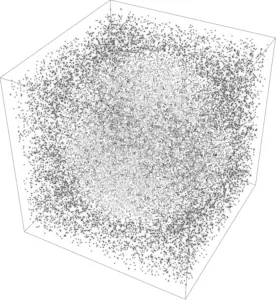

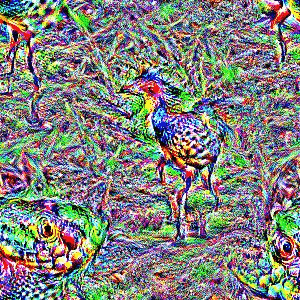

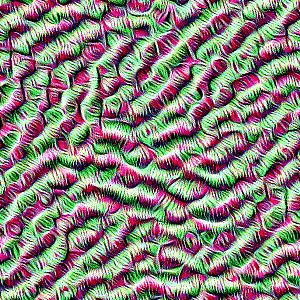

late layers learn complex aggregate specialized features

early layers learn simple generalized features (like lines for CNN)

prediction "head"

original data

trained extensively on large amounts of data to solve generic problems

Foundational AI models

We use the ILSVRC-2012 ImageNet dataset with 1k classes and 1.3M images, its superset ImageNet-21k with 21k classes and 14M images and JFT with 18k classes and 303M high-resolution images.

Typically, we pre-train ViT on large datasets, and fine-tune to (smaller) downstream tasks. For this, we remove the pre-trained prediction head and attach a zero-initialized D × K feedforward layer, where K is the number of downstream classes.
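
The "zero-initialized D × K" head in the quote can be sketched in a few lines. This is an illustration only: the feature size `D`, the number of classes `K` and the random stand-in for the pre-trained embeddings are assumptions, and no real ViT weights are involved.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over the last axis."""
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

D, K = 768, 5   # D: backbone feature size (assumed), K: downstream classes
rng = np.random.default_rng(0)

# Stand-in for the embeddings produced by the pre-trained backbone
# for a batch of 4 inputs (a real model would compute these).
features = rng.normal(size=(4, D))

# New prediction head: a D x K linear layer initialized to zero.
W = np.zeros((D, K))
logits = features @ W
probs = softmax(logits)

print(probs[0])   # every class starts with probability 1/K
```

Starting from zeros means the fine-tuned model initially makes no preference among the new classes, so the first updates to the head are driven by the downstream data rather than by random initial weights.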

Challenges in Space-Based Observations

- Limited Field of View: Space telescopes often have smaller fields of view compared to ground-based surveys.

- Data Latency: Delays in data transmission and processing can affect rapid follow-up.

- Resource Allocation: Competition for telescope time can limit observations of certain transients.... LET'S NOT TRIGGER 3 ToOs ON THE SAME TRANSIENT!!

(Racusin et al., 2008)

GRB 080319B, the brightest optical burst ever observed

SWIFT

rapid response

SWIFT

HST, Chandra, SPITZER

...

Kepler, K2, TESS

high precision dense time series