Please stay healthy and well!

Remote Lectures

All Meetings

Schedule here:

Functions, Classes

Arrays, Vectors

Templates

GIBBS Cluster

Cython

Run our C++ code in Python using Cython

and compare timing against NumPy

Analyze a bunch of numbers and calculate min, max, mean, stddev.
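As a plain-Python reference for these four numbers, the sketch below computes min, max, mean, and the population standard deviation (dividing by N, which is what the expected value 105.267... in the C++ test corresponds to). The function name `describe` is made up for illustration and is not part of the course code.

```python
import math


def describe(values):
    """Return min, max, mean and population standard deviation of values."""
    if not values:
        raise ValueError("values must not be empty")
    mean = sum(values) / len(values)
    # Population variance: average squared distance from the mean.
    variance = sum((x - mean) ** 2 for x in values) / len(values)
    return {
        "min": min(values),
        "max": max(values),
        "mean": mean,
        "stddev": math.sqrt(variance),
    }


# Same numbers as in the C++ tests.
somevalues = [1.3, 2, 3, -241]
result = describe(somevalues)
print(result)
```

Having such a reference makes it easy to cross-check the C++ class once it is callable from Python.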

What do we have?

stats.cc

What do we need?

setup.py

statistics.pyx

#include <iostream>

#include <vector>

#include <algorithm>

#include <cassert>

#include <cmath>

template <typename T>

class Stats {

public:

T get_min(std::vector<T> v);

T get_max(std::vector<T> v);

float get_mean(std::vector<T> v);

float get_stddev(std::vector<T> v);

};

template <typename T>

T Stats<T>::get_min(std::vector<T> v) {

T minvalue = v[0];

for(int i=1; i<v.size(); i++) {

minvalue = std::min(minvalue, v[i]);

}

return minvalue;

}

template <typename T>

T Stats<T>::get_max(std::vector<T> v) {

T maxvalue = v[0];

for(int i=1; i<v.size(); i++) {

maxvalue = std::max(maxvalue, v[i]);

}

return maxvalue;

}

template <typename T>

float Stats<T>::get_mean(std::vector<T> v) {

float sum = v[0];

for(int i=1; i<v.size(); i++) {

sum += v[i];

}

sum /= v.size();

return sum;

}

template <typename T>

float Stats<T>::get_stddev(std::vector<T> v) {

float stddev = 0;

float mean = Stats<T>::get_mean(v);

// start at 0 so the first element is included

for(int i=0; i<v.size(); i++) {

stddev += std::pow(v[i] - mean, 2);

}

return std::sqrt(stddev / v.size());

}

void test_get_min() {

std::vector<float> somevalues;

somevalues.push_back(1.3);

somevalues.push_back(2);

somevalues.push_back(3);

somevalues.push_back(-241);

Stats<float> stats;

assert(stats.get_min(somevalues)==-241);

std::cout << "Test OK!" << std::endl;

}

void test_get_max() {

std::vector<float> somevalues;

somevalues.push_back(1.3);

somevalues.push_back(2);

somevalues.push_back(3);

somevalues.push_back(-241);

Stats<float> stats;

assert(stats.get_max(somevalues)==3);

std::cout << "Test OK!" << std::endl;

}

void test_get_mean() {

std::vector<float> somevalues;

somevalues.push_back(1.3);

somevalues.push_back(2);

somevalues.push_back(3);

somevalues.push_back(-241);

Stats<float> stats;

// compare the absolute difference, not the difference of absolute values

float diff = std::abs(stats.get_mean(somevalues) - (-58.675f));

assert(diff < 0.0005);

std::cout << "Test OK!" << std::endl;

}

void test_get_stddev() {

std::vector<float> somevalues;

somevalues.push_back(1.3);

somevalues.push_back(2);

somevalues.push_back(3);

somevalues.push_back(-241);

Stats<float> stats;

// compare the absolute difference, not the difference of absolute values

float diff = std::abs(stats.get_stddev(somevalues) - 105.26712152899404f);

assert(diff < 0.0005);

std::cout << "Test OK!" << std::endl;

}

int main()

{

test_get_min();

test_get_max();

test_get_mean();

test_get_stddev();

}

setup.py

from setuptools import setup

from Cython.Build import cythonize

setup(ext_modules=cythonize("statistics.pyx"))

statistics.pyx

# distutils: language = c++

from libcpp.vector cimport vector

#

# Connection to C++

#

cdef extern from "stats.cc":

    cdef cppclass Stats[T]:

        T get_min(vector[T])

        T get_max(vector[T])

        float get_mean(vector[T])

        float get_stddev(vector[T])

#

# Python Interface

#

cdef class PyStats:

    cdef Stats[float] stats

    def get_min(self, vector[float] v):

        return self.stats.get_min(v)

    def get_max(self, vector[float] v):

        return self.stats.get_max(v)

    def get_mean(self, vector[float] v):

        return self.stats.get_mean(v)

    def get_stddev(self, vector[float] v):

        return self.stats.get_stddev(v)

stats.cc

statistics.pyx

setup.py

import statistics

s = statistics.PyStats()

somevalues = [1.3, 2, 3, -241]

print(s.get_min(somevalues))

More soon...

but not today...
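The timing comparison against NumPy mentioned at the start could be set up roughly as follows. This is only a sketch: the helper `time_call` and the test data are invented for illustration, NumPy is treated as optional, and the Cython wrapper is left as a comment because it only exists after the extension has been built (for example with `python setup.py build_ext --inplace`).

```python
import timeit


def time_call(func, arg, repeats=3, number=5):
    """Return the best average time in seconds for one call of func(arg)."""
    timings = timeit.repeat(lambda: func(arg), repeat=repeats, number=number)
    return min(timings) / number


# Hypothetical test data: 100,000 floats.
values = [float(i % 1000) for i in range(100_000)]

# Each entry: (name, function, argument passed to the function).
benchmarks = [("builtin min", min, values)]

try:
    import numpy as np
    # float32 matches the Stats[float] instantiation used in statistics.pyx.
    benchmarks.append(("numpy min", np.min, np.asarray(values, dtype=np.float32)))
except ImportError:
    pass  # NumPy not installed: only the builtin is timed.

# Once the extension is built, the wrapper could be timed the same way:
#   import statistics
#   benchmarks.append(("cython min", statistics.PyStats().get_min, values))

results = {name: time_call(func, arg) for name, func, arg in benchmarks}
for name, seconds in results.items():
    print(f"{name}: {seconds * 1e6:.1f} microseconds per call")
```

Note that passing a Python list to a `vector[float]` argument makes Cython copy the list into a C++ vector on every call, so a timing of the wrapper would include that conversion.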

Lex Fridman

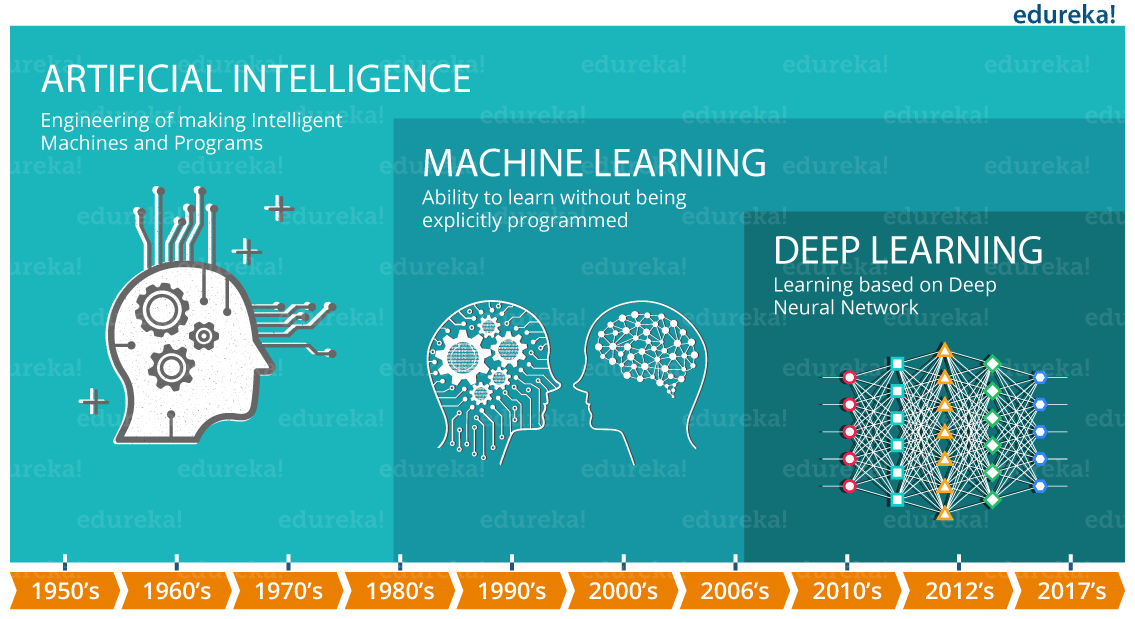

Artificial General Intelligence

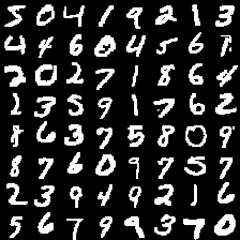

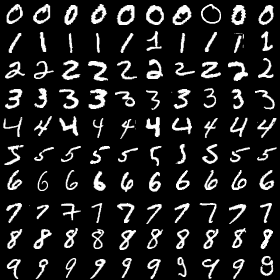

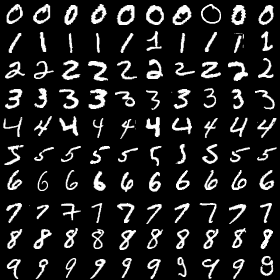

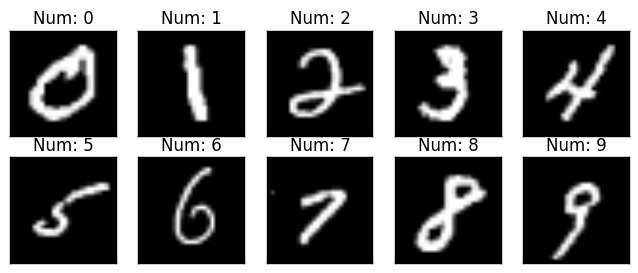

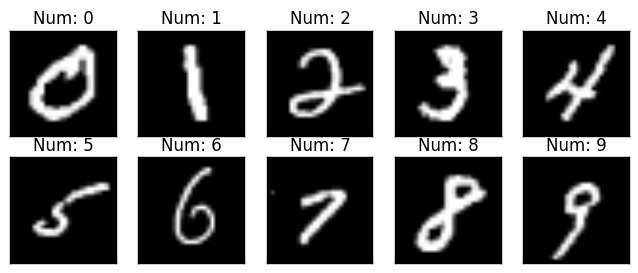

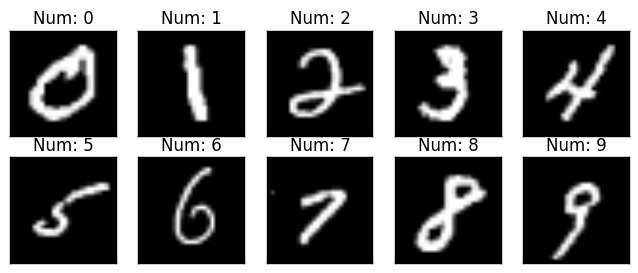

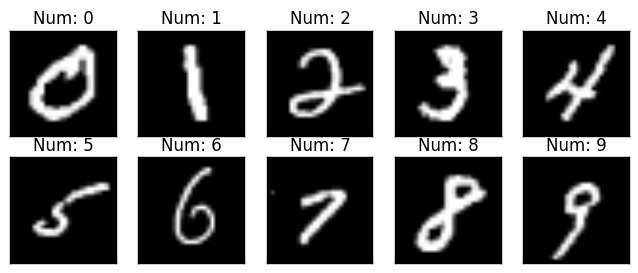

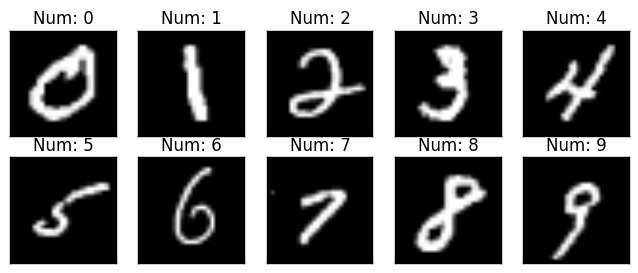

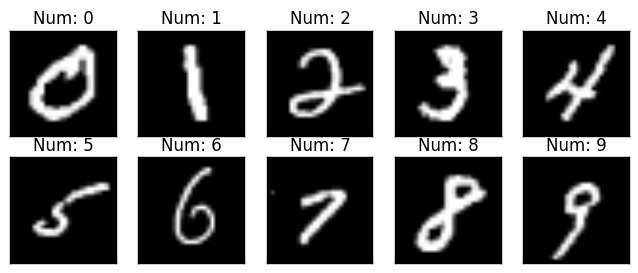

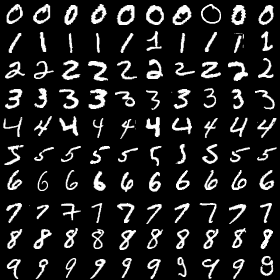

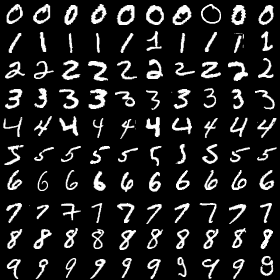

MNIST

Lex Fridman

Supervised Learning

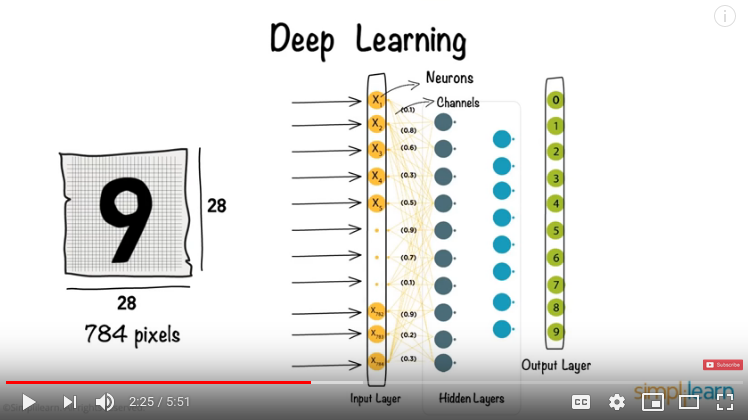

9

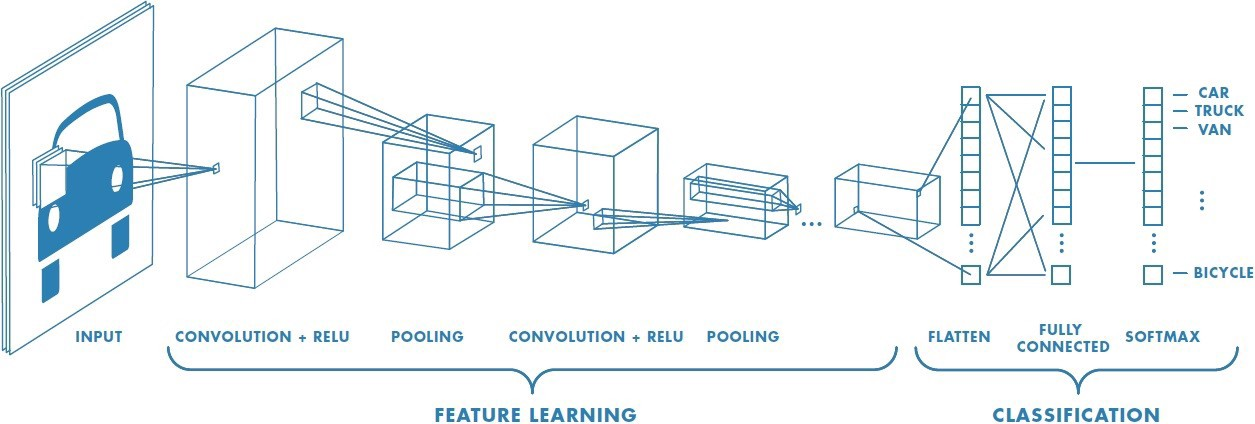

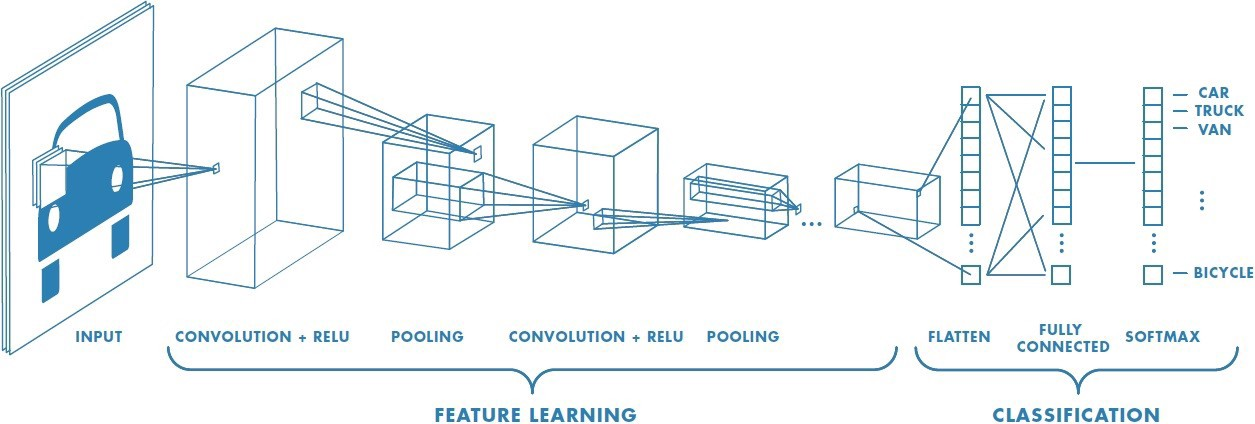

Convolutional Neural Network

cat

Convolutional Neural Network

Keras

Easier!

GIBBS Cluster

conda install keras-gpu

conda install pytorch

conda install pillow

4 Steps

1. Load Data

2. Setup Network

3. Train Network

4. Predict!

Data

Training

Testing

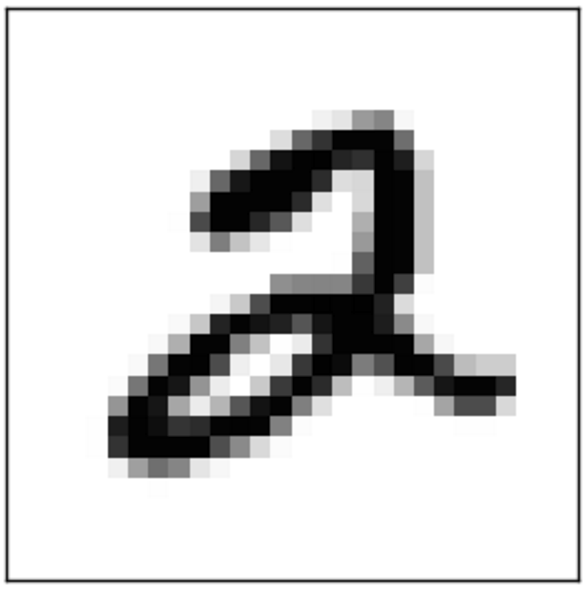

2

Label

?

Label

But we know the answer!

X_train

y_train

X_test

y_test
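To make the four variables concrete, here is a small pure-Python sketch of holding back part of the data for testing and turning integer labels into one-hot vectors, which is the label format that a softmax output trained with categorical cross-entropy expects. The helper names `split_train_test` and `one_hot` and the placeholder samples are assumptions for illustration, not course code.

```python
NUMBER_OF_CLASSES = 10


def split_train_test(samples, labels, number_of_test_samples):
    """Hold back the last samples for testing; the rest is used for training."""
    if len(samples) != len(labels):
        raise ValueError("samples and labels must have the same length")
    if not 0 < number_of_test_samples < len(samples):
        raise ValueError("need at least one training and one testing sample")
    cut = len(samples) - number_of_test_samples
    return samples[:cut], labels[:cut], samples[cut:], labels[cut:]


def one_hot(label, number_of_classes=NUMBER_OF_CLASSES):
    """Encode an integer label as a vector with a single 1.0 entry."""
    if not 0 <= label < number_of_classes:
        raise ValueError("label out of range")
    vector = [0.0] * number_of_classes
    vector[label] = 1.0
    return vector


# Placeholder strings stand in for real images.
samples = ["img0", "img1", "img2", "img3"]
labels = [2, 9, 0, 7]

X_train, y_train, X_test, y_test = split_train_test(samples, labels, 1)
y_train_one_hot = [one_hot(label) for label in y_train]
print(y_train_one_hot[0])
```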

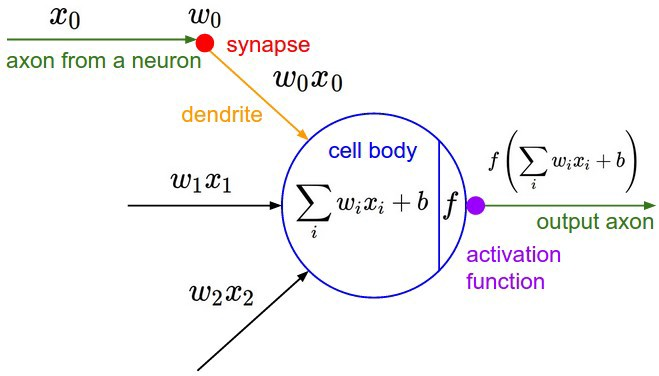

Setup Network

import keras

NUMBER_OF_CLASSES = 10

# first_image is assumed to be a single training image from the Load Data step

model = keras.models.Sequential()

model.add(keras.layers.Conv2D(32, kernel_size=(3, 3),

                              activation='relu',

                              input_shape=first_image.shape))

model.add(keras.layers.Conv2D(64, (3, 3), activation='relu'))

model.add(keras.layers.MaxPooling2D(pool_size=(2, 2)))

model.add(keras.layers.Dropout(0.25))

model.add(keras.layers.Flatten())

model.add(keras.layers.Dense(128, activation='relu'))

model.add(keras.layers.Dropout(0.5))

model.add(keras.layers.Dense(NUMBER_OF_CLASSES, activation='softmax'))

NUMBER_OF_CLASSES = 10 for MNIST

NUMBER_OF_CLASSES = 2 for Cats vs. Dogs
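It can help to trace how the data shape changes from layer to layer. The sketch below does this in plain Python, assuming a 28 x 28 grayscale input (the usual MNIST image size; the slides do not state `first_image.shape`) and the Keras defaults of no padding and stride 1 for `Conv2D`, and a stride equal to the pool size for `MaxPooling2D`. The helper functions are invented for illustration.

```python
def conv2d_shape(shape, filters, kernel=(3, 3)):
    """Output shape of a convolution without padding and with stride 1."""
    height, width, _channels = shape
    return (height - kernel[0] + 1, width - kernel[1] + 1, filters)


def max_pool_shape(shape, pool=(2, 2)):
    """Output shape of max pooling with stride equal to the pool size."""
    height, width, channels = shape
    return (height // pool[0], width // pool[1], channels)


def flatten_size(shape):
    """Number of values after flattening a (height, width, channels) block."""
    height, width, channels = shape
    return height * width * channels


input_shape = (28, 28, 1)  # assumption: one MNIST image, one gray channel

after_conv1 = conv2d_shape(input_shape, filters=32)
after_conv2 = conv2d_shape(after_conv1, filters=64)
after_pool = max_pool_shape(after_conv2)
flat = flatten_size(after_pool)

for name, value in [("Conv2D(32)", after_conv1), ("Conv2D(64)", after_conv2),
                    ("MaxPooling2D", after_pool), ("Flatten", flat)]:
    print(name, value)
```

Dropout does not change the shape, and the two Dense layers then map the flattened values to 128 and finally to NUMBER_OF_CLASSES outputs.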

Setup Network

NUMBER_OF_CLASSES = 10

model = keras.models.Sequential()

model.add(keras.layers.Conv2D(32, kernel_size=(3, 3),

activation='relu',

input_shape=first_image.shape))

model.add(keras.layers.Conv2D(64, (3, 3), activation='relu'))

model.add(keras.layers.MaxPooling2D(pool_size=(2, 2)))

model.add(keras.layers.Dropout(0.25))

model.add(keras.layers.Flatten())

model.add(keras.layers.Dense(128, activation='relu'))

model.add(keras.layers.Dropout(0.5))

model.add(keras.layers.Dense(NUMBER_OF_CLASSES, activation='softmax'))model.compile(loss=keras.losses.categorical_crossentropy,

optimizer=keras.optimizers.Adadelta(),

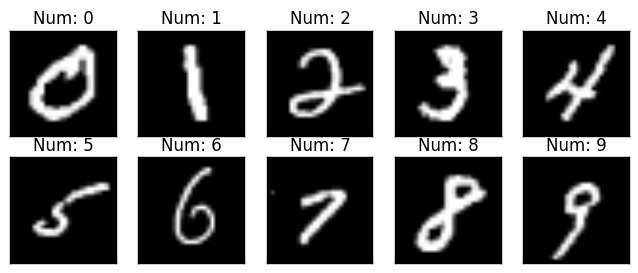

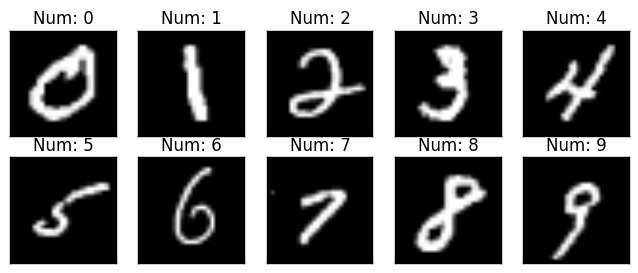

metrics=['accuracy'])Train Network

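Training Data: the network is shown each image together with its label (for example, 9).

Then we check how well the network predicts the testing data, where the label is hidden (?).

Loss should go down!

This is repeated. (1 run over the training data is called an epoch.)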
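What "loss" measures can be illustrated without any framework. The sketch below implements softmax and categorical cross-entropy in plain Python and compares a network that has learned nothing yet with one that favors the correct class. The raw scores are invented for illustration; they are not outputs of the model above.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    largest = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(score - largest) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]


def categorical_crossentropy(one_hot_target, probabilities):
    """Negative log-probability assigned to the correct class."""
    return -sum(target * math.log(prob)
                for target, prob in zip(one_hot_target, probabilities)
                if target > 0)


# One-hot target for the label 9 (ten classes).
target = [0.0] * 9 + [1.0]

# Early in training: all classes look equally likely.
early = softmax([0.0] * 10)
# Later: the score for class 9 is clearly higher (made-up value).
later = softmax([0.0] * 9 + [4.0])

loss_early = categorical_crossentropy(target, early)
loss_later = categorical_crossentropy(target, later)
print(loss_early, loss_later)
```

With ten equally likely classes the loss equals ln(10); it shrinks as the probability of the correct class grows, which is the sense in which the loss should go down over the epochs.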

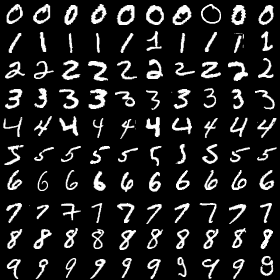

Predict!

Testing Data

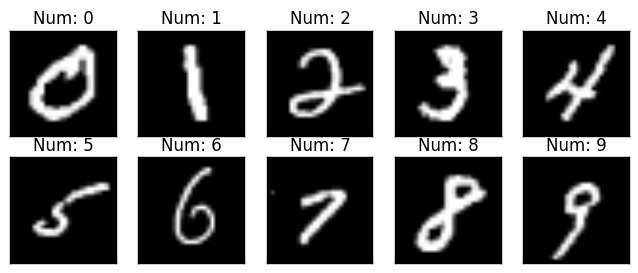

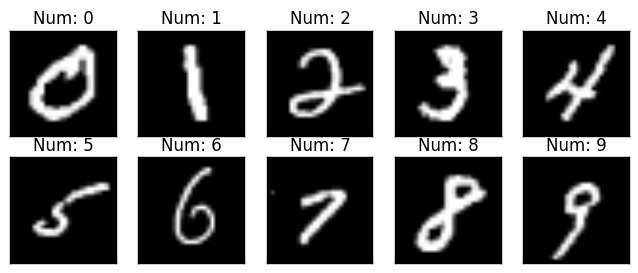

[Figure: the ten digit classes 0 to 9, repeated across three panels]

Measure how well the CNN does...
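One simple way to measure this is to compare predicted labels against y_test, computing the accuracy and a confusion matrix. The sketch below uses made-up labels; with a trained Keras model, the predicted label would typically be the class with the highest output probability, which is an assumption about the workflow rather than something shown on the slides.

```python
def accuracy(true_labels, predicted_labels):
    """Fraction of samples whose predicted label matches the true label."""
    if len(true_labels) != len(predicted_labels) or not true_labels:
        raise ValueError("need two non-empty label lists of equal length")
    correct = sum(1 for t, p in zip(true_labels, predicted_labels) if t == p)
    return correct / len(true_labels)


def confusion_matrix(true_labels, predicted_labels, number_of_classes):
    """matrix[t][p] counts samples of true class t predicted as class p."""
    matrix = [[0] * number_of_classes for _ in range(number_of_classes)]
    for t, p in zip(true_labels, predicted_labels):
        matrix[t][p] += 1
    return matrix


# Made-up example: six test digits, one of them misclassified (4 seen as 9).
y_test = [7, 2, 1, 0, 4, 9]
predicted = [7, 2, 1, 0, 9, 9]

score = accuracy(y_test, predicted)
matrix = confusion_matrix(y_test, predicted, number_of_classes=10)
print(f"accuracy: {score:.3f}")
print("4 predicted as 9:", matrix[4][9])
```

The diagonal of the matrix holds the correct predictions; off-diagonal entries show which digits get confused with which.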