Bridging Simulators with Conditional Optimal Transport

Justine Zeghal, Benjamin Remy,

Yashar Hezaveh, François Lanusse,

Laurence Perreault-Levasseur

Field Level Meeting SkAI, Chicago

February 2026

Full-field inference: extracting all cosmological information

Bayes theorem:

Full-field inference: extracting all cosmological information

Bayes theorem:

Full-field inference: extracting all cosmological information

Bayes theorem:

Full-field inference: extracting all cosmological information

Simulator

Bayes theorem:

Bayes theorem:

Full-field inference: extracting all cosmological information

Simulator

Two ways to get the posterior:

- Explicit inference:

- Implicit inference

Full-field inference: extracting all cosmological information

Simulator

Two ways to get the posterior:

- Explicit inference:

- Implicit inference

Has to be realistic!

Bayes theorem:

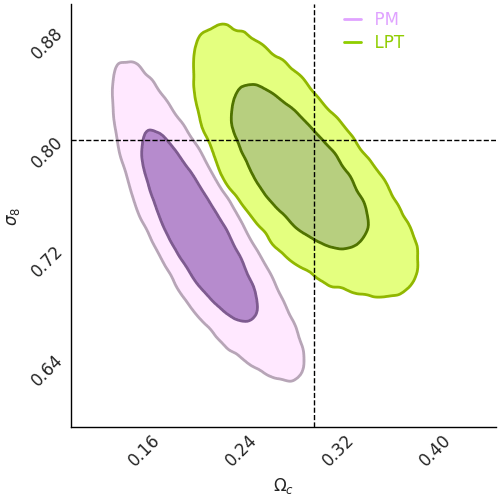

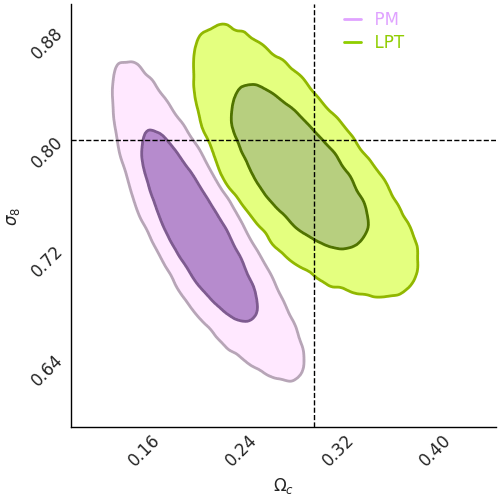

Wrong models generate bias

Fast simulations

Costly simulations

Wrong models generate bias

→ e.g. full nbody, hydro

Fast simulations

Costly simulations

| O(ms) runtime | ❌ |

| differentiable | ❌ |

| ❌ | |

| realistic | ✅ |

Wrong models generate bias

→ e.g. full nbody, hydro

→ e.g. log-normal, LPT, PM

| O(ms) runtime | ✅ |

| differentiable | ✅ |

| ✅ | |

| realistic | ❌ |

Fast simulations

Costly simulations

| O(ms) runtime | ❌ |

| differentiable | ❌ |

| ❌ | |

| realistic | ✅ |

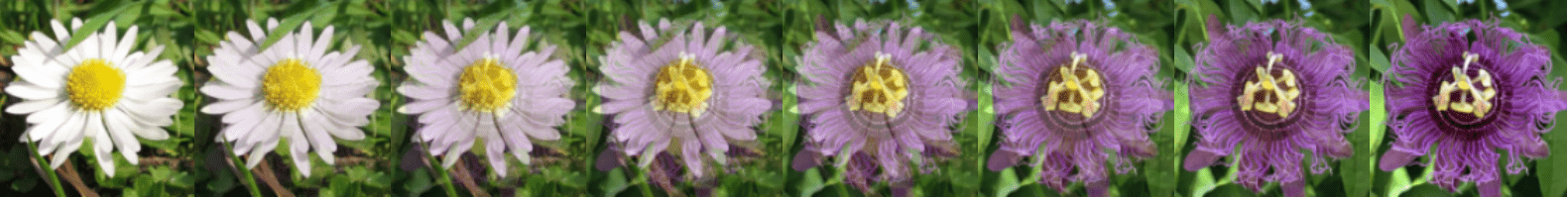

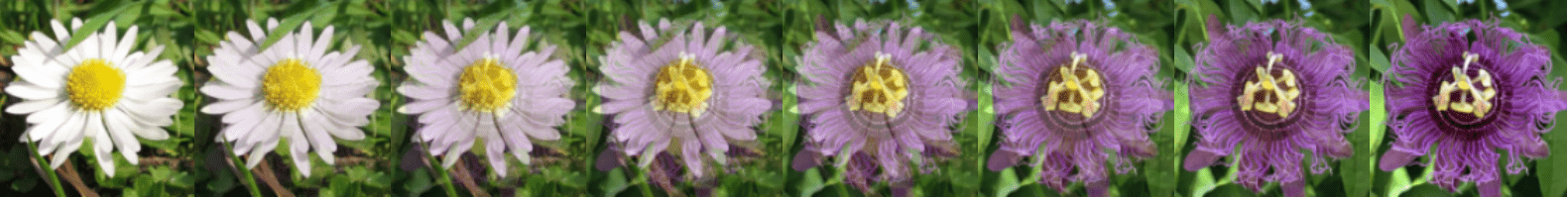

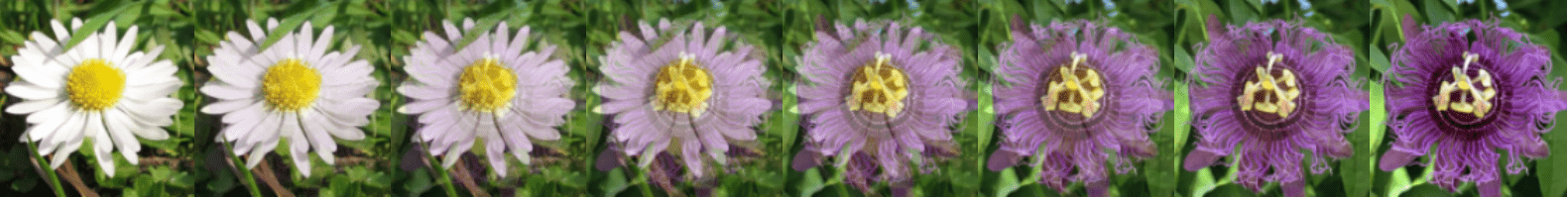

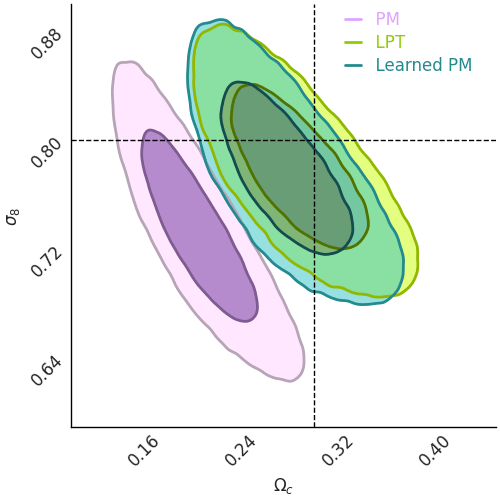

Learning the correction

We can learn

the correction!

Fast simulations

Costly simulations

Learning the correction

We can learn

the correction!

Fast simulations

- it preserves the conditioning,

Costly simulations

- ,

such that

- it minimally correct the simulation.

Learning the correction

We can learn

the correction!

Fast simulations

- it preserves the conditioning,

Costly simulations

- ,

such that

- it minimally correct the simulation.

Requirements:

- has to map to a distribution sample.

- has to work in high dimensions.

- has to bridge any two distributions.

- has to bridge conditional distributions.

- has to be the solution of the OT problem.

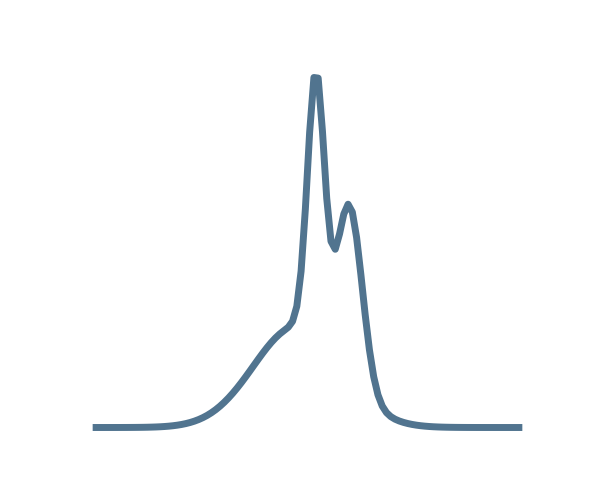

Conditional Optimal Transport Flow Matching

Conditional Optimal Transport Flow Matching

(Lipman et al. 2023)

Flow matching

(Lipman et al. 2023)

Flow matching

(Lipman et al. 2023)

Flow matching

(Lipman et al. 2023)

Flow matching

(Lipman et al. 2023)

Flow matching

(Lipman et al. 2023)

Flow matching

Requirements:

- has to map to a distribution sample.

- has to work in high dimensions.

- has to bridge any two distributions.

- has to bridge conditional distributions.

- has to be the solution of the OT problem.

✅

✅

✅

Conditional Optimal Transport Flow Matching

Requirements:

- has to map to a distribution sample.

- has to work in high dimensions.

- has to bridge any two distributions.

- has to bridge conditional distributions.

- has to be the solution of the OT problem.

✅

✅

✅

Conditional Optimal Transport Flow Matching

Optimal Transport Flow matching (Tong et al. 2024)

Flow Matching loss function:

Indepent coupling:

Optimal Transport coupling:

i.e. minimizes the path for all trajectories between and .

This coupling, combined with the linear interpolant, solve the dynamic OT:

Requirements:

- has to map to a distribution sample.

- has to work in high dimensions.

- has to bridge any two distributions.

- has to bridge conditional distributions.

- has to be the solution of the OT problem.

✅

✅

✅

Conditional Optimal Transport Flow Matching

✅

Requirements:

- has to map to a distribution sample.

- has to work in high dimensions.

- has to bridge any two distributions.

- has to bridge conditional distributions.

- has to be the solution of the OT problem.

✅

✅

✅

Conditional Optimal Transport Flow Matching

✅

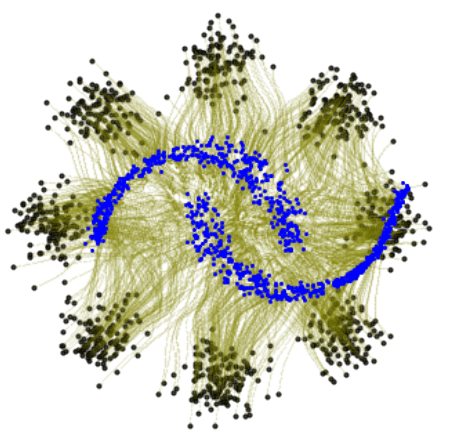

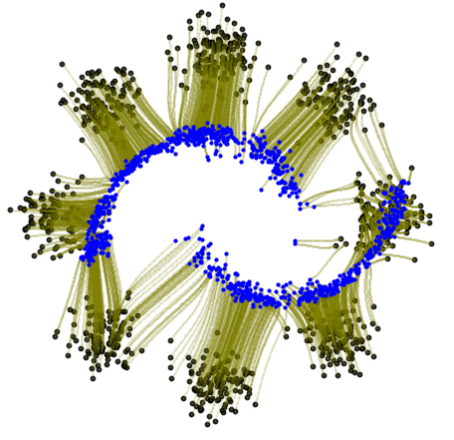

Conditional Optimal Transport Flow matching (Kerrigan et al. 2024)

OT Flow Matching loss function:

Dataset 1

Optimal Transport Plan

Dataset 2

Requirements:

- has to map to a distribution sample.

- has to work in high dimensions.

- has to bridge any two distributions.

- has to bridge conditional distributions.

- has to be the solution of the OT problem.

✅

✅

✅

✅

✅

→ e.g. full nbody, hydro

| O(ms) runtime | ✅ |

| differentiable | ✅ |

| ✅ | |

| realistic | ✅ |

→ e.g. log-normal, LPT, PM

| O(ms) runtime | ✅ |

| differentiable | ✅ |

| ✅ | |

| realistic | ❌ |

Fast simulations

Emulated simulations

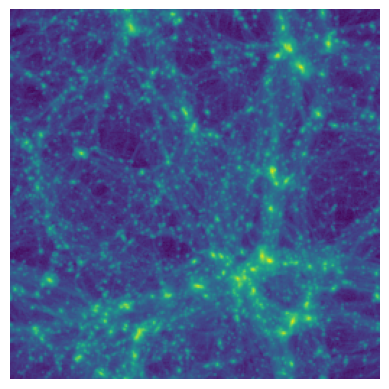

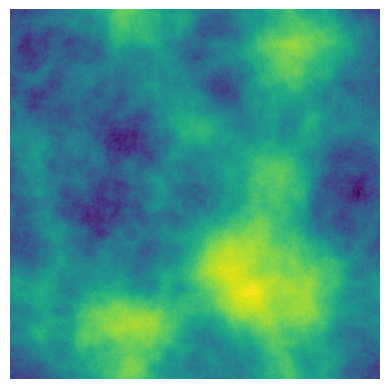

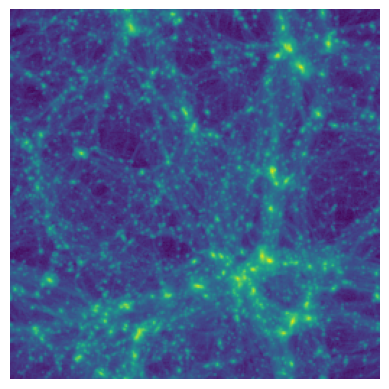

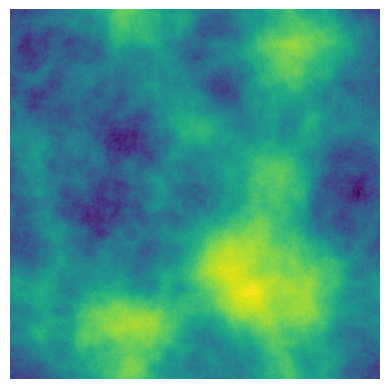

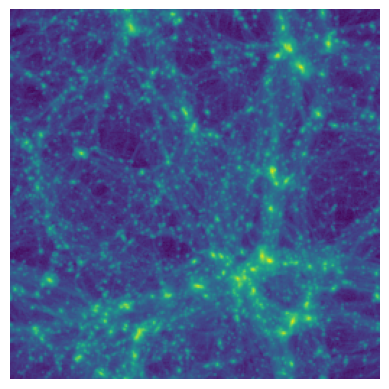

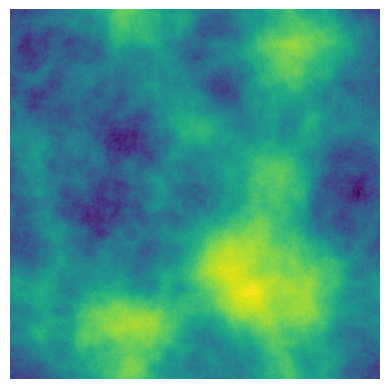

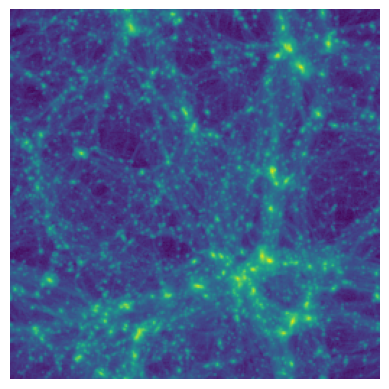

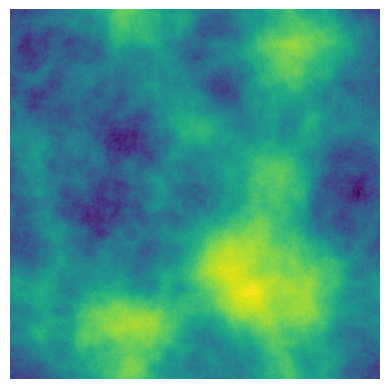

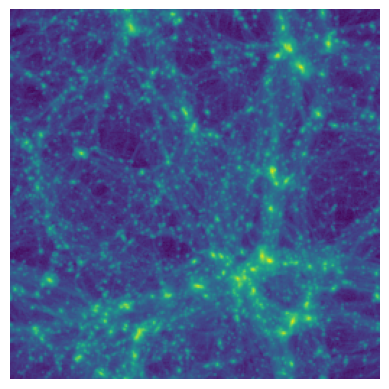

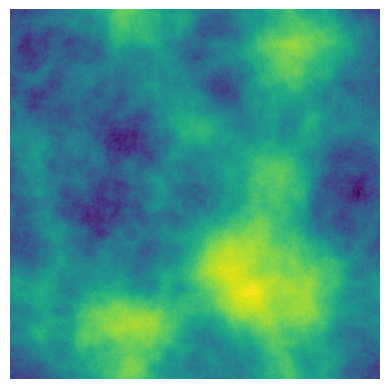

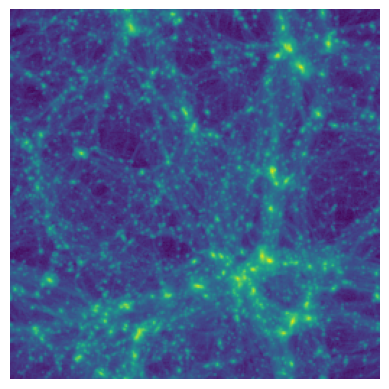

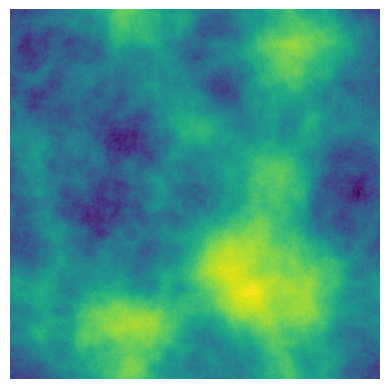

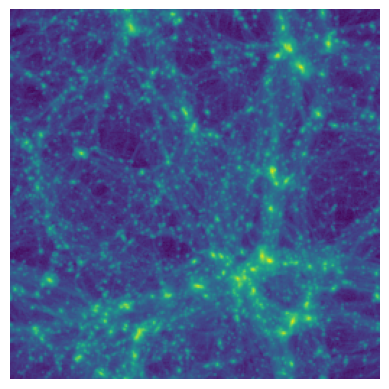

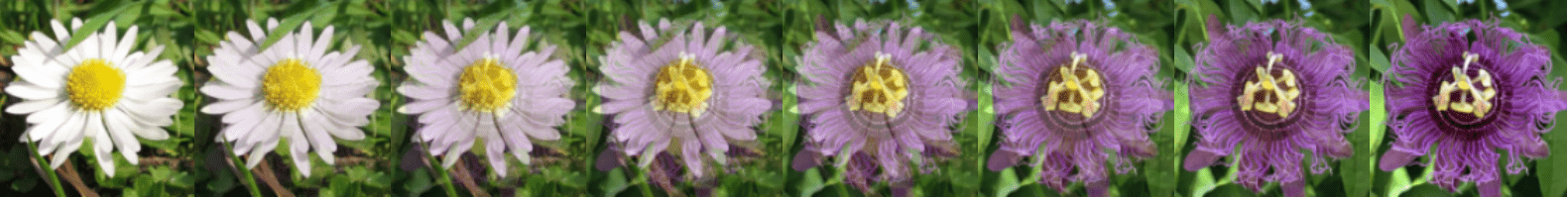

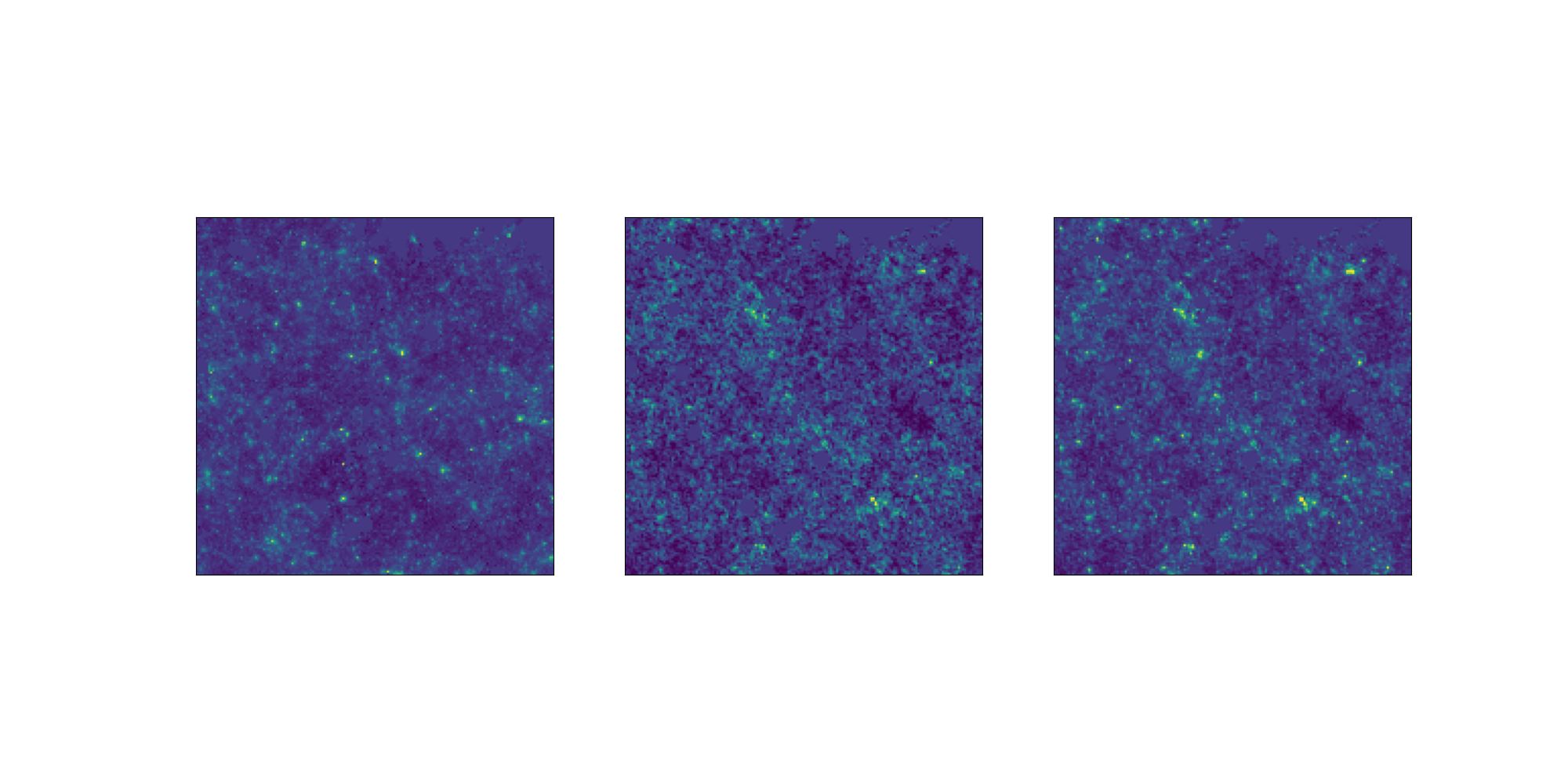

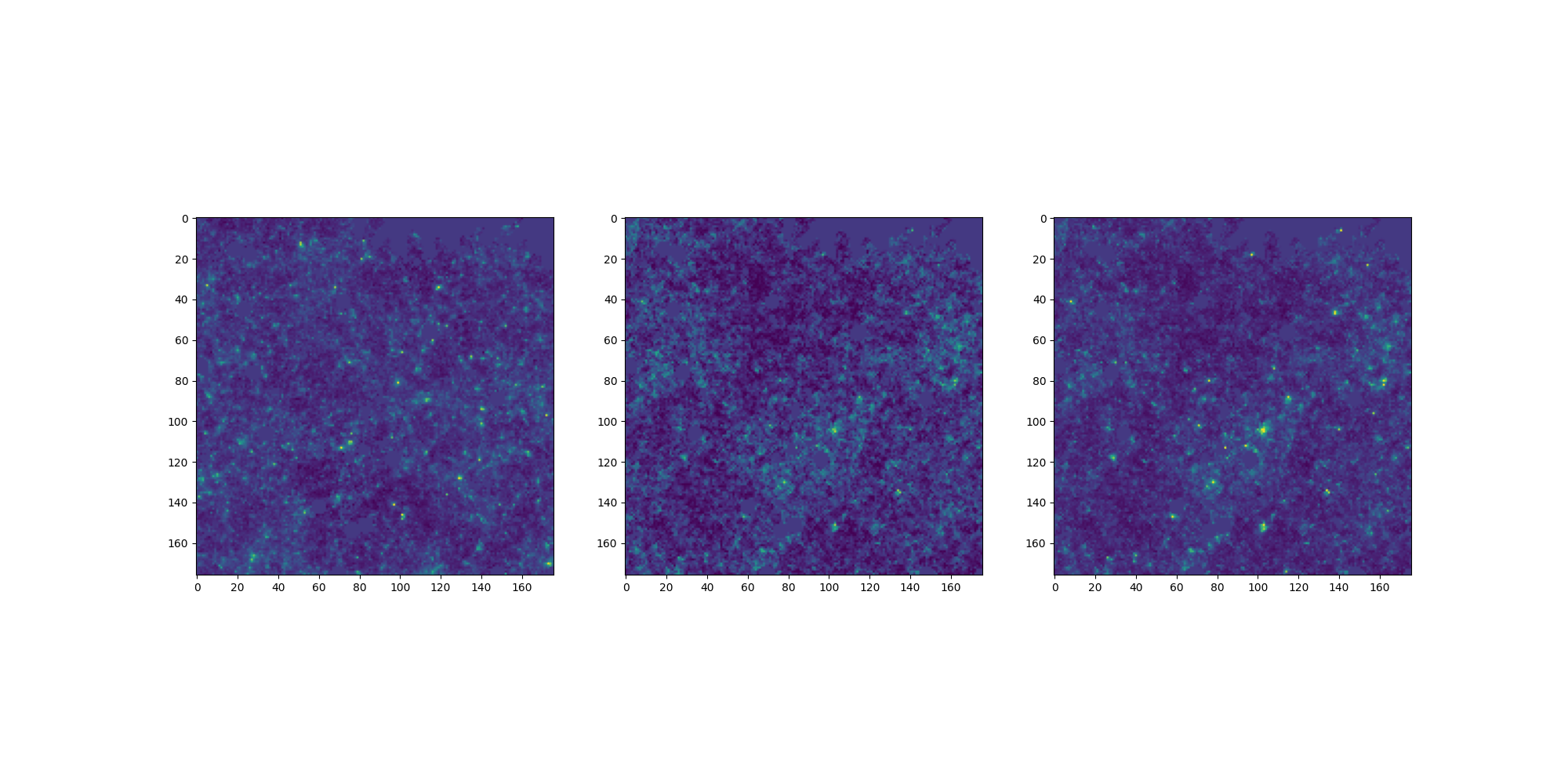

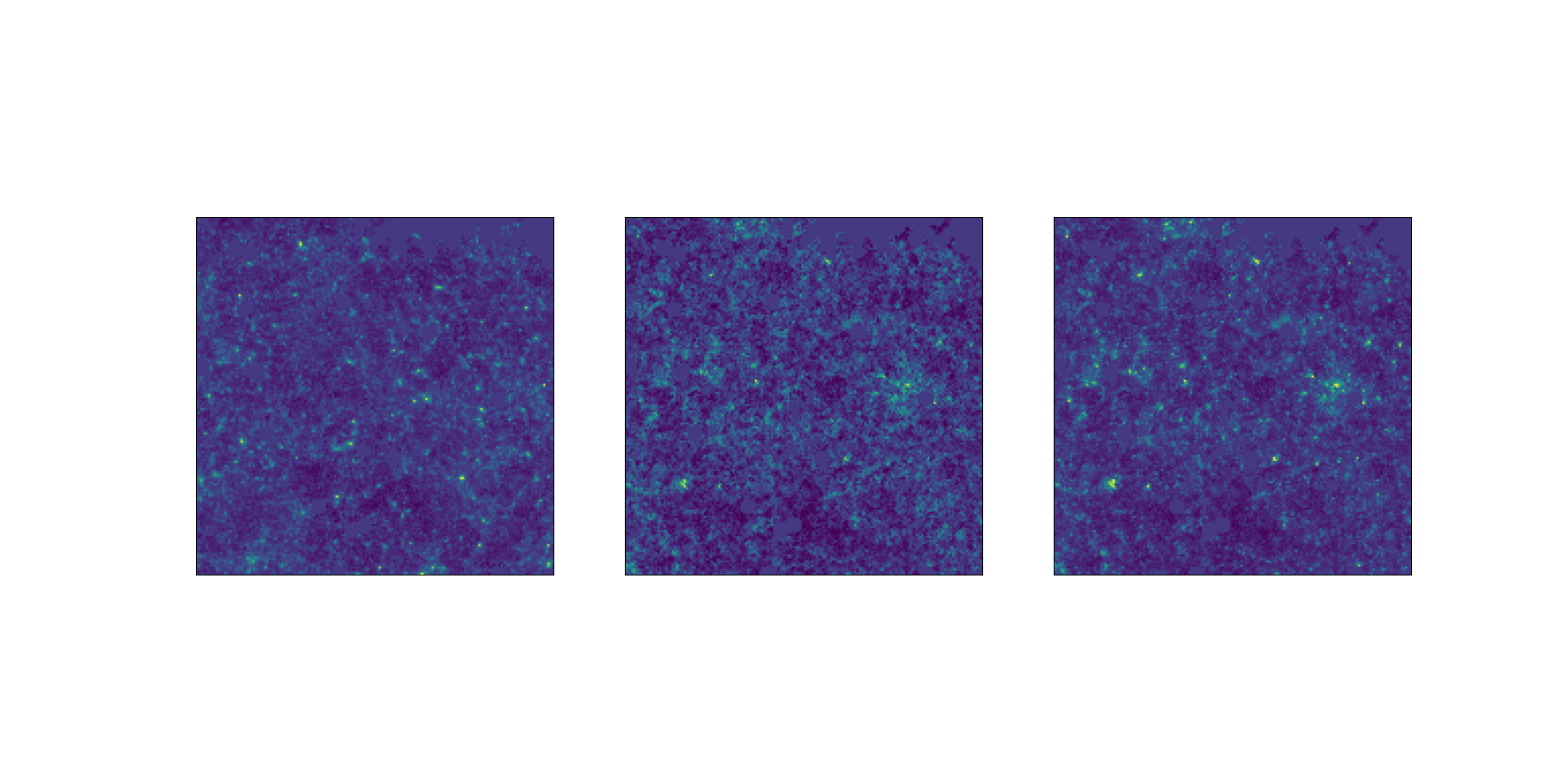

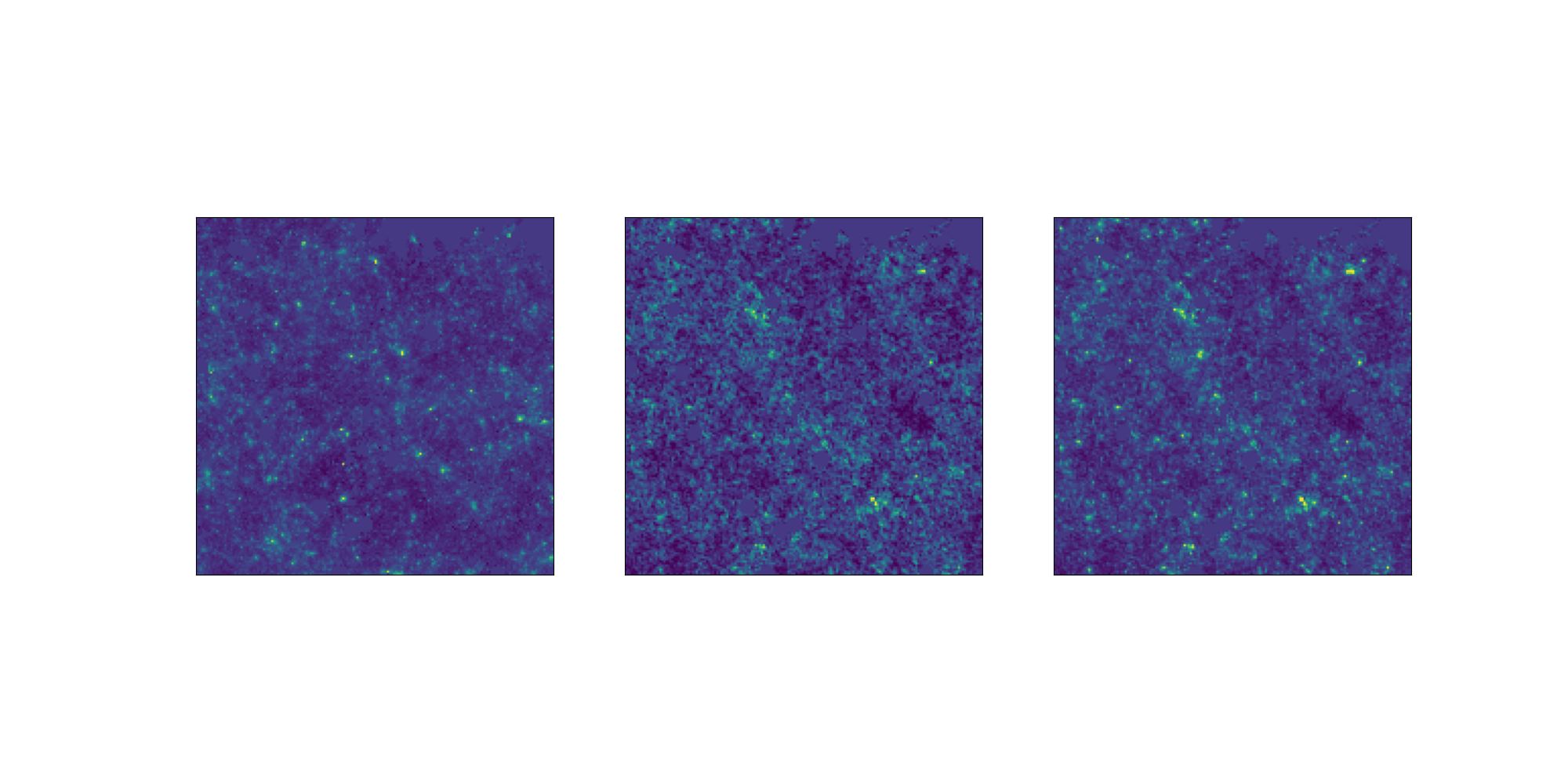

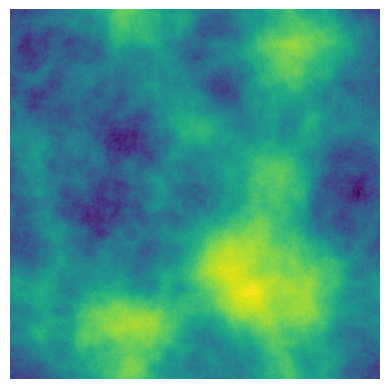

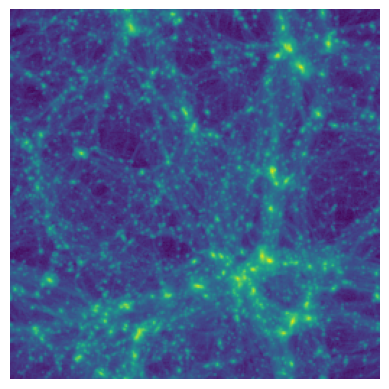

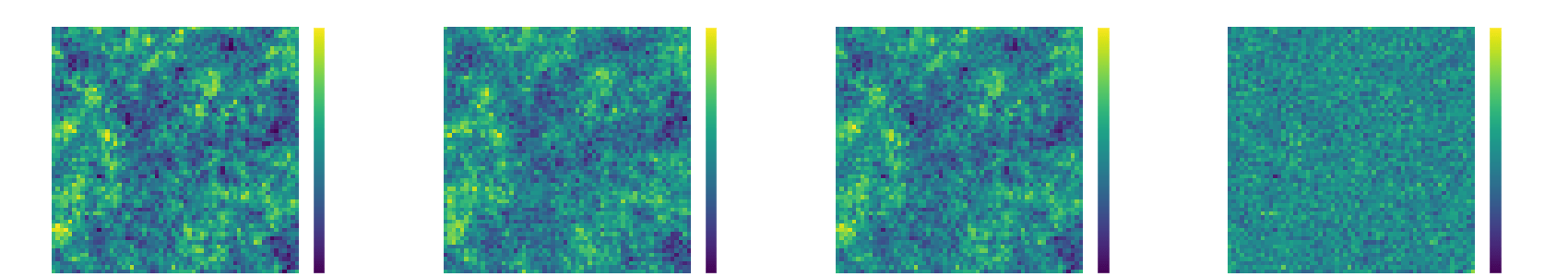

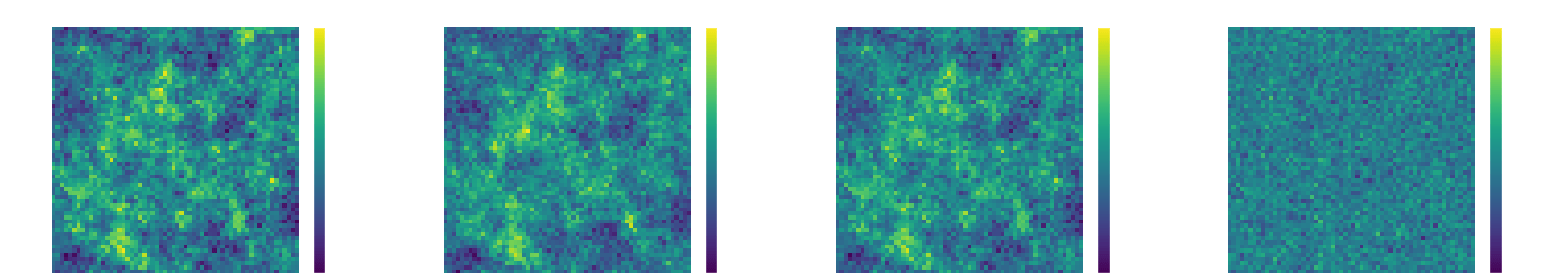

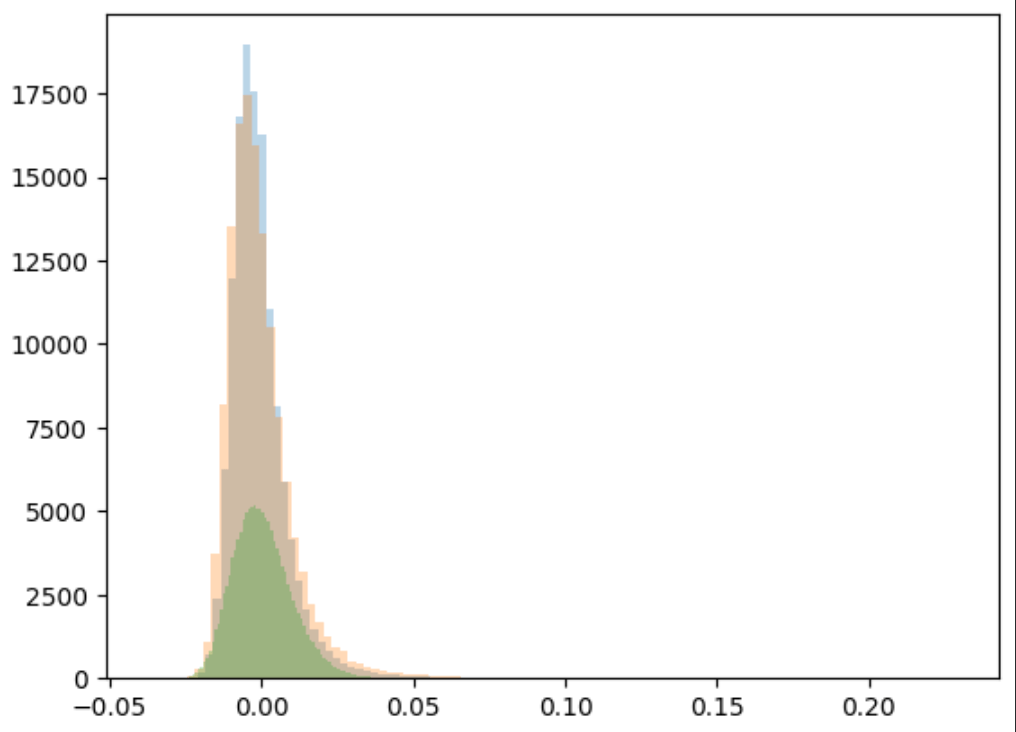

Results on weak lensing maps

LPT

PM

Learned

Residuals

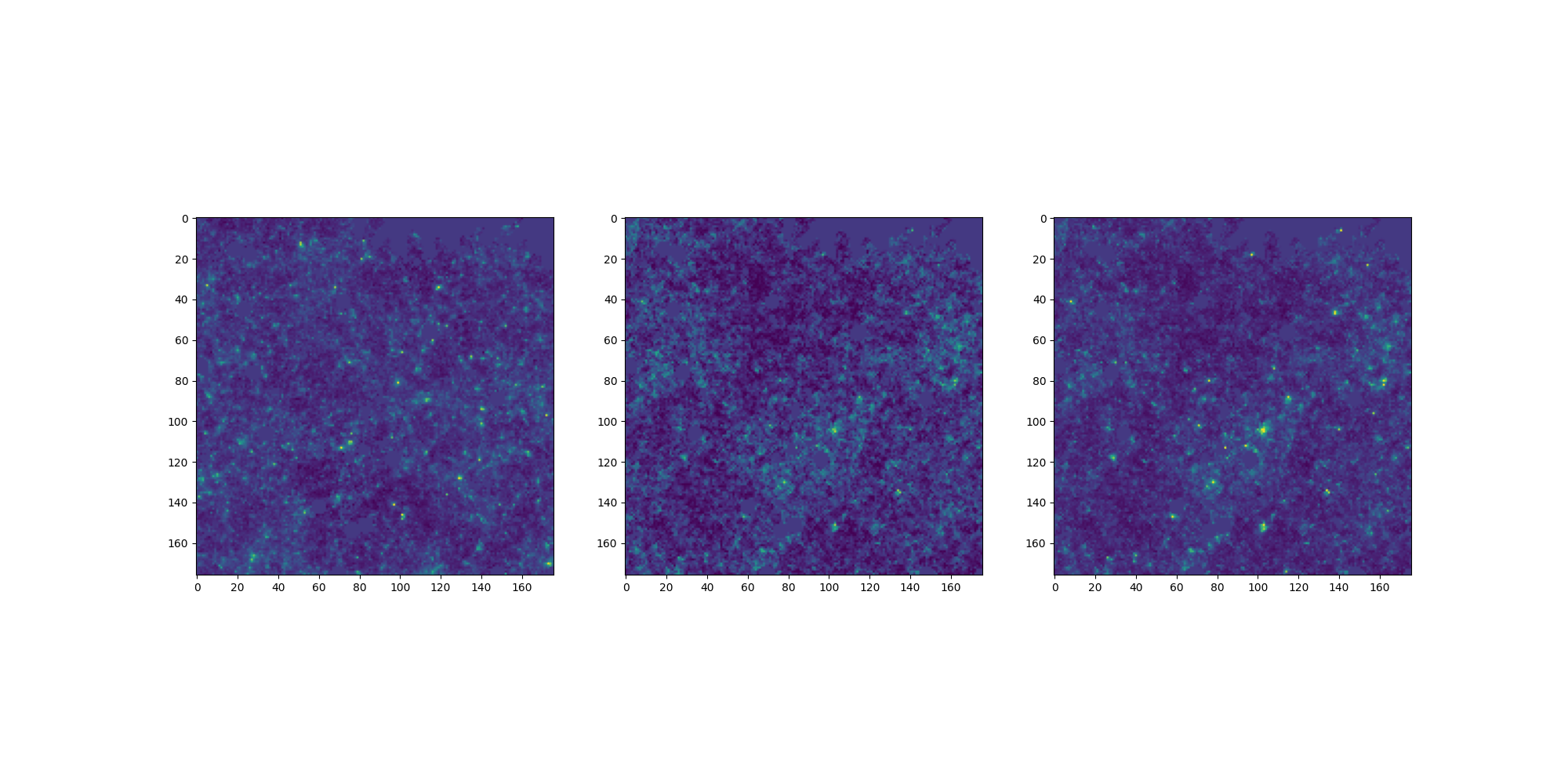

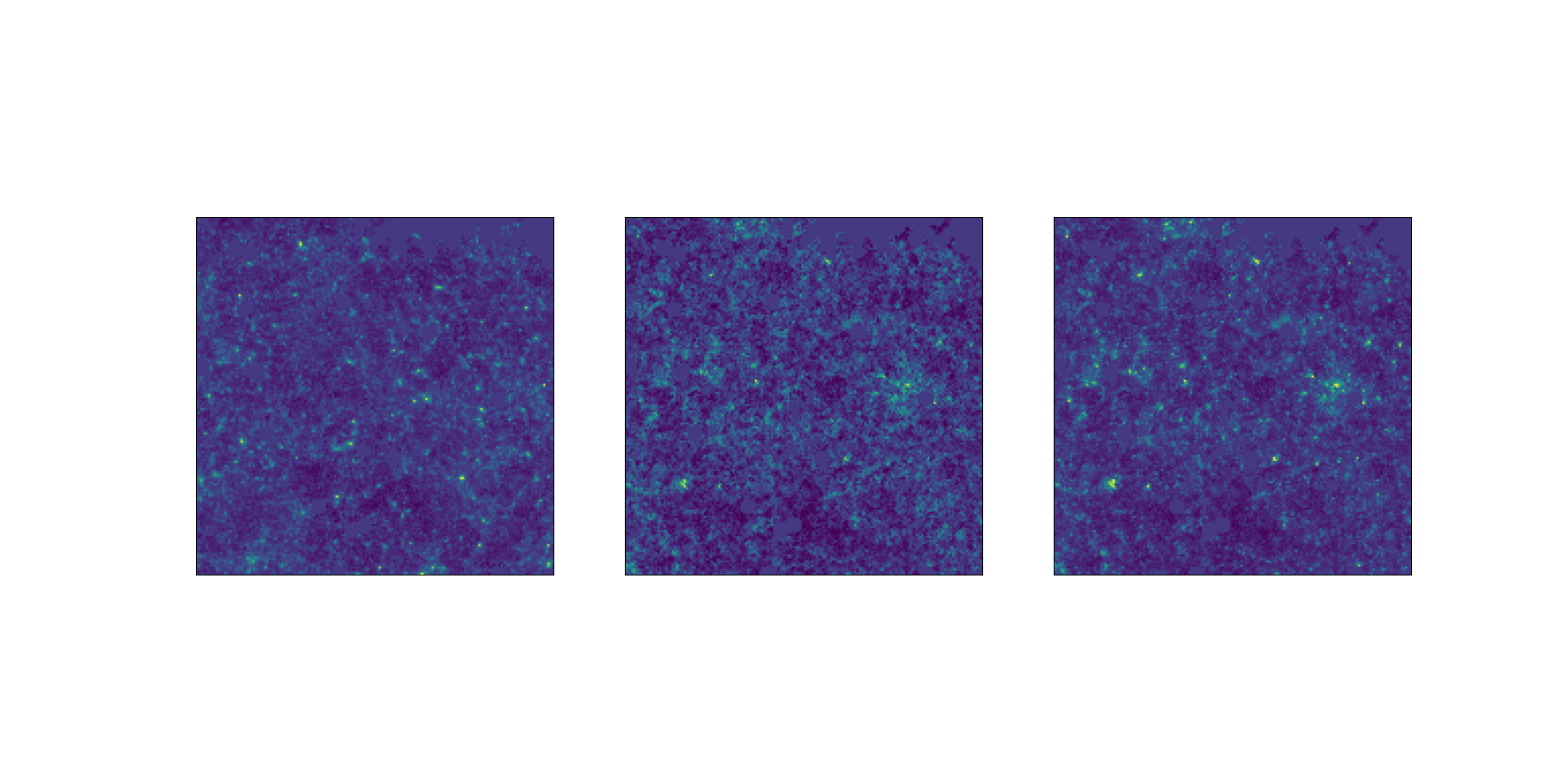

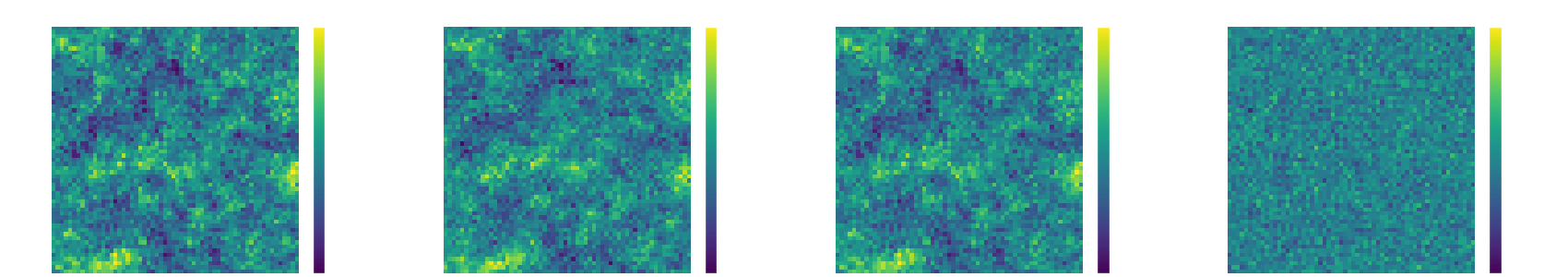

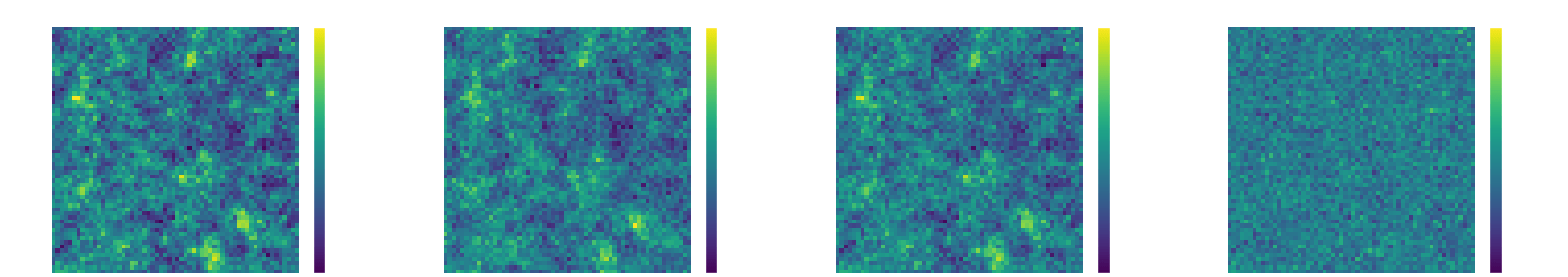

NeurIPS Challenge: Weak Lensing Uncertainty

LogNormal

Emulated

Challenge simulation

VS

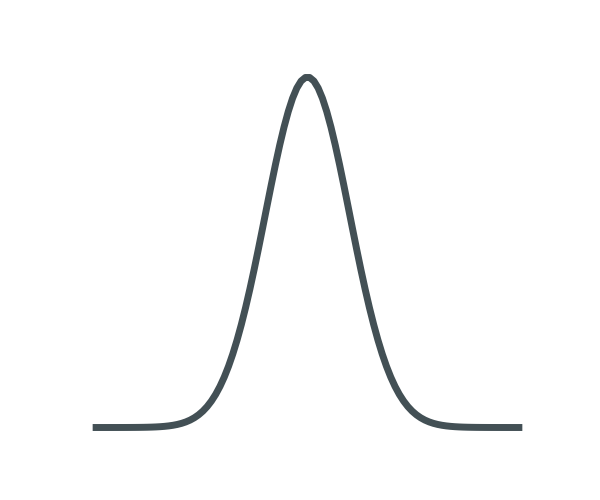

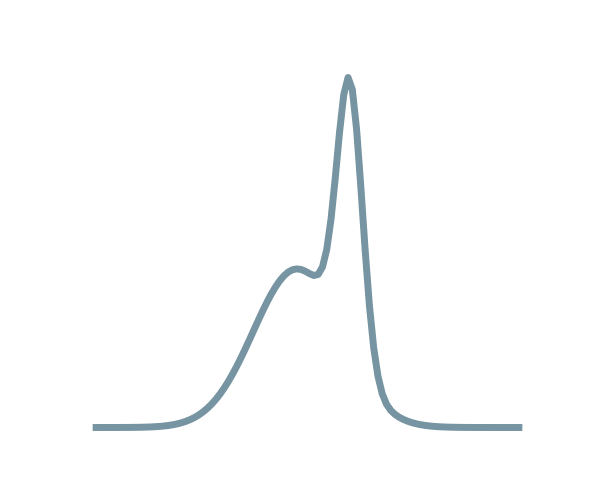

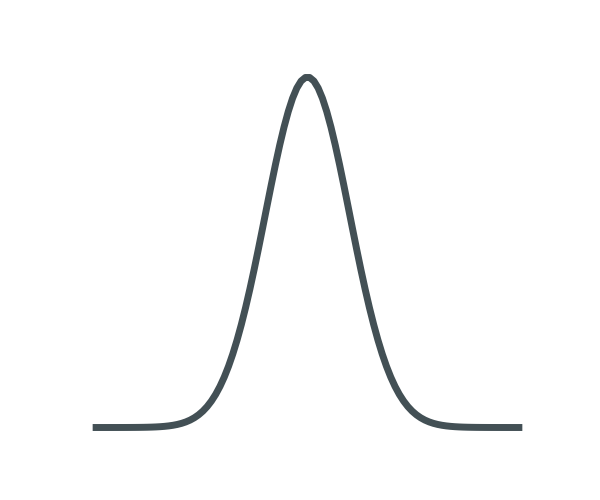

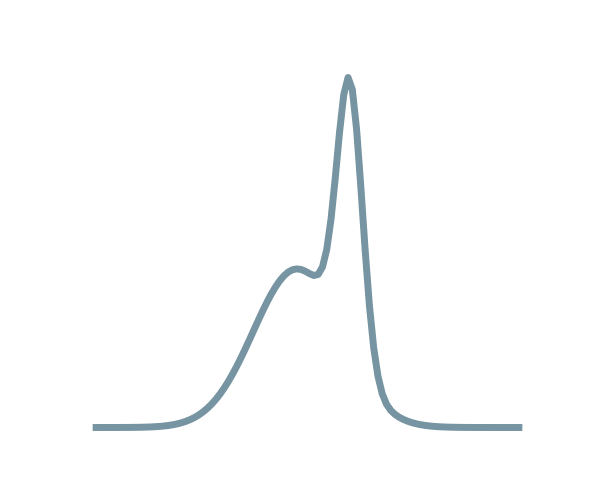

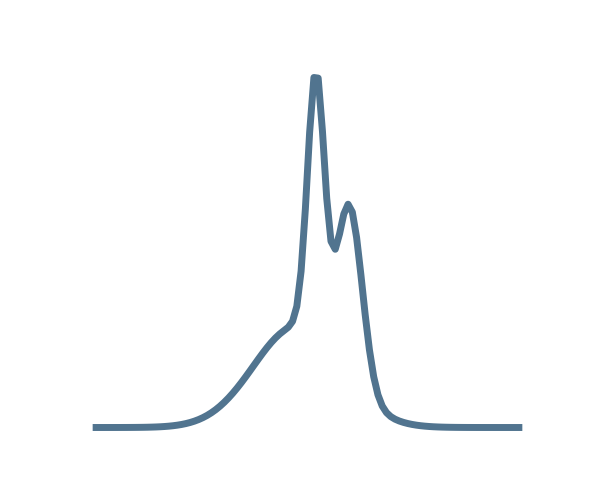

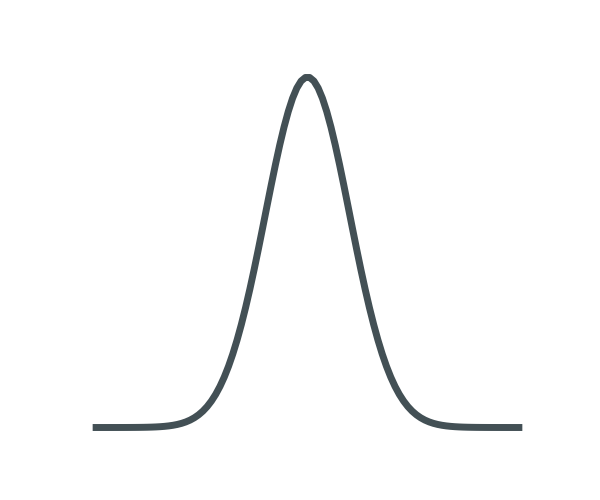

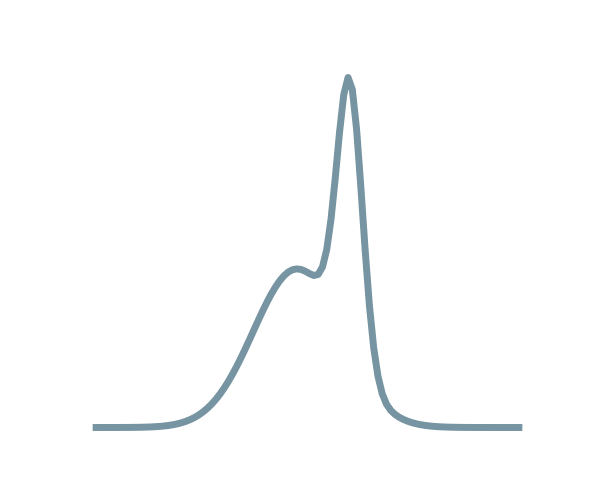

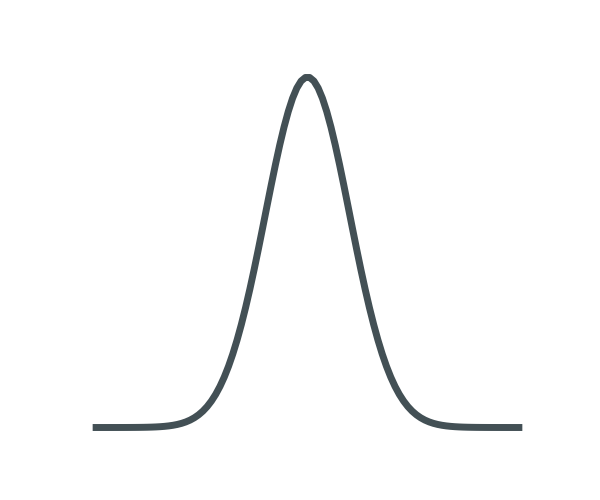

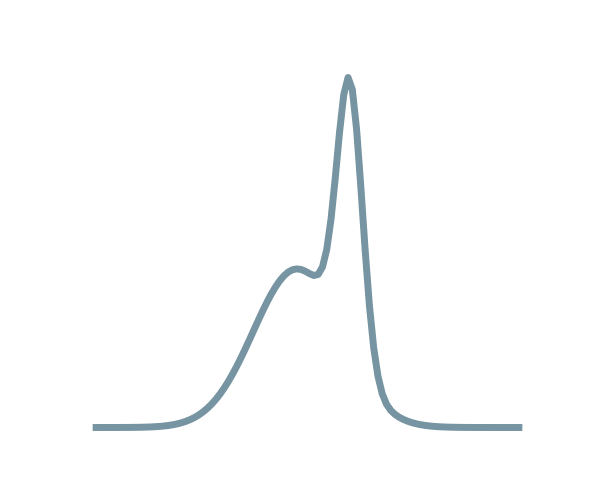

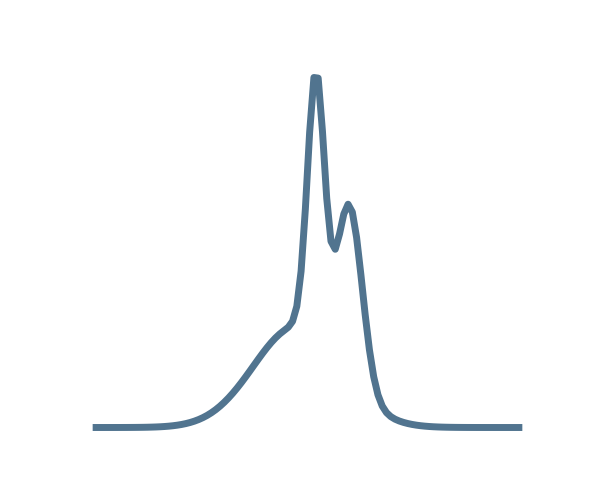

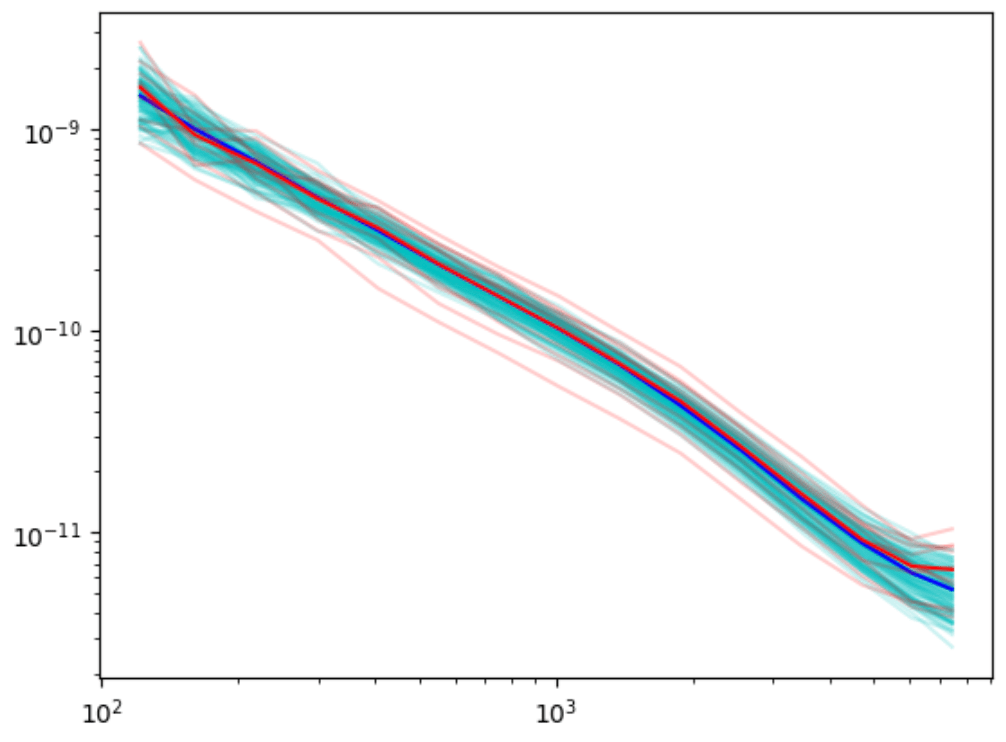

NeurIPS Challenge: Weak Lensing Uncertainty

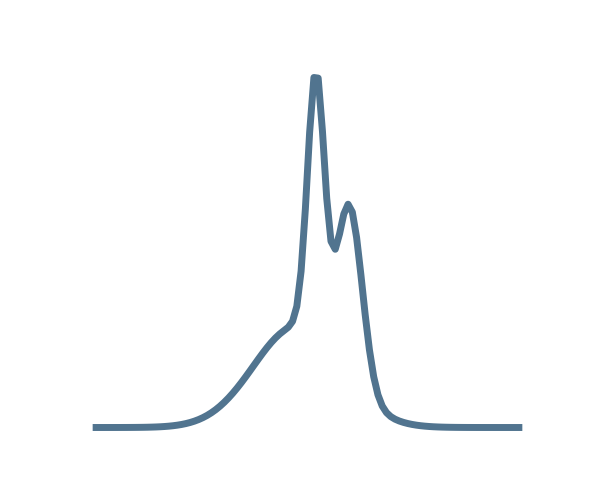

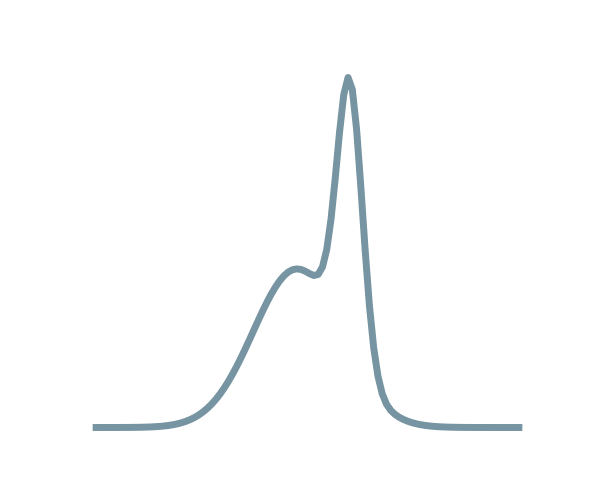

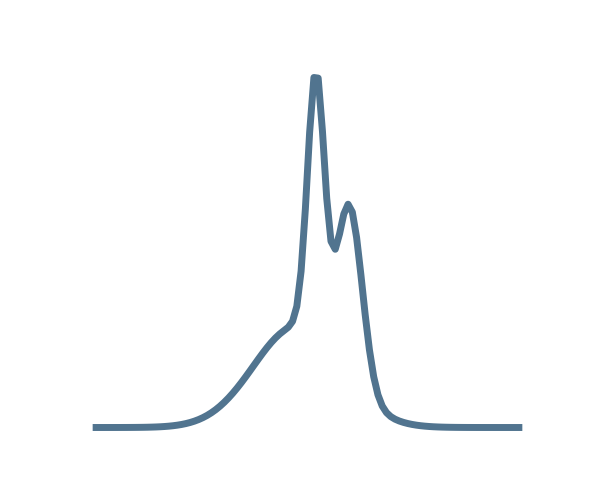

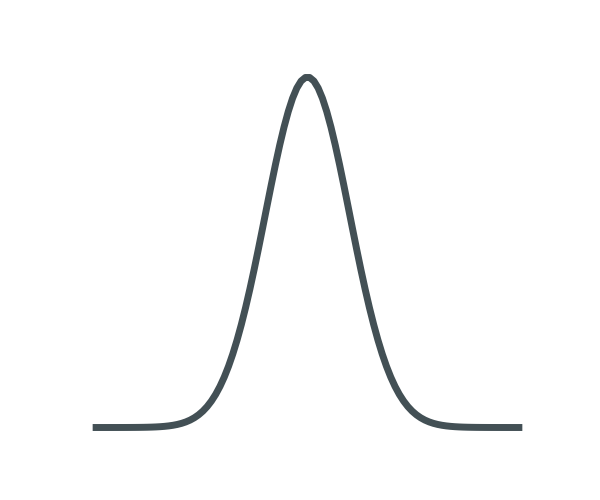

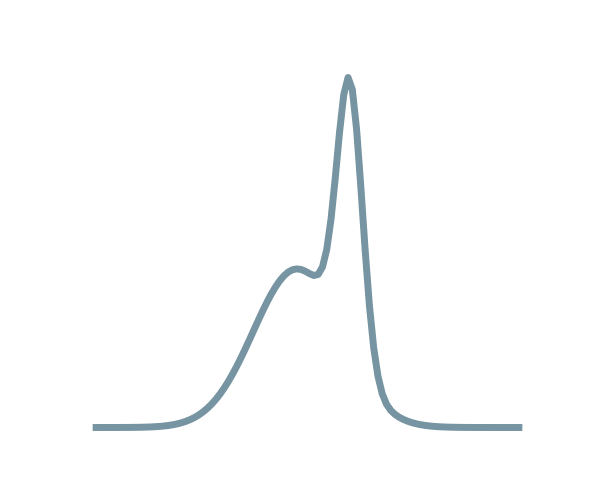

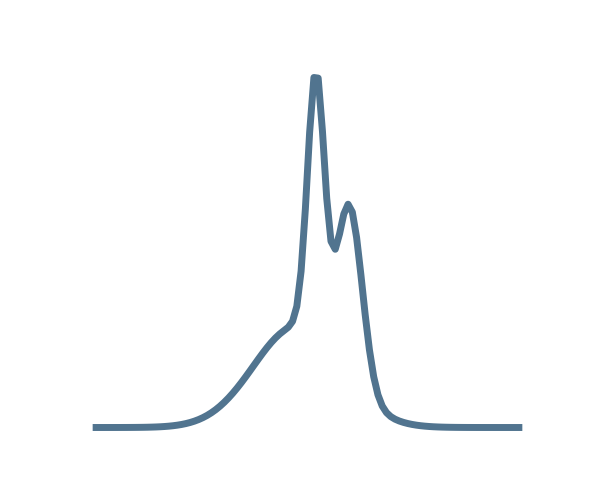

Power spectrum

LogNormal

Emulated

Challenge simulation

VS

NeurIPS Challenge: Weak Lensing Uncertainty

🥳

LogNormal

Emulated

Challenge simulation

VS