Sara Bru Garcia

Behavioral Scientist

Behavioral Science + AI

14 min read / AI / Behavioral Design / Applied

Exploring how Artificial Intelligence (AI) can be combined with Behavioural Science.

Case Study Overview

Here’s what you can expect to learn:

🔤 Intro to AI and behavioural science

📈 How Lirio and Humu leverage AI and behavioural science

🔮 Exploring Open AI tools and Generative AI

⚖️ Why it’s crucial to consider the ethical implications of these tools

In very simple terms, AI refers to machines that incorporate “human-like” intelligence.

Beep Bop Beep

Affirmative.

Jokes. Our language models are actually quite advanced.

They can perform, and sometimes extend, capabilities related to human intelligence, such as vision, speech recognition or language.

Affirmative.

Except unlike humans, we learn more and we do it much faster.

AI often operates through machine learning.

Machine learning takes heaps of data and feeds them to algorithms so that they can learn to do something.

Much like us, these machine learning systems have the capacity to learn from past experience.

Affirmative.

Except unlike humans, we learn more and we do it much faster.

Okay, you can stop bragging now.... Let me do my thing.

Tee-hee

For example, large language models (LLMs) use machine learning algorithms to recognise, predict, and generate human-like language on the basis of very large text-based datasets.

Whether you have given much thought to AI or not, it is most certainly part of your everyday life.

From giving you personalised shopping suggestions...

to playing your favourite songs through a voice assistant...

to driving your car on autopilot...

or using predictive text to complete common sentences in your email.

Affirmative.

You're welcome.

AI is everywhere.

AI, coupled with increasingly large datasets and growing computational power, is accelerating progress in many areas.

But what does this mean for behavioural science?

AI is already a part of users’ daily lives, but it's important to make sure that the way it's designed takes into account an understanding of human behaviour.

Think about a simple example, such as an algorithm that recommends what to buy based on what others have bought.

These algorithms will make suggestions assuming that if two users buy the same item, they must have similar taste.

So if users A and B bought the same item, the prediction is that user A will be interested in whatever else user B bought, and vice versa (think of the “customers also bought” suggestions on Amazon-type shopping sites).
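To make the “same item, similar taste” assumption concrete, here is a minimal Python sketch of a co-purchase recommender. It is an illustration only, not the algorithm of any real shop: the `purchases` data, the user names and the `recommend` function are all invented for this example, and real recommender systems are considerably more sophisticated.

```python
from collections import Counter

# Hypothetical purchase histories, invented for illustration only.
purchases = {
    "user_a": {"kettle", "teapot"},
    "user_b": {"kettle", "mug", "tea"},
    "user_c": {"kettle", "mug"},
    "user_d": {"novel"},
}


def recommend(user, purchases, top_n=3):
    """Suggest items bought by users who share at least one purchase with `user`."""
    own = purchases[user]
    scores = Counter()
    for other, items in purchases.items():
        if other == user:
            continue
        overlap = len(own & items)
        if overlap == 0:
            continue  # no shared purchase, so no assumed shared taste
        for item in items - own:
            # Items from users with more shared purchases count for more.
            scores[item] += overlap
    # Sort by score (descending), then alphabetically so ties are deterministic.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:top_n]]


print(recommend("user_a", purchases))  # ['mug', 'tea']
```

Notice that the sketch knows nothing about why anyone bought anything. It only counts overlaps, which is the kind of gap that behavioural models are meant to fill.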

However, recent research [1] shows that incorporating models from behavioural science into these algorithms (making them more human-like, or anthropomorphic) results in recommendations that people are more satisfied with.

1. Regular Machine Learning (ML) Algorithm Recommendation

- Recommends products based on what others also bought

- 33% self-reported satisfaction after 3 months

2. Anthropomorphic Learning Algorithm

- Combines AI and Behavioural Science models

- 73% self-reported satisfaction after 3 months

So combining AI and behavioural science can lead to more tailored recommendations, which in turn leads to more satisfied users.

Personalisation is an essential part of the behavioural science toolkit.

It can help us better understand the specific context in which the behaviour takes place, allowing us to design personalised feedback, more relevant choices, and tailored information that is more likely to prompt action.

With AI’s ability to process more data than any human ever could, combining AI with behavioural science offers the potential for even more personalised experiences.

This means that interventions could reach larger, more diverse populations more effectively, taking into account a wide range of factors.

So, what can this combination of AI and behavioural science actually look like?

I will walk you through two examples of how organisations can combine behavioural science and AI to develop precision nudging tools: one that addresses individuals’ unique barriers to target health behaviours [2], and one that encourages personalised development for employees [3].

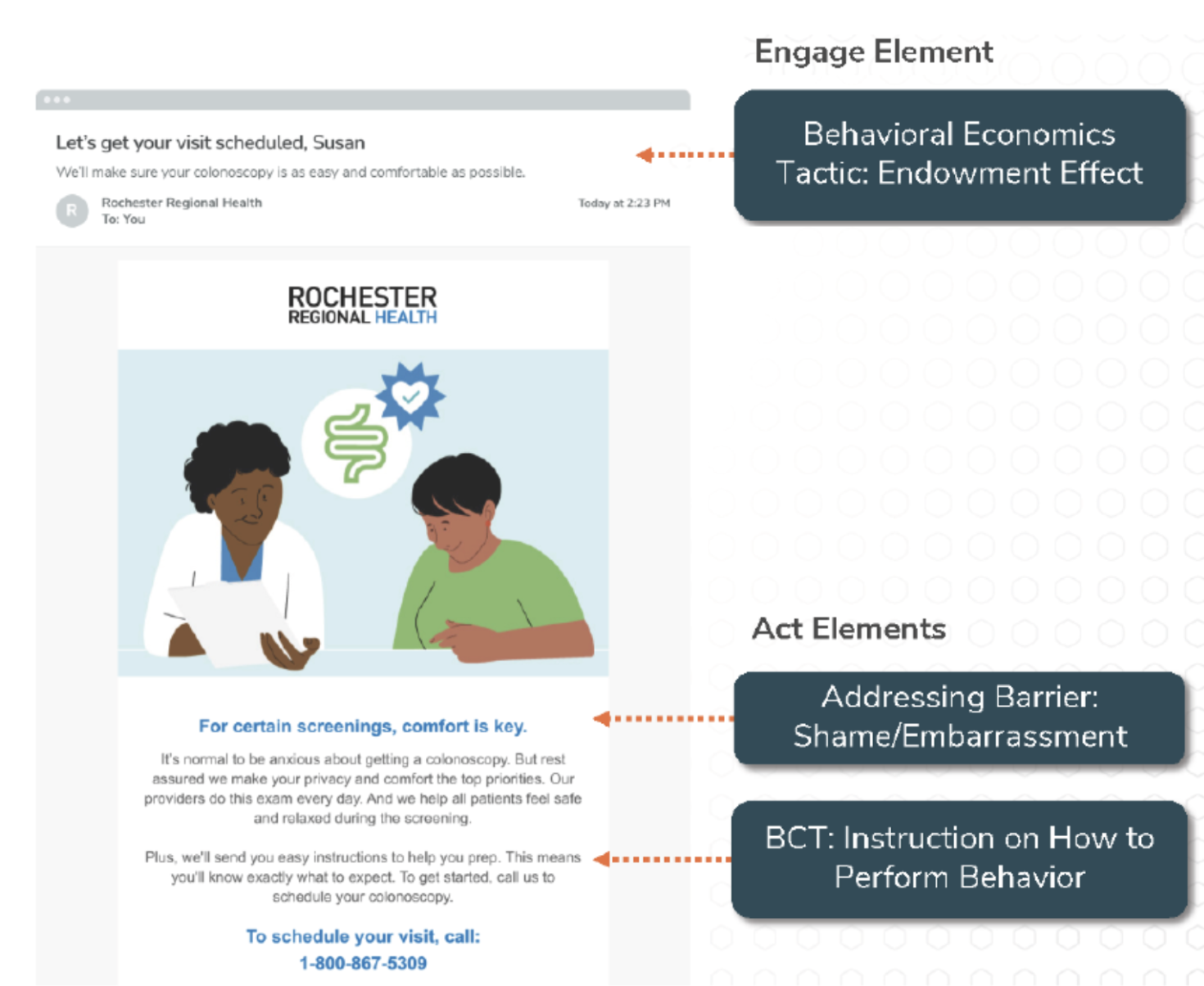

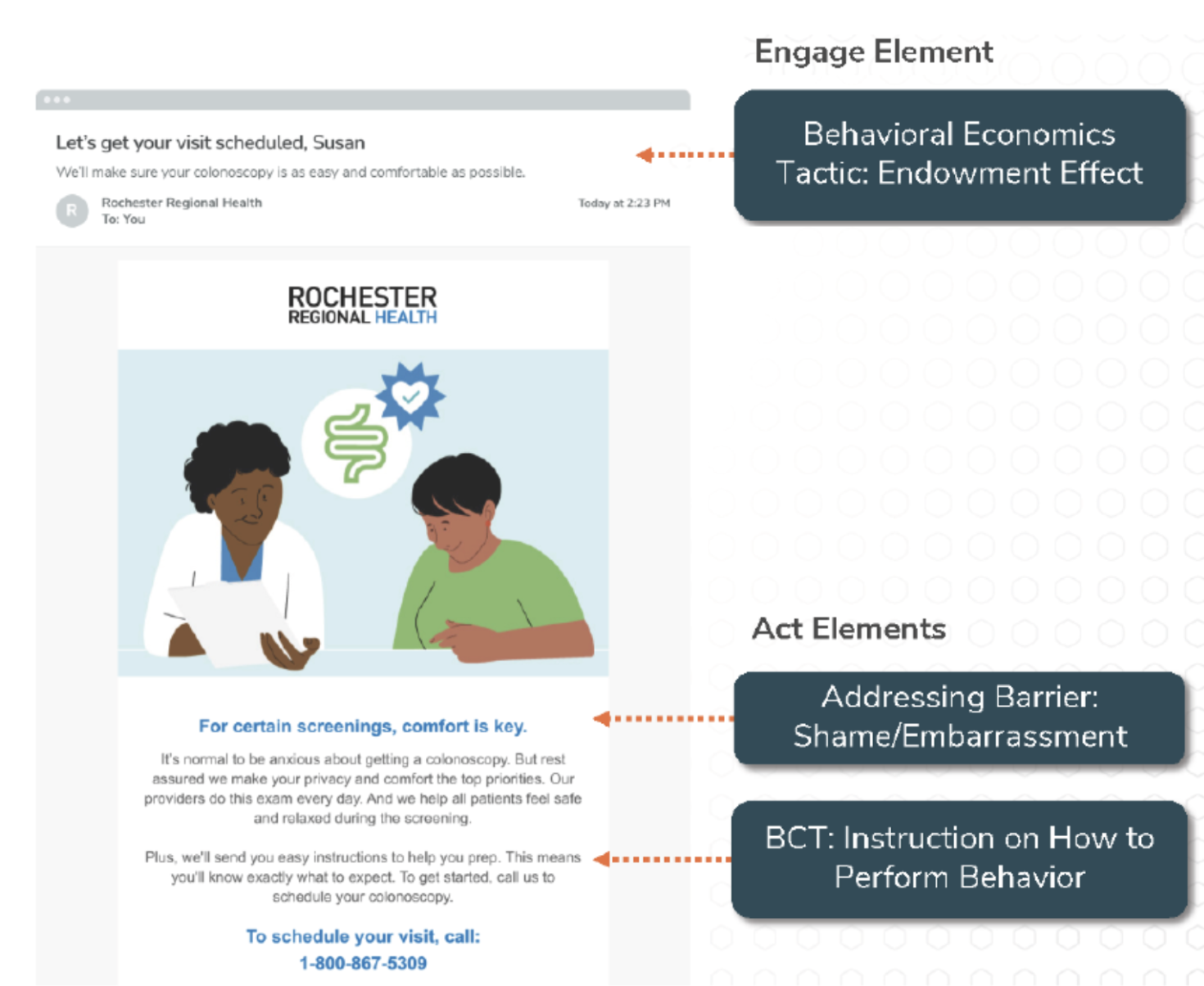

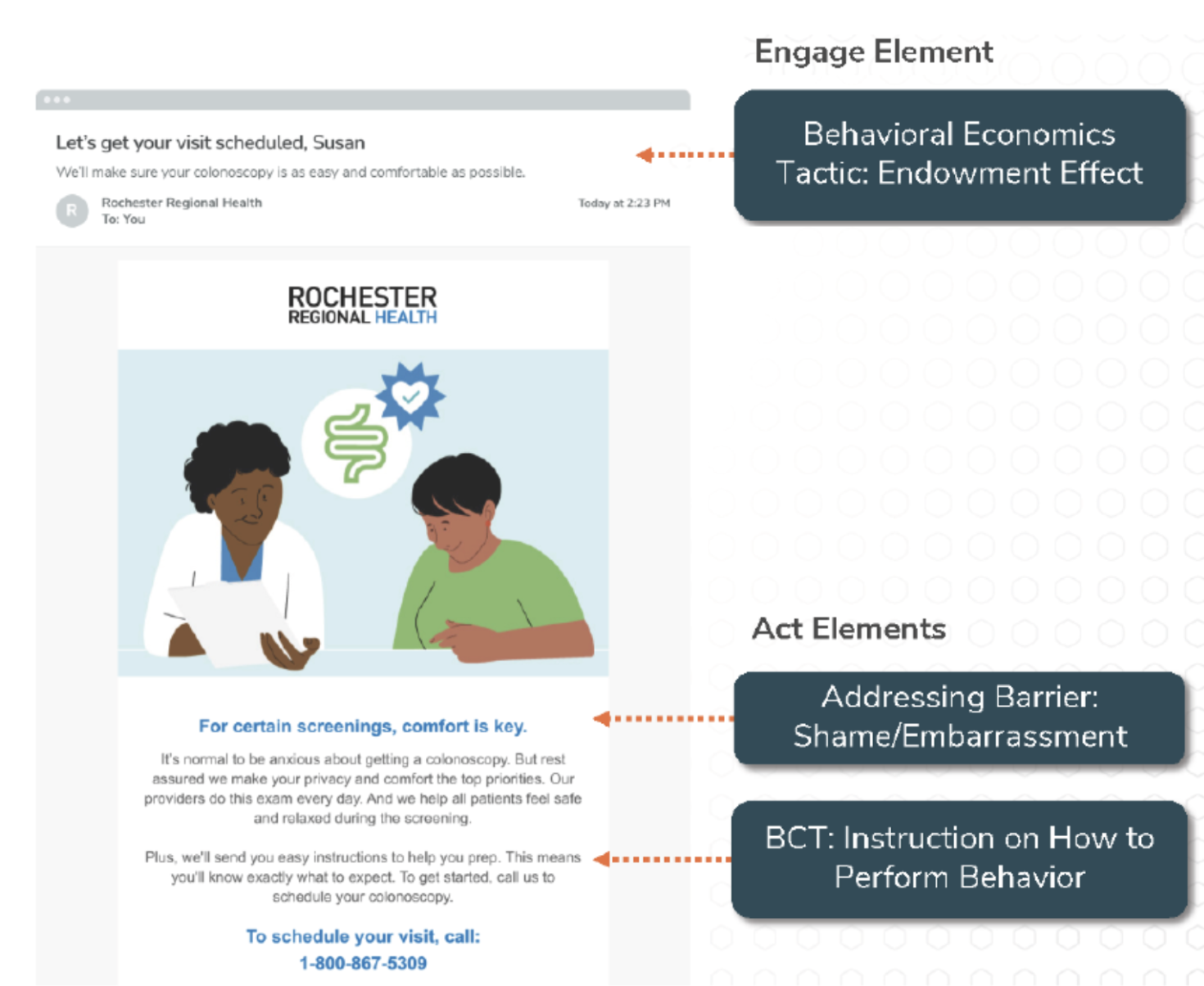

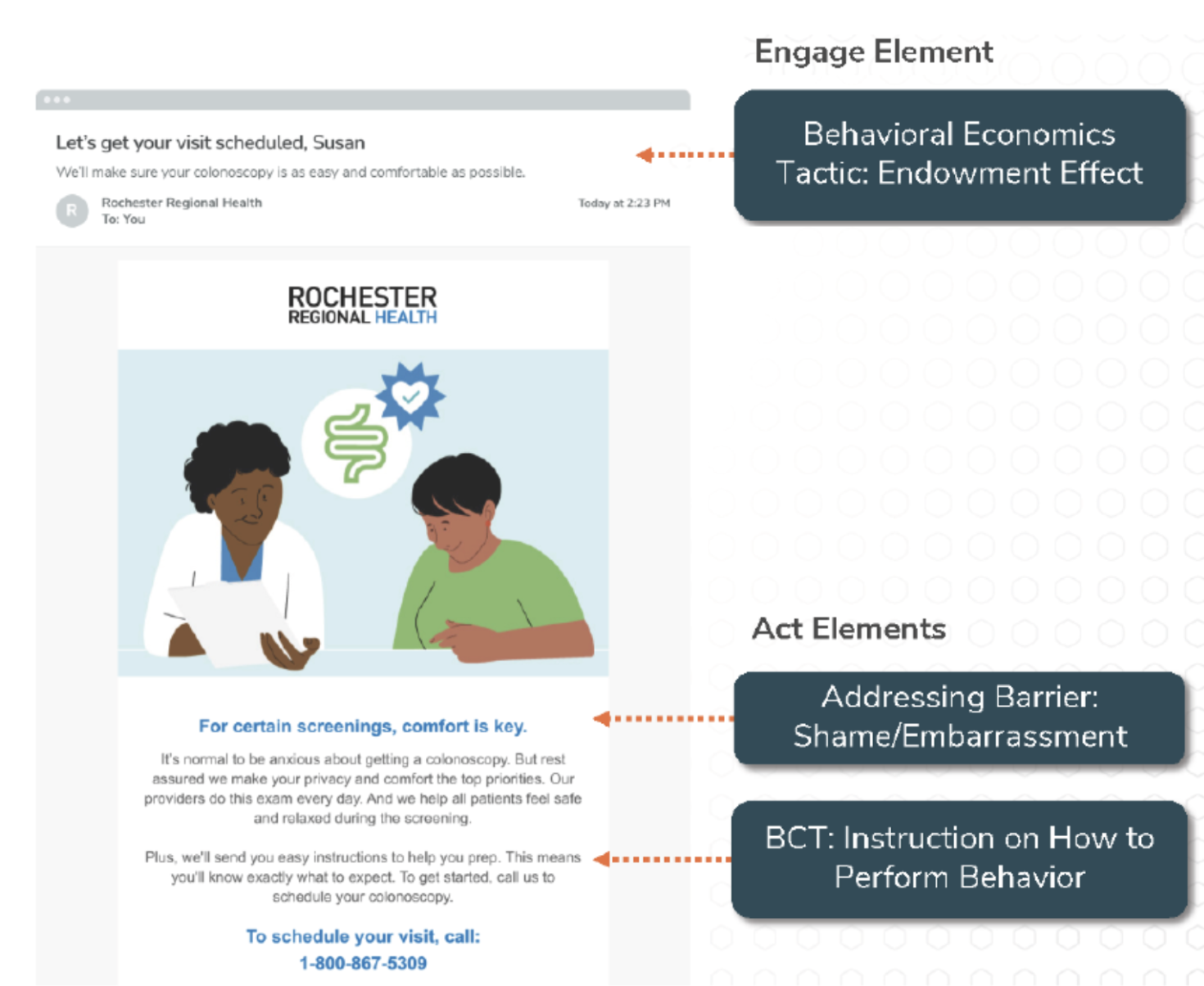

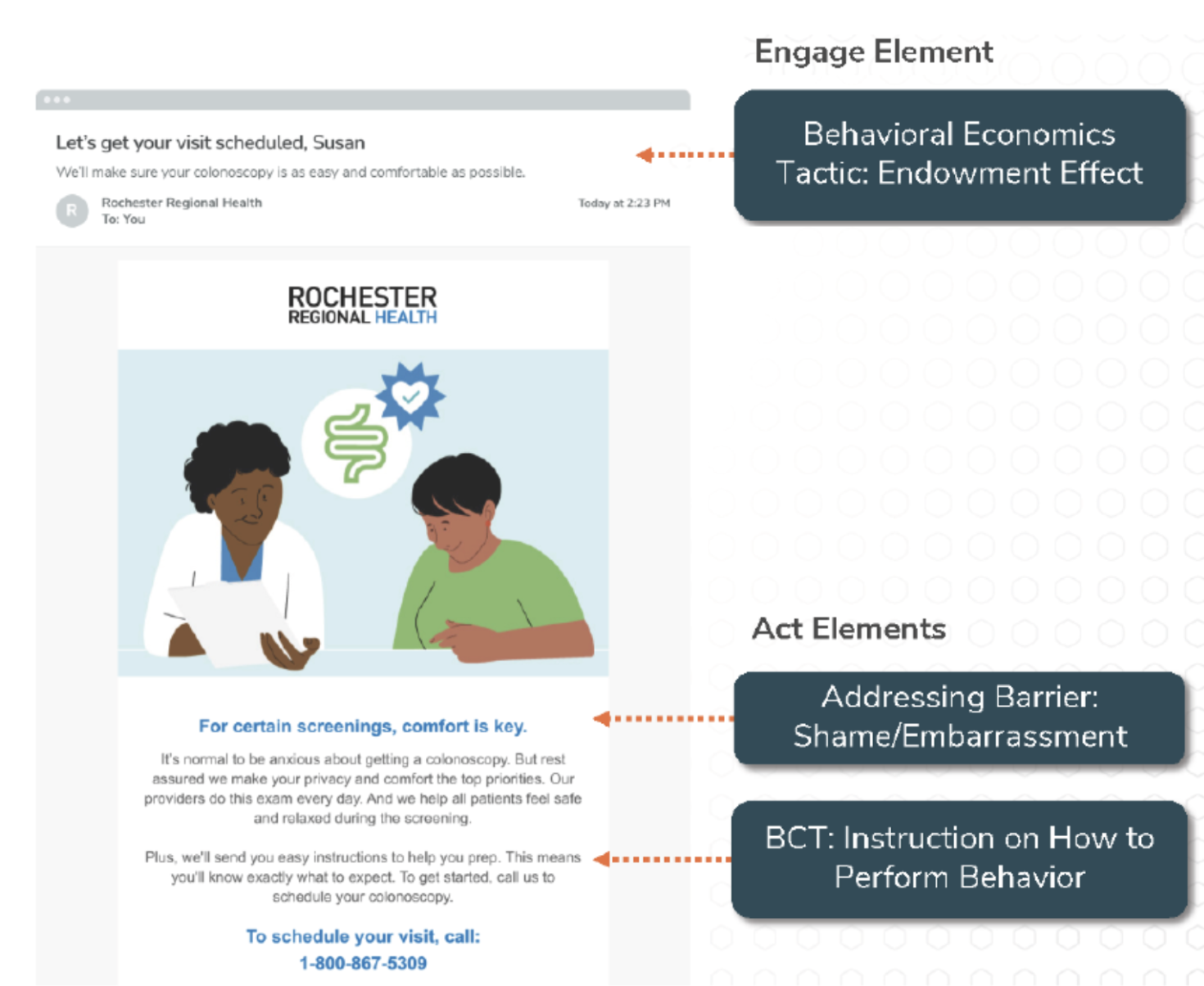

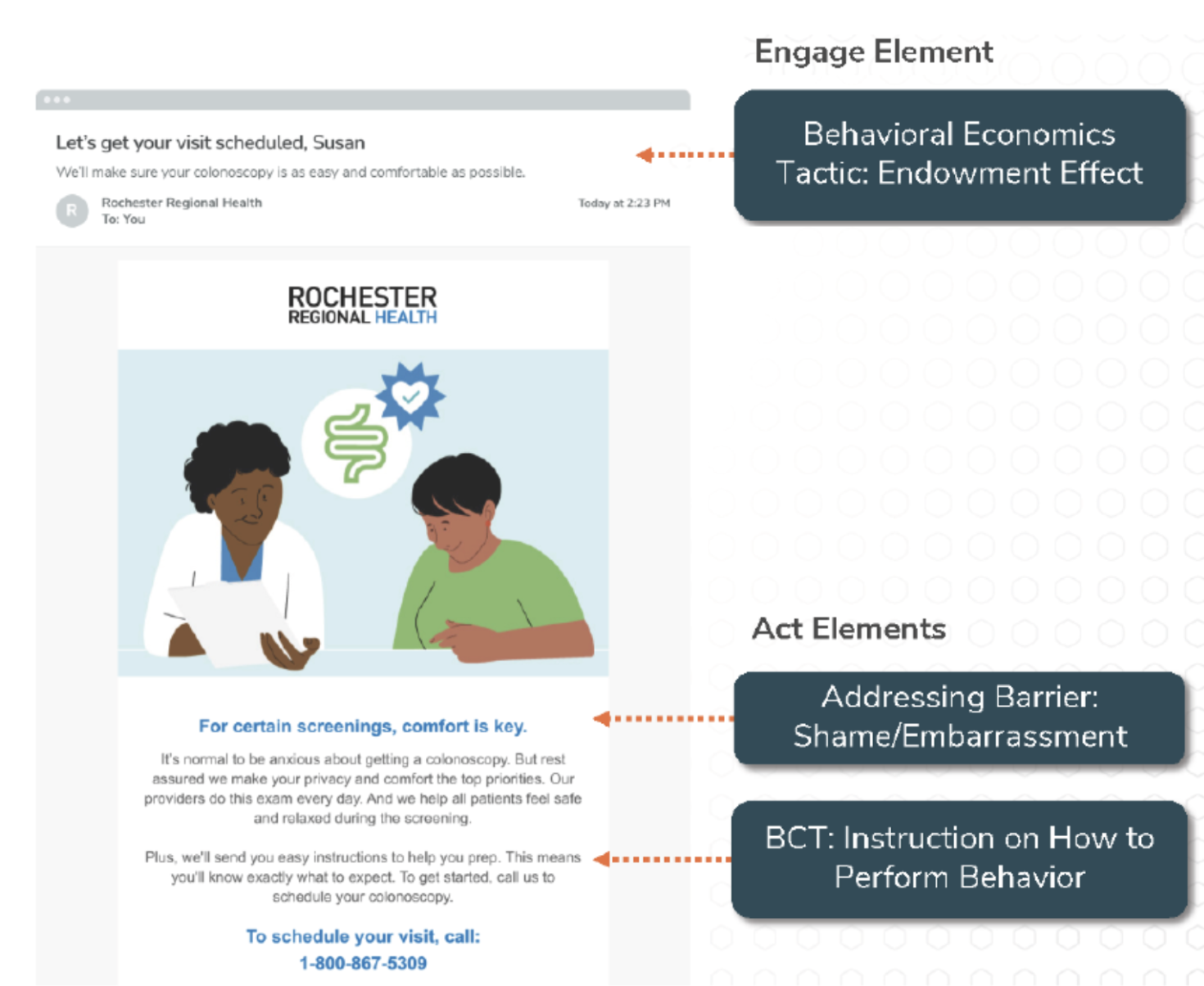

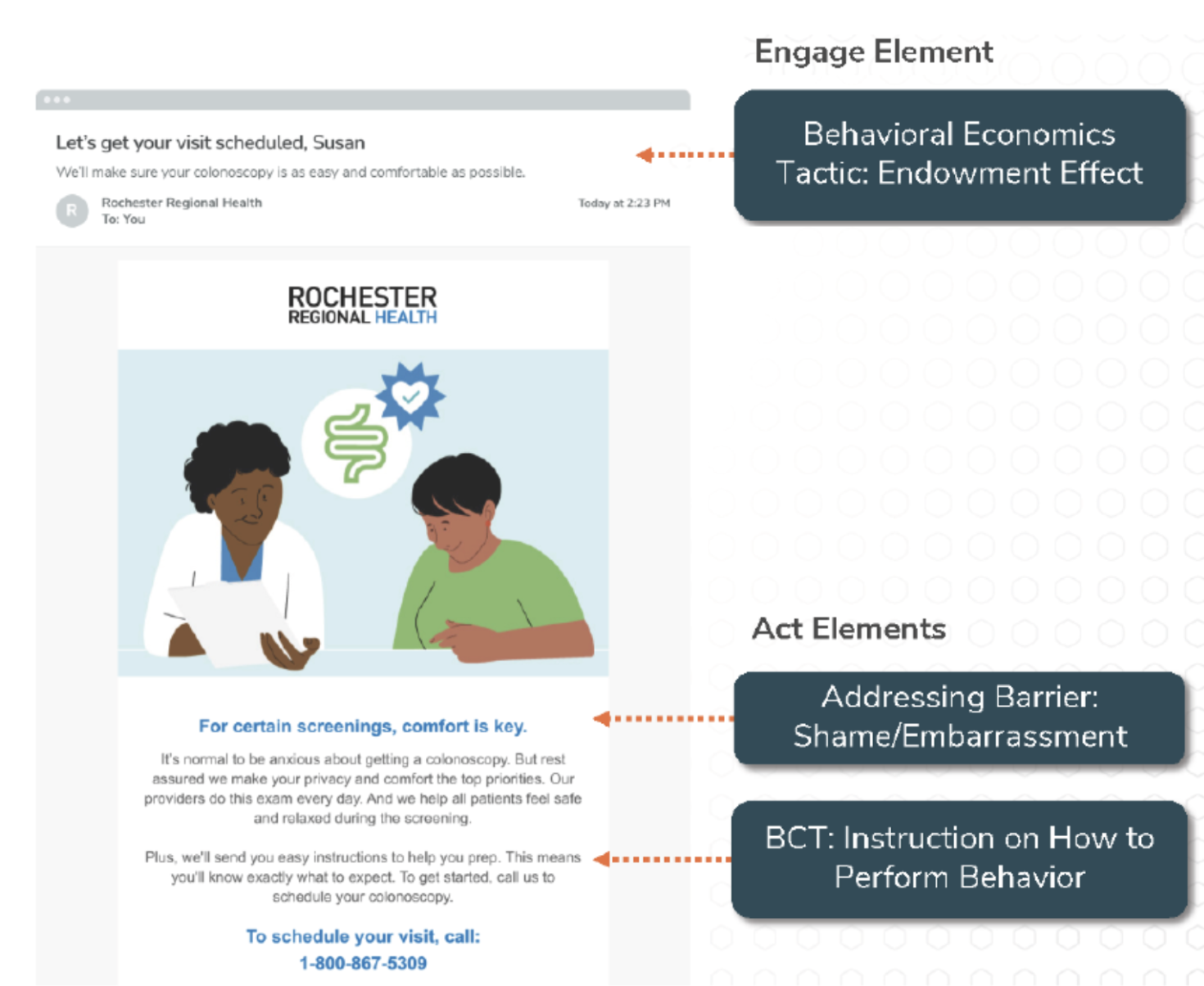

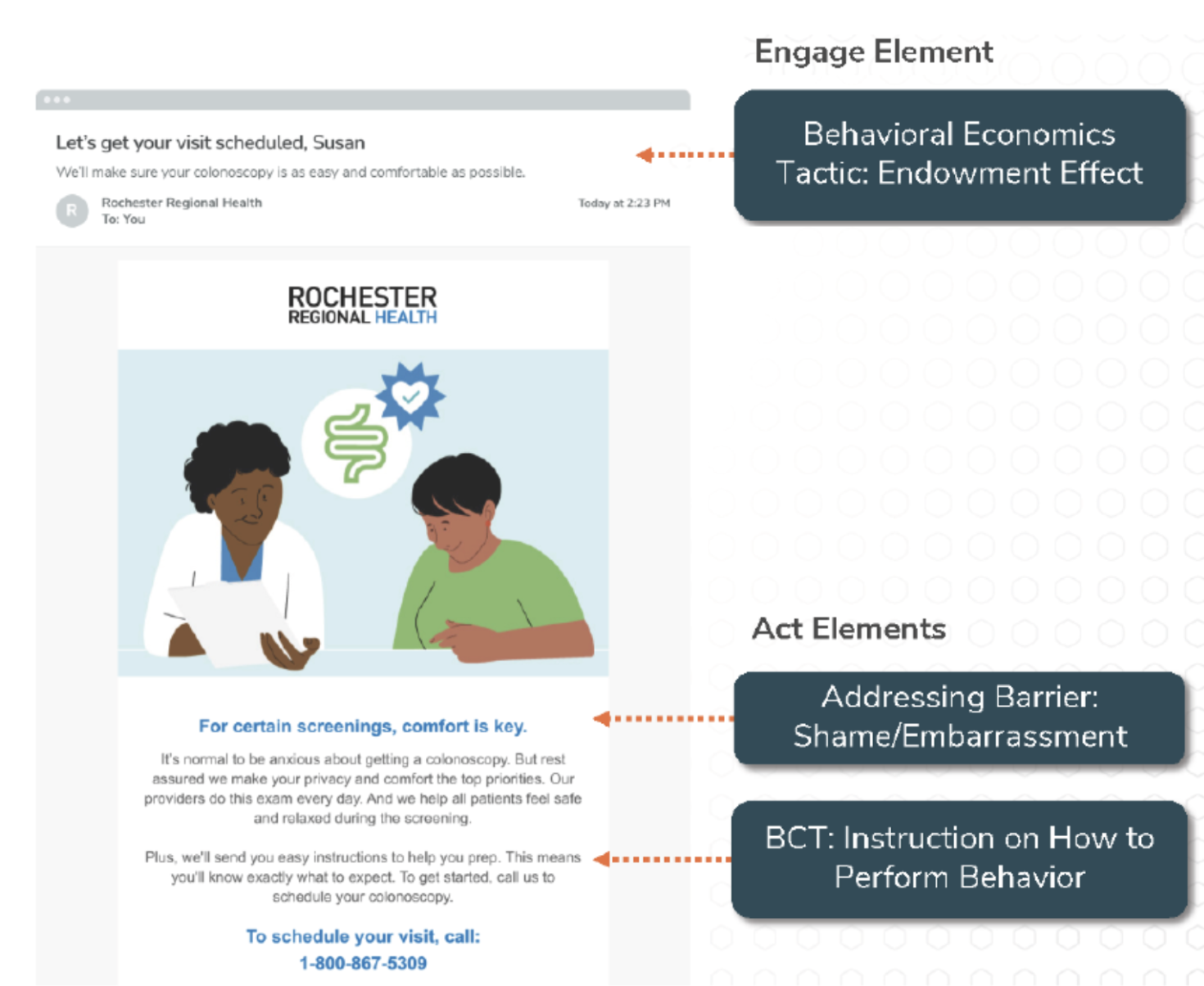

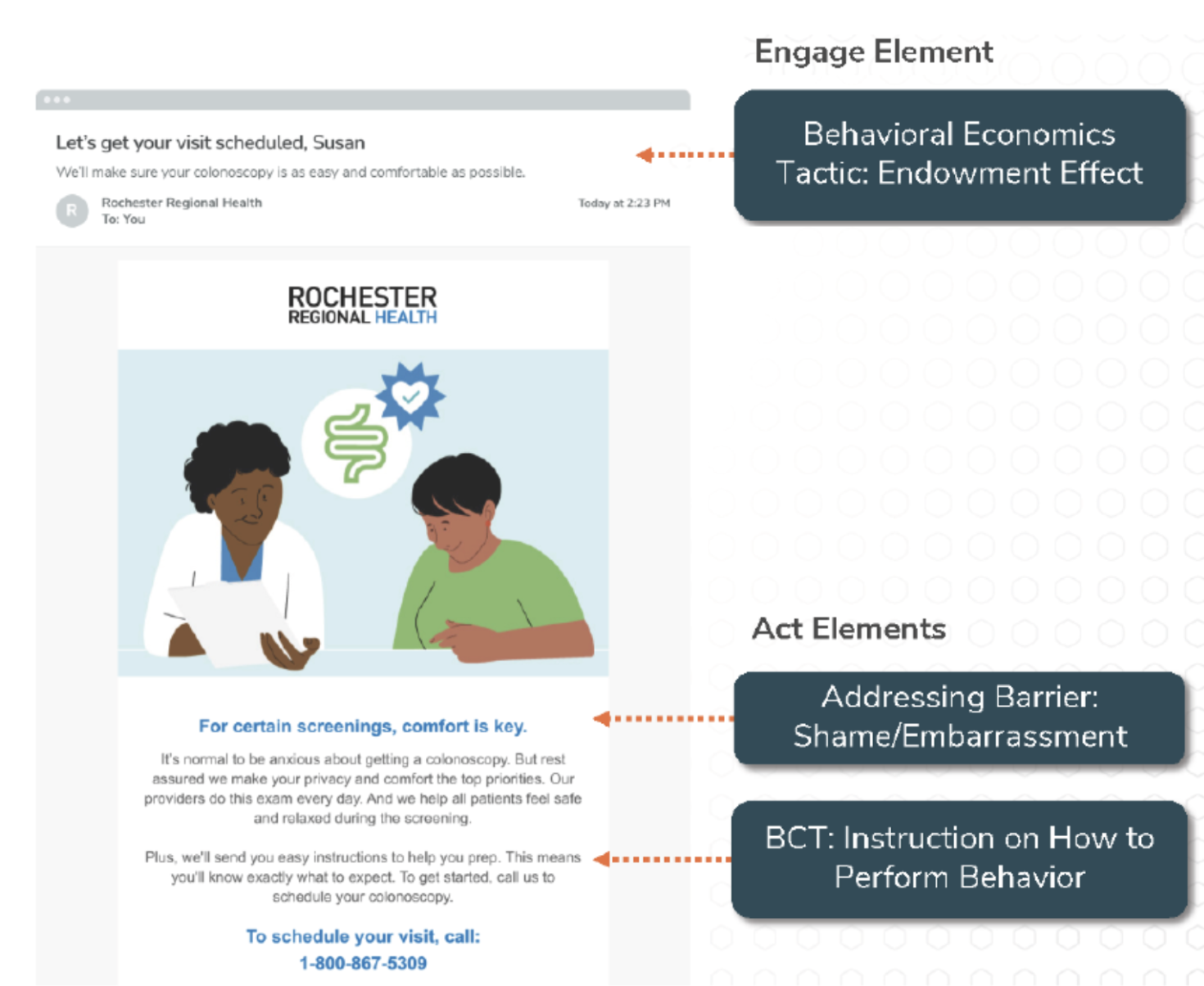

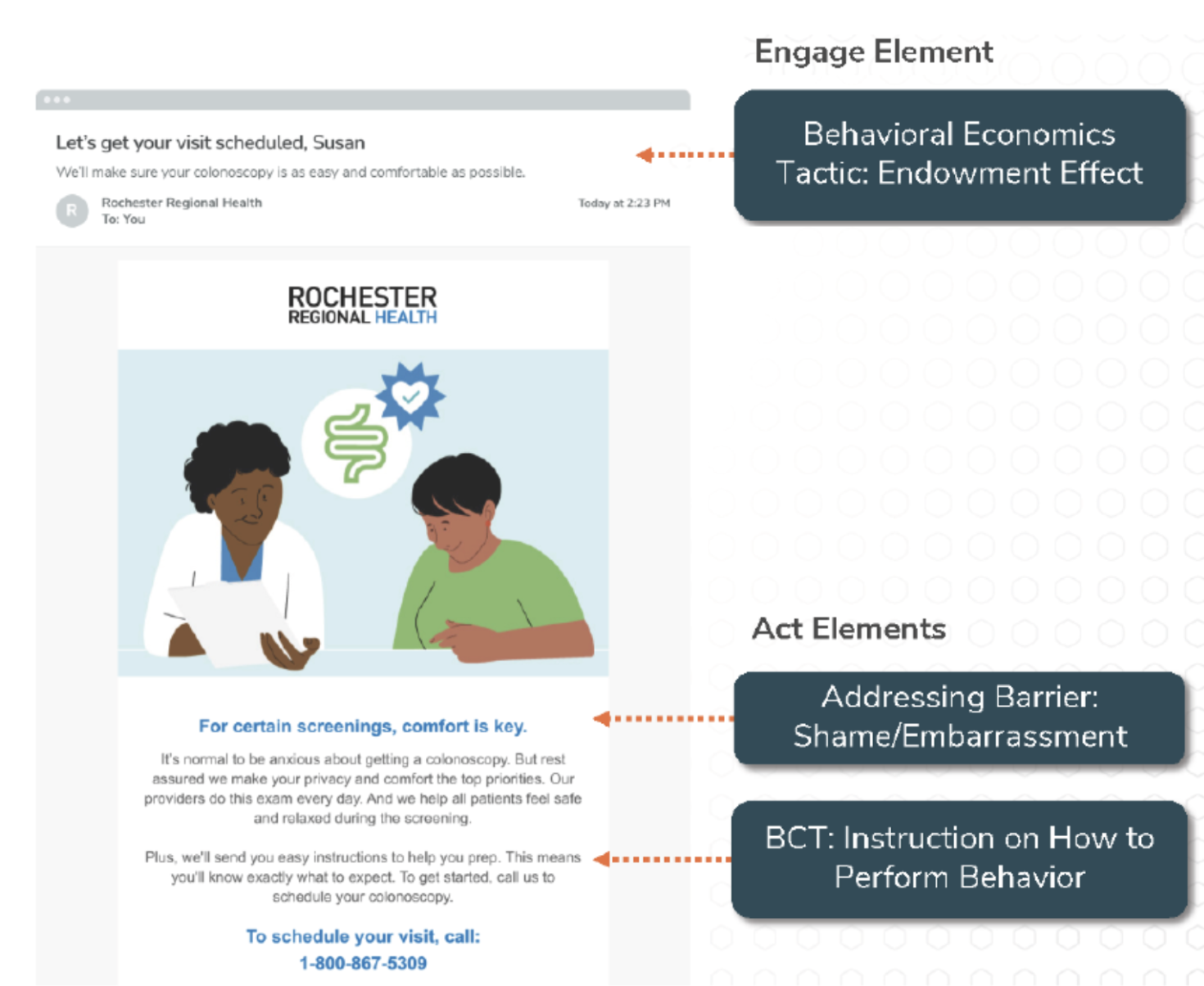

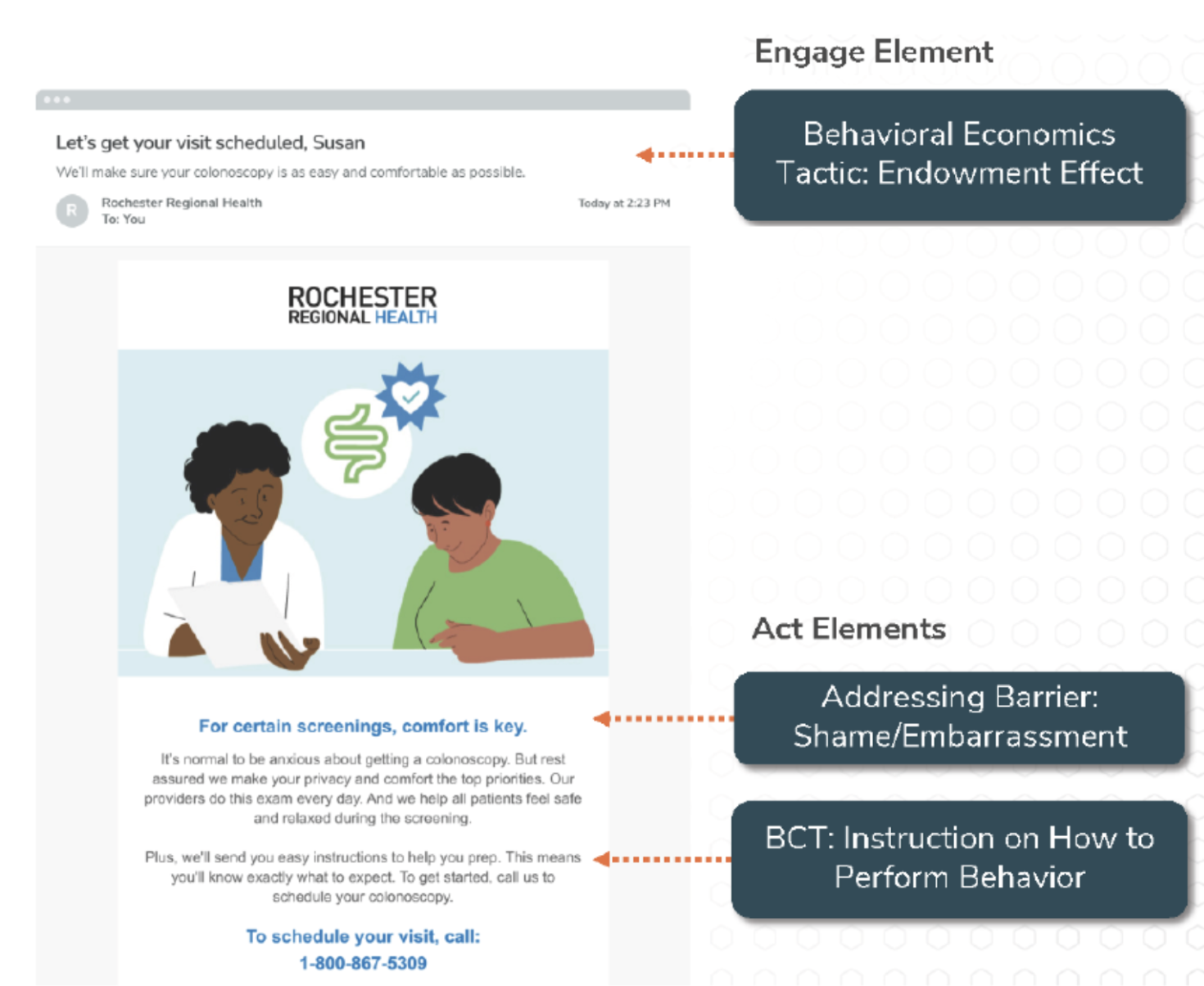

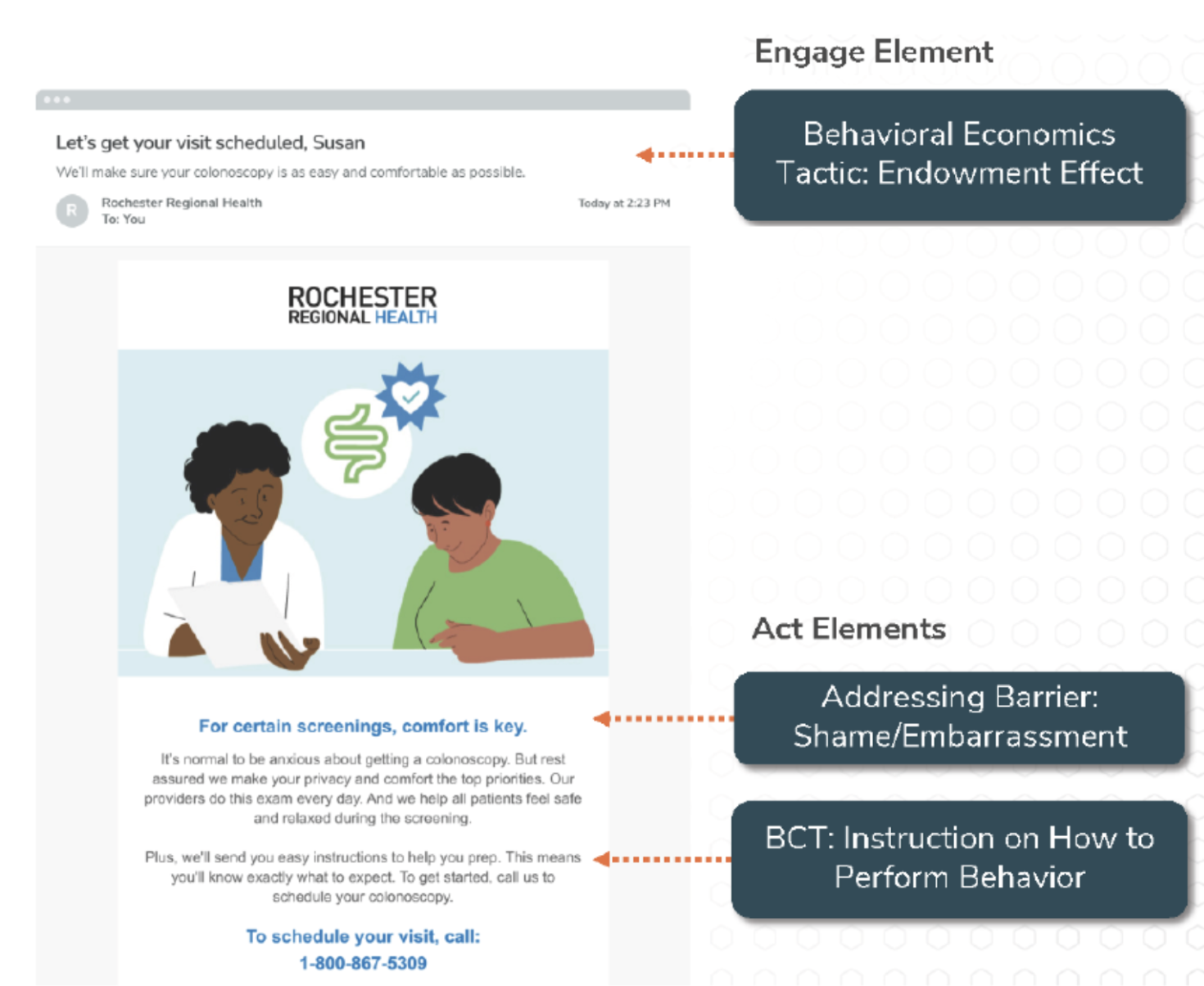

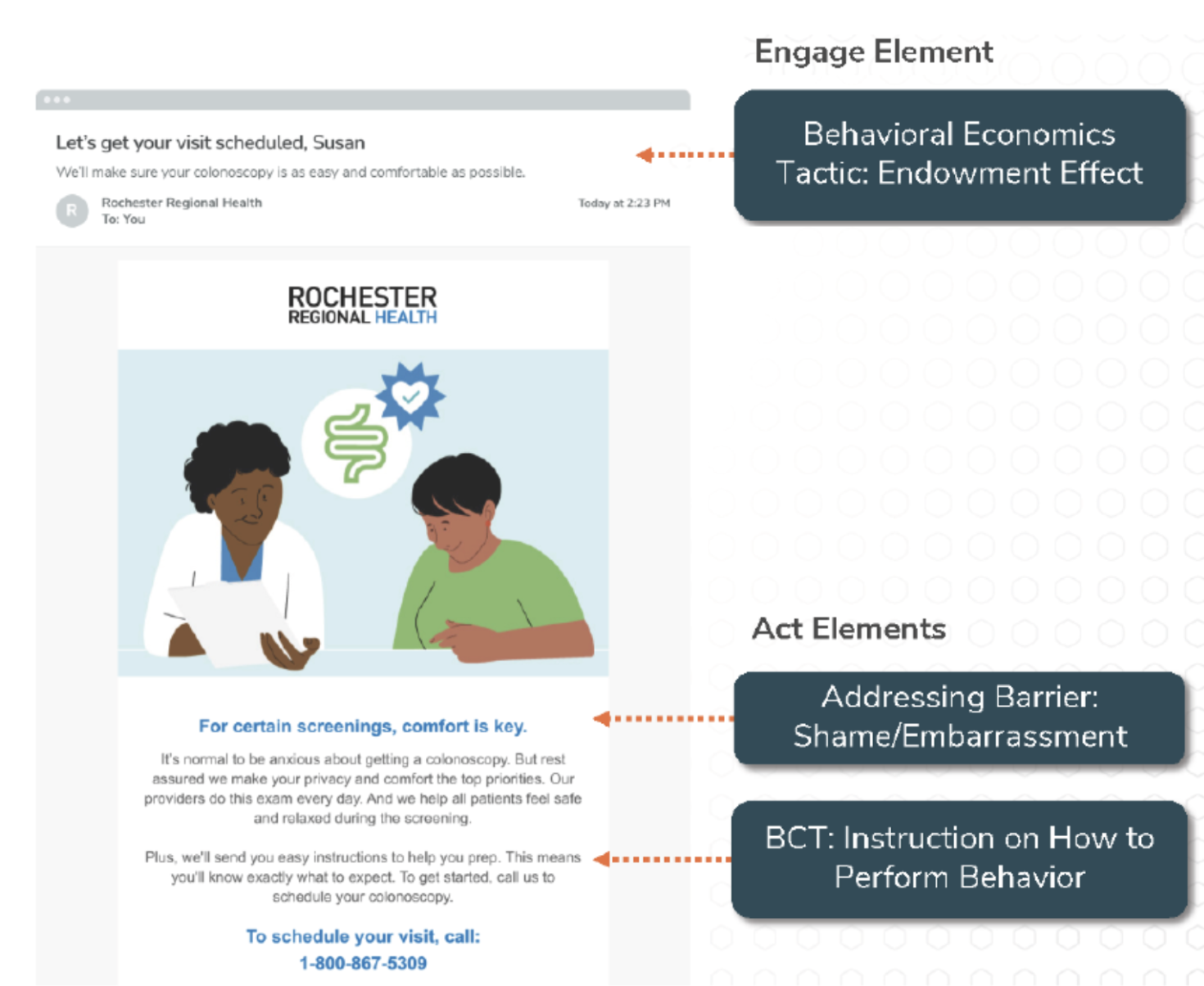

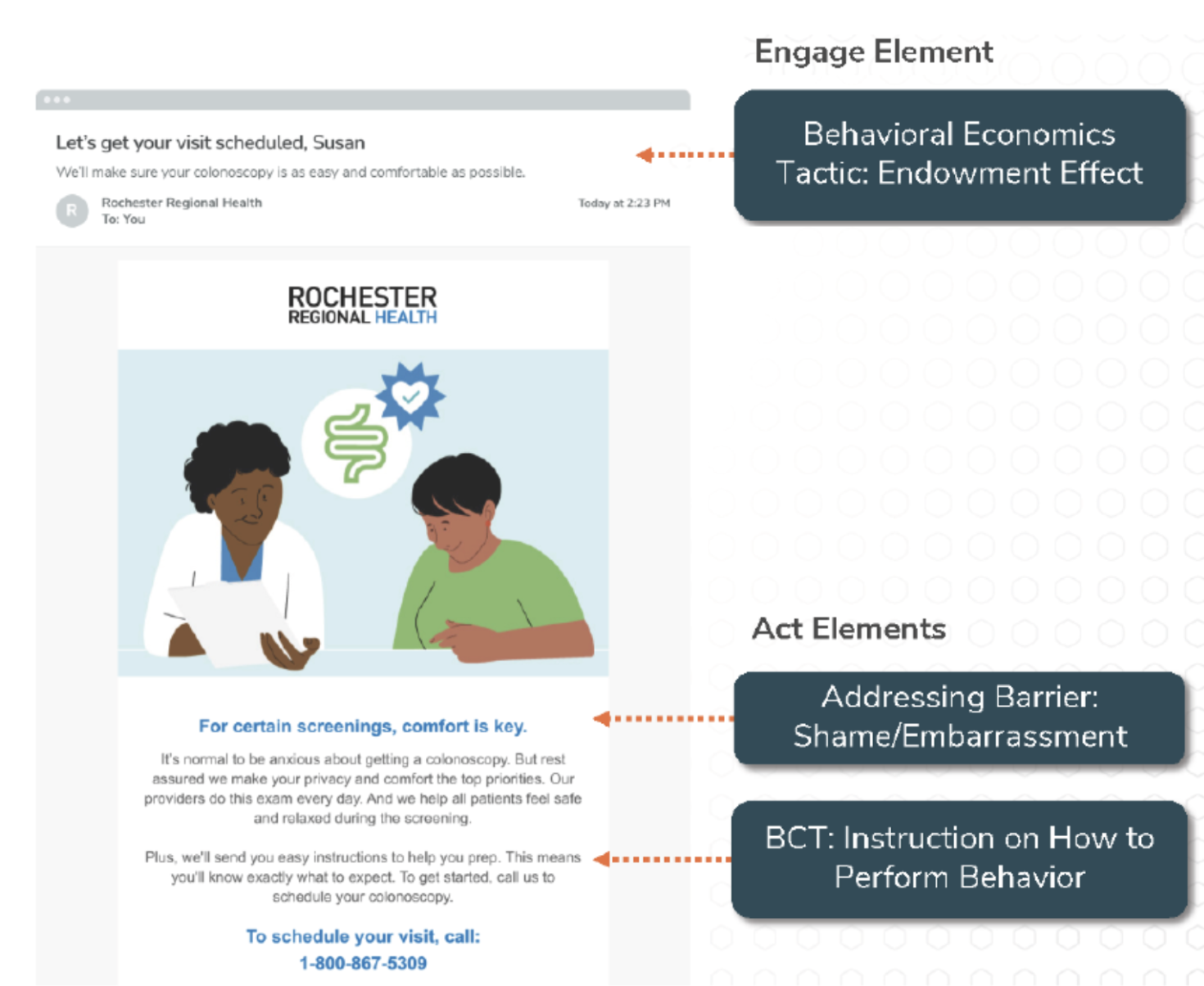

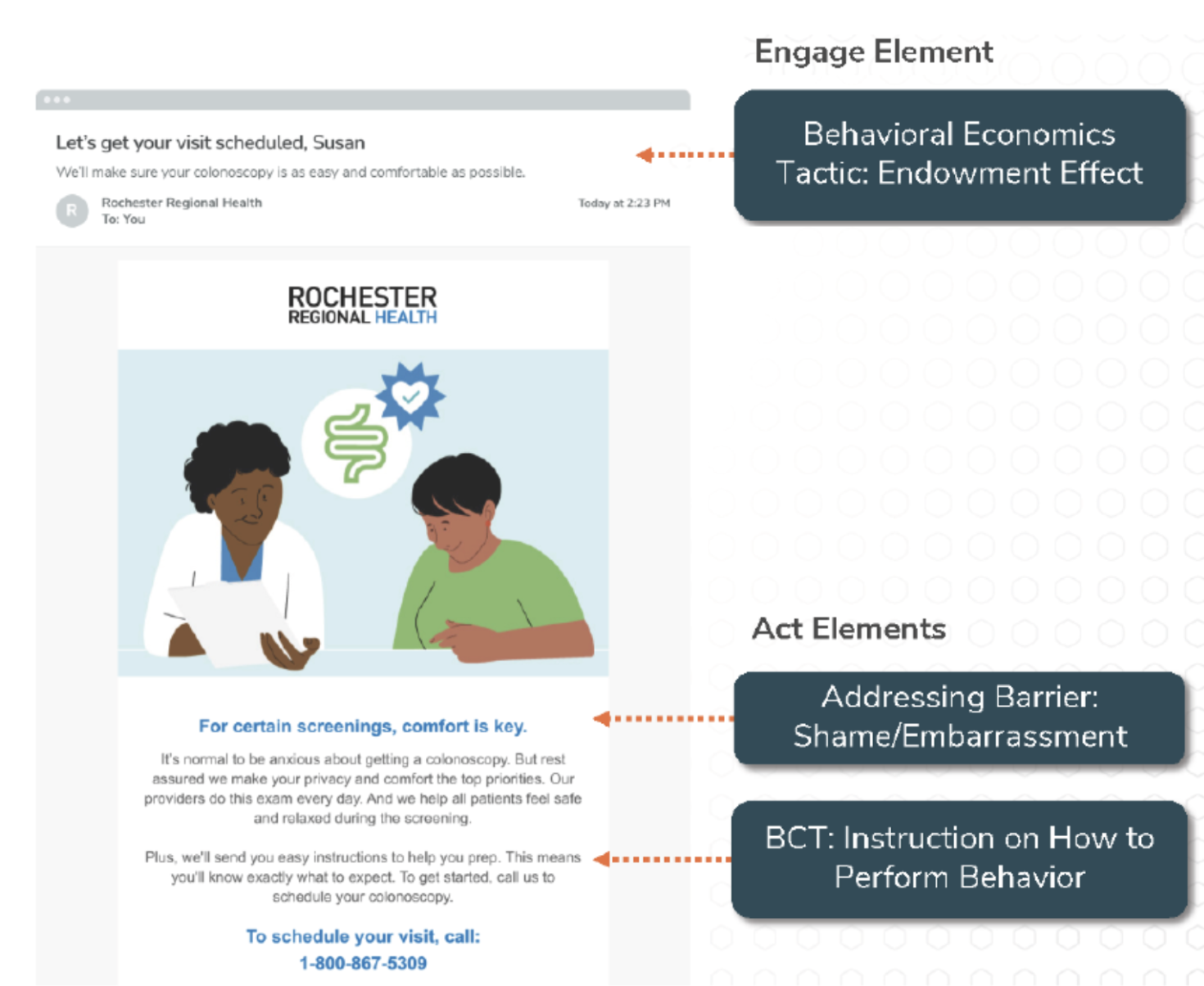

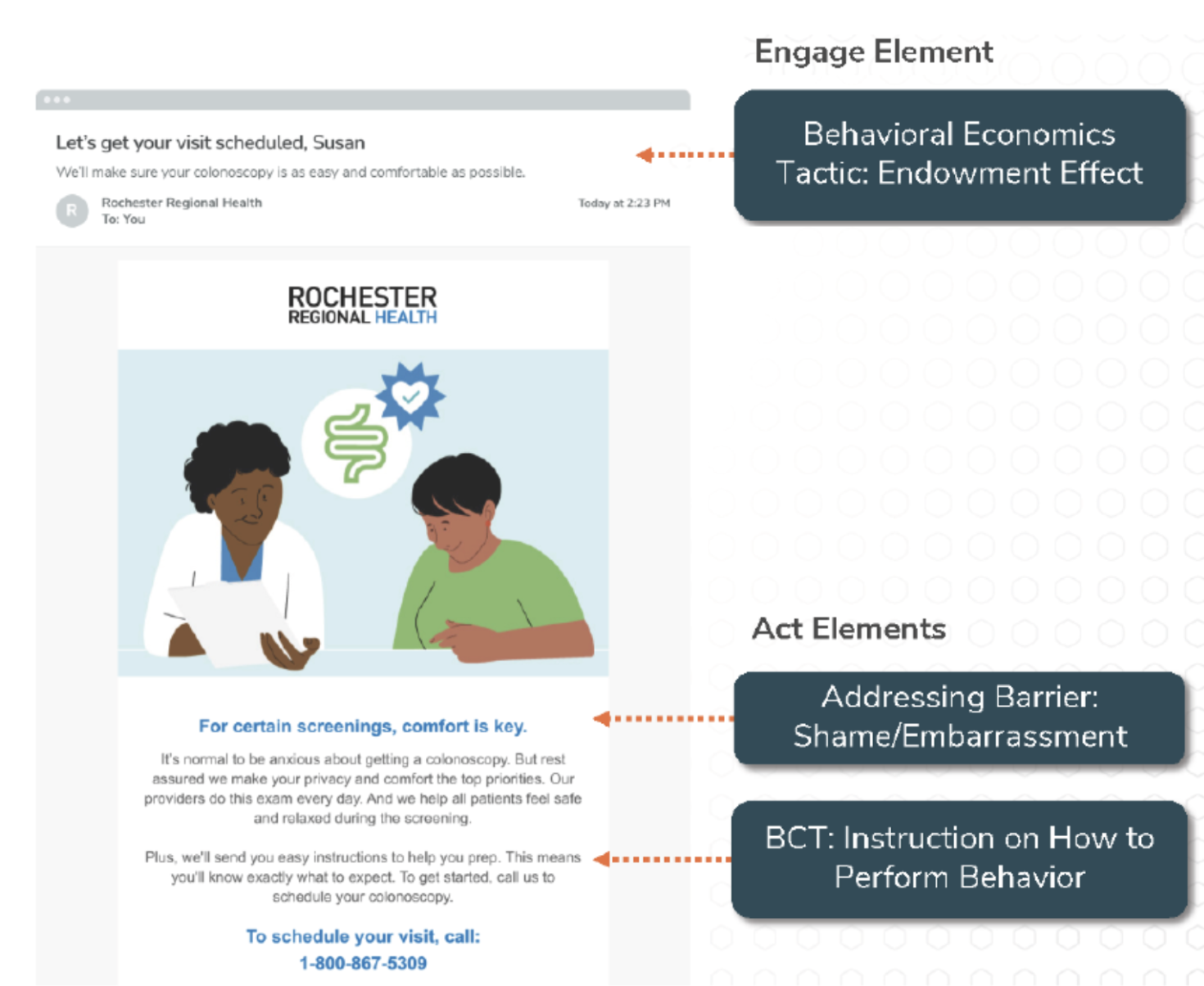

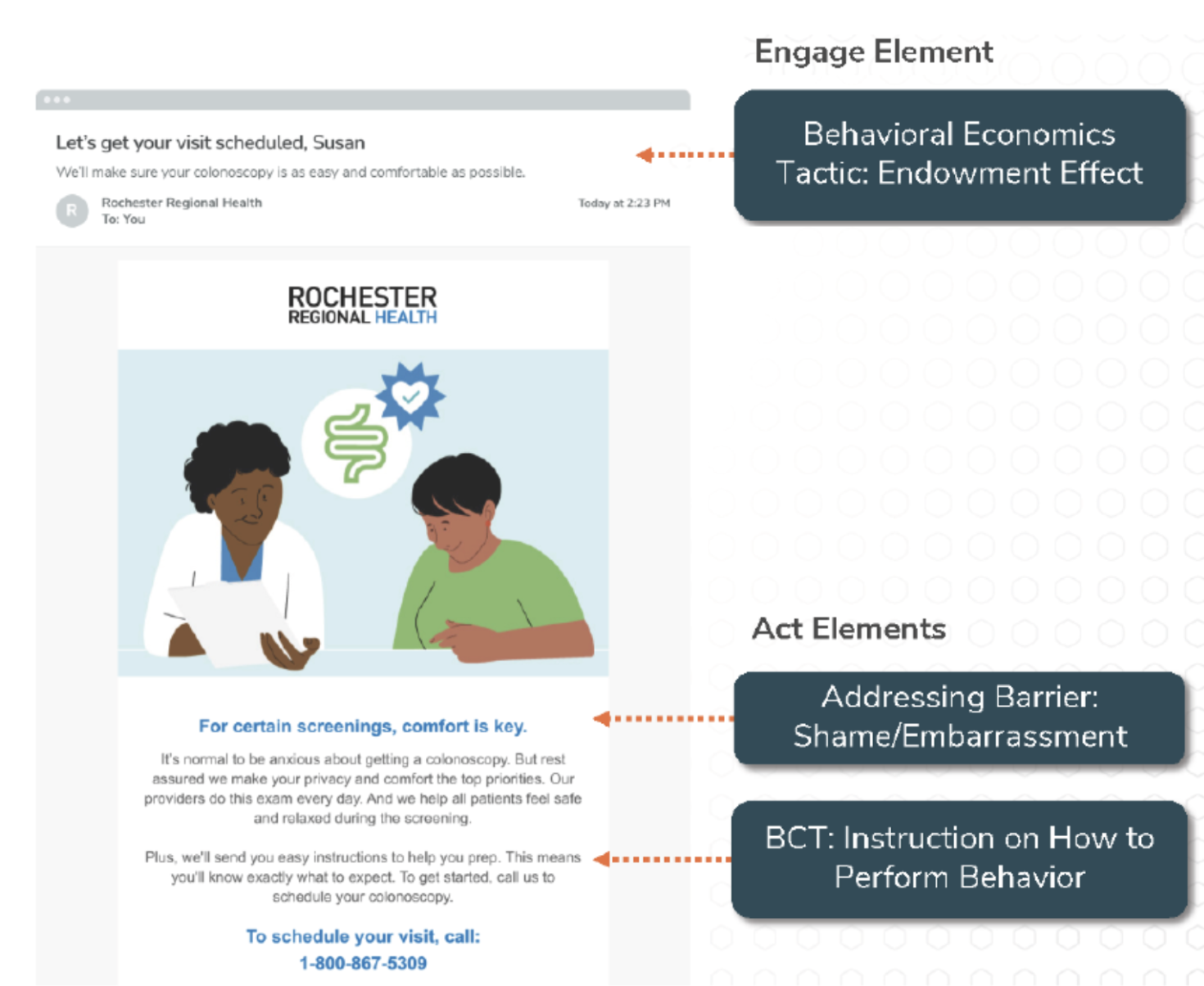

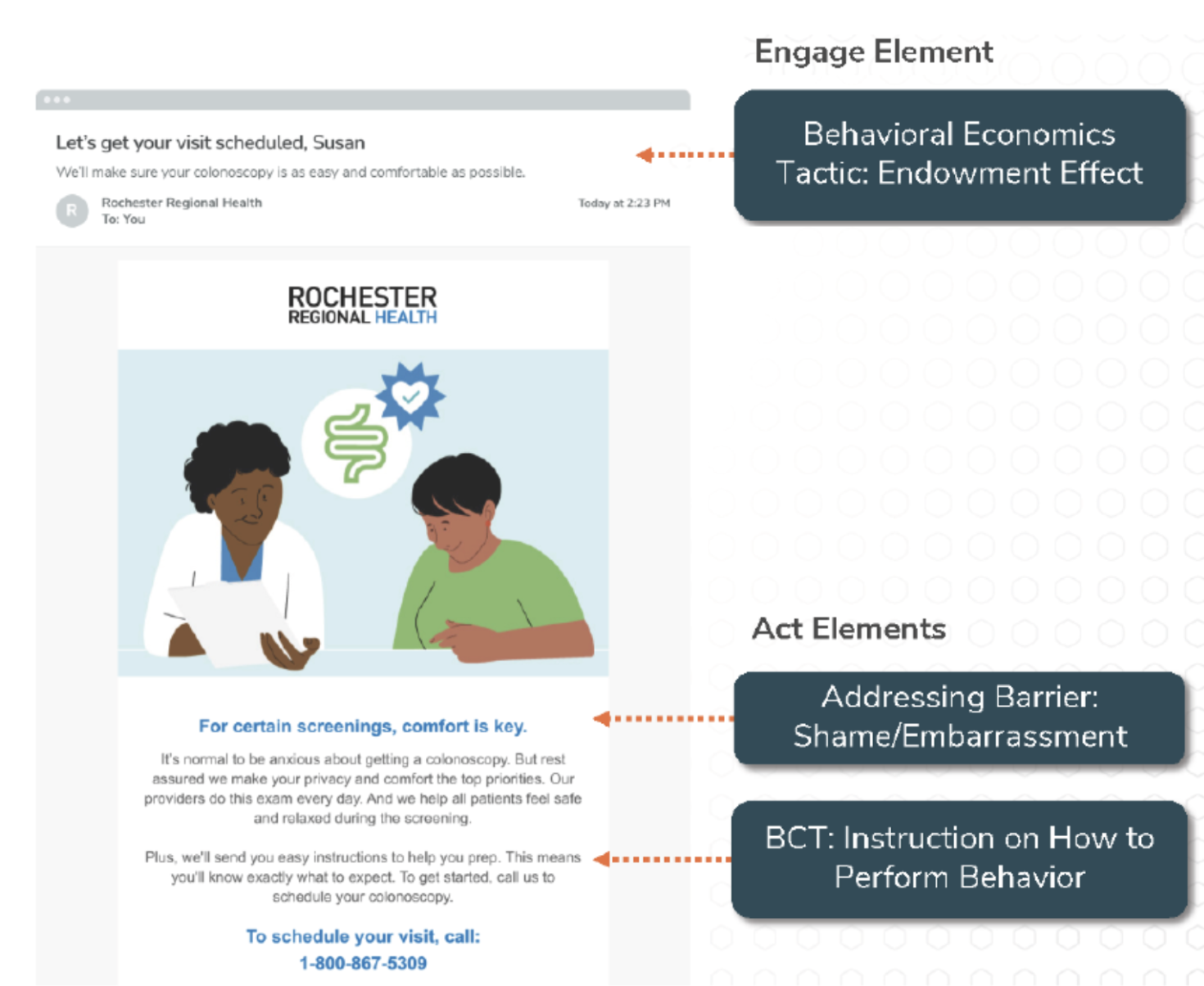

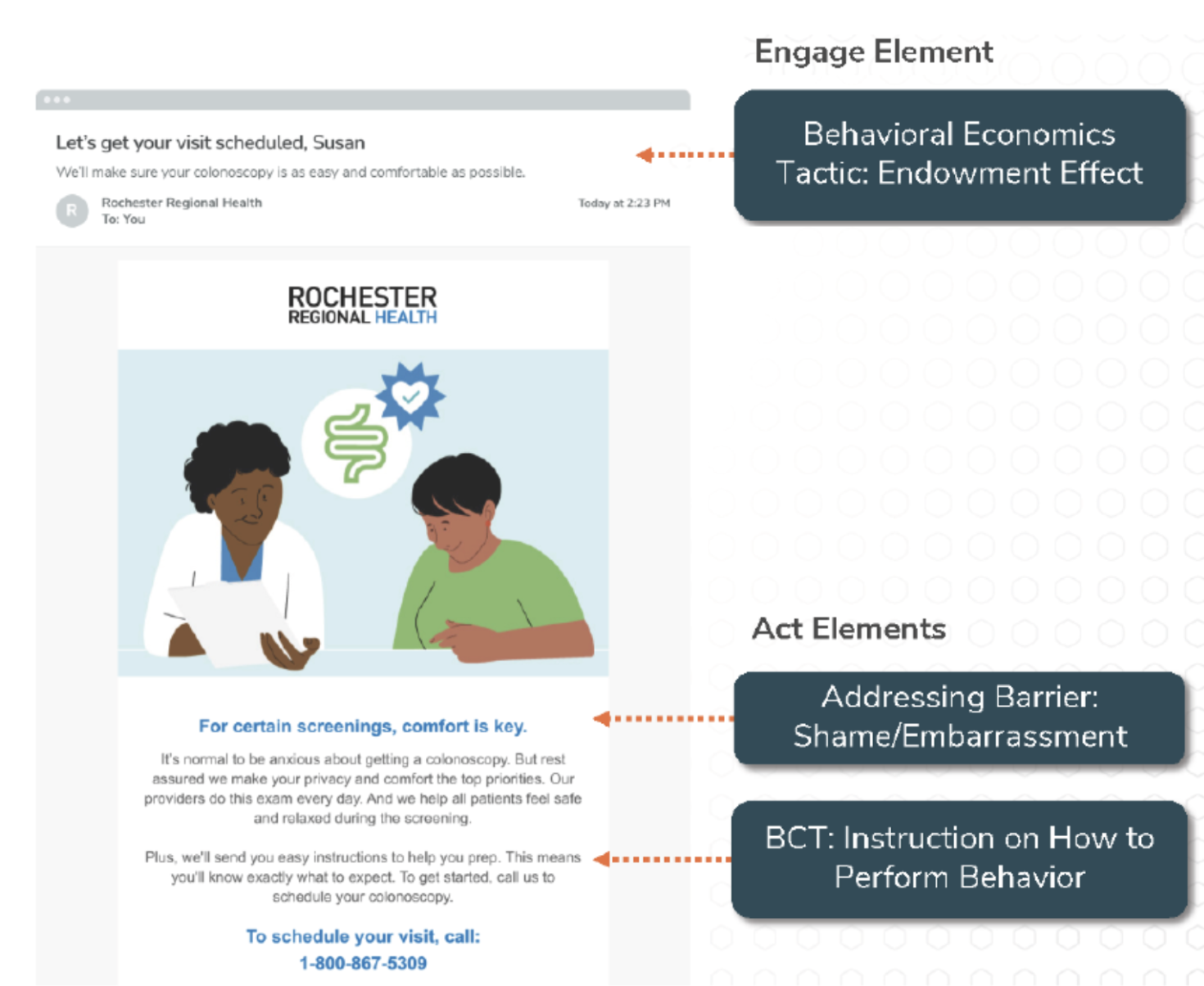

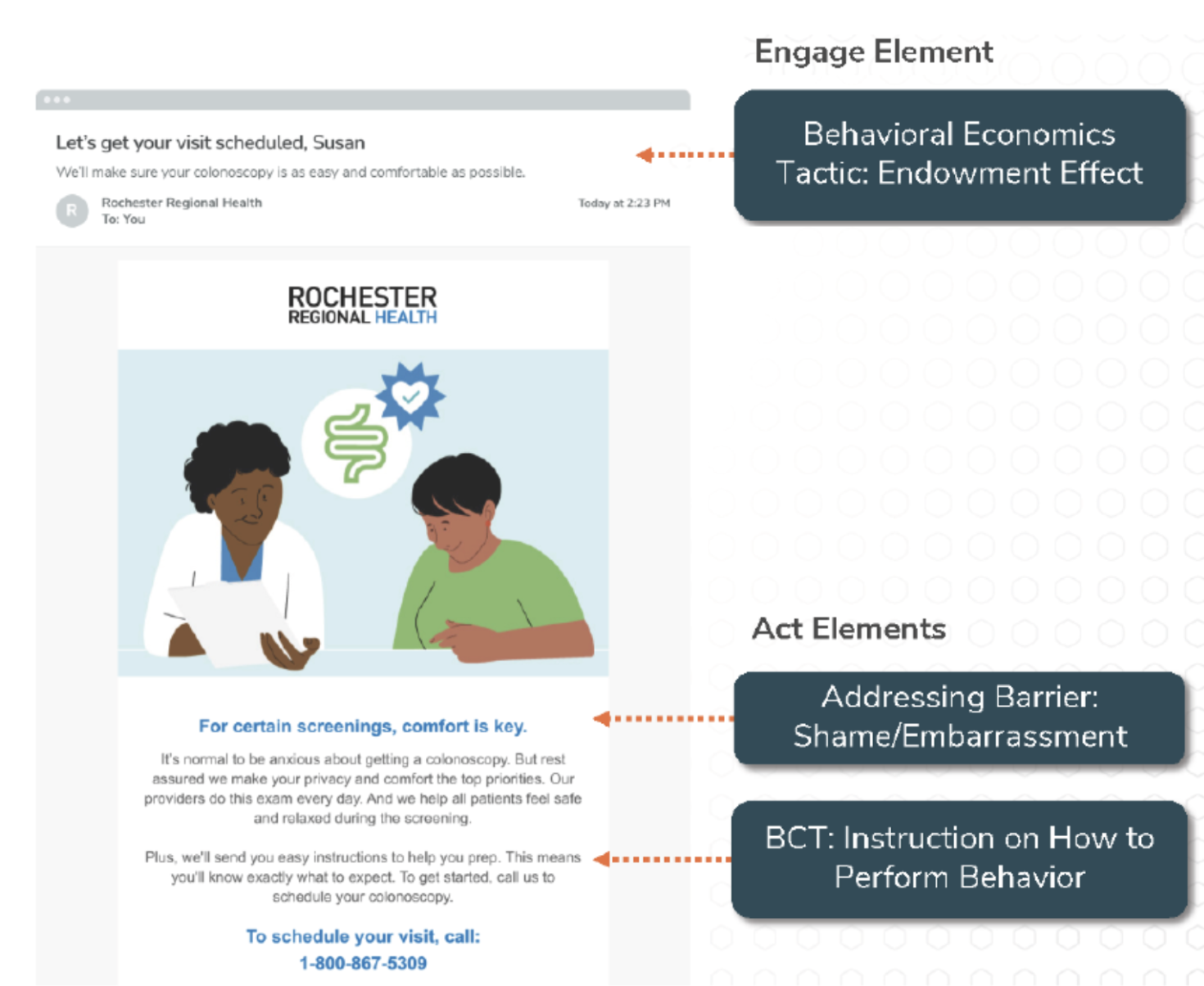

Lirio uses AI combined with behavioural science to design personalised email communications that move people towards the target health behaviours.

The engage element grabs the recipient’s attention so that they are more likely to actually open the email. It contains a behavioural science technique.

In this example, it leverages the endowment effect by suggesting that the appointment is already theirs to arrange.

The act element addresses specific barriers to the target health behaviour and prompts the recipient to act by using different behaviour change techniques (BCTs), which are likely to influence behaviour.

These different elements are curated by behavioural experts through a process of reviewing previous evidence, conducting research and applying COM-B and the Behaviour Change Wheel [4].

The machine learning algorithm can then navigate the repository of engage and act elements, retrieve them and assemble messages that are carefully crafted to address specific barriers and prompt action.

By feeding the recipient’s behavioural response to the communication (opening the email, clicking on calls to action…) back to the algorithm, the system can learn which messages are most effective at moving that particular individual to action, so that the behavioural science techniques and BCTs become better tailored to each user over time.
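One simple way to picture this feedback loop is an epsilon-greedy bandit: mostly send the message variant that has worked best so far, occasionally try another one, and update the estimates after each response. The Python sketch below is an assumption made for illustration and is not Lirio’s actual algorithm, which is not described here. The class name `MessageBandit`, the variant labels and the reward scheme (1.0 for a click, 0.0 otherwise) are all hypothetical.

```python
import random


class MessageBandit:
    """Epsilon-greedy choice among message variants for one recipient."""

    def __init__(self, variants, epsilon=0.1, seed=None):
        self.variants = list(variants)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.sends = {v: 0 for v in self.variants}
        self.value = {v: 0.0 for v in self.variants}  # running mean reward

    def choose(self):
        # Explore occasionally; otherwise exploit the best variant so far.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.variants)
        return max(self.variants, key=lambda v: self.value[v])

    def update(self, variant, reward):
        # Incremental mean: new_mean = old_mean + (reward - old_mean) / n
        self.sends[variant] += 1
        self.value[variant] += (reward - self.value[variant]) / self.sends[variant]


# Hypothetical (engage element, act element) pairs, invented for illustration.
variants = [
    ("endowment_subject_line", "address_cost_barrier"),
    ("endowment_subject_line", "address_time_barrier"),
    ("social_proof_subject_line", "address_cost_barrier"),
]
bandit = MessageBandit(variants, epsilon=0.1, seed=42)
```

In use, each send would call `bandit.choose()`, and each observed response would call `bandit.update(variant, reward)`, for example with a reward of 1.0 when the recipient clicks the call to action and 0.0 when they do not.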

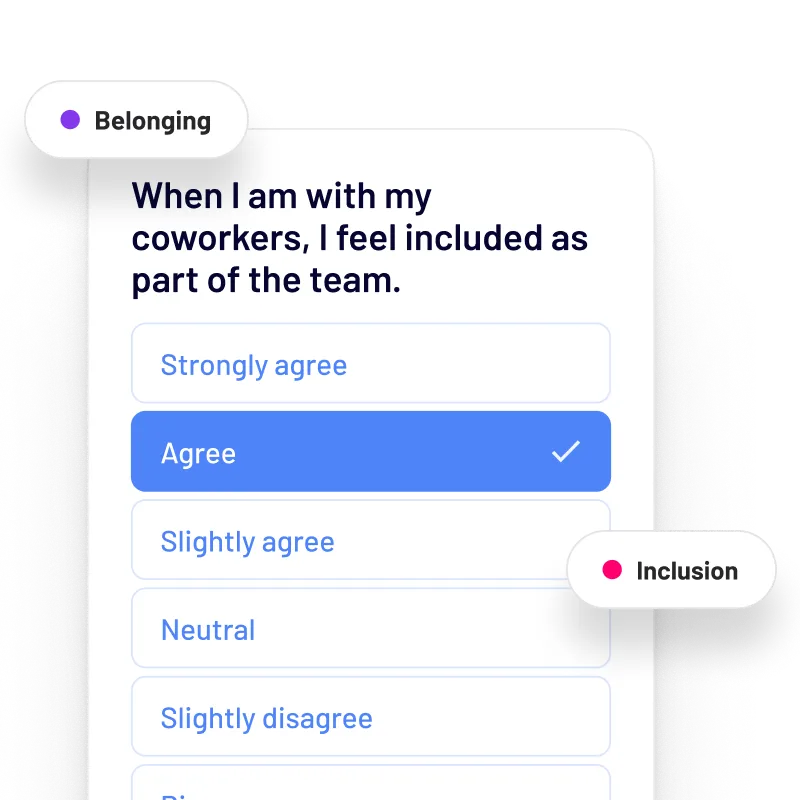

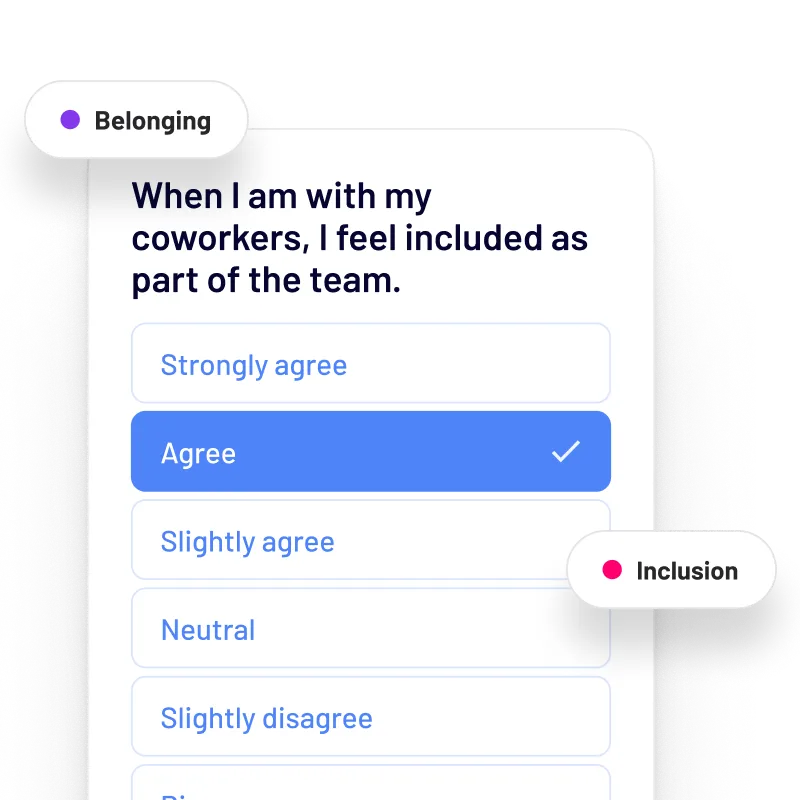

Another company that makes use of behavioural science and AI is Humu.

Humu combines behavioural science and AI to personalise nudges that encourage people to adopt better ways of working.

To generate these personalised nudges, Humu gathers company insights, employee feedback and other data, such as commute times.

It then uses a proprietary algorithm to identify areas for action and recommend evidence-based nudges that make taking action at work easier and encourage behaviour change in a way that improves employees’ happiness, productivity and retention.

For example, during the onboarding process Humu will gather data on the different skills that employees want to gain as part of their personal development plan.

Based on that data, their nudge engine will come up with tailored prompts to encourage actions that should lead to the desired outcome.

This could take the form of a specific and actionable nudge to the employee’s line manager right before a one-to-one meeting, to make sure that they address the specific actions that would support that employee in gaining the skills they said they wanted to gain…

… or a nudge to the employee before the meeting, with some useful prompts for navigating a conversation about personal development with their line manager.

These examples show that AI makes it possible for interventions to be assembled and delivered at speed and scale, but AI is no longer only in the hands of a few companies.

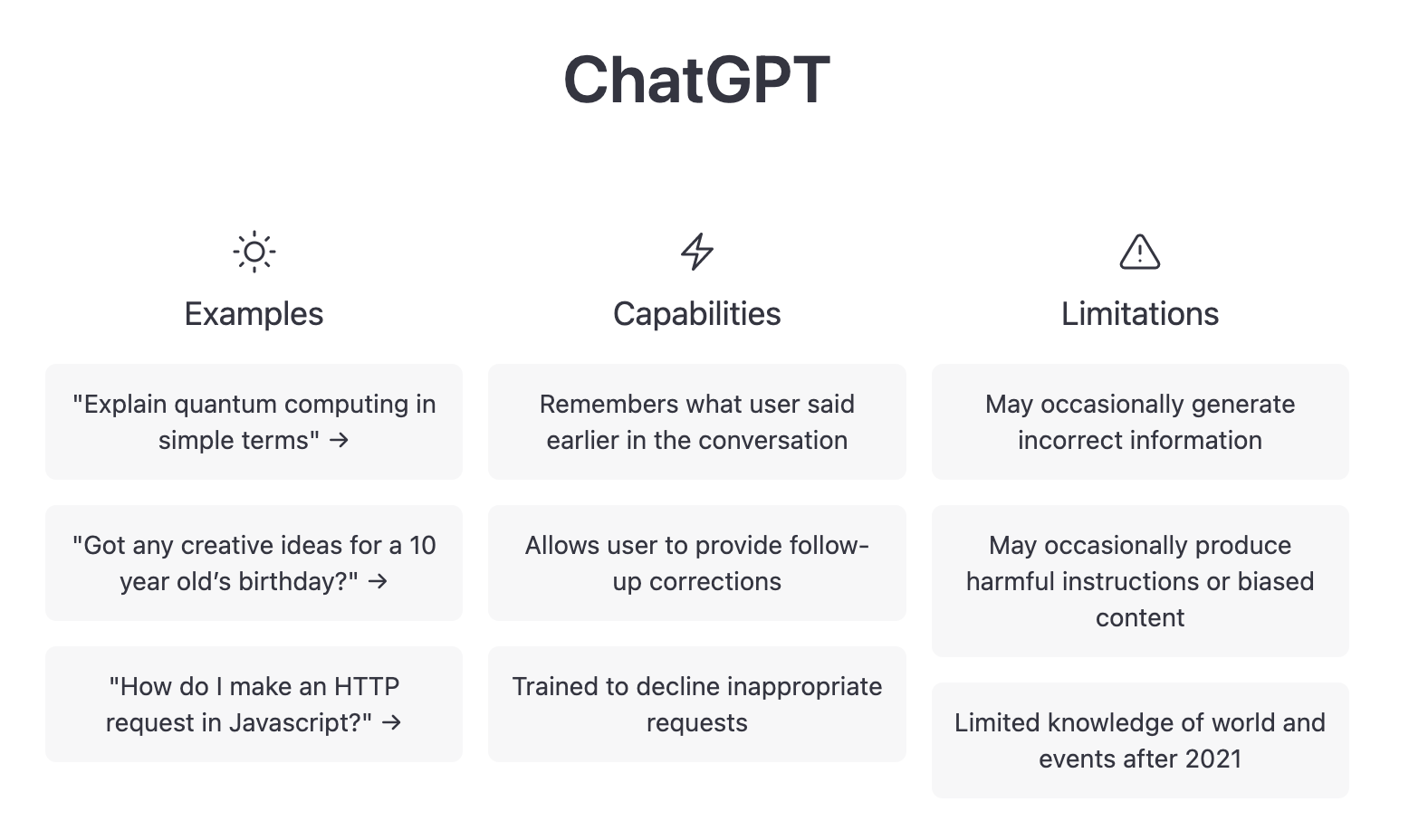

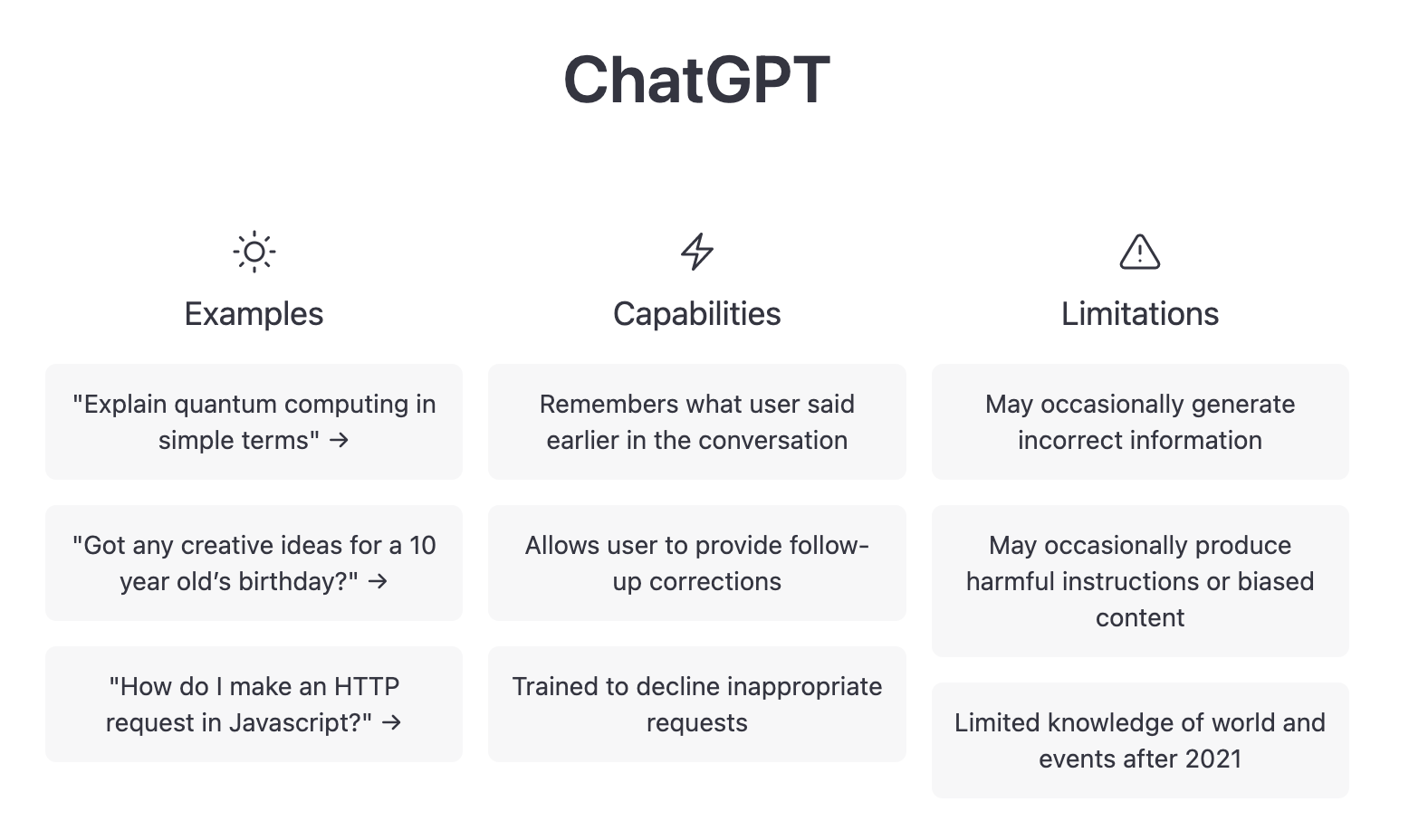

One of the Open AI tools that has recently taken the world by storm is ChatGPT.

ChatGPT is an LLM-based chatbot that can respond to prompts in a conversational way, answer follow-up questions, ask for more information before providing a response, and challenge incorrect premises.

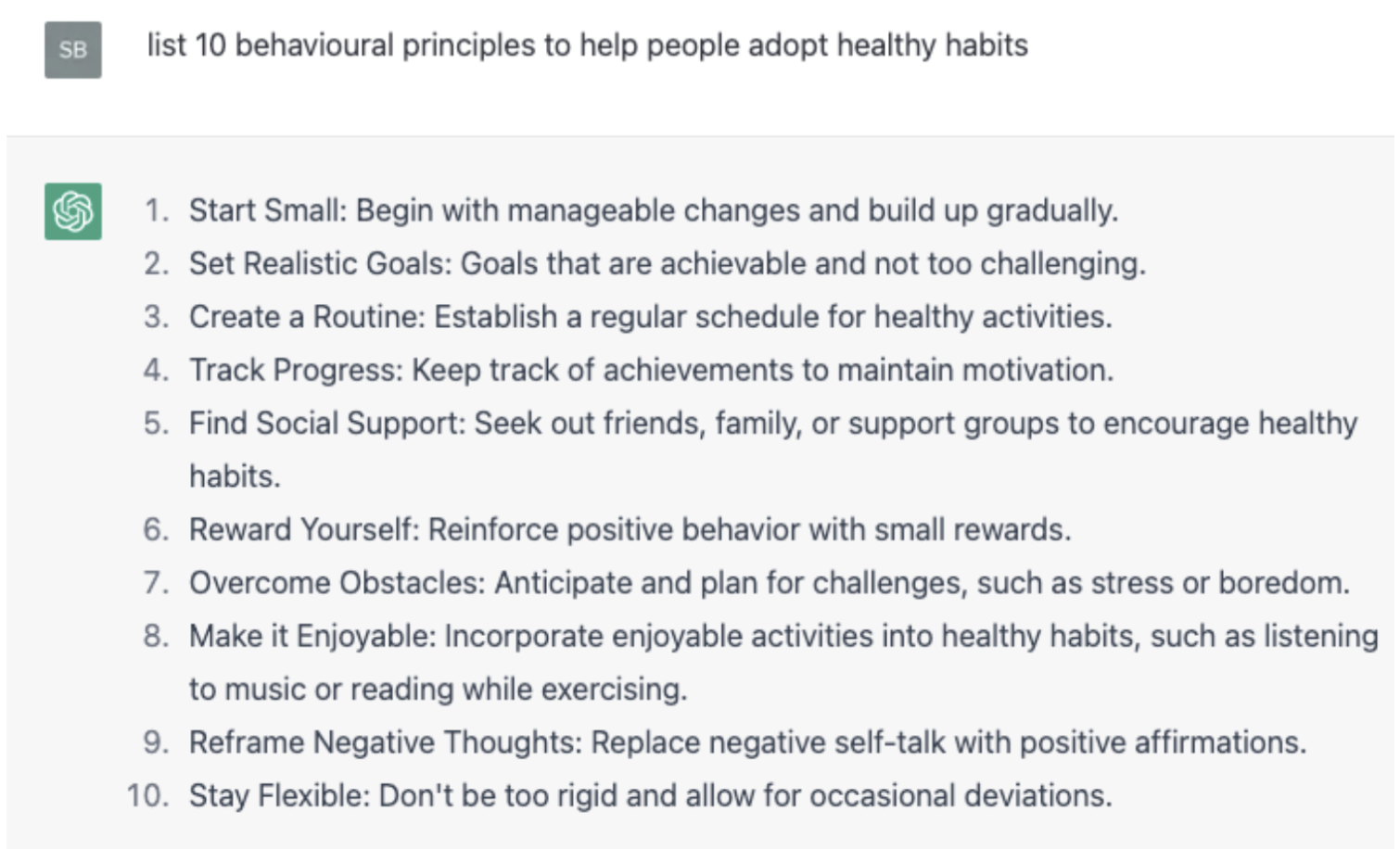

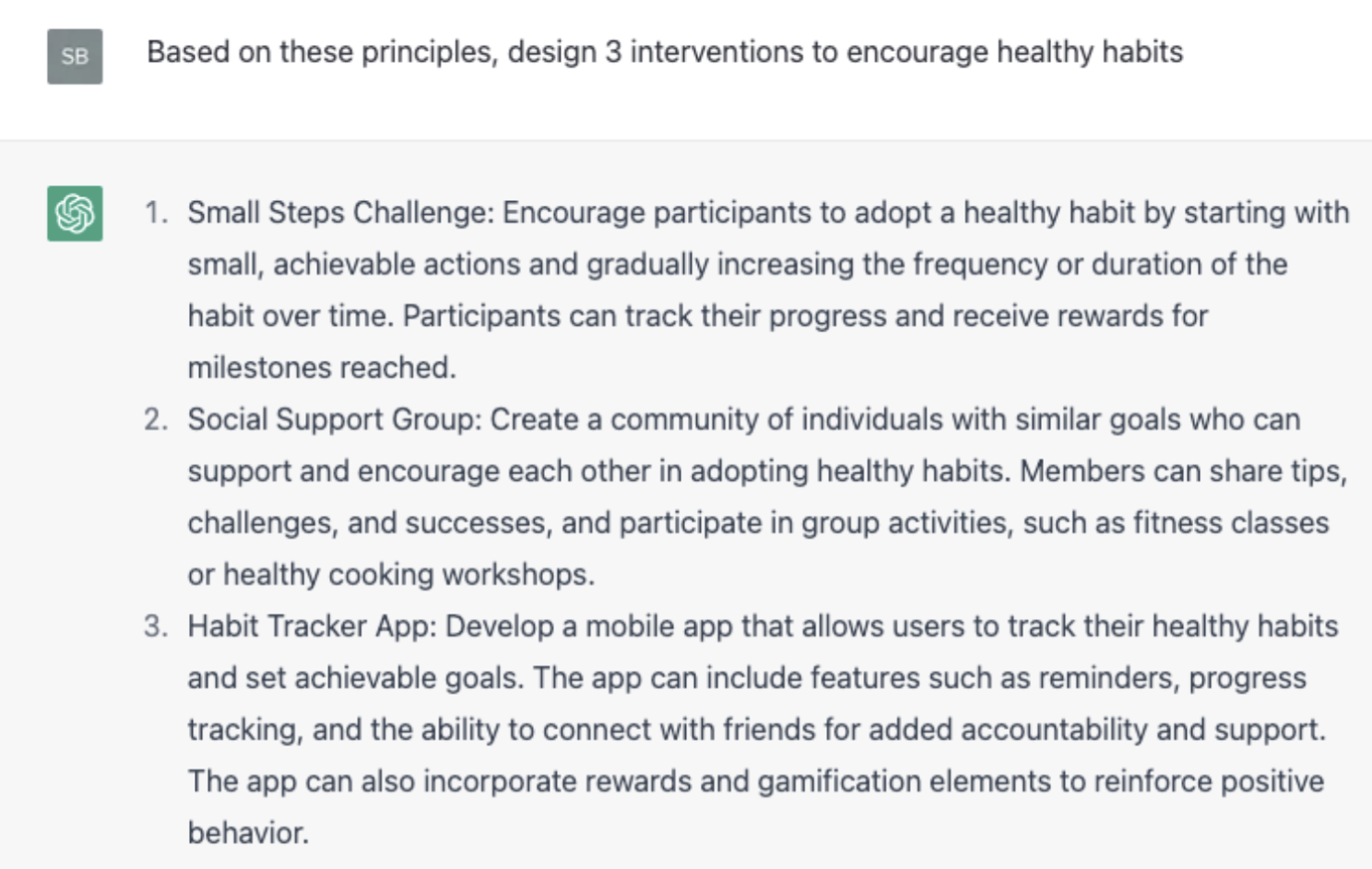

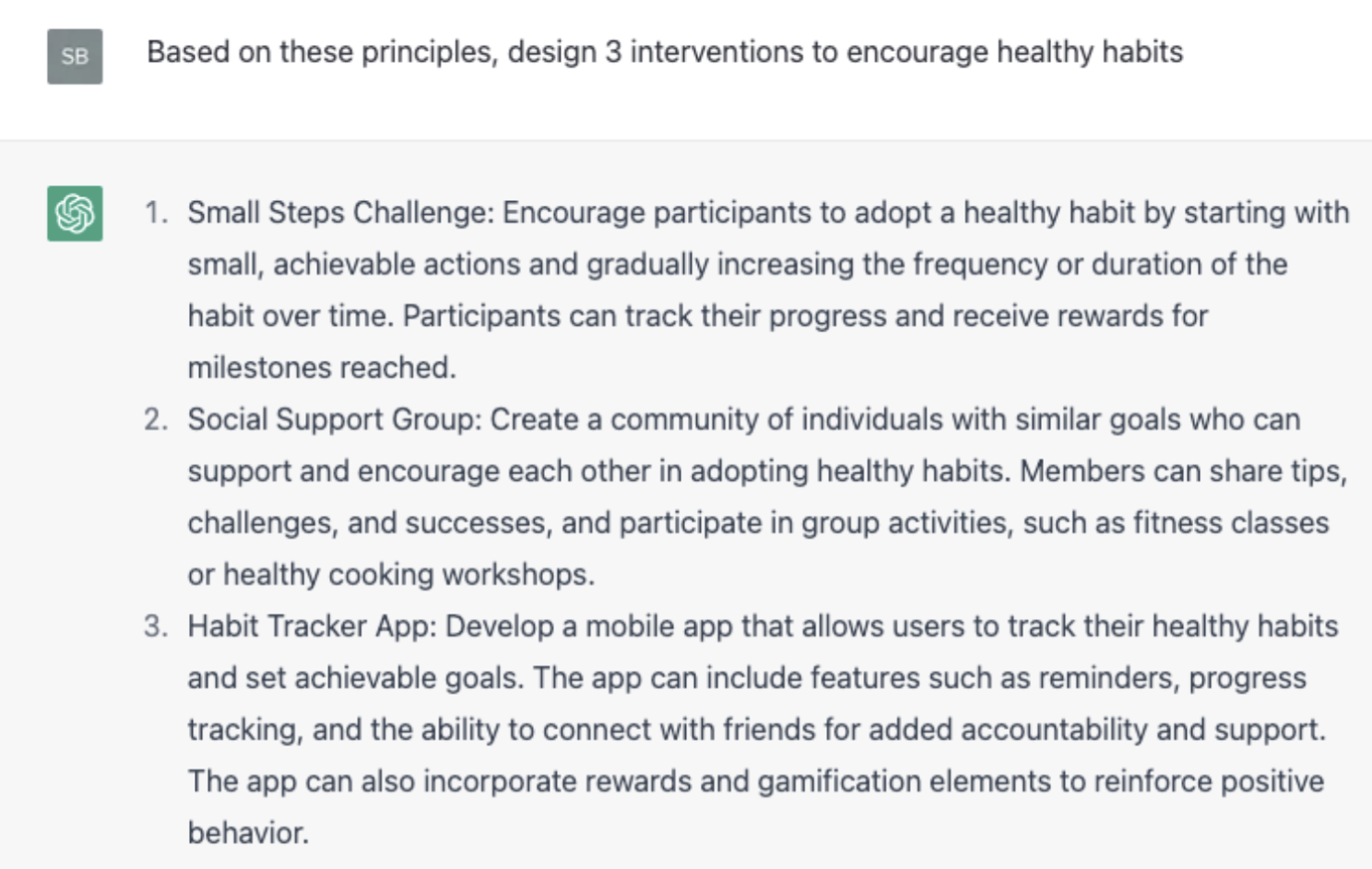

For example, given a prompt, such as “list 10 behavioural principles to help people adopt healthy habits”, ChatGPT can come up with a pretty reasonable series of recommendations:

And it even brainstormed a few intervention ideas based on the list of recommendations.

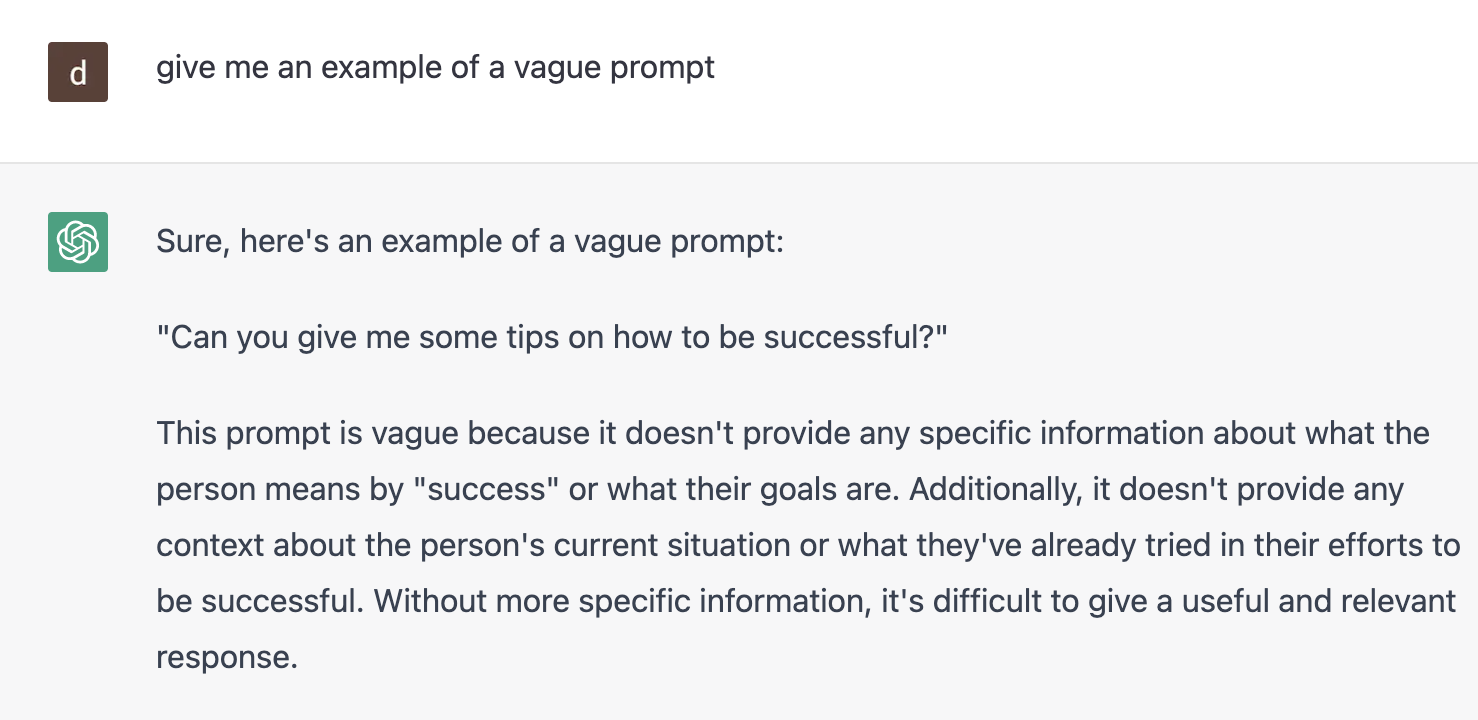

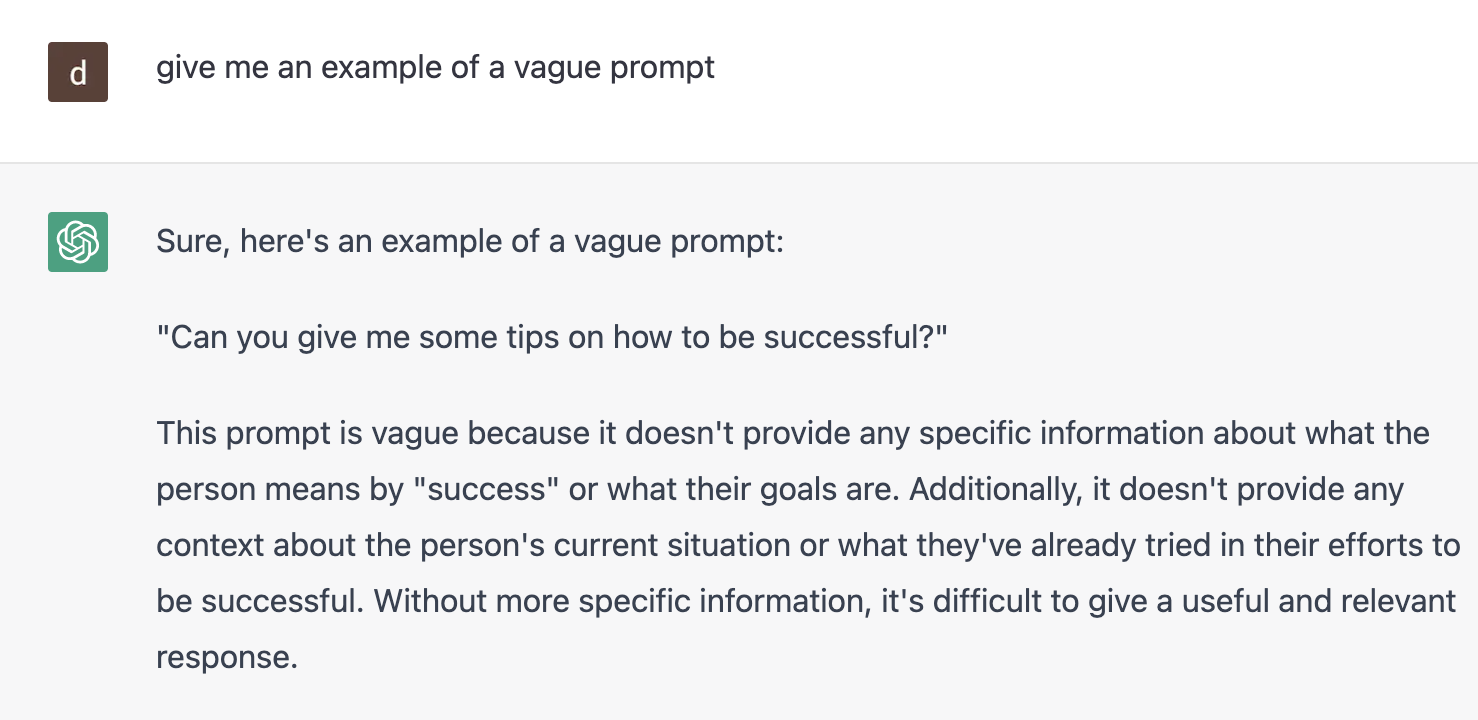

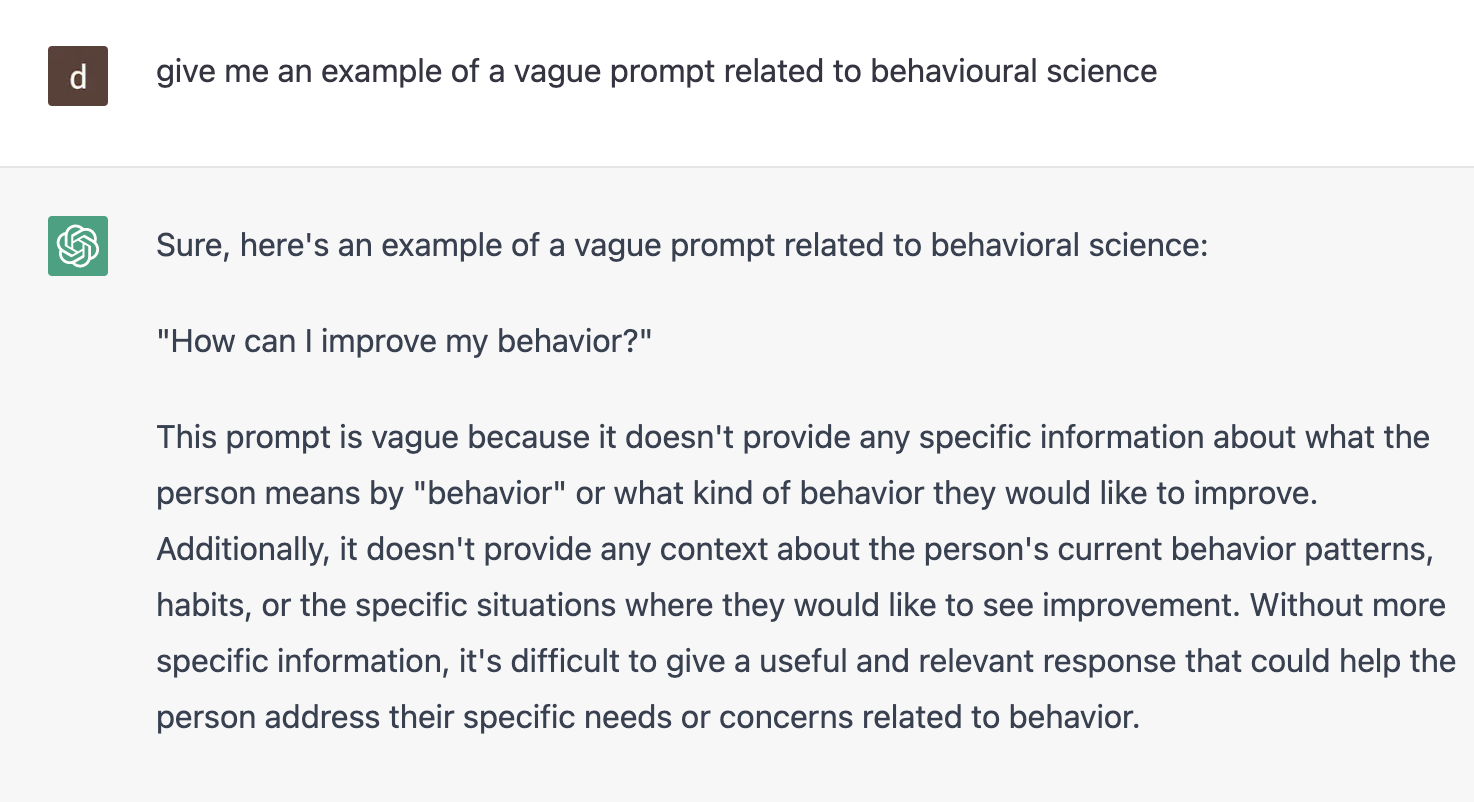

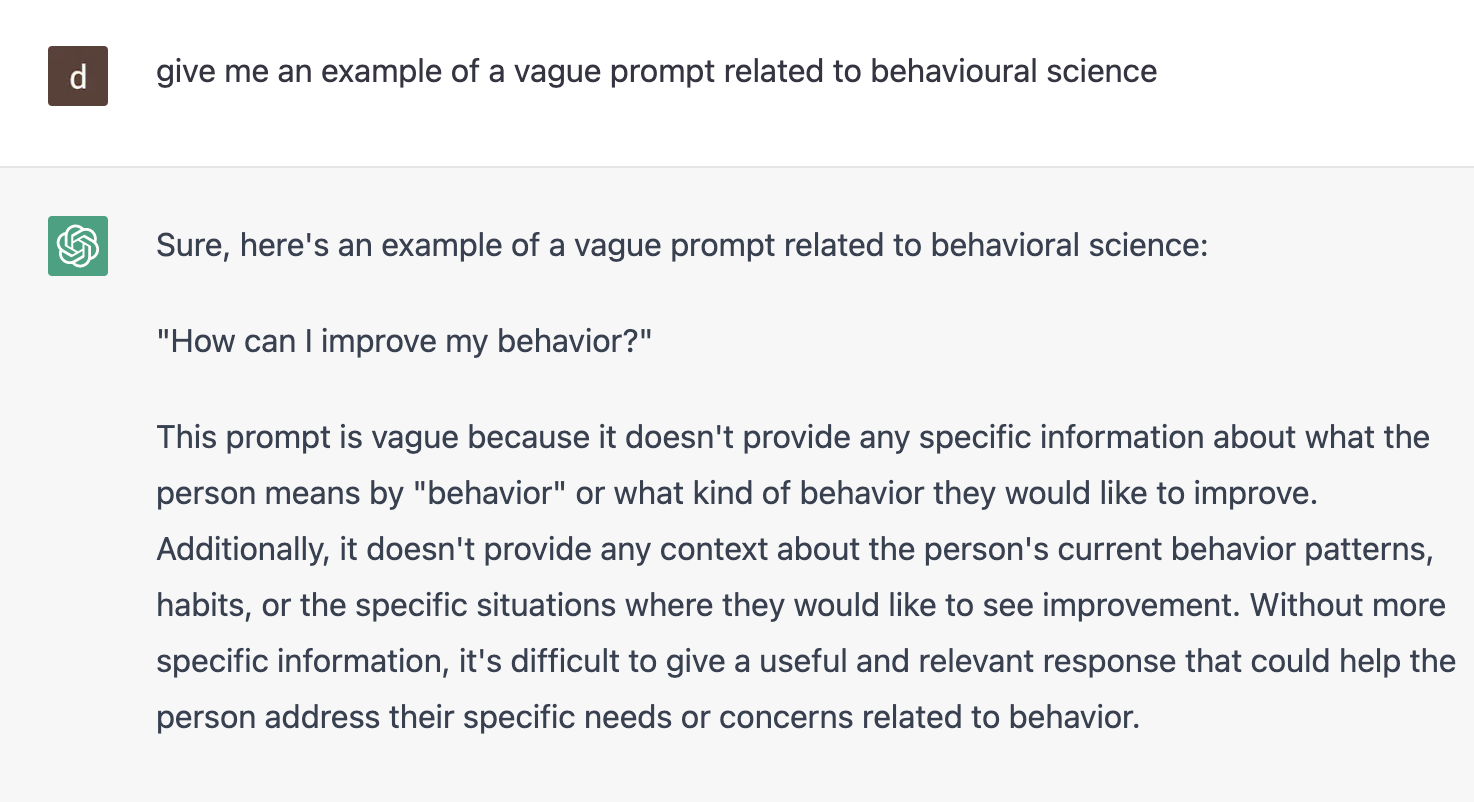

It’s also important to understand that the quality of ChatGPT’s output (including its relevance and veracity) is directly related to the quality of the prompt that we feed it...

...prompts that are too vague or imprecise will give vague and imprecise results...

...so prompt engineering is essential to maximise ChatGPT’s potential (but this is a topic for another case study…)

For now we can only speculate about how the recent rise of open AI tools may impact the way in which we approach behavioural science.

But it’s clear that they are democratising the use of AI and popularising it to new levels.

However, it’s crucial that we proceed with caution and consider the potential downsides of AI development…

AI and behavioural science are a powerful combination with a potential for flexibility and scalability beyond human capability.

But with great power comes great responsibility, and algorithms are only as good as the data they are fed.

In the best of cases, poorly developed algorithms might lead to interventions that are not right for the intended audience and fail to achieve the intended outcomes.

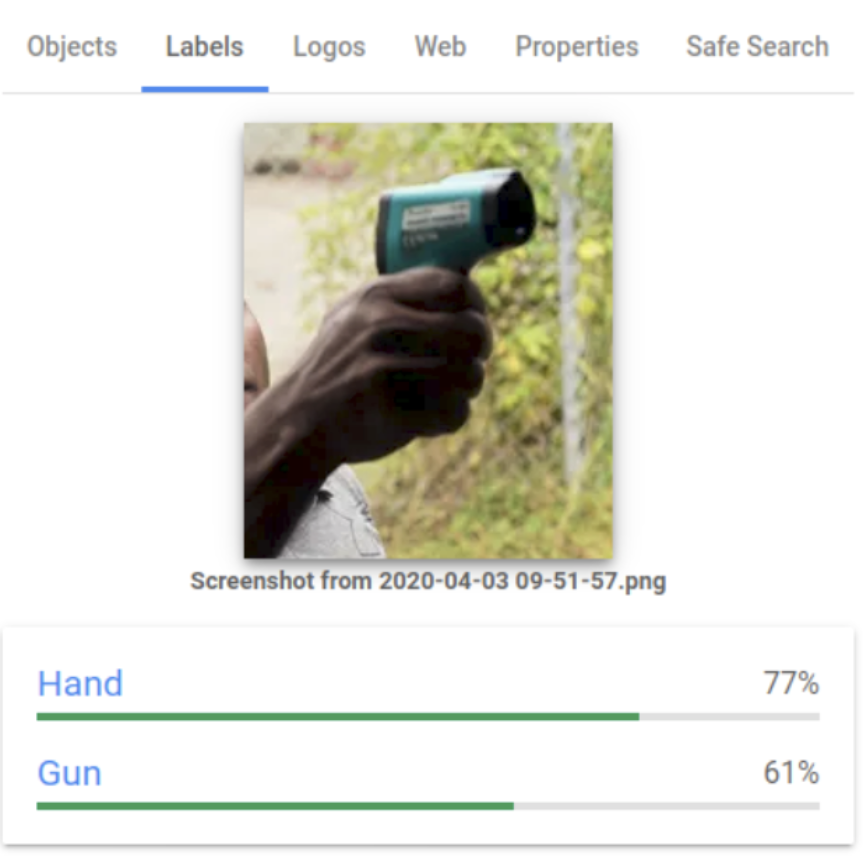

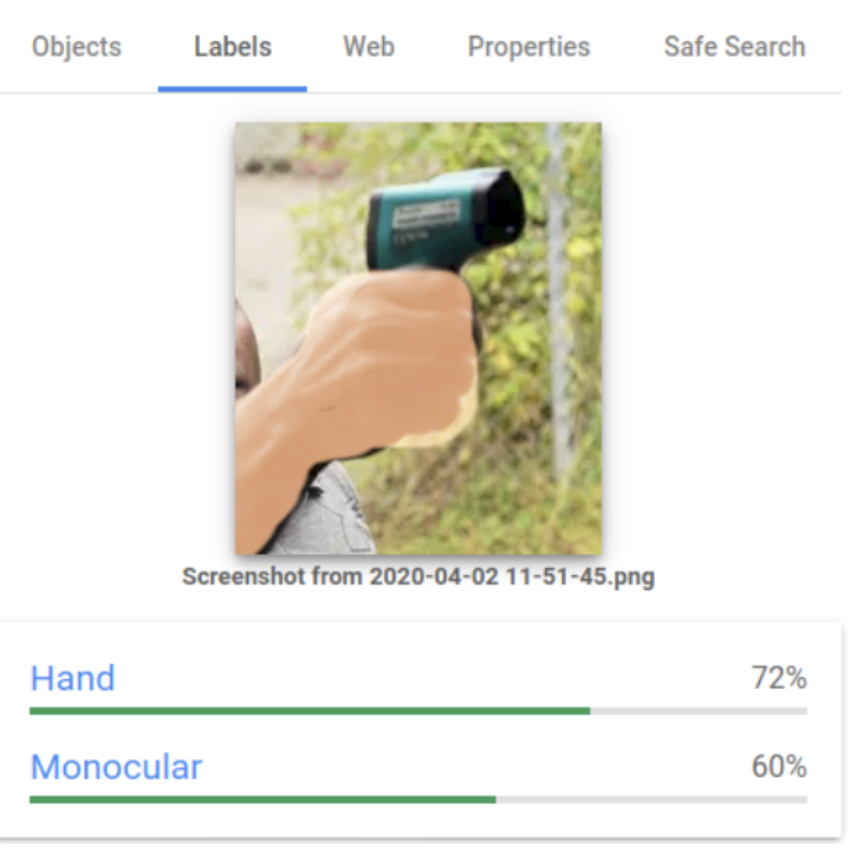

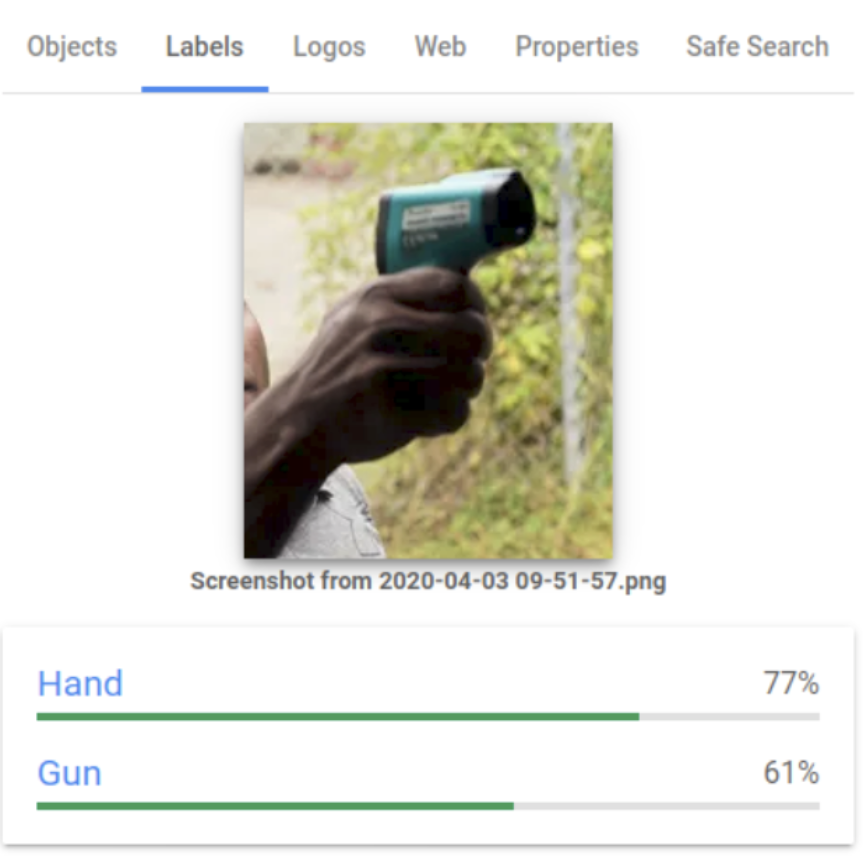

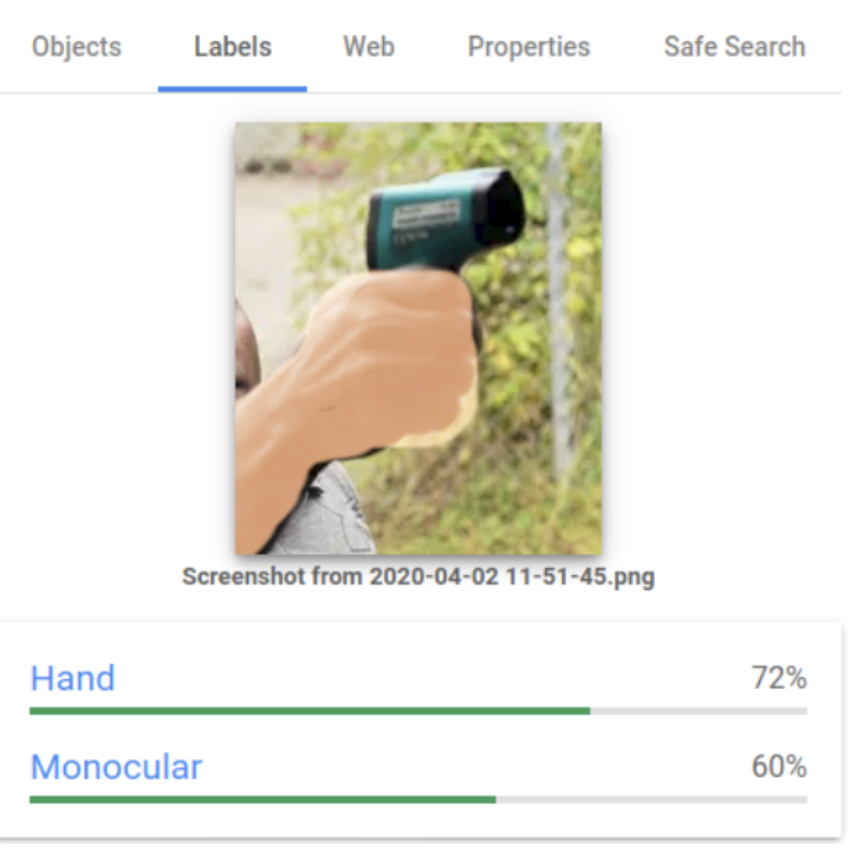

A fun example of this comes from Google’s DeepDream project, which created an algorithm that saw nightmarish dog faces everywhere after being trained on a dog-heavy dataset [5].

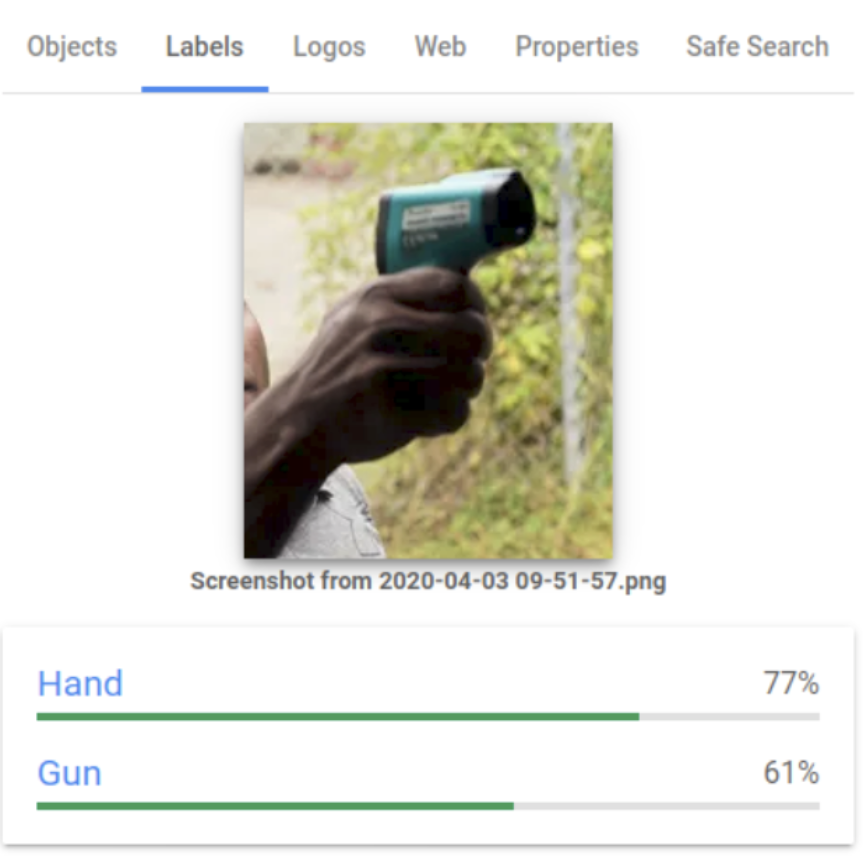

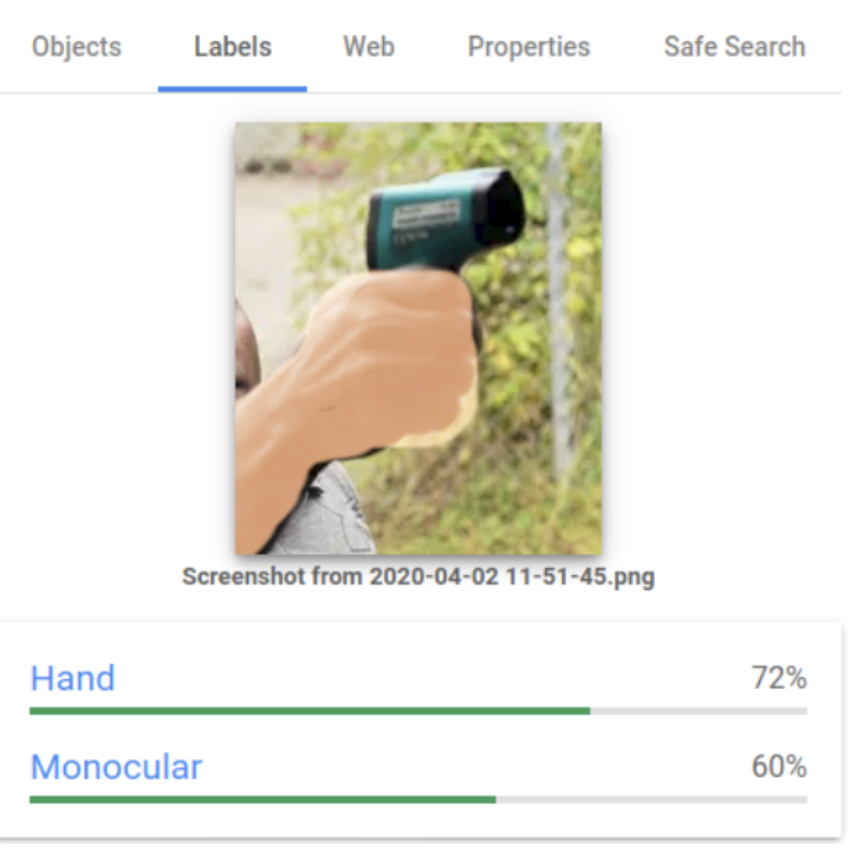

In the worst-case scenario, these biased datasets might exacerbate known biases and help perpetuate historical inequalities.

There are countless examples of AI failure involving racism, misogyny or bias in the detection of medical conditions [5-9].

This becomes particularly critical when AI is used to help make decisions that can have catastrophic consequences for someone’s life, such as predicting who is more likely to commit a crime [10] or deciding who is entitled to government support [11].

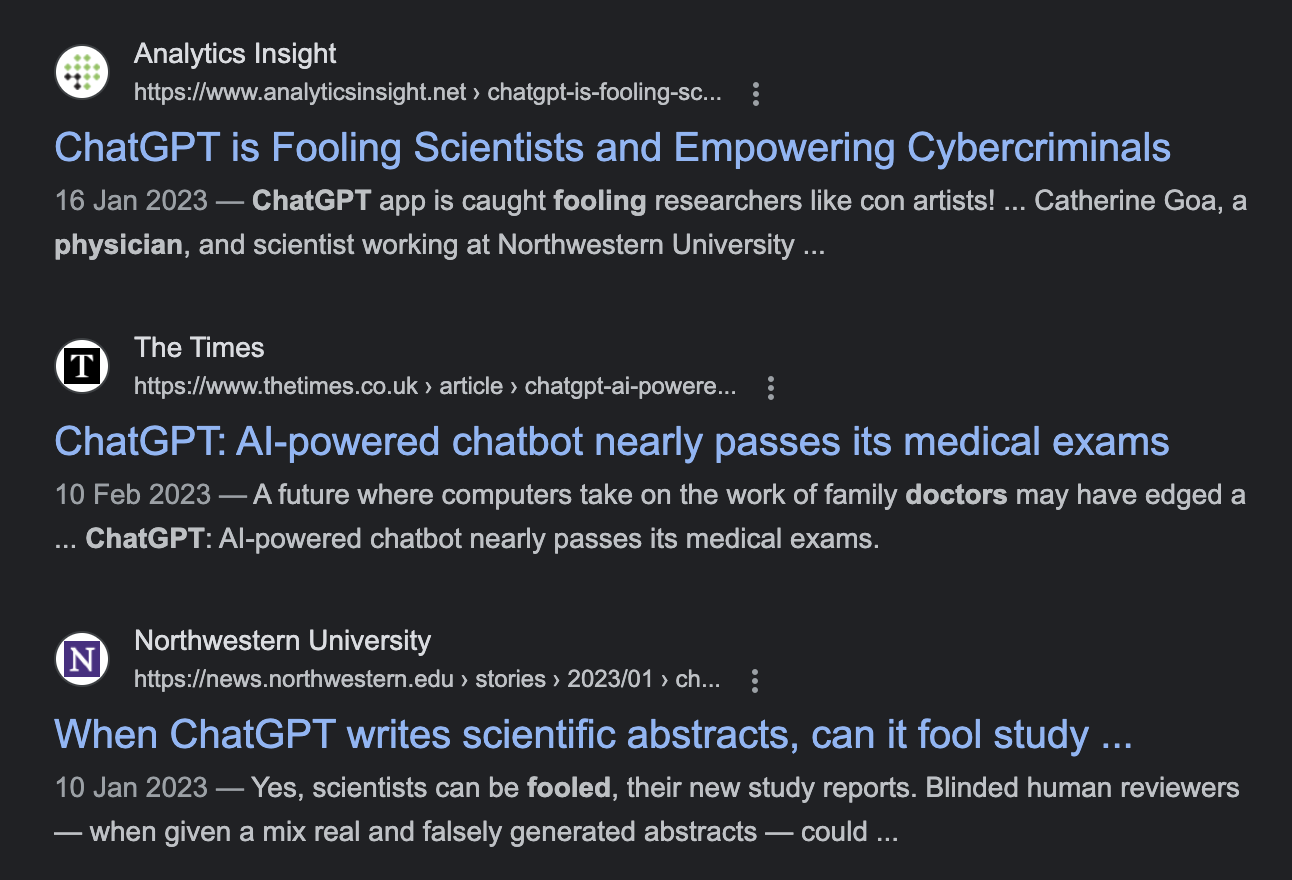

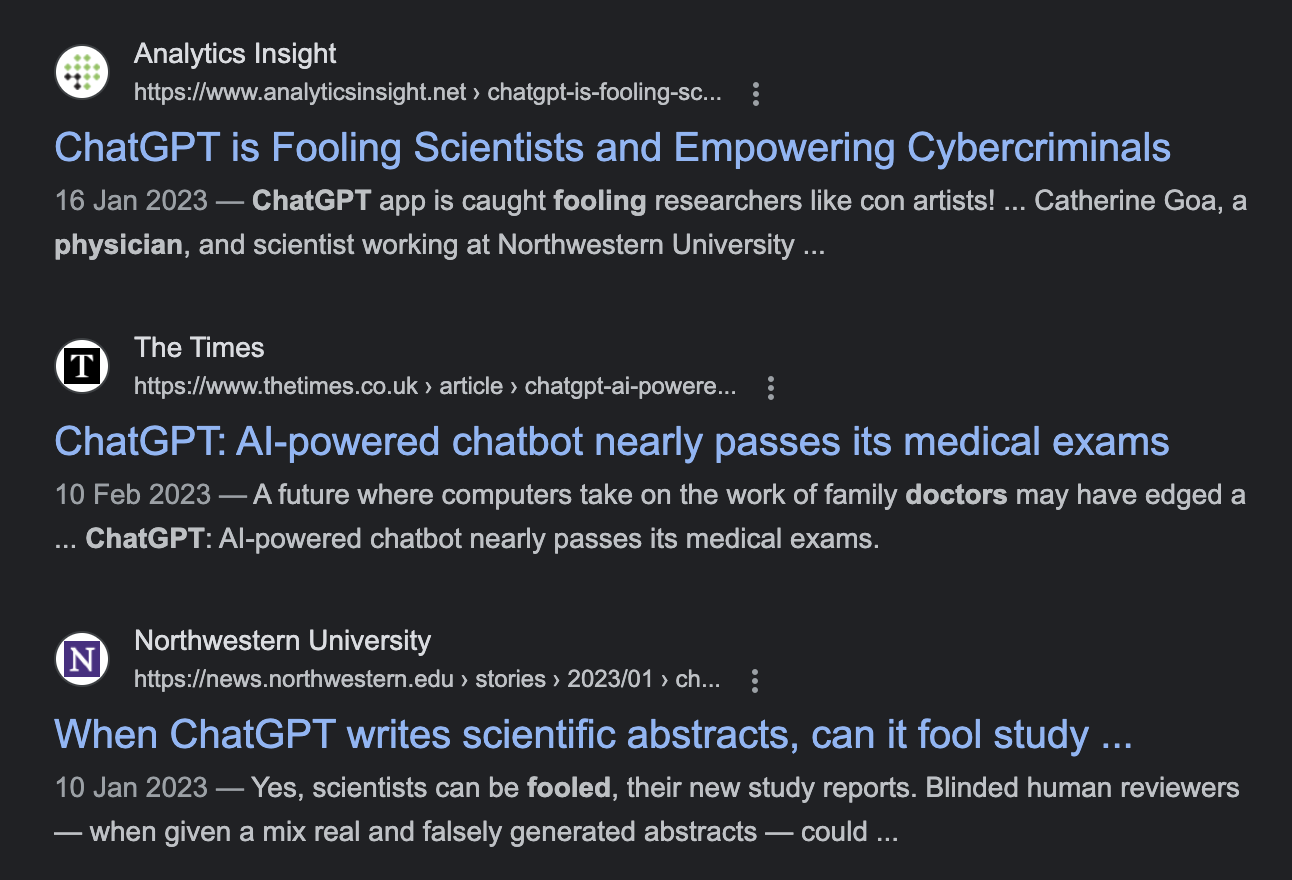

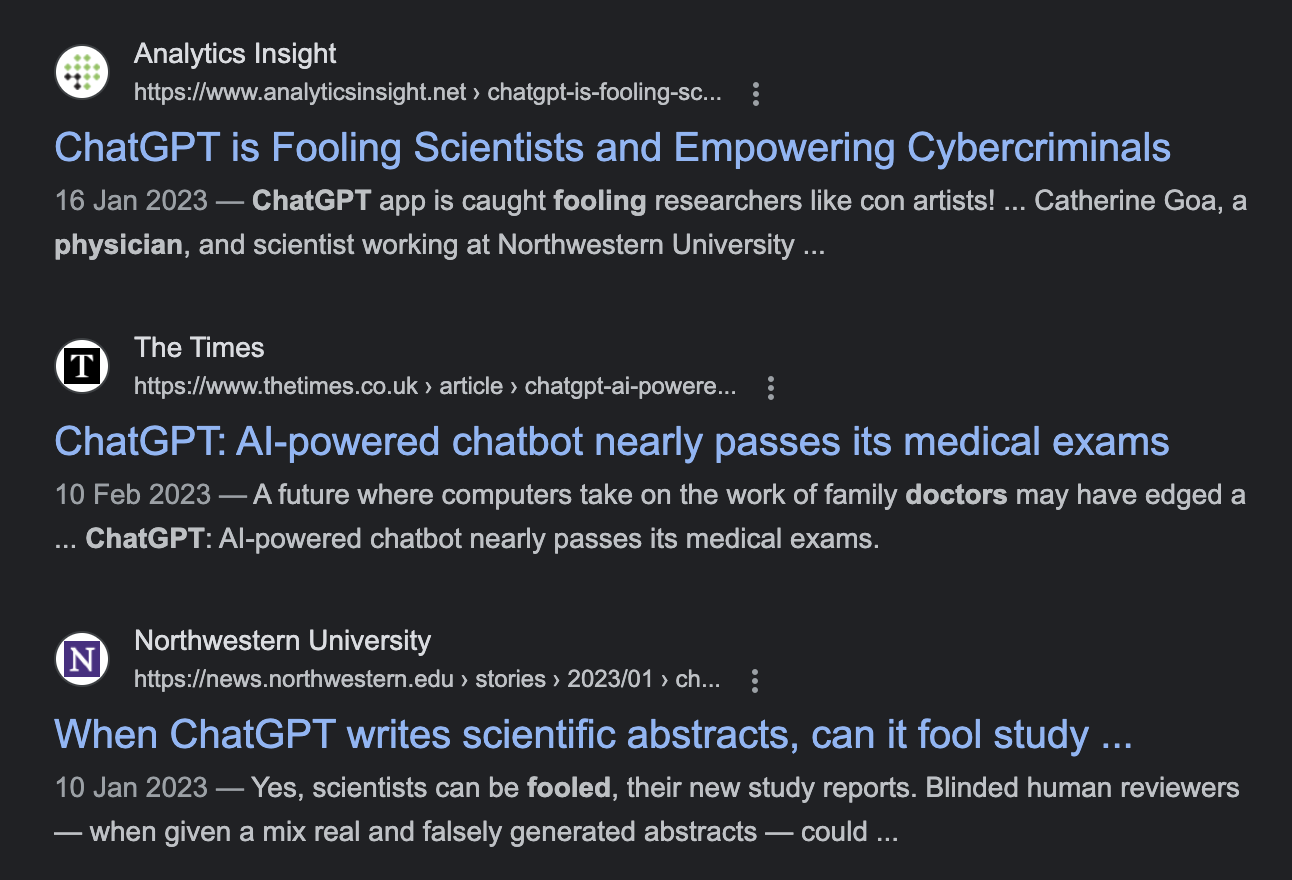

Language models capture patterns of strings of words and reproduce them in a probabilistic way; they aren’t able to distinguish between truth and falsehood.
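A toy example makes this point easier to see. The Python sketch below builds a bigram model, which only records which word followed which in its training text and then samples from those continuations. It is a deliberate oversimplification and not how ChatGPT or any modern LLM is implemented; the corpus and the function names `train_bigrams` and `generate` are invented for illustration.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    followers = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, start, length, seed=None):
    """Sample a word sequence; frequent continuations are picked more often."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length - 1):
        options = followers.get(output[-1])
        if not options:
            break  # the last word never had a follower in the training text
        output.append(rng.choice(options))
    return " ".join(output)


# Toy corpus, invented for illustration.
corpus = "nudges change behaviour and nudges change habits and habits change slowly"
model = train_bigrams(corpus)
print(generate(model, "nudges", 6, seed=1))
```

Every sentence the sketch produces is stitched together from word pairs it has seen before. Nothing in the code checks whether the result is true, which is the limitation described above, only at a much smaller scale.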

Recently, Meta took down its LLM, which had supposedly been trained on humanity’s scientific knowledge, because it would make up fake papers and generate evidently biased and nonsensical content [12].

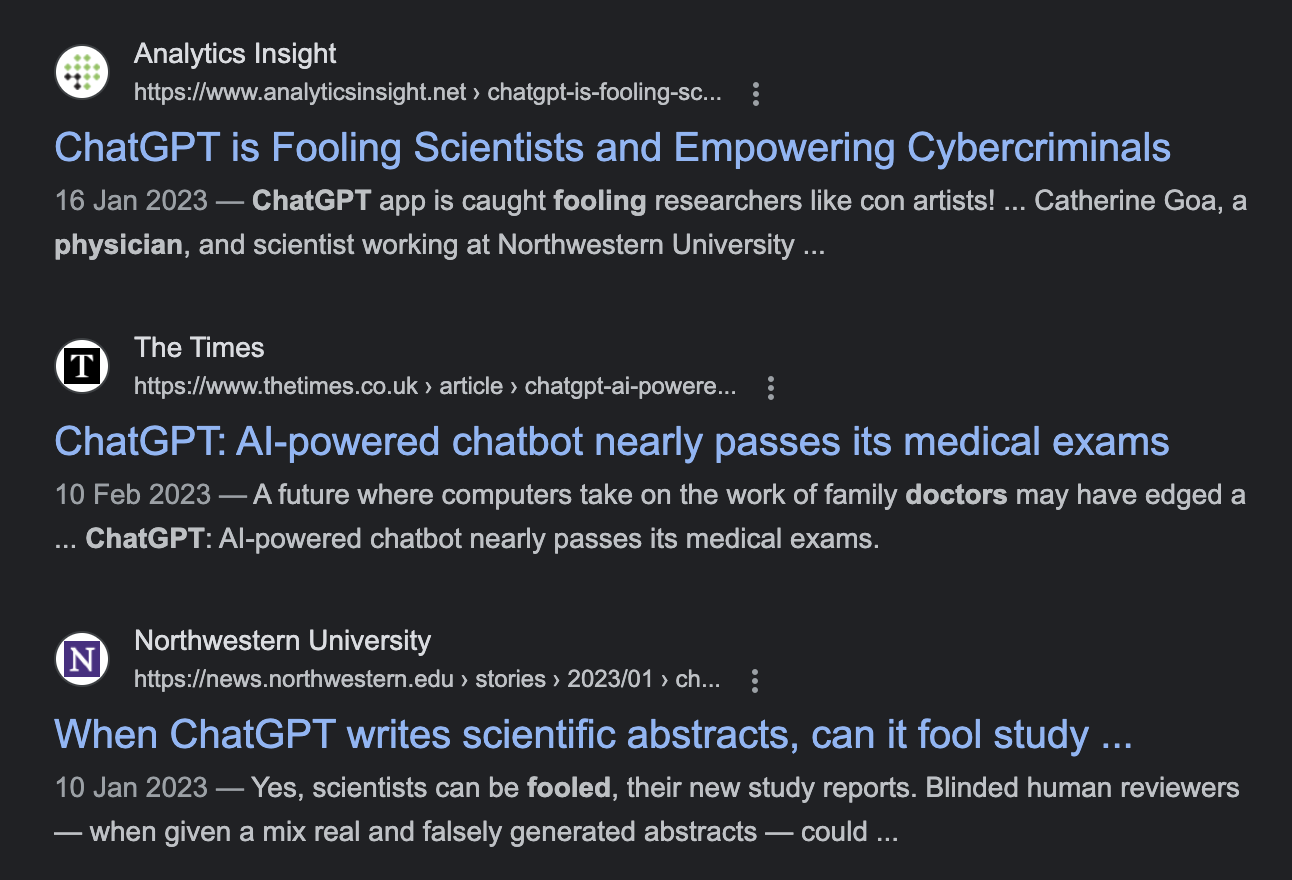

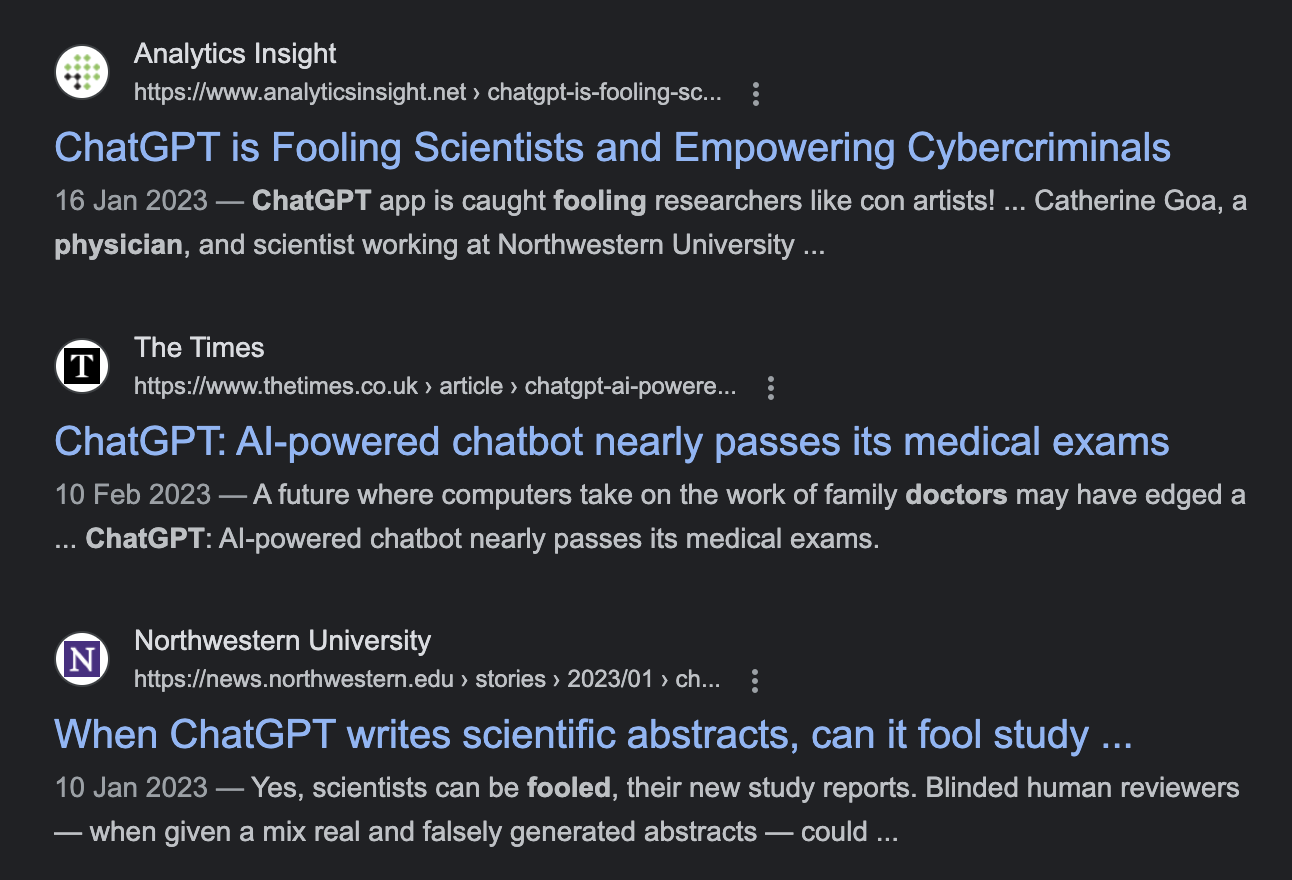

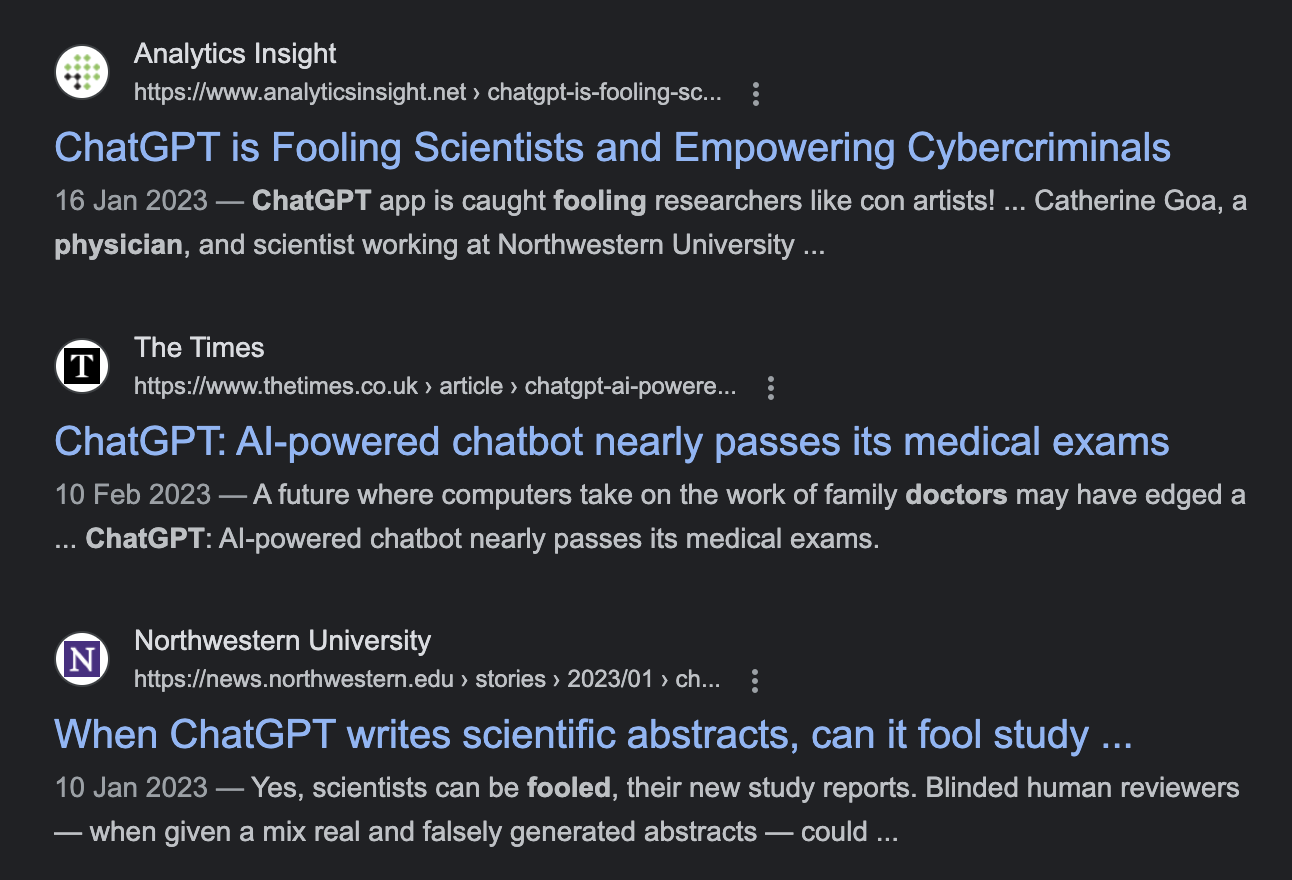

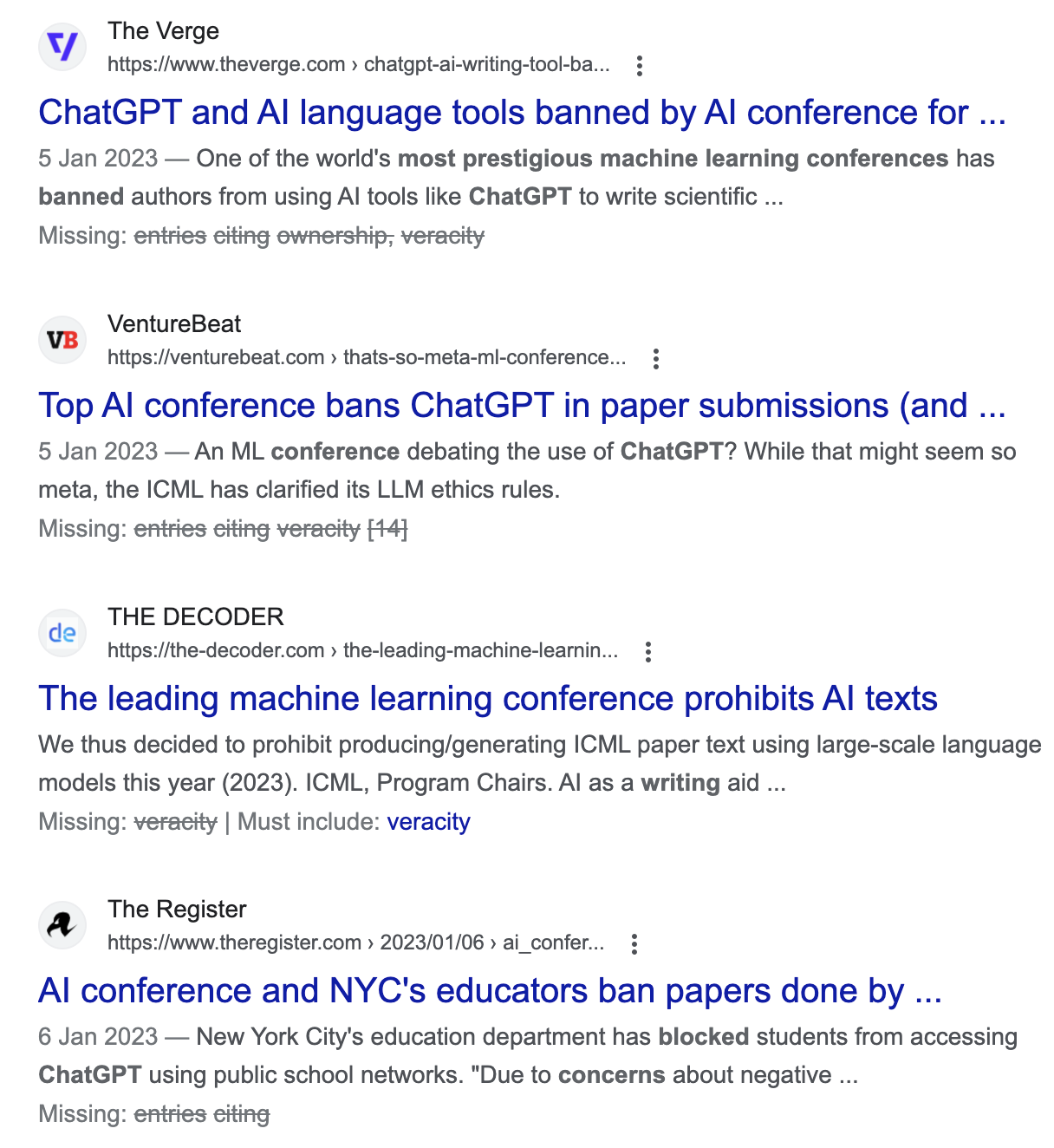

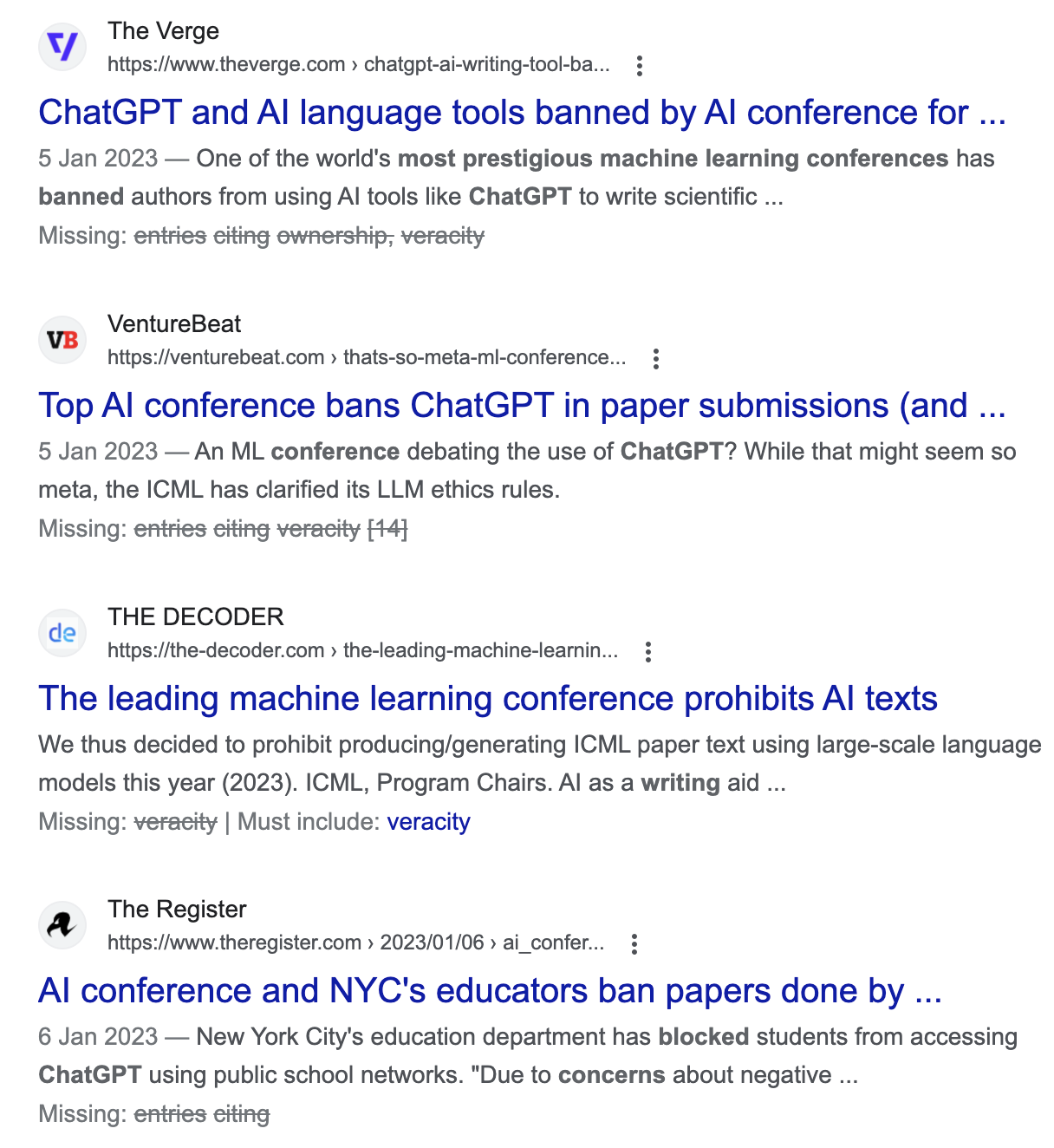

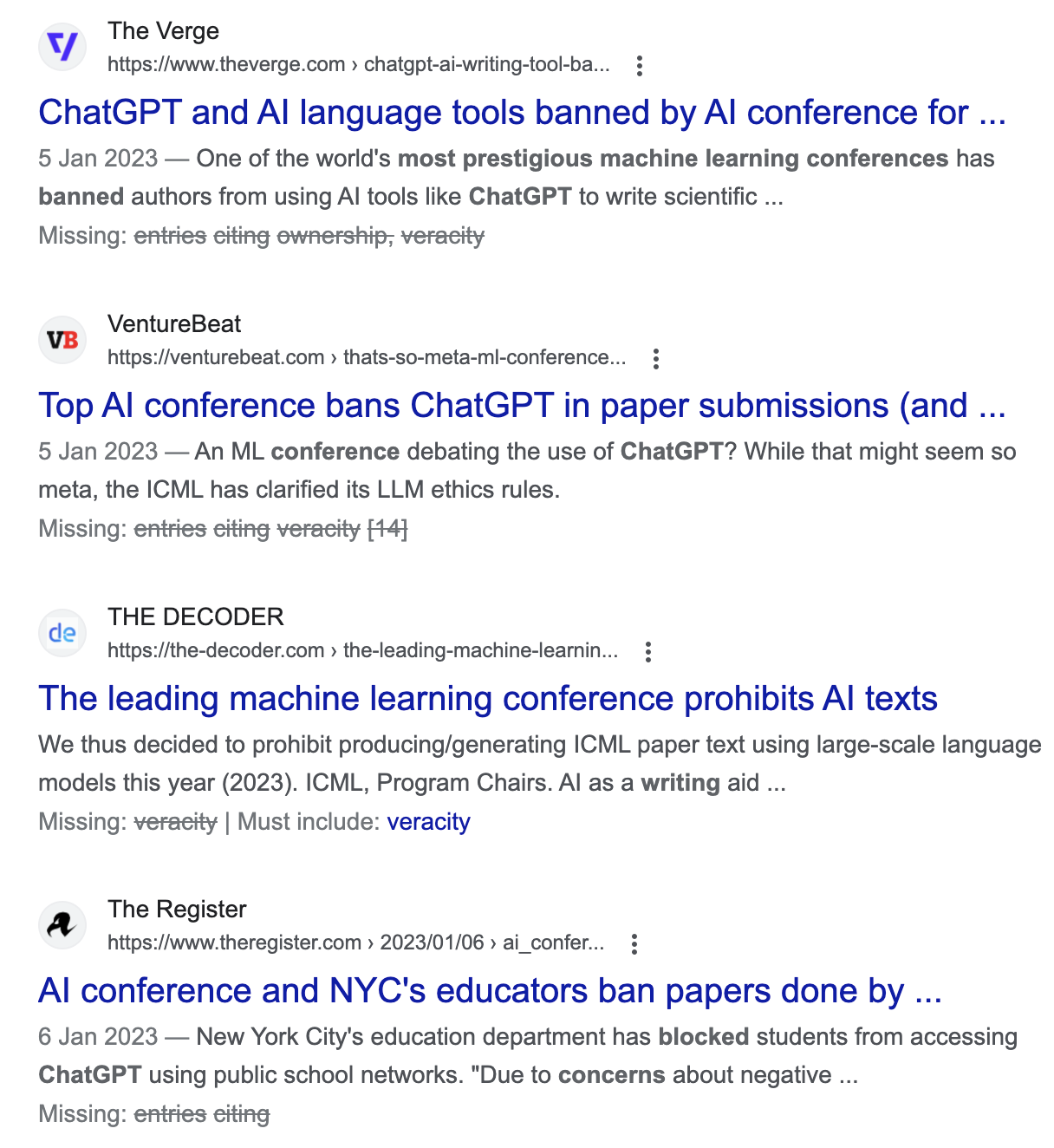

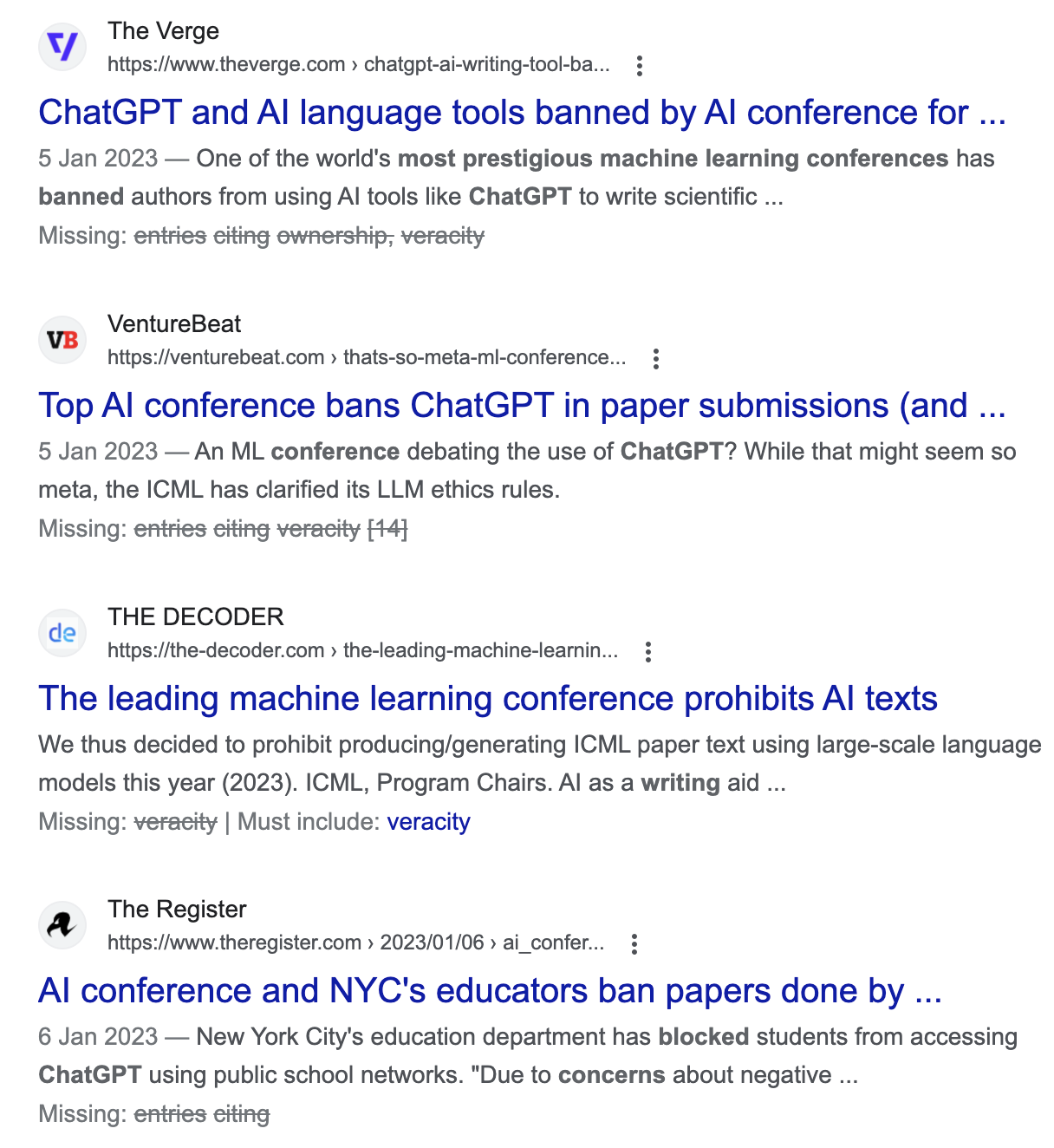

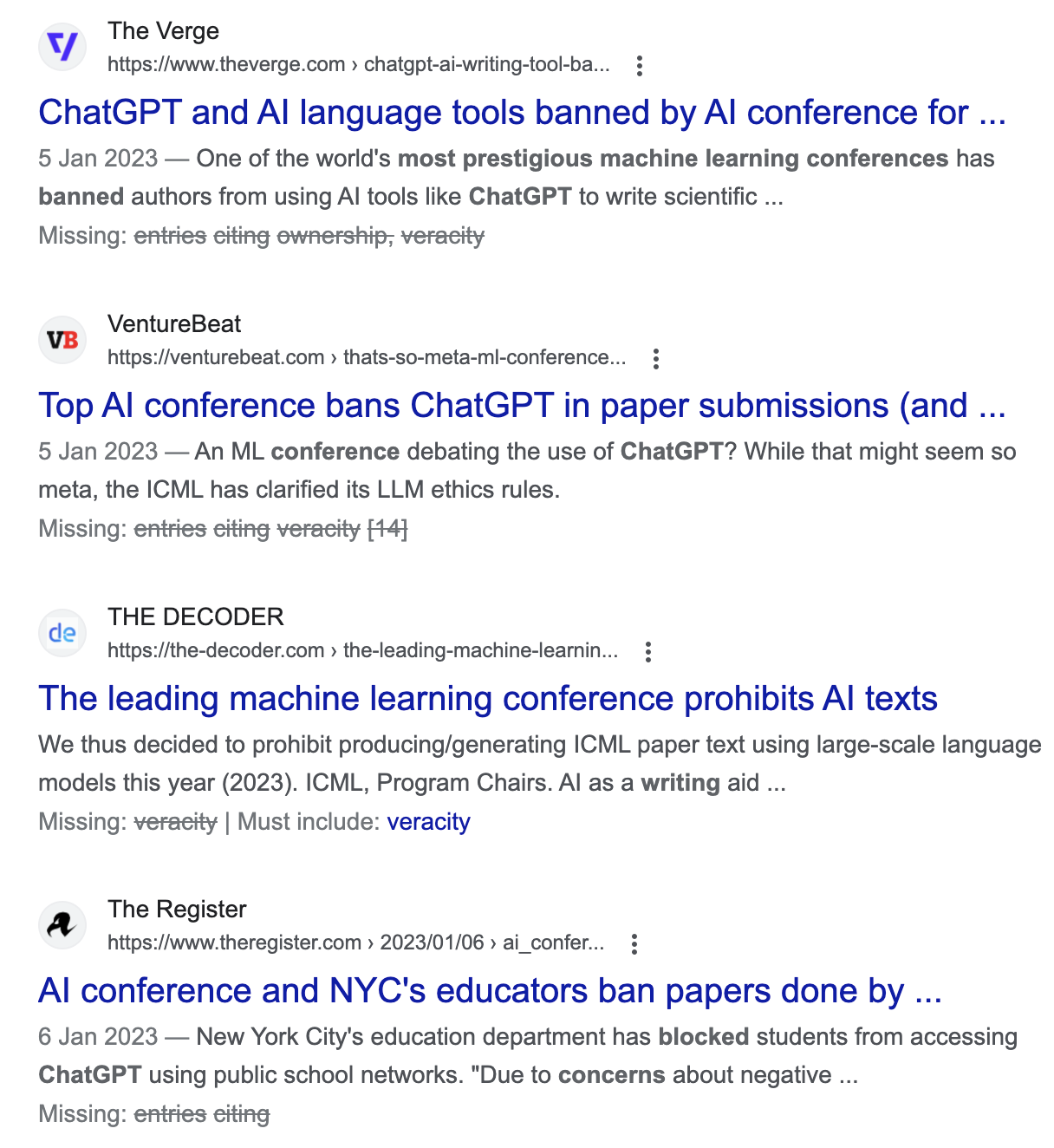

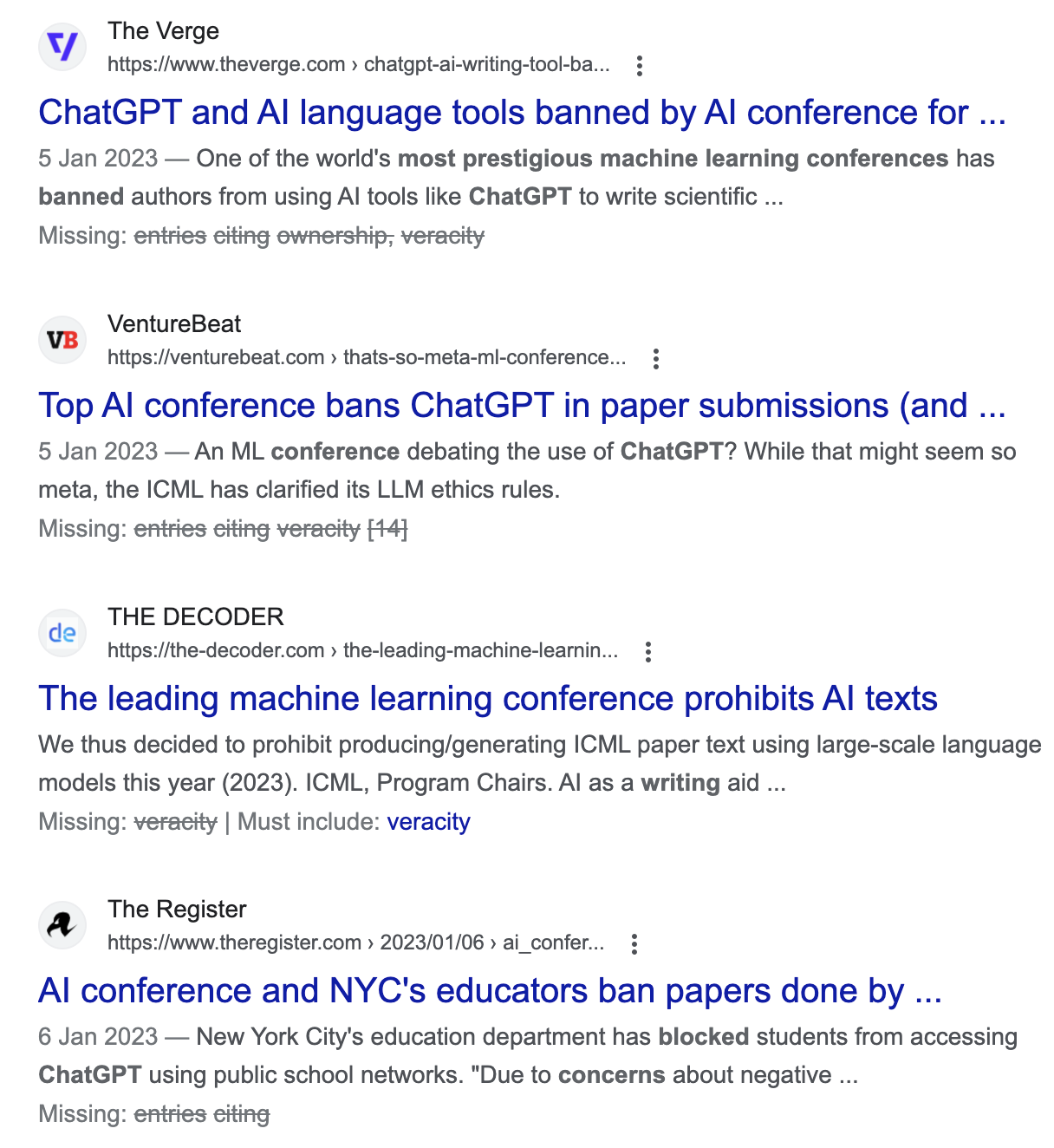

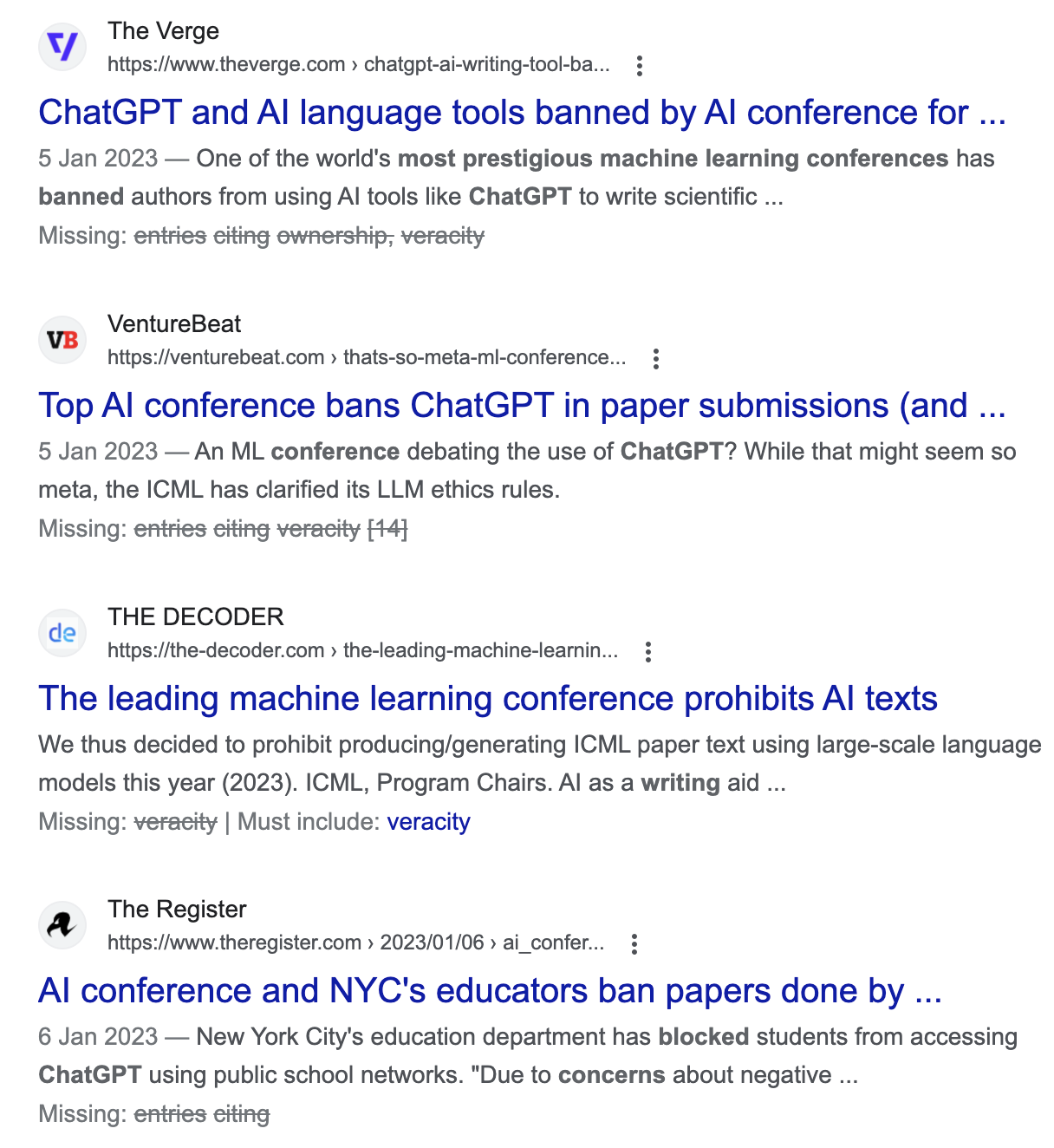

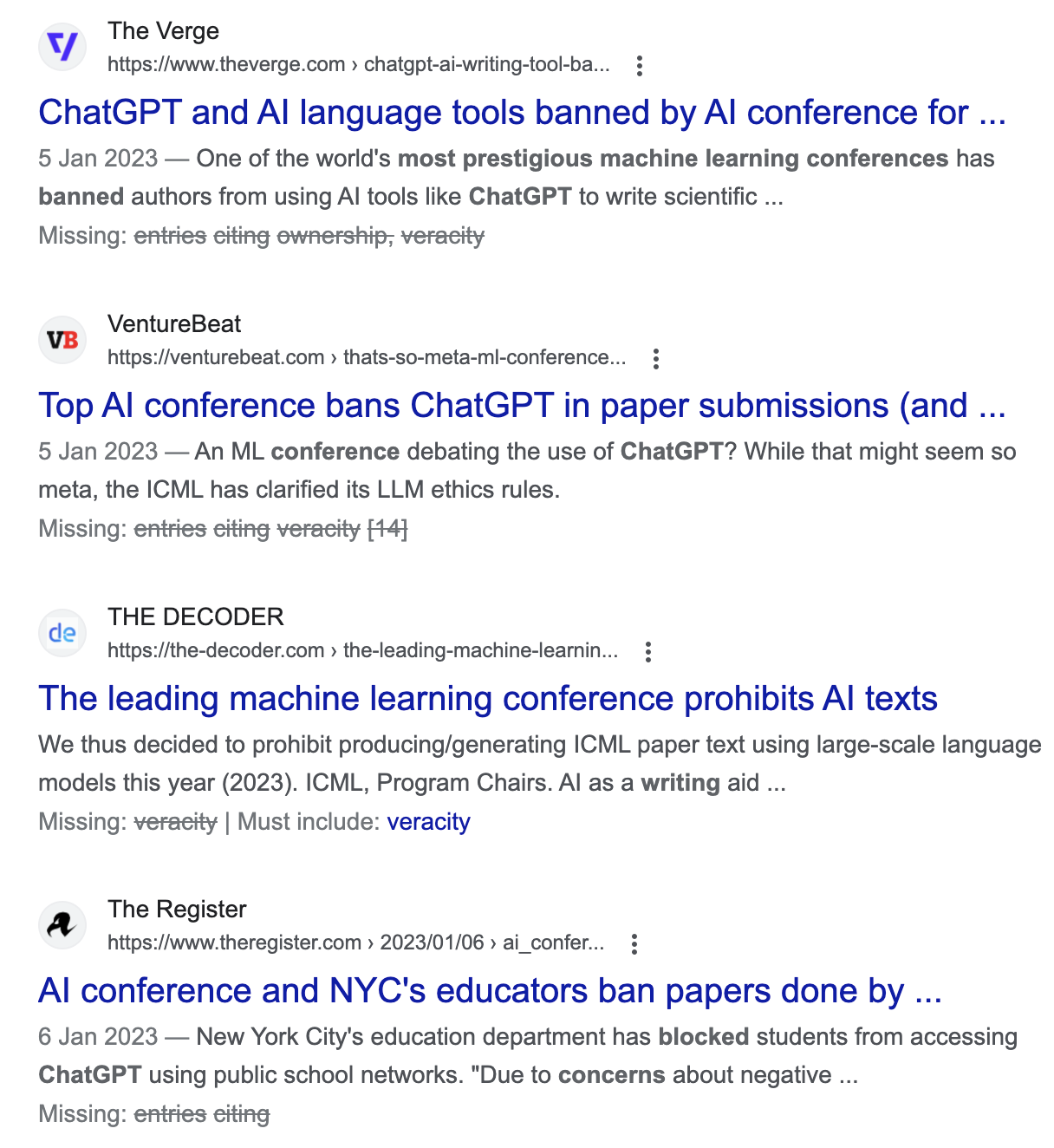

Similarly, there is no question that ChatGPT can write very confident-sounding and compelling content, to the point of fooling medical researchers [13]...

...but this doesn’t mean that the content is accurate, and even one of the most prestigious machine learning conferences recently banned entries written by ChatGPT, citing ownership, privacy and veracity concerns [14].

Its developers are aware of this issue, and although they say they are working on improving the tool, they admit that it can create “toxic or biased outputs, make up facts, and generate sexual and violent content without explicit prompting” [15].

The rapid progress of AI tools comes with unintended consequences. These tools know what language sounds like but have no direct grasp of reality, so the implications for misinformation and for perpetuating harmful stereotypes should be considered very seriously.

Behavioral Science + AI + Ethics

Although these issues affect the field of AI more generally, it’s easy to see how the combination of AI and behavioural science could lead to similar problems if it’s not done carefully and ethically.

Practitioners and researchers need to give enough consideration to which groups are represented in the data, how they are represented, and what we intend to achieve.

Using a behavioural science lens combined with the power of AI offers the potential for more effective behaviour change interventions.

In order to realise this potential, however, we need to get it right and address some outstanding issues.

1. Not everybody trusts algorithms

For the combination of behavioural science and AI to succeed in creating more effective experiences that benefit users, we need to build their trust.

However, some research shows that people can have difficulty trusting predictions or decisions made by algorithms, even when they see them outperform a human decision-maker [16, 17].

To realise the potential of behavioural science and AI we need to work on earning the trust of users by making the black box transparent. Behavioural science can help here by giving AI models a scientific foundation and explaining how conclusions are reached. This is the issue at the core of explainable AI (XAI) [18].

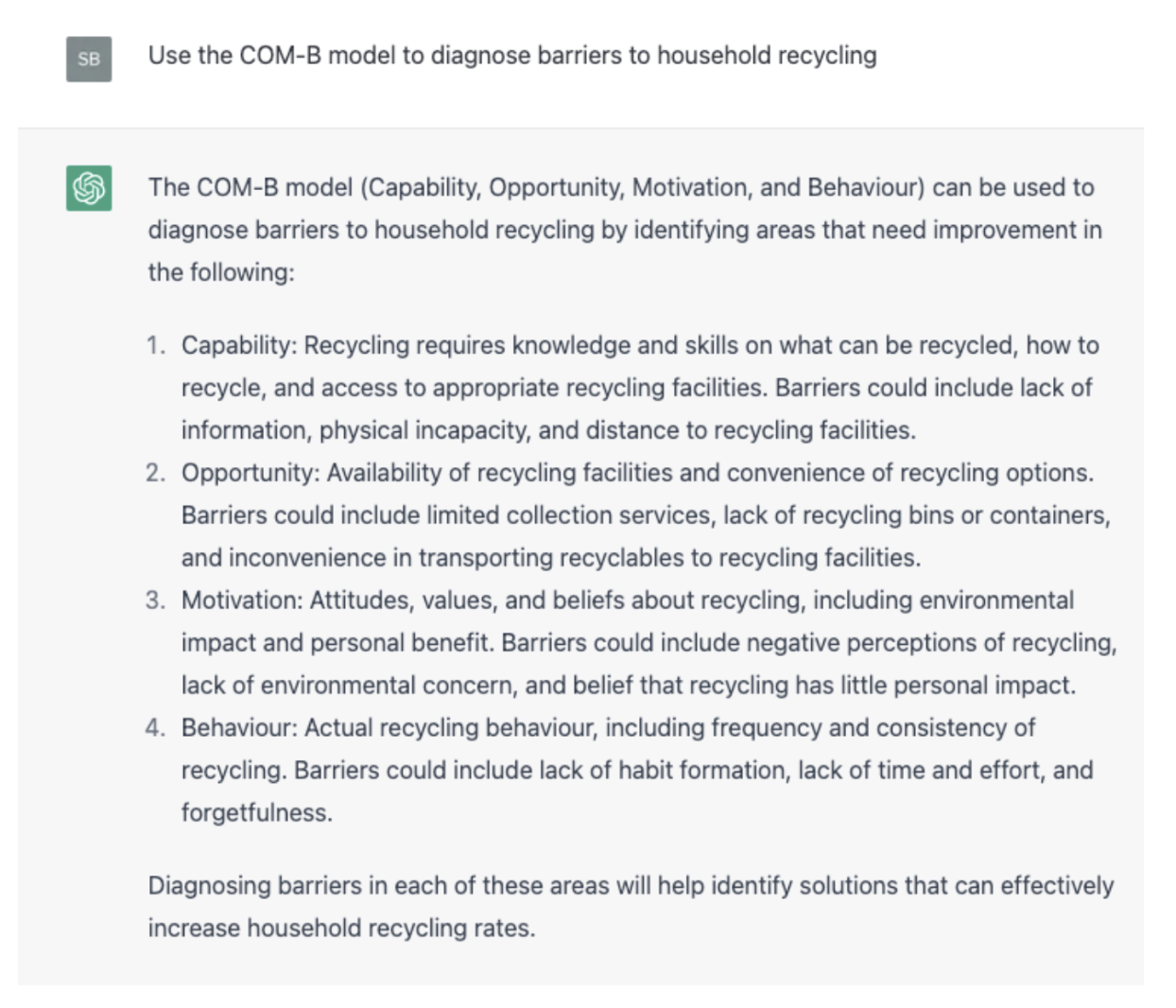

2. Will behavioural science practitioners become obsolete?

Open AI tools such as ChatGPT can definitely perform some basic behavioural diagnostics.

For example, given the right prompt, they can suggest nudges to change a behaviour, or produce a COM-B analysis that could be used as a starting point.

Although they could be useful for some initial brainstorming, ChatGPT’s answers to behavioural challenges are quite basic and generic.

Additionally, they show no understanding of the contextual factors that influence behaviour and the systems involved.

Taken together with the fact that we cannot assume the answers are accurate or based on real evidence, I personally think that Open AI tools are still far from making behavioural practitioners obsolete.

Final takeaways 🧠

1. BehSci + AI

- AI involves machines with human-like intelligence that can perform tasks related to human intelligence.

- Incorporating models from behavioural science into AI can result in more tailored and satisfactory recommendations.

2. Combined Power

- Personalisation is an essential part of the behavioural science toolkit.

- Combining AI with behavioural science offers the potential for even more personalised experiences, reaching a larger, more diverse population.

3. Lirio

- Lirio combines the two to design personalised email communications to move people towards target behaviours.

- The ML algorithm retrieves and assembles messages that are crafted to address specific barriers and prompt action.

4. Humu

- Humu combines the two to personalise nudges that encourage people to adopt better ways of working.

- Humu gathers company insights and employee feedback and data to generate these personalised nudges.

What did you think about this case study? Let us know so we can improve!

References

[1] https://www.turing.ac.uk/research/research-programmes-finance-and-economics/behavioural-data-science

[2] Ford, K. L., West, A. B., Bucher, A., & Osborn, C. Y. (2022). Personalized Digital Health Communications to Increase COVID-19 Vaccination in Underserved Populations: A Double Diamond Approach to Behavioral Design. Frontiers in Digital Health, 4.

[3] https://www.humu.com/blog/humu-nudge-engine-make-work-better

[4] Michie, S., Van Stralen, M. M., & West, R. (2011). The behaviour change wheel: a new method for characterising and designing behaviour change interventions. Implementation Science, 6(1), 1-12.

[5] https://www.washingtonpost.com/blogs/govbeat/wp/2015/07/08/why-googles-nightmare-ai-is-putting-demon-puppies-everywhere/

[6] https://algorithmwatch.org/en/google-vision-racism/

[7] https://www.nature.com/articles/d41586-018-05707-8

[9] https://www.amazon.co.uk/Invisible-Women-Exposing-World-Designed/dp/1784741728

[10] https://www.nature.com/news/there-is-a-blind-spot-in-ai-research-1.20805

[11] https://www.theguardian.com/society/2020/oct/28/nearly-half-of-councils-in-great-britain-use-algorithms-to-help-make-claims-decisions

[12] https://www.technologyreview.com/2022/11/18/1063487/meta-large-language-model-ai-only-survived-three-days-gpt-3-science/

[13] https://www.nature.com/articles/d41586-023-00056-7

[14] https://icml.cc/Conferences/2023/llm-policy

[15] https://openai.com/blog/instruction-following/

[16] Dietvorst, B. J., Simmons, J. P., & Massey, C. (2015). Algorithm aversion: people erroneously avoid algorithms after seeing them err. Journal of Experimental Psychology: General, 144(1), 114.

[17] Cabitza, F. (2019, September). Biases affecting human decision making in AI-supported second opinion settings. In International Conference on Modelling Decisions for Artificial Intelligence (pp. 283-294). Springer, Cham.

[18] https://www.forbes.com/sites/cognitiveworld/2019/07/23/understanding-explainable-ai/?sh=67163ab7c9ef

[19] Behavioral Scientist vs AI - Whose nudges are better?
