Multi-agent systems

in production

2024-09-09 Vessl/Pinecone meetup

What are we talking about?

- What is LlamaIndex

- Why you should use it

- What can it do

- Retrieval augmented generation

- World class parsing

- Agents and multi-agent systems

What is LlamaIndex?

Python: docs.llamaindex.ai

TypeScript: ts.llamaindex.ai

LlamaParse

Free for 1000 pages/day!

LlamaCloud

1. Sign up:

LlamaHub

- Data loaders

- Embedding models

- Vector stores

- LLMs

- Agent tools

- Pre-built strategies

- More!

Why LlamaIndex?

- Build faster

- Skip the boilerplate

- Avoid early pitfalls

What can LlamaIndex

do for me?

Basic RAG pipeline

Loading

RAG, step 1:

documents = SimpleDirectoryReader("data").load_data()Parsing

RAG, step 2:

(LlamaParse: it's really good. Really!)

# must have a LLAMA_CLOUD_API_KEY

# bring in deps

from llama_parse import LlamaParse

from llama_index.core import SimpleDirectoryReader

# set up parser

parser = LlamaParse(

result_type="markdown" # "text" also available

)

# use SimpleDirectoryReader to parse our file

file_extractor = {".pdf": parser}

documents = SimpleDirectoryReader(

input_files=['data/canada.pdf'],

file_extractor=file_extractor

).load_data()

print(documents)Embedding

RAG, step 3:

Settings.embed_model = HuggingFaceEmbedding(model_name="BAAI/bge-small-en-v1.5")Storing

RAG, step 4:

index = VectorStoreIndex.from_documents(documents)Retrieving

RAG, step 5:

retriever = index.as_retriever()

nodes = retriever.retrieve("Who is Paul Graham?")Querying

RAG, step 6:

query_engine = index.as_query_engine()

response = query_engine.query("Who is Paul Graham?")Multi-modal

npx create-llama

Limitations of RAG

Summarization

Naive RAG failure modes:

Comparison

Naive RAG failure modes:

Multi-part questions

Naive RAG failure modes:

RAG is necessary

but not sufficient

Two ways

to improve RAG:

- Improve your data

- Improve your querying

What is an agent anyway?

- Semi-autonomous software

- Accepts a goal

- Uses tools to achieve that goal

- Exact steps to resolution not specified

RAG pipeline

⚠️ Single-shot

⚠️ No query understanding/planning

⚠️ No tool use

⚠️ No reflection, error correction

⚠️ No memory (stateless)

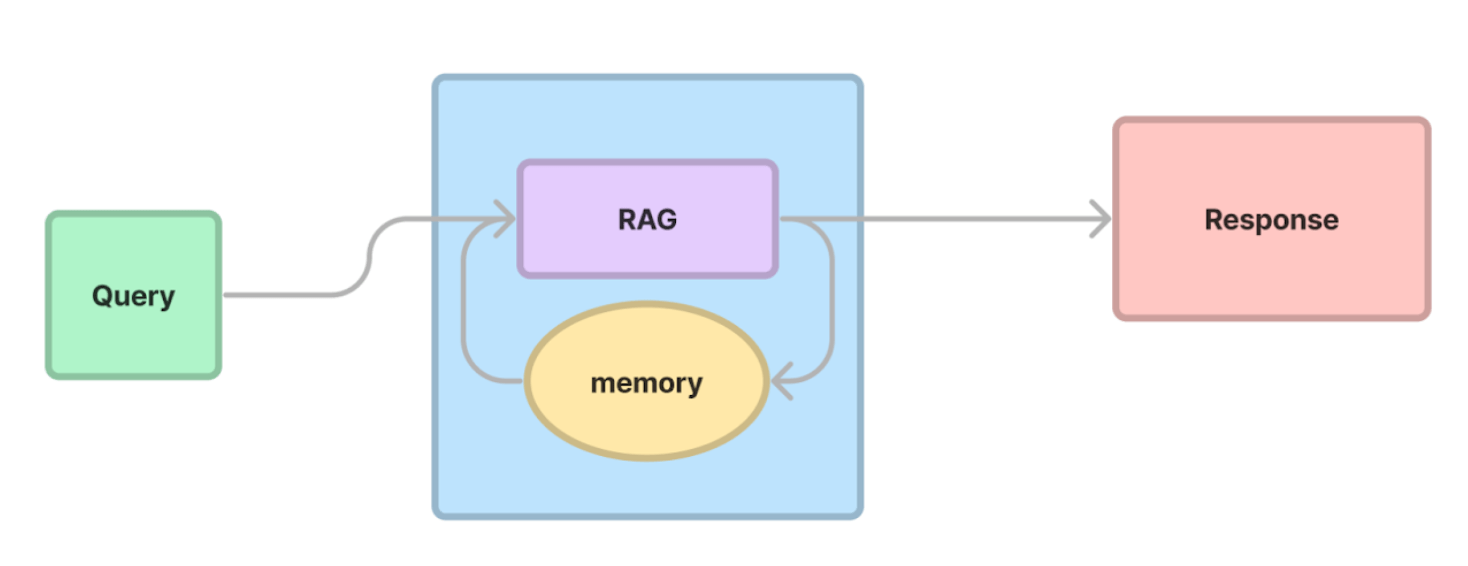

Agentic RAG

✅ Multi-turn

✅ Query / task planning layer

✅ Tool interface for external environment

✅ Reflection

✅ Memory for personalization

From simple to advanced agents

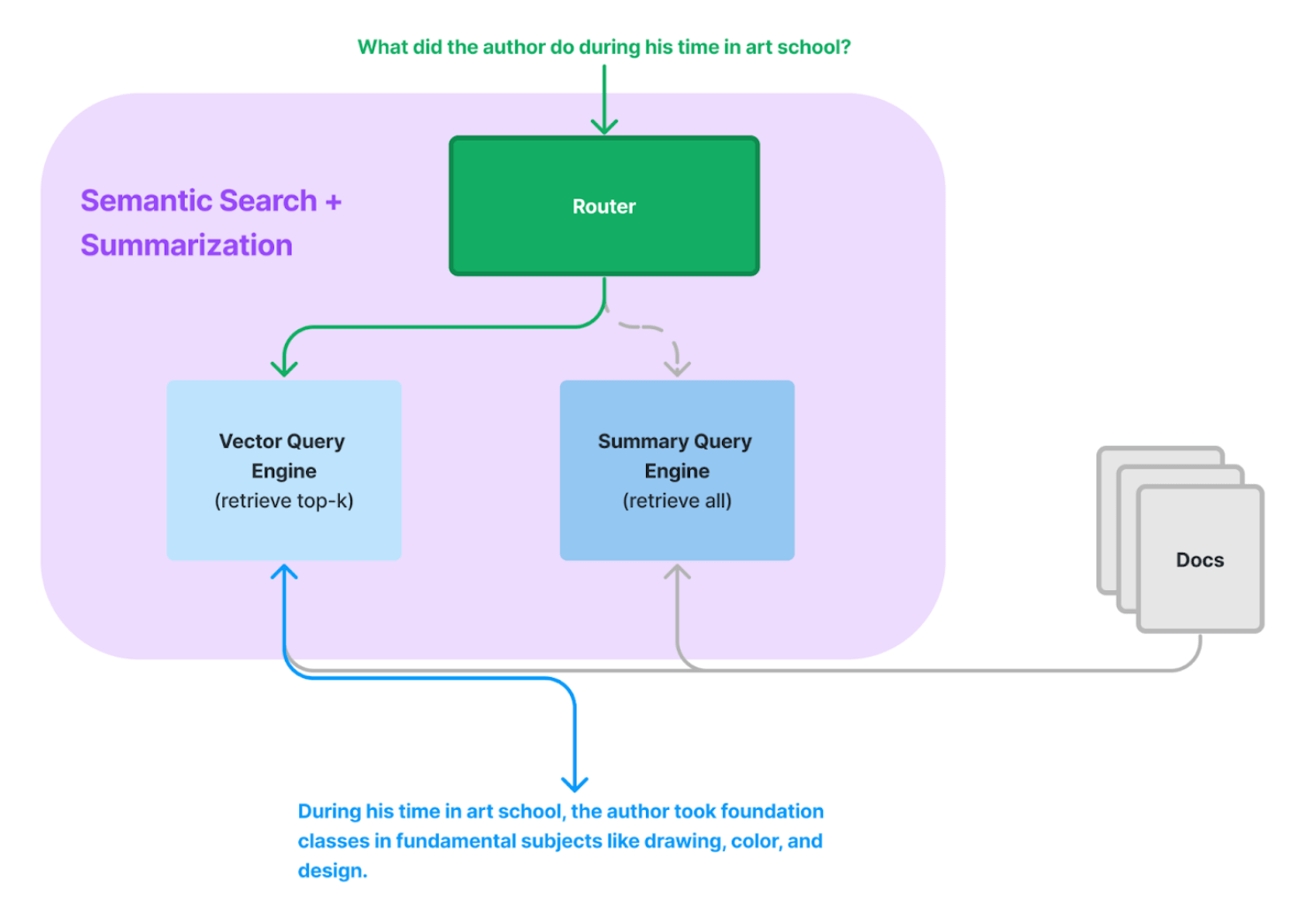

Routing

RouterQueryEngine

list_tool = QueryEngineTool.from_defaults(

query_engine=list_query_engine,

description=(

"Useful for summarization questions related to Paul Graham eassy on"

" What I Worked On."

),

)

vector_tool = QueryEngineTool.from_defaults(

query_engine=vector_query_engine,

description=(

"Useful for retrieving specific context from Paul Graham essay on What"

" I Worked On."

),

)

query_engine = RouterQueryEngine(

selector=LLMSingleSelector.from_defaults(),

query_engine_tools=[

list_tool,

vector_tool,

],

)Conversation memory

Chat Engine

# load and parse

documents = SimpleDirectoryReader("data").load_data()

# embed and index

index = VectorStoreIndex.from_documents(documents)

# generate chat engine

chat_engine = index.as_chat_engine()

# start chatting

response = query_engine.chat("What did the author do growing up?")

print(response)Query planning

Sub Question Query Engine

# set up list of tools

query_engine_tools = [

QueryEngineTool(

query_engine=vector_query_engine,

metadata=ToolMetadata(

name="pg_essay",

description="Paul Graham essay on What I Worked On",

),

),

# more query engine tools here

]

# create engine from tools

query_engine = SubQuestionQueryEngine.from_defaults(

query_engine_tools=query_engine_tools,

use_async=True,

)Tool use

Tools unleash the power of LLMs

Basic ReAct agent

# define sample Tool

def multiply(a: int, b: int) -> int:

"""Multiply two integers and returns the result integer"""

return a * b

multiply_tool = FunctionTool.from_defaults(fn=multiply)

# initialize ReAct agent

agent = ReActAgent.from_tools(

[

multiply_tool

# other tools here

],

verbose=True

)Combine agentic strategies

and then go further

- Routing

- Memory

- Planning

- Tool use

Agentic strategies

- Multi-turn

- Reasoning

- Reflection

Full agent

3 agent

reasoning loops

- Sequential

- DAG-based

- Tree-based

Sequential reasoning

ReAct in action

Thought: I need to use a tool to help me answer the question.

Action: multiply

Action Input: {"a": 2, "b": 4}

Observation: 8

Thought: I need to use a tool to help me answer the question.

Action: add

Action Input: {"a": 20, "b": 8}

Observation: 28

Thought: I can answer without using any more tools.

Answer: 28DAG-based reasoning

Self reflection

Structured Planning Agent

# create the function calling worker for reasoning

worker = FunctionCallingAgentWorker.from_tools(

[lyft_tool, uber_tool], verbose=True

)

# wrap the worker in the top-level planner

agent = StructuredPlannerAgent(

worker, tools=[lyft_tool, uber_tool], verbose=True

)

response = agent.chat(

"Summarize the key risk factors for Lyft and Uber in their 2021 10-K filings."

)The Plan

=== Initial plan ===

Extract Lyft Risk Factors:

Summarize the key risk factors from Lyft's 2021 10-K filing. -> A summary of the key risk factors for Lyft as outlined in their 2021 10-K filing.

deps: []

Extract Uber Risk Factors:

Summarize the key risk factors from Uber's 2021 10-K filing. -> A summary of the key risk factors for Uber as outlined in their 2021 10-K filing.

deps: []

Combine Risk Factors Summaries:

Combine the summaries of key risk factors for Lyft and Uber from their 2021 10-K filings into a comprehensive overview. -> A comprehensive summary of the key risk factors for both Lyft and Uber as outlined in their respective 2021 10-K filings.

deps: ['Extract Lyft Risk Factors', 'Extract Uber Risk Factors']Tree-based reasoning

Exploration vs exploitation

Language Agent Tree Search

agent_worker = LATSAgentWorker.from_tools(

query_engine_tools,

llm=llm,

num_expansions=2,

max_rollouts=3,

verbose=True,

)

agent = agent.as_worker()

task = agent.create_task(

"Given the risk factors of Uber and Lyft described in their 10K files, "

"which company is performing better? Please use concrete numbers to inform your decision."

)Workflows

Why workflows?

Workflows primer

from llama_index.llms.openai import OpenAI

class OpenAIGenerator(Workflow):

@step()

async def generate(self, ev: StartEvent) -> StopEvent:

query = ev.get("query")

llm = OpenAI()

response = await llm.acomplete(query)

return StopEvent(result=str(response))

w = OpenAIGenerator(timeout=10, verbose=False)

result = await w.run(query="What's LlamaIndex?")

print(result)Looping

class LoopExampleFlow(Workflow):

@step()

async def answer_query(self, ev: StartEvent | QueryEvent ) -> FailedEvent | StopEvent:

query = ev.query

# try to answer the query

random_number = random.randint(0, 1)

if (random_number == 0):

return FailedEvent(error="Failed to answer the query.")

else:

return StopEvent(result="The answer to your query")

@step()

async def improve_query(self, ev: FailedEvent) -> QueryEvent | StopEvent:

# improve the query or decide it can't be fixed

random_number = random.randint(0, 1)

if (random_number == 0):

return QueryEvent(query="Here's a better query.")

else:

return StopEvent(result="Your query can't be fixed.")

l = LoopExampleFlow(timeout=10, verbose=True)

result = await l.run(query="What's LlamaIndex?")

print(result)Visualization

draw_all_possible_flows()Keeping state

class RAGWorkflow(Workflow):

@step(pass_context=True)

async def ingest(self, ctx: Context, ev: StartEvent) -> Optional[StopEvent]:

dataset_name = ev.dataset

documents = SimpleDirectoryReader("data").load_data()

ctx.data["INDEX"] = VectorStoreIndex.from_documents(documents=documents)

return StopEvent(result=f"Indexed {len(documents)} documents.")

...Workflows enable arbitrarily complex applications

Customizability

class MyWorkflow(RAGWorkflow):

@step(pass_context=True)

def rerank(

self, ctx: Context, ev: Union[RetrieverEvent, StartEvent]

) -> Optional[QueryResult]:

# my custom reranking logic here

w = MyWorkflow(timeout=60, verbose=True)

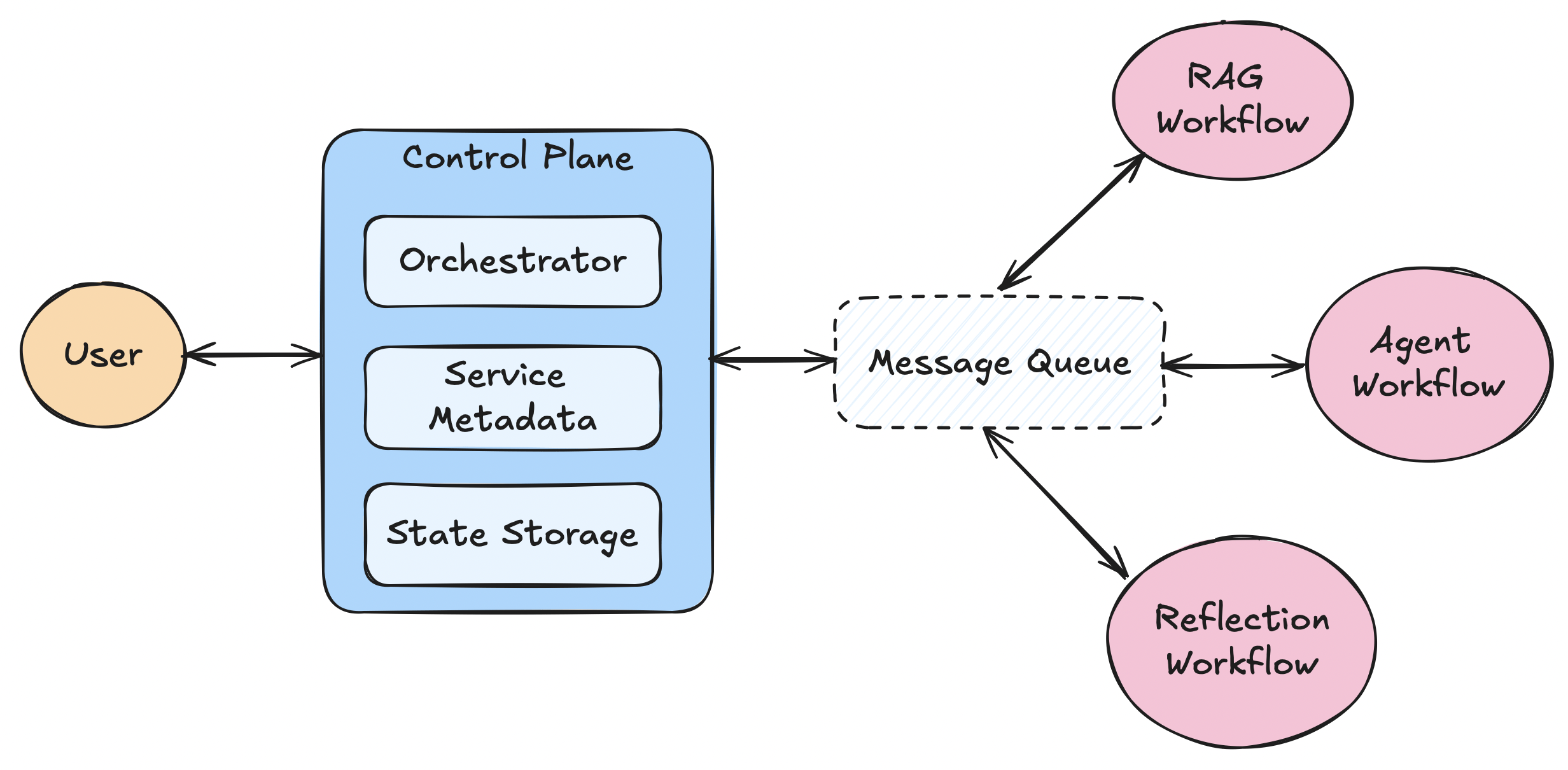

result = await w.run(query="Who is Paul Graham?")Multi-agent workflow

Multi-agent concierge

Full-stack python workflow

Deploying agents

to production

pip install llama-deploy

Agents as microservices

Deploying the core system

from llama_deploy import (

deploy_core,

ControlPlaneConfig,

SimpleMessageQueueConfig,

)

async def main():

await deploy_core(

control_plane_config=ControlPlaneConfig(),

message_queue_config=SimpleMessageQueueConfig(),

)

if __name__ == "__main__":

import asyncio

asyncio.run(main())Deploying a workflow

from llama_deploy import (

deploy_workflow,

WorkflowServiceConfig,

ControlPlaneConfig,

SimpleMessageQueueConfig,

)

from llama_index.core.workflow import Workflow, StartEvent, StopEvent, step

from my_workflow import MyWorkflow

async def main():

await deploy_workflow(

workflow=MyWorkflow(),

workflow_config=WorkflowServiceConfig(

host="127.0.0.1", port=8002, service_name="my_workflow"

),

control_plane_config=ControlPlaneConfig(),

)

if __name__ == "__main__":

import asyncio

asyncio.run(main())Nested workflows

from llama_index.core.workflow import Workflow, StartEvent, StopEvent, step

class InnerWorkflow(Workflow):

@step()

async def run_step(self, ev: StartEvent) -> StopEvent:

arg1 = ev.get("arg1")

if not arg1:

raise ValueError("arg1 is required.")

return StopEvent(result=str(arg1) + "_result")

class OuterWorkflow(Workflow):

@step()

async def run_step(

self, ev: StartEvent, inner: InnerWorkflow

) -> StopEvent:

arg1 = ev.get("arg1")

if not arg1:

raise ValueError("arg1 is required.")

arg1 = await inner.run(arg1=arg1)

return StopEvent(result=str(arg1) + "_result")

inner = InnerWorkflow()

outer = OuterWorkflow()

outer.add_workflows(inner=InnerWorkflow())Try out llama-deploy

Recap

- What is LlamaIndex

- LlamaCloud, LlamaHub, create-llama

- Why RAG is necessary

- How to build RAG in LlamaIndex

- Limitations of RAG

- Agentic RAG

- Routing, memory, planning, tool use

- Reasoning patterns

- Sequential, DAG-based, tree-based

- Workflows

- Loops, state, customizability

- Deploying workflows

What's next?

Thanks!

Follow me on Twitter/X:

@seldo

Please don't add me on LinkedIn.