Lecture 4: Linear Classification

Intro to Machine Learning

Recap:

"Learn" a model: train, optimize, tune, adapt ...

- adjusting/updating/finding \(\theta\)

- gradient based

"Use" a model: predict, test, evaluate, infer ...

- plug in the \(\theta\) found

- no gradients involved

\(\mathcal{D}_\text{train}\) → 🧠⚙️ Regression Algorithm (hypothesis class, loss function, hyperparameters) → regressor

Today:

\(\mathcal{D}_\text{train}\) → 🧠⚙️ Classification Algorithm (hypothesis class, loss function, hyperparameters) → classifier

Labels now come from a discrete set, e.g. \(\{0,1\}\), \(\{😍, 🥺\}\), {"good", "better", "best", ...}, {"Fish", "Grizzly", "Chameleon", ...}

Outline

- Linear (binary) classifiers

- to use: separator, normal vector

- to learn: very difficult!

- Linear logistic (binary) classifiers

- Linear multi-class classifiers

-

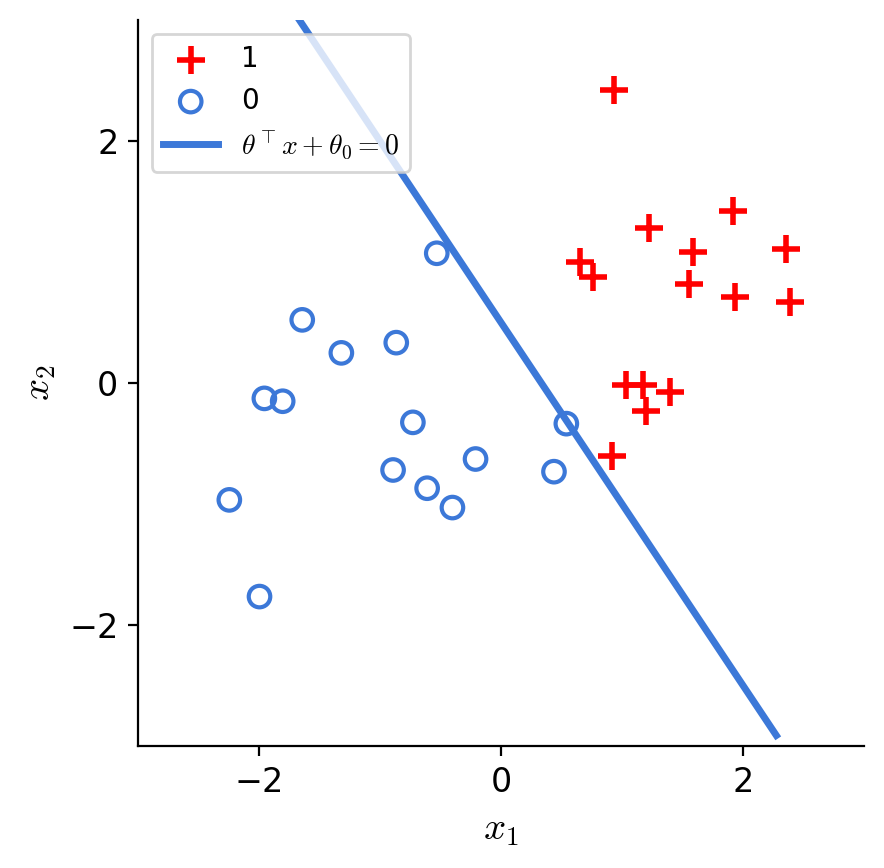

Linear (binary) classifiers

- to use: separator, normal vector

- to learn: very difficult!

- Linear logistic (binary) classifiers

- Linear multi-class classifiers

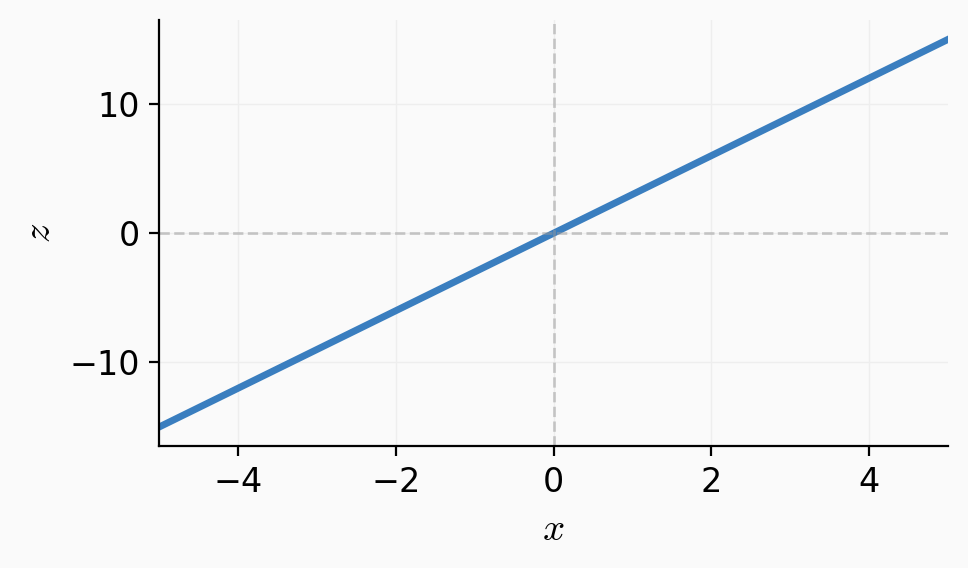

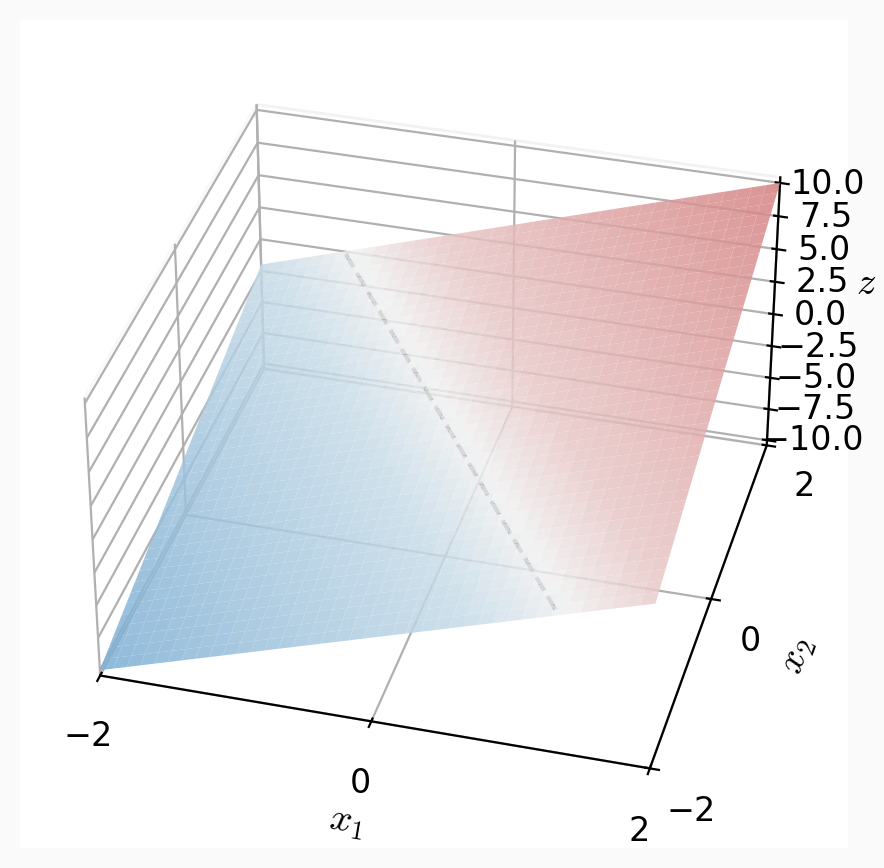

| | linear regressor | linear binary classifier |
|---|---|---|
| features | \(x \in \mathbb{R}^d\) | \(x \in \mathbb{R}^d\) |
| parameters | \(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\) | \(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\) |
| linear combination | \(\theta^T x +\theta_0 = z\) | \(\theta^T x +\theta_0 = z\) |
| predict | \(g = z\) | \(g = 1\) if \(z > 0\), otherwise \(g = 0\) |
| label | \(y\in \mathbb{R}\) | \(y\in \{0,1\}\) |

today, we refer to \(\theta^T x +\theta_0\) as \(z\) throughout.
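The prediction rule of the linear binary classifier (compute \(z = \theta^T x + \theta_0\), then predict 1 if \(z > 0\) and 0 otherwise) can be sketched in a few lines of plain Python. The function names and the parameter values below are made up for illustration; they are not from the lecture.

```python
def linear_logit(theta, theta_0, x):
    """Return z = theta^T x + theta_0 for one feature vector x."""
    return sum(t * xi for t, xi in zip(theta, x)) + theta_0


def predict_binary(theta, theta_0, x):
    """Linear binary classifier: predict 1 if z > 0, otherwise 0."""
    return 1 if linear_logit(theta, theta_0, x) > 0 else 0


# Hypothetical parameters for a 2-d feature space.
theta, theta_0 = [1.0, -2.0], 0.5
print(predict_binary(theta, theta_0, [3.0, 1.0]))  # z = 3 - 2 + 0.5 = 1.5
print(predict_binary(theta, theta_0, [0.0, 1.0]))  # z = 0 - 2 + 0.5 = -1.5
```

The first point lies on the positive side of the separator and the second on the negative side, so the two predictions differ.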

Outline

- Linear (binary) classifiers

- to use: separator, normal vector

- to learn: very difficult!

- Linear logistic (binary) classifiers

- Linear multi-class classifiers

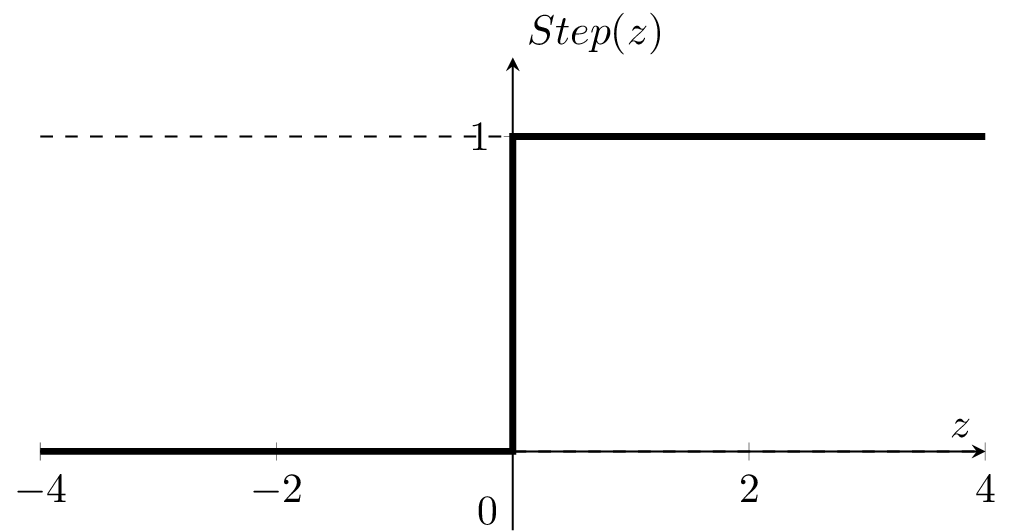

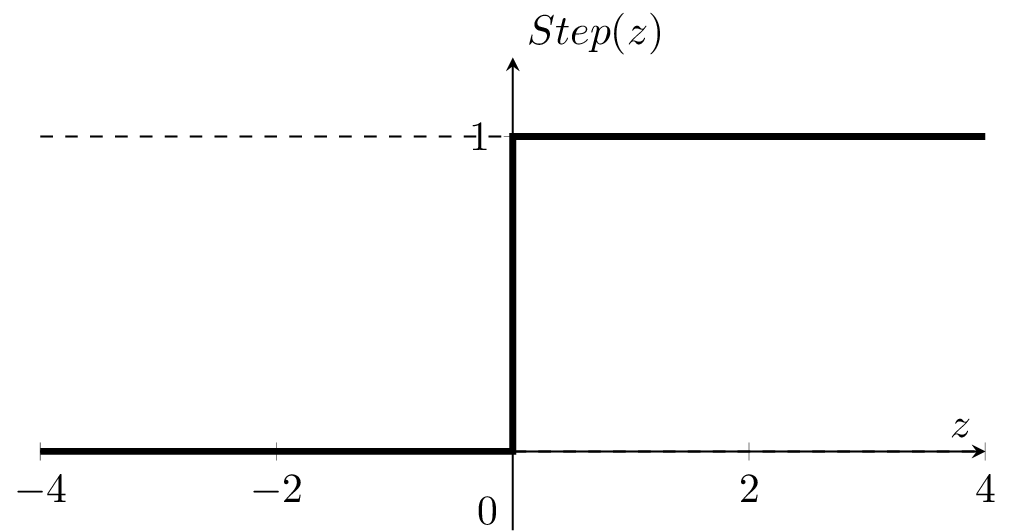

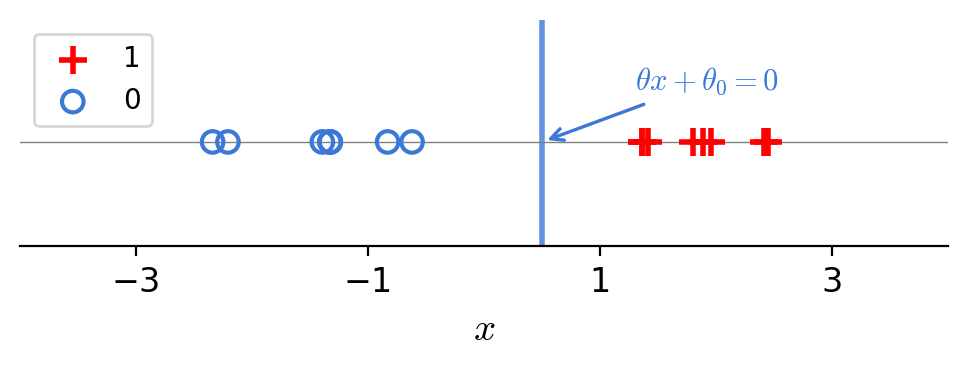

- To learn a model, need a loss function.

- Very intuitive, and easy to evaluate 😍

- One natural loss choice:

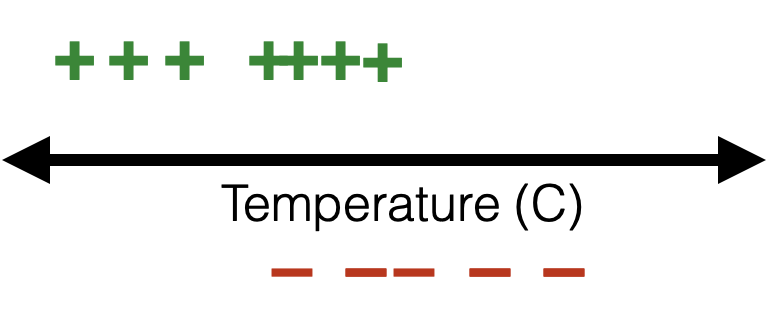

\({J}_{01}(\theta)\) very hard to optimize (NP-hard) 🥺

- "Flat" almost everywhere (zero gradient \(\nabla_{\theta}{J}_{01}(\theta)\))

- "Jumps" elsewhere (no gradient)
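To see the "flat almost everywhere, jumps elsewhere" behavior concretely, here is a minimal sketch that evaluates the 0-1 training error of a 1-d linear binary classifier while sweeping the offset \(\theta_0\). The tiny data set, the fixed \(\theta = 1\), and the function names are assumptions chosen for illustration.

```python
def predict(theta, theta_0, x):
    """Linear binary classifier on a 1-d feature: 1 if z > 0, else 0."""
    return 1 if theta * x + theta_0 > 0 else 0


def training_error_01(theta, theta_0, data):
    """J_01: fraction of training points whose prediction differs from the label."""
    mistakes = sum(1 for x, y in data if predict(theta, theta_0, x) != y)
    return mistakes / len(data)


# Hypothetical 1-d training set of (feature, label) pairs.
data = [(-2.0, 0), (-1.0, 0), (1.0, 1), (2.0, 1)]

# Fix theta = 1 and sweep the offset: the error takes only a few discrete values.
offsets = [-3.0, -1.5, -0.5, 0.0, 0.5, 1.5, 3.0]
errors = [training_error_01(1.0, theta_0, data) for theta_0 in offsets]
print(list(zip(offsets, errors)))
```

Between jumps the error does not change at all, so a gradient computed at a typical \(\theta_0\) is zero and says nothing about which direction to move.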

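| | linear regressor | linear binary classifier |
|---|---|---|
| features | \(x \in \mathbb{R}^d\) | \(x \in \mathbb{R}^d\) |
| label | \(y \in \mathbb{R}\) | \(y \in \{0,1\}\) |
| parameters | \(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\) | \(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\) |
| linear combo | \(\theta^T x +\theta_0 = z\) | \(\theta^T x +\theta_0 = z\) |
| predict | \(g = z\) | \(g = 1\) if \(z > 0\), otherwise \(g = 0\) |
| loss | squared loss \((g - y)^2\) | 0-1 loss \(\mathcal{L}_{01}(g, y)\) |
| optimize method | closed-form formula, or gradient descent | training error almost "flat" w.r.t. \(\theta\); gradient gives very little info |

Why the gradient gives very little info for the linear binary classifier:

- \(\mathcal{L}_{01}(g, y)\) is "flat" and discrete in \(g\)

- \(g\) is "flat" and discrete in \(\theta\)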

Outline

- Linear (binary) classifiers

-

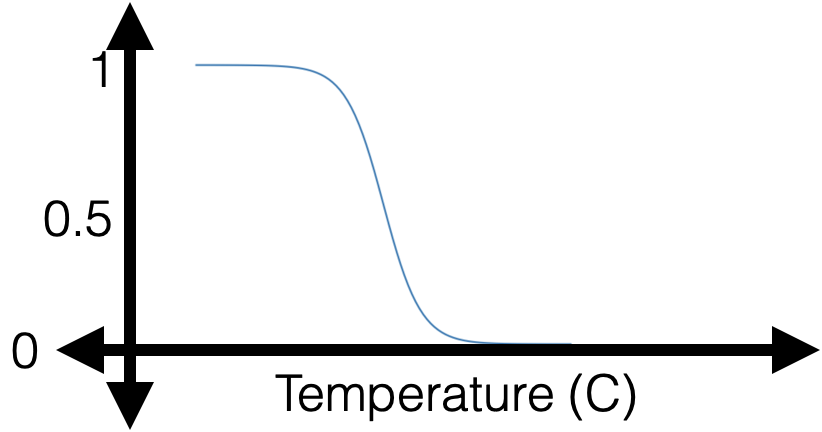

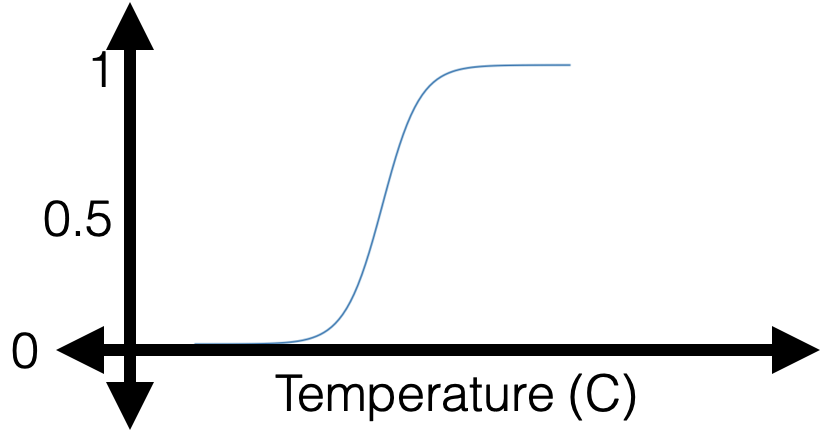

Linear logistic (binary) classifiers

- to use: sigmoid

- to learn: negative log-likelihood loss

- Linear multi-class classifiers

linear binary classifier

features

parameters

linear combo

predict

\(x \in \mathbb{R}^d\)

\(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\)

\(\theta^T x +\theta_0\)

\(=z\)

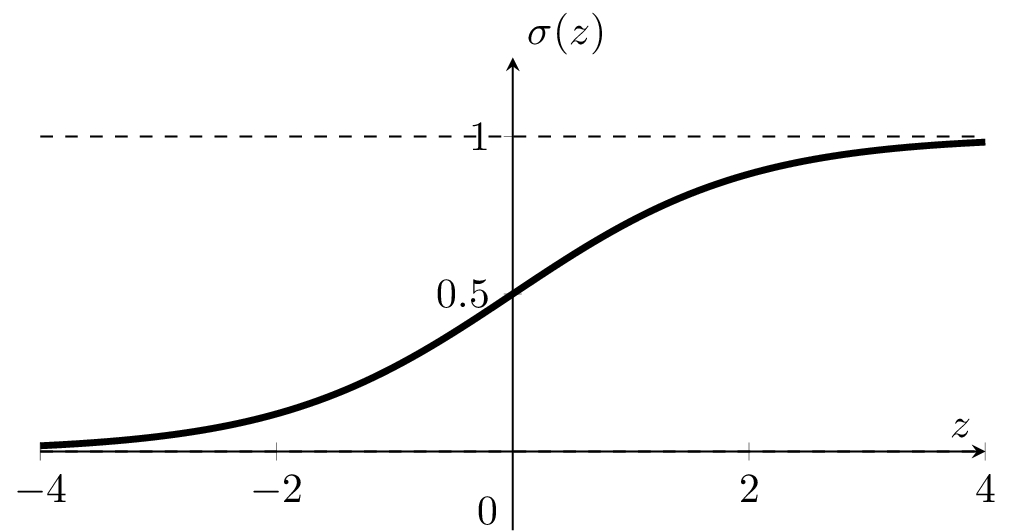

linear logistic binary classifier

if \(z > 0\)

otherwise

\(1\)

0

if \(\sigma(z) > 0.5\)

otherwise

\(1\)

0

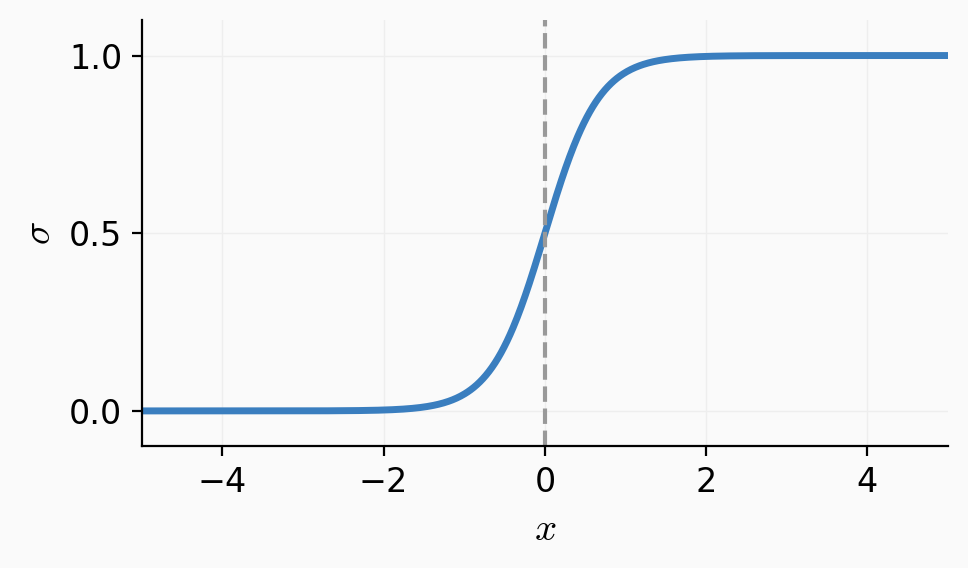

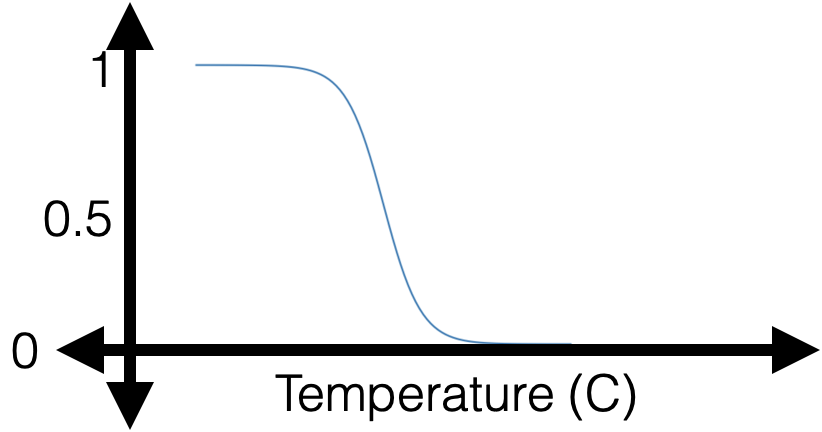

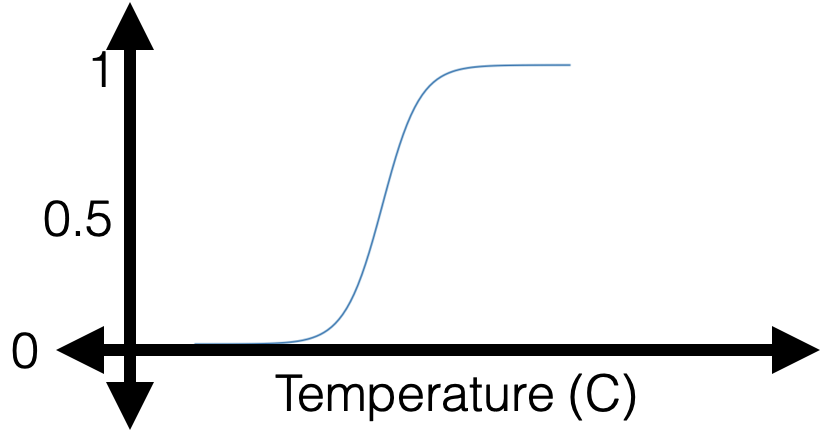

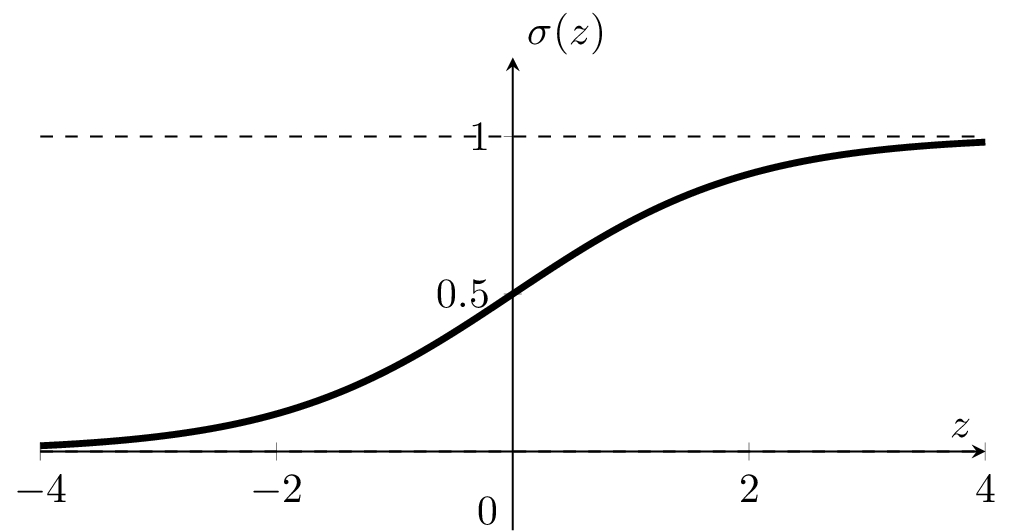

Sigmoid \(\sigma(z) = \frac{1}{1+e^{-z}}\): confidence or estimated likelihood that \(x\) belongs to the positive class

- \(\theta\), \(\theta_0\) can flip, squeeze, expand, or shift the graph of \(\sigma\left(\theta^T x + \theta_0\right)\) horizontally (viewed as a function of \(x\))

- \(\sigma\left(\cdot\right)\) is monotonic, with a very elegant gradient (see hw/lab)
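As a quick numerical companion, the sketch below implements the sigmoid in plain Python and checks a derivative identity. The identity \(\sigma'(z) = \sigma(z)\left(1-\sigma(z)\right)\) is the standard textbook result, stated here as an assumption about what the hw/lab derives rather than something taken from the slides; the two-branch form is one common way to avoid overflow for very negative \(z\).

```python
import math


def sigmoid(z):
    """sigma(z) = 1 / (1 + exp(-z)), written so that exp never overflows."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sigmoid_grad(z):
    """Standard identity: sigma'(z) = sigma(z) * (1 - sigma(z))."""
    s = sigmoid(z)
    return s * (1.0 - s)


# Compare the identity against a central finite difference at an arbitrary point.
z, h = 0.7, 1e-6
finite_diff = (sigmoid(z + h) - sigmoid(z - h)) / (2 * h)
print(sigmoid_grad(z), finite_diff)
```

The two printed numbers should agree to several decimal places, which is a cheap sanity check worth repeating whenever a gradient is derived by hand.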

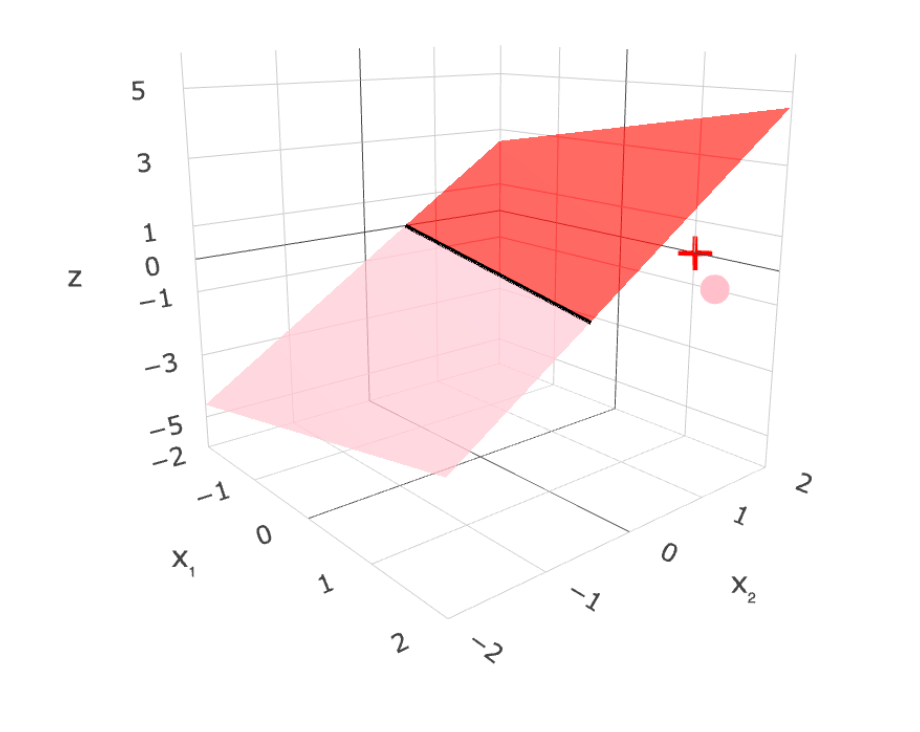

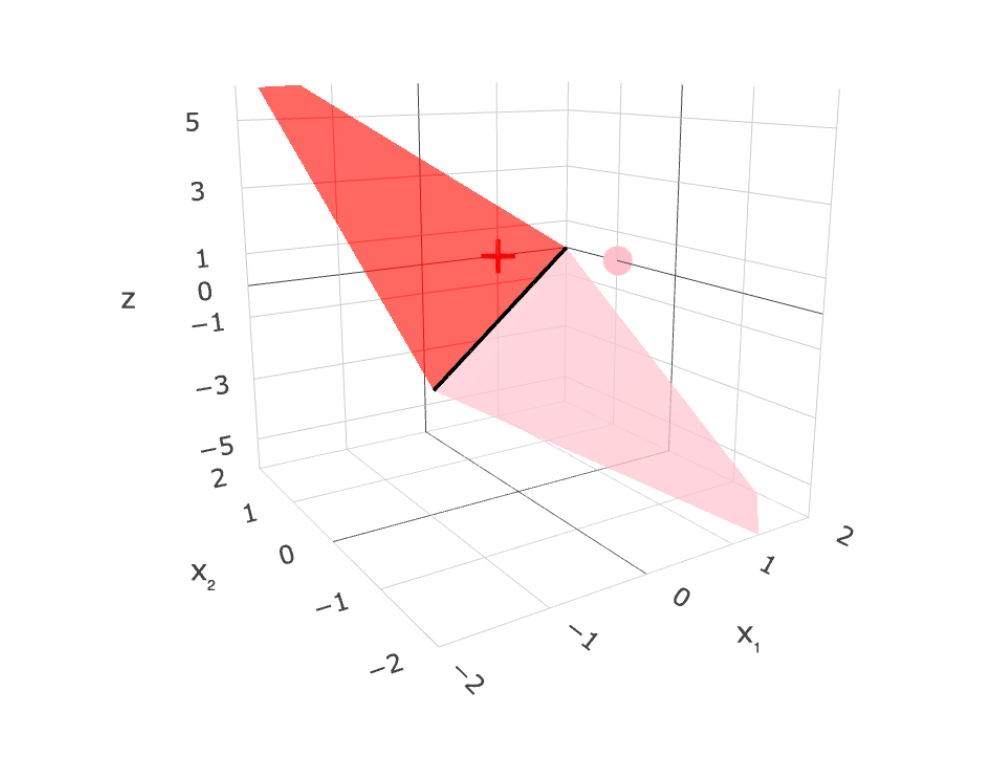

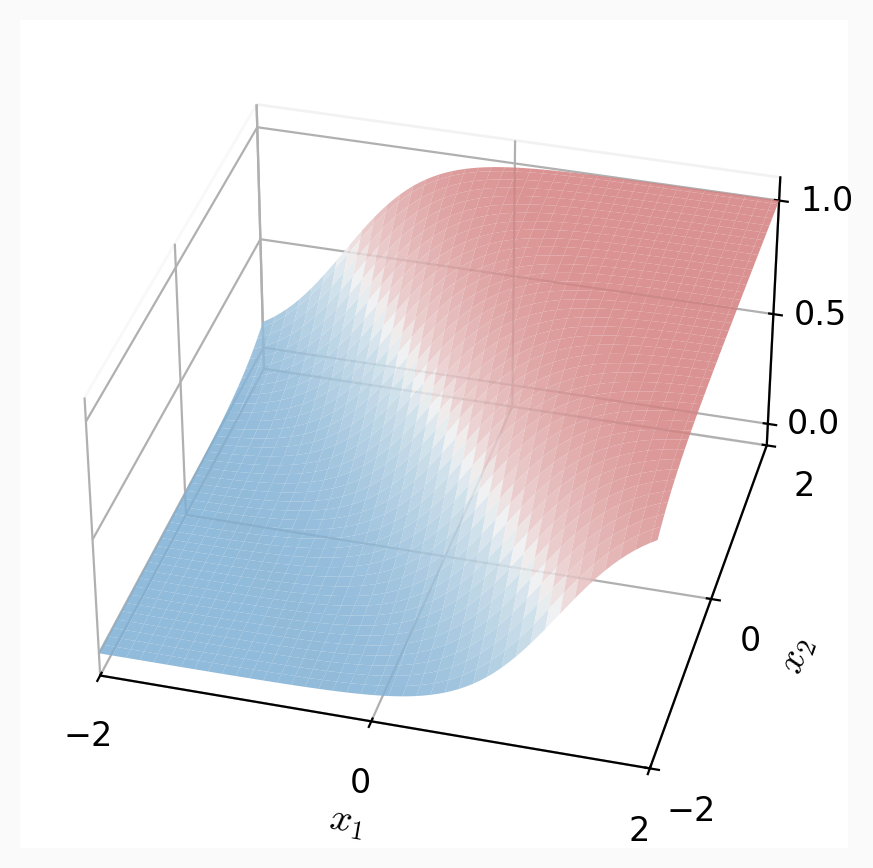

linear logistic binary classifier

1d feature

2d features

features: \(x \in \mathbb{R}^d\)

parameters: \(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\)

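the logit \(z\): \(z = \theta^T x + \theta_0\)

apply sigmoid: \(g = \sigma(z) = \frac{1}{1+e^{-z}}\)

Predict 1 if \(\sigma(z) > 0.5\), else 0.

Since \(\sigma(z) > 0.5\) exactly when \(z > 0\), the separator \(\theta^T x + \theta_0 = 0\) is linear in the feature \(x\)!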
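The full "use" pipeline of the linear logistic binary classifier (logit, then sigmoid, then threshold at 0.5) can be sketched as follows. The parameter values and test points are hypothetical, and the function names are only for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(theta, theta_0, x):
    """g = sigma(theta^T x + theta_0): estimated probability of the positive class."""
    z = sum(t * xi for t, xi in zip(theta, x)) + theta_0
    return sigmoid(z)


def predict(theta, theta_0, x):
    """Predict 1 if sigma(z) > 0.5, else 0."""
    return 1 if predict_proba(theta, theta_0, x) > 0.5 else 0


# Hypothetical 2-d parameters; the separator is the line 2*x1 - x2 - 1 = 0.
theta, theta_0 = [2.0, -1.0], -1.0
for x in ([1.0, 0.0], [0.0, 1.0], [1.0, 1.0]):
    print(x, round(predict_proba(theta, theta_0, x), 3), predict(theta, theta_0, x))
```

The third point sits exactly on the separator, where \(z = 0\) and \(\sigma(z) = 0.5\), so the strict inequality sends it to class 0.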

Outline

- Linear (binary) classifiers

- Linear logistic (binary) classifiers

- to use: sigmoid

- to learn: negative log-likelihood loss

- Linear multi-class classifiers

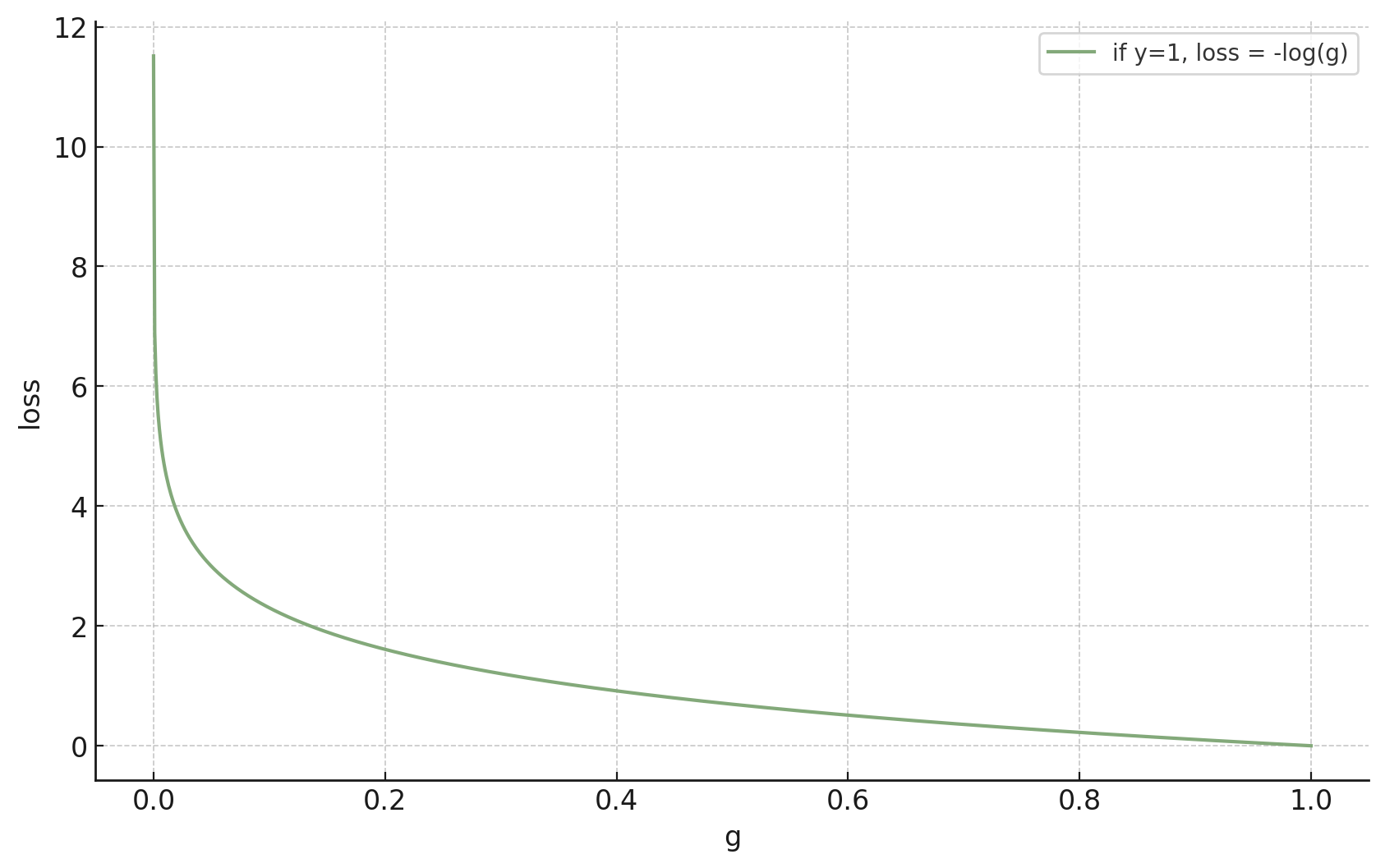

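Example: a single training data point from the \(y = 1\) class

We want a smooth loss \(\mathcal{L}(g, y)\) that rewards \(g\) for being closer to \(y\):

- \(g\) near \(1\): 😍 (loss should be small)

- \(g\) near \(0\): 🥺 (loss should be large)

The negative log likelihood, \(-\log g\), has this shape.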

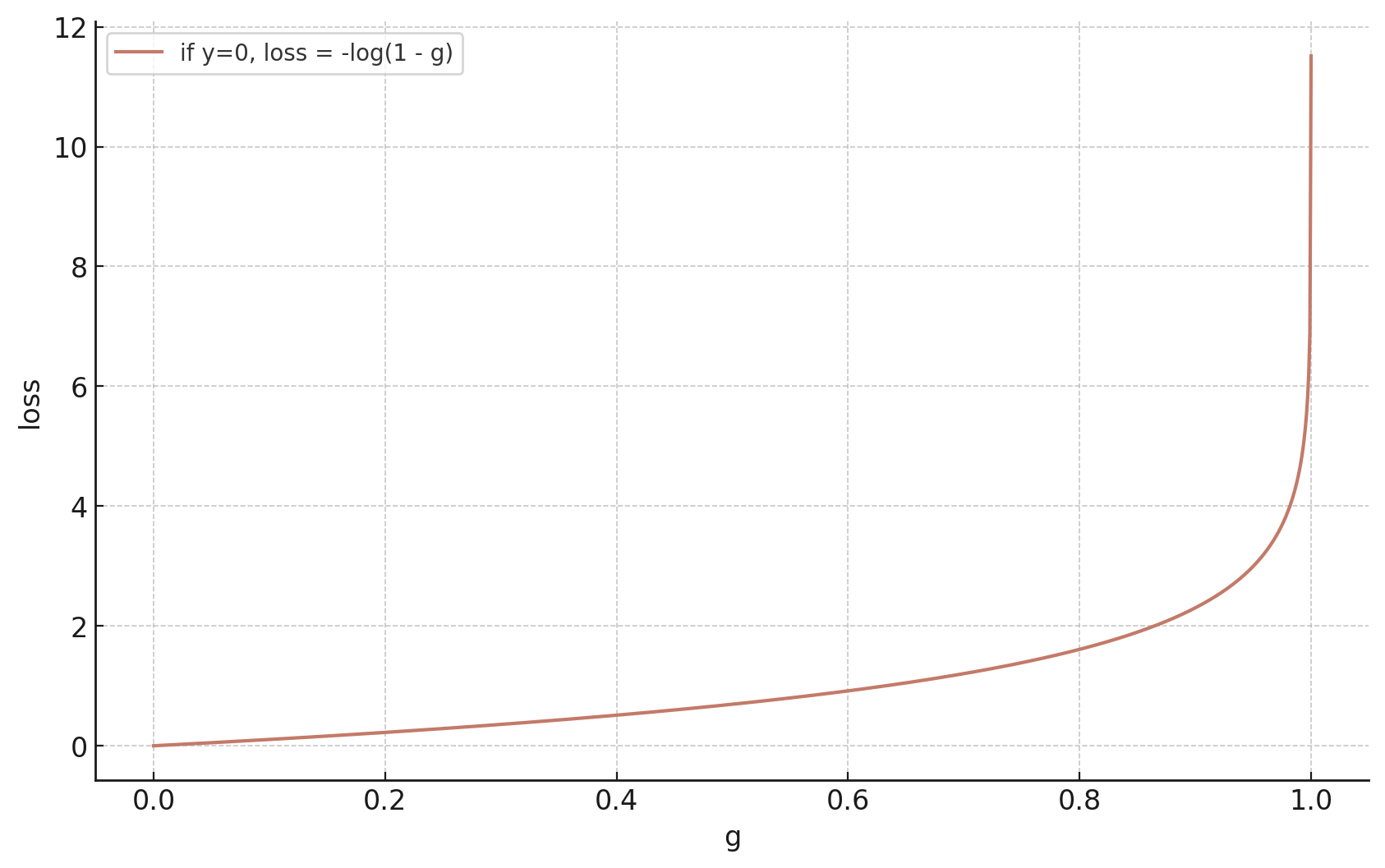

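Example: a single training data point from the \(y = 0\) class

\(1-g = 1-\sigma\left(\cdot\right):\) the model's predicted likelihood that \(x\) belongs to the negative class.

\(\mathcal{L}_{\mathrm{nll}}({g}, {y})= - \left[y \log g +\left(1-y \right) \log \left(1-g \right)\right]\)

Because the actual label \(y \in \{0,1\},\)

- When \(y = 1:\) \(\mathcal{L}_{\mathrm{nll}}(g, 1) = -\log g\)

- When \(y = 0:\) \(\mathcal{L}_{\mathrm{nll}}(g, 0) = -\log \left(1-g\right)\)

Read as: \(\sum\) (true label for class \(k\)) \(\cdot\) \(-\log\)(predicted prob of class \(k\)).

Since \(y \in \{0,1\}\), only the true class's term survives.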
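A minimal sketch of the binary negative log-likelihood loss, \(-\left[y \log g + (1-y)\log(1-g)\right]\), shows how it rewards confident correct predictions and punishes confident wrong ones. The sample values of \(g\) are arbitrary, and the function assumes \(0 < g < 1\).

```python
import math


def nll_loss(g, y):
    """Binary NLL: -[y log g + (1 - y) log(1 - g)], for 0 < g < 1 and y in {0, 1}."""
    return -(y * math.log(g) + (1 - y) * math.log(1 - g))


# Arbitrary example guesses for a point whose true label is y = 1.
for g in (0.9, 0.5, 0.1):
    print(g, nll_loss(g, 1))

# The same guesses for a point whose true label is y = 0.
for g in (0.9, 0.5, 0.1):
    print(g, nll_loss(g, 0))
```

With \(y = 1\) only the \(-\log g\) term is active, and with \(y = 0\) only the \(-\log(1-g)\) term is, matching the two cases above.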

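| | linear binary classifier | linear logistic binary classifier |
|---|---|---|
| features | \(x \in \mathbb{R}^d\) | \(x \in \mathbb{R}^d\) |
| label | \(y \in \{0,1\}\) | \(y \in \{0,1\}\) |
| parameters | \(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\) | \(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\) |
| linear combo | \(\theta^T x +\theta_0 = z\) | \(\theta^T x +\theta_0 = z\) |
| predict | \(1\) if \(z > 0\), otherwise \(0\) | \(1\) if \(\sigma(z) > 0.5\), otherwise \(0\) |
| loss | \(\mathcal{L}_{01}(g, y)\) | \(\mathcal{L}_{\mathrm{nll}}(g, y)\) |
| optimize via | NP-hard to learn | gradient descent |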

training data: \( x= 1, y=1\)

- If the data set is linearly separable, the logistic classifier's loss (here \(-\log \sigma(\cdot)\)) has no (finite) minimizing \(\theta\): the loss keeps decreasing as the magnitude of \(\theta\) grows

- in theory, \(\theta\) tends to have large magnitude => overly confident

- common to add ridge penalty \(\lambda ||\theta||^2\)
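The single-point example can be checked numerically. The sketch below assumes a 1-d model with no offset, \(g = \sigma(\theta x)\), on the training point \(x = 1, y = 1\); the grid of \(\theta\) values and \(\lambda = 0.1\) are arbitrary choices for illustration.

```python
import math


def nll_positive(theta, x=1.0):
    """-log(sigmoid(theta * x)) for a point labeled y = 1,
    computed stably as log(1 + exp(-theta * x))."""
    return math.log1p(math.exp(-theta * x))


def ridge_objective(theta, lam, x=1.0):
    """NLL on the single point plus the ridge penalty lam * theta^2."""
    return nll_positive(theta, x) + lam * theta ** 2


thetas = [0.0, 1.0, 2.0, 5.0, 10.0, 20.0]
plain = [nll_positive(t) for t in thetas]
ridged = [ridge_objective(t, lam=0.1) for t in thetas]

for t, p, r in zip(thetas, plain, ridged):
    print(t, p, r)
```

Without the penalty the loss keeps shrinking as \(\theta\) grows, so no finite \(\theta\) is best; with the penalty the smallest value on this grid is attained at a moderate \(\theta\).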

Outline

- Linear (binary) classifiers

- Linear logistic (binary) classifiers

- Linear multi-class classifiers

- to use: softmax

- to learn: one-hot encoding, cross-entropy loss

Video edited from: HBO, Silicon Valley

🌭

\(x\)

\(\theta^T x +\theta_0\)

\(z \in \mathbb{R}\)

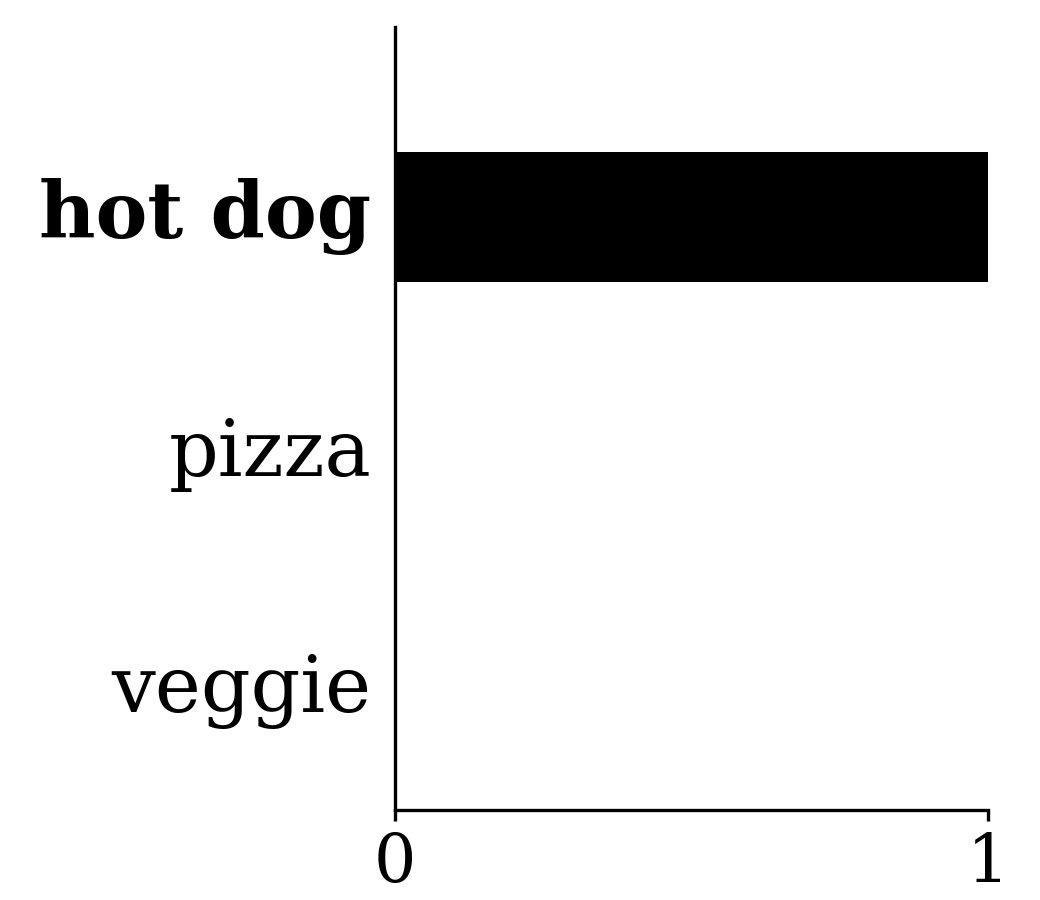

to predict {hotdog, or not_hotdog}, a scalar logit \(z\) suffices

raw score for hotdog

\(1-\sigma\left(\cdot\right):\) predicted probability that \(x\) belongs to not_hotdog

\(\sigma\left(\cdot\right):\) predicted probability that \(x\) belongs to hotdog

normalizing (squashing)

implicitly determines

for \(K > 2\) classes, a single scalar \(z\) no longer suffices — use \(K\) logit scores to keep track

\(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\)

for \(K\) classes, use \(K\) logit scores.

e.g. \(K = 3\): \(\{\)hot-dog, pizza, veggie\(\}\)

🌭 \(x\) → \(\theta^T x +\theta_0 = z \in \mathbb{R}^3\) (\(K\) logits, one raw score for each category), with \(\theta \in \mathbb{R}^{d \times K},\) \(\theta_0 \in \mathbb{R}^{K}\)

normalizing (squashing) via softmax: \(g_k = \frac{\exp(z_k)}{\sum_{j=1}^{K} \exp(z_j)}\)

- \(g_{\text{hotdog}}\): predicted probability that \(x\) belongs to hotdog

- \(g_{\text{pizza}}\): predicted probability that \(x\) belongs to pizza

- \(g_{\text{veggie}}\): predicted probability that \(x\) belongs to veggie

- outputs all \(\in [0,1]\), sum to \(1\)

- the max among the \(K\) logits is "soft" max'd in the output

sigmoid: \(\sigma(z) = \frac{1}{1+e^{-z}} = \frac{e^{z}}{e^{z}+e^{0}}\); predict positive if \(\sigma(z)>0.5 = \sigma(0)\), i.e. if \(z > 0\), where \(0\) is the implicit logit for the negative class

softmax: predict the category with the highest softmax score

unifying rule: predict the class with the largest logit
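A short sketch makes the sigmoid/softmax connection concrete: softmax exponentiates each logit and normalizes, and applying it to the pair of logits \([z, 0]\) reproduces \(\sigma(z)\). The logit values are hypothetical, and subtracting the maximum logit before exponentiating is a standard stability trick that does not change the result.

```python
import math


def softmax(z):
    """softmax(z)_k = exp(z_k) / sum_j exp(z_j), shifted by max(z) for stability."""
    m = max(z)
    exps = [math.exp(zk - m) for zk in z]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical logits for {hot-dog, pizza, veggie}.
logits = [2.0, 1.0, -1.0]
g = softmax(logits)
print(g, sum(g))

# Binary case: logits [z, 0], with 0 as the implicit logit of the negative class.
z = 1.3
print(softmax([z, 0.0])[0], sigmoid(z))
```

Because softmax preserves the ordering of the logits, the class with the highest softmax score is always the class with the largest logit.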

| | linear logistic binary classifier | one-out-of-\(K\) classifier |
|---|---|---|
| features | \(x \in \mathbb{R}^d\) | \(x \in \mathbb{R}^d\) |
| parameters | \(\theta \in \mathbb{R}^d, \theta_0 \in \mathbb{R}\) | \(\theta \in \mathbb{R}^{d \times K},\) \(\theta_0 \in \mathbb{R}^{K}\) |
| linear combo | \(\theta^T x +\theta_0 =z \in \mathbb{R}\) | \(\theta^T x +\theta_0 =z \in \mathbb{R}^{K}\) |
| predict | predict positive if \(\sigma(z)>\sigma(0)\) | predict the class with the highest softmax score |
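The shapes in the one-out-of-\(K\) classifier are easy to get wrong, so here is a minimal sketch with \(\theta\) stored as \(d\) rows of \(K\) columns. The parameter values (with \(d = 2\), \(K = 3\)) and the function names are hypothetical.

```python
def logits(theta, theta_0, x):
    """z = theta^T x + theta_0, where theta has shape d x K and theta_0 has length K."""
    d, K = len(theta), len(theta_0)
    return [sum(theta[i][k] * x[i] for i in range(d)) + theta_0[k] for k in range(K)]


def predict_class(theta, theta_0, x):
    """Index of the largest logit, which is also the highest softmax score."""
    z = logits(theta, theta_0, x)
    return max(range(len(z)), key=lambda k: z[k])


# Hypothetical parameters: d = 2 features, K = 3 classes.
theta = [[1.0, 0.0, -1.0],
         [0.0, 1.0, -1.0]]
theta_0 = [0.0, 0.0, 0.5]

for x in ([2.0, 1.0], [0.0, 3.0], [-1.0, -1.0]):
    print(x, logits(theta, theta_0, x), predict_class(theta, theta_0, x))
```

Column \(k\) of \(\theta\) together with entry \(k\) of \(\theta_0\) plays the role of one binary-style linear scorer for class \(k\).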

Outline

- Linear (binary) classifiers

- Linear logistic (binary) classifiers

- Linear multi-class classifiers

- to use: softmax

- to learn: one-hot encoding, cross-entropy loss

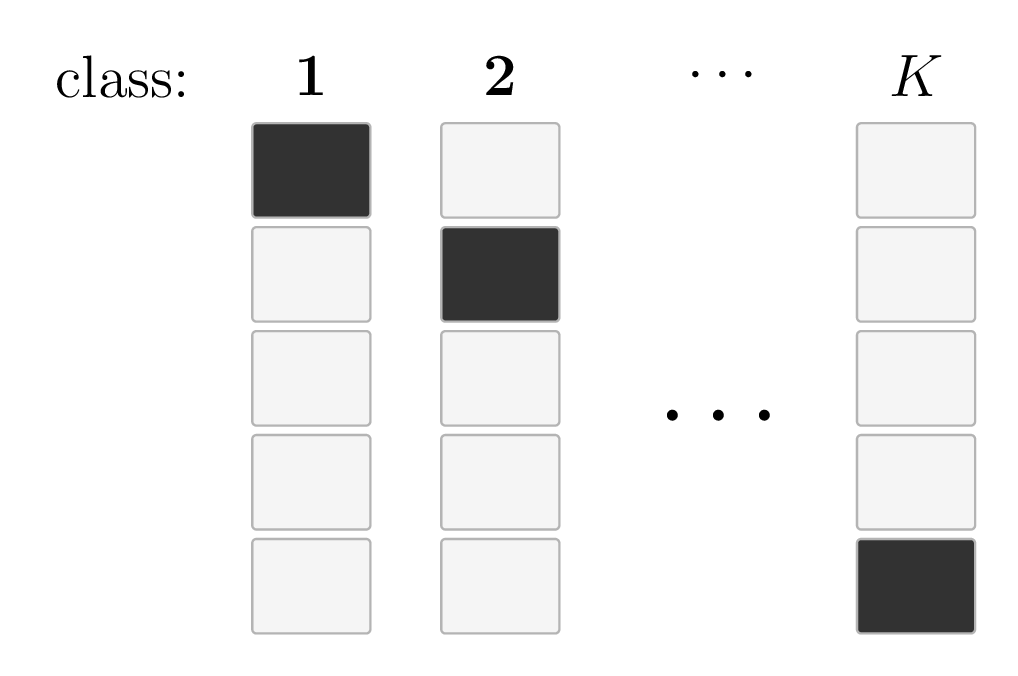

One-hot encoding:

- Generalizes from \(\{0,1\}\) binary labels

Training data

| \(x\) | \(y\) (class name) | \(y\) (one-hot) |
|---|---|---|
| 🌭 | "hot-dog" | \(\begin{bmatrix}1\\0\\0\end{bmatrix}\) |
| 🍕 | "pizza" | \(\begin{bmatrix}0\\1\\0\end{bmatrix}\) |
| 🥗 | "veggie" | \(\begin{bmatrix}0\\0\\1\end{bmatrix}\) |
| 🥦 | "veggie" | \(\begin{bmatrix}0\\0\\1\end{bmatrix}\) |
| \(\vdots\) | \(\vdots\) | \(\vdots\) |

- Encode the \(K\) classes as an \(\mathbb{R}^K\) vector, with a single 1 (hot) and 0s elsewhere
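One-hot encoding is a one-liner in practice. The sketch below assumes the class order hot-dog, pizza, veggie used in the training data table; the helper name `one_hot` is chosen for illustration.

```python
def one_hot(label, classes):
    """Return a length-K list with a single 1 at the position of `label`."""
    if label not in classes:
        raise ValueError(f"unknown class: {label!r}")
    return [1 if c == label else 0 for c in classes]


classes = ["hot-dog", "pizza", "veggie"]
for label in ["hot-dog", "pizza", "veggie", "veggie"]:
    print(label, one_hot(label, classes))
```

The class order is arbitrary but must stay fixed, since position \(k\) of the label has to line up with position \(k\) of the classifier's output.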

in general, for \(K\) classes:

- Generalizes negative log likelihood loss \(\mathcal{L}_{\mathrm{nll}}({g}, {y})= - \left[y \log g +\left(1-y \right) \log \left(1-g \right)\right]\)

-

Despite the \(K\)-term sum, only the term corresponding to its true class label contributes, since all other \(y_k=0\)

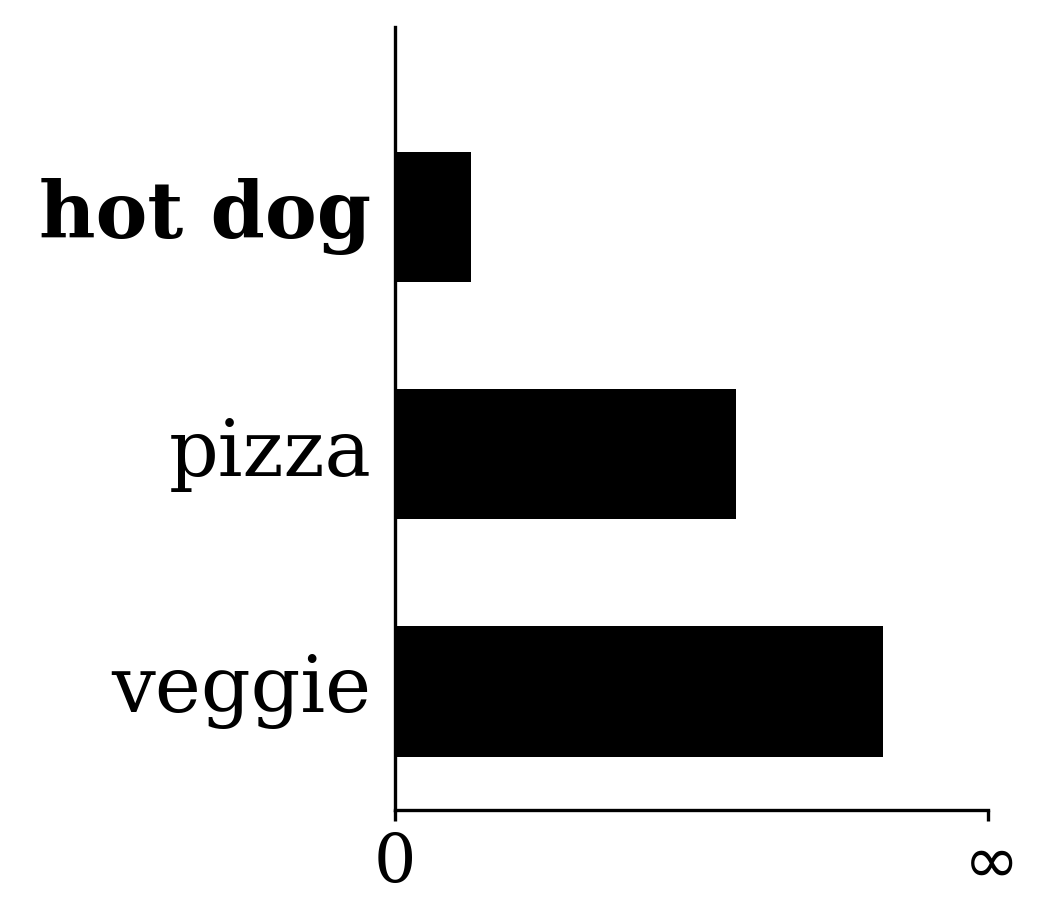

Negative log-likelihood \(K\)-class loss (aka, cross-entropy)

\(y:\)one-hot encoding label

\(y_{{k}}:\) \(k\)th entry in \(y\), either 0 or 1

\(g:\) softmax output

\(g_{{k}}:\) probability or confidence of belonging in class \(k\)
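The cross-entropy loss, minus the sum over classes of \(y_k \log g_k\), can be sketched directly from that definition. The probability vectors below are hypothetical softmax outputs, and skipping the terms with \(y_k = 0\) is an implementation choice that avoids evaluating \(\log 0\) for classes that do not contribute anyway.

```python
import math


def cross_entropy(g, y):
    """-sum_k y_k * log(g_k), for a one-hot label y and predicted probabilities g."""
    return -sum(yk * math.log(gk) for gk, yk in zip(g, y) if yk != 0)


# Hypothetical softmax output for {hot-dog, pizza, veggie}; true class is hot-dog.
g = [0.7, 0.2, 0.1]
y = [1, 0, 0]
print(cross_entropy(g, y), -math.log(0.7))

# With K = 2 and g = [p, 1 - p], this reduces to the binary NLL loss.
p = 0.8
print(cross_entropy([p, 1 - p], [1, 0]), -math.log(p))
print(cross_entropy([p, 1 - p], [0, 1]), -math.log(1 - p))
```

Each printed pair should match, which shows both that only the true class's term survives and that the \(K = 2\) case recovers the binary loss.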

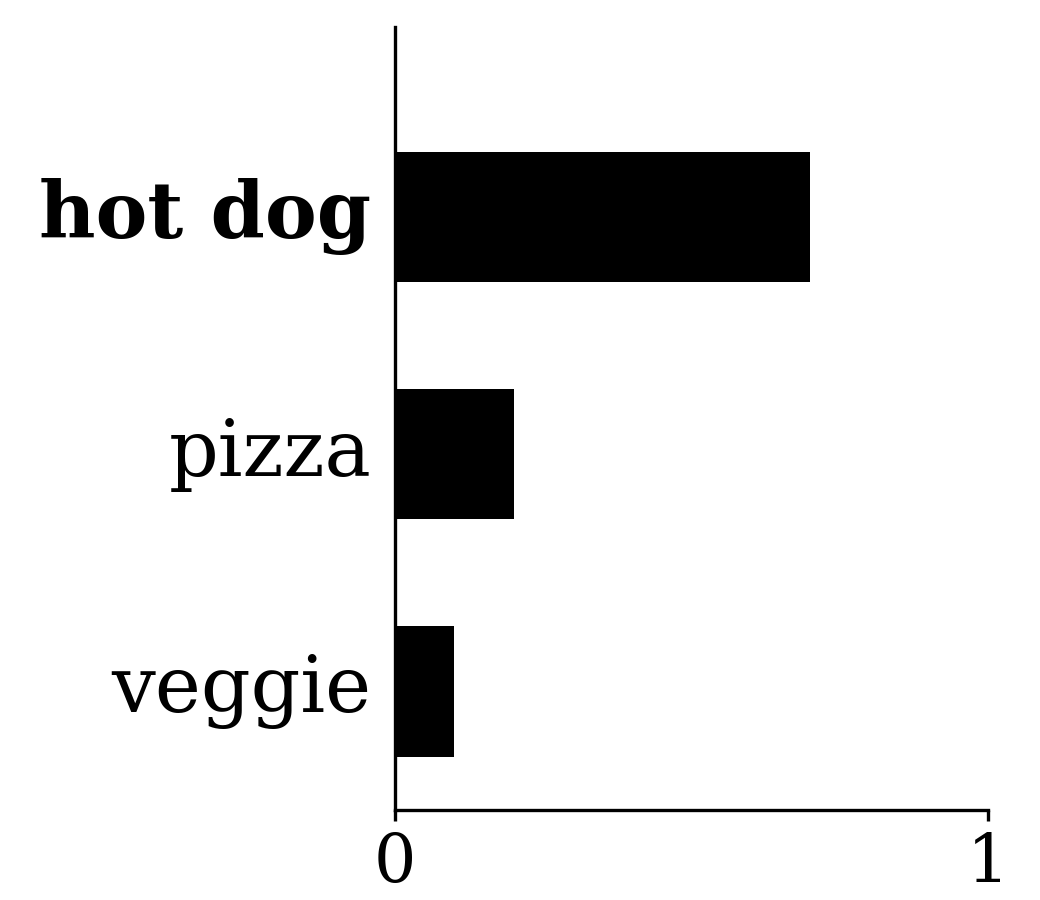

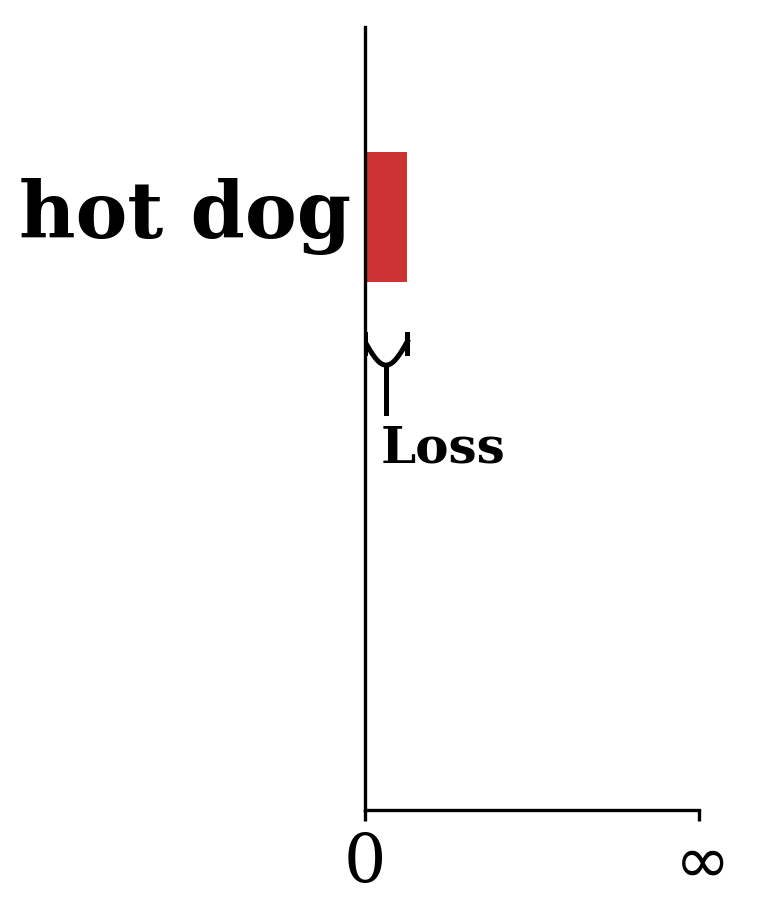

🌭

current prediction \(g=\text{softmax}(\cdot)\)

To reduce the loss, \(g_{\text{hotdog}}\) needs to go up. This signal flows smoothly back to \(\theta\) through \(-\log\) and softmax, so we can optimize via gradient descent.
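To illustrate how that signal reaches the parameters, here is a rough sketch of one gradient descent step on a single training example. It relies on the standard result that the gradient of the cross-entropy loss with respect to the logits is \(g - y\) (not derived on these slides); the learning rate, the zero initialization, and the example features are all assumptions.

```python
import math


def softmax(z):
    m = max(z)
    exps = [math.exp(zk - m) for zk in z]
    total = sum(exps)
    return [e / total for e in exps]


def forward(theta, theta_0, x):
    """g = softmax(theta^T x + theta_0); theta is stored as d rows of K columns."""
    K = len(theta_0)
    z = [sum(theta[i][k] * x[i] for i in range(len(x))) + theta_0[k] for k in range(K)]
    return softmax(z)


def cross_entropy(g, y):
    return -sum(yk * math.log(gk) for gk, yk in zip(g, y) if yk != 0)


def gradient_step(theta, theta_0, x, y, lr):
    """One gradient descent step on a single example, using dL/dz = g - y."""
    g = forward(theta, theta_0, x)
    err = [gk - yk for gk, yk in zip(g, y)]
    new_theta = [[theta[i][k] - lr * x[i] * err[k] for k in range(len(err))]
                 for i in range(len(x))]
    new_theta_0 = [theta_0[k] - lr * err[k] for k in range(len(err))]
    return new_theta, new_theta_0


# Hypothetical example: d = 2 features, K = 3 classes, true class is index 0 (hotdog).
x, y = [1.0, 2.0], [1, 0, 0]
theta, theta_0 = [[0.0] * 3 for _ in range(2)], [0.0] * 3
g_before = forward(theta, theta_0, x)
theta, theta_0 = gradient_step(theta, theta_0, x, y, lr=0.1)
g_after = forward(theta, theta_0, x)
print(cross_entropy(g_before, y), cross_entropy(g_after, y))
```

After the step the predicted probability of the true class goes up and the loss goes down, which is exactly the behavior the 0-1 loss could not provide.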

Summary

Classification predicts a label from a discrete set; a linear binary classifier separates the feature space with a hyperplane defined by \(\theta, \theta_0\).

The 0-1 loss is natural for classification but NP-hard to optimize.

The sigmoid \(\sigma(z)\) gives a smooth, probabilistic step function; paired with the NLL loss, we can train via (S)GD.

Regularization remains important for logistic classifiers.

Multi-class classification generalizes via one-hot encoding and softmax.