Properties of Linear Systems

Numerical Methods

David Mayerich

Scalable Tissue Imaging and Modeling (STIM) Laboratory

Department of Electrical and Computer Engineering

Cullen College of Engineering

University of Houston

David Mayerich

STIM Laboratory, University of Houston

Matrix Inversion

David Mayerich

STIM Laboratory, University of Houston

Matrix Inverse

-

Some applications require calculating the matrix inverse \(\mathbf{A}^{-1}\)

-

Computer graphics, image processing, multiple-input multiple-output (MIMO) communication

-

Determining if a linear system is ill-conditioned

-

-

By definition, the matrix inverse is:

David Mayerich

STIM Laboratory, University of Houston

Invert by LU Decomposition

David Mayerich

STIM Laboratory, University of Houston

Invert by LU Decomposition

David Mayerich

STIM Laboratory, University of Houston

Singular Matrices

Infinite or no solutions

Determinants

David Mayerich

STIM Laboratory, University of Houston

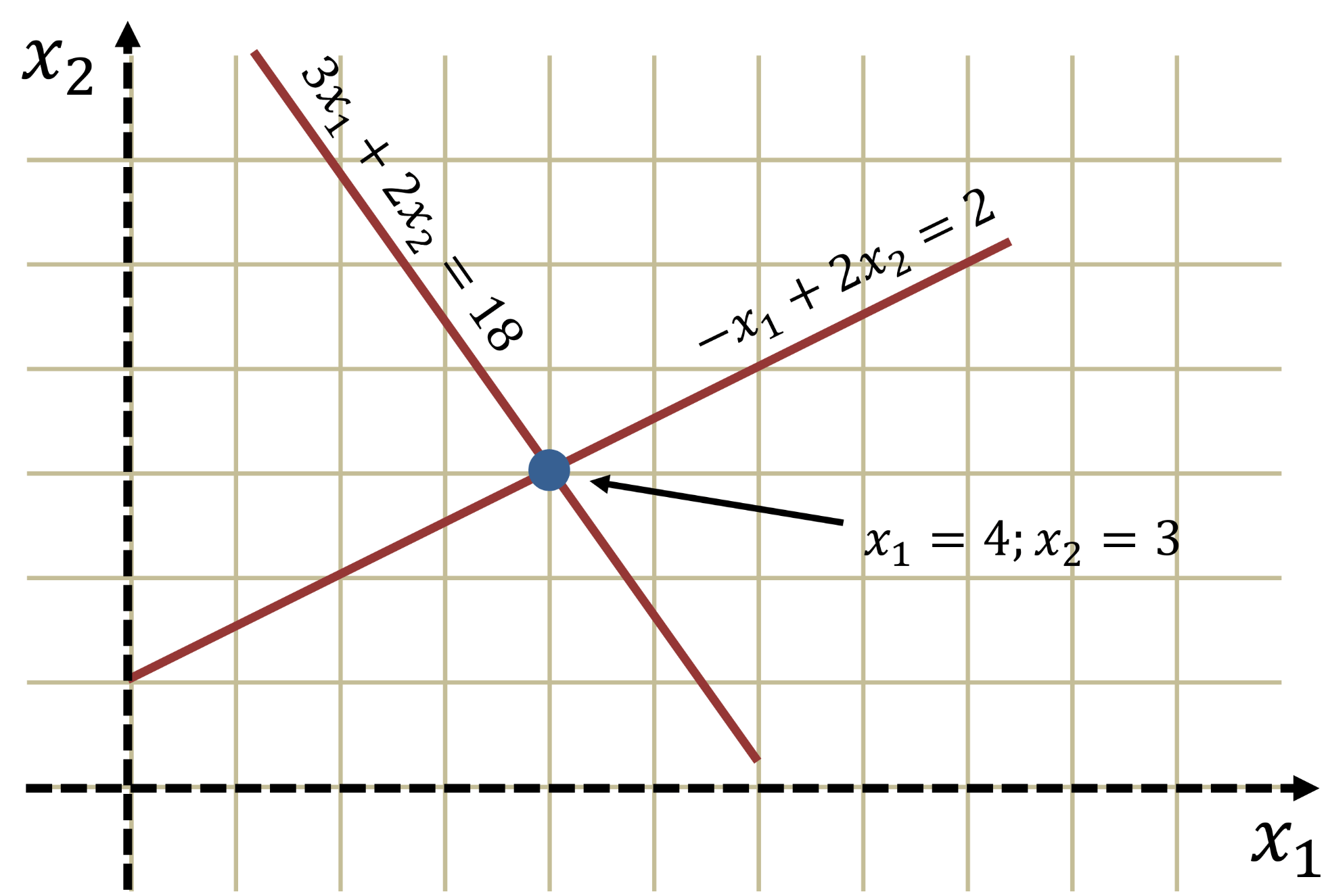

Linear Systems Graphically

-

Given the linear system:

David Mayerich

STIM Laboratory, University of Houston

The solution is the intersection of both lines:

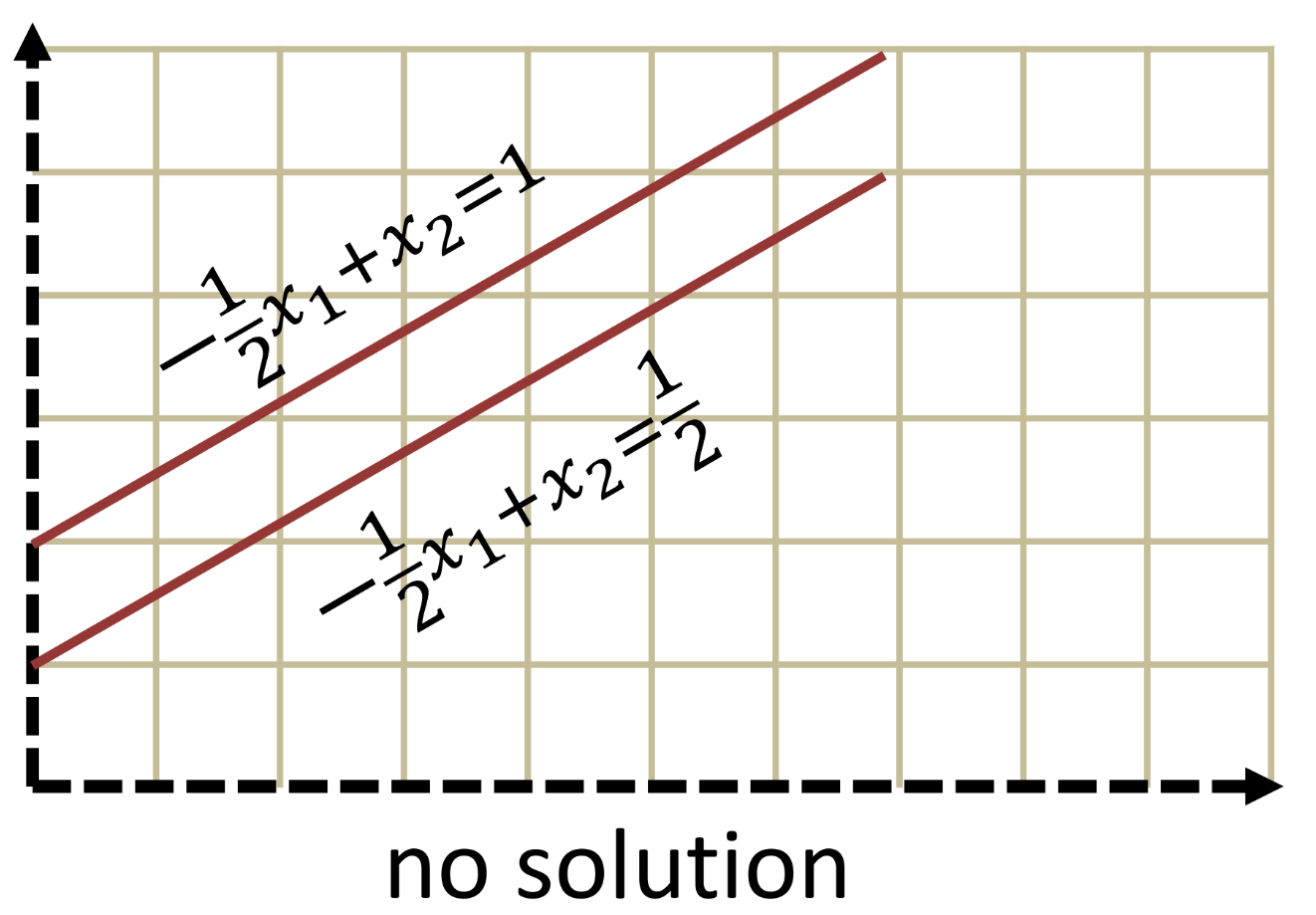

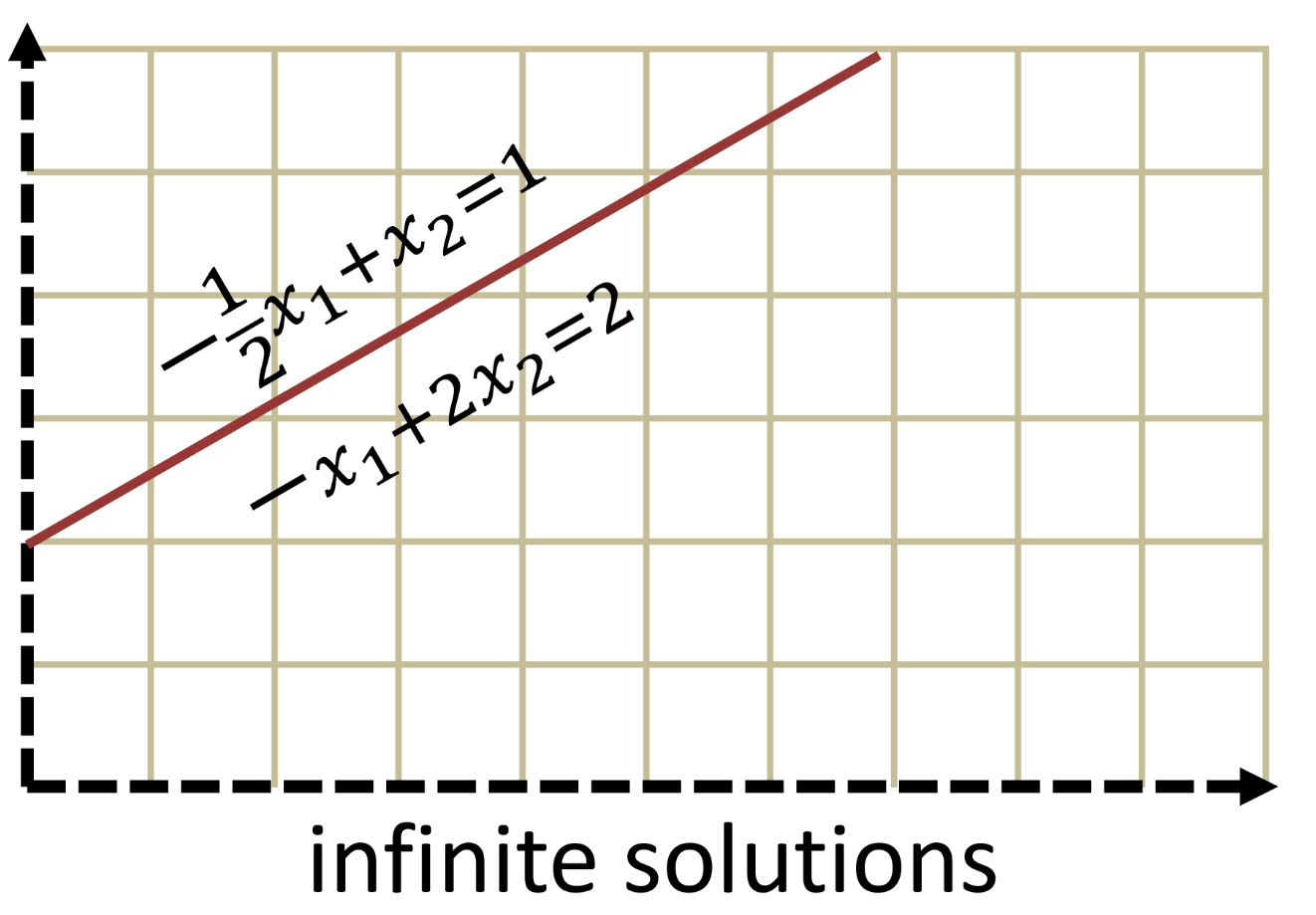

Singular Matrices

-

Singular matrices do not have a unique solution

-

Parallel planes indicate that the system has no solution

-

Overlapping planes result in infinite solutions

-

David Mayerich

STIM Laboratory, University of Houston

-

Matrices with multiple solutions or no solutions

- Statistically these are rare (if you generate random matrices)

Determinants

David Mayerich

STIM Laboratory, University of Houston

-

Singular matrices can be found using the determinant:

-

Determinants of small matrices:

Properties of Determinants

-

The determinant of the identity matrix is \(1\):

-

The determinant of the inverse of a matrix is equal to the inverse of the determinant:

-

Multiplication by a scalar value \(\alpha\):

-

The determinant of a matrix product is equal to the product of the determinants:

-

A linear system \(\mathbf{Ax}=\mathbf{b}\) has a unique solution if and only if \(\text{det}(\mathbf{A})\neq 0\)

David Mayerich

STIM Laboratory, University of Houston

Laplace Expansion

-

For general matrices \(\mathbf{A}\in \mathbb{R}^{n \times n}\), the Laplace expansion can be used:

David Mayerich

STIM Laboratory, University of Houston

\(a_{ij}\) is the scalar entry at row \(i\) and column \(j\)

\(\mathbf{M}_{ij}\) is the submatrix of \(\mathbf{A}\) with row \(i\) and column \(j\) removed

-

Consider the Laplace expansion of a \(3\times 3\) matrix using row \(1\):

The Laplace expansion is a recursive algorithm with non-polynomial complexity \(O(n!)\)

Laplace Expansions for Diagonal Matrices

-

Calculate the Laplace expansion for row \(2\) of the matrix:

David Mayerich

STIM Laboratory, University of Houston

-

Calculate the Laplace expansion for row \(4\):

Calculating \(n\times n\) Determinants

-

The determinant of a triangular matrix is the product of the diagonal elements:

David Mayerich

STIM Laboratory, University of Houston

-

Given the product property \(|\mathbf{A}\mathbf{B}| = |\mathbf{A}| |\mathbf{B}|\), what is \(|\mathbf{A}| = |\mathbf{LU}|\)?

-

The determinant of a matrix \(\mathbf{A}\) can be solved using LU decomposition and calculating the product of the diagonal of \(\mathbf{U}\)

Determinants with Pivoting

-

Swapping rows in a matrix \(\mathbf{A}\) multiplies the determinant by \((-1)\)

David Mayerich

STIM Laboratory, University of Houston

-

Calculate the determinant using scaled partial pivoting:

swap

swap

Matrix Norms

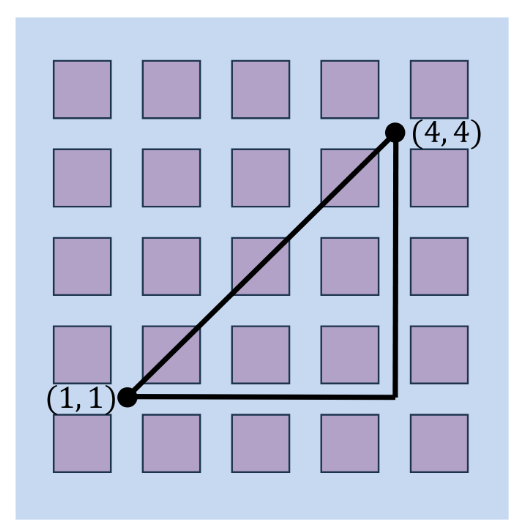

Euclidean and Manhattan Distance

Matrix and Vector Norms

David Mayerich

STIM Laboratory, University of Houston

Vector Norms

-

A vector norm is a scalar metric used to calculate the length of a vector

-

The most common set of vector norms are the \(L^p\)-norms:

David Mayerich

STIM Laboratory, University of Houston

-

The two most common are the \(L^2\)-norm and \(L^1\)-norm:

Euclidean

distance

Manhattan

distance

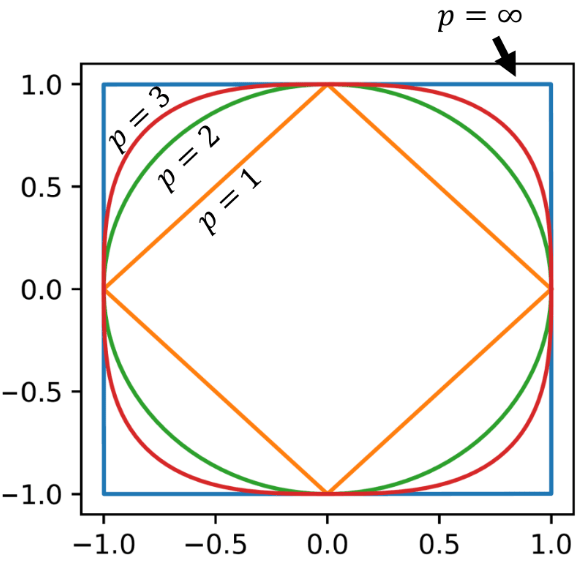

Behavior of \(p\)-Norms

-

The \(L^\infty\)-norm converges to the maximum entry of \(\mathbf{v}\)

-

The \(L^0\) "norm" provides the number of non-zero elements

this is a bit of a hack (hence "norm") by defining \(0^0=0\)

David Mayerich

STIM Laboratory, University of Houston

This graph shows the isovalue where \(||\mathbf{v}||_p = 1\) for \(\mathbf{v}\in \mathbb{R}^{2}\)

Properties of Matrix and Vector Norms

-

A matrix norm \(||\mathbf{A}||\) is induced by its vector norm \(||\mathbf{x}||\) such that they obey the following properties for \(\mathbf{x}\in \mathbb{C}^n\), \(\mathbf{A}\in \mathbb{C}^{n\times n}\), and \(\alpha \in \mathbb{C}\):

David Mayerich

STIM Laboratory, University of Houston

Matrix Induced by \(L^p\)-Norms

David Mayerich

STIM Laboratory, University of Houston

sum the magnitude of all values in each column, taking the largest as the norm

sum the magnitude of all values in each row, taking the largest as the norm

square root of the largest eigenvalue of \(\mathbf{A}\)

- The \(L^2\) norm is the most common, because it provides the tightest bound.

- Requires calculating the eigendecomposition of \(\mathbf{A}\)

- Can be approximated: \(||\mathbf{A}||_2 \leq \sqrt{||\mathbf{A}||_1 ||\mathbf{A}||_\infty}\)

Matrix Conditioning

Ill-Conditioned Systems

Condition Number

David Mayerich

STIM Laboratory, University of Houston

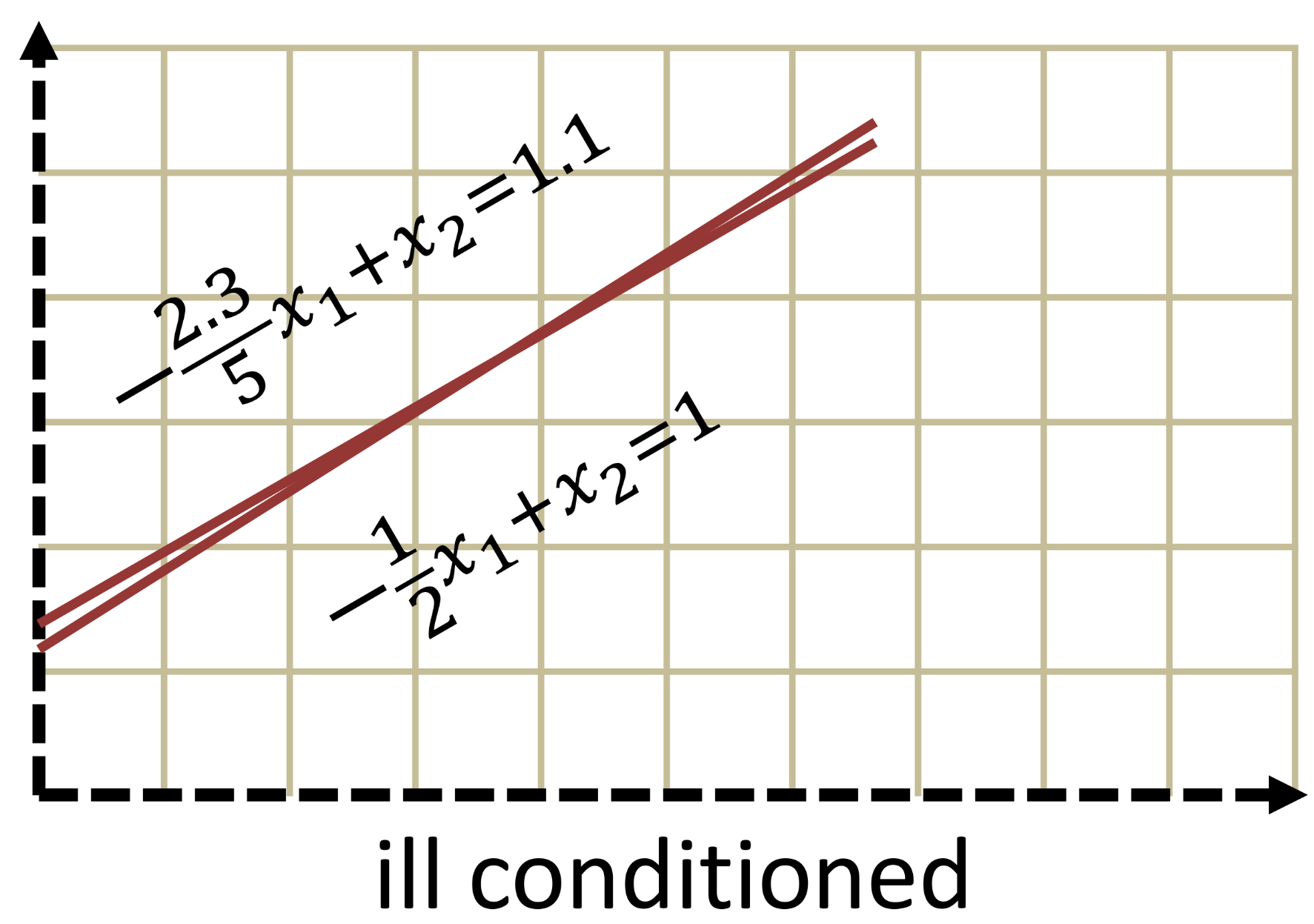

Ill-Conditioned Systems

-

Linear equations representing parallel planes do not have a single solution

-

Matrices representing these systems are singular (non-invertible)

-

Singular matrices have a determinant of zero: \(|\mathbf{A}|=0\)

-

Linear systems with planes that almost overlap are ill-conditioned

-

Ill-conditioned systems are sensitive to small changes in the right-hand-side

David Mayerich

STIM Laboratory, University of Houston

Does a small determinant suggest an ill-conditioned system?

Unfortunately no:

Examples of Ill-Conditioned Systems

David Mayerich

STIM Laboratory, University of Houston

Matrix Condition Number

-

The sensitivity of the linear system to changes in right-hand-side values is quantified by the matrix condition number:

David Mayerich

STIM Laboratory, University of Houston

-

A small condition number indicates that the system is relatively insensitive to input values (including roundoff errors)

-

A large condition number suggests that a system is highly sensitive and may not be solvable

What does the condition number show?

-

Given the linear system \(\mathbf{Ax}=\mathbf{b}\), assume a perturbation \(\mathbf{b}_\Delta\) in \(\mathbf{b}\) that results in a change \(\mathbf{x}_\Delta\) in the solution:

David Mayerich

STIM Laboratory, University of Houston

-

We want to describe \(\mathbf{x}_\Delta\) in terms of \(\mathbf{b}_\Delta\)

since \(\frac{1}{||\mathbf{x}||}\leq \frac{||\mathbf{A}||}{||\mathbf{b}||}\), multiplying doesn't change the inequality

Conditioning and Precision Loss

-

If \(\kappa(\mathbf{A})=10^k\) then we expect to lose \(k\) digits of precision

-

If we know the coefficients of \(\mathbf{A}\) to \(t\)-digit precision, and \(\kappa(\mathbf{A}) \approx 10^k\), then the result is accurate to \(\approx 10^{t-k}\) digits

-

A similar analysis can be done for bits: if \(\kappa(\mathbf{A})=2^b\) then we expect to lose \(b\) bits of precision

-

The \(L^2\) norm is used in the definition of \(\kappa\), however this can be approximated using \(||\mathbf{A}||_2 \leq \sqrt{||\mathbf{A}_1||_1\ ||\mathbf{A}||_\infty}\)

David Mayerich

STIM Laboratory, University of Houston