Regression

Numerical Methods

David Mayerich

Scalable Tissue Imaging and Modeling (STIM) Laboratory

Department of Electrical and Computer Engineering

Cullen College of Engineering

University of Houston

David Mayerich

STIM Laboratory, University of Houston

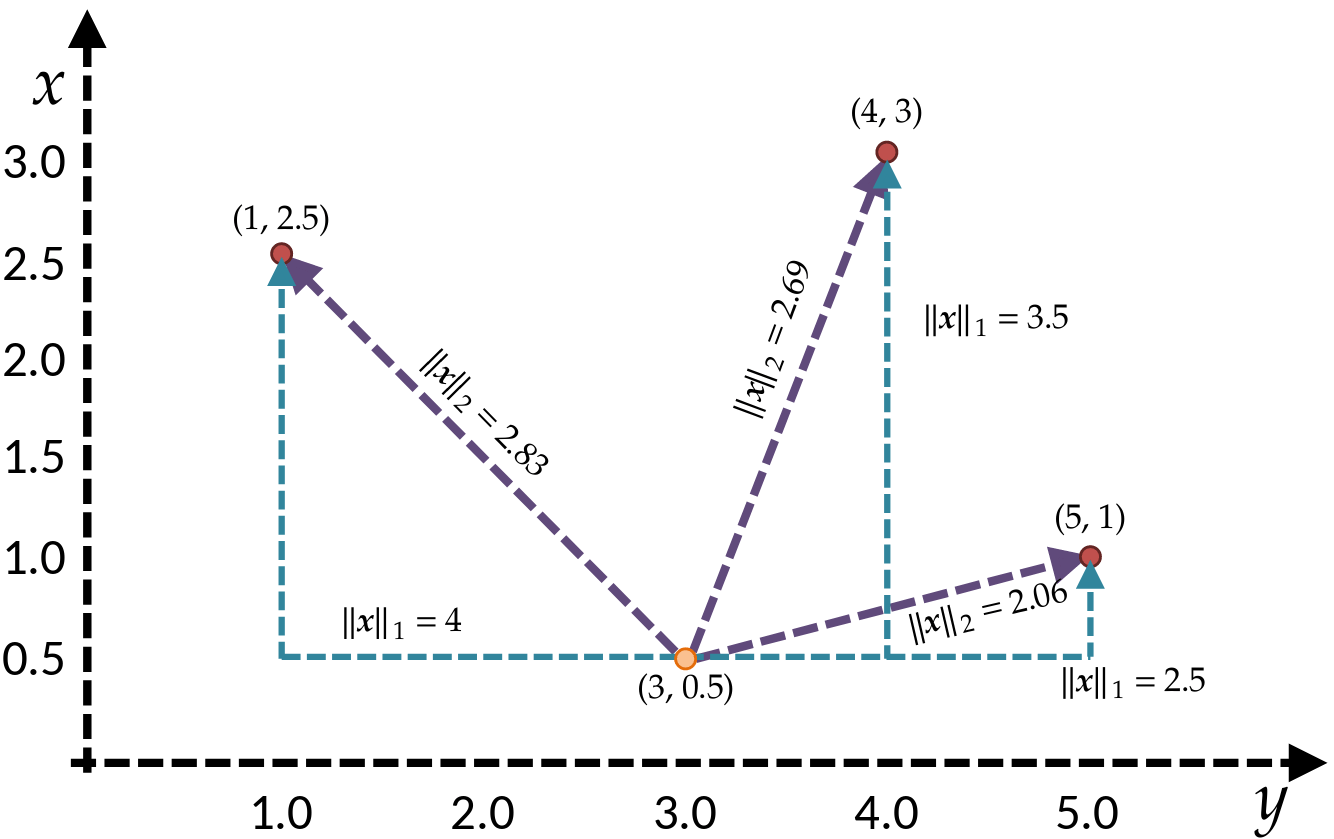

A Review of Vector Norms

-

The norm provides a measure of the magnitude of a vector

-

The notation \(||\mathbf{x}||_p\) denotes the \(L^p\) norm of a vector:

David Mayerich

STIM Laboratory, University of Houston

Manhattan

Euclidean

Statistics

-

Common statistical measurements of a vector \(\mathbf{x}\in\mathbb{R}^n\) include:

-

mean, average, or expected value:

David Mayerich

STIM Laboratory, University of Houston

-

variance:

-

standard deviation:

Regression

-

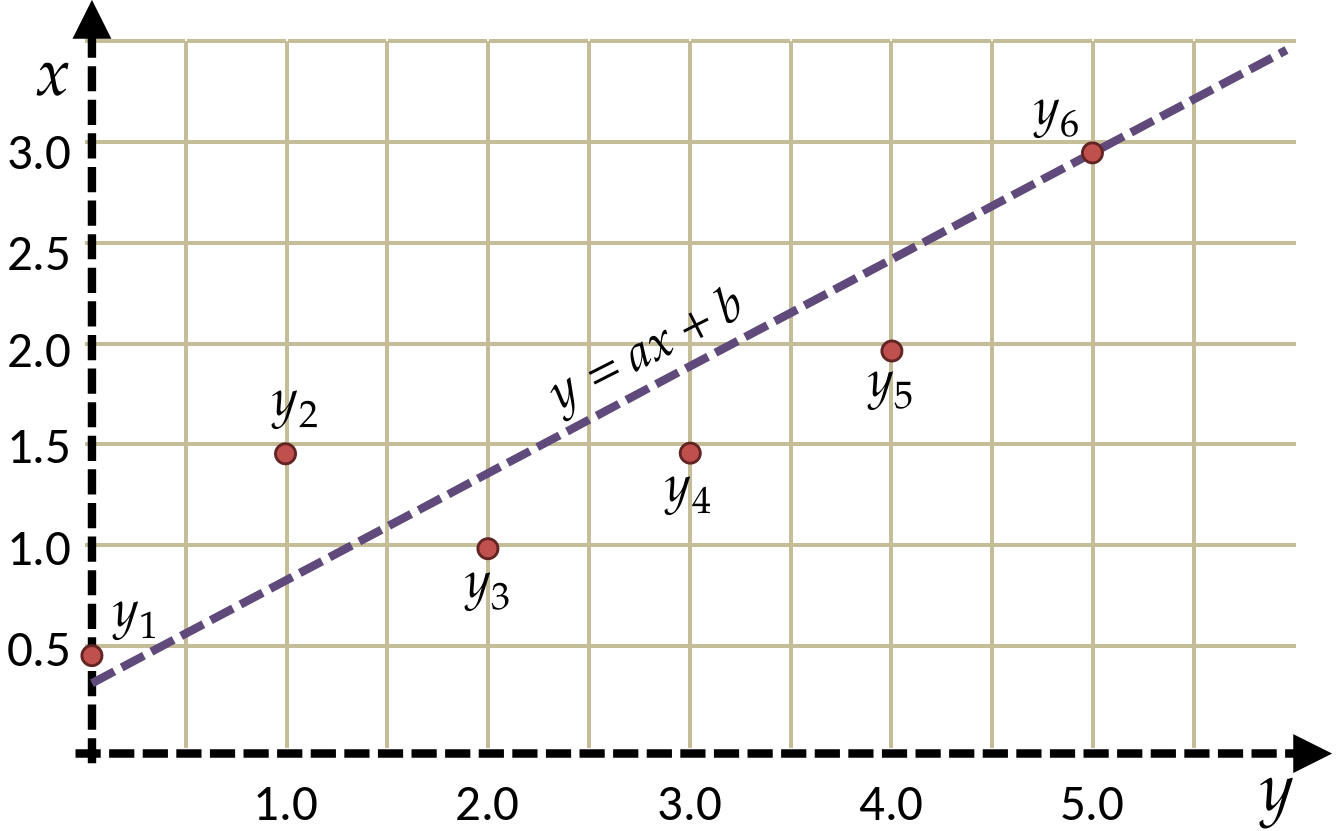

Assume we have a table of data points containing \((x_i,y_i)\) pairs

-

Prior information suggests that these points are on a line

-

(deviations may be due to noise, measurement errors, etc.)

-

David Mayerich

STIM Laboratory, University of Houston

| x | y |

|---|---|

| 0.0 | 0.5 |

| 1.0 | 1.5 |

| 2.0 | 1.0 |

| 3.0 | 1.5 |

| 4.0 | 2.0 |

| 5.0 | 3.5 |

Regression

-

How can we approximate this known function from measured points?

-

If we know that the expected model is a line:

David Mayerich

STIM Laboratory, University of Houston

where \(a\) and \(b\) are the parameters we want to know

-

If we plug in some value \(x_i\) and our model is accurate, we expect:

or, alternatively

-

We want the error term \(\epsilon\) to be as small as possible

Regression Error

-

We calculate the absolute error for a single value:

David Mayerich

STIM Laboratory, University of Houston

-

The sum of all absolute errors gives us a metric to quantify the "fit" between our model and the points:

-

We could select \(a\) and \(b\) such that the \(L^1\) norm is minimized

-

Unfortunately \(L^1\) minimization is difficult:

-

Finding minima of functions generally relies on solving a differential equation

-

\(||\mathbf{x}||_1\) is not differentiable

-

Minimization of Error

-

We have a set of observations:

David Mayerich

STIM Laboratory, University of Houston

-

We can look at the set of values describing the difference between our model \(y=ax+b\) and the observations \(B\):

-

What characteristics do we expect in \(\Psi\) if \(a\) and \(b\) are good parameters?

-

The mean \(\mu(\Psi)\) will be small:

-

all points will lie on the line OR some points will lie above and some below

-

- The variance \(\sigma^2(\Psi)\) will describe the quality of the fit

- ideally \(\sigma^2(\Psi)\) will be small (\(\sigma^2(\Psi) = 0\) if all points are on the line)

Minimization of Error

-

Note that the mean of the difference between the model and the measurements are given by:

David Mayerich

STIM Laboratory, University of Houston

-

With a small mean (\(\mu\approx 0\)), the variance is:

-

What happens to our line if we select \(a\) and \(b\) such that the variance is minimized?

-

Minimizing the variance \(\sigma^2(\Phi)\) minimizes deviation between the model and the measurements

Cost Functions

-

A cost function can be used to describe the quality of a set of parameters

-

The cost function \(K(\cdots)\) is a function of parameters we are searching for:

David Mayerich

STIM Laboratory, University of Houston

model

cost function

-

A smaller value defines a better fit than a larger value:

if \(K(a_1, b_1)<K(a_2, b_2)\) then \(a_1\) and \(b_1\) are "better" parameters

-

It is helpful to have a cost function that is differentiable

-

ex. you can find local minima with Newton's method

-

if a cost function can't be differentiated, we have to use a more complex optimization

-

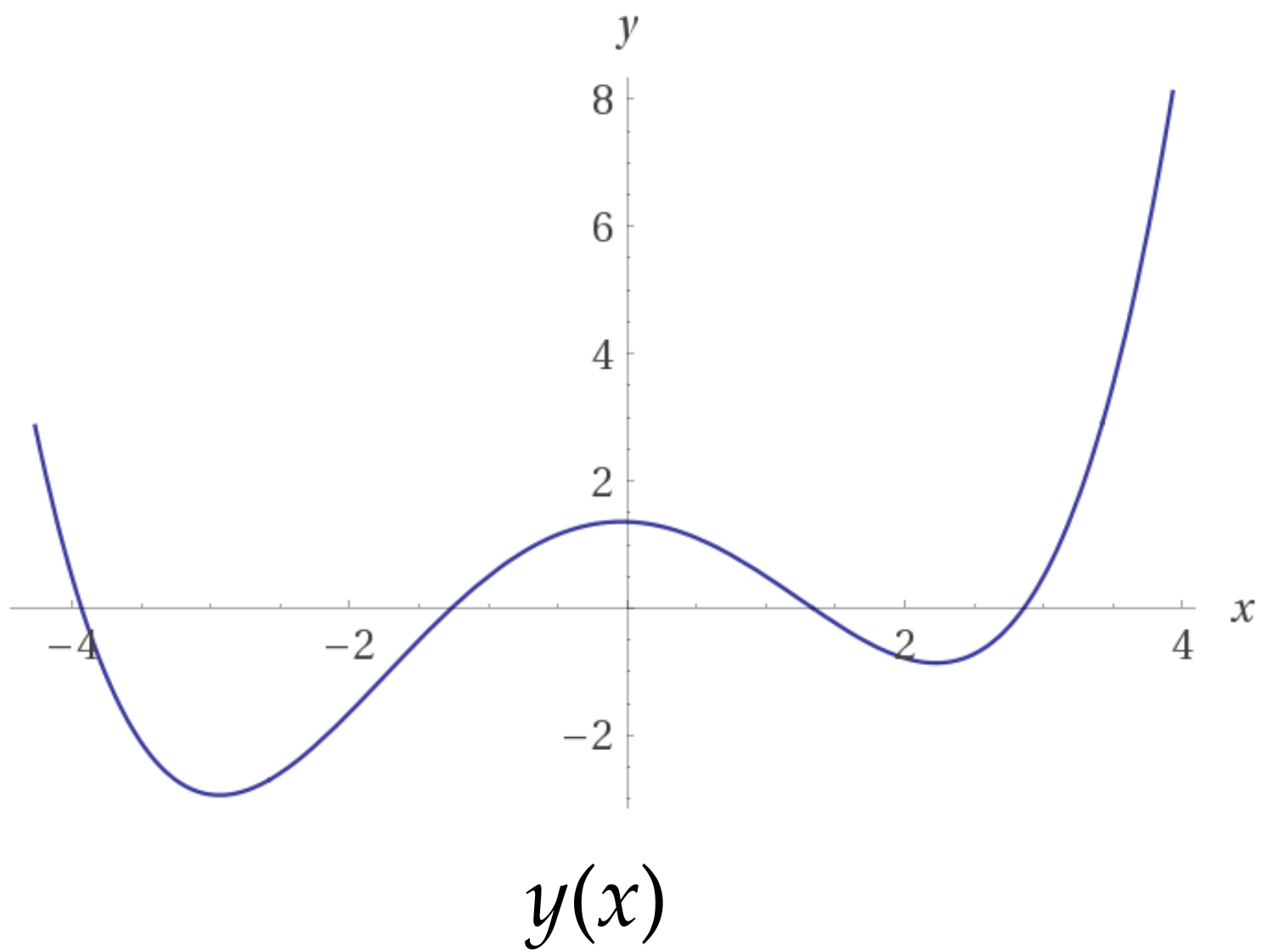

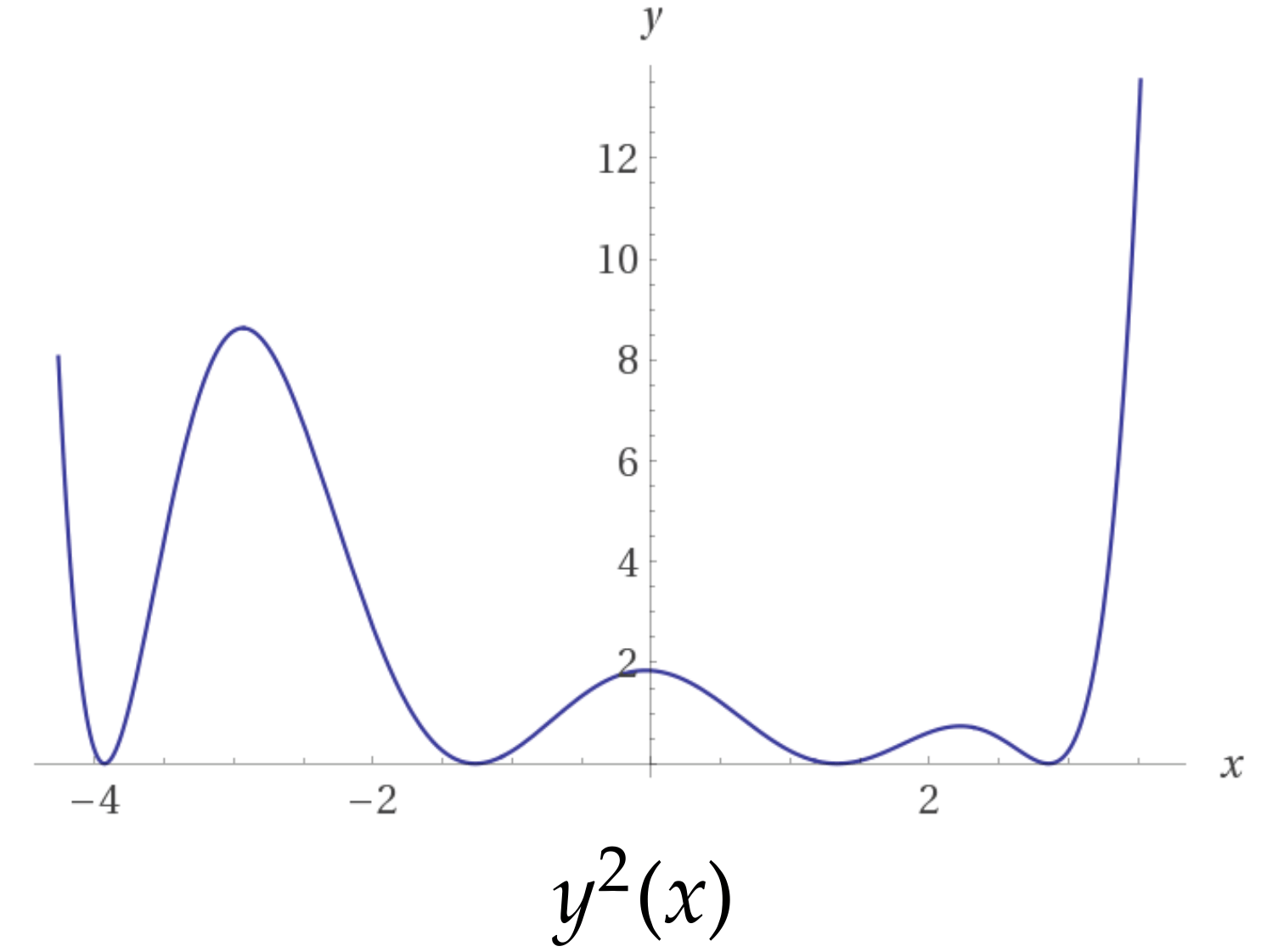

Cost Functions

-

You've worked with cost functions before

-

Finding a root \(f(x)\) be expressed as a cost function \(f^2(x)\)

David Mayerich

STIM Laboratory, University of Houston

Least Squares Fitting

-

Create a model function \(y(x)\) that minimizes the square of the difference between \(y(x)\) and at the points \((x_i, y_i)\)

David Mayerich

STIM Laboratory, University of Houston

-

Linear least squares fitting:

-

the model function is linear in terms of the parameters (\(a, b, \cdots\))

-

the functions \(y_1(x), y_2(x), \cdots\) do not have to be linear - only the coefficients

-

why would this be useful?

-

the cost function is quadratic: there is only one minimum

-

Designing a Cost Function for a Line

-

The variance of the difference between \(N\) measured points and \(y(x)\) is:

David Mayerich

STIM Laboratory, University of Houston

-

Create a cost function \(K\):

-

It doesn't matter if we minimize the variance, or \(N\) times the variance

(the minimum values have the same \(x\) coordinates) -

\(K\) is differentiable and quadratic: it only has one global minimum

Find the Minimum of the Cost Function

-

Since \(K\) is a quadratic function, there is only one minimum characterized by:

David Mayerich

STIM Laboratory, University of Houston

-

Find the set of linear equations for the optimal \(a\) and \(b\):

Find the Minimum of the Cost Function

-

This leaves us with two linear equations to solve:

David Mayerich

STIM Laboratory, University of Houston

Solve the Linear System

David Mayerich

STIM Laboratory, University of Houston

Stability

-

The determinant of a \(2\times 2\) matrix \(\mathbf{M}\) is:

David Mayerich

STIM Laboratory, University of Houston

-

The matrix used in linear least squares is:

-

So the determinant is given by:

-

Since the mean of \(\mathbf{x}\) is:

-

The determinant can be simplified to:

-

The determinant is zero when \(\mu(\mathbf{x}^2)=\mu^2(\mathbf{x})\)

when all \(x_i\) values are identical