Testing for data scientists

Trey Causey

@treycausey

treycausey.com

slides.com/treycausey/pydata2015

whoami

@treycausey

- Day

- Data scientist at Dato

- Nights/weekends

- Sports analytics fan & consultant

- Blog at thespread.us

Today

- Why testing for data scientists?

- Kinds of tests

- Quick review of unit testing

- Unique problems for data scientists

- Promising Python packages

Life of a data scientist

Flickr: michaelmattiphotography

Flickr: sukiweb

Work looks like this

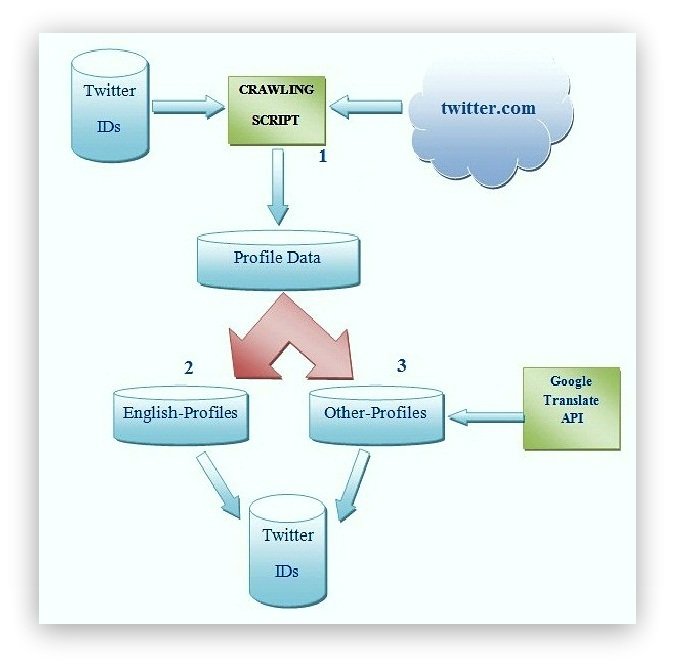

http://www3.cs.stonybrook.edu/~aychakrabort/

Data is messy...

... so is your code

Assumptions at every step.

Software development skills for data scientists

treycausey.com/software_dev_skills.html

- Writing modular, reusable code

- Documentation

- Version control

- Testing

- Logging

What is testing?

Ned Batchelder

"Getting Started Testing"

PyCon 2014

When to test?

- Extract

- Transform

- Model -- the Wild West

- Load

- Repeat

Testing helps you:

- Find bugs

- Check your assumptions (by making them explicit)

- Write simpler code

- Work with others

- Be surprised less

Most relevant kinds of tests

(for data scientists)

- Unit tests

- Regression (why?!?) tests

- Integration tests

Unit tests

- Test one "unit" of code

- No dependencies on other code you've written

- Don't require access to databases, APIs, etc.
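A minimal sketch of what "no access to databases" can look like in practice, using the standard library's unittest.mock. The function `average_order_value` and the client's `fetch_orders()` method are hypothetical names invented for this illustration.

```python
from unittest.mock import Mock


def average_order_value(client):
    """Average the 'total' field of the orders returned by a client.

    `client` is a hypothetical object whose fetch_orders() method
    returns a list of dicts, each with a numeric 'total' key.
    """
    totals = [order["total"] for order in client.fetch_orders()]
    return sum(totals) / len(totals)


def test_average_order_value():
    # Stand in for the real database client so the test needs no connection.
    fake_client = Mock()
    fake_client.fetch_orders.return_value = [{"total": 10.0}, {"total": 30.0}]

    assert average_order_value(fake_client) == 20.0
    # The unit under test should have queried the client exactly once.
    fake_client.fetch_orders.assert_called_once_with()
```

The test exercises only the averaging logic; whether the real client returns what we expect is a question for an integration test.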

The standard Python unit testing landscape

- py.test

- unittest, unittest2

- nose

- fixtures

- mock

A simple function...

... what could go wrong?

def mean(values):
    return sum(values) / len(values)

A simple test

import pytest

def test_mean():
    assert mean([1, 2, 3, 4, 5]) == 3
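A few more cases one might add for `mean`, as a sketch. The choice of cases is an assumption, not taken from the slides: a non-integer result (which Python 2's integer division would truncate) and an empty input.

```python
def mean(values):
    return sum(values) / len(values)


def test_mean_of_integers():
    assert mean([1, 2, 3, 4, 5]) == 3


def test_mean_is_not_truncated():
    # Under Python 2 without `from __future__ import division`,
    # 3 / 2 evaluates to 1 and this test fails.
    assert mean([1, 2]) == 1.5


def test_mean_of_empty_list_raises():
    # len([]) is 0, so the division raises ZeroDivisionError.
    # With pytest installed, `with pytest.raises(ZeroDivisionError):`
    # expresses the same check more compactly.
    try:
        mean([])
    except ZeroDivisionError:
        pass
    else:
        raise AssertionError("expected ZeroDivisionError for empty input")
```

pytest collects functions whose names start with `test_`, so running `py.test` in the directory containing this file is enough to execute all three.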

Why py.test?

- Less boilerplate

- Fewer classes

- Gets you testing quickly

- Easy to interpret errors

When & what to test?

- When you change code, add a test.

- Test the outcome, not the implementation

- When you find a bug, add a test.

- Help identify complexity

- Don't test code that's already tested!

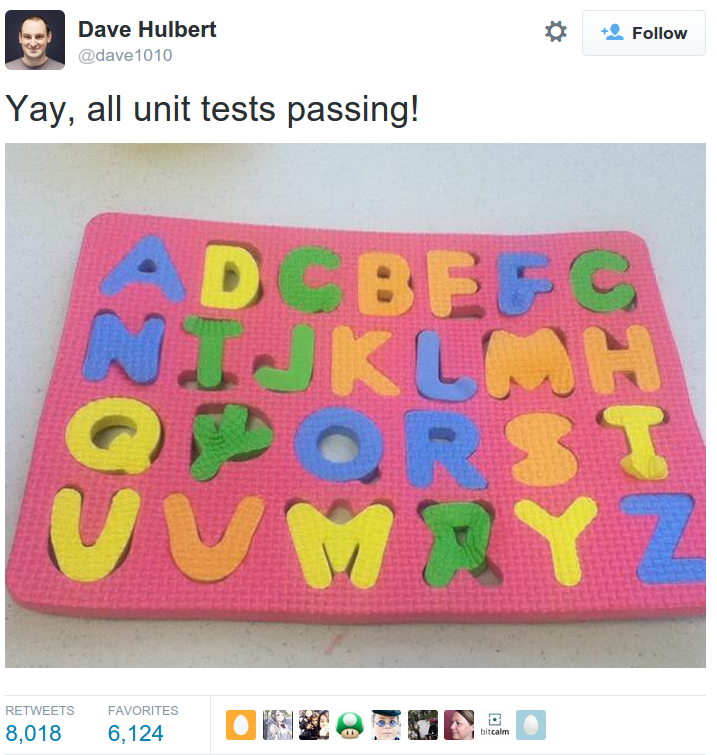

Test-driven development?

Write failing tests first, then fix the code until the tests pass.

Testing for data science can be a little different...

... deterministic answers may not exist

Overfitting

Laziness (not the good kind)

- Extract data once, build many models

- Data is representative of the future

- Using specific samples to spot-check

Better ways to test

Test properties, not specific values

Make assumptions about data shape & type

Test probabilistically
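A rough sketch of the first two ideas using only the standard library. The function `rescale` is a hypothetical example, and the properties checked (same length, float output, values in [0, 1], order preserved) are one possible set, chosen for illustration.

```python
import random


def rescale(values):
    """Min-max scale a list of numbers into [0, 1] (hypothetical example)."""
    lo, hi = min(values), max(values)
    if lo == hi:
        # All inputs identical: avoid dividing by zero.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def test_rescale_properties():
    rng = random.Random(42)  # fixed seed so failures are reproducible
    for _ in range(200):
        n = rng.randint(1, 50)
        values = [rng.uniform(-1e6, 1e6) for _ in range(n)]
        scaled = rescale(values)

        # Shape: one output per input.
        assert len(scaled) == len(values)
        # Type: every output is a float.
        assert all(isinstance(s, float) for s in scaled)
        # Range: outputs stay inside [0, 1].
        assert all(0.0 <= s <= 1.0 for s in scaled)
        # Order: scaling never reorders the data.
        assert rescale(sorted(values)) == sorted(scaled)
```

None of these assertions names a specific expected output, so the test keeps working when the input data changes.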

Some promising projects

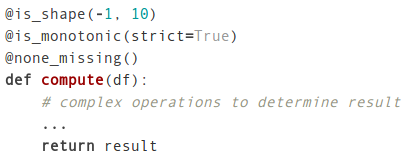

engarde

Hypothesis

Feature Forge

engarde

Tom Augspurger

For "defensive" data analysis

"The raison d’être for engarde is the fact of life that data are messy."

Great for ETL on changing data

- Built with pandas

- Very lightweight

- Use for functions that accept & return a DataFrame

- Just add decorators! (or use DataFrame.pipe)

Usage

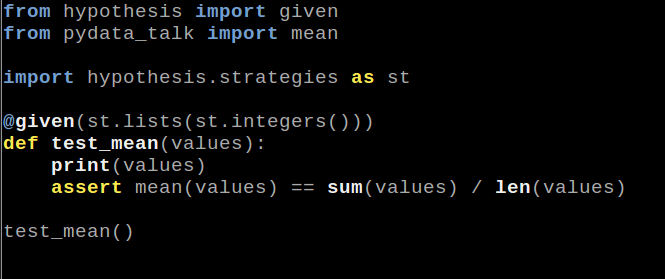
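To illustrate the decorator pattern in text form, here is a hand-rolled sketch. It is not engarde's implementation or API: `require_no_missing` and `add_price_per_unit` are hypothetical names, and the only assumption is that pandas is installed.

```python
import functools

import pandas as pd


def require_no_missing(func):
    """Hypothetical check: fail if the returned DataFrame contains NaN."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        result = func(*args, **kwargs)
        # isnull() flags each cell; any().any() collapses to a single bool.
        assert not result.isnull().any().any(), "unexpected missing values"
        return result
    return wrapper


@require_no_missing
def add_price_per_unit(df):
    """Accept a DataFrame and return a new one with a derived column."""
    out = df.copy()
    out["price_per_unit"] = out["price"] / out["units"]
    return out


clean = pd.DataFrame({"price": [10.0, 20.0], "units": [2, 4]})
result = add_price_per_unit(clean)
```

Because the function accepts and returns a DataFrame, it can also be chained with `clean.pipe(add_price_per_unit)`.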

Hypothesis

David R. MacIver

Property-based testing inspired by Haskell's QuickCheck

How it works

Generate data randomly according to some specs (and be slightly diabolical about it)
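A small sketch of what this looks like, assuming the `hypothesis` package is installed. The property tested (the mean lies between the minimum and maximum) and the integer bounds are choices made for this illustration.

```python
from hypothesis import given, strategies as st


def mean(values):
    return sum(values) / len(values)


# The "spec": non-empty lists of integers in a bounded range.
# Hypothesis generates many such lists, including awkward ones
# (single elements, repeated values, the range boundaries).
@given(st.lists(st.integers(min_value=-10**6, max_value=10**6), min_size=1))
def test_mean_is_bounded_by_min_and_max(values):
    assert min(values) <= mean(values) <= max(values)
```

When a property fails, Hypothesis tries to shrink the input to a small failing example before reporting it.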

Details

- Very flexible

- Plugin support

- Finds corner cases fast

- Ideal for code that will be accepting input "from the wild"

Other features

- Works with existing testing frameworks

- Works with Faker

- Has a datetime plugin

- Tests many Python WTFs

- Experimental NumPy support

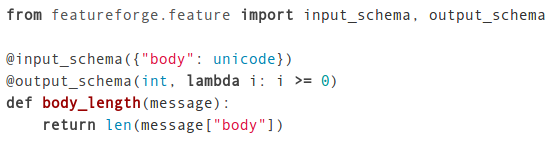

Feature Forge

Daniel Moisset

Javier Mansilla

Rafael Carrascosa

Declare feature schemas & test them

Other features

- Designed with ML and sklearn in mind

- Can build feature creation & testing pipelines

- Supports experiments for testing a variety of features and evaluating many models, storing results to a database

Probabilistic testing

Model testing is the Wild West

engarde: is_monotonic(), within_n_std(), within_set()
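Independent of engarde, one way to test stochastic code is to assert that a summary statistic falls within a tolerance derived from its sampling error. A minimal sketch with the standard library; `simulate_clicks` is a hypothetical function, and the 5-standard-error tolerance is an arbitrary choice.

```python
import math
import random


def simulate_clicks(rate, n, rng):
    """Hypothetical stochastic function: n Bernoulli draws with P(1) = rate."""
    return [1 if rng.random() < rate else 0 for _ in range(n)]


def test_click_rate_is_close_to_expected():
    rng = random.Random(2015)  # seeded, so the test is repeatable
    rate, n = 0.3, 10000

    observed = sum(simulate_clicks(rate, n, rng)) / n

    # Standard error of a sample proportion: sqrt(p * (1 - p) / n).
    standard_error = math.sqrt(rate * (1 - rate) / n)
    # A wide band keeps false alarms rare while still catching gross errors.
    assert abs(observed - rate) < 5 * standard_error
```

Seeding the generator makes the test deterministic; dropping the seed gives a test that is expected to fail very rarely rather than never.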

Don't test algorithms you haven't personally implemented

scikit-learn, SciPy, NumPy have excellent test suites

Testing algorithms you have implemented

pandas, SciPy, NumPy have excellent testing methods

Numerical computing is tricky.

Try to use existing tools as much as possible.
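As one example of leaning on existing tools, a hand-written algorithm can be checked against NumPy's implementation with `numpy.testing.assert_allclose`. The sketch below assumes NumPy is installed; `running_variance` (Welford's online algorithm) is written here purely for illustration.

```python
import numpy as np
from numpy.testing import assert_allclose


def running_variance(values):
    """Population variance in one pass, using Welford's online algorithm."""
    count, mean, m2 = 0, 0.0, 0.0
    for x in values:
        count += 1
        delta = x - mean
        mean += delta / count
        m2 += delta * (x - mean)  # uses the updated mean
    return m2 / count


def test_running_variance_matches_numpy():
    rng = np.random.default_rng(0)
    data = rng.normal(loc=10.0, scale=2.0, size=1000)
    # Compare with a relative tolerance instead of ==, because the two
    # implementations round differently in floating point.
    assert_allclose(running_variance(data), np.var(data), rtol=1e-8)
```

Exact equality between floating-point results is rarely the right assertion; a tolerance makes the intended precision explicit.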

What I didn't cover

- Testing MCMC code

- Follow @tdhopper

- Continuous integration

- See @digitallogic's answer here: http://bit.ly/testing_algorithms

Questions?

@treycausey