NMDP [002 SP_B]

Vidhi Lalchand, Ph.D.

IMU Biosciences

25th Feb, 2026

[203 donors]

Data Semantics & Processing

NMDP 002 SP_B Combined Ratios: Tv5 Panel

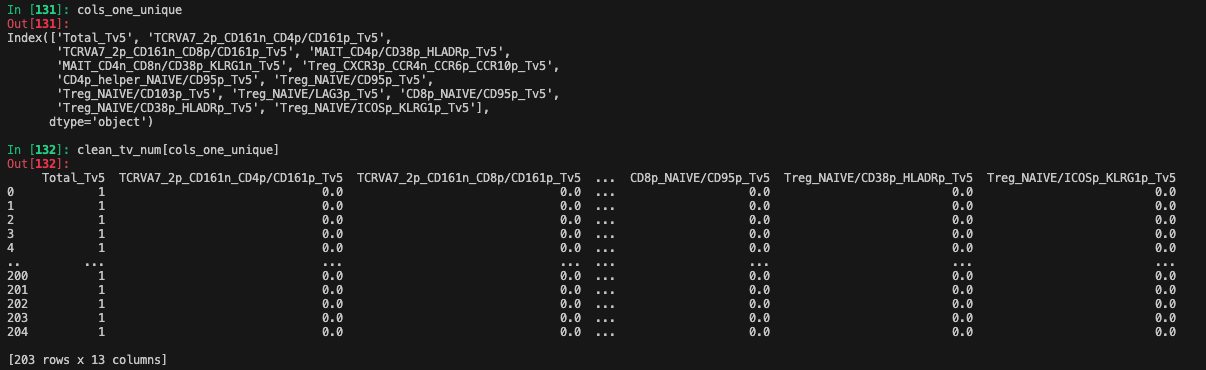

Columns dropped because they are constant (including all-NaN) or have NaNs for a significant fraction of donors:

clean_tv_num = clean_tv_num.loc[:, clean_tv_num.nunique(dropna=False) > 1] # Drop columns with only one unique value

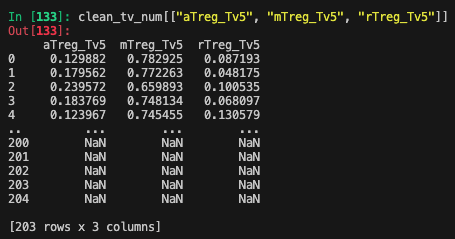

clean_tv_num = clean_tv_num.drop(columns=["aTreg_Tv5", "mTreg_Tv5", "rTreg_Tv5"])  # Treg subsets with NaNs for many donors

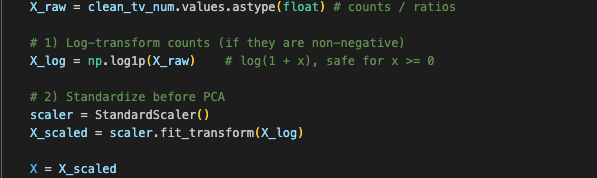
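The Treg columns above are dropped by name. Below is a minimal sketch of a rule-based alternative that applies the constant-column filter and then drops any column whose fraction of missing donors exceeds a cutoff. `drop_sparse_and_constant`, the cutoff `max_nan_frac = 0.2` and the toy column `CD4_CD8_ratio` are hypothetical and are not taken from the analysis.

```python
import numpy as np
import pandas as pd

def drop_sparse_and_constant(df: pd.DataFrame, max_nan_frac: float = 0.2) -> pd.DataFrame:
    """Drop constant columns, then columns whose NaN fraction exceeds max_nan_frac.

    max_nan_frac is a hypothetical cutoff chosen for illustration.
    """
    # Same rule as above: keep columns with >1 unique value (NaN counts as a value).
    df = df.loc[:, df.nunique(dropna=False) > 1]
    # Fraction of donors (rows) with a missing value in each remaining column.
    nan_frac = df.isna().mean()
    return df.loc[:, nan_frac <= max_nan_frac]

# Toy frame: 5 donors, one constant column and one mostly-missing column.
toy = pd.DataFrame({
    "CD4_CD8_ratio": [1.2, 0.8, 1.5, 2.0, 0.9],
    "constant_col": [1.0] * 5,
    "aTreg_Tv5": [np.nan, np.nan, np.nan, 0.3, np.nan],
})
cleaned = drop_sparse_and_constant(toy)
print(list(cleaned.columns))  # ['CD4_CD8_ratio']
```

A data-driven cutoff makes the rule reusable across panels, at the cost of having to justify the chosen fraction.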

Log1p + Standardization

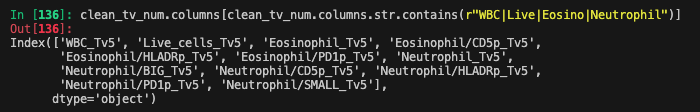
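A minimal NumPy sketch of the log1p + standardization step, assuming the cell ratios are non-negative and right-skewed. The helper names are hypothetical. The sketch fits the column means and standard deviations on training donors only and reuses them for held-out donors. This avoids leaking test statistics into training, but the slide does not state whether the original pipeline did this.

```python
import numpy as np

def fit_log1p_standardizer(X_train, eps=1e-8):
    """Estimate per-column mean/std of log1p(X) on the training donors only."""
    X_log = np.log1p(np.asarray(X_train, dtype=float))  # needs X > -1; ratios are >= 0
    return X_log.mean(axis=0), X_log.std(axis=0) + eps   # eps avoids division by zero

def transform_log1p_standardize(X, mu, sd):
    """Apply log(1 + x) followed by z-scoring with pre-fitted statistics."""
    return (np.log1p(np.asarray(X, dtype=float)) - mu) / sd

# Synthetic, right-skewed "ratios" for illustration only.
rng = np.random.default_rng(0)
X_train = rng.lognormal(mean=0.0, sigma=1.0, size=(40, 3))
X_test = rng.lognormal(mean=0.0, sigma=1.0, size=(10, 3))

mu, sd = fit_log1p_standardizer(X_train)
Z_train = transform_log1p_standardize(X_train, mu, sd)  # ~zero mean, unit variance
Z_test = transform_log1p_standardize(X_test, mu, sd)    # uses training statistics only
```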

Batch effect + Noise columns

Non-Trivial Predictive Signal for Chronic Relapse in Cell Ratios & Clinical Covariates

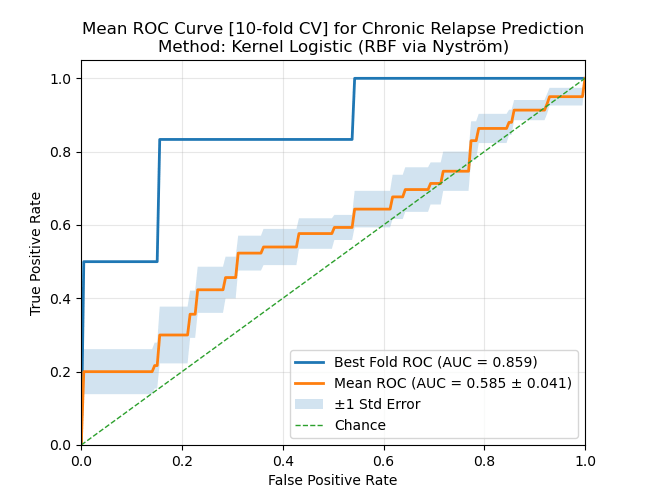

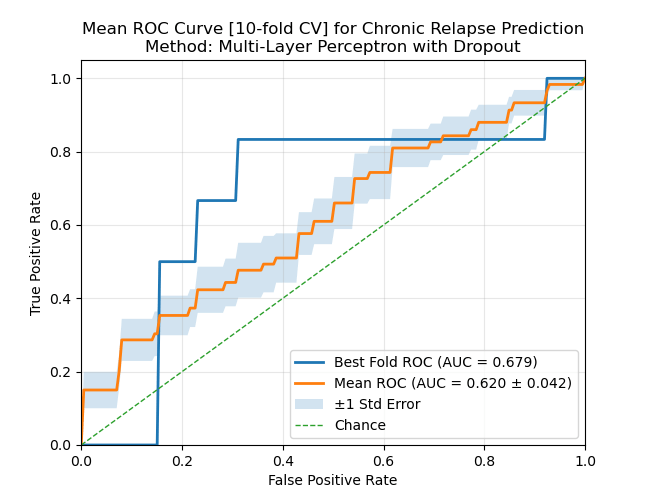

These figures show averaged ROC curves, computed on unseen (held-out) donors, for classifying the binary chronic-relapse outcome from cell ratios, without and with clinical covariates. The shaded band shows the standard error of the mean at each threshold.

No clinical covariates

With clinical covariates
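A minimal NumPy sketch of one common way to build fold-averaged ROC curves with an SEM band. It assumes each held-out fold yields binary labels and predicted probabilities. Each fold's curve is interpolated onto a shared false-positive-rate grid (vertical averaging), and the true-positive rate is averaged across folds. The helpers `roc_points` and `mean_roc_with_sem` are hypothetical; `sklearn.metrics.roc_curve` would normally do the per-fold step. The slides do not specify how the averaging was done.

```python
import numpy as np

def roc_points(y_true, scores):
    """FPR/TPR pairs obtained by sweeping the threshold from high to low scores."""
    y = np.asarray(y_true, dtype=bool)
    s = np.asarray(scores, dtype=float)
    order = np.argsort(-s, kind="mergesort")    # descending score, stable
    y, s = y[order], s[order]
    tps = np.cumsum(y)                          # true positives above each cut
    fps = np.cumsum(~y)                         # false positives above each cut
    last_of_tie = np.r_[s[1:] != s[:-1], True]  # one point per distinct threshold
    tps, fps = tps[last_of_tie], fps[last_of_tie]
    fpr = np.r_[0.0, fps / fps[-1]]             # fold must contain negatives
    tpr = np.r_[0.0, tps / tps[-1]]             # fold must contain positives
    return fpr, tpr

def mean_roc_with_sem(folds, n_grid=101):
    """Vertically average per-fold ROC curves on a shared FPR grid.

    folds: iterable of (y_true, scores) pairs, one per held-out fold.
    """
    fpr_grid = np.linspace(0.0, 1.0, n_grid)
    tprs = []
    for y_true, scores in folds:
        fpr, tpr = roc_points(y_true, scores)
        tpr_i = np.interp(fpr_grid, fpr, tpr)
        tpr_i[0], tpr_i[-1] = 0.0, 1.0          # pin the curve to (0, 0) and (1, 1)
        tprs.append(tpr_i)
    tprs = np.vstack(tprs)
    mean_tpr = tprs.mean(axis=0)
    sem_tpr = tprs.std(axis=0, ddof=1) / np.sqrt(len(tprs))  # needs >= 2 folds
    return fpr_grid, mean_tpr, sem_tpr

# Synthetic folds: 8 positives out of 40 donors, weakly informative scores.
rng = np.random.default_rng(1)
y_fold = np.r_[np.ones(8, dtype=int), np.zeros(32, dtype=int)]
folds = [(y_fold, y_fold + rng.normal(size=40)) for _ in range(5)]
fpr_grid, mean_tpr, sem_tpr = mean_roc_with_sem(folds)
lower, upper = mean_tpr - sem_tpr, mean_tpr + sem_tpr  # shaded band
```

The band can then be drawn with matplotlib's `fill_between(fpr_grid, lower, upper)`.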


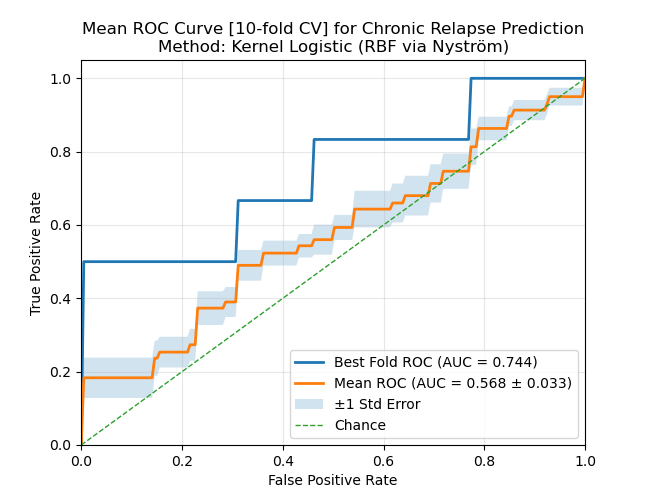

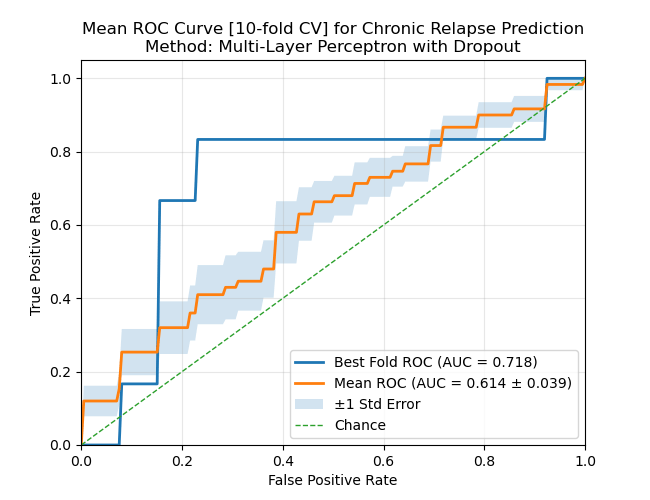


No clinical covariates

With clinical covariates

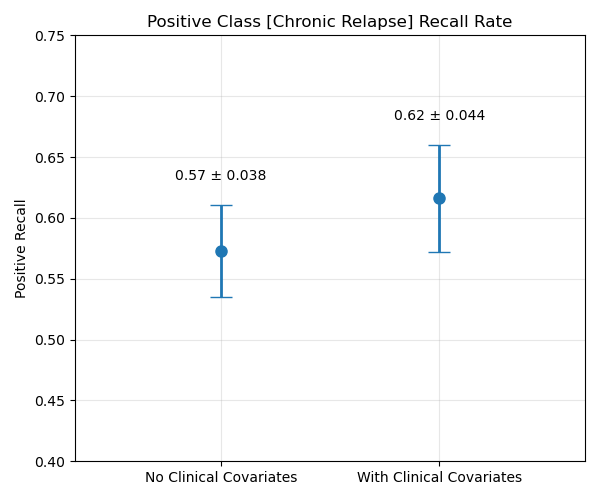

Quantifying the effect of covariates across two independent algorithms

The plot on the left shows the mean recall across 10-fold CV on unseen donors, pooled across both methods (MLP and kernel regression). Although the standard errors overlap, the results suggest that the clinical covariates add modest incremental predictive value.
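Because both settings (with and without covariates) are evaluated on the same folds, overlapping per-condition error bars do not by themselves rule out a consistent within-fold gain. A minimal sketch of the pooled summary plus a fold-matched paired difference follows. The dictionary layout, the helper names and the synthetic recall values are assumptions for illustration, not the analysis behind the plot.

```python
import numpy as np

def mean_sem(values):
    """Mean and standard error of the mean (ddof=1) of a 1-D array."""
    v = np.asarray(values, dtype=float)
    return v.mean(), v.std(ddof=1) / np.sqrt(v.size)

def covariate_effect(recall):
    """Summarise per-fold recall pooled across methods.

    recall: dict mapping (method, with_covariates) -> per-fold recall array,
    using the same fold order for both settings of a given method.
    """
    methods = sorted({method for method, _ in recall})
    without = np.concatenate([np.asarray(recall[(m, False)], float) for m in methods])
    with_cov = np.concatenate([np.asarray(recall[(m, True)], float) for m in methods])
    return {
        "without": mean_sem(without),
        "with": mean_sem(with_cov),
        "paired_diff": mean_sem(with_cov - without),  # fold-matched differences
    }

# Synthetic per-fold recalls for illustration only (not results from this analysis).
rng = np.random.default_rng(0)
recall = {}
for method in ("MLP", "KernelReg"):
    base = rng.uniform(0.4, 0.7, size=10)  # 10 folds
    recall[(method, False)] = base
    recall[(method, True)] = np.clip(base + rng.normal(0.03, 0.05, size=10), 0, 1)

for key, (mean, sem) in covariate_effect(recall).items():
    print(f"{key}: {mean:.3f} ± {sem:.3f} (SEM)")
```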

Non-negligible variability across different axes of variation

- Number of folds in cross-validation

- Decision threshold (the predicted probability above which a donor is classified as positive)

- Random seeds in PyTorch, NumPy, and the CV split

- Initialisation of the model weights
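A minimal sketch of how the seed-related sources of variation listed above can be controlled. The sketch seeds Python, NumPy and (if installed) PyTorch, and builds class-stratified CV folds from an explicit seed so that the held-out donors are reproducible. The helper names, the use of stratification, and the 20-positive label vector are assumptions for illustration. The slides do not say how the splits were made.

```python
import random
import numpy as np

try:
    import torch  # optional: seeded only if PyTorch is installed
except ImportError:
    torch = None

def set_all_seeds(seed: int) -> None:
    """Seed Python's, NumPy's global and (if available) PyTorch's RNGs."""
    random.seed(seed)
    np.random.seed(seed)
    if torch is not None:
        torch.manual_seed(seed)

def stratified_folds(y, k, seed):
    """Split donor indices into k folds, shuffling each class with one seeded generator."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y)
    folds = [[] for _ in range(k)]
    for label in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == label))
        for f, chunk in enumerate(np.array_split(idx, k)):
            folds[f].extend(chunk.tolist())
    return [np.sort(np.asarray(f, dtype=int)) for f in folds]

# Synthetic labels: 203 donors with an illustrative 20 positives.
y = np.r_[np.ones(20, dtype=int), np.zeros(183, dtype=int)]
set_all_seeds(0)
folds_a = stratified_folds(y, k=5, seed=0)
folds_b = stratified_folds(y, k=5, seed=1)  # different seed -> different held-out donors
print([int(y[f].sum()) for f in folds_a])   # [4, 4, 4, 4, 4]
```

Re-running the full CV loop over several split seeds, and reporting the spread, separates split-induced variability from model-induced variability.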

Algorithmic Factors of Variation

Model Architecture Choices

- Model hyperparameters, such as the kernel lengthscale, the number of layers in the MLP, and the dropout rate.
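To illustrate what the kernel lengthscale controls, here is a minimal sketch that assumes a squared-exponential (RBF) kernel. The slides do not name the kernel. The sketch also enumerates a hypothetical MLP grid over depth and dropout; the grid values are illustrative only.

```python
import itertools
import numpy as np

def rbf_kernel(X1, X2, lengthscale=1.0, variance=1.0):
    """Squared-exponential kernel: variance * exp(-||x - x'||^2 / (2 * lengthscale^2))."""
    sq = (np.sum(X1**2, axis=1)[:, None]
          + np.sum(X2**2, axis=1)[None, :]
          - 2.0 * X1 @ X2.T)
    sq = np.maximum(sq, 0.0)  # clip small negatives caused by round-off
    return variance * np.exp(-0.5 * sq / lengthscale**2)

# Longer lengthscales make distant donors look more similar (smoother fits).
rng = np.random.default_rng(0)
X = rng.normal(size=(6, 4))  # synthetic standardized features
K_short = rbf_kernel(X, X, lengthscale=0.5)
K_long = rbf_kernel(X, X, lengthscale=2.0)

# Hypothetical MLP grid over the depth and dropout factors listed above.
mlp_grid = {"n_layers": [1, 2, 3], "dropout": [0.0, 0.2, 0.5]}
mlp_configs = [dict(zip(mlp_grid, v)) for v in itertools.product(*mlp_grid.values())]
print(len(mlp_configs))  # 9
```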

Fold 1/8

/home/vidhi/miniconda3/envs/p1k/lib/python3.10/site-packages/torch/optim/lr_scheduler.py:62: UserWarning: The verbose parameter is deprecated. Please use get_last_lr() to access the learning rate.

warnings.warn(

Epoch 010 | Train Loss: 1.043 | Val Loss: 1.220 | Val Acc: 0.108 | Val Acc Positive: 1.000

Epoch 020 | Train Loss: 1.179 | Val Loss: 1.222 | Val Acc: 0.216 | Val Acc Positive: 1.000

Epoch 030 | Train Loss: 0.867 | Val Loss: 1.225 | Val Acc: 0.243 | Val Acc Positive: 1.000

Epoch 040 | Train Loss: 1.026 | Val Loss: 1.225 | Val Acc: 0.324 | Val Acc Positive: 0.750

Epoch 050 | Train Loss: 0.766 | Val Loss: 1.209 | Val Acc: 0.378 | Val Acc Positive: 0.500

Fold 1 AUC: 0.523

Fold 1 ACC: 0.486

Fold 1 ACC Positive: 0.500

Fold 2/8


Epoch 010 | Train Loss: 1.038 | Val Loss: 1.203 | Val Acc: 0.189 | Val Acc Positive: 1.000

Epoch 020 | Train Loss: 1.028 | Val Loss: 1.207 | Val Acc: 0.243 | Val Acc Positive: 0.750

Epoch 030 | Train Loss: 0.890 | Val Loss: 1.206 | Val Acc: 0.378 | Val Acc Positive: 0.750

Epoch 040 | Train Loss: 0.799 | Val Loss: 1.205 | Val Acc: 0.459 | Val Acc Positive: 0.750

Epoch 050 | Train Loss: 0.621 | Val Loss: 1.200 | Val Acc: 0.568 | Val Acc Positive: 0.750

Fold 2 AUC: 0.621

Fold 2 ACC: 0.568

Fold 2 ACC Positive: 0.500

Fold 3/8


Epoch 010 | Train Loss: 1.024 | Val Loss: 1.258 | Val Acc: 0.194 | Val Acc Positive: 0.750

Epoch 020 | Train Loss: 0.968 | Val Loss: 1.254 | Val Acc: 0.250 | Val Acc Positive: 0.500

Epoch 030 | Train Loss: 1.052 | Val Loss: 1.253 | Val Acc: 0.278 | Val Acc Positive: 0.500

Epoch 040 | Train Loss: 1.199 | Val Loss: 1.253 | Val Acc: 0.278 | Val Acc Positive: 0.500

Epoch 050 | Train Loss: 1.010 | Val Loss: 1.253 | Val Acc: 0.278 | Val Acc Positive: 0.500

Fold 3 AUC: 0.453

Fold 3 ACC: 0.278

Fold 3 ACC Positive: 0.500

Fold 4/8


Epoch 010 | Train Loss: 1.153 | Val Loss: 1.217 | Val Acc: 0.306 | Val Acc Positive: 1.000

Epoch 020 | Train Loss: 1.134 | Val Loss: 1.208 | Val Acc: 0.500 | Val Acc Positive: 1.000

Epoch 030 | Train Loss: 1.187 | Val Loss: 1.199 | Val Acc: 0.556 | Val Acc Positive: 1.000

Epoch 040 | Train Loss: 1.013 | Val Loss: 1.187 | Val Acc: 0.611 | Val Acc Positive: 1.000

Epoch 050 | Train Loss: 0.939 | Val Loss: 1.178 | Val Acc: 0.611 | Val Acc Positive: 1.000

Fold 4 AUC: 0.742

Fold 4 ACC: 0.611

Fold 4 ACC Positive: 1.000

Fold 5/8


Epoch 010 | Train Loss: 1.043 | Val Loss: 1.235 | Val Acc: 0.111 | Val Acc Positive: 1.000

Epoch 020 | Train Loss: 0.916 | Val Loss: 1.253 | Val Acc: 0.111 | Val Acc Positive: 1.000

Epoch 030 | Train Loss: 0.855 | Val Loss: 1.260 | Val Acc: 0.167 | Val Acc Positive: 1.000

Epoch 040 | Train Loss: 0.936 | Val Loss: 1.267 | Val Acc: 0.167 | Val Acc Positive: 1.000

Epoch 050 | Train Loss: 0.785 | Val Loss: 1.286 | Val Acc: 0.194 | Val Acc Positive: 1.000

Fold 5 AUC: 0.359

Fold 5 ACC: 0.194

Fold 5 ACC Positive: 0.750

MLP_w_Dropout (5-fold CV) - Mean of all folds

Threshold: 0.47 - ACC: 0.427 ± 0.163

Threshold: 0.47 - ACC Positive: 0.650 ± 0.200
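The ± values in this summary appear to be the population standard deviation across the five folds (NumPy's default `ddof=0`), not the standard error used for the ROC bands. Recomputing from the logged per-fold values gives exactly 0.650 ± 0.200 for ACC Positive. For ACC it gives 0.427 ± 0.164, and the last-digit difference is consistent with the per-fold values being rounded in the log. The sketch below reproduces this aggregation. It also adds a hypothetical `threshold_metrics` helper, which assumes that "ACC Positive" means recall on the positive (relapse) class and that a probability equal to the threshold counts as positive.

```python
import numpy as np

def threshold_metrics(y_true, probs, threshold=0.47):
    """Accuracy and positive-class recall ("ACC Positive", assumed) at a fixed threshold."""
    y_true = np.asarray(y_true, dtype=int)
    y_pred = (np.asarray(probs, dtype=float) >= threshold).astype(int)
    acc = float(np.mean(y_pred == y_true))
    pos = y_true == 1
    recall = float(np.mean(y_pred[pos] == 1)) if pos.any() else float("nan")
    return acc, recall

# Per-fold values copied from the log above (folds 1-5, rounded to 3 d.p.).
fold_acc = np.array([0.486, 0.568, 0.278, 0.611, 0.194])
fold_acc_pos = np.array([0.500, 0.500, 0.500, 1.000, 0.750])

summary = {}
for name, vals in [("ACC", fold_acc), ("ACC Positive", fold_acc_pos)]:
    sd = vals.std()                              # population SD (ddof=0)
    sem = vals.std(ddof=1) / np.sqrt(vals.size)  # standard error of the mean
    summary[name] = (vals.mean(), sd, sem)
    print(f"{name}: {vals.mean():.3f} ± {sd:.3f} (SD), ± {sem:.3f} (SEM)")
```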