Learning From Out-of-Distribution Data in Robotics

Feb 3, 2026

Adam Wei

[Figure: in-distribution data and out-of-distribution sources (Open-X, simulation) feed a learning algorithm that outputs a policy \(\pi(a \mid o, l)\).]

What are principled algorithms for learning from out-of-distribution data sources?

Collaboration with Giannis Daras

Our approach: Ambient Diffusion Policy

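[Figure: clean, in-distribution data \(p^{clean}\) and corrupt, out-of-distribution data \(p^{corrupt}\).]

Diffusion Policy

[Figure: noise-level axis from \(\sigma=0\) to \(\sigma=1\); only "clean" data is used at every noise level.]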

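For all \(\sigma \in [0,1]\): train \(h_\theta(A_\sigma, O, \sigma) \approx \mathbb{E}[A_0 \mid A_\sigma, O]\) on clean data only, where \(A_\sigma\) is the action noised to level \(\sigma\) and \(O\) is the observation.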
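The training objective above is a regression onto the clean action. As a minimal sketch (not the talk's implementation), assume a scalar Gaussian action \(A_0 \sim \mathcal{N}(0, 1)\), drop the observation \(O\), and use a hypothetical schedule \(A_\sigma = (1-\sigma)A_0 + \sigma\varepsilon\), which matches the slide's convention that \(\sigma=0\) is clean and \(\sigma=1\) is pure noise. Under these assumptions \(\mathbb{E}[A_0 \mid A_\sigma]\) is linear in \(A_\sigma\), so the least-squares fit of the denoising loss can be checked against its closed form.

```python
import numpy as np


def posterior_mean_coeff(sigma: float, s: float = 1.0) -> float:
    """Closed-form c with E[A_0 | A_sigma] = c * A_sigma, for A_0 ~ N(0, s^2)
    and the assumed schedule A_sigma = (1 - sigma) * A_0 + sigma * eps."""
    alpha = 1.0 - sigma
    return alpha * s**2 / (alpha**2 * s**2 + sigma**2)


def fit_linear_denoiser(sigma: float, n: int = 200_000, seed: int = 0) -> float:
    """Minimize the empirical denoising loss mean((w * A_sigma - A_0)^2) over w."""
    rng = np.random.default_rng(seed)
    a0 = rng.standard_normal(n)   # clean actions A_0 ~ N(0, 1)
    eps = rng.standard_normal(n)  # Gaussian noise
    a_sigma = (1.0 - sigma) * a0 + sigma * eps
    # One-parameter least squares has a closed-form minimizer.
    return float(np.dot(a_sigma, a0) / np.dot(a_sigma, a_sigma))


for sigma in (0.1, 0.5, 0.9):
    print(f"sigma={sigma}: fitted w={fit_linear_denoiser(sigma):.3f}, "
          f"E[A_0 | A_sigma] coefficient={posterior_mean_coeff(sigma):.3f}")
```

With a neural network in place of the single weight \(w\), the same loss drives \(h_\theta\) toward the conditional expectation at every noise level, which is the property the slide relies on.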

Co-train

[Figure: noise-level axis from \(\sigma=0\) to \(\sigma=1\); both "corrupt" and "clean" data are used at every noise level.]

Policy learns to sample from a mixture of \(p^{clean}\) and \(p^{corrupt}\)

For all \(\sigma \in [0,1]\): train \(h_\theta(A_\sigma, O, \sigma) \approx \mathbb{E}[A_0 \mid A_\sigma, O]\) on clean and corrupt data alike.
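To see why co-training imitates the mixture, consider the exact MSE-optimal denoiser for a finite dataset. This sketch rests on assumptions not taken from the talk: 1-D actions, a variance-exploding schedule \(A_\sigma = A_0 + \sigma\varepsilon\), 50 hypothetical clean actions at \(+1\), and 950 hypothetical corrupt actions at \(-1\). At low noise the denoiser snaps to the nearest training action, whichever dataset it came from.

```python
import numpy as np


def empirical_denoiser(a_sigma, data, sigma):
    """Exact E[A_0 | A_sigma = a_sigma] when A_0 is uniform over `data` and
    A_sigma = A_0 + sigma * eps (variance-exploding schedule, assumed here)."""
    logits = -((a_sigma - data) ** 2) / (2.0 * sigma**2)
    w = np.exp(logits - logits.max())  # numerically stable softmax weights
    w /= w.sum()
    return float(np.dot(w, data))


clean = np.full(50, 1.0)       # hypothetical in-distribution actions
corrupt = np.full(950, -1.0)   # hypothetical out-of-distribution actions
cotrain = np.concatenate([clean, corrupt])

# Fraction of the co-training distribution that comes from p^corrupt.
mix_weight_corrupt = len(corrupt) / len(cotrain)
print(mix_weight_corrupt)

# Near the corrupt mode, the co-trained denoiser reproduces corrupt actions...
print(empirical_denoiser(-0.9, cotrain, sigma=0.05))
# ...whereas a denoiser trained on clean data alone pulls toward +1.
print(empirical_denoiser(-0.9, clean, sigma=0.05))
```

Because the co-trained model targets the mixture, 95% of its probability mass in this toy example sits on the corrupt mode.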

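Ambient

[Figure: noise-level axis from \(\sigma=0\) to \(\sigma=1\) with threshold \(\sigma_{min}\); "clean" data is used at every noise level, corrupt data only for \(\sigma > \sigma_{min}\).]

- Low noise (\(\sigma \leq \sigma_{min}\)): \(p^{clean}_\sigma \not\approx p^{corrupt}_\sigma\)

- High noise (\(\sigma > \sigma_{min}\)): \(p^{clean}_\sigma \approx p^{corrupt}_\sigma\)

Here \(p_\sigma\) is the distribution of the noised action \(A_\sigma\). This is a consequence of the data processing inequality: adding noise cannot increase an f-divergence such as KL between the two distributions, so the gap between \(p^{clean}_\sigma\) and \(p^{corrupt}_\sigma\) can only shrink as \(\sigma\) grows.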
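A worked example of this effect, assuming (for illustration only) 1-D Gaussian action distributions \(p^{clean} = \mathcal{N}(0, 0.2^2)\) and \(p^{corrupt} = \mathcal{N}(0.3, 0.5^2)\) and additive noise \(A_\sigma = A_0 + \sigma\varepsilon\). Both noised distributions stay Gaussian, so their KL divergence has a closed form and can be tracked across noise levels.

```python
import math


def gaussian_kl(m1: float, v1: float, m2: float, v2: float) -> float:
    """KL(N(m1, v1) || N(m2, v2)) for 1-D Gaussians given means and variances."""
    return 0.5 * (math.log(v2 / v1) + (v1 + (m1 - m2) ** 2) / v2 - 1.0)


def noised_kl(sigma: float) -> float:
    """KL between clean and corrupt action distributions after adding N(0, sigma^2)."""
    m_clean, v_clean = 0.0, 0.2**2      # hypothetical smooth, in-distribution actions
    m_corrupt, v_corrupt = 0.3, 0.5**2  # hypothetical noisy, out-of-distribution actions
    # Adding independent Gaussian noise adds sigma^2 to each variance.
    return gaussian_kl(m_clean, v_clean + sigma**2, m_corrupt, v_corrupt + sigma**2)


sigmas = [0.0, 0.1, 0.5, 1.0, 2.0]
kls = [noised_kl(s) for s in sigmas]
for s, kl in zip(sigmas, kls):
    print(f"sigma={s}: KL={kl:.4f}")
```

The divergence never increases with \(\sigma\), as the data processing inequality guarantees; in this example it falls by more than an order of magnitude between \(\sigma=0\) and \(\sigma=2\).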

For all \(\sigma \in [0,1]\): train \(h_\theta(A_\sigma, O, \sigma) \approx \mathbb{E}[A_0 \mid A_\sigma, O]\) on clean data; use corrupt data only for \(\sigma > \sigma_{min}\).
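One way to implement this rule is to mask the per-sample loss. The sketch below is hypothetical: the helper names, the masking strategy (an alternative is to sample \(\sigma\) for corrupt samples only from \((\sigma_{min}, 1]\)), and the interpolation schedule \(A_\sigma = (1-\sigma)A_0 + \sigma\varepsilon\) are assumptions, and `h` stands in for any denoiser network.

```python
import numpy as np


def ambient_mask(sigma, is_clean, sigma_min):
    """True where a sample may contribute to the loss: clean samples at every
    noise level, corrupt samples only when sigma > sigma_min."""
    return is_clean | (sigma > sigma_min)


def ambient_loss(h, a0, obs, is_clean, sigma_min, rng):
    """Masked x0-prediction loss for one batch.

    h:        denoiser h(a_sigma, obs, sigma) -> predicted A_0 (hypothetical)
    a0:       (batch, action_dim) actions from clean or corrupt sources
    obs:      observations passed through to h
    is_clean: (batch,) bool, True for in-distribution samples
    """
    batch = a0.shape[0]
    sigma = rng.uniform(0.0, 1.0, size=batch)
    eps = rng.standard_normal(a0.shape)
    # Assumed schedule: sigma = 0 is the data, sigma = 1 is pure noise.
    a_sigma = (1.0 - sigma)[:, None] * a0 + sigma[:, None] * eps
    per_sample = np.mean((h(a_sigma, obs, sigma) - a0) ** 2, axis=1)
    mask = ambient_mask(sigma, is_clean, sigma_min)
    # Average only over the samples allowed at their sampled noise level.
    return float(per_sample[mask].sum() / max(int(mask.sum()), 1))


def zero_denoiser(a_sigma, obs, sigma):
    """Trivial stand-in for a trained network: always predicts zero."""
    return np.zeros_like(a_sigma)


rng = np.random.default_rng(0)
a0 = rng.standard_normal((8, 2))
is_clean = np.array([True] * 4 + [False] * 4)
print(ambient_loss(zero_denoiser, a0, None, is_clean, sigma_min=0.5, rng=rng))
```

Masking keeps a single training loop for both data sources; the only Ambient-specific piece is the per-sample rule in `ambient_mask`.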

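Ambient + Locality

[Figure: noise-level axis from \(\sigma=0\) to \(\sigma=1\) with thresholds \(\sigma_{max}\) and \(\sigma_{min}\); "clean" data is used at every noise level, and corrupt data is used both for \(\sigma > \sigma_{min}\) and for \(\sigma \leq \sigma_{max}\).]

At low noise levels, action denoising only depends on local actions ("locality"), so for \(\sigma \leq \sigma_{max}\): \(p^{clean}_\sigma \approx p^{corrupt}_\sigma\) as far as the denoiser is concerned.

For all \(\sigma \in [0,1]\): train \(h_\theta(A_\sigma, O, \sigma) \approx \mathbb{E}[A_0 \mid A_\sigma, O]\) on clean data; use corrupt data only for \(\sigma > \sigma_{min}\) or \(\sigma \leq \sigma_{max}\).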
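A toy model makes the locality claim concrete. Assume (this is not from the talk) that a 1-D action sequence of 32 steps is Gaussian with an exponential, Ornstein-Uhlenbeck-style covariance \(\Sigma\), and use additive noise \(A_\sigma = A_0 + \sigma\varepsilon\). The optimal denoiser is then linear, \(\mathbb{E}[A_0 \mid A_\sigma] = W A_\sigma\) with \(W = \Sigma(\Sigma + \sigma^2 I)^{-1}\), and the sketch measures how much of one row of \(W\) falls within two time steps of the action being denoised.

```python
import numpy as np


def ou_covariance(horizon: int, length_scale: float) -> np.ndarray:
    """Exponential covariance over time steps: an assumed prior for smooth 1-D actions."""
    t = np.arange(horizon)
    return np.exp(-np.abs(t[:, None] - t[None, :]) / length_scale)


def optimal_linear_denoiser(cov: np.ndarray, sigma: float) -> np.ndarray:
    """W with E[A_0 | A_sigma] = W @ A_sigma for A_0 ~ N(0, cov), A_sigma = A_0 + sigma * eps."""
    n = cov.shape[0]
    # W = cov (cov + sigma^2 I)^{-1}; use a linear solve instead of an explicit inverse.
    return np.linalg.solve(cov + sigma**2 * np.eye(n), cov).T


def local_mass(W: np.ndarray, row: int, window: int) -> float:
    """Fraction of |W[row]| that falls within +/- `window` time steps of `row`."""
    weights = np.abs(W[row])
    lo, hi = max(row - window, 0), min(row + window + 1, W.shape[1])
    return float(weights[lo:hi].sum() / weights.sum())


cov = ou_covariance(horizon=32, length_scale=5.0)
for sigma in (0.01, 0.1, 1.0, 10.0):
    W = optimal_linear_denoiser(cov, sigma)
    print(f"sigma={sigma}: local mass={local_mass(W, row=16, window=2):.3f}")
```

At small \(\sigma\), \(W\) is close to the identity and nearly all of the weight is local; at large \(\sigma\), each denoised action draws on a much wider window of the sequence.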

Co-train:

Noisy Teleop

Sim2real Gap

Task Mismatch

Ambient:

91%, high jerk

98%, low jerk

93.5%

84.5%

22.7%

93.3%

\(p^{clean}\)

\(p^{corrupt}\)

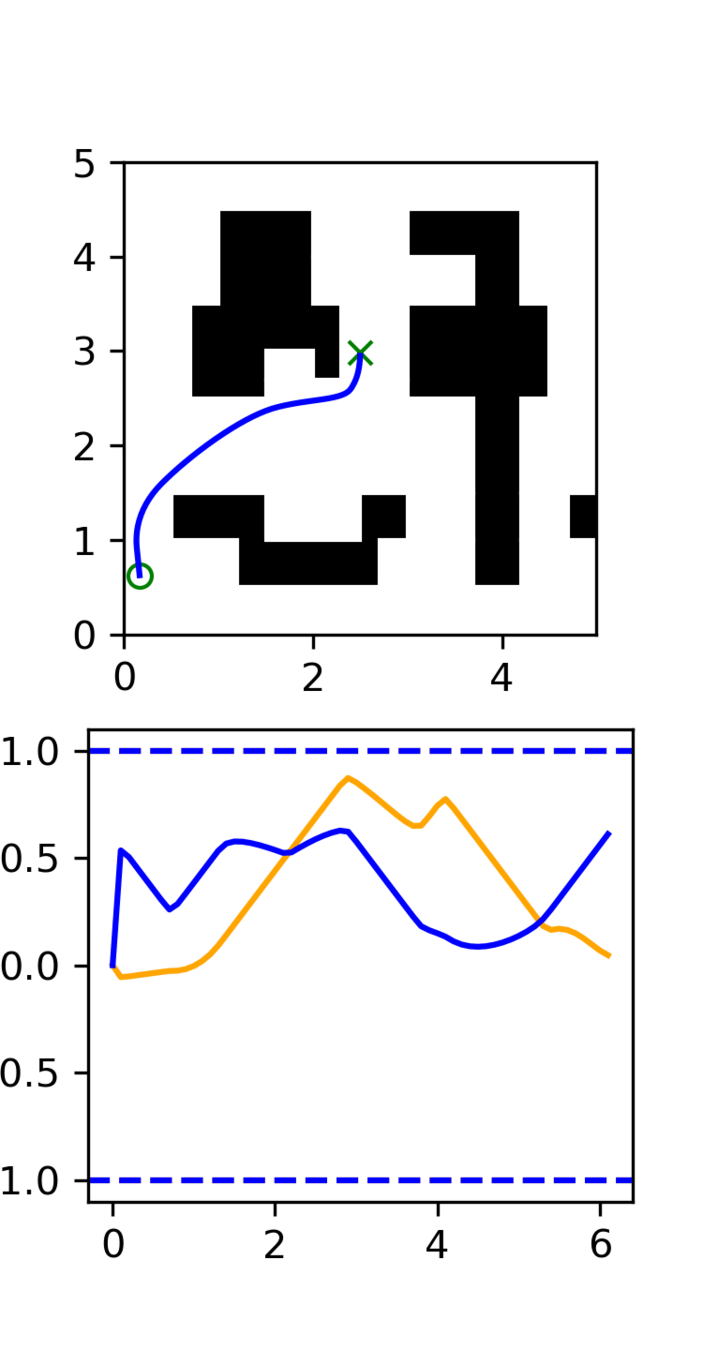

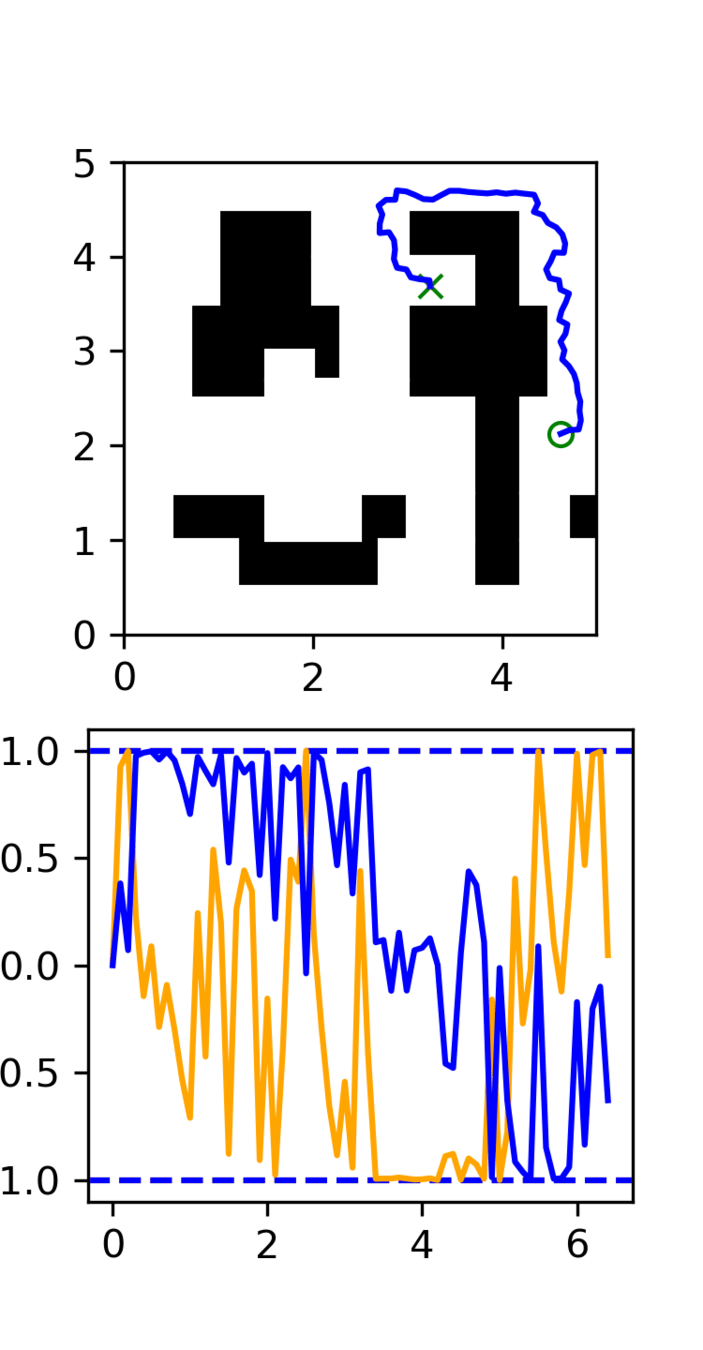

Smooth (GCS)

Non-smooth (RRT)

Sim data

"Real" data

Incorrect logic

Sorts the blocks

Ambient + Locality

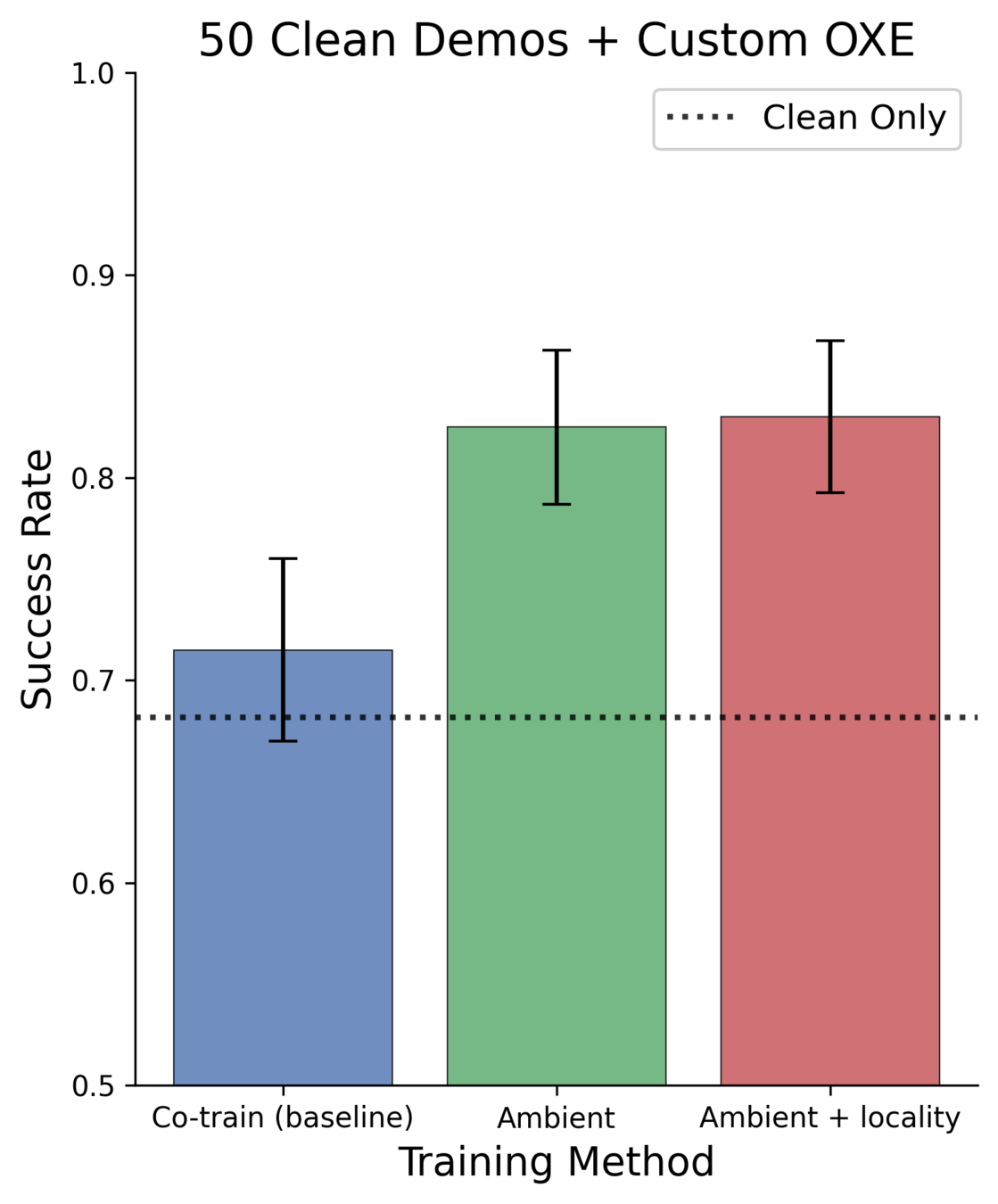

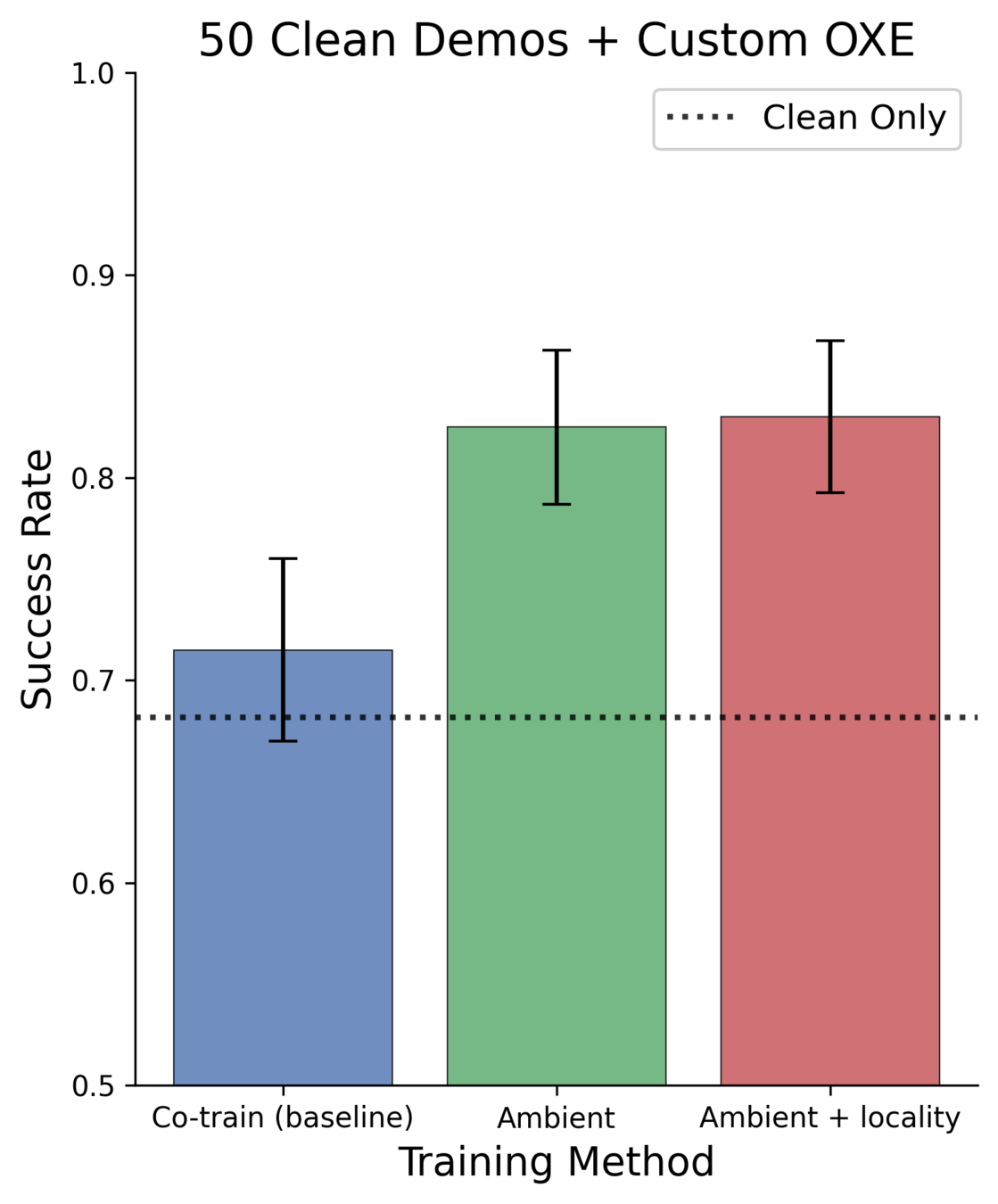

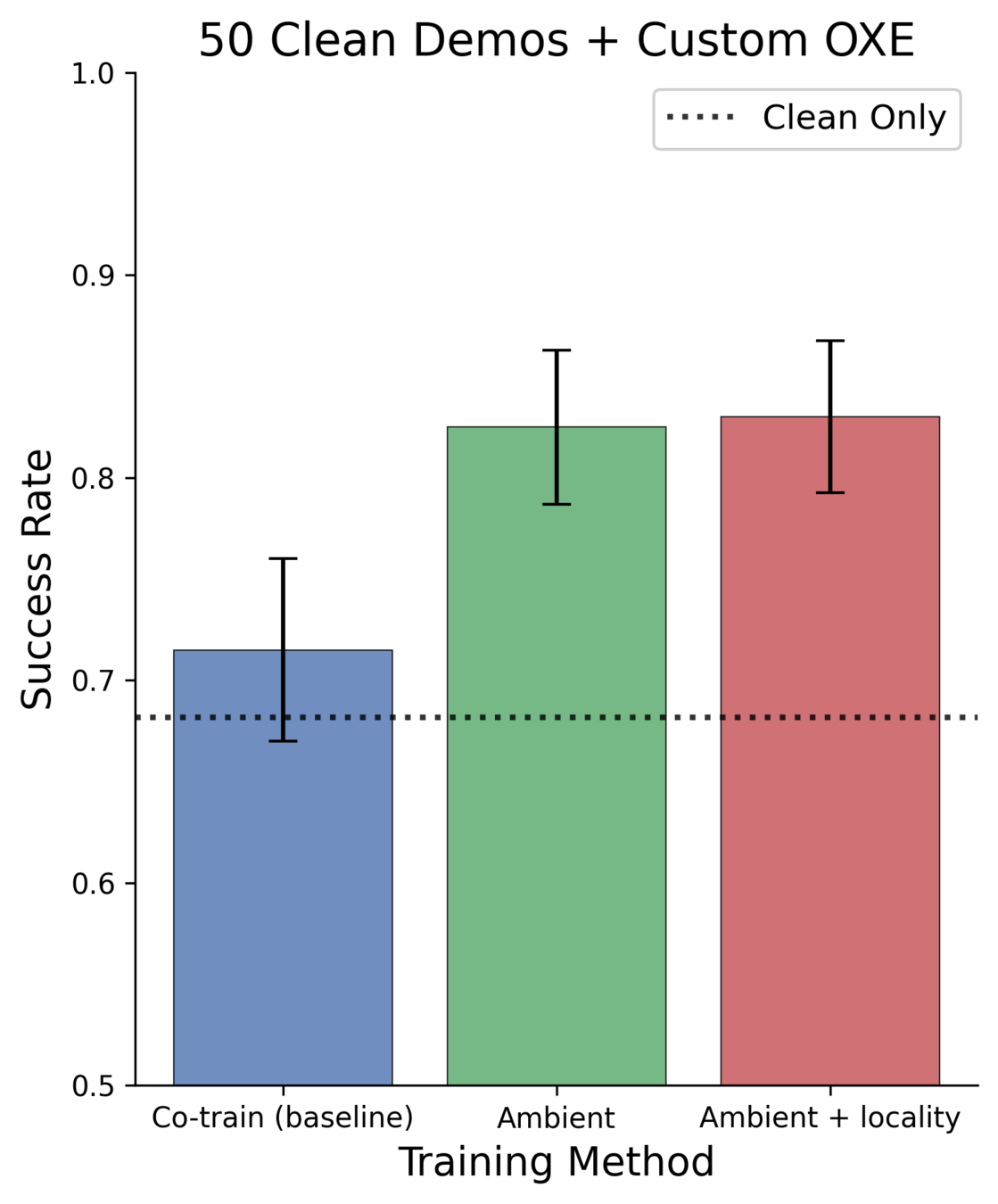

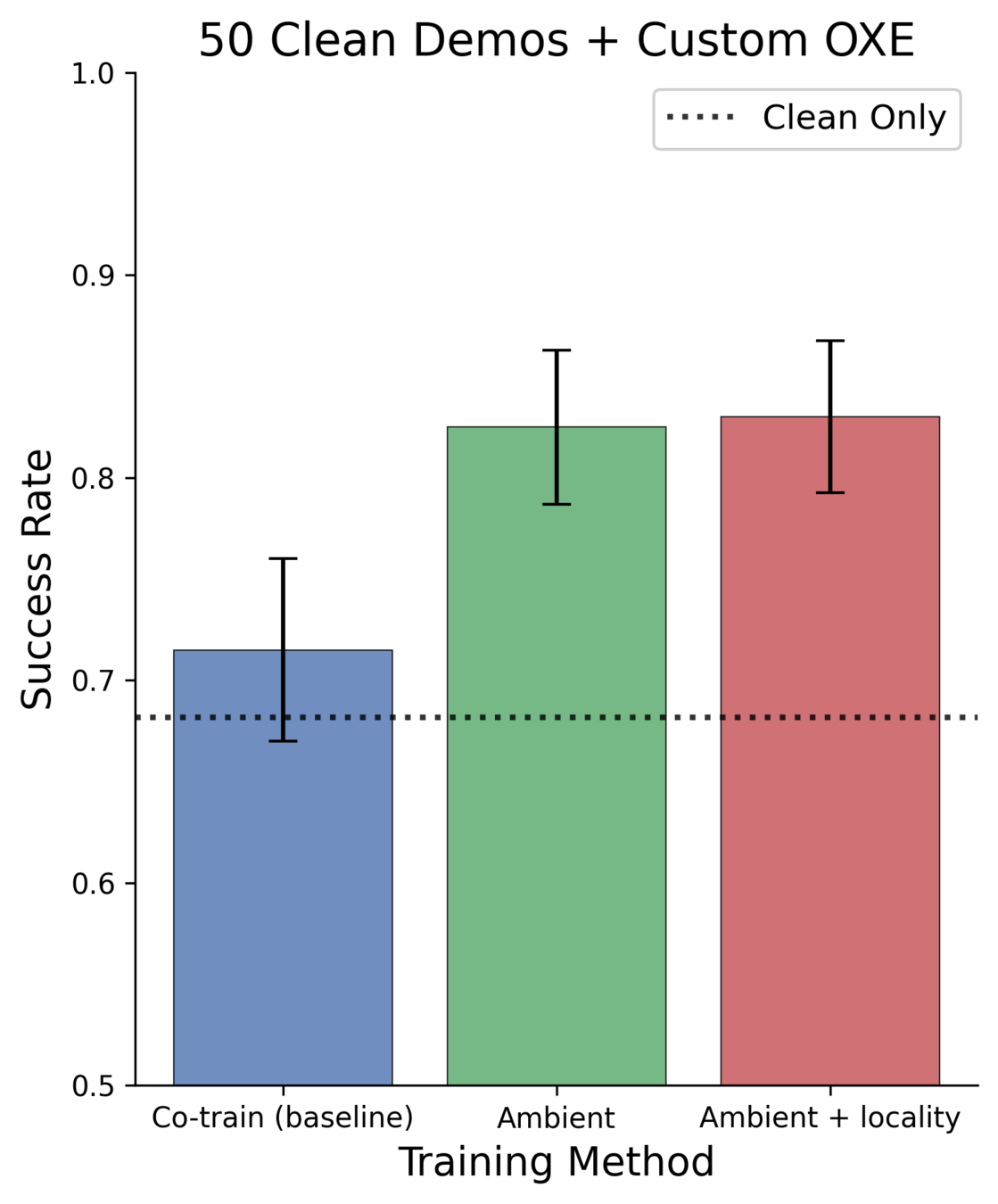

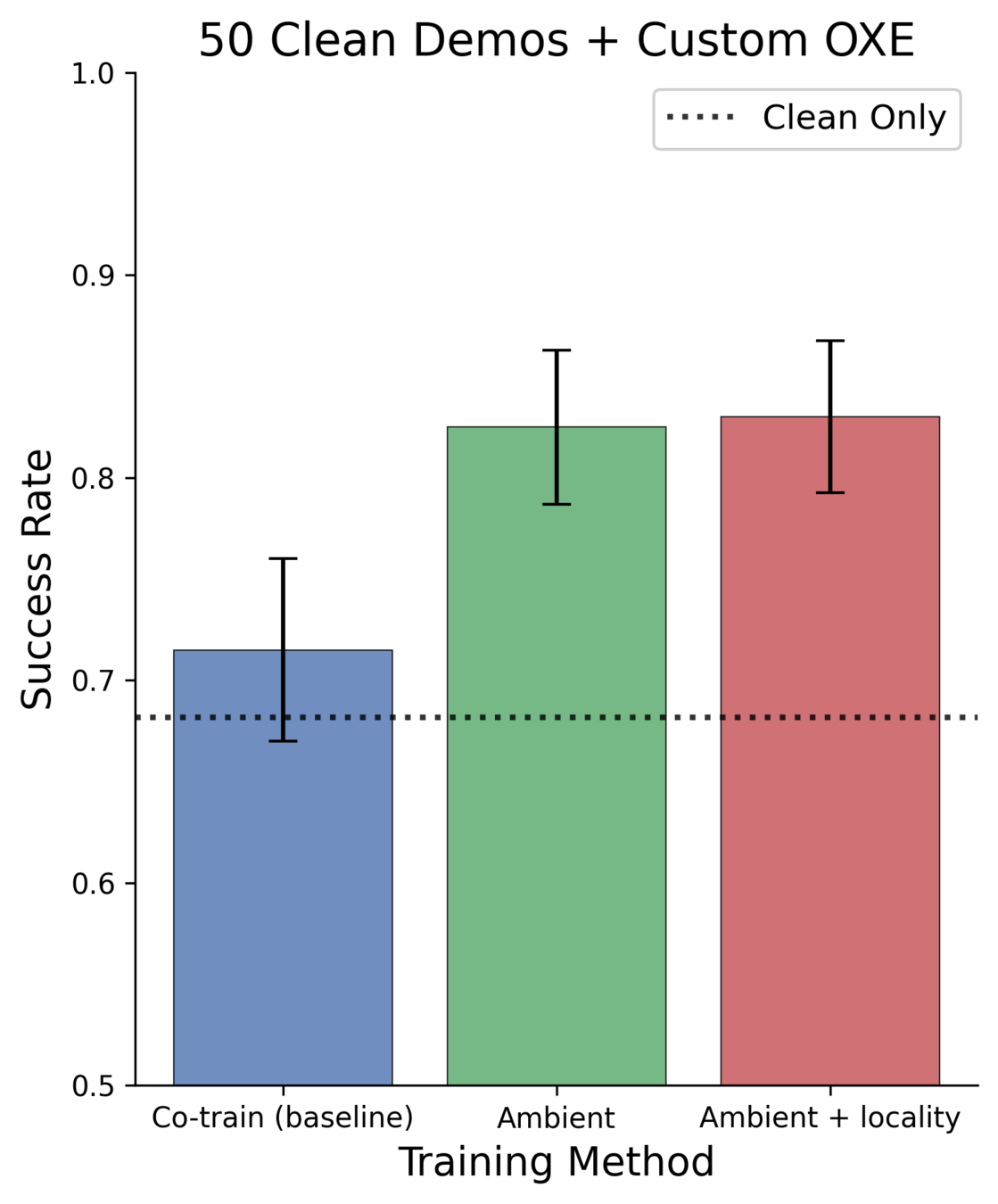

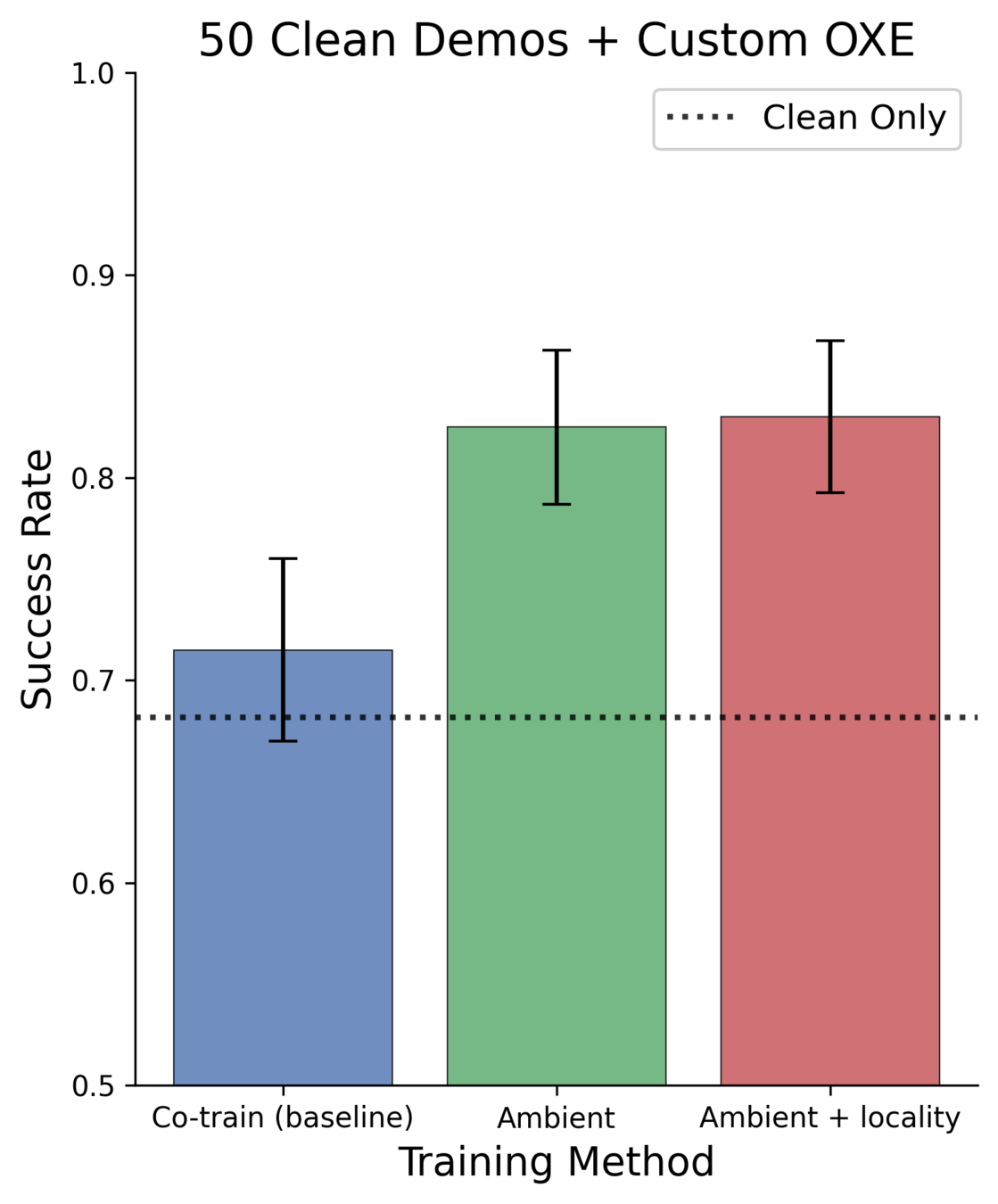

Scaling to Real-World Datasets

Goal: move objects from the table into the drawer

\(\mathcal{D}_{clean}\): 50 in-distribution demos

\(\mathcal{D}_{corrupt}\): Open-X Embodiment

Can we learn from unstructured distribution shifts in large real-world datasets?

Open-X contains:

- cross-embodiment data

- different teleoperators

- sim data!

- mislabeled data

- different tasks, environments, cameras

Open-X Results

More baselines, ablations, dataset combinations, tasks...

...see our upcoming paper :)

Applications

- Sim2real gaps

- Noisy/low-quality teleop

- Task-level mismatch

- Changes in low-level controller

- Embodiment gap

- Camera models, poses, etc.

- Different environments, objects, etc.

Ambient can be used to learn from any distribution shift in robotics

[Figure: in-distribution data alongside out-of-distribution sources such as Open-X and simulation.]


Theory of Diffusion: Ongoing Work

1. Ambient Diffusion

2. Long-context policies

3. Tilting diffusion models with reward models

4. Learning from heterogeneous data sources

5. Causality-informed learning