A cloud-native flow control and degradation protection component for microservices

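01 Microservice stability

02 Sentinel features

03 Core component design

04 Pitfalls we ran into

05 Flow control and degradation best practices

06 Looking ahead: cloud native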

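Microservice stability scenarios

Traffic surges

- A traffic surge drives CPU/load up sharply, so requests can no longer be processed normally
- A sudden hot-selling item causes cache breakdown
- Messages are delivered too fast, so message processing backs up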

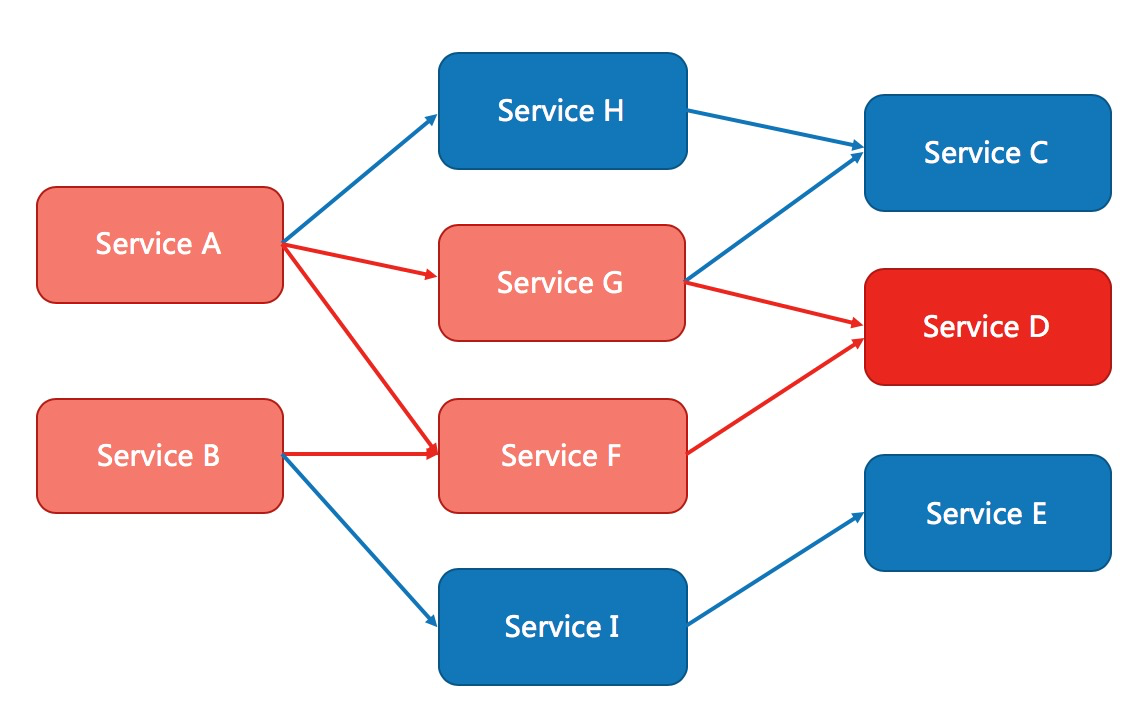

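Unstable services

An unstable downstream service causes RPC calls to time out or throw exceptions, which makes the caller itself unavailable and in turn leads to cascading failure.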


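Core features

- Flow control
- Rate control
- Circuit breaking
- Hot-spot parameter flow control
- System load protection
- Dynamic rule configuration: Etcd, Consul......
- Monitoring logs
- Rule configuration
- Dubbo-go, gRPC, gin, echo
- Custom extensions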

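Core concepts

Resource

- Any service, interface, method, or even an arbitrary piece of code can be a Sentinel resource.

Rule

- Protection rules are defined against the real-time state of a resource, and every rule can be adjusted dynamically.
- The core components (flow control, hot-spot parameters, circuit breaking...) use the rules to judge the state of a resource.

One resource can be guarded by multiple rules.

Workflow: define resources, configure rules, guard.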
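The define-resource, configure-rules, guard workflow can be illustrated with a small standard-library sketch. It does not use the Sentinel API: `Guard`, `Rule`, `LoadRules`, `Entry` and the concurrency-based rule are hypothetical names chosen for illustration, assuming a rule that caps the number of in-flight calls per resource.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// Rule is a hypothetical protection rule: at most MaxConcurrent
// in-flight calls are allowed for the named resource.
type Rule struct {
	Resource      string
	MaxConcurrent int
}

// ErrBlocked is returned when a rule rejects the call.
var ErrBlocked = errors.New("blocked by rule")

// Guard keeps the rules and the live state of every resource.
type Guard struct {
	mu       sync.Mutex
	rules    map[string]Rule
	inFlight map[string]int
}

func NewGuard() *Guard {
	return &Guard{rules: map[string]Rule{}, inFlight: map[string]int{}}
}

// LoadRules replaces all rules, so they can be adjusted at runtime.
func (g *Guard) LoadRules(rules []Rule) {
	g.mu.Lock()
	defer g.mu.Unlock()
	g.rules = make(map[string]Rule, len(rules))
	for _, r := range rules {
		g.rules[r.Resource] = r
	}
}

// Entry checks the rule of the resource against its current state.
// On success it returns an exit function that must be called when
// the guarded code finishes.
func (g *Guard) Entry(resource string) (func(), error) {
	g.mu.Lock()
	defer g.mu.Unlock()
	if r, ok := g.rules[resource]; ok && g.inFlight[resource] >= r.MaxConcurrent {
		return nil, ErrBlocked
	}
	g.inFlight[resource]++
	return func() {
		g.mu.Lock()
		g.inFlight[resource]--
		g.mu.Unlock()
	}, nil
}

func main() {
	g := NewGuard()
	g.LoadRules([]Rule{{Resource: "queryOrder", MaxConcurrent: 1}})

	exit, err := g.Entry("queryOrder")
	fmt.Println("first call passed:", err == nil)

	_, err = g.Entry("queryOrder")
	fmt.Println("second call blocked:", errors.Is(err, ErrBlocked))

	exit() // the first call finishes and frees the slot
	_, err = g.Entry("queryOrder")
	fmt.Println("third call passed:", err == nil)
}
```

Any piece of code wrapped between `Entry` and the returned exit function acts as a resource here; a resource without a rule is never blocked.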

Overall framework

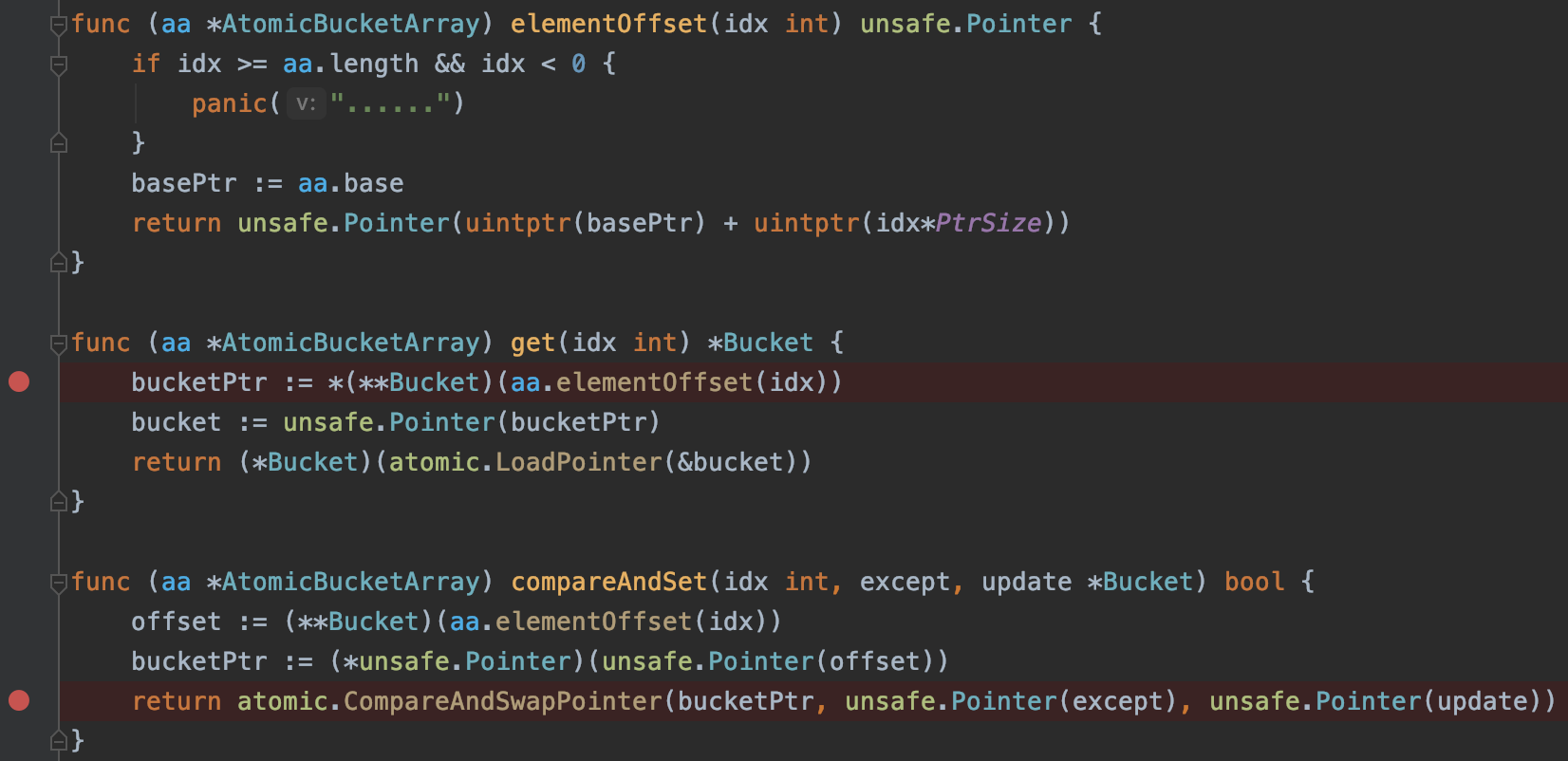

Slot chain: prepare slot / check slot / stat slot

- Resource Prepare Slot
- Check slots, driven by Rules: SystemSlot, CircuitBreakerSlot, FlowSlot, HotParamsSlot
- Stat Slot

Each resource (resource1, resource2, resource3) has its own statNode. A statNode keeps an array of time buckets (0, 1, 2, 3, 4, 5, 6, ......), each holding the metrics of one time span:

| timestamp | passQPS | blockedQPS | completedQPS | errorQPS | rt |
| --- | --- | --- | --- | --- | --- |
| 158242177000 | 1200 | 30 | 1190 | 10 | 30 |
| 158242178000 | 1100 | 30 | 1090 | 10 | 30 |
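The prepare / check / stat pipeline can be sketched as three ordered groups of functions. This is a simplified, single-goroutine illustration under stated assumptions, not Sentinel's implementation: `SlotChain`, `Context`, `Stat` and `newDemoChain` are hypothetical names, and the only check is a made-up rule that blocks once a resource has passed `limit` calls.

```go
package main

import "fmt"

// Context carries per-call data through the chain (hypothetical, simplified).
type Context struct {
	Resource string
	Blocked  bool
	Reason   string
}

// Stat is a simplified stand-in for a resource's statNode.
type Stat struct{ Pass, Blocked int }

type PrepareSlot func(ctx *Context)

// CheckSlot returns a non-empty reason when the call must be blocked.
type CheckSlot func(ctx *Context) string

type StatSlot func(ctx *Context, nodes map[string]*Stat)

type SlotChain struct {
	Prepares []PrepareSlot
	Checks   []CheckSlot
	Stats    []StatSlot
	Nodes    map[string]*Stat
}

// Entry runs the prepare slots, then the check slots (stopping at the
// first one that blocks), and finally the stat slots.
func (c *SlotChain) Entry(resource string) *Context {
	ctx := &Context{Resource: resource}
	for _, prepare := range c.Prepares {
		prepare(ctx)
	}
	for _, check := range c.Checks {
		if reason := check(ctx); reason != "" {
			ctx.Blocked, ctx.Reason = true, reason
			break
		}
	}
	for _, stat := range c.Stats {
		stat(ctx, c.Nodes)
	}
	return ctx
}

// newDemoChain wires one slot of each kind; the check blocks a resource
// once it has already passed `limit` calls.
func newDemoChain(limit int) *SlotChain {
	chain := &SlotChain{Nodes: map[string]*Stat{}}
	chain.Prepares = []PrepareSlot{func(ctx *Context) {
		if chain.Nodes[ctx.Resource] == nil {
			chain.Nodes[ctx.Resource] = &Stat{}
		}
	}}
	chain.Checks = []CheckSlot{func(ctx *Context) string {
		if chain.Nodes[ctx.Resource].Pass >= limit {
			return "flow threshold exceeded"
		}
		return ""
	}}
	chain.Stats = []StatSlot{func(ctx *Context, nodes map[string]*Stat) {
		if ctx.Blocked {
			nodes[ctx.Resource].Blocked++
		} else {
			nodes[ctx.Resource].Pass++
		}
	}}
	return chain
}

func main() {
	chain := newDemoChain(2)
	for i := 0; i < 3; i++ {
		ctx := chain.Entry("resource1")
		fmt.Println(i, ctx.Blocked, ctx.Reason)
	}
	fmt.Printf("%+v\n", *chain.Nodes["resource1"])
}
```

Keeping the three groups separate means a check slot only reads statistics, while the stat slots record the outcome after all checks have run.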


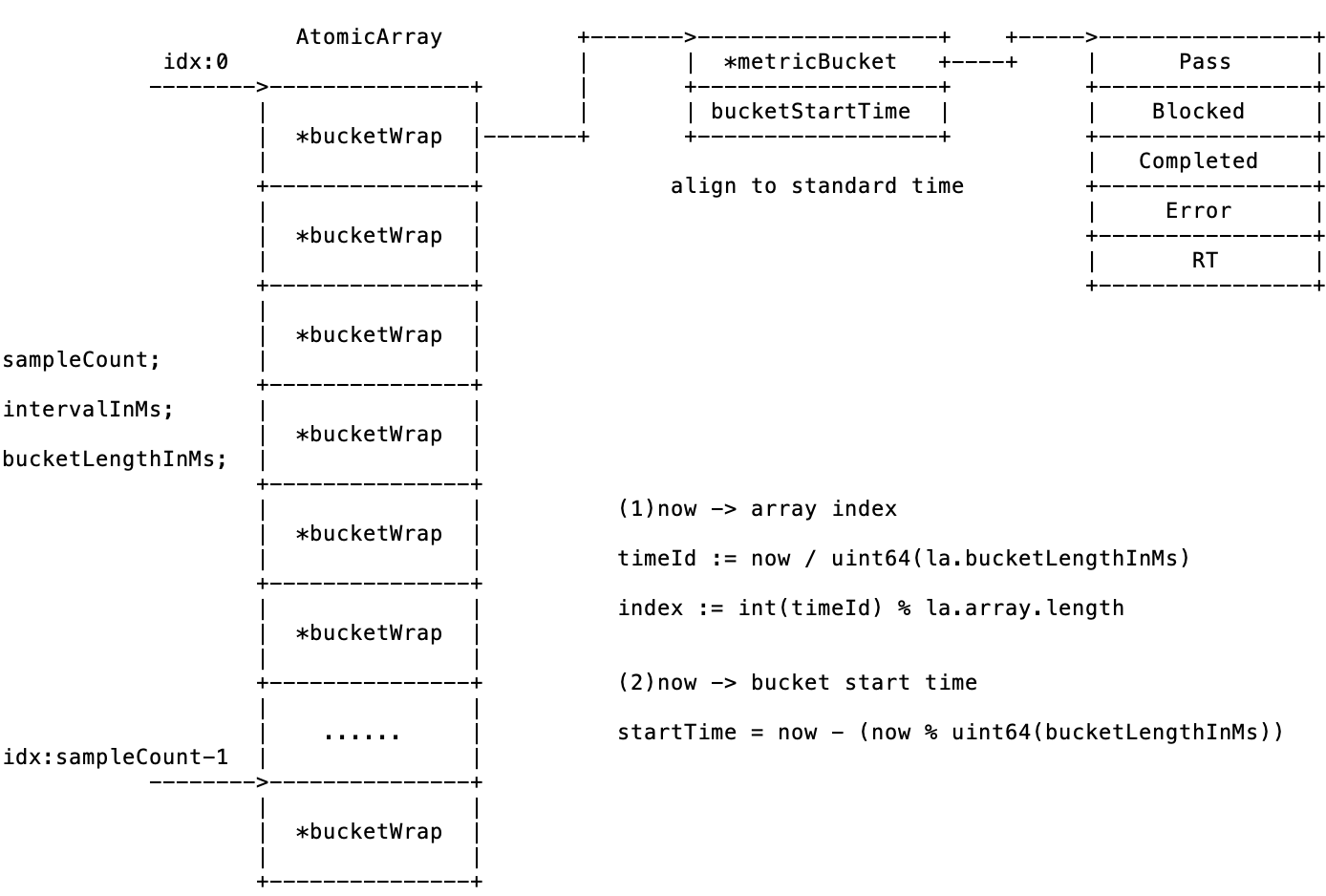

Sliding window

Customize the bucket type to reuse the sliding window implementation

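    // Requires the packages errors, runtime and sync/atomic.
    // currentBucketOfTime returns the bucket that covers the timestamp now,
    // creating or resetting it when needed.
    func (la *LeapArray) currentBucketOfTime(now uint64, bg BucketGenerator) (*BucketWrap, error) {
        idx := la.calculateTimeIdx(now)
        bucketStart := calculateStartTime(now, la.bucketLengthInMs)
        // Spin until the BucketWrap for the current time is obtained.
        for {
            old := la.array.get(idx)
            if old == nil {
                // Empty slot: create a new BucketWrap (its full construction is
                // elided on the slide) and publish it with a CAS.
                newWrap := &BucketWrap{BucketStart: bucketStart}
                if la.array.compareAndSet(idx, nil, newWrap) {
                    return newWrap, nil
                }
                // Another goroutine won the CAS; yield and retry.
                runtime.Gosched()
            } else if bucketStart == atomic.LoadUint64(&old.BucketStart) {
                // The bucket is up to date.
                return old, nil
            } else if bucketStart > atomic.LoadUint64(&old.BucketStart) {
                // The bucket is stale: reset it under the update lock.
                if la.updateLock.TryLock() {
                    old = bg.ResetBucketTo(old, bucketStart)
                    la.updateLock.Unlock()
                    return old, nil
                }
                runtime.Gosched()
            } else if bucketStart < atomic.LoadUint64(&old.BucketStart) {
                // Reserved for special cases (e.g. when occupying "future" buckets).
                return nil, errors.New("provided time is behind the bucket start time")
            }
        }
    }

Use atomic operations to reduce lock granularity.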
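For readers who want to run the idea end to end, here is a minimal sliding window counter. It is a sketch under simplifying assumptions: it uses a plain mutex instead of the CAS and TryLock scheme of the method above, timestamps are assumed to be non-decreasing, and `SlidingWindow`, `Add` and `Sum` are hypothetical names.

```go
package main

import (
	"fmt"
	"sync"
)

// bucket holds the counter of one time span.
type bucket struct {
	start uint64 // start time of the span in milliseconds
	count int64
}

// SlidingWindow counts events over the last len(buckets)*bucketMs
// milliseconds. bucketMs is assumed to be positive.
type SlidingWindow struct {
	mu       sync.Mutex
	bucketMs uint64
	buckets  []bucket
}

func NewSlidingWindow(sampleCount int, bucketMs uint64) *SlidingWindow {
	return &SlidingWindow{bucketMs: bucketMs, buckets: make([]bucket, sampleCount)}
}

// current returns the bucket that covers now, resetting it first when
// it still holds data from an earlier lap. The caller must hold mu.
func (w *SlidingWindow) current(now uint64) *bucket {
	idx := (now / w.bucketMs) % uint64(len(w.buckets))
	start := now - now%w.bucketMs
	b := &w.buckets[idx]
	if b.start != start {
		b.start, b.count = start, 0
	}
	return b
}

// Add records n events at time now.
func (w *SlidingWindow) Add(now uint64, n int64) {
	w.mu.Lock()
	defer w.mu.Unlock()
	w.current(now).count += n
}

// Sum returns the number of events in buckets that are still inside
// the window ending at now.
func (w *SlidingWindow) Sum(now uint64) int64 {
	w.mu.Lock()
	defer w.mu.Unlock()
	windowMs := w.bucketMs * uint64(len(w.buckets))
	var total int64
	for i := range w.buckets {
		// A bucket newer than now underflows to a huge value and is skipped.
		if now-w.buckets[i].start < windowMs {
			total += w.buckets[i].count
		}
	}
	return total
}

func main() {
	w := NewSlidingWindow(2, 500) // two buckets of 500 ms: a 1 s window
	w.Add(100, 3)
	w.Add(600, 2)
	fmt.Println(w.Sum(700))  // both buckets are inside the window
	fmt.Println(w.Sum(1100)) // the first bucket has expired
}
```

The array is reused in a ring: a bucket is only reset when a newer time span maps to the same index, which is what makes the window "slide" without allocating.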

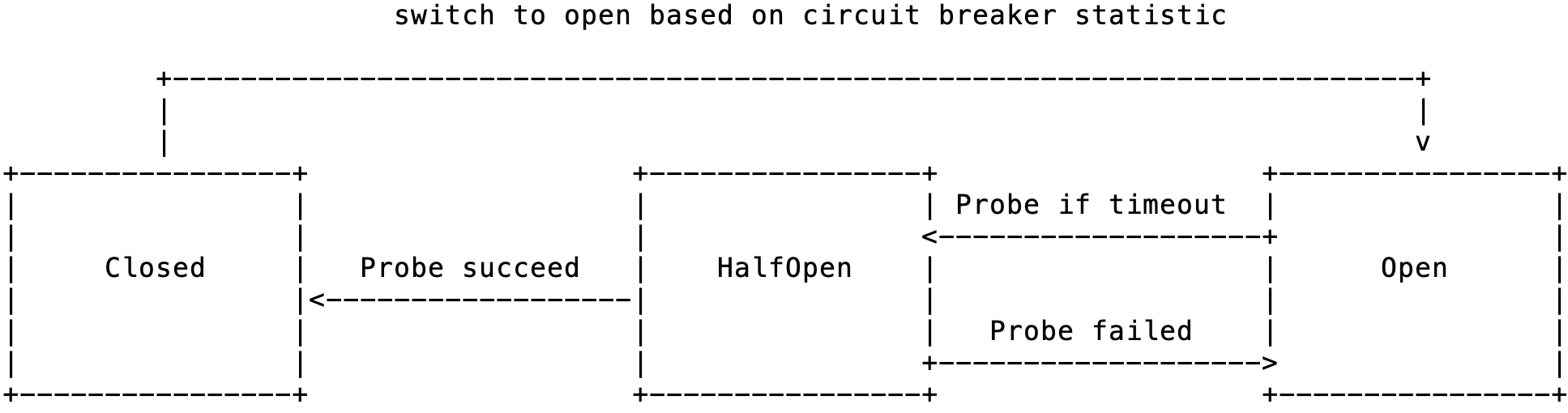

Circuit breaking

Strategy

- slow request ratio

- error ratio

- error count

Resource CB Map: each resource (resource1, resource2, resource3) maps to its own slice of circuit breakers (cb1, cb2, ......).

Entry

- get the resource's cb slice
- try pass based on state
- probe if timeout
- return TokenResult

Load the newer Rules: statistics equivalent

Exit

handle request completion:

1. update statistic
2. update cb state machine
3. notify state change: OnTransformToClosed, OnTransformToOpen, OnTransformToHalfOpen

Statistic of CB: every circuit breaker (cb1, cb2, cb3) keeps its own stat.

Circuit breaking based on a state machine
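A state-machine circuit breaker with the error count strategy can be sketched as follows. This is an illustrative, single-goroutine model rather than Sentinel's code: `Breaker`, `TryPass`, `OnComplete` and the `onChange` callback are hypothetical names, time is passed in explicitly, and errors are counted since the breaker last closed instead of over a statistics window.

```go
package main

import "fmt"

type State int

const (
	Closed State = iota
	Open
	HalfOpen
)

// Breaker is a hypothetical error-count circuit breaker.
type Breaker struct {
	state          State
	errCount       int
	threshold      int    // errors needed to trip the breaker
	retryTimeoutMs uint64 // how long to stay Open before probing
	nextRetryMs    uint64
	onChange       func(from, to State) // state change listener, may be nil
}

func NewBreaker(threshold int, retryTimeoutMs uint64, onChange func(from, to State)) *Breaker {
	return &Breaker{threshold: threshold, retryTimeoutMs: retryTimeoutMs, onChange: onChange}
}

func (b *Breaker) transition(to State) {
	from := b.state
	b.state = to
	if b.onChange != nil {
		b.onChange(from, to)
	}
}

// TryPass is the entry side: it decides based on the current state.
func (b *Breaker) TryPass(nowMs uint64) bool {
	switch b.state {
	case Closed:
		return true
	case Open:
		if nowMs >= b.nextRetryMs {
			b.transition(HalfOpen)
			return true // let a single probe request through
		}
		return false
	default: // HalfOpen: the probe is still in flight
		return false
	}
}

// OnComplete is the exit side: it updates statistics and the state machine.
func (b *Breaker) OnComplete(nowMs uint64, failed bool) {
	switch b.state {
	case HalfOpen:
		if failed {
			b.nextRetryMs = nowMs + b.retryTimeoutMs
			b.transition(Open)
		} else {
			b.errCount = 0
			b.transition(Closed)
		}
	case Closed:
		if failed {
			b.errCount++
			if b.errCount >= b.threshold {
				b.nextRetryMs = nowMs + b.retryTimeoutMs
				b.transition(Open)
			}
		}
	}
}

func main() {
	b := NewBreaker(2, 1000, func(from, to State) {
		fmt.Println("state change:", from, "->", to)
	})
	b.OnComplete(0, true)
	b.OnComplete(1, true)        // second error trips the breaker
	fmt.Println(b.TryPass(500))  // still Open
	fmt.Println(b.TryPass(1001)) // retry timeout elapsed: probe allowed
	b.OnComplete(1010, false)    // probe succeeded: back to Closed
	fmt.Println(b.TryPass(1011))
}
```

The `onChange` hook plays the role of the state change notification in the exit path; a production breaker would also need synchronization for concurrent callers.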

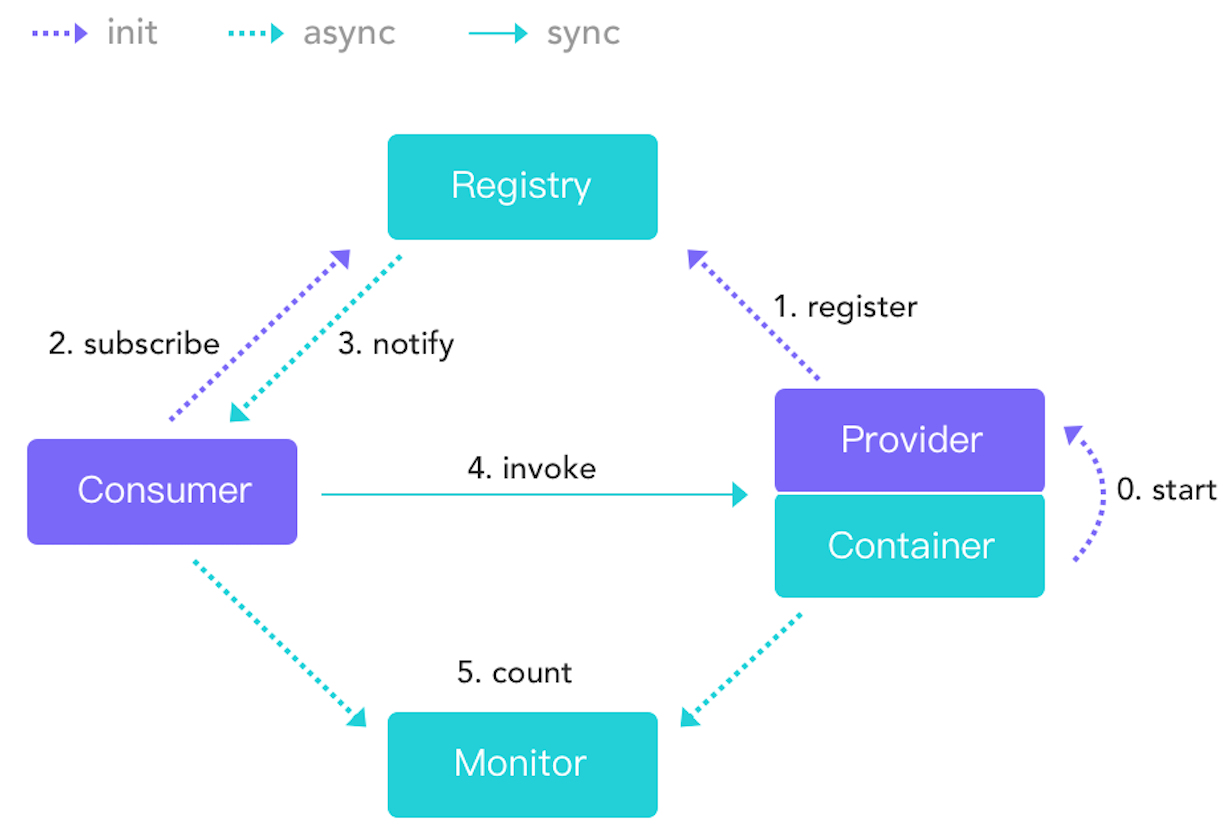

Dynamic datasource

Sentinel is based on rules

- Sentinel Dashboard / Config Center Dashboard / K8s CRD push rules to the Config Center (Etcd / Consul...).
- On every machine (Machine1, Machine2, Machine3), a Sentinel Datasource watches the Config Center and is notified of changes (Watch && Notify).
- The Sentinel Datasource hands the new rules to the Rule Manager (Update Rules).

Datasource scenario

- One datasource->one type of rule
- One datasource->multi types of rule(reuse long connection)
- Interconnected datasources

    // PropertyConverter parses raw bytes from the datasource into a rule object.
    type PropertyConverter func(src []byte) (interface{}, error)

    // PropertyUpdater applies the parsed rules to the matching rule manager.
    type PropertyUpdater func(data interface{}) error

    type DefaultPropertyHandler struct {
        // lastUpdateProperty caches the most recently handled property.
        lastUpdateProperty interface{}
        converter          PropertyConverter
        updater            PropertyUpdater
    }

    func (h *DefaultPropertyHandler) Handle(src []byte) error {
        defer func() {
            if err := recover(); err != nil && logger != nil {
                logger.Panicf(......)
            }
        }()
        realProperty, err := h.converter(src)
        if err != nil {
            return err
        }
        // Skip the update when the property has not changed.
        isConsistent := h.isPropertyConsistent(realProperty)
        if isConsistent {
            return nil
        }
        return h.updater(realProperty)
    }

- Flexible parsing of business-defined protocols
- First-level cache for rules
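The converter / updater split and the rule cache can be tried out with a self-contained sketch. It assumes a JSON payload and a made-up `FlowRule` type; `Handler` and `parseFlowRules` are hypothetical names, and unlike the handler on the slide this version only refreshes its cache after a successful update.

```go
package main

import (
	"encoding/json"
	"fmt"
	"reflect"
)

// FlowRule is a hypothetical rule type used only for this illustration.
type FlowRule struct {
	Resource  string  `json:"resource"`
	Threshold float64 `json:"threshold"`
}

// Handler converts raw datasource bytes into rules and pushes them to
// an updater, skipping payloads equal to the last applied one.
type Handler struct {
	last    interface{} // first-level cache of the last applied property
	convert func(src []byte) (interface{}, error)
	update  func(data interface{}) error
}

func (h *Handler) Handle(src []byte) error {
	prop, err := h.convert(src)
	if err != nil {
		return err
	}
	if reflect.DeepEqual(h.last, prop) {
		return nil // nothing changed, no need to reload the rules
	}
	if err := h.update(prop); err != nil {
		return err
	}
	h.last = prop
	return nil
}

// parseFlowRules is one possible converter: JSON array -> []FlowRule.
func parseFlowRules(src []byte) (interface{}, error) {
	var rules []FlowRule
	if err := json.Unmarshal(src, &rules); err != nil {
		return nil, err
	}
	return rules, nil
}

func main() {
	h := &Handler{
		convert: parseFlowRules,
		update: func(data interface{}) error {
			fmt.Printf("rules updated: %+v\n", data)
			return nil
		},
	}
	payload := []byte(`[{"resource":"queryOrder","threshold":100}]`)
	fmt.Println(h.Handle(payload)) // applies the rules
	fmt.Println(h.Handle(payload)) // identical payload: update skipped
	fmt.Println(h.Handle([]byte("not json")) != nil)
}
```

Because the converter is injected, the same handler can serve any wire format, and the cache keeps repeated notifications from the config center from reloading identical rules.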

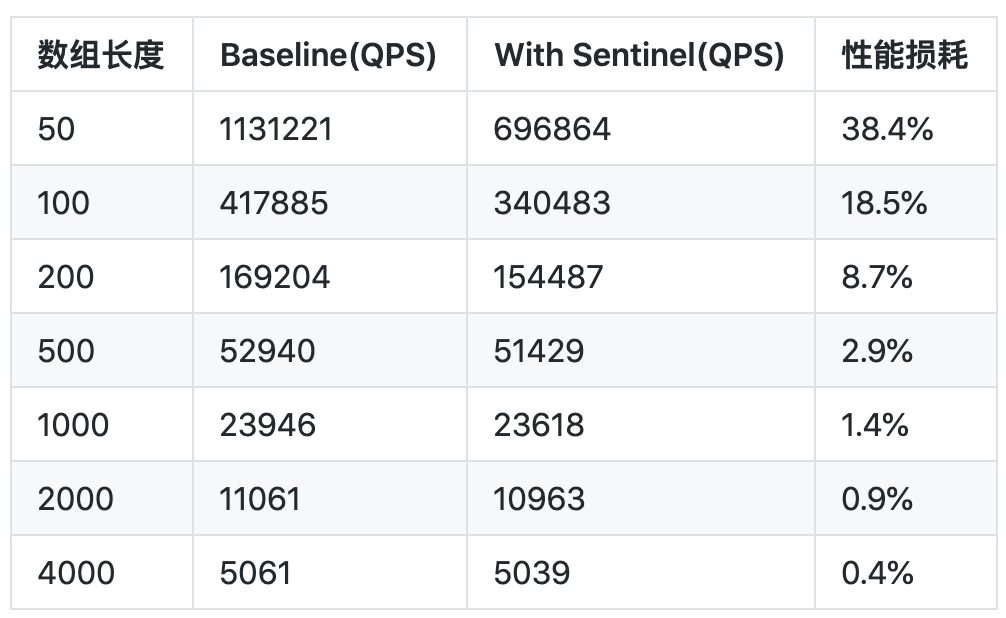

Benchmark

CPU:Intel(R) Xeon(R) CPU E5-2640 v3 @ 2.60GHz (32 Cores)

OS:Red Hat 4.8.2-16

golang version: 1.14.3

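The benchmark compares throughput with and without Sentinel, both for a single goroutine and under concurrency. A CPU-intensive operation (sorting an array) simulates workloads at different QPS levels:

Single-goroutine performance at different base QPS

1) When single-machine QPS is very high (160,000+), the overhead introduced by Sentinel is relatively large. In this case the business logic itself takes very little time, while Sentinel's statistics and checks take a certain amount of time. Cache reads are a common example.

2) When single-machine QPS is below 60,000, Sentinel's overhead is fairly small, which suits most scenarios.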

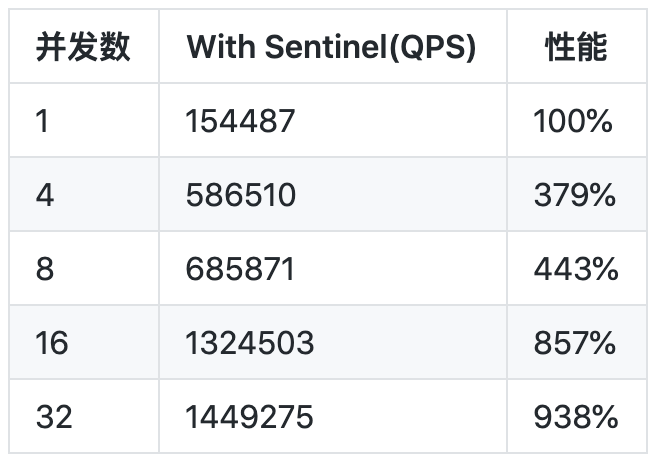

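With an array length of 200 (base QPS: 169204), performance at concurrency levels of 4, 8, 16, 32, and above 32:

Memory overhead: looping over 6000 resources (an extreme case for a single machine; the default limit is currently 6000 resources)

- A single goroutine looping continuously: about 30 MB of memory
- 8 goroutines looping concurrently: about 36 MB of memory

Lightweight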


Data Race

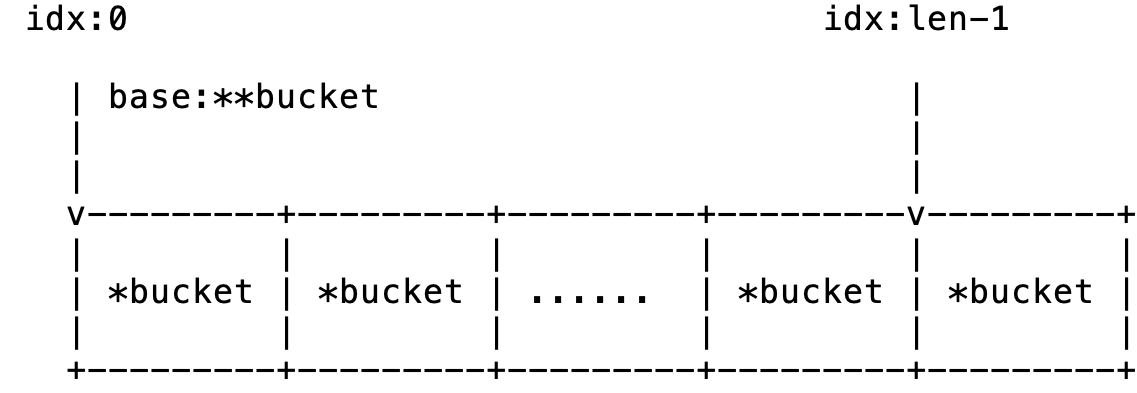

- The sliding window is implemented with an atomic array (AtomicBucketArray)
- Concurrency-safe access to *bucket

    type AtomicBucketArray struct {
        // The base address of the real data array
        base unsafe.Pointer
        // The length of the slice (array)
        // Read-only
        length int
        data   []*Bucket
    }

    // Derive the base address from the slice header of data.
    sliHeader := (*util.SliceHeader)(unsafe.Pointer(&a.data))
    a.base = unsafe.Pointer((**Bucket)(sliHeader.Data))

WHERE?

WHY?

A data race is undefined behavior.

- atomic.Load and atomic.Store must be used in pairs
- Be careful with atomic variables, especially atomic pointers
- Always use unsafe.Pointer instead of uintptr, except for debugging
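The first rule, pairing every atomic write with an atomic read, can be shown with a small bucket type. This is a sketch with hypothetical names (`Bucket`, `Reset`, `AddPass`, `countConcurrently`); the point is that no code path touches the fields without going through `sync/atomic`.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// Bucket is a hypothetical statistics bucket. Both fields are only
// ever accessed through sync/atomic, for reads as well as writes.
type Bucket struct {
	start uint64
	pass  int64
}

// Reset prepares the bucket for a new time span.
func (b *Bucket) Reset(start uint64) {
	atomic.StoreInt64(&b.pass, 0)
	atomic.StoreUint64(&b.start, start)
}

func (b *Bucket) AddPass(n int64) { atomic.AddInt64(&b.pass, n) }

// Start and Pass use atomic loads to pair with the atomic stores above.
func (b *Bucket) Start() uint64 { return atomic.LoadUint64(&b.start) }
func (b *Bucket) Pass() int64   { return atomic.LoadInt64(&b.pass) }

// countConcurrently lets several goroutines update the same bucket and
// returns the final counter.
func countConcurrently(b *Bucket, goroutines, each int) int64 {
	var wg sync.WaitGroup
	for i := 0; i < goroutines; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for j := 0; j < each; j++ {
				b.AddPass(1)
			}
		}()
	}
	wg.Wait()
	return b.Pass()
}

func main() {
	b := &Bucket{}
	b.Reset(1000)
	fmt.Println(countConcurrently(b, 8, 1000), b.Start())
}
```

Running a program like this with `go run -race` is a practical way to confirm that no plain read or write slipped in next to the atomic ones.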

Case Study:

Object Pool Recycle && Data Race

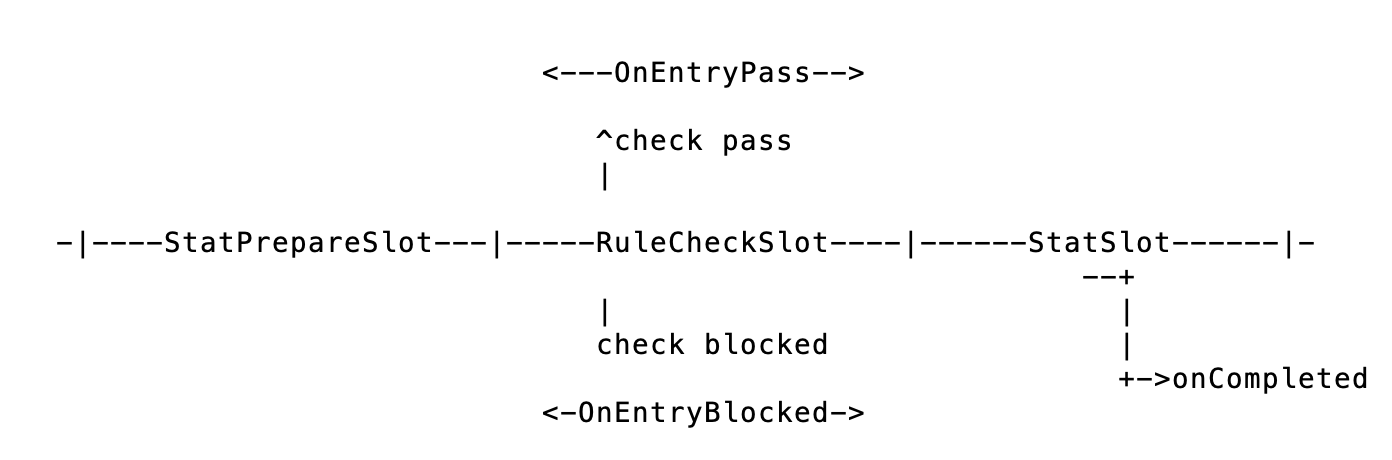

Sentinel core slot chain pipeline

type RuleCheckSlot interface {

// Check function do some validation

// It can break off the slot pipeline

// Each TokenResult will return check result

Check(ctx *EntryContext) *TokenResult

}

type TrafficShapingChecker interface {

DoCheck(node base.StatNode, acquireCount uint32, threshold float64) *base.TokenResult

}

type CircuitBreaker interface {

Check(ctx *base.EntryContext) *base.TokenResult

}Sentinel's check is based on rules

The number of temporary variables, TokenResult,

is very large.

So Pool

exit

Object Pool && Data Race

Let's reproduce the scenario:

    type EntryContext struct {
        ....
        // RuleCheckResult represents the result of
        // the Entry slot chain
        RuleCheckResult *TokenResult
        ....
    }

Troubleshooting:

- Concurrent reads/writes without atomics?
- Is the Context recycled more than once?
- Is the TokenResult recycled more than once?
- Is the TokenResult reused after being put back?

Lessons from the case study:

- Manually initialize the object obtained from the Pool.
- Reset the object before Put.
- Think about the lifecycle of objects in the Pool.
- Avoid assigning the pointer of a reused object to another variable. (DON'T USE AN OBJECT AFTER PUTTING IT BACK)
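These pooling rules can be sketched with `sync.Pool` from the standard library. The `TokenResult` type below is a simplified stand-in, not Sentinel's type, and `acquire`, `release` and `check` are hypothetical helpers.

```go
package main

import (
	"fmt"
	"sync"
)

// TokenResult is a simplified, hypothetical check result.
type TokenResult struct {
	Blocked bool
	Msg     string
}

func (r *TokenResult) reset() { r.Blocked, r.Msg = false, "" }

var resultPool = sync.Pool{
	New: func() interface{} { return new(TokenResult) },
}

// acquire initializes the pooled object instead of trusting its state.
func acquire() *TokenResult {
	r := resultPool.Get().(*TokenResult)
	r.reset()
	return r
}

// release resets the object before it goes back to the pool.
func release(r *TokenResult) {
	r.reset()
	resultPool.Put(r)
}

// check copies the values it needs out of the pooled object and only
// then releases it, so no pointer to the object escapes after Put.
func check(resource string, limit, current int) (blocked bool, msg string) {
	r := acquire()
	if current >= limit {
		r.Blocked = true
		r.Msg = "blocked: " + resource
	}
	blocked, msg = r.Blocked, r.Msg
	release(r) // r must not be used after this line
	return blocked, msg
}

func main() {
	fmt.Println(check("queryOrder", 1, 1))
	fmt.Println(check("queryOrder", 1, 0))
}
```

Returning values instead of the pooled pointer keeps the object's lifecycle inside one function, which removes the chance of another goroutine seeing it after it has been handed out again.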


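Flow control and degradation

RPC architecture

RPC Provider

- Rate limiting in QPS mode

RPC Consumer

- Limit the number of concurrent calls
- Circuit breaking and degradation for downstream services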
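As an illustration of the provider side only, the sketch below wraps a handler with a QPS limit. It is a simplified fixed one-second window, not Sentinel's sliding window, and `QPSLimiter`, `Protect` and `ErrTooManyRequests` are hypothetical names; the clock is injected so the behavior can be checked deterministically.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
	"time"
)

// ErrTooManyRequests is returned when the provider rejects a call.
var ErrTooManyRequests = errors.New("too many requests")

// QPSLimiter allows at most limit calls per one-second window.
type QPSLimiter struct {
	mu        sync.Mutex
	limit     int
	windowSec int64 // the second the current window belongs to
	count     int
}

func NewQPSLimiter(limit int) *QPSLimiter { return &QPSLimiter{limit: limit} }

// Allow reports whether a call arriving at nowSec (Unix seconds) may pass.
func (l *QPSLimiter) Allow(nowSec int64) bool {
	l.mu.Lock()
	defer l.mu.Unlock()
	if nowSec != l.windowSec {
		l.windowSec, l.count = nowSec, 0 // a new second starts a new window
	}
	if l.count >= l.limit {
		return false
	}
	l.count++
	return true
}

// Protect wraps a provider-side handler with the limiter.
func Protect(l *QPSLimiter, clock func() int64, handler func(string) (string, error)) func(string) (string, error) {
	return func(req string) (string, error) {
		if !l.Allow(clock()) {
			return "", ErrTooManyRequests
		}
		return handler(req)
	}
}

func main() {
	limiter := NewQPSLimiter(2)
	clock := func() int64 { return time.Now().Unix() }
	echo := Protect(limiter, clock, func(req string) (string, error) {
		return "echo:" + req, nil
	})
	for i := 0; i < 3; i++ {
		resp, err := echo("ping")
		fmt.Println(resp, err)
	}
}
```

On the consumer side the same wrapping pattern applies, with a concurrency cap or a circuit breaker in place of the QPS check and a fallback result in place of the error.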


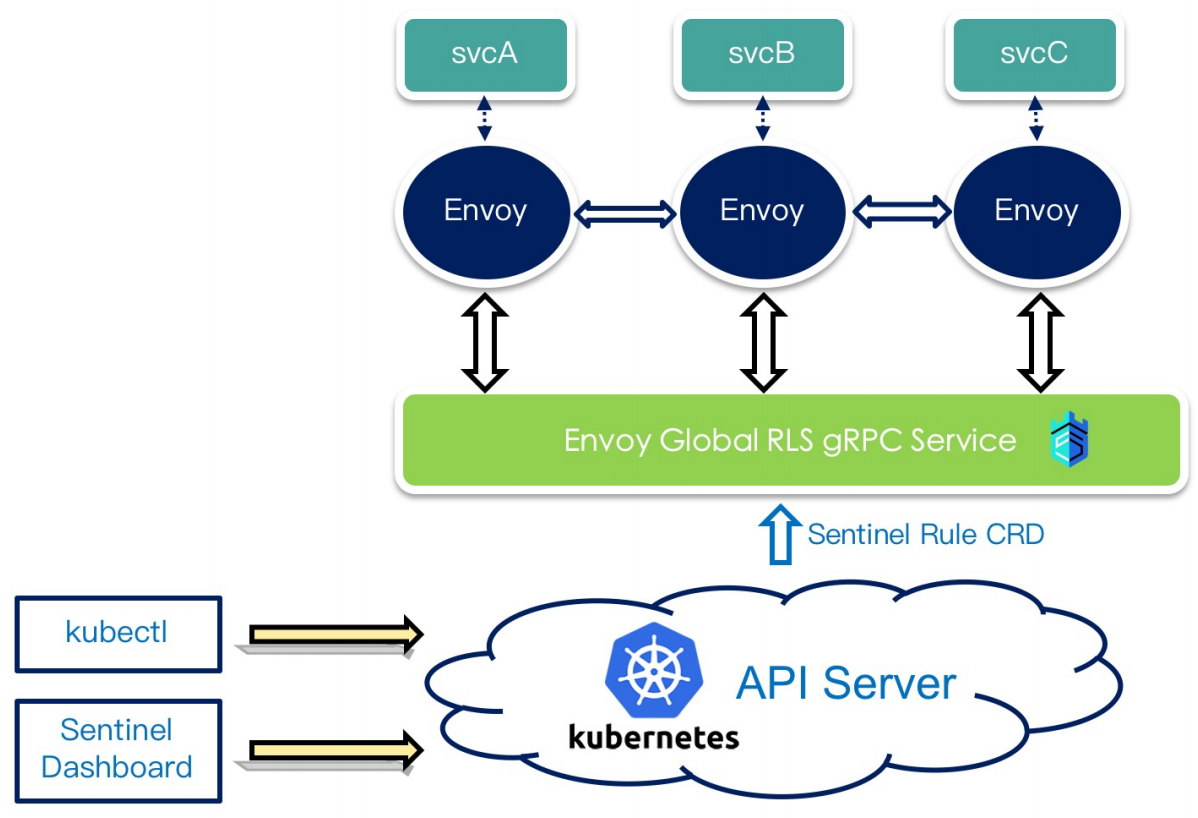

- K8s CRD as dynamic datasource

- Istio Proxy(WASM?)

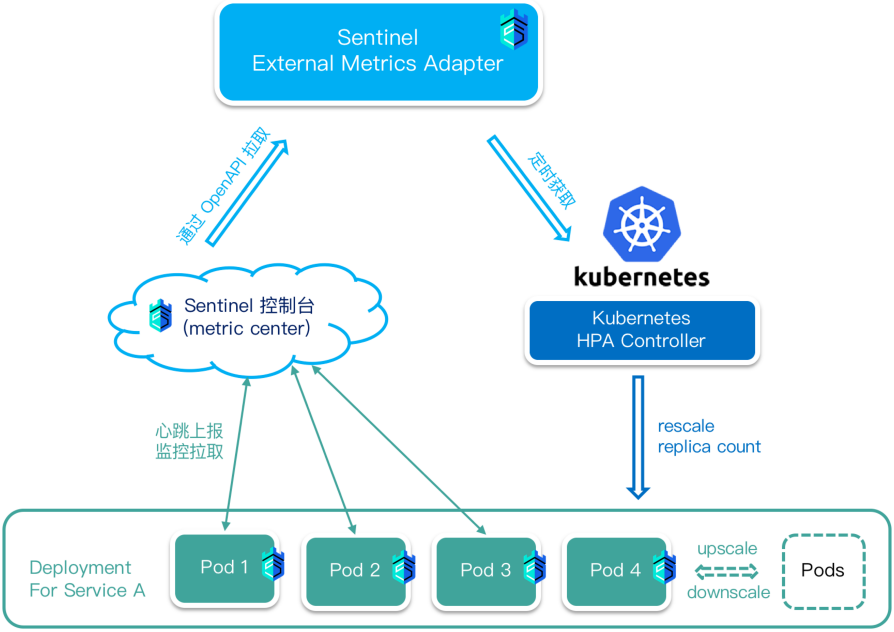

- HPA(Horizontal Pod Autoscaler)

- Cluster flow control

- ......

Cloud native

K8S CRD Datasource

K8S HPA autoscaling

Autoscaling metrics:

- Application pass QPS / blocked QPS / total QPS
- Application average response time
- Combined with CPU usage / load metrics

Autoscale instead of flow control?
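To see how a QPS metric could drive scaling, the sketch below applies the replica formula documented for the Kubernetes Horizontal Pod Autoscaler, desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue). Feeding it a per-pod QPS figure reported by Sentinel is an assumption made here for illustration; the function name is hypothetical and the autoscaler's tolerance and stabilization behavior are left out.

```go
package main

import (
	"fmt"
	"math"
)

// desiredReplicas applies the HPA scaling formula to a per-pod metric.
// currentMetric could be, for example, the average total QPS per pod
// and targetMetric the QPS one pod is expected to handle.
func desiredReplicas(currentReplicas int, currentMetric, targetMetric float64) int {
	if currentReplicas <= 0 || targetMetric <= 0 {
		return currentReplicas // nothing sensible to compute
	}
	return int(math.Ceil(float64(currentReplicas) * currentMetric / targetMetric))
}

func main() {
	// 4 pods each seeing 1500 QPS against a target of 1000 QPS per pod.
	fmt.Println(desiredReplicas(4, 1500, 1000))
	// 3 pods each seeing 500 QPS against the same target.
	fmt.Println(desiredReplicas(3, 500, 1000))
}
```

Blocked QPS is a useful input for the same reason: sustained blocking means flow control is shedding load that additional replicas could serve.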

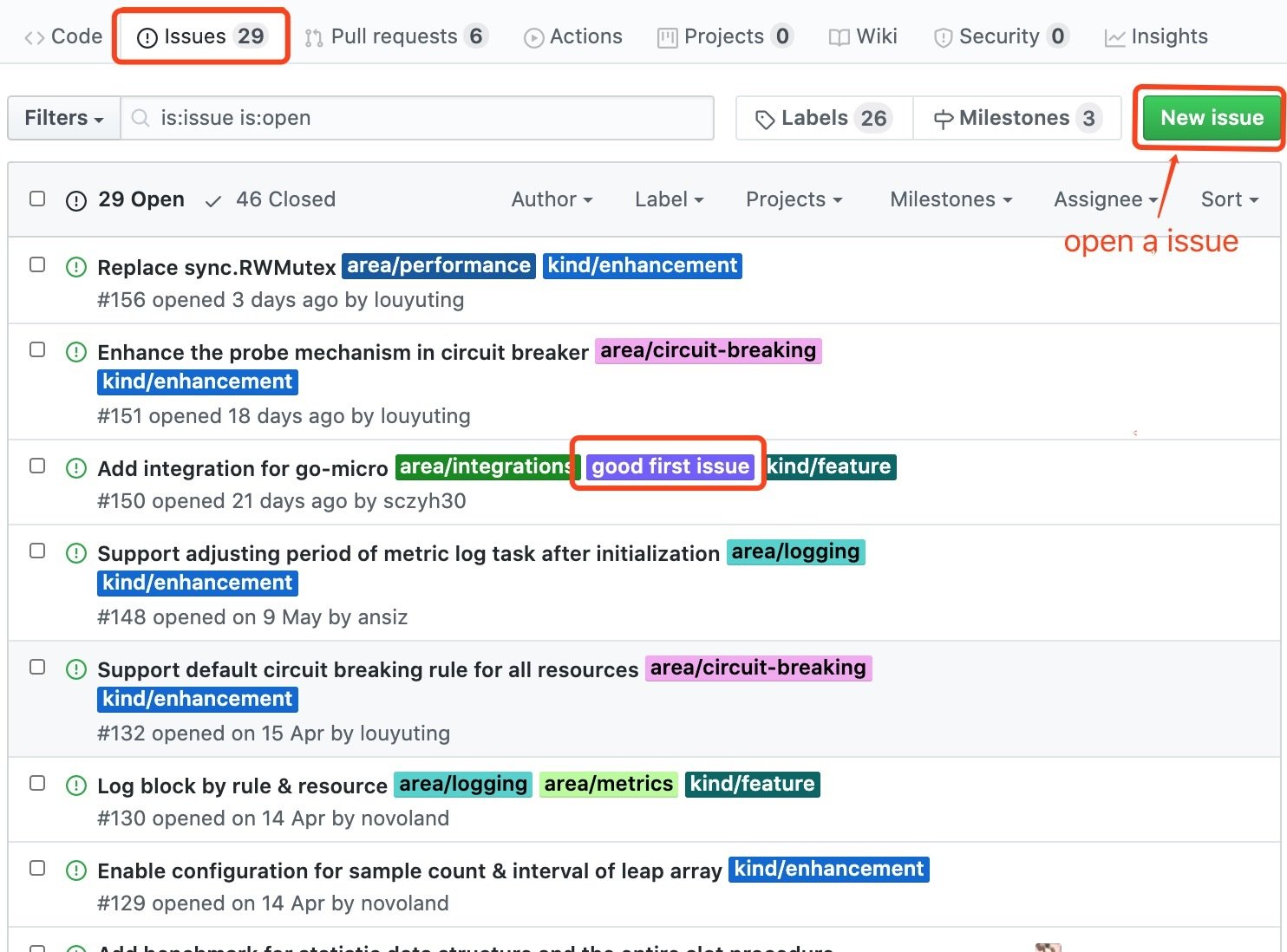

From Zero to Contributor

Ways to contribute

- Pick an issue on GitHub
- Join the core contributor group and claim issues

Incentives:

- Technical growth
- Core contributors can become committers

Contact Us