Diffusion Policies

Towards Foundation Models for Control(?)

Russ Tedrake

Workshop on Control and Machine Learning

October 11, 2023

"What's still hard for AI" by Kai-Fu Lee:

- Manual dexterity

- Social intelligence (empathy/compassion)

"Dexterous Manipulation" Team

(founded in 2016)

For the next challenge:

Good control when we don't have useful models?

- Rules out:

- Simulation

- Reinforcement learning (in practice)

- State Estimation / Model-based control

- Two natural choices:

- Learn a dynamics model

- Behavior cloning (imitation learning)

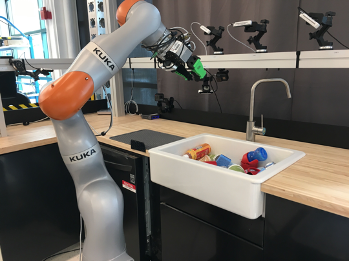

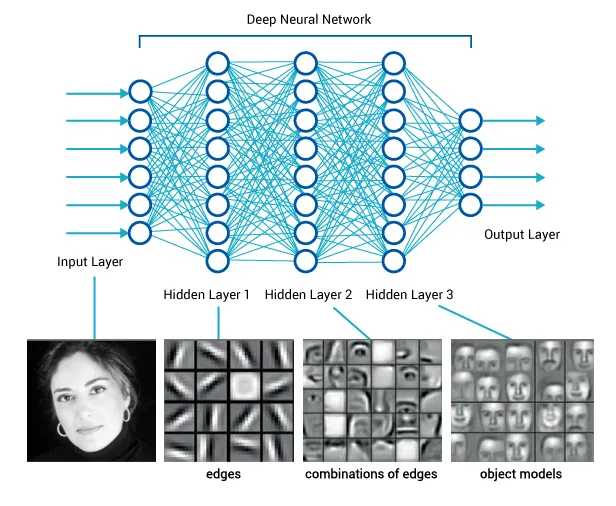

Levine*, Finn*, Darrell, Abbeel, JMLR 2016

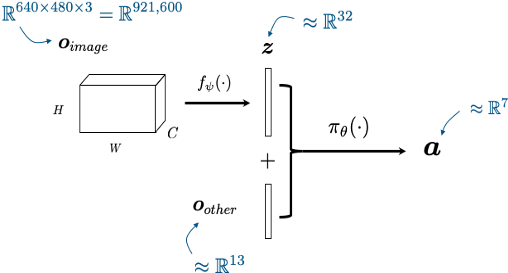

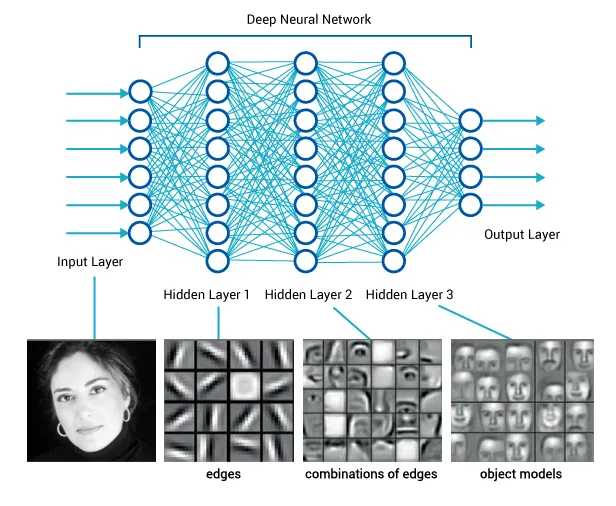

Visuomotor policies

perception network

(often pre-trained)

policy network

other robot sensors

learned state representation

actions

x history

I was forced to reflect on my core beliefs...

- The value of using RGB (at control rates) as a sensor is undeniable. I must not ignore this going forward.

- I don't love imitation learning (decision making \(\gg\) mimicry), but it's an awfully clever way to explore the space of policy representations

- Don't need a model

- Don't need an explicit state representation

- (Not even to specify the objective!)

We've been exploring, and seem to have found something...

Earlier this week...

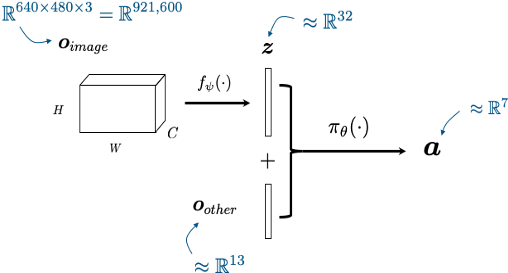

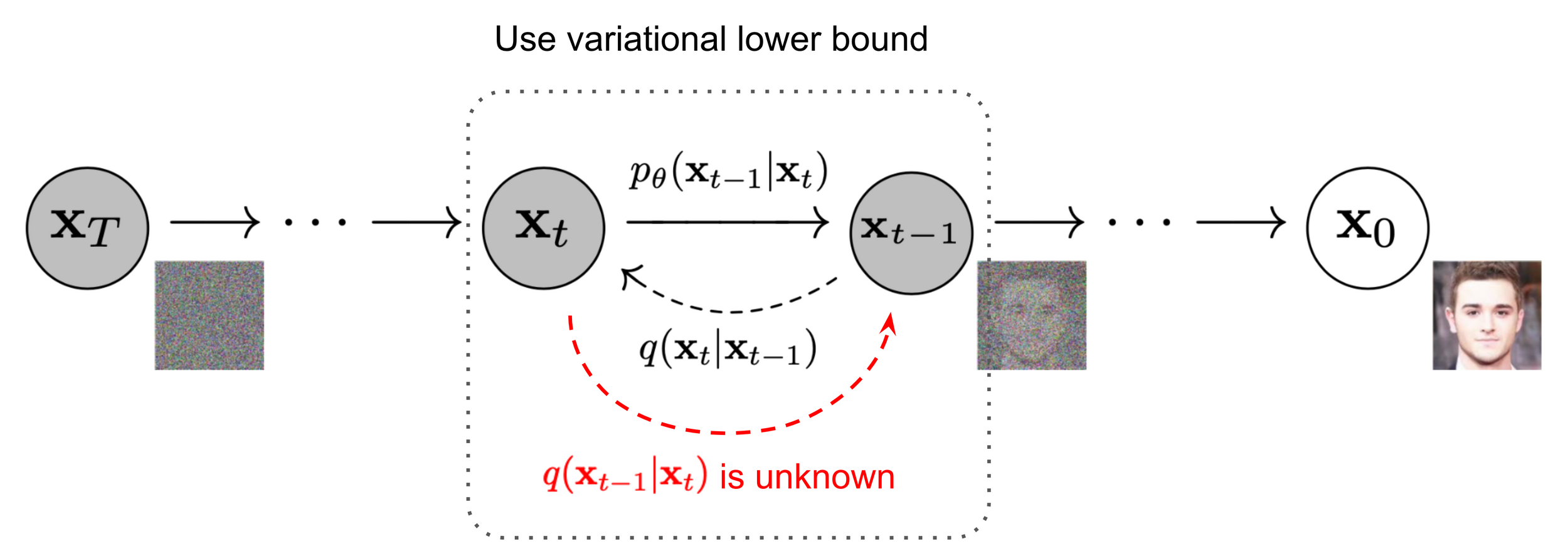

Denoising diffusion models (generative AI)

Image source: Ho et al. 2020

Denoiser can be conditioned on additional inputs, \(u\): \(p_\theta(x_{t-1} | x_t, u) \)
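A minimal sketch of sampling from a conditional denoiser, assuming the standard DDPM ancestral update from Ho et al. 2020 with a linear noise schedule. The names `make_schedule`, `reverse_step`, `sample`, and `point_mass_eps_model` are hypothetical, and the toy noise predictor stands in for a trained network: it is exact only for the degenerate case where every training sample equals the condition \(u\).

```python
import math
import random


def make_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule (an assumption); returns (betas, alphas, alpha_bars)."""
    betas = [beta_start + (beta_end - beta_start) * i / max(num_steps - 1, 1)
             for i in range(num_steps)]
    alphas = [1.0 - b for b in betas]
    alpha_bars, running = [], 1.0
    for a in alphas:
        running *= a
        alpha_bars.append(running)
    return betas, alphas, alpha_bars


def reverse_step(x_t, t, u, eps_model, schedule, rng):
    """One draw from p_theta(x_{t-1} | x_t, u) using a noise predictor."""
    betas, alphas, alpha_bars = schedule
    eps_hat = eps_model(x_t, t, u)  # predicted noise, conditioned on u
    coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
    mean = [(x - coef * e) / math.sqrt(alphas[t]) for x, e in zip(x_t, eps_hat)]
    if t == 0:
        return mean  # no noise is added on the final step
    sigma = math.sqrt(betas[t])
    return [m + sigma * rng.gauss(0.0, 1.0) for m in mean]


def sample(u, eps_model, schedule, seed=0):
    """Start from Gaussian noise and denoise down to t = 0."""
    rng = random.Random(seed)
    num_steps = len(schedule[0])
    x = [rng.gauss(0.0, 1.0) for _ in u]
    for t in reversed(range(num_steps)):
        x = reverse_step(x, t, u, eps_model, schedule, rng)
    return x


def point_mass_eps_model(schedule):
    """Toy stand-in for a trained network: exact when all data equals u."""
    _, _, alpha_bars = schedule

    def eps_model(x_t, t, u):
        scale = math.sqrt(alpha_bars[t])
        noise = math.sqrt(1.0 - alpha_bars[t])
        return [(x - scale * c) / noise for x, c in zip(x_t, u)]

    return eps_model
```

With the toy predictor, `sample([0.5, -2.0], point_mass_eps_model(schedule), schedule)` recovers the condition up to rounding, because the last reverse step cancels the remaining noise exactly. A real policy would replace `eps_model` with a learned network.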

A deterministic interpretation (manifold hypothesis)

Denoising approximates the projection onto the data manifold;

approximating the gradient of the distance to the manifold
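A small numerical check of this picture, assuming the unit circle in the plane as a stand-in data manifold (not something from the talk). For a point \(x\) off the circle, the gradient of half the squared distance is \(x - \mathrm{proj}(x)\), so one full gradient step lands on the projection. All function names here are hypothetical.

```python
import math


def project_to_circle(x):
    """Closest point on the unit circle (undefined at the origin)."""
    norm = math.hypot(x[0], x[1])
    return (x[0] / norm, x[1] / norm)


def half_squared_distance(x):
    """0.5 * dist(x, circle)^2 for the unit circle."""
    return 0.5 * (math.hypot(x[0], x[1]) - 1.0) ** 2


def numerical_gradient(f, x, h=1e-6):
    """Central finite differences, one coordinate at a time."""
    grad = []
    for i in range(len(x)):
        plus, minus = list(x), list(x)
        plus[i] += h
        minus[i] -= h
        grad.append((f(plus) - f(minus)) / (2.0 * h))
    return tuple(grad)


def ideal_denoise(x):
    """One gradient step on the half squared distance to the manifold."""
    grad = numerical_gradient(half_squared_distance, x)
    return tuple(xi - gi for xi, gi in zip(x, grad))
```

For a noisy point such as `(1.3, -0.4)`, `ideal_denoise` and `project_to_circle` agree to within finite-difference error. A learned denoiser only approximates this map, and only near the data.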

Representing dynamic output feedback

input

output

Control Policy

(as a dynamical system)

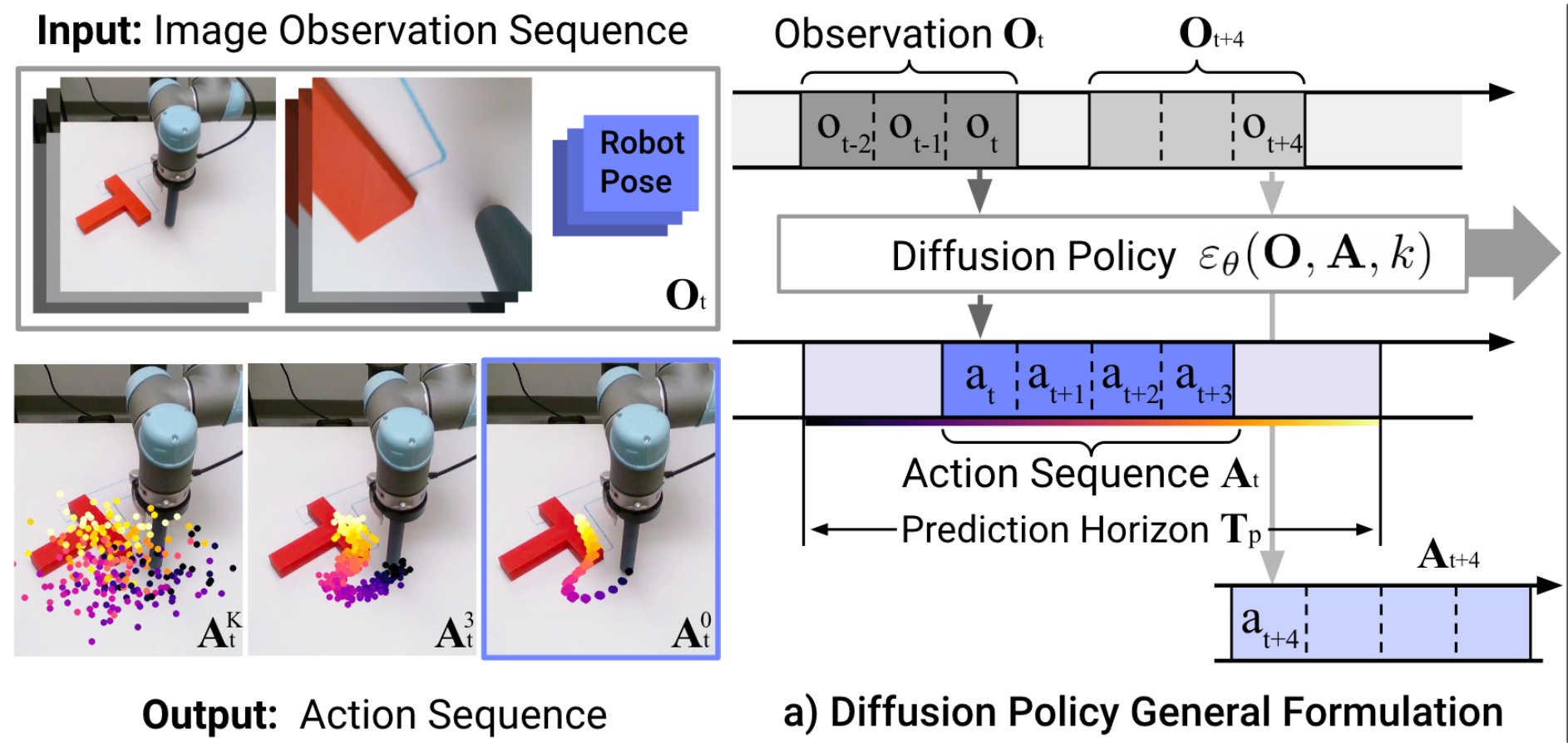

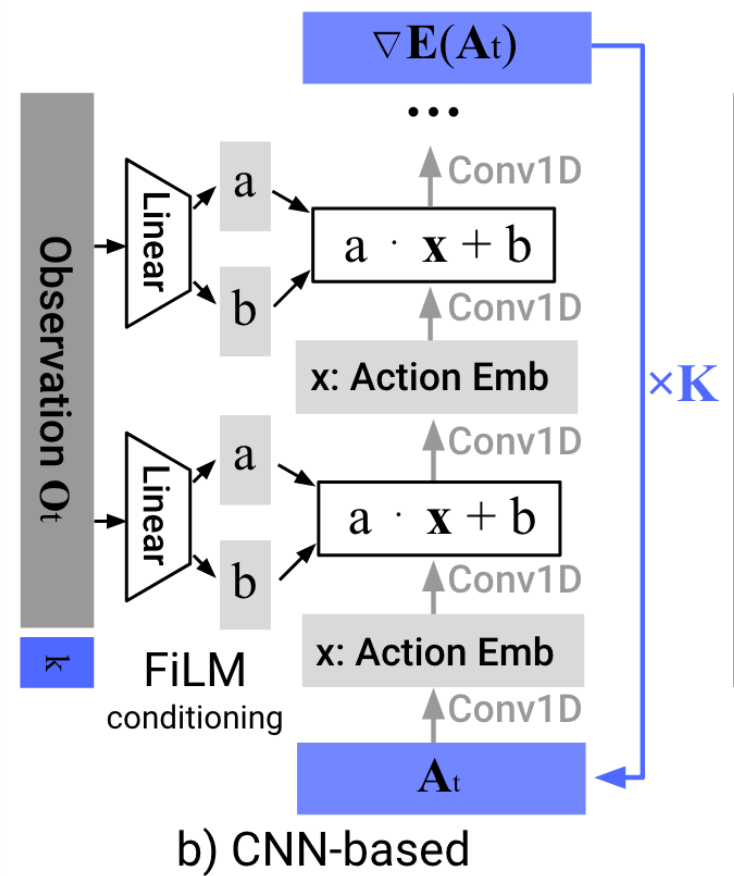

"Diffusion Policy" is an auto-regressive (ARX) model with forecasting

\(H\) is the length of the history,

\(P\) is the length of the prediction

Conditional denoiser produces the forecast, conditional on the history
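A sketch of the control loop this implies: keep the last \(H\) observations, ask the denoiser for a \(P\)-step forecast, and replan when the queued actions run out. The `forecaster` callable stands in for the conditional denoiser. The `execute_len` parameter (how many forecast actions to run before replanning) and the padding of a short history with its oldest observation are assumptions for illustration, not details from the slides.

```python
from collections import deque


class ForecastingPolicy:
    """Maps a length-H observation history to a length-P action forecast."""

    def __init__(self, forecaster, history_len, prediction_len, execute_len):
        if not 1 <= execute_len <= prediction_len:
            raise ValueError("execute_len must be in [1, prediction_len]")
        self.forecaster = forecaster
        self.history_len = history_len        # H
        self.prediction_len = prediction_len  # P
        self.execute_len = execute_len        # assumed replanning interval
        self.history = deque(maxlen=history_len)
        self.pending = deque()

    def step(self, observation):
        """Record one observation and return the next action."""
        self.history.append(observation)
        if not self.pending:
            # Pad a short history by repeating its oldest observation.
            missing = self.history_len - len(self.history)
            window = [self.history[0]] * missing + list(self.history)
            forecast = self.forecaster(window, self.prediction_len)
            if len(forecast) != self.prediction_len:
                raise ValueError("forecaster must return P actions")
            self.pending.extend(forecast[: self.execute_len])
        return self.pending.popleft()
```

Usage: `policy = ForecastingPolicy(forecaster, history_len=3, prediction_len=4, execute_len=2)` followed by `action = policy.step(obs)` once per control tick. The forecaster is only called on ticks where the queue is empty.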

Image backbone: ResNet-18 (pretrained on ImageNet)

Total: 110M-150M Parameters

Training Time: 3-6 GPU Days ($150-$300)

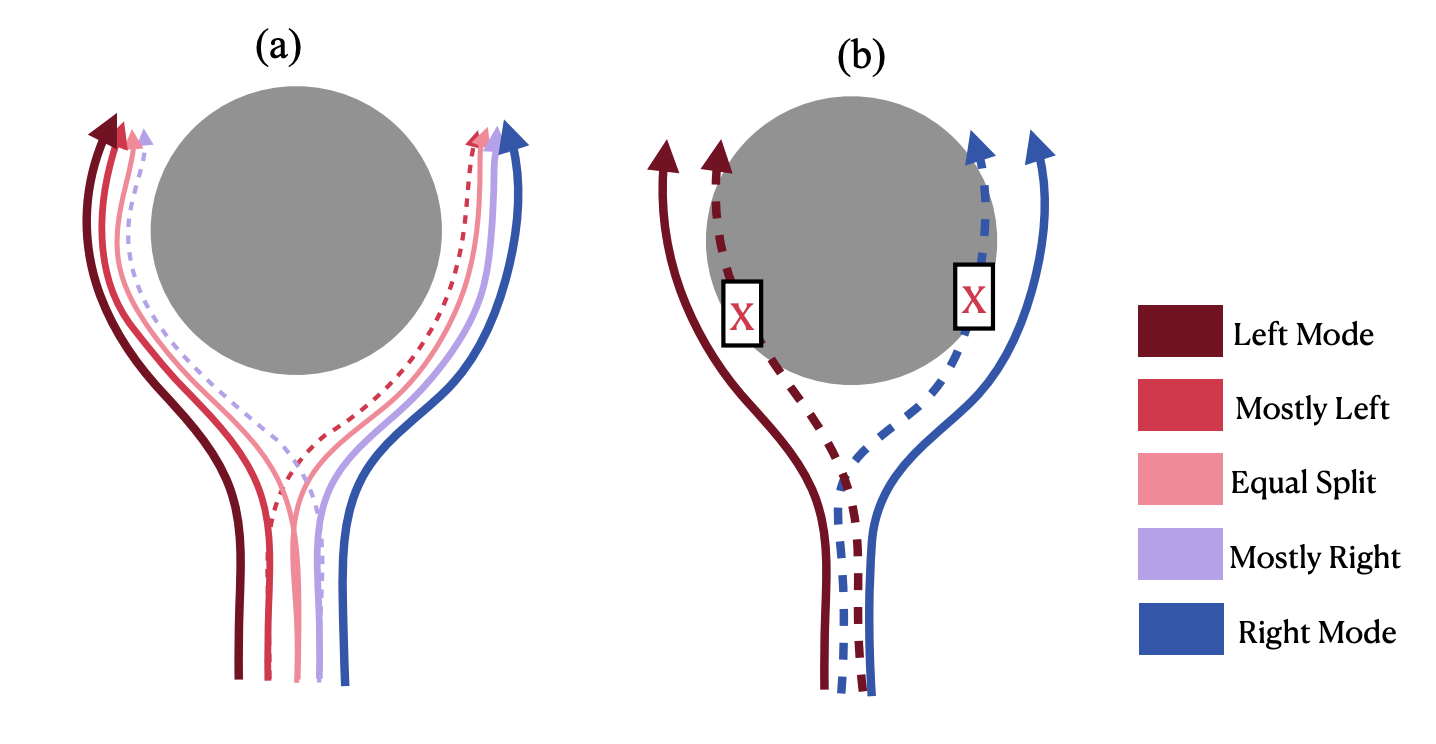

Learns a distribution (score function) over actions

e.g. to deal with "multi-modal demonstrations"
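A toy illustration of why a distribution helps, assuming a made-up scenario where half the demonstrators steer left (action -1) and half steer right (action +1) around an obstacle. A policy that regresses to the mean outputs 0, which no demonstrator chose. Sampling from the action distribution returns one of the demonstrated modes. The empirical sample below is a stand-in for a learned distribution.

```python
import random

# Hypothetical demonstrations: 25 go left (-1.0), 25 go right (+1.0).
demonstrated_actions = [-1.0] * 25 + [1.0] * 25


def mean_action(actions):
    """What a least-squares (unimodal) policy would converge to."""
    return sum(actions) / len(actions)


def sample_action(actions, rng):
    """Draw from the empirical action distribution instead of averaging."""
    return rng.choice(actions)


rng = random.Random(0)
averaged = mean_action(demonstrated_actions)
samples = [sample_action(demonstrated_actions, rng) for _ in range(10)]
```

Here `averaged` sits between the two modes, while every entry of `samples` is a demonstrated action.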

Why (Denoising) Diffusion Models?

- High capacity + great performance

- Small number of demonstrations (typically ~50)

- Multi-modal (non-expert) demonstrations

- Training stability and consistency

- no hyper-parameter tuning

- Generates high-dimensional continuous outputs

- vs categorical distributions (e.g. RT-1, RT-2)

- Action-chunking transformers (ACT)

- Solid mathematical foundations (score functions)

- Reduces nicely to the simple cases (e.g. LQG / Youla)

Enabling technologies

Haptic Teleop Interface

Excellent system identification / robot control

Visuotactile sensing

with TRI's Soft Bubble Gripper

Open source:

Scaling Up

- I've discussed training one skill

- Wanted: few-shot generalization to new skills

- multitask, language-conditioned policies

- connects beautifully to internet-scale data

- Big Questions:

- How do we feed the data flywheel?

- What are the scaling laws?

- I don't see any immediate ceiling

Discussion

I do think there is something deep happening here...

- Manipulation should be easy (from a controls perspective)

- probably low dimensional?? (manifold hypothesis)

- memorization can go a long way

If we really understand this, can we do the same via principles from a model? Or will control go the way of computer vision and language?

Summary

- Dexterous manipulation is still unsolved, but progress is fast

- Visuomotor diffusion policies

- currently via imitation learning from humans

- We need a deeper understanding (e.g. more theory)

- Much of our code is open-source:

pip install drake

sudo apt install drake