Deep Learning

Alexander Lifanov

SpbPython, 2017

Acquired IO

DL != DS

Next frontend (hype)

Examples

Automatic colorization

Add sounds to silent videos

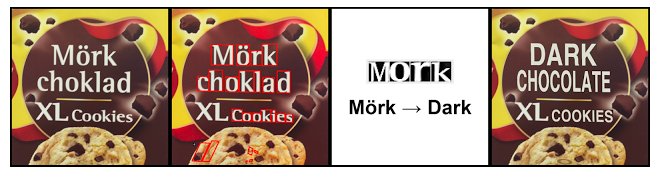

Machine translation

Object detection

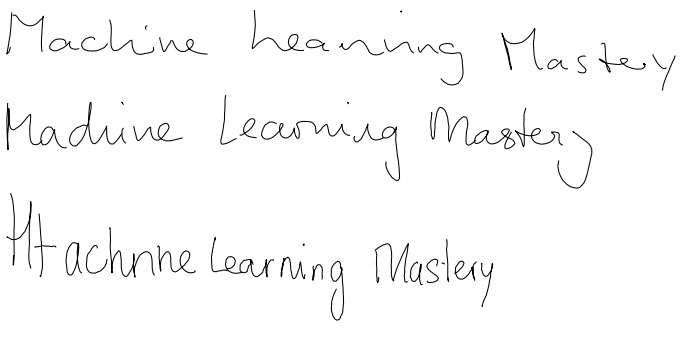

Handwriting generation

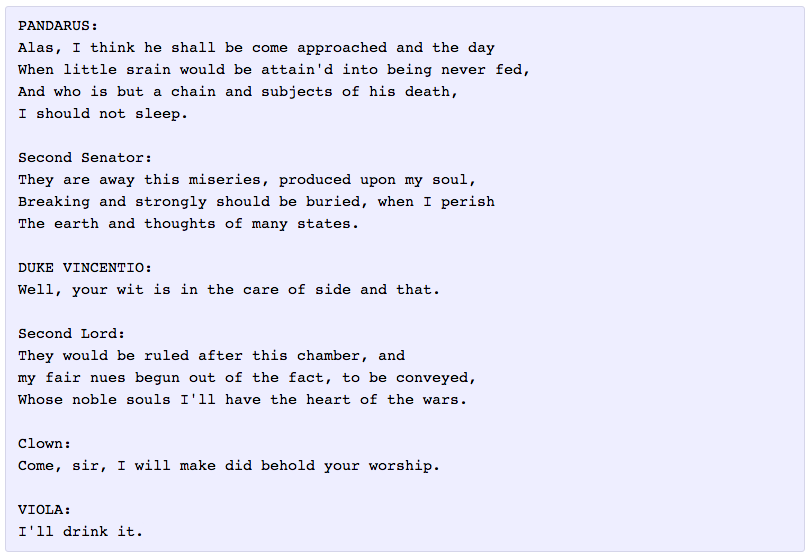

Text generation

Image captioning

Game playing

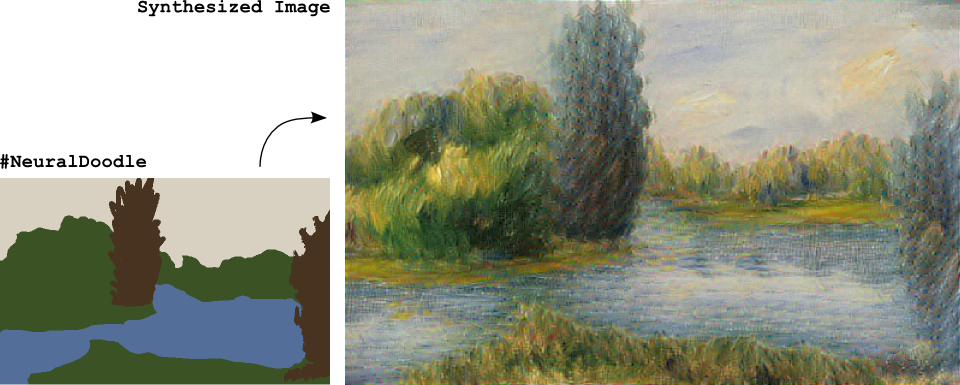

Style transfer

Machine learning

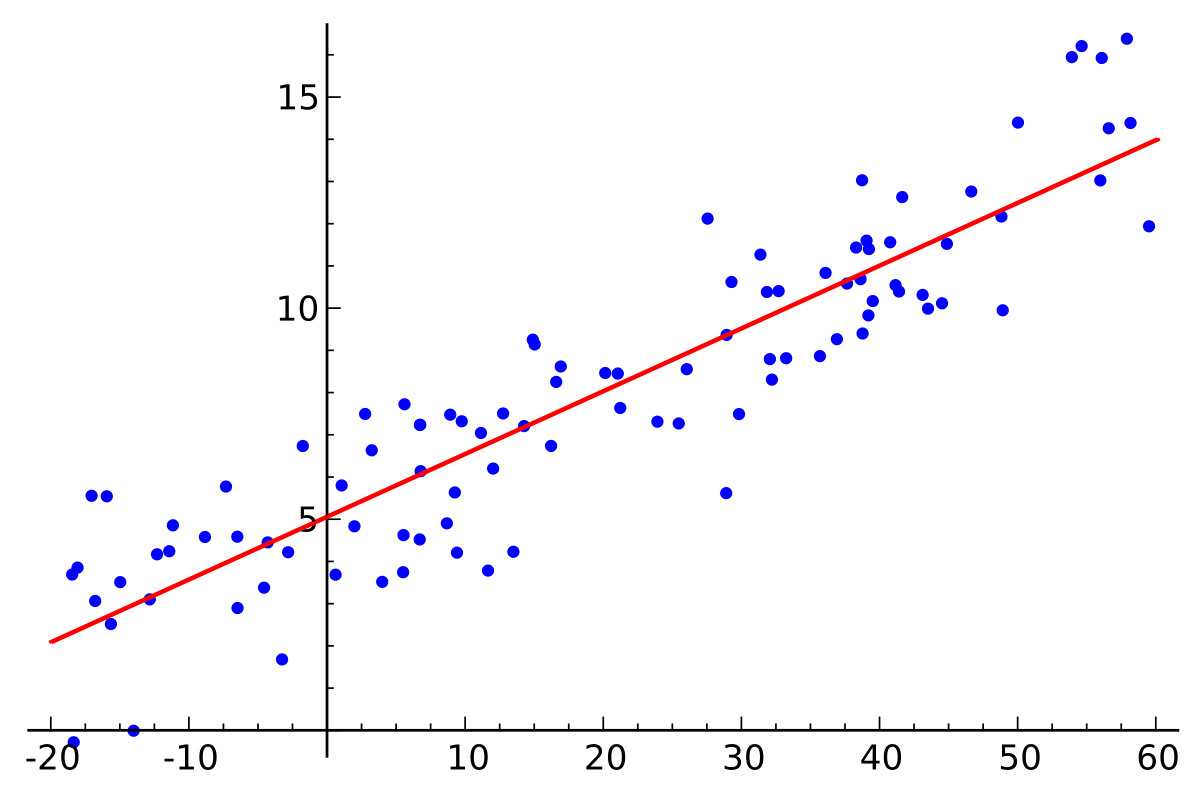

Regression

Supervised learning

Unsupervised learning

Linear regression

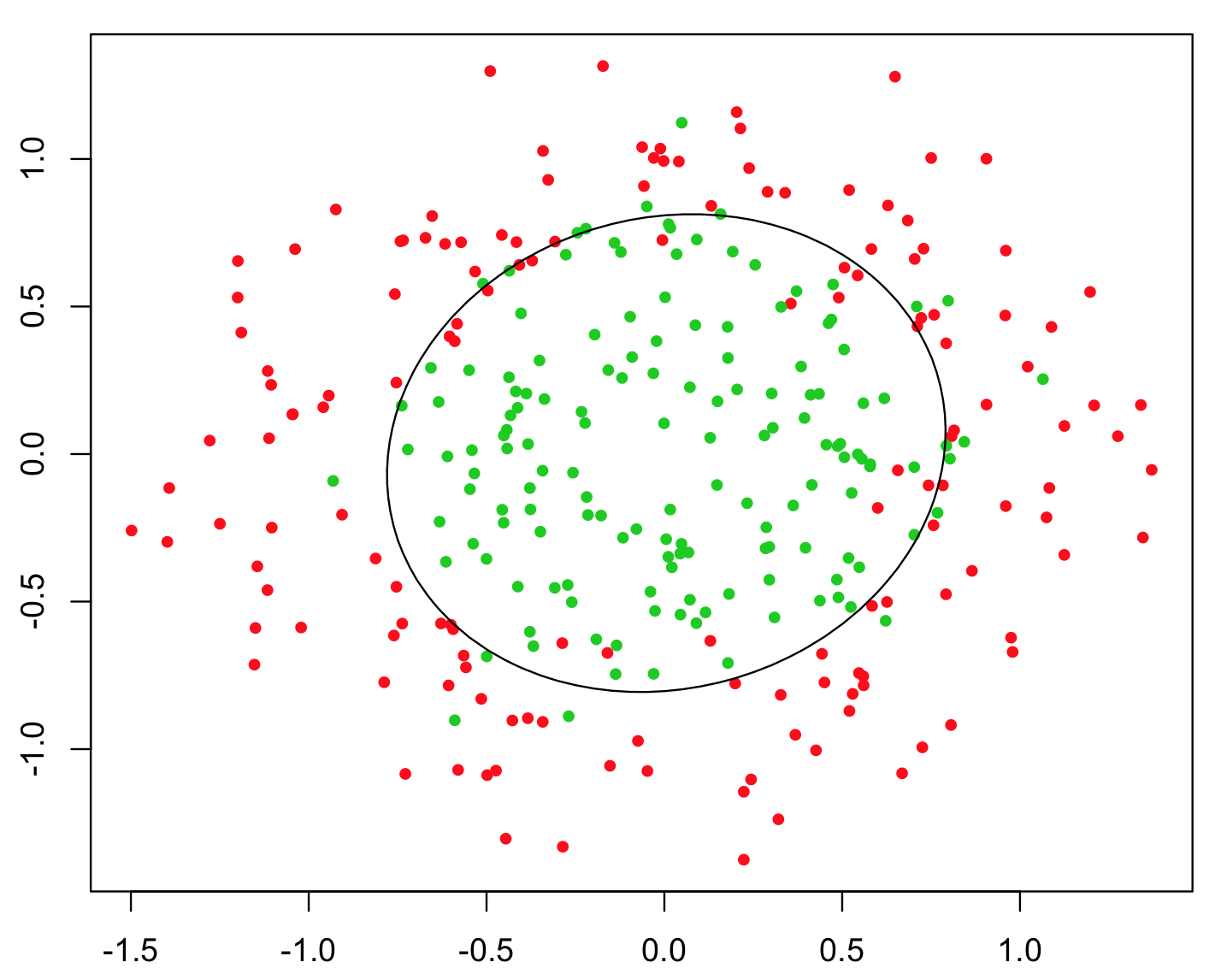

Logistic regression

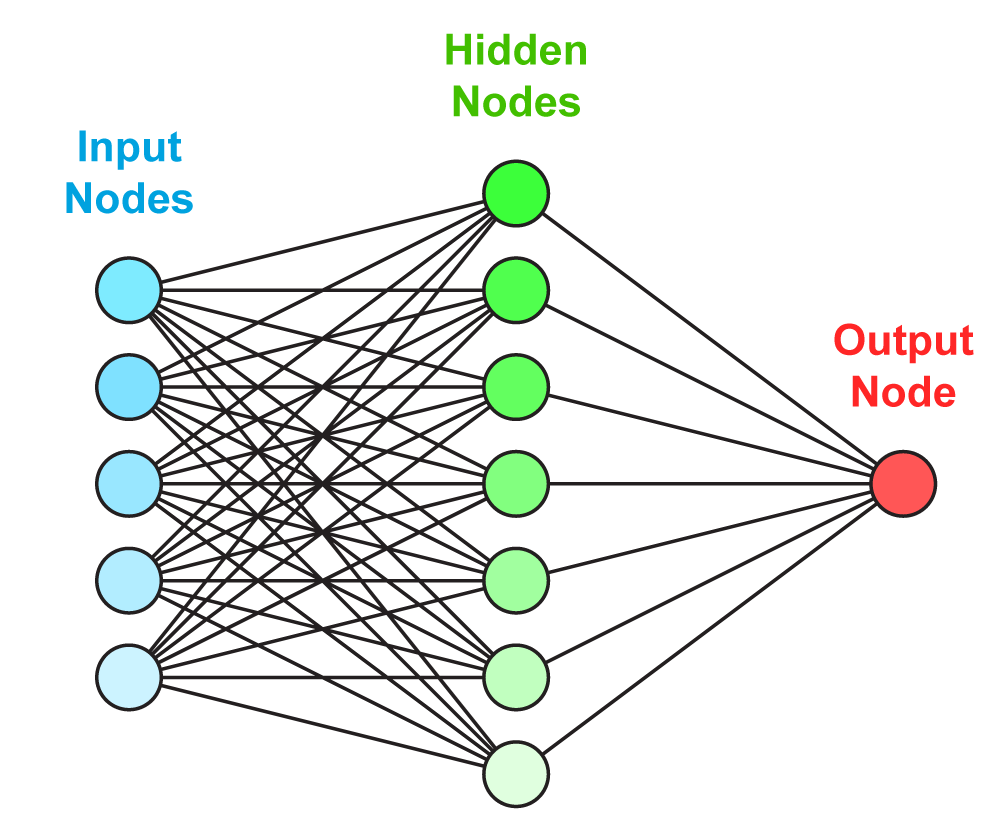
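
A minimal sketch of what logistic regression computes at prediction time, assuming a model with already-known weights. The names `sigmoid` and `predict_proba` and the example numbers are illustrative assumptions, not taken from the slides.

```python
import math


def sigmoid(z):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, features):
    """Probability of the positive class: sigmoid of a linear combination."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return sigmoid(z)


# Hypothetical weights and input: z = 2*1 + (-1)*3 + 0.5 = -0.5
p = predict_proba([2.0, -1.0], 0.5, [1.0, 3.0])
label = 1 if p >= 0.5 else 0  # threshold the probability to get a class
print(p, label)
```

The only difference from linear regression is the sigmoid applied on top of the linear part, which turns the output into a probability.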

NN

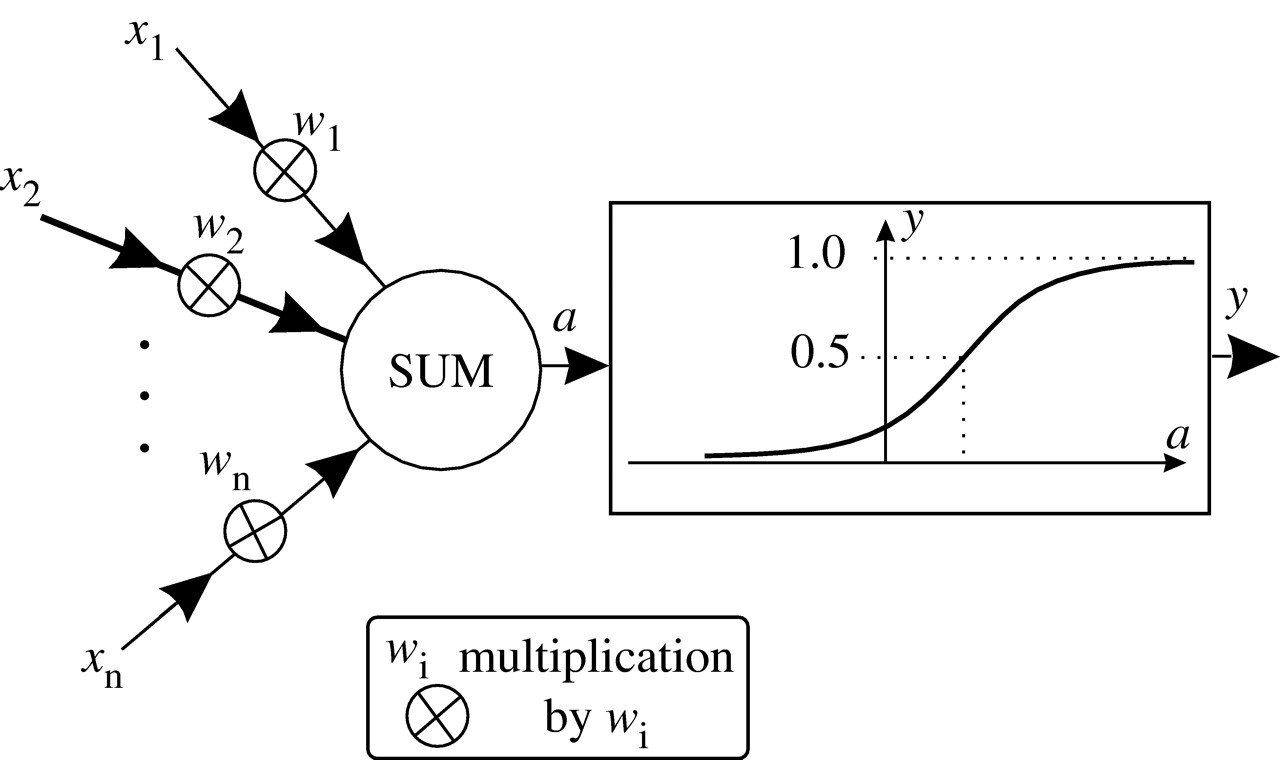

Artificial neuron

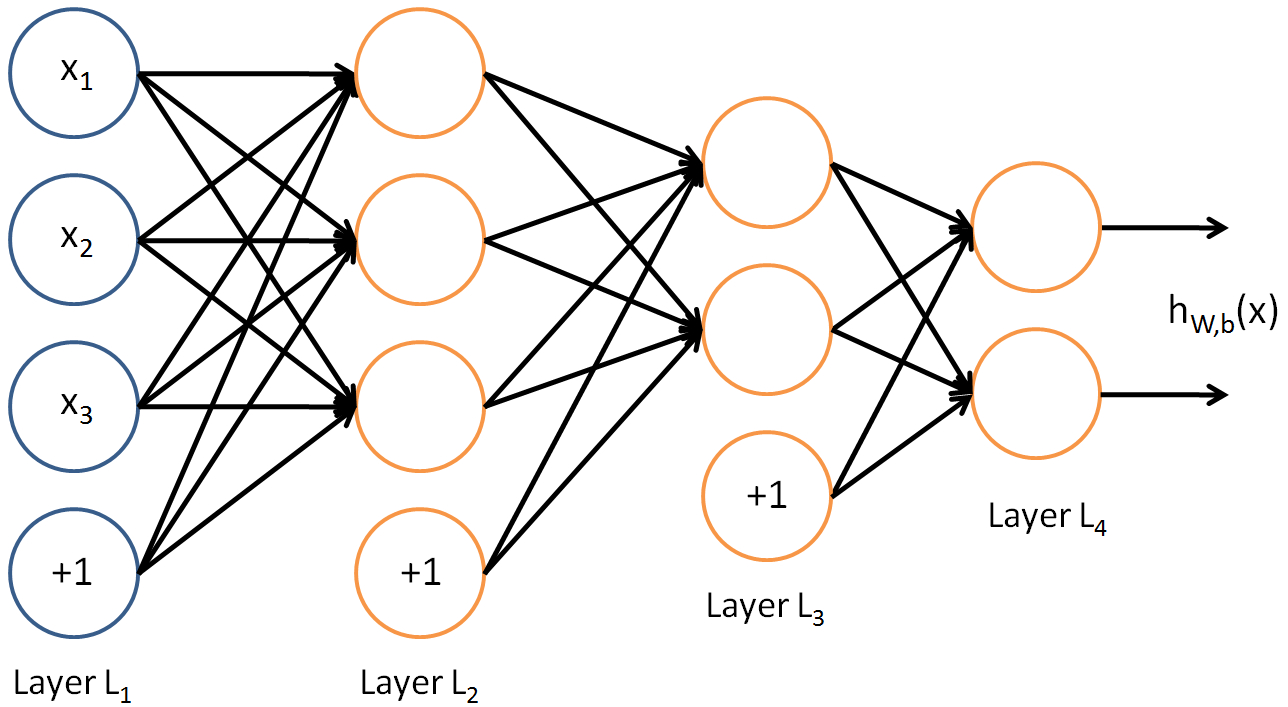
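
As a rough illustration of the artificial neuron, the sketch below computes a weighted sum of the inputs plus a bias and passes it through an activation function. ReLU is chosen here only as an example; `neuron`, `dense_layer` and all numbers are hypothetical.

```python
def relu(z):
    """Rectified linear unit: pass positive values, clamp negatives to zero."""
    return max(0.0, z)


def neuron(inputs, weights, bias, activation=relu):
    """One artificial neuron: activation(w . x + b)."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


def dense_layer(inputs, weight_rows, biases, activation=relu):
    """A fully connected layer is several neurons reading the same inputs."""
    return [neuron(inputs, w, b, activation)
            for w, b in zip(weight_rows, biases)]


# z = 0.5*1 + (-1)*2 + 0.25 = -1.25, which ReLU clamps to 0.0
out = neuron([1.0, 2.0], [0.5, -1.0], 0.25)
layer_out = dense_layer([1.0, 2.0], [[0.5, -1.0], [1.0, 1.0]], [0.25, 0.0])
print(out, layer_out)
```

Stacking several such layers, each feeding the next, gives the deep network (DNN) shown on the following slide.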

DNN

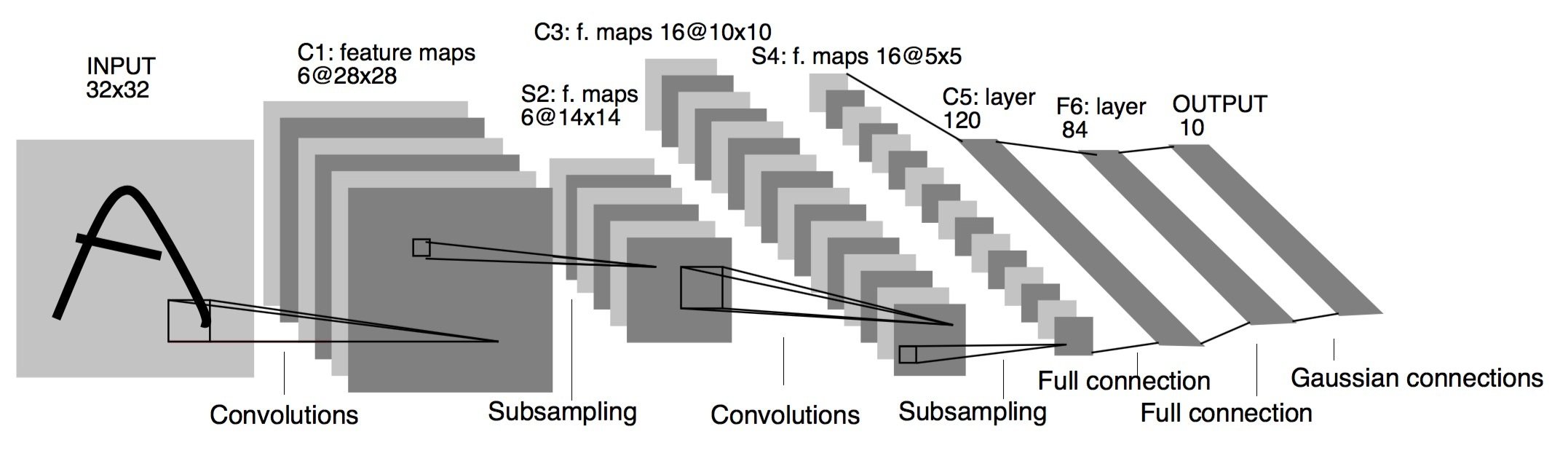

CNN

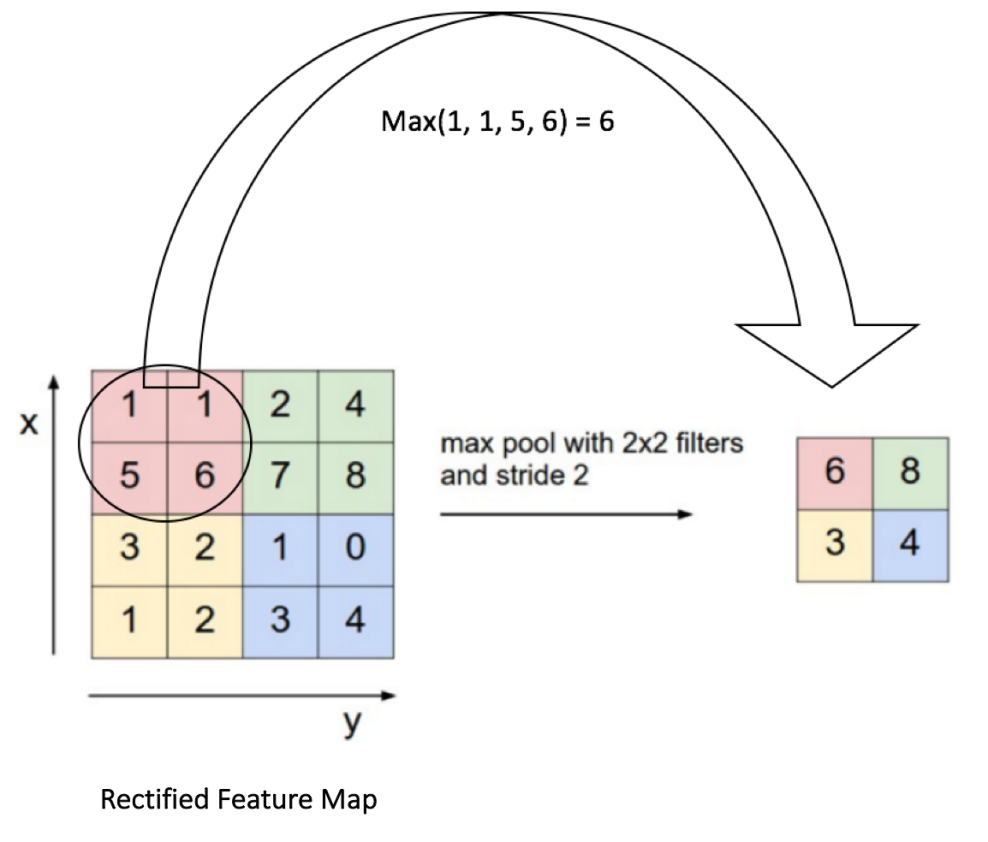

CNN.Pooling

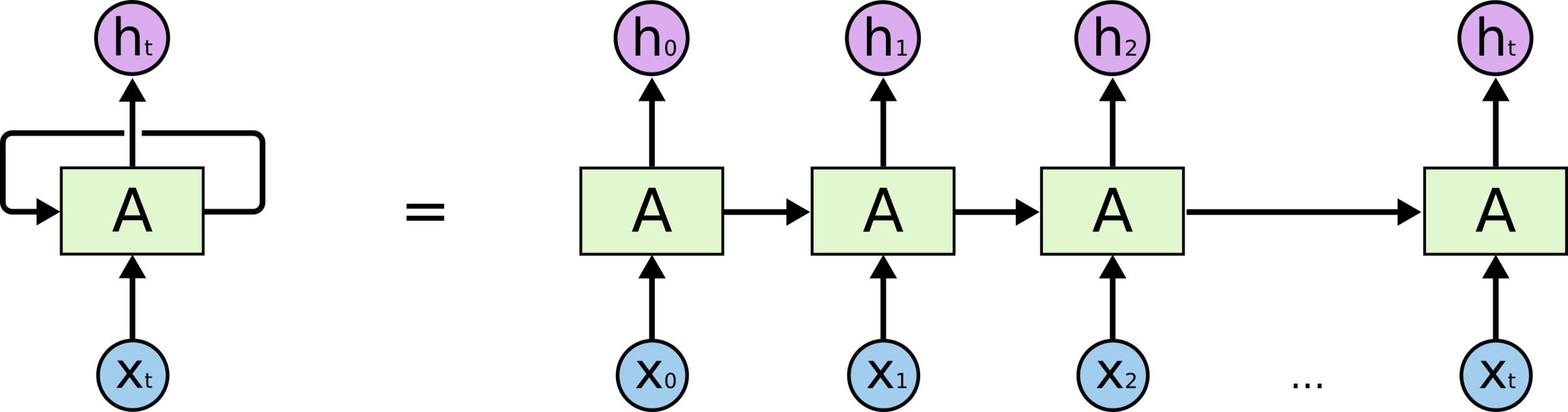
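
Pooling can be sketched in a few lines. The example below assumes 2x2 max pooling with stride 2, a common choice but not necessarily the one pictured on the slide; `max_pool_2x2` and the sample feature map are made up for illustration.

```python
def max_pool_2x2(image):
    """Downsample a 2D grid by keeping the maximum of each 2x2 block.

    A trailing odd row or column is dropped.
    """
    rows, cols = len(image), len(image[0])
    return [
        [max(image[r][c], image[r][c + 1],
             image[r + 1][c], image[r + 1][c + 1])
         for c in range(0, cols - 1, 2)]
        for r in range(0, rows - 1, 2)
    ]


feature_map = [
    [1, 3, 2, 4],
    [5, 6, 1, 2],
    [7, 2, 9, 0],
    [1, 8, 3, 4],
]
pooled = max_pool_2x2(feature_map)
print(pooled)  # [[6, 4], [8, 9]]
```

Each output cell summarizes a 2x2 region, so the feature map shrinks by half in both dimensions while the strongest activations survive.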

RNN

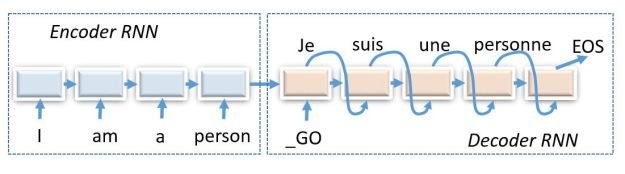

Seq2seq

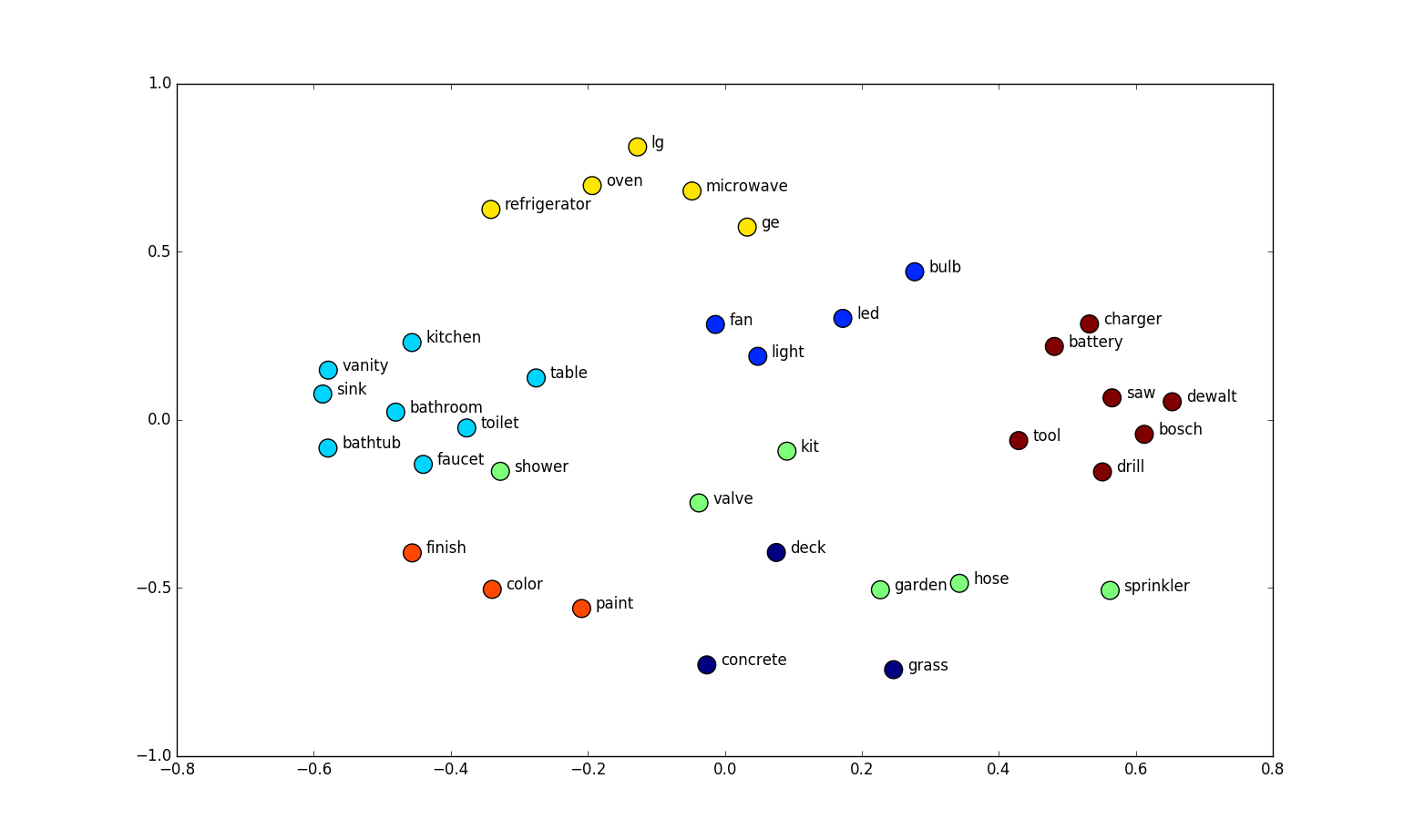

Word embedding

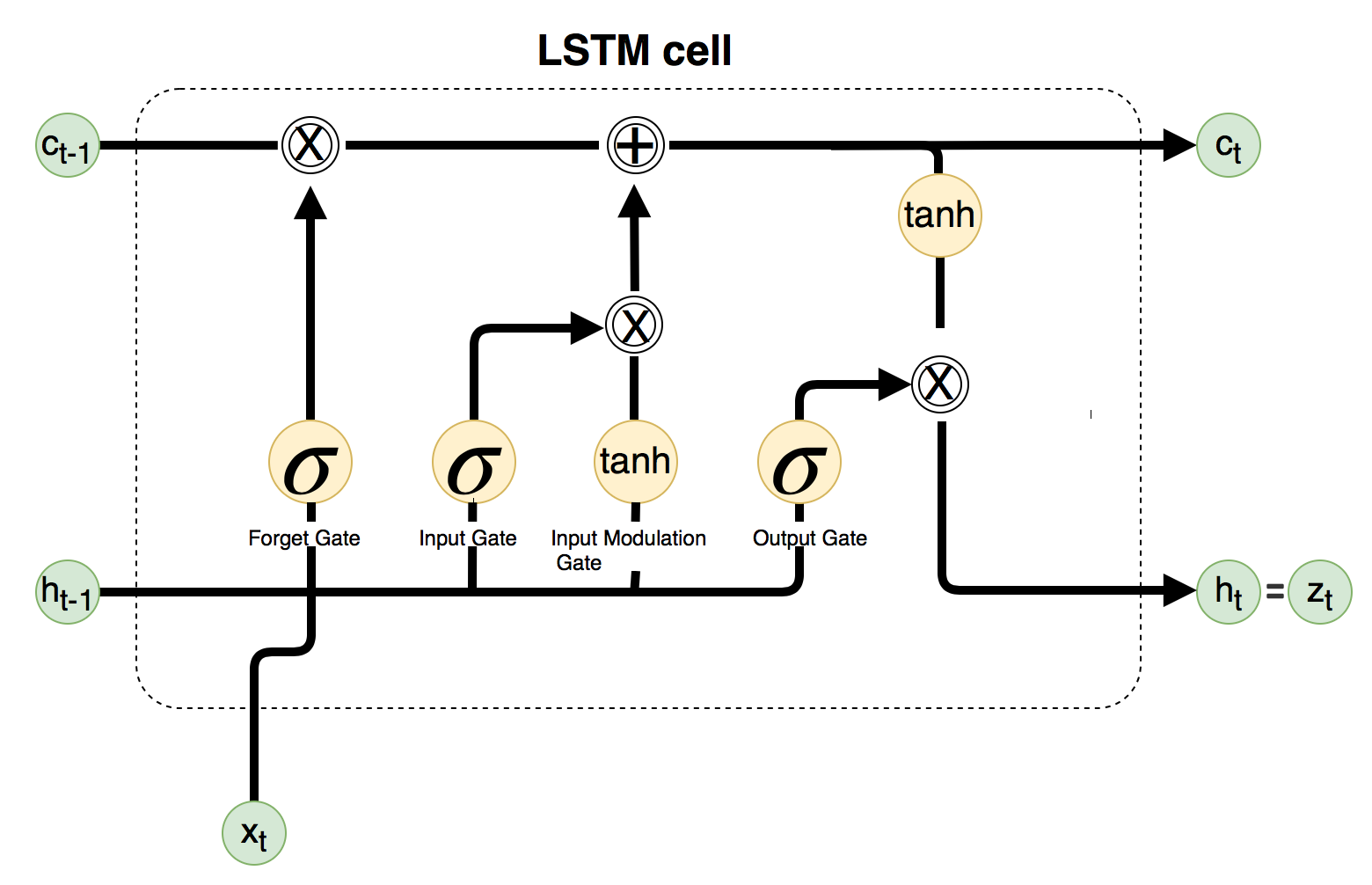

LSTM

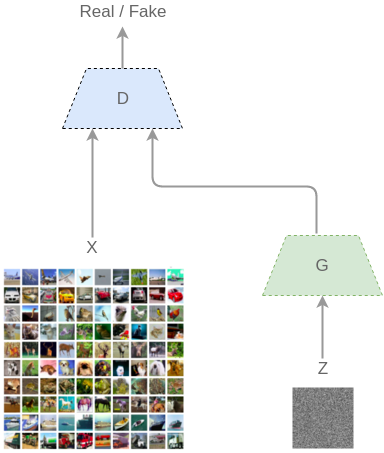

GAN

Transfer learning (a la .so)

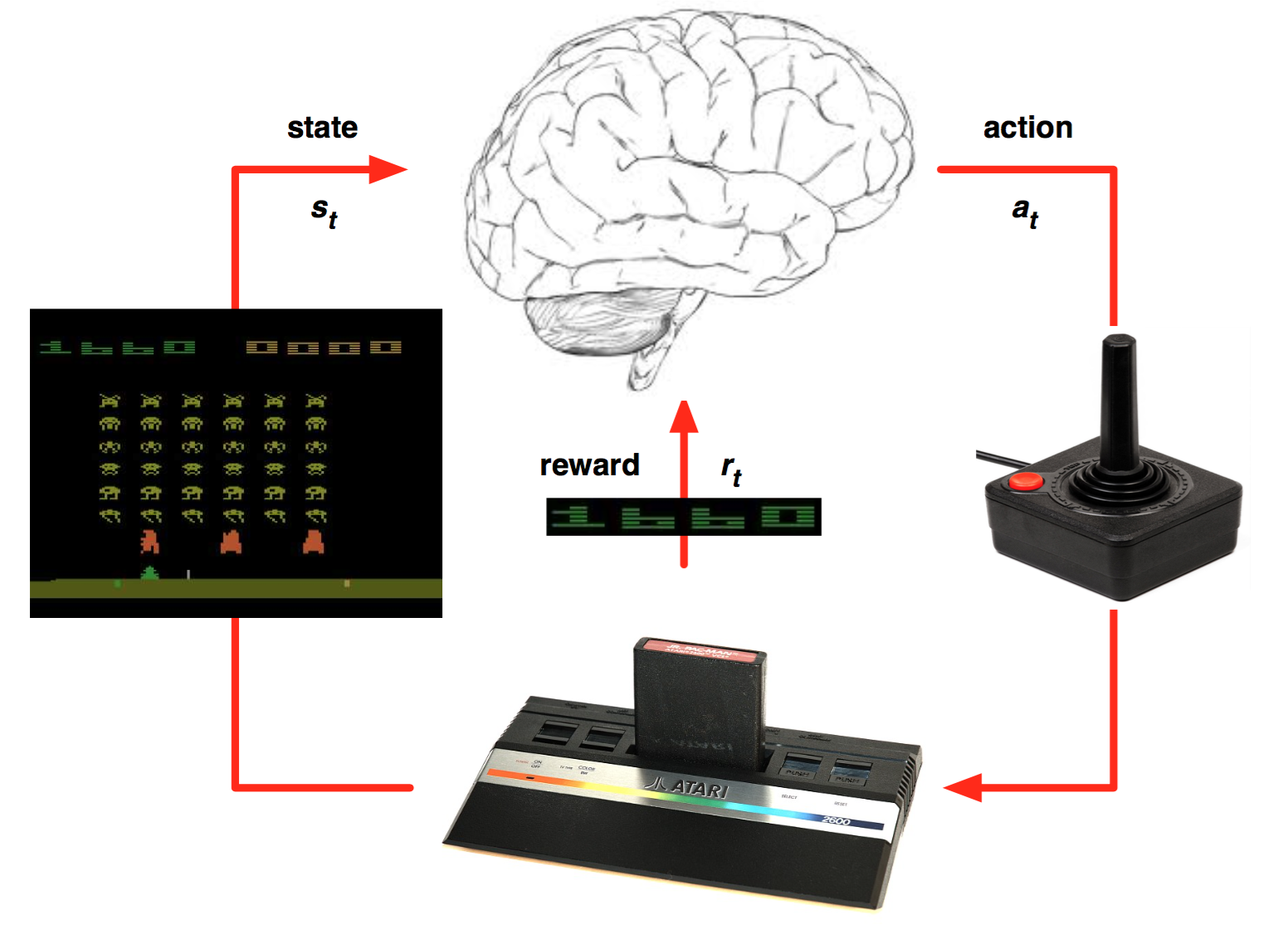

Reinforcement learning (Atari games)

$Q(s, a) = r + \gamma \max_{a'} Q(s', a')$
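
The formula above gives the target value for Q(s, a). A minimal tabular sketch of how it could be used follows. The learning rate `alpha`, the dictionary-based table, and the state and action names are assumptions for illustration; the Atari setting replaces the table with a neural network.

```python
from collections import defaultdict


def q_target(reward, next_q_values, gamma=0.9):
    """Bellman target: r + gamma * max over a' of Q(s', a')."""
    return reward + gamma * max(next_q_values)


def q_update(q_table, state, action, reward, next_state, actions,
             alpha=0.5, gamma=0.9):
    """Move Q(s, a) a fraction alpha of the way toward the target."""
    next_q = [q_table[(next_state, a)] for a in actions]
    target = q_target(reward, next_q, gamma)
    q_table[(state, action)] += alpha * (target - q_table[(state, action)])
    return q_table[(state, action)]


q = defaultdict(float)        # unseen (state, action) pairs start at 0.0
q[("s1", "right")] = 2.0      # hypothetical value already learned
# target = 1.0 + 0.9 * 2.0 = 2.8; new Q = 0.0 + 0.5 * (2.8 - 0.0) = 1.4
new_value = q_update(q, "s0", "right", reward=1.0,
                     next_state="s1", actions=["left", "right"])
print(new_value)
```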

HTML generation

https://github.com/alifanov/noco

1 second rule

Future apps

Code generation

Code assistant

Short text

Algo UI

Personal UI

Libs and frameworks

numpy

sklearn

Tensorflow

import numpy as np

import tensorflow as tf

# Model parameters

W = tf.Variable([.3], dtype=tf.float32)

b = tf.Variable([-.3], dtype=tf.float32)

# Model input and output

x = tf.placeholder(tf.float32)

linear_model = W * x + b

y = tf.placeholder(tf.float32)

# loss

loss = tf.reduce_sum(tf.square(linear_model - y)) # sum of the squares

# optimizer

optimizer = tf.train.GradientDescentOptimizer(0.01)

train = optimizer.minimize(loss)

# training data

x_train = [1,2,3,4]

y_train = [0,-1,-2,-3]

# training loop

init = tf.global_variables_initializer()

sess = tf.Session()

sess.run(init) # set W and b to their (deliberately wrong) initial values

for i in range(1000):
    sess.run(train, {x:x_train, y:y_train})

# evaluate training accuracy

curr_W, curr_b, curr_loss = sess.run([W, b, loss], {x:x_train, y:y_train})

print("W: %s b: %s loss: %s"%(curr_W, curr_b, curr_loss))

------------------------------------------

[]: W: [-0.9999969] b: [ 0.99999082] loss: 5.69997e-11
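
For readers without a TensorFlow 1.x installation, the same computation can be approximated in plain Python. This is a sketch that assumes the gradients of the sum-of-squares loss are derived by hand, and reuses the training data, initial values, and learning rate from the listing above.

```python
x_train = [1, 2, 3, 4]
y_train = [0, -1, -2, -3]

W, b = 0.3, -0.3          # same starting values as the TensorFlow listing
learning_rate = 0.01

for _ in range(1000):
    # Residuals of the linear model W * x + b on every training point
    errors = [W * x + b - y for x, y in zip(x_train, y_train)]
    # Gradients of loss = sum(error ** 2) with respect to W and b
    grad_W = sum(2 * e * x for e, x in zip(errors, x_train))
    grad_b = sum(2 * e for e in errors)
    W -= learning_rate * grad_W
    b -= learning_rate * grad_b

loss = sum((W * x + b - y) ** 2 for x, y in zip(x_train, y_train))
print("W: %s b: %s loss: %s" % (W, b, loss))
```

The loop should end close to W = -1 and b = 1, the line that fits the four training points exactly.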

Theano

Torch

Keras

Simple NN

from keras.models import Sequential

model = Sequential()

from keras.layers import Dense, Activation

model.add(Dense(units=64, input_dim=100))

model.add(Activation('relu'))

model.add(Dense(units=10))

model.add(Activation('softmax'))

model.compile(loss='categorical_crossentropy',
              optimizer='sgd',
              metrics=['accuracy'])

model.fit(x_train, y_train, epochs=5, batch_size=32)

classes = model.predict(x_test, batch_size=128)

Courses

https://www.udacity.com/course/deep-learning--ud730

https://ru.coursera.org/learn/machine-learning

https://www.youtube.com/playlist?list=PLE6Wd9FR--EfW8dtjAuPoTuPcqmOV53Fu

(Nando de Freitas)

Blogs

https://habrahabr.ru/company/ods/blog/322626/

http://machinelearningmastery.com

https://medium.com/@awjuliani

http://datareview.info

Videos

Artificial Intelligence and Machine Learning

https://www.youtube.com/channel/UCfelJa0QlJWwPEZ6XNbNRyA

DeepLearning.TV

https://www.youtube.com/channel/UC9OeZkIwhzfv-_Cb7fCikLQ

sentdex

https://www.youtube.com/channel/UCfzlCWGWYyIQ0aLC5w48gBQ

Siraj Raval

https://www.youtube.com/channel/UCWN3xxRkmTPmbKwht9FuE5A

Questions

Alexander Lifanov

Telegram: @jetbootsmaker