It was 2017

Computer Vision for IVF

What?

Select which cells to put in cold storage for future IVF

Why?

Storing cells is expensive, and most won't fertilize, so why keep them?

The task (tech.)

- Binary classification

- About 300 (initially) - 700 (later) images/class

- Uniform background, grayscale images

How bad can it be?

Initial estimate: ~3 weeks until the first good prototype

(70% accuracy)

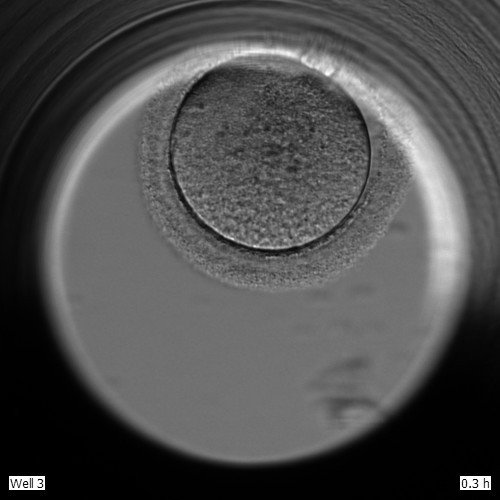

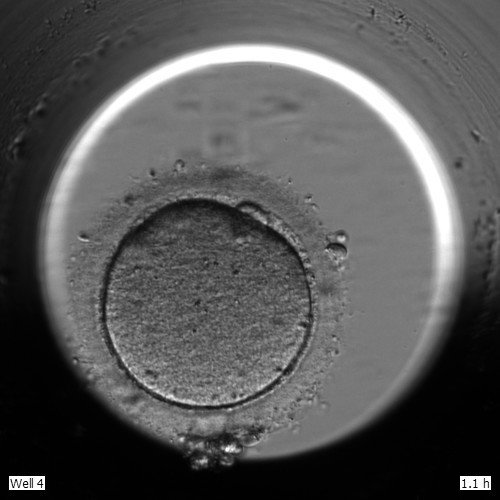

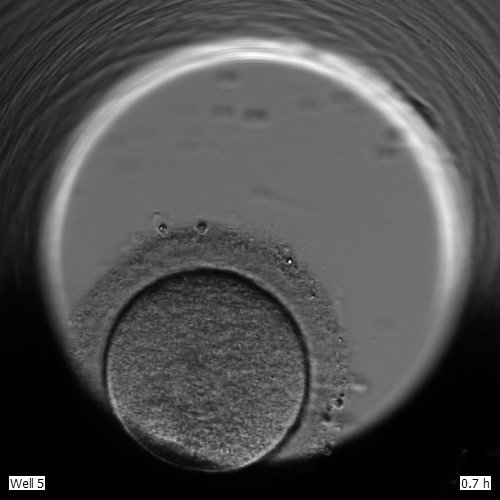

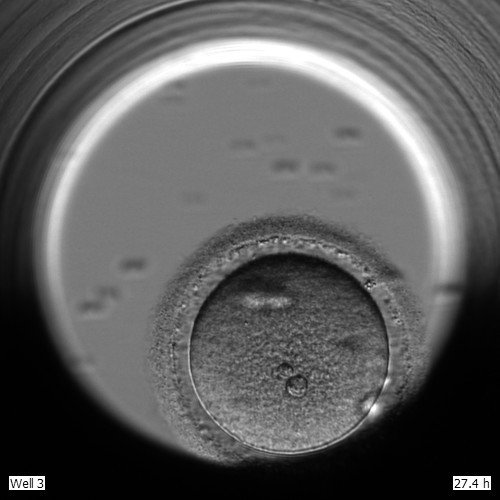

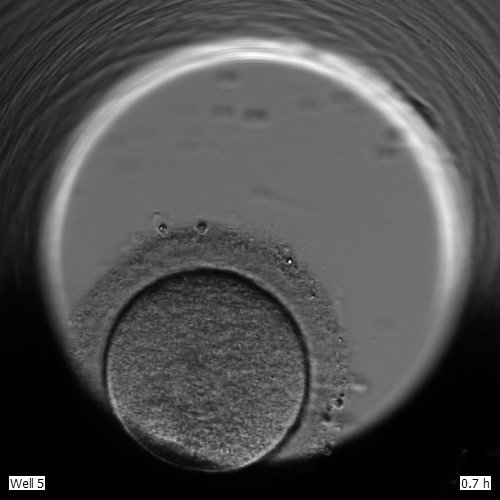

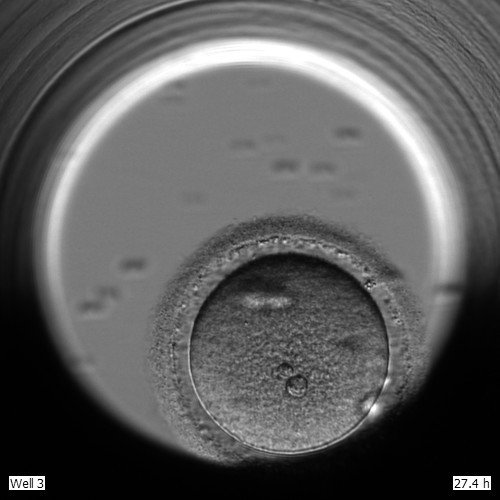

Positive Class

Negative Class

Positive Class

Negative Class

Wait! There's more!

- No clear human baseline

- No support from subject-matter experts (SMEs)

What was the initial result?

Transfer learning with InceptionV3

52% Accuracy

What was the best result after 6 months?

A combination of a custom architecture, a bit more data, data augmentation, and 1D temporal convolutions
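The slide doesn't say what the temporal axis was. The sketch below assumes each sample was a short, time-ordered sequence of frames already reduced to per-frame feature vectors (hypothetical), and shows what a 1D temporal convolution computes over such a sequence: a kernel that slides along time only, mixing a few consecutive frames at once. Like most deep-learning frameworks, it actually computes cross-correlation (the kernel is not flipped).

```python
from typing import List, Sequence


def conv1d_temporal(
    frames: Sequence[Sequence[float]],
    kernel: Sequence[Sequence[float]],
    bias: float = 0.0,
) -> List[float]:
    """'Valid' 1D convolution along the time axis (no padding, stride 1).

    frames: T time steps, each a C-dimensional feature vector (hypothetical
            per-frame embeddings, e.g. from a CNN backbone).
    kernel: K rows of C weights, one row per time offset in the window.
    Returns T - K + 1 outputs, one per window position.
    """
    t, k = len(frames), len(kernel)
    if k == 0 or k > t:
        raise ValueError("kernel must be non-empty and no longer than the sequence")
    outputs = []
    for start in range(t - k + 1):
        acc = bias
        # Mix information across K consecutive frames and all C channels.
        for offset in range(k):
            acc += sum(w * x for w, x in zip(kernel[offset], frames[start + offset]))
        outputs.append(acc)
    return outputs


# Usage: a kernel that responds to frame-to-frame change in channel 0.
frames = [[1.0, 0.0], [2.0, 1.0], [3.0, 0.0], [4.0, 1.0]]
print(conv1d_temporal(frames, [[-1.0, 0.0], [1.0, 0.0]]))  # [1.0, 1.0, 1.0]
```

In a real network one would learn many such kernels and then pool over time (for example, take the maximum output) to get a single score per sequence.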

69.2% Accuracy

The best result after ~1 year?

72% Accuracy

With 3x the data, a lot of custom image preprocessing, and a professor and a PhD student from a well-known US university

We tried a loooot of stuff

- Several Self-Supervised Learning schemes

- Transfer Learning with a few models

- A lot of data augmentation trickery

- Several custom NN architectures

- Classic CV, based on similar research in bovine cell selection
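One cheap augmentation that suits cells on a uniform background, assuming orientation carries no label information, is the set of eight rotations and flips of the image. This is an illustrative sketch, not a record of the augmentation trickery actually used; images are assumed to be 2D lists of grayscale pixel values.

```python
from typing import List

Image = List[List[float]]


def rot90(img: Image) -> Image:
    """Rotate a 2D image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def hflip(img: Image) -> Image:
    """Mirror a 2D image left-to-right."""
    return [row[::-1] for row in img]


def dihedral_augment(img: Image) -> List[Image]:
    """Return the 4 rotations of `img`, each with and without a horizontal flip."""
    variants = []
    current = img
    for _ in range(4):
        variants.append(current)
        variants.append(hflip(current))
        current = rot90(current)
    return variants


tiny = [[1, 2], [3, 4]]
print(len(dihedral_augment(tiny)))  # 8
print(rot90(tiny))  # [[3, 1], [4, 2]]
```

Each variant keeps the original label, so this multiplies the training set by eight without inventing new pixel values.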

Lessons learned

- Data is king

- Preprocessing is queen

- Simple problems can be rock hard

- Estimation is useless

Fun story time

One day, we tried Google AutoML Vision (it was still in closed beta). The CEO was helpful and did data augmentation beforehand.

We ran a full AutoML training cycle.

And got 98% accuracy.

... hmm

We ran a full AutoML training cycle again.

And got 98% accuracy again.

The CEO was ready to inform the stakeholders that we had cracked it.

But the best result before that had been 69.2%.

Testing on occluded images showed that the network was predicting garbage.

Indeed, something was wrong.
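Occlusion sensitivity is one way to run that kind of check: grey out one patch at a time and record how much the positive-class score drops. A model that really looks at the cell should react to patches over the cell, not over the uniform background. In this sketch `predict` is a hypothetical stand-in for the trained model, pixel values are assumed to lie in [0, 1], and 0.5 is an arbitrary fill value.

```python
from typing import Callable, List

Image = List[List[float]]


def occlusion_map(
    image: Image,
    predict: Callable[[Image], float],
    patch: int = 8,
    stride: int = 8,
    fill: float = 0.5,
) -> List[List[float]]:
    """Score drop when each patch is replaced by a flat grey square.

    predict: hypothetical model wrapper returning P(positive class).
    Only patches that fit entirely inside the image are evaluated.
    """
    height, width = len(image), len(image[0])
    baseline = predict(image)
    heatmap = []
    for top in range(0, height - patch + 1, stride):
        row = []
        for left in range(0, width - patch + 1, stride):
            occluded = [list(r) for r in image]  # copy; leave the input untouched
            for y in range(top, top + patch):
                for x in range(left, left + patch):
                    occluded[y][x] = fill
            row.append(baseline - predict(occluded))
        heatmap.append(row)
    return heatmap


# A deliberately "cheating" toy model that only reads the top-left pixel;
# it stands in for any model keying on something other than the cell.
def corner_model(img: Image) -> float:
    return img[0][0]


image = [[1.0] * 4 for _ in range(4)]
print(occlusion_map(image, corner_model, patch=2, stride=2))
# [[0.5, 0.0], [0.0, 0.0]]
```

The heatmap lights up only in the corner, which is exactly the kind of evidence that a model is not looking at what you think it is.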

Because the CEO had helpfully done the data augmentation beforehand without specifying the train/validation/test splits, augmented copies of the same image ended up in different splits: we had a data leak.
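Augmenting before splitting lets rotated or flipped copies of one image land on both sides of the split, so a model can score well just by recognising near-duplicates. A minimal sketch of the safer order, assuming each sample is tagged with the ID of the original image it came from (a hypothetical tagging scheme) and that `augment` returns a list of variants including the original:

```python
import random
from typing import Callable, Dict, Hashable, List, Sequence, Set, Tuple

Sample = Tuple[Hashable, object]  # (source image ID, sample payload)


def split_then_augment(
    items: Sequence[Sample],
    augment: Callable[[object], List[object]],
    val_frac: float = 0.15,
    test_frac: float = 0.15,
    seed: int = 0,
) -> Dict[str, List[Sample]]:
    """Assign whole source images to one split, then augment the training split only."""
    ids = sorted({sid for sid, _ in items}, key=str)
    random.Random(seed).shuffle(ids)
    n_test = round(len(ids) * test_frac)
    n_val = round(len(ids) * val_frac)
    test_ids = set(ids[:n_test])
    val_ids = set(ids[n_test:n_test + n_val])

    splits: Dict[str, List[Sample]] = {"train": [], "valid": [], "test": []}
    for sid, payload in items:
        if sid in test_ids:
            splits["test"].append((sid, payload))  # evaluation data stays un-augmented
        elif sid in val_ids:
            splits["valid"].append((sid, payload))
        else:
            splits["train"].extend((sid, aug) for aug in augment(payload))
    return splits


def leaked_ids(splits: Dict[str, List[Sample]]) -> Set[Hashable]:
    """Source IDs that appear in more than one split (should be empty)."""
    seen = {name: {sid for sid, _ in rows} for name, rows in splits.items()}
    names = list(seen)
    leaks: Set[Hashable] = set()
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            leaks |= seen[a] & seen[b]
    return leaks


items = [(f"img{i}", i) for i in range(20)]
splits = split_then_augment(items, augment=lambda x: [x, -x])
print({name: len(rows) for name, rows in splits.items()})  # {'train': 28, 'valid': 3, 'test': 3}
print(leaked_ids(splits))  # set()
```

Running `leaked_ids` on whatever split a tool actually used is a cheap sanity check before trusting a suspiciously good number.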

We wasted ~1.5k USD and 2 months

But could have wasted more

More lessons learned

- Never trust results that are too good

- Always assume the algorithm is cheating

- Interpretable/explainable ML is important

- Be careful when preparing your data