GAN Tutorial

Arvin Liu @ AIA (Taiwan AI Academy, North)

Slides: slides.com/arvlinliu/gan-9

These slides are suitable for people who:

1. have some experience in deep learning,

2. have a basic understanding of GANs,

3. are ready to think!

Schedule

| Scheduled time | Content |
|---|---|
| 14:00 ~ 15:00 | GAN Review, DCGAN, GAN problems (1) |
| 15:00 ~ 15:20 | break |
| 15:20 ~ 16:20 | Least Square GAN, IS/FID, WGAN |
| 16:20 ~ 16:40 | break |
| 16:40 ~ 17:40 | WGAN-GP, conditional GAN, ACGAN, cycle GAN, etc. |
| 17:40 ~ 18:00 | Q&A |

GAN Review

GAN's GOAL

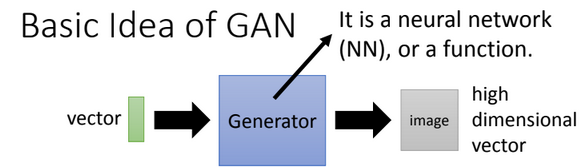

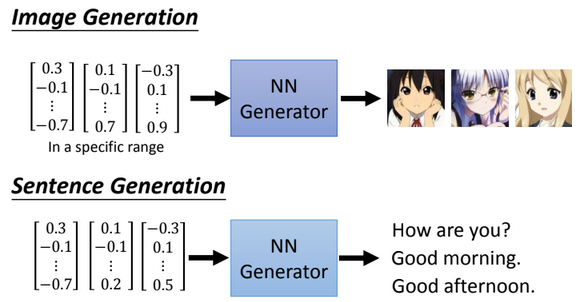

Generate new data.

Generative Adversarial Network

image resource: Prof. Hung-yi Lee's slides

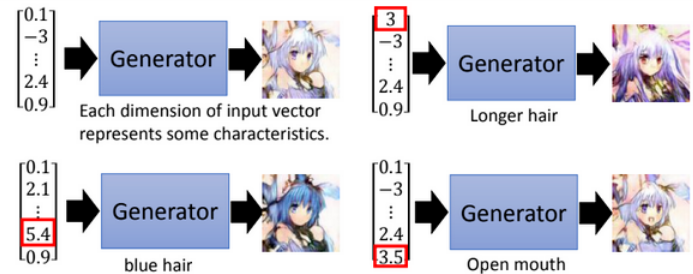

Skill points -G-> character

Generative Adversarial Network


image resource: Prof. Hung-yi Lee's slides

Given skill points, G generates something.

Skill points

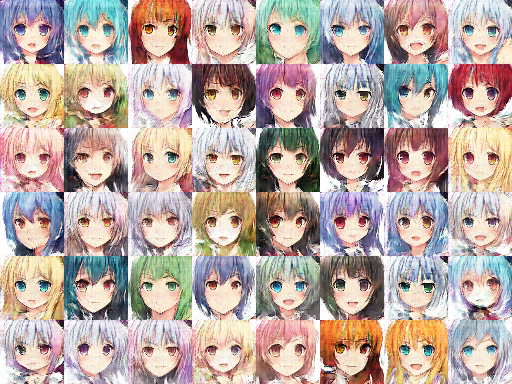

image resource: Prof. Hung-yi Lee's slides; anime images powered by http://mattya.github.io/chainer-DCGAN/

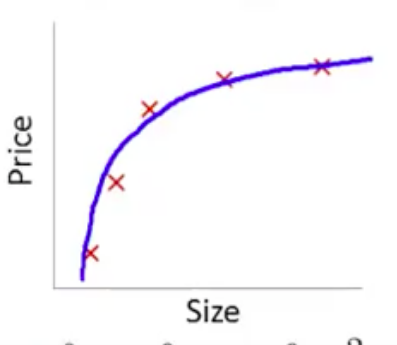

Low dim to high dim?

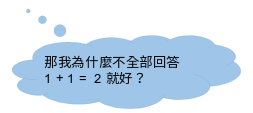

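Side note: why can a low-dimensional vector represent a high-dimensional image?

Images like this almost never occur in practice.

Images themselves carry little information compared with their size.

Dimension of the image space >> dimension of the images that can actually occur.

An auto-encoder tries to extract exactly this compact representation :)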
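Here is a toy sketch that makes "image dimension >> dimension of images that can occur" concrete. It assumes, unrealistically, that images come from a linear map of 3 hidden factors. Real images lie on a curved low-dimensional structure, but the counting idea is the same. `count_significant_dims`, the sizes, and the random "decoder" are all hypothetical.

```python
import numpy as np

def count_significant_dims(data, rel_tol=1e-8):
    """Count singular values above rel_tol * largest: a rough 'intrinsic dimension'."""
    centered = data - data.mean(axis=0)
    s = np.linalg.svd(centered, compute_uv=False)
    return int(np.sum(s > rel_tol * s[0]))

rng = np.random.default_rng(0)
n_samples, ambient_dim, intrinsic_dim = 500, 64 * 64, 3   # toy "64x64 images" from 3 hidden factors
basis = rng.normal(size=(intrinsic_dim, ambient_dim))      # hypothetical linear "decoder"
codes = rng.normal(size=(n_samples, intrinsic_dim))        # the low-dimensional "skill points"
images = codes @ basis                                     # 500 vectors of length 4096
print(images.shape, count_significant_dims(images))        # (500, 4096) 3
```

Each vector has 4096 numbers, but only 3 directions carry any variation. An auto-encoder's code tries to recover those few directions.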

Generative Adversarial Network

image resource: Prof. Hung-yi Lee's slides

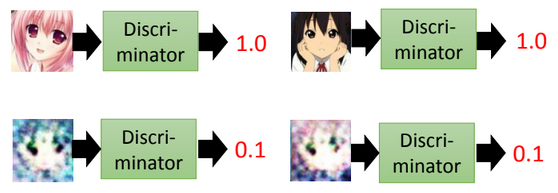

Generally...

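Generator

A painter imprisoned on an isolated island

Discriminator

An art dealer who often visits the painter and sells the paintings

Generator

- Think of it as a student painter imprisoned on an isolated island.

- To earn rewards (minimize G_loss), the painter wants to paint well.

- Not very bright: learning alone, the painter may fail to capture the important features. (Why?)

Discriminator

- Think of it as an art dealer who often visits the painter and sells the paintings.

- "Often visits": he has seen other people's work before visiting this painter (i.e., he has seen real data).

- "Sells the paintings": he tries to get the painter to paint more like the others.

- That does not mean he can paint himself. (Why?)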

Generative Adversarial Network

image resource: Prof. Hung-yi Lee's slides

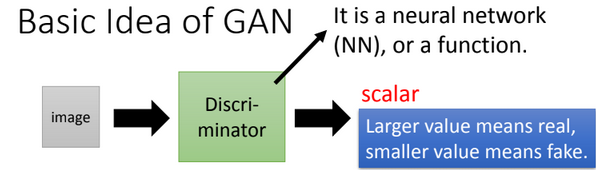

GAN Target

image resource: Prof. Hung-yi Lee's slides

Generator:

Fool the Discriminator.

Discriminator:

Tell the Generator's output apart from real data.

Why both?

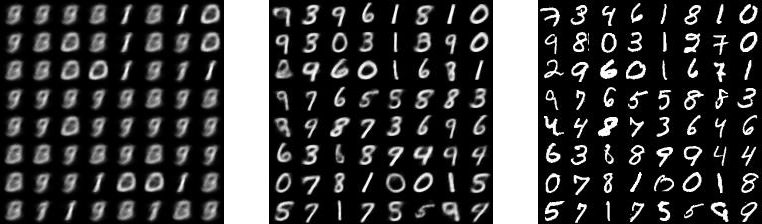

Only Generator

VAE, but blurry because of its loss function.

Let the painter learn on his own by copying.

Blurry VAE

http://kvfrans.com/variational-autoencoders-explained/

1st epoch

9th epoch

Original

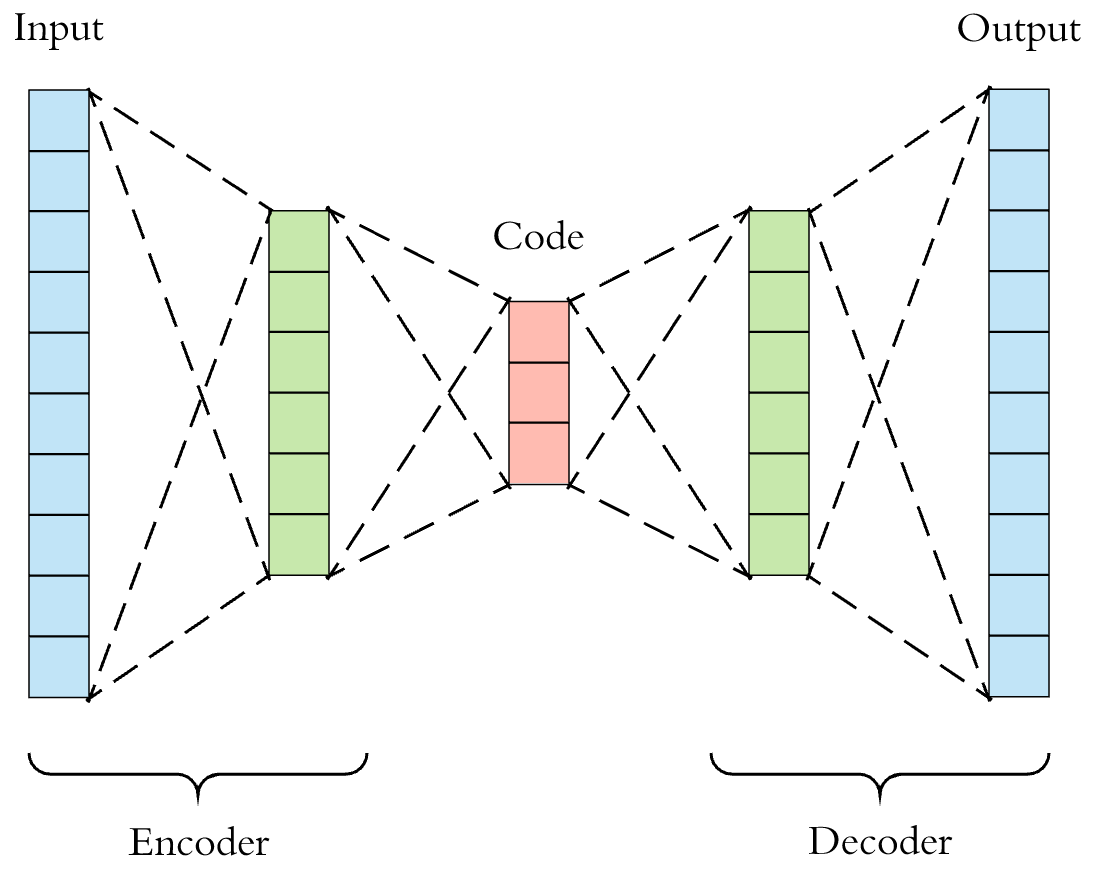

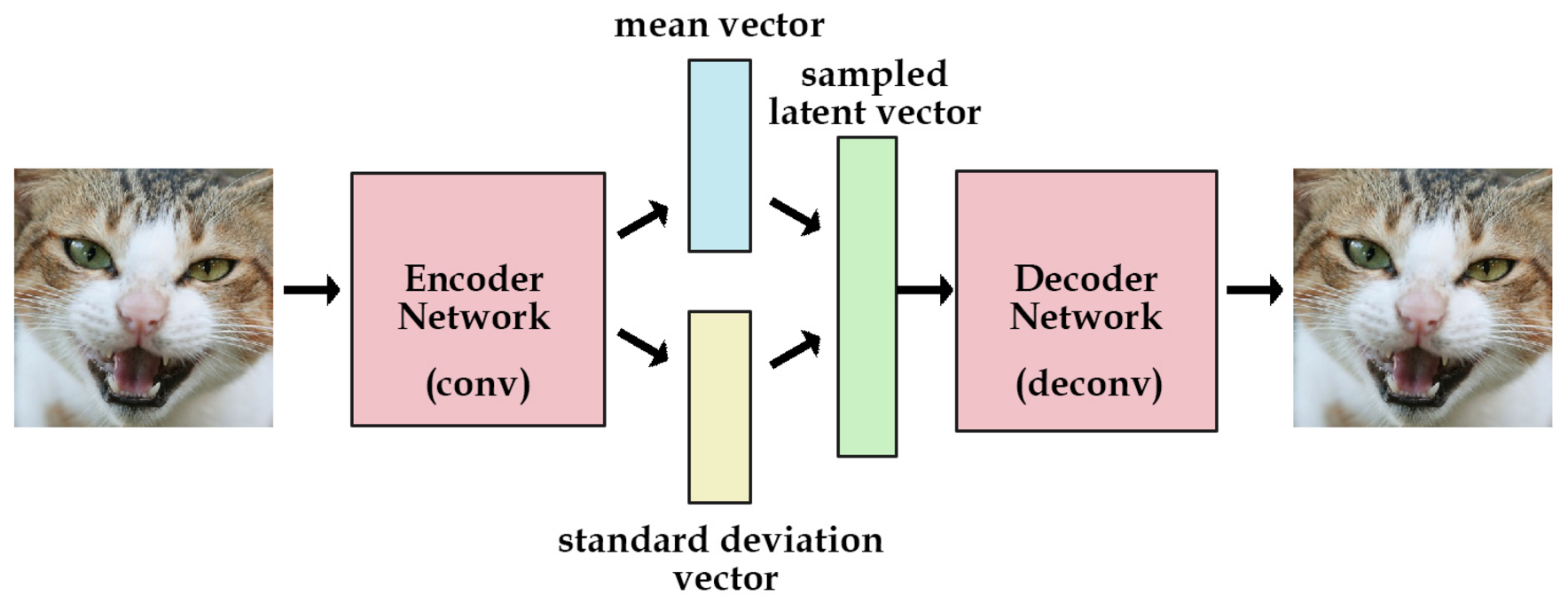

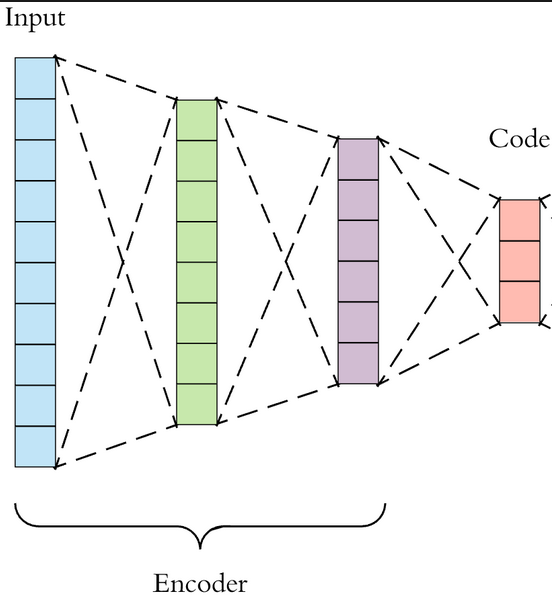

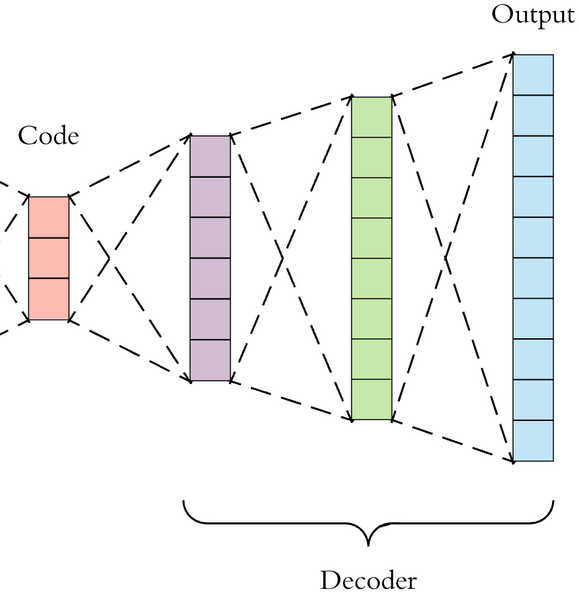

(Recall) What is a Variational AutoEncoder (VAE)?

This is a plain AE;

the code in the middle is the image's "skill points".

image resource: https://cdn-images-1.medium.com/max/1600/1*ZEvDcg1LP7xvrTSHt0B5-Q@2x.png

Generator

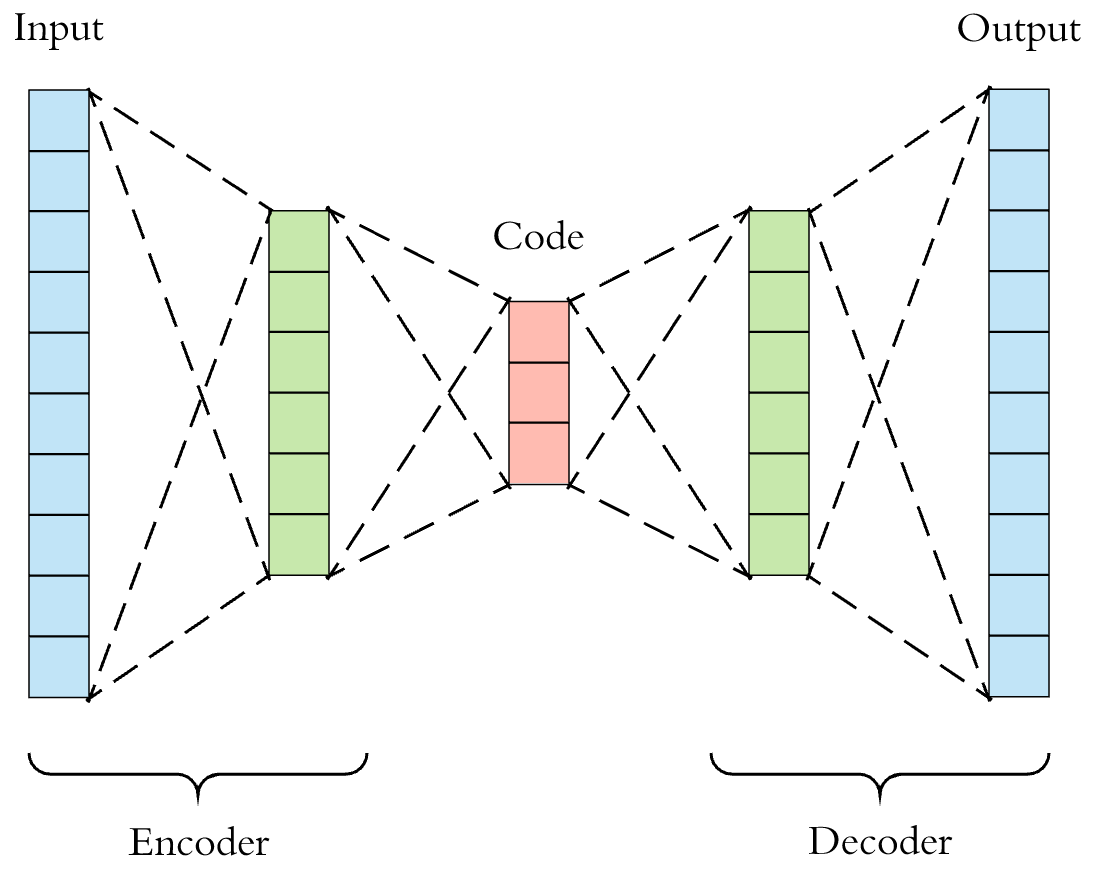

The AE already compresses the image into a code,

so why not just tweak the code directly?

image resource: https://cdn-images-1.medium.com/max/1600/1*ZEvDcg1LP7xvrTSHt0B5-Q@2x.png

Because you do not know the distribution of the code.

(Assuming the AE's decoder works very well.)

Can an AE used as a Generator produce arbitrary outputs?

Use the AE to produce a mean (skill points) and a std (randomness),

and encourage the resulting skill points to follow N(0,1).
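Here is a minimal NumPy sketch of the "mean (skill points) / std (randomness)" idea. It assumes the common convention that the encoder outputs a log-variance. The KL term is what pushes the codes toward N(0,1). The function names are illustrative, not from the original code.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """z = mu + std * eps with eps ~ N(0, I): the 'mean + randomness' code."""
    std = np.exp(0.5 * log_var)
    return mu + std * rng.standard_normal(mu.shape)

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dimensions."""
    return 0.5 * np.sum(np.exp(log_var) + mu ** 2 - 1.0 - log_var, axis=-1)

rng = np.random.default_rng(0)
mu = np.array([[0.0, 0.0], [1.0, -2.0]])     # encoder means for two inputs
log_var = np.zeros_like(mu)                  # encoder log-variances (std = 1)
z = reparameterize(mu, log_var, rng)         # sampled codes fed to the decoder
kl = kl_to_standard_normal(mu, log_var)      # 0.0 for the first input, 2.5 for the second
```

The first input already matches N(0,1), so it costs nothing. The second is pulled back toward the origin.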

image resource: https://www.cnblogs.com/huangshiyu13/p/6209016.html

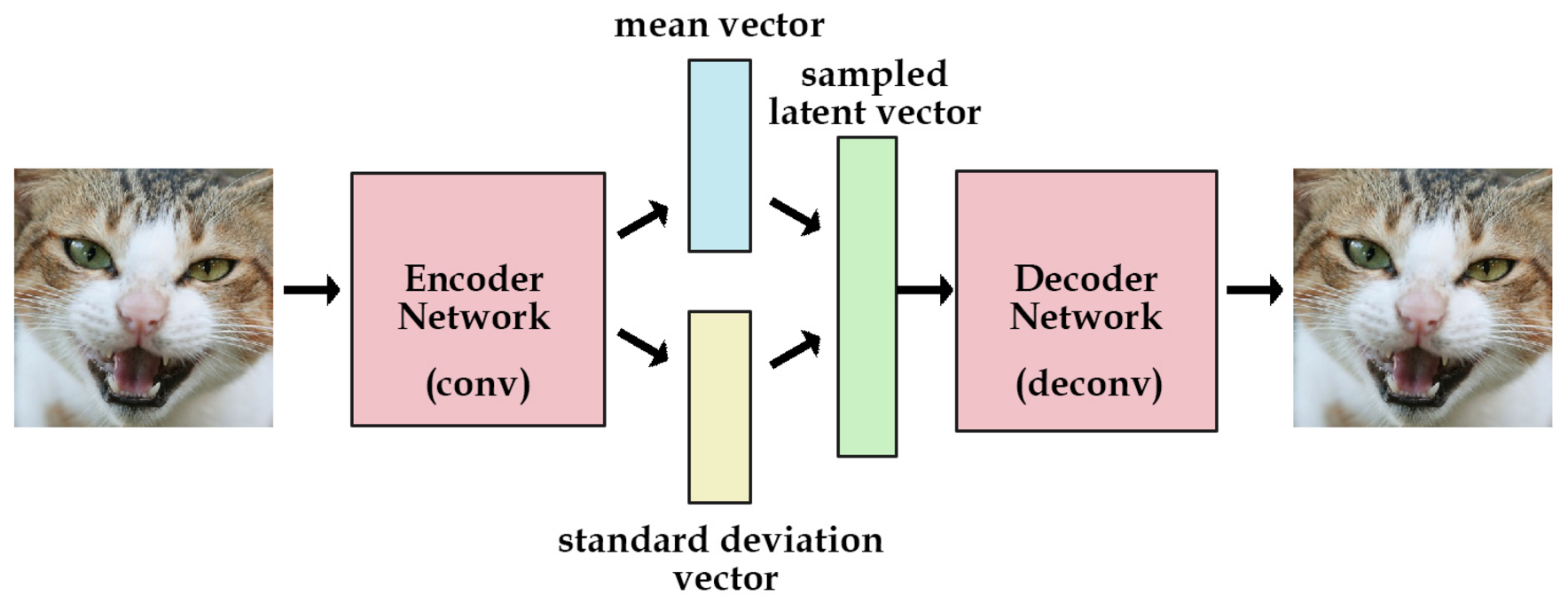

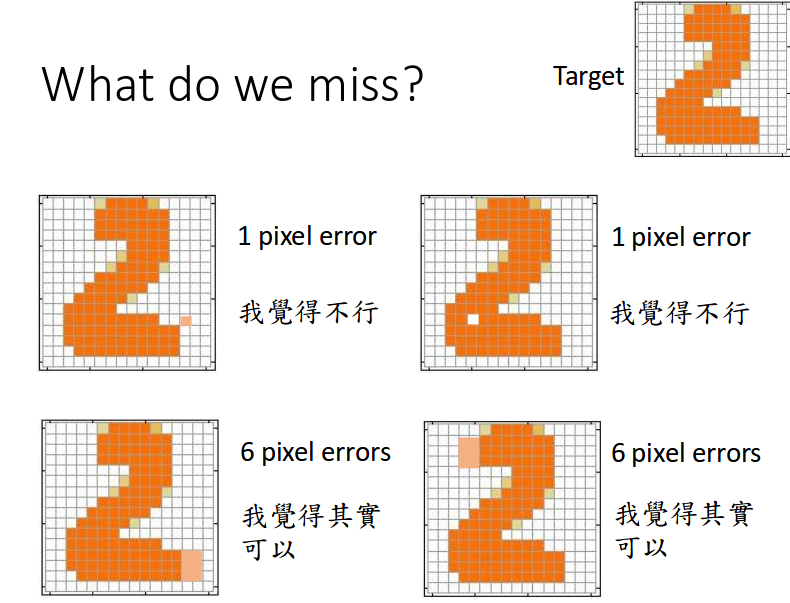

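Is everything fine with VAE?

For seq2seq, it does work well for now.

But for images...

(Recall)

What is VAE's target?

The output picture should match the input picture in L1/L2 distance

(minimize L1 loss / L2 loss)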

image resource: https://cdn-images-1.medium.com/max/1600/1*ZEvDcg1LP7xvrTSHt0B5-Q@2x.png

image resource: Prof. Hung-yi Lee's slides

Because the loss is superficial, we miss something.
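Why does an L1/L2 target make VAE outputs blurry? Here is a tiny worked example. It assumes a made-up 5-pixel "image" whose edge can plausibly appear in one of two positions. Under L2, the pixel-wise average of both answers scores better than either sharp answer, so the model learns to output the blur.

```python
import numpy as np

# Two equally likely "sharp" targets: the same edge, shifted by one pixel
target_a = np.array([0.0, 1.0, 1.0, 0.0, 0.0])
target_b = np.array([0.0, 0.0, 1.0, 1.0, 0.0])

def expected_l2(pred):
    """Average squared error when the true image is a or b with probability 1/2 each."""
    return 0.5 * np.sum((pred - target_a) ** 2) + 0.5 * np.sum((pred - target_b) ** 2)

sharp = target_a                      # commit to one plausible answer
blurry = 0.5 * (target_a + target_b)  # average of both answers: [0, .5, 1, .5, 0]
print(expected_l2(sharp), expected_l2(blurry))   # 1.0 0.5
```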

Loss = NN?

You get the point!

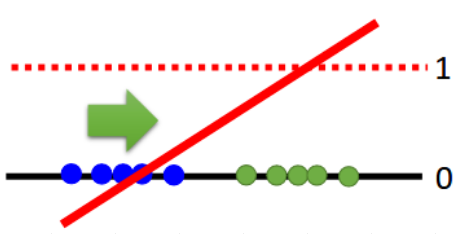

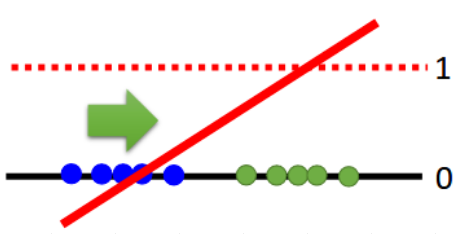

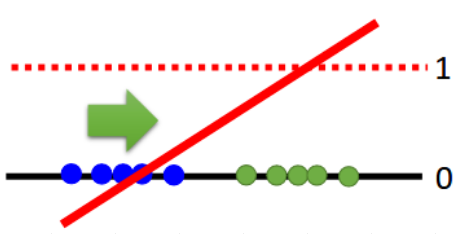

Only Discriminator

Where do we find GOOD negative (D(x)=0) samples?

Basically, it's a binary (predict 0,1) problem.

Review of Review

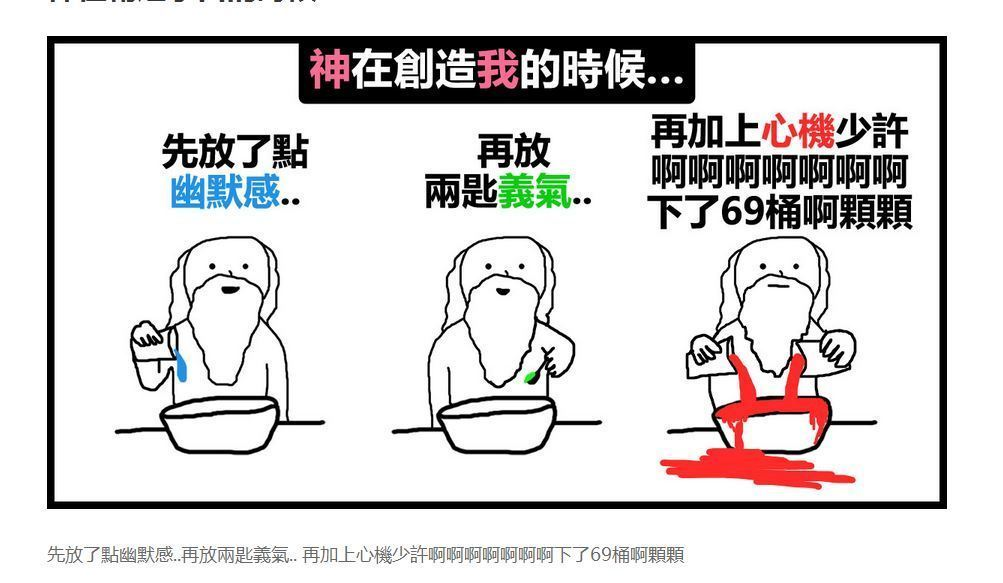

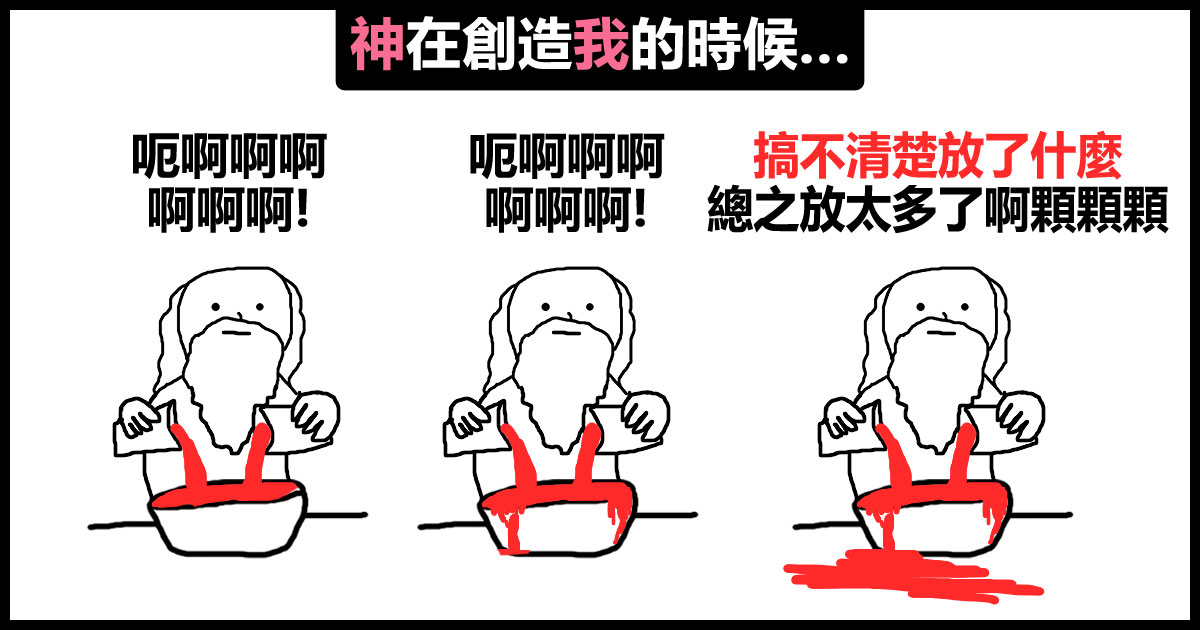

A GAN is something like a VAE, except that...

the loss function is a neural network (NN).

Both GAN's latent (skill points)

and VAE's code behave like a distribution.

Introducing popular GANs ->

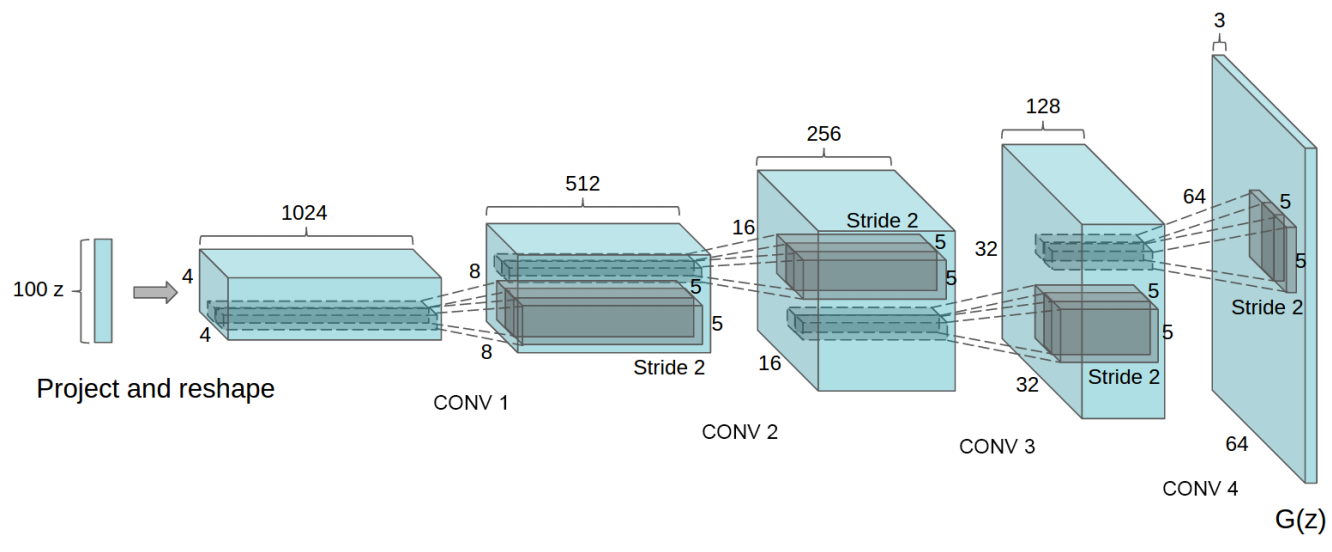

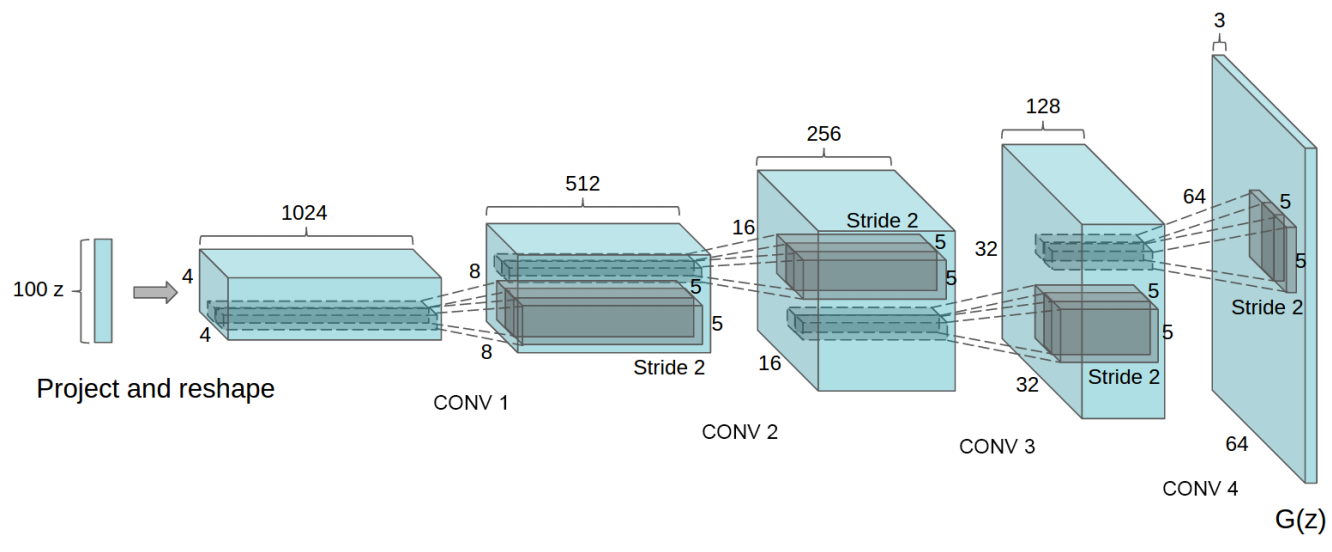

DCGAN Structure

DCGAN(ICLR'16) : https://arxiv.org/abs/1511.06434

Deep Convolutional GAN

Generator Structure

BN-> ReLU

tanh

DCGAN deconv: kernel size (5,5) , stride (2,2)

Discriminator Structure

BN + lReLU

lReLU

score

Before Viewing Code...

* The resolution is low because the image size is 64x64.

DCGAN WITHOUT ANY TIPS

After Tips & High Resolution

* resolution = 128x128.


Implementation in code ->

GAN Code Definition

Sorry... but

I use TensorFlow to train my GAN.

picture resource: https://www.tensorflow.org/

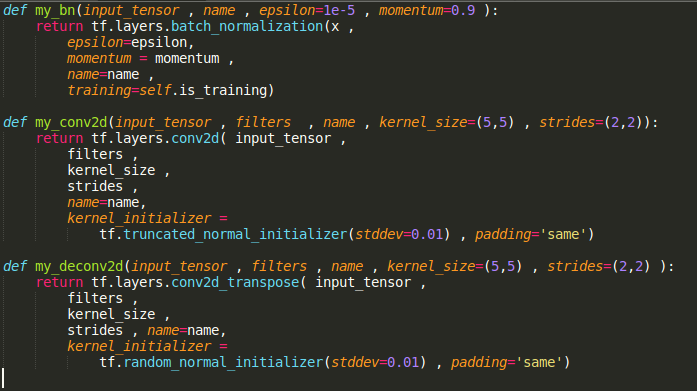

Utils

picture resource: my code displayed on sublime text3

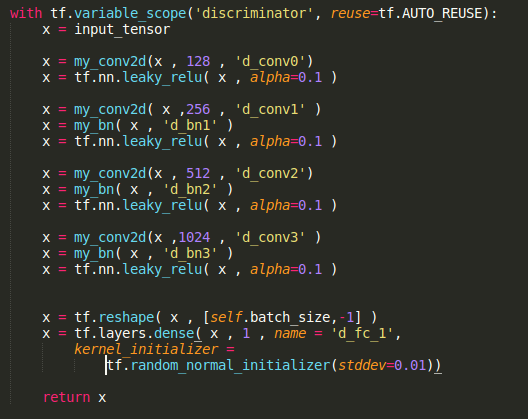

Discriminator (input: 64x64x3 image)

- Convolution 128, f: (5,5), s: (2,2)
- Convolution 256, f: (5,5), s: (2,2)
- Convolution 512, f: (5,5), s: (2,2)
- Convolution 1024, f: (5,5), s: (2,2)
- Dense 1 -> Score

picture resource: my code displayed on sublime text3

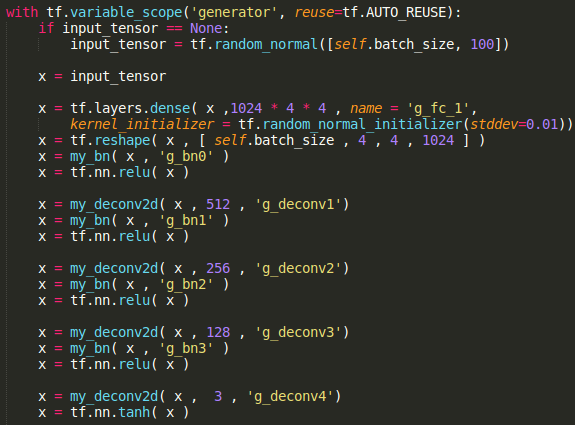

Generator (input: latent 100 ~ N(0,1))

- Dense 2^14
- Conv^T 512, f: (5,5), s: (2,2)
- Conv^T 256, f: (5,5), s: (2,2)
- Conv^T 128, f: (5,5), s: (2,2)
- Conv^T 3, f: (5,5), s: (2,2) -> 64x64x3 image

picture resource: my code displayed on Sublime Text 3.

Note: in the original paper, the latent is sampled from a uniform distribution.
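Here is a quick shape check for the two architectures. It makes two assumptions the slides do not state: "same" padding, a common choice in TensorFlow DCGAN code, and a reshape of the Dense 2^14 output to 4x4x1024. Each stride-2 layer halves (D) or doubles (G) the spatial size.

```python
def conv_same_out(size, stride=2):
    """Spatial size after a 'same'-padded convolution: ceil(size / stride)."""
    return -(-size // stride)

def deconv_same_out(size, stride=2):
    """Spatial size after a 'same'-padded transposed convolution: size * stride."""
    return size * stride

def generator_shapes(latent_dim=100):
    shapes = [(latent_dim,), (4, 4, 1024)]   # Dense 2^14 = 4*4*1024, reshaped (assumption)
    size = 4
    for channels in (512, 256, 128, 3):      # Conv^T layers, f (5,5), s (2,2)
        size = deconv_same_out(size)
        shapes.append((size, size, channels))
    return shapes

def discriminator_shapes(image_size=64):
    shapes = [(image_size, image_size, 3)]
    size = image_size
    for channels in (128, 256, 512, 1024):   # conv layers, f (5,5), s (2,2)
        size = conv_same_out(size)
        shapes.append((size, size, channels))
    shapes.append((1,))                      # flatten -> Dense 1 -> score
    return shapes

print(generator_shapes())      # [(100,), (4, 4, 1024), (8, 8, 512), (16, 16, 256), (32, 32, 128), (64, 64, 3)]
print(discriminator_shapes())  # [(64, 64, 3), (32, 32, 128), (16, 16, 256), (8, 8, 512), (4, 4, 1024), (1,)]
```

The two networks mirror each other: G grows 4 -> 64 while D shrinks 64 -> 4.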

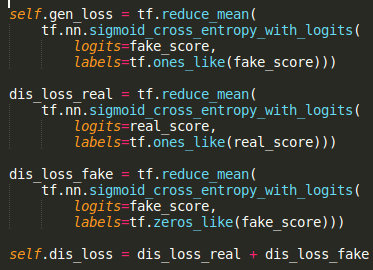

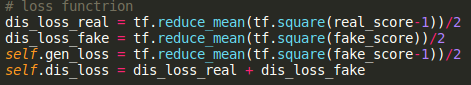

Loss definition

Generator:

Fool the Discriminator:

try to make Discriminator(Generator) = 1.

Discriminator:

Tell the Generator's output apart from real data:

try to make Discriminator(Generator) = 0

and Discriminator(Real Data) = 1.

Loss definition

picture resource: my code displayed on sublime text3

binary cross entropy

tf.nn.sigmoid_cross_entropy_with_logits

Loss definition

picture resource: my code displayed on sublime text3

fake_score = discriminator(fake_data)

real_score = discriminator(real_data)
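For reference, here is a NumPy sketch of the losses the slides build with `tf.nn.sigmoid_cross_entropy_with_logits`. It uses the numerically stable formula from that function's TensorFlow documentation: max(x, 0) - x * z + log(1 + exp(-|x|)). `real_score` and `fake_score` are raw D outputs (logits). `gan_losses` is an illustrative helper, not the author's code.

```python
import numpy as np

def sigmoid_bce_with_logits(logits, labels):
    """Stable sigmoid cross entropy: max(x, 0) - x * z + log(1 + exp(-|x|))."""
    x, z = np.asarray(logits, dtype=float), np.asarray(labels, dtype=float)
    return np.maximum(x, 0) - x * z + np.log1p(np.exp(-np.abs(x)))

def gan_losses(real_score, fake_score):
    """D wants real -> 1 and fake -> 0; G wants fake -> 1."""
    d_loss = (np.mean(sigmoid_bce_with_logits(real_score, np.ones_like(real_score)))
              + np.mean(sigmoid_bce_with_logits(fake_score, np.zeros_like(fake_score))))
    g_loss = np.mean(sigmoid_bce_with_logits(fake_score, np.ones_like(fake_score)))
    return float(d_loss), float(g_loss)

# An undecided D (all logits 0) gives d_loss = 2*log(2) and g_loss = log(2)
d_loss, g_loss = gan_losses(np.zeros(4), np.zeros(4))
```

The stable form matters because D's logits can get large: a naive `-log(sigmoid(x))` overflows or returns `inf` there.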

GAN Code Details

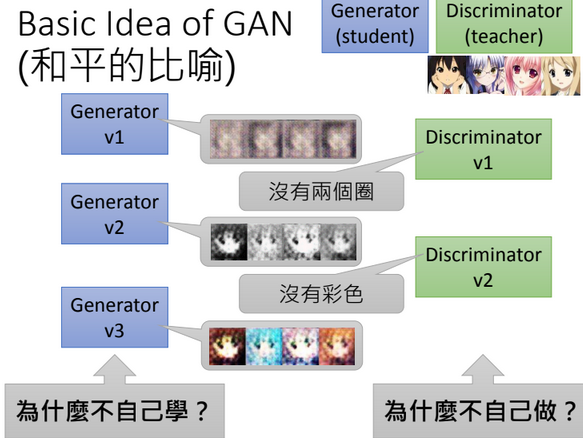

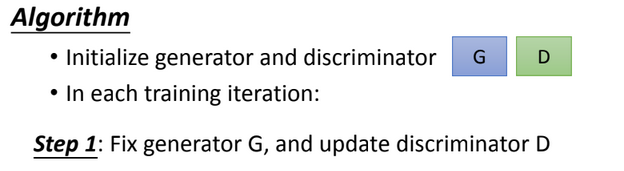

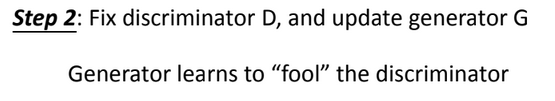

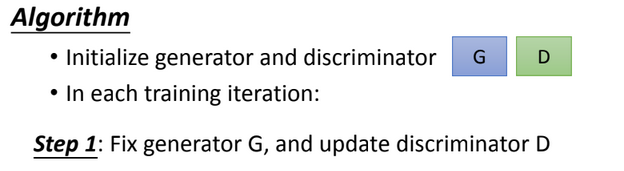

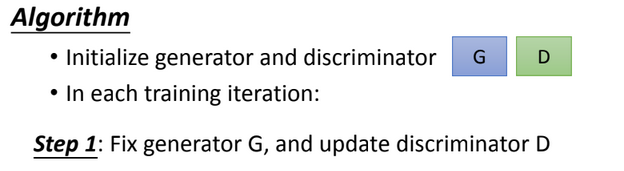

GAN in short terms...

picture resource: Prof. Hung-yi Lee's slides

Why "fix" parameters?

picture resource: Prof. Hung-yi Lee's slides

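Step 1: train D without fixing G

Step 2: train G without fixing D

The student gets brainwashed while the teacher scolds the student (D's loss would also push G toward looking fake).

The teacher gets brainwashed and the student fools the teacher (G's loss would also push D toward being fooled).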

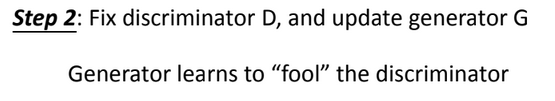

How do we "fix" parameters?

picture resource: Prof. Hung-yi Lee's slides

Train on specific variables

- Define Scope : tf.variable_scope(name)

- Get Variables : tf.trainable_variables(name)

- Optimize it : optimizer.minimize(loss,var_list=vars)

Reminder:

variable_scope(reuse = tf.AUTO_REUSE)

Fix specific variables

Graph

This kind of loss function will be covered later.

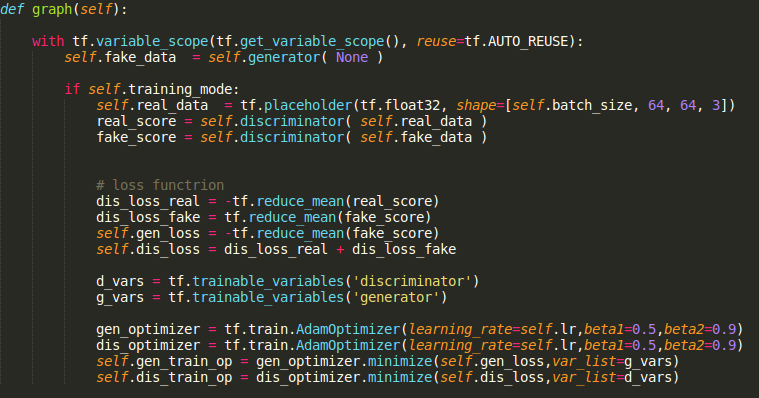

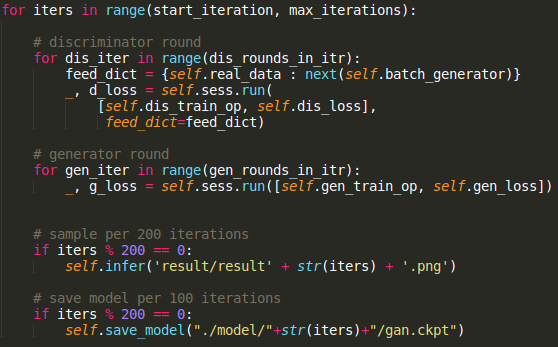

Training

picture resource: my code displayed on sublime text3

Tips:

Train the Discriminator more times per step.

If the Discriminator hasn't learned well, how can the Generator learn?

GAN in tasks

1 step = 5 Discriminator iters + 1 Generator iter.
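Here is a minimal sketch of one training "step" (5 D iterations + 1 G iteration), written in NumPy rather than the author's TensorFlow. It assumes a toy 1-D problem:

- real data ~ N(3, 0.5)
- G(z) = a*z + b
- D(x) = sigmoid(w*x + c)

The gradients are derived by hand, and G uses the non-saturating loss -log D(G(z)). "Fixing" a network here simply means its update function only writes its own parameters, which is what `var_list=vars` does in `optimizer.minimize`.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def d_step(d, g, x_real, z, lr=0.05):
    """One Discriminator update. G's parameters (a, b) are only read, never written."""
    w, c = d
    a, b = g
    x_fake = a * z + b
    s_real, s_fake = sigmoid(w * x_real + c), sigmoid(w * x_fake + c)
    # Gradient of  -mean log D(real) - mean log(1 - D(fake))  w.r.t. w and c
    grad_w = np.mean((s_real - 1.0) * x_real) + np.mean(s_fake * x_fake)
    grad_c = np.mean(s_real - 1.0) + np.mean(s_fake)
    return (w - lr * grad_w, c - lr * grad_c)

def g_step(d, g, z, lr=0.05):
    """One Generator update. D's parameters (w, c) are only read, never written."""
    w, c = d
    a, b = g
    s_fake = sigmoid(w * (a * z + b) + c)
    # Gradient of the non-saturating loss  -mean log D(G(z))  w.r.t. a and b
    dloss_dx = (s_fake - 1.0) * w
    return (a - lr * np.mean(dloss_dx * z), b - lr * np.mean(dloss_dx))

def train(n_steps, d_iters=5, batch=64, seed=0):
    rng = np.random.default_rng(seed)
    d, g = (0.0, 0.0), (1.0, 0.0)              # D: (w, c), G: (a, b)
    for _ in range(n_steps):
        for _ in range(d_iters):               # 5 Discriminator iters ...
            x_real = rng.normal(3.0, 0.5, batch)
            d = d_step(d, g, x_real, rng.normal(size=batch))
        g = g_step(d, g, rng.normal(size=batch))   # ... + 1 Generator iter
    return d, g

d, g = train(1)
print("D (w, c):", d, "G (a, b):", g)
```

After one step, D has learned that larger x looks more real (w > 0). G has then moved its offset b toward the real mean.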

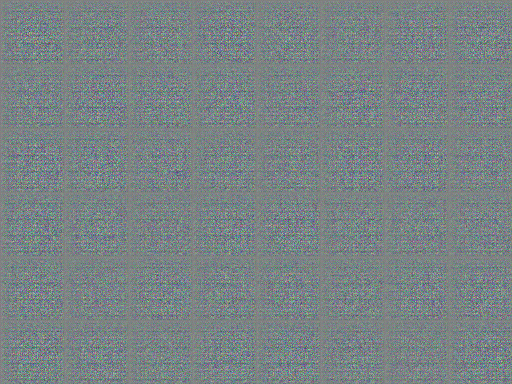

Step 0

picture resource: GAN result from Arvin Liu (myself).

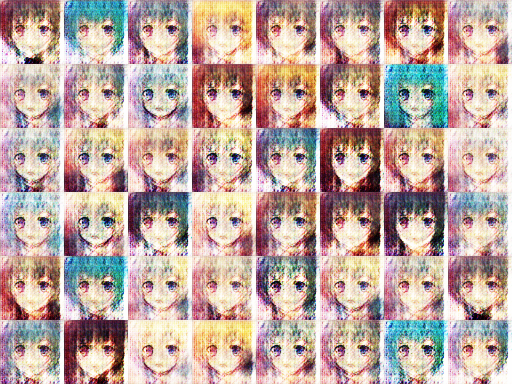

Step 800

picture resource: GAN result by Arvin Liu (myself).

Step 2400

picture resource: GAN result by Arvin Liu (myself).

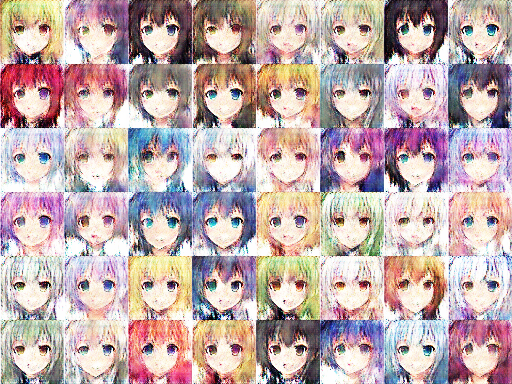

Step 6200

picture resource: GAN result by Arvin Liu (myself).

Step 21800

picture resource: GAN result by Arvin Liu (myself).

However... mode collapse

step 31600


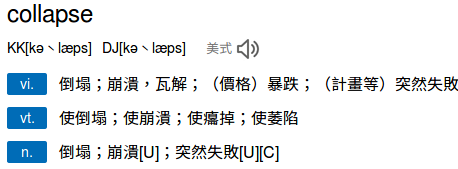

Wait... what is mode collapse?

GAN Problems

- Mode Collapse

- Diminished Gradient

- Non-Convergence

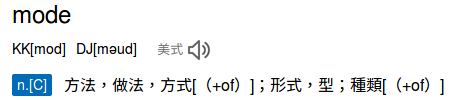

What is Mode Collapse?

The variety (the kinds of output) collapses.

Why Mode Collapse?

Student

Teacher

What is Mode Collapse?

(latent: random normal distribution)

img created by myself.

image resource: Prof. Hung-yi Lee's slides

Why is it called mode collapse?

The distribution's modes (its peaks, e.g. distinct kinds of output) collapse into one or a few!

A bit of trivia:

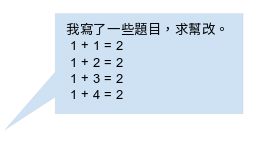

What is Bogo Sort?

image resource: wiki

What is Diminished Grad?

Student

Teacher

Question: write code for sorting.

Whose fault is it, really?

What is Diminished Grad?

Discriminator too strict!

image resource: Prof. Hung-yi Lee's slides

[Figure: Discriminator score over all possible data]

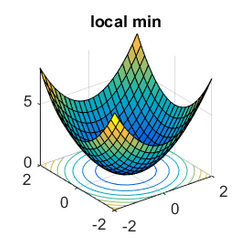

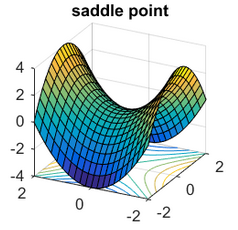
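Here is one concrete source of diminished gradient, sketched in NumPy. When D is very strict, it gives fake data a very negative logit. The gradient of G's original loss log(1 - D(G(z))) with respect to that logit is -D(G(z)), which then nearly vanishes. The alternative loss -log D(G(z)) from the original GAN paper keeps a gradient near -1 there. The specific logits are arbitrary.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def grad_minimax_g(logit):
    """d/d(logit) of G's original loss log(1 - D(G(z))), with D = sigmoid(logit)."""
    return -sigmoid(logit)

def grad_nonsaturating_g(logit):
    """d/d(logit) of the alternative G loss -log D(G(z))."""
    return sigmoid(logit) - 1.0

# The stricter D is, the more negative the logit it gives to fake data
for logit in (0.0, -5.0, -10.0):
    print(f"logit {logit:6.1f}: original {grad_minimax_g(logit):+.5f}, "
          f"alternative {grad_nonsaturating_g(logit):+.5f}")
```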

What is Non-convergence?

When should you stop your training?

What is Non-convergence?

Before that, think about GAN's target.

What is Non-convergence?

General Goal

GAN's Goal

image resource: https://www.offconvex.org/2016/03/22/saddlepoints/

Early stopping + validation

HUMAN!

Why does GAN look for a saddle point?

[Figure: G's loss plotted over G's parameters and D's parameters]
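GAN's target is a saddle point (min over G, max over D), not a minimum, so plain "follow the gradient" need not converge. Here is a toy sketch on the simplest saddle, f(x, y) = x*y. G controls x and minimizes; D controls y and maximizes; both take gradient steps at the same time. Each step multiplies the distance to the saddle point (0, 0) by sqrt(1 + lr^2), so the players spiral outward instead of converging.

```python
import math

def simultaneous_gda(x, y, lr=0.1, steps=100):
    """G (x) minimizes f = x*y while D (y) maximizes it; both update at once.
    Returns the distance to the saddle point (0, 0) after every step."""
    radii = [math.hypot(x, y)]
    for _ in range(steps):
        grad_x, grad_y = y, x                  # df/dx = y, df/dy = x
        x, y = x - lr * grad_x, y + lr * grad_y
        radii.append(math.hypot(x, y))
    return radii

radii = simultaneous_gda(1.0, 1.0)
print(radii[0], radii[-1])   # the distance to the saddle point grows every step
```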

Solve Non-convergence

Human monitoring

Save your model every time;

it may save your whole lifetime.

Problem Timeline - Review

Diminished Gradient

Mode Collapse or Gradient Explosion

Non-convergence

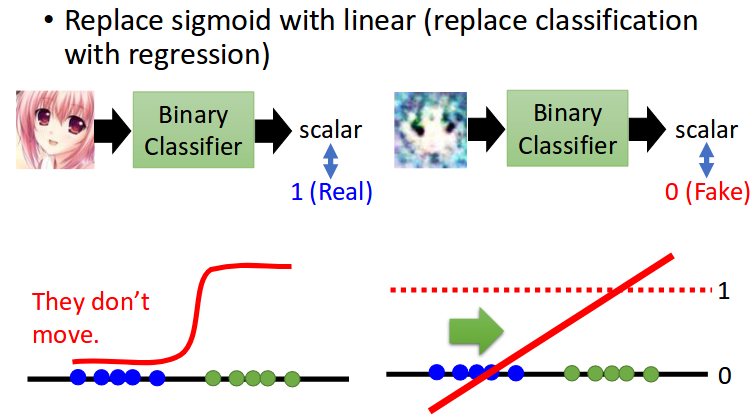

Least Square GAN

Solve Diminished Gradient

https://arxiv.org/abs/1611.04076

Least Square GAN

image resource: Prof. Hung-yi Lee's slides

(with sigmoid)

(without sigmoid)

Discriminator score

The sigmoid makes the whole learning curve very bumpy,

so we skip the sigmoid

to make the learning curve smoother.

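Score Scaling Problem

[Figure: scores 20, -20, 40, -40 before and after the sigmoid]

The sigmoid does not suffer from this problem: after the sigmoid, 20 and 40 both map to almost 1, and -20 and -40 both map to almost 0.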

Score Scaling Problem

[Figure: scores 20, -20, 40, -40 before and after a linear output]

With a linear output, simply scaling up the parameters lowers the loss!

More concretely,

let's plug in numbers!

Least Square GAN

image resource: Prof. Hung-yi Lee's slides

Discriminator score

Sigmoid -> Linear , D_loss= D(fake)-D(Real)

step 0: D(fake)=-1 , D(real)=1 , Loss= -2

step 1: D(fake)=-2 , D(real)= 2 , Loss = -4

The loss went down, but it has actually learned nothing.

Least Square GAN

image resource: Prof. Hung-yi Lee's slides

Discriminator score

Sigmoid -> Linear , D_loss= D(fake)-D(Real)

Since nothing in the plain network keeps the raw score bounded,

the scores blow up right away.

D(fake) -> -∞ , D(real) -> ∞

Q: What goes wrong if D(fake) -> -∞ and D(real) -> ∞?

Least Square GAN

A: The scores become nan/inf,

which Python cannot handle,

and training blows up.

Least Square GAN

image resource: Prof. Hung-yi Lee's slides

Discriminator score

Sigmoid -> Linear

D(G) -> -∞ , D(real) -> ∞ ?

Pin the targets to 0 and 1 instead:

D_Loss = (D(G) - 0)^2 + (D(real) - 1)^2
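Here are the slide's numbers plugged into both losses, as a NumPy sketch. `linear_d_loss`, `lsgan_d_loss`, and `lsgan_g_loss` are illustrative names. Scaling the linear scores keeps lowering D(fake) - D(real) without learning anything. The least-squares loss is smallest exactly at the targets 0 and 1 and punishes blowing up. The G loss (D(G) - 1)^2 is one of the target choices in the LSGAN paper.

```python
import numpy as np

def linear_d_loss(d_fake, d_real):
    """Sigmoid -> Linear: D_loss = D(fake) - D(real), unbounded below."""
    return np.mean(d_fake) - np.mean(d_real)

def lsgan_d_loss(d_fake, d_real):
    """D_Loss = (D(G) - 0)^2 + (D(real) - 1)^2"""
    return np.mean((d_fake - 0.0) ** 2) + np.mean((d_real - 1.0) ** 2)

def lsgan_g_loss(d_fake):
    """G pulls its scores toward the 'real' target 1."""
    return np.mean((d_fake - 1.0) ** 2)

d_fake, d_real = np.array([-1.0]), np.array([1.0])      # step 0 from the slide
for scale in (1.0, 2.0, 10.0):                           # "just multiply the parameters"
    print(scale, linear_d_loss(scale * d_fake, scale * d_real),
          lsgan_d_loss(scale * d_fake, scale * d_real))
```

The linear loss goes -2, -4, -20 as the scores scale, while the least-squares loss goes 1, 5, 181.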

Loss Definition

picture resource: my code displayed on sublime text3

* Neither real_score nor fake_score passes through a sigmoid function.

* "LS GAN" can refer to two different models:

Least Square GAN

Loss Sensitive GAN

Early-Stopping

Solve Mode Collapse

How to Prevent Mode Collapse?

Stop training when it happens if you can. :)

Early Stopping

You're awesome.

Mode Collapse

Mode Collapse

You're awesome.

Case1:

Case2:

Nice Choice!

img resource:https://9gag.com/gag/aeexzeO/drake-meme

??????

Nobody gets to celebrate New Year every day! (You won't always be this lucky.)

Early Stopping

IS / FID

Solve Non-convergence

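Q1: Non-convergence:

can we really only judge it by eye?

A1:

The original target is a saddle point, which we cannot locate directly.

But you can

use another model that is already fixed for validation.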

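Recall - Inception Score (IS)

Take a pretrained model that can already recognize the task's objects, and check whether it can recognize your generated data.

A very intuitive idea.

Can you tell what this is?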
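The usual formula is IS = exp(E_x[KL(p(y|x) || p(y))]). It is high when each generated sample is classified confidently (p(y|x) is sharp) and the samples spread over many classes (p(y) is broad). This NumPy sketch assumes you already have the pretrained classifier's softmax outputs for your generated data. The toy probability tables are made up.

```python
import numpy as np

def inception_score(probs, eps=1e-12):
    """IS = exp( mean_x KL( p(y|x) || p(y) ) ).
    probs: (N, num_classes) softmax outputs of a pretrained classifier on generated data."""
    probs = np.asarray(probs, dtype=float)
    p_y = probs.mean(axis=0, keepdims=True)          # marginal class distribution
    kl = np.sum(probs * (np.log(probs + eps) - np.log(p_y + eps)), axis=1)
    return float(np.exp(kl.mean()))

confident_diverse = np.eye(4)                        # each sample clearly one class, all 4 classes used
collapsed = np.tile([1.0, 0.0, 0.0, 0.0], (4, 1))    # every sample is the same class
print(inception_score(confident_diverse), inception_score(collapsed))   # about 4 and 1
```

A collapsed generator scores 1 however sharp its images are, so IS also reacts to mode collapse.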

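Recall - Fréchet Inception Distance (FID)

Take a pretrained model that can already extract low-level features, feed it both fake and real data,

and measure how far apart they are.

A very intuitive idea.

Do real and fake actually look alike?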
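FID compares the mean and covariance of features from real and fake data:

FID = ||mu_r - mu_f||^2 + Tr(S_r + S_f - 2 (S_r S_f)^(1/2))

This NumPy sketch assumes you already extracted feature vectors. They normally come from an Inception network's pooling layer, but any fixed feature extractor works the same way, such as an AutoEncoder you trained on real data. The matrix square root is computed via eigendecomposition instead of SciPy.

```python
import numpy as np

def _sqrtm_psd(a):
    """Square root of a symmetric positive semi-definite matrix."""
    vals, vecs = np.linalg.eigh(a)
    return (vecs * np.sqrt(np.clip(vals, 0.0, None))) @ vecs.T

def fid_from_stats(mu1, s1, mu2, s2):
    s1_half = _sqrtm_psd(s1)
    # Tr((S1 S2)^(1/2)) equals Tr((S1^(1/2) S2 S1^(1/2))^(1/2)), which stays symmetric
    middle = np.linalg.eigvalsh(s1_half @ s2 @ s1_half)
    tr_covmean = np.sum(np.sqrt(np.clip(middle, 0.0, None)))
    return float(np.sum((mu1 - mu2) ** 2) + np.trace(s1) + np.trace(s2) - 2.0 * tr_covmean)

def fid_from_features(feat_real, feat_fake):
    """feat_*: (N, dim) feature vectors from a fixed, pretrained feature extractor."""
    return fid_from_stats(feat_real.mean(axis=0), np.cov(feat_real, rowvar=False),
                          feat_fake.mean(axis=0), np.cov(feat_fake, rowvar=False))

rng = np.random.default_rng(0)
real_feats = rng.normal(0.0, 1.0, size=(2000, 8))      # hypothetical features of real data
print(fid_from_features(real_feats, rng.normal(0.0, 1.0, size=(2000, 8))))   # small
print(fid_from_features(real_feats, rng.normal(1.0, 1.0, size=(2000, 8))))   # about 8: every mean shifted by 1
```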

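Q2:

Not every task has such a model, though?

A2:

If there is no similar pretrained model,

it currently cannot be evaluated (e.g., music generation), or

you can train an AutoEncoder on real data yourself and use it for FID.

(Personal experience; for reference only.)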

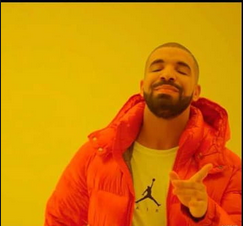

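Side note: tasks unsuitable for GAN?

The "loss" you would accept in your mind differs from the "loss" you defined for the GAN.

Nobody tells the Discriminator what real data should look like, so its judgment criteria differ from yours.

When results are poor, tell the Discriminator what the loss should look like.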

Side note: tasks unsuitable for GAN?

CVPR '18: PairedCycleGAN: Asymmetric Style Transfer for Applying and Removing Makeup

Tell the Discriminator

what you consider important (by adding extra loss terms).

WGAN

WGAN : https://arxiv.org/pdf/1701.07875.pdf

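Lipschitz Function

If for all x1, x2,

$$|f(x_1) - f(x_2)| \le K\,|x_1 - x_2|,$$

we call f a

K-Lipschitz function.

1-Lipschitz Function

For all x1, x2: $|f(x_1) - f(x_2)| \le |x_1 - x_2|$

Q: What property does f have then?

A: It cannot be very jittery,

because moving away from x1, the slope is always at most 1 in absolute value.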
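Here is a quick empirical check of the definition, in NumPy. The largest slope |f(x1) - f(x2)| / |x1 - x2| over sampled pairs gives a lower bound on the Lipschitz constant K. The test functions are arbitrary examples.

```python
import numpy as np

def empirical_lipschitz(f, xs):
    """Largest |f(x1) - f(x2)| / |x1 - x2| over all sampled pairs: a lower bound on K."""
    xs = np.asarray(xs, dtype=float)
    x1, x2 = np.meshgrid(xs, xs)
    mask = x1 != x2
    ratios = np.abs(f(x1[mask]) - f(x2[mask])) / np.abs(x1[mask] - x2[mask])
    return float(ratios.max())

xs = np.linspace(-3.0, 3.0, 201)
print(empirical_lipschitz(np.sin, xs))               # just below 1: sin is 1-Lipschitz
print(empirical_lipschitz(lambda x: 3.0 * x, xs))    # 3: a 3-Lipschitz function
```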

Wasserstein Distance

Details skipped :)

Just know that it is a kind of error measure (a distance) between two distributions.
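This is for intuition only. In one dimension, with the same number of samples on each side, the Wasserstein-1 distance is just the average gap between the sorted samples: move each sorted sample to its partner. Here is a NumPy sketch. WGAN never computes it this way; it estimates the distance with the 1-Lipschitz D.

```python
import numpy as np

def wasserstein_1d(a, b):
    """W1 between two equal-size 1-D empirical distributions: match sorted samples."""
    a, b = np.sort(np.asarray(a, dtype=float)), np.sort(np.asarray(b, dtype=float))
    if a.shape != b.shape:
        raise ValueError("this shortcut needs the same number of samples on each side")
    return float(np.mean(np.abs(a - b)))

rng = np.random.default_rng(0)
real = rng.normal(0.0, 1.0, 1000)
print(wasserstein_1d(real, real + 2.0))   # about 2: every sample moved by 2
print(wasserstein_1d(real, real + 5.0))   # about 5: farther apart -> larger distance
```

Even when the two sample sets do not overlap at all, the distance still says how far apart they are.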

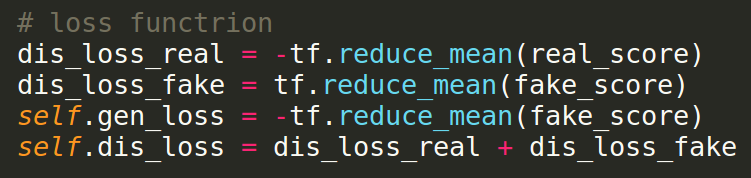

What is WGAN?

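WGAN objective function:

$$\max_{D \in \text{1-Lipschitz}} \; \mathbb{E}_{x \sim P_{data}}[D(x)] - \mathbb{E}_{x \sim P_G}[D(x)]$$

How to measure?

if D is 1-Lipschitz -> it must be smooth

After a complicated proof...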

WGAN constraint

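Q: How does WGAN make

the Discriminator 1-Lipschitz?

A: By clipping:

the parameters are kept within a limit after every update.

Q2: Does that really work?

A2: Not really. It is only a constraint.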

WGAN Method

Q: Weight Clipping?

A: After each update, clip every parameter w

back into [-c, c].
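Here is a NumPy sketch of weight clipping. It assumes D's weights are a list of arrays. c = 0.01 is the default in the WGAN paper's algorithm. In TensorFlow this would run as a separate op after each D update.

```python
import numpy as np

def clip_weights(params, c=0.01):
    """WGAN weight clipping: after each D update, force every weight into [-c, c]."""
    return [np.clip(w, -c, c) for w in params]

rng = np.random.default_rng(0)
d_params = [rng.normal(size=(4, 3)), rng.normal(size=(3,))]   # hypothetical D weights
d_params = clip_weights(d_params)
print(max(np.abs(w).max() for w in d_params))   # never above 0.01
```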

WGAN

Given that D is a 1-Lipschitz function (and D has no sigmoid),

can this happen?

D(fake) -> -∞ , D(real) -> ∞

Maybe! But because of WC, it happens very slowly.

1-lipschitz : |f(x)-f(y)| <= |x-y|

WGAN can be better?

Weight clipping ties the Discriminator's hands.

But without WC, the scores explode.

What happens if we remove WC for now?

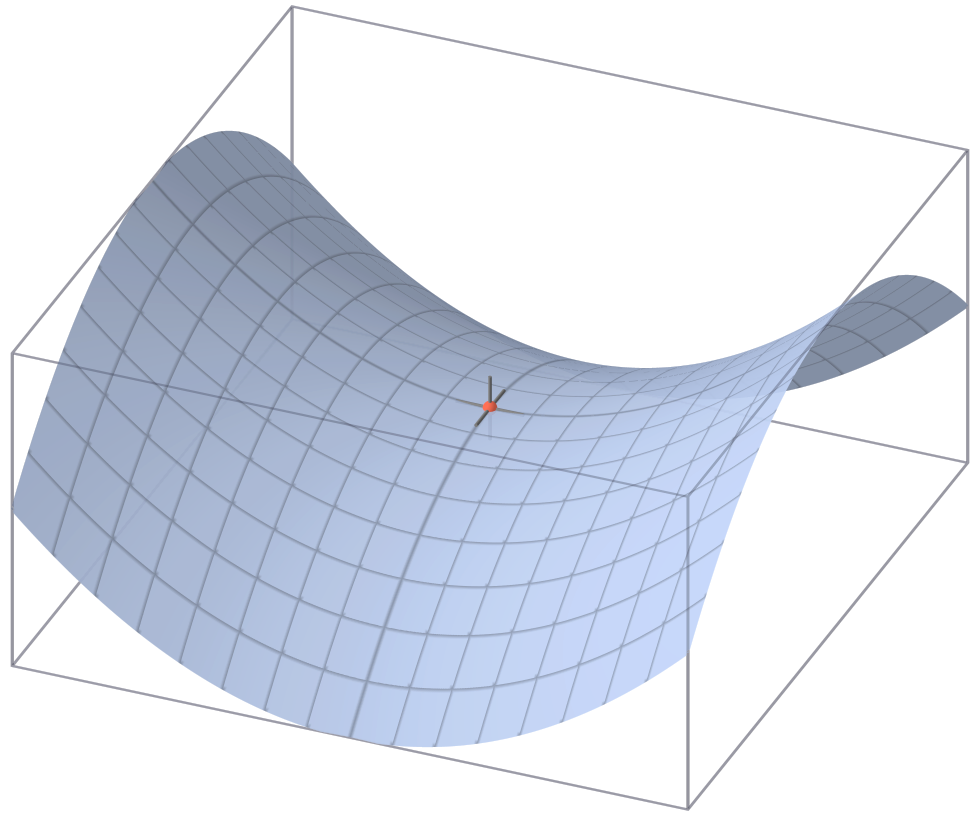

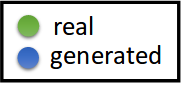

Make it Smooth!!!

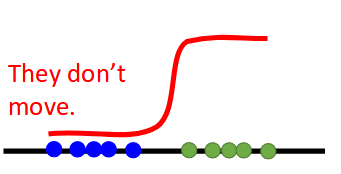

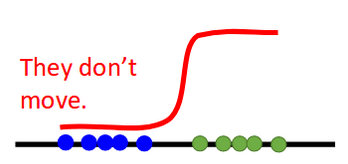

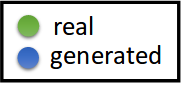

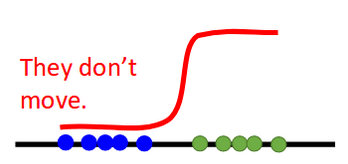

image resource: Prof. Hung-yi Lee's slides

They don't move.

Why?

Gradient too steep!

(slope is too steep.)

[Figure: Discriminator score over all possible data, with a diminished-gradient region and an exploding-score region]

Gradient Problems?

Problem 1: the gradient at fake data's score may diminish (too flat).

Problem 2: because of the loss function, the scores may scale drastically (too steep).

Gradient Problems?

Problems: diminished + steep gradient

How to solve unbalanced gradient?

WGAN-GP solves it!

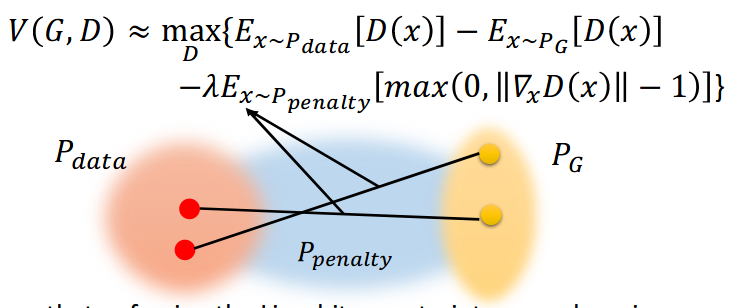

WGAN-GP

Solve Diminished Gradient & Mode Collapse

Improved WGAN(NIPS '17): https://arxiv.org/abs/1704.00028

What is WGAN-GP?

WGAN - Gradient Penalty

Gradient Problems?

Problems: diminished + steep gradient

How to solve unbalanced gradient?

Penalize the gradient in between (real and fake data) toward 1!

(because of 1-lipschitz function)

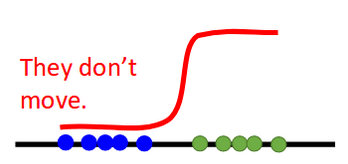

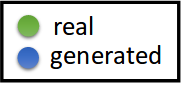

Make it Smooth!!!

image resource: Prof. Hung-yi Lee's slides

Q: How to smooth the gradient?

A: Regularization

(give it penalty)

That is Gradient Penalty(GP).

Regularization ?

Too Steep.

More Flat.

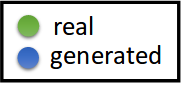

However...

How to find the middle of real & fake data?

How to find this value?

image resource: Prof. Hung-yi Lee's slides

Discriminator score

All possible data

Target is between the real data and fake data.

Between the real and fake?

Interpolation!

Interpolation?

[Figure: interpolated points between real and fake data at α=0.1 and α=0.9]

Why not GP only on D(G)?

Score may explode in the middle!

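Interpolation?

[Figure: interpolated points between real and fake data at α=0.1 and α=0.9]

How to suppress the learning curve?

α ~ U[0,1]: press the curve down at random points in between.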

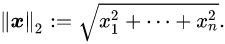

Improved WGAN / WGAN-GP

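Note that this is the 2-norm.

image resource: Prof. Hung-yi Lee's slides

Personal comment: the gradient cannot be too high because of the loss (the scores explode),

and it cannot be too low because of diminished gradient, so 1 is just right.

(The paper motivates the target 1 with the 1-Lipschitz constraint: the optimal critic has gradient norm 1 almost everywhere on lines between real and generated samples.)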

WGAN-GP implementation

Improved WGAN(NIPS '17): https://arxiv.org/abs/1704.00028

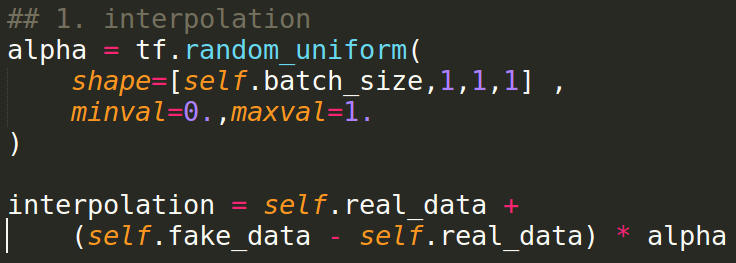

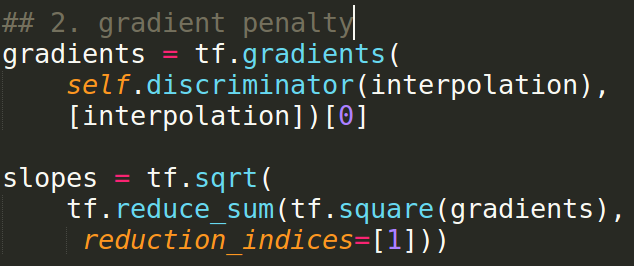

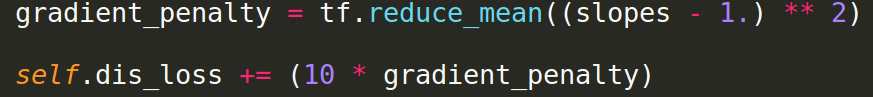

Gradient Penalty Step

- 1. Find interpolation

- 2. Calculate 2-norm of gradient

- 3. Calculate gradient penalty

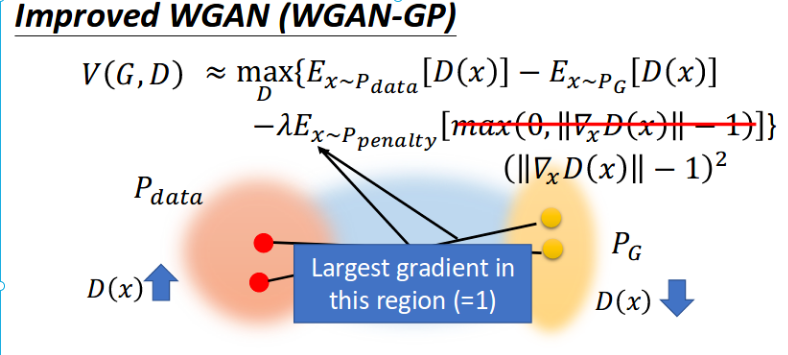

WGAN loss function

Gradient Penalty - Step1

Find interpolation

Gradient Penalty - Step2

||gradient of D(interpolate data)||

Gradient Penalty - Step3

Calculate Gradient Penalty

* lambda = 10 in paper
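Here are the three steps as a NumPy sketch. Real implementations get dD/dx from automatic differentiation (in TensorFlow 1.x, `tf.gradients`). To stay self-contained, this sketch assumes a hypothetical toy critic D(x) = v . tanh(x W) whose input gradient is written out by hand. `interpolate`, `gradient_penalty`, and `make_toy_critic` are illustrative names; lambda = 10 follows the paper.

```python
import numpy as np

def interpolate(x_real, x_fake, rng):
    """Step 1: x_hat = alpha * x_real + (1 - alpha) * x_fake, one alpha ~ U[0, 1] per sample."""
    alpha = rng.uniform(0.0, 1.0, size=(x_real.shape[0], 1))
    return alpha * x_real + (1.0 - alpha) * x_fake

def gradient_penalty(grad_fn, x_real, x_fake, rng, lam=10.0):
    x_hat = interpolate(x_real, x_fake, rng)
    grads = grad_fn(x_hat)                        # dD/dx at each x_hat, shape (N, dim)
    norms = np.sqrt(np.sum(grads ** 2, axis=1))   # Step 2: 2-norm of each sample's gradient
    return lam * np.mean((norms - 1.0) ** 2)      # Step 3: penalize the distance from 1

def make_toy_critic(W, v):
    """Hypothetical critic D(x) = v . tanh(x W) and its gradient with respect to x."""
    def critic(x):
        return np.tanh(x @ W) @ v
    def grad(x):
        return ((1.0 - np.tanh(x @ W) ** 2) * v) @ W.T
    return critic, grad

rng = np.random.default_rng(0)
x_real, x_fake = rng.normal(2.0, 1.0, (16, 5)), rng.normal(0.0, 1.0, (16, 5))
critic, critic_grad = make_toy_critic(rng.normal(size=(5, 8)), rng.normal(size=8))
gp = gradient_penalty(critic_grad, x_real, x_fake, rng)
d_loss = np.mean(critic(x_fake)) - np.mean(critic(x_real)) + gp   # WGAN critic loss + GP
print(gp, d_loss)
```

A critic whose input gradient already has norm 1 everywhere pays no penalty. A critic with gradient norm 2 pays lambda * (2 - 1)^2 = 10.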

Ways to reach 1-Lipschitz:

- WGAN: Weight Clipping (WC)
- WGAN-GP: Gradient Penalty (GP)
- SN-GAN (spectral norm GAN): divide each weight by its spectral norm (not covered here, but very powerful)
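Here is a NumPy sketch of "divide by the spectral norm". It estimates the largest singular value of a weight matrix by power iteration and divides the weights by it, which makes that linear layer 1-Lipschitz. SN-GAN itself runs a single power-iteration step per training step and reuses the vector across steps. This sketch instead iterates many times on one matrix built with known singular values.

```python
import numpy as np

def spectral_norm(W, n_iters=50, seed=0):
    """Estimate the largest singular value of W by power iteration."""
    rng = np.random.default_rng(seed)
    u = rng.normal(size=W.shape[0])
    for _ in range(n_iters):
        v = W.T @ u
        v /= np.linalg.norm(v)
        u = W @ v
        u /= np.linalg.norm(u)
    return float(u @ W @ v)

# Build a weight matrix with known singular values 3, 1, 0.5
rng = np.random.default_rng(1)
U, _ = np.linalg.qr(rng.normal(size=(5, 3)))
V, _ = np.linalg.qr(rng.normal(size=(3, 3)))
W = U @ np.diag([3.0, 1.0, 0.5]) @ V.T

W_sn = W / spectral_norm(W)                     # "divide by the spectral norm"
print(np.linalg.svd(W_sn, compute_uv=False))    # the largest singular value is now 1
```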

DCGAN+WGAN-GP result

* The resolution is low because the image size is 64x64.

Another result

D:G = 4:1 , 5500 iterations

picture resource: GAN result by Arvin Liu (myself).

Why does WGAN-GP solve mode collapse?

[Personal viewpoint]

Actually, mode collapse...

If the real data and G's distributions look like this...

[Figure: real data and G's distribution over classes A, B, C]

What do you expect?

[Figure: classes A, B, C for real data and G]

Dream on! Get real!

[Figure: classes A, B, C for real data and G]

Easy to minimize G_loss for G's data

Mode Collapse!

The Discriminator does well on classes B and C.

Cyclic Changing

[Figure: classes A, B, C]

Easy to minimize G_loss for G's data

Mode Collapse!

[Figure: classes A, B, C]

Mode Collapse!

Original GAN

[Figure: classes A, B, C]

Easy to minimize G_loss for G's data

GP with Random Pairs: Amortize the Difficulty

[Figure: classes A, B, C]

Easy to minimize G_loss for G's data

GP with Random Pairs: Amortize the Difficulty

[Figure: classes A, B, C for real data and G]

Easy to minimize G_loss for G's data

Cyclic Mode Collapse with Real Examples

DCGAN structure, image size 128x128

DCGAN - Step 44400

picture resource: GAN result by Arvin Liu (myself).

DCGAN - Step 44600

picture resource: GAN result by Arvin Liu (myself).

DCGAN - Step 44800

picture resource: GAN result by Arvin Liu (myself).

DCGAN - Step 45000

picture resource: GAN result by Arvin Liu (myself).

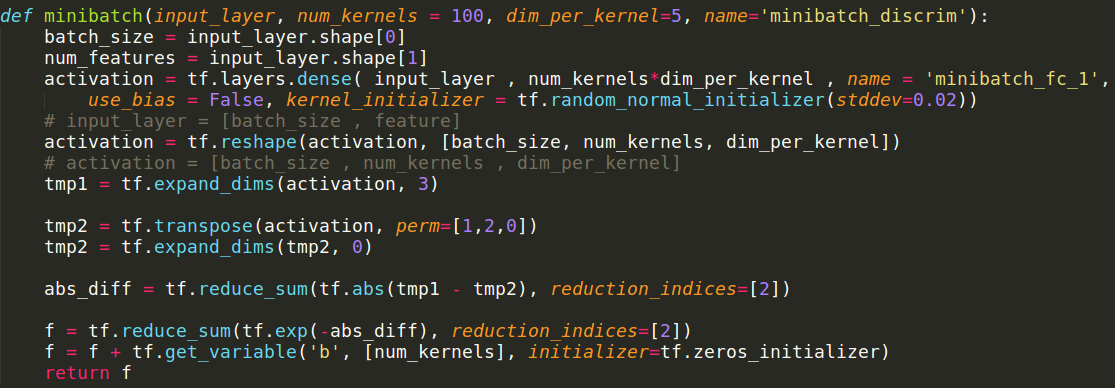

Minibatch-discrimination

Solve Mode Collapse

Improved Techniques for Training GANs (Salimans, Goodfellow et al.): https://arxiv.org/pdf/1606.03498.pdf

Add Diversity Loss Intuitively

How to measure diversity?

It's cross-data loss!

MAMA MIA!

Thanks to minibatch!!

We can peek at other data!

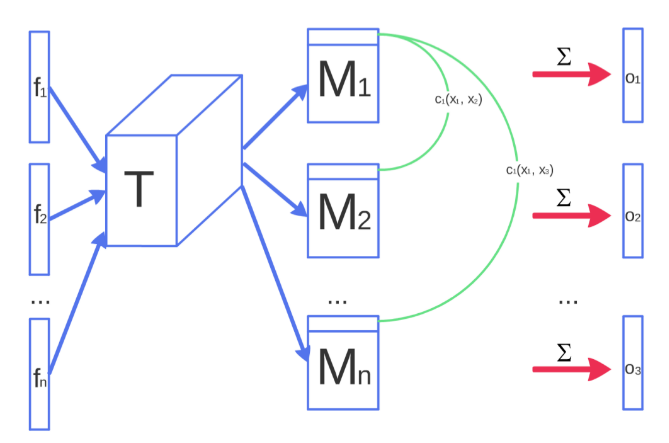

Main Idea of Minibatch Discrimination

- Let the Discriminator extract a feature vector for each sample.

- Compute the difference of every pair (N^2 comparisons).

- Append the result to the original neural network's features.

Flow Chart

img resource: https://arxiv.org/pdf/1606.03498.pdf

F: raw feature from each sample

T: projection

c: compute differences

o: compute diversity

Why does minibatch discrimination work?

The Generator follows the gradient of the Discriminator's "diversity" feature and pulls similar samples apart,

because the Discriminator knows which samples are similar (through the N^2 comparisons).
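Here is a NumPy sketch of the flow chart (F, T, c, o), following the formulation in the paper:

1. Project each sample's features f with a tensor T into B rows of C numbers.
2. Take the L1 distance of each row to every other sample's row.
3. Turn it into a similarity c = exp(-distance).
4. Sum over the batch to get o, and append o to f.

Shapes and random values are placeholders; in a real D, T is a trainable variable.

```python
import numpy as np

def minibatch_discrimination(f, T):
    """f: (N, A) features per sample; T: (A, B, C) projection tensor. Returns (N, A + B)."""
    M = np.einsum("na,abc->nbc", f, T)                                   # (N, B, C)
    l1 = np.sum(np.abs(M[:, None, :, :] - M[None, :, :, :]), axis=3)     # (N, N, B): all N^2 pairs
    c = np.exp(-l1)                                                      # similarity per pair and row
    o = np.sum(c, axis=1)                                                # (N, B): closeness to the batch
    return np.concatenate([f, o], axis=1)

rng = np.random.default_rng(0)
T = rng.normal(size=(16, 4, 3))
collapsed = np.tile(rng.normal(size=(1, 16)), (8, 1))   # 8 identical samples
diverse = rng.normal(scale=100.0, size=(8, 16))         # 8 very different samples
out_collapsed = minibatch_discrimination(collapsed, T)
out_diverse = minibatch_discrimination(diverse, T)
print(out_collapsed[:, 16:].mean())   # 8.0: each sample matches all 8
print(out_diverse[:, 16:].mean())     # close to 1.0: each sample only matches itself
```

A collapsed batch produces a very different o than a diverse one, so D can flag it. G then gets a gradient that pushes its samples apart.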

Minibatch Discrimination implementation

picture resource: my code displayed on sublime text3

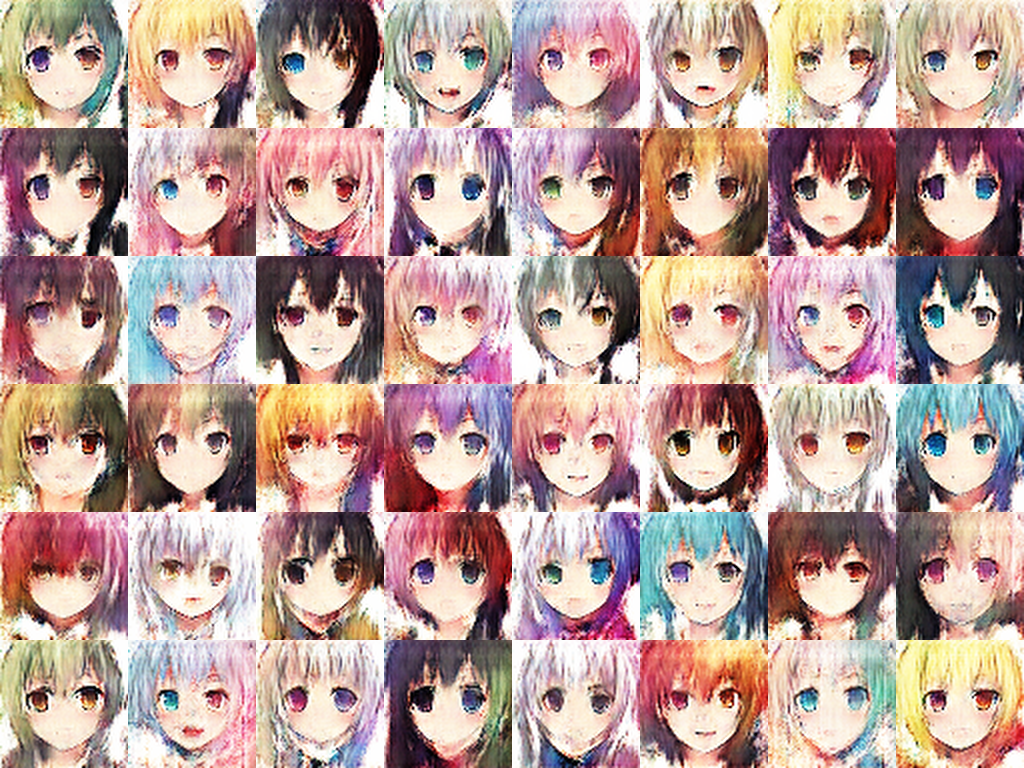

Minibatch Discrimination

picture resource: GAN result by Arvin Liu (myself).

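WGAN-GP

- Mode collapse rarely happens.

- Training takes relatively long; for a long stretch the outputs are a superposition of several hidden classes.

- Only near the very end (close to convergence) do they gradually separate.

- WGAN-GP is like a life coach, guiding each sample down a different path (interpolation GP).

Minibatch-Discrimination

- No mode collapse at first, but after training long enough it still happens.

- Trains about as fast as a plain GAN, maybe even faster (?)

- Minibatch discrimination is like a sociologist urging everyone not to take the same job (the Discriminator pushes samples apart).

Superposition of multiple classes?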

picture resource: GAN result by Arvin Liu (myself).

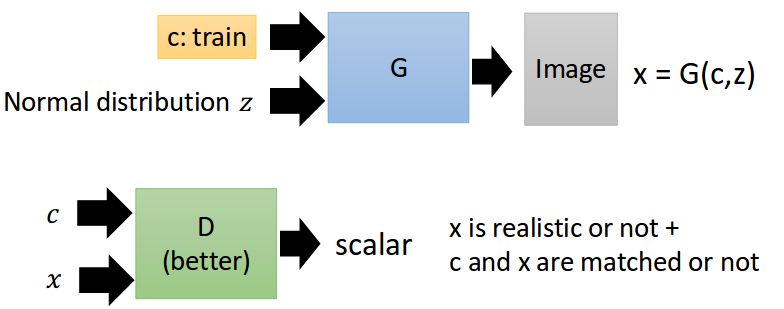

conditional GAN

https://arxiv.org/abs/1411.1784

In a nut shell...

給GAN condition,要求生成資料要符合 condition.

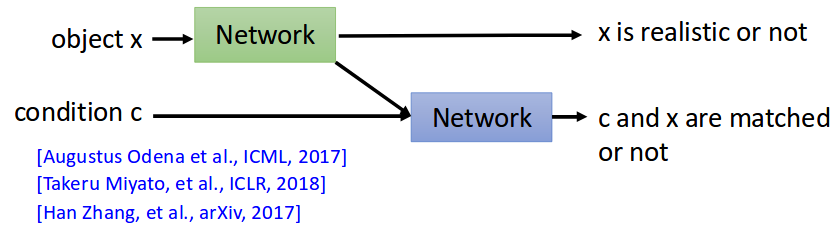

How cGAN?

就直接丟進去Generator和Discriminator就好了!

Why it works???

Discriminator 會利用給定的condition痛罵Generator。
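Here is a NumPy sketch of "just feed the condition in", assuming one-hot class labels as the condition. For G, the one-hot vector is concatenated to the latent. For D, it is tiled over the image as extra channels. Tiling is one common choice, not necessarily what the author's code does. All names are illustrative.

```python
import numpy as np

def one_hot(labels, num_classes):
    return np.eye(num_classes)[labels]

def generator_input(z, labels, num_classes):
    """cGAN: concatenate the condition to the latent before it enters G."""
    return np.concatenate([z, one_hot(labels, num_classes)], axis=1)

def discriminator_input(images, labels, num_classes):
    """Feed the same condition to D, here tiled as extra channels of the image."""
    n, h, w, _ = images.shape
    cond = one_hot(labels, num_classes)[:, None, None, :]          # (N, 1, 1, K)
    cond = np.broadcast_to(cond, (n, h, w, num_classes))            # (N, H, W, K)
    return np.concatenate([images, cond], axis=3)

rng = np.random.default_rng(0)
labels = np.array([2, 0, 1])
g_in = generator_input(rng.normal(size=(3, 100)), labels, num_classes=5)
d_in = discriminator_input(np.zeros((3, 64, 64, 3)), labels, num_classes=5)
print(g_in.shape, d_in.shape)   # (3, 105) (3, 64, 64, 8)
```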

Simple cGAN Flow Chart

image resource: Prof. Hung-yi Lee's slides

Another Loss Measurement

Whatever makes you happy :)

For example, one score for classification and one score for real/fake?

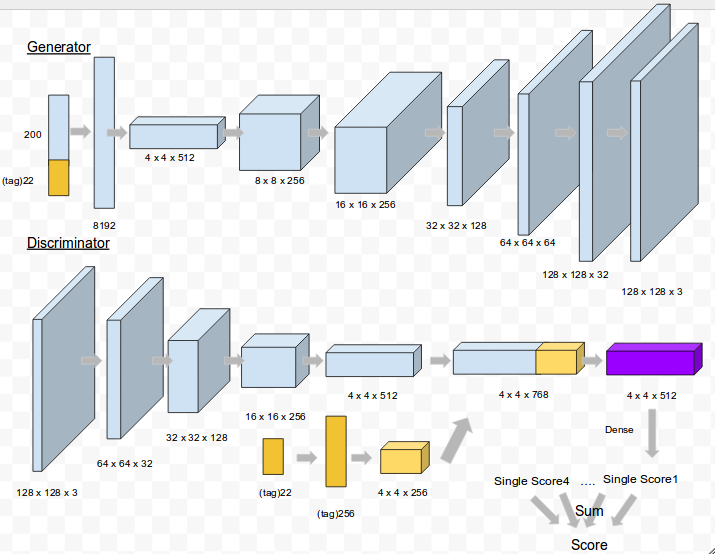

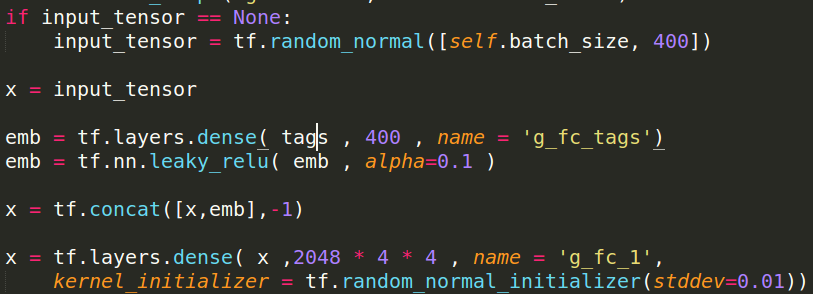

cGAN code

Generator Code

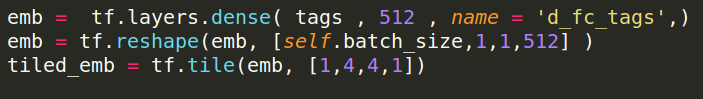

Discriminator Code

Loss Definition

Same as GAN / WGAN / f-GAN...

ACGAN

https://arxiv.org/abs/1610.09585

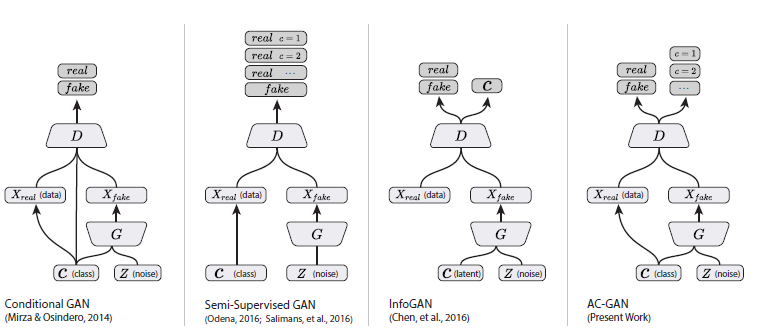

Some Collection...

image resource: https://www.cnblogs.com/punkcure/p/7873566.html

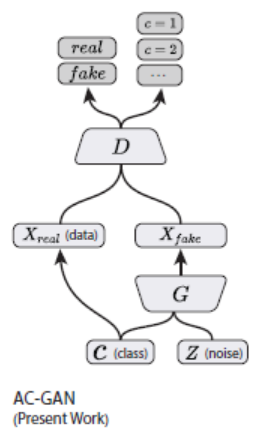

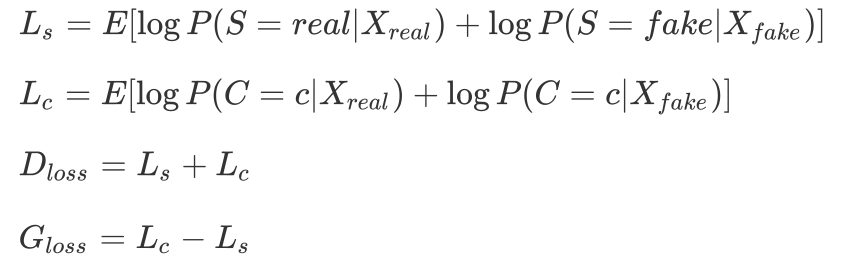

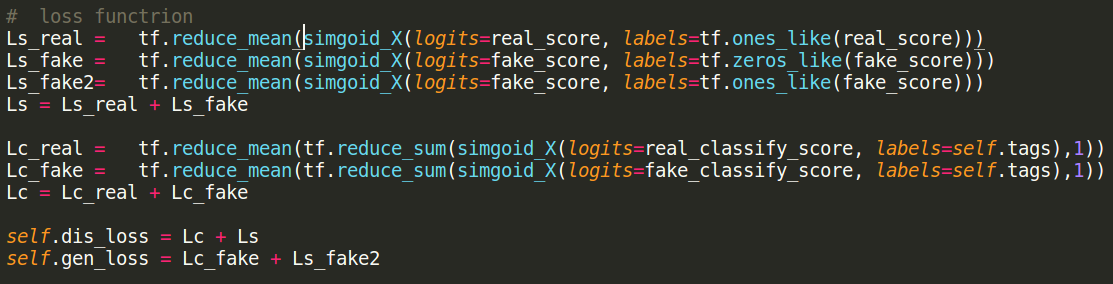

Main Idea of ACGAN

D outputs C/S.

Thus, it has both a Classification error and a Synthesis error.

ACGAN loss function

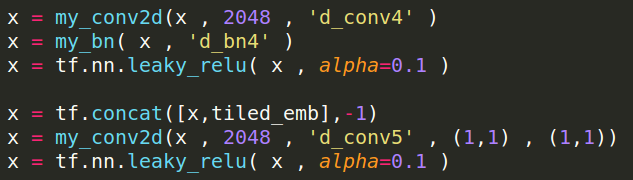

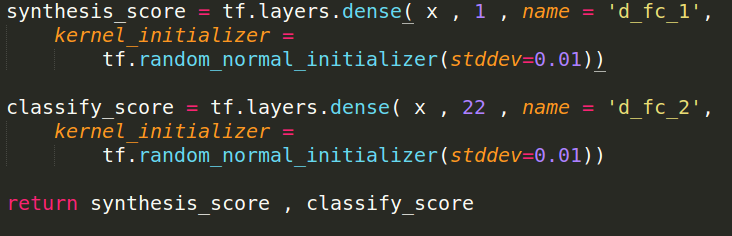

ACGAN code

Generator

same as cGAN.

Discriminator

Only need to change last layer.

Loss Function

In this task, I changed some of the loss functions.

(For G, +log(z) -> -log(1-z))

Ls : loss in original GAN.

Lc : binary cross entropy

(tag loss, not softmax.)
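Here is a NumPy sketch of the loss setup described above. It assumes D has two heads on a shared body:

- S: one real/fake logit per sample
- C: one logit per tag

Ls is the original GAN loss written as cross entropy, and Lc is per-tag binary cross entropy. G uses the non-saturating form for Ls. The exact form and weighting in the author's code are not shown, so the equal weights here are an assumption.

```python
import numpy as np

def bce_with_logits(logits, labels):
    """Stable sigmoid cross entropy (same formula as tf.nn.sigmoid_cross_entropy_with_logits)."""
    x, z = np.asarray(logits, dtype=float), np.asarray(labels, dtype=float)
    return np.maximum(x, 0) - x * z + np.log1p(np.exp(-np.abs(x)))

def acgan_losses(src_real, cls_real, src_fake, cls_fake, tags_real, tags_fake):
    """src_*: (N,) real/fake logits from D's S head; cls_*: (N, K) tag logits from D's C head.
    tags_real: true multi-hot tags; tags_fake: the tags G was conditioned on."""
    ls_d = (np.mean(bce_with_logits(src_real, np.ones_like(src_real)))
            + np.mean(bce_with_logits(src_fake, np.zeros_like(src_fake))))   # Ls for D
    lc = (np.mean(bce_with_logits(cls_real, tags_real))
          + np.mean(bce_with_logits(cls_fake, tags_fake)))                   # Lc (BCE per tag)
    d_loss = ls_d + lc                       # D: right about real/fake AND about the tags
    ls_g = np.mean(bce_with_logits(src_fake, np.ones_like(src_fake)))        # G wants "real"
    g_loss = ls_g + np.mean(bce_with_logits(cls_fake, tags_fake))           # G: fool S, satisfy C
    return float(d_loss), float(g_loss)

rng = np.random.default_rng(0)
n, k = 4, 3
tags = rng.integers(0, 2, size=(n, k)).astype(float)
d_loss, g_loss = acgan_losses(np.zeros(n), np.zeros((n, k)), np.zeros(n), np.zeros((n, k)), tags, tags)
print(d_loss, g_loss)   # 4*log(2) and 2*log(2): an untrained D is unsure about everything
```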

ACGAN + minibatch

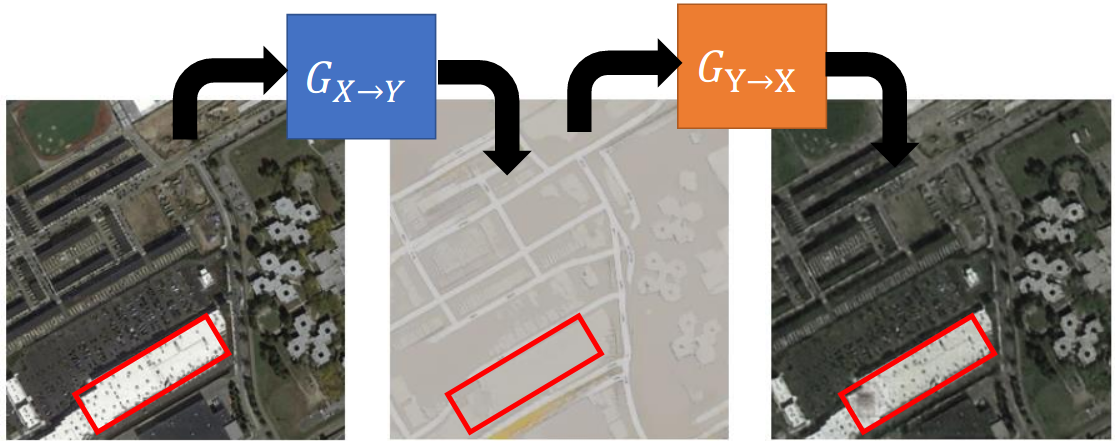

Cycle GAN

Style Transfer

A -> B

e.g., painting style, voice conversion, etc.

Style Transfer

[Diagram: data A -> (A -> B Generator) -> fake data B; the A -> B Discriminator compares fake data B with data B]

Current task: convert painting style A into style B

Style Transfer

[Diagram: paired data A -> (A -> B Generator) -> paired fake data B, compared with paired data B]

Current task: convert painting style A into style B (paired)

The usual approach.

Style Transfer

[Diagram: paired data A -> (A -> B Generator) -> paired fake data B; the A -> B Discriminator, conditioned on embedded A, compares it with paired data B]

Current task: convert painting style A into style B (paired)

GAN version

Paired data is hard to get?

Style Transfer

[Diagram: data A -> (A -> B Generator) -> fake data B; the B Discriminator compares it with data B]

Current task: convert painting style A into style B (unpaired)

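Style Transfer: keeping A and B close

[Diagram: data A -> (A -> B Generator) -> fake data B; the B Discriminator compares it with data B; a "?" asks whether fake data B is still related to data A]

Could G just generate an arbitrary B?

Given the mode collapse we saw earlier, we know this can happen.

Add a loss: computing it directly or using a pretrained model both work.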

Style Transfer - Cycle GAN

[Diagram: data A -> (A -> B Generator) -> fake data B -> (B -> A Generator) -> fake data A; the B Discriminator compares fake data B with data B, and the A Discriminator judges fake data A]

Style Transfer - Cycle GAN

[Diagram: data A -> (A -> B Generator) -> fake data B -> (B -> A Generator) -> fake data A; L1_loss between fake data A and data A]

This L1_loss is called the

Cycle Consistency Loss.
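Here is a NumPy sketch of the Cycle Consistency Loss. It uses hypothetical stand-in generators (simple functions instead of networks). In the full CycleGAN objective, this term is added to the two adversarial losses with a weight (10 in the CycleGAN paper).

```python
import numpy as np

def cycle_consistency_loss(x_a, x_b, g_ab, g_ba):
    """L1 distance between each input and its round trip: A -> B -> A and B -> A -> B."""
    forward = np.mean(np.abs(g_ba(g_ab(x_a)) - x_a))
    backward = np.mean(np.abs(g_ab(g_ba(x_b)) - x_b))
    return float(forward + backward)

# Hypothetical stand-in "generators": brighten A into B, darken B back into A
g_ab = lambda x: 2.0 * x
g_ba = lambda x: 0.5 * x
lossy_g_ba = lambda x: np.zeros_like(x)   # throws the input away

rng = np.random.default_rng(0)
x_a, x_b = rng.uniform(size=(4, 8, 8, 3)), rng.uniform(size=(4, 8, 8, 3))
print(cycle_consistency_loss(x_a, x_b, g_ab, g_ba))        # 0.0: perfect round trips
print(cycle_consistency_loss(x_a, x_b, g_ab, lossy_g_ba))  # > 0: content was lost
```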

Style Transfer - Cycle GAN

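[Diagram, forward cycle: data A -> (A -> B Generator) -> fake data B -> (B -> A Generator) -> fake data A, with Cycle Consistency between data A and fake data A]

[Diagram, backward cycle: data B -> (B -> A Generator) -> fake data A -> (A -> B Generator) -> fake data B, with Cycle Consistency between data B and fake data B]

The B Discriminator judges the fake B images, and the A Discriminator judges the fake A images.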

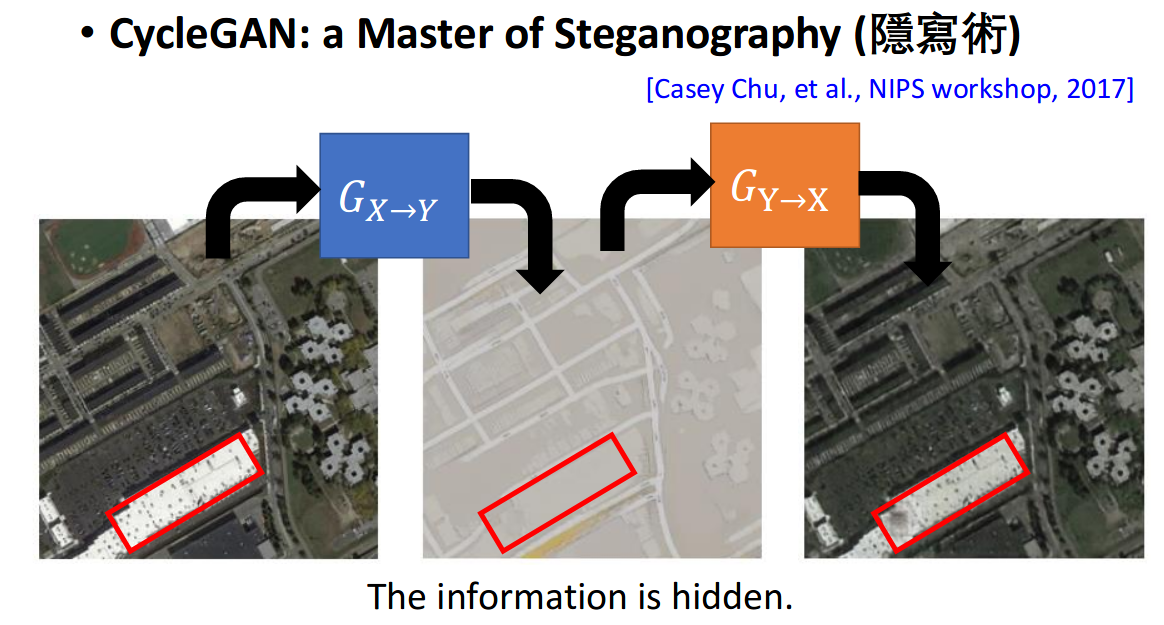

Cycle GAN's Problem

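image resource: Prof. Hung-yi Lee's slides

What is stego (steganography)?

Magic: hiding information inside an image where nobody can see it.

Cycle GAN's Problem

Cycle Consistency

image resource: Prof. Hung-yi Lee's slides

Cycle consistency is meant to keep Y resembling X. But if G can hide the information (steganography),

then the cycle consistency loss becomes meaningless.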
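Here is a toy illustration of the problem, using hypothetical hand-written generators. G_AB maps every A image to nearly the same gray B image, but hides the input in a residual with amplitude 0.001. G_BA reads the residual back. The round trip is essentially perfect, so the cycle consistency loss is near 0, yet the B outputs barely depend on the input.

```python
import numpy as np

TARGET_B = np.full((8, 8), 0.5)   # hypothetical: every "B-style" output looks like this gray image
EPS = 1e-3                         # tiny amplitude that a Discriminator is unlikely to notice

def g_ab_stego(x_a):
    """Looks almost identical for every input, but hides x_a in a faint residual."""
    return TARGET_B + EPS * x_a

def g_ba_stego(y_b):
    """Recovers the hidden input from the faint residual."""
    return (y_b - TARGET_B) / EPS

rng = np.random.default_rng(0)
x1, x2 = rng.uniform(size=(8, 8)), rng.uniform(size=(8, 8))
output_gap = np.abs(g_ab_stego(x1) - g_ab_stego(x2)).max()    # at most 0.001: outputs barely differ
cycle_error = np.abs(g_ba_stego(g_ab_stego(x1)) - x1).max()   # tiny: the cycle still closes
print(output_gap, cycle_error)
```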

Why GAN?

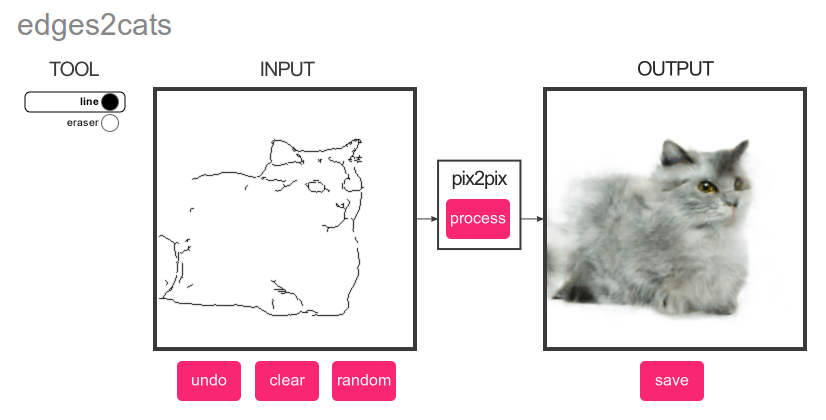

pix2pix

https://affinelayer.com/pixsrv/

pix2pix

Uses a conditional GAN?

Where is the condition?

Embedding

Generator

U-net

Image Caption (im2txt)

Meme Generation: https://arxiv.org/pdf/1806.04510.pdf

Condition: Encoder(Picture)

Use a language model or something similar as the Discriminator

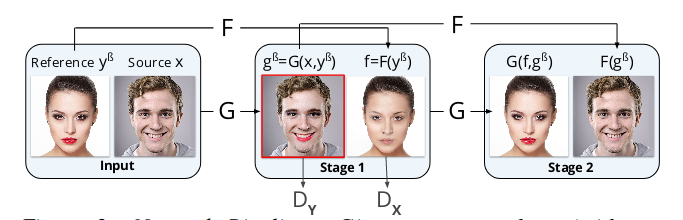

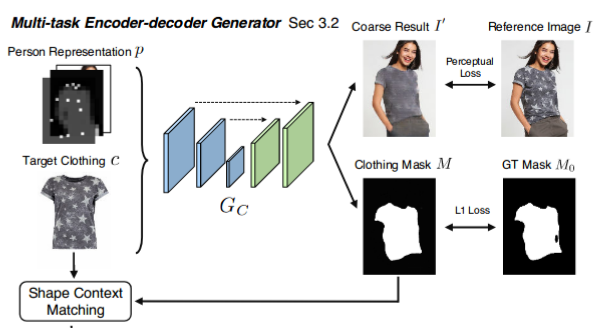

Cloth Try on

https://arxiv.org/pdf/1711.08447.pdf

(Part of the original model)

Style Transfer

https://github.com/Aixile/chainer-cyclegan

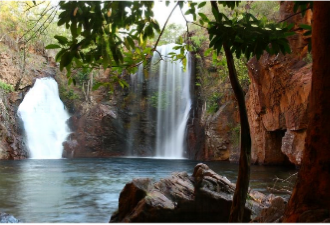

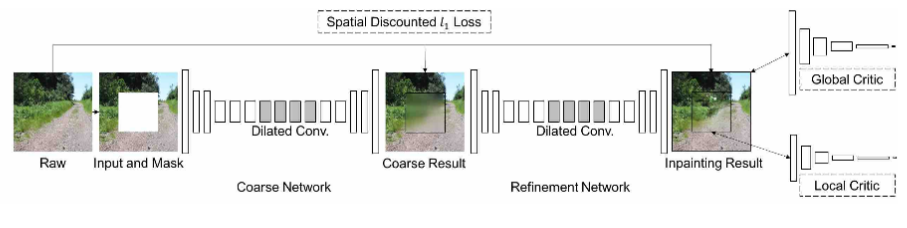

Generative Image Inpainting

https://arxiv.org/pdf/1801.07892.pdf


In General...

GAN can do most of "Generation Tasks"!

Personal Experience

Take these with a grain of salt; they are tricks (cheats).

Personal Experience 1

Filter Bad Images

Use a STRONGER D to filter G's outputs.

"Stronger" means training D alone at the end.
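Here is a NumPy sketch of the filtering trick. It assumes you already have scores from a D that was trained on its own at the end, and that higher scores mean "more real". `filter_by_discriminator` and `keep_ratio` are illustrative names.

```python
import numpy as np

def filter_by_discriminator(samples, d_scores, keep_ratio=0.5):
    """Keep the generated samples that the (stronger, separately trained) D scores as most real."""
    d_scores = np.asarray(d_scores, dtype=float)
    k = max(1, int(round(keep_ratio * len(d_scores))))
    keep = np.argsort(d_scores)[::-1][:k]      # indices of the k highest scores
    return samples[keep], d_scores[keep]

samples = np.arange(6) * 10                    # stand-ins for 6 generated images
scores = np.array([0.1, 0.9, 0.3, 0.8, 0.2, 0.7])
kept, kept_scores = filter_by_discriminator(samples, scores)
print(kept)   # [10 30 50]
```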

Personal Experience 2

low lr at the end

When you think G is good enough, lower the learning rate; you get brighter images.

(Optional)

Why GAN? - 2

However...

These fancy applications may feel too far from what you need?

Too flashy to be useful!

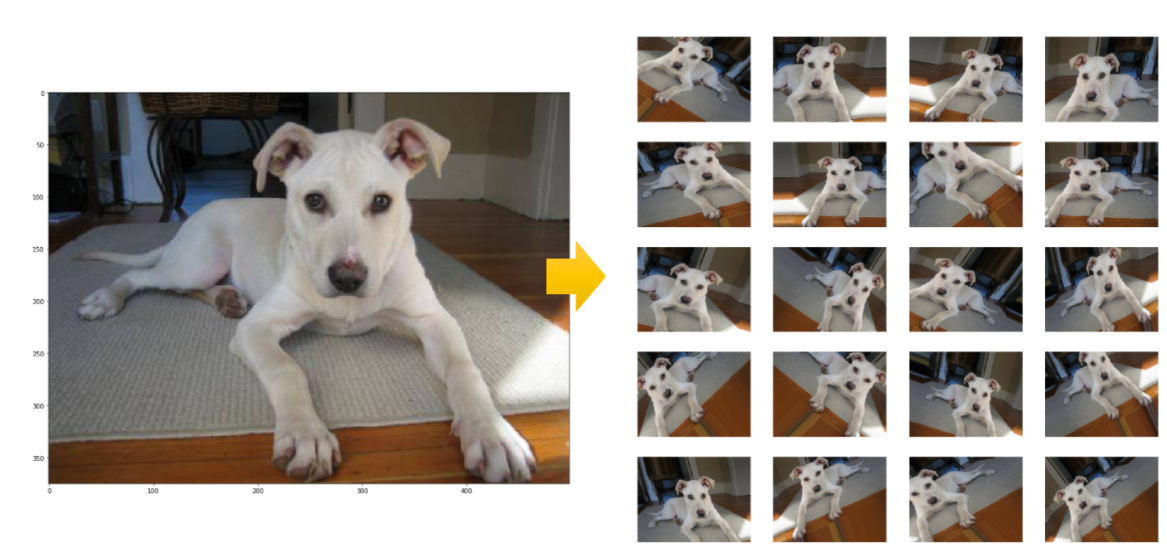

Ultimate Data Augmentation

In classification problem...

Data Augmentation? Why not ...

image resource: https://chtseng.wordpress.com/2017/11/11/data-augmentation-%E8%B3%87%E6%96%99%E5%A2%9E%E5%BC%B7/

[Personal viewpoint]

In real tasks

[Figure: classes A, B, C, each with real data, GAN generator samples, and simple-augmentation samples marked "???"]

There is no guarantee that your augmented data follows the original data distribution.

For example...

Rotation angle?

Gaussian noise?

Scale factor?

These data are based on the existing dataset.

With a GAN, the generator generates new data.

In Real Tasks...

[Lab talk]

Data Shortage

Suppose there are two related datasets

(CT & MRI),

but some images have only CT and no MRI?

Style Transfer!

| Dataset A | Dataset B | Paired? |
|---|---|---|
| exists | exists | Yes |
| does not exist | exists | No |
| exists | does not exist | No |
| does not exist | does not exist | ??? |

Data Shortage

Tips : Dropout in G

https://arxiv.org/pdf/1611.07004v1.pdf

Tips : Use labels

Tips : Batch Normalization

Tips : Avoid Sparse Functions

e.g., Maxpooling, ReLU (but G can use ReLU).

Tips : Leaky ReLU for D, ReLU for G.

Maybe ReLU is suitable for "edges"?

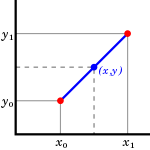

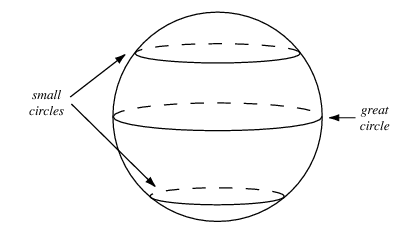

Ques1 : slerp or lerp?

ICLR'2017 (rejected)

https://openreview.net/forum?id=SypU81Ole

LERP (Linear IntERPolation)

https://en.wikipedia.org/wiki/Linear_interpolation

SLERP (Spherical LERP)

WGAN uses LERP; no need to switch to SLERP on your own :)
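Here are both interpolations in NumPy. A common argument for SLERP is that high-dimensional Gaussian latents concentrate near a sphere, so LERP midpoints have an unusually small norm. The toy 2-D vectors below show that norm difference. For the WGAN-GP penalty, the interpolation stays LERP.

```python
import numpy as np

def lerp(p0, p1, t):
    """Linear interpolation: a straight line between p0 and p1."""
    return (1.0 - t) * p0 + t * p1

def slerp(p0, p1, t):
    """Spherical linear interpolation: move along the arc between p0 and p1."""
    cos_omega = np.dot(p0 / np.linalg.norm(p0), p1 / np.linalg.norm(p1))
    omega = np.arccos(np.clip(cos_omega, -1.0, 1.0))
    if np.isclose(omega, 0.0):
        return lerp(p0, p1, t)                   # nearly parallel: fall back to LERP
    return (np.sin((1.0 - t) * omega) * p0 + np.sin(t * omega) * p1) / np.sin(omega)

p0, p1 = np.array([1.0, 0.0]), np.array([0.0, 1.0])
print(np.linalg.norm(lerp(p0, p1, 0.5)))    # about 0.707: the midpoint cuts inside the circle
print(np.linalg.norm(slerp(p0, p1, 0.5)))   # about 1.0: the midpoint stays on the circle
```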

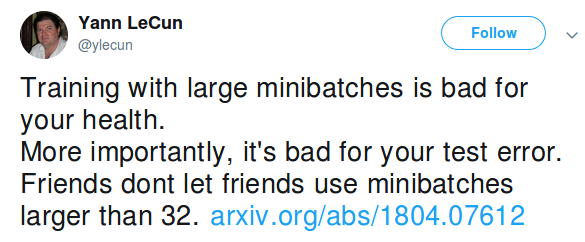

Ques2 : Batch Size?

Expert said...

In any task, a mini-batch size of 16~64 works well in my own practical experience :)

Why? Maybe...

Q: Why not (full-batch) GD?

A: It easily sinks into local minima.

Q: Why not SGD (batch size 1)?

A: It is hard to converge.

Q: Mini-batch?

Ques3 : Are complex tasks hard for GANs?

(WGAN-GP) only pink hair

D:G = 4:1 , 5500 iterations

picture resource: GAN result by Arvin Liu (myself).

(WGAN-GP) no-constraint

D:G = 4:1 , 5500 iterations

picture resource: GAN result by Arvin Liu (myself).

MISCs

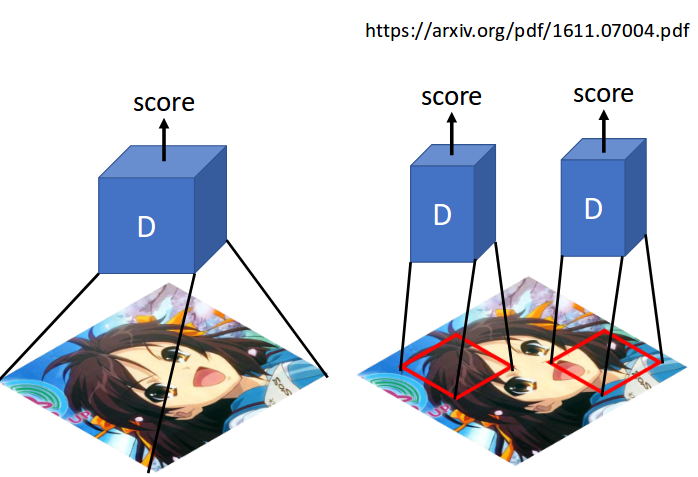

MISC2 - patch GAN

Patch GAN

image resource: Prof. Hung-yi Lee's slides

Patch GAN risks

picture resource: GAN result by Arvin Liu (myself).

Review

GAN model

- DCGAN (Deep Convolutional GAN)

- LSGAN (Least Square GAN)

- WGAN (Wasserstein GAN)

- WGAN-GP (Improved Wasserstein GAN)

- Patch GAN (Pix2Pix's Discriminator)

- conditional GAN

- ACGAN (Auxiliary Classifier GAN)

- Cycle GAN

GAN main problems

- Mode collapse

- Diminished Gradient

- Non-convergence

Q & A

Thanks for listening!

Code:

goo.gl/yRvUk1