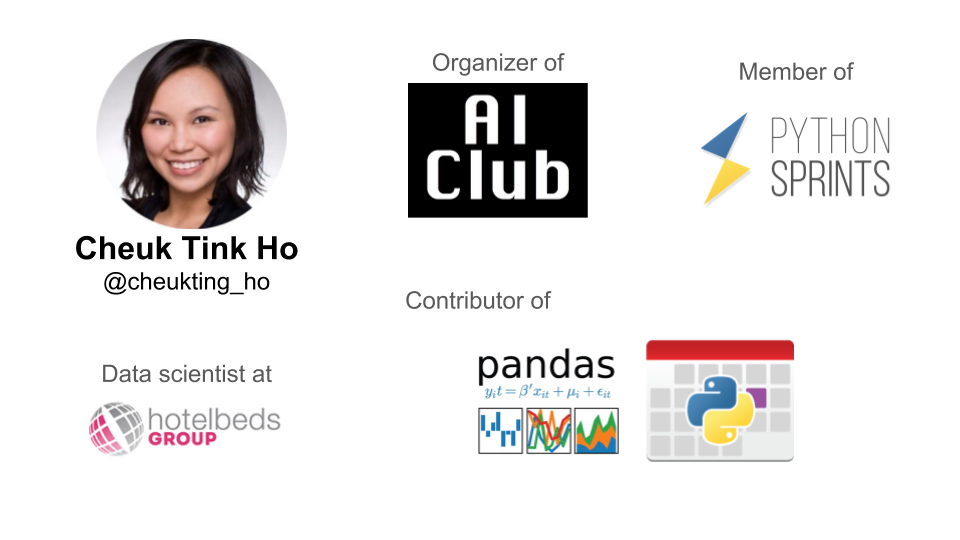

by Cheuk Ting Ho

@cheukting_ho

https://github.com/Cheukting

#EuroPython

using FuzzyWuzzy

Why match similar company names?

What do similar names imply...?

@cheukting_ho #EuroPython

Somewhere in China...


Matching Strings

| Comparison | Result | String method that makes it match |
|---|---|---|
| "Starbucks" == "StarBucks" | False | upper() / lower() |
| "Starbucks" == "Star bucks" | False | replace(' ','') |
| "Starbucks" == "Starbuck" | False | rstrip('s') |
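A small sketch of the three fixes chained into one normalisation step. The helper name `normalise` is hypothetical; the point is that each method only repairs one specific kind of difference, and `rstrip('s')` removes every trailing "s", so hand-written rules like these quickly become brittle.

```python
def normalise(name: str) -> str:
    """Chain the three string methods: ignore case, spaces and trailing 's'."""
    return name.lower().replace(" ", "").rstrip("s")

variants = ["StarBucks", "Star bucks", "Starbuck"]
for v in variants:
    # exact comparison fails, the normalised comparison succeeds
    print("Starbucks" == v, normalise("Starbucks") == normalise(v))  # False True

print(normalise("Boss"))  # prints bo: rstrip removes every trailing 's'
```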

Fuzzy Matching?

"Fuzzy matching is a technique used in computer-assisted translation as a special case of record linkage. It works with matches that may be less than 100% perfect when finding correspondences between segments of a text and entries in a database of previous translations."

- Wikipedia

tl;dr

matching two strings that are similar but not exactly the same


measure the similarity between two strings using

Levenshtein Distance

The distance is the minimum number of single-character deletions, insertions, or substitutions required to transform the source string into the target string.

The greater the Levenshtein distance, the more different the strings are.

Levenshtein distance is named after the Russian scientist Vladimir Levenshtein.
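Written as the standard recurrence (a sketch of the textbook definition, not taken from the slides): let $D(i, j)$ be the distance between the first $i$ characters of $a$ and the first $j$ characters of $b$, with $|a| = m$ and $|b| = n$.

$$
D(i, 0) = i, \qquad D(0, j) = j, \qquad
D(i, j) = \min\begin{cases}
D(i-1, j) + 1 & \text{(deletion)} \\
D(i, j-1) + 1 & \text{(insertion)} \\
D(i-1, j-1) + [a_i \neq b_j] & \text{(substitution, free if the characters match)}
\end{cases}
$$

The Levenshtein distance is $D(m, n)$; for example, "Starbucks" and "Starbuck" are at distance 1 (one deletion).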


Levenshtein distance interpretation

Dynamic Programming
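A minimal dynamic-programming sketch of the distance, assuming unit cost for every edit. It fills the table row by row and keeps only the previous row, since each cell depends only on its left, upper and upper-left neighbours.

```python
def levenshtein(source: str, target: str) -> int:
    """Edit distance via dynamic programming, keeping two rows of the table."""
    # previous[j] = distance between the source prefix seen so far and target[:j]
    previous = list(range(len(target) + 1))
    for i, s_char in enumerate(source, start=1):
        current = [i]  # source[:i] -> empty string needs i deletions
        for j, t_char in enumerate(target, start=1):
            cost = 0 if s_char == t_char else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution (or match)
            ))
        previous = current
    return previous[-1]

print(levenshtein("Starbucks", "StarBucks"))   # 1
print(levenshtein("Starbucks", "Star bucks"))  # 1
print(levenshtein("Starbucks", "Starbuck"))    # 1
print(levenshtein("kitten", "sitting"))        # 3
```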


FuzzyWuzzy

Fuzzy string matching like a boss.

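- Simple Ratio: a similarity score based on the Levenshtein distance between the two whole strings
- Partial Ratio: finds the best partial match, i.e. the best-matching substring of the longer string
- Token Sort Ratio: tokenizes both strings and matches them regardless of word order
- Token Set Ratio: tokenizes both strings and finds the best partial match between their sets of words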
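FuzzyWuzzy exposes these scorers as `fuzz.ratio`, `fuzz.partial_ratio`, `fuzz.token_sort_ratio` and `fuzz.token_set_ratio`. To stay dependency-free, the sketch below re-implements simplified versions with the standard library's `difflib.SequenceMatcher`. It only illustrates the idea behind each scorer; the function names are hypothetical and the scores will not always equal the library's (for example, FuzzyWuzzy chooses candidate windows for the partial ratio more selectively).

```python
import re
from difflib import SequenceMatcher

def _tokens(s: str) -> list:
    """Lower-case the string and keep only runs of letters and digits."""
    return re.findall(r"[a-z0-9]+", s.lower())

def simple_ratio(a: str, b: str) -> int:
    """0-100 similarity of the two whole strings."""
    return round(100 * SequenceMatcher(None, a, b).ratio())

def partial_ratio(a: str, b: str) -> int:
    """Best simple_ratio of the shorter string against any same-length window of the longer one."""
    shorter, longer = sorted((a, b), key=len)
    width = len(shorter)
    return max(simple_ratio(shorter, longer[start:start + width])
               for start in range(len(longer) - width + 1))

def token_sort_ratio(a: str, b: str) -> int:
    """Sort the words first so that word order does not matter."""
    return simple_ratio(" ".join(sorted(_tokens(a))), " ".join(sorted(_tokens(b))))

def token_set_ratio(a: str, b: str) -> int:
    """Compare the shared words with each side's shared + leftover words; keep the best score."""
    ta, tb = set(_tokens(a)), set(_tokens(b))
    common = " ".join(sorted(ta & tb))
    full_a = " ".join([common] + sorted(ta - tb)).strip()
    full_b = " ".join([common] + sorted(tb - ta)).strip()
    return max(simple_ratio(common, full_a),
               simple_ratio(common, full_b),
               simple_ratio(full_a, full_b))

print(simple_ratio("STARBUCKS COFFEE", "COFFEE STARBUCKS"))      # below 100: order matters
print(token_sort_ratio("STARBUCKS COFFEE", "COFFEE STARBUCKS"))  # 100
print(partial_ratio("STARBUCKS", "STARBUCKS COFFEE"))            # 100
print(token_set_ratio("STARBUCKS", "STARBUCKS COFFEE LTD"))      # 100
```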


Company Data

The data we used comes from http://download.companieshouse.gov.uk/en_output.html

It is an openly licensed, publicly available dataset listing registered (limited liability) companies in Great Britain.

Example post town: CAMBRIDGE

Number of companies: 15889


Trick 1 - Common words

Matches on common words like "LTD" and "COMPANY" are less meaningful, so they are discounted in the algorithm.

Find the 30 most common words across all company names, and deduct from a pair's matching score if any of these key words is present in the names.

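```python
from collections import Counter

# df: the Companies House data loaded into a pandas DataFrame
# unique company names registered in the post town CAMBRIDGE
all_names = df['CompanyName'][df['RegAddress.PostTown'] == 'CAMBRIDGE'].unique()

# count how often each word appears across all names;
# split() (not split(" ")) avoids counting empty strings from double spaces
names_freq = Counter()
for name in all_names:
    names_freq.update(str(name).split())

# the 30 most frequent words become the key words to discount
key_words = [word for (word, _) in names_freq.most_common(30)]

print(key_words)
```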
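A self-contained toy run of the same idea, using hypothetical names and a top 2 instead of the top 30. As a design variant (an assumption, not the talk's code), the check below compares whole words rather than substrings, so a key word such as "LTD" is not also found inside a longer word.

```python
from collections import Counter

# hypothetical toy names standing in for the Cambridge company list
names = [
    "ALPHA LIMITED",
    "BETA LIMITED",
    "GAMMA LIMITED",
    "DELTA LTD",
    "EPSILON LTD",
    "ZETA BAKERY",
]

freq = Counter()
for name in names:
    freq.update(name.split())

top_n = 2  # the talk uses 30 on the real data
key_words = {word for word, _ in freq.most_common(top_n)}

def has_key_word(name: str) -> bool:
    """Word-level check: True if any word of the name is a key word."""
    return any(token in key_words for token in name.split())

print(sorted(key_words))                   # ['LIMITED', 'LTD']
print([has_key_word(n) for n in names])    # [True, True, True, True, True, False]
```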

Trick 2 - Match in Groups

Next, we group the names by their first character. The list is too long to match all at once: comparing every name with every other name means 15889 x 15889 pairs to consider.

The workaround is to match within groups, assuming that names whose first characters differ are unlikely to be the same name.

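```python
import pandas as pd

# one row per unique name: its group key (first character), the name,
# the name it is matched to (alias) and the matching score
all_main_name = pd.DataFrame(columns=['sort_gp', 'names', 'alias', 'score'])

# sort so that names sharing a first character sit in consecutive rows
all_names.sort()
all_main_name['names'] = all_names
all_main_name['sort_gp'] = all_main_name['names'].apply(lambda x: x[0])
```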
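A quick sketch of how much work grouping saves, on hypothetical toy names. Counting each unordered pair once, all 15889 names would need comb(15889, 2) = 126,222,216 comparisons; grouping by first character only scores pairs inside each group. The actual saving depends on how the first letters are distributed, which is not reported here.

```python
from collections import defaultdict
from math import comb

def group_by_first_char(names):
    """Bucket names by their first character (the talk's sort_gp)."""
    groups = defaultdict(list)
    for name in sorted(names):
        groups[name[0]].append(name)
    return dict(groups)

def comparison_counts(names):
    """Unordered pairs to score without grouping and within groups only."""
    all_pairs = comb(len(names), 2)
    within_groups = sum(comb(len(g), 2) for g in group_by_first_char(names).values())
    return all_pairs, within_groups

# hypothetical toy names
names = ["APEX LTD", "APPLE LTD", "BAKER LTD", "BAKERS LTD", "CAFE LTD", "CAMBRIDGE CAFE LTD"]
print(comparison_counts(names))  # (15, 3)
```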

Fuzzy Matching

For each group, we use token_sort_ratio to match the names.

Deduct 10 points if the name contains a common word.

If the score is above 85, it's a match!

```python
import pandas as pd
from fuzzywuzzy import fuzz

# key_words and all_main_name come from the two previous steps
all_sort_gp = all_main_name['sort_gp'].unique()

def no_key_word(name):
    """Return True if the name contains none of the common key words."""
    # substring check: a key word also matches inside a longer word
    return not any(key in name for key in key_words)

groups = all_main_name.groupby('sort_gp')  # group once, outside the loop

for sortgp in all_sort_gp:
    this_gp = groups.get_group(sortgp)
    # names are sorted, so each group occupies a contiguous block of rows
    gp_start = this_gp.index.min()
    gp_end = this_gp.index.max()

    for i in range(gp_start, gp_end + 1):
        # if this name has no alias yet, make it an alias of itself
        if pd.isna(all_main_name.at[i, 'alias']):
            all_main_name.at[i, 'alias'] = all_main_name.at[i, 'names']
            all_main_name.at[i, 'score'] = 100

        # a later name with no alias that fuzzy-matches this one
        # is assigned this name's alias
        for j in range(i + 1, gp_end + 1):
            if pd.isna(all_main_name.at[j, 'alias']):
                fuzz_score = fuzz.token_sort_ratio(all_main_name.at[i, 'names'],
                                                   all_main_name.at[j, 'names'])
                # deduct 10 points if the candidate contains a common word
                if not no_key_word(all_main_name.at[j, 'names']):
                    fuzz_score -= 10
                if fuzz_score > 85:
                    all_main_name.at[j, 'alias'] = all_main_name.at[i, 'alias']
                    all_main_name.at[j, 'score'] = fuzz_score

        if i % (len(all_names) // 10) == 0:
            print("progress: %.2f%%" % (100 * i / len(all_names)))
```
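The same greedy alias assignment can be sketched without pandas or FuzzyWuzzy. This is a simplified stand-in, not the talk's code: `token_sort_score` approximates `fuzz.token_sort_ratio` with `difflib`, `assign_aliases` is a hypothetical helper that assumes unique names, and the key-word check is word-level rather than substring-based. The example pair is the one listed in the results.

```python
from difflib import SequenceMatcher
from itertools import groupby

def token_sort_score(a: str, b: str) -> int:
    """Stand-in for fuzz.token_sort_ratio: sort the words, then compare with difflib."""
    sa = " ".join(sorted(a.split()))
    sb = " ".join(sorted(b.split()))
    return round(100 * SequenceMatcher(None, sa, sb).ratio())

def assign_aliases(names, key_words, penalty=10, threshold=85):
    """Greedy alias assignment within first-character groups.

    Returns a dict mapping each name to (alias, score).
    """
    result = {}
    for _, group in groupby(sorted(names), key=lambda n: n[0]):
        group = list(group)
        for i, name in enumerate(group):
            if name not in result:          # not yet matched: alias of itself
                result[name] = (name, 100)
            for other in group[i + 1:]:
                if other in result:         # already matched earlier
                    continue
                score = token_sort_score(name, other)
                if any(k in other.split() for k in key_words):
                    score -= penalty        # discount common words
                if score > threshold:
                    result[other] = (result[name][0], score)
    return result

names = [
    "HAMMER AND THONGS PRODUCTIONS LIMITED",
    "HAMMER AND TONG PRODUCTIONS LIMITED",
    "HAMPTON BAKERY LIMITED",
    "ZEBRA CAFE",
]
for name, (alias, score) in assign_aliases(names, {"LIMITED"}).items():
    print(name, "->", alias, score)
```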

Results

Matches consisted of:

- names differing in spelling by one character: a missing 'L', 'I' or 'S'

- highly similar names: 'No.3' instead of 'No.2' or 'EB' instead of 'EH'

- fairly similar names: 'HAMMER AND THONGS PRODUCTIONS LIMITED' and 'HAMMER AND TONG PRODUCTIONS LIMITED'

Applying the matching:

- 57 names were flagged as similar to another name,

- less than 1% of the total.

- the number of names to check drastically drops from 15889 in total to only 57
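One plausible way to compute such a summary from a name-to-alias mapping, shown as a hypothetical helper: count the names whose alias is a different name, then express that as a percentage. The last line checks the reported figure, 57 out of 15889.

```python
def summarise_matches(aliases: dict) -> tuple:
    """Count names mapped to a different name, and that count as a % of all names."""
    flagged = sum(1 for name, alias in aliases.items() if name != alias)
    return flagged, 100 * flagged / len(aliases)

print(summarise_matches({"ACME LTD": "ACME LTD", "ACME LTD.": "ACME LTD"}))  # (1, 50.0)

# the figures reported above: 57 flagged names out of 15889
print(f"{57 / 15889:.2%}")  # 0.36%
```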


Happy matching!

https://github.com/Cheukting
