Grab the slides: slides.com/cheukting_ho/legend-data-log-reg

Every Monday 5pm UK time

by Cheuk Ting Ho

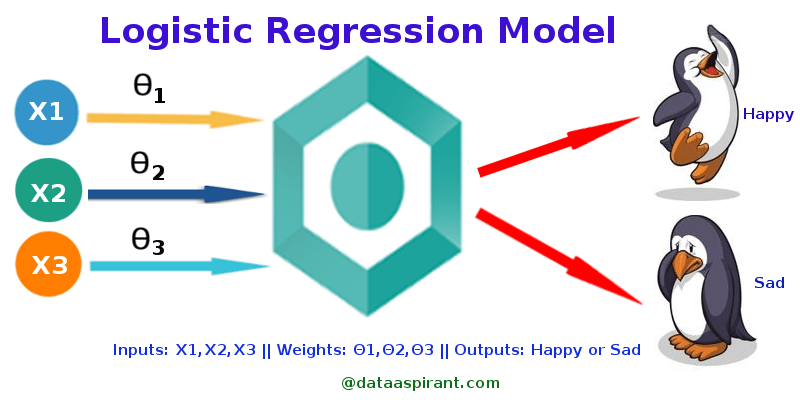

What is logistic regression?

When to use logistic regression?

When the thing you want to predict has only two possible outcomes. For example:

- Whether an email is spam (1) or not (0)

- Whether the tumor is malignant (1) or not (0)

- Whether the customer will leave (1) or not (0)

What does the data look like when we plot it?

Why not linear regression?

- The data points do not form a line

- The relationship between x and y is far from linear

- We need a different kind of curve to "fit" the data
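To see the problem concretely, here is a small sketch with made-up data (an assumption, not the data on the slide). It fits an ordinary least-squares line with NumPy's `polyfit` to 0/1 labels. The prediction for the far-away point x = 20 comes out above 1, which makes no sense as a 0/1 answer, and that one point drags the line so that x = 5 (labelled 1) falls below the 0.5 cut-off.

```python
import numpy as np

# Hypothetical toy data: one feature x, binary label y (0 or 1)
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 20.0])
y = np.array([0, 0, 0, 0, 1, 1, 1, 1, 1])

# Fit a straight line y' = b0 + b1*x by ordinary least squares
# (polyfit returns the highest-degree coefficient first)
b1, b0 = np.polyfit(x, y, deg=1)
predictions = b0 + b1 * x

print(predictions.round(3))
# The prediction at x = 20 is larger than 1,
# and the prediction at x = 5 is below 0.5 even though its label is 1
print("prediction at x=20:", round(predictions[-1], 3))
print("prediction at x=5:", round(predictions[4], 3))
```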

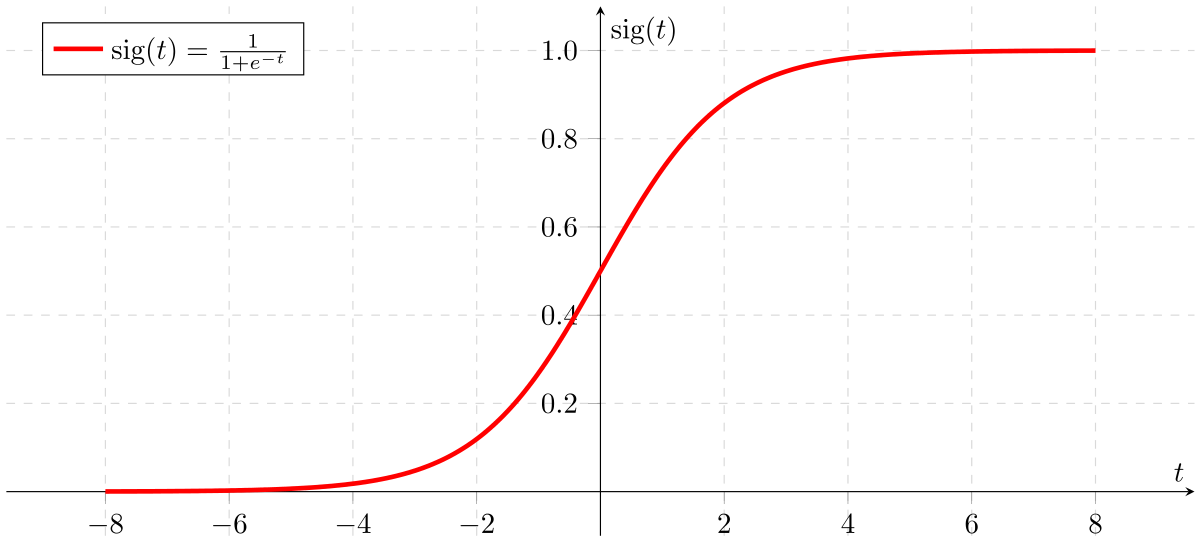

Sigmoid Function

If we find the right t-axis, the data will look like a sigmoid function, and then we can distinguish 0 from 1.
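A minimal sketch of the standard logistic sigmoid, 1 / (1 + e^-t), where t is whatever we put on the horizontal axis (the slides later build it as b1X1 + b0). It squashes any real number into the range between 0 and 1, and t = 0 maps to exactly 0.5, the natural cut-off between predicting 0 and 1.

```python
import numpy as np

def sigmoid(t):
    """Squash any real number t into the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-t))

t = np.linspace(-6, 6, 7)   # -6, -4, -2, 0, 2, 4, 6
print(sigmoid(t).round(3))  # increases smoothly from near 0 to near 1
print(sigmoid(0.0))         # → 0.5
```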

But how?

In linear regression

We find the right set of coefficients b by minimising the error (the slider game):

$$Y' = b_0 + b_1X_1 + b_2X_2 + \dots$$
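The slider game can be imitated in code by brute force: try many (b0, b1) pairs on a grid and keep the pair with the smallest sum of squared errors. This is a sketch with made-up data and a hypothetical grid; real linear regression solves for b directly with least squares instead of searching.

```python
import numpy as np

# Hypothetical toy data that roughly follows y = 1 + 2x
X1 = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = np.array([1.1, 2.9, 5.2, 7.1, 8.8])

def predict(b0, b1, x):
    """Linear regression prediction Y' = b0 + b1*X1."""
    return b0 + b1 * x

def sum_squared_error(b0, b1):
    """How wrong the line is: sum of squared differences from the data."""
    return np.sum((y - predict(b0, b1, X1)) ** 2)

# "Slider game": every (b0, b1) pair from -5 to 5 in steps of 0.1
grid = np.round(np.arange(-5, 5.01, 0.1), 1)
best = min(((b0, b1) for b0 in grid for b1 in grid),
           key=lambda b: sum_squared_error(*b))
print(f"best b0 = {best[0]:.1f}, b1 = {best[1]:.1f}")
```

The grid answer lands close to the exact least-squares solution; a finer grid gets closer but costs more evaluations, which is why the slider game does not scale to many coefficients.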

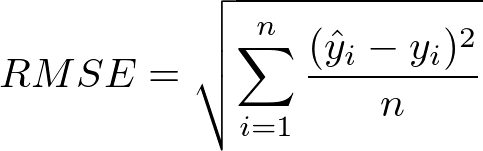

Root Mean Square Error

Remember how we measured the error of linear regression last time?

Similar to the sum of squared errors, the standard way to measure how far the model's predictions are from the actual training data (the cost function) is the root mean square error (RMSE).

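Therefore, the cost function of linear regression is:

$$\text{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(Y_i - Y'_i\right)^2}$$

where $Y_i$ is the actual value and $Y'_i$ the prediction for the $i$-th of $n$ training examples.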
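As a sketch, RMSE takes only a few lines of NumPy: square each error, average the squares, then take the square root. The example values are made up.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean square error between actual values and predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return np.sqrt(np.mean((y_true - y_pred) ** 2))

# Hypothetical actual values and model predictions:
# errors are 0.5, 0.0 and -1.0, so RMSE = sqrt(1.25 / 3)
print(rmse([3.0, 5.0, 7.0], [2.5, 5.0, 8.0]))
```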

Simple logistic regression

$$Y'' = \frac{1}{1 + e^{-(b_1X_1 + b_0)}}$$

where Y' = 1 if Y'' > 0.5, and Y' = 0 otherwise.
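A minimal NumPy sketch of the two steps of simple logistic regression: compute the probability Y'' from b0 and b1, then threshold it at 0.5 to get the label Y'. The coefficients and inputs are hypothetical; with b0 = -4 and b1 = 1, the boundary sits where b1X1 + b0 = 0, i.e. at x = 4.

```python
import numpy as np

def predict_proba(b0, b1, x):
    """Y'' = 1 / (1 + e^-(b1*x + b0)): a value between 0 and 1."""
    return 1.0 / (1.0 + np.exp(-(b1 * x + b0)))

def predict_label(b0, b1, x):
    """Y' = 1 if Y'' > 0.5, else 0."""
    return (predict_proba(b0, b1, x) > 0.5).astype(int)

# Hypothetical coefficients and inputs
b0, b1 = -4.0, 1.0
x = np.array([1.0, 3.0, 5.0, 7.0])
print(predict_proba(b0, b1, x).round(3))
print(predict_label(b0, b1, x))  # → [0 0 1 1]
```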

Can we make another slider game?

🤔

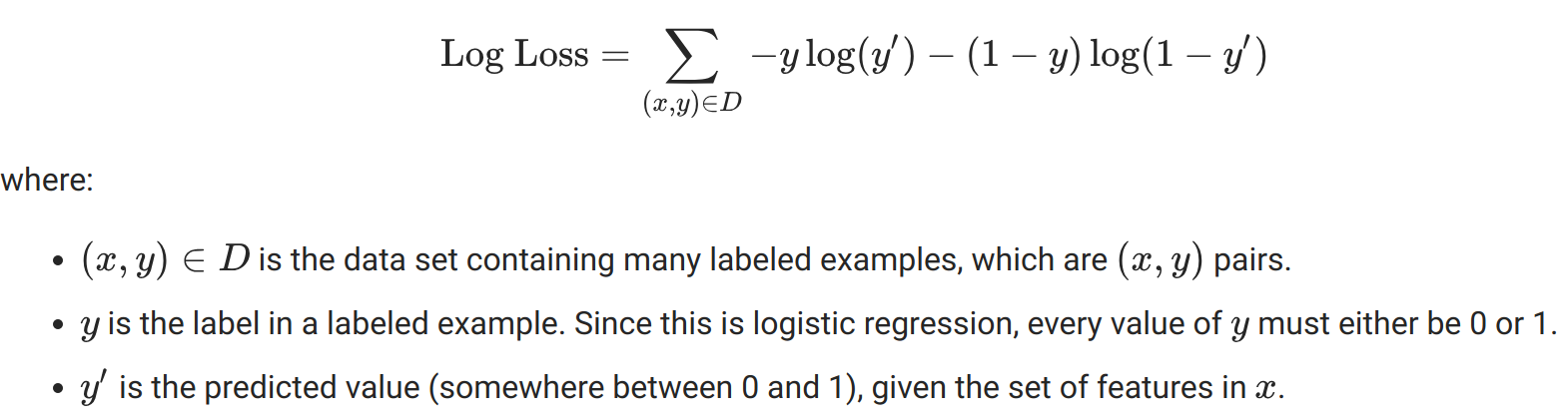

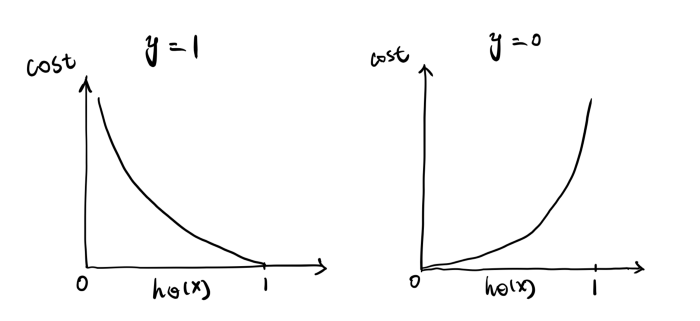

Cost function

We want this minimised!
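The cost function itself is not reproduced in this text. Assuming it is the usual log loss (cross-entropy) for logistic regression,

$$J(b_0, b_1) = -\frac{1}{n}\sum_{i=1}^{n}\left[Y_i \log Y''_i + (1 - Y_i)\log\left(1 - Y''_i\right)\right]$$

the sketch below computes it and plays another slider game by grid search over (b0, b1). The data and grid are made up for illustration.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def log_loss(b0, b1, x, y):
    """Average cross-entropy between labels y (0/1) and probabilities Y''."""
    p = sigmoid(b1 * x + b0)
    p = np.clip(p, 1e-12, 1 - 1e-12)  # avoid log(0)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

# Hypothetical toy data: labels switch from 0 to 1 around x = 4.5, with some overlap
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0])
y = np.array([0, 0, 0, 1, 0, 1, 1, 1])

# Slider game: try every (b0, b1) on a grid and keep the smallest cost
grid = np.arange(-10, 10.01, 0.25)
best = min(((b0, b1) for b0 in grid for b1 in grid),
           key=lambda b: log_loss(b[0], b[1], x, y))
print(f"best b0 = {best[0]:.2f}, b1 = {best[1]:.2f}, "
      f"cost = {log_loss(best[0], best[1], x, y):.3f}")
```

Because the labels overlap (x = 4 is 1, x = 5 is 0), a very steep sigmoid is punished heavily, so the search settles on a moderate slope instead of running off to the edge of the grid.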

How do we do logistic regression with scikit-learn?
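A minimal sketch using scikit-learn's `LogisticRegression` class from `sklearn.linear_model`, on made-up data. By default scikit-learn adds L2 regularisation (`C=1.0`), so the fitted b0 and b1 may differ somewhat from a plain log-loss minimisation.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Hypothetical toy data: one feature per row (2-D X), binary labels
X = np.array([[1.0], [2.0], [3.0], [4.0], [5.0], [6.0], [7.0], [8.0]])
y = np.array([0, 0, 0, 1, 0, 1, 1, 1])

model = LogisticRegression()  # default settings
model.fit(X, y)

print("b0 (intercept):", model.intercept_[0])
print("b1 (coefficient):", model.coef_[0][0])
# predict_proba returns [P(y=0), P(y=1)] per row; column 1 is Y''
print("Y'' for x=2 and x=7:", model.predict_proba([[2.0], [7.0]])[:, 1])
print("Y' for x=2 and x=7:", model.predict([[2.0], [7.0]]))
```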

Next week:

Multi-category Prediction

Get the notebooks: https://github.com/Cheukting/legend_data