Algebraic Invariants of Tensors: Algorithms and Decompositions

Chris Liu

Dissertation Defense

May 8, 2026

Plan

- Tensors and their algebraic invariants

- First result: a faster algorithm to compute the adjoint and derivation algebra of tensors

- Second result: theorem on the algebraic invariants of the product of tensors

Tensors and their algebraic invariants

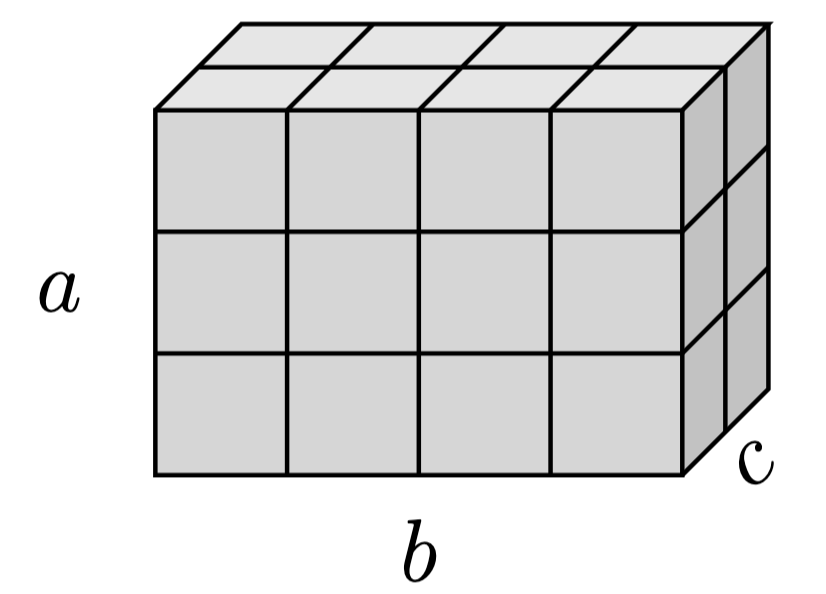

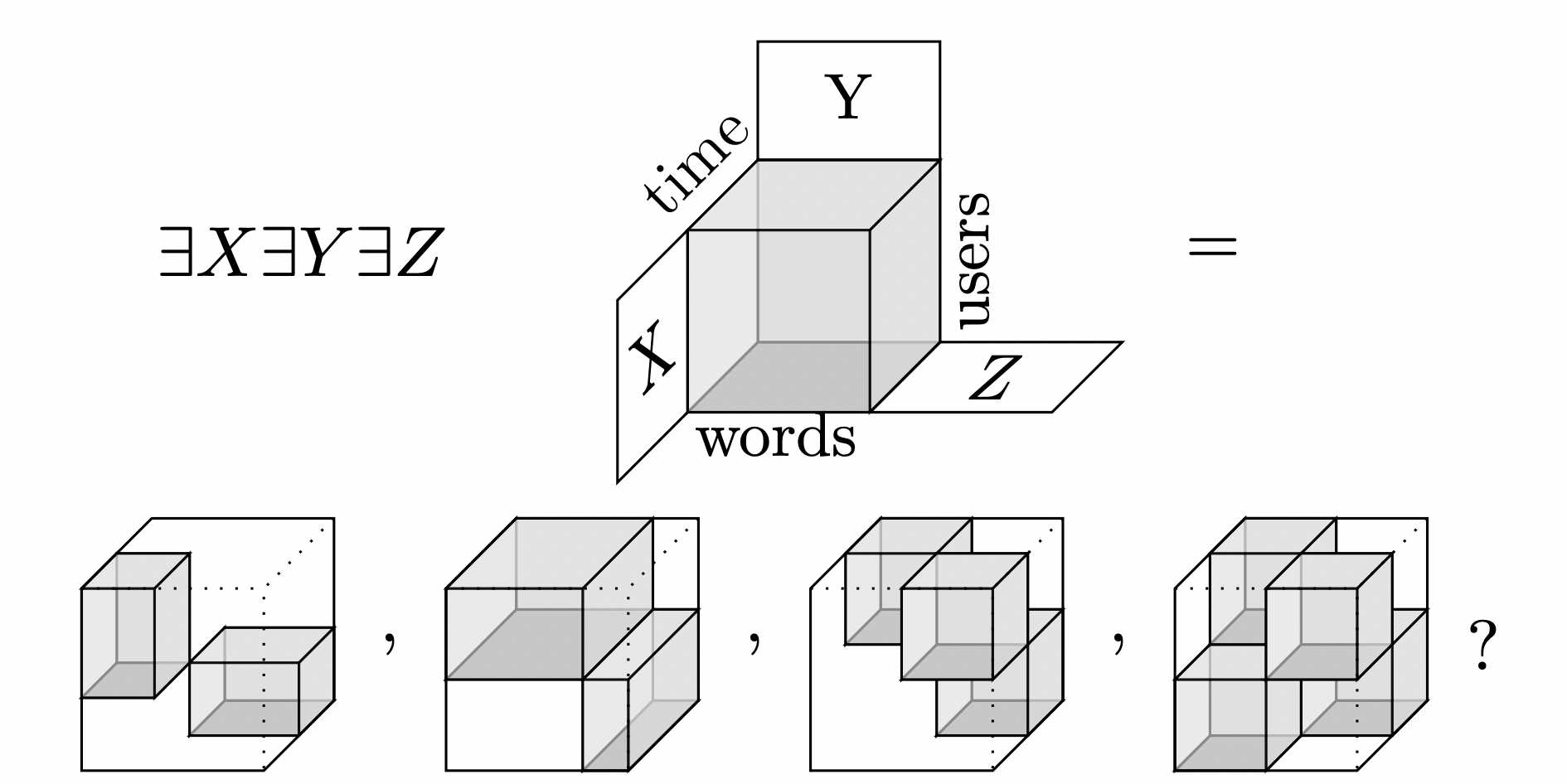

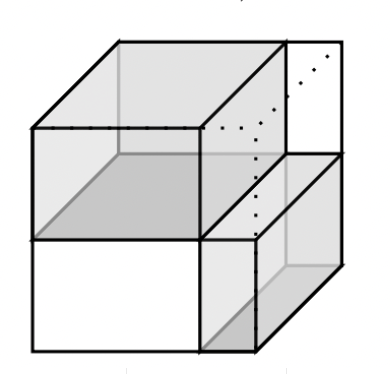

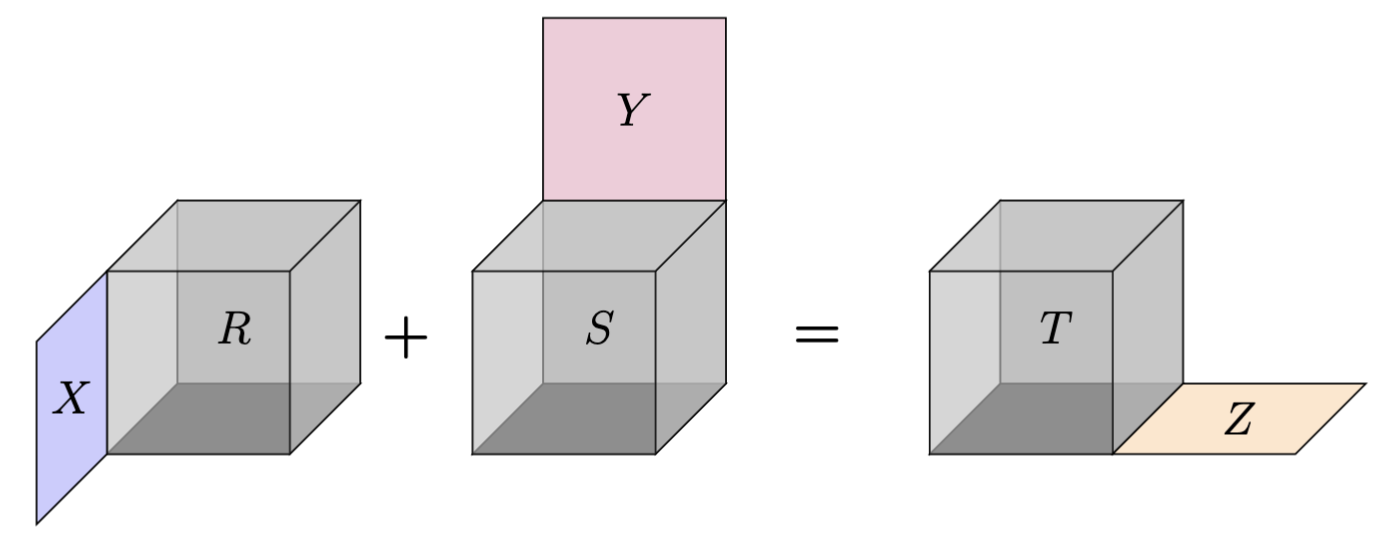

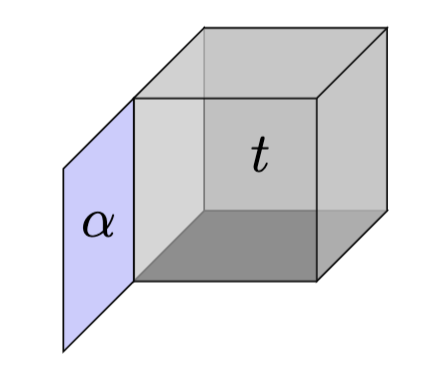

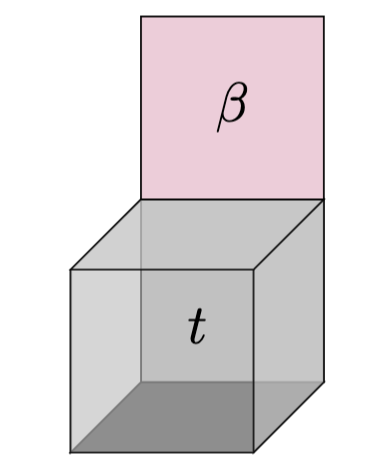

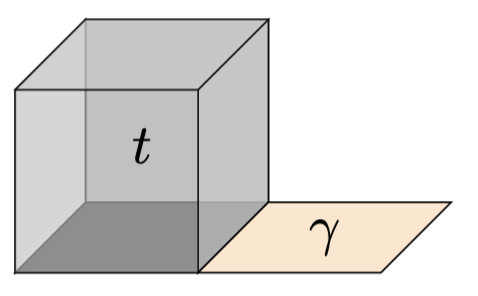

\( T \) is an \( (a \times b \times c) \) grid of numbers

Tensors are multiway grids of numbers with a multilinear interpretation

As a bilinear map

\[ t: \mathbb{R}^a \times \mathbb{R}^b \rightarrowtail \mathbb{R}^c \]

As a trilinear form

\[ \tau: \mathbb{R}^a \times \mathbb{R}^b \times \mathbb{R}^c \rightarrowtail \mathbb{R}\]

\[\tau \left(\sum_i u_i e_i, \sum_j v_j e_j, \sum_k w_k e_k \right) = \sum_{ijk} T_{ijk} u_iv_jw_k\]

( \( \rightarrowtail \) for multilinear )
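A minimal sketch of the two interpretations, assuming the grid is stored as a nested Python list `T[i][j][k]`. The helper names `bilinear` and `trilinear` and the sample entries are illustrative and do not come from the talk.

```python
# Illustrative 2 x 2 x 2 grid; the entries are made up for this example.
T = [[[1, 0], [0, 2]],
     [[3, 1], [0, 0]]]

def bilinear(T, u, v):
    """t(u, v): the k-th coordinate is sum_{i,j} T[i][j][k] * u_i * v_j."""
    c = len(T[0][0])
    return [sum(T[i][j][k] * u[i] * v[j]
                for i in range(len(u)) for j in range(len(v)))
            for k in range(c)]

def trilinear(T, u, v, w):
    """tau(u, v, w) = sum_{i,j,k} T[i][j][k] * u_i * v_j * w_k."""
    return sum(tk * wk for tk, wk in zip(bilinear(T, u, v), w))

u, v, w = [1, 2], [3, 1], [1, -1]
value = trilinear(T, u, v, w)
```

Pairing the output of the bilinear map against a third vector recovers the trilinear form, which is why the same grid serves both readings.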

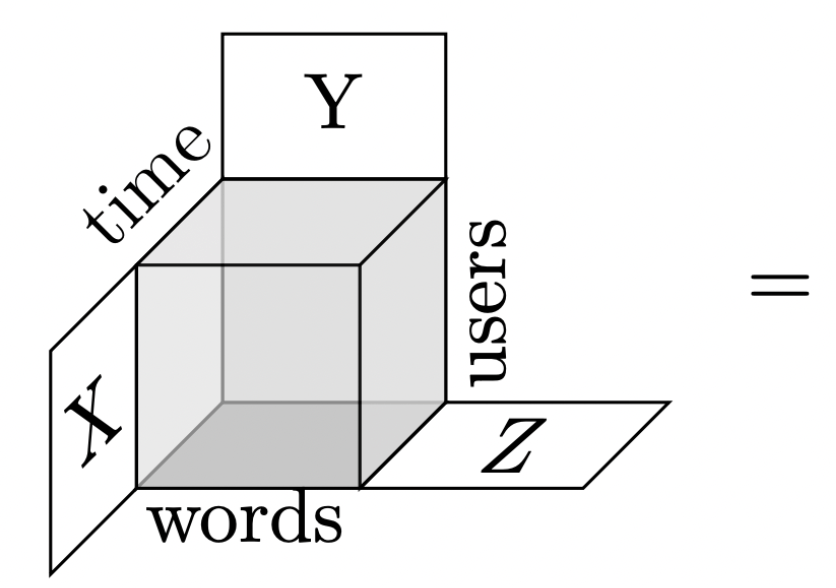

Example 1: Discord chat logs

A cube of numbers, where entry \( (i,j,k) \) is the number of times word \(i\) was said by user \(j\) at time \(k\)

\( \text{Users} = \{ \text{Andrea}, \text{Bob}, \text{Chris}, \text{Dave}, \text{Eve}, \ldots \} \)

\( \text{Days of the week} = \{\text{Monday}, \text{Tuesday}, \ldots \} \)

\( \text{Words} = \{\text{apple}, \text{break}, \ldots,\text{goalie}, \text{hockey}, \ldots \} \)

\( \text{Groups} = \{ \alpha_1 \cdot \text{Chris} + \alpha_2 \cdot \text{Dave} + \alpha_3 \cdot \text{Eve}, \ldots \} \)

\( \text{Time clusters} = \{\beta_1 \cdot \text{Saturday} + \beta_2 \cdot \text{Sunday}, \ldots \} \)

\( \text{Themes} = \{ \gamma_1\cdot \text{hockey} + \gamma_2 \cdot \text{puck} + \gamma_3 \cdot \text{goalie}, \ldots \} \)

Linear combinations

Data mining algorithm

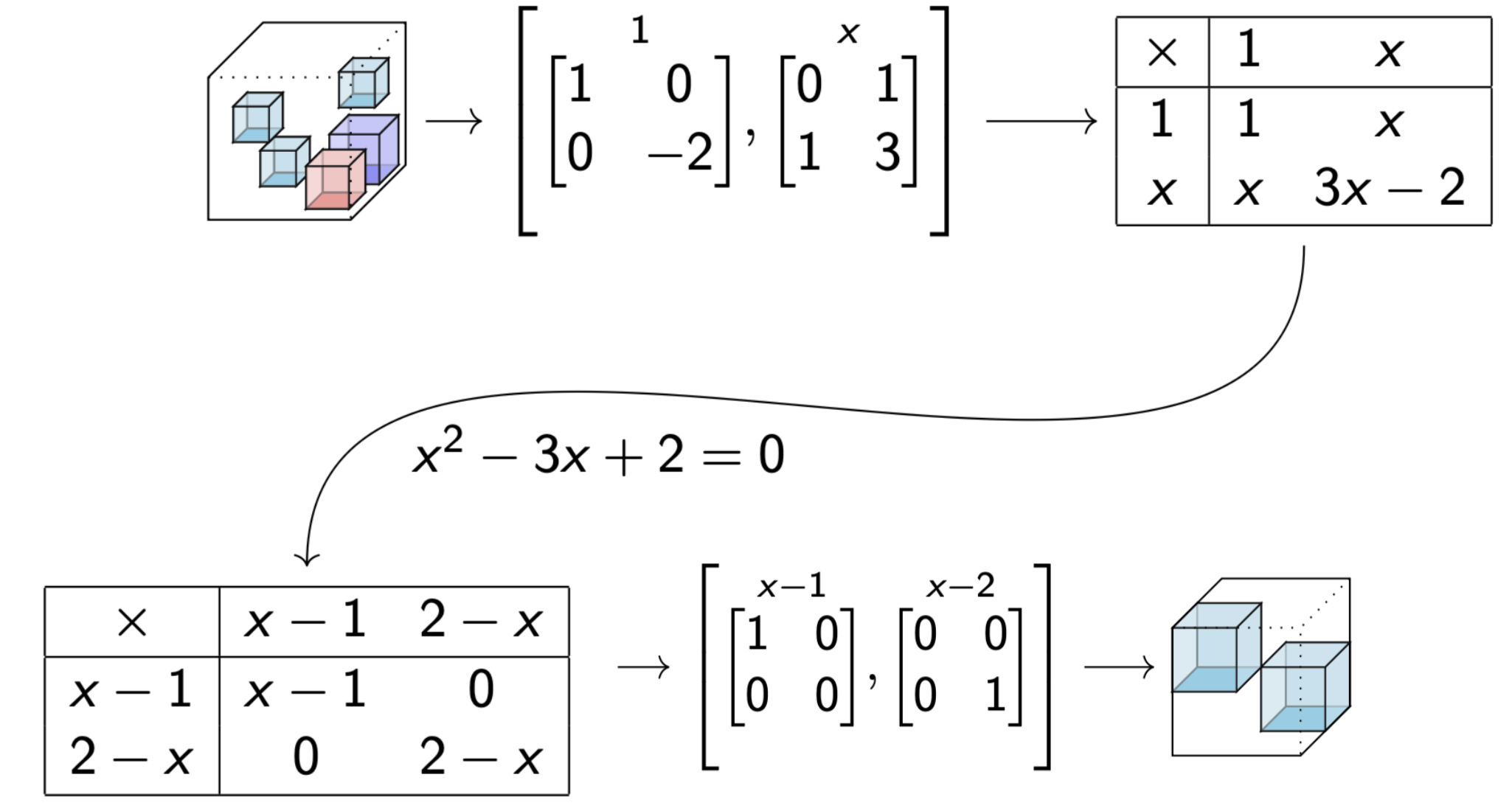

Example 2: Multiplication

Let \( A \) be an \(\mathbb{F}\)-vector space with bilinear multiplication \( \mu: A \times A \rightarrowtail A \) (an \(\mathbb{F}\)-algebra)

For ordered basis \( (e_1,\ldots, e_n )\) of \(A\), coordinatize \(\mu\) by a \( (n \times n \times n) \) grid of numbers \(T\) satisfying \( \mu(e_i,e_j) = \sum_k T_{ijk}e_k \).

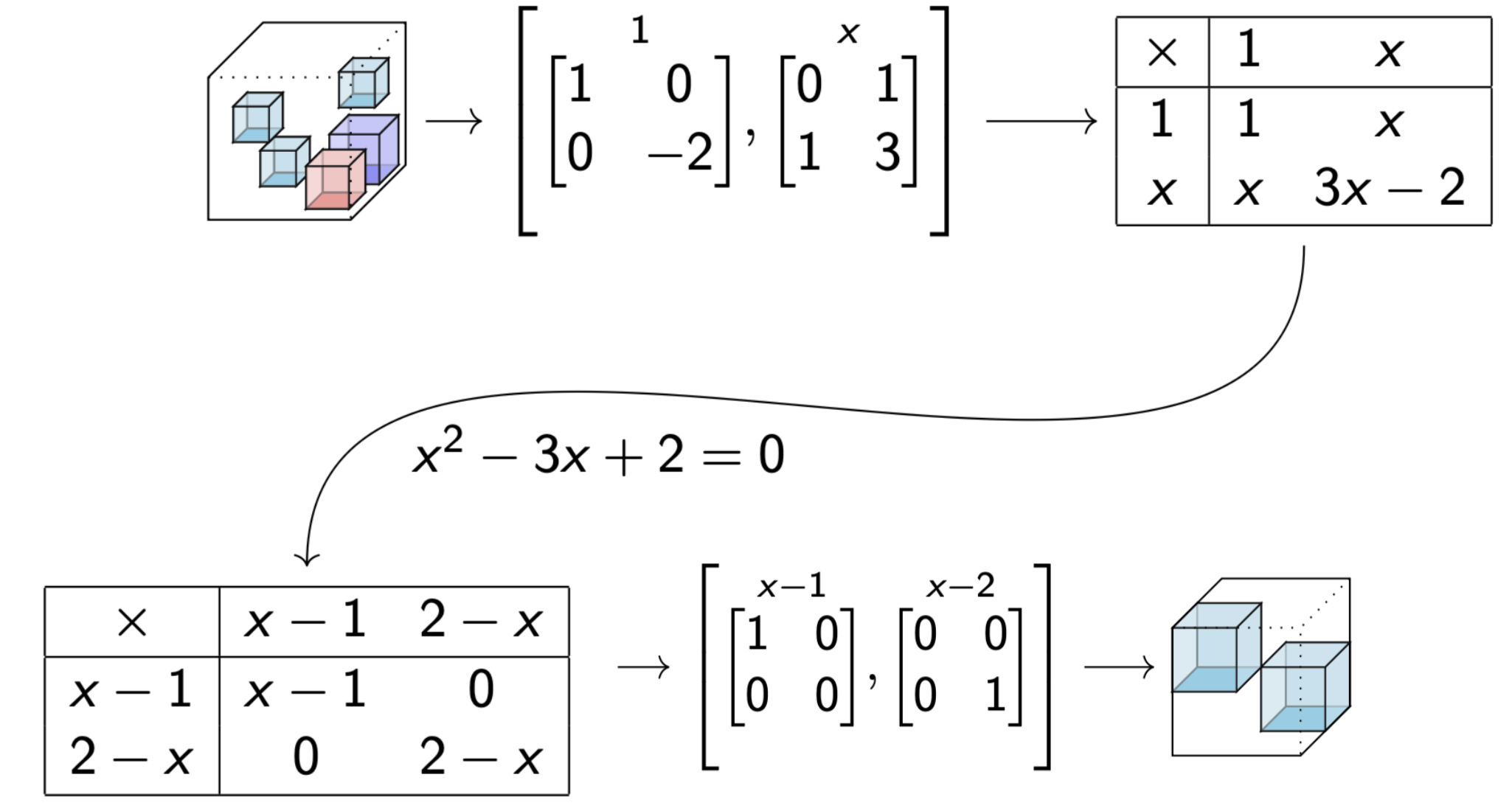

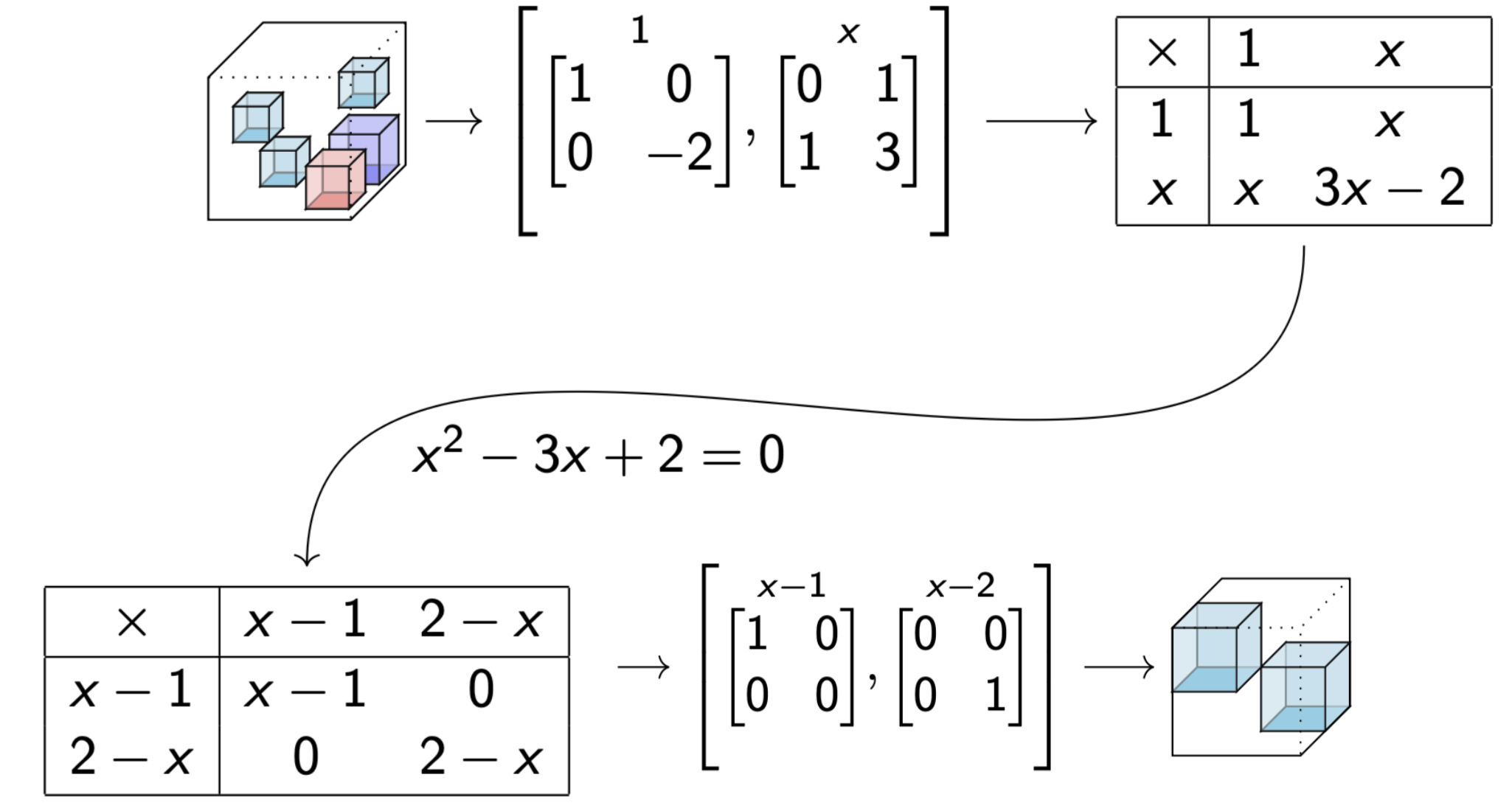

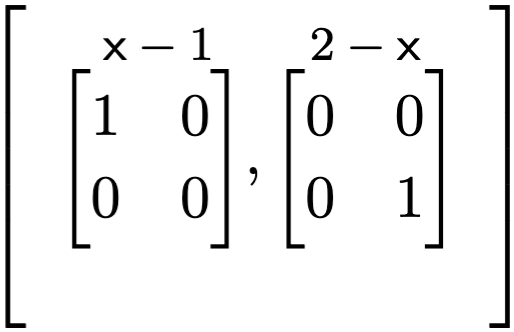

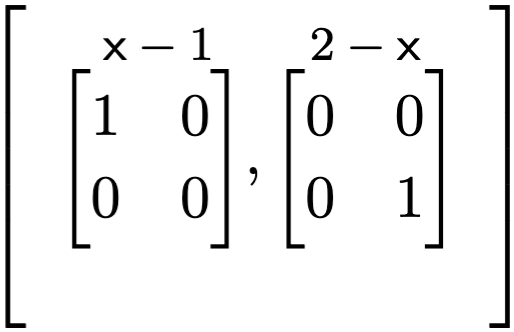

For \(A = \mathbb{F}[x]/(x^2-3x+2) \)

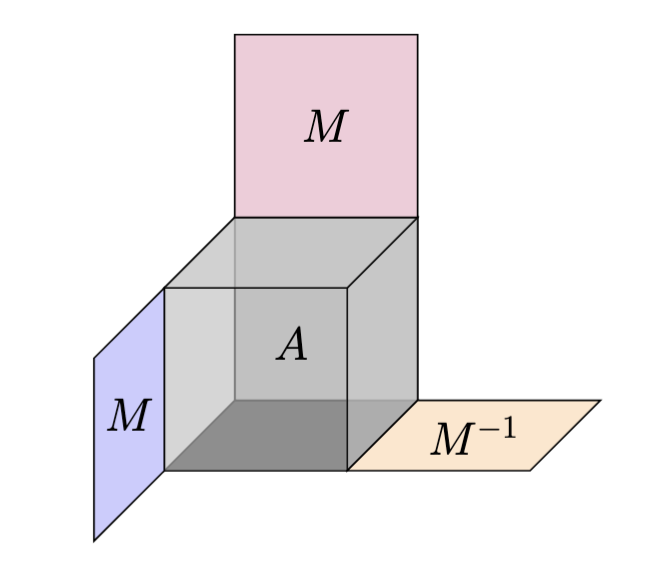
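As a hedged sketch of how such a grid can be written down, assume the ordered basis \( (e_1, e_2) = (1, x) \) (the slide's figure may use a different basis or ordering). In the quotient, \( x \cdot x = 3x - 2 \), which fixes every entry. The names `T`, `mu`, `e`, and `f` are illustrative.

```python
# T[i][j] lists the coordinates of mu(e_i, e_j) in the basis (1, x).
# Only the last entry uses the relation x*x = 3x - 2.
T = [[[1, 0], [0, 1]],
     [[0, 1], [-2, 3]]]

def mu(p, q):
    """Multiply p = p0 + p1*x and q = q0 + q1*x using the grid T."""
    return [sum(T[i][j][k] * p[i] * q[j]
                for i in range(2) for j in range(2))
            for k in range(2)]

# Since x^2 - 3x + 2 = (x - 1)(x - 2), the elements x - 1 and 2 - x
# are orthogonal idempotents summing to 1.
e = [-1, 1]   # x - 1
f = [2, -1]   # 2 - x
```

Here `mu(e, e)` returns `e`, `mu(f, f)` returns `f`, and `mu(e, f)` is zero, so the grid already encodes the splitting of \(A\) into two factors.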

Change of bases

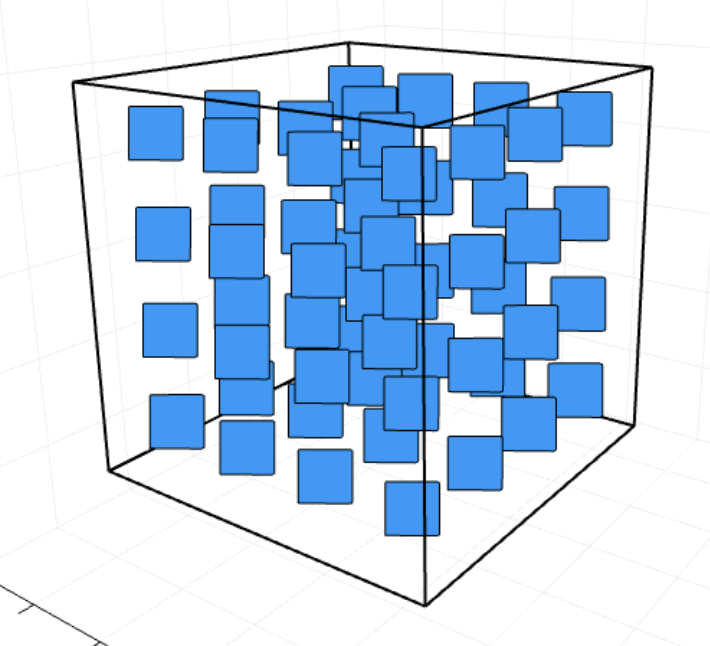

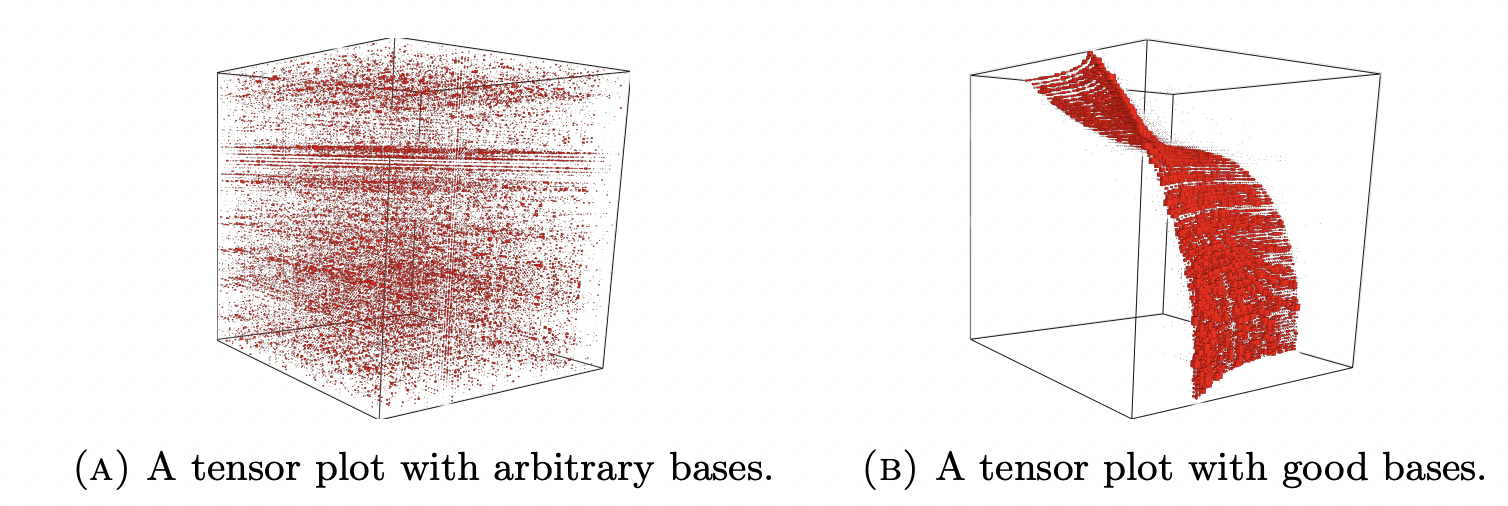

Algebraic invariants detect structure

Source: Optimal Search Spaces for Tensor Problems, Figure 1.1

Adjoints detect

Given \( t: U \times V \rightarrowtail W \)

$$\operatorname{Adj}(t) = \{(X,Y) \mid (\forall u,v) \;\; t(Xu,v) = t(u,Yv) \}$$

Analogy: Given a bilinear form

\( \langle \cdot \mid \cdot \rangle : U \times U \rightarrowtail \mathbb{F} \) and \(A \in \operatorname{End}(U)\), its adjoint \( A^{\ast} \) satisfies \( \langle Au \mid v \rangle = \langle u \mid A^{\ast} v \rangle \)

(transpose for the dot product)

Myasnikov '90, Meataxe (Parker-Norton '84, Ronyai '89), Wilson '08 and others use the algebra, i.e.

Theorem

There exists \(\mathcal{E} = \{e_1,\ldots, e_n \} \subset \operatorname{Adj}(t) \) with

\( \sum_i e_i = 1\), and \(e_ie_j = e_i \) if \( i = j \), otherwise \(0\)

if and only if

there exists a \(\perp\)-decomposition, \(U := \bigoplus_i U_i\) and \(V := \bigoplus_i V_i \), with

\[ t(U_i, V_j) = t(U_i,V_i) \quad \text{if } i = j, \text{ otherwise } 0 \]
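A small sketch of the theorem on a toy tensor, assuming a grid `T[i][j][k]` with \( t(e_1,e_1) = w_1 \), \( t(e_2,e_2) = w_2 \), and vanishing cross terms. The tensor and the helper names are made up for illustration. The coordinate projections form a pair of orthogonal idempotents in \( \operatorname{Adj}(t) \), and the corresponding blocks are perpendicular.

```python
def apply(M, u):
    """Matrix-vector product M u."""
    return [sum(M[r][c] * u[c] for c in range(len(u))) for r in range(len(M))]

def t(T, u, v):
    """Evaluate the bilinear map with grid T at (u, v)."""
    return [sum(T[i][j][k] * u[i] * v[j]
                for i in range(len(u)) for j in range(len(v)))
            for k in range(len(T[0][0]))]

def basis(n, i):
    """The i-th standard basis vector of length n."""
    return [1 if r == i else 0 for r in range(n)]

def in_adj(T, X, Y):
    """Check t(X e_i, e_j) == t(e_i, Y e_j) on basis pairs; bilinearity extends it."""
    a, b = len(T), len(T[0])
    return all(t(T, apply(X, basis(a, i)), basis(b, j)) ==
               t(T, basis(a, i), apply(Y, basis(b, j)))
               for i in range(a) for j in range(b))

# Toy tensor: t(e_1, e_1) = w_1, t(e_2, e_2) = w_2, cross terms vanish.
T = [[[1, 0], [0, 0]],
     [[0, 0], [0, 1]]]
E1 = [[1, 0], [0, 0]]      # projection onto U_1 (and V_1)
E2 = [[0, 0], [0, 1]]      # projection onto U_2 (and V_2)
SWAP = [[0, 1], [1, 0]]
IDENT = [[1, 0], [0, 1]]
```

The pairs `(E1, E1)` and `(E2, E2)` pass `in_adj`, while `(SWAP, IDENT)` does not, and `t(T, [1, 0], [0, 1])` is zero as the decomposition predicts.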

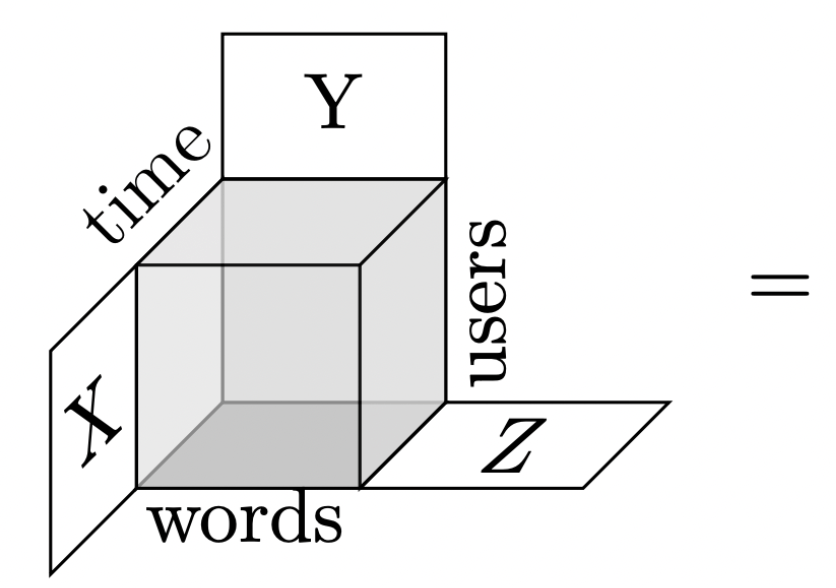

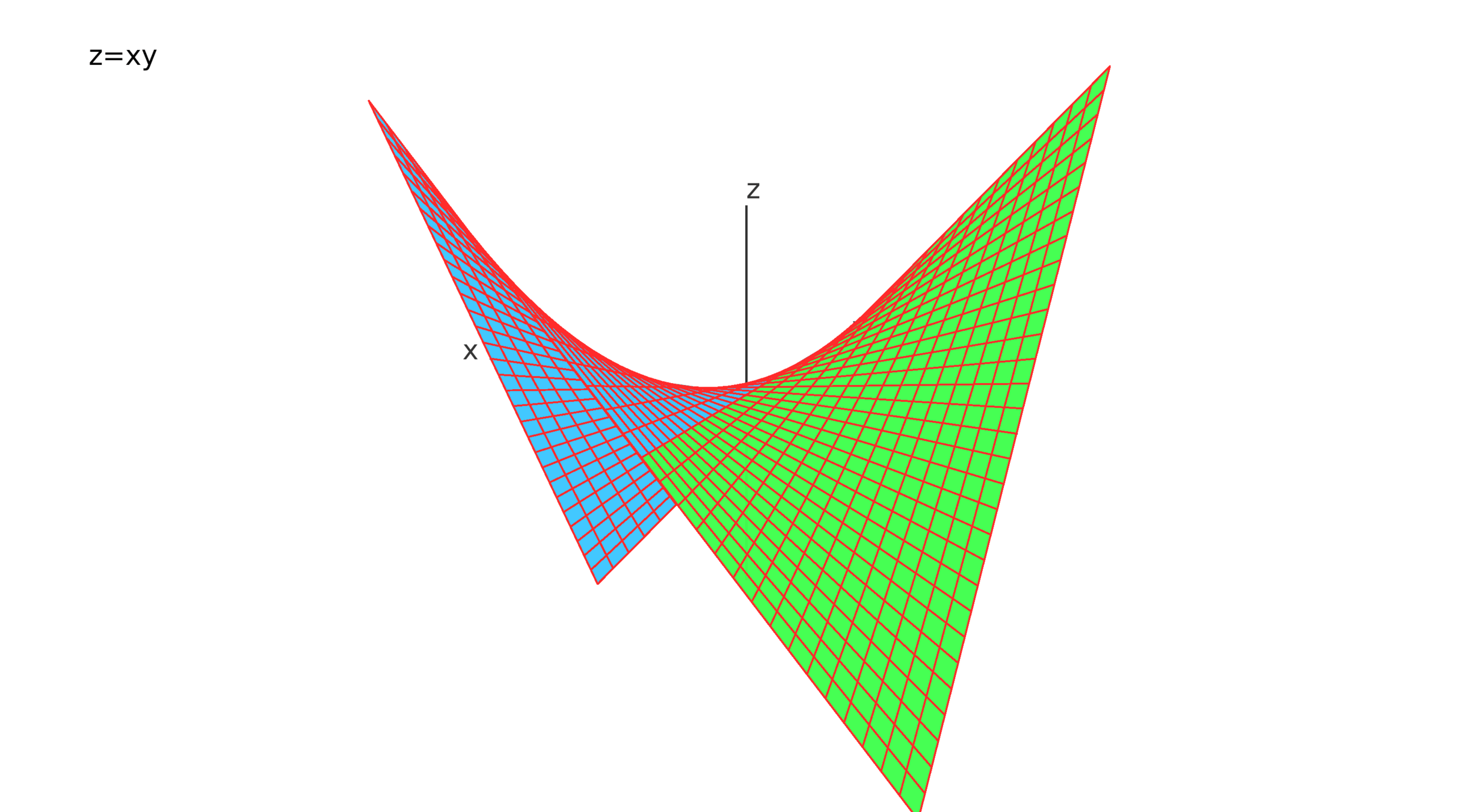

Derivations detect

For \( (u,v,w) \) eigenvectors of \( (X,Y,Z) \) in \( \text{Der}(\tau) \) with eigenvalues \( (\kappa , \lambda, \rho) \),

By distributive property,

\[ (\rho - \kappa - \lambda) (\tau(u,v,w)) = 0 \]

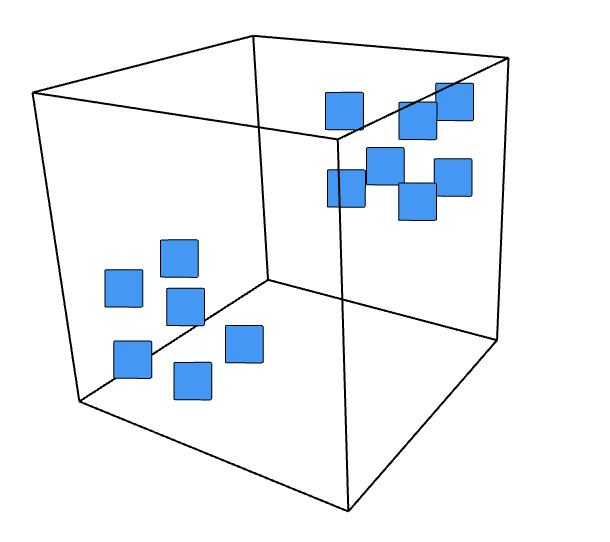

Source: Detecting cluster patterns in tensor data, Figure 3

Given \( t: U \times V \rightarrowtail W \)

$$\operatorname{Der}(t) = \{(X,Y,Z) \mid (\forall u,v) \; Z(t(u,v)) = t(Xu,v) + t(u,Yv) \}$$

Analogy: The product rule in Calculus to understand multiplication. The derivative \( \frac{d}{dx}(fg) = (\frac{d}{dx}f)g + f(\frac{d}{dx}g) \)

The surface \(z=xy\)

Eigenbasis gives cluster patterns

\[\tau(u,v,Zw) = \tau(Xu,v,w) + \tau(u,Yv,w)\]

\[ \tau(u,v,\rho w)-\tau(\kappa u,v,w) - \tau(u, \lambda v,w) = 0\]
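To illustrate the eigenvalue identity, here is a sketch with a made-up tensor: truncated polynomial multiplication, \( t(e_i, e_j) = e_{i+j} \). For diagonal operators the standard basis vectors are eigenvectors, so the derivation condition reduces to \( (\rho_k - \kappa_i - \lambda_j) T_{ijk} = 0 \) entry by entry. The eigenvalues below are chosen by hand for illustration.

```python
a, b, c = 2, 2, 3
# T[i][j][k] = 1 exactly when k = i + j (indices start at 0).
T = [[[1 if k == i + j else 0 for k in range(c)]
      for j in range(b)] for i in range(a)]

def is_diagonal_derivation(T, kappa, lam, rho):
    """Test (rho_k - kappa_i - lam_j) * T[i][j][k] == 0 for every entry."""
    return all((rho[k] - kappa[i] - lam[j]) * T[i][j][k] == 0
               for i in range(len(T))
               for j in range(len(T[0]))
               for k in range(len(T[0][0])))

kappa, lam, rho = [2, 3], [5, 6], [7, 8, 9]

# Nonzero entries can only sit where rho_k = kappa_i + lam_j.
support = [(i, j, k) for i in range(a) for j in range(b) for k in range(c)
           if T[i][j][k] != 0]
```

The support lies on the plane \( k = i + j \), which is the kind of cluster pattern an eigenbasis of a derivation exposes.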

Theorem (Brooksbank, Kassabov, Wilson '24)

(For trilinear form \(\tau: U \times V \times W \rightarrowtail \mathbb{F} \) )

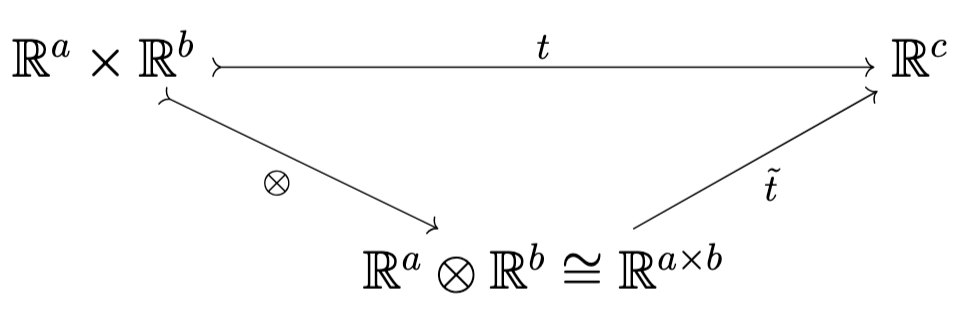

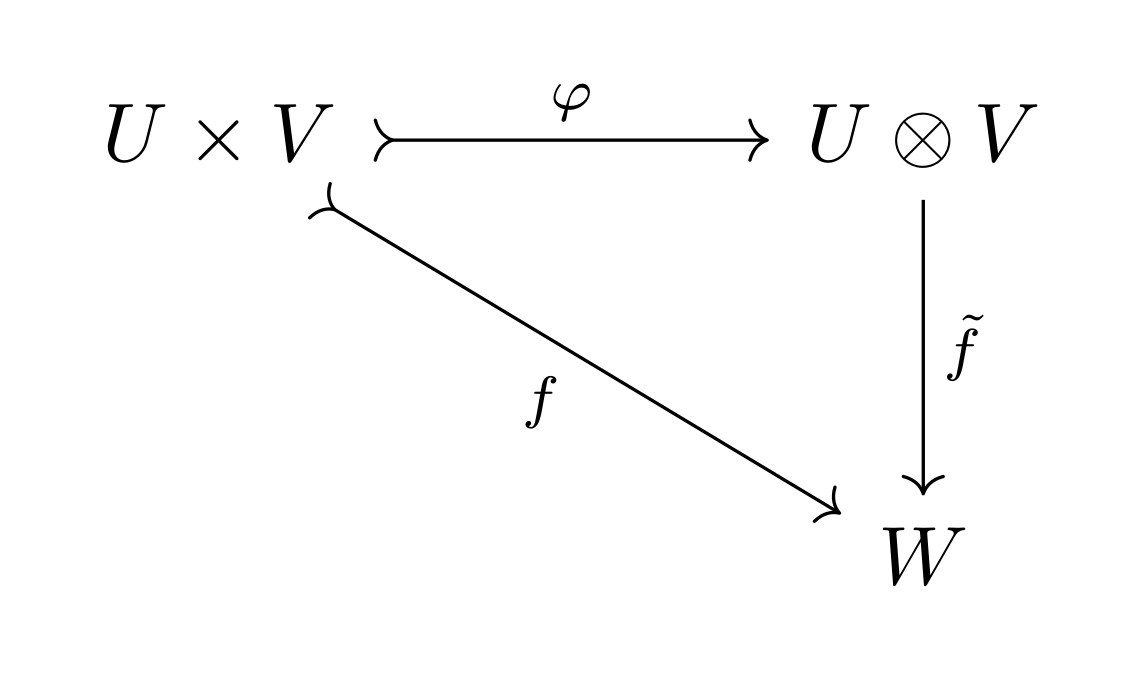

Tensors vs tensor product of vector spaces?

Given \(U,V\) vector spaces, the tensor product space is \( (U \otimes V, \varphi: U \times V \rightarrowtail U \otimes V) \)

such that for all bilinear maps \(f: U \times V \rightarrowtail W\), there exists unique induced linear map \(\tilde{f}: U \otimes V \rightarrow W\) such that \(f(u,v) = \tilde{f}(\varphi(u,v))\).

- \(U \otimes V\) is spanned by \( \{ \varphi(u,v) : u \in U, v \in V \}\)

- Pure tensors are elements \( \varphi(u,v) \in U \otimes V \), often denoted as \(u \otimes v\)

- Elements of \(U \otimes V\) are tensors in our sense after identifying \( U \otimes V \) with \((U \otimes V)^{\ast} \) for instance by a basis

"Theorem" (L.)

Faster (practical and theoretical) algorithm to compute the adjoint and derivation algebra of tensors

Three step algorithm

- solving a smaller restricted subsystem

- lifting the subsystem solution to a global candidate solution

- verify the global candidate solution

Let \(A \in \mathbb{F}^{a \times b \times c} \)

\( (R,S,T) = (A,-A, 0) \) gives adjoint

(Joint with James B. Wilson, Joshua Maglione)

Given

Find

Such that

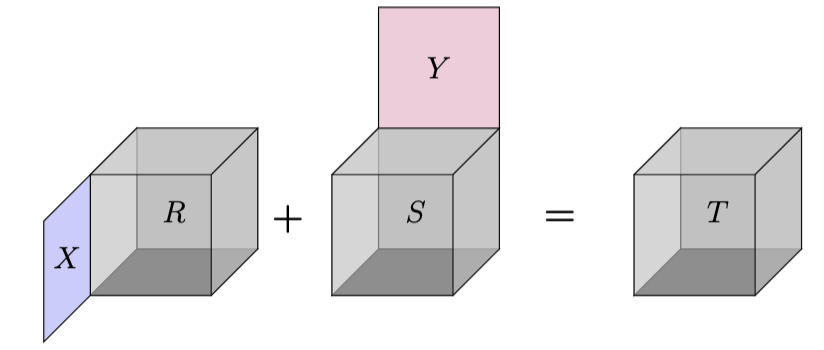

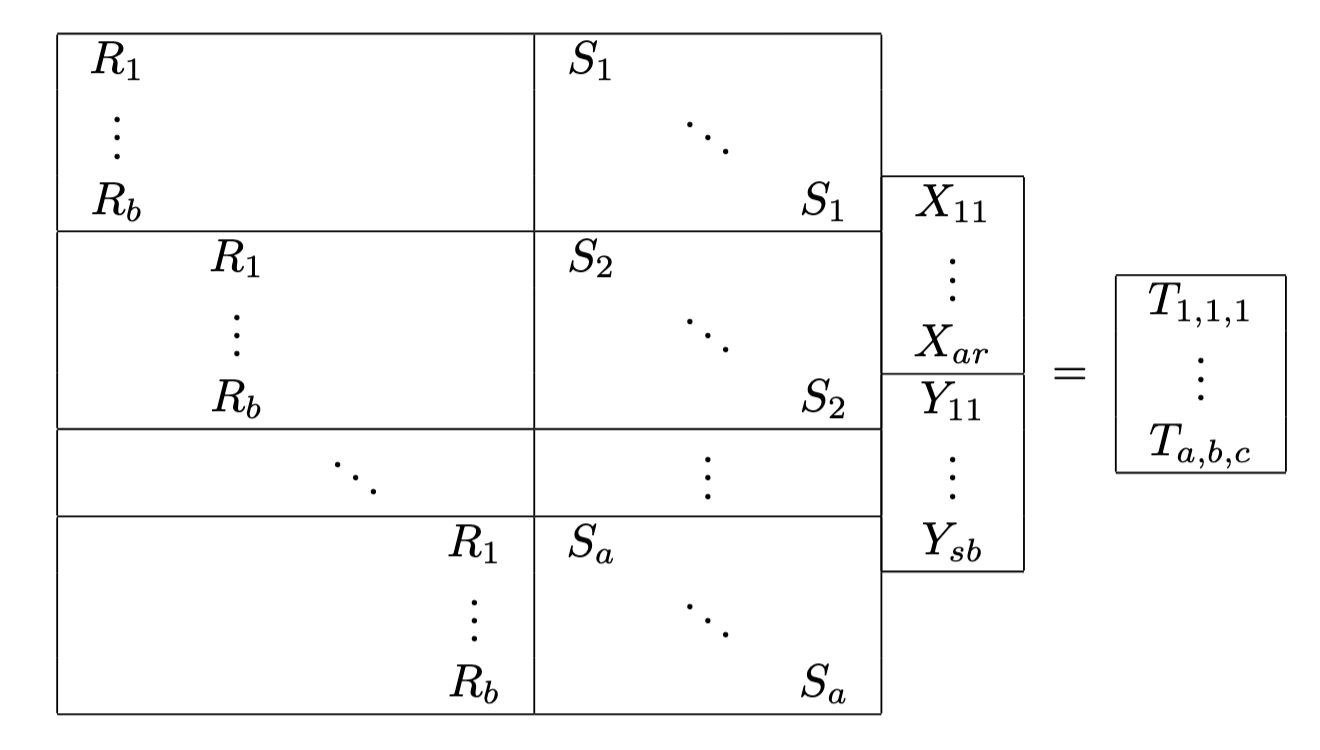

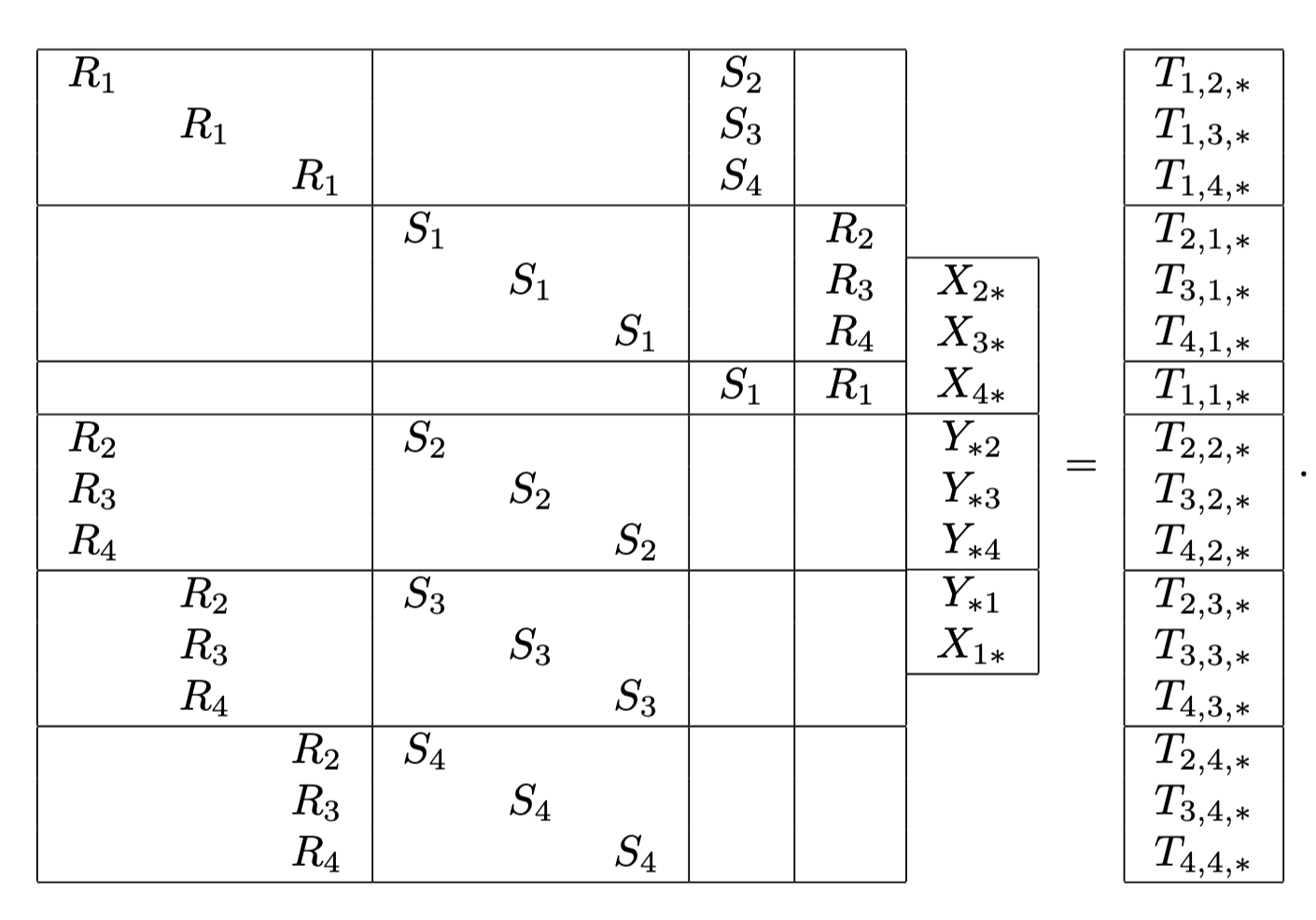

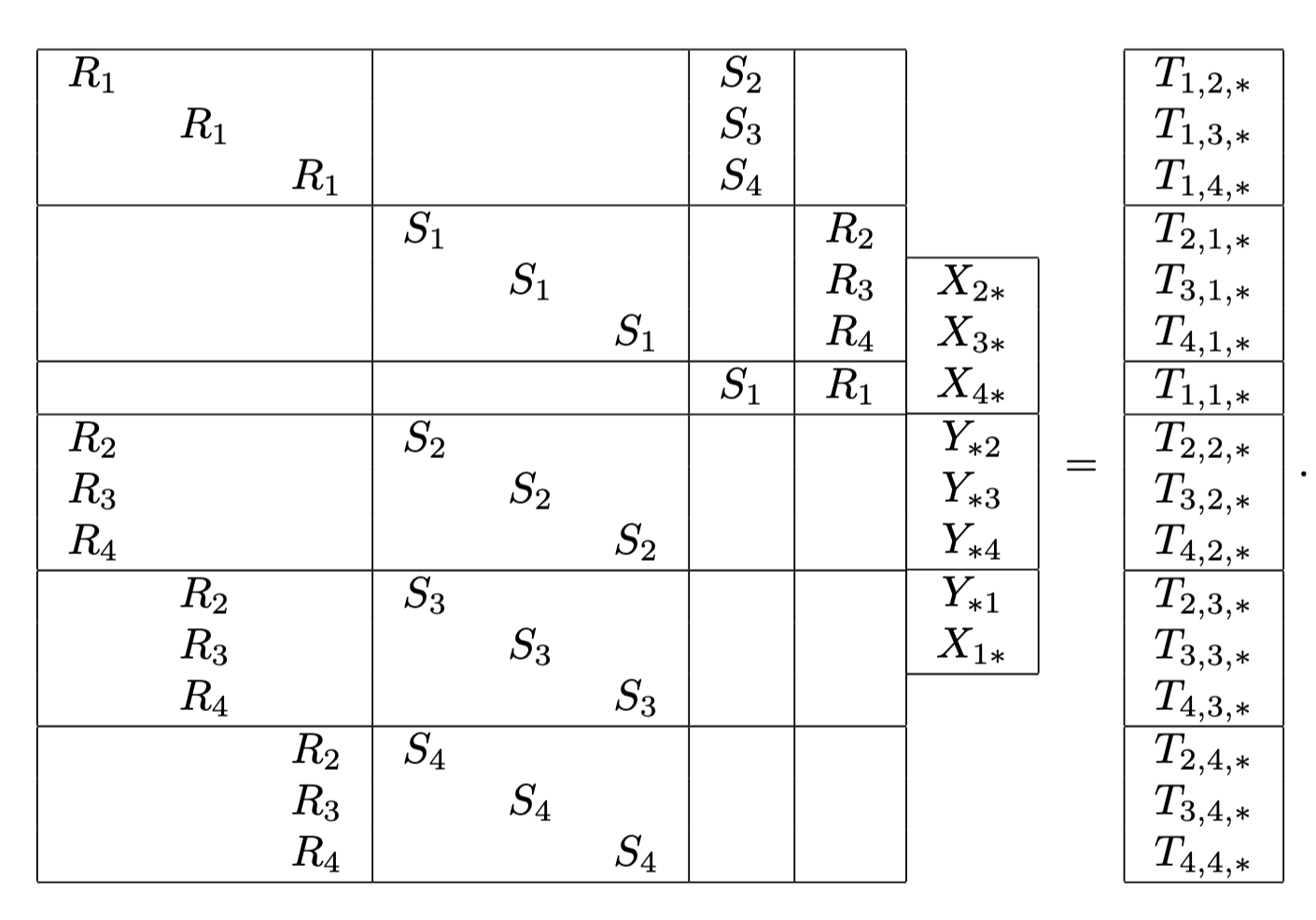

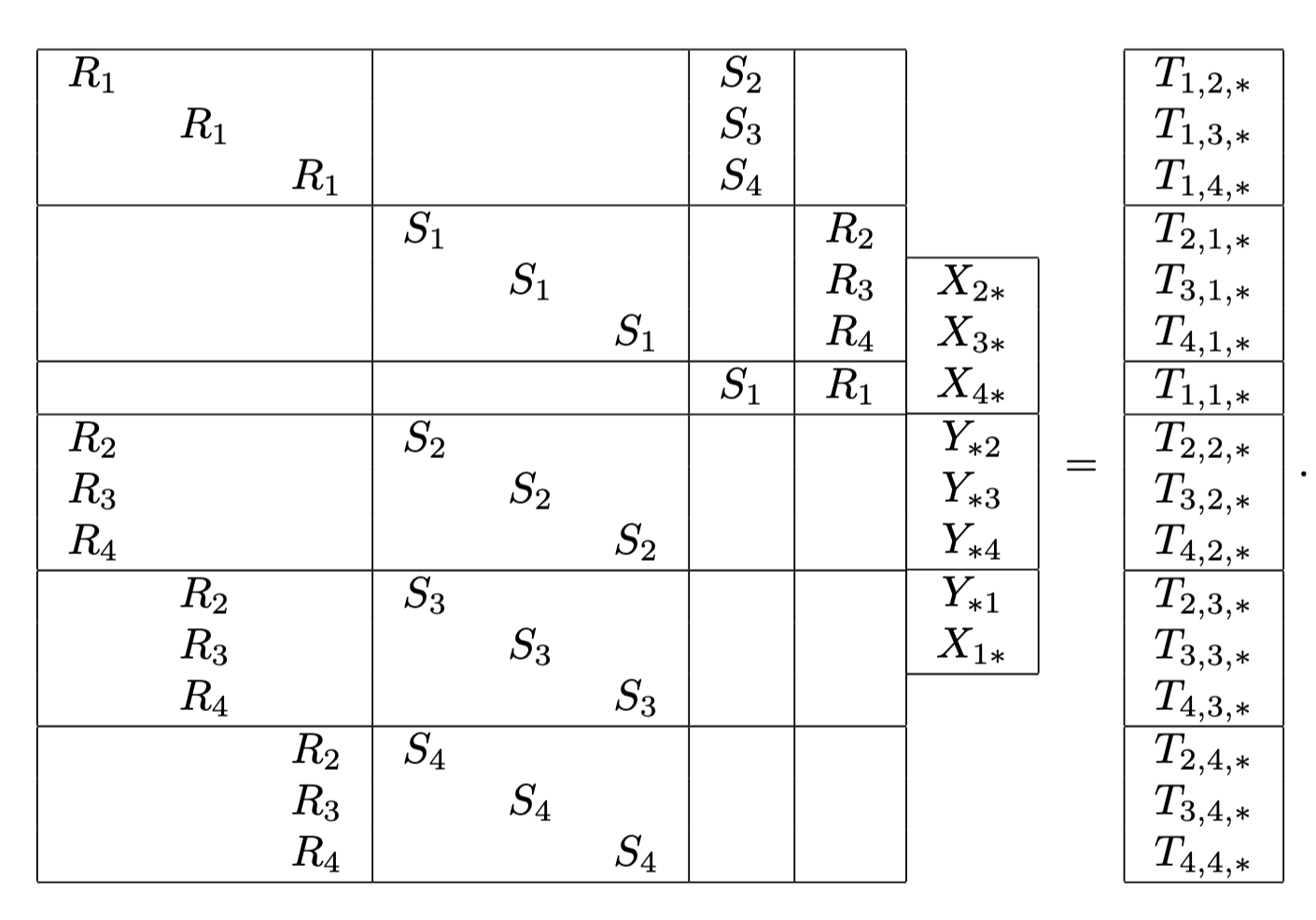

Simultaneous Sylvester System

Recall given \( t: \mathbb{F}^{a} \times \mathbb{F}^{b} \rightarrowtail \mathbb{F}^{c} \) $$\operatorname{Adj}(t) = \{(X,Y) \mid (\forall u,v)\;\; t(Xu,v) = t(u,Yv)\}$$

Corresponding baseline linear system

Example

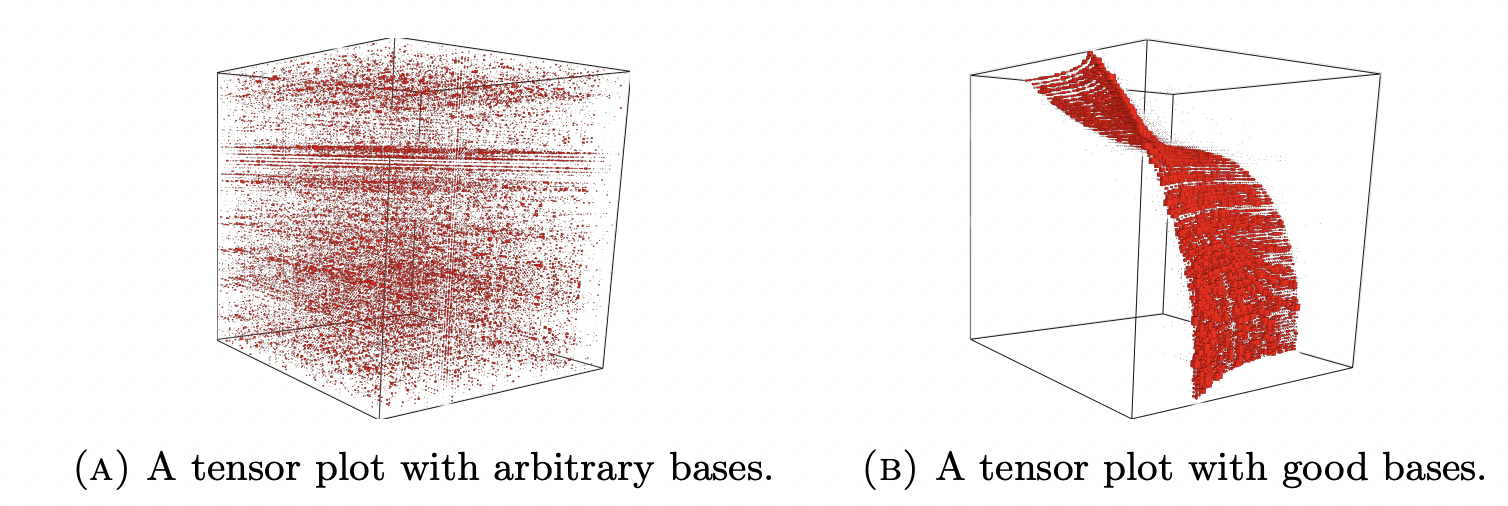

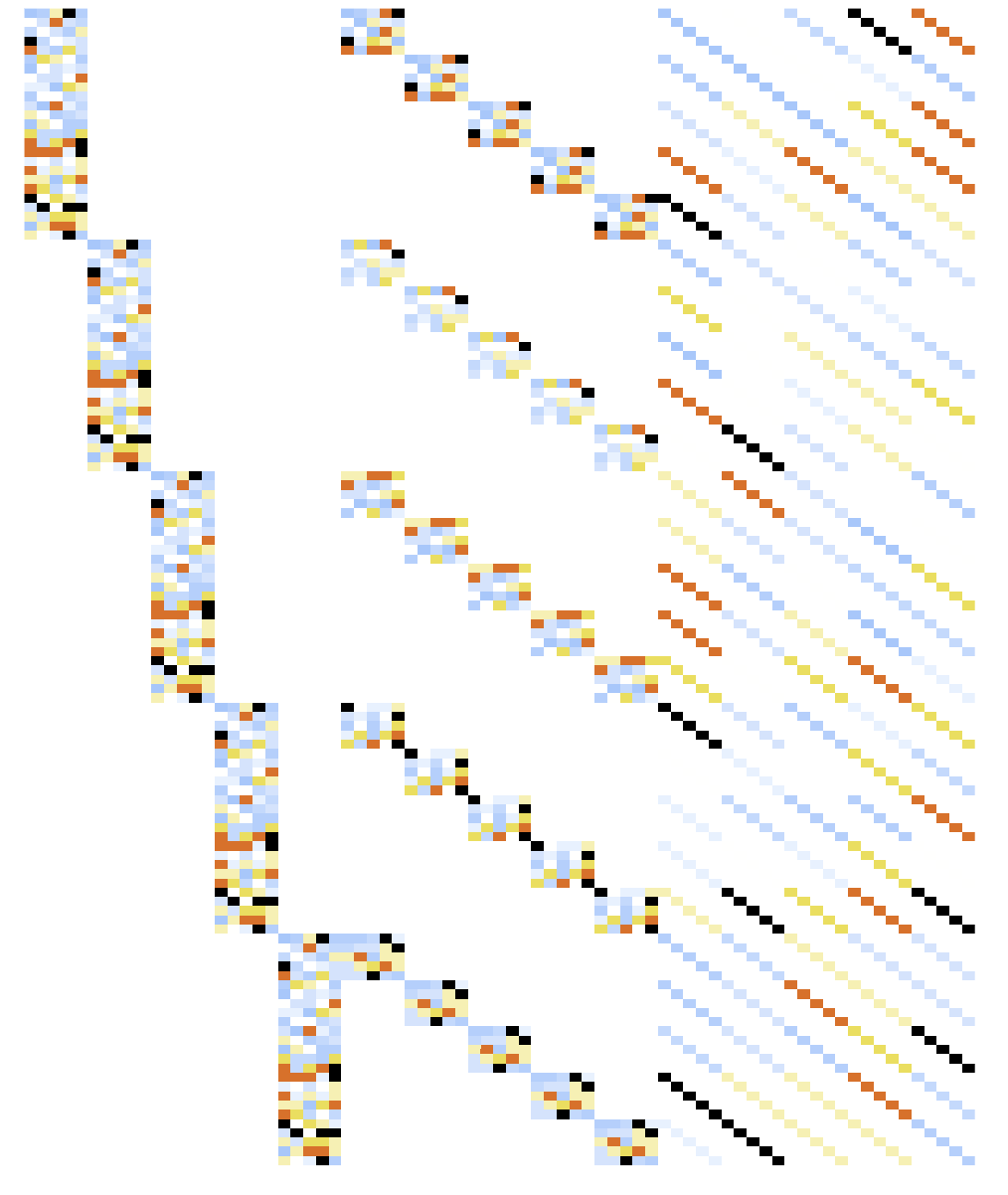

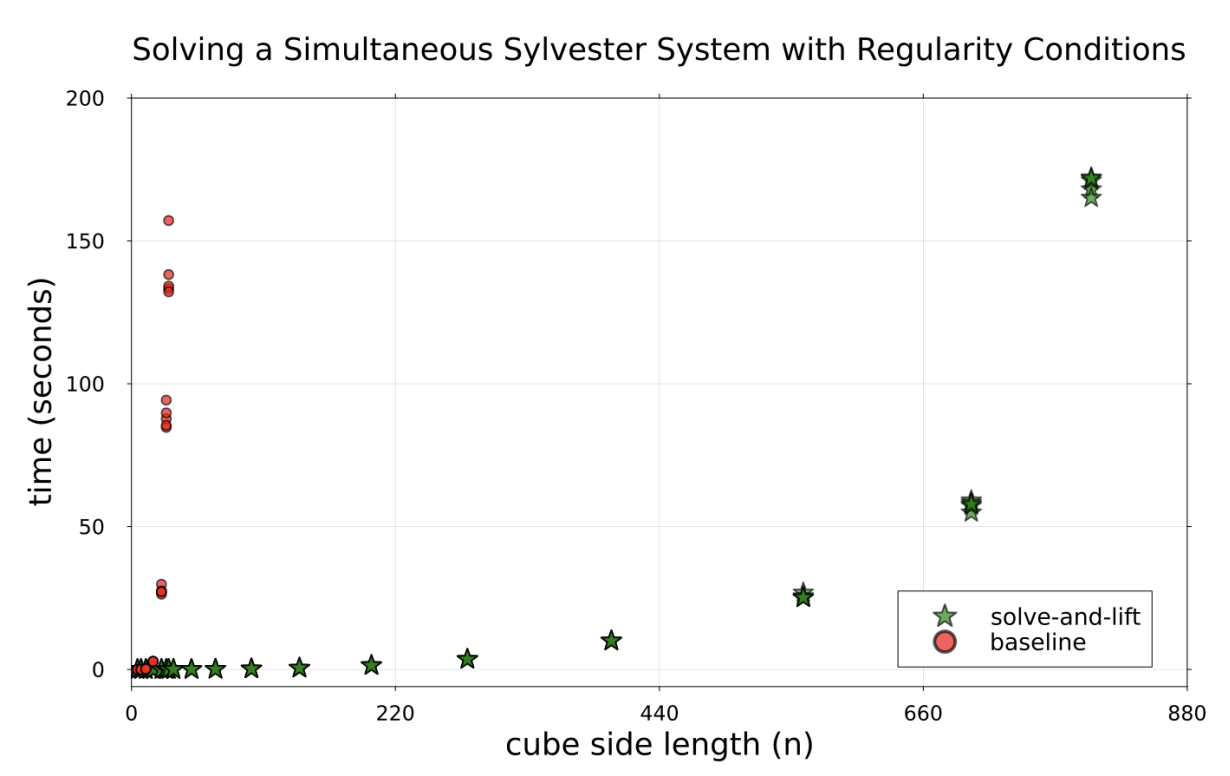

(LMW) Idea: Avoid big linear system altogether with a solve-lift-check approach

Linear system given by matrix of size \( (abc) \times (ar + bs) \) is \(O(n^7) \) to solve using Gaussian Elimination

(\(n = a+b+c+r+s \))
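For orientation, a sketch of the baseline approach in the adjoint special case only, where the unknowns are an \(a \times a\) matrix \(X\) and a \(b \times b\) matrix \(Y\), so the system has \(abc\) rows and \(a^2 + b^2\) columns. The function names, the rational arithmetic, and the dot-product test tensor are assumptions made for this example, not the talk's implementation.

```python
from fractions import Fraction

def adjoint_system(T):
    """One row per (i, j, k): sum_p X[p][i] T[p][j][k] - sum_q Y[q][j] T[i][q][k] = 0.
    Unknowns are the entries X[p][i] (a*a of them), then Y[q][j] (b*b of them)."""
    a, b, c = len(T), len(T[0]), len(T[0][0])
    rows = []
    for i in range(a):
        for j in range(b):
            for k in range(c):
                row = [Fraction(0)] * (a * a + b * b)
                for p in range(a):      # coefficient of X[p][i] in t(X e_i, e_j)_k
                    row[p * a + i] += T[p][j][k]
                for q in range(b):      # coefficient of Y[q][j] in t(e_i, Y e_j)_k
                    row[a * a + q * b + j] -= T[i][q][k]
                rows.append(row)
    return rows

def nullity(rows):
    """Number of free variables after Gaussian elimination over the rationals."""
    rows = [r[:] for r in rows]
    ncols = len(rows[0])
    rank = 0
    for col in range(ncols):
        pivot = next((r for r in range(rank, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[rank], rows[pivot] = rows[pivot], rows[rank]
        for r in range(rank + 1, len(rows)):    # eliminate below the pivot
            if rows[r][col] != 0:
                factor = rows[r][col] / rows[rank][col]
                rows[r] = [x - factor * y for x, y in zip(rows[r], rows[rank])]
        rank += 1
    return ncols - rank

# Dot product on Q^2: T[i][j][0] = 1 when i == j.
DOT = [[[1], [0]], [[0], [1]]]
system = adjoint_system(DOT)
adj_dimension = nullity(system)
```

For the dot product the equations say \( Y = X^{T} \), so the solution space has dimension 4. The dense elimination here is exactly the cost that the solve-lift-check idea aims to avoid.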

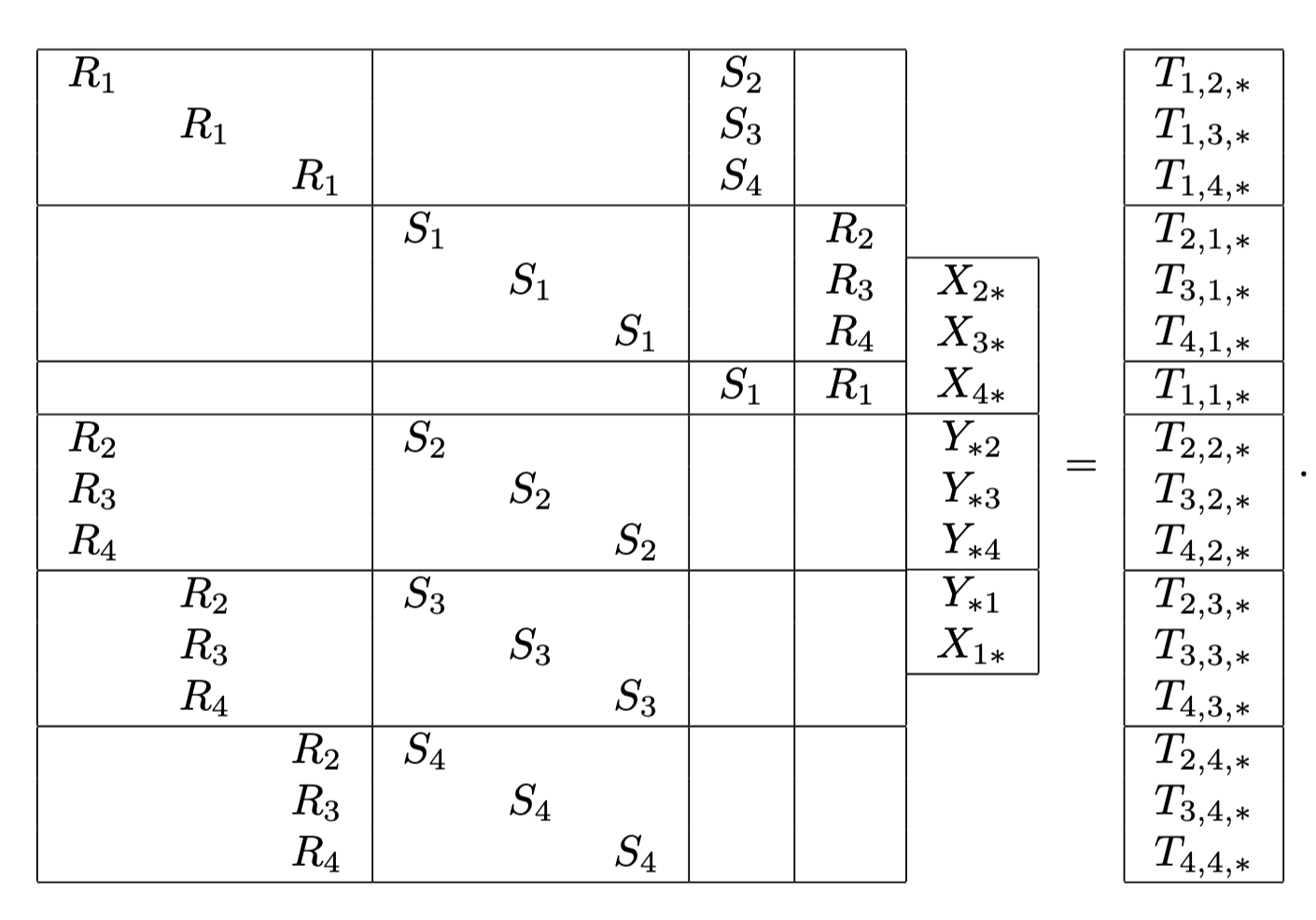

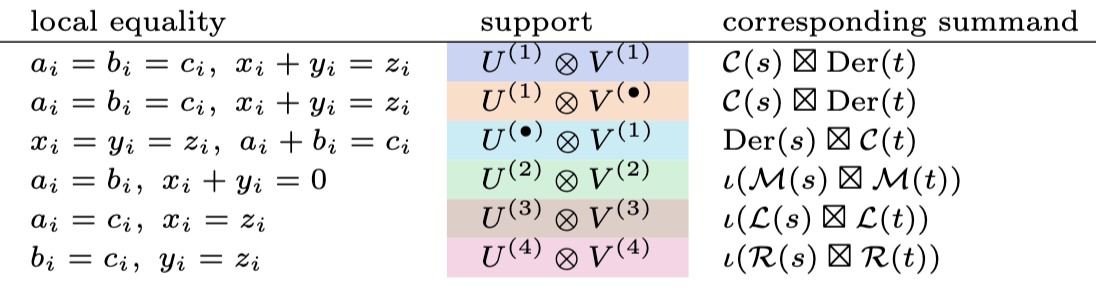

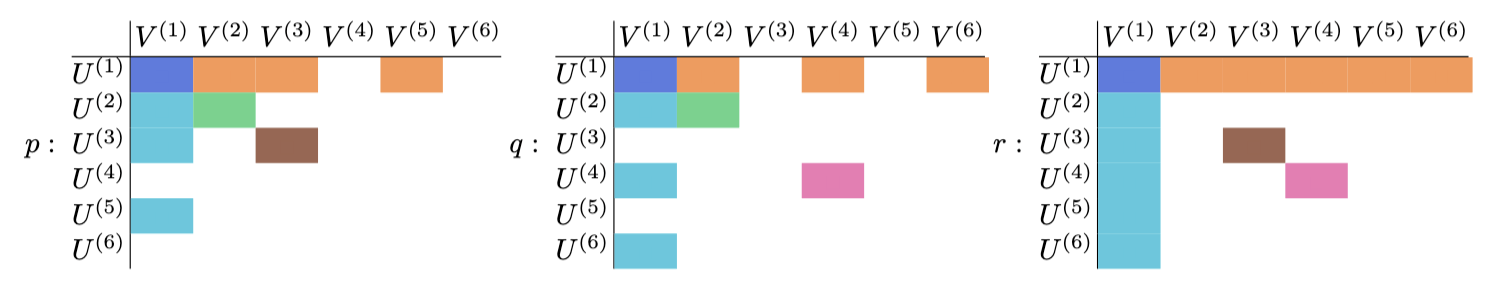

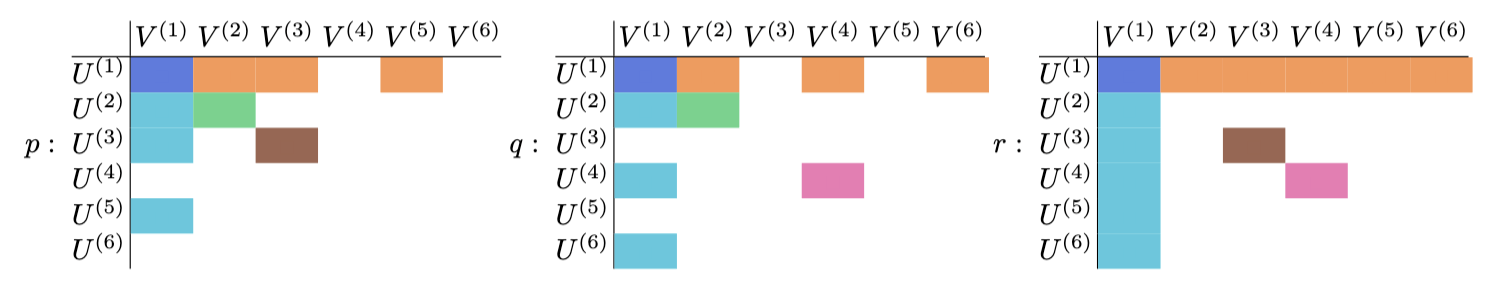

Permute rows & columns

Idea: solve-lift-check approach

\( I \)

\( J \)

\( M_R \)

\( M_S \)

(L.) Distill to

- Solve \( Mx=b \) systems

- Solve \( MX=N(\theta) \) parametrized systems

- Solve \(Au+\alpha = Bv + \beta\) systems

- Restrict to \(I \subset [a]\) for \(S\), and \(J \subset [b] \) for \(R\)

- Solve restricted system for \( (X_{DR}, Y_{DR}) \)

- Backsub \( M_R X = N_R(Y_{DR}) \)

- Backsub \( M_S Y = N_S(X_{DR}) \)

- Verify

Given \(M \in \mathbb{F}^{m \times n} \), and \( N: \Theta \rightarrow \mathbb{F}^{m \times \ell} \) affine map, solve for \(X \in \mathbb{F}^{n \times \ell} \)

Given matrices \(A,B\) and constants \( \alpha, \beta\), solve for all pairs \( (u,v) \)

regular family of instances

- (Bounded dimension) \( \operatorname{dim}(\Theta_{DR}) \leq C\)

- (Lift feasibility if restricted system has solutions)

- \( N_R(Y_{DR}) \subseteq \operatorname{Im}(M_R) \)

- \( N_S(X_{DR}) \subseteq \operatorname{Im}(M_S) \)

- (Lift uniqueness) \( \operatorname{Ker}(M_R) = \operatorname{Ker}(M_S) = \{0\} \)

Theorem A (L.)

Given a regular family, and a black-box algorithm for \( (I,J) \), the solve-and-lift approach takes \(O(n^4)\) arithmetic operations for deterministic solutions.

Remark - The black-box restriction algorithm is needed for deterministic runtimes. Randomized algorithms exist.

Recall backsub \( M_R X = N_R(Y_{DR}) \), \( M_S Y = N_S(X_{DR}) \)

The assumption is reasonable for natural classes of instances (e.g. generic cubic tensors with an overdetermined restricted system)

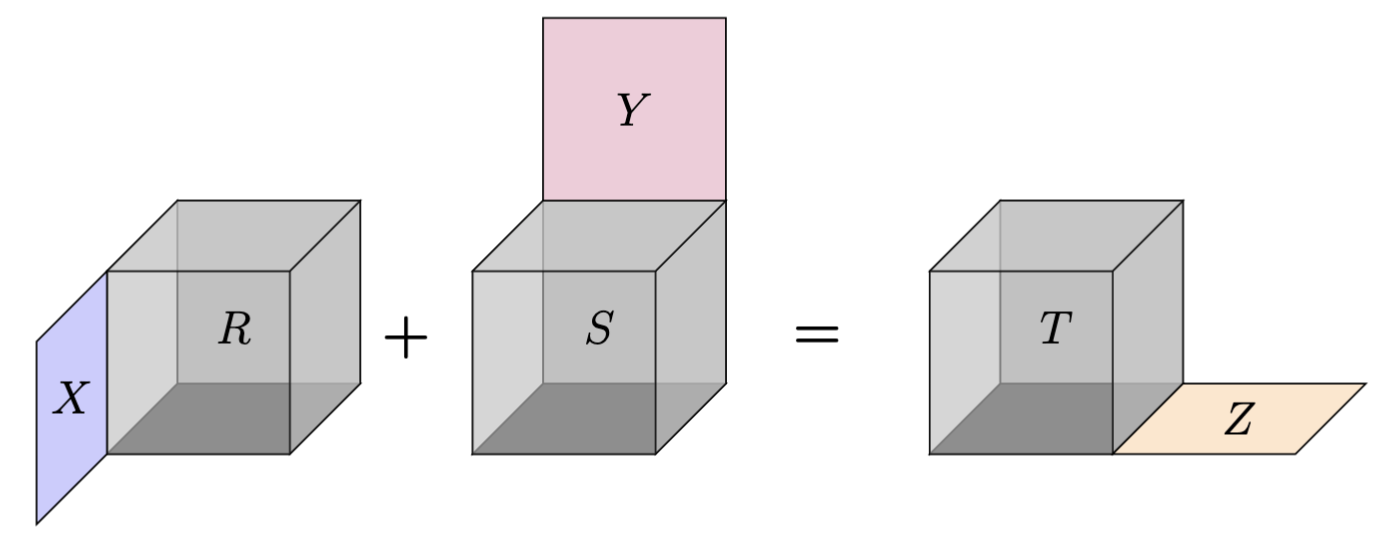

Derivation Equation

Let \(A \in \mathbb{F}^{a \times b \times c} \)

\( (R,S,T) = (A,A, A) \) gives derivation

Recall given \( t: \mathbb{F}^{a} \times \mathbb{F}^{b} \rightarrowtail \mathbb{F}^c \)

$$\operatorname{Der}(t) = \{(X,Y,Z) \mid (\forall u,v) \; Z(t(u,v)) = t(Xu,v) + t(u,Yv) \}$$

Given

Find

Such that

Can you tell \(R \neq S \neq T \) here?

Theorem B (L.)

Given a regular family, and a black-box algorithm for \( (I,J,K) \), the solve-and-lift approach takes \(O(n^{4.5})\) arithmetic operations for deterministic solutions.

The analogous regular family for derivations ensures the bounded dimension and unique lifting properties

- Restrict to \(I \subset [a]\) for \(S\), \(J \subset [b] \) for \(R\), and \(K \subset [c] \) for \(T\)

- Solve restricted system for \( (X_{TR}, Y_{TR}, Z_{TR}) \)

- Backsub for \( X,Y,Z \)

- Verify on unrestricted indices

Remark - Extra \( n^{0.5} \) factor is due to the restricted system having \( O(n^{1.5})\) variables

(baseline) \( \text{Der}(t) \) is nullspace of

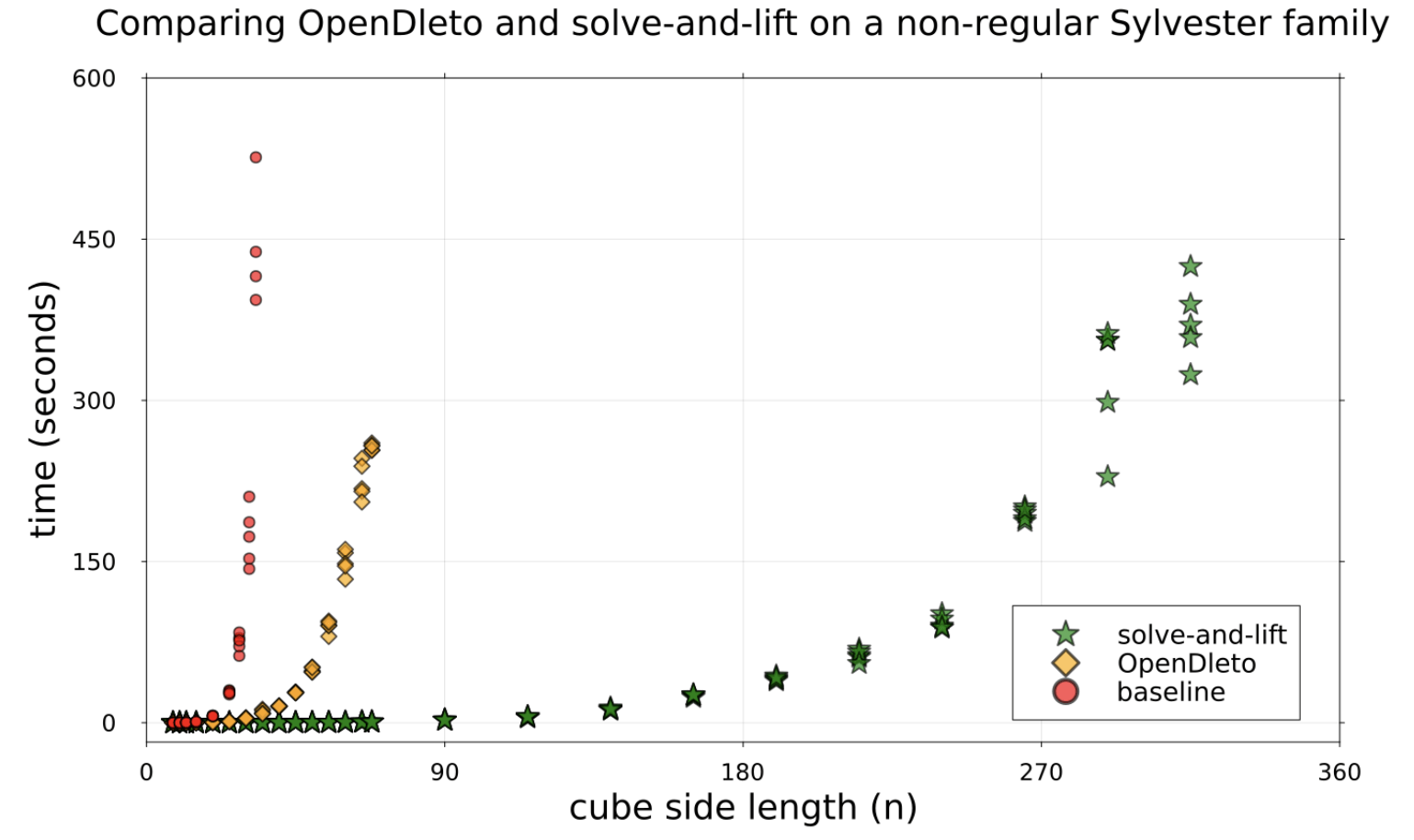

Performance on regular family with one solution realizes theoretical improvements.

Performance on non-regular family with \(n\) solutions for \( (n \times n \times n) \) tensors still shows improvement

Extras cut for time

Remark - As \(O(n^4)\) cost is needed to deterministically verify a solution, this is the best complexity we can expect for a deterministic solution.
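One way to see where a quartic count comes from, as a sketch only: the direct check of \( t(Xe_i, e_j) = t(e_i, Ye_j) \) over all \( (i,j,k) \) uses \( abc(a+b) \) scalar multiplications, which is \( 2m^4 \) for an \( m \times m \times m \) tensor. Here \(m\) is the side length, an assumption of this example rather than the talk's \(n\). The code counts the operations of this naive check; it is not the lower-bound argument.

```python
def verify_adjoint(T, X, Y):
    """Return (ok, mults): whether (X, Y) satisfies the adjoint equations,
    and how many scalar multiplications the direct check used."""
    a, b, c = len(T), len(T[0]), len(T[0][0])
    mults = 0
    ok = True
    for i in range(a):
        for j in range(b):
            for k in range(c):
                left = 0
                for p in range(a):
                    left += X[p][i] * T[p][j][k]
                    mults += 1
                right = 0
                for q in range(b):
                    right += Y[q][j] * T[i][q][k]
                    mults += 1
                ok = ok and (left == right)
    return ok, mults

m = 3
# Made-up 3 x 3 x 3 tensor and the trivial candidate (identity, identity).
CUBE = [[[(i + 2 * j + 3 * k) % 5 for k in range(m)] for j in range(m)]
        for i in range(m)]
IDENT = [[1 if r == s else 0 for s in range(m)] for r in range(m)]
ok, mults = verify_adjoint(CUBE, IDENT, IDENT)
```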

"Theorem" (L.)

Decomposing the algebraic invariants of the product of tensors

Tensor products of algebras

Let \( A, B \) be unital associative \( \mathbb{F} \)-algebras

\( A \otimes B \) is a unital associative \(\mathbb{F}\)-algebra, with multiplication

\[ (a \otimes b)(c \otimes d) = ac \otimes bd \]

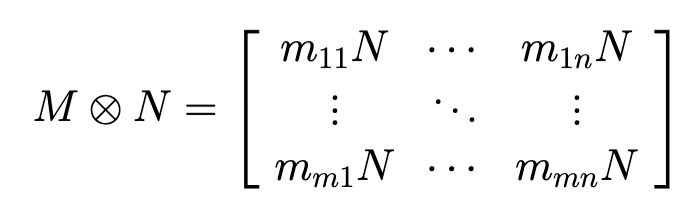

Example: Kronecker product of matrices
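For matrices, the rule \( (a \otimes b)(c \otimes d) = ac \otimes bd \) is the mixed-product property of the Kronecker product. A minimal check in plain Python, with made-up integer matrices and helper names chosen for this example:

```python
def matmul(A, B):
    """Ordinary matrix product."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def kron(A, B):
    """Kronecker product; row index (i, k) and column index (j, l), row-major."""
    return [[A[i][j] * B[k][l]
             for j in range(len(A[0])) for l in range(len(B[0]))]
            for i in range(len(A)) for k in range(len(B))]

A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 1]]
C = [[2, 0], [1, 5]]
D = [[1, -1], [2, 3]]

lhs = matmul(kron(A, B), kron(C, D))      # (A (x) B)(C (x) D)
rhs = kron(matmul(A, C), matmul(B, D))    # AC (x) BD
```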

Example: Extending scalars (Recall \( \mathbb{H} \) are the Quaternions)

\( u = i_{\mathbb{H}} \otimes i_{\mathbb{C}} \) satisfies \( u^2 = 1 \), so the idempotent \(\frac{1+u}{2} \) splits the algebra

\[ 1 \otimes 1 \mapsto \begin{bmatrix}1 & 0 \\ 0 & 1\end{bmatrix}, i \otimes 1 \mapsto \begin{bmatrix}i & 0\\ 0 & -i\end{bmatrix}, j \otimes 1 \mapsto \begin{bmatrix}0 & 1\\-1 & 0\end{bmatrix}, k \otimes 1 \mapsto \begin{bmatrix}0 & i \\ i & 0\end{bmatrix}\]
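The displayed matrices can be sanity-checked against the quaternion relations using Python's built-in complex numbers. The last lines additionally assume that \( 1 \otimes i_{\mathbb{C}} \) acts as the scalar \(i\), which the slide does not state explicitly. Under that assumption \(u\) becomes \( \operatorname{diag}(-1, 1) \) and \( \frac{1+u}{2} \) is a diagonal idempotent.

```python
def matmul(A, B):
    """Ordinary matrix product (entries may be complex)."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def scale(c, A):
    """Multiply every entry of A by the scalar c."""
    return [[c * x for x in row] for row in A]

ONE = [[1, 0], [0, 1]]
QI = [[1j, 0], [0, -1j]]    # image of i (x) 1
QJ = [[0, 1], [-1, 0]]      # image of j (x) 1
QK = [[0, 1j], [1j, 0]]     # image of k (x) 1
MINUS_ONE = scale(-1, ONE)

# Assumption: 1 (x) i_C acts as the scalar i, so u = i_H (x) i_C maps to i * QI.
U = scale(1j, QI)
E = [[(ONE[r][c] + U[r][c]) / 2 for c in range(2)] for r in range(2)]
```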

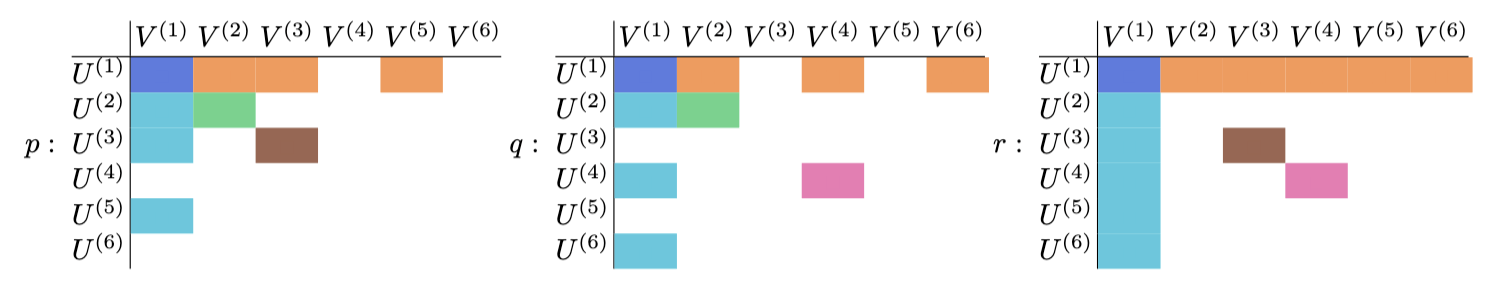

Define on multiplication tables

\(\mathbb{F}[y]/(y^2+5y+10) \)

\( \mathbb{F}[\sqrt{-1}] \)

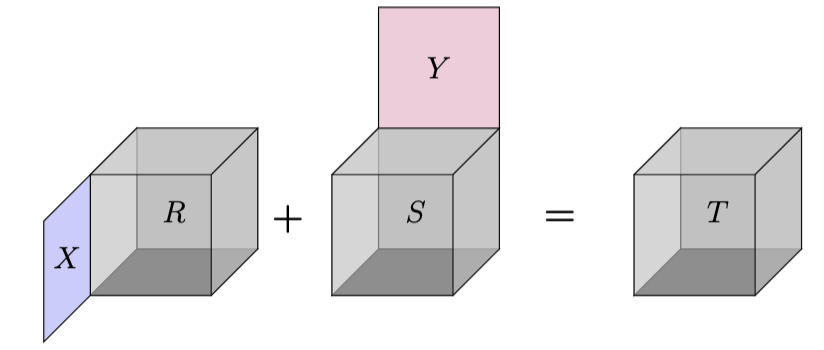

Extend to heterogeneous maps \( s: U \times V \rightarrowtail W \) and \(t: X \times Y \rightarrowtail Z \)

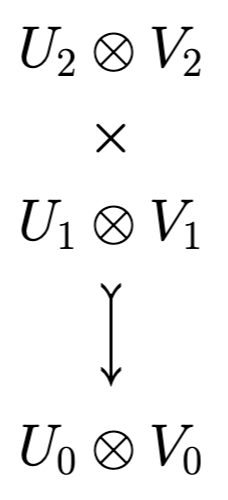

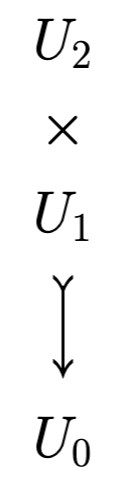

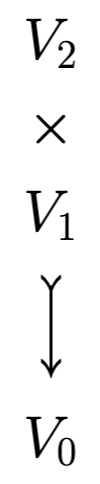

Let \( s: U \times V \rightarrowtail W \) and \(t: X \times Y \rightarrowtail Z \).

Define \( s \otimes t: (U \otimes X) \times (V \otimes Y) \rightarrowtail (W \otimes Z) \) as

\[ (s \otimes t)(u\otimes x, v \otimes y) = s(u,v) \otimes t(x,y) \]

Product of bilinear maps

Let \(s: \mathbb{F}^2 \times \mathbb{F} \rightarrowtail \mathbb{F}^2 \) and \( t: \mathbb{F} \times \mathbb{F}^3 \rightarrowtail \mathbb{F}^3 \) both be bilinear maps corresponding to scaling by \( \mathbb{F} \)

\( s \otimes t \) is a bilinear map from \( (\mathbb{F}^2 \otimes \mathbb{F}) \times (\mathbb{F} \otimes \mathbb{F}^3)\) to \((\mathbb{F}^2 \otimes \mathbb{F}^3) \)

\( s \otimes t \cong r \), for \(r: \mathbb{F}^2 \times \mathbb{F}^3 \rightarrowtail \mathbb{F}^6 \) the outer product tensor
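A sketch of this example on grids, assuming the product grid has entries \( S_{ijk} T_{xyz} \) with each index pair flattened in row-major order (a convention chosen here, not taken from the slides). With that convention the product of the two scaling maps is literally the outer product grid.

```python
def tensor_kron(S, T):
    """Grid of s (x) t: entry ((i,x), (j,y), (k,z)) is S[i][j][k] * T[x][y][z],
    with each pair flattened row-major."""
    return [[[S[i][j][k] * T[x][y][z]
              for k in range(len(S[0][0])) for z in range(len(T[0][0]))]
             for j in range(len(S[0])) for y in range(len(T[0]))]
            for i in range(len(S)) for x in range(len(T))]

# s: F^2 x F -> F^2, s(u, c) = c * u, so S[i][0][k] = 1 when i == k.
S = [[[1 if k == i else 0 for k in range(2)]] for i in range(2)]
# t: F x F^3 -> F^3, t(c, v) = c * v, so T[0][j][k] = 1 when j == k.
T = [[[1 if k == j else 0 for k in range(3)] for j in range(3)]]

P = tensor_kron(S, T)

# Outer product r: F^2 x F^3 -> F^6 with r(e_i, e_j) = e_{3i + j}.
R = [[[1 if m == 3 * i + j else 0 for m in range(6)]
      for j in range(3)] for i in range(2)]
```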

Example

Axis action equalities

Let \(t: U_2 \times U_1 \rightarrowtail U_0\). For \(\alpha \in \operatorname{End}(U_2), \beta \in \operatorname{End}(U_1), \gamma \in \operatorname{End}(U_0)\), axis actions are

$$\mathcal{L}(t) = \{(\alpha, \gamma) : t \bullet_2 \alpha = t \bullet_0 \gamma \}$$

$$\mathcal{M}(t) = \{(\alpha, \beta) : t \bullet_2 \alpha = t \bullet_1 \beta \}$$

$$\mathcal{R}(t) = \{(\beta, \gamma) : t \bullet_1 \beta = t \bullet_0 \gamma \}$$

$$\mathcal{C}(t) = \{(\alpha, \beta, \gamma) : t \bullet_2 \alpha = t \bullet_1 \beta = t \bullet_0 \gamma \}$$

$$\operatorname{Der}(t) = \{(\alpha, \beta, \gamma) : t \bullet_2 \alpha + t \bullet_1 \beta = t \bullet_0 \gamma \}$$

left nucleus

mid nucleus (adjoint)

right nucleus

centroid

derivation

\( \mathcal{LMR}(t) \)

Results for nuclei

Definition (\( \boxtimes \))

Let \(\alpha_{\ast} = (\alpha_2, \alpha_1, \alpha_0) \), and \( f_{\ast} = (f_2, f_1, f_0) \), where \( \alpha_i \in \operatorname{End}(U_i) \), and \(f_i \in \operatorname{End}(V_i) \)

Corollary (Wilson Propositions 7.8 and 7.9, "Decomposing \(p\)-groups via Jordan Algebras")

Let \(d: U \times U \rightarrowtail C\) be a nondegenerate Hermitian \(C\)-form and \(b: V \times V \rightarrowtail W \) a \(k\)-bilinear map. Then \( \operatorname{Adj}(d \otimes b) = \operatorname{Adj}(d) \otimes \operatorname{Adj}(b) \).

Define \( \alpha_{\ast} \boxtimes f_{\ast} \coloneqq (\alpha_2 \otimes f_2, \alpha_1 \otimes f_1, \alpha_0 \otimes f_0) \), where \(\alpha_i \otimes f_i \in \operatorname{End}(U_i \otimes V_i) \)

Theorem C (L.)

Let \(s: U_2 \times U_1 \rightarrowtail U_0\) and \(t: V_2 \times V_1 \rightarrowtail V_0 \) be fully non-degenerate bilinear maps. Then

$$\mathcal{LMR}(s \otimes t) = \mathcal{LMR}(s) \boxtimes \mathcal{LMR}(t)$$

Recall \(\mathcal{LMR}(s) = \mathcal{L}(s) \oplus \mathcal{M}(s) \oplus \mathcal{R}(s) \)
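The containment \( \mathcal{M}(s) \boxtimes \mathcal{M}(t) \subseteq \mathcal{M}(s \otimes t) \) is the easy direction and can be checked numerically. The sketch below uses two made-up inputs: the dot product on \( \mathbb{F}^2 \), whose mid nucleus contains \( (\alpha, \alpha^{T}) \), and the multiplication of \( \mathbb{F}[x]/(x^2-3x+2) \) in the basis \( (1, x) \), whose mid nucleus contains \( (L_x, L_x) \). It does not test the reverse containment, which is the content of Theorem C.

```python
def kron(A, B):
    """Kronecker product of matrices, pairs flattened row-major."""
    return [[A[i][j] * B[k][l]
             for j in range(len(A[0])) for l in range(len(B[0]))]
            for i in range(len(A)) for k in range(len(B))]

def tensor_kron(S, T):
    """Grid of s (x) t, using the same row-major flattening on each axis."""
    return [[[S[i][j][k] * T[x][y][z]
              for k in range(len(S[0][0])) for z in range(len(T[0][0]))]
             for j in range(len(S[0])) for y in range(len(T[0]))]
            for i in range(len(S)) for x in range(len(T))]

def in_mid_nucleus(T, X, Y):
    """Check t(X e_i, e_j) == t(e_i, Y e_j) coordinate by coordinate."""
    a, b, c = len(T), len(T[0]), len(T[0][0])
    return all(sum(X[p][i] * T[p][j][k] for p in range(a)) ==
               sum(Y[q][j] * T[i][q][k] for q in range(b))
               for i in range(a) for j in range(b) for k in range(c))

def transpose(A):
    return [list(col) for col in zip(*A)]

S = [[[1], [0]], [[0], [1]]]                 # dot product on F^2
T = [[[1, 0], [0, 1]], [[0, 1], [-2, 3]]]    # F[x]/(x^2 - 3x + 2), basis (1, x)
alpha = [[1, 2], [3, 4]]
Lx = [[0, -2], [1, 3]]                       # multiplication by x

local_s = in_mid_nucleus(S, alpha, transpose(alpha))
local_t = in_mid_nucleus(T, Lx, Lx)
global_st = in_mid_nucleus(tensor_kron(S, T),
                           kron(alpha, Lx), kron(transpose(alpha), Lx))
```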

Suppose \( (x,y) \in \mathcal{M}(s \otimes t) \), meaning \( (s \otimes t) \bullet_2 x = (s \otimes t) \bullet_1 y \)

Decompose \( x = \alpha_1 \otimes f_1 + \alpha_2 \otimes f_2 \) and \( y = \beta_1 \otimes g_1 + \beta_2 \otimes g_2 \)

Recall \(x \in \operatorname{End}(U_2 \otimes V_2) \cong \operatorname{End}(U_2) \otimes \operatorname{End}(V_2) \)

\( (\tilde{\alpha}_i, \beta_i) \in \mathcal{M}(s) \)

\( (\tilde{f}_i, g_i) \in \mathcal{M}(t) \)

\(\exists M\) where \(AM = B\) and \(M^{-1}X = Y\)

\(AX = BY \) minimal rank factorizations

Upshot: global equality leads to local equalities

Field is \( \mathbb{F}_5 \)

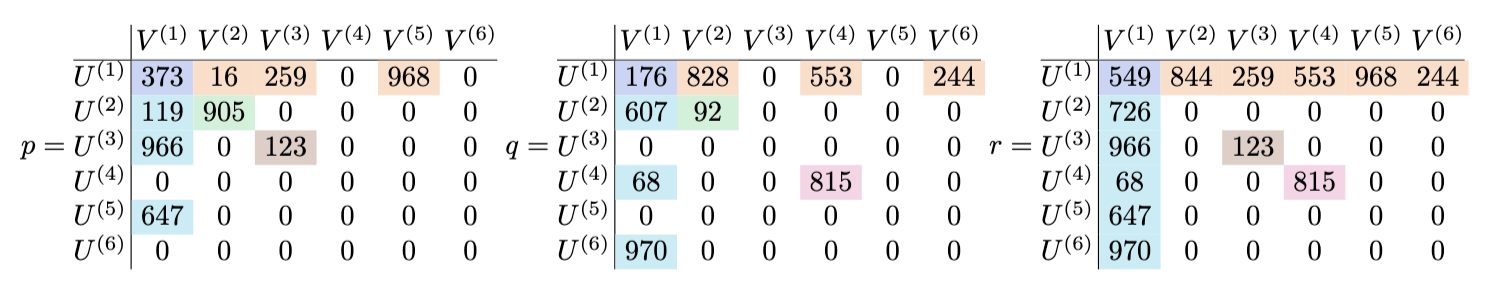

\( \operatorname{col}(A)=\operatorname{col}(AX)=\operatorname{col}(BY)=\operatorname{col}(B) \)

If all nonzero, then up to scalars, either

$$a=b=c \text{ and } x+y=z$$

or

$$a+b=c \text{ and } x=y=z$$

(Grad student's dream)

\( \operatorname{Der}(s \otimes t) = \operatorname{Der}(s) \boxtimes \operatorname{Der}(t) \)

But alas, not the case.

Consider \(a \otimes x + b \otimes y = c \otimes z\), a triple in \( \operatorname{Der}(s \otimes t) \)

In \( \operatorname{Der}(s) \boxtimes \operatorname{Der}(t) \) means that both

- \( (a,b,c) \in \operatorname{Der}(s) \), so \( (a+b=c) \)

- \( (x,y,z) \in \operatorname{Der}(t)\), so \( (x+y=z) \)

Why?

Must have shared factor for sum of two pure tensors to be rank 1

Instead, term in either \( \mathcal{C}(s) \boxtimes \operatorname{Der}(t) \) or \( \operatorname{Der}(s) \boxtimes \mathcal{C}(t) \)

The nuclei case applies when one term is zero

Not possible as \( c \otimes z = (a+b) \otimes (x + y) \) has cross terms
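The rank obstruction can be seen on small vectors. In this sketch pure tensors are modeled as outer-product matrices, and a matrix has rank at most 1 exactly when all of its \(2 \times 2\) minors vanish. The vectors are made up for illustration.

```python
def outer(u, v):
    """The pure tensor u (x) v as a matrix."""
    return [[ui * vj for vj in v] for ui in u]

def add(A, B):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(A, B)]

def rank_at_most_one(M):
    """True when every 2 x 2 minor of M vanishes."""
    rows, cols = len(M), len(M[0])
    return all(M[i][j] * M[k][l] == M[i][l] * M[k][j]
               for i in range(rows) for k in range(rows)
               for j in range(cols) for l in range(cols))

a, b = [1, 0], [0, 1]
x, y = [1, 0], [0, 1]

no_shared_factor = add(outer(a, x), outer(b, y))      # identity matrix, rank 2
shared_left = add(outer([1, 2], x), outer([1, 2], y)) # equals [1, 2] (x) (x + y)
with_cross_terms = outer([a[0] + b[0], a[1] + b[1]],
                         [x[0] + y[0], x[1] + y[1]])  # (a + b) (x) (x + y)
```

Without a shared factor the sum has rank 2, so it cannot equal a single pure tensor \( c \otimes z \). And \( (a+b) \otimes (x+y) \) differs from \( a \otimes x + b \otimes y \) by the cross terms \( a \otimes y + b \otimes x \).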

Theorem D (L.)

Let \(s: U_2 \times U_1 \rightarrowtail U_0\) and \(t: V_2 \times V_1 \rightarrowtail V_0 \) be fully non-degenerate bilinear maps.

Then

Results for derivation

Corollary (Benkart-Osborn, Corollary 4.9, Derivations and Automorphisms of Nonassociative Matrix Algebras)

Let \(A\) be a unital algebra. Then \( \operatorname{Der}(\mathbb{M}_n(A)) = I_n \otimes \operatorname{Der}(A) + \operatorname{ad}(\mathbb{M}_n(\operatorname{Nuc}(A))) \)

Recall for \(u \in \operatorname{End}(A)\), the adjoint action \(\operatorname{ad}(u) \in \operatorname{Der}(A) \) is \( x \mapsto ux - xu \)

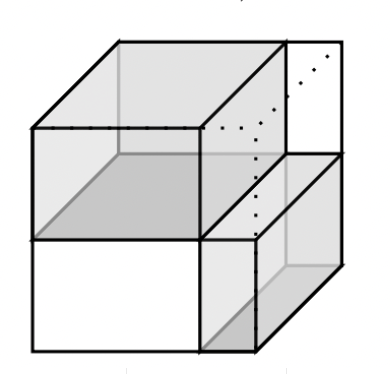

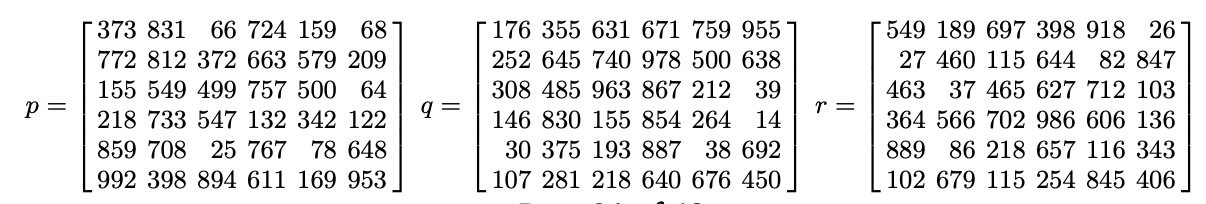

A Locally Independent Unified (LIU)-decomposition of \( (p,q,r) \) consists of a natural number \(n\) and vectors \(a_i \in A, x_i \in X, b_i \in B, y_i \in Y, c_i \in C, z_i \in Z\) such that

- (Decomposition Equality) \( p = \sum_{i=1}^{n} a_i \otimes x_i\), \(q = \sum_{i=1}^{n} b_i \otimes y_i \), and \(r = \sum_{i=1}^{n} c_i \otimes z_i \)

- (Local Equality) \(\forall i \in [n]\), \(a_i \otimes x_i + b_i \otimes y_i = c_i \otimes z_i \)
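A toy instance of the definition, with \(n = 2\) and hand-picked vectors that are not from the talk. The first local equality holds because of a shared left factor (\(a_1 = b_1 = c_1\), \(x_1 + y_1 = z_1\)) and the second because of a shared right factor (\(a_2 + b_2 = c_2\), \(x_2 = y_2 = z_2\)). Pure tensors are modeled as outer-product matrices.

```python
def outer(u, v):
    """The pure tensor u (x) v as a matrix."""
    return [[ui * vj for vj in v] for ui in u]

def add(A, B):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(A, B)]

# Hypothetical decomposition data: (a_i, x_i), (b_i, y_i), (c_i, z_i) for i = 1, 2.
terms_p = [([1, 0], [1, 0]), ([0, 1], [0, 1])]
terms_q = [([1, 0], [0, 1]), ([1, 1], [0, 1])]
terms_r = [([1, 0], [1, 1]), ([1, 2], [0, 1])]

def total(terms):
    """Sum of the pure tensors in a list of (left, right) pairs."""
    result = outer(*terms[0])
    for left, right in terms[1:]:
        result = add(result, outer(left, right))
    return result

p, q, r = total(terms_p), total(terms_q), total(terms_r)

# Local equality: a_i (x) x_i + b_i (x) y_i == c_i (x) z_i for each i.
local_ok = [add(outer(*tp), outer(*tq)) == outer(*tr)
            for tp, tq, tr in zip(terms_p, terms_q, terms_r)]
```

Summing the local equalities recovers the global equality \( p + q = r \).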

Key definition

Example

\(p+q=r\), with

Local equalities:

Definition

Let \(p \in A \otimes X \leq U \otimes V\), \(q \in B \otimes Y \leq U \otimes V\), and \(r \in C \otimes Z \leq U \otimes V\) satisfy \(p+q=r\).

Lemma: Let \( (p,q,r) \) be a triple satisfying \(p+q=r\). Then \( (p,q,r) \) has a LIU-decomposition.

Idea: Decompose \(U = \bigoplus_{i=1}^{6} U^{(i)} \) and \(V = \bigoplus_{i=1}^{6} V^{(i)} \) (e.g. \(U^{(1)} = A \cap B \cap C \))

in aligned basis

\(p+q=r\) over \( \mathbb{F}_{997} \)

Fix to Grad Student's Dream

Theorem: There exist \(p \in A \otimes X \leq U \otimes V\), \(q \in B \otimes Y \leq U \otimes V\), \(r \in C \otimes Z \leq U \otimes V\), and \(s \in D \otimes W \leq U \otimes V\) satisfying \(p+q+r = s\) without a LIU-decomposition.

Proof:

Work over \( \mathbb{F} = \mathbb{F}_5 \), and let \(U,V = \mathbb{F}^4 \), with standard bases

\( D = \text{span}\{e_1,e_2\} \leq U, W = \text{span}\{f_1,f_2\} \leq V \)

\( A = \text{span}\{e_1 + e_3, e_2 + e_4 \}, B =\text{span}\{e_1 - e_3, e_2 - e_4 \}, C = \text{span}\{e_1 + e_4, e_2 + 4e_3+ 4e_4 \} \)

\( X = \text{span}\{f_1 + f_3, f_2 + f_4 \}, Y =\text{span}\{f_1 - f_3, f_2 - f_4 \}, Z = \text{span}\{f_1 + 4f_4, f_2 + f_3+ 4f_4 \} \)

As notation, we shall denote \(A = \text{span}\{e_1+e_3 \eqqcolon a_1, e_2+e_4 \eqqcolon a_2\}, B = \text{span}\{b_1,b_2\}\), and so on

Then let

- \( p = a_1 \otimes x_1 + a_1 \otimes x_2 + 4 a_2 \otimes x_1 + 2 a_2 \otimes x_2 \)

- \( q = 3 b_1 \otimes y_1 + 2 b_1 \otimes y_2 + 3 b_2 \otimes y_1\)

- \( r = 2 c_1 \otimes z_1 + 2 c_1 \otimes z_2 + 3c_2 \otimes z_1 + 4 c_2 \otimes z_2 \)

- \( s = e_1 \otimes f_1 + e_2 \otimes f_2 \)

This quadruple satisfies \(p+q+r = s\), with \(p \in A \otimes X, q \in B \otimes Y, r \in C \otimes Z, s \in D \otimes W \), and no local equality exists because any nonzero element of \((A \otimes X + B \otimes Y + C \otimes Z) \cap (D \otimes W)\) is rank 2. (Magma calculation)
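The equality \( p+q+r = s \) can be checked independently with a few lines of modular arithmetic. The sketch below writes each vector in the standard bases from the proof, models pure tensors as \(4 \times 4\) outer-product matrices, and reduces mod 5. It only confirms the equality; it does not reproduce the rank-2 claim from the Magma calculation.

```python
MOD = 5

def combo(terms):
    """Sum of coeff * (u (x) v) over the given terms, as a 4 x 4 matrix mod 5."""
    return [[sum(coeff * u[i] * v[j] for coeff, u, v in terms) % MOD
             for j in range(4)] for i in range(4)]

# Spanning vectors in coordinates with respect to e_1..e_4 and f_1..f_4.
a1, a2 = [1, 0, 1, 0], [0, 1, 0, 1]
b1, b2 = [1, 0, -1, 0], [0, 1, 0, -1]
c1, c2 = [1, 0, 0, 1], [0, 1, 4, 4]
x1, x2 = [1, 0, 1, 0], [0, 1, 0, 1]
y1, y2 = [1, 0, -1, 0], [0, 1, 0, -1]
z1, z2 = [1, 0, 0, 4], [0, 1, 1, 4]
e1, e2 = [1, 0, 0, 0], [0, 1, 0, 0]
f1, f2 = [1, 0, 0, 0], [0, 1, 0, 0]

p = combo([(1, a1, x1), (1, a1, x2), (4, a2, x1), (2, a2, x2)])
q = combo([(3, b1, y1), (2, b1, y2), (3, b2, y1)])
r = combo([(2, c1, z1), (2, c1, z2), (3, c2, z1), (4, c2, z2)])
s = combo([(1, e1, f1), (1, e2, f2)])

total = [[(p[i][j] + q[i][j] + r[i][j]) % MOD for j in range(4)]
         for i in range(4)]
```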

This example was created so that elements in the intersection have to satisfy

And choosing \(J\) such that \(S \in \mathbb{F}[J] \) is invertible

SKIP DUE TO TIME

If \((x,y) \in \mathcal{M}(s \otimes t) \), the equation \( (s \otimes t)\bullet_2 x = (s \otimes t) \bullet_1 y \) is matrix equality by \( (*) \)

For \(AX = (s \otimes t) \bullet_2 x = (s \otimes t) \bullet_1 y = BY\) minimal rank factorizations, each column of \(A\) is in \(\operatorname{CS}(B) \), and each row of \(X\) is in \(\operatorname{RS}(Y)\)

Key idea for nuclei proof

Extras that I cut

Corollary (Brešar, Theorem 3.1, Derivations of Tensor Products of Nonassociative Algebras)

Let \(R\) and \(S\) be non-associative algebras.

Then every derivation of \(R \otimes S\) can be written as \(d = \operatorname{ad}(u) + \sum_{j=1}^{p} \lambda_{z_j} \otimes f_j + \sum_{i=1}^{q} g_i \otimes \lambda_{w_i} \), where \(u \in \operatorname{Nuc}(R) \otimes \operatorname{Nuc}(S) \), \(z_j \in Z(R)\), \(w_i \in Z(S)\), \(f_j \in \operatorname{Der}(S) \), and \(g_i \in \operatorname{Der}(R) \)

Corollary - \( \mathcal{C}(s \otimes t) = \mathcal{C}(s) \boxtimes \mathcal{C}(t) \)

In summary

- Tensors are multilinear maps whose algebraic invariants discern structure

- Theorem A, Theorem B - faster algorithm for adjoint and derivation

- Theorem C, Theorem D - decomposition theorems on the nuclei and derivation

- Algorithms for adjoint and derivation algebra of higher valence tensors (e.g. trilinear maps)

- Weaken regular family and black-box restriction requirements using randomized computation model

- Decomposing the product of bilinear maps

Future directions

Questions?
