Oracle 11gR2 RAC Installation

Presented by Mao

Create Shared Disks

cd C:\Program Files (x86)\VMware\VMware Workstation

vmware-vdiskmanager.exe -h

vmware-vdiskmanager.exe: unrecognized option 'h'

VMware Virtual Disk Manager - build 16341506.

Usage: vmware-vdiskmanager.exe OPTIONS <disk-name> | <mount-point>

Offline disk manipulation utility

Operations, only one may be specified at a time:

-c : create disk. Additional creation options must be specified. Only local virtual disks can be created.

-d : defragment the specified virtual disk. Only local virtual disks may be defragmented.

-k : shrink the specified virtual disk. Only local virtual disks may be shrunk.

-n <source-disk> : rename the specified virtual disk; need to specify destination disk-name. Only local virtual disks may be renamed.

-p : prepare the mounted virtual disk specified by the mount point for shrinking.

-r <source-disk> : convert the specified disk; need to specify destination disk-type. For local destination disks the disk type must be specified.

-x <new-capacity> : expand the disk to the specified capacity. Only local virtual disks may be expanded.

-R : check a sparse virtual disk for consistency and attempt to repair any errors.

-e : check for disk chain consistency.

-D : make disk deletable. This should only be used on disks that have been copied from another product.

-U : delete/unlink a single disk link.

Other Options:

-q : do not log messages

Additional options for create and convert:

-a <adapter> : (for use with -c only) adapter type (ide, buslogic, lsilogic). Pass lsilogic for other adapter types.

-s <size> : capacity of the virtual disk

-t <disk-type> : disk type id

Disk types:

0 : single growable virtual disk

1 : growable virtual disk split into multiple files

2 : preallocated virtual disk

3 : preallocated virtual disk split into multiple files

4 : preallocated ESX-type virtual disk

5 : compressed disk optimized for streaming

6 : thin provisioned virtual disk - ESX 3.x and above

The capacity can be specified in sectors, KB, MB or GB.

The acceptable ranges:

ide/scsi adapter : [1MB, 8192.0GB]

buslogic adapter : [1MB, 2040.0GB]

ex 1: vmware-vdiskmanager.exe -c -s 850MB -a ide -t 0 myIdeDisk.vmdk

ex 2: vmware-vdiskmanager.exe -d myDisk.vmdk

ex 3: vmware-vdiskmanager.exe -r sourceDisk.vmdk -t 0 destinationDisk.vmdk

ex 4: vmware-vdiskmanager.exe -x 36GB myDisk.vmdk

ex 5: vmware-vdiskmanager.exe -n sourceName.vmdk destinationName.vmdk

ex 6: vmware-vdiskmanager.exe -k myDisk.vmdk

ex 7: vmware-vdiskmanager.exe -p <mount-point>

(A virtual disk first needs to be mounted at <mount-point>)

vmware-vdiskmanager.exe -c -s 2g -a lsilogic -t 2 D:\sharedisk\1.vmdk

vmware-vdiskmanager.exe -c -s 2g -a lsilogic -t 2 D:\sharedisk\2.vmdk

vmware-vdiskmanager.exe -c -s 2g -a lsilogic -t 2 D:\sharedisk\3.vmdk

vmware-vdiskmanager.exe -c -s 5g -a lsilogic -t 2 D:\sharedisk\4.vmdk

vmware-vdiskmanager.exe -c -s 10g -a lsilogic -t 2 D:\sharedisk\5.vmdk

disk.EnableUUID = "TRUE"

# shared disk configuration

disk.locking = "FALSE"

diskLib.dataCacheMaxSize = "0"

diskLib.dataCacheMaxReadAheadSize = "0"

diskLib.dataCacheMinReadAheadSize = "0"

diskLib.maxUnsyncedWrites = "0"

scsi1.present = "TRUE"

scsi1.virtualDev = "lsilogic"

scsi1.sharedBus = "VIRTUAL"

scsi1:0.present = "TRUE"

scsi1:0.mode = "independent-persistent"

scsi1:0.fileName = "D:\sharedisk\1.vmdk"

scsi1:0.deviceType = "disk"

scsi1:0.redo = ""

scsi1:1.present = "TRUE"

scsi1:1.mode = "independent-persistent"

scsi1:1.fileName = "D:\sharedisk\2.vmdk"

scsi1:1.deviceType = "disk"

scsi1:1.redo = ""

scsi1:2.present = "TRUE"

scsi1:2.mode = "independent-persistent"

scsi1:2.fileName = "D:\sharedisk\3.vmdk"

scsi1:2.deviceType = "disk"

scsi1:2.redo = ""

scsi1:3.present = "TRUE"

scsi1:3.mode = "independent-persistent"

scsi1:3.fileName = "D:\sharedisk\4.vmdk"

scsi1:3.deviceType = "disk"

scsi1:3.redo = ""

scsi1:4.present = "TRUE"

scsi1:4.mode = "independent-persistent"

scsi1:4.fileName = "D:\sharedisk\5.vmdk"

scsi1:4.deviceType = "disk"

scsi1:4.redo = ""

Edit rhel7_node1.vmx and rhel7_node2.vmx and add the settings above to both files.
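The five per-disk stanzas follow one pattern, so they could be generated instead of typed by hand. A minimal Bash sketch, assuming the same D:\sharedisk\N.vmdk naming used above; the function name vmx_disk_stanza is hypothetical and not part of any VMware tool:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the .vmx stanza for one shared disk on controller scsi1.
# $1 = SCSI unit number (0-4 in this walkthrough), $2 = path to the .vmdk file.
vmx_disk_stanza() {
    local unit="$1"
    local file="$2"
    printf 'scsi1:%s.present = "TRUE"\n' "$unit"
    printf 'scsi1:%s.mode = "independent-persistent"\n' "$unit"
    printf 'scsi1:%s.fileName = "%s"\n' "$unit" "$file"
    printf 'scsi1:%s.deviceType = "disk"\n' "$unit"
    printf 'scsi1:%s.redo = ""\n' "$unit"
}

# Disks 1.vmdk .. 5.vmdk map to units scsi1:0 .. scsi1:4.
for n in 1 2 3 4 5; do
    vmx_disk_stanza "$((n - 1))" "D:\\sharedisk\\${n}.vmdk"
done
```

The output can be pasted into both .vmx files after the scsi1 controller lines; the controller and diskLib settings still have to be added separately.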

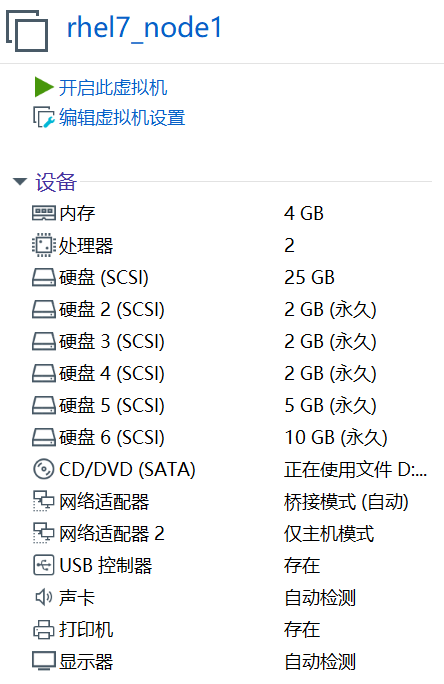

Reopen the virtual machines and check the configuration.

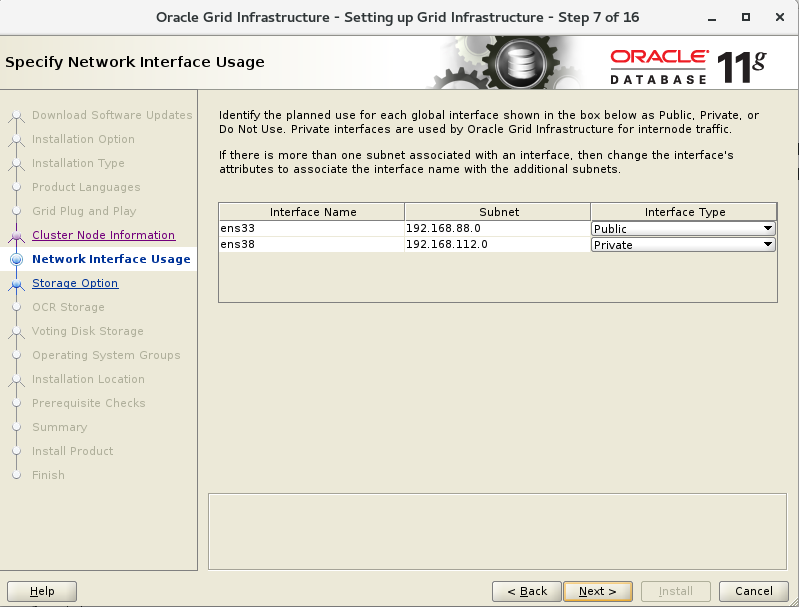

Configure the Network

IP plan:

#public:

192.168.88.100 rac-node1

192.168.88.101 rac-node2

#vip:

192.168.88.200 node1-vip

192.168.88.201 node2-vip

#priv

192.168.112.100 node1-priv

192.168.112.101 node2-priv

#SCAN

192.168.88.188 rac-scan
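The plan above is already laid out like a hosts file. A sketch of appending it on each node, assuming names are resolved through /etc/hosts and not DNS (the slides do not say which); both function names are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the RAC address plan in hosts-file format.
rac_hosts_entries() {
    cat <<'EOF'
# public
192.168.88.100  rac-node1
192.168.88.101  rac-node2
# vip
192.168.88.200  node1-vip
192.168.88.201  node2-vip
# priv
192.168.112.100 node1-priv
192.168.112.101 node2-priv
# SCAN
192.168.88.188  rac-scan
EOF
}

# Append the plan to the given file unless rac-scan is already listed there.
# Pass /etc/hosts as root on a real node; any scratch file works for a dry run.
add_rac_hosts() {
    local target="$1"
    if ! grep -q 'rac-scan' "$target" 2>/dev/null; then
        rac_hosts_entries >> "$target"
    fi
}
```

Running add_rac_hosts /etc/hosts a second time changes nothing, because the rac-scan check makes the append idempotent.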

node1

[root@localhost ~]# nmcli dev status

DEVICE TYPE STATE CONNECTION

virbr0 bridge connected virbr0

ens33 ethernet disconnected --

ens38 ethernet disconnected --

lo loopback unmanaged --

virbr0-nic tun unmanaged --

[root@localhost ~]# nmcli con show

NAME UUID TYPE DEVICE

virbr0 99233361-c670-4ab1-b985-80bd28c5afb1 bridge virbr0

ens33 9c30f952-a463-4c2c-b426-db614fbcad5b 802-3-ethernet --

[root@localhost ~]# nmcli con mod ens33 ipv4.method manual ipv4.addr "192.168.88.100/24" ipv4.gateway "192.168.88.254"

[root@localhost ~]# nmcli con mod ens33 connection.autoconnect yes

[root@localhost ~]# nmcli con up ens33

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/4)

[root@localhost ~]# nmcli con add con-name ens38 type ethernet ifname ens38 ipv4.addr "192.168.112.100/24" ipv4.method manual

Connection 'ens38' (bd25026a-0424-4f57-82d5-1799d33500a8) successfully added.

[root@localhost ~]# nmcli con mod ens38 connection.autoconnect yes

[root@localhost ~]# nmcli con up ens38

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/11)

[root@localhost ~]# hostnamectl set-hostname rac-node1

[root@localhost ~]# hostnamectl status

Static hostname: rac-node1

Icon name: computer-vm

Chassis: vm

Machine ID: a193e7903a0342eb99e5f1976dacafa8

Boot ID: 3a5f1093fb0441d1b62d4c910fcce6ac

Virtualization: vmware

Operating System: Red Hat Enterprise Linux Server 7.4 (Maipo)

CPE OS Name: cpe:/o:redhat:enterprise_linux:7.4:GA:server

Kernel: Linux 3.10.0-693.el7.x86_64

Architecture: x86-64

[root@rac-node1 ~]# nmcli con show --active

NAME UUID TYPE DEVICE

ens33 9c30f952-a463-4c2c-b426-db614fbcad5b 802-3-ethernet ens33

ens38 bd25026a-0424-4f57-82d5-1799d33500a8 802-3-ethernet ens38

virbr0 c18767de-7a28-4e2a-a8e2-53ccfe51f840 bridge virbr0

[root@rac-node1 ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:27:74:a9 brd ff:ff:ff:ff:ff:ff

inet 192.168.88.100/24 brd 192.168.88.255 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::5022:3ef6:26a:e13e/64 scope link

valid_lft forever preferred_lft forever

3: ens38: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:27:74:b3 brd ff:ff:ff:ff:ff:ff

inet 192.168.112.100/24 brd 192.168.112.255 scope global ens38

valid_lft forever preferred_lft forever

inet6 fe80::deb8:13d1:a129:453a/64 scope link

valid_lft forever preferred_lft forever

4: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN qlen 1000

link/ether 52:54:00:ed:ea:6d brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

5: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc pfifo_fast master virbr0 state DOWN qlen 1000

link/ether 52:54:00:ed:ea:6d brd ff:ff:ff:ff:ff:ff

node2

[root@localhost ~]# nmcli con mod ens33 ipv4.method manual ipv4.addr "192.168.88.101/24" ipv4.gateway "192.168.88.254"

[root@localhost ~]# nmcli con mod ens33 connection.autoconnect yes

[root@localhost ~]# nmcli con up ens33

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/4)

[root@localhost ~]# nmcli con add con-name ens38 type ethernet ifname ens38 ipv4.addr "192.168.112.101/24" ipv4.method manual

Connection 'ens38' (83cf0af1-d33e-4490-b119-8bc5dc7ec72e) successfully added.

[root@localhost ~]# nmcli con mod ens38 connection.autoconnect yes

[root@localhost ~]# nmcli con up ens38

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/6)

[root@localhost ~]# hostnamectl set-hostname rac-node2

[root@localhost ~]# hostnamectl status

Static hostname: rac-node2

Icon name: computer-vm

Chassis: vm

Machine ID: 0e963cbc7b0d41d78f8498d6de0d287b

Boot ID: 87bc5a0a71ba445ab5927a8ac4ce2d1d

Virtualization: vmware

Operating System: Red Hat Enterprise Linux Server 7.4 (Maipo)

CPE OS Name: cpe:/o:redhat:enterprise_linux:7.4:GA:server

Kernel: Linux 3.10.0-693.el7.x86_64

Architecture: x86-64

[root@rac-node2 ~]# nmcli con show --active

NAME UUID TYPE DEVICE

ens33 b51d0635-9b15-4777-901f-d1f8d4051a98 802-3-ethernet ens33

ens38 83cf0af1-d33e-4490-b119-8bc5dc7ec72e 802-3-ethernet ens38

virbr0 6b96df00-58c0-4a81-adb1-770b28a7bb50 bridge virbr0

[root@rac-node2 ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:2f:d1:a3 brd ff:ff:ff:ff:ff:ff

inet 192.168.88.101/24 brd 192.168.88.255 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::1ef0:8abb:2b20:49c5/64 scope link

valid_lft forever preferred_lft forever

3: ens38: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:2f:d1:ad brd ff:ff:ff:ff:ff:ff

inet 192.168.112.101/24 brd 192.168.112.255 scope global ens38

valid_lft forever preferred_lft forever

inet6 fe80::bf46:70f9:d7bc:d04c/64 scope link

valid_lft forever preferred_lft forever

4: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN qlen 1000

link/ether 52:54:00:18:d6:64 brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

5: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc pfifo_fast master virbr0 state DOWN qlen 1000

link/ether 52:54:00:18:d6:64 brd ff:ff:ff:ff:ff:ff
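The two nodes differ only in the last address octet and the hostname, so the command sequence can be parameterized. A dry-run sketch that prints the commands without running them; the function name rac_net_commands is hypothetical, and the interface names ens33/ens38 are taken from the sessions above:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print (not run) the per-node network commands.
# $1 = node number (1 or 2); addresses follow the plan: .100 for node1, .101 for node2.
rac_net_commands() {
    local node="$1"
    local octet=$((99 + node))
    cat <<EOF
nmcli con mod ens33 ipv4.method manual ipv4.addr "192.168.88.${octet}/24" ipv4.gateway "192.168.88.254"
nmcli con mod ens33 connection.autoconnect yes
nmcli con up ens33
nmcli con add con-name ens38 type ethernet ifname ens38 ipv4.addr "192.168.112.${octet}/24" ipv4.method manual
nmcli con mod ens38 connection.autoconnect yes
nmcli con up ens38
hostnamectl set-hostname rac-node${node}
EOF
}

# Print the commands for node1 so they can be reviewed first.
rac_net_commands 1
```

After reviewing the output, it could be piped to sh as root on the matching node.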

Disable SELinux

[root@rac-node1 ~]# getenforce

Enforcing

[root@rac-node1 ~]# setenforce 0

[root@rac-node1 ~]# getenforce

Permissive
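setenforce 0 only switches the running system to permissive mode; the setting that survives a reboot lives in /etc/selinux/config, which this walkthrough edits by hand. A non-interactive sketch of the same edit, with the hypothetical function name disable_selinux_in:

```shell
#!/usr/bin/env bash
# Hypothetical helper: set SELINUX=disabled in an SELinux config file.
# $1 = path to the file (/etc/selinux/config on a real node; needs root there).
disable_selinux_in() {
    local cfg="$1"
    local tmp
    tmp="$(mktemp)"
    # Rewrite only the uncommented SELINUX= line; SELINUXTYPE= and comments stay as they are.
    # Writing back with cat keeps the original file's owner and permissions.
    sed 's/^SELINUX=.*/SELINUX=disabled/' "$cfg" > "$tmp" && cat "$tmp" > "$cfg"
    rm -f "$tmp"
}
```

Calling disable_selinux_in /etc/selinux/config on both nodes gives the same result as the manual edit; the disabled state takes effect at the next reboot.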

[root@rac-node1 ~]# vim /etc/selinux/config

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - No SELinux policy is loaded.

SELINUX=disabled

# SELINUXTYPE= can take one of three two values:

# targeted - Targeted processes are protected,

# minimum - Modification of targeted policy. Only selected processes are protected.

# mls - Multi Level Security protection.

SELINUXTYPE=targeted

Disable firewalld

[root@rac-node1 ~]# systemctl stop firewalld

[root@rac-node1 ~]# systemctl disable firewalld

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@rac-node1 ~]# ping -I ens33 -c 3 192.168.88.101

PING 192.168.88.101 (192.168.88.101) from 192.168.88.100 ens33: 56(84) bytes of data.

64 bytes from 192.168.88.101: icmp_seq=1 ttl=64 time=0.327 ms

64 bytes from 192.168.88.101: icmp_seq=2 ttl=64 time=0.825 ms

64 bytes from 192.168.88.101: icmp_seq=3 ttl=64 time=0.827 ms

--- 192.168.88.101 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2000ms

rtt min/avg/max/mdev = 0.327/0.659/0.827/0.237 ms

[root@rac-node1 ~]# ping -I ens38 -c 3 192.168.112.101

PING 192.168.112.101 (192.168.112.101) from 192.168.112.100 ens38: 56(84) bytes of data.

64 bytes from 192.168.112.101: icmp_seq=1 ttl=64 time=0.761 ms

64 bytes from 192.168.112.101: icmp_seq=2 ttl=64 time=1.14 ms

64 bytes from 192.168.112.101: icmp_seq=3 ttl=64 time=0.981 ms

--- 192.168.112.101 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2002ms

rtt min/avg/max/mdev = 0.761/0.963/1.147/0.158 ms

[root@rac-node2 ~]# systemctl stop firewalld

[root@rac-node2 ~]# systemctl disable firewalld.service

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@rac-node2 ~]# ping -I ens33 -c 3 192.168.88.100

PING 192.168.88.100 (192.168.88.100) from 192.168.88.101 ens33: 56(84) bytes of data.

64 bytes from 192.168.88.100: icmp_seq=1 ttl=64 time=0.427 ms

64 bytes from 192.168.88.100: icmp_seq=2 ttl=64 time=1.43 ms

64 bytes from 192.168.88.100: icmp_seq=3 ttl=64 time=0.587 ms

--- 192.168.88.100 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2002ms

rtt min/avg/max/mdev = 0.427/0.815/1.431/0.440 ms

[root@rac-node2 ~]# ping -I ens38 -c 3 192.168.112.100

PING 192.168.112.100 (192.168.112.100) from 192.168.112.101 ens38: 56(84) bytes of data.

64 bytes from 192.168.112.100: icmp_seq=1 ttl=64 time=0.564 ms

64 bytes from 192.168.112.100: icmp_seq=2 ttl=64 time=1.69 ms

64 bytes from 192.168.112.100: icmp_seq=3 ttl=64 time=0.956 ms

--- 192.168.112.100 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2002ms

rtt min/avg/max/mdev = 0.564/1.072/1.698/0.471 ms

Create Groups, Users, and Directories

[root@rac-node1 ~]# tail -5 /etc/group

stapusr:x:156:

stapsys:x:157:

stapdev:x:158:

tcpdump:x:72:

mtj:x:1000:

[root@rac-node1 ~]# tail -5 /etc/passwd

avahi:x:70:70:Avahi mDNS/DNS-SD Stack:/var/run/avahi-daemon:/sbin/nologin

postfix:x:89:89::/var/spool/postfix:/sbin/nologin

ntp:x:38:38::/etc/ntp:/sbin/nologin

tcpdump:x:72:72::/:/sbin/nologin

mtj:x:1000:1000:mtj:/home/mtj:/bin/bash

[root@rac-node1 ~]# groupadd -g 1001 oinstall

[root@rac-node1 ~]# groupadd -g 1020 asmadmin

[root@rac-node1 ~]# groupadd -g 1021 asmdba

[root@rac-node1 ~]# groupadd -g 1022 asmoper

[root@rac-node1 ~]# groupadd -g 1031 dba

[root@rac-node1 ~]# groupadd -g 1032 oper

[root@rac-node1 ~]# useradd -g oinstall -u 1001 -G asmdba,asmadmin,asmoper,dba grid

[root@rac-node1 ~]# useradd -g oinstall -u 1002 -G dba,oper,asmdba oracle

[root@rac-node1 ~]# passwd grid

Changing password for user grid.

New password:

BAD PASSWORD: The password is shorter than 8 characters

Retype new password:

passwd: all authentication tokens updated successfully.

[root@rac-node1 ~]# passwd oracle

Changing password for user oracle.

New password:

BAD PASSWORD: The password is shorter than 8 characters

Retype new password:

passwd: all authentication tokens updated successfully.

[root@rac-node1 ~]# mkdir -p /u01/app/grid

[root@rac-node1 ~]# mkdir -p /u01/app/gridbase

[root@rac-node1 ~]# mkdir -p /u01/app/oracle

[root@rac-node1 ~]# chown -R grid:oinstall /u01/

[root@rac-node1 ~]# chown oracle:oinstall /u01/app/oracle/

[root@rac-node1 ~]# chmod -R 755 /u01/

[root@rac-node2 ~]# tail -5 /etc/group

stapusr:x:156:

stapsys:x:157:

stapdev:x:158:

tcpdump:x:72:

mtj:x:1000:

[root@rac-node2 ~]# tail -5 /etc/passwd

avahi:x:70:70:Avahi mDNS/DNS-SD Stack:/var/run/avahi-daemon:/sbin/nologin

postfix:x:89:89::/var/spool/postfix:/sbin/nologin

ntp:x:38:38::/etc/ntp:/sbin/nologin

tcpdump:x:72:72::/:/sbin/nologin

mtj:x:1000:1000:mtj:/home/mtj:/bin/bash

[root@rac-node2 ~]# groupadd -g 1001 oinstall

[root@rac-node2 ~]# groupadd -g 1020 asmadmin

[root@rac-node2 ~]# groupadd -g 1021 asmdba

[root@rac-node2 ~]# groupadd -g 1022 asmoper

[root@rac-node2 ~]# groupadd -g 1031 dba

[root@rac-node2 ~]# groupadd -g 1032 oper

[root@rac-node2 ~]# useradd -g oinstall -u 1001 -G asmdba,asmadmin,asmoper,dba grid

[root@rac-node2 ~]# useradd -g oinstall -u 1002 -G dba,oper,asmdba oracle

[root@rac-node2 ~]# passwd grid

Changing password for user grid.

New password:

BAD PASSWORD: The password is shorter than 8 characters

Retype new password:

passwd: all authentication tokens updated successfully.

[root@rac-node2 ~]# passwd oracle

Changing password for user oracle.

New password:

BAD PASSWORD: The password is shorter than 8 characters

Retype new password:

passwd: all authentication tokens updated successfully.

[root@rac-node2 ~]# mkdir -p /u01/app/grid

[root@rac-node2 ~]# mkdir -p /u01/app/gridbase

[root@rac-node2 ~]# mkdir -p /u01/app/oracle

[root@rac-node2 ~]# chown -R grid:oinstall /u01/

[root@rac-node2 ~]# chown oracle:oinstall /u01/app/oracle/

[root@rac-node2 ~]# chmod -R 755 /u01/
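Because the same groups and users must exist on both nodes, a quick membership check can catch a mistyped -G list. A sketch under the assumption that the expected memberships are exactly those in the useradd commands above; the function name missing_groups is hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which required groups are absent from a membership list.
# $1 = space-separated groups the user belongs to (the format "id -Gn USER" prints),
# remaining arguments = groups the user is expected to have.
missing_groups() {
    local have
    local g
    have=" $1 "
    shift
    for g in "$@"; do
        case "$have" in
            *" $g "*) ;;              # present
            *) printf '%s\n' "$g" ;;  # absent
        esac
    done
}

# Expected memberships, taken from the useradd commands above.
GRID_GROUPS="oinstall asmdba asmadmin asmoper dba"
ORACLE_GROUPS="oinstall dba oper asmdba"
```

On a node, missing_groups "$(id -Gn grid)" $GRID_GROUPS prints nothing when the grid user is set up as intended, and one group name per line otherwise. The same call with oracle and $ORACLE_GROUPS checks the oracle user.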

Install Dependency Packages

| Item | Requirements |
|---|---|
| Oracle Linux 7 and Red Hat Enterprise Linux 7 | The following packages (or later versions) must be installed: binutils-2.23.52.0.1-12.el7.x86_64, compat-libcap1-1.10-3.el7.x86_64, gcc-4.8.2-3.el7.x86_64, gcc-c++-4.8.2-3.el7.x86_64, glibc-2.17-36.el7.i686, glibc-2.17-36.el7.x86_64, glibc-devel-2.17-36.el7.i686, glibc-devel-2.17-36.el7.x86_64, ksh, libaio-0.3.109-9.el7.i686, libaio-0.3.109-9.el7.x86_64, libaio-devel-0.3.109-9.el7.i686, libaio-devel-0.3.109-9.el7.x86_64, libgcc-4.8.2-3.el7.i686, libgcc-4.8.2-3.el7.x86_64, libstdc++-4.8.2-3.el7.i686, libstdc++-4.8.2-3.el7.x86_64, libstdc++-devel-4.8.2-3.el7.i686, libstdc++-devel-4.8.2-3.el7.x86_64, libXi-1.7.2-1.el7.i686, libXi-1.7.2-1.el7.x86_64, libXtst-1.2.2-1.el7.i686, libXtst-1.2.2-1.el7.x86_64, make-3.82-19.el7.x86_64, sysstat-10.1.5-1.el7.x86_64 |

- Linux x86-64 Oracle Grid Infrastructure and Oracle RAC Package Requirements

- Configure the YUM repository

[root@rac-node2 ~]# cd /etc/yum.repos.d/

[root@rac-node2 yum.repos.d]# vim iso.repo

[iso]

name=iso

baseurl=file:///run/media/root/RHEL-7.4\ Server.x86_64/

enabled=1

gpgcheck=0

[root@rac-node2 yum.repos.d]# yum repolist

Loaded plugins: langpacks, product-id, search-disabled-repos, subscription-manager

This system is not registered with an entitlement server. You can use subscription-manager to register.

iso | 4.1 kB 00:00:00

(1/2): iso/group_gz | 137 kB 00:00:00

(2/2): iso/primary_db | 4.0 MB 00:00:00

repo id repo name status

iso iso 4,986

repolist: 4,986
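The repository file is short enough to write from a script, which helps keep both nodes identical. A sketch assuming the ISO is mounted at the same path as above; the function name write_iso_repo is hypothetical. Note that yum's option is spelled enabled; a misspelled key is ignored, and the repository still works only because repositories are enabled by default.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the local-ISO repository definition to a file.
# $1 = destination (/etc/yum.repos.d/iso.repo on a real node; needs root there).
write_iso_repo() {
    # The quoted 'EOF' keeps the backslash in the baseurl path literal.
    cat > "$1" <<'EOF'
[iso]
name=iso
baseurl=file:///run/media/root/RHEL-7.4\ Server.x86_64/
enabled=1
gpgcheck=0
EOF
}
```

After write_iso_repo /etc/yum.repos.d/iso.repo, running yum repolist should list the iso repository as in the output above.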

- Install the system packages

[root@rac-node2 yum.repos.d]# yum -y install binutils.x86_64 compat-libcap1.x86_64 gcc.x86_64 gcc-c++.x86_64 glibc.i686 glibc.x86_64 glibc-devel.i686 glibc-devel.x86_64 kshlibaio.i686 libaio.x86_64 libaio-devel.i686 libaio-devel.x86_64 libgcc.i686 libgcc.x86_64 libstdc++.i686 libstdc++.x86_64 libstdc++-devel.i686 libstdc++-devel.x86_64 libXi.i686 libXi.x86_64 libXtst.i686 libXtst.x86_64 make.x86_64 sysstat.x86_64

Loaded plugins: langpacks, product-id, search-disabled-repos, subscription-manager

This system is not registered with an entitlement server. You can use subscription-manager to register.

Package binutils-2.25.1-31.base.el7.x86_64 already installed and latest version

Package glibc-2.17-196.el7.x86_64 already installed and latest version

No package kshlibaio.i686 available.

Package libaio-0.3.109-13.el7.x86_64 already installed and latest version

Package libgcc-4.8.5-16.el7.x86_64 already installed and latest version

Package libstdc++-4.8.5-16.el7.x86_64 already installed and latest version

Package libXi-1.7.9-1.el7.x86_64 already installed and latest version

Package libXtst-1.2.3-1.el7.x86_64 already installed and latest version

Package 1:make-3.82-23.el7.x86_64 already installed and latest version

Package sysstat-10.1.5-12.el7.x86_64 already installed and latest version

Resolving Dependencies

--> Running transaction check

---> Package compat-libcap1.x86_64 0:1.10-7.el7 will be installed

---> Package gcc.x86_64 0:4.8.5-16.el7 will be installed

--> Processing Dependency: cpp = 4.8.5-16.el7 for package: gcc-4.8.5-16.el7.x86_64

--> Processing Dependency: libmpc.so.3()(64bit) for package: gcc-4.8.5-16.el7.x86_64

---> Package gcc-c++.x86_64 0:4.8.5-16.el7 will be installed

---> Package glibc.i686 0:2.17-196.el7 will be installed

--> Processing Dependency: libfreebl3.so for package: glibc-2.17-196.el7.i686

--> Processing Dependency: libfreebl3.so(NSSRAWHASH_3.12.3) for package: glibc-2.17-196.el7.i686

---> Package glibc-devel.i686 0:2.17-196.el7 will be installed

--> Processing Dependency: glibc-headers = 2.17-196.el7 for package: glibc-devel-2.17-196.el7.i686

--> Processing Dependency: glibc-headers for package: glibc-devel-2.17-196.el7.i686

---> Package glibc-devel.x86_64 0:2.17-196.el7 will be installed

---> Package libXi.i686 0:1.7.9-1.el7 will be installed

--> Processing Dependency: libX11.so.6 for package: libXi-1.7.9-1.el7.i686

--> Processing Dependency: libXext.so.6 for package: libXi-1.7.9-1.el7.i686

---> Package libXtst.i686 0:1.2.3-1.el7 will be installed

---> Package libaio-devel.i686 0:0.3.109-13.el7 will be installed

--> Processing Dependency: libaio(x86-32) = 0.3.109-13.el7 for package: libaio-devel-0.3.109-13.el7.i686

---> Package libaio-devel.x86_64 0:0.3.109-13.el7 will be installed

---> Package libgcc.i686 0:4.8.5-16.el7 will be installed

---> Package libstdc++.i686 0:4.8.5-16.el7 will be installed

---> Package libstdc++-devel.i686 0:4.8.5-16.el7 will be installed

---> Package libstdc++-devel.x86_64 0:4.8.5-16.el7 will be installed

--> Running transaction check

---> Package cpp.x86_64 0:4.8.5-16.el7 will be installed

---> Package glibc-headers.x86_64 0:2.17-196.el7 will be installed

--> Processing Dependency: kernel-headers >= 2.2.1 for package: glibc-headers-2.17-196.el7.x86_64

--> Processing Dependency: kernel-headers for package: glibc-headers-2.17-196.el7.x86_64

---> Package libX11.i686 0:1.6.5-1.el7 will be installed

--> Processing Dependency: libxcb.so.1 for package: libX11-1.6.5-1.el7.i686

---> Package libXext.i686 0:1.3.3-3.el7 will be installed

---> Package libaio.i686 0:0.3.109-13.el7 will be installed

---> Package libmpc.x86_64 0:1.0.1-3.el7 will be installed

---> Package nss-softokn-freebl.i686 0:3.28.3-6.el7 will be installed

--> Running transaction check

---> Package kernel-headers.x86_64 0:3.10.0-693.el7 will be installed

---> Package libxcb.i686 0:1.12-1.el7 will be installed

--> Processing Dependency: libXau.so.6 for package: libxcb-1.12-1.el7.i686

--> Running transaction check

---> Package libXau.i686 0:1.0.8-2.1.el7 will be installed

--> Finished Dependency Resolution

Dependencies Resolved

===============================================================================================================================

Package Arch Version Repository Size

===============================================================================================================================

Installing:

compat-libcap1 x86_64 1.10-7.el7 iso 19 k

gcc x86_64 4.8.5-16.el7 iso 16 M

gcc-c++ x86_64 4.8.5-16.el7 iso 7.2 M

glibc i686 2.17-196.el7 iso 4.2 M

glibc-devel i686 2.17-196.el7 iso 1.1 M

glibc-devel x86_64 2.17-196.el7 iso 1.1 M

libXi i686 1.7.9-1.el7 iso 40 k

libXtst i686 1.2.3-1.el7 iso 20 k

libaio-devel i686 0.3.109-13.el7 iso 13 k

libaio-devel x86_64 0.3.109-13.el7 iso 13 k

libgcc i686 4.8.5-16.el7 iso 106 k

libstdc++ i686 4.8.5-16.el7 iso 314 k

libstdc++-devel i686 4.8.5-16.el7 iso 1.5 M

libstdc++-devel x86_64 4.8.5-16.el7 iso 1.5 M

Installing for dependencies:

cpp x86_64 4.8.5-16.el7 iso 5.9 M

glibc-headers x86_64 2.17-196.el7 iso 675 k

kernel-headers x86_64 3.10.0-693.el7 iso 6.0 M

libX11 i686 1.6.5-1.el7 iso 610 k

libXau i686 1.0.8-2.1.el7 iso 29 k

libXext i686 1.3.3-3.el7 iso 39 k

libaio i686 0.3.109-13.el7 iso 24 k

libmpc x86_64 1.0.1-3.el7 iso 51 k

libxcb i686 1.12-1.el7 iso 227 k

nss-softokn-freebl i686 3.28.3-6.el7 iso 198 k

Transaction Summary

===============================================================================================================================

Install 14 Packages (+10 Dependent packages)

Total download size: 47 M

Installed size: 111 M

Downloading packages:

-------------------------------------------------------------------------------------------------------------------------------

Total 64 MB/s | 47 MB 00:00:00

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : kernel-headers-3.10.0-693.el7.x86_64 1/24

Installing : libstdc++-devel-4.8.5-16.el7.x86_64 2/24

Installing : libgcc-4.8.5-16.el7.i686 3/24

Installing : libaio-devel-0.3.109-13.el7.x86_64 4/24

Installing : glibc-2.17-196.el7.i686 5/24

Installing : nss-softokn-freebl-3.28.3-6.el7.i686 6/24

Installing : libmpc-1.0.1-3.el7.x86_64 7/24

Installing : glibc-headers-2.17-196.el7.x86_64 8/24

Installing : glibc-devel-2.17-196.el7.x86_64 9/24

Installing : cpp-4.8.5-16.el7.x86_64 10/24

Installing : gcc-4.8.5-16.el7.x86_64 11/24

Installing : glibc-devel-2.17-196.el7.i686 12/24

Installing : compat-libcap1-1.10-7.el7.x86_64 13/24

Installing : libstdc++-4.8.5-16.el7.i686 14/24

Installing : libXau-1.0.8-2.1.el7.i686 15/24

Installing : libxcb-1.12-1.el7.i686 16/24

Installing : libX11-1.6.5-1.el7.i686 17/24

Installing : libXext-1.3.3-3.el7.i686 18/24

Installing : libXi-1.7.9-1.el7.i686 19/24

Installing : libaio-0.3.109-13.el7.i686 20/24

Installing : libaio-devel-0.3.109-13.el7.i686 21/24

Installing : gcc-c++-4.8.5-16.el7.x86_64 22/24

Installing : libstdc++-devel-4.8.5-16.el7.i686 23/24

Installing : libXtst-1.2.3-1.el7.i686 24/24

iso/productid | 1.6 kB 00:00:00

Verifying : libXext-1.3.3-3.el7.i686 1/24

Verifying : libaio-devel-0.3.109-13.el7.i686 2/24

Verifying : libXtst-1.2.3-1.el7.i686 3/24

Verifying : libX11-1.6.5-1.el7.i686 4/24

Verifying : gcc-4.8.5-16.el7.x86_64 5/24

Verifying : libstdc++-4.8.5-16.el7.i686 6/24

Verifying : libXi-1.7.9-1.el7.i686 7/24

Verifying : libstdc++-devel-4.8.5-16.el7.x86_64 8/24

Verifying : libXau-1.0.8-2.1.el7.i686 9/24

Verifying : libaio-0.3.109-13.el7.i686 10/24

Verifying : glibc-devel-2.17-196.el7.x86_64 11/24

Verifying : nss-softokn-freebl-3.28.3-6.el7.i686 12/24

Verifying : libxcb-1.12-1.el7.i686 13/24

Verifying : glibc-headers-2.17-196.el7.x86_64 14/24

Verifying : glibc-devel-2.17-196.el7.i686 15/24

Verifying : gcc-c++-4.8.5-16.el7.x86_64 16/24

Verifying : libgcc-4.8.5-16.el7.i686 17/24

Verifying : compat-libcap1-1.10-7.el7.x86_64 18/24

Verifying : libaio-devel-0.3.109-13.el7.x86_64 19/24

Verifying : libmpc-1.0.1-3.el7.x86_64 20/24

Verifying : cpp-4.8.5-16.el7.x86_64 21/24

Verifying : libstdc++-devel-4.8.5-16.el7.i686 22/24

Verifying : kernel-headers-3.10.0-693.el7.x86_64 23/24

Verifying : glibc-2.17-196.el7.i686 24/24

Installed:

compat-libcap1.x86_64 0:1.10-7.el7 gcc.x86_64 0:4.8.5-16.el7 gcc-c++.x86_64 0:4.8.5-16.el7

glibc.i686 0:2.17-196.el7 glibc-devel.i686 0:2.17-196.el7 glibc-devel.x86_64 0:2.17-196.el7

libXi.i686 0:1.7.9-1.el7 libXtst.i686 0:1.2.3-1.el7 libaio-devel.i686 0:0.3.109-13.el7

libaio-devel.x86_64 0:0.3.109-13.el7 libgcc.i686 0:4.8.5-16.el7 libstdc++.i686 0:4.8.5-16.el7

libstdc++-devel.i686 0:4.8.5-16.el7 libstdc++-devel.x86_64 0:4.8.5-16.el7

Dependency Installed:

cpp.x86_64 0:4.8.5-16.el7 glibc-headers.x86_64 0:2.17-196.el7 kernel-headers.x86_64 0:3.10.0-693.el7

libX11.i686 0:1.6.5-1.el7 libXau.i686 0:1.0.8-2.1.el7 libXext.i686 0:1.3.3-3.el7

libaio.i686 0:0.3.109-13.el7 libmpc.x86_64 0:1.0.1-3.el7 libxcb.i686 0:1.12-1.el7

nss-softokn-freebl.i686 0:3.28.3-6.el7

Complete!

[root@rac-node2 yum.repos.d]# yum -y install binutils.x86_64 compat-libcap1.x86_64 gcc.x86_64 gcc-c++.x86_64 glibc.i686 glibc.x86_64 glibc-devel.i686 glibc-devel.x86_64 ksh libaio.i686 libaio.x86_64 libaio-devel.i686 libaio-devel.x86_64 libgcc.i686 libgcc.x86_64 libstdc++.i686 libstdc++.x86_64 libstdc++-devel.i686 libstdc++-devel.x86_64 libXi.i686 libXi.x86_64 libXtst.i686 libXtst.x86_64 make.x86_64 sysstat.x86_64

Loaded plugins: langpacks, product-id, search-disabled-repos, subscription-manager

This system is not registered with an entitlement server. You can use subscription-manager to register.

Package binutils-2.25.1-31.base.el7.x86_64 already installed and latest version

Package compat-libcap1-1.10-7.el7.x86_64 already installed and latest version

Package gcc-4.8.5-16.el7.x86_64 already installed and latest version

Package gcc-c++-4.8.5-16.el7.x86_64 already installed and latest version

Package glibc-2.17-196.el7.i686 already installed and latest version

Package glibc-2.17-196.el7.x86_64 already installed and latest version

Package glibc-devel-2.17-196.el7.i686 already installed and latest version

Package glibc-devel-2.17-196.el7.x86_64 already installed and latest version

Package libaio-0.3.109-13.el7.i686 already installed and latest version

Package libaio-0.3.109-13.el7.x86_64 already installed and latest version

Package libaio-devel-0.3.109-13.el7.i686 already installed and latest version

Package libaio-devel-0.3.109-13.el7.x86_64 already installed and latest version

Package libgcc-4.8.5-16.el7.i686 already installed and latest version

Package libgcc-4.8.5-16.el7.x86_64 already installed and latest version

Package libstdc++-4.8.5-16.el7.i686 already installed and latest version

Package libstdc++-4.8.5-16.el7.x86_64 already installed and latest version

Package libstdc++-devel-4.8.5-16.el7.i686 already installed and latest version

Package libstdc++-devel-4.8.5-16.el7.x86_64 already installed and latest version

Package libXi-1.7.9-1.el7.i686 already installed and latest version

Package libXi-1.7.9-1.el7.x86_64 already installed and latest version

Package libXtst-1.2.3-1.el7.i686 already installed and latest version

Package libXtst-1.2.3-1.el7.x86_64 already installed and latest version

Package 1:make-3.82-23.el7.x86_64 already installed and latest version

Package sysstat-10.1.5-12.el7.x86_64 already installed and latest version

Resolving Dependencies

--> Running transaction check

---> Package ksh.x86_64 0:20120801-34.el7 will be installed

--> Finished Dependency Resolution

Dependencies Resolved

===============================================================================================================================

Package Arch Version Repository Size

===============================================================================================================================

Installing:

ksh x86_64 20120801-34.el7 iso 883 k

Transaction Summary

===============================================================================================================================

Install 1 Package

Total download size: 883 k

Installed size: 3.1 M

Downloading packages:

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : ksh-20120801-34.el7.x86_64 1/1

Verifying : ksh-20120801-34.el7.x86_64 1/1

Installed:

ksh.x86_64 0:20120801-34.el7

Complete!
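The long yum command above is easy to mistype, so a quick re-check on each node can help. The following is a minimal sketch, not part of the original notes: `missing_packages` is a hypothetical helper name, and it only relies on `rpm -q` returning a non-zero status for a package that is not installed.

```shell
#!/bin/sh
# Print each package name that rpm does not report as installed.
# Package names are passed as arguments, e.g. the list from the yum command.
missing_packages() {
    for pkg in "$@"; do
        # rpm -q exits non-zero when the package is not installed
        if ! rpm -q "$pkg" >/dev/null 2>&1; then
            echo "$pkg"
        fi
    done
}

# Example (run on each node; prints nothing when everything is present):
#   missing_packages binutils.x86_64 ksh libaio.i686 libaio.x86_64 sysstat.x86_64
```

An empty result on both nodes suggests the package prerequisites are in place.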

Install dependency packages

- Install the ASMLIB packages

[root@rac-node2 soft]# yum -y install kmod-oracleasm

Loaded plugins: langpacks, product-id, search-disabled-repos, subscription-manager

This system is not registered with an entitlement server. You can use subscription-manager to register.

Resolving Dependencies

--> Running transaction check

---> Package kmod-oracleasm.x86_64 0:2.0.8-19.el7 will be installed

--> Finished Dependency Resolution

Dependencies Resolved

===============================================================================================================================

Package Arch Version Repository Size

===============================================================================================================================

Installing:

kmod-oracleasm x86_64 2.0.8-19.el7 iso 34 k

Transaction Summary

===============================================================================================================================

Install 1 Package

Total download size: 34 k

Installed size: 119 k

Downloading packages:

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : kmod-oracleasm-2.0.8-19.el7.x86_64 1/1

Verifying : kmod-oracleasm-2.0.8-19.el7.x86_64 1/1

Installed:

kmod-oracleasm.x86_64 0:2.0.8-19.el7

Complete!

[root@rac-node2 soft]# rpm -ivh oracleasmlib-2.0.12-1.el7.x86_64.rpm

warning: oracleasmlib-2.0.12-1.el7.x86_64.rpm: Header V3 RSA/SHA256 Signature, key ID ec551f03: NOKEY

Preparing... ################################# [100%]

Updating / installing...

1:oracleasmlib-2.0.12-1.el7 ################################# [100%]

[root@rac-node2 soft]# rpm -ivh oracleasm-support-2.1.11-2.el7.x86_64.rpm

warning: oracleasm-support-2.1.11-2.el7.x86_64.rpm: Header V3 RSA/SHA256 Signature, key ID ec551f03: NOKEY

Preparing... ################################# [100%]

Updating / installing...

1:oracleasm-support-2.1.11-2.el7 ################################# [100%]

Note: Forwarding request to 'systemctl enable oracleasm.service'.

Created symlink from /etc/systemd/system/multi-user.target.wants/oracleasm.service to /usr/lib/systemd/system/oracleasm.service.

Search OTN for the ASMLIB download resources and download the ASMLIB packages for the matching version.

Oracle ASMLib Downloads for Red Hat Enterprise Linux 7

- kmod-oracleasm (the kernel driver package is available directly from Red Hat)

- oracleasmlib-2.0.12-1.el7.x86_64.rpm

- oracleasm-support-2.1.11-2.el7.x86_64.rpm

Set environment variables

- grid user

[root@rac-node1 yum.repos.d]# su - grid

[grid@rac-node1 ~]$ vim .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/.local/bin:$HOME/bin

export PATH

export ORACLE_SID=+ASM1

export ORACLE_BASE=/u01/app/gridbase

export ORACLE_HOME=/u01/app/grid

export PATH=$ORACLE_HOME/bin:$PATH

export NLS_LANG=AMERICAN_AMERICA.UTF8

umask 022

[root@rac-node2 yum.repos.d]# su - grid

[grid@rac-node2 ~]$ vim .bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/.local/bin:$HOME/bin

export PATH

export ORACLE_SID=+ASM2

export ORACLE_BASE=/u01/app/gridbase

export ORACLE_HOME=/u01/app/grid

export PATH=$ORACLE_HOME/bin:$PATH

export NLS_LANG=AMERICAN_AMERICA.UTF8

umask 022
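The two grid profiles above differ only in the ASM instance number (+ASM1 on rac-node1, +ASM2 on rac-node2). As a rough sketch, assuming the node number is passed in by hand, the Oracle-specific lines could be generated rather than typed twice. `grid_env_lines` is a hypothetical helper, not something the original notes use.

```shell
#!/bin/sh
# Print the grid-user environment lines for RAC node number $1 (1, 2, ...).
# Paths are the ones used in the profiles above.
grid_env_lines() {
    node_no=$1
    cat <<EOF
export ORACLE_SID=+ASM${node_no}
export ORACLE_BASE=/u01/app/gridbase
export ORACLE_HOME=/u01/app/grid
export PATH=\$ORACLE_HOME/bin:\$PATH
export NLS_LANG=AMERICAN_AMERICA.UTF8
umask 022
EOF
}

# Example, as the grid user on rac-node2:
#   grid_env_lines 2 >> ~/.bash_profile
```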

设置环境变量

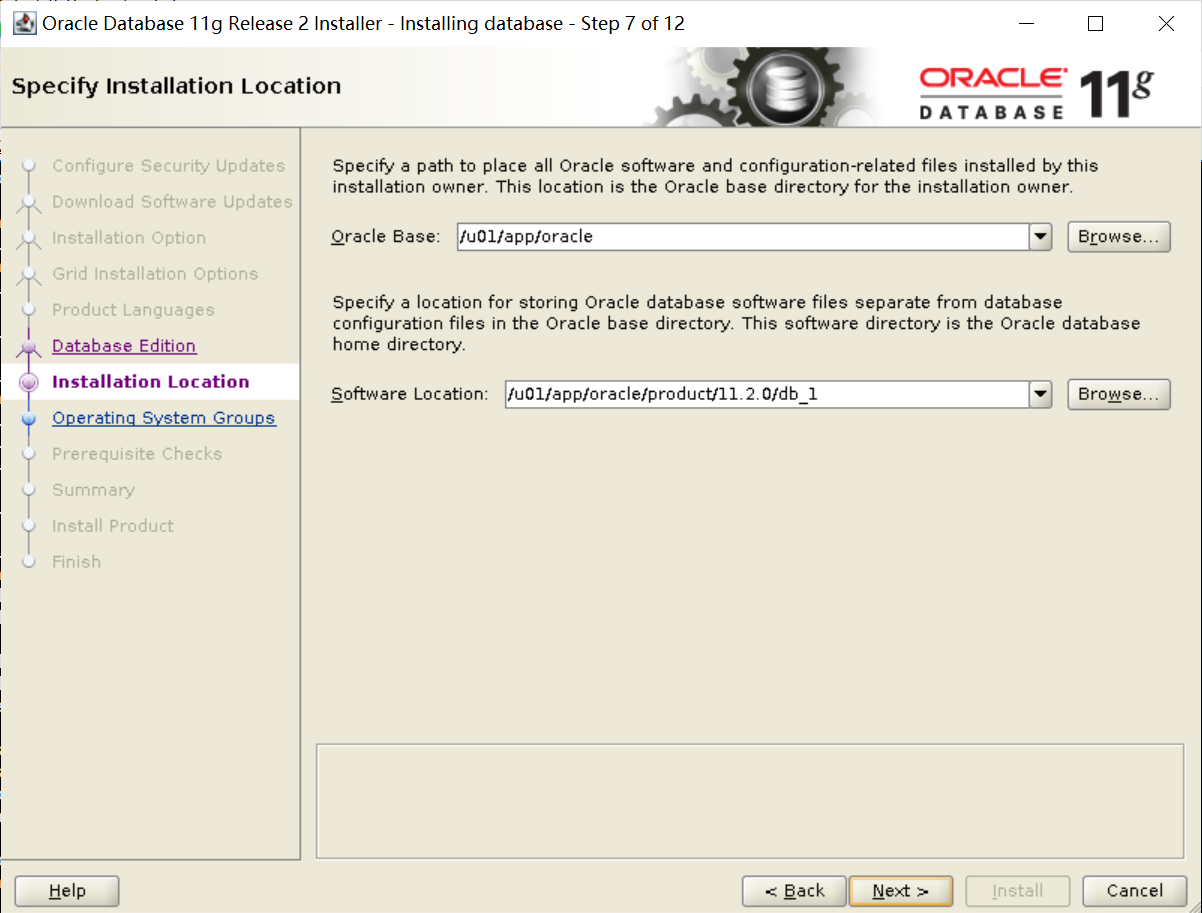

- oracle用户

[root@rac-node1 yum.repos.d]# su - oracle

[oracle@rac-node1 ~]$ vim .bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/.local/bin:$HOME/bin

export PATH

export ORACLE_SID=orcl-rac1

export ORACLE_BASE=/u01/app/oracle

export ORACLE_HOME=$ORACLE_BASE/product/11.2.0/db_1

export PATH=$ORACLE_HOME/bin:$PATH

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

export NLS_LANG=AMERICAN_AMERICA.UTF8

umask 022

[root@rac-node2 yum.repos.d]# su - oracle

[oracle@rac-node2 ~]$ vim .bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/.local/bin:$HOME/bin

export PATH

export ORACLE_SID=orcl-rac2

export ORACLE_BASE=/u01/app/oracle

export ORACLE_HOME=$ORACLE_BASE/product/11.2.0/db_1

export PATH=$ORACLE_HOME/bin:$PATH

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

export NLS_LANG=AMERICAN_AMERICA.UTF8

umask 022
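In the profiles above, the oracle user's ORACLE_HOME sits under its ORACLE_BASE, while the grid user's ORACLE_HOME (/u01/app/grid) is deliberately outside its ORACLE_BASE (/u01/app/gridbase). My understanding is that the Grid Infrastructure installer expects the grid home not to be under an Oracle base, but treat that as an assumption to confirm against the installation guide. The sketch below only tests the path relationship; `path_is_under` is a hypothetical helper name.

```shell
#!/bin/sh
# Succeed when path $1 is equal to, or located below, directory $2.
# Compares path strings only; it does not resolve symlinks.
path_is_under() {
    case "$1/" in
        "$2"/*) return 0 ;;
        *)      return 1 ;;
    esac
}

# Example:
#   path_is_under "$ORACLE_HOME" "$ORACLE_BASE" && echo "home is under base"
```

Note that /u01/app/grid and /u01/app/gridbase share a string prefix, which is why the comparison appends a slash before matching.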

Set system parameters

- /etc/sysctl.conf

[root@rac-node2 yum.repos.d]# vim /etc/sysctl.conf

[root@rac-node2 yum.repos.d]# sysctl -p

fs.aio-max-nr = 1048576

fs.file-max = 6815744

kernel.shmall = 943718

kernel.shmmax = 3865470566

kernel.shmmni = 4096

kernel.sem = 250 32000 100 128

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 262144

net.core.rmem_max = 4194304

net.core.wmem_default = 262144

net.core.wmem_max = 1048576

[root@rac-node2 yum.repos.d]# vim /etc/security/limits.conf

oracle soft nproc 2047

oracle hard nproc 16384

oracle soft nofile 1024

oracle hard nofile 65536

oracle soft stack 10240

oracle hard stack 32768

grid soft nproc 2047

grid hard nproc 16384

grid soft nofile 1024

grid hard nofile 65536

grid soft stack 10240

grid hard stack 32768

- /etc/security/limits.conf
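Looking back at the /etc/sysctl.conf values: kernel.shmmax is expressed in bytes, while kernel.shmall is expressed in memory pages, so the two should agree once the page size is taken into account. The sketch below shows the arithmetic, assuming a 4096-byte page (the usual x86_64 value; check with `getconf PAGE_SIZE`). `shmall_pages` is a hypothetical helper name.

```shell
#!/bin/sh
# Convert a shmmax value in bytes ($1) to a shmall value in pages,
# given the page size in bytes ($2). Integer division rounds down.
shmall_pages() {
    shmmax_bytes=$1
    page_size=$2
    echo $(( shmmax_bytes / page_size ))
}

# Example with the values from this document:
#   shmall_pages 3865470566 4096
# On a live system:
#   shmall_pages "$(sysctl -n kernel.shmmax)" "$(getconf PAGE_SIZE)"
```

With the numbers used here the result matches the configured kernel.shmall of 943718, and 3865470566 bytes is about 90% of 4 GiB.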

Disable NTP

[root@rac-node1 yum.repos.d]# timedatectl

Local time: Sun 2022-04-24 01:21:19 CST

Universal time: Sat 2022-04-23 17:21:19 UTC

RTC time: Sat 2022-04-23 17:21:14

Time zone: Asia/Shanghai (CST, +0800)

NTP enabled: no

NTP synchronized: no

RTC in local TZ: no

DST active: n/a

[root@rac-node1 yum.repos.d]# systemctl status chronyd

● chronyd.service - NTP client/server

Loaded: loaded (/usr/lib/systemd/system/chronyd.service; disabled; vendor preset: enabled)

Active: inactive (dead)

Docs: man:chronyd(8)

man:chrony.conf(5)
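The output above shows chronyd disabled and inactive. Besides the service state, the Clusterware time service (CTSS) is commonly described as looking for an NTP configuration file when deciding whether to run in active mode, so leftover config files are worth listing. This is a hedged sketch: `time_sync_configs` is a hypothetical helper, and whether /etc/chrony.conf matters for 11gR2 (as opposed to /etc/ntp.conf) is an assumption to verify.

```shell
#!/bin/sh
# List time-sync configuration files found under root directory $1
# (defaults to /). Prints nothing when neither file exists.
time_sync_configs() {
    root=${1:-/}
    for f in etc/ntp.conf etc/chrony.conf; do
        if [ -f "${root%/}/$f" ]; then
            echo "/$f"
        fi
    done
}

# Example (run on each node):
#   time_sync_configs
# Stopping and disabling the service itself, as root:
#   systemctl stop chronyd
#   systemctl disable chronyd
```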

Configure hosts

[root@rac-node2 yum.repos.d]# vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

#public:

192.168.88.100 rac-node1

192.168.88.101 rac-node2

#vip:

192.168.88.200 node1-vip

192.168.88.201 node2-vip

#priv

192.168.112.100 node1-priv

192.168.112.101 node2-priv

#SCAN

192.168.88.188 rac-scan
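Both nodes need the same public, VIP, private and SCAN entries, so a small lookup can confirm nothing was dropped while editing. The following is a sketch only; `hosts_ip` and `missing_rac_names` are hypothetical helper names, and the name list is simply the one from the /etc/hosts file above.

```shell
#!/bin/sh
# Print the IP address that hosts file $1 maps to host name $2.
hosts_ip() {
    awk -v name="$2" '
        /^[ \t]*#/ { next }    # skip comment lines such as "#public:"
        { for (i = 2; i <= NF; i++) if ($i == name) { print $1; exit } }
    ' "$1"
}

# Print every expected RAC name that hosts file $1 does not define.
missing_rac_names() {
    for n in rac-node1 rac-node2 node1-vip node2-vip \
             node1-priv node2-priv rac-scan; do
        [ -n "$(hosts_ip "$1" "$n")" ] || echo "$n"
    done
}

# Example:
#   missing_rac_names /etc/hosts
```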

Configure ASM disk groups

[root@rac-node1 ~]# fdisk -l |grep 'Disk /dev'

Disk /dev/sdb: 2147 MB, 2147483648 bytes, 4194304 sectors

Disk /dev/sdd: 2147 MB, 2147483648 bytes, 4194304 sectors

Disk /dev/sdf: 10.7 GB, 10737418240 bytes, 20971520 sectors

Disk /dev/sde: 5368 MB, 5368709120 bytes, 10485760 sectors

Disk /dev/sda: 26.8 GB, 26843545600 bytes, 52428800 sectors

Disk /dev/sdc: 2147 MB, 2147483648 bytes, 4194304 sectors

Disk /dev/mapper/rhel-root: 6442 MB, 6442450944 bytes, 12582912 sectors

Disk /dev/mapper/rhel-swap: 4294 MB, 4294967296 bytes, 8388608 sectors

Disk /dev/mapper/rhel-u01: 15.9 GB, 15879634944 bytes, 31014912 sectors

[root@rac-node1 ~]# fdisk /dev/sdb

Welcome to fdisk (util-linux 2.23.2).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Device does not contain a recognized partition table

Building a new DOS disklabel with disk identifier 0xe972998e.

Command (m for help): p

Disk /dev/sdb: 2147 MB, 2147483648 bytes, 4194304 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0xe972998e

Device Boot Start End Blocks Id System

Command (m for help): n

Partition type:

p primary (0 primary, 0 extended, 4 free)

e extended

Select (default p): p

Partition number (1-4, default 1):

First sector (2048-4194303, default 2048):

Using default value 2048

Last sector, +sectors or +size{K,M,G} (2048-4194303, default 4194303):

Using default value 4194303

Partition 1 of type Linux and of size 2 GiB is set

Command (m for help): p

Disk /dev/sdb: 2147 MB, 2147483648 bytes, 4194304 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0xe972998e

Device Boot Start End Blocks Id System

/dev/sdb1 2048 4194303 2096128 83 Linux

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@rac-node1 ~]# fdisk /dev/sdc

Welcome to fdisk (util-linux 2.23.2).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Device does not contain a recognized partition table

Building a new DOS disklabel with disk identifier 0xf515f01e.

Command (m for help): p

Disk /dev/sdc: 2147 MB, 2147483648 bytes, 4194304 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0xf515f01e

Device Boot Start End Blocks Id System

Command (m for help): n

Partition type:

p primary (0 primary, 0 extended, 4 free)

e extended

Select (default p): p

Partition number (1-4, default 1):

First sector (2048-4194303, default 2048):

Using default value 2048

Last sector, +sectors or +size{K,M,G} (2048-4194303, default 4194303):

Using default value 4194303

Partition 1 of type Linux and of size 2 GiB is set

Command (m for help): p

Disk /dev/sdc: 2147 MB, 2147483648 bytes, 4194304 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0xf515f01e

Device Boot Start End Blocks Id System

/dev/sdc1 2048 4194303 2096128 83 Linux

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@rac-node1 ~]# fdisk /dev/sdd

Welcome to fdisk (util-linux 2.23.2).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Device does not contain a recognized partition table

Building a new DOS disklabel with disk identifier 0xe11aecd6.

Command (m for help): p

Disk /dev/sdd: 2147 MB, 2147483648 bytes, 4194304 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0xe11aecd6

Device Boot Start End Blocks Id System

Command (m for help): n

Partition type:

p primary (0 primary, 0 extended, 4 free)

e extended

Select (default p): p

Partition number (1-4, default 1):

First sector (2048-4194303, default 2048):

Using default value 2048

Last sector, +sectors or +size{K,M,G} (2048-4194303, default 4194303):

Using default value 4194303

Partition 1 of type Linux and of size 2 GiB is set

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@rac-node1 ~]# fdisk /dev/sde

Welcome to fdisk (util-linux 2.23.2).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Device does not contain a recognized partition table

Building a new DOS disklabel with disk identifier 0x75a77390.

Command (m for help): p

Disk /dev/sde: 5368 MB, 5368709120 bytes, 10485760 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0x75a77390

Device Boot Start End Blocks Id System

Command (m for help): n

Partition type:

p primary (0 primary, 0 extended, 4 free)

e extended

Select (default p): p

Partition number (1-4, default 1):

First sector (2048-10485759, default 2048):

Using default value 2048

Last sector, +sectors or +size{K,M,G} (2048-10485759, default 10485759):

Using default value 10485759

Partition 1 of type Linux and of size 5 GiB is set

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@rac-node1 ~]# fdisk /dev/sdf

Welcome to fdisk (util-linux 2.23.2).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Device does not contain a recognized partition table

Building a new DOS disklabel with disk identifier 0x36c965ec.

Command (m for help): p

Disk /dev/sdf: 10.7 GB, 10737418240 bytes, 20971520 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0x36c965ec

Device Boot Start End Blocks Id System

Command (m for help): n

Partition type:

p primary (0 primary, 0 extended, 4 free)

e extended

Select (default p): p

Partition number (1-4, default 1):

First sector (2048-20971519, default 2048):

Using default value 2048

Last sector, +sectors or +size{K,M,G} (2048-20971519, default 20971519):

Using default value 20971519

Partition 1 of type Linux and of size 10 GiB is set

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@rac-node1 ~]# partprobe

Warning: Unable to open /dev/sr0 read-write (Read-only file system). /dev/sr0 has been opened read-only.

[root@rac-node1 ~]# fdisk -l |grep '/dev/'

Disk /dev/sdb: 2147 MB, 2147483648 bytes, 4194304 sectors

/dev/sdb1 2048 4194303 2096128 83 Linux

Disk /dev/sdd: 2147 MB, 2147483648 bytes, 4194304 sectors

/dev/sdd1 2048 4194303 2096128 83 Linux

Disk /dev/sdf: 10.7 GB, 10737418240 bytes, 20971520 sectors

/dev/sdf1 2048 20971519 10484736 83 Linux

Disk /dev/sde: 5368 MB, 5368709120 bytes, 10485760 sectors

/dev/sde1 2048 10485759 5241856 83 Linux

Disk /dev/sda: 26.8 GB, 26843545600 bytes, 52428800 sectors

/dev/sda1 2048 6143 2048 83 Linux

/dev/sda2 * 6144 415743 204800 83 Linux

/dev/sda3 415744 52412415 25998336 8e Linux LVM

Disk /dev/sdc: 2147 MB, 2147483648 bytes, 4194304 sectors

/dev/sdc1 2048 4194303 2096128 83 Linux

Disk /dev/mapper/rhel-root: 6442 MB, 6442450944 bytes, 12582912 sectors

Disk /dev/mapper/rhel-swap: 4294 MB, 4294967296 bytes, 8388608 sectors

Disk /dev/mapper/rhel-u01: 15.9 GB, 15879634944 bytes, 31014912 sectors

[root@rac-node2 ~]# fdisk -l |grep '/dev/'

Disk /dev/sdb: 2147 MB, 2147483648 bytes, 4194304 sectors

/dev/sdb1 2048 4194303 2096128 83 Linux

Disk /dev/sde: 5368 MB, 5368709120 bytes, 10485760 sectors

/dev/sde1 2048 10485759 5241856 83 Linux

Disk /dev/sda: 21.5 GB, 21474836480 bytes, 41943040 sectors

/dev/sda1 2048 6143 2048 83 Linux

/dev/sda2 * 6144 415743 204800 83 Linux

/dev/sda3 415744 41943039 20763648 8e Linux LVM

Disk /dev/sdf: 10.7 GB, 10737418240 bytes, 20971520 sectors

/dev/sdf1 2048 20971519 10484736 83 Linux

Disk /dev/sdc: 2147 MB, 2147483648 bytes, 4194304 sectors

/dev/sdc1 2048 4194303 2096128 83 Linux

Disk /dev/sdd: 2147 MB, 2147483648 bytes, 4194304 sectors

/dev/sdd1 2048 4194303 2096128 83 Linux

Disk /dev/mapper/rhel-root: 6224 MB, 6224347136 bytes, 12156928 sectors

Disk /dev/mapper/rhel-swap: 4294 MB, 4294967296 bytes, 8388608 sectors

Disk /dev/mapper/rhel-u01: 10.7 GB, 10737418240 bytes, 20971520 sectors

- Disk partitioning
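Each fdisk session above uses the same keystrokes: n, p, three defaults accepted with Enter (partition number, first sector, last sector), then w. As a sketch, those answers can be produced by a small function and piped into fdisk. `fdisk_answers` is a hypothetical helper name, and because writing a partition table is destructive, the actual fdisk call is shown only as a comment.

```shell
#!/bin/sh
# Emit the answers typed into fdisk in the transcript, one per line:
# n (new), p (primary), three empty lines that accept the defaults
# (partition 1, first sector, last sector), then w (write and exit).
fdisk_answers() {
    printf 'n\np\n\n\n\nw\n'
}

# Destructive: double-check the device names before running, on node1 only.
#   for d in /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf; do
#       fdisk_answers | fdisk "$d"
#   done
#   partprobe
```

The shared disks only need to be partitioned from one node; the other node sees the same partitions, as the fdisk -l output from rac-node2 shows.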

Configure ASM disk groups

[root@rac-node1 ~]# oracleasm configure -i

Configuring the Oracle ASM library driver.

This will configure the on-boot properties of the Oracle ASM library

driver. The following questions will determine whether the driver is

loaded on boot and what permissions it will have. The current values

will be shown in brackets ('[]'). Hitting <ENTER> without typing an

answer will keep that current value. Ctrl-C will abort.

Default user to own the driver interface []: grid

Default group to own the driver interface []: asmadmin

Start Oracle ASM library driver on boot (y/n) [n]: y

Scan for Oracle ASM disks on boot (y/n) [y]: y

Writing Oracle ASM library driver configuration: done

[root@rac-node1 ~]# oracleasm configure

ORACLEASM_ENABLED=true

ORACLEASM_UID=grid

ORACLEASM_GID=asmadmin

ORACLEASM_SCANBOOT=true

ORACLEASM_SCANORDER=""

ORACLEASM_SCANEXCLUDE=""

ORACLEASM_SCAN_DIRECTORIES=""

ORACLEASM_USE_LOGICAL_BLOCK_SIZE="false"

[root@rac-node1 ~]# oracleasm init

Creating /dev/oracleasm mount point: /dev/oracleasm

Loading module "oracleasm": oracleasm

Configuring "oracleasm" to use device physical block size

Mounting ASMlib driver filesystem: /dev/oracleasm

[root@rac-node2 ~]# oracleasm configure -i

Configuring the Oracle ASM library driver.

This will configure the on-boot properties of the Oracle ASM library

driver. The following questions will determine whether the driver is

loaded on boot and what permissions it will have. The current values

will be shown in brackets ('[]'). Hitting <ENTER> without typing an

answer will keep that current value. Ctrl-C will abort.

Default user to own the driver interface []: grid

Default group to own the driver interface []: asmadmin

Start Oracle ASM library driver on boot (y/n) [n]: y

Scan for Oracle ASM disks on boot (y/n) [y]: y

Writing Oracle ASM library driver configuration: done

[root@rac-node2 ~]# oracleasm init

Creating /dev/oracleasm mount point: /dev/oracleasm

Loading module "oracleasm": oracleasm

Configuring "oracleasm" to use device physical block size

Mounting ASMlib driver filesystem: /dev/oracleasm

- Configure and initialize the Oracle ASM Library Driver software
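Running `oracleasm configure` without `-i` prints the stored settings as KEY=value lines, as in the rac-node1 transcript. A small filter makes it easy to compare one setting across nodes. This is a sketch; `asm_conf_value` is a hypothetical helper name and it only parses text in that KEY=value format.

```shell
#!/bin/sh
# Read KEY=value lines from stdin (the format printed by
# `oracleasm configure`) and print the value of key $1,
# with any surrounding double quotes removed.
asm_conf_value() {
    sed -n "s/^$1=//p" | tr -d '"'
}

# Example (run as root on each node):
#   oracleasm configure | asm_conf_value ORACLEASM_UID
#   oracleasm configure | asm_conf_value ORACLEASM_GID
```

For the setup in these notes the two values would be expected to be grid and asmadmin on both nodes.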

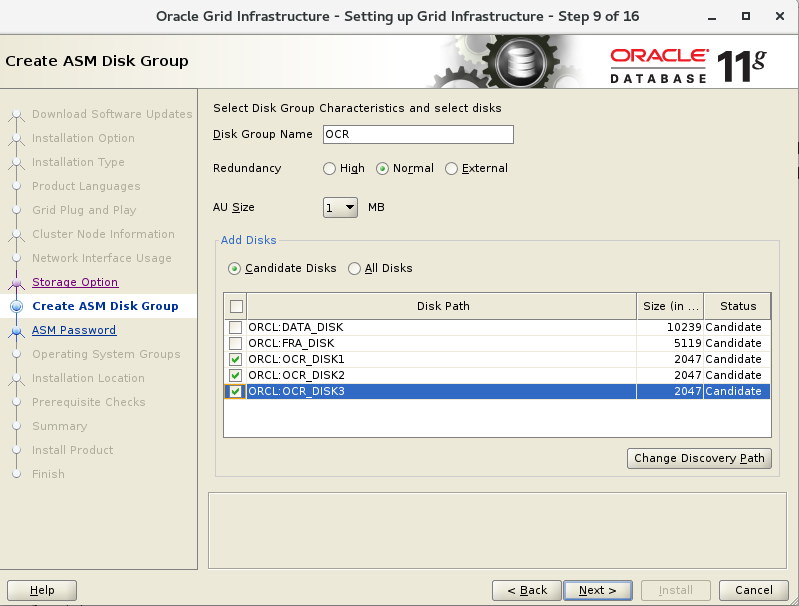

Configure ASM disk groups

node1

[root@rac-node1 ~]# oracleasm createdisk OCR_DISK1 /dev/sdb1

Writing disk header: done

Instantiating disk: done

[root@rac-node1 ~]# oracleasm createdisk OCR_DISK2 /dev/sdc1

Writing disk header: done

Instantiating disk: done

[root@rac-node1 ~]# oracleasm createdisk OCR_DISK3 /dev/sdd1

Writing disk header: done

Instantiating disk: done

[root@rac-node1 ~]# oracleasm createdisk FRA_DISK /dev/sde1

Writing disk header: done

Instantiating disk: done

[root@rac-node1 ~]# oracleasm createdisk DATA_DISK /dev/sdf1

Writing disk header: done

Instantiating disk: done

[root@rac-node1 ~]# oracleasm listdisks

DATA_DISK

FRA_DISK

OCR_DISK1

OCR_DISK2

OCR_DISK3

node2

[root@rac-node2 ~]# oracleasm scandisks

Reloading disk partitions: done

Cleaning any stale ASM disks...

Scanning system for ASM disks...

Instantiating disk "OCR_DISK1"

Instantiating disk "FRA_DISK"

Instantiating disk "DATA_DISK"

Instantiating disk "OCR_DISK2"

Instantiating disk "OCR_DISK3"

[root@rac-node2 ~]# oracleasm listdisks

DATA_DISK

FRA_DISK

OCR_DISK1

OCR_DISK2

OCR_DISK3

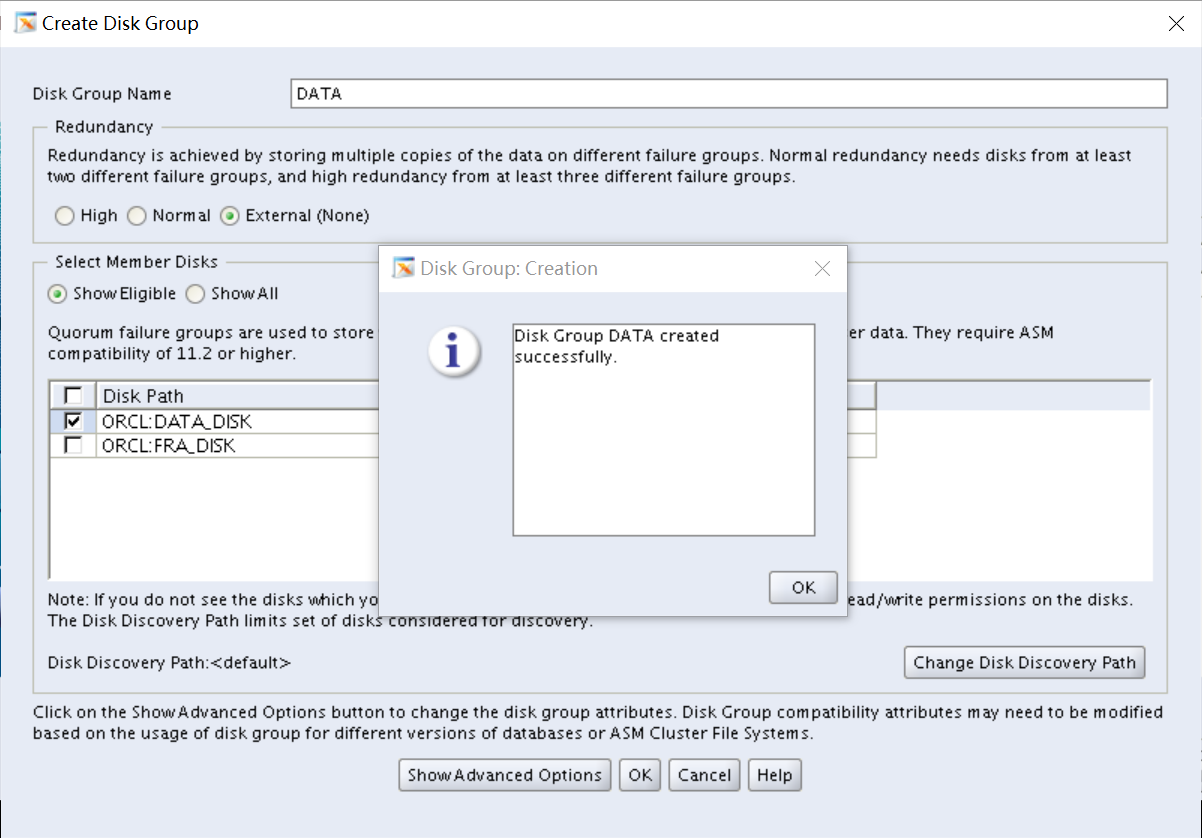

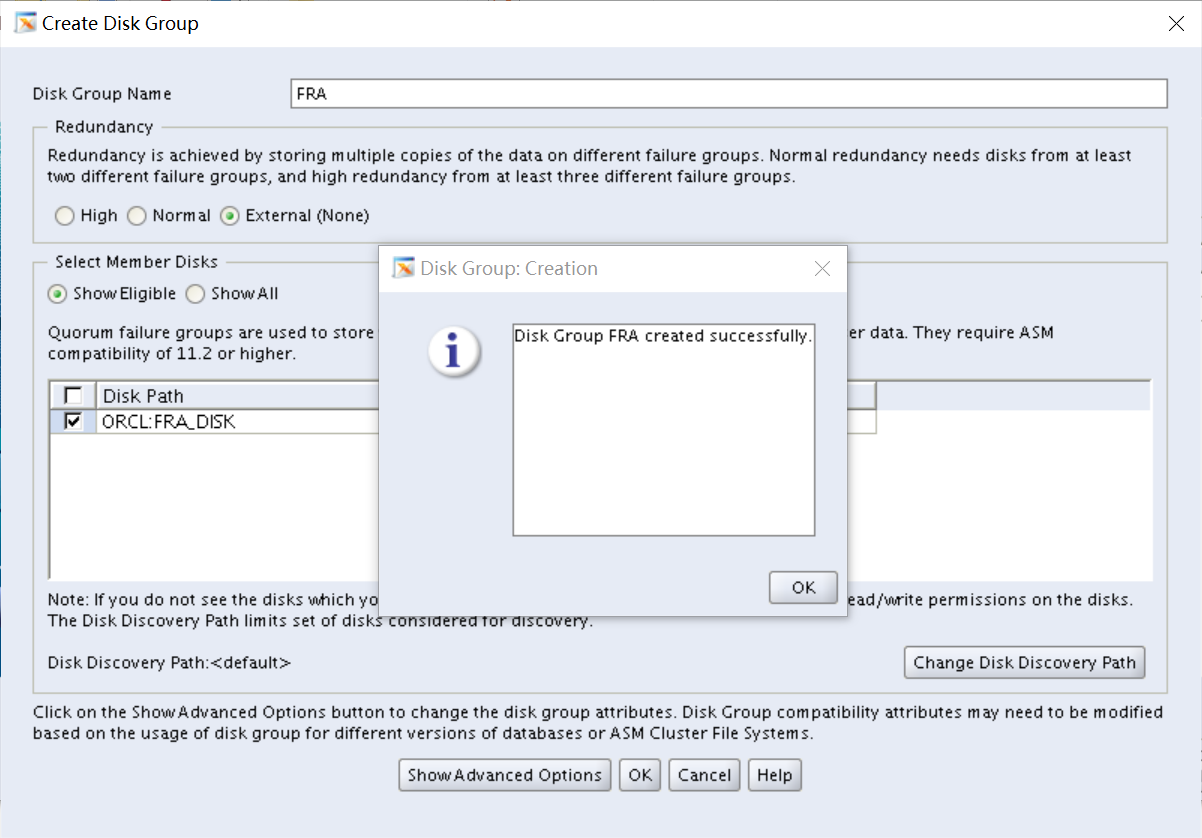

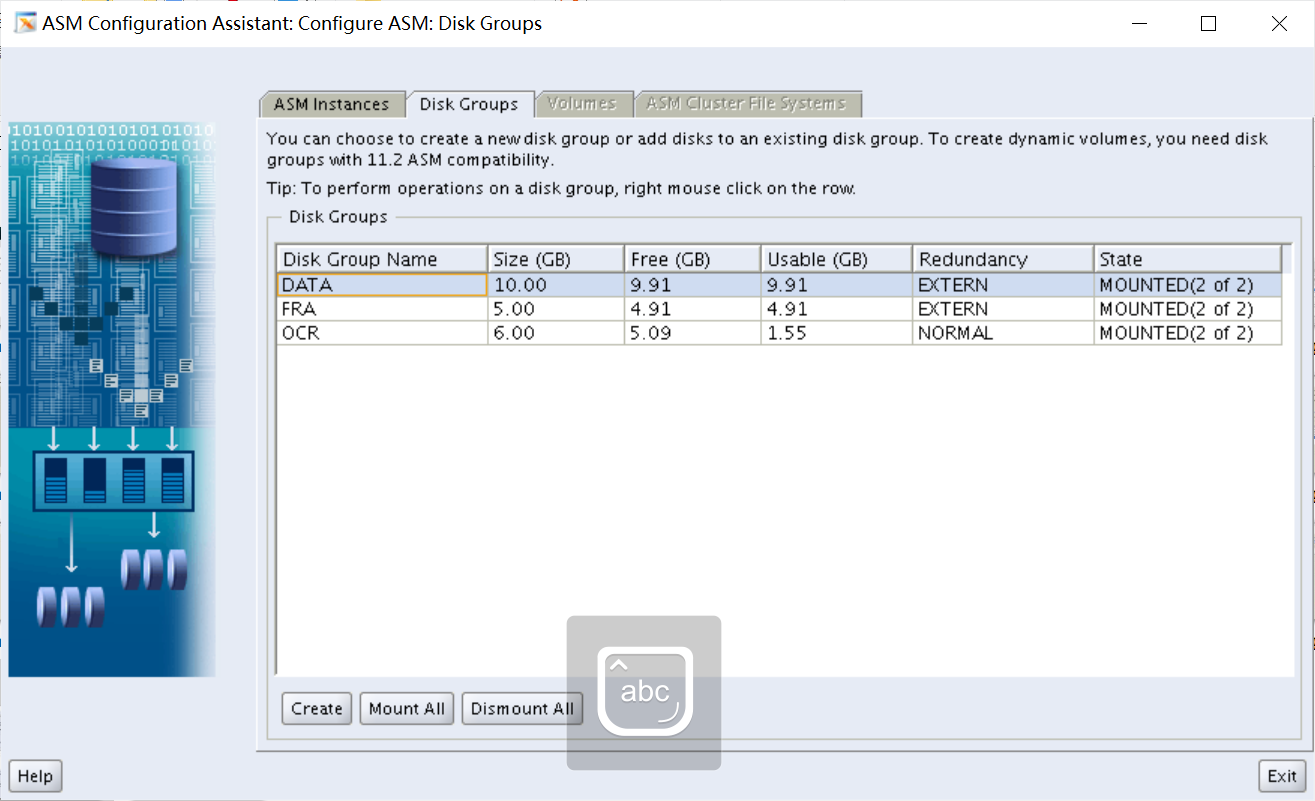

- Create the ASM disk groups
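The five createdisk calls on node1 follow one pattern: a label and a partition. A dry-run sketch like the one below prints the commands from a label/device list so they can be reviewed before anything is written. `createdisk_commands` is a hypothetical helper name; it never runs oracleasm itself.

```shell
#!/bin/sh
# Read "LABEL DEVICE" pairs from stdin and print one
# `oracleasm createdisk` command per pair (dry run only).
createdisk_commands() {
    while read -r label dev; do
        # skip blank lines
        [ -n "$label" ] || continue
        echo "oracleasm createdisk $label $dev"
    done
}

# Example with the mapping used in this document:
#   createdisk_commands <<'LIST'
#   OCR_DISK1 /dev/sdb1
#   OCR_DISK2 /dev/sdc1
#   OCR_DISK3 /dev/sdd1
#   FRA_DISK  /dev/sde1
#   DATA_DISK /dev/sdf1
#   LIST
```

As in the transcript, the disks are created on node1 only; node2 picks them up with `oracleasm scandisks`.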

Set up user equivalence

node1

[root@rac-node1 ~]# ssh-keygen -t rsa

[root@rac-node1 ~]# ssh-keygen -t dsa

[root@rac-node1 ~]# chmod 755 ~/.ssh

[root@rac-node1 ~]# su - grid

[grid@rac-node1 ~]$ ssh-keygen -t rsa

[grid@rac-node1 ~]$ ssh-keygen -t dsa

[grid@rac-node1 ~]$ chmod 755 ~/.ssh

[grid@rac-node1 ~]$ exit

[root@rac-node1 ~]# su - oracle

[oracle@rac-node1 ~]$ ssh-keygen -t rsa

[oracle@rac-node1 ~]$ ssh-keygen -t dsa

[oracle@rac-node1 ~]$ chmod 755 ~/.ssh

[oracle@rac-node1 ~]$ exit

node2

[root@rac-node2 ~]# ssh-keygen -t rsa

[root@rac-node2 ~]# ssh-keygen -t dsa

[root@rac-node2 ~]# chmod 755 ~/.ssh

[root@rac-node2 ~]# su - grid

[grid@rac-node2 ~]$ ssh-keygen -t rsa

[grid@rac-node2 ~]$ ssh-keygen -t dsa

[grid@rac-node2 ~]$ chmod 755 ~/.ssh

[grid@rac-node2 ~]$ exit

[root@rac-node2 ~]# su - oracle

[oracle@rac-node2 ~]$ ssh-keygen -t rsa

[oracle@rac-node2 ~]$ ssh-keygen -t dsa

[oracle@rac-node2 ~]$ chmod 755 ~/.ssh

[oracle@rac-node2 ~]$ exit

- Create keys
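The `chmod 755 ~/.ssh` step matters because sshd, with its default StrictModes setting, is expected to ignore keys when the relevant directories or files are writable by group or others. The sketch below checks just that property from the `ls -ld` mode string; `not_group_world_writable` is a hypothetical helper name.

```shell
#!/bin/sh
# Succeed when file or directory $1 is NOT writable by group or others.
# Reads the mode string from ls -ld, e.g. "drwxr-xr-x":
# character 6 is the group write bit, character 9 the other write bit.
not_group_world_writable() {
    mode=$(ls -ld "$1" | cut -c1-10)
    g_w=$(printf '%s' "$mode" | cut -c6)
    o_w=$(printf '%s' "$mode" | cut -c9)
    [ "$g_w" != "w" ] && [ "$o_w" != "w" ]
}

# Example (run as root, grid and oracle on both nodes):
#   not_group_world_writable ~/.ssh || echo "fix permissions on ~/.ssh"
```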

- Generate the authorized_keys file and test passwordless login

node1

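[root@rac-node1 ~]# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[root@rac-node1 ~]# cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

[root@rac-node1 ~]# ssh rac-node2 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys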

root@rac-node2's password:

[root@rac-node1 ~]# ssh rac-node2 cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

root@rac-node2's password:

[root@rac-node1 ~]# scp ~/.ssh/authorized_keys root@rac-node2:~/.ssh/authorized_keys

root@rac-node2's password:

authorized_keys 100% 2000 1.1MB/s 00:00

[root@rac-node1 ~]# ssh rac-node1 date

The authenticity of host 'rac-node1 (192.168.88.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node1,192.168.88.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:27:43 CST 2022

[root@rac-node1 ~]# ssh rac-node2 date

Sun Apr 24 14:27:49 CST 2022

[root@rac-node1 ~]# ssh node1-priv date

The authenticity of host 'node1-priv (192.168.112.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1-priv,192.168.112.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:28:05 CST 2022

[root@rac-node1 ~]# ssh node2-priv date

The authenticity of host 'node2-priv (192.168.112.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node2-priv,192.168.112.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:28:12 CST 2022

node2

[root@rac-node2 ~]# ssh rac-node1 date

The authenticity of host 'rac-node1 (192.168.88.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node1,192.168.88.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:30:05 CST 2022

[root@rac-node2 ~]# ssh rac-node2 date

The authenticity of host 'rac-node2 (192.168.88.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node2,192.168.88.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:30:11 CST 2022

[root@rac-node2 ~]# ssh node1-priv date

The authenticity of host 'node1-priv (192.168.112.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1-priv,192.168.112.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:30:28 CST 2022

[root@rac-node2 ~]# ssh node2-priv date

The authenticity of host 'node2-priv (192.168.112.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node2-priv,192.168.112.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:30:34 CST 2022
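The manual `ssh <host> date` checks above can be repeated in a loop once the host keys have been accepted. The sketch below is one way to do it; `check_equivalence` is a hypothetical helper name. It uses ssh's BatchMode option so that a missing key results in a failure rather than a password prompt, which also means a host that is not yet in known_hosts is reported as failed.

```shell
#!/bin/sh
# Run `date` over ssh on every host name given as an argument.
# BatchMode=yes makes ssh fail instead of asking for a password
# or for host-key confirmation.
check_equivalence() {
    failed=0
    for host in "$@"; do
        if ssh -o BatchMode=yes "$host" date >/dev/null 2>&1; then
            echo "ok   $host"
        else
            echo "FAIL $host"
            failed=1
        fi
    done
    return "$failed"
}

# Example (run as root, grid and oracle on both nodes):
#   check_equivalence rac-node1 rac-node2 node1-priv node2-priv
```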

node1

[root@rac-node1 ~]# su - grid

Last login: Sun Apr 24 14:04:20 CST 2022 on pts/0

[grid@rac-node1 ~]$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[grid@rac-node1 ~]$ cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

[grid@rac-node1 ~]$ ssh rac-node2 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

The authenticity of host 'rac-node2 (192.168.88.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node2,192.168.88.101' (ECDSA) to the list of known hosts.

grid@rac-node2's password:

[grid@rac-node1 ~]$ ssh rac-node2 cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

grid@rac-node2's password:

[grid@rac-node1 ~]$ scp ~/.ssh/authorized_keys grid@rac-node2:~/.ssh/authorized_keys

grid@rac-node2's password:

authorized_keys 100% 2000 1.3MB/s 00:00

[grid@rac-node1 ~]$ ssh rac-node1 date

The authenticity of host 'rac-node1 (192.168.88.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node1,192.168.88.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:35:08 CST 2022

[grid@rac-node1 ~]$ ssh rac-node2 date

Sun Apr 24 14:35:14 CST 2022

[grid@rac-node1 ~]$ ssh node1-priv date

The authenticity of host 'node1-priv (192.168.112.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1-priv,192.168.112.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:35:24 CST 2022

[grid@rac-node1 ~]$ ssh node2-priv date

The authenticity of host 'node2-priv (192.168.112.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node2-priv,192.168.112.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:35:31 CST 2022

node2

[grid@rac-node2 ~]$ ssh rac-node1 date

The authenticity of host 'rac-node1 (192.168.88.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node1,192.168.88.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:36:13 CST 2022

[grid@rac-node2 ~]$ ssh rac-node2 date

The authenticity of host 'rac-node2 (192.168.88.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node2,192.168.88.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:36:18 CST 2022

[grid@rac-node2 ~]$ ssh node1-priv date

The authenticity of host 'node1-priv (192.168.112.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1-priv,192.168.112.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:36:30 CST 2022

[grid@rac-node2 ~]$ ssh node2-priv date

The authenticity of host 'node2-priv (192.168.112.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node2-priv,192.168.112.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:36:36 CST 2022

node1

[root@rac-node1 ~]# su - oracle

Last login: Sun Apr 24 14:07:51 CST 2022 on pts/0

[oracle@rac-node1 ~]$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[oracle@rac-node1 ~]$ cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

[oracle@rac-node1 ~]$ ssh rac-node2 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

The authenticity of host 'rac-node2 (192.168.88.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node2,192.168.88.101' (ECDSA) to the list of known hosts.

oracle@rac-node2's password:

[oracle@rac-node1 ~]$ ssh rac-node2 cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

oracle@rac-node2's password:

[oracle@rac-node1 ~]$ scp ~/.ssh/authorized_keys oracle@rac-node2:~/.ssh/authorized_keys

oracle@rac-node2's password:

authorized_keys 100% 2008 552.0KB/s 00:00
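The `cat ... >> ~/.ssh/authorized_keys` steps above append unconditionally, so repeating them leaves duplicate entries. A minimal sketch of an idempotent variant follows; the function name `append_key_once` is hypothetical and not part of the original procedure.

```shell
# Sketch: append each public key line to an authorized_keys file only if
# that exact line is not already there. append_key_once is a made-up helper.
append_key_once() {
    key_file=$1     # e.g. ~/.ssh/id_rsa.pub
    auth_file=$2    # e.g. ~/.ssh/authorized_keys
    touch "$auth_file"
    while IFS= read -r key; do
        # Skip empty lines
        [ -n "$key" ] || continue
        # -x: whole-line match, -F: fixed string, -q: quiet
        grep -qxF -e "$key" "$auth_file" || printf '%s\n' "$key" >> "$auth_file"
    done < "$key_file"
    # Keep the file private to its owner
    chmod 600 "$auth_file"
}
```

For example, `append_key_once ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys` could stand in for the first `cat` command; running it a second time leaves the file unchanged.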

[oracle@rac-node1 ~]$ ssh rac-node1 date

The authenticity of host 'rac-node1 (192.168.88.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node1,192.168.88.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:38:59 CST 2022

[oracle@rac-node1 ~]$ ssh rac-node2 date

Sun Apr 24 14:39:03 CST 2022

[oracle@rac-node1 ~]$ ssh node1-priv date

The authenticity of host 'node1-priv (192.168.112.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1-priv,192.168.112.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:39:15 CST 2022

[oracle@rac-node1 ~]$ ssh node2-priv date

The authenticity of host 'node2-priv (192.168.112.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node2-priv,192.168.112.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:39:21 CST 2022

node2

[root@rac-node2 ~]# su - oracle

Last login: Sun Apr 24 14:17:57 CST 2022 on pts/0

[oracle@rac-node2 ~]$ ssh rac-node1 date

The authenticity of host 'rac-node1 (192.168.88.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node1,192.168.88.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:39:55 CST 2022

[oracle@rac-node2 ~]$ ssh rac-node2 date

The authenticity of host 'rac-node2 (192.168.88.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac-node2,192.168.88.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:40:00 CST 2022

[oracle@rac-node2 ~]$ ssh node1-priv date

The authenticity of host 'node1-priv (192.168.112.100)' can't be established.

ECDSA key fingerprint is SHA256:cc2lhHe4zEYGxbtI6q0wr7r/fd477b9z1NNUG9K/rtY.

ECDSA key fingerprint is MD5:16:88:d8:b6:1d:78:fd:f9:b7:72:dd:85:15:77:b3:37.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1-priv,192.168.112.100' (ECDSA) to the list of known hosts.

Sun Apr 24 14:40:11 CST 2022

[oracle@rac-node2 ~]$ ssh node2-priv date

The authenticity of host 'node2-priv (192.168.112.101)' can't be established.

ECDSA key fingerprint is SHA256:4iT7tVqXvNfiCysR4IQQmuG/4+xK+CFsvetdrOv4YdE.

ECDSA key fingerprint is MD5:50:12:2c:33:ef:32:9e:22:8d:3b:01:fb:d5:20:4b:5b.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node2-priv,192.168.112.101' (ECDSA) to the list of known hosts.

Sun Apr 24 14:40:18 CST 2022
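The `ssh <host> date` checks above are repeated by hand for each user on each node. They could be looped roughly as follows. This is only a sketch: the host list is copied from the transcript, and `SSH_BIN` is a hypothetical override added here so the loop can be dry-run.

```shell
# Hostnames as they appear in the transcript; adjust for other clusters.
HOSTS="rac-node1 rac-node2 node1-priv node2-priv"
# SSH_BIN is a hypothetical override for dry runs (e.g. SSH_BIN=true).
SSH_BIN="${SSH_BIN:-ssh}"

check_ssh_equivalence() {
    failed=0
    for host in $HOSTS; do
        # BatchMode=yes makes ssh fail instead of prompting, so a host that
        # still asks for a password or host-key confirmation shows as FAIL.
        if $SSH_BIN -o BatchMode=yes -o ConnectTimeout=5 "$host" date >/dev/null 2>&1; then
            echo "OK   $host"
        else
            echo "FAIL $host"
            failed=1
        fi
    done
    return "$failed"
}
```

Calling `check_ssh_equivalence` as `grid` and again as `oracle` on each node would cover the same combinations as the manual session. Because batch mode cannot answer the host-key question, a host reports FAIL until its key has been accepted once interactively, as was done above.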

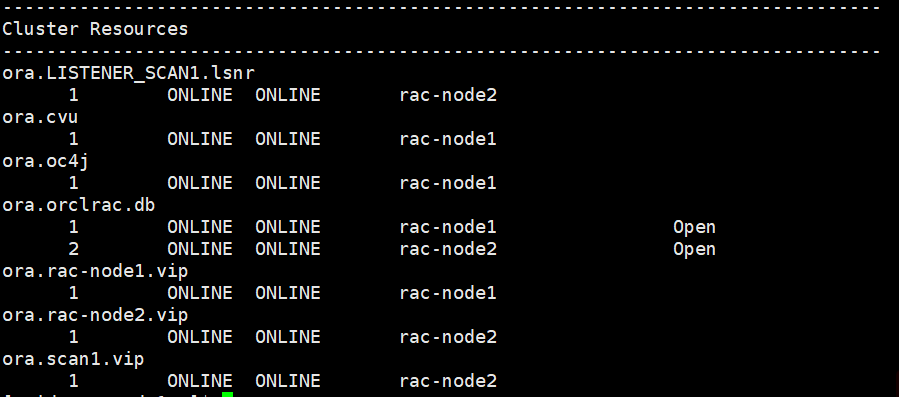

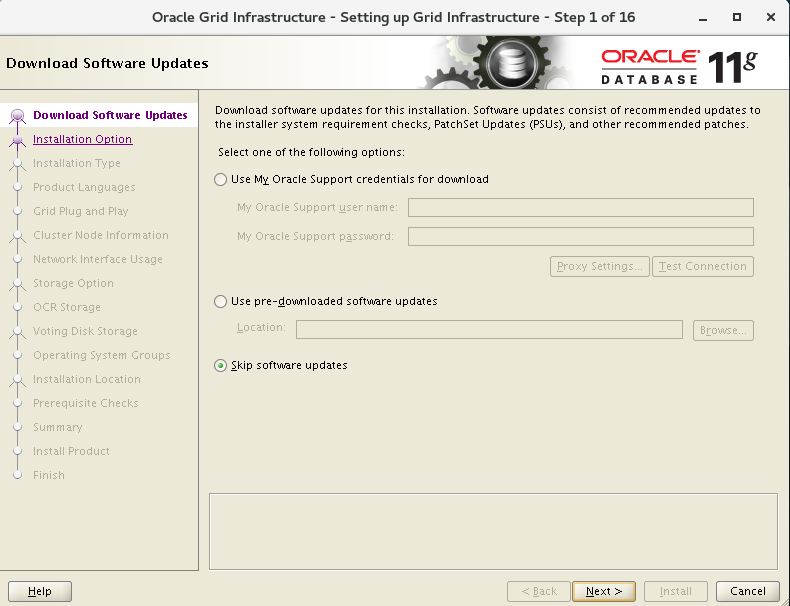

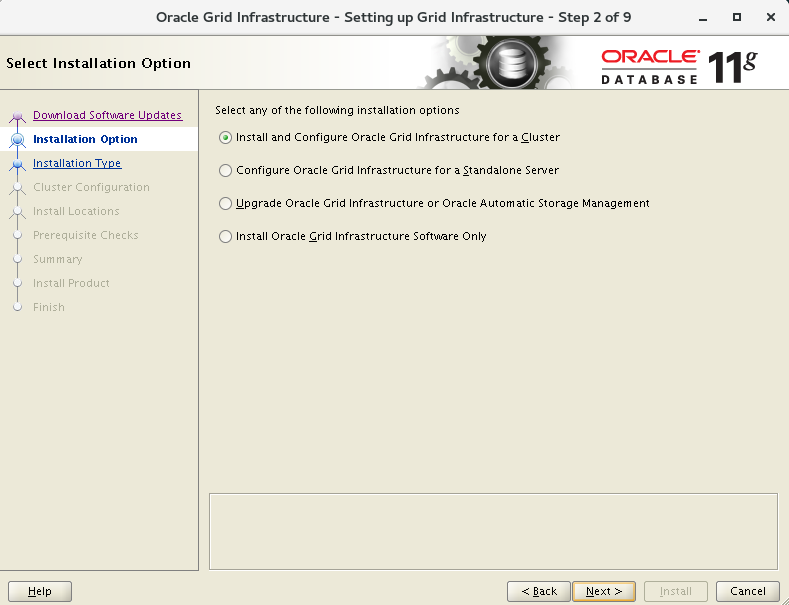

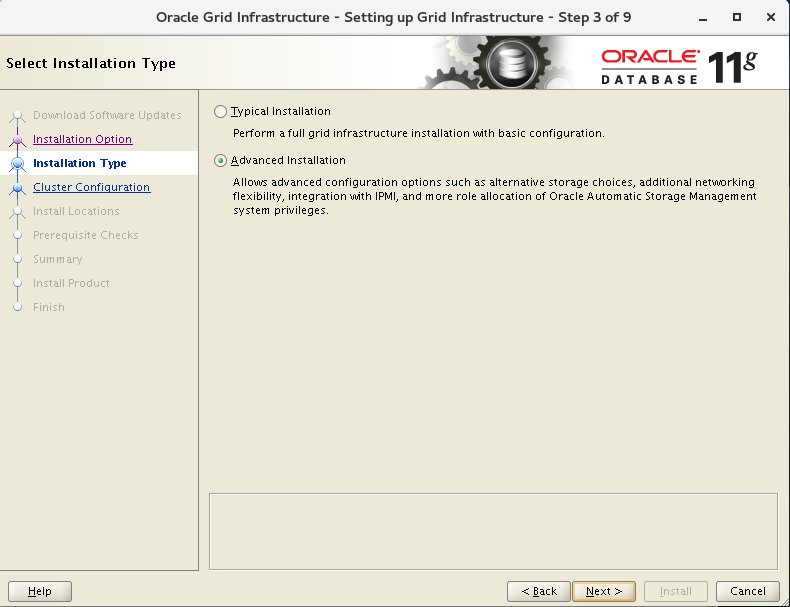

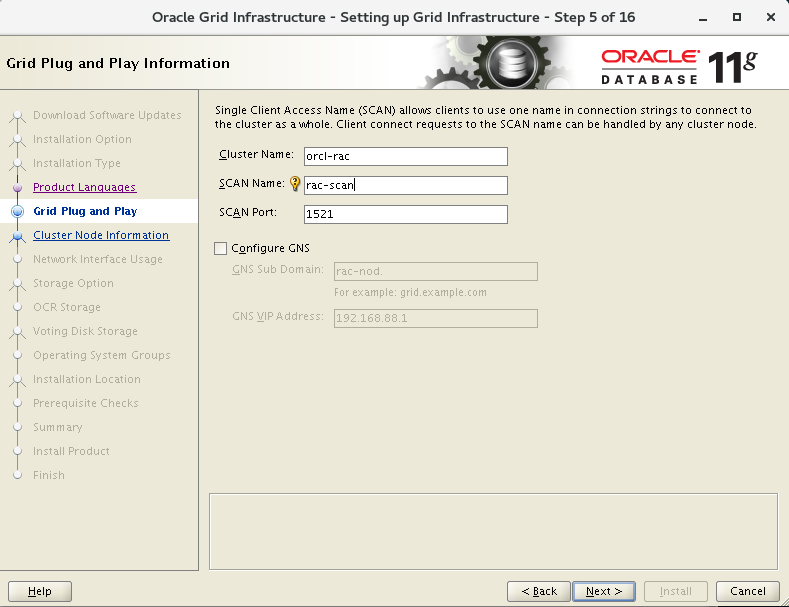

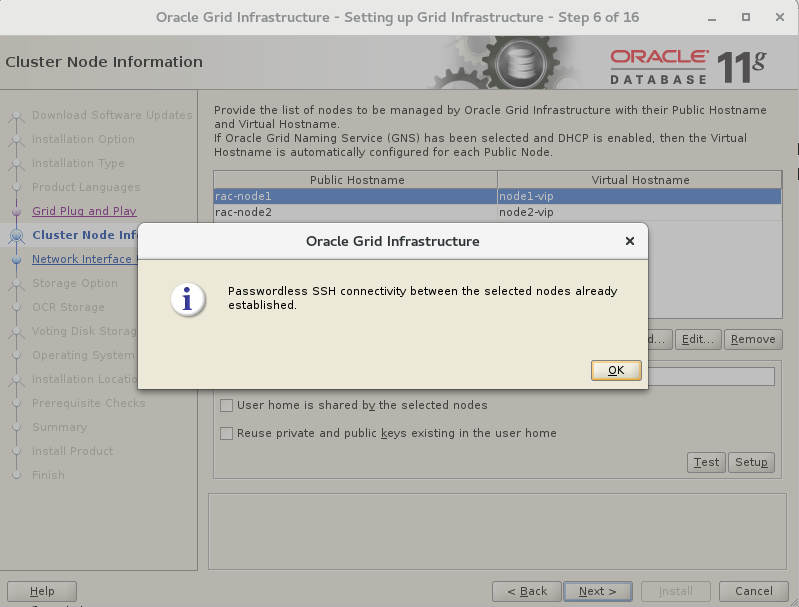

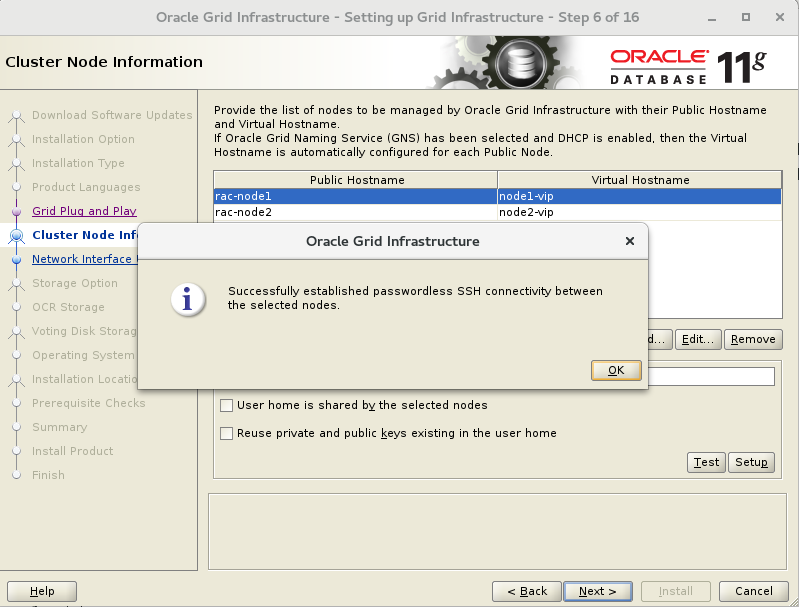

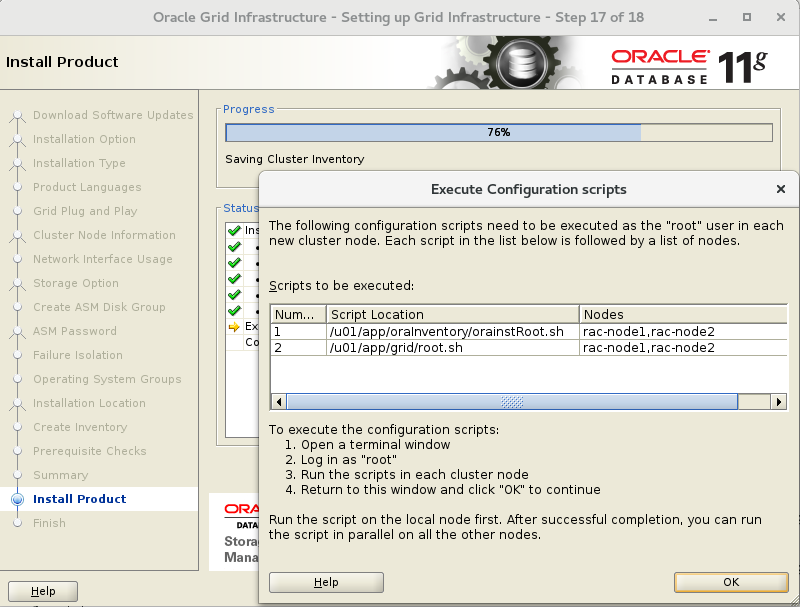

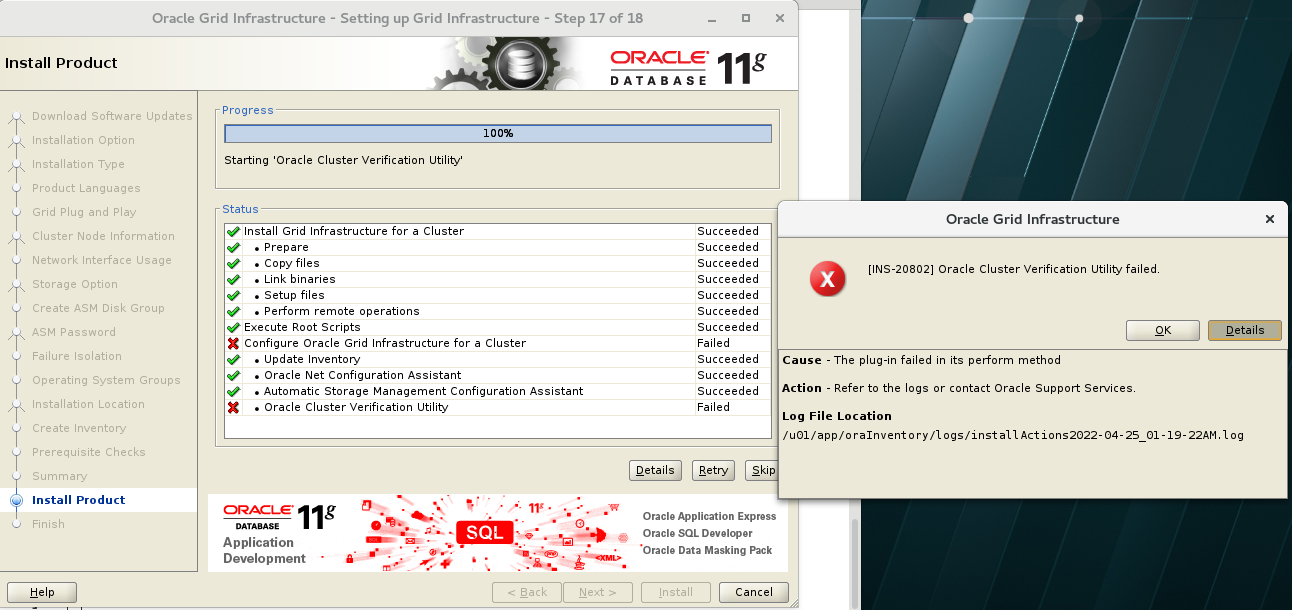

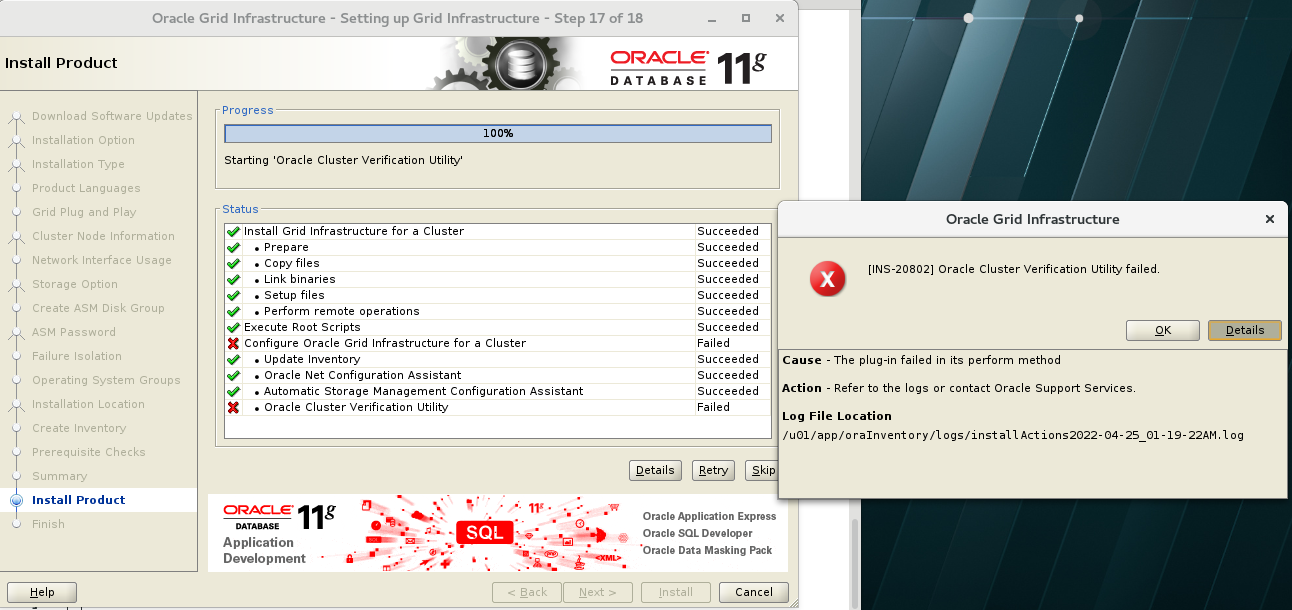

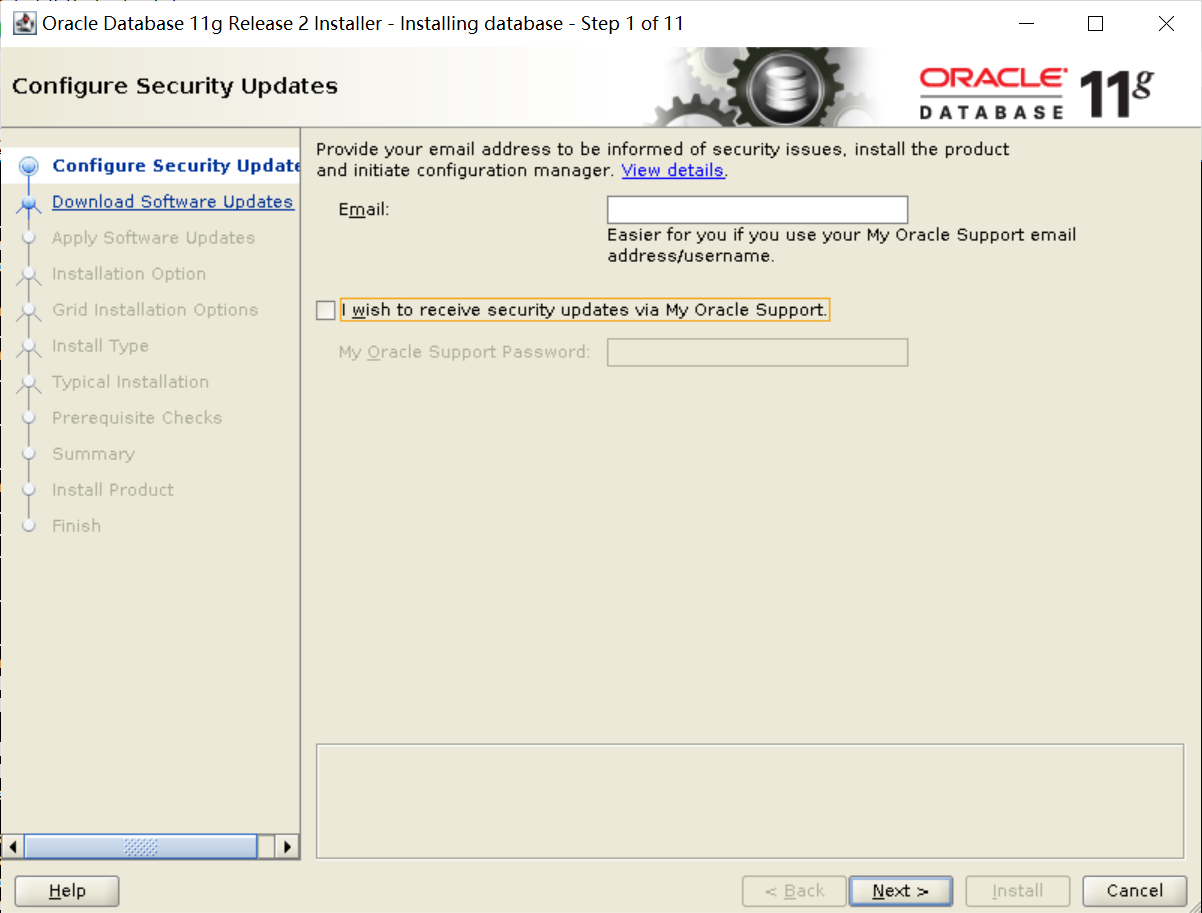

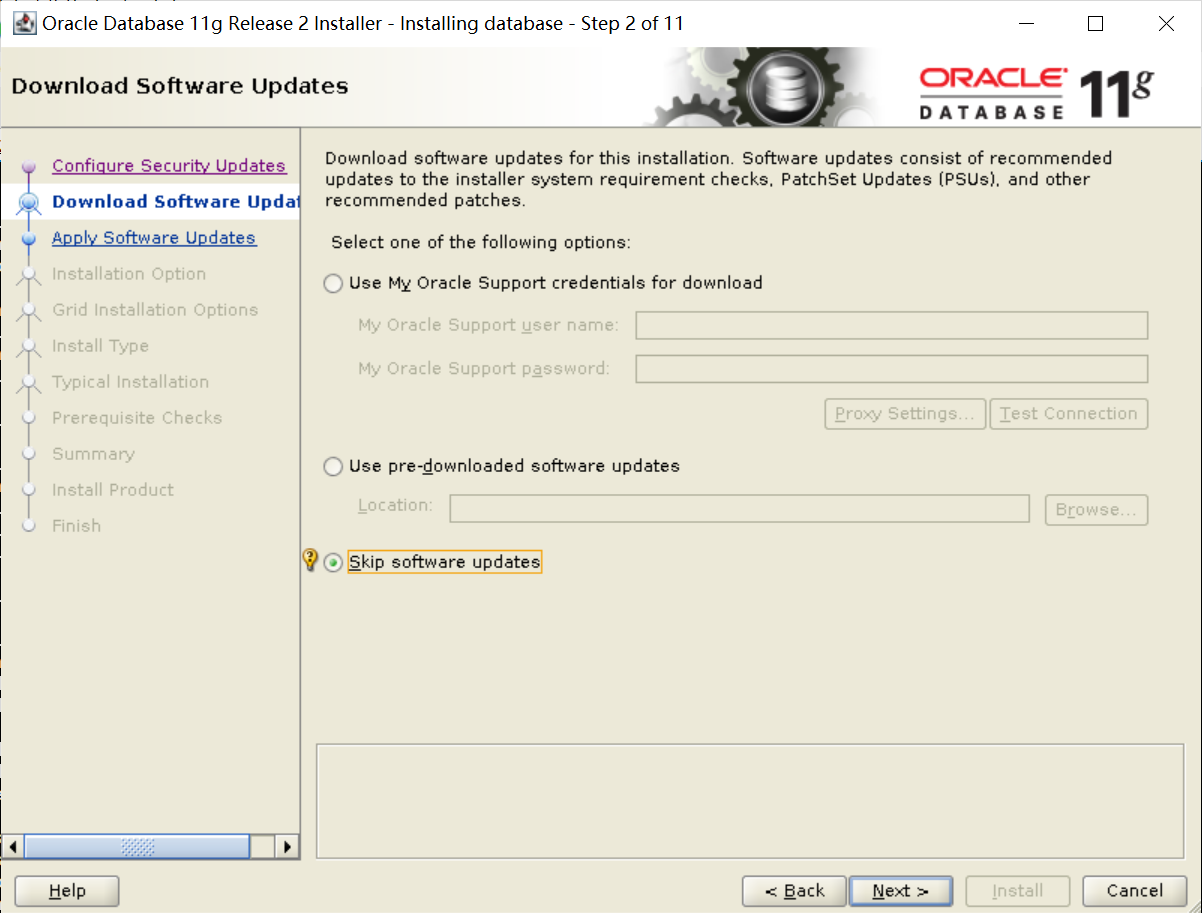

Install the GI software

[root@rac-node1 soft]# su - grid

Last login: Sun Apr 24 14:46:25 CST 2022 on pts/0

[grid@rac-node1 ~]$ cd /u01/soft/grid/

[grid@rac-node1 grid]$ ./runcluvfy.sh stage -pre crsinst -n rac-node1,rac-node2 -verbose

Check: TCP connectivity of subnet "192.168.122.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

rac-node1:192.168.122.1 rac-node2:192.168.122.1 failed

ERROR:

PRVF-7617 : Node connectivity between "rac-node1 : 192.168.122.1" and "rac-node2 : 192.168.122.1" failed

Result: TCP connectivity check failed for subnet "192.168.122.0"

Interfaces found on subnet "192.168.88.0" that are likely candidates for VIP are:

rac-node2 ens33:192.168.88.101

rac-node1 ens33:192.168.88.100

Interfaces found on subnet "192.168.112.0" that are likely candidates for a private interconnect are:

rac-node2 ens38:192.168.112.101

rac-node1 ens38:192.168.112.100

Checking subnet mask consistency...

Subnet mask consistency check passed for subnet "192.168.88.0".

Subnet mask consistency check passed for subnet "192.168.112.0".

Subnet mask consistency check passed for subnet "192.168.122.0".

Subnet mask consistency check passed.

Result: Node connectivity check failed
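The `-verbose` output is long, and the failures are easy to lose among the passing checks. If the output is saved to a file, the failing lines can be filtered out as sketched below. The function name `summarize_cluvfy` and the log path in the usage note are hypothetical. In this run, the failing 192.168.122.0 subnet has the same address, 192.168.122.1, on both nodes. That suggests a host-local bridge rather than a real cluster network, but this is an assumption, since the transcript does not show the interface name.

```shell
# Sketch: print only the failed "Result:" lines and PRVF-nnnn error lines
# from a saved cluvfy log. summarize_cluvfy is a made-up helper name.
summarize_cluvfy() {
    log_file=$1
    grep -E '^(Result: .*failed|PRVF-[0-9]+ )' "$log_file"
}
```

A possible usage: `./runcluvfy.sh stage -pre crsinst -n rac-node1,rac-node2 -verbose > /tmp/cluvfy.log`, then `summarize_cluvfy /tmp/cluvfy.log`.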

Check: Kernel parameter for "shmall"

Node Name Current Configured Required Status Comment

---------------- ------------ ------------ ------------ ------------ ------------

rac-node2 943718 943718 2097152 failed Current value incorrect. Configured value incorrect.

rac-node1 943718 943718 2097152 failed Current value incorrect. Configured value incorrect.

Result: Kernel parameter check failed for "shmall"
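The check above compares the current `kernel.shmall` (943718) with the required 2097152. The same comparison can be sketched in shell as below. The helper name `shmall_ok` is hypothetical, and the required value is the one cluvfy printed in this run, not a general recommendation.

```shell
# Required value as reported by cluvfy in the transcript (unit: pages).
REQUIRED_SHMALL=2097152

# Sketch: succeed if the given shmall value meets the requirement.
# awk is used for the comparison so very large kernel defaults do not
# overflow the shell's integer arithmetic.
shmall_ok() {
    awk -v cur="$1" -v req="$REQUIRED_SHMALL" 'BEGIN { exit !(cur + 0 >= req + 0) }'
}
```

For instance, `shmall_ok "$(cat /proc/sys/kernel/shmall)" || echo "kernel.shmall too low"` could be run on each node. The usual remedy is to set `kernel.shmall = 2097152` in `/etc/sysctl.conf` and reload with `sysctl -p` as root. Assuming 4 KiB pages, that value corresponds to 2097152 × 4096 bytes = 8 GiB of shared memory.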

Check: Package existence for "compat-libstdc++-33(x86_64)"

Node Name Available Required Status

------------ ------------------------ ------------------------ ----------

rac-node2 missing compat-libstdc++-33(x86_64)-3.2.3 failed

rac-node1 missing compat-libstdc++-33(x86_64)-3.2.3 failed

Result: Package existence check failed for "compat-libstdc++-33(x86_64)"

Check: Package existence for "elfutils-libelf-devel"

Node Name Available Required Status

------------ ------------------------ ------------------------ ----------

rac-node2 missing elfutils-libelf-devel-0.97 failed

rac-node1 missing elfutils-libelf-devel-0.97 failed

Result: Package existence check failed for "elfutils-libelf-devel"

Check: Package existence for "pdksh"

Node Name Available Required Status

------------ ------------------------ ------------------------ ----------

rac-node2 missing pdksh-5.2.14 failed

rac-node1 missing pdksh-5.2.14 failed

Result: Package existence check failed for "pdksh"
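The three missing packages reported above can be re-checked on each node without rerunning cluvfy. The following is a rough sketch: the package list is taken from the failed checks in this transcript, and `missing_packages` is a hypothetical helper name.

```shell
# Packages flagged as missing in the cluvfy output above.
REQUIRED_PKGS="compat-libstdc++-33 elfutils-libelf-devel pdksh"

# Sketch: print the name of every required package that rpm does not
# report as installed.
missing_packages() {
    for pkg in $REQUIRED_PKGS; do
        rpm -q "$pkg" >/dev/null 2>&1 || echo "$pkg"
    done
}
```

Running `missing_packages` as any user lists what is still to be installed; empty output means all three are present. `pdksh` may not be packaged for newer distributions. Whether `ksh` is an acceptable substitute should be confirmed against Oracle's documentation for the release in use.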

Checking the file "/etc/resolv.conf" to make sure only one of domain and search entries is defined

File "/etc/resolv.conf" does not have both domain and search entries defined

Checking if domain entry in file "/etc/resolv.conf" is consistent across the nodes...

domain entry in file "/etc/resolv.conf" is consistent across nodes

Checking if search entry in file "/etc/resolv.conf" is consistent across the nodes...

search entry in file "/etc/resolv.conf" is consistent across nodes

Checking DNS response time for an unreachable node

Node Name Status

------------------------------------ ------------------------

rac-node2 failed

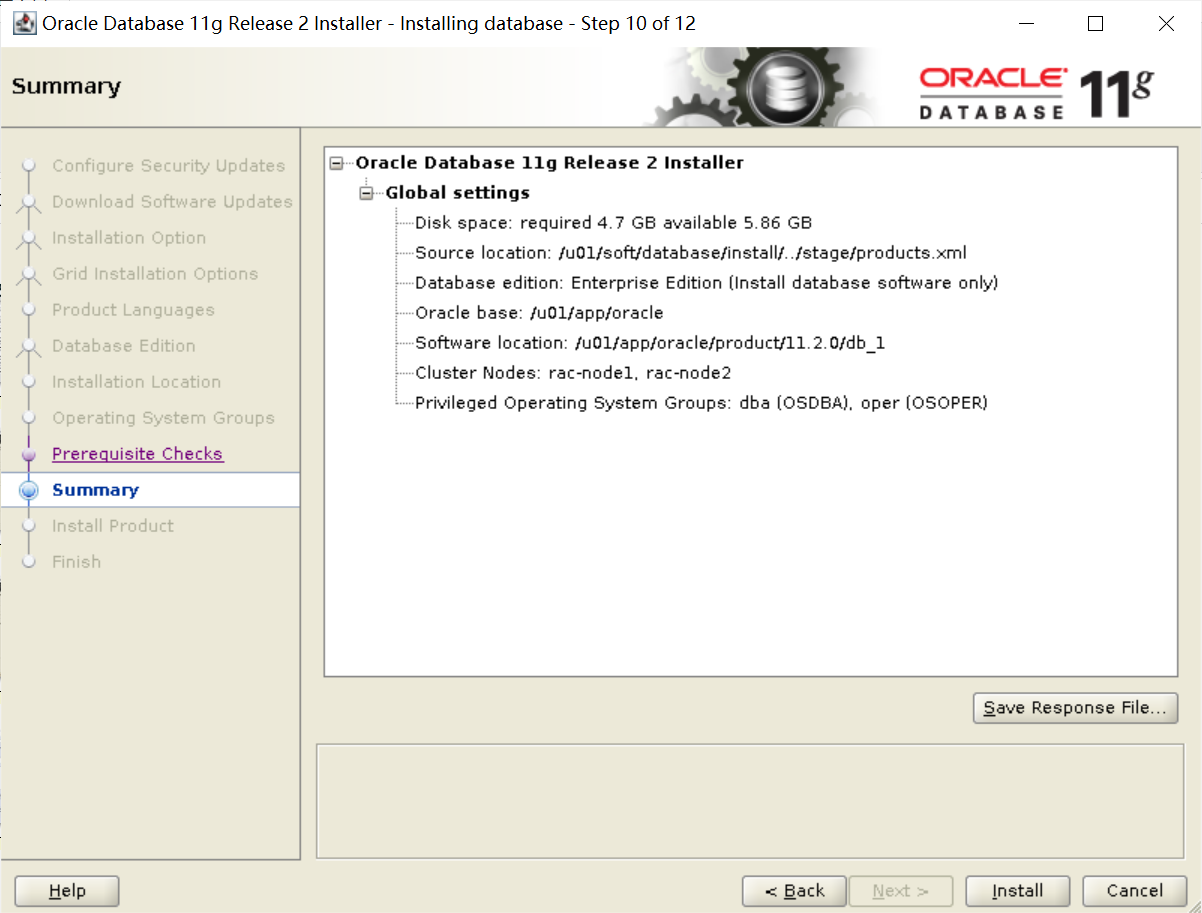

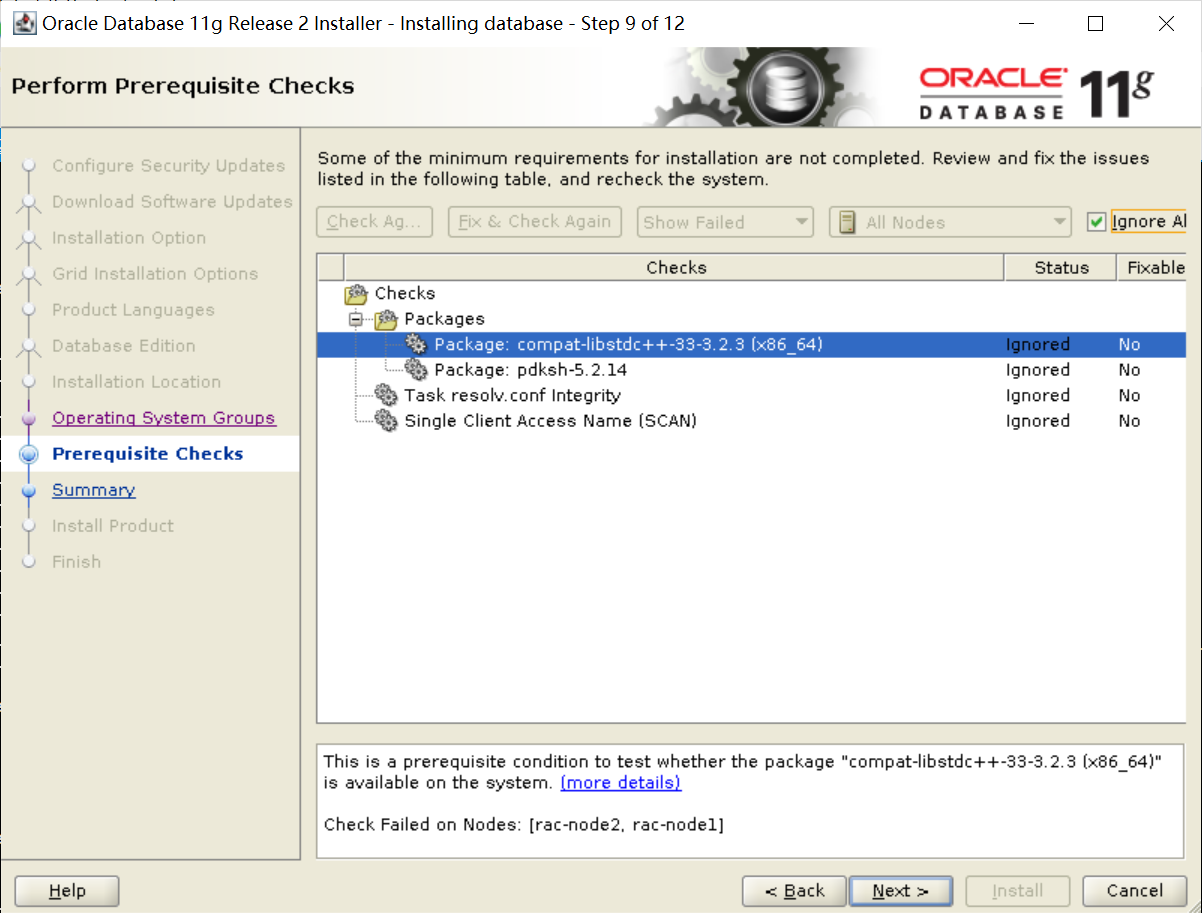

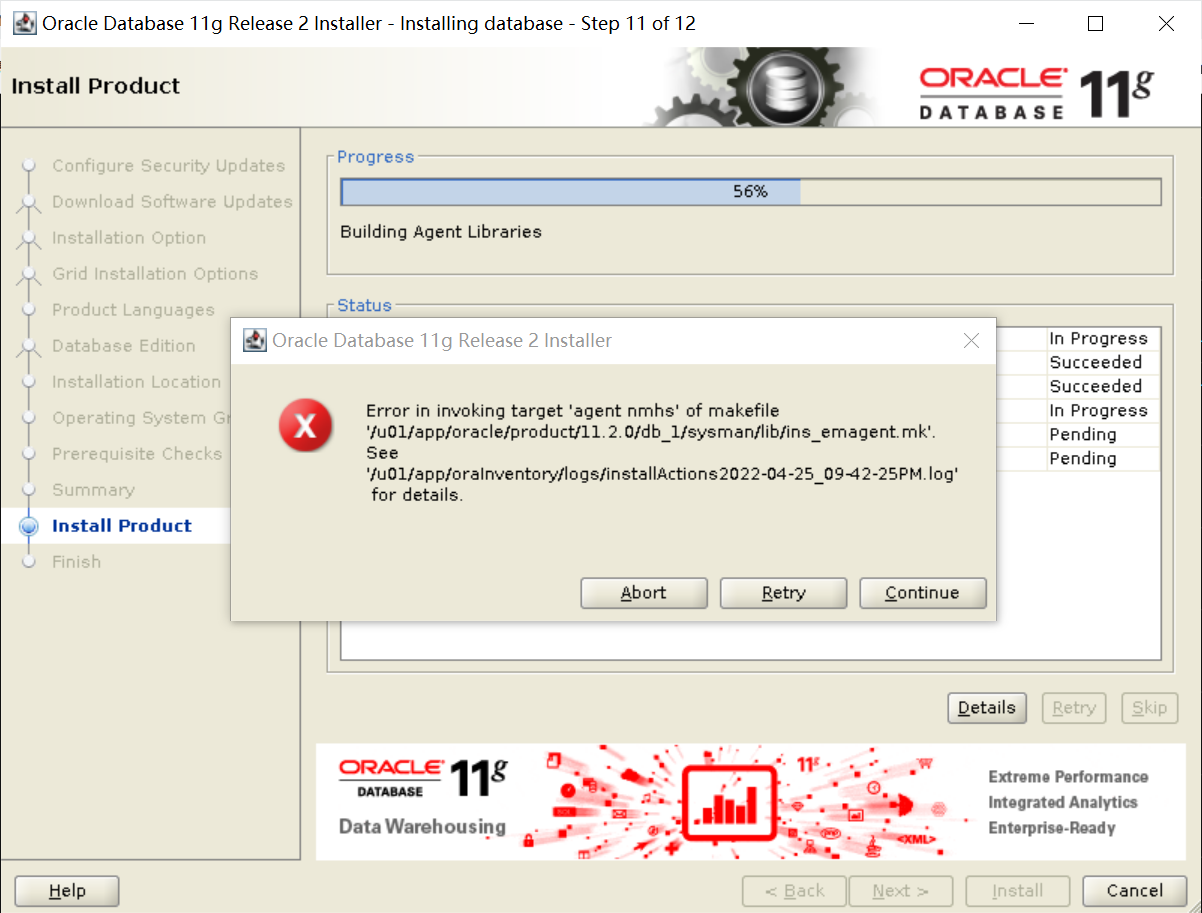

rac-node1 failed