Workflows & Pipelines

A breath of fresh air with Apache Airflow

Florian Dambrine - Principal Engineer


> Agenda

- General concepts
  - What is Airflow?
  - Airflow Executors
  - Airflow 101
  - Airflow Operators
- Airflow Deployments
  - Airflow as a service
  - MLE Airflow Infrastructure

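> What is Airflow?

Apache Airflow is a platform to programmatically author, schedule, and monitor workflows. Typical uses: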

- ETL

- Machine Learning Jobs

- Cron replacement (high availability, automatic catch-up of missed runs)
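Cron fires only at the next matching tick, so runs missed while the host was down are simply lost. Airflow's scheduler can instead create a run for every schedule interval missed since `start_date` (the catch-up behavior, controlled by the DAG's `catchup` flag). The plain-Python sketch below uses a hypothetical `missed_runs` helper and assumes Airflow's classic interval semantics, where the run covering `[t, t + interval)` is triggered once that interval has ended; it is an illustration, not the scheduler's actual code.

```python
from datetime import datetime, timedelta


def missed_runs(start_date, last_run, now, interval):
    """Return the logical dates of every interval that fully elapsed after last_run.

    Simplified Airflow-style semantics: the run for [t, t + interval)
    is only due once t + interval has passed.
    """
    # Resume right after the last completed interval, or at start_date
    # if the workflow never ran.
    t = start_date if last_run is None else last_run + interval
    runs = []
    while t + interval <= now:
        runs.append(t)
        t += interval
    return runs


# Host was down for three days: every missed daily interval is caught up.
print(missed_runs(datetime(2020, 1, 1), None, datetime(2020, 1, 4, 0, 30), timedelta(days=1)))
```

A cron-style scheduler would only run the next tick here; the catch-up approach replays the three elapsed days in order.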

> Airflow use cases

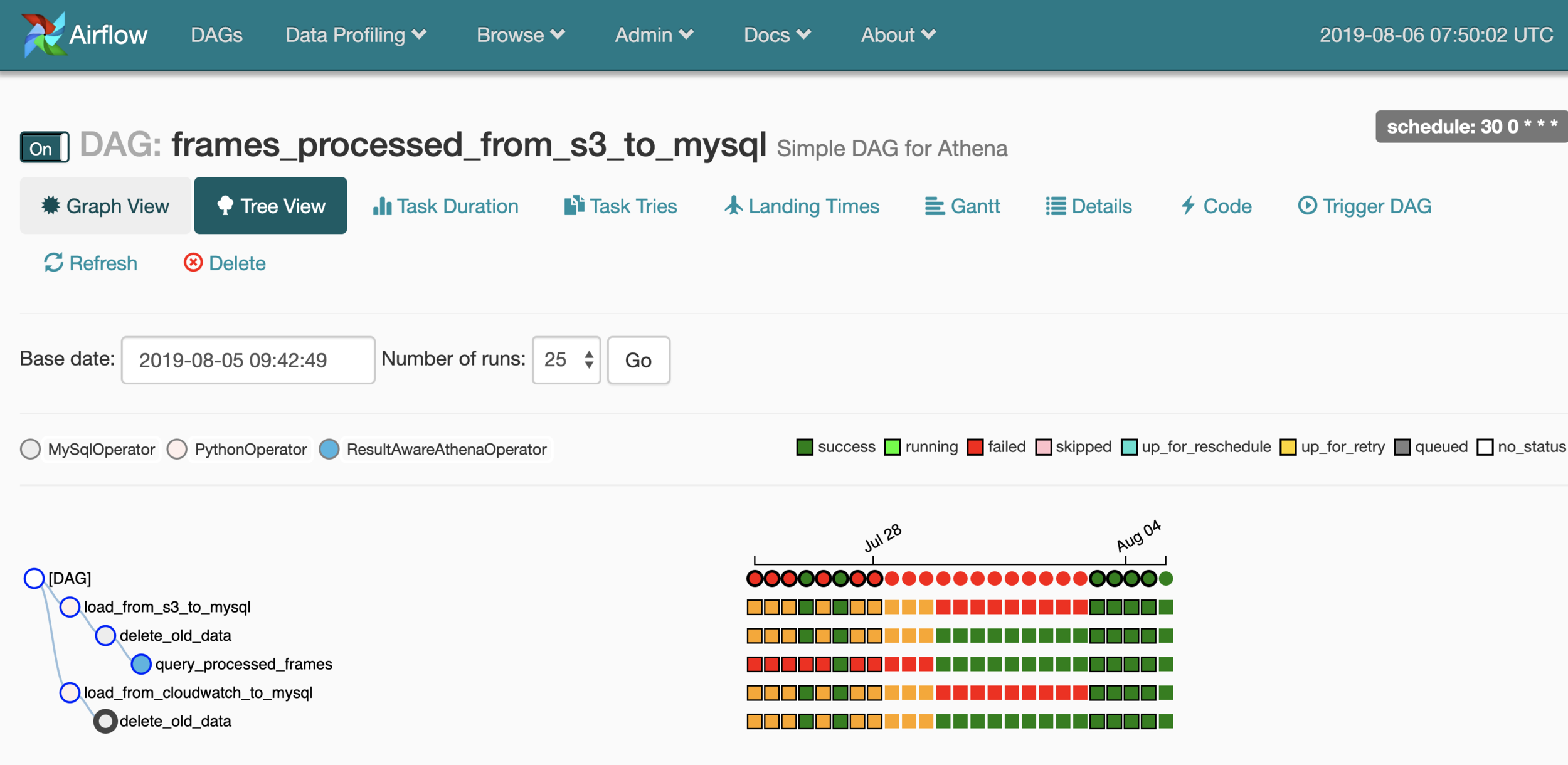

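> Airflow Example DAG (Directed Acyclic Graph)

```python
# Airflow 1.10-style import paths
# (Airflow 2 moved them to airflow.operators.bash / airflow.operators.dummy)
from airflow.models import DAG
from airflow.operators.bash_operator import BashOperator
from airflow.operators.dummy_operator import DummyOperator
from airflow.utils.dates import days_ago

# Default arguments applied to every task of the DAG
args = {
    'owner': 'Airflow',
    'start_date': days_ago(2),
}

# schedule_interval=None: the DAG only runs when triggered manually
dag = DAG(
    dag_id='hello_dag',
    default_args=args,
    schedule_interval=None,
)

# Placeholder task that does nothing
run_after = DummyOperator(
    task_id='run_after',
    dag=dag,
)

# Task that runs a shell command
run_first = BashOperator(
    task_id='run_first',
    bash_command='echo "Hello World!"',
    dag=dag,
)

# run_first must succeed before run_after starts
run_first >> run_after

if __name__ == "__main__":
    dag.cli()
```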
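The `run_first >> run_after` line relies on Python operator overloading: Airflow operators implement `>>` and `<<` so that `a >> b` calls `a.set_downstream(b)` and returns `b`, which is what makes chains like `a >> b >> c` work. The toy `Task` class below is a hypothetical stand-in (not Airflow's `BaseOperator`) that shows only this mechanism.

```python
class Task:
    """Hypothetical stand-in for an Airflow operator, tracking dependencies by task_id."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.upstream = set()
        self.downstream = set()

    def set_downstream(self, other):
        # `other` runs after `self`
        self.downstream.add(other.task_id)
        other.upstream.add(self.task_id)

    def __rshift__(self, other):
        # a >> b: b runs after a; returning `other` allows a >> b >> c
        self.set_downstream(other)
        return other

    def __lshift__(self, other):
        # a << b: a runs after b
        other.set_downstream(self)
        return other


extract, transform, load = Task("extract"), Task("transform"), Task("load")
extract >> transform >> load
print(sorted(transform.upstream), sorted(transform.downstream))  # ['extract'] ['load']
```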

> Airflow Executors

How Airflow executes tasks:

- SequentialExecutor: runs one task at a time on the Airflow instance (development only)
- LocalExecutor: runs multiple tasks in parallel on the Airflow instance (pre-forking model, vertical scaling)
- CeleryExecutor: delegates task execution to Celery workers; requires a message broker such as Redis (common in production, horizontal scaling)
- KubernetesExecutor: launches a Kubernetes pod for each task and cleans it up automatically when the task completes (dynamic horizontal scaling)
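To make the SequentialExecutor vs LocalExecutor difference concrete, here is an analogy in plain Python, not Airflow code: a scheduling loop hands every task whose upstream tasks are done to a pool of workers. With one worker it behaves like SequentialExecutor; with several, like LocalExecutor's worker processes. Assumptions: `run_dag` is a hypothetical helper, threads stand in for processes for simplicity, and Python 3.9+ is needed for `graphlib`.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter  # Python 3.9+


def run_dag(deps, run_task, max_workers=1):
    """Run tasks while respecting dependencies; return them in completion order.

    deps maps each task to the set of tasks it depends on.
    max_workers=1 mimics sequential execution, >1 runs ready tasks in parallel.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if deps contain a cycle
    finished = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        running = {}
        while sorter.is_active():
            # Submit every task whose upstream tasks have all completed.
            for task in sorter.get_ready():
                running[pool.submit(run_task, task)] = task
            done, _ = wait(running, return_when=FIRST_COMPLETED)
            for future in done:
                task = running.pop(future)
                future.result()  # re-raise if the task failed
                sorter.done(task)  # unblocks its downstream tasks
                finished.append(task)
    return finished


deps = {"b": {"a"}, "c": {"a"}, "d": {"b", "c"}}
print(run_dag(deps, lambda task: task, max_workers=4))
```

Whatever the worker count, `a` always finishes first and `d` last; only `b` and `c` may overlap.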

> Airflow 101

# XComs (Cross-Communications)

Let tasks exchange small messages. An XCom is made of a key, a value, a timestamp, and the task/DAG that produced it. Tasks push XComs and pull the ones pushed by other tasks.

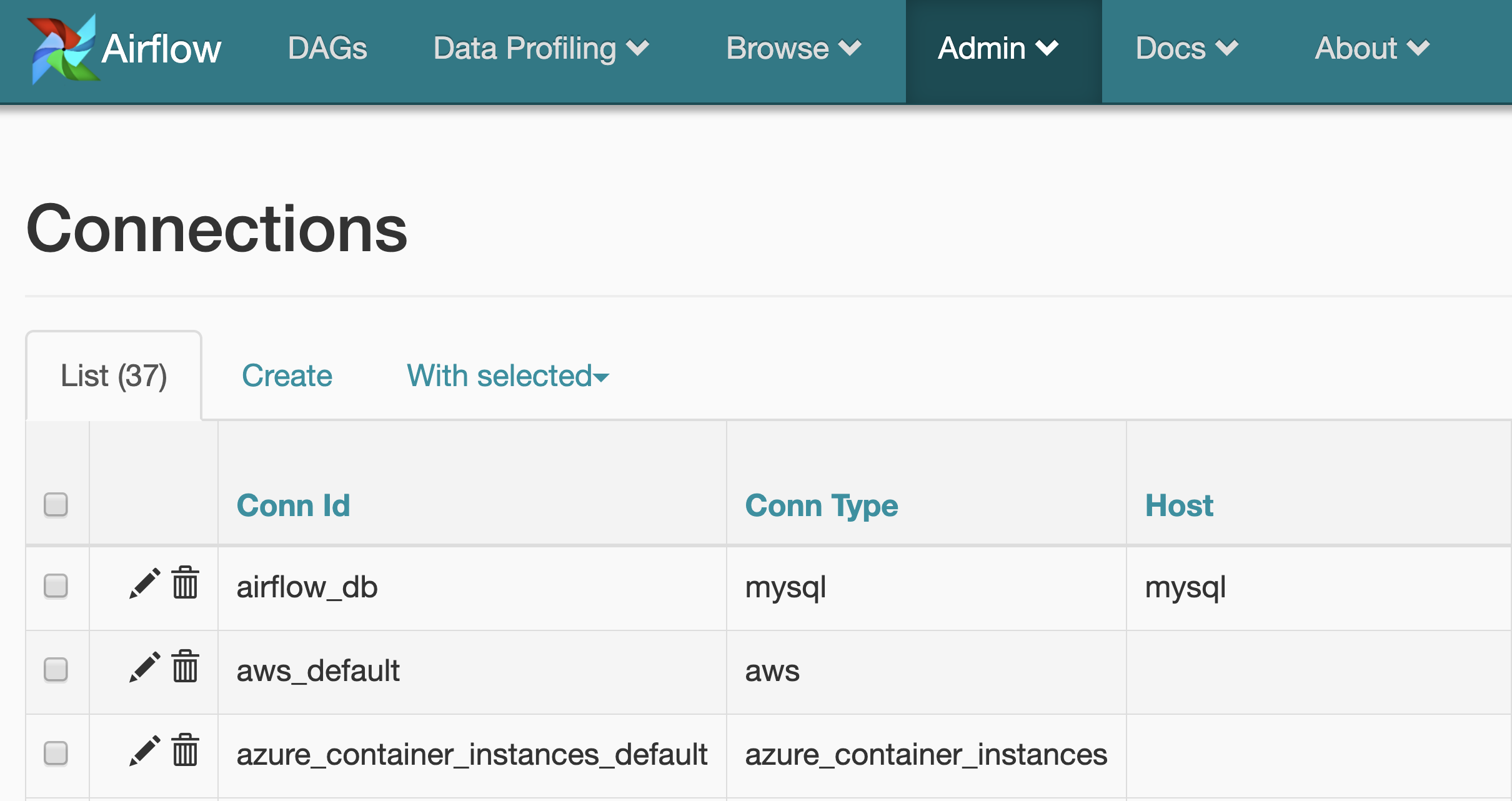
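Inside a task, the real API is `ti.xcom_push(key=..., value=...)` and `ti.xcom_pull(task_ids=..., key=...)`, and a value returned by a PythonOperator callable is pushed under the key `return_value`. The toy store below is a hypothetical in-memory model of that table, keyed by DAG, task, and key; it is not Airflow's implementation, which also scopes each XCom to a DAG run and persists it in the metadata database.

```python
from datetime import datetime, timezone


class XComStore:
    """Hypothetical in-memory XCom table: (dag_id, task_id, key) -> (value, timestamp)."""

    def __init__(self):
        self._rows = {}

    def push(self, dag_id, task_id, value, key="return_value"):
        # Record the value together with the time it was pushed.
        self._rows[(dag_id, task_id, key)] = (value, datetime.now(timezone.utc))

    def pull(self, dag_id, task_id, key="return_value"):
        # Return the stored value, or None if nothing was pushed.
        row = self._rows.get((dag_id, task_id, key))
        return None if row is None else row[0]


store = XComStore()
store.push("hello_dag", "run_first", "Hello World!")  # upstream task publishes
print(store.pull("hello_dag", "run_first"))          # downstream task reads: Hello World!
```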

# Connections

Connection information for external systems (host, port, credentials) is stored in the Airflow metadata database and managed in the UI.
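Besides the metadata database, Airflow can also read a connection from an environment variable named `AIRFLOW_CONN_<CONN_ID>` that holds a URI. The sketch below approximates how such a URI maps onto connection fields; `parse_conn_uri` and `get_conn_from_env` are hypothetical helpers, and the real parsing is done by `airflow.models.Connection`, which handles more cases (for example extras passed in the query string).

```python
import os
from urllib.parse import unquote, urlsplit


def parse_conn_uri(uri):
    """Split a connection URI into Airflow-like connection fields (simplified)."""
    parts = urlsplit(uri)
    return {
        "conn_type": parts.scheme,
        "host": parts.hostname,
        # Credentials may be percent-encoded in the URI (e.g. '@' as %40).
        "login": unquote(parts.username) if parts.username else None,
        "password": unquote(parts.password) if parts.password else None,
        "port": parts.port,
        "schema": parts.path.lstrip("/") or None,
    }


def get_conn_from_env(conn_id):
    """Look up AIRFLOW_CONN_<CONN_ID> and parse it, or return None if unset."""
    uri = os.environ.get(f"AIRFLOW_CONN_{conn_id.upper()}")
    return parse_conn_uri(uri) if uri else None


print(parse_conn_uri("postgres://airflow:s3cr%40t@db.example.com:5432/analytics"))
```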

# DAGs

In Airflow, a DAG (Directed Acyclic Graph) is a collection of all the tasks you want to run, organized in a way that reflects their relationships and dependencies.
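The "acyclic" part matters: a scheduler needs at least one order in which every task runs after all of its upstream tasks, and a cycle makes that impossible (Airflow refuses to load a DAG that contains one). A small illustration with Python's standard `graphlib` module (3.9+); `execution_order` is a hypothetical helper, not how Airflow itself resolves dependencies.

```python
from graphlib import CycleError, TopologicalSorter  # Python 3.9+


def execution_order(deps):
    """Return one valid run order for deps (task -> set of upstream tasks).

    Raises graphlib.CycleError if the graph contains a cycle, i.e. is not a DAG.
    """
    return list(TopologicalSorter(deps).static_order())


print(execution_order({"run_after": {"run_first"}}))  # ['run_first', 'run_after']

try:
    execution_order({"a": {"b"}, "b": {"a"}})  # a waits for b, b waits for a
except CycleError:
    print("not a DAG: cycle detected")
```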

# Plugins

Airflow offers a generic toolbox for working with data. Plugins let companies customize their Airflow installation to fit their own ecosystem:

- Make common code logic available to all DAGs (shared library)

- Write your own Operators

- Extend Airflow and build on top of it (e.g. an auditing tool)
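Writing your own operator usually means subclassing `BaseOperator` and implementing `execute(self, context)`. So that this sketch runs without Airflow installed, it defines a minimal stand-in `BaseOperator`; in a real project you would import it from `airflow.models` instead and make the module importable by your DAGs (for example from the plugins folder). `UppercaseOperator` is a hypothetical example.

```python
class BaseOperator:
    """Minimal stand-in for airflow.models.BaseOperator (assumption: sketch only)."""

    def __init__(self, task_id, **kwargs):
        self.task_id = task_id

    def execute(self, context):
        raise NotImplementedError


class UppercaseOperator(BaseOperator):
    """Hypothetical custom operator: upper-cases a string and returns it."""

    def __init__(self, text, **kwargs):
        super().__init__(**kwargs)
        self.text = text

    def execute(self, context):
        # In Airflow, a non-None return value of execute() is pushed to XCom by default.
        return self.text.upper()


op = UppercaseOperator(task_id="shout", text="hello")
print(op.execute(context={}))  # HELLO
```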

# Operators

While DAGs describe how to run a workflow, Operators determine what actually gets done. An operator describes a single task in a workflow.

- BashOperator: executes a bash command
- PythonOperator: calls an arbitrary Python function
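At their core, these two operators are thin wrappers. The sketch approximates them in plain Python: the hypothetical `run_bash` runs a command through `bash -c` and keeps the last line of standard output (what BashOperator pushes to XCom when XCom pushing is enabled), and the hypothetical `run_python` calls a function with positional and keyword arguments, mirroring PythonOperator's `op_args` and `op_kwargs` parameters. Real operators also handle templating, environment variables, and logging, which are left out; `bash` must be on the PATH.

```python
import subprocess


def run_bash(bash_command):
    """Roughly what BashOperator does: run in bash, fail on non-zero exit, return last stdout line."""
    result = subprocess.run(
        ["bash", "-c", bash_command],
        capture_output=True,
        text=True,
        check=True,  # raises CalledProcessError on a non-zero exit code
    )
    lines = result.stdout.splitlines()
    return lines[-1] if lines else None


def run_python(python_callable, op_args=(), op_kwargs=None):
    """Roughly what PythonOperator does: call the function and return its result."""
    return python_callable(*op_args, **(op_kwargs or {}))


print(run_bash('echo "Hello World!"'))                              # Hello World!
print(run_python(lambda x, y=0: x + y, op_args=(1,), op_kwargs={"y": 2}))  # 3
```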

> Airflow Operators

Focus on your business logic and let operators glue things together:

- SQSPublishOperator: sends a message to Amazon SQS
- DruidOperator: submits tasks to Druid
- DockerOperator: executes a command inside a Docker container
- JiraOperator: performs actions on Jira
- SlackWebhookOperator: sends a notification to Slack
- DatabricksSubmitRunOperator: submits a Spark job to Databricks
- S3FileTransformOperator: downloads a file from S3, transforms it locally, and uploads the result back to S3
- SparkSqlOperator: runs Spark SQL queries
- S3ToRedshiftOperator: loads files from S3 into Redshift
- MySqlOperator: executes a SQL query against MySQL

> Airflow as a service

- GCP: Airflow as a service (Cloud Composer, running on Kubernetes)
  - IAM role integration
  - No-Ops (almost...)
- Astronomer (Airflow committers)
  - Deployment in Astronomer Cloud
  - On-prem Kubernetes cluster
- MLE Airflow
  - A perfect project for the MLE team to learn and adopt Kubernetes
  - ML projects are Kubernetes-centric (Kubeflow / MLflow)
  - Built to be easily migrated to a hosted service if needed
  - Stateful components rely on managed services (RDS)
  - Fully integrated with IAM roles / scoped permissions

> Airflow Infrastructure

> Airflow Demo

Thanks!

Ready to jump into Airflow now?