CS6015: Linear Algebra and Random Processes

Lecture 22: Singular Value Decomposition (SVD)

Learning Objectives

What is SVD?

Why do we care about SVD?

Recap

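Goal: compute \(A^k\mathbf{v}\) for an \(n \times n\) matrix \(A\)

Direct route: \(\mathbf{v} \rightarrow A^k\mathbf{v}\) by first computing \(A^k\), cost \(O(kn^3)\)

Eigenbasis route:

A^{k}\mathbf{v} = S\Lambda^{k}S^{-1}\mathbf{v}

Translate to the eigenbasis: \([\mathbf{v}]_S = S^{-1}\mathbf{v}\), cost \(O(n^2)\)

Apply \(\Lambda^k\) to get \([A^k\mathbf{v}]_S\), cost \(O(nk)\)

Translate back to the standard basis with \(S\), cost \(O(n^2)\)

Total: \(O(n^2 + nk + n^2)\) + the cost of computing the eigenvalues and eigenvectors

(diagonalisation leads to computational efficiency)

EVD/Diagonalization/Eigenbasis is useful when the same matrix \(A\) operates on many vectors repeatedly (i.e., if we want to apply \(A^k\) to many vectors)

(this one-time cost is then justified in the long run)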
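The two routes in the recap can be compared numerically. The following is a minimal sketch, assuming NumPy and a small random symmetric matrix (chosen only so that the matrix is guaranteed to be diagonalisable); the sizes `n` and `k` are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
n, k = 4, 10
B = rng.standard_normal((n, n))
A = B + B.T                      # symmetric, hence diagonalisable (illustrative choice)
v = rng.standard_normal(n)

# One-time cost: eigendecomposition A = S diag(lam) S^{-1}
lam, S = np.linalg.eig(A)
S_inv = np.linalg.inv(S)

# Eigenbasis route: three cheap steps per vector
coords = S_inv @ v               # translate to the eigenbasis
coords = (lam ** k) * coords     # apply Lambda^k (diagonal, so elementwise)
via_evd = S @ coords             # translate back to the standard basis

# Direct route, used here only as a reference answer
direct = np.linalg.matrix_power(A, k) @ v

print(np.allclose(via_evd, direct))
```

Once `S`, `S_inv` and `lam` are available, each further vector costs only the three steps in the middle, which is the point the recap makes about the one-time cost.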


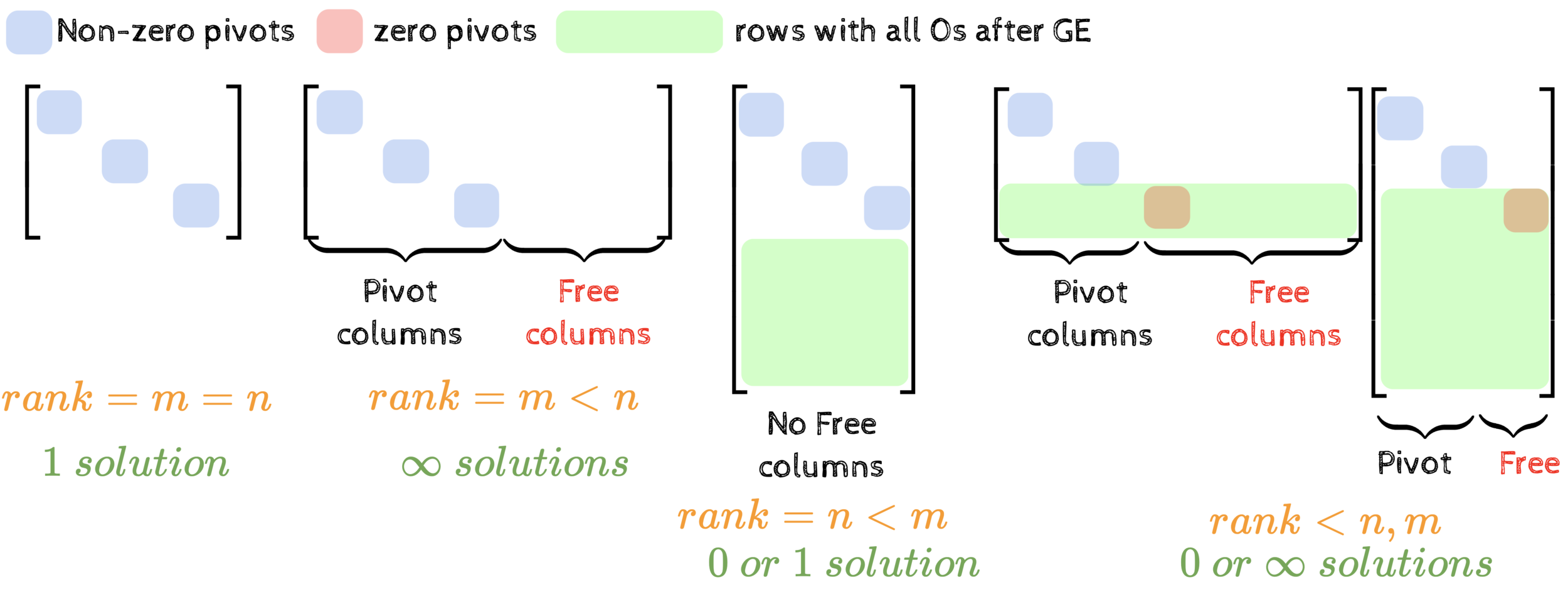

Even better for symmetric matrices

A = Q\Lambda Q^\top

(orthonormal basis)

But this is only for square matrices!

What about rectangular matrices?

Wishlist

Can we diagonalise rectangular matrices?

\underbrace{A}_{m\times n}\underbrace{\mathbf{x}}_{n \times 1} = \underbrace{U}_{m\times m}~\underbrace{\Sigma}_{m\times n}~\underbrace{V^\top}_{n\times n}\underbrace{\mathbf{x}}_{n \times 1}

\(V^\top\) (orthonormal): translates from the standard basis to the new basis

\(\Sigma\) (all off-diagonal elements are 0): the transformation becomes very simple in this basis

\(U\) (orthonormal): translates back to the standard basis

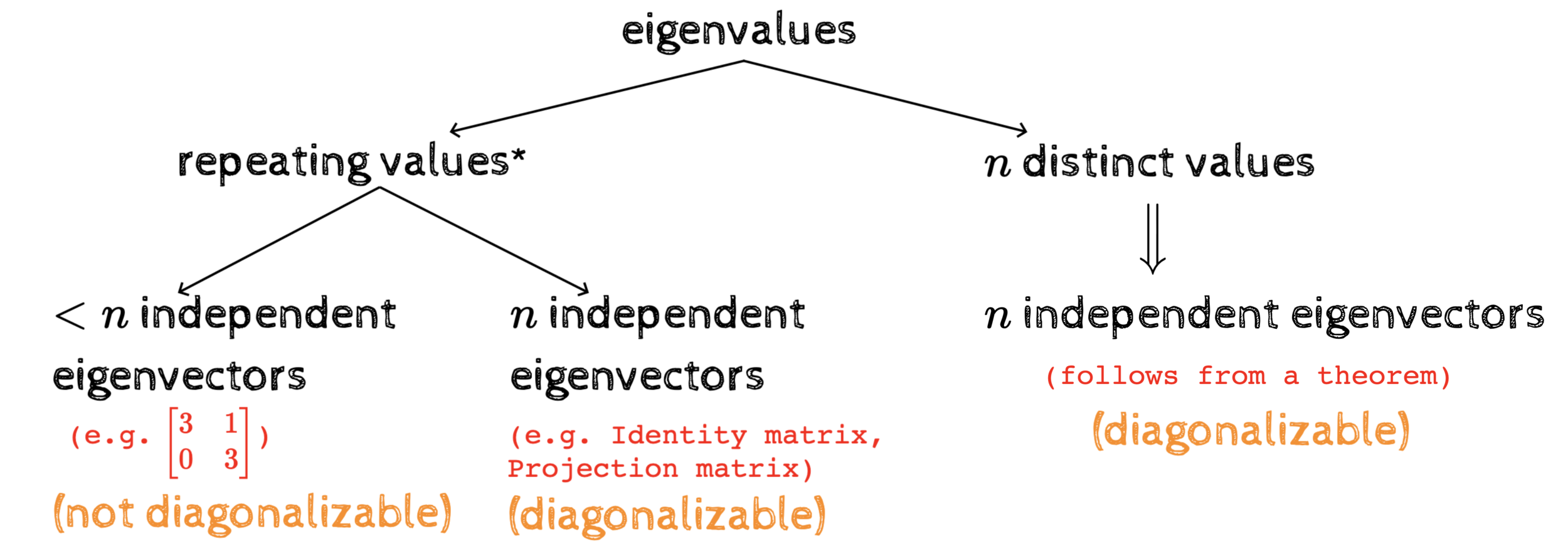

Recap: square matrices

A = S\Lambda S^{-1}

A = Q\Lambda Q^\top

(symmetric)

Yes, we can!

(true for all matrices)
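The three-step reading of \(A\mathbf{x} = U\Sigma V^\top\mathbf{x}\) can be traced on a rectangular matrix. This is a sketch assuming NumPy's `np.linalg.svd`; the \(5 \times 3\) random matrix is an arbitrary illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
m, n = 5, 3
A = rng.standard_normal((m, n))
x = rng.standard_normal(n)

# full_matrices=True returns U (m x m) and V^T (n x n); s holds the singular values
U, s, Vt = np.linalg.svd(A, full_matrices=True)

# Build the m x n "diagonal" Sigma from the singular values
Sigma = np.zeros((m, n))
Sigma[:len(s), :len(s)] = np.diag(s)

step1 = Vt @ x         # translate x from the standard basis to the new basis
step2 = Sigma @ step1  # simple scaling in the new basis (maps R^n to R^m)
step3 = U @ step2      # translate back to the standard basis

print(U.shape, Sigma.shape, Vt.shape)
print(np.allclose(step3, A @ x))
```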

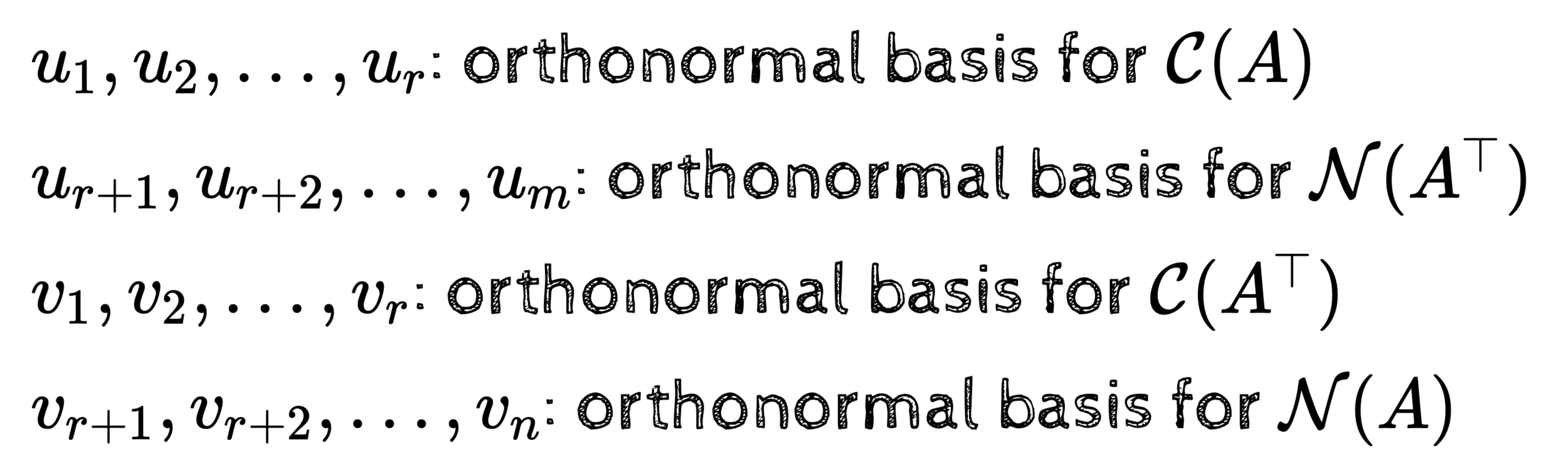

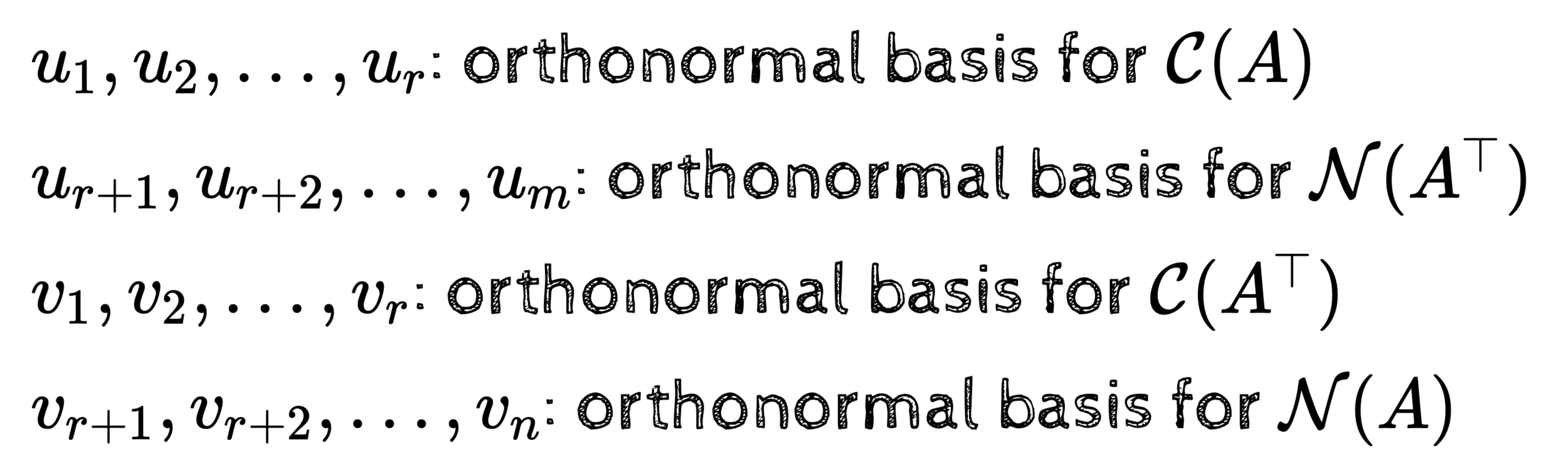

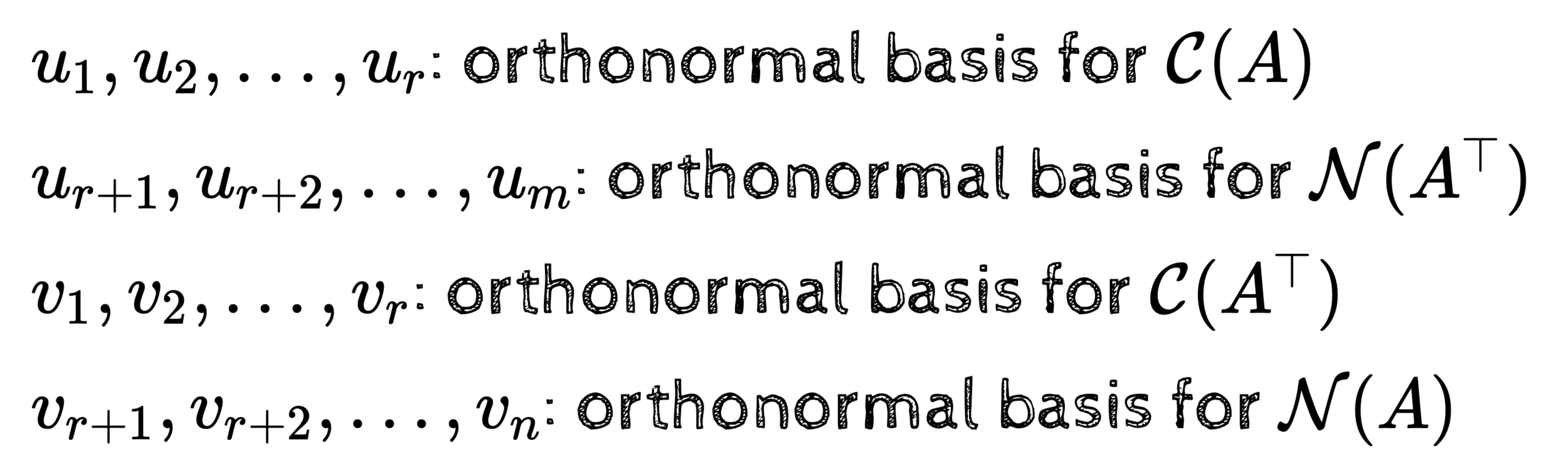

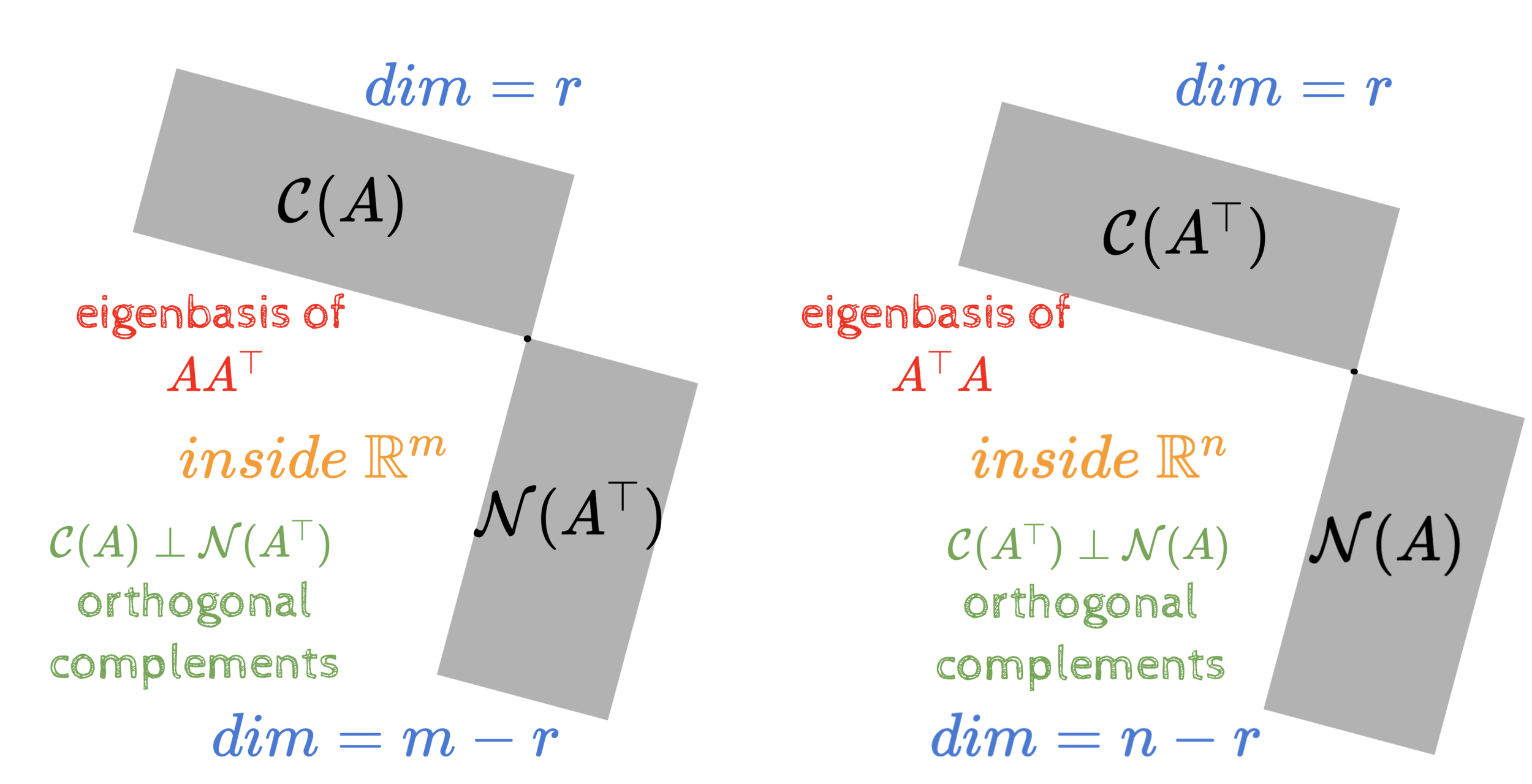

The 4 fundamental subspaces: basis

Let \(A_{m\times n}\) be a rank \(r\) matrix

\(\mathbf{u_1, u_2, \dots, u_r}\) be an orthonormal basis for \(\mathcal{C}(A)\)

\(\mathbf{u_{r+1}, u_{r+2}, \dots, u_{m}}\) be an orthonormal basis for \(\mathcal{N}(A^\top)\)

\(\mathbf{v_1, v_2, \dots, v_r}\) be an orthonormal basis for \(\mathcal{C}(A^\top)\)

\(\mathbf{v_{r+1}, v_{r+2}, \dots, v_{n}}\) be an orthonormal basis for \(\mathcal{N}(A)\)


Fact 1: Such bases always exist

Start with any basis for a subspace

Use Gram-Schmidt to convert it to an orthonormal basis

Fact 2: \(\mathbf{u_1, u_2, \dots, u_r, u_{r+1}, \dots, u_m}\) are orthonormal

The basis vectors of \( \mathcal{C}(A) \), \(\mathbf{u_1, \dots, u_r}\), are orthonormal (given)

The basis vectors of \( \mathcal{N}(A^\top) \), \(\mathbf{u_{r+1}, \dots, u_m}\), are orthonormal (given)

Every basis vector of \( \mathcal{N}(A^\top) \) is orthogonal to every basis vector of \( \mathcal{C}(A) \)

\(\because \mathcal{N}(A^\top) \perp \mathcal{C}(A) \)

\(\mathbf{v_1, v_2, \dots, v_r, v_{r+1}, \dots, v_n}\) are orthonormal

(same argument, using \(\mathcal{N}(A) \perp \mathcal{C}(A^\top)\))
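Fact 1 relies on Gram-Schmidt, and Fact 2 on the orthogonality of \(\mathcal{N}(A^\top)\) and \(\mathcal{C}(A)\). The sketch below illustrates both; the helper name `gram_schmidt`, the \(3 \times 2\) matrix and the vector `y` are hypothetical choices made for this example.

```python
import numpy as np

def gram_schmidt(vectors, tol=1e-12):
    """Orthonormal basis (as columns) for the span of the columns of `vectors`."""
    basis = []
    for v in vectors.T:
        w = v.astype(float)          # astype returns a copy, so the input is untouched
        for q in basis:
            w = w - (q @ w) * q      # remove the component along each earlier direction
        norm = np.linalg.norm(w)
        if norm > tol:               # skip vectors that are (numerically) dependent
            basis.append(w / norm)
    return np.column_stack(basis)

A = np.array([[1.0, 2.0],
              [0.0, 1.0],
              [1.0, 0.0]])
Q = gram_schmidt(A)                  # orthonormal basis for C(A)

y = np.array([1.0, -2.0, -1.0])      # chosen by hand so that A^T y = 0

print(np.allclose(Q.T @ Q, np.eye(2)))   # columns of Q are orthonormal
print(np.allclose(A.T @ y, 0.0))         # y lies in N(A^T)
print(np.allclose(Q.T @ y, 0.0))         # y is orthogonal to the basis of C(A)
```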

In addition, we want

A\mathbf{v_i} = \sigma_i\mathbf{u_i}~~\forall i\leq r

\therefore A
\begin{bmatrix}
\uparrow & & \uparrow \\
\mathbf{v}_1 & \dots & \mathbf{v}_r \\
\downarrow & & \downarrow
\end{bmatrix}
=
\begin{bmatrix}
\uparrow & & \uparrow \\
\mathbf{u}_1 & \dots & \mathbf{u}_r \\
\downarrow & & \downarrow
\end{bmatrix}
\begin{bmatrix}
\sigma_1 & \dots & 0 \\
\vdots & \ddots & \vdots \\
0 & \dots & \sigma_r
\end{bmatrix}


Finding \(U\) and \(V\)

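\underbrace{A}_{m\times n}~\underbrace{V_r}_{n \times r} = \underbrace{U_r}_{m \times r}~\underbrace{\Sigma_r}_{r \times r}

(\(\Sigma_r\) is the \(r \times r\) diagonal matrix of \(\sigma_1, \dots, \sigma_r\))

(we don't know what such \(V_r\) and \(U_r\) are - we are just hoping that they exist)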

If \(V_r\) and \(U_r\) exist then \(V\) and \(U\) also exist

\therefore A
\begin{bmatrix}
\uparrow & & \uparrow & \uparrow & & \uparrow \\
\mathbf{v}_1 & \dots & \mathbf{v}_r & \mathbf{v}_{r+1} & \dots & \mathbf{v}_n \\
\downarrow & & \downarrow & \downarrow & & \downarrow
\end{bmatrix}
=
\begin{bmatrix}
\uparrow & & \uparrow & \uparrow & & \uparrow \\
\mathbf{u}_1 & \dots & \mathbf{u}_r & \mathbf{u}_{r+1} & \dots & \mathbf{u}_m \\
\downarrow & & \downarrow & \downarrow & & \downarrow
\end{bmatrix}
\begin{bmatrix}
\sigma_1 & & & 0 & \dots & 0 \\
 & \ddots & & \vdots & & \vdots \\
 & & \sigma_r & 0 & \dots & 0 \\
0 & \dots & 0 & 0 & \dots & 0 \\
\vdots & & \vdots & \vdots & & \vdots \\
0 & \dots & 0 & 0 & \dots & 0
\end{bmatrix}

Left-hand side: \(\mathbf{v_{r+1}, \dots, v_n}\) lie in the null space of \(A\), so the first \(r\) columns of this product will be \(AV_r\) and the last \(n-r\) columns will be 0

Right-hand side: \(\Sigma\) has \(n-r\) zero columns and \(m-r\) zero rows. The last \(m-r\) columns of \(U\) will not contribute, and hence the first \(r\) columns will be the same as \(U_r\Sigma_r\) (where \(\Sigma_r\) is the \(r \times r\) diagonal block of \(\Sigma\)) and the last \(n-r\) columns will be 0

\underbrace{A}_{m\times n}~\underbrace{V}_{n \times n} = \underbrace{U}_{m \times m}~\underbrace{\Sigma}_{m \times n}

Finding \(U\) and \(V\)

AV=U\Sigma

A=U\Sigma V^\top

A^\top A=(U\Sigma V^\top)^\top U\Sigma V^\top

A^\top A=V\Sigma^\top U^\top U\Sigma V^\top

A^\top A=V\Sigma^\top\Sigma V^\top

(\(\Sigma^\top\Sigma\) is diagonal; \(V\) and \(V^\top\) are orthogonal)

\(V\) is thus the matrix of the \(n\) eigenvectors of \(A^\top A\)

we know that these always exist because \(A^\top A\) is a symmetric matrix

AV=U\Sigma

A=U\Sigma V^\top

AA^\top =U\Sigma V^\top(U\Sigma V^\top)^\top

AA^\top =U\Sigma V^\top V\Sigma^\top U^\top

AA^\top=U\Sigma\Sigma^\top U^\top

(\(\Sigma\Sigma^\top\) is diagonal; \(U\) and \(U^\top\) are orthogonal)

\(U\) is thus the matrix of the \(m\) eigenvectors of \(AA^\top \)

we know that these always exist because \(AA^\top\) is a symmetric matrix

\(\Sigma^\top\Sigma\) contains the eigenvalues of \(A^\top A \)

HW5: Prove that the non-zero eigenvalues of \(AA^\top\) and \(A^\top A\) are always equal
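The relation between the singular values and the eigenvalues of \(A^\top A\) and \(AA^\top\) can be checked numerically (this is a sanity check, not a proof for HW5). A minimal sketch, assuming NumPy and an arbitrary random \(4 \times 3\) matrix:

```python
import numpy as np

rng = np.random.default_rng(2)
A = rng.standard_normal((4, 3))

# Singular values, returned in descending order
s = np.linalg.svd(A, compute_uv=False)

# eigvalsh is for symmetric matrices and returns ascending order, so reverse it
eig_AtA = np.linalg.eigvalsh(A.T @ A)[::-1]   # 3 eigenvalues
eig_AAt = np.linalg.eigvalsh(A @ A.T)[::-1]   # 4 eigenvalues

print(np.allclose(eig_AtA, s ** 2))           # eigenvalues of A^T A are sigma^2
print(np.allclose(eig_AAt[:3], s ** 2))       # the non-zero eigenvalues of A A^T agree
print(abs(eig_AAt[3]) < 1e-10)                # the extra eigenvalue of A A^T is 0
```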

Finding \(U\) and \(V\)

\underbrace{A}_{m\times n} = \underbrace{U}_{m\times m}~\underbrace{\Sigma}_{m\times n}~\underbrace{V^\top}_{n\times n}

\(U\): eigenvectors of \(AA^\top\)

\(V^\top\): transpose of the eigenvectors of \(A^\top A\)

\(\Sigma\): square roots of the eigenvalues of \(A^\top A\) or \(AA^\top\)

This is called the Singular Value Decomposition of \(A\)

\(\because U\) and \(V\) always exist (since they are eigenvectors of the symmetric matrices \(AA^\top\) and \(A^\top A\)), the SVD of any matrix \(A\) is always possible
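A small worked example may help here. The \(2 \times 2\) matrix below is an illustrative choice, not one from the lecture; the steps use the eigenvectors of \(A^\top A\) for \(V\), the square roots of its eigenvalues for \(\Sigma\), and \(\mathbf{u_i} = A\mathbf{v_i}/\sigma_i\) (from \(A\mathbf{v_i} = \sigma_i\mathbf{u_i}\)) for \(U\).

A = \begin{bmatrix} 3 & 0 \\ 4 & 5 \end{bmatrix}, \quad A^\top A = \begin{bmatrix} 25 & 20 \\ 20 & 25 \end{bmatrix}

The eigenvalues of \(A^\top A\) are 45 and 5, so \(\sigma_1 = \sqrt{45}\) and \(\sigma_2 = \sqrt{5}\)

\mathbf{v_1} = \frac{1}{\sqrt{2}}\begin{bmatrix} 1 \\ 1 \end{bmatrix}, \quad \mathbf{v_2} = \frac{1}{\sqrt{2}}\begin{bmatrix} -1 \\ 1 \end{bmatrix}

\mathbf{u_1} = \frac{A\mathbf{v_1}}{\sigma_1} = \frac{1}{\sqrt{10}}\begin{bmatrix} 1 \\ 3 \end{bmatrix}, \quad \mathbf{u_2} = \frac{A\mathbf{v_2}}{\sigma_2} = \frac{1}{\sqrt{10}}\begin{bmatrix} -3 \\ 1 \end{bmatrix}

\sigma_1\mathbf{u_1}\mathbf{v_1}^\top + \sigma_2\mathbf{u_2}\mathbf{v_2}^\top = \frac{3}{2}\begin{bmatrix} 1 & 1 \\ 3 & 3 \end{bmatrix} + \frac{1}{2}\begin{bmatrix} 3 & -3 \\ -1 & 1 \end{bmatrix} = \begin{bmatrix} 3 & 0 \\ 4 & 5 \end{bmatrix} = A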

Some questions

\therefore A
\begin{bmatrix}
\uparrow & & \uparrow & \uparrow & & \uparrow \\
\mathbf{v}_1 & \dots & \mathbf{v}_r & \mathbf{v}_{r+1} & \dots & \mathbf{v}_n \\
\downarrow & & \downarrow & \downarrow & & \downarrow
\end{bmatrix}
=
\begin{bmatrix}
\uparrow & & \uparrow & \uparrow & & \uparrow \\
\mathbf{u}_1 & \dots & \mathbf{u}_r & \mathbf{u}_{r+1} & \dots & \mathbf{u}_m \\
\downarrow & & \downarrow & \downarrow & & \downarrow
\end{bmatrix}
\begin{bmatrix}
\sigma_1 & & & 0 & \dots & 0 \\
 & \ddots & & \vdots & & \vdots \\
 & & \sigma_r & 0 & \dots & 0 \\
0 & \dots & 0 & 0 & \dots & 0 \\
\vdots & & \vdots & \vdots & & \vdots \\
0 & \dots & 0 & 0 & \dots & 0
\end{bmatrix}

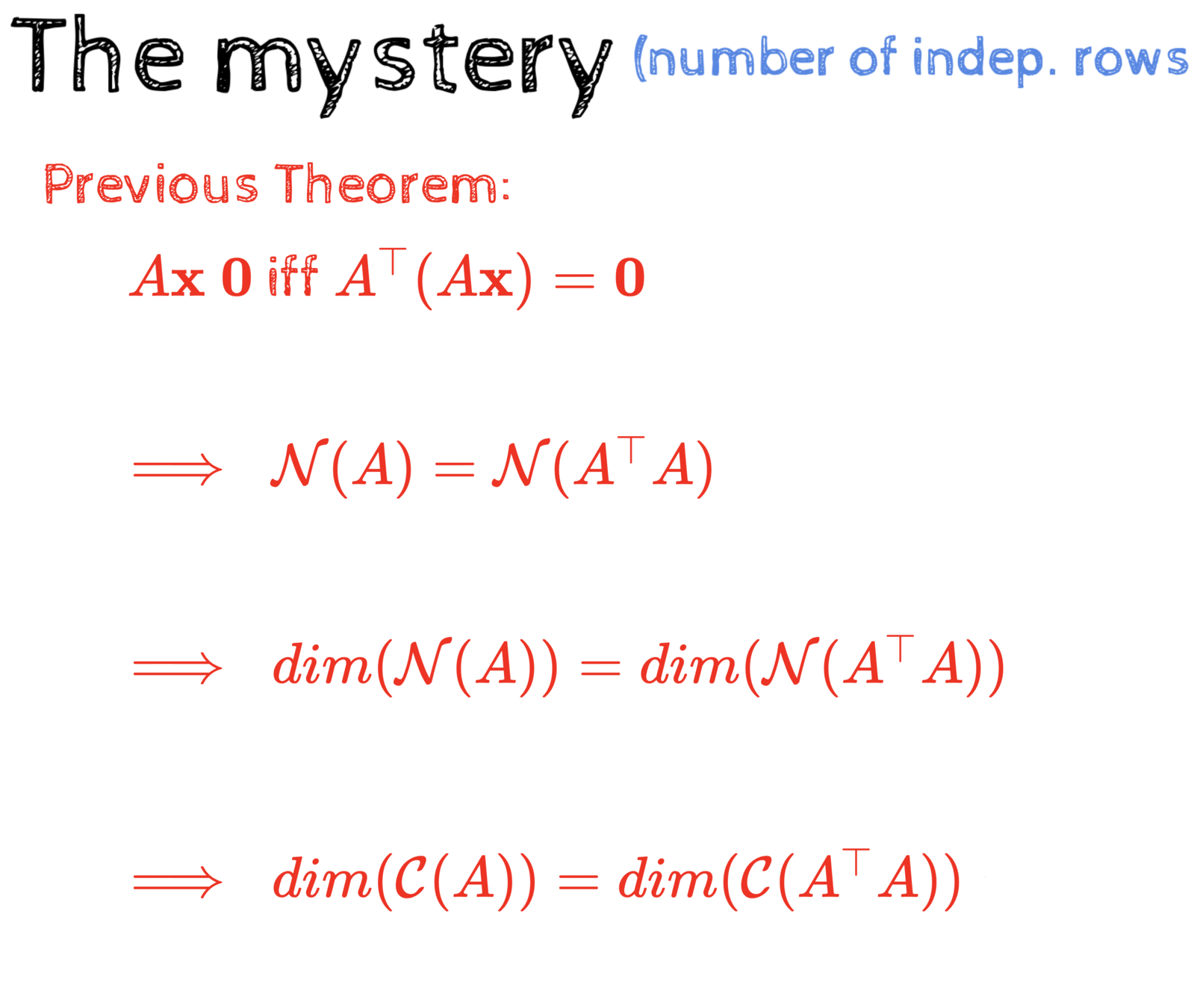

How do we know for sure that the \(\sigma\)s beyond \(\sigma_r\) will be 0?

Recall: \(rank(A) = rank(A^\top A) = r\)

If \(rank(A) \lt n\) then \(rank(A^\top A) \lt n \implies A^\top A\) is singular

\(\implies A^\top A \) has 0 eigenvalues

How many?

as many as the dimension of the nullspace of \(A^\top A\): \(n - r\)
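The count of zero eigenvalues can be checked on a small rank-deficient matrix. The \(4 \times 3\) matrix below is a hypothetical example built so that its third column is the sum of the first two, giving rank 2; NumPy is assumed.

```python
import numpy as np

# Third column = first column + second column, so rank(A) = 2 < n = 3
A = np.array([[1.0, 0.0, 1.0],
              [0.0, 1.0, 1.0],
              [1.0, 1.0, 2.0],
              [2.0, 0.0, 2.0]])

n = A.shape[1]
r = np.linalg.matrix_rank(A)

# A^T A is symmetric, so eigvalsh applies
eigvals = np.linalg.eigvalsh(A.T @ A)
num_zero = int(np.sum(np.isclose(eigvals, 0.0, atol=1e-10)))

print(r, np.linalg.matrix_rank(A.T @ A))  # the two ranks agree
print(num_zero, n - r)                    # number of zero eigenvalues equals n - r
```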


How do we know that \(\mathbf{u_1, \dots, u_r}\) form a basis for the column space of \(A\)? So far we only know that these are the eigenvectors of \(AA^\top\)

How do we know that \(\mathbf{v_1, \dots, v_r}\) form a basis for the row space of \(A\)? So far we only know that these are the eigenvectors of \(A^\top A\)

Please work this out! You really need to see this on your own! HW5

Why do we care about SVD?

A=U\Sigma V^\top

\therefore A=
\begin{bmatrix}
\uparrow & & \uparrow & & \uparrow \\
\mathbf{u}_1 & \dots & \mathbf{u}_r & \dots & \mathbf{u}_m \\
\downarrow & & \downarrow & & \downarrow
\end{bmatrix}
\begin{bmatrix}
\sigma_1 & & & 0 & \dots & 0 \\
 & \ddots & & \vdots & & \vdots \\
 & & \sigma_r & 0 & \dots & 0 \\
0 & \dots & 0 & 0 & \dots & 0 \\
\vdots & & \vdots & \vdots & & \vdots \\
0 & \dots & 0 & 0 & \dots & 0
\end{bmatrix}
\begin{bmatrix}
\leftarrow & \mathbf{v}_{1}^\top & \rightarrow \\
 & \vdots & \\
\leftarrow & \mathbf{v}_{r}^\top & \rightarrow \\
 & \vdots & \\
\leftarrow & \mathbf{v}_{n}^\top & \rightarrow
\end{bmatrix}

\therefore A=
\begin{bmatrix}
\uparrow & & \uparrow & \uparrow & & \uparrow \\
\sigma_1\mathbf{u}_1 & \dots & \sigma_r\mathbf{u}_r & \mathbf{0} & \dots & \mathbf{0} \\
\downarrow & & \downarrow & \downarrow & & \downarrow
\end{bmatrix}
\begin{bmatrix}
\leftarrow & \mathbf{v}_{1}^\top & \rightarrow \\
 & \vdots & \\
\leftarrow & \mathbf{v}_{r}^\top & \rightarrow \\
 & \vdots & \\
\leftarrow & \mathbf{v}_{n}^\top & \rightarrow
\end{bmatrix}

(the last \(n-r\) columns of \(U\Sigma\) are 0)

\therefore A=\sigma_1\mathbf{u_1}\mathbf{v_1}^\top+\sigma_2\mathbf{u_2}\mathbf{v_2}^\top+\cdots+\sigma_r\mathbf{u_r}\mathbf{v_r}^\top
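The expansion of \(A\) into \(r\) rank-one terms can be verified directly. This is a sketch assuming NumPy; the rank-2 matrix is built as a product of two random factors purely for illustration.

```python
import numpy as np

rng = np.random.default_rng(3)
# A 5 x 4 matrix of rank 2, built as (5 x 2) times (2 x 4)
A = rng.standard_normal((5, 2)) @ rng.standard_normal((2, 4))

U, s, Vt = np.linalg.svd(A)
r = np.linalg.matrix_rank(A)

# Sum of the first r rank-one terms sigma_i * u_i * v_i^T
A_sum = sum(s[i] * np.outer(U[:, i], Vt[i, :]) for i in range(r))

print(r)
print(np.allclose(A_sum, A))   # the remaining terms have sigma = 0 and add nothing
```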

We can sort these terms according to their \(\sigma\)s, from the largest (\(\sigma_1\)) to the smallest (\(\sigma_r\))

\(A\) has \(m \times n \) elements

Each \(\mathbf{u_i}\) has m elements

Each \(\mathbf{v_i}\) has n elements

After SVD you can represent \(A\) using \(r(m+n+1)\) elements

If the rank is very small then this would lead to significant compression

Even further compression can be obtained by throwing away terms corresponding to very small \(\sigma s\)
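The storage count \(r(m+n+1)\) is easy to tabulate against the \(m \times n\) entries of the full matrix. The sizes and ranks below are illustrative assumptions, and `svd_storage` is a hypothetical helper name.

```python
def svd_storage(m, n, r):
    """Numbers stored for r terms: each has one sigma, one u (m entries), one v (n entries)."""
    return r * (m + n + 1)

m, n = 800, 1200
full = m * n

for r in (1, 10, 50):
    stored = svd_storage(m, n, r)
    print(r, stored, round(stored / full, 4))
```

With these sizes the SVD form is smaller than the full matrix whenever \(r(m+n+1) \lt mn\), i.e. for \(r\) up to 479.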

Fun with flags :-)

Original Image: 1200 x 800

A lot of redundancy

\(rank \ll 800\)


Puzzle: What is the rank of this flag?
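The flag image from the slide is not available here, so the sketch below uses a synthetic grayscale flag as a stand-in: three horizontal stripes, and then the same stripes with a rectangular block in one corner. The intensities and block size are made-up values; NumPy is assumed.

```python
import numpy as np

rows, cols = 800, 1200

# Three horizontal stripes: every column of the image is the same vector
stripes = np.repeat([0.9, 0.5, 0.2], [267, 267, 266])
flag = np.tile(stripes[:, None], (1, cols))

# Add a rectangular block in the top-left corner: now there are two kinds of columns
flag_with_block = flag.copy()
flag_with_block[:300, :400] = 0.1

print(flag.shape)
print(np.linalg.matrix_rank(flag))             # identical columns give rank 1
print(np.linalg.matrix_rank(flag_with_block))  # two distinct column patterns give rank 2
```

The rank counts the independent column patterns, not the pixels, which is why such images compress so well.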

Best rank-k approximation

Frobenius norm:

||A||_F = \sqrt{\sum_{i=1}^m\sum_{j=1}^n |A_{ij}|^2}

A=\sigma_1\mathbf{u_1}\mathbf{v_1}^\top+\sigma_2\mathbf{u_2}\mathbf{v_2}^\top+\cdots+\sigma_k\mathbf{u_k}\mathbf{v_k}^\top+\cdots+\sigma_r\mathbf{u_r}\mathbf{v_r}^\top

\hat{A}_k=\sigma_1\mathbf{u_1}\mathbf{v_1}^\top+\sigma_2\mathbf{u_2}\mathbf{v_2}^\top+\cdots+\sigma_k\mathbf{u_k}\mathbf{v_k}^\top

(rank-\(k\) approximation of \(A\): the last \(r - k\) terms are dropped)

Theorem: SVD gives the best rank-\(k\) approximation of the matrix \(A\)

i.e. among all rank-\(k\) matrices \(\hat{A}_k\), \(||A - \hat{A}_k||_F\) is minimum when

\hat{A}_k=U_k\Sigma_kV_k^\top

we will not prove this
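Although the proof is skipped, the statement can be probed numerically. The sketch below, assuming NumPy, truncates the SVD of a random \(6 \times 5\) matrix to \(k = 2\) terms and compares the Frobenius error with that of one arbitrary rank-2 competitor; a single comparison illustrates the theorem but does not prove it.

```python
import numpy as np

rng = np.random.default_rng(4)
A = rng.standard_normal((6, 5))
k = 2

U, s, Vt = np.linalg.svd(A, full_matrices=False)

# Keep only the k largest singular values and their vectors
A_k = U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]
err_svd = np.linalg.norm(A - A_k, "fro")

# An arbitrary rank-k matrix to compare against
B = rng.standard_normal((6, k)) @ rng.standard_normal((k, 5))
err_other = np.linalg.norm(A - B, "fro")

print(np.linalg.matrix_rank(A_k))
print(err_svd <= err_other)
# The error of the truncation is the norm of the dropped singular values
print(np.isclose(err_svd, np.sqrt(np.sum(s[k:] ** 2))))
```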

Still hate eigenvalues?

Do you remember this guy?

Do you remember how we beat him?

Time Travel!

Do you remember who figured out time travel?

Do you remember how?

And with that, I rest my case!

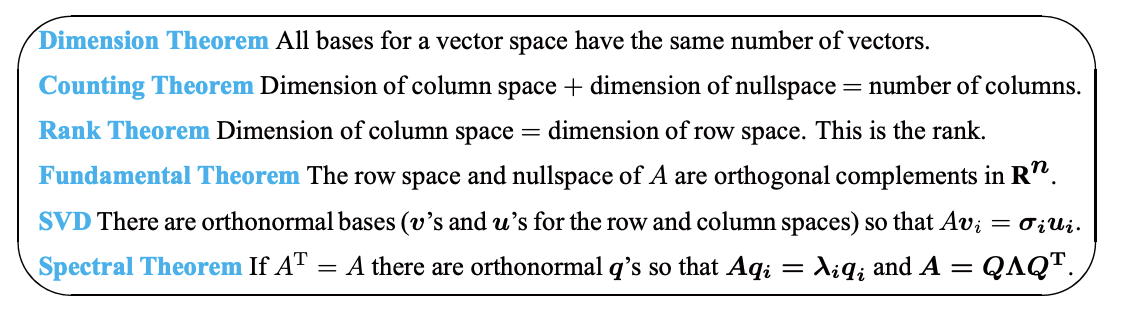

Summary of the course

(in 3 pictures)

Summary of the course

(in 6 great theorems)

Source: Introduction to Linear Algebra, Prof. Gilbert Strang