Humanizing React apps with AI-powered reconciliation

nik72619c

@niksharma1997

AI can build apps...

Saving dev time

Devs using AI

FE apps with AI

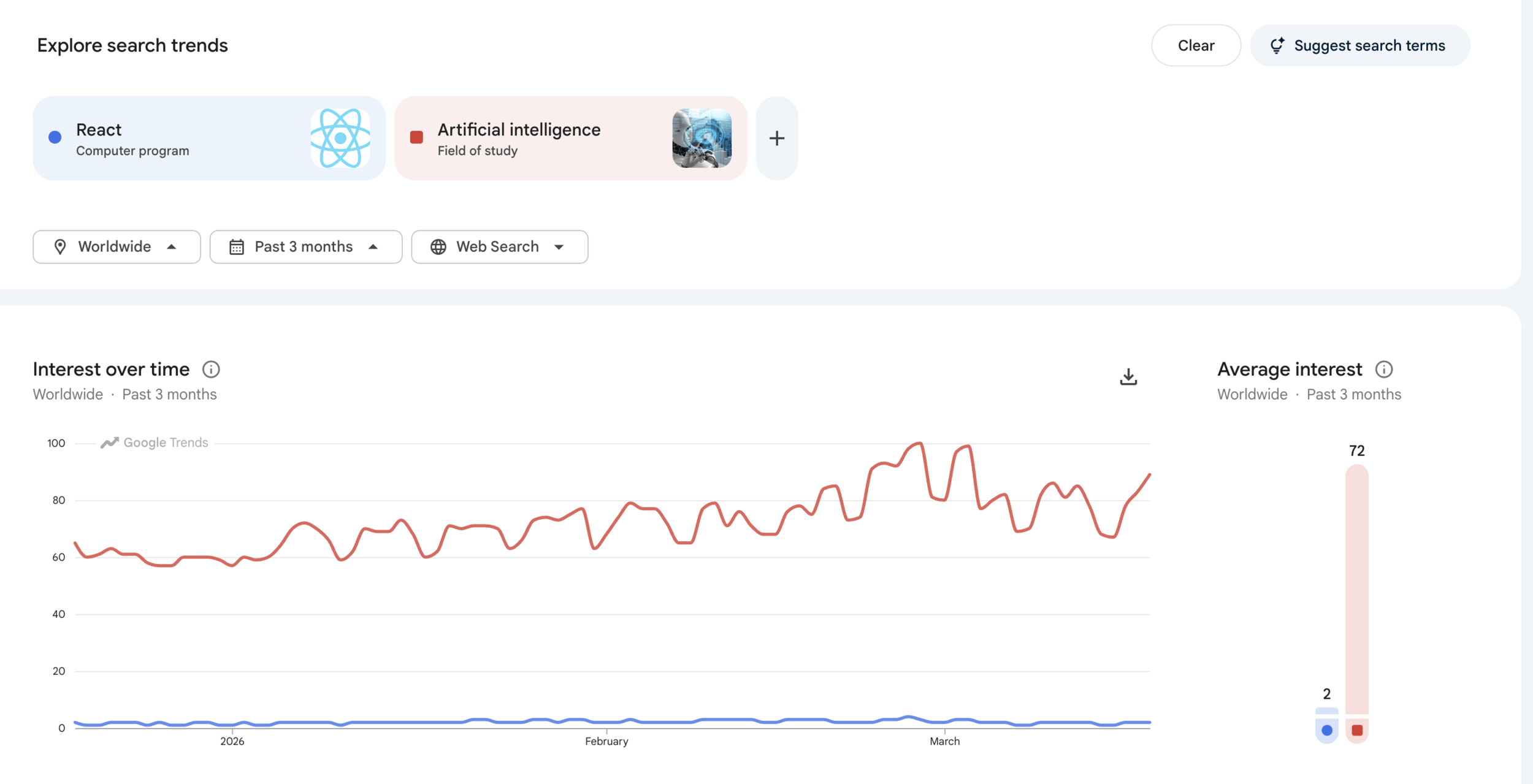

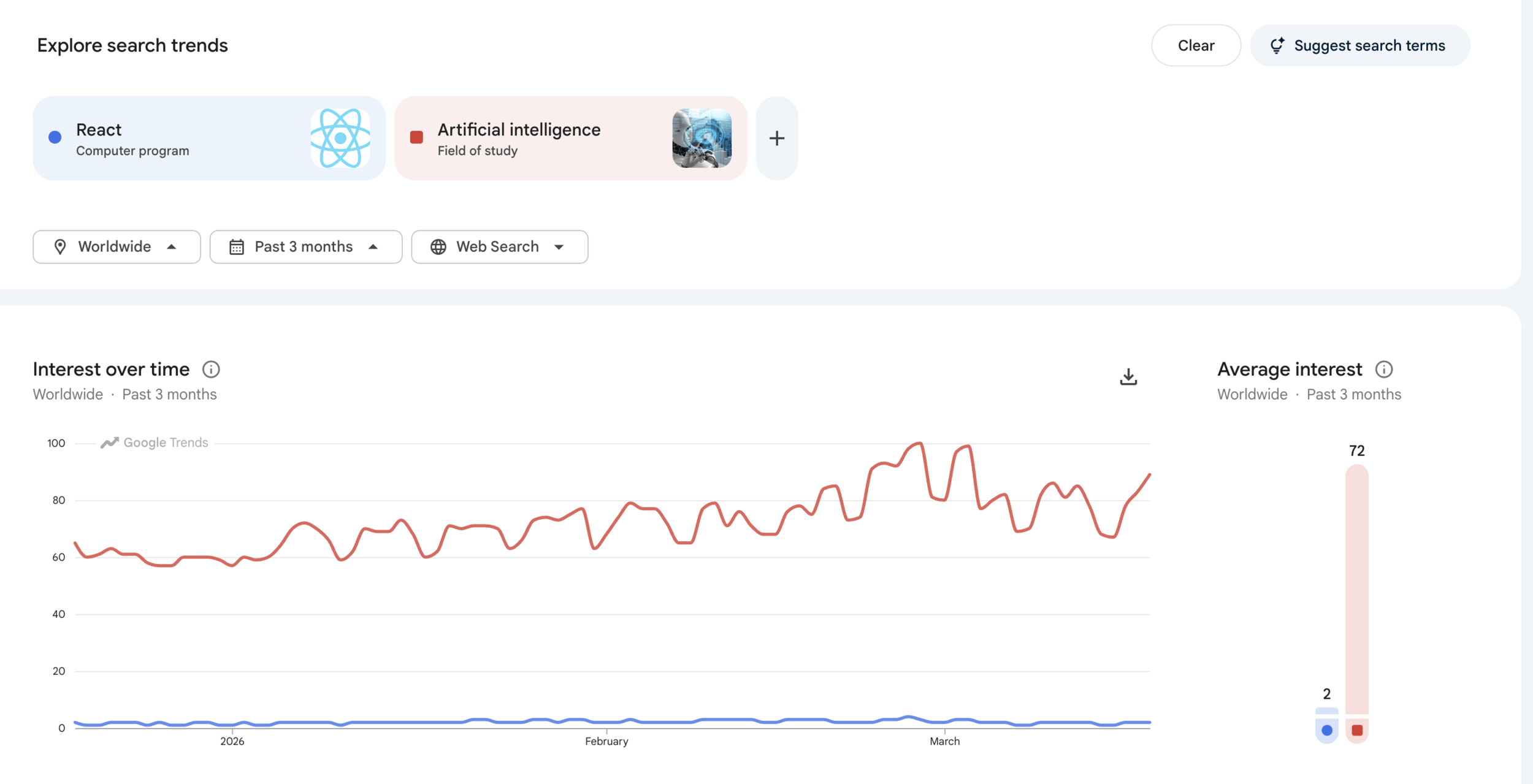

60%

90%

60%


What if we shift to React?

Hey, I'm Nikhil 👋

I love to talk about performance, React, and design systems


Events → Reconcile all updates

Events → Reconcile meaningful updates

Scenario 1

Should this update trigger a repaint?

- Virtualization

- useMemo & useCallback

- Value change

- Scroll velocity

- Click / hover on a post

- Last updated / frequency

How does it work?

Create a tfjs model with parameters

// model.js
import * as tf from "@tensorflow/tfjs";

// Small binary classifier: 8 normalized features in, one probability out
export function createModel() {
  const model = tf.sequential();
  model.add(
    tf.layers.dense({
      inputShape: [8],
      units: 12,
      activation: "relu",
    })
  );
  model.add(
    tf.layers.dense({
      units: 1,
      activation: "sigmoid",
    })
  );
  model.compile({
    optimizer: "adam",
    loss: "binaryCrossentropy",
    metrics: ["accuracy"],
  });
  return model;
}

Train the model on sample data

// Fake streams: build a row of 8 numbers, label 1 = "meaningful update", 0 = skip
const rows = [];
const labels = [];
for (let i = 0; i < 700; i++) {
  // ... pick random delta, viewport, dwell, scroll, etc. ...
  rows.push([/* 8 normalized numbers */]);
  labels.push(/* 1 or 0 from your "meaningful" rule */);
}

await model.fit(tf.tensor2d(rows), tf.tensor2d(labels, [labels.length, 1]), {
  epochs: 20,
});

Predict on each candidate update

// Ask the model: should we commit this new count?
// tf.tidy() disposes the input and output tensors once the score is read
const p = tf.tidy(
  () => model.predict(tf.tensor2d([[/* same 8 numbers, live */]])).dataSync()[0]
);

// Commit if the model says "yes" (a huge-jump safety rule can be OR-ed in here)
if (p > 0.52) {
  setLikeCount(nextCount); // ← this triggers React reconcile / flash in the demo
}
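The predict step mentions a "huge jump" safety rule next to the model score, but the slide only shows the threshold check. A minimal sketch of how the two could be combined, assuming a hypothetical `HUGE_JUMP_RATIO` and a hypothetical helper name `shouldCommit` (only the 0.52 threshold comes from the slide):

```javascript
// 0.52 is the threshold used on the slide; the jump ratio is an assumption.
const COMMIT_THRESHOLD = 0.52;
const HUGE_JUMP_RATIO = 0.25;

// Returns true when the candidate update should be committed to React state.
function shouldCommit(p, prevCount, nextCount) {
  // Safety rule: a large relative change is always committed,
  // regardless of what the model thinks.
  const base = Math.max(Math.abs(prevCount), 1);
  const relativeJump = Math.abs(nextCount - prevCount) / base;
  if (relativeJump >= HUGE_JUMP_RATIO) return true;

  // Otherwise defer to the model score.
  return p > COMMIT_THRESHOLD;
}
```

In a component this would guard the setter, for example `if (shouldCommit(p, likeCount, nextCount)) setLikeCount(nextCount);`.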

Optimise to use fewer predictions

// 1. Collect features for each row in the same order as your list
//    (or keep parallel arrays of rowId and feature row):
//    rows[i] = [f0, f1, ... f(F-1)] for row i
const rows = items.map((item) => buildFeatures(item));

// 2. One tensor, one forward pass
const input = tf.tensor2d(rows); // shape [n, F]
const output = model.predict(input); // shape [n, 1] for your sigmoid head
const scores = Array.from(output.dataSync()); // length n
input.dispose();
output.dispose();

// 3. Map scores back to work
items.forEach((item, i) => {
  const p = scores[i];
  const shouldCommit = p > 0.52; // your threshold
  // apply or skip for item.id
});
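The batched prediction code calls `buildFeatures(item)` without showing it. A sketch of what such a helper might look like, assuming eight signals loosely based on the ones listed for this scenario (value change, scroll velocity, click / hover, last updated / frequency). Every field name on `item` and every scaling constant below is hypothetical:

```javascript
// Clamp a number into the [0, 1] range so every feature is normalized.
const clamp01 = (x) => Math.min(1, Math.max(0, x));

// Hypothetical feature builder: returns 8 numbers in [0, 1],
// matching the model's inputShape of [8].
function buildFeatures(item) {
  return [
    clamp01(Math.abs(item.delta) / 100),           // value change
    item.inViewport ? 1 : 0,                       // is the row visible?
    clamp01(item.dwellMs / 5000),                  // time spent near the row
    clamp01(Math.abs(item.scrollVelocity) / 3000), // scroll velocity (px/s)
    item.hovered ? 1 : 0,                          // hover on the post
    item.clickedRecently ? 1 : 0,                  // click on the post
    clamp01(item.msSinceLastCommit / 10000),       // last updated
    clamp01(item.updatesPerMinute / 60),           // update frequency
  ];
}
```

The order of the features has to be identical at training time and at prediction time, which is why a single helper is used for both.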

Scenario 2

Where do users visit the most?

<div>
  <Chart />
  <Expenses />
  <Summary />
</div>

import React, { Suspense } from 'react';

// Each widget is split into its own chunk and loaded on demand
const Chart = React.lazy(() => import('./Chart'));
const Expenses = React.lazy(() => import('./Expenses'));
const Summary = React.lazy(() => import('./Summary'));

...

<div>
  <Suspense fallback={<Loader />}>
    <Chart />
  </Suspense>
  <Suspense fallback={<Loader />}>
    <Expenses />
  </Suspense>
  <Suspense fallback={<Loader />}>
    <Summary />
  </Suspense>
</div>

- Click counts

- Sequence of routes

Chart / Expenses / Summary → .train() → .predict()

Create the model

1. Capturing clicks

import * as tf from '@tensorflow/tfjs';

const WIDGET_COUNT = 5;

function oneHot(widgetId) {
  return Array.from({ length: WIDGET_COUNT }, (_, i) => (i === widgetId ? 1 : 0));
}
// Example: widget 2 → [0, 0, 1, 0, 0]

// One-hot widget id in, preference score between 0 and 1 out
function createPreferenceModel() {
  const model = tf.sequential();
  model.add(
    tf.layers.dense({
      inputShape: [WIDGET_COUNT],
      units: 10,
      activation: "relu",
    })
  );
  model.add(
    tf.layers.dense({
      units: 1,
      activation: "sigmoid",
    })
  );
  model.compile({
    optimizer: "adam",
    loss: "meanSquaredError",
  });
  return model;
}
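The preference model maps a one-hot widget id to a single score, so click counts have to be turned into targets in the 0 to 1 range before training. One possible way to do that, assuming a hypothetical helper `buildPreferenceSamples` and a simple "divide by the most-clicked widget" normalization (neither is from the talk):

```javascript
// One-hot encode a widget index for a given number of widgets.
function oneHotFor(widgetId, widgetCount) {
  return Array.from({ length: widgetCount }, (_, i) => (i === widgetId ? 1 : 0));
}

// clickCounts[i] = number of clicks recorded for widget i.
// Returns one training sample per widget: { input: one-hot, target: 0..1 }.
function buildPreferenceSamples(clickCounts) {
  // Guard against division by zero when nothing has been clicked yet.
  const maxClicks = Math.max(...clickCounts, 1);
  return clickCounts.map((clicks, widgetId) => ({
    input: oneHotFor(widgetId, clickCounts.length),
    target: clicks / maxClicks,
  }));
}
```

The samples can then be turned into tensors with `tf.tensor2d(samples.map((s) => s.input))` and `tf.tensor2d(samples.map((s) => [s.target]))` and passed to `model.fit`.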

2. Capturing a sequence of clicks

import * as tf from '@tensorflow/tfjs';

// sequenceLength and linksCount are defined elsewhere in the app
const model = tf.sequential();
model.add(tf.layers.lstm({
  units: 32, // illustrative size; must be a positive integer
  inputShape: [sequenceLength, linksCount]
}));
model.add(tf.layers.dense({
  units: linksCount,
  activation: 'sigmoid'
}));

Train the model

import * as tf from '@tensorflow/tfjs';

// Method on the class that owns this.model; the model must be compiled before fit() runs
async train(sequencesBatch, labelsBatch) {
  await this.model.fit(sequencesBatch, labelsBatch, {
    epochs: 30,
    validationSplit: 0.15
  });
}

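Predict based on the clicks or sequence

import * as tf from '@tensorflow/tfjs';

// Index of the highest-scoring widget for the current click sequence
const predictedWidget = tf.tidy(() => model.predict(sequence).argMax(-1).dataSync()[0]);

// UI: startPreloadTransition is assumed to come from React's useTransition()
startPreloadTransition(() => {
  setPreloadedWidgetId(predictedWidget);
  setPreparedWidgetId(predictedWidget);
});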
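The sequence model expects input of shape `[sequenceLength, linksCount]`, so the recent click history has to be encoded before it is passed to `predict`. A rough sketch of one way to do that, assuming link ids are integers in `[0, linksCount)` and that short histories are left-padded with all-zero rows (the helper names are hypothetical):

```javascript
// Turn the most recent clicks into a [sequenceLength][linksCount] one-hot matrix.
function encodeSequence(clickedLinkIds, sequenceLength, linksCount) {
  // Keep only the latest clicks; older ones fall out of the window.
  const recent = clickedLinkIds.slice(-sequenceLength);
  const padding = sequenceLength - recent.length;
  return Array.from({ length: sequenceLength }, (_, step) => {
    const row = new Array(linksCount).fill(0);
    const linkId = recent[step - padding]; // undefined inside the padding
    if (linkId !== undefined) row[linkId] = 1;
    return row;
  });
}

// Pick the index with the highest score from a plain array of model outputs.
function pickMostLikely(scores) {
  return scores.indexOf(Math.max(...scores));
}
```

Wrapping the result as `tf.tensor3d([encodeSequence(history, sequenceLength, linksCount)])` gives a batch of one with shape `[1, sequenceLength, linksCount]`.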

Scenario 3

Batch updates intelligently

Click → state update! state update! state update!

Click → batch update!

Did everything need to be batched?

Click → important batch of state change!

For our example...

1. Creating the neural network

import * as tf from "@tensorflow/tfjs";

// Two inputs per card (delta change, event count), one probability out
export function createBatchModel() {
  const model = tf.sequential();
  model.add(tf.layers.dense({ inputShape: [2], units: 6, activation: "relu" }));
  model.add(tf.layers.dense({ units: 1, activation: "sigmoid" }));
  model.compile({ optimizer: "adam", loss: "binaryCrossentropy" });
  return model;
}

Input features: delta change and event count

2. Training the model

// Synthetic samples: [normalized delta, normalized event count] with a rule-based label
const rows = [];
const labels = [];
for (let i = 0; i < 240; i++) {
  const absNetDelta = Math.random() * 8;
  const eventCount = 1 + Math.floor(Math.random() * 6);
  rows.push([absNetDelta / 8, eventCount / 6]);
  const meaningful = absNetDelta > 3.8 || eventCount >= 4 ? 1 : 0;
  labels.push(meaningful);
}

// model.js
const xs = tf.tensor2d(rows);
const ys = tf.tensor2d(labels, [labels.length, 1]);
await model.fit(xs, ys, { epochs: 10, verbose: 0 });

3. Predict meaningful batches

const FLUSH_MS = 360; // length of the batching window in milliseconds
const eventBufferRef = useRef([]);
const flushRef = useRef(null);

const scheduleFlush = () => {
  if (flushRef.current) return; // a flush is already scheduled
  flushRef.current = setTimeout(async () => {
    const batch = [...eventBufferRef.current];
    eventBufferRef.current = [];
    flushRef.current = null;
    if (!batch.length || !modelRef.current) return;
    // → next block: merge, predict, set state once
    const summary = await summarizeBatch(batch, modelRef.current);
    setAiBoard((prev) => applyPatches(prev, summary.patches));
  }, FLUSH_MS);
};

// On each simulated tick (AI path only):
eventBufferRef.current.push({ cardId, delta });
scheduleFlush();

Card A: +2, +1, -1 -> netDelta = +2, eventCount = 3

Card B: +3, +2 -> netDelta = +5, eventCount = 2

Card C: +1 -> netDelta = +1, eventCount = 1

Features:

- A: [2/8 = 0.25, 3/6 = 0.50]

- B: [5/8 = 0.625, 2/6 = 0.33]

- C: [1/8 = 0.125, 1/6 = 0.17]

Possible model scores:

- A -> 0.48 (skip)

- B -> 0.57 (keep)

- C -> 0.22 (skip)

Result: only Card B is committed.
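The Card A / B / C walkthrough can be reproduced with a small merge step. This is only a sketch of what the merging part of `summarizeBatch` might do; the helper names `summarizeEvents` and `selectPatches` are hypothetical, the normalizers 8 and 6 come from the training snippet, and the 0.52 threshold is borrowed from scenario 1 as an assumption:

```javascript
const MAX_DELTA = 8;  // same normalizer as the training data
const MAX_EVENTS = 6; // same normalizer as the training data

// Merge raw { cardId, delta } events into one summary per card.
function summarizeEvents(batch) {
  const byCard = new Map();
  for (const { cardId, delta } of batch) {
    const entry = byCard.get(cardId) ?? { cardId, netDelta: 0, eventCount: 0 };
    entry.netDelta += delta;
    entry.eventCount += 1;
    byCard.set(cardId, entry);
  }
  // Attach the two normalized features the model was trained on.
  return [...byCard.values()].map((entry) => ({
    ...entry,
    features: [Math.abs(entry.netDelta) / MAX_DELTA, entry.eventCount / MAX_EVENTS],
  }));
}

// Keep only the summaries whose model score clears the threshold.
// scores[i] is assumed to be the model output for summaries[i].
function selectPatches(summaries, scores, threshold = 0.52) {
  return summaries.filter((_, i) => scores[i] > threshold);
}
```

Feeding the six events from the example through `summarizeEvents` yields the three feature rows listed above, and `selectPatches` with the example scores keeps Card B only.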

Is AI replacing React?

Nope, it's only going to suggest.

What if predictions are wrong?

Fall back to normal behaviour; training is the key.
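One way to read "fall back to normal behaviour" is that any unusable prediction should result in a plain React commit. A minimal sketch under that assumption; `decideWithFallback` and its `predictScore` callback are hypothetical names, and the 0.52 default is the threshold from scenario 1:

```javascript
// predictScore(features) is expected to return a probability in [0, 1].
// Whenever the model is missing, throws, or returns something unusable,
// the update is committed as React would do without any model.
function decideWithFallback(predictScore, features, threshold = 0.52) {
  try {
    const p = predictScore(features);
    if (!Number.isFinite(p) || p < 0 || p > 1) return true; // unusable score
    return p > threshold;
  } catch (err) {
    return true; // model not loaded or prediction failed
  }
}
```

With this shape the model can only ever suppress an update when it produces a valid, confident "skip"; every failure mode degrades to the default rendering path.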

Train your models with care

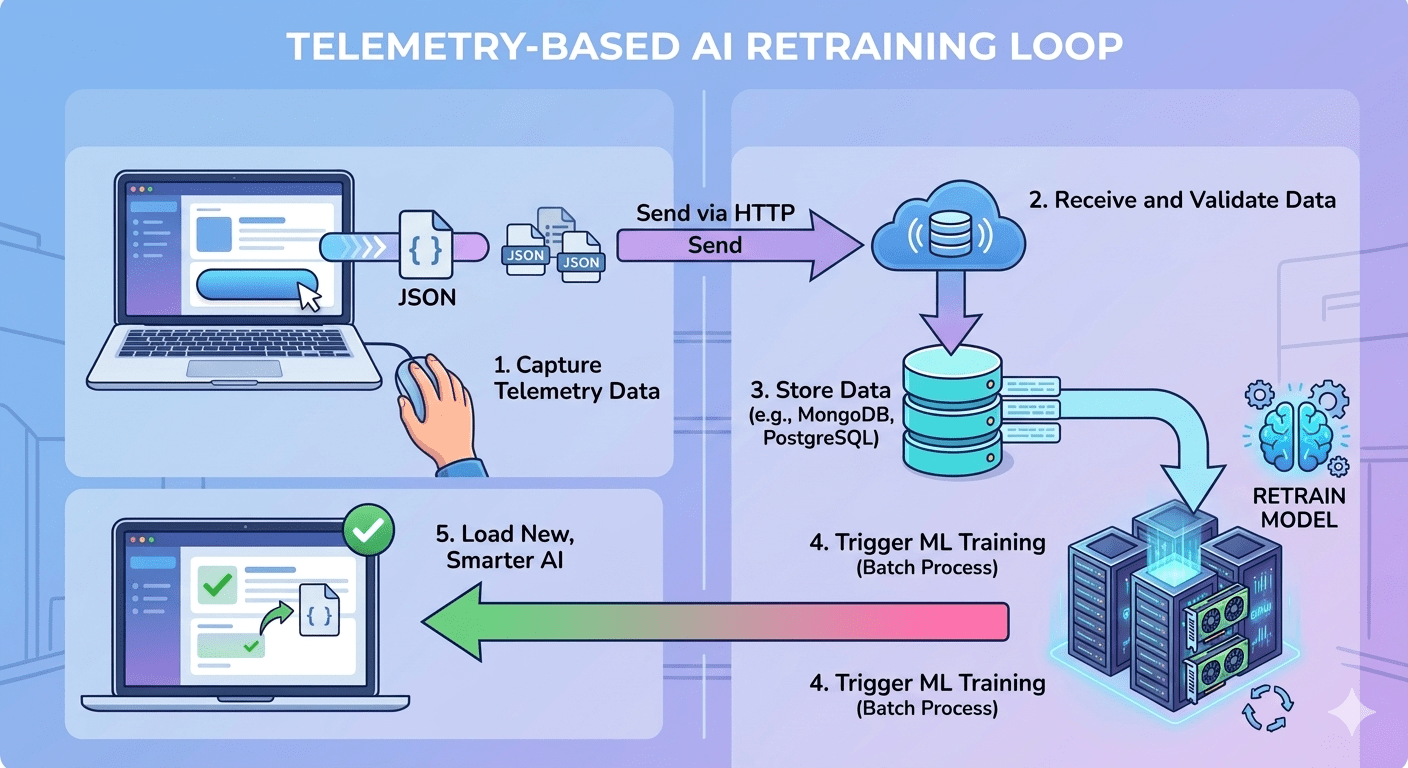

await model.fit();

Backend

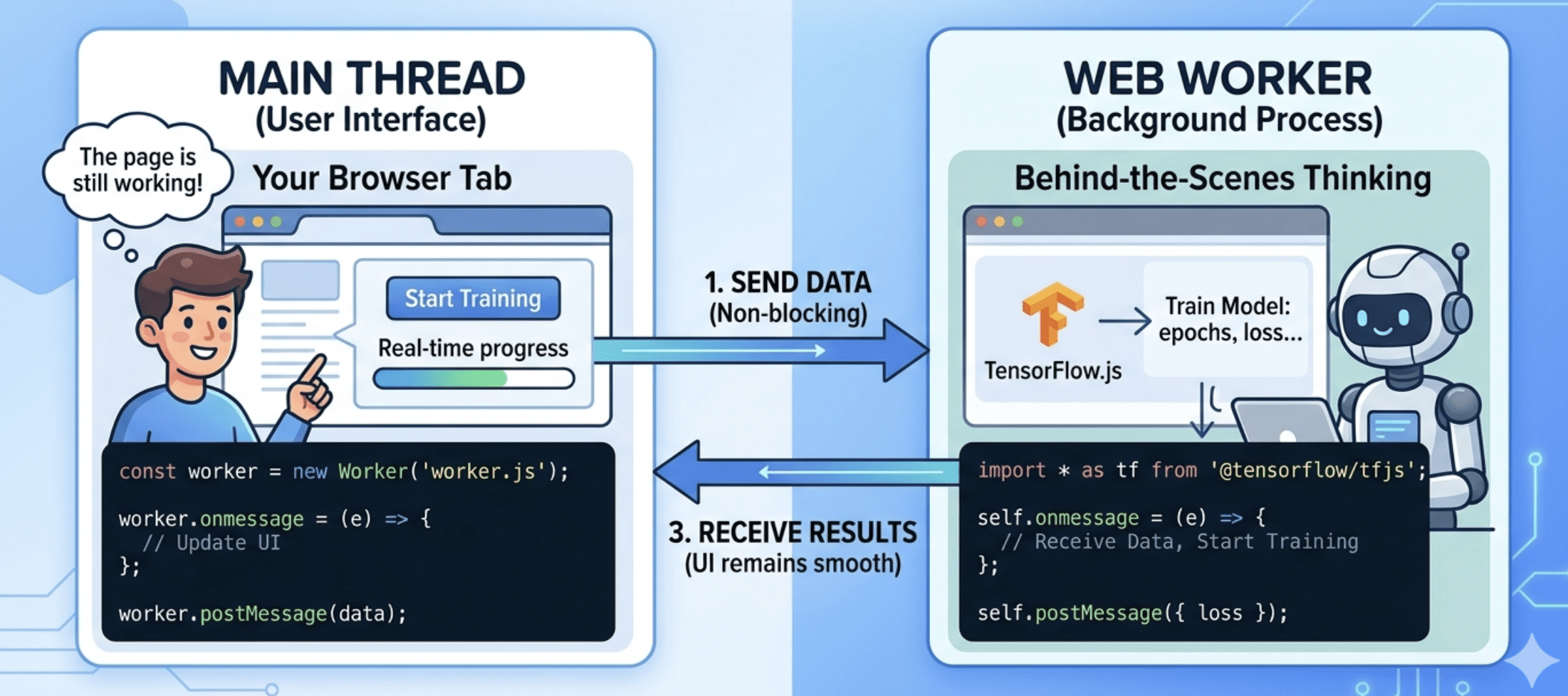

Web workers


Beware of memory leaks

// Wrap your code in tf.tidy() to automatically clean up intermediate tensors,
// or manually call tensor.dispose() for long-lived ones.
useEffect(() => {
  const model = tf.sequential(); // create a simple model
  model.add(tf.layers.dense({ units: 1, inputShape: [1] }));

  // Cleanup: React runs this when the component unmounts
  return () => {
    model.dispose(); // releases the model's weight tensors (GPU memory on the WebGL backend)
    console.log("Memory cleaned");
  };
}, []);

“The best code is no code at all.”


RENDER

RENDER

We're hiring!!! 🙌🏻

https://www.deel.com/careers/open-roles/

Happy coding

✌️
