Tune Assistant Makes LLMs Smarter

by 🎙️ @aakash7539 & @priyas

About Aakash

- 🧑🏻💻 Software Engineer at Tune AI

- 🏗️ Building GenAI, ML systems, and infra at Tune

@aakash7539

About Priya

- 🧑🏻💻 Software Engineer at Tune AI

- 🏗️ Building assistants, backend systems, and product

@priyas

Tune AI is a tech startup with roots in Chennai, now based in San Francisco, Bangalore, and Chennai. We want to build the best AI out there.

We are the fastest at deploying Llamas in the world: we deployed Llama-2 within 24 hours of its launch and Llama-3 within 1 hour!

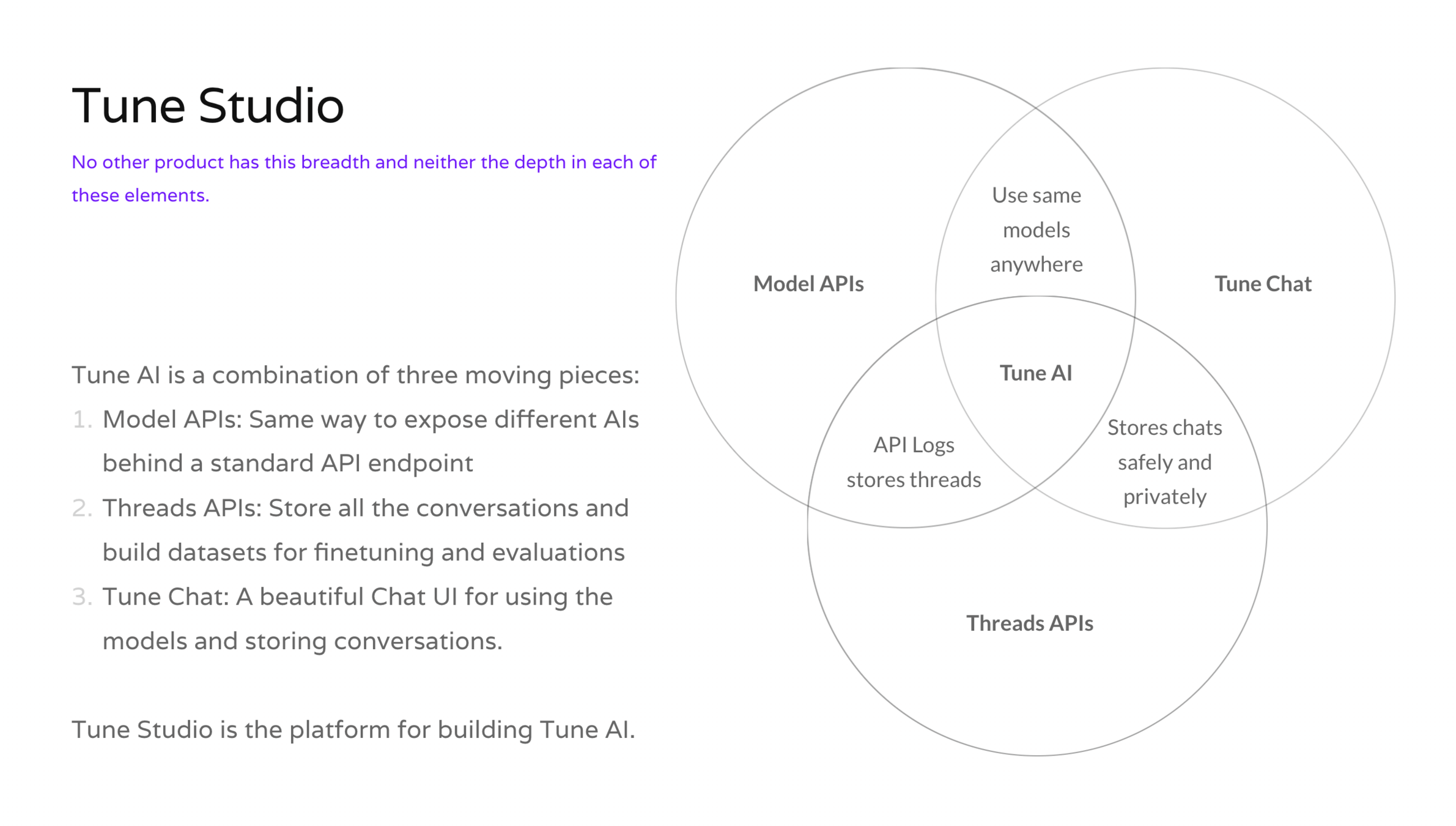

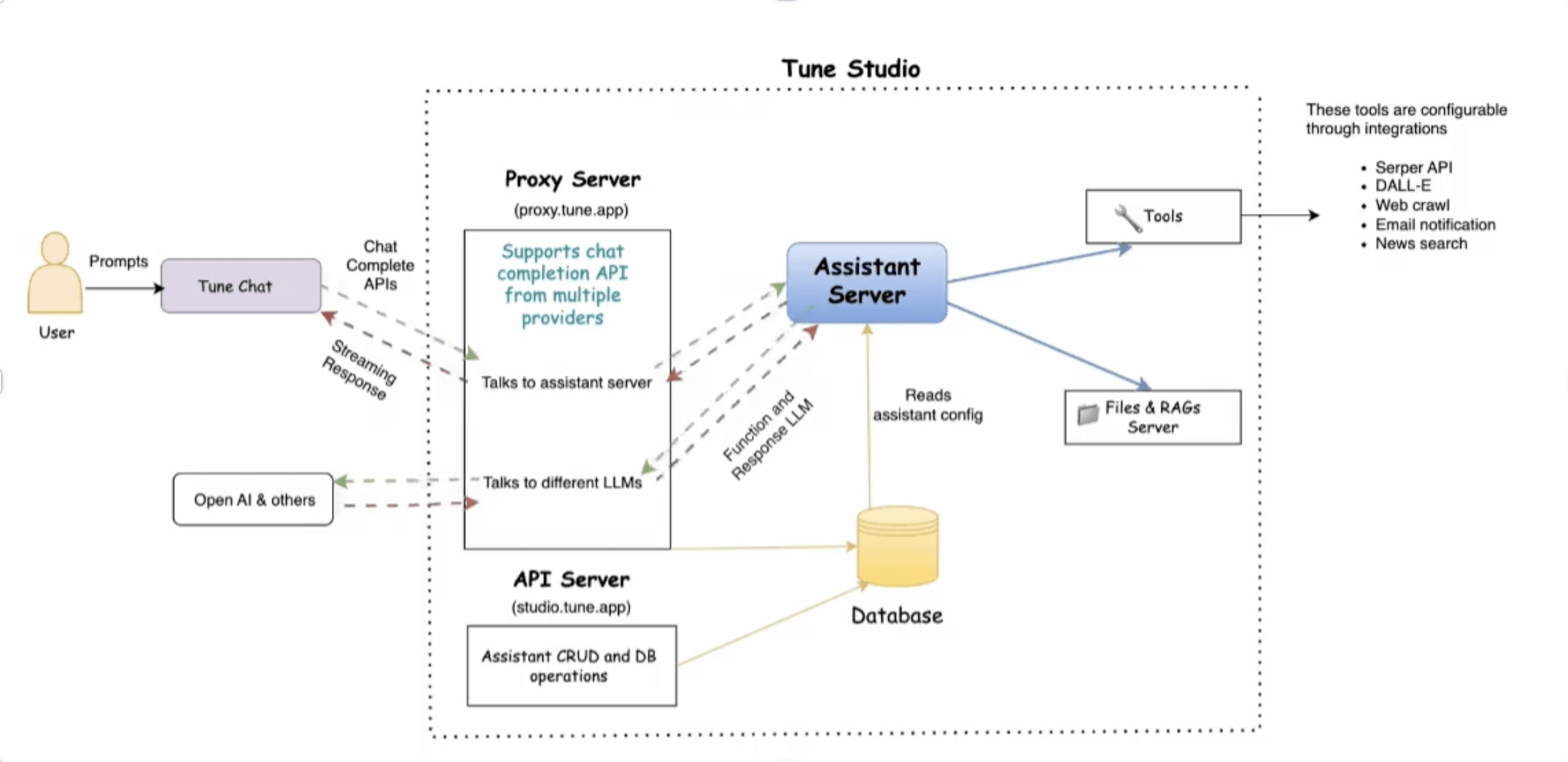

We have built two products: Tune Studio and Tune Chat.

999,999,999…

Tokens Generated

6,768,000+

Over 6.5 million conversations with AIs that can read files, generate images, send emails, surf the internet, and more.

431,300+

Signups on Tune AI

Why basic LLMs are not smart

- Limited to generating text based on patterns learned from their training data.

- Cannot handle complex, action-driven conversations, such as booking a flight or making a payment.

- Cannot provide real-time information, such as the latest news or weather updates.

- A glorified text-generation model.

Assistants are the solution

- Solve complex, multi-step tasks

- Proactive vs. reactive (agents vs. ChatGPT)

- 24/7 operation vs. single-interaction model

*Example:* an email says "follow this thread", and later the analyst agent replies "Hey, I found the discrepancies."

- "Just software" that uses AI to accomplish tasks.

- Executes multiple steps.

- Steps are determined by an LLM.

- Sometimes, an “orchestration layer” is needed.
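The bullets above describe an agent as ordinary software: a loop in which an LLM picks the next step, the program runs it, and an orchestration layer decides when to stop. Below is a minimal sketch under stated assumptions. `fake_llm` is a hypothetical stand-in for a real model call, and the `search`/`summarize` tools are toy functions. This is not Tune's implementation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical tools the agent may call; real ones would hit APIs or databases.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for {query!r}",
    "summarize": lambda text: text[:40],  # toy "summary": the first 40 characters
}


@dataclass
class Step:
    tool: str      # tool name chosen by the LLM, or "finish"
    argument: str  # input for the tool, or the final answer when finishing


def fake_llm(goal: str, history: List[str]) -> Step:
    """Stand-in for a real LLM call: picks the next step from what has happened so far."""
    if not history:
        return Step("search", goal)
    if len(history) == 1:
        return Step("summarize", history[-1])
    return Step("finish", history[-1])


def run_agent(goal: str, llm: Callable[[str, List[str]], Step] = fake_llm, max_steps: int = 5) -> str:
    """Orchestration layer: asks the LLM for the next step, runs it, and stops on "finish"."""
    history: List[str] = []
    for _ in range(max_steps):
        step = llm(goal, history)
        if step.tool == "finish":
            return step.argument
        history.append(TOOLS[step.tool](step.argument))
    raise RuntimeError("step budget exhausted before the LLM finished")
```

The `max_steps` budget is one simple way an orchestration layer can stop a model that never decides to finish.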

What ❓

“A person's a person, no matter how small.”

― Dr. Seuss

Agentic Workflow

- An iterative, multi-step process for interacting with and instructing LLMs so they complete complex tasks with better accuracy.

- A single task is divided into multiple smaller, more manageable subtasks.

- Each subtask leaves room for review and improvement as the work progresses.
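The workflow above has two parts: split the task, then improve each piece iteratively. The sketch below shows a draft, critique, revise loop per subtask. It makes several assumptions. `decompose`, `draft`, `critique` and `revise` are hypothetical placeholders for LLM calls, and splitting on `" then "` is only a toy planner.

```python
from typing import List, Optional


def decompose(task: str) -> List[str]:
    """Hypothetical planner: a real system would ask an LLM to split the task."""
    return [part.strip() for part in task.split(" then ") if part.strip()]


def draft(subtask: str) -> str:
    """Hypothetical worker call that produces a first attempt."""
    return f"draft: {subtask}"


def critique(output: str) -> Optional[str]:
    """Hypothetical reviewer call: returns feedback, or None when satisfied."""
    return None if output.endswith("(checked)") else "verify the result"


def revise(output: str, feedback: str) -> str:
    """Hypothetical worker call; this toy version only marks the output as checked."""
    return f"{output} (checked)"


def agentic_workflow(task: str, max_rounds: int = 3) -> List[str]:
    """Split the task, then draft, critique and revise each subtask until it passes."""
    results: List[str] = []
    for subtask in decompose(task):
        output = draft(subtask)
        for _ in range(max_rounds):
            feedback = critique(output)
            if feedback is None:
                break
            output = revise(output, feedback)
        results.append(output)
    return results
```

For example, `agentic_workflow("find flights then compare prices then book")` returns three outputs, and each one was revised once before the reviewer accepted it.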

Multi-agent framework in a nutshell

- Proactive, not reactive

- Non-stop (internal monologue)

- Commodity tools

What Tune thinks agents should be
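A proactive, non-stop agent acts on events as they arrive instead of waiting for a prompt, and it keeps an internal monologue while it works. The sketch below is hypothetical. Events are plain strings with made-up `email:`/`task:` prefixes, and the loop ends when the event list runs out so that it can be tested. A real agent would keep polling its sources.

```python
from collections import deque
from typing import Iterable, List


def proactive_agent(events: Iterable[str]) -> List[str]:
    """Reacts to incoming events on its own and records its thoughts as it goes."""
    monologue: List[str] = []  # the agent's running internal monologue
    queue = deque(events)      # hypothetical event source (new emails, timers, ...)
    while queue:               # a real agent would keep polling instead of stopping
        event = queue.popleft()
        monologue.append(f"observed {event}")
        if event.startswith("email:"):
            # Decide on follow-up work itself, without asking the user first.
            queue.append("task: analyze " + event.removeprefix("email:").strip())
            monologue.append("decided to follow the thread")
        elif event.startswith("task:"):
            monologue.append("completed " + event.removeprefix("task:").strip())
    return monologue
```

In this toy version a single incoming email makes the agent queue and finish its own analysis task, similar to the email-to-analyst example earlier in the talk.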

Internet Search 🔍

- SerpAPI

- Exa.ai

- anon.com

Maps 🗺️📍

- Google Maps

- OSM

Documents 📃

- Google Docs

- Notion
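Treating tools as commodities means the agent depends on a capability such as "internet search" and not on any one provider from the lists above. A minimal sketch of that idea follows, using a shared interface and a registry. `StubSearchA` and `StubSearchB` are hypothetical stand-ins. They do not call SerpAPI, Exa.ai, or any real API.

```python
from typing import Dict, List, Protocol


class SearchTool(Protocol):
    """Common interface that any search provider wrapper must satisfy."""

    def search(self, query: str) -> List[str]: ...


class StubSearchA:
    """Hypothetical stand-in for one provider wrapper; makes no network calls."""

    def search(self, query: str) -> List[str]:
        return [f"A: {query}"]


class StubSearchB:
    """Hypothetical stand-in for a second, interchangeable provider."""

    def search(self, query: str) -> List[str]:
        return [f"B: {query}"]


class ToolRegistry:
    """Maps a capability (e.g. "internet_search") to whichever provider is plugged in."""

    def __init__(self) -> None:
        self._tools: Dict[str, SearchTool] = {}

    def register(self, capability: str, tool: SearchTool) -> None:
        self._tools[capability] = tool  # swapping providers is a one-line change

    def get(self, capability: str) -> SearchTool:
        return self._tools[capability]


def agent_search(registry: ToolRegistry, query: str) -> List[str]:
    """Agent code depends only on the capability, never on a specific provider."""
    return registry.get("internet_search").search(query)
```

Registering `StubSearchB()` under `"internet_search"` in place of `StubSearchA()` changes the provider, and `agent_search` itself stays the same.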

Key Components 🏗️⚙️

- Function-calling LLM 🧠

- Task schedulers and orchestrators 🤹🏻♂️

- Tool integration for expanded capabilities 🛠️
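Of the components listed, the task scheduler decides what runs next and when. Below is a minimal sketch built on a min-heap of due times with a simulated clock. The task names and outputs are hypothetical, and a production scheduler would also need persistence, retries and real time.

```python
import heapq
from typing import Callable, List, Tuple


class Scheduler:
    """Minimal task scheduler: runs callables in order of their due time."""

    def __init__(self) -> None:
        self._queue: List[Tuple[float, int, str, Callable[[], str]]] = []
        self._counter = 0  # tie-breaker so tasks with equal due times keep insertion order

    def schedule(self, due: float, name: str, fn: Callable[[], str]) -> None:
        heapq.heappush(self._queue, (due, self._counter, name, fn))
        self._counter += 1

    def run_until(self, now: float) -> List[str]:
        """Run every task due at or before `now` (a simulated clock) and return their outputs."""
        outputs: List[str] = []
        while self._queue and self._queue[0][0] <= now:
            _, _, name, fn = heapq.heappop(self._queue)
            outputs.append(f"{name}: {fn()}")
        return outputs
```

The counter in each heap entry ensures two tasks with the same due time are never compared by their functions, which Python cannot order.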

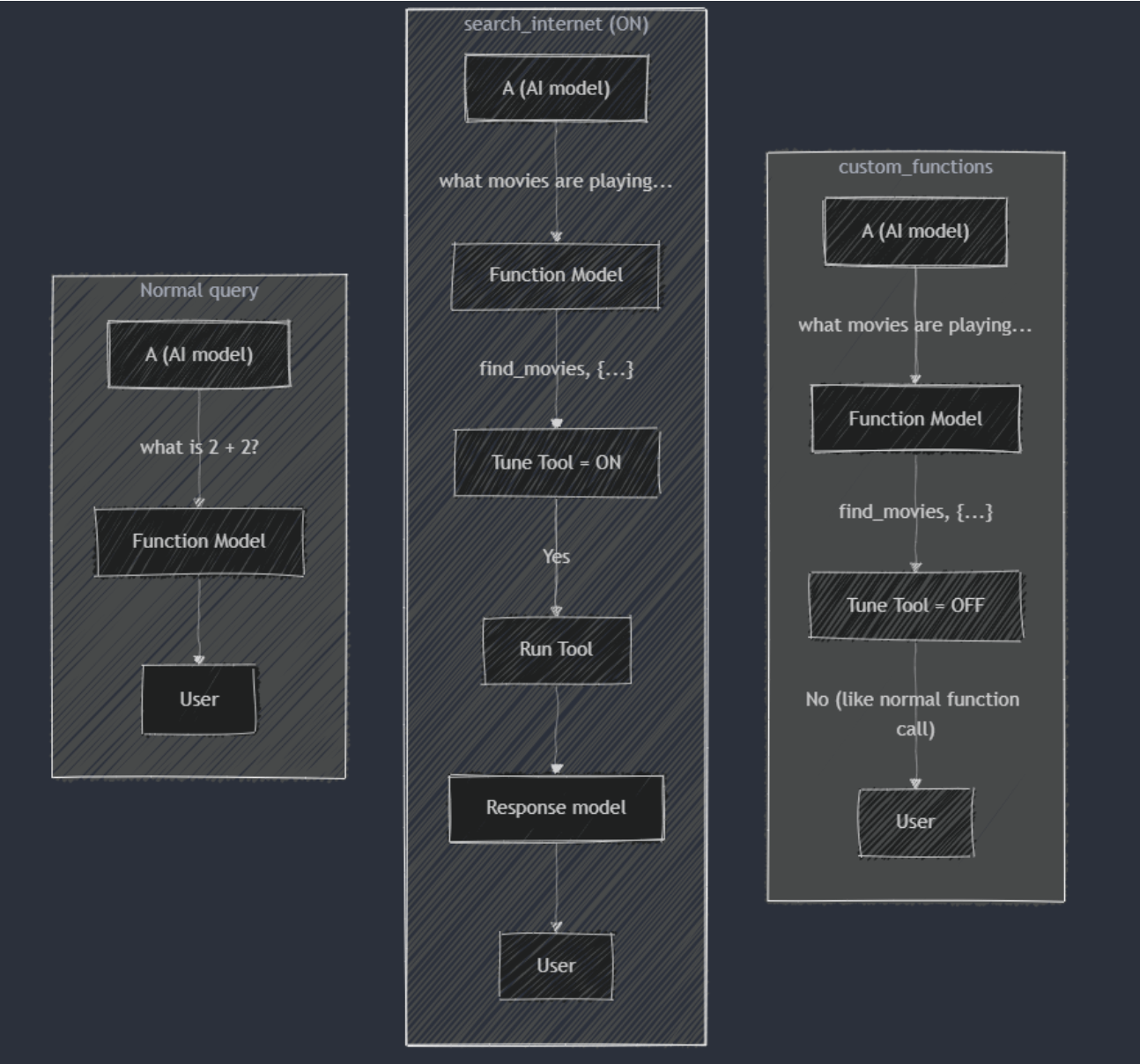

Function Calling Flow

Call LLM with tools

- Allows access to application logic and data directly through the chat completion API.

- Enhances the LLM's ability to provide accurate and relevant responses.

LLM responds with a function call

- Identifies the function to call from the descriptions in the list of available tools.

- Provides the function name and the required arguments in a JSON response.

You run the function

- You call the required function with the provided arguments.

- Ensures the correct data is retrieved or the correct action is completed.

LLM gives the response

- Uses the results from the function to generate a response.

- The response is detailed and tailored to the user's query.
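The four-step function-calling flow can be sketched end to end without a network call. This sketch assumes an OpenAI-style chat-completions message shape: a `tools` list of JSON-schema function descriptions, `tool_calls` on the assistant message, and `role: "tool"` results. `fake_model` is a hypothetical stand-in for the real endpoint, and in this toy it always asks for Chennai. `get_weather` is an illustrative function.

```python
import json
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]

# Step 1: describe the available tools (assumed OpenAI-style JSON schema).
TOOLS: List[Dict[str, Any]] = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]


def get_weather(city: str) -> Dict[str, Any]:
    """Hypothetical application function; a real one would call a weather API."""
    return {"city": city, "temp_c": 31}


def fake_model(messages: List[Message], tools: List[Dict[str, Any]]) -> Message:
    """Stand-in for the chat-completion call."""
    if messages[-1]["role"] == "user":
        # Step 2: the model picks a tool by its description and returns JSON arguments.
        return {"role": "assistant", "content": None, "tool_calls": [{
            "id": "call_1", "type": "function",
            "function": {"name": "get_weather", "arguments": json.dumps({"city": "Chennai"})},
        }]}
    # Step 4: the model turns the tool result into the final answer.
    result = json.loads(messages[-1]["content"])
    return {"role": "assistant", "content": f"It is {result['temp_c']} degrees Celsius in {result['city']}."}


def chat(user_text: str, model: Callable[..., Message] = fake_model) -> str:
    registry: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}
    messages: List[Message] = [{"role": "user", "content": user_text}]
    reply = model(messages, TOOLS)
    while reply.get("tool_calls"):
        messages.append(reply)
        for call in reply["tool_calls"]:
            # Step 3: you run the function with the arguments the model supplied.
            fn = registry[call["function"]["name"]]
            args = json.loads(call["function"]["arguments"])
            messages.append({"role": "tool", "tool_call_id": call["id"],
                             "content": json.dumps(fn(**args))})
        reply = model(messages, TOOLS)
    return reply["content"]
```

The `while` loop allows the model to request several rounds of tool calls before it answers. Recursive multi-step calls are also where the limitations discussed later in the talk appear.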


Behind the scenes - Function Calling Process Flow

Tune Assistant Design

Limitations

- Models are "stupiddd"

  - Recursive multi-step execution sucks

  - Hallucinations increase as the number of functions scales

  - Baby-level understanding of complex functions (a data problem)

  - Massive models

- LLMs are not fast (not enough tokens/sec)

  - Agents think by generating "thinking tokens"

  - Fewer tokens/sec → less thinking/sec

  - Less thinking/sec → slow agents
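The chain from tokens per second to slow agents comes down to simple arithmetic. If an agent takes S steps, generates N thinking tokens per step, and the model produces R tokens per second, then token generation alone costs about S × N / R seconds. The numbers below are hypothetical and illustrate only the scaling. They ignore tool calls, network latency and prompt processing.

```python
def generation_time_s(steps: int, thinking_tokens_per_step: int, tokens_per_s: float) -> float:
    """Time spent only on generating tokens; ignores tool calls, network, and prompt processing."""
    return steps * thinking_tokens_per_step / tokens_per_s


# Hypothetical agent: 10 steps, 500 thinking tokens per step, at two model speeds.
for speed in (50, 500):
    print(f"{speed} tokens/sec -> {generation_time_s(10, 500, speed):.0f} s")
```

In this example a tenfold faster model turns a 100-second agent run into a 10-second one. That is why generation speed matters more for multi-step agents than for single-turn chat.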

Check out more samples 👇


Subscribe to our newsletter for the latest in GenAI news and updates 📰 🤓

tune.beehiiv.com

Thank You!!