Creating and recording video compositions with native Android tools

Illya Rodin

- Displaying the camera on a texture in OpenGL

- Applying a chroma key

- Recording video with the resulting image

Displaying the camera on a texture in OpenGL

SurfaceView

Vs

GLSurfaceView

Vs

TextureView

SurfaceView

Pros:

- Simplicity

- Works with both OpenGL and Canvas

Cons:

- Artifacts appear when the view is moved or scaled (before Android N)

- You have to implement your own rendering thread

GLSurfaceView

Pros:

- Has its own dedicated rendering thread

- Deeper integration with OpenGL

Cons:

- Artifacts appear when the view is moved or scaled (before Android N)

TextureView

Pros:

- Essentially a regular View that supports almost everything a View does

- Works with both OpenGL and Canvas

Cons:

- Works only when GPU (hardware) acceleration is enabled

- Requires a separate rendering thread
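Both SurfaceView and TextureView leave the rendering thread to you. A minimal sketch of such a render-on-demand loop in plain Java follows. The names `RenderLoopSketch`, `RenderThread`, `requestRender` and `quit` are hypothetical, and `drawFrame` is only a stand-in for the real OpenGL or Canvas drawing, so this illustrates the threading pattern rather than an Android implementation.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;

public class RenderLoopSketch {

    /** Hypothetical render-on-demand thread; drawFrame stands in for real drawing code. */
    public static final class RenderThread extends Thread {
        private final Object lock = new Object();
        private final Runnable drawFrame;
        private boolean renderRequested;
        private boolean running = true;

        public RenderThread(Runnable drawFrame) {
            super("render-thread");
            this.drawFrame = drawFrame;
        }

        /** Called from any thread (for example, a frame-available callback). */
        public void requestRender() {
            synchronized (lock) {
                renderRequested = true;
                lock.notifyAll();
            }
        }

        /** Asks the loop to exit after the current frame. */
        public void quit() {
            synchronized (lock) {
                running = false;
                lock.notifyAll();
            }
        }

        @Override
        public void run() {
            while (true) {
                synchronized (lock) {
                    // Sleep until a frame is requested or the thread is stopped.
                    while (running && !renderRequested) {
                        try {
                            lock.wait();
                        } catch (InterruptedException e) {
                            return;
                        }
                    }
                    if (!running) {
                        return;
                    }
                    renderRequested = false;
                }
                // Draw outside the lock so requestRender() never blocks on drawing.
                drawFrame.run();
            }
        }
    }

    public static void main(String[] args) throws InterruptedException {
        CountDownLatch drawn = new CountDownLatch(1);
        RenderThread thread = new RenderThread(drawn::countDown);
        thread.start();
        thread.requestRender();
        boolean ok = drawn.await(2, TimeUnit.SECONDS);
        thread.quit();
        thread.join();
        System.out.println("frame drawn: " + ok);
    }
}
```

Requests that arrive while a frame is being drawn are coalesced into a single redraw, which is usually what you want for a camera preview.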

Camera API

Vs

Camera 2 API

Preparation

1. Initializing the texture

int[] mTextureHandles = new int[1];

GLES20.glGenTextures(1, mTextureHandles, 0);

GLES20.glBindTexture(GLES11Ext.GL_TEXTURE_EXTERNAL_OES, mTextureHandles[0]);

GLES20.glTexParameteri(GLES11Ext.GL_TEXTURE_EXTERNAL_OES, GLES20.GL_TEXTURE_WRAP_S, GLES20.GL_CLAMP_TO_EDGE);

GLES20.glTexParameteri(GLES11Ext.GL_TEXTURE_EXTERNAL_OES, GLES20.GL_TEXTURE_WRAP_T, GLES20.GL_CLAMP_TO_EDGE);

GLES20.glTexParameteri(GLES11Ext.GL_TEXTURE_EXTERNAL_OES, GLES20.GL_TEXTURE_MIN_FILTER, GLES20.GL_LINEAR);

GLES20.glTexParameteri(GLES11Ext.GL_TEXTURE_EXTERNAL_OES, GLES20.GL_TEXTURE_MAG_FILTER, GLES20.GL_LINEAR);

0. Initializing auxiliary data

final byte FULL_QUAD_COORDS[] = {-1, 1, -1, -1, 1, 1, 1, -1};

ByteBuffer mFullQuadVertices = ByteBuffer.allocateDirect(4 * 2);

mFullQuadVertices.put(FULL_QUAD_COORDS).position(0);
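The drawing step passes texture coordinates as `GL_FLOAT`, so they need a direct buffer of floats in native byte order (a byte buffer like the one above has no byte-order concerns, a float buffer does). A minimal sketch, assuming a hypothetical helper class `QuadBuffers`; the coordinate values and their order are also an assumption, chosen to match the triangle-strip vertex order of `FULL_QUAD_COORDS`, and the correct orientation for a real camera depends on the transform reported by the SurfaceTexture.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.FloatBuffer;

public class QuadBuffers {

    // Hypothetical texture coordinates, one (s, t) pair per strip vertex:
    // top-left, bottom-left, top-right, bottom-right.
    public static final float[] QUAD_TEX_COORDS = {
            0f, 1f,
            0f, 0f,
            1f, 1f,
            1f, 0f};

    /** Copies the array into a direct, native-order FloatBuffer rewound to position 0. */
    public static FloatBuffer toDirectFloatBuffer(float[] data) {
        FloatBuffer buffer = ByteBuffer
                .allocateDirect(data.length * Float.BYTES)
                .order(ByteOrder.nativeOrder())
                .asFloatBuffer();
        buffer.put(data);
        buffer.position(0);
        return buffer;
    }

    public static void main(String[] args) {
        FloatBuffer quadTexCoords = toDirectFloatBuffer(QUAD_TEX_COORDS);
        System.out.println("direct: " + quadTexCoords.isDirect()
                + ", floats: " + quadTexCoords.remaining());
    }
}
```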

2. Setting up the camera preview surface

SurfaceTexture mSurfaceTexture = new SurfaceTexture(mCameraTexture.getTextureId());

mSurfaceTexture.setOnFrameAvailableListener(this);

mCamera.setPreviewTexture(mSurfaceTexture);

//------------------------------------------------//

@Override

public synchronized void onFrameAvailable(SurfaceTexture surfaceTexture){

requestRender();

}

Setting up the shaders

1. Vertex shader

public static final String VERTEX_SHADER = " \n"

+ "attribute vec4 vertexPosition; \n"

+ "attribute vec2 vertexTexCoord; \n"

+ "varying vec2 texCoord; \n"

+ "uniform mat4 modelViewProjectionMatrix; \n"

+ "\n"

+ "void main() \n"

+ "{ \n"

+ " gl_Position = modelViewProjectionMatrix * vertexPosition; \n"

+ " texCoord = vertexTexCoord; \n"

+ "} \n";

2. Fragment shader

public static final String FRAGMENT_SHADER = "#extension GL_OES_EGL_image_external : require \n"

+ "precision mediump float; \n"

+ "uniform samplerExternalOES texSamplerOES; \n"

+ "varying vec2 texCoord; \n"

+ "\n"

+ "void main() \n"

+ "{ \n"

+ " gl_FragColor = texture2D(texSamplerOES, texCoord); \n"

+ "} \n";

Creating the program

private int loadShader(int shaderType, String source)throws Exception{

int shader = GLES20.glCreateShader(shaderType);

if(shader != 0){

GLES20.glShaderSource(shader, source);

GLES20.glCompileShader(shader);

int[] compiled = new int[1];

GLES20.glGetShaderiv(shader, GLES20.GL_COMPILE_STATUS, compiled, 0);

if(compiled[0] == 0){

String error = GLES20.glGetShaderInfoLog(shader);

GLES20.glDeleteShader(shader);

throw new Exception(error);

}

}

return shader;

}

public int setProgram(int vertexShader, int fragmentShader, Context context)

throws Exception{

mShaderVertex = loadShader(GLES20.GL_VERTEX_SHADER, VERTEX_SHADER );

mShaderFragment = loadShader(GLES20.GL_FRAGMENT_SHADER, FRAGMENT_SHADER );

int program = GLES20.glCreateProgram();

if(program != 0){

GLES20.glAttachShader(program, mShaderVertex);

GLES20.glAttachShader(program, mShaderFragment);

GLES20.glLinkProgram(program);

int[] linkStatus = new int[1];

GLES20.glGetProgramiv(program, GLES20.GL_LINK_STATUS, linkStatus, 0);

if(linkStatus[0] != GLES20.GL_TRUE){

String error = GLES20.glGetProgramInfoLog(program);

GLES20.glDeleteProgram(program); // the local handle; mProgram has not been assigned yet

deleteProgram();

throw new Exception(error);

}

}

return program;

}

public void deleteProgram(){

GLES20.glDeleteShader(mShaderVertex);

GLES20.glDeleteShader(mShaderFragment);

GLES20.glDeleteProgram(mProgram);

}

Drawing

/*

int videoVertexHandle = GLES20.glGetAttribLocation(
videoPlaybackShaderID, "vertexPosition");

int videoTexCoordHandle = GLES20.glGetAttribLocation(
videoPlaybackShaderID, "vertexTexCoord");

int videoMVPMatrixHandle = GLES20.glGetUniformLocation(
videoPlaybackShaderID, "modelViewProjectionMatrix");

int videoTexSamplerOESHandle = GLES20.glGetUniformLocation(
videoPlaybackShaderID, "texSamplerOES");

private final float quadTexVideoCoordsArray[] = {
0.0f, 0.0f,
1.0f, 0.0f,
1.0f, 1.0f,
0.0f, 1.0f};

Buffer quadTexCoords

*/

@Override

public synchronized void onDrawFrame(GL10 gl) {

GLES20.glClearColor(0.0f, 0.0f, 0.0f, 0.0f);

GLES20.glClear(GLES20.GL_COLOR_BUFFER_BIT);

GLES20.glViewport(0, 0, mWidth, mHeight);

GLES20.glUseProgram(videoPlaybackShaderID);

GLES20.glVertexAttribPointer(videoVertexHandle, 2,

GLES20.GL_BYTE, false, 0, mFullQuadVertices);

GLES20.glVertexAttribPointer(videoTexCoordHandle, 2,

GLES20.GL_FLOAT, false, 0, quadTexCoords);

GLES20.glEnableVertexAttribArray(videoVertexHandle);

GLES20.glEnableVertexAttribArray(videoTexCoordHandle);

GLES20.glActiveTexture(GLES20.GL_TEXTURE0);

GLES20.glBindTexture(GLES11Ext.GL_TEXTURE_EXTERNAL_OES,

mTextureHandles[0]);

GLES20.glUniformMatrix4fv(videoMVPMatrixHandle, 1,

false, modelViewProjectionVideo, 0);

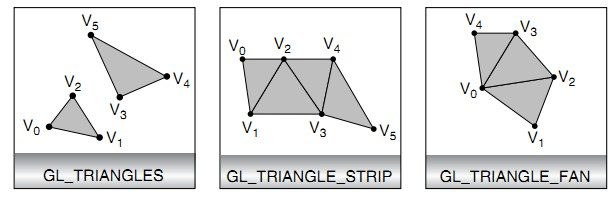

GLES20.glDrawArrays(GLES20.GL_TRIANGLE_STRIP, 0, 4);

GLES20.glUniform1i(videoTexSamplerOESHandle, 0);

GLES20.glDisableVertexAttribArray(videoVertexHandle);

GLES20.glDisableVertexAttribArray(videoTexCoordHandle);

GLES20.glUseProgram(0);

}Обработка изображения

Blur

precision mediump float;
uniform sampler2D u_Texture;
varying vec2 v_TexCoordinate;

vec3 mosaic(vec2 position){
    vec2 p = floor(position)/8.;
    return texture2D(u_Texture, p).rgb;
}

vec2 sw(vec2 p) {return vec2( floor(p.x) , floor(p.y) );}
vec2 se(vec2 p) {return vec2( ceil(p.x) , floor(p.y) );}
vec2 nw(vec2 p) {return vec2( floor(p.x) , ceil(p.y) );}
vec2 ne(vec2 p) {return vec2( ceil(p.x) , ceil(p.y) );}

vec3 glass(vec2 p) {
    vec2 inter = smoothstep(0., 1., fract(p));
    vec3 s = mix(mosaic(sw(p)), mosaic(se(p)), inter.x);
    vec3 n = mix(mosaic(nw(p)), mosaic(ne(p)), inter.x);
    return mix(s, n, inter.y);
}

void main()
{
    vec3 color = glass(v_TexCoordinate*8.);
    gl_FragColor = vec4(color, 1.);
}

B/W

#extension GL_OES_EGL_image_external : require
precision mediump float;
varying vec2 vTextureCoord;
uniform samplerExternalOES sTexture;

void main() {
    vec4 tc = texture2D(sTexture, vTextureCoord);
    // Weighted sum of the channels (perceived brightness).
    float color = tc.r * 0.3 + tc.g * 0.59 + tc.b * 0.11;
    gl_FragColor = vec4(color, color, color, 1.0);
}
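The grayscale (B/W) shader uses the weights 0.3, 0.59 and 0.11, which sum to 1.0, so white stays white and green contributes most to the perceived brightness. A minimal CPU-side sketch of the same arithmetic on a packed ARGB pixel can help when checking the shader output. The class and method names are hypothetical, and like the shader it forces the result to be opaque.

```java
public class GrayscaleReference {

    /** Converts one packed ARGB pixel to opaque gray using the shader's weights. */
    public static int toGray(int argb) {
        int r = (argb >> 16) & 0xFF;
        int g = (argb >> 8) & 0xFF;
        int b = argb & 0xFF;
        int y = Math.round(0.3f * r + 0.59f * g + 0.11f * b);
        // Same value in all three channels, alpha forced to 255 as in the shader.
        return 0xFF000000 | (y << 16) | (y << 8) | y;
    }

    public static void main(String[] args) {
        System.out.println(Integer.toHexString(toGray(0xFF00FF00)));
        System.out.println(Integer.toHexString(toGray(0xFF0000FF)));
    }
}
```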

Warm

precision mediump float;
uniform sampler2D u_Texture;
varying vec2 v_TexCoordinate;

//Color gradient
vec4 RandomGradientWarm() {
    vec4 color;
    vec3 purple = vec3(180./255., 151./255., 202./255.);
    vec3 pink = vec3(213./255., 66./255., 108./255.);
    color.r = v_TexCoordinate.y * (purple.r - pink.r) + pink.r;
    color.g = v_TexCoordinate.y * (purple.g - pink.g) + pink.g;
    color.b = v_TexCoordinate.x * (purple.b - pink.b) + pink.b;
    color.a = 1.;
    return color;
}

//Screen blend
vec3 ScreenBlend(vec3 maskPixelComponent, float alpha, vec3 imagePixelComponent) {
    return 1.0 - (1.0 - (maskPixelComponent * alpha)) * (1.0 - imagePixelComponent);
}

//contrast adjust
vec3 contrast(vec3 color, float contrast) {
    const float PI = 3.14159265;
    return min(vec3(1.0), ((color - 0.5) * (tan((contrast + 1.0) * PI / 4.0) ) + 0.5));
}

void main() {
    vec3 color = texture2D(u_Texture, v_TexCoordinate).rgb;
    vec4 layer_color = RandomGradientWarm();
    color = ScreenBlend(layer_color.rgb, .4, color);
    color = contrast(color, 0.2);
    gl_FragColor = vec4(color, 1.0);
}
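The two helper functions of the Warm shader are easier to reason about on single channel values. The sketch below mirrors them on the CPU, under the assumption that all inputs are in the 0..1 range; the class name is hypothetical. It shows two properties that follow from the formulas: a screen blend never darkens the image, and the contrast curve pivots around 0.5, with a contrast of 0 giving a slope of tan(PI/4) = 1, which leaves the color unchanged.

```java
public class WarmFilterReference {

    /** Screen blend of one channel: 1 - (1 - mask * alpha) * (1 - image). */
    public static float screenBlend(float mask, float alpha, float image) {
        return 1f - (1f - mask * alpha) * (1f - image);
    }

    /** Contrast curve of one channel, clamped from above only, as in the shader. */
    public static float contrast(float color, float amount) {
        double slope = Math.tan((amount + 1.0) * Math.PI / 4.0);
        return (float) Math.min(1.0, (color - 0.5) * slope + 0.5);
    }

    public static void main(String[] args) {
        float blended = screenBlend(213f / 255f, 0.4f, 0.25f);
        System.out.println("blended: " + blended);
        System.out.println("with contrast: " + contrast(blended, 0.2f));
    }
}
```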

Applying a chroma key

What is a chroma key?

Creating the fragment shader

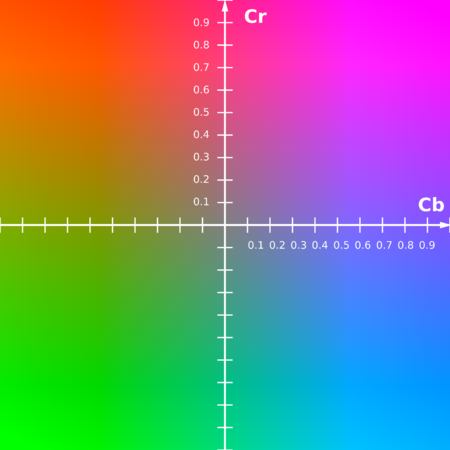

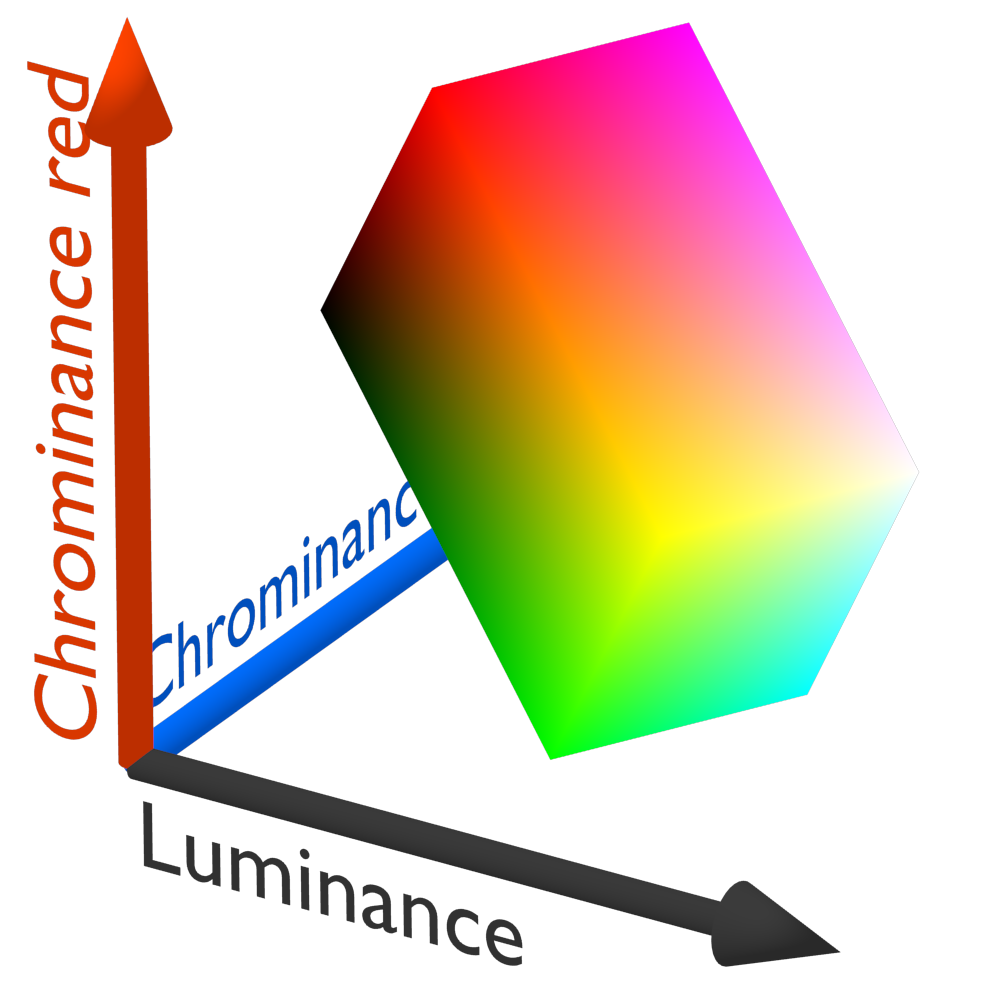

YCbCr

#extension GL_OES_EGL_image_external : require

precision mediump float;

varying vec2 texCoord;

uniform vec4 chromaKeyColor;

uniform samplerExternalOES texSamplerOES;

void main() {

vec4 tex = texture2D(texSamplerOES, texCoord);

float maskY = 0.2989 * chromaKeyColor.r + 0.5866 * chromaKeyColor.g + 0.1145 * chromaKeyColor.b;

float maskCr = 0.7132 * (chromaKeyColor.r - maskY);

float maskCb = 0.5647 * (chromaKeyColor.b - maskY);

float Y = 0.2989 * tex.r + 0.5866 * tex.g + 0.1145 * tex.b;

float Cr = 0.7132 * (tex.r - Y);

float Cb = 0.5647 * (tex.b - Y);

float blendValue = smoothstep(0.4, 0.5, distance(vec2(Cr, Cb), vec2(maskCr, maskCb)));

gl_FragColor = vec4(tex.rgb* blendValue, 1.0 * blendValue);

}

Text

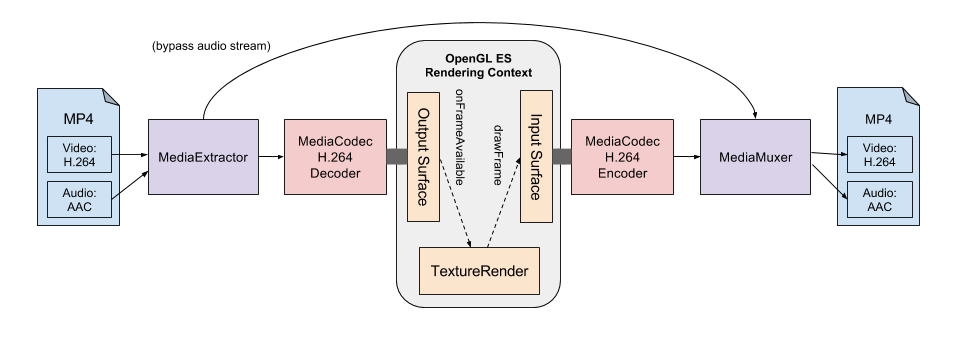

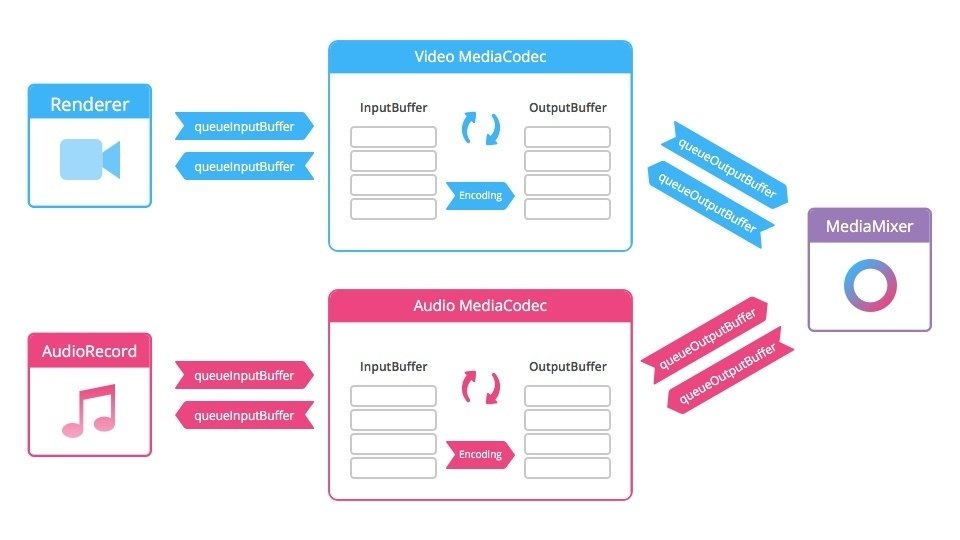
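The chroma key shader compares colors in the Cr/Cb plane, so brightness differences alone do not stop a pixel from being keyed out. A minimal CPU reference of the same arithmetic, using the shader's coefficients and its 0.4 to 0.5 smoothstep window, can be used to check which colors a given key removes. The class and method names are hypothetical; a returned value of 0 means fully transparent and 1 means fully kept.

```java
public class ChromaKeyReference {

    /** GLSL-style smoothstep: 0 below edge0, 1 above edge1, smooth in between. */
    public static float smoothstep(float edge0, float edge1, float x) {
        float t = Math.max(0f, Math.min(1f, (x - edge0) / (edge1 - edge0)));
        return t * t * (3f - 2f * t);
    }

    /** Returns {Cr, Cb} for an RGB color with components in 0..1. */
    public static float[] toCrCb(float r, float g, float b) {
        float y = 0.2989f * r + 0.5866f * g + 0.1145f * b;
        return new float[] {0.7132f * (r - y), 0.5647f * (b - y)};
    }

    /** Blend factor for a pixel: distance to the key color in the Cr/Cb plane. */
    public static float blendValue(float[] rgb, float[] keyRgb) {
        float[] pixel = toCrCb(rgb[0], rgb[1], rgb[2]);
        float[] key = toCrCb(keyRgb[0], keyRgb[1], keyRgb[2]);
        float distance = (float) Math.hypot(pixel[0] - key[0], pixel[1] - key[1]);
        return smoothstep(0.4f, 0.5f, distance);
    }

    public static void main(String[] args) {
        float[] key = {0f, 1f, 0f}; // pure green key color
        System.out.println("green:      " + blendValue(new float[] {0f, 1f, 0f}, key));
        System.out.println("dark green: " + blendValue(new float[] {0f, 0.5f, 0f}, key));
        System.out.println("red:        " + blendValue(new float[] {1f, 0f, 0f}, key));
    }
}
```

Widening or shifting the smoothstep window trades off between leftover key-colored fringes and holes in the foreground.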

Recording video with the resulting image

FBO (Framebuffer object)

Draw Twice

Pros:

- Simplicity

Cons:

- Potentially requires twice the time and resources to render the frame

Offscreen draw

Pros:

- High performance

- Does not require OpenGL ES 3.0

Cons:

- Requires an offscreen buffer

blitFramebuffer

Pros:

- Does not require an offscreen buffer

- Even higher performance

Cons:

- Requires OpenGL ES 3.0 or higher

MediaMuxer