Liberating Hong Kong public data using web scraping

Guy Freeman, Data Guru Limited, 1st December 2018

What is web scraping?

When you visit a website, you actually download HTML code from a computer (usually called a server), which your browser converts into a web page.

If you want to collect data from a website, you can program a computer to automatically visit web pages (that is, download their HTML) and extract the data directly from the HTML. That's it!

One benefit is that the infinitely multifarious structure of web pages is boiled down to just the pure data that you are interested in, and that you can data science the s&!t out of.
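To make "extract the data directly from the HTML" concrete, here is a minimal sketch using only Python's standard library. The page content, the `district` class name, and the `DistrictParser` helper are all made up for illustration; a real scraper would first download the HTML from a server.

```python
from html.parser import HTMLParser

# A made-up page standing in for HTML downloaded from a server.
SAMPLE_HTML = """
<html><body>
  <h1>Some districts</h1>
  <ul>
    <li class="district">Central and Western</li>
    <li class="district">Wan Chai</li>
    <li class="note">Not a district</li>
  </ul>
</body></html>
"""


class DistrictParser(HTMLParser):
    """Collect the text of every <li class="district"> element."""

    def __init__(self):
        super().__init__()
        self.in_district = False
        self.districts = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the tag.
        if tag == "li" and ("class", "district") in attrs:
            self.in_district = True

    def handle_endtag(self, tag):
        if tag == "li":
            self.in_district = False

    def handle_data(self, data):
        # Keep only non-empty text found inside a matching <li>.
        if self.in_district and data.strip():
            self.districts.append(data.strip())


parser = DistrictParser()
parser.feed(SAMPLE_HTML)
print(parser.districts)
```

Everything else on the page (headings, layout, the unrelated list item) is thrown away, and what is left is a plain Python list that is ready for analysis.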

Liberating Hong Kong public data

Although Hong Kong is slowly making structured public data available via APIs, e.g. through data.gov.hk, much public data is still only available through websites, or even only as PDFs. Web scraping allows us to collect, analyse, and use the data for whatever purposes we desire.

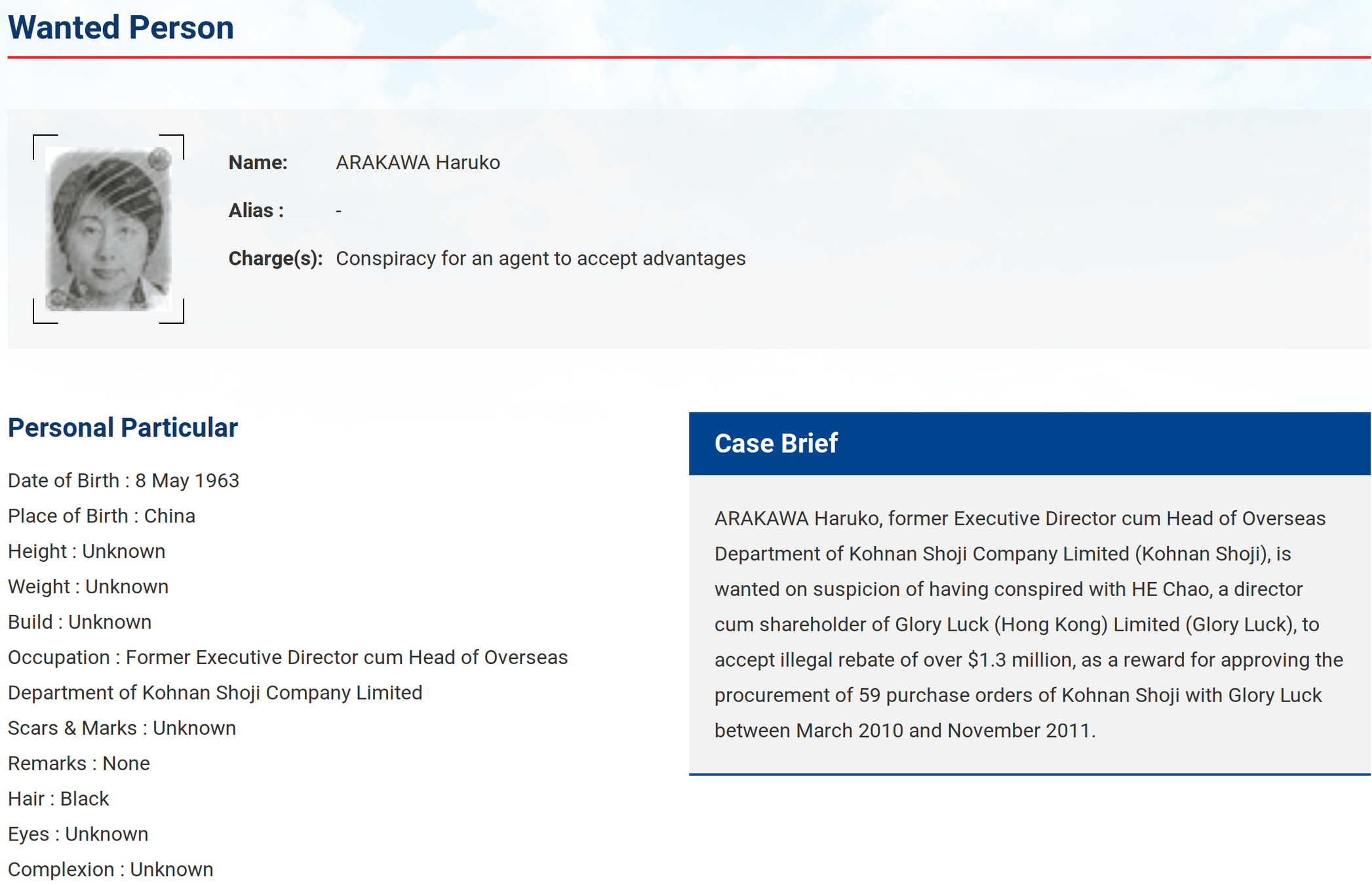

Today's useful example: who are Hong Kong's most wanted, and what's their deal?

Alas, due to Hollywood (maybe), the FBI's Ten Most Wanted Fugitives list is far more famous than our ICAC's Wanted Persons list. Let's fix that by scraping the list on ICAC's website and making an action movie from it (maybe).

Hong Kong's Most Wanted

- fbi.gov/wanted/topten: LAME

- icac.org.hk/en/law/wanted/: AWESOME

Scraping Hong Kong's Most Wanted

Strategy

- Find list of Most Wanted

- Scrape data from each Most Wanted
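The two-step strategy can be sketched in a few lines of plain Python. This is an illustration only: the `PAGES` dictionary is a made-up stand-in for a website (no network access), the paths and page contents are hypothetical, and the real ICAC pages will be structured differently.

```python
from html.parser import HTMLParser

# Made-up stand-in for a website: path -> HTML. A real scraper would download these.
PAGES = {
    "/wanted/": '<a href="/wanted/1.html">Person A</a>'
                '<a href="/wanted/2.html">Person B</a>',
    "/wanted/1.html": "<h1>Person A</h1><p>Alleged offence A</p>",
    "/wanted/2.html": "<h1>Person B</h1><p>Alleged offence B</p>",
}


class LinkCollector(HTMLParser):
    """Step 1: collect the href of every <a> on the list page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


class TagTextCollector(HTMLParser):
    """Step 2: record the text found inside each tag, keyed by tag name."""

    def __init__(self):
        super().__init__()
        self.current = None
        self.text = {}

    def handle_starttag(self, tag, attrs):
        self.current = tag

    def handle_endtag(self, tag):
        self.current = None

    def handle_data(self, data):
        if self.current and data.strip():
            self.text[self.current] = data.strip()


def scrape(start_path):
    # Find the list of detail pages, then scrape each one into a record.
    collector = LinkCollector()
    collector.feed(PAGES[start_path])
    records = []
    for path in collector.links:
        detail = TagTextCollector()
        detail.feed(PAGES[path])
        records.append({"name": detail.text.get("h1"),
                        "details": detail.text.get("p")})
    return records


records = scrape("/wanted/")
print(records)
```

The shape is the same however large the site is: one parser that finds links on the list page, and one that turns each linked page into a record.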

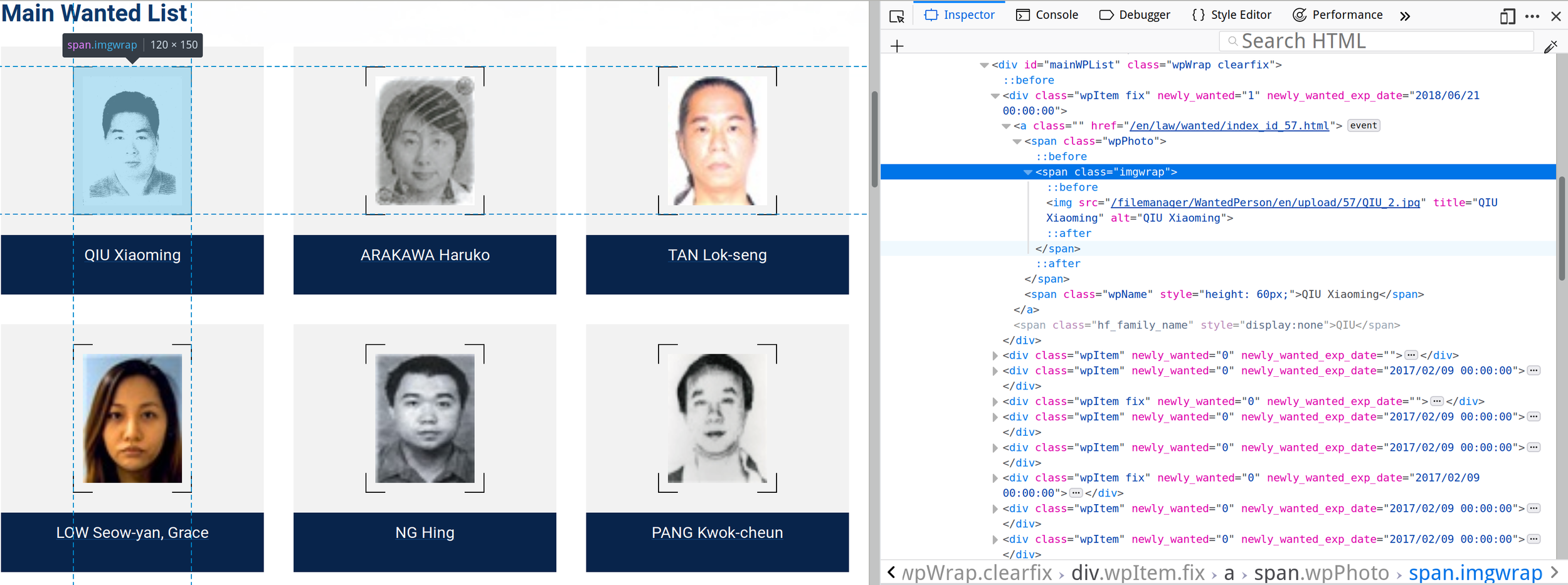

Tactics: HTML Inspection

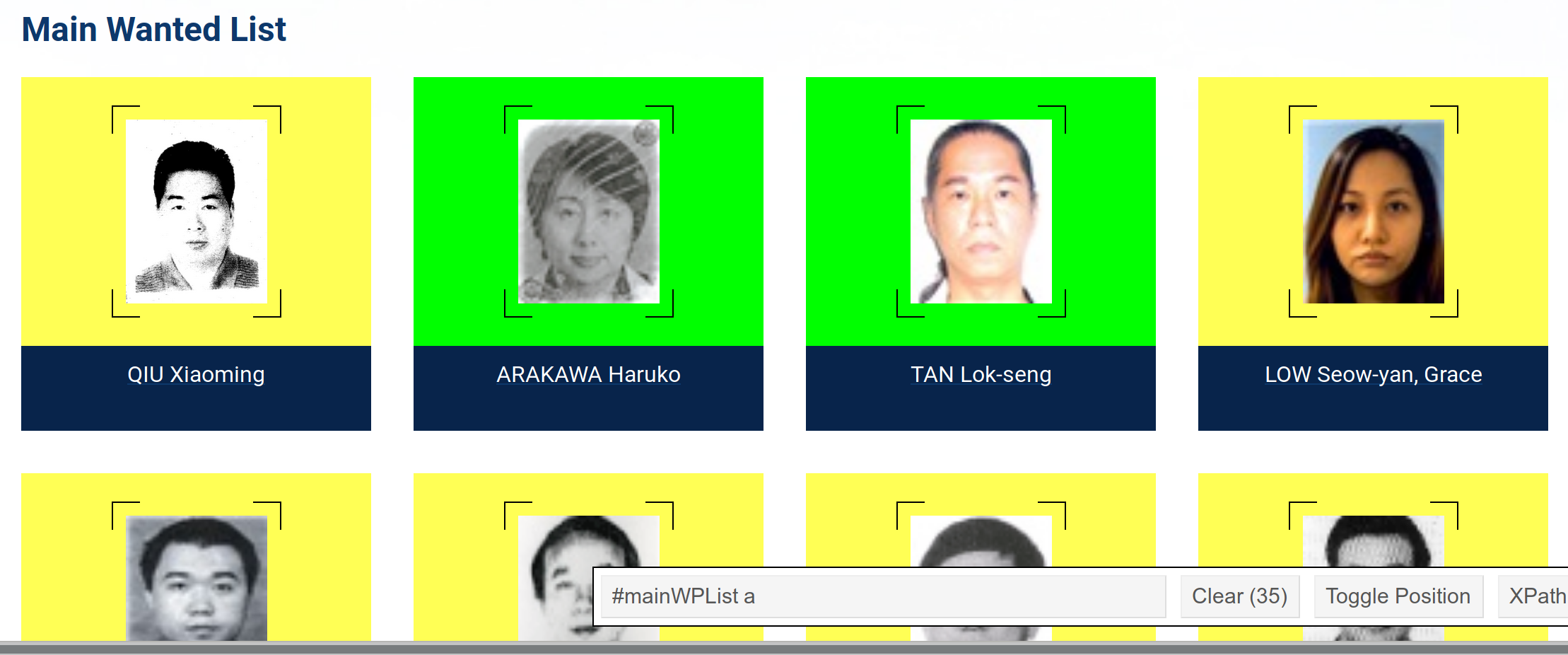

Tactics: HTML Selectors using SelectorGadget

Tactics: Following links and mapping selectors to data attributes with Scrapy

We actually now know enough to scrape! I will now demonstrate LIVE how easy it is to write and execute a scraper using the Python library Scrapy to extract clean, structured information about ICAC's scary Most Wanted!

LIVE: Writing and executing a scraper

Books and links

- Practical Web Scraping for Data Science: Best Practices and Examples with Python

- https://selectorgadget.com/

- https://scrapy.org/

- Scrapinghub (hosted Scrapy service)