Distributed processing in Node.js

meet.js Gdańsk #22

Gdańsk, 20.01.2020

Łukasz Rybka

Co-founder / Chief Technology Officer

Trainer

Full-stack JavaScript Developer

Thesis

Node.js is an excellent environment for implementing distributed systems

Mariusz

- A JavaScript/TypeScript developer

- Usually builds projects with Angular and Nest/Express in a monorepo architecture (Nrwl Nx)

- Has recently started working for a client who needs an e-commerce system for their company

Planning #1

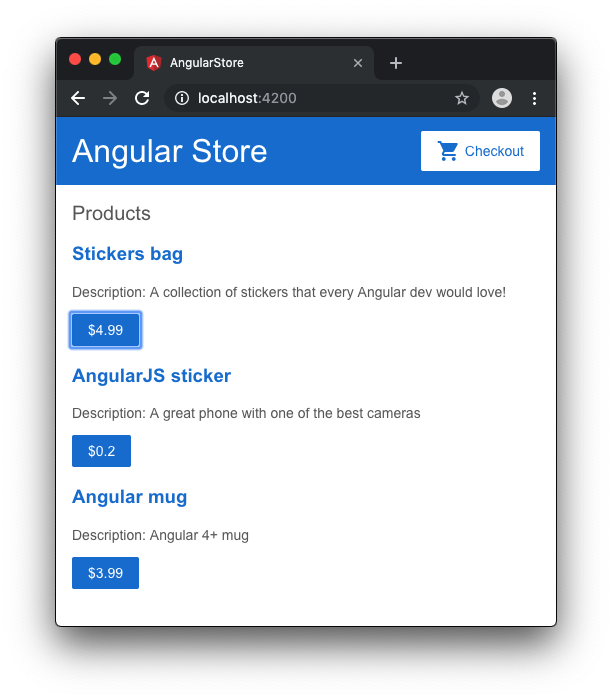

The client asks for an initial version of the application so that they can test the visual design they have purchased.

Application design

App Controller

import { Controller, Get } from '@nestjs/common';
import { Product } from '@angular-store/api-interfaces';
import { AppService } from './app.service';

@Controller()
export class AppController {
  constructor(private readonly appService: AppService) {}

  // GET /products returns the product list
  @Get('products')
  async getData(): Promise<Product[]> {
    return this.appService.getProducts();
  }
}

App Service

import { Injectable } from '@nestjs/common';
import { Product } from '@angular-store/api-interfaces';

@Injectable()
export class AppService {
  // Hard-coded data for the initial version of the application
  getProducts(): Promise<Product[]> {
    return Promise.resolve([
      {
        name: 'Stickers bag',
        price: 4.99,
        description: 'A collection of Angular stickers.'
      }
    ]);
  }
}

Planning #2

During the next planning session the client asks Mariusz to build a page listing all orders completed in a given month.

Page design

Sample order
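Stripped of the UI, the page needs two things: the orders from one month and each order's total. A minimal sketch, assuming hypothetical `Order`/`OrderItem`/`OrderWithTotal` types shaped like the sample order in this deck and a 1-based month (1 = January). Summing in integer cents is an assumption of this sketch to avoid floating-point drift, not something the slides do.

```typescript
// Hypothetical types, inferred from the sample order used in this deck.
interface OrderItem {
  productId: number;
  price: number; // unit price, e.g. 3.99
  quantity: number;
}

interface Order {
  id: number;
  orderDate: Date;
  userEmail: string;
  items: OrderItem[];
}

interface OrderWithTotal extends Order {
  total: number;
}

// Orders placed in `month` (1-12) of `year`, in the server's local time zone,
// each extended with its total.
function ordersForMonth(orders: Order[], year: number, month: number): OrderWithTotal[] {
  return orders
    .filter(order =>
      order.orderDate.getFullYear() === year &&
      order.orderDate.getMonth() + 1 === month)
    .map(order => {
      // Sum in integer cents, then convert back to a decimal amount.
      const cents = order.items.reduce(
        (sum, item) => sum + Math.round(item.price * 100) * item.quantity, 0);
      return { ...order, total: cents / 100 };
    });
}
```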

const orders: Order[] = [
  {
    id: 1,
    orderDate: new Date(Date.parse('2019-07-11T16:15:00')),
    userEmail: 'customer1@dev.null',
    items: [
      { productId: 1, price: 4.00, quantity: 1 },
      { productId: 3, price: 3.99, quantity: 2 }
    ]
  }
];

Business logic

getOrders(): Promise<OrderWithTotal[]> {
  const orders: Order[] = [...];

  return Promise.resolve(orders.map(order => {
    // Order total = sum of quantity * unit price over all items
    const orderWithTotal: OrderWithTotal = { ...order, total: 0 };
    orderWithTotal.total = order.items.reduce((tot, item) => {
      return tot + item.quantity * item.price;
    }, 0);
    return orderWithTotal;
  }));
}

Planning #5

After the application had been running in production for some time, the client noticed that as the number of orders grew, the orders page became more and more problematic to use.

Problem analysis

HTTP 504

The HyperText Transfer Protocol (HTTP) 504 Gateway Timeout server error response code indicates that the server, while acting as a gateway or proxy, did not get a response in time from the upstream server that it needed in order to complete the request.

source: https://developer.mozilla.org/en-US/docs/Web/HTTP/Status/504
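Assuming a reverse proxy sits in front of the Node.js application, the proxy is the "gateway" and the application is the "upstream": when building the orders page takes longer than the proxy is willing to wait, the browser gets a 504. A minimal sketch of that behaviour with `Promise.race`; `viaGateway` and `slowUpstream` are hypothetical names, and real proxies have their own timeout settings.

```typescript
// Hypothetical response shape for illustration.
interface GatewayResponse {
  status: number;
  body: string;
}

// Resolves with the upstream body (200), or with 504 if the upstream
// has not answered within `timeoutMs`, as a gateway/proxy would.
async function viaGateway(upstream: Promise<string>, timeoutMs: number): Promise<GatewayResponse> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<GatewayResponse>(resolve => {
    timer = setTimeout(() => resolve({ status: 504, body: 'Gateway Timeout' }), timeoutMs);
  });
  try {
    return await Promise.race([upstream.then(body => ({ status: 200, body })), timeout]);
  } finally {
    clearTimeout(timer); // do not leave the deadline timer running
  }
}

// Simulated upstream that needs `ms` milliseconds to build its response.
function slowUpstream(ms: number, body: string): Promise<string> {
  return new Promise(resolve => setTimeout(() => resolve(body), ms));
}
```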

Node.js server

By default, the Server's timeout value is 2 minutes, and sockets are destroyed automatically if they time out. However, if you assign a callback to the Server's 'timeout' event, then you are responsible for handling socket timeouts.

source: https://nodejs.org/dist/latest-v6.x/docs/api/http.html#http_server_settimeout_msecs_callback (Node.js 6.x documentation; since Node.js 13 the default server timeout is 0, i.e. no timeout)

Node.js server - "optimization"

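import { NestFactory } from '@nestjs/core';
import { NestExpressApplication } from '@nestjs/platform-express';
import { AppModule } from './app/app.module'; // path assumed (typical Nx layout)

async function bootstrap() {
  const app = await NestFactory
    .create<NestExpressApplication>(AppModule);
  // Raise the socket timeout of the underlying Node.js HTTP server to 3 minutes
  app.getHttpServer().setTimeout(180000);
  // ...
}

bootstrap();

Planning #7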

During the next planning session a lively discussion broke out between Mariusz and the client about the stability of the orders page. They agreed that, instead of being generated in real time, the report would be created in the background.

Approach #1

@Post('orders/report')
async generateReports(@Body('month') month: number,
                      @Body('year') year: number) {
  const report = await this.appService
    .getOrderReport(year, month);

  if (report === null) {
    // Fire and forget: schedule generation on a later event-loop tick
    // and respond immediately without waiting for the result
    setTimeout(() => {
      this.appService
        .generateOrderReport(year, month);
    }, 0);
  }

  return {};
}

Drawbacks

- Loss of control over the application's flow

- No guarantee that the asynchronous code will actually run (e.g. if the process restarts before the timer fires)

- A potential source of race conditions
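The race condition in Approach #1 comes from check-then-schedule: two requests that arrive before the first report exists both see `null` and both start generating it. A minimal in-process sketch of one way to close that gap, by sharing a single in-flight promise per year and month; `ReportScheduler` and `ReportGenerator` are hypothetical names. It only protects one Node.js process: several application instances would each keep their own map, which is one reason to move coordination into Redis.

```typescript
// Hypothetical signature of the slow report generation step.
type ReportGenerator = (year: number, month: number) => Promise<string>;

// Shares one in-flight generation per (year, month) so that concurrent
// requests for the same report do not start duplicate work.
class ReportScheduler {
  private readonly inFlight = new Map<string, Promise<string>>();
  private readonly generate: ReportGenerator;

  constructor(generate: ReportGenerator) {
    this.generate = generate;
  }

  request(year: number, month: number): Promise<string> {
    const key = `${year}-${month}`;
    const existing = this.inFlight.get(key);
    if (existing) return existing;

    // Start generation on a microtask so the map entry is always set first.
    const promise = Promise.resolve().then(() => this.generate(year, month));
    this.inFlight.set(key, promise);
    // Forget the entry once generation settles (success or failure).
    const forget = () => { this.inFlight.delete(key); };
    promise.then(forget, forget);
    return promise;
  }
}
```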

Bull

- A queue system for Node.js

- Backed by Redis

- Built-in worker isolation

- Operations performed through it are atomic

Step #1 - initialization

import { Injectable } from '@nestjs/common';
import * as Bull from 'bull';
import { getRedisConfig } from './config.helpers';

@Injectable()
export class OrderReportService {
  private queue: Bull.Queue;
  private readonly queueName = 'order_reports';

  constructor() {
    this.queue = new Bull(this.queueName, {
      redis: getRedisConfig()
    });
  }
}
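The slides import `getRedisConfig` from `./config.helpers` without showing it. One possible shape, purely hypothetical: it reads `REDIS_HOST`, `REDIS_PORT` and `REDIS_PASSWORD` (assumed variable names) from an environment-like map and returns the `host`/`port`/`password` fields that Bull's `redis` option accepts.

```typescript
// Hypothetical helper: connection settings for Bull's `redis` option.
interface RedisConfig {
  host: string;
  port: number;
  password?: string;
}

// Reads settings from an env-like map (e.g. process.env), falling back
// to a local Redis on the default port 6379.
function getRedisConfig(env: Record<string, string | undefined> = {}): RedisConfig {
  const port = Number(env.REDIS_PORT ?? 6379);
  if (!Number.isInteger(port) || port <= 0) {
    throw new Error(`Invalid REDIS_PORT: ${env.REDIS_PORT}`);
  }
  const password = env.REDIS_PASSWORD;
  return {
    host: env.REDIS_HOST ?? 'localhost',
    port,
    // Only include a password when one is configured.
    ...(password ? { password } : {}),
  };
}
```

In the service this would be called as `getRedisConfig(process.env)`; taking the map as a parameter keeps the helper easy to test.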

Step #2 - definition

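// Register a processor for 'generate_report' jobs (e.g. in the constructor).
// The handler takes Bull's `done` callback, so it does not need to be `async`.
this.queue.process('generate_report',
  (job: Bull.Job, done: Bull.DoneCallback) => {
    // Simulate 5 seconds of report generation
    setTimeout(() => {
      console.log('Report generated!');
      console.log(JSON.stringify(job.data));
      done();
    }, 5000);
  }
);

Step #3 - delegation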

async generate(year: number, month: number) {
  // Enqueue a named job; a worker picks it up later
  await this.queue.add('generate_report', {
    year,
    month
  });
}

Planning #14

Since the queue system was introduced, 6 new report types have appeared in the application, and it is time to improve the performance of the report generation system (its stability is satisfactory at this stage).

Bull - lifecycle

source: https://optimalbits.github.io/bull/

- Producer

- Consumer

- Listener
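The three roles above can be illustrated with a toy in-memory queue. This is only a sketch of the roles: `ToyQueue`, `onCompleted` and `drain` are hypothetical names, and unlike Bull nothing here is stored in Redis, so producer and consumer must live in the same process.

```typescript
// Toy model of producer / consumer / listener; NOT Bull's implementation.
type JobData = Record<string, unknown>;
interface Job { id: number; name: string; data: JobData; }
type Processor = (job: Job) => Promise<unknown>;
type Listener = (job: Job, result: unknown) => void;

class ToyQueue {
  private nextId = 1;
  private readonly waiting: Job[] = [];
  private readonly processors = new Map<string, Processor>();
  private readonly completedListeners: Listener[] = [];

  // Producer: enqueue a named job.
  add(name: string, data: JobData): Job {
    const job = { id: this.nextId++, name, data };
    this.waiting.push(job);
    return job;
  }

  // Consumer: register the function that processes jobs with this name.
  process(name: string, fn: Processor): void {
    this.processors.set(name, fn);
  }

  // Listener: react to finished jobs.
  onCompleted(fn: Listener): void {
    this.completedListeners.push(fn);
  }

  // Process waiting jobs one by one (a real worker would wait on Redis).
  async drain(): Promise<void> {
    while (this.waiting.length > 0) {
      const job = this.waiting.shift() as Job;
      const fn = this.processors.get(job.name);
      if (!fn) throw new Error(`No processor for ${job.name}`);
      const result = await fn(job);
      for (const listener of this.completedListeners) listener(job, result);
    }
  }
}
```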

Bull - structure

Planning #18

After further improvements to the report generation system, the client asked Mariusz for a way to generate a selected report on a recurring schedule.

Definition

this.paymentsQueue = new Bull(this.queueName, {
  redis: getRedisConfig()
});

// Queue process logic omitted...

// Repeatable job: cron '0 10 * * MON-FRI' = 10:00, Monday to Friday
this.paymentsQueue.add({}, {
  repeat: { cron: '0 10 * * MON-FRI' }
});
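To see when `'0 10 * * MON-FRI'` fires (minute 0, hour 10, any day of the month, any month, Monday to Friday), here is a simplified matcher. `matchesCron`, `fieldMatches` and `DAY_NAMES` are hypothetical helpers for illustration only: Bull relies on the cron-parser package for repeatable jobs, and this sketch skips step syntax (`*/5`), month names and other cron features. It evaluates the date in the process's local time.

```typescript
// Day-of-week names accepted in the last cron field (0 = Sunday).
const DAY_NAMES: Record<string, number> = { SUN: 0, MON: 1, TUE: 2, WED: 3, THU: 4, FRI: 5, SAT: 6 };

// Does one cron field ("*", "10", "1-5", "MON-FRI", "0,30") match `value`?
function fieldMatches(field: string, value: number, names: Record<string, number> = {}): boolean {
  if (field === '*') return true;
  const toNumber = (token: string): number => {
    const named = names[token.toUpperCase()];
    return named !== undefined ? named : Number(token);
  };
  return field.split(',').some(part => {
    const [from, to] = part.split('-');
    if (to === undefined) return toNumber(from) === value;
    return value >= toNumber(from) && value <= toNumber(to);
  });
}

// Simplified check: does `date` (local time) match the 5-field expression?
function matchesCron(expr: string, date: Date): boolean {
  const [minute, hour, dayOfMonth, month, dayOfWeek] = expr.trim().split(/\s+/);
  const timeOk =
    fieldMatches(minute, date.getMinutes()) &&
    fieldMatches(hour, date.getHours()) &&
    fieldMatches(month, date.getMonth() + 1);
  const domOk = fieldMatches(dayOfMonth, date.getDate());
  const dowOk = fieldMatches(dayOfWeek, date.getDay(), DAY_NAMES);
  // Classic cron rule: if both day fields are restricted, either may match.
  const dayOk = dayOfMonth !== '*' && dayOfWeek !== '*' ? domOk || dowOk : domOk && dowOk;
  return timeOk && dayOk;
}
```

A scheduler does not test every minute like this; cron-parser computes the next matching date directly, and Bull stores that as a delayed job.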

Monitoring

- Taskforce

- Arena

- bull-repl

- Prometheus (with Bull Queue Exporter)

Ready-made UI applications

- Graphical interface for Bull and Bee-Queue

- Monitoring of queues and jobs

- Viewing job details and stack traces

- Retrying and resuming jobs

- Docker support

Arena

Configuration

{
  "queues": [{
    "name": "order_reports",
    "hostId": "Order Reports",
    "host": "docker.for.mac.localhost",
    "port": 6379
  }]
}

Running

docker run -p 4567:4567 \
  --name bull_arena --rm \
  -v ~/arena/config.json:/opt/arena/src/server/config/index.json \
  mixmaxhq/arena:latest

Planning #22

During the next planning session the client asked Mariusz to add email notifications to the reporting system, sent as soon as a given report is ready.

Implementation
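One detail worth guarding against here: Bull's queue is a Node.js `EventEmitter`, which does not await the promise an `async` listener returns, so a failing `sendEmailNotification` would surface as an unhandled rejection. A small sketch of a wrapper that routes such errors to a handler; `safeAsync`, `ReportJobData` and the stub `sendEmailNotification` are hypothetical, and in the service the wrapped function would be the listener passed to `this.queue.on('completed', ...)`.

```typescript
// Wraps an async event listener so that a rejected promise (e.g. a failed
// e-mail) is passed to `onError` instead of becoming an unhandled rejection.
function safeAsync<A extends unknown[]>(
  listener: (...args: A) => Promise<void>,
  onError: (err: unknown) => void,
): (...args: A) => void {
  return (...args: A) => {
    listener(...args).catch(onError);
  };
}

// Hypothetical stand-ins for the job data and the notification method.
interface ReportJobData { year: number; month: number; }

async function sendEmailNotification(year: number, month: number): Promise<void> {
  if (month < 1 || month > 12) throw new Error(`Invalid month: ${month}`);
  console.log(`Report for ${year}-${month} is ready`);
}

// What could be registered with queue.on('completed', ...).
const onReportCompleted = safeAsync(
  async (job: { data: ReportJobData }) => {
    await sendEmailNotification(job.data.year, job.data.month);
  },
  err => console.error('Notification failed:', err),
);
```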

// 'completed' is a local event: it fires in the worker that finished the job
this.queue.on('completed', async job => {
  await this.sendEmailNotification(
    job.data.year,
    job.data.month
  );
});

Local events #1

queue.on('error', (error) => {
  // An error occurred.
});

queue.on('waiting', (jobId) => {
  // A job is waiting to be processed
  // as soon as a worker is idling.
});

queue.on('active', (job, jobPromise) => {
  // A job has started.
  // You can use jobPromise.cancel()
  // to abort it.
});

queue.on('stalled', (job) => {
  // A job has been marked as stalled
  // (e.g. its worker crashed or blocked the event loop).
});

Local events #2

queue.on('progress', (job, progress) => {
  // A job's progress was updated!
});

queue.on('completed', (job, result) => {
  // A job successfully completed with a `result`.
});

queue.on('failed', (job, err) => {
  // A job failed with reason `err`!
});

Local events #3

queue.on('paused', () => {
  // The queue has been paused.
});

queue.on('resumed', (job) => {
  // The queue has been resumed.
});

queue.on('cleaned', (jobs, type) => {
  // Old jobs have been cleaned from the queue.
  // `jobs` is an array of cleaned jobs,
  // and `type` is the type of jobs cleaned.
});

Local events #4

queue.on('drained', () => {
  // Emitted every time the queue has processed
  // all the waiting jobs (even if there can be some
  // delayed jobs not yet processed)
});

queue.on('removed', (job) => {
  // A job was successfully removed.
});

Global events

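// Local event: fired only in the queue instance (worker)
// that completed the job
queue.on('completed', listener);

// Global event: listens to the whole queue, i.e. events from all
// workers, distributed through Redis. Global listeners receive
// the job id instead of the full job object.
queue.on('global:completed', listener);

What next?

Priorities

const myJob = await myqueue.add({
  foo: 'bar'
}, {
  priority: 3
});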
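Bull's `priority` option ranges from 1 (highest) to `MAX_INT` (lowest), so the job above with `priority: 3` runs after waiting jobs with priority 1 or 2. A toy model of that ordering, with FIFO order among jobs of equal priority; `ToyPriorityQueue` is a hypothetical name, and this is not how Bull stores prioritized jobs in Redis.

```typescript
// Toy priority queue following Bull's convention: 1 is the highest priority.
interface PrioritizedJob { name: string; priority: number; seq: number; }

class ToyPriorityQueue {
  private seq = 0;
  private readonly jobs: PrioritizedJob[] = [];

  add(name: string, priority: number): void {
    this.jobs.push({ name, priority, seq: this.seq++ });
    // Lower number first; `seq` keeps insertion order for equal priorities.
    // Sorting on every add keeps the sketch short; a heap would scale better.
    this.jobs.sort((a, b) => a.priority - b.priority || a.seq - b.seq);
  }

  // Name of the next job to process, or undefined when empty.
  next(): string | undefined {
    return this.jobs.shift()?.name;
  }
}
```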

Concurrency
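The `5` passed to `this.queue.process` on this slide lets one worker process up to five `generate_report` jobs at the same time. A generic sketch of the same idea, a limiter that keeps at most `limit` tasks in flight; `runWithConcurrency` is a hypothetical helper, not part of Bull.

```typescript
// Runs async tasks with at most `limit` of them in flight at once,
// returning results in the original task order.
async function runWithConcurrency<T>(
  tasks: Array<() => Promise<T>>,
  limit: number,
): Promise<T[]> {
  const results: T[] = new Array(tasks.length);
  let next = 0;
  // Each lane keeps pulling the next unstarted task until none are left.
  async function lane(): Promise<void> {
    while (next < tasks.length) {
      const index = next++;
      results[index] = await tasks[index]();
    }
  }
  const lanes = Array.from({ length: Math.min(limit, tasks.length) }, () => lane());
  await Promise.all(lanes);
  return results;
}
```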

// Up to 5 'generate_report' jobs are processed concurrently by this worker
this.queue.process('generate_report', 5,
  (job: Bull.Job, done: Bull.DoneCallback) => {
    setTimeout(() => {
      console.log('Report generated!');
      console.log(JSON.stringify(job.data));
      done();
    }, 5000);
  }
);

Sandbox

Sometimes jobs are more CPU intensive, which could lock the Node event loop for too long, and Bull could decide the job has been stalled. To avoid this situation, it is possible to run the process functions in separate Node processes. In this case, the concurrency parameter will decide the maximum number of concurrent processes that are allowed to run.
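The blocking effect is easy to reproduce without Bull: while synchronous CPU work runs, no timer callback can fire. Bull renews job locks on a timer (see its `lockDuration`/`lockRenewTime` settings), so a long synchronous job can miss the renewal and be treated as stalled. A minimal sketch; `cpuBound` and `measureTimerDelay` are hypothetical names, and the busy loop stands in for CPU-heavy report code.

```typescript
// Busy-waits for `ms` milliseconds, standing in for CPU-heavy work.
function cpuBound(ms: number): void {
  const end = Date.now() + ms;
  while (Date.now() < end) {
    // Synchronous work: nothing else on this thread runs meanwhile.
  }
}

// Measures how late a 0 ms timer fires when CPU-bound work runs first.
function measureTimerDelay(blockMs: number): Promise<number> {
  return new Promise(resolve => {
    const scheduled = Date.now();
    setTimeout(() => resolve(Date.now() - scheduled), 0);
    cpuBound(blockMs);
  });
}
```

In Bull the remedy from the quote is to pass the path of a processor module to `queue.process` instead of a function, so that the CPU-heavy code runs in a separate child process.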

Thank you!