State-of-the-Art WebGL 2.0

Course Presenters

Xavier Ho

CSIRO

The University of Sydney

contact@xavierho.com

Juan Miguel de Joya

DigitalFish

Google Spotlight Stories

juandejoya@gmail.com

Neil Trevett

NVIDIA

Khronos Group

ntrevett@nvidia.com

Goals and Expectations

Goal

- WebGL 2.0 is out in the wild. What new features can we use?

Expectations

- Cover things at the lowest level

- Go deep on certain techniques

- Discuss techniques in WebGL 2.0 that were:

  - Extensions in WebGL 1.0

  - Not in WebGL 1.0 at all

Prerequisites

- Some WebGL 1.0 experience, or a rough knowledge of graphics rendering

Agenda

- History of *GL, Introduction to WebGL 2.0 (15 minutes)

- Optimizations and Techniques, Examples (50 minutes)

  - Texture Support

  - Geometry Instancing

  - Transform Feedback

  - Deferred Rendering

  - Multiple Draw Buffers

  - Volumetrics

  - Shading Language Updates

- State-of-Industry Survey, Future of WebGL (15 minutes)

- Conclusion (10 minutes)

WebGL 2.0 at a Glance

WebGL 1.0: In Brief

- WebGL 1.0 was released in 2011 and is widely adopted:

  - WebGL Stats: 95% adoption

  - Can I Use: 92% adoption

- WebGL 1.0 is used in a variety of applications:

  - Artists showcase work on Sketchfab

  - Jupiter and Its Moons, New York Times

  - The Dawn Wall, El Capitan’s Most Unwelcoming Route, New York Times

  - Math graphics with MathBox

  - Audio visualizations

  - Web games

  - Unity’s WebGL target

WebGL 2.0: It's Happening

- WebGL 2.0 is based on the OpenGL ES 3.0 feature set

  - Brings desktop and mobile platforms’ features closer together

- WebGL 2.0 is available and slowly gaining traction:

  - WebGL Stats: 50% adoption

  - Can I Use: 28.89% adoption

- WebGL 2.0 implementations are available today:

  - Firefox v53 (April 18, 2017, 3.91% usage)

  - Chrome v58 (April 18, 2017, 16.92% usage)

  - Edge “intends to ship”

See How to Get a WebGL Implementation for more.

WebGL 2.0 Features

- Textures

  - Many texture formats supported

  - 3D textures, 2D texture arrays

  - Immutable textures

  - Full non-power-of-two texture support

  - Seamless cube maps

- Instanced drawing*

- Multiple render targets*

- Transform feedback

- Multisampled renderbuffers

- Shading Language upgrades

- Performant GPU-side copy/compute operations

- Uniform buffers

- Vertex array objects*

- Sampler objects

- Query objects

- Sync objects

WebGL 2.0 is not entirely backwards compatible with WebGL 1.0.

(*) Available via extensions in WebGL 1.0. These are core in WebGL 2.0, so the old extensions are no longer exposed: requesting them with getExtension() returns null. In addition, GLSL ES 3.00 (the shading language upgrade) is required to access the full feature set.
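A minimal TypeScript sketch of the compatibility note above, assuming a browser with the standard DOM typings. CORE_IN_WEBGL2, isCoreInWebGL2 and createWebGL2Context are hypothetical helper names; the table covers only the three (*) items, and what to do when WebGL 2.0 is missing is left to the caller.

```typescript
// Hypothetical helper table: the three (*) WebGL 1.0 extensions and the
// WebGL 2.0 context methods that replace them.
const CORE_IN_WEBGL2: { [extension: string]: string[] } = {
  ANGLE_instanced_arrays: ["drawArraysInstanced", "drawElementsInstanced", "vertexAttribDivisor"],
  WEBGL_draw_buffers: ["drawBuffers"],
  OES_vertex_array_object: ["createVertexArray", "bindVertexArray", "deleteVertexArray"],
};

// True if the named WebGL 1.0 extension was promoted to WebGL 2.0 core.
function isCoreInWebGL2(extension: string): boolean {
  return Object.prototype.hasOwnProperty.call(CORE_IN_WEBGL2, extension);
}

// Request a WebGL 2.0 context. Throws so the caller can pick a WebGL 1.0 path instead.
function createWebGL2Context(canvas: HTMLCanvasElement): WebGL2RenderingContext {
  const gl = canvas.getContext("webgl2");
  if (!gl) {
    throw new Error("WebGL 2.0 is not available");
  }
  const methods = gl as unknown as { [name: string]: unknown };
  Object.keys(CORE_IN_WEBGL2).forEach((extension) => {
    // The old extension is not exposed on a WebGL 2.0 context...
    if (gl.getExtension(extension) !== null) {
      console.warn(`${extension} is unexpectedly exposed on a WebGL 2.0 context`);
    }
    // ...because its functionality lives directly on the context.
    for (const name of CORE_IN_WEBGL2[extension]) {
      if (typeof methods[name] !== "function") {
        throw new Error(`missing WebGL 2.0 method ${name}`);
      }
    }
  });
  return gl;
}
```

Usage: const gl = createWebGL2Context(document.querySelector("canvas")!); instancing then becomes gl.drawArraysInstanced(...) where WebGL 1.0 code called ext.drawArraysInstancedANGLE(...) on the extension object.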

Techniques and Optimizations

Textures in WebGL 2.0

- Texture formats supported in WebGL 2.0:

  RGB, RGBA, LUMINANCE_ALPHA, LUMINANCE, ALPHA, R8, R16F, R32F, R8UI, RG8, RG16F, RG32F, RG8UI, RGB8, SRGB8, RGB565, R11F_G11F_B10F, RGB9_E5, RGB16F, RGB32F, RGB8UI, RGBA8, SRGB8_ALPHA8, RGB5_A1, RGB10_A2, RGBA4, RGBA16F, RGBA32F, RGBA8UI

- Shaders can directly access texels (texture pixels) and query a texture’s size

- Full 8-, 16- and 32-bit texture support means data can be stored at its full precision instead of being quantized to 8 bits
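Sized internal formats must be paired with a matching format and type when uploading data. This sketch lists a few pairings from the WebGL 2.0 / OpenGL ES 3.0 table (a subset, not the full table), uploads 32-bit float data with a hypothetical uploadR32F helper, and shows the GLSL ES 3.00 texelFetch and textureSize built-ins that give shaders direct texel access. It assumes a WebGL 2.0 context and DOM typings.

```typescript
// A few valid internalFormat -> [format, type] pairings for texImage2D/texSubImage2D
// (a subset of the WebGL 2.0 / OpenGL ES 3.0 table, not exhaustive).
const UPLOAD_FORMATS: { [internalFormat: string]: [string, string] } = {
  R8: ["RED", "UNSIGNED_BYTE"],
  R16F: ["RED", "HALF_FLOAT"],
  R32F: ["RED", "FLOAT"],
  R8UI: ["RED_INTEGER", "UNSIGNED_BYTE"],
  RG8: ["RG", "UNSIGNED_BYTE"],
  RGBA8: ["RGBA", "UNSIGNED_BYTE"],
  SRGB8_ALPHA8: ["RGBA", "UNSIGNED_BYTE"],
  RGBA32F: ["RGBA", "FLOAT"],
};

// Hypothetical helper: upload one float per texel at full 32-bit precision.
function uploadR32F(gl: WebGL2RenderingContext, width: number, height: number, data: Float32Array): WebGLTexture {
  if (data.length !== width * height) {
    throw new Error("expected exactly one float per texel");
  }
  const texture = gl.createTexture();
  if (!texture) throw new Error("createTexture failed");
  gl.bindTexture(gl.TEXTURE_2D, texture);
  gl.texStorage2D(gl.TEXTURE_2D, 1, gl.R32F, width, height);
  gl.texSubImage2D(gl.TEXTURE_2D, 0, 0, 0, width, height, gl.RED, gl.FLOAT, data);
  // R32F is not filterable without OES_texture_float_linear, so use NEAREST.
  gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.NEAREST);
  gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MAG_FILTER, gl.NEAREST);
  return texture;
}

// Direct texel access: texelFetch takes integer coordinates plus a mip level,
// and textureSize returns that level's dimensions.
const TEXEL_FETCH_FS = `#version 300 es
precision highp float;
uniform highp sampler2D uData;
out vec4 outColor;
void main() {
  ivec2 size = textureSize(uData, 0);
  ivec2 texel = ivec2(gl_FragCoord.xy) % size;  // tile the data across the screen
  outColor = vec4(vec3(texelFetch(uData, texel, 0).r), 1.0);
}`;
```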

Textures in WebGL 2.0 (cont.)

- 3D textures and 2D texture arrays: texture objects now have width, height, and depth

- Non-power-of-2 textures: WebGL 2.0 accepts textures of any size, including with mipmaps and repeat wrapping

- Immutable textures: better performance and lower memory use

  - ‘Immutable’ refers to the allocation (size, format, mip levels), not the content

- Integer and floating-point textures: full precision to suit your needs

- sRGB textures: texels are converted to linear color space when sampled, so calculations and interpolation happen in linear space

- Depth textures: store depth information in a texture alongside color

- Direct texture lookup: shaders can read individual texels and query a texture’s size

- Texture access in vertex shaders: enables displacement effects

- Common compressed textures: available in WebGL 2.0 as an extension (ETC2/EAC formats)

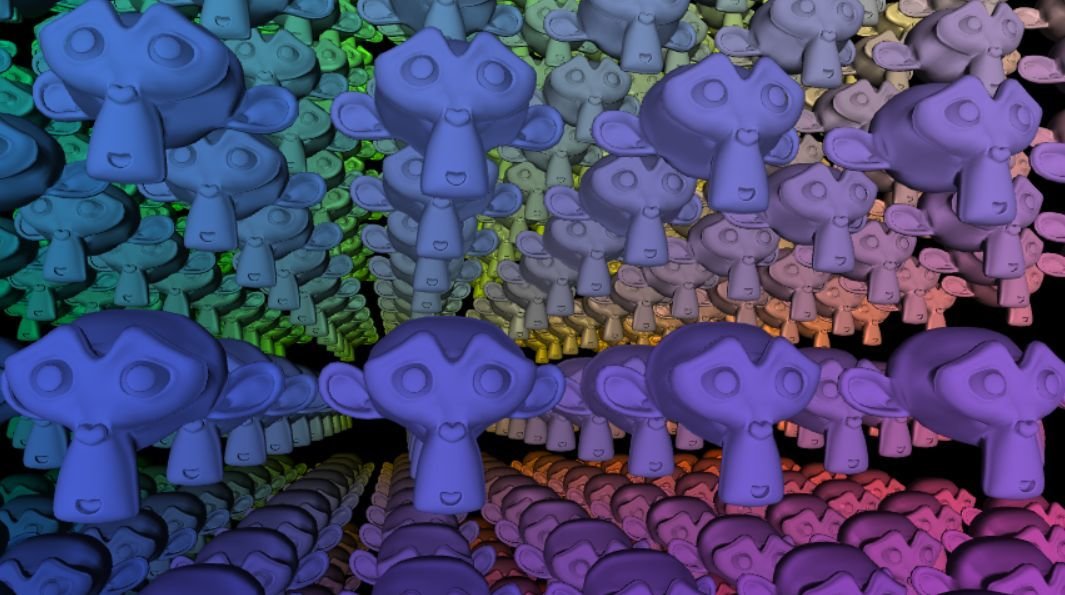
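A sketch of immutable, non-power-of-two and 3D texture allocation, assuming a WebGL 2.0 context and DOM typings. mipLevelCount, createImmutableTexture2D, voxelIndex, makeVolumeR8 and uploadVolumeR8 are hypothetical helpers, and RGBA8 and R8 are example formats rather than requirements.

```typescript
// Levels in a full mip chain: halve the largest dimension until it reaches 1.
function mipLevelCount(width: number, height: number, depth = 1): number {
  let size = Math.max(width, height, depth);
  let levels = 1;
  while (size > 1) {
    size = Math.floor(size / 2);
    levels++;
  }
  return levels;
}

// Immutable allocation: size, format and level count are fixed by texStorage2D, but the
// contents can still change via texSubImage2D. Non-power-of-2 sizes such as 300x200 are
// valid in WebGL 2.0, including with a full mip chain.
function createImmutableTexture2D(gl: WebGL2RenderingContext, width: number, height: number): WebGLTexture {
  const texture = gl.createTexture();
  if (!texture) throw new Error("createTexture failed");
  gl.bindTexture(gl.TEXTURE_2D, texture);
  gl.texStorage2D(gl.TEXTURE_2D, mipLevelCount(width, height), gl.RGBA8, width, height);
  return texture;
}

// texImage3D/texSubImage3D read x fastest, then y, then z (one 2D slice after another).
function voxelIndex(x: number, y: number, z: number, width: number, height: number): number {
  return (z * height + y) * width + x;
}

// Fill an R8 volume from a density function that returns values in [0, 1].
function makeVolumeR8(
  width: number,
  height: number,
  depth: number,
  density: (x: number, y: number, z: number) => number,
): Uint8Array {
  const data = new Uint8Array(width * height * depth);
  for (let z = 0; z < depth; z++) {
    for (let y = 0; y < height; y++) {
      for (let x = 0; x < width; x++) {
        const d = Math.min(1, Math.max(0, density(x, y, z)));
        data[voxelIndex(x, y, z, width, height)] = Math.round(d * 255);
      }
    }
  }
  return data;
}

function uploadVolumeR8(gl: WebGL2RenderingContext, data: Uint8Array, width: number, height: number, depth: number): WebGLTexture {
  const texture = gl.createTexture();
  if (!texture) throw new Error("createTexture failed");
  gl.bindTexture(gl.TEXTURE_3D, texture);
  gl.texStorage3D(gl.TEXTURE_3D, 1, gl.R8, width, height, depth);
  // One byte per texel, so rows are generally not 4-byte aligned (the default).
  gl.pixelStorei(gl.UNPACK_ALIGNMENT, 1);
  gl.texSubImage3D(gl.TEXTURE_3D, 0, 0, 0, 0, width, height, depth, gl.RED, gl.UNSIGNED_BYTE, data);
  gl.texParameteri(gl.TEXTURE_3D, gl.TEXTURE_MIN_FILTER, gl.LINEAR);
  gl.texParameteri(gl.TEXTURE_3D, gl.TEXTURE_MAG_FILTER, gl.LINEAR);
  return texture;
}
```

To fill the immutable 2D texture, upload level 0 with texSubImage2D and then call gl.generateMipmap(gl.TEXTURE_2D); the allocation itself never changes.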

Geometry Instancing

- Geometry instancing: rendering multiple copies of the same mesh in a scene with a single draw call.

  - Instances differ slightly (e.g. position, scale, color) but share the same geometry.

  - Great for details and set dressing (trees, grass, buildings); can also be used for crowds/characters.

- Big performance improvement

  - Eliminates the per-copy draw call overhead.
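A sketch of instanced drawing with the core WebGL 2.0 API. It assumes a linked program whose vertex shader matches INSTANCED_VS and a bound VAO that already feeds aPosition; the attribute names, the grid layout and drawInstancedGrid are illustrative. vertexAttribDivisor(loc, 1) makes aOffset advance once per instance rather than once per vertex, so a single drawArraysInstanced call draws every copy.

```typescript
// Per-instance data: one (x, y) offset per copy, laid out on a grid.
function makeGridOffsets(cols: number, rows: number, spacing: number): Float32Array {
  const offsets = new Float32Array(cols * rows * 2);
  for (let r = 0; r < rows; r++) {
    for (let c = 0; c < cols; c++) {
      const i = (r * cols + c) * 2;
      offsets[i] = c * spacing;
      offsets[i + 1] = r * spacing;
    }
  }
  return offsets;
}

// aPosition advances per vertex, aOffset per instance.
const INSTANCED_VS = `#version 300 es
in vec2 aPosition;
in vec2 aOffset;
flat out int vInstance;  // e.g. to vary color per instance in the fragment shader
void main() {
  vInstance = gl_InstanceID;
  gl_Position = vec4(aPosition + aOffset, 0.0, 1.0);
}`;

// Upload per-instance offsets and draw every copy in one call.
// For illustration only: real code would create the buffer once, not per frame.
function drawInstancedGrid(gl: WebGL2RenderingContext, program: WebGLProgram, vertexCount: number, offsets: Float32Array): void {
  gl.useProgram(program);
  const loc = gl.getAttribLocation(program, "aOffset");
  if (loc < 0) throw new Error("aOffset not found");
  const buffer = gl.createBuffer();
  if (!buffer) throw new Error("createBuffer failed");
  gl.bindBuffer(gl.ARRAY_BUFFER, buffer);
  gl.bufferData(gl.ARRAY_BUFFER, offsets, gl.STATIC_DRAW);
  gl.enableVertexAttribArray(loc);
  gl.vertexAttribPointer(loc, 2, gl.FLOAT, false, 0, 0);
  gl.vertexAttribDivisor(loc, 1); // advance once per instance, not per vertex
  gl.drawArraysInstanced(gl.TRIANGLES, 0, vertexCount, offsets.length / 2);
}
```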

Instancing: Non-WebGL Cases

- Mesh scattering, mesh particles for details.

- Procedural placement systems.

- Regular mesh instance variation.

- Instance data stored in a byte buffer, with each instance’s data location calculated explicitly in the shader.

- Hash comparisons for draw call consolidation.

Horizon Zero Dawn [GDC 2017]

The Witcher 3: Wild Hunt [SIGGRAPH 2015]

Battlefield Series [DX11 Rendering for BF3]

Uncharted 4: A Thief’s End [VentureBeat]

Pixar’s Piper [fxGuide]

Instancing: WebGL Examples

- Three.js demos (WebGL 1.0 via extensions):

  - Instancing demo (simple triangles)

  - Indexed instancing (single box), interleaved buffers, dynamic updates

WebGL with extensions

Transform Feedback

- Transform feedback: a vertex post-processing step that records the values output by the vertex shader into buffer objects.

  - Computations and data from shaders stay on the GPU.

  - Post-transform attributes can easily be read from the buffer.

-

Transform Feedback: Usage

- Particle systems: composed of a large number of small particles that simulate physically driven phenomena such as smoke, dust, fireworks, and rain.

Without Transform Feedback...

1. The particle data lives on the CPU, or is copied from GPU memory back to CPU memory.

2. The CPU performs the calculations on the particle attributes.

3. The updated data is uploaded to the GPU again to be rendered.

Managing data transfer between the GPU and CPU, plus redundant complex computation, takes time and bandwidth!

With Transform Feedback...

1. Bind transform feedback buffers to capture the vertex shader outputs.

2. Update the VBO contents in the vertex shader before rendering; all computations are done on the GPU.

3. No application involvement other than connecting buffers and setting state.

Good for complex computations on VBOs: offloads the CPU and avoids slow memory readbacks.
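A sketch of the "with transform feedback" loop for a stateful particle system, assuming a WebGL 2.0 context, already compiled shaders, and two sets of position/velocity buffers used in ping-pong fashion (a buffer cannot be read as a vertex input and captured in the same pass). The shader names, the uDt uniform, the gravity constant and the helper functions are illustrative; stepParticle is a CPU reference of the same update, useful for testing.

```typescript
type Vec3 = [number, number, number];

const GRAVITY_Y = -9.8;

// Update pass: reads state A from vertex attributes, writes state B via transform feedback.
const UPDATE_VS = `#version 300 es
in vec3 aPosition;
in vec3 aVelocity;
uniform float uDt;
out vec3 vPosition;
out vec3 vVelocity;
void main() {
  vVelocity = aVelocity + vec3(0.0, -9.8, 0.0) * uDt;  // gravity
  vPosition = aPosition + vVelocity * uDt;
  gl_Position = vec4(vPosition, 1.0);
}`;

// A fragment shader is still required to link, even though nothing is rasterized.
const UPDATE_FS = `#version 300 es
precision mediump float;
out vec4 outColor;
void main() { outColor = vec4(0.0); }`;

// CPU reference of the shader's update (semi-implicit Euler).
function stepParticle(p: Vec3, v: Vec3, dt: number): [Vec3, Vec3] {
  const nv: Vec3 = [v[0], v[1] + GRAVITY_Y * dt, v[2]];
  const np: Vec3 = [p[0] + nv[0] * dt, p[1] + nv[1] * dt, p[2] + nv[2] * dt];
  return [np, nv];
}

// The captured outputs must be declared before linking.
function linkUpdateProgram(gl: WebGL2RenderingContext, vs: WebGLShader, fs: WebGLShader): WebGLProgram {
  const program = gl.createProgram();
  if (!program) throw new Error("createProgram failed");
  gl.attachShader(program, vs);
  gl.attachShader(program, fs);
  gl.transformFeedbackVaryings(program, ["vPosition", "vVelocity"], gl.SEPARATE_ATTRIBS);
  gl.linkProgram(program);
  if (!gl.getProgramParameter(program, gl.LINK_STATUS)) {
    throw new Error(gl.getProgramInfoLog(program) || "link failed");
  }
  return program;
}

// One simulation step entirely on the GPU: srcVao reads state A, dst buffers receive state B.
function gpuStep(
  gl: WebGL2RenderingContext,
  program: WebGLProgram,
  tf: WebGLTransformFeedback,
  srcVao: WebGLVertexArrayObject,
  dstPosition: WebGLBuffer,
  dstVelocity: WebGLBuffer,
  count: number,
  dt: number,
): void {
  gl.useProgram(program);
  gl.uniform1f(gl.getUniformLocation(program, "uDt"), dt);
  gl.bindVertexArray(srcVao);
  gl.bindTransformFeedback(gl.TRANSFORM_FEEDBACK, tf);
  gl.bindBufferBase(gl.TRANSFORM_FEEDBACK_BUFFER, 0, dstPosition);
  gl.bindBufferBase(gl.TRANSFORM_FEEDBACK_BUFFER, 1, dstVelocity);
  gl.enable(gl.RASTERIZER_DISCARD); // update only, draw nothing
  gl.beginTransformFeedback(gl.POINTS);
  gl.drawArrays(gl.POINTS, 0, count);
  gl.endTransformFeedback();
  gl.disable(gl.RASTERIZER_DISCARD);
  // Unbind so the captured buffers can serve as vertex inputs on the next frame.
  gl.bindBufferBase(gl.TRANSFORM_FEEDBACK_BUFFER, 0, null);
  gl.bindBufferBase(gl.TRANSFORM_FEEDBACK_BUFFER, 1, null);
  gl.bindTransformFeedback(gl.TRANSFORM_FEEDBACK, null);
  gl.bindVertexArray(null);
}
```

After each gpuStep, swap the roles of the two buffer sets so the state just captured becomes next frame's input.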

Transform Feedback: WebGL

- Caching and reusing GPU computation

- Stateful particle systems

- Non-WebGL implementations:

  - Capture and reuse for geometric deformations

  - Terrain generation calculations


Integer Vertex Attributes

- Go hand-in-hand with transform feedback, as well as integer texture support.

- Examples:

  - Maintain a pseudo-random number generator’s state per vertex.

  - An interactive particles demo required an RNG built around modulus-based integer vertex attributes to maintain state across the transform feedback phase.

  - Each particle randomly dies or lives; the result is written back into the particle state for the next frame.

- More generally, you can:

  - Send multiple integers into the vertex shader.

  - Perform logical operations on them.

  - Output them.
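A sketch of per-vertex RNG state carried in an integer vertex attribute. The deck does not specify the demo’s generator, so this swaps in a standard 32-bit linear congruential generator with Numerical Recipes constants; the shader, attribute names and helpers are hypothetical. The state update is seed' = (a * seed + c) mod 2^32, which GLSL uint arithmetic provides for free by wrapping.

```typescript
// 32-bit LCG with Numerical Recipes constants (an assumption, not the demo's RNG).
const LCG_A = 1664525;
const LCG_C = 1013904223;
const TWO_POW_32 = 4294967296;

// CPU mirror of the shader update. LCG_A * seed < 2^53, so the double math is exact.
function lcgNext(seed: number): number {
  return (LCG_A * seed + LCG_C) % TWO_POW_32;
}

// Top 24 bits as a float in [0, 1), matching float(seed >> 8u) / 16777216.0 in GLSL.
function lcgToUnitFloat(seed: number): number {
  return Math.floor(seed / 256) / 16777216;
}

// Vertex shader for the transform feedback pass: integer state in, integer state out.
const RNG_VS = `#version 300 es
in uint aSeed;
in float aAlive;
flat out uint vSeed;  // integer outputs must be flat-qualified
out float vAlive;
void main() {
  uint seed = aSeed * 1664525u + 1013904223u;  // uint arithmetic wraps mod 2^32
  float r = float(seed >> 8u) / 16777216.0;
  vSeed = seed;
  vAlive = r < 0.01 ? 1.0 - aAlive : aAlive;   // about 1% chance per frame to flip alive/dead
  gl_Position = vec4(0.0, 0.0, 0.0, 1.0);
}`;

// Integer attributes need vertexAttribIPointer; vertexAttribPointer would feed floats,
// which does not match an integer shader input.
function bindSeedAttribute(gl: WebGL2RenderingContext, program: WebGLProgram, seeds: WebGLBuffer): void {
  const loc = gl.getAttribLocation(program, "aSeed");
  if (loc < 0) throw new Error("aSeed not found");
  gl.bindBuffer(gl.ARRAY_BUFFER, seeds);
  gl.enableVertexAttribArray(loc);
  gl.vertexAttribIPointer(loc, 1, gl.UNSIGNED_INT, 0, 0);
}
```

The seed buffer would hold a Uint32Array, be captured with transformFeedbackVaryings(program, ["vSeed", "vAlive"], gl.SEPARATE_ATTRIBS), and be swapped with a second buffer every frame.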

Forward Rendering

Forward Rendering: geometry data is passed to the vertex shader(s) for per-vertex operations, rasterized into fragments, and processed per pixel by the fragment shader(s) before being written to the framebuffer.

- Each primitive is lit according to all light sources as it is rendered; primitives pass down the pipeline one after another.

- Heavy on performance: fragments that are later overwritten still run the full lighting calculation, wasting many fragment shader runs.

Deferred Rendering

Deferred Rendering: lighting is deferred until all primitives have been passed down the pipeline.

- Two stages: a geometry pass that stores geometry data (e.g. positions, normals, colors) in multiple draw buffers, and a lighting pass that calculates the final shading and lighting from the buffered data.

- Decouples expensive fragment processing and moves it to a later stage in the pipeline.

Deferred: Pros and Cons

Forward Rendering…

Pros

1. Simple: polygon data are processed per vertex and per fragment and drawn straight to the screen.

2. Handles true transparency and works with hardware anti-aliasing (MSAA) out of the box.

Cons

1. Heavy on performance: cost grows with the number of objects times the number of lights.

2. Wasted fragment shader calculations on fragments that are later overdrawn.

3. Limited number of lights available for rendering.

Deferred Rendering…

Pros

1. Lighting is computed once per visible screen pixel from the stored fragment information, in a single lighting pass.

2. Great for dynamic lights, scaling to a larger number of light sources, and post-processing.

Cons

1. Requires high bandwidth and memory.

2. Requires workarounds for transparency and anti-aliasing.
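To put a number on the bandwidth and memory cost, a back-of-the-envelope sketch. The G-buffer layout (three RGBA16F color targets plus a 32-bit depth attachment at 1920x1080) is an assumption for illustration, not a layout from the course; BYTES_PER_PIXEL, gBufferBytes and gBufferBytesPerSecond are hypothetical helpers.

```typescript
// Bytes per pixel for a few WebGL 2.0 formats (single-sampled).
const BYTES_PER_PIXEL: { [format: string]: number } = {
  RGBA8: 4,
  RGBA16F: 8,
  RGBA32F: 16,
  DEPTH24_STENCIL8: 4,
  DEPTH_COMPONENT32F: 4,
};

// Total G-buffer size in bytes for one frame (no MSAA, no mipmaps).
function gBufferBytes(width: number, height: number, attachments: string[]): number {
  let perPixel = 0;
  for (const format of attachments) {
    if (!Object.prototype.hasOwnProperty.call(BYTES_PER_PIXEL, format)) {
      throw new Error(`unknown format ${format}`);
    }
    perPixel += BYTES_PER_PIXEL[format];
  }
  return width * height * perPixel;
}

// Traffic if the G-buffer is written once (geometry pass) and read once (lighting pass) per frame.
function gBufferBytesPerSecond(frameBytes: number, fps: number): number {
  return frameBytes * 2 * fps;
}
```

For the assumed layout, gBufferBytes(1920, 1080, ["RGBA16F", "RGBA16F", "RGBA16F", "DEPTH_COMPONENT32F"]) is 58,060,800 bytes (about 55 MiB), and writing plus reading it at 60 fps is roughly 7 GB/s before any lighting math.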

Deferred: Non-WebGL Cases

- Real-time lighting via light linked list

- Physically correct lighting models

- SPU-based deferred shading

- Extensive particle systems

- Post-processing effects (bloom, FXAA, AO)

- Destiny [SIGGRAPH 2013]

- Early History of Deferred Shading and Lighting [Rich Geldreich]

- Deferred Lighting Approaches [RTR 2009]

- Sunset Overdrive [SIGGRAPH 2014]

- Battlefield Series [EA DICE GDC 2011]

- Uncharted 4 [SIGGRAPH 2016]

- Killzone Shadowfall [Guerrilla Games]

Multiple Draw Buffers

- Available in WebGL 1.0 as an extension (WEBGL_draw_buffers), now core

- Allows rendering to multiple color buffers (attachments of one framebuffer) in one pass

- Big performance boost for:

  - Deferred rendering

  - Multipass post-processing effects

  - Other techniques that write depth, color, normals, and so on into multiple buffers
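A sketch of a G-buffer built on multiple draw buffers, assuming a WebGL 2.0 context with the EXT_color_buffer_float extension (needed to render into RGBA16F). createGBuffer, countShaderOutputs and the three outputs (position, normal, albedo) are illustrative choices. Each layout(location = N) output in the fragment shader lands in COLOR_ATTACHMENT0 + N, and gl.drawBuffers enables all of them for a single geometry pass.

```typescript
// Geometry pass: each "layout(location = N) out" variable writes COLOR_ATTACHMENT0 + N.
const GEOMETRY_FS = `#version 300 es
precision highp float;
in vec3 vWorldPos;
in vec3 vNormal;
in vec2 vUv;
uniform sampler2D uAlbedo;
layout(location = 0) out vec4 gPosition;
layout(location = 1) out vec4 gNormal;
layout(location = 2) out vec4 gAlbedo;
void main() {
  gPosition = vec4(vWorldPos, 1.0);
  gNormal = vec4(normalize(vNormal), 0.0);
  gAlbedo = texture(uAlbedo, vUv);
}`;

// Count explicit fragment outputs so the number of attachments can be matched to them.
function countShaderOutputs(source: string): number {
  const matches = source.match(/layout\s*\(\s*location\s*=\s*\d+\s*\)\s*out\b/g);
  return matches ? matches.length : 0;
}

function createGBuffer(
  gl: WebGL2RenderingContext,
  width: number,
  height: number,
  attachmentCount: number,
): { framebuffer: WebGLFramebuffer; textures: WebGLTexture[] } {
  // Rendering into RGBA16F requires EXT_color_buffer_float on WebGL 2.0.
  if (!gl.getExtension("EXT_color_buffer_float")) {
    throw new Error("EXT_color_buffer_float is not available");
  }
  const framebuffer = gl.createFramebuffer();
  if (!framebuffer) throw new Error("createFramebuffer failed");
  gl.bindFramebuffer(gl.FRAMEBUFFER, framebuffer);
  const textures: WebGLTexture[] = [];
  const drawBuffers: number[] = [];
  for (let i = 0; i < attachmentCount; i++) {
    const texture = gl.createTexture();
    if (!texture) throw new Error("createTexture failed");
    gl.bindTexture(gl.TEXTURE_2D, texture);
    gl.texStorage2D(gl.TEXTURE_2D, 1, gl.RGBA16F, width, height);
    gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.NEAREST);
    gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MAG_FILTER, gl.NEAREST);
    gl.framebufferTexture2D(gl.FRAMEBUFFER, gl.COLOR_ATTACHMENT0 + i, gl.TEXTURE_2D, texture, 0);
    textures.push(texture);
    drawBuffers.push(gl.COLOR_ATTACHMENT0 + i);
  }
  const depth = gl.createRenderbuffer();
  if (!depth) throw new Error("createRenderbuffer failed");
  gl.bindRenderbuffer(gl.RENDERBUFFER, depth);
  gl.renderbufferStorage(gl.RENDERBUFFER, gl.DEPTH_COMPONENT24, width, height);
  gl.framebufferRenderbuffer(gl.FRAMEBUFFER, gl.DEPTH_ATTACHMENT, gl.RENDERBUFFER, depth);
  // Route fragment outputs 0..N-1 to the attachments so one draw writes all of them.
  gl.drawBuffers(drawBuffers);
  const status = gl.checkFramebufferStatus(gl.FRAMEBUFFER);
  gl.bindFramebuffer(gl.FRAMEBUFFER, null);
  if (status !== gl.FRAMEBUFFER_COMPLETE) throw new Error("G-buffer is incomplete");
  return { framebuffer, textures };
}
```

Usage: createGBuffer(gl, canvas.width, canvas.height, countShaderOutputs(GEOMETRY_FS)); the lighting pass then binds the returned textures as samplers and shades each screen pixel once.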

Applications of Multiple Draw Buffers

Volumetrics: Non-WebGL Cases

- Occluded scattering resulting in volumetric shadows and light shafts

- Heterogeneous participating media

- Sun/particle interaction, physically based sky and atmosphere simulation, large-scale fog

- Art-directable clouds for Horizon Zero Dawn, Pixar’s The Good Dinosaur

- Uncharted 4: A Thief’s End [Advances 2016]

- Call of Duty: Black Ops 3 [Advances 2016]

- Horizon Zero Dawn [Advances 2015]

- Frostbite Engine [Advances 2015]

- Pixar’s The Good Dinosaur [ACM DL]

Volumetrics: Non-WebGL Cases (cont.)

- Volumetric materials for a character pipeline.

Volumetrics: WebGL Examples

- Volumetric rendering simulates the propagation of light rays through a material’s volume (a.k.a. a participating medium), which allows for a broad range of rendering effects.

- Volume ray marching is a technique where a ray is cast from the camera into the material’s volume, and the volume is sampled at steps along the ray for the rendering calculations.

Images: Nop Jiarathanakul, Almar Klein
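A sketch of volume ray marching: a GLSL ES 3.00 fragment shader that steps through a 3D texture, plus a CPU reference of the same accumulation. It assumes density stored in a sampler3D addressed in [0, 1]^3 texture space, a Beer-Lambert absorption model T = exp(-(integral of sigma along the ray)), and a fixed step count; the uniform names, STEPS, the white premultiplied output and marchTransmittance are illustrative.

```typescript
// CPU reference: transmittance along one ray with midpoint samples, the same
// accumulation the shader loop performs.
function marchTransmittance(density: (t: number) => number, rayLength: number, steps: number): number {
  const dt = rayLength / steps;
  let opticalDepth = 0;
  for (let i = 0; i < steps; i++) {
    opticalDepth += density((i + 0.5) * dt) * dt;
  }
  return Math.exp(-opticalDepth);
}

const RAYMARCH_FS = `#version 300 es
precision highp float;
precision highp sampler3D;   // sampler3D has no default precision in ES 3.00
uniform sampler3D uVolume;   // density in [0, 1], e.g. an R8 3D texture
uniform vec3 uCameraPos;     // camera position in volume texture space
uniform float uSigma;        // extinction scale
in vec3 vEntry;              // ray entry point on the volume's bounding box
out vec4 outColor;
const int STEPS = 64;
void main() {
  vec3 dir = normalize(vEntry - uCameraPos);
  float dt = sqrt(3.0) / float(STEPS);  // the unit cube's diagonal split into STEPS
  vec3 p = vEntry + 0.5 * dt * dir;
  float opticalDepth = 0.0;
  for (int i = 0; i < STEPS; ++i) {
    if (any(lessThan(p, vec3(0.0))) || any(greaterThan(p, vec3(1.0)))) break;
    opticalDepth += texture(uVolume, p).r * uSigma * dt;
    p += dir * dt;
  }
  float alpha = 1.0 - exp(-opticalDepth);  // opacity = 1 - transmittance
  outColor = vec4(vec3(alpha), alpha);     // white medium, premultiplied alpha
}`;
```

For a constant density the midpoint sum is exact, so marchTransmittance(() => 0.5, 2, 64) equals exp(-1); the step count only matters once the density varies.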

Shading Language Updates

The first line must always be #version 300 es

The compiler needs it to know you’re using the new version; without it, the shader is compiled as GLSL ES 1.00.

Some of the new features:

- texture(sampler, uv).[rgba]: one overloaded lookup function replaces texture2D/textureCube

- flat/smooth interpolation qualifiers

- Flexible loop lengths in GLSL

- Dynamic indexing

See the GLSL ES 3.00 Specification for the complete feature set.

Images: Shuai Shao, Trung Le, Patrick Cozzi
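A sketch of a GLSL ES 3.00 shader pair exercising the features listed above: in/out instead of attribute/varying, the overloaded texture() lookup, flat/smooth qualifiers, a loop bounded by a uniform, and dynamic indexing of a uniform array. The attribute and uniform names are hypothetical, and hasVersion300es is a small helper enforcing the first-line rule; a template literal that begins with a line break would put the directive on line 2.

```typescript
const VS_300 = `#version 300 es
in vec3 aPosition;          // "attribute" in GLSL ES 1.00
in int aMaterial;           // integer attribute, fed with vertexAttribIPointer
flat out int vMaterial;     // flat: integers are not interpolated
smooth out vec2 vUv;        // smooth is the default, spelled out here
void main() {
  vMaterial = aMaterial;
  vUv = aPosition.xy * 0.5 + 0.5;
  gl_Position = vec4(aPosition, 1.0);
}`;

const FS_300 = `#version 300 es
precision highp float;
flat in int vMaterial;
smooth in vec2 vUv;
uniform sampler2D uTex;
uniform vec4 uTint[4];
uniform int uTaps;
out vec4 outColor;          // replaces gl_FragColor
void main() {
  // Loop bound from a uniform: rejected by WebGL 1.0, allowed in GLSL ES 3.00.
  vec4 sum = vec4(0.0);
  for (int i = 0; i < uTaps; ++i) {
    sum += texture(uTex, vUv + vec2(float(i) * 0.002, 0.0));  // texture() replaces texture2D()
  }
  vec4 base = sum / float(max(uTaps, 1));
  // Dynamic indexing of a uniform array with a per-primitive integer.
  outColor = base * uTint[vMaterial];
}`;

// The directive must be the very first line of the source.
function hasVersion300es(source: string): boolean {
  const firstLine = source.split("\n")[0];
  return /^#version 300 es\s*$/.test(firstLine);
}
```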

State of Industry Survey

State of: WebGL 2.0 Frameworks

- PlayCanvas: Commercial game engine, supports most WebGL 2.0 features

- Blend4Web: Blender-interoperable engine, supports most WebGL 2.0 features

- Shadertoy: Supports GLSL ES 3.00 fragment shaders with WebGL 2.0

- Frameworks that intend to support WebGL 2.0 in the near future:

  - Three.js: WebGL2Renderer [discussion]

  - Babylon.js: Implementing feature by feature [list]

  - Whitestorm.js, A-Frame: depend on Three.js

State of: WebGL 2.0 Games

After the Flood [article][video]

- Procedural clouds (3D textures)

- Procedural water ripples/reflections

- Animated trees

- Dynamic lights

- Leaf particle system

- Alpha to coverage (antialiased foliage)

- HDR rendering with MSAA for correct blending of antialiased HDR surfaces

- Hardware PCF for shadow filtering

- Compressed textures (DXT, PVR, ETC1)

- Asynchronous asset streaming

- Runtime lightmap baking

- Planar mirrors for mirrored surfaces

State of: Arts and Performance

- Just A Reflektor (2013), Arcade Fire + Google Data Arts

- Project SUN (2017), Philip Schütte + Venice Art Projects

- Stream (2016), Ezra Miller + Houston's Day for Night Festival

- HTC Vive Projection Mapping in WebVR (2016), Hien Huynh + Marpi @ DanceHackDay

- Virtual Art Sessions, Google Tilt Brush team

- Echolocation: SF WebGL Laser Light Show (2017), Juan de Joya + George Hurd @ Webfest 2017 (TBA)

State of: Data Visualization

- Visualisation of datasets with over 1 million data points at 60 fps.

- Regl: Declarative and stateless WebGL

- Pixi.js: Fast, flexible, 2D WebGL renderer

  - How Uber Uses Psychological Tricks to Push Its Drivers’ Buttons, New York Times [link]

- Deck.gl: Large-scale data visualisation

  - European flight paths [link]

- Three.js: High-level scene graph

  - Bear 71 by Jam3 [link]

- Google Earth VR: Virtual reconstruction of our planet [link]

- Shipmap: Visualisation of Global Cargo Ships [link]

- D3.js and Three.js: GeoJSON interoperation [link]

State of: WebVR/WebAR

- Device adoption is growing (96% for WebGL 1.0, 37% for WebGL 2.0).

- WebVR Specification v2 draft [link], slated for recommendation in Q3 2018

- Firefox v55 shipped WebVR by default (August 8, 2017)

Image: Don McCurdy

VR/AR: Non-WebGL Cases

- Different setups

  - Mobile 360/VR/AR

  - Room-scale VR/AR

  - (Full) body tracking

- Cinematic and/or narrative experiences

  - After Solitary, FRONTLINE/Emblematic Group

  - Pearl, Google Spotlight Stories

- Interaction/gaming experiences

  - Job Simulator, Owlchemy Labs

  - Robot Recall, Epic Games

- Virtual training/simulation

  - Pre-flight safety check, Qantas

  - Elevator maintenance (Azure IoT), thyssenkrupp

VR/AR: Non-WebGL Cases (cont.)

- Art

  - Installation 036, Steve Teeps

  - VR is for Artists, TIME

- Visualization

  - KPMG HoloLens Data Analytics tool, KPMG and Loook

  - Virtual Data Visualization Platform, Virtualitics

- Social VR

  - Facebook Spaces Demo, Facebook

- Medical/Health

  - Advanced Imaging and Medical Modeling Program, OSF Healthcare

  - Smart Bionics Medium Article, The Luke Hand Project

VR/AR: Non-WebGL Cases (cont.)

- Academic research

  - Putting yourself in the skin of a black avatar reduces implicit racial bias, Consciousness and Cognition (Vol 22, Issue 3; August 2013)

  - First Person Experience of Body Transfer in Virtual Reality, PLOS One (May 2010)

  - A Survey of Augmented Reality, Foundations and Trends in Human-Computer Interaction (Vol 8, Issue 2-3, March 2015)

  - Headset “Removal” for Virtual and Mixed Reality, Google Research and Daydream Labs (2017)

  - The Coming Age of Empathic Computing, Medium (2017)

- Industry standardization

  - OpenXR, Khronos Group

Why WebAR/WebVR?

- WebVR/AR is an important part of the ecosystem

- Benefits of WebGL: widespread cross-compatibility and adoption

  - Works across platforms: the WebVR specification does not distinguish which device you use.

- WebVR is becoming more readily available:

  - Firefox shipped WebVR for everyone in v55 (August 8, 2017)

Image: SantaWinsAgain

WebAR/WebVR Frameworks

- Frameworks include:

  - WebVR, Google’s JavaScript API for creating immersive VR experiences [demo]

  - A-Frame: Make WebVR with HTML and Entity-Component. [demo]

  - JSARtoolkit: Emscripten port of ARToolKit to JavaScript. [demo]

  - Argon.js: Adding augmented reality content to web applications [demo]

  - Awe.js: Mixed reality on the web [link]

Image: Mozilla

WebAR/WebVR: It’s Happening

- VR Web Browser

- WebVR NYC Snow Globe, Ronik Design

- Broken Night VR, Eko

- WebScreenVR, Samuel Cadillo

- WebVR experiments, Google

- Google I/O 2017 Prototype, Wayfair [Github]

- Posture Recognition using Kinect, Azure IoT, ML and WebVR, Microsoft

- BandExplorerVR, The Tin

- Virtuleap WebVR Hackathon

What’s Coming in the Future?

- The web is fundamentally a publishing platform. With WebGL powering rendering, AR/VR becomes feasible on the web.

- Many libraries are implementing WebGL 2.0 support, including Three.js with its brand-new WebGL2Renderer, which is being developed in parallel with WebGLRenderer.

- WebGL 2.0 Compute Shader extension

  - GPGPU computations, parallel data structures, ray tracing, AI/neural networks

- WebGL NEXT (2.1 onwards) will continue to bridge the gap between graphics drivers and the web

  - WebGL 2.0 just came out; the focus is on widespread adoption and growth. What comes next is still being figured out.

  - Pre-validated design to reduce draw call overhead.

  - Looking at next-generation APIs (Metal, Vulkan, DX12).

  - Eliminate a class of issues associated with OpenGL.

  - The GPU for the Web Community Group is a good place to engage in community conversations.

Conclusion and Resources

Conclusion

WebGL 2.0 exposes the OpenGL ES 3.0 feature set.

- Texture formats, transform feedback, multiple render targets enabling efficient graphics rendering

- Forward vs deferred rendering, and volumetrics

- Non-power-of-2 textures and direct texture access

WebGL empowers many fields of practice.

- Real-time graphics, data visualisation, performance arts

- WebAR and WebVR

- Supported by major companies and platforms

- Many available frameworks to choose from.

What will you create next?

Image: Khronos Group

Course Materials & Resources

Course materials available at:

Related resources:

- PicoGL.js: minimal, low-level WebGL 2-only rendering library.

- React Three.js Examples: update the scene graph with React.js.

- GPU for the Web Community: the next-generation "GPU on the web" API, tracking the feature set of the explicit-style APIs (D3D12, Metal, Vulkan).