Obtain original web presentation here:

https://slides.com/odineidolon/chym2017-8/fullscreen#/

This PDF version is of lower quality

HOW TO DEAL WITH OBSERVATIONAL DATA FOR (HYDROLOGICAL) MODELLING PURPOSES

ADRIANO FANTINI

ICTP, Trieste, Italy

afantini@ictp.it


Which observations do you need for hydrology?

- Precipitation (possibly hourly, esp. for small basins)

- Temperature

- Snow

- Elevation data

- Land use data

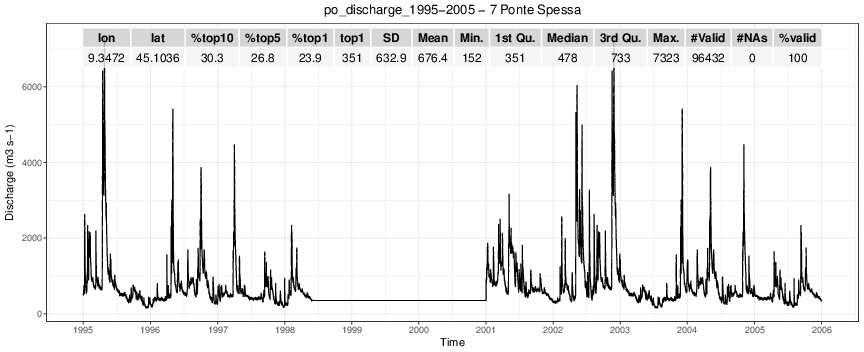

- Discharge / water stage

Dense or sparse?

Gridded:

- Precipitation

- Temperature

- Elevation

- Land use

In-situ:

- Precipitation

- Temperature

- Discharge

- Water stage

Gridded

Advantages

- Uniform availability, often global

- Easy to compare with models

- Generally straightforward formats (e.g. NetCDF)

- Efficient processing

- Different variables on the same grid

- Usually quality-controlled

Disadvantages

- Heavily dependent on the gridding method

- Not suitable for comparison at specific points

- Usually derived from in-situ data

- Dataset resolution != actual (effective) resolution (!!!)

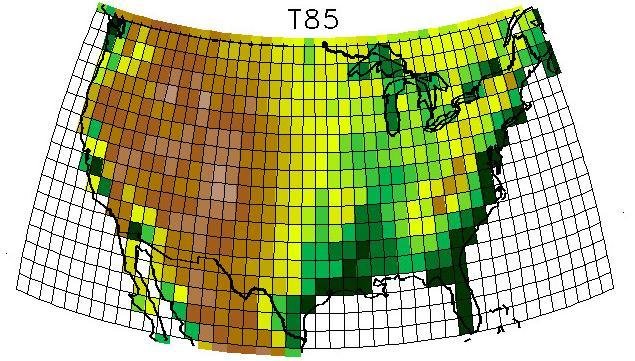

Basic categories:

- Inverse Distance Weighting

- Kriging

- Spline Interpolation

- Surface polygons

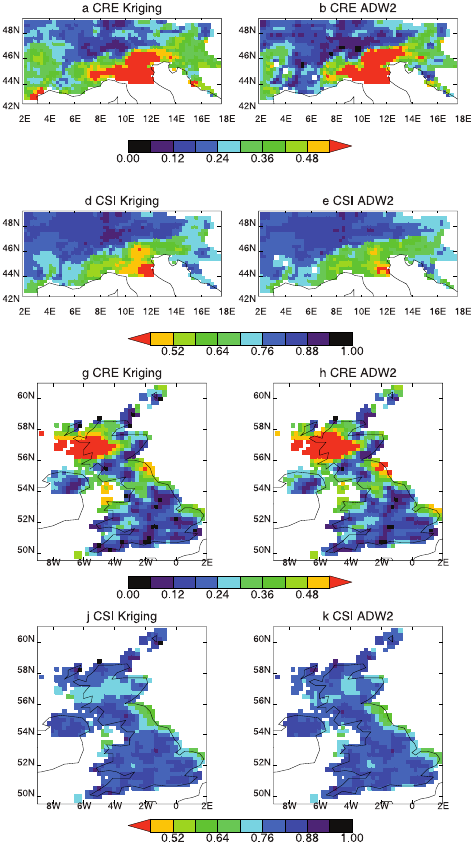

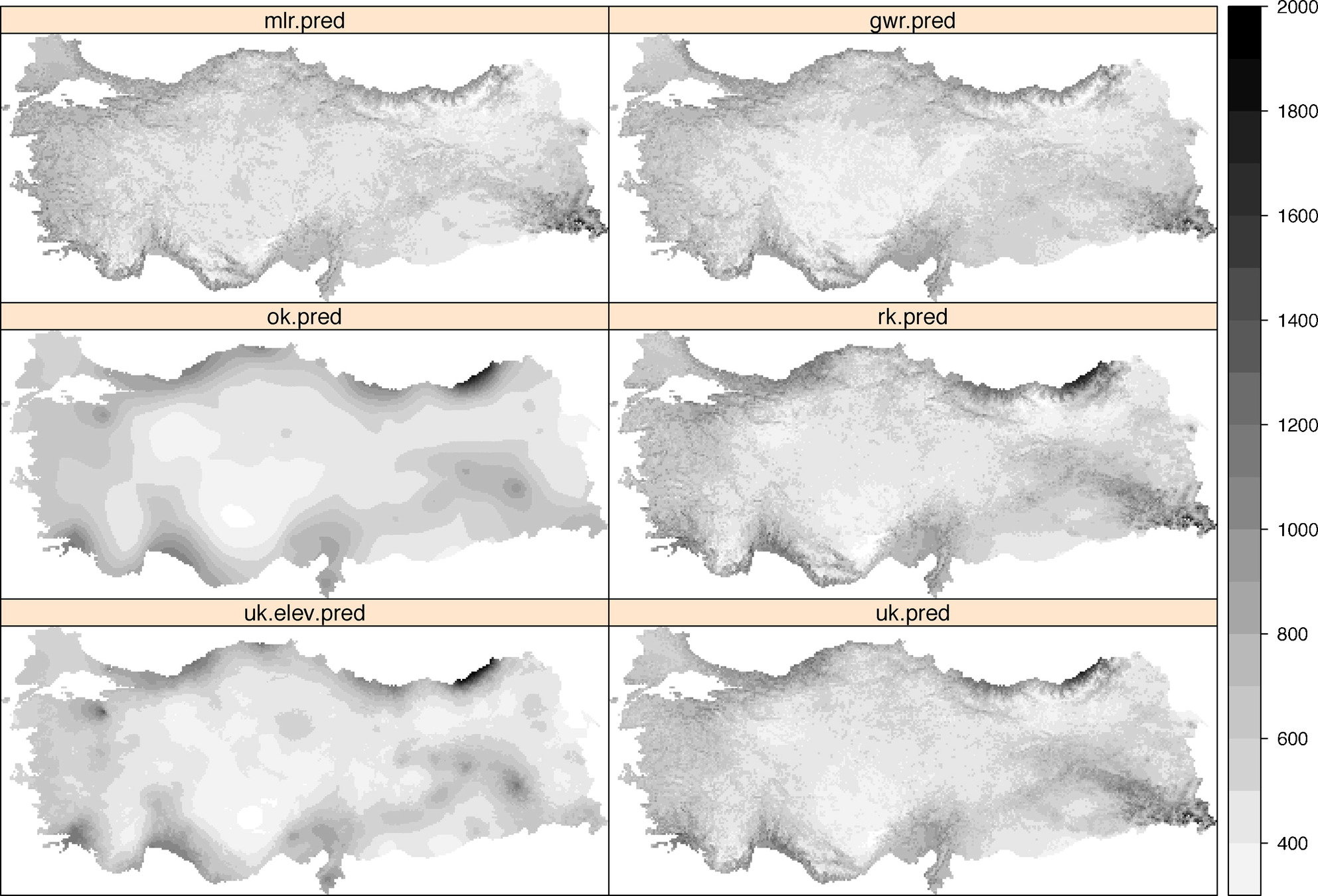

Gridding methods

CAN HAVE DIFFERENT RESULTS!

Mohr, 2008


Hofstra, 2008

Bostan, 2012
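The following sketch shows why the gridding method matters. It is a minimal inverse distance weighting (IDW) example. The function names `idw` and `idw_grid`, the `(x, y, value)` station tuples, planar coordinates and the default power of 2 are all assumptions for illustration. This is not the method behind any specific gridded dataset.

```python
import math


def idw(stations, x, y, power=2.0):
    """Inverse distance weighting estimate at point (x, y).

    stations: list of (x, y, value) tuples in planar coordinates (assumed km).
    power: exponent applied to distance; larger values favour nearer stations.
    """
    num = 0.0
    den = 0.0
    for sx, sy, value in stations:
        d = math.hypot(x - sx, y - sy)
        if d == 0.0:
            return value  # target coincides with a station: use its value
        w = 1.0 / d ** power
        num += w * value
        den += w
    return num / den


def idw_grid(stations, xs, ys, power=2.0):
    """Evaluate IDW on a regular grid, returned as one row per y value."""
    return [[idw(stations, x, y, power) for x in xs] for y in ys]


if __name__ == "__main__":
    gauges = [(0.0, 0.0, 10.0), (2.0, 0.0, 20.0)]
    # Same stations, same target point, different power -> different estimate
    print(idw(gauges, 0.5, 0.0, power=1.0))
    print(idw(gauges, 0.5, 0.0, power=2.0))
```

Take two gauges 2 km apart and a point 0.5 km from the first. The estimate there moves from about 12.5 to about 11.0 when only the IDW power changes. This is a tiny-scale version of the "different results" shown above.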

In-situ

Advantages

- No gridding/smoothing -> good for extremes

- Easy to compare with models (e.g. discharge at a given point)

- Nothing is hidden from the user

- Dataset resolution == actual resolution

- Metadata!

Disadvantages

- Scarce data availability

- Often in unusual, non-standard formats

- Often lacking quality control

- Hard to compare with gridded (e.g. climate) models for precipitation and temperature (PR, T)

Common problems with in-situ measurements

Temporal and spatial problems:

- Short timescale

- Missing periods

- Low station density

- Missing timesteps

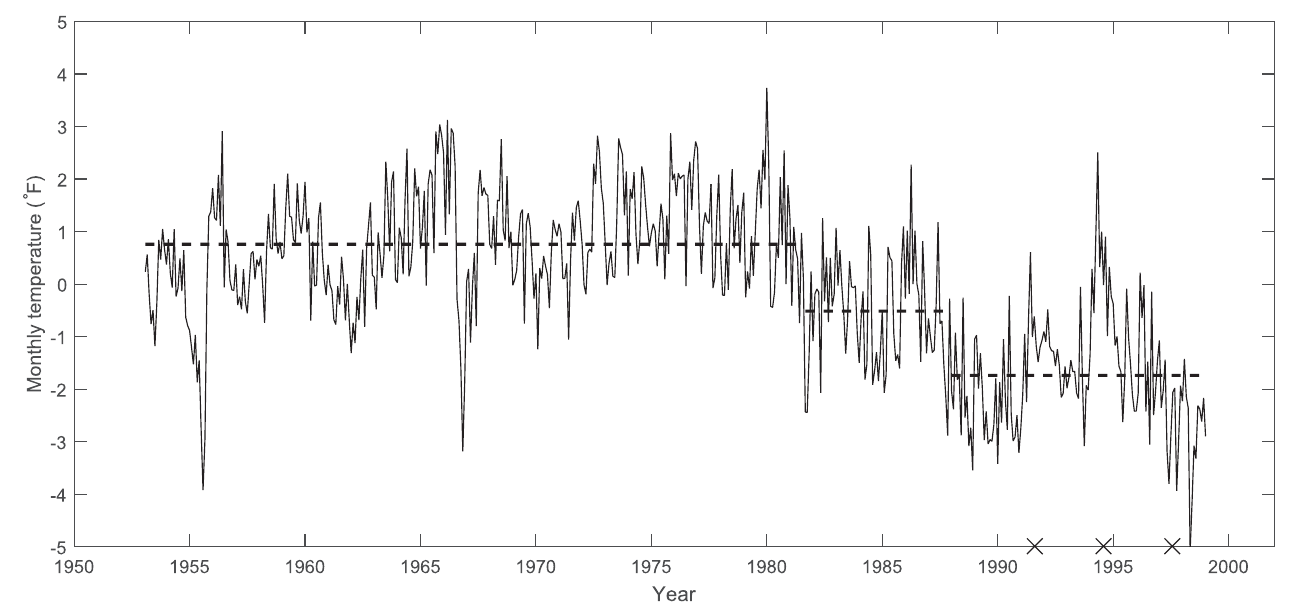

Data quality problems:

- Breaks and inhomogeneities

- Manual measurement errors

- Equipment errors and failures

- Weather-related measurement errors

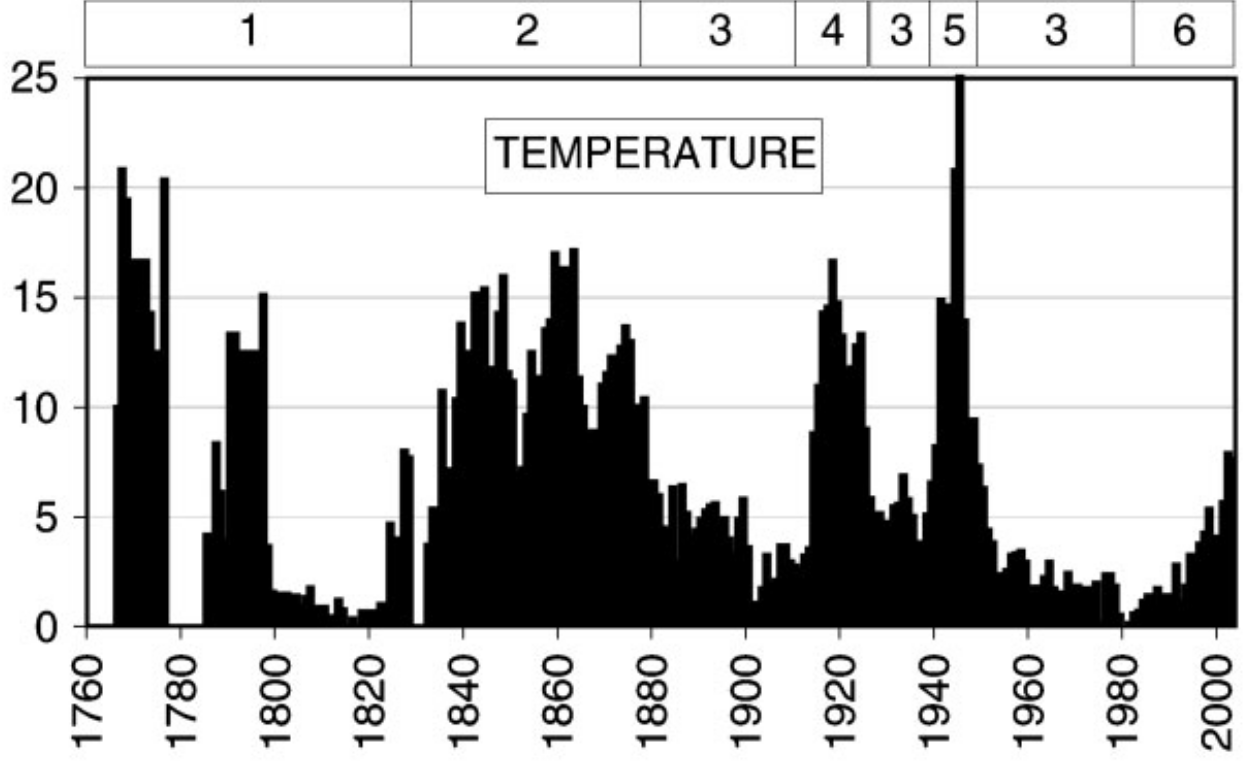

Temporal and spatial problems

- Short timescale

- Low station density

- Missing timesteps

- Missing periods

HISTALP database, Bohm et al., 2007
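Missing timesteps and missing periods can be located automatically before any further quality control. Below is a minimal sketch for hourly data. The function name, the hourly step, and the 24-step threshold that separates isolated missing timesteps from missing periods are all assumptions.

```python
from datetime import datetime, timedelta


def find_gaps(timestamps, step=timedelta(hours=1), long_gap_steps=24):
    """List the gaps in a sorted series of timestamps from one station.

    Assumes timestamps lie on a regular grid of `step` (e.g. on the hour).
    Each gap is (first_missing, last_missing, n_missing, kind), where kind is
    "timesteps" for short gaps and "period" for gaps of at least
    `long_gap_steps` missing values (a hypothetical threshold).
    """
    gaps = []
    for prev, cur in zip(timestamps, timestamps[1:]):
        if cur - prev > step:
            n_missing = (cur - prev) // step - 1
            kind = "period" if n_missing >= long_gap_steps else "timesteps"
            gaps.append((prev + step, cur - step, n_missing, kind))
    return gaps


if __name__ == "__main__":
    t0 = datetime(2016, 1, 1)
    hours = [0, 1, 2, 5, 6, 40, 41]
    series = [t0 + timedelta(hours=h) for h in hours]
    for gap in find_gaps(series):
        print(gap)
```

In this toy series, hours 3-4 are reported as two missing timesteps. Hours 7-39 are reported as a 33-hour missing period.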

Data quality problems

- Manual measurement errors

- Equipment errors and failures

Hewaarachchi et al., 2016

Data quality problems

Inhomogeneities

- Changes in measurement time

- Station relocations

- Instrumentation upgrades

- Incorrect maintenance

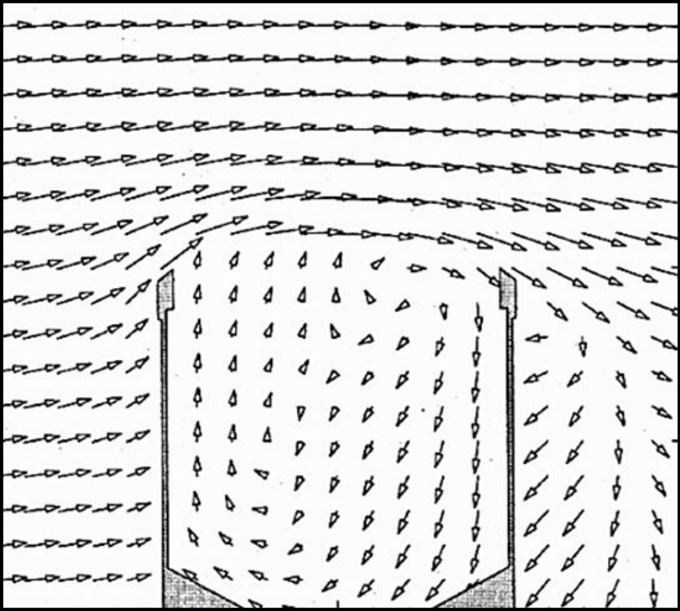

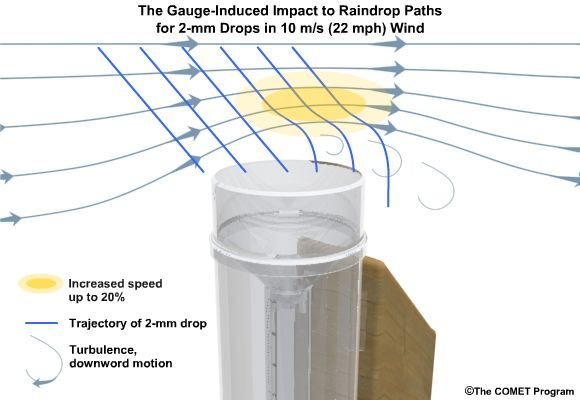
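Inhomogeneities like these are often searched for with statistical break tests. One common example is Alexandersson's Standard Normal Homogeneity Test (SNHT), which this minimal single-break sketch implements. The source does not say which test, if any, was used here.

The method works in three steps:

1. Standardize the series.
2. For every candidate split k, compute T(k) = k·z̄₁² + (n-k)·z̄₂², where z̄₁ and z̄₂ are the means of the standardized values before and after the split.
3. Take the k with the largest T as the most likely break.

In practice the test is usually applied to a difference (or ratio) series against a reference built from neighbouring stations. T_max is then compared with tabulated critical values, which this sketch omits.

```python
import statistics


def snht_break(series):
    """Return (k, T_max) for the most likely single break.

    k is the number of values before the break, so the suspected new regime
    starts at index k. Significance (critical values) is NOT assessed here.
    """
    n = len(series)
    mean = statistics.fmean(series)
    sd = statistics.pstdev(series)
    if sd == 0.0:
        return None, 0.0  # constant series: nothing to detect
    z = [(v - mean) / sd for v in series]
    best_k, best_t = None, float("-inf")
    for k in range(1, n):
        z1 = statistics.fmean(z[:k])  # mean before the candidate break
        z2 = statistics.fmean(z[k:])  # mean after the candidate break
        t = k * z1 ** 2 + (n - k) * z2 ** 2
        if t > best_t:
            best_k, best_t = k, t
    return best_k, best_t


if __name__ == "__main__":
    # Toy annual series with a step of about +3 after a (hypothetical) relocation
    toy = [10.0, 10.5, 9.8, 10.2, 10.1, 12.9, 13.2, 13.0, 12.8, 13.1]
    print(snht_break(toy))
```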

Data acquisition problems

Measurement errors due to:

- Sensor icing

- Lack of power

- Vandalism

- Lack of maintenance

- Gauge undercatch

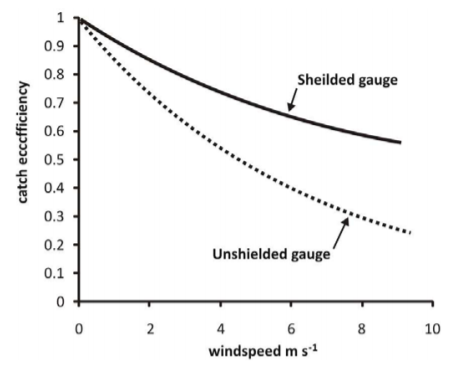

EXAMPLE: PRECIPITATION GAUGE UNDERCATCH

Nespor and Sevruk, 1999

- On average ~30%?

- ~80% for extreme winter solid-precipitation events?

Macdonald and Pomeroy, 2008

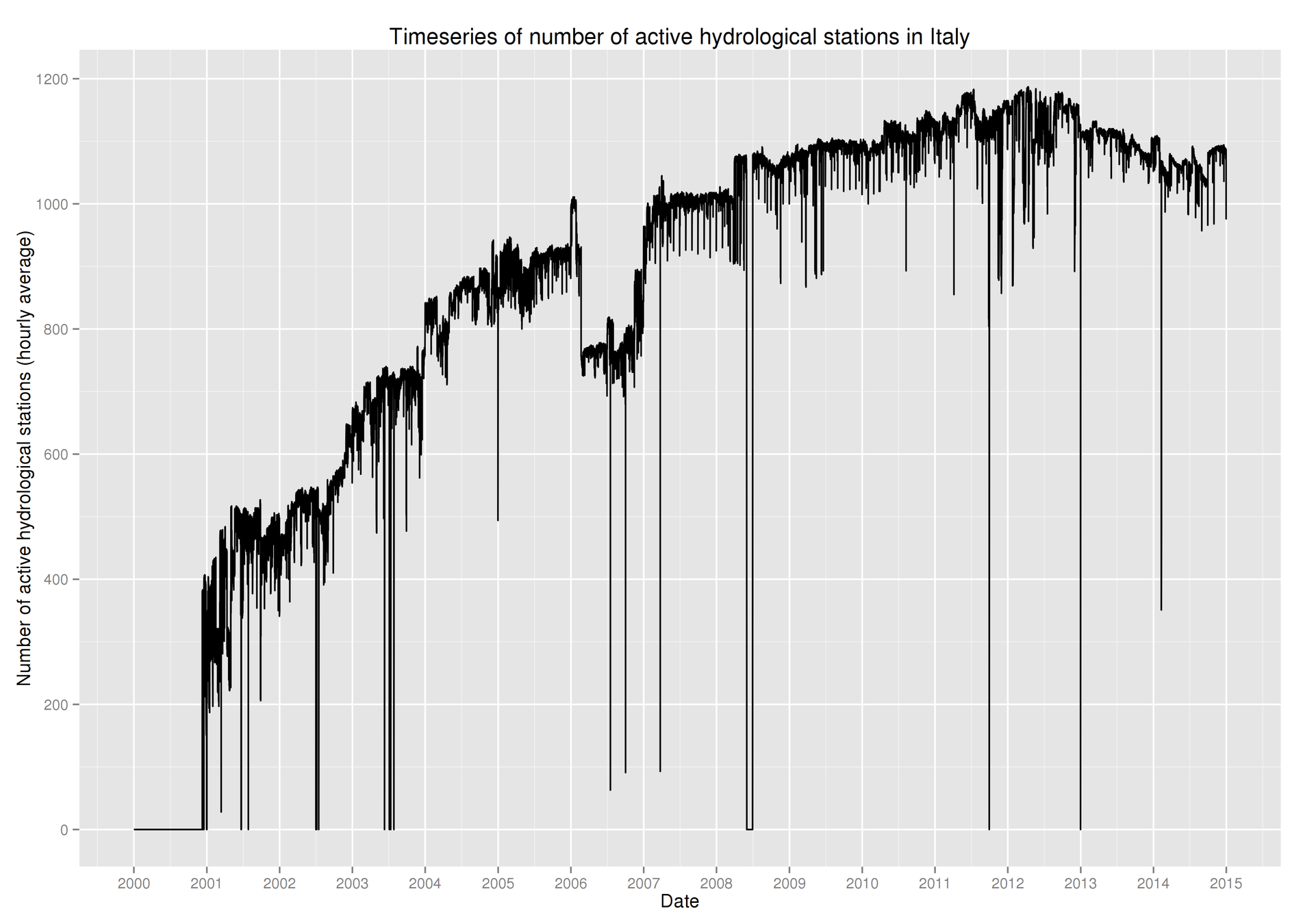

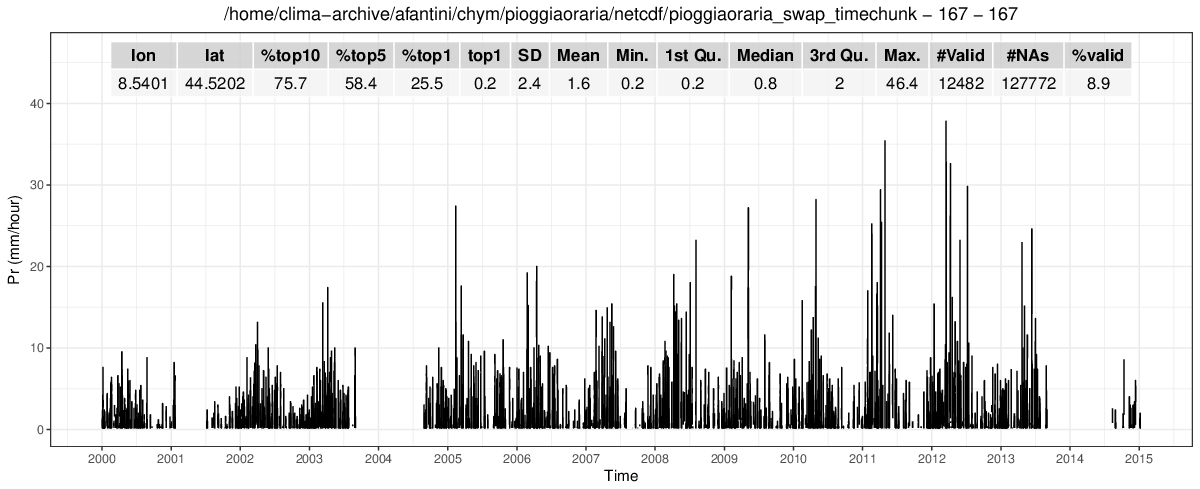

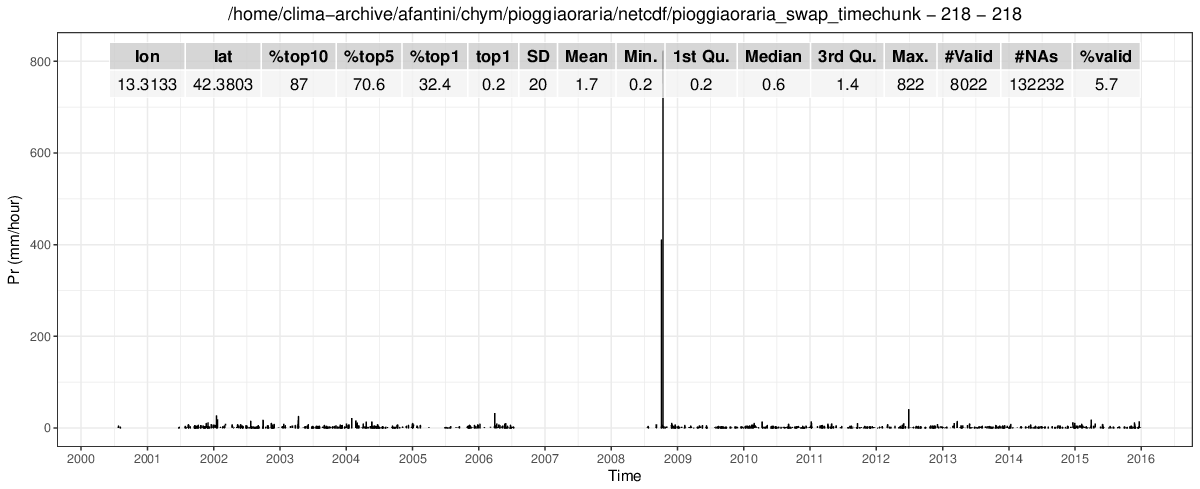

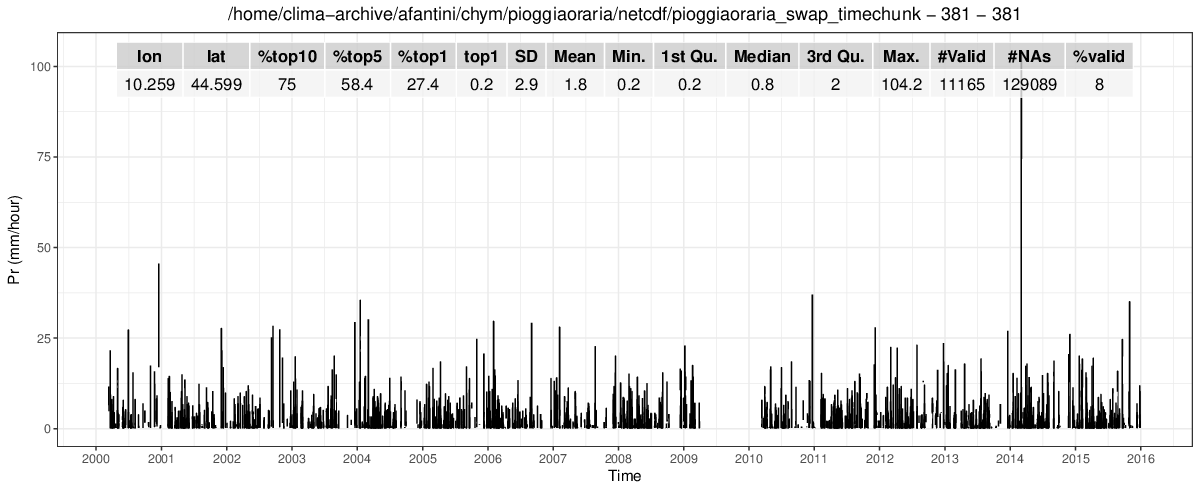

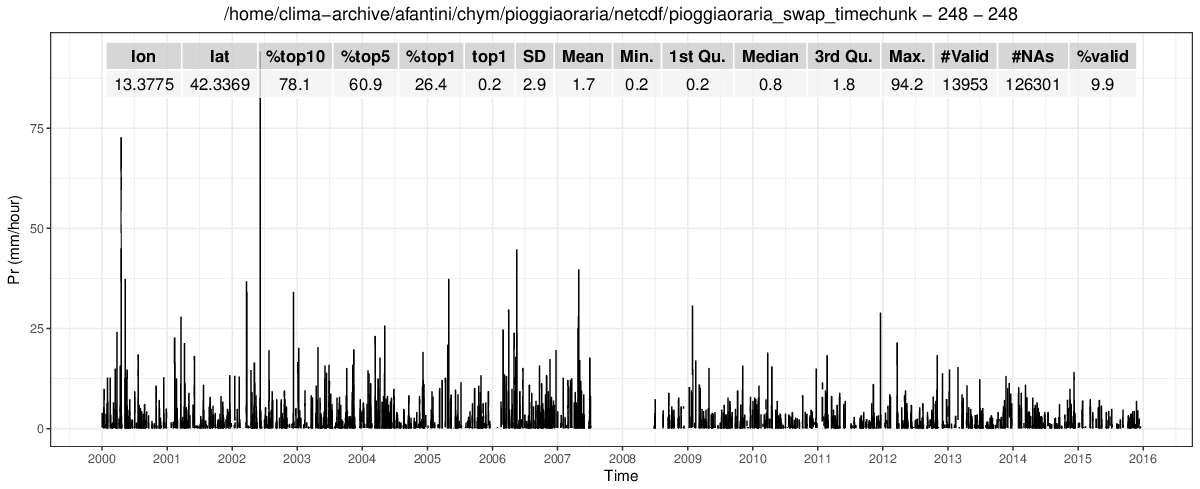
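Undercatch percentages like those above imply large corrections. The arithmetic is sketched below, assuming that an undercatch of X% means the gauge collects (100 - X)% of the true precipitation, so the catch ratio is 1 - X/100. The constant-factor function is hypothetical. Operational corrections instead use transfer functions that depend on wind speed and precipitation type, and those are not reproduced here.

```python
def correct_undercatch(measured_mm, undercatch_fraction):
    """Scale a gauge total by an assumed constant catch ratio.

    undercatch_fraction: share of true precipitation NOT caught (0 <= f < 1),
    e.g. 0.3 for a 30% undercatch. Hypothetical constant-factor correction.
    """
    if not 0.0 <= undercatch_fraction < 1.0:
        raise ValueError("undercatch_fraction must be in [0, 1)")
    catch_ratio = 1.0 - undercatch_fraction
    return measured_mm / catch_ratio


if __name__ == "__main__":
    print(correct_undercatch(7.0, 0.3))  # average case
    print(correct_undercatch(2.0, 0.8))  # extreme winter solid event
```

Under this assumption, 7 mm measured with a 30% undercatch and 2 mm measured with an 80% undercatch both correspond to about 10 mm of true precipitation. For snow, the correction is therefore larger than the measurement itself.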

An in-situ example

- Precipitation

- Hourly

- From different institutions

- ~2200 stations on average

- Uneven spatial coverage

- 2000-2016


Time series alone are usually not enough to identify inhomogeneities and errors

Metadata

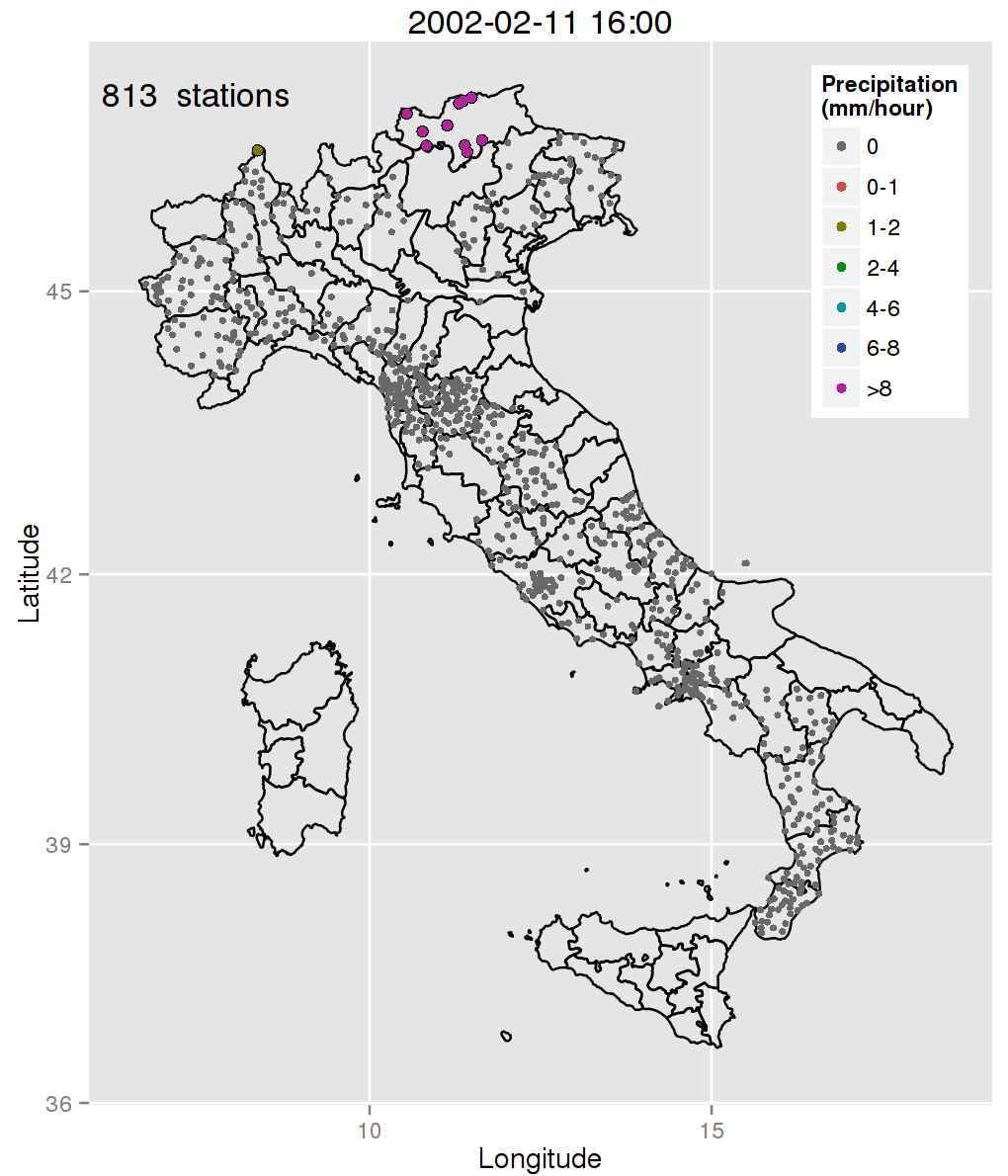

Spatial analysis

What can we do based on the time series alone?

- Cut outliers above a given fixed threshold

- Variable threshold based on the standard deviation (SD) or interquartile range (IQR)

- Remove consecutive suspicious values
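The time-series checks above can be sketched with the Python standard library. The function names, the SD and IQR factors, and the minimum run length are hypothetical choices, not values from the source. An outlier inflates the SD it is tested against, which is one reason IQR fences are considered more robust. For hourly precipitation, most values are zero, so SD and IQR thresholds computed on all values are degenerate. One option is to compute them on wet values only.

```python
import statistics


def fixed_threshold_flags(values, max_value):
    """Flag values above a fixed, physically motivated limit."""
    return [v > max_value for v in values]


def sd_flags(values, k=4.0):
    """Flag values farther than k sample standard deviations from the mean."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)
    return [abs(v - mean) > k * sd for v in values]


def iqr_flags(values, k=3.0):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v < low or v > high for v in values]


def repeated_value_flags(values, min_run=5, ignore=0.0):
    """Flag runs of at least min_run identical consecutive values.

    Runs of `ignore` (e.g. dry hours with 0.0 mm) are not flagged.
    """
    flags = [False] * len(values)
    start = 0
    for i in range(1, len(values) + 1):
        if i == len(values) or values[i] != values[start]:
            if i - start >= min_run and values[start] != ignore:
                for j in range(start, i):
                    flags[j] = True
            start = i
    return flags


if __name__ == "__main__":
    temps = [10, 11, 12, 11, 10, 12, 11, 50]
    print(fixed_threshold_flags(temps, 40))
    print(iqr_flags(temps))
    print(repeated_value_flags([0, 0, 0, 0, 0, 0, 1.2, 1.2, 1.2, 1.2, 1.2, 3]))
```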

Metadata

All the information that is not the data itself:

- Gauge type and characteristics

- Station history (relocations, upgrades...)

- Recorded changes in station environment

- News reports about extreme events (hard to find for old data)

WE OFTEN DO NOT HAVE ACCESS TO THIS, AND COLLECTING IT IS EXTREMELY TIME-CONSUMING

Spatial analysis

Maps + comparison with neighbouring stations

- Can be automated once a criterion is chosen

- Possible criteria for choosing reference stations: nearest neighbours, distance radius, elevation range, high correlation...

REQUIRES A HIGH ENOUGH STATION DENSITY

HARD TO DO FOR HIGHLY SPATIALLY VARIABLE FIELDS (e.g. PRECIPITATION) OR REGIONS (e.g. MOUNTAINS)

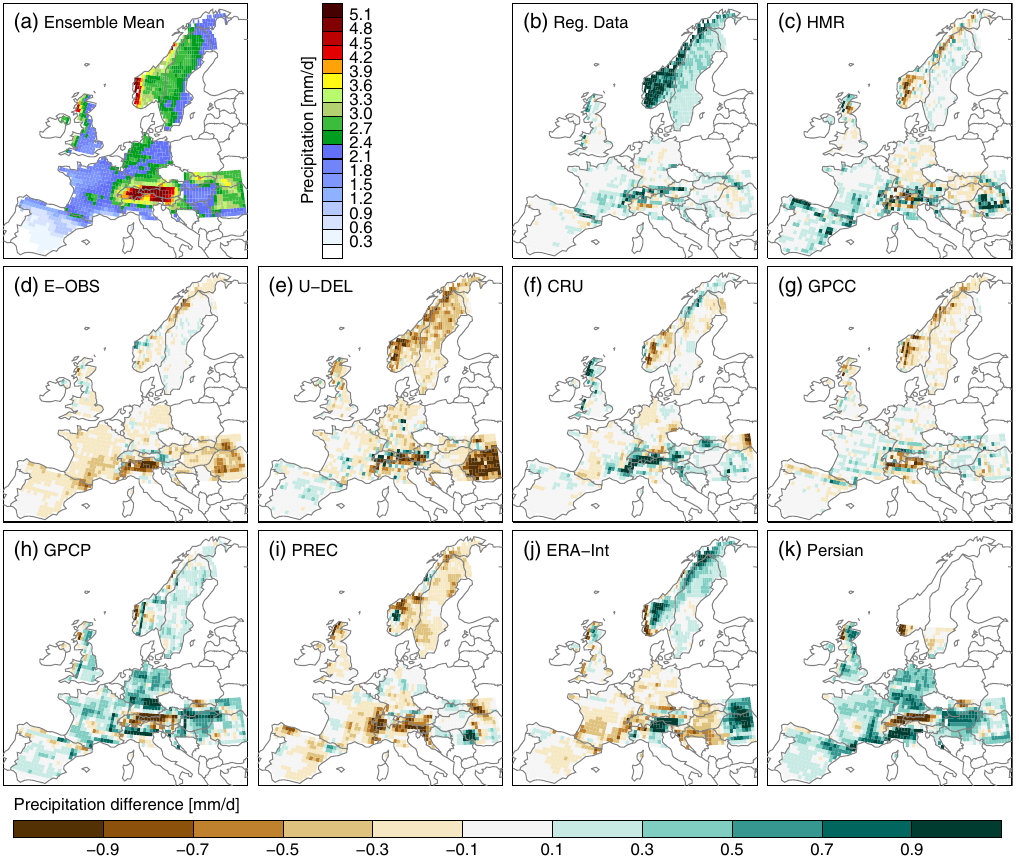

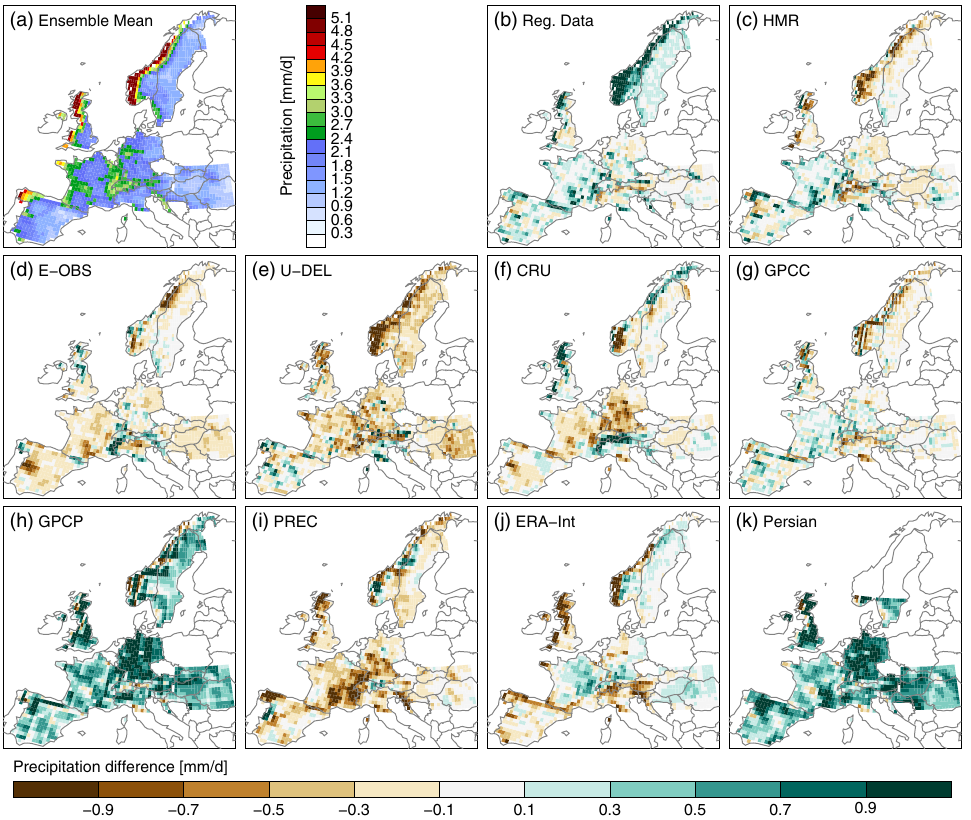
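A neighbour comparison like the one above can be automated once a criterion is fixed. The sketch below selects reference stations by distance radius and elevation range, then flags the target if it deviates from the neighbours' median by more than a tolerance. The `Station` class, the planar km coordinates, and the defaults (25 km, 300 m, at least 3 neighbours) are hypothetical. Returning `None` when too few neighbours exist reflects the station-density requirement.

```python
import math
import statistics
from dataclasses import dataclass


@dataclass
class Station:
    name: str
    x_km: float  # planar (projected) coordinates, assumed in km
    y_km: float
    elev_m: float
    value: float  # observation at the time step being checked


def reference_stations(target, stations, radius_km=25.0, max_dz_m=300.0):
    """Neighbours within a distance radius and an elevation range."""
    return [
        s for s in stations
        if s.name != target.name
        and math.hypot(s.x_km - target.x_km, s.y_km - target.y_km) <= radius_km
        and abs(s.elev_m - target.elev_m) <= max_dz_m
    ]


def spatial_check(target, stations, tol, min_neighbours=3, **criteria):
    """True if the target deviates from the neighbour median by more than tol.

    Returns None when too few reference stations are available.
    """
    refs = reference_stations(target, stations, **criteria)
    if len(refs) < min_neighbours:
        return None  # station density too low to judge
    ref_value = statistics.median([s.value for s in refs])
    return abs(target.value - ref_value) > tol
```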

Even after correction...

Prein et al., 2017

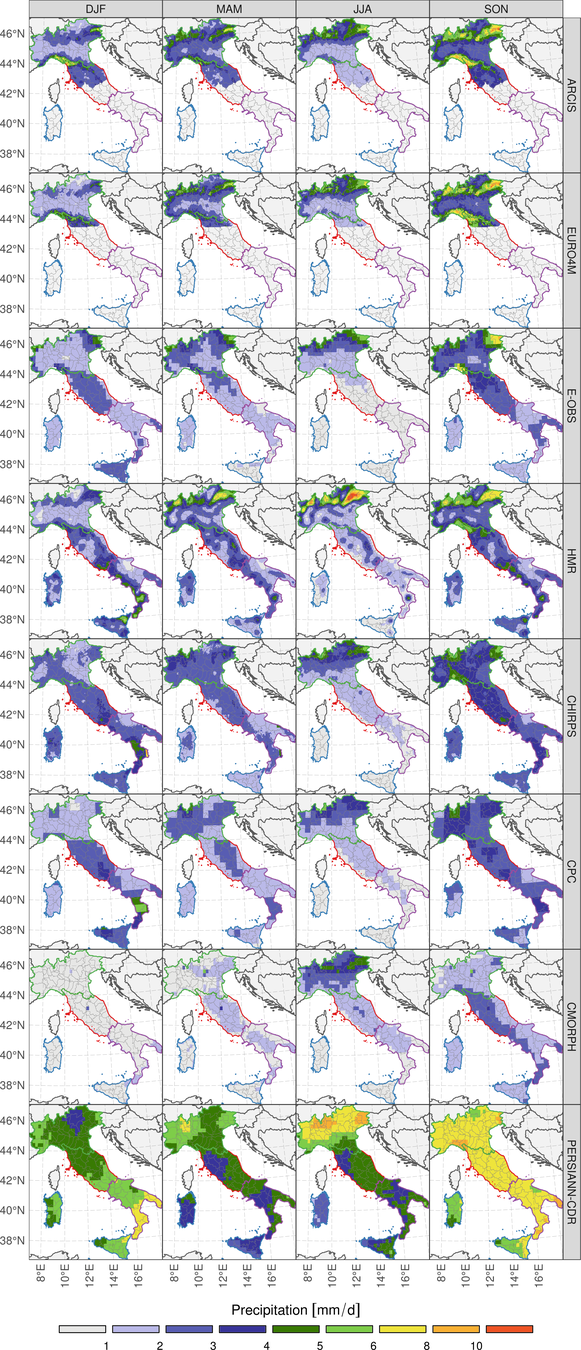

Panels: JJA, DJF

Prein et al., 2017

Mean precipitation, 2001-2016 (panels: DJF, MAM, JJA, SON)

2001-2016

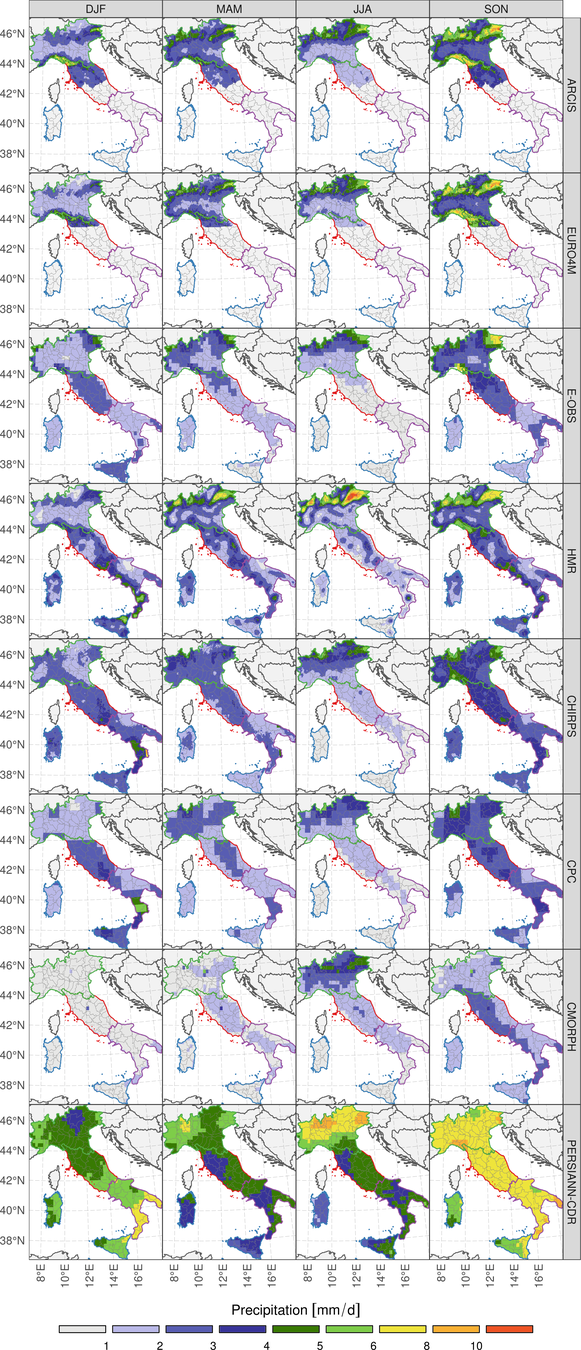

Precipitation probability density function

(Northern Italy only)
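A PDF like the one above can be estimated from hourly station data roughly as follows. The wet-hour threshold of 0.1 mm, the logarithmic bins and the 100 mm upper limit are assumptions for illustration. The source does not state how the figure was produced. Each density value is the fraction of wet hours in a bin divided by the bin width, so the result integrates to 1 when no wet value reaches the upper limit.

```python
import bisect
import math


def wet_hour_pdf(values_mm, wet_threshold=0.1, max_mm=100.0, n_bins=12):
    """Empirical PDF (1/mm) of wet-hour precipitation on log-spaced bins.

    Returns (edges, density). Values at or above max_mm fall outside the
    bins, in which case the PDF integrates to less than 1.
    """
    lo, hi = math.log10(wet_threshold), math.log10(max_mm)
    edges = [10 ** (lo + (hi - lo) * i / n_bins) for i in range(n_bins + 1)]
    edges[0], edges[-1] = wet_threshold, max_mm  # avoid float drift at ends
    wet = [v for v in values_mm if v >= wet_threshold]
    counts = [0] * n_bins
    for v in wet:
        i = bisect.bisect_right(edges, v) - 1  # bin with edges[i] <= v
        if 0 <= i < n_bins:
            counts[i] += 1
    n = len(wet)
    density = [
        c / (n * (edges[i + 1] - edges[i])) if n else 0.0
        for i, c in enumerate(counts)
    ]
    return edges, density
```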

A few remarks

The best approach to correct data is heavily dependent on:

- Application

- Variable (e.g. precipitation > discharge > temperature)

- Availability of metadata

- Station density

- Length of the records

- Human resources available for manual checking

A CORRECTION WILL OFTEN NOT BE POSSIBLE, LEAVING OBSERVATIONAL DATA WITH VERY HIGH UNCERTAINTY

But... what about other data sources?

RADAR

- Only for precipitation

- Quality depends heavily on location (e.g. distance from the radar)

- Beam can be blocked by topography

- Signal can be attenuated by intense rain

- Frequent downtime

SATELLITE

- Precipitation, temperature

- ~Worldwide coverage

- The same algorithm is not necessarily good everywhere

- Resolution is generally poor (0.25° at best)

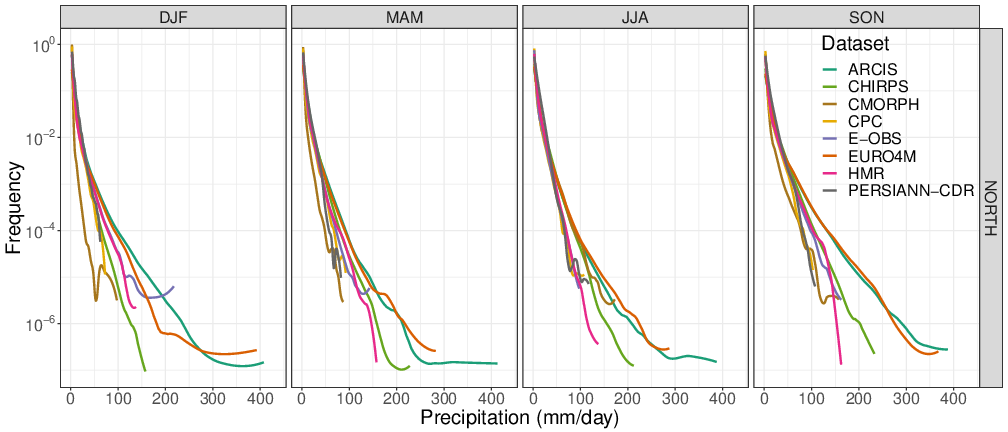

Liu, 2014

They are just proxies!

You need to choose a retrieval algorithm

But they are getting better and better!

TRMM ALGORITHMS CORR

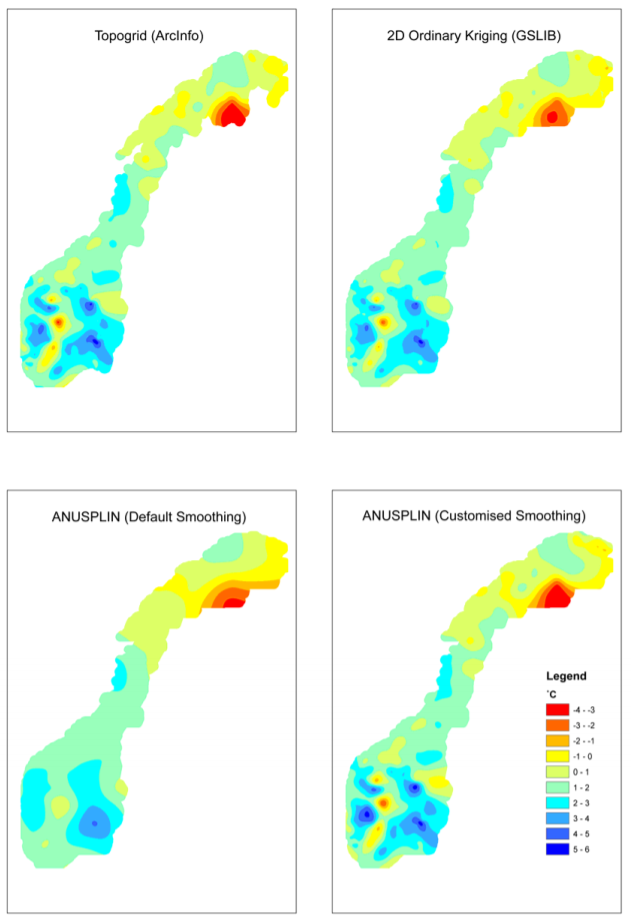
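The remark that "they are just proxies" can be made concrete for radar. The instrument measures reflectivity Z, which is converted to rain rate R through an empirical power law, Z = a·R^b. The sketch below compares two well-known coefficient pairs:

- Marshall-Palmer: a = 200, b = 1.6.
- The convective relation used by default in the US WSR-88D network: a = 300, b = 1.4.

Picking one is exactly the kind of algorithm choice mentioned above. Real processing chains add many further corrections (clutter, beam blockage, attenuation) that are not shown.

```python
def rain_rate_from_dbz(dbz, a=200.0, b=1.6):
    """Invert Z = a * R**b for R in mm/h, with Z (mm^6 m^-3) given in dBZ."""
    z_linear = 10.0 ** (dbz / 10.0)  # dBZ -> linear reflectivity
    return (z_linear / a) ** (1.0 / b)


if __name__ == "__main__":
    for dbz in (20.0, 35.0, 50.0):
        r_mp = rain_rate_from_dbz(dbz)  # Marshall-Palmer
        r_conv = rain_rate_from_dbz(dbz, a=300.0, b=1.4)  # WSR-88D convective
        print(f"{dbz:.0f} dBZ: {r_mp:.1f} mm/h vs {r_conv:.1f} mm/h")
```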

Digital Elevation Models

Usually satellite-based, sometimes LiDAR

DEMs:

- ASTER (30m)

- SRTM (30/90m)

- HydroSHEDS (90m)

- JAXA ALOS (30m)

- GTOPO (1km)

- WorldDEM (12m)

- Local, national DEMs

- ...

Scale: ~100 km

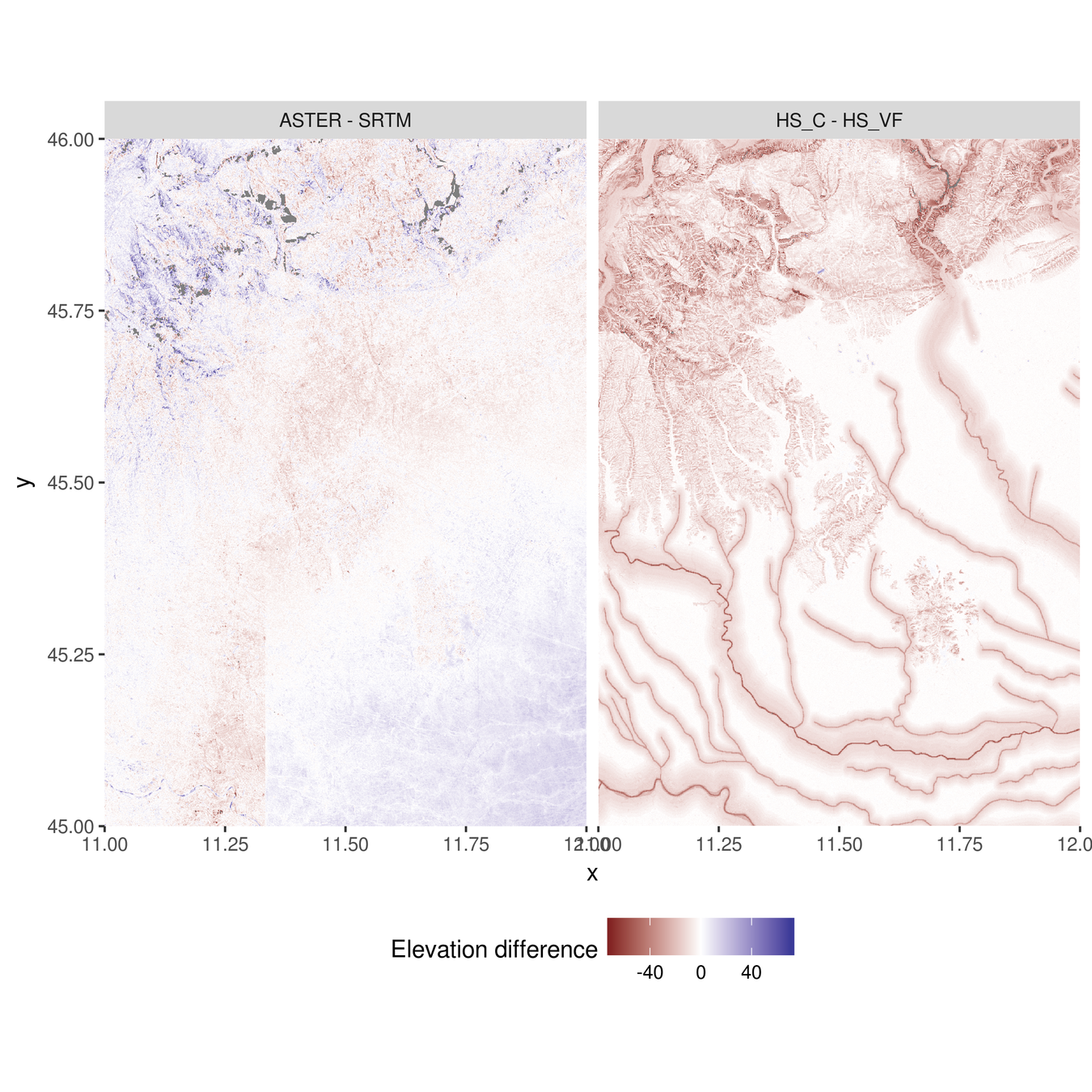

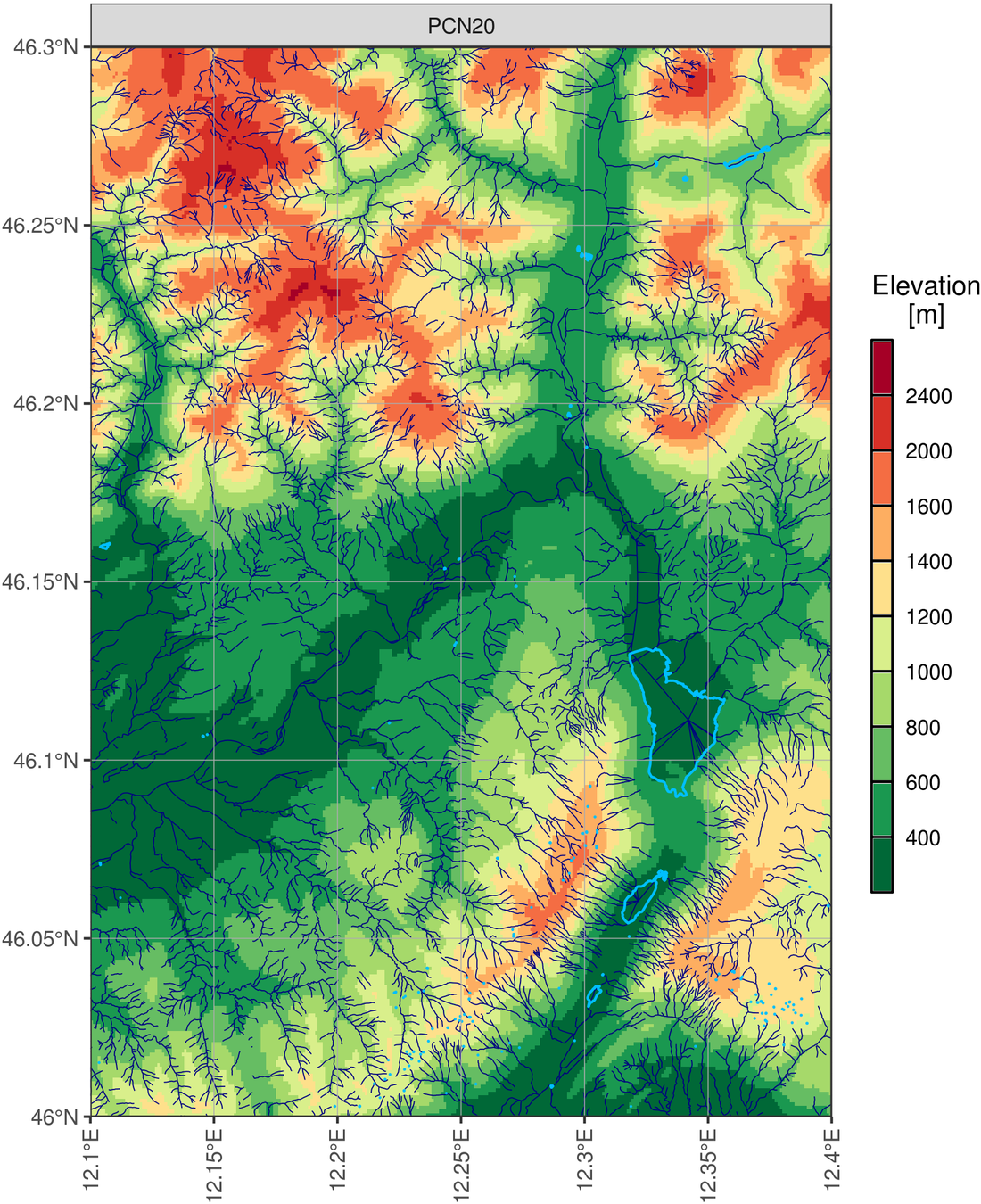

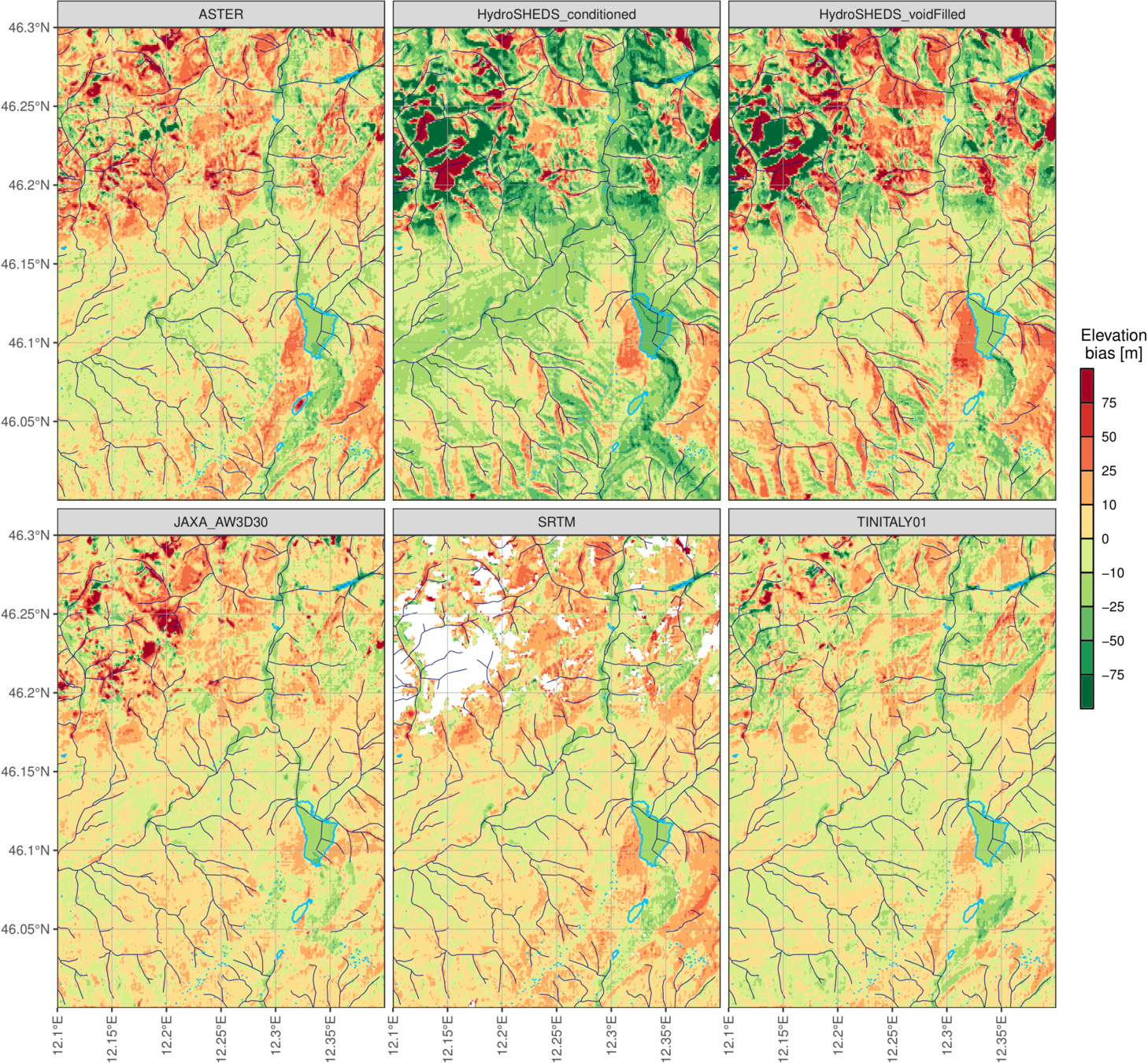

Another example: comparison over a small area

High-resolution official Italian DEM:

- 20m resolution

- Obtained from military contour maps

- Compared with:

  - ASTER
  - HS-c
  - HS-vf
  - JAXA
  - SRTM
  - TINITALY01

- All remapped onto a 100m grid

Scale: ~30 km

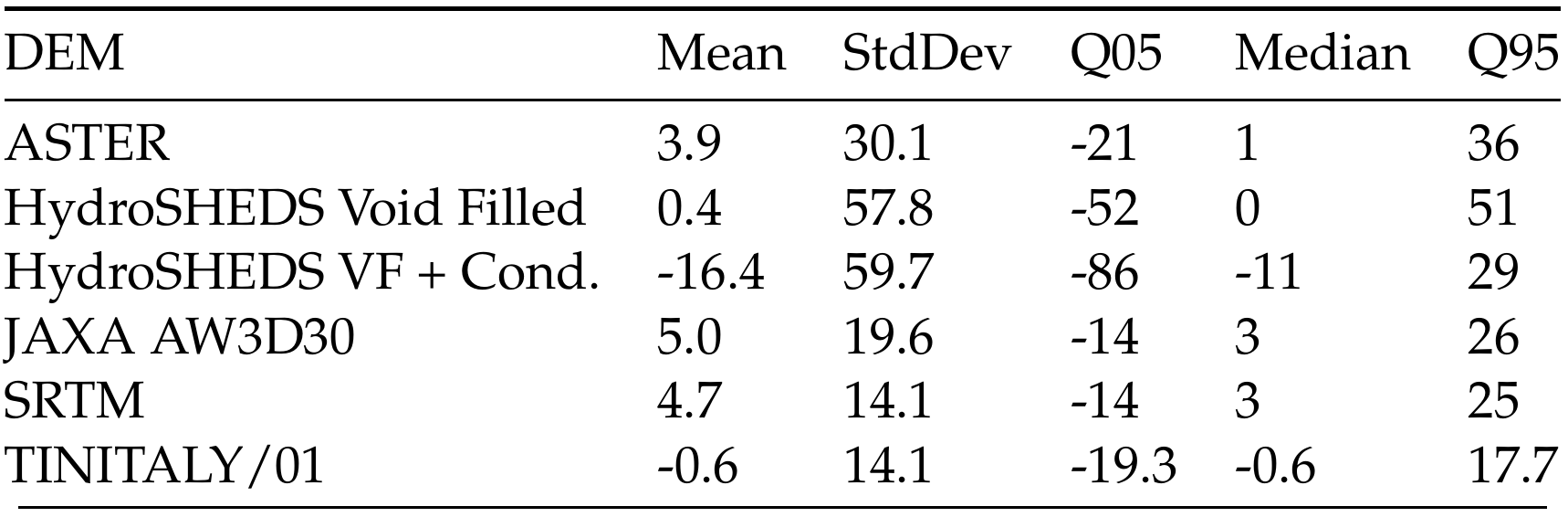

Panels: ASTER, HS-c, HS-vf, JAXA, SRTM, TINITALY01

- Mean height differences of several meters

- 5th-95th percentile range of the bias up to 115 meters

- Standard deviation of the bias up to 60 meters

- Even the best alternative DEM has a 5th-95th percentile bias range of 35 meters, more than enough to cause problems in river routing!

- CHOICE OF DEM MATTERS!
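The statistics quoted above (mean bias, standard deviation of the bias, and 5th-95th percentile range) can be computed cell by cell once two DEMs share a grid. Below is a minimal sketch on flat lists of elevations. The function name and the `nodata` handling are assumptions, and reading real rasters (e.g. GeoTIFF) is left out.

```python
import statistics


def dem_bias_stats(candidate, reference, nodata=None):
    """Bias statistics (m) of a candidate DEM against a reference DEM.

    Both inputs are flat sequences of cell elevations on the same grid,
    e.g. after remapping to a common resolution. Cells equal to `nodata`
    in either DEM are skipped.
    """
    bias = [c - r for c, r in zip(candidate, reference)
            if c != nodata and r != nodata]
    p = statistics.quantiles(bias, n=20, method="inclusive")  # 5% steps
    return {
        "mean_bias": statistics.fmean(bias),
        "sd_bias": statistics.pstdev(bias),
        "p05_p95_range": p[18] - p[0],  # 95th minus 5th percentile
    }
```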

Take home message

- Do not underestimate observational uncertainty

- Choose your data source based on your application

- Never-ever blindly trust unchecked obs data!!!