Zero to DevOps in Under an Hour with Kubernetes

http://slides.com/dalealleshouse/kube-azure

Who is this guy?

Dale Alleshouse

@HumpbackFreak

hideoushumpbackfreak.com

github.com/dalealleshouse

Agenda

- What is Kubernetes?

- Kubernetes Goals

- Kubernetes Basic Architecture

- Awesome Kubernetes Demo!

- What Now?

What is Kubernetes?

- AKA K8S

- Greek for Ship's Captain (κυβερνήτης)

- Google's Open Source Container Management System

- https://github.com/kubernetes

- Lessons Learned from Borg/Omega

- K8S is speculated to replace Borg

- Released June 2014

- 1.0 Release July, 2015

- Currently in Version 1.6

- Highest Market Share

K8S Goals

- Container vs. VM Focus

- Portable (Run everywhere)

- General Purpose

- Any workload - Stateless, Stateful, batch, etc...

- Flexible (consume wholesale or a la carte)

- Extensible

- Automatable

- Advance the state of the art

- cloud-native and DevOps Focused

- mechanisms for slow monolith/legacy migrations

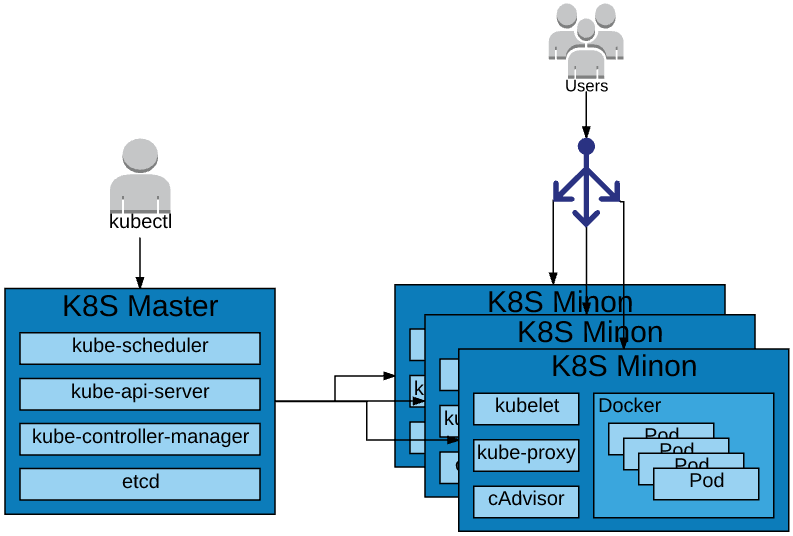

K8S Architecture

More Architecture Info

- Omega: flexible, scalable schedulers for large compute clusters

  - https://research.google.com/pubs/pub41684.html

- Large-scale cluster management at Google with Borg

  - https://research.google.com/pubs/pub43438.html

- Borg, Omega, and Kubernetes

  - https://research.google.com/pubs/pub44843.html

- https://github.com/kubernetes/community

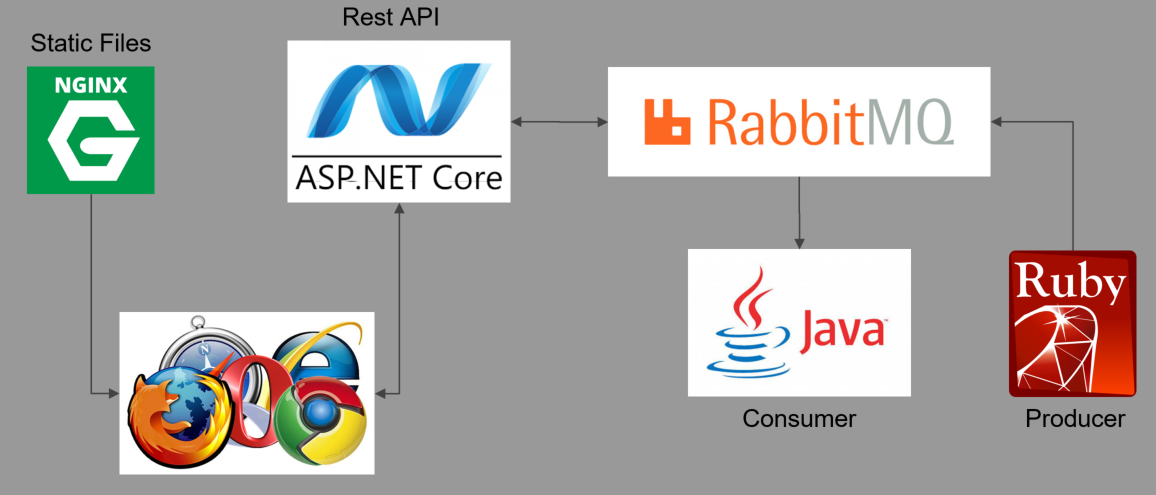

Demo System

https://github.com/dalealleshouse/zero-to-devops/tree/azure

Prerequisites

- Azure CLI

  - https://docs.microsoft.com/en-us/cli/azure/install-azure-cli

- Docker

  - https://www.docker.com/community-edition

Create Cluster

Create a new resource group

RESOURCE_GROUP=kube-demo
LOCATION=eastus
az group create --name=$RESOURCE_GROUP --location=$LOCATION

Create Cluster

DNS_PREFIX=kube-demo
CLUSTER_NAME=kube-demo
az acs create --orchestrator-type=kubernetes --resource-group $RESOURCE_GROUP \
  --name=$CLUSTER_NAME --dns-prefix=$DNS_PREFIX --generate-ssh-keys

kubectl

Install and configure

# Install kubectl
sudo az acs kubernetes install-cli

# Authorize and configure for new cluster
az acs kubernetes get-credentials --resource-group=$RESOURCE_GROUP --name=$CLUSTER_NAME

Verify

# View ~/.kube/config
kubectl config view

# Verify cluster is configured correctly
kubectl get cs

Deployments

- Deployments consist of pods and replica sets

- Pod - One or more containers in a logical group

- Replica set - controls number of pod replicas
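The relationship between a deployment, its replica set, and its pods can be sketched as a manifest. The snippet below is an illustration, not a file from the demo repository: it writes a hypothetical `queue-deployment.yaml` that roughly mirrors what `kubectl run queue --image=rabbitmq:3.6.6-management` creates. The `apps/v1` API version is an assumption that fits current clusters (clusters from the 1.6 era used beta API groups for deployments), and the `kube-sketch` directory and file name are made up for the example.

```shell
# Hypothetical output directory for illustration; not part of the demo repository
OUT_DIR="${OUT_DIR:-./kube-sketch}"
mkdir -p "$OUT_DIR"

# A Deployment declares a pod template and a replica count.
# Kubernetes creates a replica set that keeps that many pods running.
cat > "$OUT_DIR/queue-deployment.yaml" <<'EOF'
apiVersion: apps/v1        # assumption: older clusters use a beta API group
kind: Deployment
metadata:
  name: queue
spec:
  replicas: 1              # the replica set maintains this many pods
  selector:
    matchLabels:
      run: queue           # label that `kubectl run queue` applies
  template:                # the pod: one or more containers in a logical group
    metadata:
      labels:
        run: queue
    spec:
      containers:
      - name: queue
        image: rabbitmq:3.6.6-management
EOF

echo "wrote $OUT_DIR/queue-deployment.yaml"
```

With a cluster configured, `kubectl create -f kube-sketch/queue-deployment.yaml` would create the deployment from this file.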

# Create deployments for the three internal services
kubectl run java-consumer --image=dalealleshouse/java-consumer:1.0
kubectl run ruby-producer --image=dalealleshouse/ruby-producer:1.0
kubectl run queue --image=rabbitmq:3.6.6-management

# View the pods created by the deployments
kubectl get pods

# Run Docker-style commands on the containers
kubectl exec -it *POD_NAME* bash
kubectl logs *POD_NAME*

Internal Services

Services provide a durable end point
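As a sketch of what a durable endpoint looks like declaratively, the snippet below writes a hypothetical Service manifest that approximates `kubectl expose deployment queue --port=15672,5672 --name=queue`. It assumes the queue pods carry the `run=queue` label that `kubectl run` applies. The port names `management` and `amqp` and the `kube-sketch` directory are invented for the example; a Service with more than one port needs a name for each.

```shell
# Hypothetical output directory for illustration; not part of the demo repository
OUT_DIR="${OUT_DIR:-./kube-sketch}"
mkdir -p "$OUT_DIR"

# A Service gives a stable name and address to whichever pods match its selector,
# even as individual pods come and go.
cat > "$OUT_DIR/queue-service.yaml" <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: queue              # other pods can reach the queue by this DNS name
spec:
  selector:
    run: queue             # assumption: label applied by `kubectl run queue`
  ports:
  - name: management       # hypothetical name; RabbitMQ management UI
    port: 15672
  - name: amqp             # hypothetical name; AMQP traffic
    port: 5672
EOF

echo "wrote $OUT_DIR/queue-service.yaml"
```

Because no `type` is given, the Service is only reachable from inside the cluster, which matches its role as an internal service.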

# Notice the java-consumer cannot connect to the queue
kubectl logs *java-consumer-pod*

# The following command makes the queue discoverable via the name queue
kubectl expose deployment queue --port=15672,5672 --name=queue

# Running the command again shows that it is now connected
kubectl logs *java-consumer-pod*

External Services

Create REST API Deployment and Load Balancer

# Create deployment
kubectl run status-api --image=dalealleshouse/status-api:1.0 --port=5000

# Create service
kubectl expose deployment status-api --port=80 --target-port=5000 --name=status-api \
  --type=LoadBalancer

# Watch for service to become available
watch 'kubectl get svc'

Create Load Balancer Service for Front End with env var pointing to REST API

kubectl run html-frontend --image=dalealleshouse/html-frontend:1.0 --port=80 \
  --env STATUS_HOST=*STATUS-HOST-ADDRESS*
kubectl expose deployment html-frontend --port=80 --name=html-frontend --type=LoadBalancer

Infrastructure as Code

The preferred alternative to using shell commands is storing configuration in YAML files. See the kube directory.
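To give a sense of what such a file might contain, here is a sketch that writes one hypothetical manifest holding both the status-api deployment and its load balancer service. It is reconstructed from the `kubectl run` and `kubectl expose` commands in this deck, so the real files in the repository's kube directory may well differ. The `apps/v1` API version, the `run=status-api` label, and the `kube-sketch` directory are assumptions.

```shell
# Hypothetical output directory for illustration; not the repository's kube directory
OUT_DIR="${OUT_DIR:-./kube-sketch}"
mkdir -p "$OUT_DIR"

# One file can hold several objects separated by '---'
cat > "$OUT_DIR/status-api.yaml" <<'EOF'
apiVersion: apps/v1        # assumption: older clusters use a beta API group
kind: Deployment
metadata:
  name: status-api
spec:
  replicas: 1
  selector:
    matchLabels:
      run: status-api
  template:
    metadata:
      labels:
        run: status-api
    spec:
      containers:
      - name: status-api
        image: dalealleshouse/status-api:1.0
        ports:
        - containerPort: 5000
---
apiVersion: v1
kind: Service
metadata:
  name: status-api
spec:
  type: LoadBalancer       # asks the cloud provider for an external address
  selector:
    run: status-api
  ports:
  - port: 80               # port exposed by the load balancer
    targetPort: 5000       # port the container listens on
EOF

echo "wrote $OUT_DIR/status-api.yaml"
```

Keeping files like this in version control means the whole environment can be reviewed, diffed, and recreated with a single `kubectl create -f` on the directory.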

# Delete all objects made previously
# Each object has a matching file in the kube directory
kubectl delete -f kube/

# Recreate everything
kubectl create -f kube/

Default Monitoring

K8S has a default dashboard

kubectl proxy

Navigate to http://127.0.0.1:8001/ui

Scaling

K8S will automatically load balance requests to a service between all replicas.

# Scale the NGINX deployment to 3 replicas
kubectl scale deployment html-frontend --replicas=3

K8S can create replicas easily and quickly

Auto-Scaling

K8S can scale based on load.
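Load-based scaling can also be expressed as a manifest. The sketch below writes a hypothetical HorizontalPodAutoscaler file that corresponds to an autoscale rule of 1 to 5 replicas at a 50% CPU target for java-consumer. It assumes the `autoscaling/v1` API and an `apps/v1` deployment, and the `kube-sketch` directory and file name are invented. CPU utilization is measured against the container's CPU request, so this only takes effect if the deployment sets one and the cluster collects metrics.

```shell
# Hypothetical output directory for illustration; not part of the demo repository
OUT_DIR="${OUT_DIR:-./kube-sketch}"
mkdir -p "$OUT_DIR"

cat > "$OUT_DIR/java-consumer-hpa.yaml" <<'EOF'
apiVersion: autoscaling/v1
kind: HorizontalPodAutoscaler
metadata:
  name: java-consumer
spec:
  scaleTargetRef:                      # the object whose replica count is adjusted
    apiVersion: apps/v1                # assumption: matches the deployment's API version
    kind: Deployment
    name: java-consumer
  minReplicas: 1
  maxReplicas: 5
  targetCPUUtilizationPercentage: 50   # add replicas when average CPU exceeds 50% of requests
EOF

echo "wrote $OUT_DIR/java-consumer-hpa.yaml"
```

Once applied, `kubectl get hpa` lists the autoscaler along with its current and target utilization.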

# Maintain between 1 and 5 replicas based on CPU usage
kubectl autoscale deployment java-consumer --min=1 --max=5 --cpu-percent=50

# Run this repeatedly to see the number of replicas created
# Also, the "In Process" number on the web page will reflect the number of replicas
kubectl get pods -l run=java-consumer

Self Healing

K8S will automatically restart any pods that die.

# View the html-frontend pods
kubectl get pods -l run=html-frontend

# Forcibly delete a pod to simulate a node/pod failure
kubectl delete pod *POD_NAME*

# A replacement pod is created immediately
kubectl get pods -l run=html-frontend

Health Checks

If the endpoint check fails, K8S automatically kills the container and starts a new one

# Find front end pod
kubectl get pods -l run=html-frontend

# Simulate a failure by manually deleting the health check file
kubectl exec *POD_NAME* rm /usr/share/nginx/html/healthz.html

# Notice the restart
kubectl get pods -l run=html-frontend

Specify health and readiness checks in YAML

...
livenessProbe:
  httpGet:
    path: /healthz.html
    port: 80
  initialDelaySeconds: 3
  periodSeconds: 2
readinessProbe:
  httpGet:
    path: /healthz.html
    port: 80
  initialDelaySeconds: 3
  periodSeconds: 2

Rolling Deployment

K8S will update one pod at a time so there is no downtime for updates
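How many pods are replaced at a time is governed by the deployment's update strategy. As a rough sketch, the snippet below writes a hypothetical patch file that pins the rollout to one pod at a time. The explicit `maxUnavailable` and `maxSurge` values are an assumption chosen for illustration (cluster defaults may differ), and the `kube-sketch` directory and file name are invented.

```shell
# Hypothetical output directory for illustration; not part of the demo repository
OUT_DIR="${OUT_DIR:-./kube-sketch}"
mkdir -p "$OUT_DIR"

# Strategy fragment for a Deployment spec
cat > "$OUT_DIR/rolling-update-patch.yaml" <<'EOF'
spec:
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0    # never drop below the desired replica count
      maxSurge: 1          # add at most one extra pod during the update
EOF

echo "wrote $OUT_DIR/rolling-update-patch.yaml"
```

The fragment could be applied with `kubectl patch deployment html-frontend --patch "$(cat kube-sketch/rolling-update-patch.yaml)"`, and `kubectl rollout status deployment/html-frontend` reports progress while pods are replaced.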

# Update the image on the deployment
kubectl set image deployment/html-frontend html-frontend=dalealleshouse/html-frontend:2.0

# Run repeatedly to see the number of available replicas
kubectl get deployments

Viewing the HTML page shows an error. K8S makes it easy to roll back deployments

# Roll back the deployment to the old image
kubectl rollout undo deployment html-frontend

Delete Demo

az group delete -n kube-demo

What Now?

- K8S Docs

- https://kubernetes.io/docs/home/

- Free Online Course from Google

- https://www.udacity.com/course/scalable-microservices-with-kubernetes--ud615

- Callibrity Training (bstewart@callibrity.com)

- Maybe I can Help

- @HumpbackFreak

Thank You!

Zero to DevOps in Under an Hour with Kubernetes - Azure Edition

By Dale Alleshouse


The benefits of containerization cannot be overstated. Tools like Docker have made working with containers easy, efficient, and even enjoyable. However, management at scale is still a considerable task. That's why there's Kubernetes. Come see how easy it is to create a manageable container environment. Live on stage (demo gods willing) you'll witness a full Kubernetes configuration. In less than an hour, we'll build an environment capable of: Automatic Binpacking, Instant Scalability, Self-healing, Rolling Deployments, and Service Discovery/Load Balancing.
