Mesos in Production

Decisions you'll make after drinking the Kool-Aid

Alan Scherger

Sr. Janitor @

flyinprogrammer

The Kool-Aid

Docker

- Enables the decentralization of application development.
  - Bundles all of our application's dependencies into an isolated, versioned package
  - Enables us to create a contract between the application and the hardware resources it requires

Mesos

- Enables the centralization of hardware allocation
  - Frameworks enable us to provide both generic and highly customized mechanisms for deploying and managing these application contracts.

The Kool-Aid sounds great!

Except we just claimed there exists a system which will successfully centralize an intentionally decentralized architecture.

Mesos Deployment

CAP Theorem

- Consistency
  - All nodes see the same data at the same time
- Availability
  - A guarantee that every request receives a response about whether it succeeded or failed
- Partition tolerance
  - The system continues to operate despite arbitrary partitioning due to network failures

Mesos Stack

[Diagram: Mesos on top of Zookeeper]

When in high-availability mode, Mesos requires a Zookeeper cluster.

To run both clusters with high availability, we must run at a minimum 3 nodes of each service.

[Diagram: a Mesos cluster of 3 Mesos nodes on top of a Zookeeper cluster of 3 Zookeeper nodes]
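The three-node minimum follows from majority quorums: a Zookeeper ensemble keeps serving only while a strict majority of its members can reach each other. Below is a minimal sketch of that arithmetic. The function names are illustrative and are not part of any Mesos or Zookeeper API.

```python
def quorum_size(nodes: int) -> int:
    """Smallest strict majority of an ensemble with `nodes` members."""
    if nodes < 1:
        raise ValueError("an ensemble needs at least one node")
    return nodes // 2 + 1


def tolerated_failures(nodes: int) -> int:
    """How many members can be lost while a majority is still reachable."""
    return nodes - quorum_size(nodes)


if __name__ == "__main__":
    # Print ensemble size, quorum size, and tolerated failures side by side.
    for n in (1, 2, 3, 4, 5):
        print(n, quorum_size(n), tolerated_failures(n))
```

Under this model a 2-node ensemble tolerates no failures at all, and a 4-node ensemble tolerates no more than a 3-node one, which is why odd sizes starting at 3 are the usual choice.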

When we run our Marathon framework on top of Mesos, it also relies on Zookeeper to maintain state and coordinate leader election.

[Diagram: a 3-node Marathon cluster on top of a 3-node Mesos cluster, both relying on one 3-node Zookeeper cluster]

What's the problem with this picture?

[Diagram: the Marathon and Mesos clusters both relying on a single Zookeeper cluster]

- What happens when ZK dies?

- How will we test and roll out upgrades?

So let's build multiple cluster pods.

[Diagram: four pods, each with its own Marathon, Mesos, and Zookeeper. not-prod-pod-1 and not-prod-pod-2 run under the Not Production SLA; prd-pod-1 and prd-pod-2 run under the Production SLA.]

Which spawns some questions!

- Do we stripe our pods across availability zones?
  - 'best practices' vs reduced latency and simplicity
- Where can we reduce hardware costs?
  - 'Doubling up' creates cascading leader elections during failures.
- How will we orchestrate our deployments?

A Production Stack

Zookeeper/Mesos/Marathon Pods

Artifact Repository

Source Code Repository

Docker Registry

Logging Storage and Analytics

Metric Storage and Analytics

Service Discovery

Load Balancing

Orchestration

Monitoring and Alerting

Build System

Storage

Data Streaming

Automated Recovery

Automated Deployment

Support Services

Secrets

Which spawns more questions!

How will we choose to implement each part of this stack?

Can my existing choices handle the ephemeral nature of containers?

Which services will be pod, availability zone, or region specific?

How do we incorporate security?

How will we educate our engineering group?

Will all this change actually solve a real business problem?

Let's tackle these challenges one at a time.

Java + Docker

Zombie Reaping

- PID 1 is required to reap zombie and orphaned processes from the process table
  - joyent/containerpilot (formerly Containerbuddy)
  - krallin/tini
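To make the reaping duty concrete, here is a rough sketch of the loop an init process runs: collect every exited child without blocking so it does not linger in the process table. This is only an illustration of the idea on a POSIX system. It is not how containerpilot or tini are implemented, and the function name is made up.

```python
import os
import time


def reap_zombies():
    """Collect every child that has already exited, without blocking.

    Returns the list of reaped process IDs.
    """
    reaped = []
    while True:
        try:
            pid, _status = os.waitpid(-1, os.WNOHANG)
        except ChildProcessError:
            break  # this process has no children at all
        if pid == 0:
            break  # children exist, but none has exited yet
        reaped.append(pid)
    return reaped


if __name__ == "__main__":
    # Demo: fork a child that exits immediately, then reap it.
    child = os.fork()
    if child == 0:
        os._exit(0)
    time.sleep(0.1)
    print("reaped:", reap_zombies())
```

A real init would typically call something like this whenever it receives SIGCHLD, in addition to forwarding signals to the application.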

Secrets

- How are you going to inject secrets into your container?
  - 'secret zero' problem
    - ™ @jmoney8080
  - we shouldn't bake shared secrets into our images
- Environment variables
  - Good, but exposed
- Environment variables via sidecar
  - Better, but what will be our agent of authorization?
  - 'secret zero' problem

Secret Zero

[Diagram: Layers of Trust. Boxes: Secret Storage, Secret Service, Container, Continuous Delivery, Container Registry, Continuous Integration, Code Repo]

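RMI

[Diagram: Typical Application Port Mapping. The application listens on 0.0.0.0:8080 inside the container, the bridge maps it to host_ip:31000, and clients reach it with curl host:31000.]

[Diagram: RMI Server Port Mapping. The application listens on 0.0.0.0:31000 inside the container, the bridge maps it to host_ip:31000, and clients reach it with curl host:31000.]

For RMI the container port has to match the host port, because the RMI server advertises its own port to clients.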

RMI

Typical Application Port Mapping

    "portMappings": [{
      "containerPort": 8080,
      "hostPort": 0,
      "servicePort": 0,
      "protocol": "tcp",
      "name": "api",
      "labels": {}
    }]

RMI Server Port Mapping

    "portMappings": [{
      "containerPort": 0,
      "hostPort": 0,
      "servicePort": 0,
      "protocol": "tcp",
      "name": "rmi",
      "labels": {}
    }]

RMI

    # Specify what address our applications should bind to.
    SO_BIND_ADDR=${SO_BIND_ADDR:-0.0.0.0}

    # If we've set RMI_PORT, then we probably want to do RMI port things.
    # (addJvmParameter is a helper assumed to be defined elsewhere; it is not shown here.)
    if [ -n "$RMI_PORT" ]; then
      # We need the hostname RMI is started with to match the hostname we will access it with.
      # If the user supplies something explicit, use that; else use HOST, which
      # Marathon sets to the agent hostname. Otherwise, use localhost for when this
      # script is used outside of Docker.
      addJvmParameter java.rmi.server.hostname "${RMI_HOST:-${HOST:-localhost}}"

      # If RMI_PORT is set to a PORT{int}, patch it with the real port
      # and export a PORT_{int}={int} pair.
      if [[ $RMI_PORT = "PORT"* ]]; then
        # Arithmetic expansion dereferences the variable named in RMI_PORT (e.g. PORT1).
        export RMI_PORT=$(($RMI_PORT))
        # Marathon does not map PORT_(PORT NUMBER) for ephemeral ports
        export PORT_$RMI_PORT=$RMI_PORT
      fi

      # Have our RMIRegistry and JMXConnectorServer bind to the socket address
      # which will be routable through the Docker bridge
      addJvmParameter jetty.jmxrmihost "$SO_BIND_ADDR"

      # Use our final RMI_PORT
      addJvmParameter jetty.jmxrmiport "${RMI_PORT}"
    fi

ROOT_PASSWORD=hunter8

Environment Variable Mapping
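The PORT{int} indirection in the RMI script can be easier to follow outside of shell arithmetic. The sketch below mirrors that one step in Python: if RMI_PORT holds a name such as PORT1, it is replaced by the value Marathon put in that variable, and a matching PORT_{int} entry is produced. The function name and the choice to return a dictionary of updates are assumptions made for illustration.

```python
from typing import Dict, Mapping


def rmi_port_env(env: Mapping[str, str]) -> Dict[str, str]:
    """Return the environment updates the shell script would export.

    Only the PORT{int} case produces updates; a literal port number or an
    unset RMI_PORT leaves the environment untouched.
    """
    updates: Dict[str, str] = {}
    rmi_port = env.get("RMI_PORT", "")
    if rmi_port.startswith("PORT"):
        # RMI_PORT names another variable (e.g. PORT1); look up the real port.
        # Unlike the shell version, a missing variable raises KeyError here
        # instead of silently evaluating to 0.
        port = int(env[rmi_port])
        updates["RMI_PORT"] = str(port)
        updates[f"PORT_{port}"] = str(port)
    return updates


if __name__ == "__main__":
    marathon_env = {"RMI_PORT": "PORT1", "PORT0": "31000", "PORT1": "31001"}
    print(rmi_port_env(marathon_env))
```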

Mesos Networking

Docker Networking

- Docker has five typical network options (--net)
  - none
    - no attached network devices
  - host
    - direct access to the host network interfaces
  - bridge
    - Linux network bridge with masquerade
  - overlay
    - driver bundled with docker/libnetwork
  - custom plugin


I have not tried this!

But I'd love to know if it works!

Mesos Networking

- Support Container Network Interface (CNI) - MESOS-4641
- Strategy for Utilizing Docker 1.9 Multihost Networking - MESOS-3828

Currently I typically use 'host' or 'bridge' networking.

Service Discovery

Marathon Config

    "portMappings": [{
      "name": "foo",
      "labels": {},
      "containerPort": 8081,
      "hostPort": 0,
      "servicePort": 0,
      "protocol": "tcp"
    }, {
      "name": "bar",
      "labels": {},
      "containerPort": 8082,
      "hostPort": 0,
      "servicePort": 0,
      "protocol": "tcp"
    }]

Mesos API

    curl master:5050/tasks

    "discovery": {
      "name": "app1",
      "ports": {
        "ports": [{
          "name": "foo",
          "number": 31792,
          "protocol": "tcp"
        }, {
          "name": "bar",
          "number": 31793,
          "protocol": "tcp"
        }]
      }
    },

Mesos-DNS

- Dynamically builds DNS records from tasks in Mesos

- Stateless

- Drop dead simple configuration

- Has a REST API

Mesos-DNS

    vagrant@mesos:~$ curl localhost:8123/v1/hosts/mps.v100.test-app.marathon.mesos.
    [
      {
        "host": "mps.v100.test-app.marathon.mesos.",
        "ip": "172.17.0.2"
      },
      {
        "host": "mps.v100.test-app.marathon.mesos.",
        "ip": "172.17.0.4"
      },
      {
        "host": "mps.v100.test-app.marathon.mesos.",
        "ip": "172.17.0.3"
      }
    ]

Mesos-DNS

    vagrant@mesos:~$ curl localhost:8123/v1/services/_mps.v100.test-app._tcp.marathon.mesos.
    [
      {
        "service": "_mps.v100.test-app._tcp.marathon.mesos.",
        "host": "mps.v100.test-app-xc4p5-s0.marathon.mesos.",
        "ip": "172.17.0.2",
        "port": "31893"
      },
      {
        "service": "_mps.v100.test-app._tcp.marathon.mesos.",
        "host": "mps.v100.test-app-xc4p5-s0.marathon.mesos.",
        "ip": "172.17.0.2",
        "port": "31894"
      },
      ...
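A client that wants usable endpoints has to combine the ip and port fields of each record, and note that the port arrives as a string. Here is a small sketch of that step, using the two records shown in the Mesos-DNS services response as sample data. It does not call Mesos-DNS; the `endpoints` helper and the `SAMPLE` constant are names chosen for this example.

```python
import json

# The two records visible in the services response, copied as sample input.
SAMPLE = """
[
  {
    "service": "_mps.v100.test-app._tcp.marathon.mesos.",
    "host": "mps.v100.test-app-xc4p5-s0.marathon.mesos.",
    "ip": "172.17.0.2",
    "port": "31893"
  },
  {
    "service": "_mps.v100.test-app._tcp.marathon.mesos.",
    "host": "mps.v100.test-app-xc4p5-s0.marathon.mesos.",
    "ip": "172.17.0.2",
    "port": "31894"
  }
]
"""


def endpoints(records):
    """Turn service records into 'ip:port' strings, validating the port."""
    return [f'{record["ip"]}:{int(record["port"])}' for record in records]


if __name__ == "__main__":
    for endpoint in endpoints(json.loads(SAMPLE)):
        print(endpoint)
```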

Consul

- Favors consistency over availability
- Intro to Consul
- Gotcha: DNS caching

    "dns_config": {
      "node_ttl": "10s",
      "allow_stale": true,
      "max_stale": "10s",
      "service_ttl": {
        "*": "10s"
      }
    }

Eureka

- Favors availability over consistency
- Java - Netflix opinionated
- Has a REST API

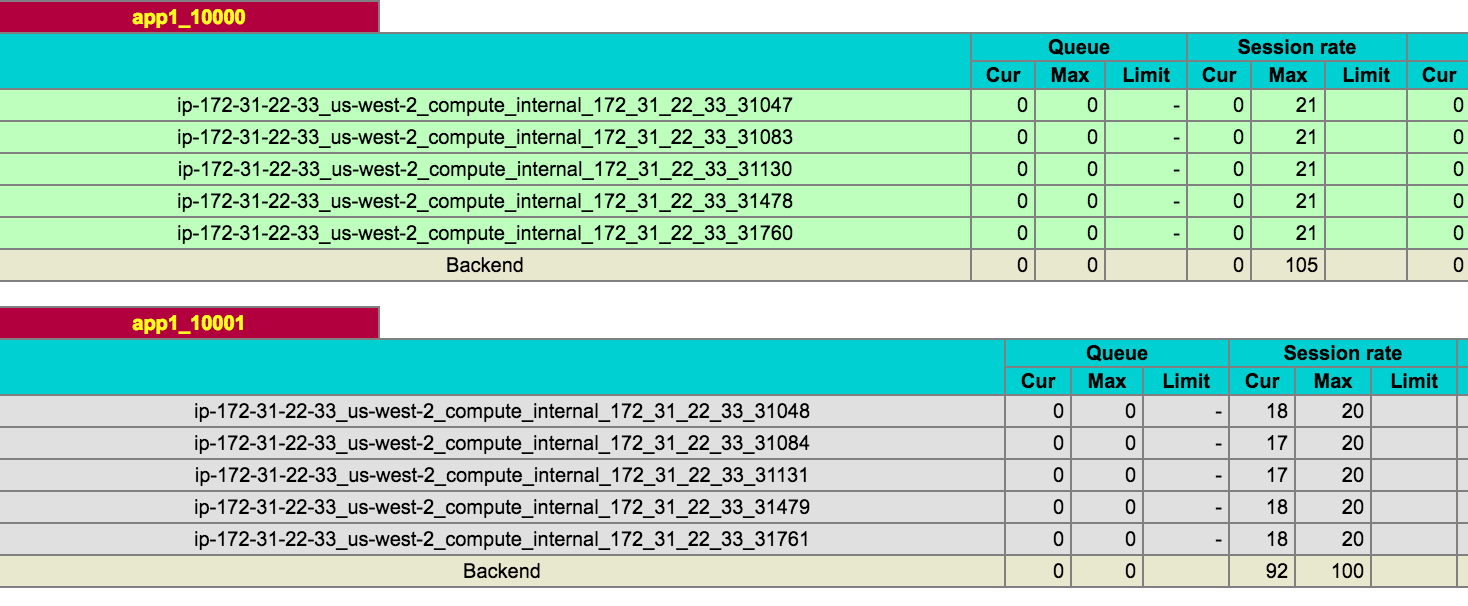

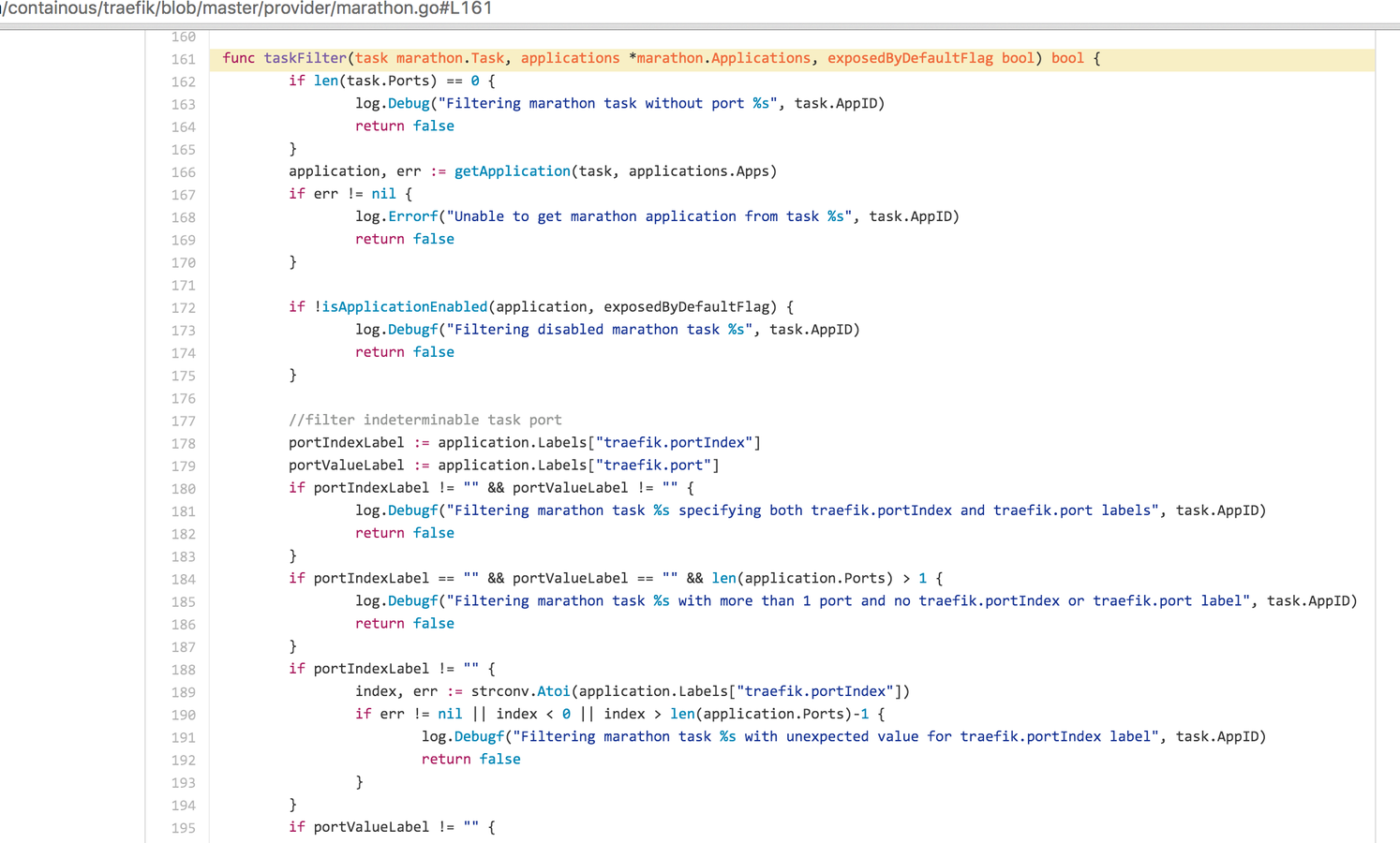

Load Balancing

Marathon-lb

- Stateless - only relies on Marathon

- Wraps HAProxy

- Relies on Marathon service ports

    docker run -d \
      -e PORTS=9090 \
      --net=host \
      mesosphere/marathon-lb \
      sse \
      -m http://master:8080 \
      --health-check \
      --group external

Marathon-lb

    {
      "id": "/app1",
      "labels": { "HAPROXY_GROUP": "external" },
      "container": {
        "docker": {
          "image": "flyinprogrammer/mps",
          "network": "BRIDGE",
          "portMappings": [{
            "containerPort": 8081,
            "hostPort": 0,
            "servicePort": 10000,
            "protocol": "tcp",
            "name": "foo",
            "labels": {}
          }, {
            "containerPort": 8082,
            "hostPort": 0,
            "servicePort": 10001,
            "protocol": "tcp",
            "name": "bar",
            "labels": {}
          }]
        }
      }
    }

Marathon Configuration

Marathon-lb

If a servicePort value is assigned by Marathon then Marathon guarantees that its value is unique across the cluster.

ab -n 100000 -c 20 http://54.186.59.17:10000/

ab -n 100000 -c 20 http://54.186.59.17:10001/
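The uniqueness guarantee quoted above covers service ports that Marathon assigns. When ports are pinned by hand, as with 10000 and 10001, it may be worth checking for collisions before deploying. The following is a hedged sketch of such a check over app definitions shaped like the Marathon configuration shown earlier. It treats a servicePort of 0 as "let Marathon choose", and the function name is hypothetical.

```python
from collections import defaultdict


def service_port_conflicts(apps):
    """Map each hand-picked servicePort used more than once to the app ids using it."""
    owners = defaultdict(list)
    for app in apps:
        docker = app.get("container", {}).get("docker", {})
        for mapping in docker.get("portMappings", []):
            port = mapping.get("servicePort", 0)
            if port:  # 0 leaves the choice to Marathon, so it cannot collide here
                owners[port].append(app["id"])
    return {port: sorted(ids) for port, ids in owners.items() if len(ids) > 1}


if __name__ == "__main__":
    app1 = {"id": "/app1", "container": {"docker": {"portMappings": [
        {"containerPort": 8081, "servicePort": 10000},
        {"containerPort": 8082, "servicePort": 10001},
    ]}}}
    app2 = {"id": "/app2", "container": {"docker": {"portMappings": [
        {"containerPort": 9000, "servicePort": 10001},
    ]}}}
    print(service_port_conflicts([app1, app2]))
```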

linkerd

- RPC load balancer
- https://blog.buoyant.io/2016/04/19/linkerd-dcos-microservices-in-production-made-easy/

- FAIL: Setting "constraints": [["hostname", "UNIQUE"]], and scaling a task to the number of nodes in the cluster does not guarantee that your agent will always be running on every host.

Logging

Local Logging

- Mesos sandbox logging
  - Now has logrotate support
- Docker log drivers
  - json-file
    - --log-opt max-size=50m
  - none
  - syslog
  - fluentd
  - awslogs
  - gcplogs


Remote Storage

- rsyslog -> kafka -> *

- ELK

- Splunk

- Papertrail

- Loggly

- Sumo Logic

And many more.

Gotchas

- host vs container hostname

- Mesos taskid vs containerid

- logfile 'source'
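One way to soften these gotchas is to attach all of the competing identifiers to every log record, so nothing has to be guessed downstream. The sketch below assumes the task was launched by Marathon, which sets HOST and MESOS_TASK_ID in the task environment, and that the container hostname is whatever `socket.gethostname()` reports inside the container. The field names and the `log_context` helper are invented for this example.

```python
import os
import socket


def log_context(env=None, source="stdout"):
    """Build the fields to attach to each log record."""
    env = os.environ if env is None else env
    return {
        # Hostname of the Mesos agent running the task (set by Marathon).
        "agent_host": env.get("HOST", "unknown"),
        # Hostname seen from inside the container, which usually differs.
        "container_host": socket.gethostname(),
        # Mesos task id, which is not the same thing as the Docker container id.
        "mesos_task_id": env.get("MESOS_TASK_ID", "unknown"),
        # Which stream or file the record came from.
        "source": source,
    }


if __name__ == "__main__":
    print(log_context(source="stderr"))
```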

Metrics

Application Deployment

We just need to make one.

Connect with Austin Mesos Users:

Join the Mesos Community:

Contact Me
