Supervised Open Information Extraction

Gabriel Stanovsky, Julian Michael, Luke Zettlemoyer, and Ido Dagan

Presented By

Dharitri Rathod

Lamia Alshahrani

Yuh Haur Chen

What is

Open Information Extraction?

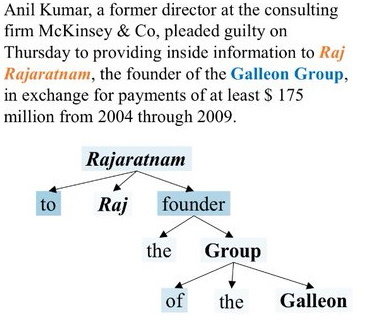

Open Information Extraction (OIE)

Usages

- textual entailment

- question answering

- knowledge base populations

Open IE is systems

extract tuples of natural language expressions that

represent the basic propositions asserted by a sentence.

Usages :They have been used for

a wide variety of tasks, such as textual entailment , question answering

, and knowledge base populations

- Open IE was used in Semi-supervised approach or rule based algorithm . In this paper they present new data and method for open IE to improve the performance they used supervised learning .

- They extend QA-SRL techniques and apply it to the QAMR corpus.

Background

2007

Idea of Open IE

2011

Reverb

2018

2016

OpenIE4

OIE2016

QA-SRL

QAMR

Supervised OpenIE

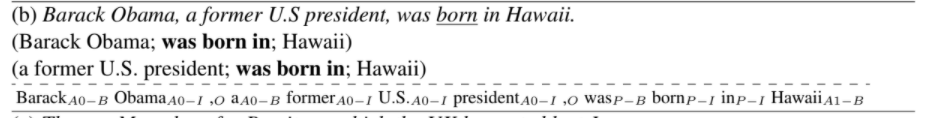

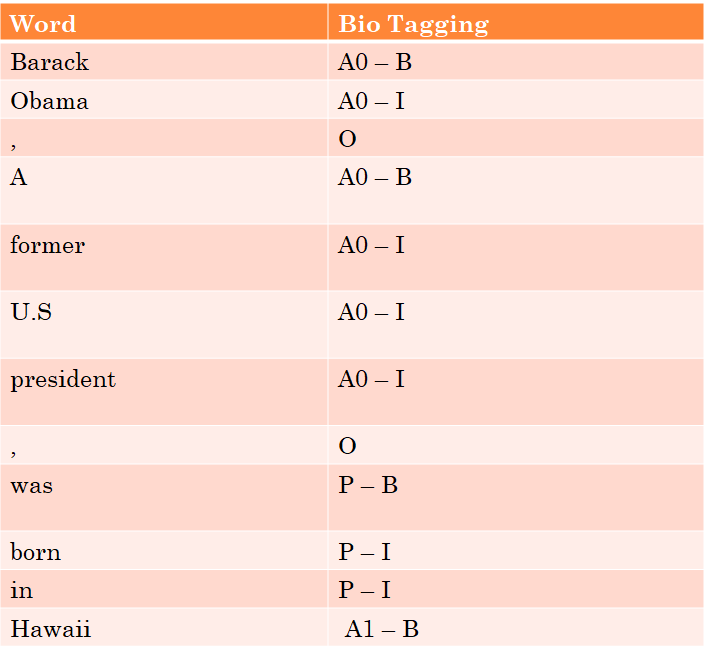

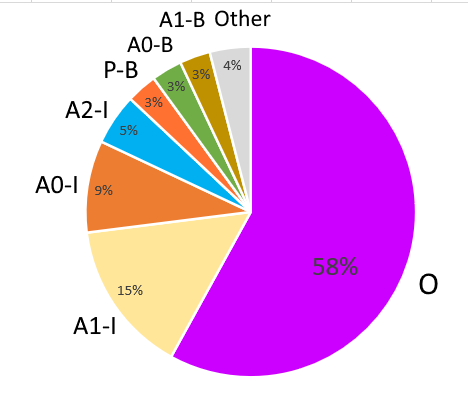

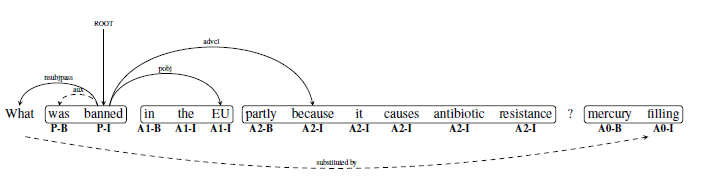

Custom BIO Tagging

BIO Tagging

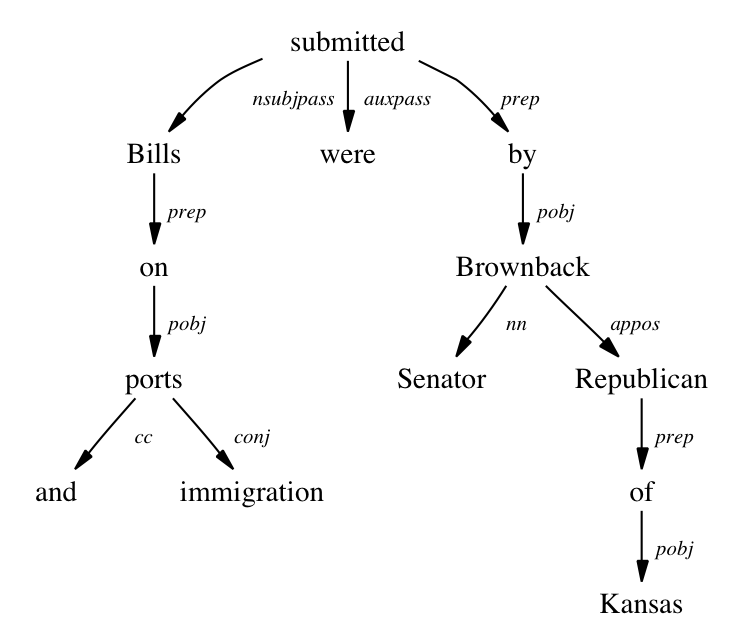

Each tuple is encoded with respect to a single predicate, where argument labels indicate their position in the tuple.

“Barack Obama, a former US President, was born in Hawaii”

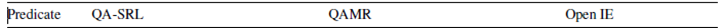

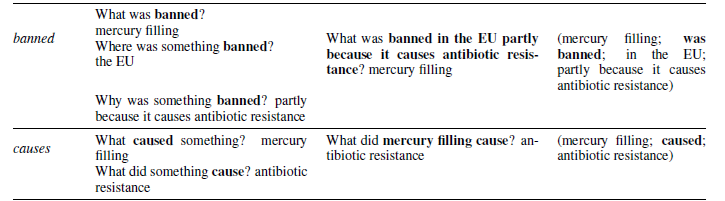

QA-SRL QAMR

"Mercury filling, particularly prevalent in the USA, was banned in the EU, partly because it causes antibiotic resistance. "

Who did What to whom, when, and where?

RNN OIE

"working love learning we on deep"

"we love working on deep learning" :)

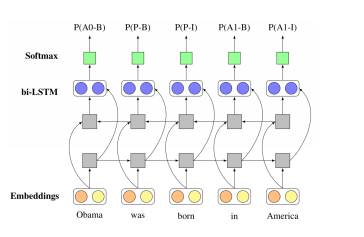

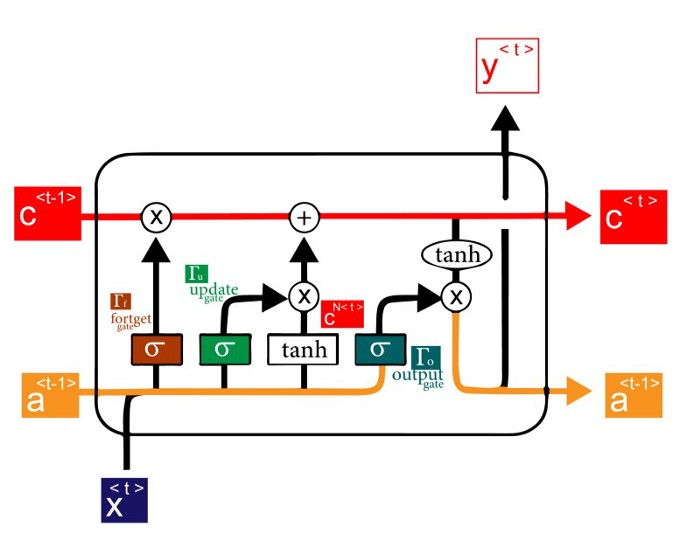

LSTM

BI-LSTM

Confidence interable

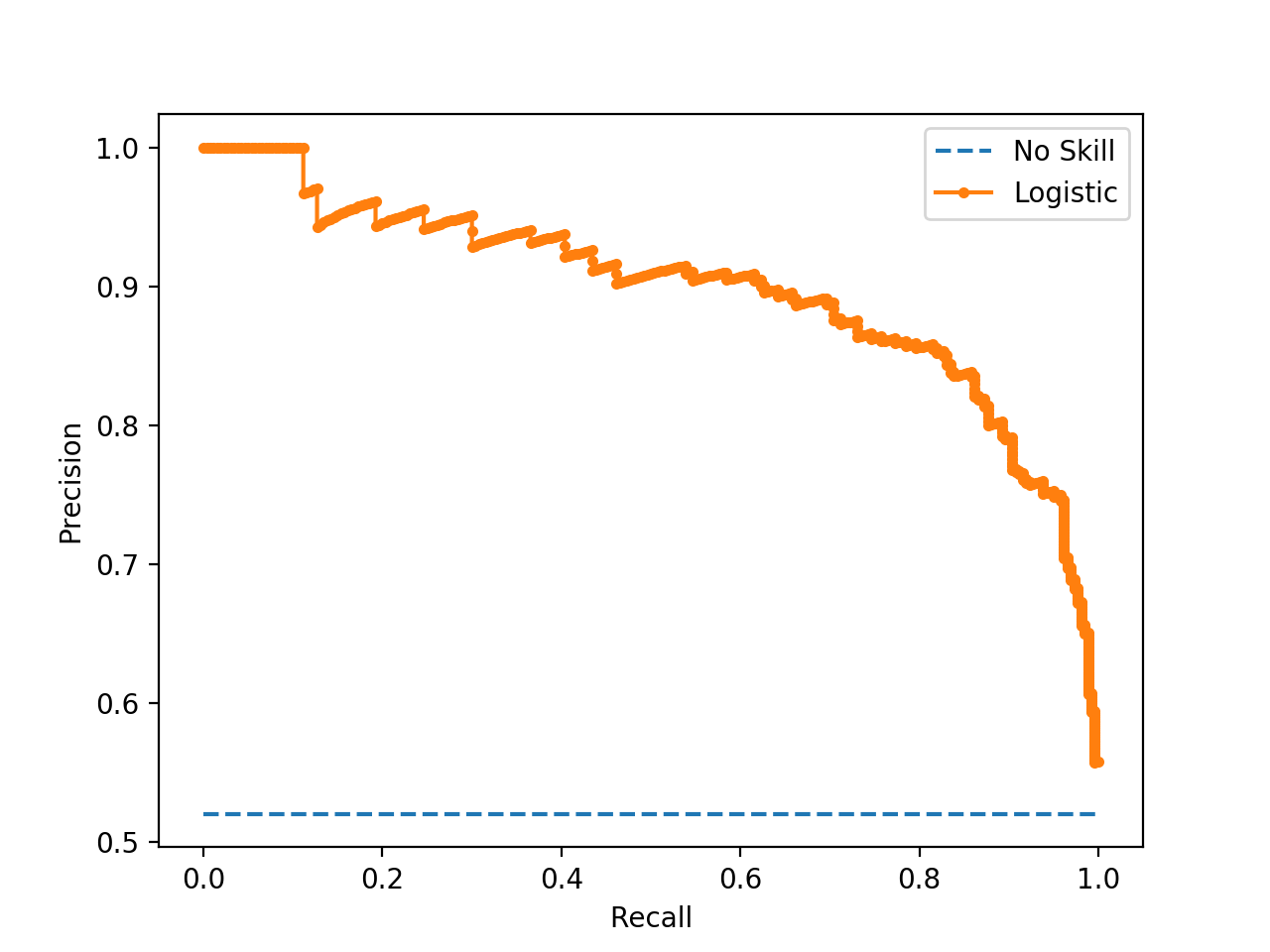

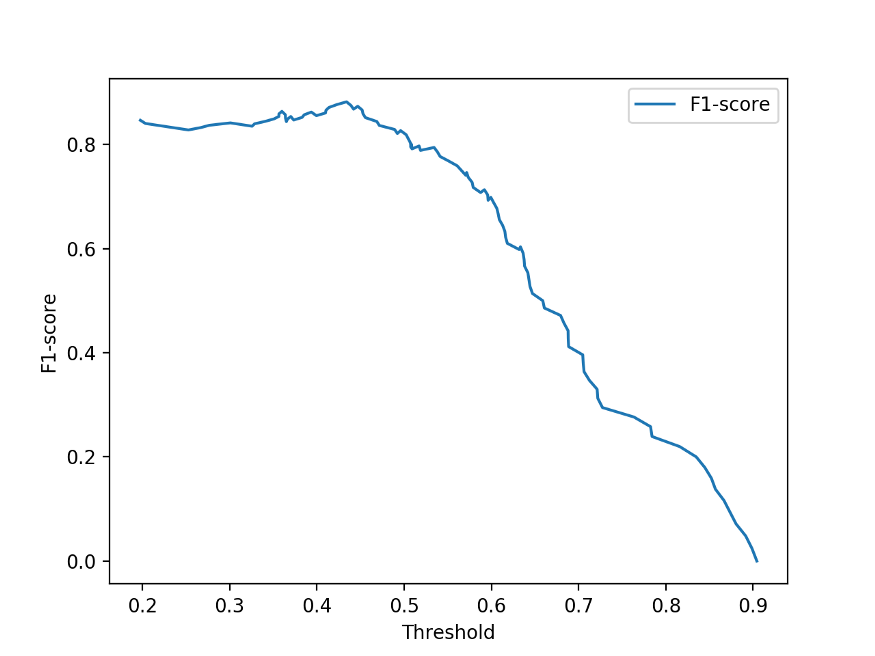

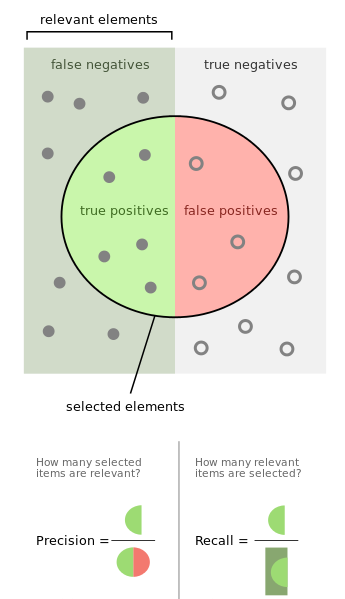

Evaluation

Metrics

- Precision-recall (PR) curve

- Compute the area under the PR curve(AUC)

- F-measure

Precision =

True Positives / (True Positives + False Positives)

Recall =

True Positives / (True Positives + False Negatives)

F1 =

2* precision* recall/

precision + recall

The sheriff standing against the wall spoke in a very soft voice

Matching function

The Sheriff;

spoke; &

in a soft voice

The sheriff standing against the wall;

spoke;

in a very soft voice

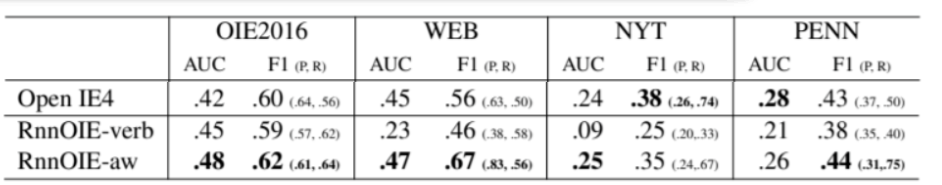

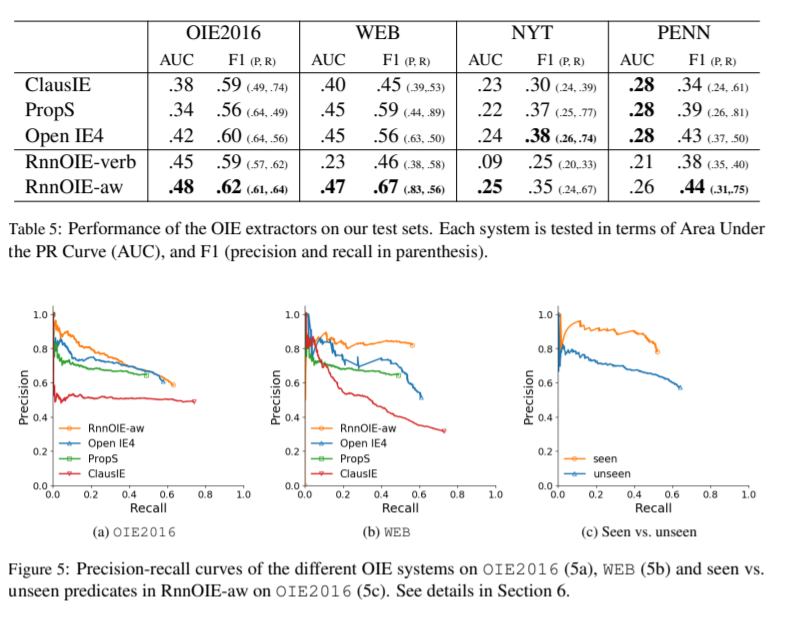

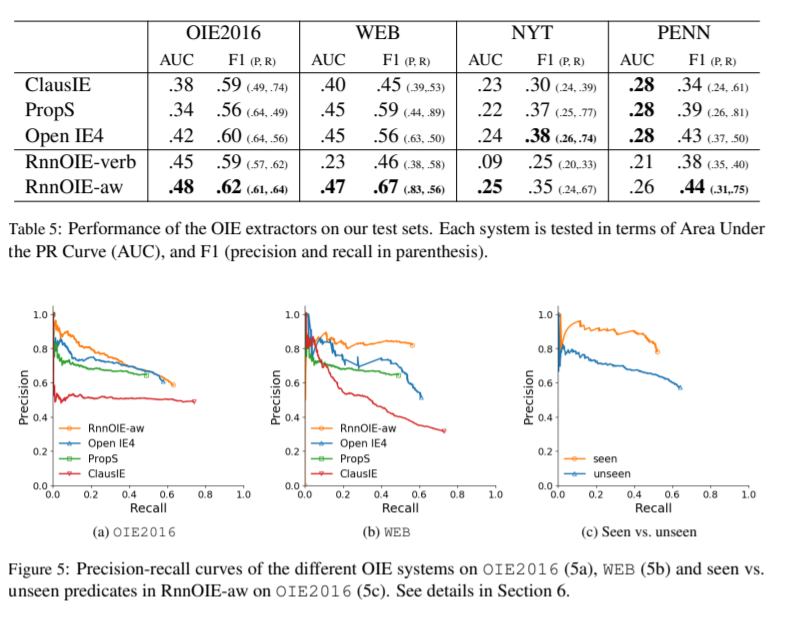

| OIE2016 | WEB, NYT, PENN |

|---|---|

| Penn Treebank gold syntactic trees | predicted trees |

Evaluation result-1

Rank 1 1 1 1 1 3 2 1

"Furthermore, on all of the test sets,

extending the training set significantly improves our model’s performance"

Test set: Only | Include

verb predicates | nominalizations

Evaluation result-2

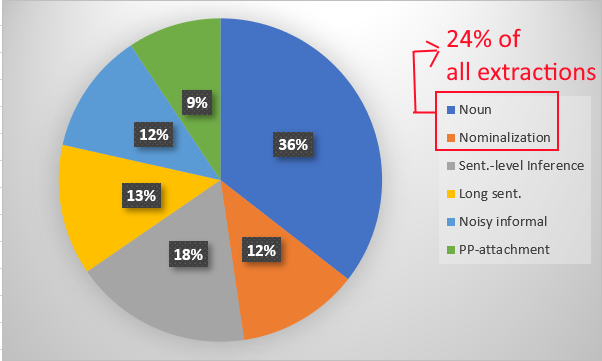

Performance Analysis - unseen predicates

The unseen part contains 145 unique predicate lemmas in 148 extractions,

24% out of the 590 unique predicate lemmas

&

7% out of the 1993 total extractions

RnnOIE-aw

Performance Analysis - Runtime analysis

| ClausIE | PropS | Open IE4 | RnnOIE | |

|---|---|---|---|---|

| Xeon 2.3GHz CPU | 4.07 | 4.59 | 15.38 | 13.51 |

| Efficiency % | 26.5% | 29.8% | 100% | 87.8% |

Data set: 3200 sentences from OIE2016

Using GPU : RnnOIE get 11.047 times faster (149.25 sentences/sec)

(sentences/sec)

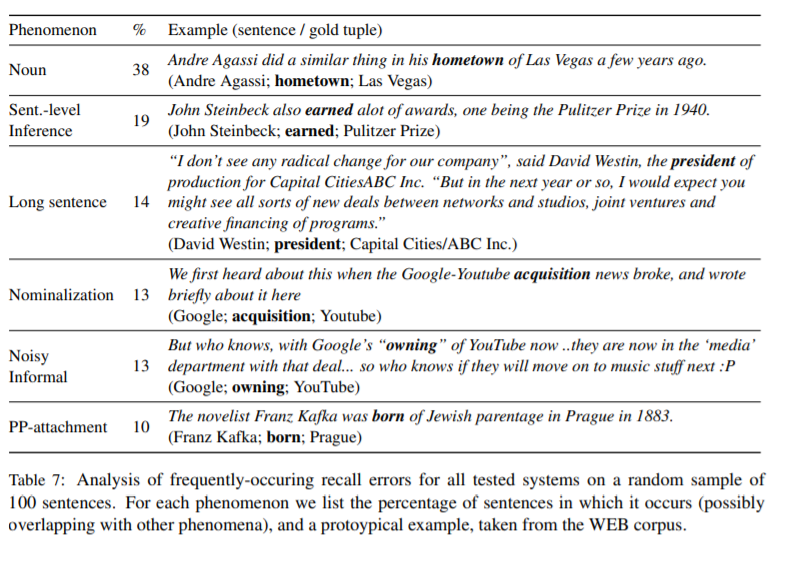

Performance Analysis - Error analysis

a random sample of 100 recall errors:

> 40 words

(Avg. :29.4 words/sentence )

Summary

Open IE

bi-LSTM

sequence tagging problem

formulating

BIO encoding

confidence score

extend

OIE2016

QAMR

train

QA-SRL paradigm

Pros and Cons

What's unique and impressive?

| Pros | Cons |

|---|---|

| Supervise system | CPU runtime is slower |

| Provide confidence scores for tuning their PR tradeoff | It can't answer some QA |

| Their system did perform well compare to others | |

References

Copy of deck

By jackiechen08

Copy of deck

- 303