welcome to 3D

Martin Schuhfuss | @usefulthink | m.schuhfuss@gmail.com

const container = document.querySelector('.container');

const canvas = document.createElement('canvas');

canvas.width = container.offsetWidth;

canvas.height = container.offsetHeight;

container.appendChild(canvas);

const gl = canvas.getContext('webgl');

// ---- setup viewport size

gl.viewport(0, 0, canvas.width, canvas.height);

// ---- clear screen with black

gl.clearColor(0.0, 0.0, 0.0, 1.0);

gl.clear(gl.COLOR_BUFFER_BIT);

// ---- create the shader objects

const vs = gl.createShader(gl.VERTEX_SHADER);

const fs = gl.createShader(gl.FRAGMENT_SHADER);

// ---- upload and compile GLSL shaders

gl.shaderSource(vs, `

attribute vec3 position;

void main() { gl_Position = vec4(position, 1.0); }

`);

gl.shaderSource(fs, `

void main() { gl_FragColor = vec4(1.0, 1.0, 1.0, 1.0); }

`);

gl.compileShader(vs);

gl.compileShader(fs);
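One thing the listing above leaves out: `gl.compileShader()` does not throw when the GLSL is broken, so the status has to be queried explicitly. A minimal sketch of how that could look, assuming a helper named `compileShader` (the name is made up for this example, it is not part of WebGL):

```javascript
// Hypothetical helper: compiles a shader and throws if the GLSL is invalid.
// `gl` is a WebGLRenderingContext, `type` is gl.VERTEX_SHADER or gl.FRAGMENT_SHADER.
function compileShader(gl, type, source) {
  const shader = gl.createShader(type);
  gl.shaderSource(shader, source);
  gl.compileShader(shader);

  // compilation fails silently, so ask for the result
  if (!gl.getShaderParameter(shader, gl.COMPILE_STATUS)) {
    const log = gl.getShaderInfoLog(shader);
    gl.deleteShader(shader);
    throw new Error('shader compilation failed: ' + log);
  }

  return shader;
}
```

With such a helper, a typo in the shader source shows up as an exception with the compiler log instead of a silently empty canvas.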

let's draw a triangle...

// [... getContext, init viewport and clear]

// [... create and compile shaders]

// ---- link a program out of the two shaders

const shaderProgram = gl.createProgram();

gl.attachShader(shaderProgram, vs);

gl.attachShader(shaderProgram, fs);

gl.linkProgram(shaderProgram);

gl.useProgram(shaderProgram);

// ---- create a buffer with vertex-data

const vertexBuffer = gl.createBuffer();

gl.bindBuffer(gl.ARRAY_BUFFER, vertexBuffer);

const vertices = new Float32Array([

-1.0, 0.0, 0.0,

1.0, 0.0, 0.0,

0.0, 1.0, 0.0

]);

gl.bufferData(gl.ARRAY_BUFFER, vertices, gl.STATIC_DRAW);

// ---- bind the buffer to the vertex-shader attribute

const positionAttr = gl.getAttribLocation(shaderProgram, "position");

gl.enableVertexAttribArray(positionAttr);

gl.vertexAttribPointer(positionAttr, 3, gl.FLOAT, false, 0, 0);

// ---- draw it. finally.

// the last argument is the number of vertices, not the number of floats

gl.drawArrays(gl.TRIANGLES, 0, vertices.length / 3);
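Linking can fail just as silently as compiling (for example when the two shaders don't fit together). A small sketch of a link step that checks the result, assuming a helper named `linkProgram` (a made-up name for illustration):

```javascript
// Hypothetical helper: links two compiled shaders and throws on link errors.
function linkProgram(gl, vertexShader, fragmentShader) {
  const program = gl.createProgram();
  gl.attachShader(program, vertexShader);
  gl.attachShader(program, fragmentShader);
  gl.linkProgram(program);

  // like compilation, linking does not throw by itself
  if (!gl.getProgramParameter(program, gl.LINK_STATUS)) {
    const log = gl.getProgramInfoLog(program);
    gl.deleteProgram(program);
    throw new Error('program linking failed: ' + log);
  }

  return program;
}
```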

No really, it's just a triangle

WTF?

I think there's a better way to explain 3D rendering

...and we don't really need any code for this

Computer-Graphics and 3d-rendering

- almost 50 years of research

- probably one of the best financed areas of research ($100bn/yr in games, several hundred more in VFX)

- unbelievably huge scientific field: maths, physics, numerics, radiometry, photometry, colorimetry, ...

[...] I’ll start with the surface of the topic and ask myself what I don’t fully get — I look for those foggy spots in the story where when someone mentions it or it comes up in an article I’m reading, my mind kind of glazes over with a combination of

“ugh it’s that icky term again nah go away” and

“ew the adults are saying that adult thing again and I’m seven so I don’t actually understand what they’re talking about.”

Then I’ll get reading about those foggy spots — but as I clear away fog from the surface, I often find more fog underneath. So then I research that new fog, and again, often come across other fog even further down.

Tim Urban

WHAT IS 3D RENDERING?

use a virtual camera to

take pictures of a virtual environment/scene

FIGURE OUT HOW LIGHT BEHAVES AND WHICH LIGHT ENDS UP ON THE CAMERA SENSOR

simulate how photons would bounce around and find those that hit the camera sensor

problem: there are just way too many photons

rendering techniques

raytracing / PATH-TRACING

Disney's practical guide to path tracing

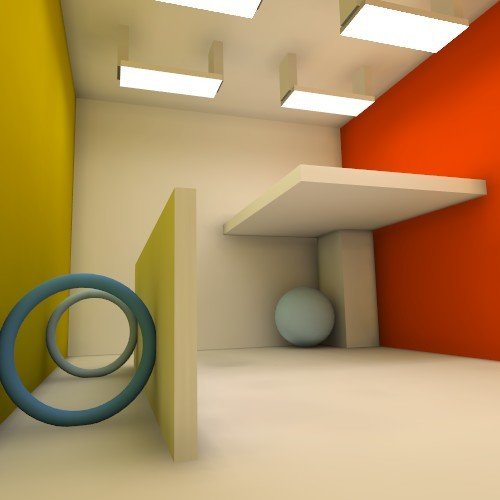

RAYTRACING / PATH-TRACING

- very accurate rendering

- supports special light behaviour like reflection, refraction, caustics and global illumination

- used by almost everything that doesn't need to be realtime (i.e. all VFX in movies or static renderings)

- computers are not yet fast enough to do this in realtime (Toy Story 3: each frame took 2 to 15 hours to render)
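The basic question a raytracer asks over and over is "what does this ray hit first?". A rough sketch for a single sphere, assuming rays are given as an origin and a normalized direction (the function name `intersectSphere` is made up for this example; a real path-tracer adds materials, bounces and a lot of sampling on top):

```javascript
// Returns the distance along the ray to the nearest hit with the sphere,
// or null if the ray misses. `direction` is assumed to be normalized.
function intersectSphere(origin, direction, center, radius) {
  // vector from the sphere center to the ray origin
  const oc = [origin[0] - center[0], origin[1] - center[1], origin[2] - center[2]];

  // solve |oc + t * direction|^2 = radius^2 for t (quadratic with a = 1)
  const b = 2 * (oc[0] * direction[0] + oc[1] * direction[1] + oc[2] * direction[2]);
  const c = oc[0] * oc[0] + oc[1] * oc[1] + oc[2] * oc[2] - radius * radius;
  const discriminant = b * b - 4 * c;
  if (discriminant < 0) return null; // the ray misses the sphere

  const sqrtD = Math.sqrt(discriminant);
  const tNear = (-b - sqrtD) / 2;
  const tFar = (-b + sqrtD) / 2;
  if (tNear >= 0) return tNear;
  if (tFar >= 0) return tFar; // the ray starts inside the sphere
  return null; // the sphere is behind the ray
}
```

For a camera at the origin looking down the negative z-axis, `intersectSphere([0, 0, 0], [0, 0, -1], [0, 0, -5], 1)` gives a distance of 4.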

WEBGL PATH-TRACING DEMOS

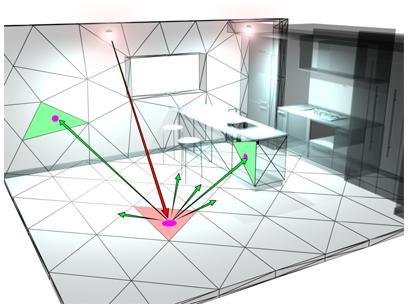

radiosity

"A realistic simulation of how light behaves in an environment"

radiosity

http://www.3dmax-tutorials.com/Radiosity_Solution.html

https://de.wikibooks.org/wiki/Blender_Dokumentation:_Radiosity_als_Modellierungswerkzeug

see http://www.cise.ufl.edu/~s11022et/ for more information.

RASTERIZATION / scanline rendering

- used by most realtime applications today

- GPUs are designed for this

- every major game-engine and WebGL implement scanline-rendering

RASTERIZATION / scanline rendering

- objects made from triangles

- triangles are projected onto the screen

- pixel-colors are computed only from data provided for the triangle

MATH

(doesn't hurt, I promise)

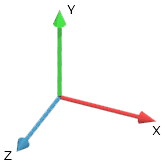

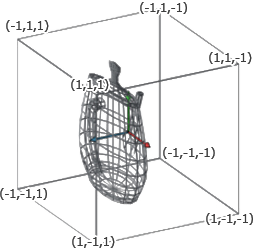

COORDINATE SPACES

- frame of reference for vertices

- a point of origin and three axes (x, y, z)

- "right handed" system

VERTICES

- a vertex describes a point in a coordinate-space

- three components: (x, y, z)

TRANSFORMING VERTICES / MATRIX MULTIPLICATION

- vectors can be transformed (rotation, translation, scaling etc.) using matrix-multiplication

- the matrix is a "recipe" for converting a vector from one coordinate space into another

- matrices are everywhere (remember CSS transform: matrix3d(...)?)
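To make the "recipe" idea a bit more concrete, here is a small sketch of a matrix-vector multiplication. It assumes matrices are stored as 16 numbers in column-major order (the layout WebGL expects); the names `transform`, `scale2` and `rotateZ90` are made up for this example:

```javascript
// Multiplies a 4x4 matrix (16 numbers, column-major) with a
// 4-component vector and returns the transformed vector.
function transform(m, v) {
  const out = [0, 0, 0, 0];
  for (let row = 0; row < 4; row++) {
    for (let col = 0; col < 4; col++) {
      out[row] += m[col * 4 + row] * v[col];
    }
  }
  return out;
}

// uniform scaling by a factor of 2
const scale2 = [
  2, 0, 0, 0,
  0, 2, 0, 0,
  0, 0, 2, 0,
  0, 0, 0, 1
];

// 90 degree counter-clockwise rotation around the z-axis,
// written down column by column
const rotateZ90 = [
  0, 1, 0, 0,   // first column: where the x-axis ends up
  -1, 0, 0, 0,  // second column: where the y-axis ends up
  0, 0, 1, 0,
  0, 0, 0, 1
];
```

`transform(rotateZ90, [1, 0, 0, 1])` turns a point on the x-axis into `[0, 1, 0, 1]`, a point on the y-axis.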

homogeneous coordinates

or, "why are there 4 dimensional coordinates everywhere?"

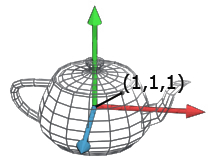

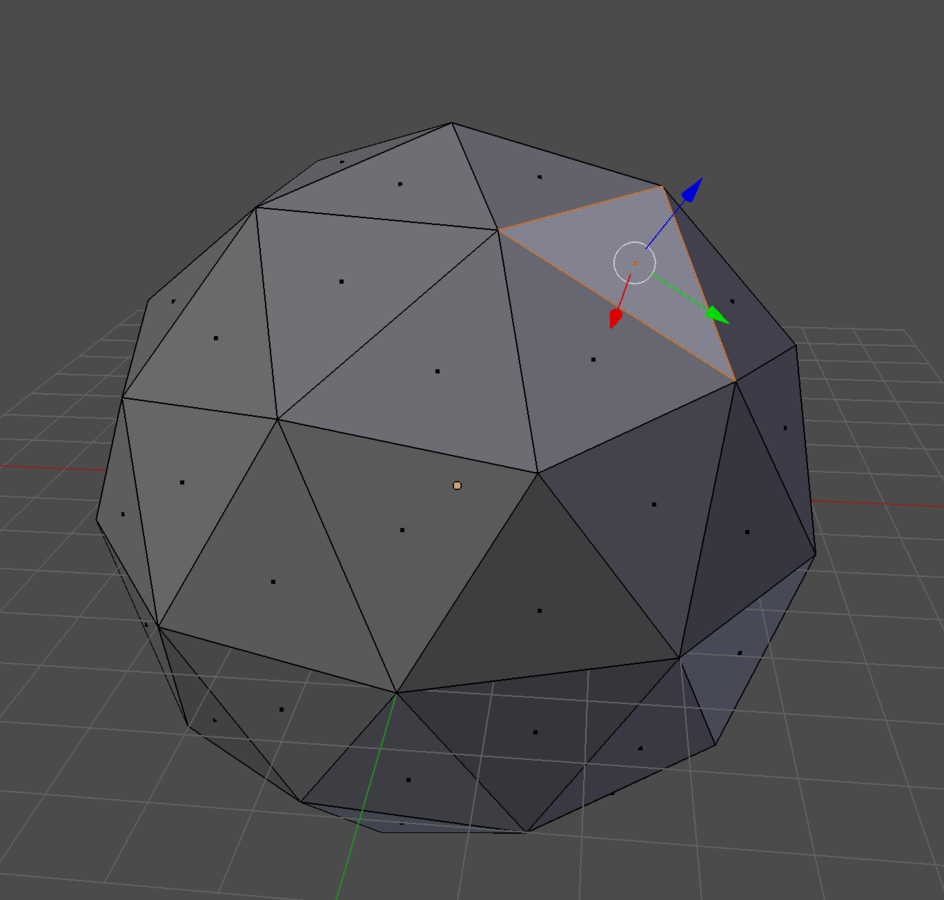
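One way to see what the fourth component is good for: a translation can only be written as a matrix multiplication if there is a fourth column to hold the offset. The sketch below assumes column-major 4x4 matrices; `transform` and `translateX5` are names made up for this example:

```javascript
// Multiplies a column-major 4x4 matrix with a 4-component vector.
function transform(m, v) {
  const out = [0, 0, 0, 0];
  for (let row = 0; row < 4; row++) {
    for (let col = 0; col < 4; col++) {
      out[row] += m[col * 4 + row] * v[col];
    }
  }
  return out;
}

// translation by (5, 0, 0): the offset lives in the fourth column
const translateX5 = [
  1, 0, 0, 0,
  0, 1, 0, 0,
  0, 0, 1, 0,
  5, 0, 0, 1
];

// w = 1 marks a position: it gets moved by the translation
const point = transform(translateX5, [1, 2, 3, 1]);

// w = 0 marks a direction: the translation has no effect on it
const direction = transform(translateX5, [1, 2, 3, 0]);
```

Here `point` ends up as `[6, 2, 3, 1]` while `direction` stays `[1, 2, 3, 0]`.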

GEOMETRIES

- geometries are defined using triangles* / faces

- all faces together form the object-surface

- vertices for all triangles are defined in object-space

* some 3D environments (e.g. legacy OpenGL) also allow quads as primitives; WebGL only knows points, lines and triangles
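As an illustration, this is roughly how a geometry could be stored: a list of vertex positions in object-space plus a list of faces that reference those vertices by index. The variable names and the `getFace` helper are assumptions made for this sketch:

```javascript
// A unit square in object-space, built from two triangles.
// Every vertex is stored once as (x, y, z) ...
const positions = new Float32Array([
  -0.5, -0.5, 0.0,  // 0: bottom left
   0.5, -0.5, 0.0,  // 1: bottom right
   0.5,  0.5, 0.0,  // 2: top right
  -0.5,  0.5, 0.0   // 3: top left
]);

// ... and every face references three of those vertices by index
const indices = new Uint16Array([
  0, 1, 2,  // first triangle
  0, 2, 3   // second triangle
]);

// Returns the three corner positions of the face with the given number.
function getFace(faceIndex) {
  const corners = [];
  for (let i = 0; i < 3; i++) {
    const v = indices[faceIndex * 3 + i];
    corners.push([positions[v * 3], positions[v * 3 + 1], positions[v * 3 + 2]]);
  }
  return corners;
}
```

In WebGL, two arrays like these would typically be uploaded as `gl.ARRAY_BUFFER` and `gl.ELEMENT_ARRAY_BUFFER` and drawn with `gl.drawElements()`.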

OBJECTS / Meshes

- created from a geometry and material-information

- provide the coordinate space used by the geometry (object-space)

- manage transforms relative to world-space

- hierarchical: can contain objects that are then relative to the parent coordinate space

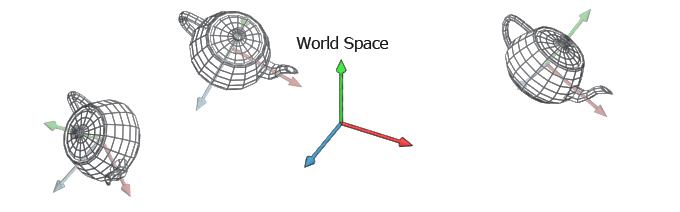

SCENE-GRAPH

- root-object - parent to all objects rendered

- hosts the global coordinate space all objects are positioned in (world space)

- also contains the camera and lights, which are special types of objects
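A heavily simplified sketch of the idea behind a scene-graph. It only supports translation (no rotation or scaling, which would need full matrices), and the class name `Node3D` is made up for this example:

```javascript
// Simplified scene-graph node: translation only.
class Node3D {
  constructor(name, position = [0, 0, 0]) {
    this.name = name;
    this.position = position; // relative to the parent's coordinate space
    this.parent = null;
    this.children = [];
  }

  add(child) {
    child.parent = this;
    this.children.push(child);
    return this;
  }

  // Position in world-space: walk up the hierarchy and add up the offsets.
  getWorldPosition() {
    const [x, y, z] = this.position;
    if (!this.parent) return [x, y, z];
    const [px, py, pz] = this.parent.getWorldPosition();
    return [px + x, py + y, pz + z];
  }
}

const scene = new Node3D('scene');
const car = new Node3D('car', [10, 0, 0]);
const wheel = new Node3D('wheel', [1, -0.5, 0]);

scene.add(car);
car.add(wheel);
```

The wheel is positioned relative to the car, so `wheel.getWorldPosition()` gives `[11, -0.5, 0]`, and moving the car moves the wheel along with it.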

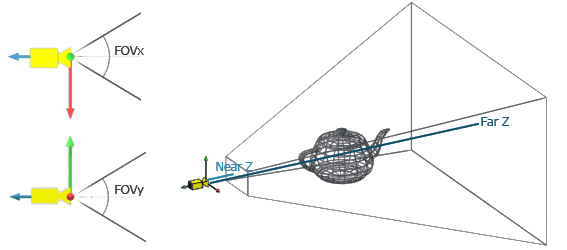

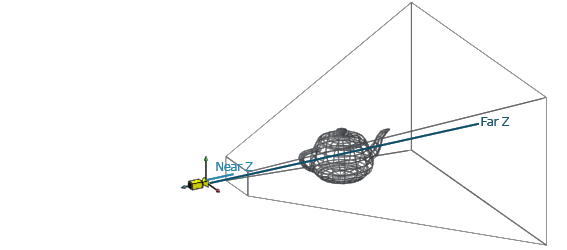

Camera

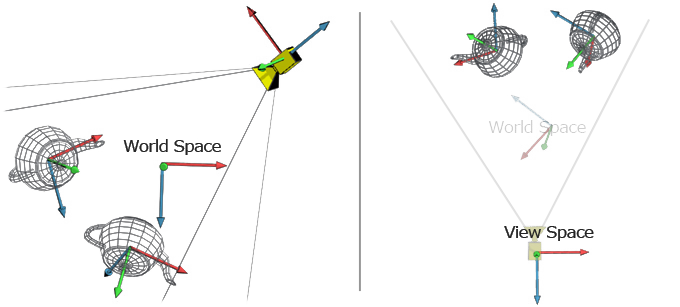

- just another object with its own coordinate space (view-space)

- is positioned to look at parts of the scene

- field of view angles (fov), near- and far-plane define the view-frustum

model-, world- AND view-Space

PERSPECTIVE TRANSFORM

- vertices are transformed from world-space into view-space

- multiplying with a special projection-matrix transforms coordinates into the canonical view volume or clip-space

- after the perspective divide (x, y and z divided by w), everything outside the range [-1, 1] is invisible to the camera and will be clipped
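A sketch of what this could look like in code, assuming OpenGL conventions (column-major matrices, camera looking down the negative z-axis). The function names `perspective`, `project` and `isVisible` are made up for this example:

```javascript
// Builds a perspective projection matrix. `fovY` is the vertical
// field of view in radians, `aspect` is width divided by height.
function perspective(fovY, aspect, near, far) {
  const f = 1 / Math.tan(fovY / 2);
  const rangeInv = 1 / (near - far);
  return [
    f / aspect, 0, 0, 0,
    0, f, 0, 0,
    0, 0, (near + far) * rangeInv, -1,
    0, 0, 2 * near * far * rangeInv, 0
  ];
}

// Transforms a view-space point (w = 1) into clip-space and
// applies the perspective divide.
function project(m, [x, y, z]) {
  const clip = [
    m[0] * x + m[4] * y + m[8] * z + m[12],
    m[1] * x + m[5] * y + m[9] * z + m[13],
    m[2] * x + m[6] * y + m[10] * z + m[14],
    m[3] * x + m[7] * y + m[11] * z + m[15]
  ];
  return [clip[0] / clip[3], clip[1] / clip[3], clip[2] / clip[3]];
}

// A point is inside the canonical view volume if all
// three components are within [-1, 1].
function isVisible(ndc) {
  return ndc.every(c => c >= -1 && c <= 1);
}

// 90 degree field of view, square viewport, near = 1, far = 100
const projection = perspective(Math.PI / 2, 1, 1, 100);
```

With this setup a point ten units in front of the camera, `[0, 0, -10]`, is visible, while `[20, 0, -10]` is too far to the right and falls outside of the view volume. Real GPUs clip in clip-space before dividing; the divide-then-test order here is a simplification.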

The rendering algorithm

- project all vertices into the canonical view volume

- assemble triangles from sets of three vertices

- rasterize triangles

- compute the color-value for every fragment

- blend color-value with previously computed value for the screen-pixel
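The last step can be sketched in a few lines. This assumes the common "source over" blending, which is what WebGL does with `gl.blendFunc(gl.SRC_ALPHA, gl.ONE_MINUS_SRC_ALPHA)`; the function name `blend` is made up for this example:

```javascript
// "Source over" alpha blending for a single pixel.
// Colors are [r, g, b] in the range 0..1, `alpha` is the opacity
// of the new fragment.
function blend(fragmentColor, alpha, pixelColor) {
  return fragmentColor.map((c, i) => c * alpha + pixelColor[i] * (1 - alpha));
}
```

A half-transparent red fragment on top of a blue pixel, `blend([1, 0, 0], 0.5, [0, 0, 1])`, results in `[0.5, 0, 0.5]`.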

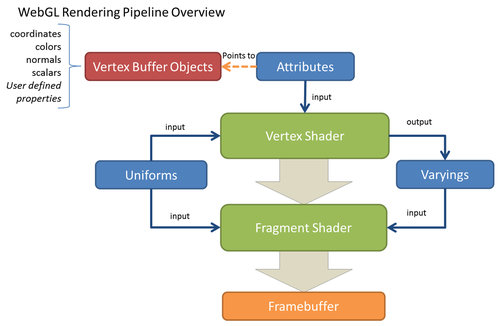

WebGL rendering pipeline

primitive assembly

rasterization

color-blending

WebGL rendering pipeline

DRAWCALLS

- any number of triangles can be passed to the GPU at once; every such drawcall starts the pipeline

- keeping the number of drawcalls low is very important for overall performance
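One common way to save drawcalls is to merge several small geometries into a single buffer. A minimal sketch, assuming all geometries use the same shader and have already been transformed into a common coordinate space (the name `mergeVertexData` is made up for this example):

```javascript
// Concatenates several vertex arrays into one, so that all of them
// can be uploaded into a single buffer and drawn with one drawcall.
function mergeVertexData(arrays) {
  const totalLength = arrays.reduce((sum, a) => sum + a.length, 0);
  const merged = new Float32Array(totalLength);
  let offset = 0;
  for (const a of arrays) {
    merged.set(a, offset);
    offset += a.length;
  }
  return merged;
}

const triangleA = new Float32Array([-1, 0, 0, 0, 0, 0, -0.5, 1, 0]);
const triangleB = new Float32Array([0, 0, 0, 1, 0, 0, 0.5, 1, 0]);

// six vertices in one buffer: a single gl.drawArrays() call instead of two
const merged = mergeVertexData([triangleA, triangleB]);
const vertexCount = merged.length / 3;
```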

WebGL rendering pipeline

VERTEX-SHADER

- vertices (usually object-space coordinates) and other per-vertex data (colors, texture-coordinates, ...) are passed to the GPU as attributes

- constants can be passed as uniforms (can be updated per drawcall)

- the vertex-shader will compute clip-space coordinates and pass them back into the pipeline

- can declare special variables (varying) for the fragment-shader

The vertex-shader is run on the GPU for each and every vertex, without any information about other vertices.
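To show how the three kinds of variables fit together, here is a sketch of a slightly more complete vertex-shader, written as a JavaScript string like in the first example. The names `color`, `modelViewProjection` and `vColor` are made up for this example:

```javascript
const vertexShaderSource = `
  // per-vertex input, read from the bound buffers
  attribute vec3 position;
  attribute vec3 color;

  // constant for all vertices of one drawcall
  uniform mat4 modelViewProjection;

  // handed over to the fragment-shader, interpolated across the triangle
  varying vec3 vColor;

  void main() {
    vColor = color;

    // the one required output: the vertex position in clip-space
    gl_Position = modelViewProjection * vec4(position, 1.0);
  }
`;
```

The matrix would be set from JavaScript with `gl.uniformMatrix4fv()` before the drawcall.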

WebGL rendering pipeline

PRIMITIVE ASSEMBLY AND RASTERIZATION

- every sequence of three vertices forms a triangle*

- those triangles are then rasterized, i.e. the GPU figures out which display-pixels are covered by each triangle

- these affected pixels are called fragments

* there are other rendering-modes like triangle-strips and fans, but those are ignored here
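Both steps can be sketched in plain JavaScript. This is a simplified software version for illustration only: it assumes 2D vertices that are already in pixel coordinates, counter-clockwise triangles (with the y-axis pointing up) and none of the tie-breaking rules a real GPU applies on shared edges. The function names are made up for this example:

```javascript
// Primitive assembly: every sequence of three vertices forms a triangle.
function assembleTriangles(vertices) {
  const triangles = [];
  for (let i = 0; i + 2 < vertices.length; i += 3) {
    triangles.push([vertices[i], vertices[i + 1], vertices[i + 2]]);
  }
  return triangles;
}

// Edge function: positive if point p is to the left of the edge a -> b.
function edge(a, b, p) {
  return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0]);
}

// Rasterization: returns the pixels (fragments) whose centers are
// covered by the triangle.
function rasterize([v0, v1, v2], width, height) {
  const fragments = [];
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const p = [x + 0.5, y + 0.5]; // sample at the pixel center
      if (edge(v0, v1, p) >= 0 && edge(v1, v2, p) >= 0 && edge(v2, v0, p) >= 0) {
        fragments.push([x, y]);
      }
    }
  }
  return fragments;
}

const [triangle] = assembleTriangles([[0, 0], [4, 0], [0, 4]]);
const fragments = rasterize(triangle, 4, 4);
```

On this 4x4 pixel grid the triangle covers 10 of the 16 pixel centers, so `fragments` contains 10 entries.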

WebGL rendering pipeline

FRAGMENT-SHADER

- can only access the varying-values defined by the vertex-shader and the uniforms defined for this drawcall

- usually implements lighting equations and various forms of texturing

The fragment-shader is run on the GPU once for every fragment of every triangle, and outputs a color-value for the fragment.
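A sketch of a fragment-shader with a very basic lighting equation (diffuse / Lambert lighting), again as a JavaScript string. It assumes a vertex-shader that writes the varyings `vNormal` and `vColor`; those names and the `lightDirection` uniform are made up for this example:

```javascript
const fragmentShaderSource = `
  precision mediump float;

  // constant for the whole drawcall
  uniform vec3 lightDirection;

  // interpolated from the values written by the vertex-shader
  varying vec3 vNormal;
  varying vec3 vColor;

  void main() {
    // the more directly the surface faces the light, the brighter it gets
    float brightness = max(dot(normalize(vNormal), normalize(-lightDirection)), 0.0);

    gl_FragColor = vec4(vColor * brightness, 1.0);
  }
`;
```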

MATERIALS

There's somebody talking about Elm as I write this, so I guess this needs to go into another talk.

QUESTIONS?

please feel free to contact me later for chatting or questions...

welcome to 3d – hhjs

By Martin Schuhfuss
