AWS Redshift

Guillaume Simard

- Coveo

- Usage data Infrastructure

- Machine learning infrastructure

Pierre-Alexandre St-Jean

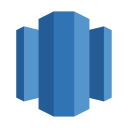

Dynamic reporting

100M

189M

6.38M

THAT'S A LOT

OF NUTS ROWS!

AWS Redshift

Petabyte scale data warehouse

Amazon Redshift delivers fast query performance by using columnar storage technology to improve I/O efficiency and parallelizing queries across multiple nodes.

"Cheap"

Scalable

Fast

"Cheap"

1000 USD / TB / Year*

5000 USD / TB / Year*

*With 3 year reserved instances

Scalable

Linear horizontal scaling*

*Depending on use case

Fast*

select origin_country, query_expression, count(*)

from ua.searches

where datetime > '2015-01-01'

and query_expression != ''

group by origin_country, query_expression

order by count desc

limit 100;

2.47s

select document_title, count(distinct click_id)

from ua.clicks

left join ua.searches on searches.search_id = clicks.search_id

where searches.query_expression like '%banana%'

group by document_title

order by count desc;

1.88sselect * from ua.searches where user_name = 'gsimard' limit 1;

26.14s*

How does it work?

The technical stuff

SQL

select searches.user_id, query_expression, document_title

from ua.searches

left join ua.clicks on searches.search_id = clicks.search_id

where searches.user_id = 'gsimard@coveo.com'

and length(query_expression) > 5

and searches.origin_country like 'C%'

order by query_expression;SQL Compilation

select searches.user_id, query_expression, document_title

from ua.searches

left join ua.clicks on searches.search_id = clicks.search_id

where searches.user_id = 'gsimard@coveo.com'

and length(query_expression) > 5

and searches.origin_country like 'C%'

order by query_expression;Leader

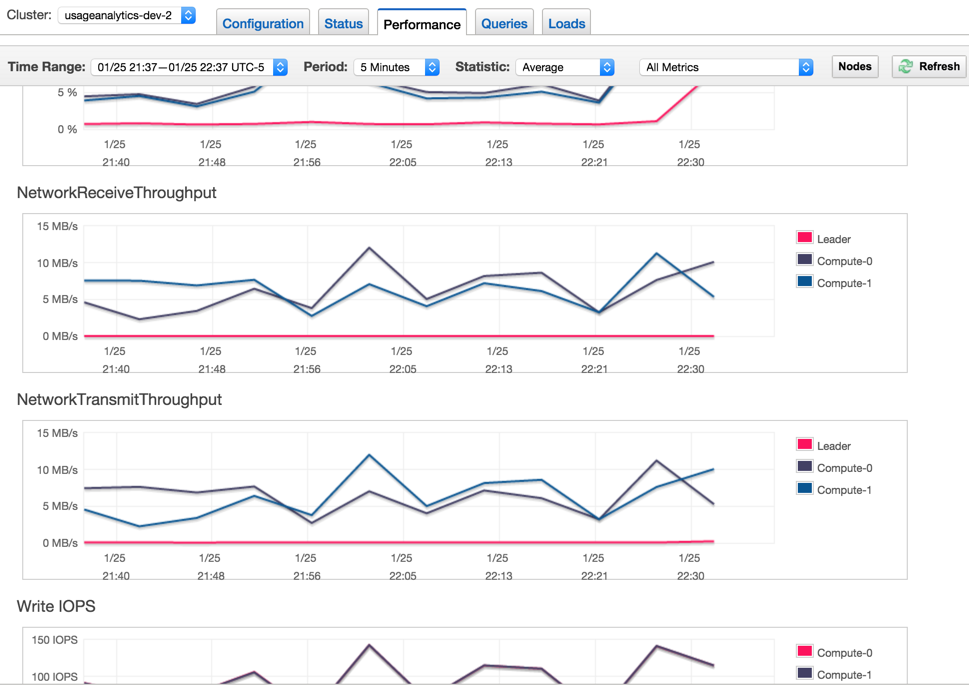

Compute

Compute

Compute

Compute

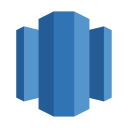

Sharding

Dist Key

CREATE TABLE searches (

account_id VARCHAR(128),

search_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP,

user_id VARCHAR(128) ENCODE LZO,

user_name VARCHAR(128) ENCODE LZO,

query_expression VARCHAR(500) ENCODE LZO,

advanced_query_expression VARCHAR(2048) ENCODE LZO,

response_time_ms INT ENCODE BYTEDICT,

PRIMARY KEY (search_id))

}

DISTKEY(search_id)

SORTKEY(account_id, datetime);

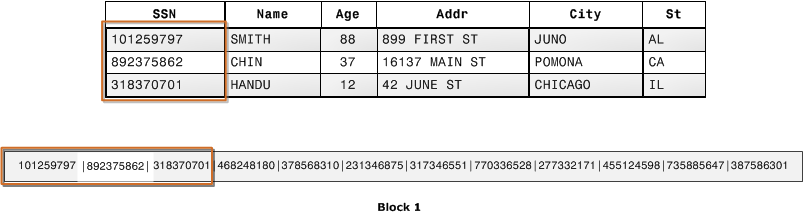

Columnar Storage

Sort Key

CREATE TABLE searches (

account_id VARCHAR(128),

search_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP,

user_id VARCHAR(128) ENCODE LZO,

user_name VARCHAR(128) ENCODE LZO,

query_expression VARCHAR(500) ENCODE LZO,

advanced_query_expression VARCHAR(2048) ENCODE LZO,

response_time_ms INT ENCODE BYTEDICT,

PRIMARY KEY (search_id))

}

DISTKEY(search_id)

SORTKEY(account_id, datetime);

Compression

CREATE TABLE searches (

account_id VARCHAR(128),

search_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP,

user_id VARCHAR(128) ENCODE LZO,

user_name VARCHAR(128) ENCODE LZO,

query_expression VARCHAR(500) ENCODE LZO,

advanced_query_expression VARCHAR(2048) ENCODE LZO,

response_time_ms INT ENCODE BYTEDICT,

PRIMARY KEY (search_id))

}

DISTKEY(search_id)

SORTKEY(account_id, datetime);

COPY

copy users from 's3://awssampledbuswest2/tickit/allusers_pipe.txt'

credentials 'aws_access_key_id=<access-key-id>;aws_secret_access_key=<secret-access-key>'

delimiter '|' region 'us-west-2';

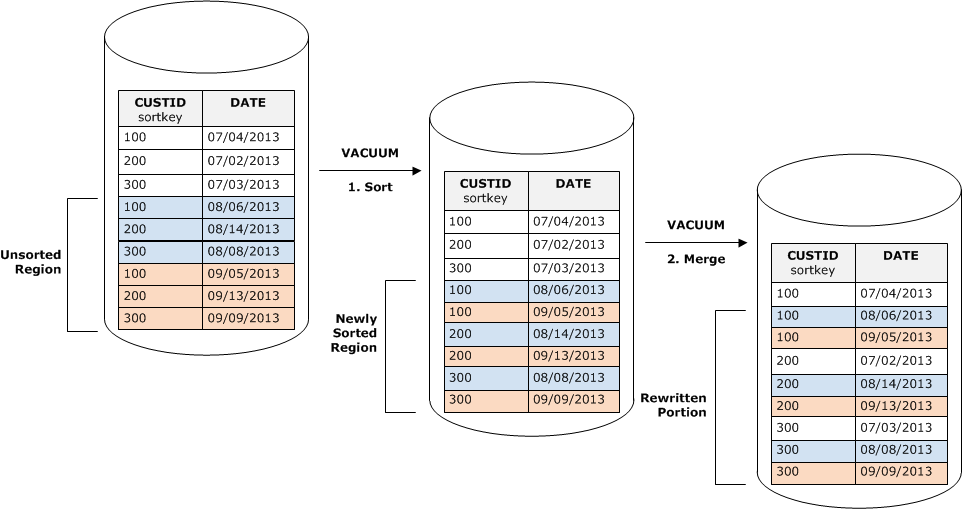

Vacuum

Analyze

Easy integration with AWS

- S3

- Kinesis

- DynamoDB

Fully managed

TL;DR

Redshift is pretty cool

<Insert technical guy here>

Let's build something

CREATE TABLE searches (

account_id VARCHAR(128),

search_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP,

query_expression VARCHAR(500) ENCODE LZO),

visit_id CHAR(36) ENCODE LZO,

DISTKEY(search_id)

SORTKEY(datetime,account_id);

create table custom_events(

account_id VARCHAR(128),

visit_id CHAR(36) ENCODE LZO,

search_id CHAR(36) ENCODE LZO,

event_type VARCHAR(128) ENCODE LZO,

event_value VARCHAR(128) ENCODE LZO,

visit_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP)

DISTSTYLE ALL

SORTKEY(datetime,account_id);Wait...

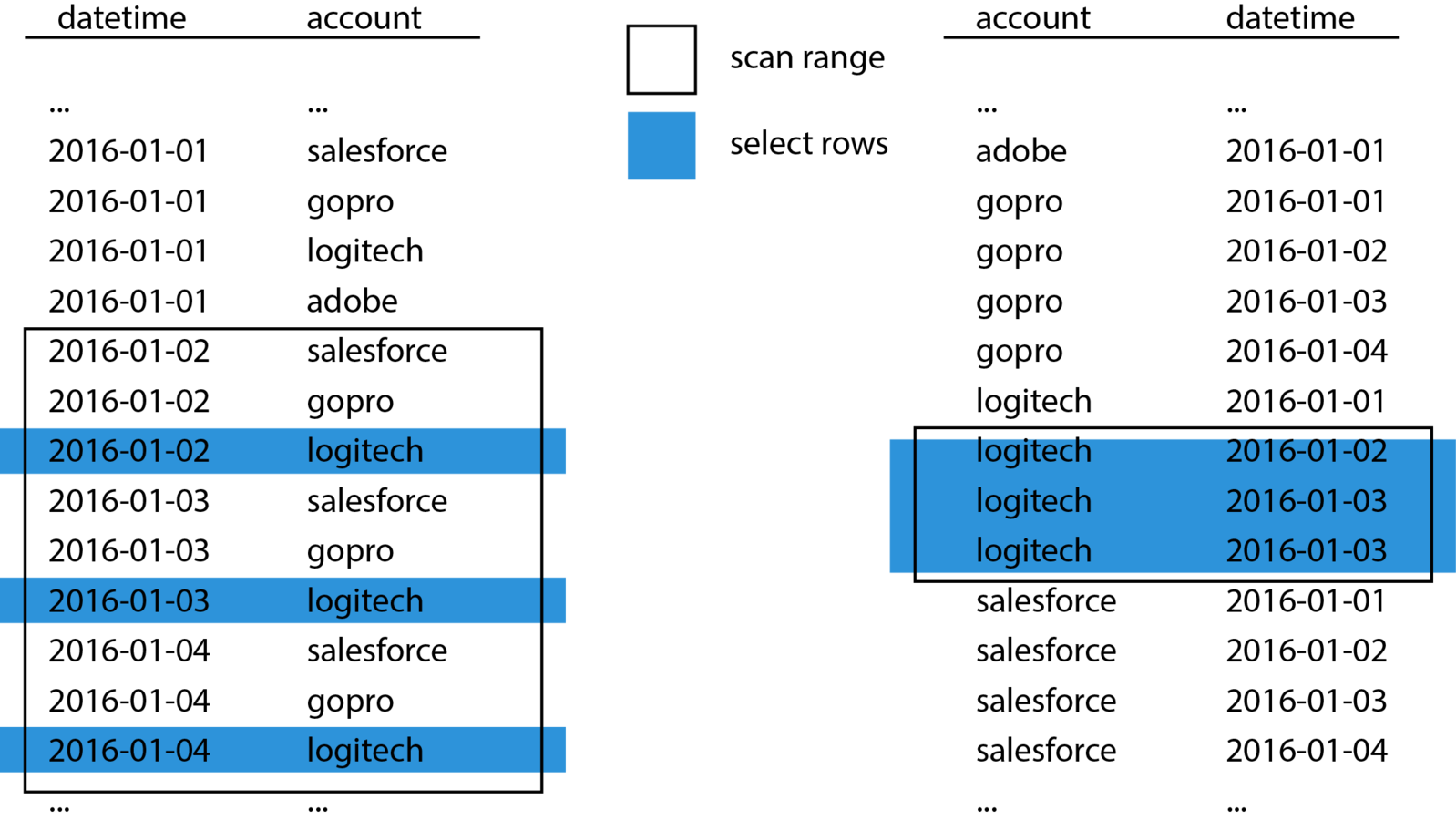

Gotcha #1 - Sorting

- Are there columns that you can always filter on?

- Sort key

- Lowest cardinality first

CREATE TABLE searches (

account_id VARCHAR(128),

search_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP,

query_expression VARCHAR(500) ENCODE LZO),

visit_id CHAR(36) ENCODE LZO,

DISTKEY(search_id)

SORTKEY(datetime,account_id);

CREATE TABLE searches (

account_id VARCHAR(128),

search_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP,

query_expression VARCHAR(500) ENCODE LZO),

visit_id CHAR(36) ENCODE LZO,

DISTKEY(search_id)

SORTKEY(account_id,datetime);Let's get all unique visits

SELECT count(DISTINCT coalesce("searches"."visit_id",

"custom_events"."visit_id")) AS "UniqueVisit"

FROM

(SELECT "visit_id",

"search_id"

FROM "ua"."searches"

WHERE ("datetime" BETWEEN TIMESTAMP '2015-05-22 05:00:00.0'

AND TIMESTAMP '2016-01-21 04:59:59.999')

AND "account_id" = 'charmander') AS "searches"

FULL OUTER JOIN

(SELECT "search_id",

"visit_id"

FROM "ua"."custom_events"

WHERE ("datetime" BETWEEN TIMESTAMP '2015-05-22 05:00:00.0'

AND TIMESTAMP '2016-01-21 04:59:59.999')

AND "account_id" = 'charmander') AS "custom_events"

ON "custom_events"."search_id" = "searches"."search_id";Wow... it's slow!

EXPLAIN is your best friend

XN Aggregate (cost=1445266.96..1445266.96 rows=1 width=112)

-> XN Subquery Scan volt_dt_0 (cost=1445266.73..1445266.91 rows=18 width=112)

-> XN HashAggregate (cost=1445266.73..1445266.73 rows=18 width=152)

-> XN Hash Full Join DS_DIST_INNER (cost=1.40..1445264.28 rows=979 width=152)

Inner Dist Key: custom_events_dist.search_id

Hash Cond: ("outer".search_id = "inner".search_id)

-> XN Seq Scan on searches (cost=0.00..285250.63 rows=979 width=80)

Filter: (((account_id)::text = 'charmander'::text)

AND ("datetime" >= '2015-05-22 05:00:00'::timestamp without time zone)

AND ("datetime" <= '2016-01-21 04:59:59.999'::timestamp without time zone))

-> XN Hash (cost=1.40..1.40 rows=1 width=224)

-> XN Seq Scan on custom_events (cost=0.00..1.40 rows=1 width=224)

Filter: (((account_id)::text = 'charmander'::text))

AND (("datetime" <= '2016-01-21 04:59:59.999'::timestamp without time zone)

AND ("datetime" >= '2015-05-22 05:00:00'::timestamp without time zone))XN Aggregate (cost=1445266.96..1445266.96 rows=1 width=112)

-> XN Subquery Scan volt_dt_0 (cost=1445266.73..1445266.91 rows=18 width=112)

-> XN HashAggregate (cost=1445266.73..1445266.73 rows=18 width=152)

-> XN Hash Full Join DS_DIST_INNER (cost=1.40..1445264.28 rows=979 width=152)

Inner Dist Key: custom_events_dist.search_id

Hash Cond: ("outer".search_id = "inner".search_id)

-> XN Seq Scan on searches (cost=0.00..285250.63 rows=979 width=80)

Filter: (((account_id)::text = 'charmander'::text)

AND ("datetime" >= '2015-05-22 05:00:00'::timestamp without time zone)

AND ("datetime" <= '2016-01-21 04:59:59.999'::timestamp without time zone))

-> XN Hash (cost=1.40..1.40 rows=1 width=224)

-> XN Seq Scan on custom_events (cost=0.00..1.40 rows=1 width=224)

Filter: (((account_id)::text = 'charmander'::text))

AND (("datetime" <= '2016-01-21 04:59:59.999'::timestamp without time zone)

AND ("datetime" >= '2015-05-22 05:00:00'::timestamp without time zone))Uh-oh

Gotcha #2

Data redistribution

Beware of :

DS_DIST_INNER (The inner table is redistributed)

DS_BCAST_INNER (A copy of the entire inner table is broadcast to all the compute nodes)

DS_DIST_ALL_INNER (The entire inner table is redistributed to a single slice because the outer table uses DISTSTYLE ALL)

DS_DIST_BOTH (Both tables are redistributed)

create table custom_events(

account_id VARCHAR(128),

visit_id CHAR(36) ENCODE LZO,

search_id CHAR(36) ENCODE LZO,

event_type VARCHAR(128) ENCODE LZO,

event_value VARCHAR(128) ENCODE LZO,

visit_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP)

DISTSTYLE ALL

SORTKEY(account_id,datetime);

create table custom_events(

account_id VARCHAR(128),

visit_id CHAR(36) ENCODE LZO,

search_id CHAR(36) ENCODE LZO,

event_type VARCHAR(128) ENCODE LZO,

event_value VARCHAR(128) ENCODE LZO,

visit_id CHAR(36) ENCODE LZO,

datetime TIMESTAMP)

DISTKEY(search_id)

SORTKEY(account_id,datetime);Distribute it

careers.coveo.com

?

Redshift

By Guillaume Simard

Redshift

AWS Redshift introduction

- 2,479