Learning Data Science

Lecture 9

Deep Learning Techniques

Inputs ➡️ f ➡️ Outputs

In most cases, we will never be able to determine the exact function

ML: algorithms that approximate these complex, non-linear functions as well as possible, without manual intervention

What is ML?

ML Algorithms

- Supervised: Classification, Regression

- Unsupervised: Clustering, Dimensionality Reduction

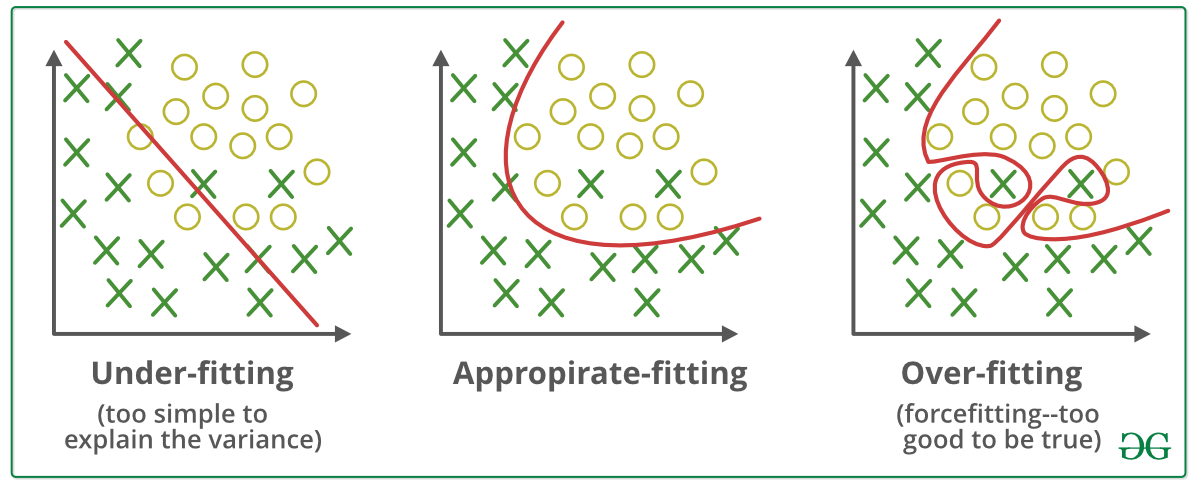

⚠️ Overfitting and Underfitting

- Overfitting: learning hyper-specific patterns that won't recur in the future

- Underfitting: failing to capture important patterns

The standard pipeline

- Gather your data

- Exploratory Data Analysis

- Cleaning and feature scaling

- Train/Test splitting

- Train model

- Evaluate model
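The "feature scaling" step can be sketched in plain Python. This is a minimal sketch assuming z-score standardization (one common choice; min-max scaling is another). `standardize` and the height values are hypothetical. In practice the mean and standard deviation are computed on the training split only and then reused on the test split, so no information leaks from the test set.

```python
from statistics import mean, pstdev

def standardize(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant feature carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

# Hypothetical feature: heights in centimetres.
heights_cm = [150.0, 160.0, 170.0, 180.0, 190.0]
scaled = standardize(heights_cm)
print([round(v, 2) for v in scaled])  # → [-1.41, -0.71, 0.0, 0.71, 1.41]
```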

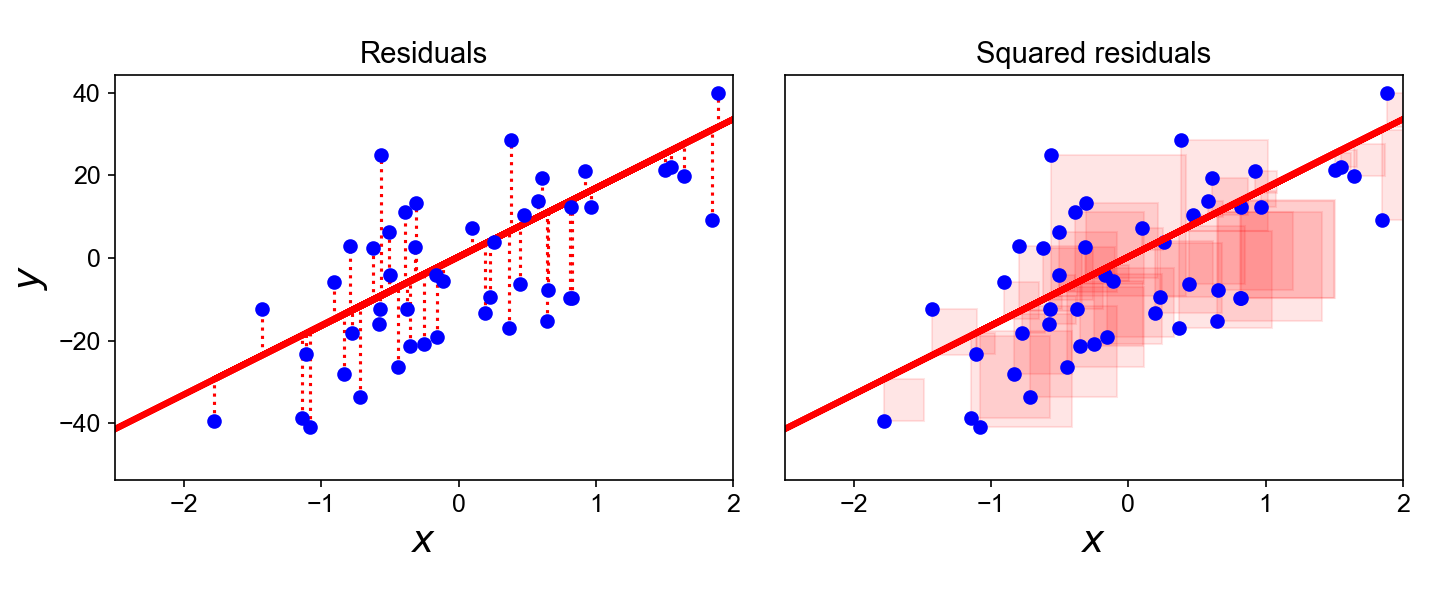

Linear Regression

Usually has an analytical solution!

Supervised + Regression
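"Analytical solution" means the best-fit line can be written down directly, with no iterative training. A minimal sketch for a single input feature, using the ordinary least-squares formulas (with several features the same idea becomes the normal equations, w = (XᵀX)⁻¹Xᵀy). The function name and data are hypothetical.

```python
def fit_simple_linear_regression(xs, ys):
    """Closed-form least-squares fit of y ≈ slope * x + intercept."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Covariance-like and variance-like sums around the means.
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx  # needs at least two distinct x values
    intercept = y_bar - slope * x_bar
    return slope, intercept

xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # exactly y = 2x + 1
print(fit_simple_linear_regression(xs, ys))  # → (2.0, 1.0)
```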

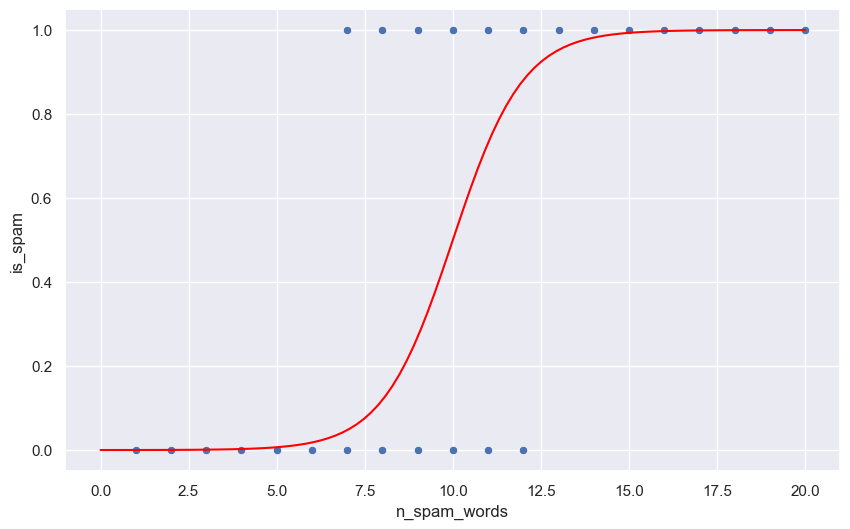

Logistic Regression

Variant of linear regression for binary classification

Supervised + Classification
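The link to linear regression: compute the same linear score w·x + b, then squash it through the sigmoid to get a probability of class 1, and threshold it. A minimal sketch for one feature; the parameter values are hypothetical (as if already learned), and the 0.5 threshold is a common default, not a requirement.

```python
import math

def sigmoid(z):
    """Map any real number into (0, 1), written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict_proba(x, w, b):
    """Linear score w*x + b turned into P(class = 1)."""
    return sigmoid(w * x + b)

def predict_class(x, w, b, threshold=0.5):
    return 1 if predict_proba(x, w, b) >= threshold else 0

# Hypothetical learned parameters.
w, b = 2.0, -4.0
for x in [0.0, 2.0, 4.0]:
    print(x, round(predict_proba(x, w, b), 3), predict_class(x, w, b))
```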

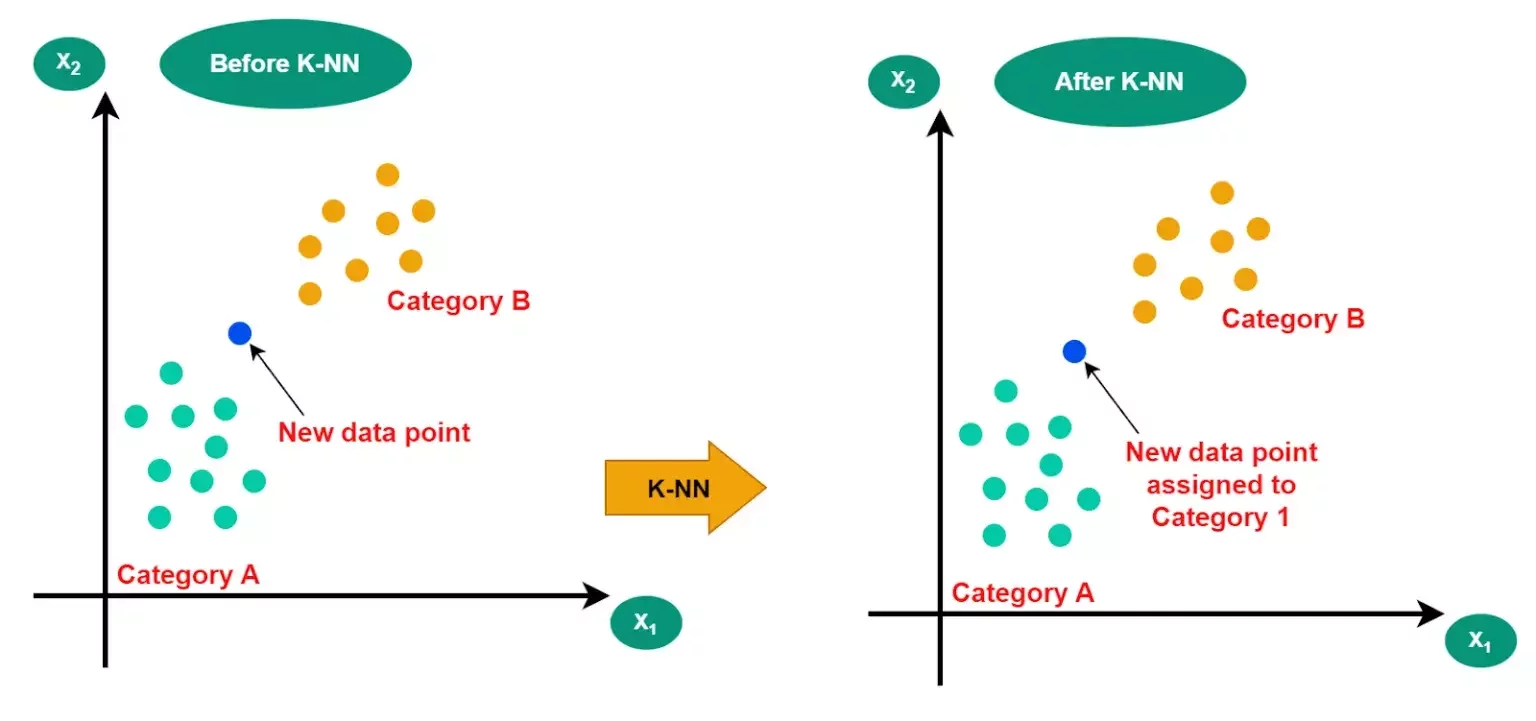

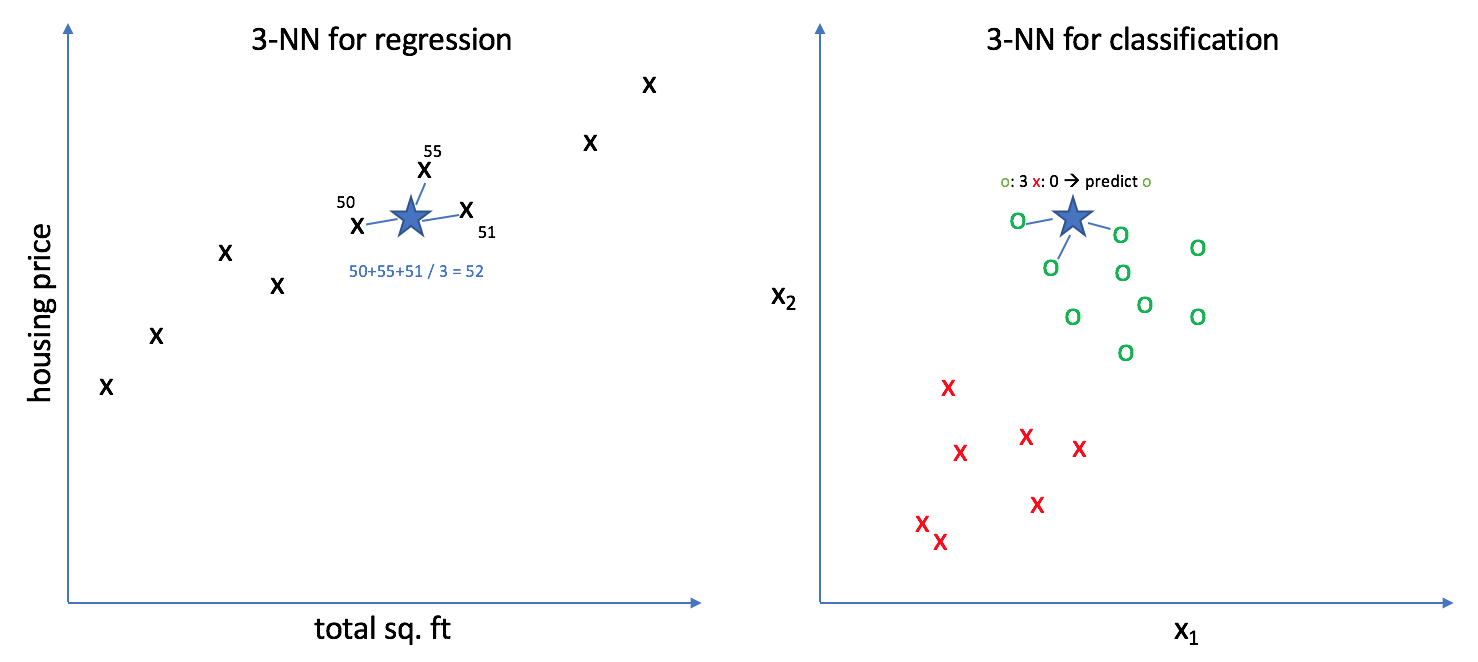

k-nearest neighbors

Supervised + Classification or Regression
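The same neighbour search serves both uses: a majority vote over the k nearest labels for classification, and an average of their target values for regression. A minimal sketch assuming Euclidean distance and k = 3; the function names and data are hypothetical.

```python
import math
from collections import Counter

def k_nearest(train_points, query, k):
    """Return the k (features, target) pairs closest to `query`."""
    return sorted(train_points, key=lambda p: math.dist(p[0], query))[:k]

def knn_classify(train_points, query, k=3):
    """Majority vote among the labels of the k nearest neighbours."""
    labels = [label for _, label in k_nearest(train_points, query, k)]
    return Counter(labels).most_common(1)[0][0]

def knn_regress(train_points, query, k=3):
    """Average of the target values of the k nearest neighbours."""
    values = [value for _, value in k_nearest(train_points, query, k)]
    return sum(values) / len(values)

labelled = [((0, 0), "A"), ((0, 1), "A"), ((1, 0), "A"),
            ((5, 5), "B"), ((5, 6), "B"), ((6, 5), "B")]
print(knn_classify(labelled, (0.5, 0.5)))  # → A

targets = [((1.0,), 1.0), ((2.0,), 2.0), ((3.0,), 3.0), ((10.0,), 10.0)]
print(knn_regress(targets, (2.0,)))  # → 2.0
```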

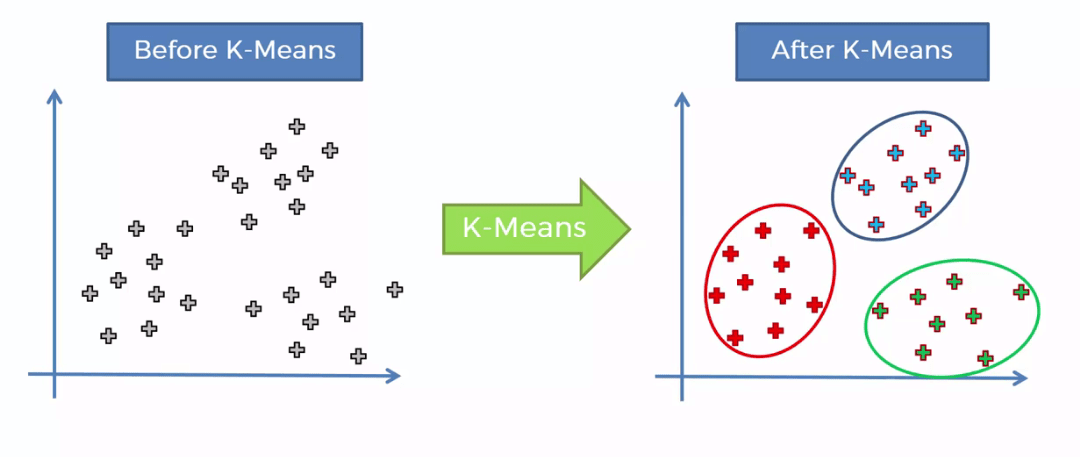

k-means clustering

Unsupervised + Clustering
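k-means needs no labels: it alternates between assigning each point to its nearest centroid and moving each centroid to the mean of its points, until nothing moves. A minimal sketch of this standard loop (Lloyd's algorithm), assuming random initialization from the data points; the function name and points are hypothetical.

```python
import math
import random

def k_means(points, k, n_iters=100, seed=0):
    """Lloyd's algorithm: alternate assigning points and moving centroids."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k randomly chosen data points
    for _ in range(n_iters):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its old centroid).
        new_centroids = [
            tuple(sum(coord) / len(c) for coord in zip(*c)) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # converged: no centroid moved
        centroids = new_centroids
    return centroids

# Hypothetical unlabelled 2D points forming two obvious groups.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
print(sorted(k_means(points, k=2)))  # ≈ [(0.33, 0.33), (10.33, 10.33)]
```

The result can depend on the random starting centroids, which is why k-means is often run several times with different seeds.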

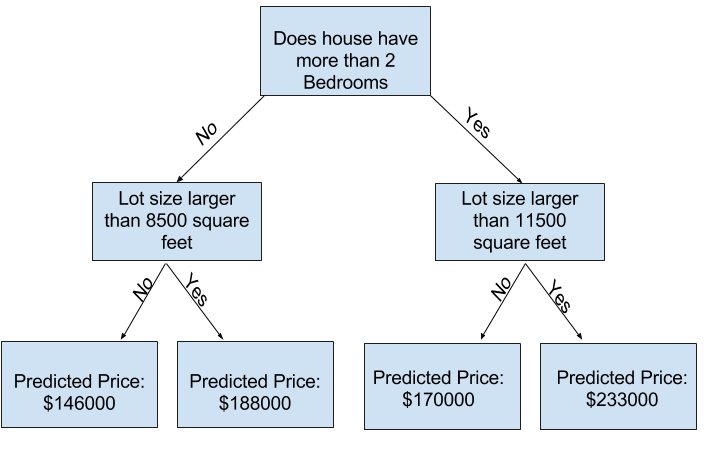

Decision Trees

Supervised + Regression/Classification
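A decision tree is grown by repeatedly picking the split that makes the two child groups as "pure" as possible. A minimal sketch of one such step for a single numeric feature, assuming Gini impurity as the purity measure (one common criterion; entropy is another). Function names and data are hypothetical.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0 for a pure group, larger when classes are mixed."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_threshold(xs, labels):
    """Try the midpoint between each pair of neighbouring sorted values and keep
    the split with the lowest size-weighted Gini impurity of the two children."""
    pairs = sorted(zip(xs, labels))
    best = (float("inf"), None)
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold separates identical values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [y for x, y in pairs if x <= threshold]
        right = [y for x, y in pairs if x > threshold]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(pairs)
        if score < best[0]:
            best = (score, threshold)
    return best

xs = [1, 2, 3, 10, 11, 12]
labels = ["fruit", "fruit", "fruit", "animal", "animal", "animal"]
print(best_threshold(xs, labels))  # → (0.0, 6.5)
```

A full tree applies this recursively to each child; a random forest trains many such trees on random subsets of rows and features and combines their votes.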

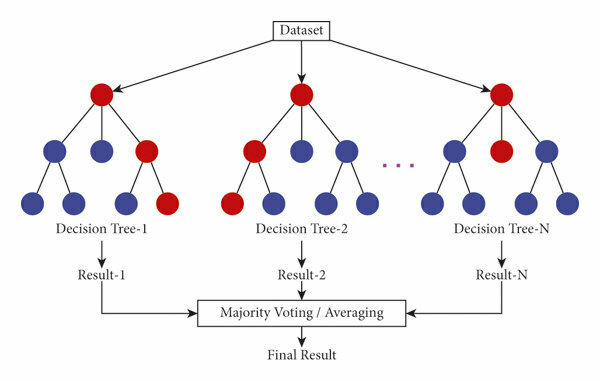

Random Forests

Supervised + Regression/Classification

✨ Ensemble ✨

Lecture 9

- Recap

- Deep Learning Intuition

- How Neural Networks learn

- Neural Nets from scratch

- Computer Vision with PyTorch

Animal

The more data an algorithm sees, the more it can expand its worldview

The Mother Algorithm

for supervised deep learning

- Gather your labeled data

- Initialize the parameters of your model [randomly]

- Let your algorithm guess something

- Select some metric to tell the algorithm how wrong it is

- Update the parameters to reduce this error
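The five steps above can be sketched with a one-parameter model y_hat = w * x. This is a minimal sketch assuming mean squared error as the metric and a hypothetical learning rate of 0.1; the data is generated from y = 3x, which the model is not told.

```python
import random

# 1. Gather labelled data (hypothetical: generated from y = 3x).
data = [(x, 3.0 * x) for x in [0.0, 0.5, 1.0, 1.5, 2.0]]

# 2. Initialize the single parameter randomly.
rng = random.Random(0)
w = rng.uniform(-1.0, 1.0)
learning_rate = 0.1  # hypothetical step size

for _ in range(200):
    # 3. Let the model guess.
    guesses = [w * x for x, _ in data]
    # 4. Measure how wrong it is: mean squared error.
    loss = sum((g - y) ** 2 for g, (_, y) in zip(guesses, data)) / len(data)
    # 5. Update w against the derivative dL/dw = mean(2 * (g - y) * x).
    grad = sum(2 * (g - y) * x for g, (x, y) in zip(guesses, data)) / len(data)
    w -= learning_rate * grad

print(round(w, 4))  # → 3.0
```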

Cost Model Intuition

Animals

Fruits

What is a simple cost model for this algorithm?


For example:

Cost = N_errors / N_predictions

2/11 ≈ 0.18 (2 wrong out of 11 predictions)
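The example cost in code, assuming a hypothetical set of 11 predictions of which 2 are wrong. One design note: this error rate only changes when a prediction flips, so its derivative with respect to the parameters is zero almost everywhere. That is why training usually optimizes a smooth loss (such as cross-entropy) and reports the error rate as a metric.

```python
def error_rate(predictions, labels):
    """Cost = N_errors / N_predictions: the fraction of wrong guesses."""
    n_errors = sum(p != t for p, t in zip(predictions, labels))
    return n_errors / len(predictions)

# Hypothetical: 6 animals and 5 fruits; the model gets 2 of the 11 wrong.
truth = ["animal"] * 6 + ["fruit"] * 5
guesses = ["animal"] * 5 + ["fruit"] + ["fruit"] * 4 + ["animal"]
print(round(error_rate(guesses, truth), 2))  # → 0.18
```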


ML ➡️ how do we reduce this cost as much as possible?

✨optimization✨

Inputs ➡️ f ➡️ Outputs

Human brains are really good at these tasks, largely thanks to our roughly 86 billion (~10^11) neurons

Now imagine a mathematical function with a comparable number of parameters...

671 billion parameters

Inputs ➡️ f ➡️ Outputs


How do Neural Networks Learn?

💫 Calculus

💫 Partial derivatives

The Loss Function

The loss function measures how poorly the model's predicted outputs match the true values (labels)

Also called the "Cost Function"

Inputs ➡️ f ➡️ Predicted Outputs, compared against the True Labels
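Two common loss functions, sketched in plain Python: mean squared error for regression and binary cross-entropy for binary classification. The slides do not name a specific loss, so these are assumptions; PyTorch ships equivalents as `torch.nn.MSELoss` and `torch.nn.BCELoss`. The example values are hypothetical.

```python
import math

def mean_squared_error(predicted, true):
    """Average squared gap between predicted outputs and true labels."""
    return sum((p - t) ** 2 for p, t in zip(predicted, true)) / len(true)

def binary_cross_entropy(predicted_probs, true_labels, eps=1e-12):
    """Loss for 0/1 labels; predicted_probs are probabilities of class 1."""
    total = 0.0
    for p, y in zip(predicted_probs, true_labels):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(true_labels)

print(mean_squared_error([2.5, 0.0, 2.0], [3.0, -0.5, 2.0]))  # ≈ 0.1667
print(binary_cross_entropy([0.9, 0.1], [1, 0]))  # ≈ 0.1054: confident and right, so small
```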

Partial derivatives let us understand how one single parameter of our model affects the loss function

💫 Partial derivatives

Out of 671 billion parameters, pick one: call it w

- If ∂L/∂w is positive, then an increase in w ➡️ increase in L

- If ∂L/∂w is negative, then an increase in w ➡️ decrease in L

✨ The partial derivative of our loss function tells us which direction to nudge each parameter (and how sensitive the loss is to it)
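The sign rules can be checked numerically by nudging one parameter while holding the others fixed. This is a minimal sketch using a central finite difference on a hypothetical two-parameter loss; deep learning frameworks instead compute these derivatives exactly with automatic differentiation (backpropagation), and nudging like this is mainly useful as a sanity check.

```python
def loss(params):
    """Hypothetical loss with its minimum at w0 = 1, w1 = -2."""
    w0, w1 = params
    return (w0 - 1.0) ** 2 + 3.0 * (w1 + 2.0) ** 2

def partial_derivative(f, params, i, h=1e-6):
    """Estimate ∂f/∂params[i] by nudging only parameter i up and down."""
    up = list(params)
    up[i] += h
    down = list(params)
    down[i] -= h
    return (f(up) - f(down)) / (2 * h)

params = [3.0, -2.5]
print(partial_derivative(loss, params, 0))  # ≈ 4.0: positive, so increasing w0 increases L
print(partial_derivative(loss, params, 1))  # ≈ -3.0: negative, so increasing w1 decreases L
```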


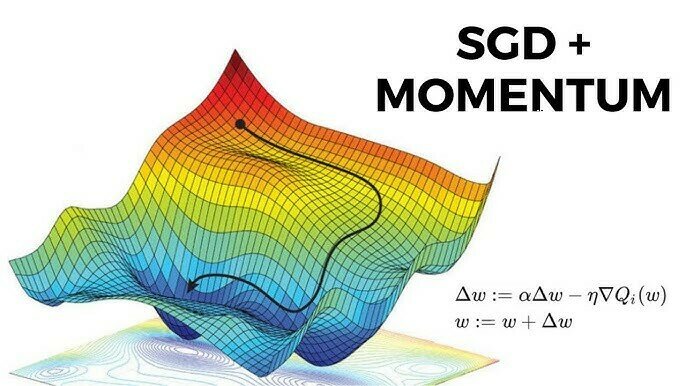

This process is called Gradient Descent
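Written as an update rule, one gradient descent step for a parameter w looks like this, where η > 0 is the learning rate (a symbol introduced here, not from the slides):

```latex
w \;\leftarrow\; w - \eta \, \frac{\partial L}{\partial w}
\qquad\text{and, for all parameters at once,}\qquad
\theta \;\leftarrow\; \theta - \eta \, \nabla_{\theta} L
```

The minus sign turns the two sign rules into a recipe: a positive ∂L/∂w lowers w, a negative one raises it, and for a small enough η either step reduces L.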

🤓 Let's go to the blackboard!


Time for live coding!

The notebook will be posted on Indico later.


PyTorch

Open source deep learning framework

✨ Nearly all of the functions we just coded by hand are included


- NumPy-like tensor operations that can also run on GPUs

- activation functions

- loss functions

- automatic differentiation and backprop

- ...and that's just the beginning!

PyTorch Lightning

One layer on top of PyTorch!

- Automatic training loops

- Checkpointing (i.e. you can stop and restart training from where you left off)

- Nice logging

- Easy training on multiple GPUs or computers

uv add lightning

Validation Dataset

- Train Dataset: the model learns patterns from it

- Validation Dataset: used to judge the model during training

- Test Dataset: the model is graded on it at the very end


From now on, let's try to also use validation datasets

- extra unseen data that you can keep using during training

- Monitor for over/underfitting

- Use for hyperparameter tuning

- Use for model selection
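A three-way split can be sketched by shuffling once and carving off disjoint slices. This is a minimal sketch; the function name and the 70/15/15 proportions are hypothetical choices, not a rule. (With scikit-learn, calling `sklearn.model_selection.train_test_split` twice achieves the same effect.)

```python
import random

def train_val_test_split(items, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle once, then slice off disjoint test, validation and train sets."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_test = round(len(items) * test_frac)
    n_val = round(len(items) * val_frac)
    test = items[:n_test]
    val = items[n_test:n_test + n_val]
    train = items[n_test + n_val:]
    return train, val, test

train, val, test = train_val_test_split(range(100))
print(len(train), len(val), len(test))  # → 70 15 15
```

Only the test slice is held back until the very end; the validation slice can be evaluated as often as needed while training and tuning.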

Let's code with PyTorch!

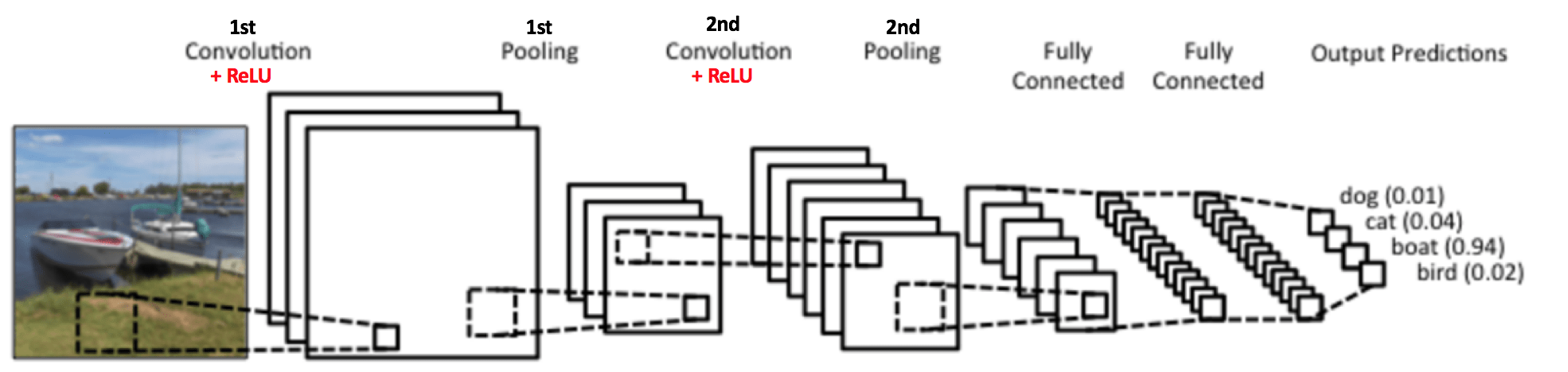

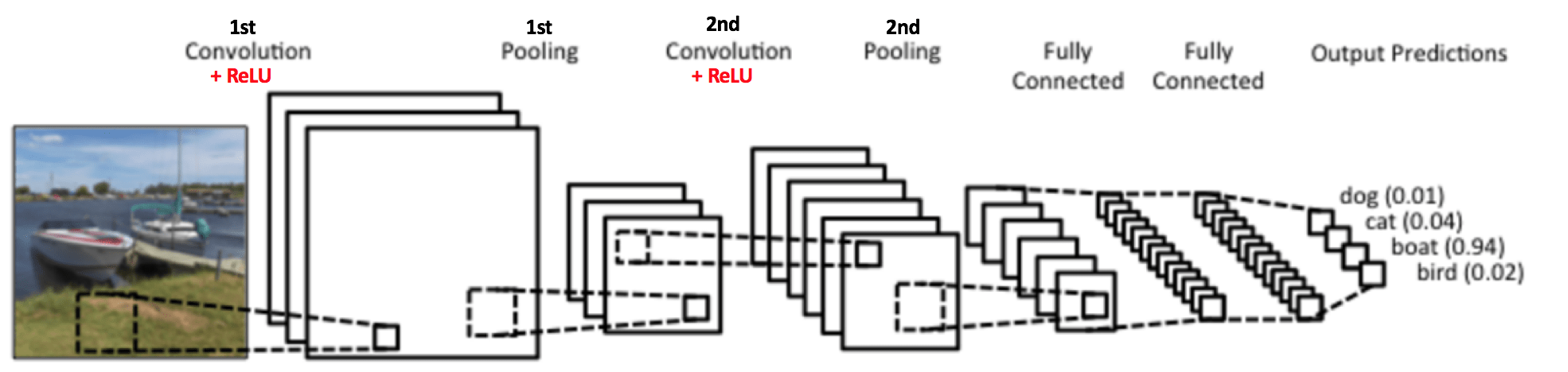

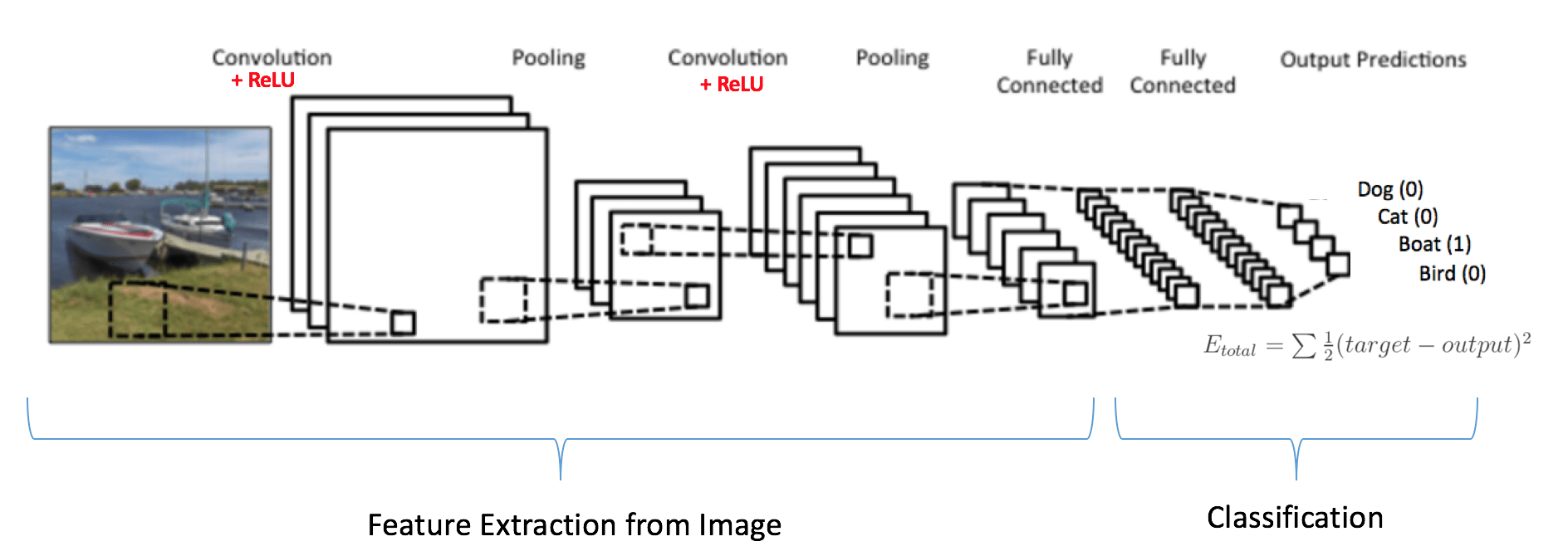

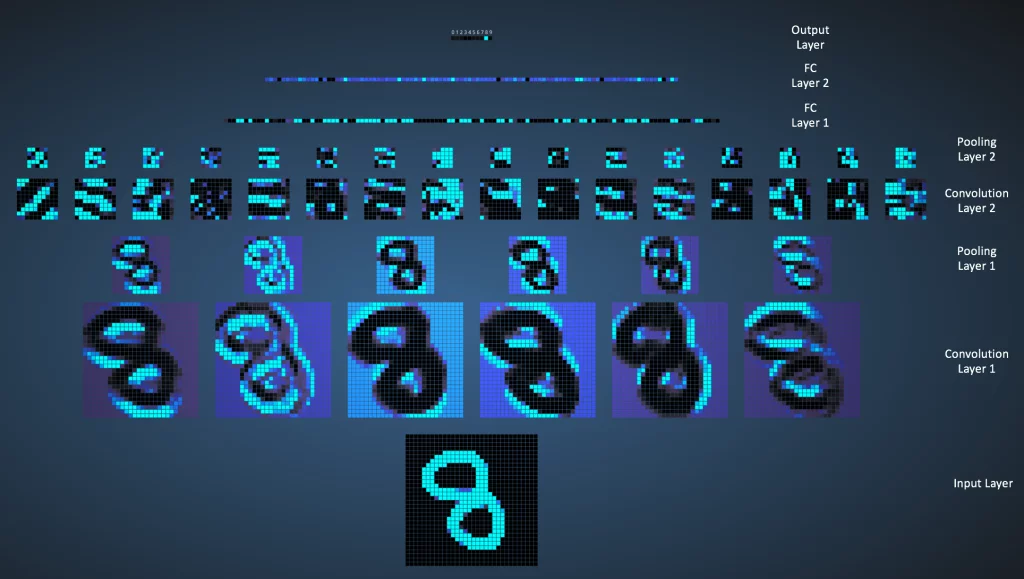

Convolutional Neural Networks (CNN)

in a nutshell

Convolutional Neural Networks

A type of neural network (NN) that specializes in computer vision

We want to extract new features from the images in a way that encodes spatial information as well


✨ Dimensionality Reduction

Convolutional Neural Networks

A good recipe:

- Input

- Convolution(s)

- Activation Function

- Pooling

- Repeat one or more times
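Following the recipe shrinks the image at every block, which is where the dimensionality reduction comes from. A minimal sketch of the shape bookkeeping, using the standard output-size formula floor((n + 2·padding − kernel) / stride) + 1 for convolution and pooling layers; the 28x28 input, 3x3 convolutions without padding, and 2x2 pooling are hypothetical choices.

```python
def conv_output_size(n, kernel, stride=1, padding=0):
    """Side length after a convolution or pooling layer."""
    return (n + 2 * padding - kernel) // stride + 1

size = 28  # hypothetical 28x28 grayscale input
for block in range(2):
    size = conv_output_size(size, kernel=3)            # 3x3 convolution, no padding
    # (the activation function does not change the shape)
    size = conv_output_size(size, kernel=2, stride=2)  # 2x2 max pooling
    print(f"after block {block + 1}: {size}x{size}")
# → after block 1: 13x13
# → after block 2: 5x5
```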

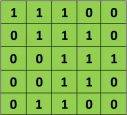

Example image ∗ a cute 3x3 matrix (the kernel) = the convolution of the two
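The operation slides the 3x3 kernel over the image and, at each position, sums the element-wise products with the patch underneath. A minimal sketch assuming "valid" mode (no padding, stride 1) and a hypothetical vertical-edge kernel. As in PyTorch's `torch.nn.Conv2d`, the kernel is not flipped, so strictly this is cross-correlation; the difference does not matter when the kernel is learned.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution: no padding, stride 1, kernel not flipped."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Sum of element-wise products between the kernel and the patch under it.
            row.append(sum(kernel[a][b] * image[i + a][j + b]
                           for a in range(kh) for b in range(kw)))
        out.append(row)
    return out

# Hypothetical 4x5 image: dark on the left, bright on the right.
image = [
    [0, 0, 0, 10, 10],
    [0, 0, 0, 10, 10],
    [0, 0, 0, 10, 10],
    [0, 0, 0, 10, 10],
]
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
for row in conv2d(image, vertical_edge):
    print(row)
# → [0, 30, 30]
# → [0, 30, 30]
```

The output is large only where brightness changes from left to right, which is the sense in which a convolution acts like a filter.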

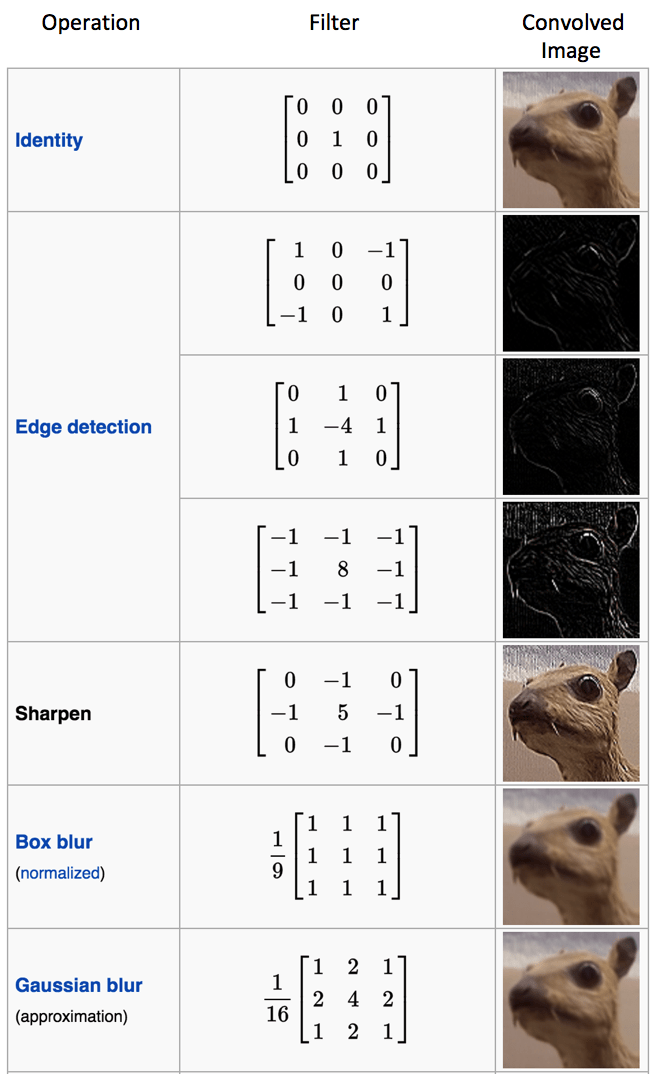

Convolutions

Convolutions act like filters on an image!

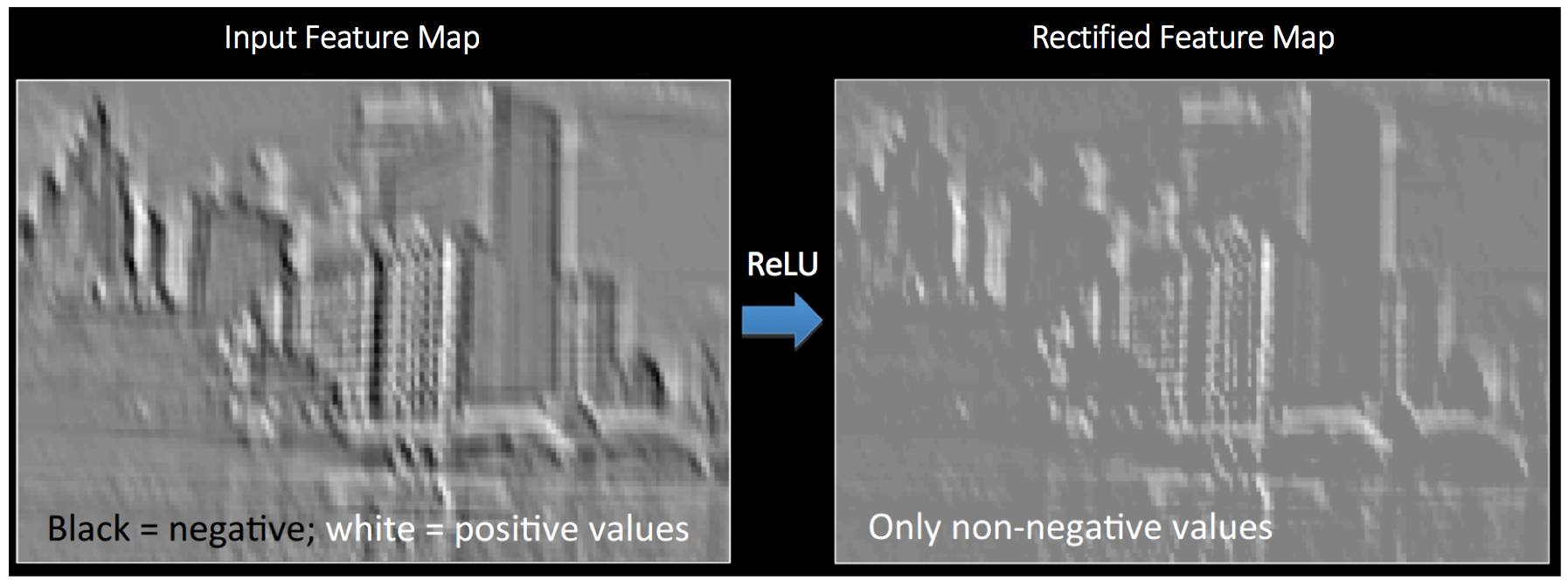

Then apply an activation function to add non-linearity
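Without a non-linearity, stacking convolutions would still be one big linear operation. A minimal sketch assuming ReLU, max(0, x), as the activation (a common choice for CNNs; the slides do not name one), applied entry by entry to a hypothetical feature map.

```python
def relu(feature_map):
    """Apply max(0, x) to every entry: negatives become 0, positives pass through."""
    return [[max(0.0, v) for v in row] for row in feature_map]

print(relu([[-30.0, 0.0, 30.0], [5.0, -1.5, 2.0]]))
# → [[0.0, 0.0, 30.0], [5.0, 0.0, 2.0]]
```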

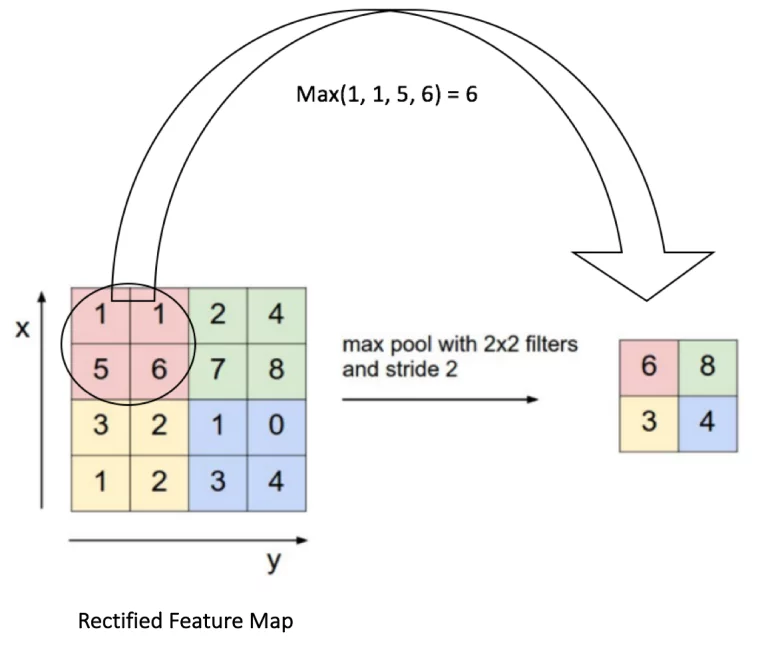

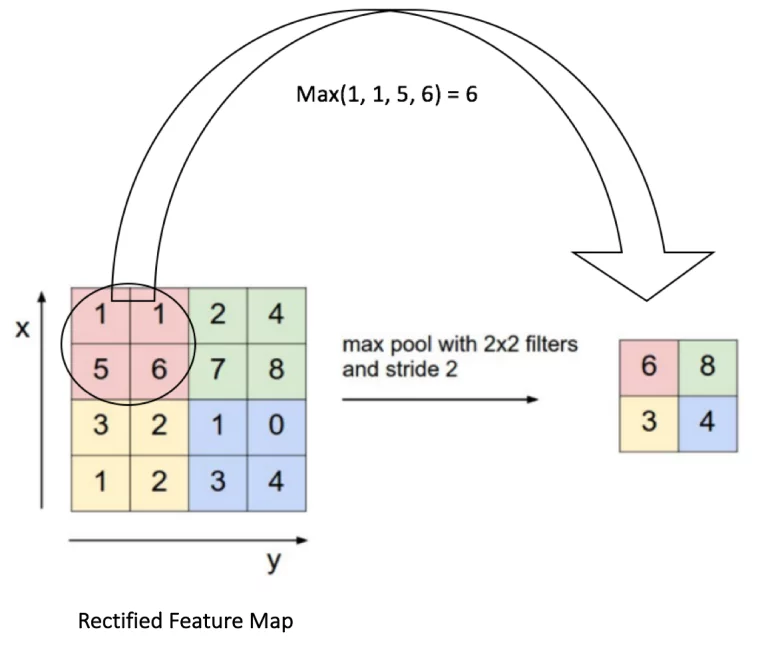

Pooling

- Use some function to combine neighboring groups of pixels

- E.g. max pooling

- Just take the maximum pixel value from each window
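Max pooling over non-overlapping 2x2 windows halves each side of the feature map. A minimal sketch on a hypothetical 4x4 map; the window size and stride of 2 are assumptions (the same setting as `torch.nn.MaxPool2d(kernel_size=2, stride=2)`).

```python
def max_pool2d(feature_map, size=2, stride=2):
    """Keep only the largest value in each size x size window."""
    out = []
    for i in range(0, len(feature_map) - size + 1, stride):
        row = []
        for j in range(0, len(feature_map[0]) - size + 1, stride):
            window = [feature_map[i + a][j + b] for a in range(size) for b in range(size)]
            row.append(max(window))
        out.append(row)
    return out

fm = [
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 9, 2],
    [3, 2, 4, 6],
]
print(max_pool2d(fm))  # → [[4, 5], [3, 9]]
```

Sixteen values become four, and shifting a strong activation by one pixel inside its window leaves the output unchanged, which is the invariance mentioned below.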

Pooling

Why?

- Reduce the number of input features, making training more manageable

- Fewer features means fewer parameters in the following layers, which helps avoid overfitting

- Adds invariance to small local distortions, transformations, or translations

- Can add scale invariance as well

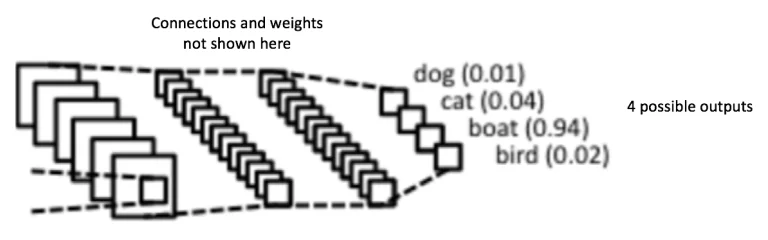

Fully connected layers

- Lastly, use the highly reduced feature sets as inputs to a fully-connected NN.

- Exactly like the one we just created from scratch!

- A cheap way of learning non-linear combinations of our features
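After flattening, the reduced features go through ordinary fully connected layers: every output is a weighted sum of every input plus a bias, usually followed by an activation. A minimal sketch with hypothetical weights; the weight layout `weights[k][i]` (output k, input i) mirrors the `(out_features, in_features)` layout of `torch.nn.Linear`.

```python
def dense_layer(x, weights, biases):
    """Fully connected layer: output k = sum_i weights[k][i] * x[i] + biases[k]."""
    return [sum(w_ki * x_i for w_ki, x_i in zip(w_k, x)) + b_k
            for w_k, b_k in zip(weights, biases)]

def relu(v):
    return [max(0.0, a) for a in v]

# Hypothetical flattened features, e.g. a 2x2 pooled map.
features = [4.0, 5.0, 3.0, 9.0]
hidden = relu(dense_layer(features,
                          [[0.1, 0.0, -0.2, 0.3], [-0.5, 0.2, 0.0, 0.1]],
                          [0.0, 0.5]))
scores = dense_layer(hidden, [[1.0, -1.0]], [0.0])
print(hidden, scores)  # ≈ [2.5, 0.4] [2.1]
```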

The full picture


Let's code!


The End

Learning Data Science Lecture 9

By astrojarred