Scaling a MeteorJS SaaS app on AWS

Brett McLain

Application Systems Architect

PotashCorp

What should you get out of this talk?

- A better understanding of MeteorJS (architecture, deployment tools, clustering).

- A better understanding of Amazon Web Services (EC2, ELB, OpsWorks, CloudFormation, Route 53, Auto Scaling).

- How to deploy, cluster, and scale a MeteorJS web app.

Application Overview

- SaaS application that uses Twilio to send SMS and phone calls to devices that connect to the cell phone network.

- MeteorJS (runs on Node.js)

- MongoDB

- Flot Charts

- Twitter Bootstrap

- Blah blah blah.

What is MeteorJS?

- "Meteor is a full-stack JavaScript platform for developing modern web and mobile applications."

- Out of the box tight integration with MongoDB + full data reactivity.

- Install MeteorJS and have an app written in minutes with well established and easily understood patterns.

- Hot code push.

- Full data reactivity.

- Easily build for Android and iPhone (hot code push).

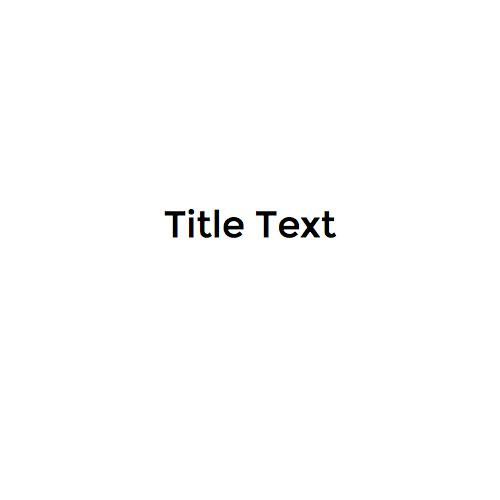

Meteor Architecture

- Production deployments are NodeJS applications.

- Meteor has its own package manager called isobuild. Extremely similar to NPM.

- Meteor can also use NPM packages now on the client or server (as of version 1.3).

- Supports ECMAScript 6 (ES 2015).

- Meteor Up (mup) - Meteor deployment plugin.

- Meteor Cluster - Meteor clustering plugin.

Meteor Deployments

- I use Meteor Up (mup) for deployments.

- Single command to build and deploy code to an unlimited number of servers in a rolling fashion.

- Installs all necessary software automatically (NodeJS, npm, MeteorJS, docker, nginx, mongo).

- Sets up an nginx reverse proxy in front of a docker instance for meteor and a docker instance for mongo.

- 10 seconds of downtime per instance!

{
  // Server authentication info
  "servers": [
    {
      "host": "localhost",
      "username": "ubuntu",
      "pem": "~/RelaySupply.pem"
    }
  ],

  // Install MongoDB in the server, does not destroy local MongoDB on future setup
  "setupMongo": false,

  // WARNING: Node.js is required! Only skip if you already have Node.js installed on server.
  "setupNode": true,

  // WARNING: If nodeVersion omitted will setup 0.10.36 by default. Do not use v, only version number.
  "nodeVersion": "0.10.40",

  // Application name (No spaces)
  "appName": "RelaySupplyControlPanel",

  // Configure environment
  "env": {
    "PORT": 80,
    "ROOT_URL": "https://controlpanel.relaysupply.com",
    "MONGO_URL": "mongodb://10.0.2.151:27017,10.0.3.138:27017,10.0.4.44:27017/relaysupply-prod",
    "MONGO_OPLOG_URL": "mongodb://10.0.2.151:27017,10.0.3.138:27017,10.0.4.44:27017/local",
    "DISABLE_WEBSOCKETS": "0",
    "CLUSTER_BALANCER_URL": "REPLACE_ME",
    "CLUSTER_DISCOVERY_URL": "mongodb://10.0.2.151:27017/relaysupply-prod",
    "CLUSTER_SERVICE": "web"
  },

  // Meteor Up checks if the app comes online just after the deployment
  // before mup checks that, it will wait for no. of seconds configured below
  "deployCheckWaitTime": 60,

  "ssl": {
    "certificate": "./.prod/fullchain.pem",
    "key": "./.prod/privkey.pem",
    "port": 443
  }
}

Meteor Cluster

- Provides clustering capability within Meteor JS apps.

- Meteor Cluster is a regular meteor package.

- Turns each meteor server into a load balancer or a DDP instance (backend server).

- New nodes register with MongoDB backend and are auto-discovered. Traffic begins routing to them immediately.

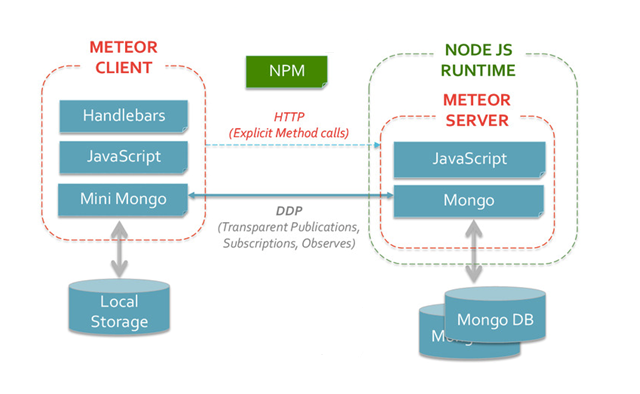

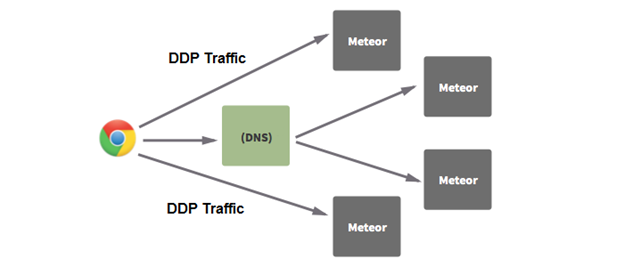

Round Robin DNS Load Balancing

1 Load Balancer, 2 DDP Servers

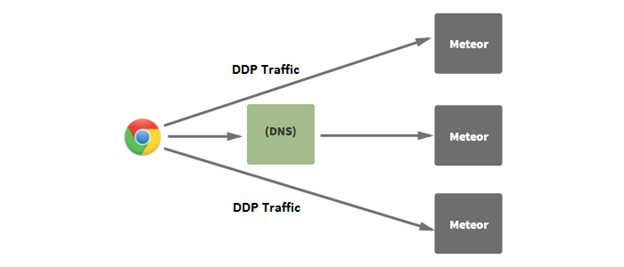

2 Load Balancers, 2 DDP Servers

Meteor Clustering

- Load balancer servers are pointed to by DNS records.

- Once the client connects to a load balancer server, it *might* receive the address of a DDP server to share out the load.

- Supports clustering of microservices too!
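The two-step routing above (DNS picks a load balancer, the load balancer *might* hand back a DDP server) can be sketched as a toy simulation in plain JavaScript. This is not the Meteor Cluster package's code: `makeRoundRobin`, `connect`, and the host names are hypothetical, and strict round-robin selection is an assumption made for illustration.

```javascript
// Toy model of the two-step routing described above (not the Meteor Cluster source).
// All function names and host names are hypothetical.

// Returns a function that cycles through `items` in order, like round-robin DNS.
function makeRoundRobin(items) {
  let next = 0;
  return function pick() {
    const item = items[next % items.length];
    next += 1;
    return item;
  };
}

// A client first lands on a balancer. The balancer may hand back the address
// of a DDP server, or (null) keep serving the client itself.
function connect(pickBalancer, pickDdpServer) {
  const balancer = pickBalancer();
  const ddpServer = pickDdpServer();
  return { balancer, ddp: ddpServer || balancer };
}

const pickBalancer = makeRoundRobin(["lb-1.example.com", "lb-2.example.com"]);
// The null entry models the "might" case: no hand-off for that client.
const pickDdpServer = makeRoundRobin(["ddp-1.example.com", null, "ddp-2.example.com"]);

const sessions = [];
for (let i = 0; i < 4; i += 1) {
  sessions.push(connect(pickBalancer, pickDdpServer));
}
console.log(sessions);
```

In the real package, new nodes are discovered through MongoDB rather than a fixed list; the fixed arrays here only stand in for that registry.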

So...now what?

- Meteor has some great deployment and clustering tools, but how do we deploy it in a scalable way?

- We need to pick a host that fits well with our deployment tools and utilize the clustering capabilities of Meteor.

Initial Objectives

- Low Cost!

- Availability

- Performance

- Low Maintenance

Where to start?

- Originally hosted everything (prod, dev, mongo, reverse proxy) on a single Virtual Private Server (VPS).

- I have past experience with Amazon; it's well documented and has tons of tools.

- Google *appears* pricier, fewer tools.

- Digital Ocean…?

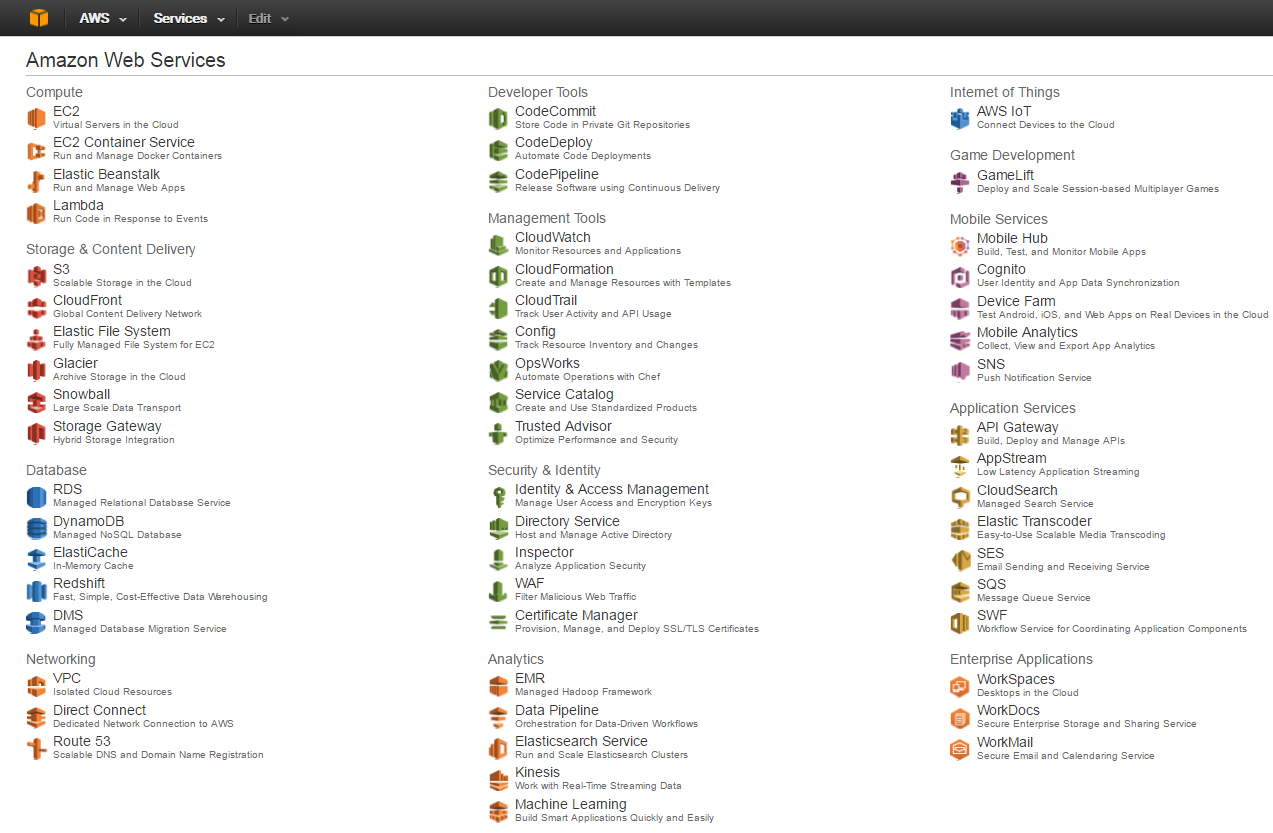

So what does Amazon offer?

- EC2 (Elastic Compute Cloud)

- Elastic Beanstalk

- Elastic Load Balancers

- Route 53 (DNS)

- OpsWorks

- CloudFormation

- Elastic Block Store

- The list goes on!

Elastic Compute Cloud (EC2)

- On demand infrastructure.

- Amazon Machine Image (AMI) of many popular operating systems, platforms, community images, etc.

- Reserved Instances - t2.micro = $0.006/hour - 3 year contract. Relatively cheap.

- Spot instances (bid for unused servers @ 80% of cost).

- Can apply auto-scaling groups.

- Fairly basic...let's see what else Amazon has!

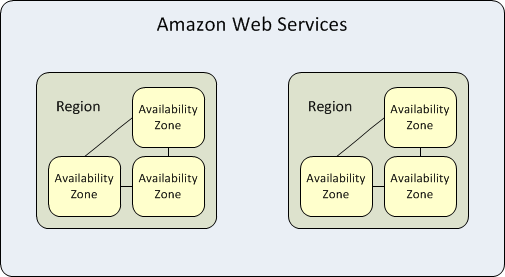

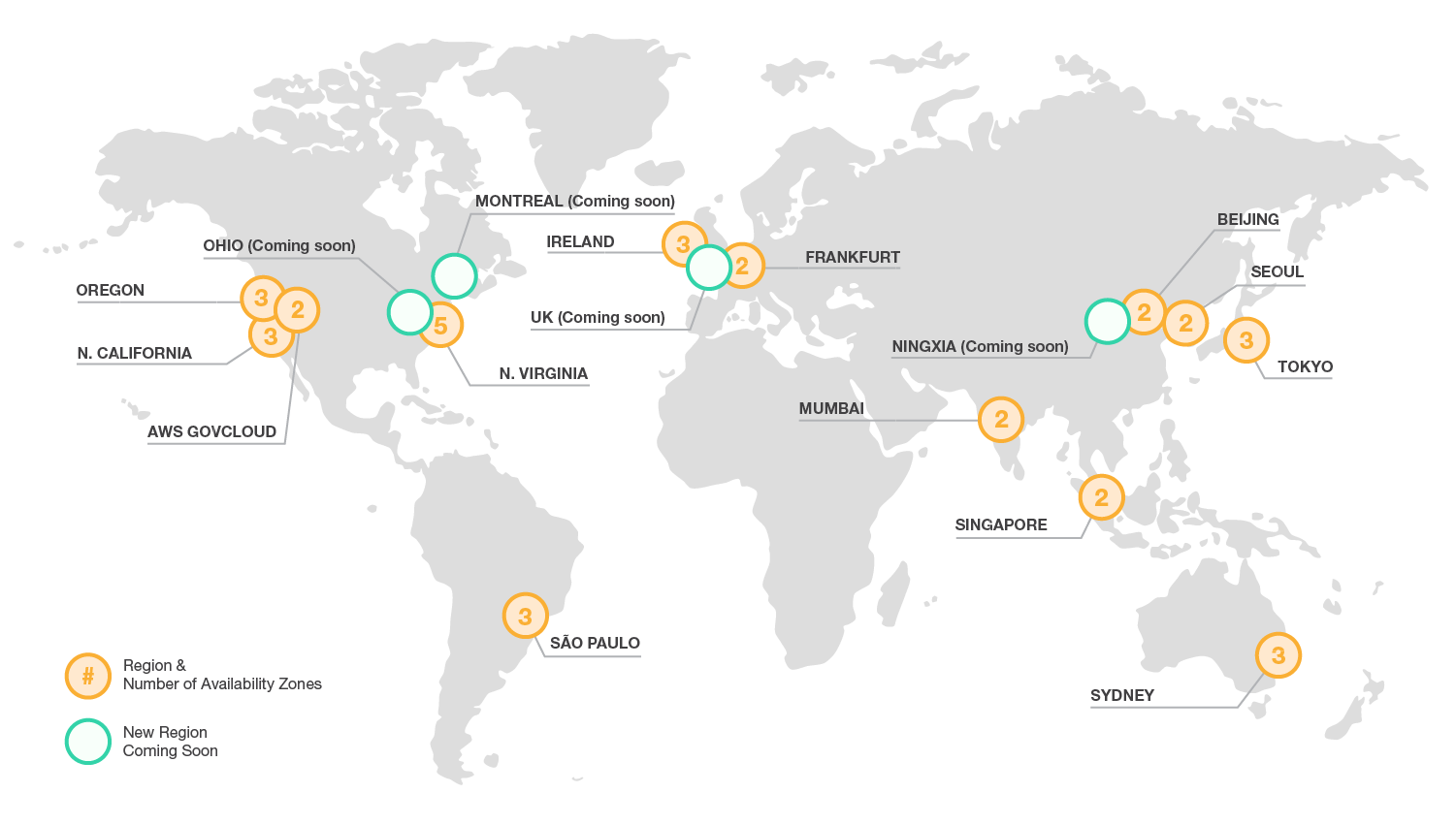

EC2 Regions and Availability Zones

- 11 Regions (e.g. US East)

- 33 Availability Zones

- us-east-1a, us-east-1b, etc. are availability zones, while US East (N. Virginia), US West (Oregon), and Asia Pacific (Tokyo) are regions.

Elastic Beanstalk

- Elastic Beanstalk is a Platform as a Service (PaaS) that handles the complexities of your underlying infrastructure.

- Manages load balancing (via ELBs), instance scaling, instance health monitoring, and deployments.

- Supports Docker, Go, Java, .NET, Node, PHP, Python, Ruby.

Let's see how this goes...

- Elastic Beanstalk seemed like a great solution. Handles scaling and load balancing (with ELB).

- Ran into problems very quickly...

- MeteorJS settings are a JSON file exported as an environment variable. Beanstalk strips environment variables of a number of special characters!

- Quickly realized that it is not possible to combine websockets, sticky sessions, and HTTPS on Elastic Load Balancers.

How about OpsWorks?

- OpsWorks is a configuration management service that helps you configure and operate applications of all shapes and sizes using Chef.

- You can define the application’s architecture and the specification of each component including package installation, software configuration and resources such as storage.

Nope.

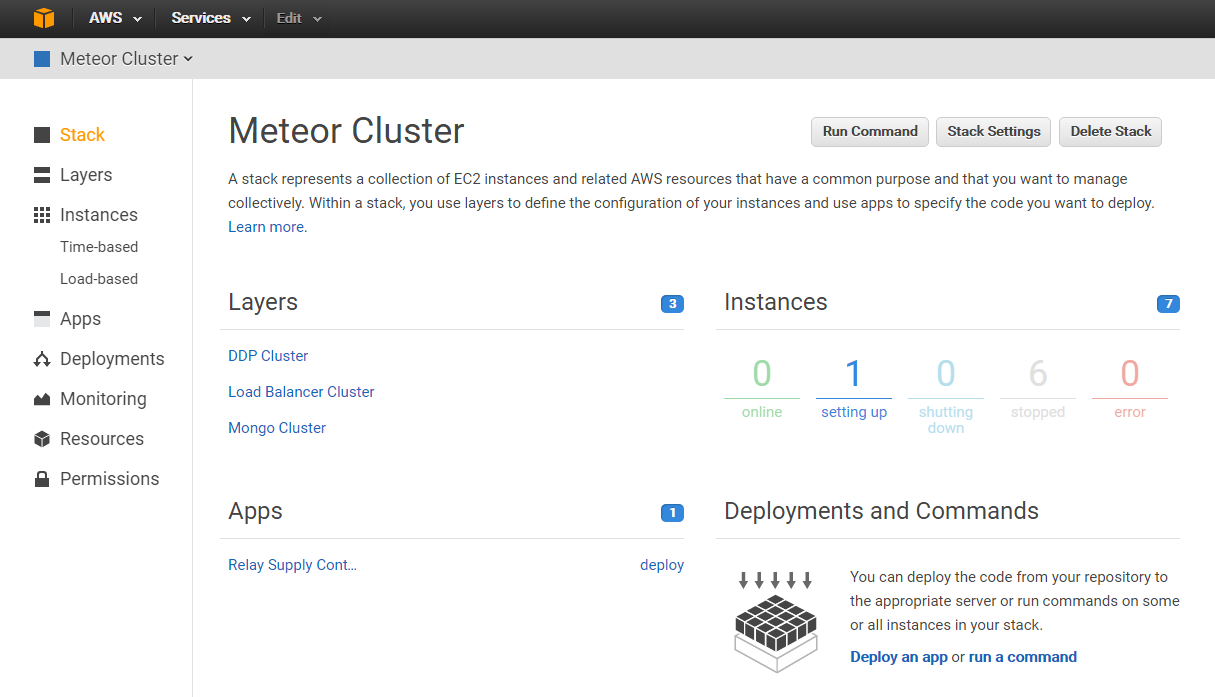

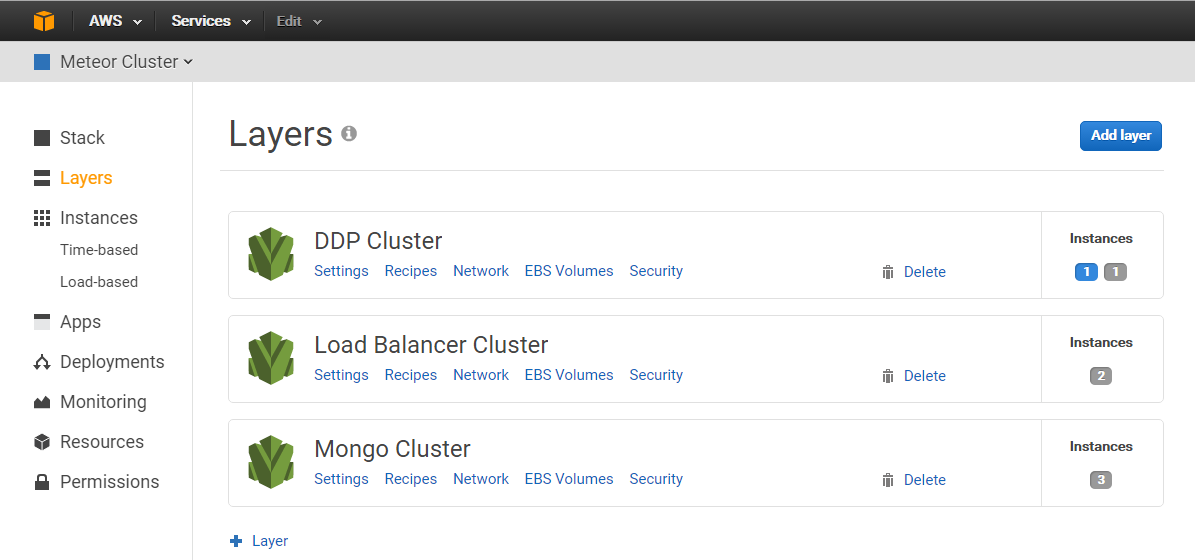

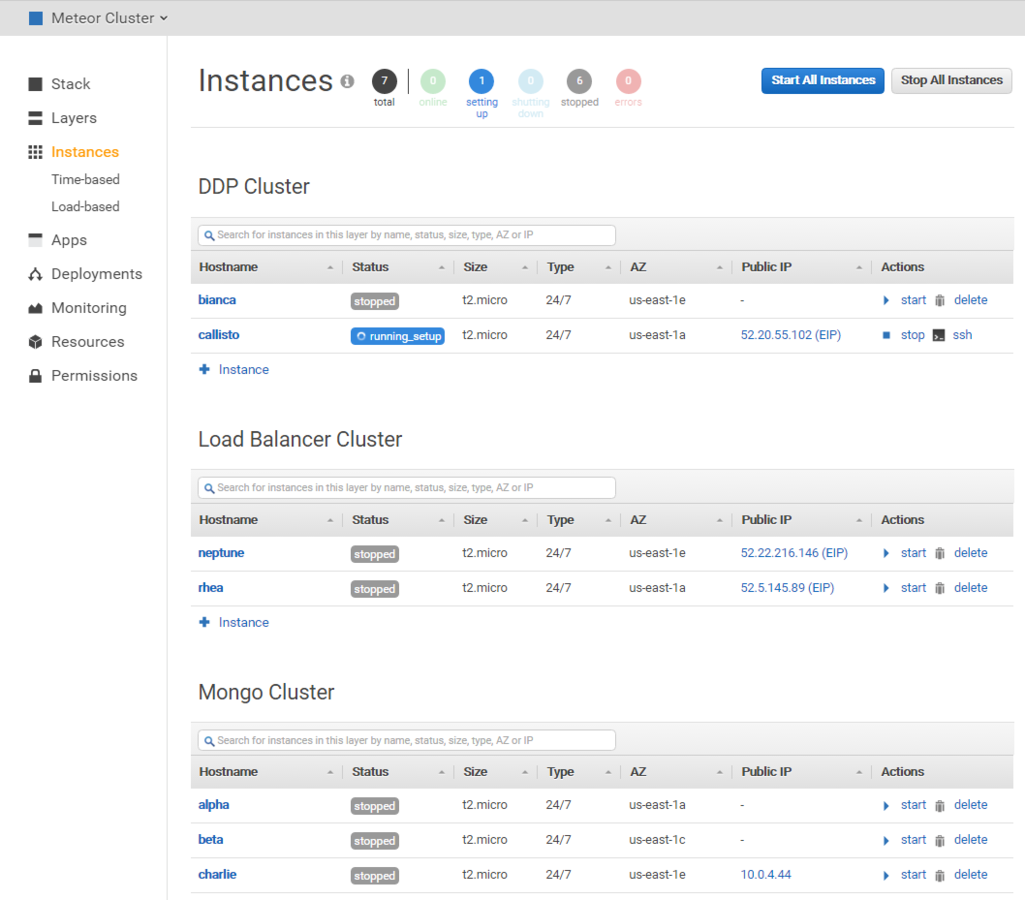

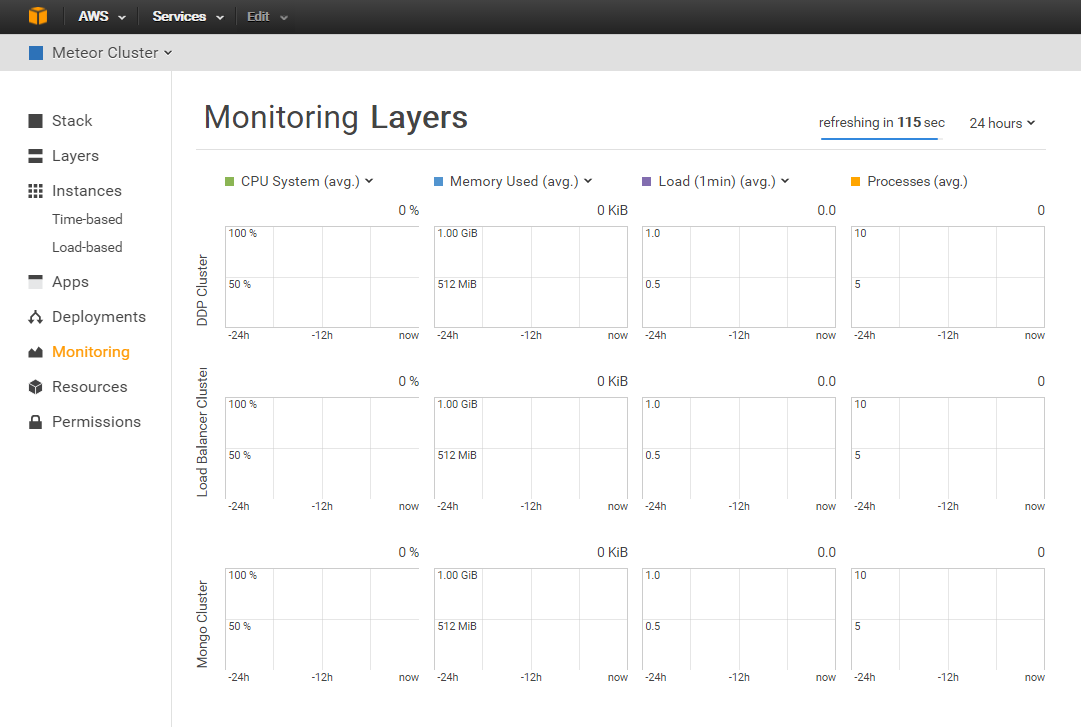

- Stood up a MongoDB cluster and two separate Meteor clusters.

- Autoscaling and application stack health-checks are nice!

- Didn't bother using Chef as I have my own deployment tools.

- Ran into problems very quickly...

- "running_setup" never ended. Servers would hang. No support from Amazon!

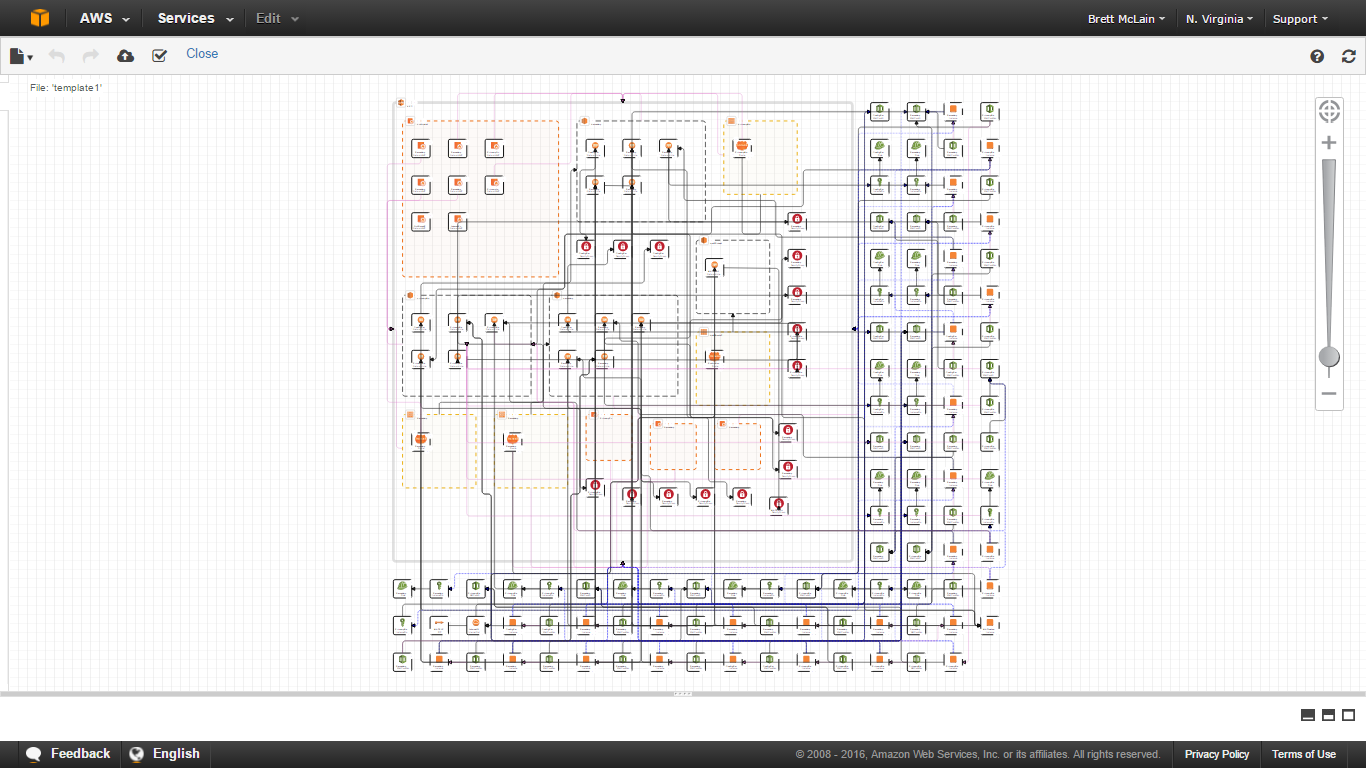

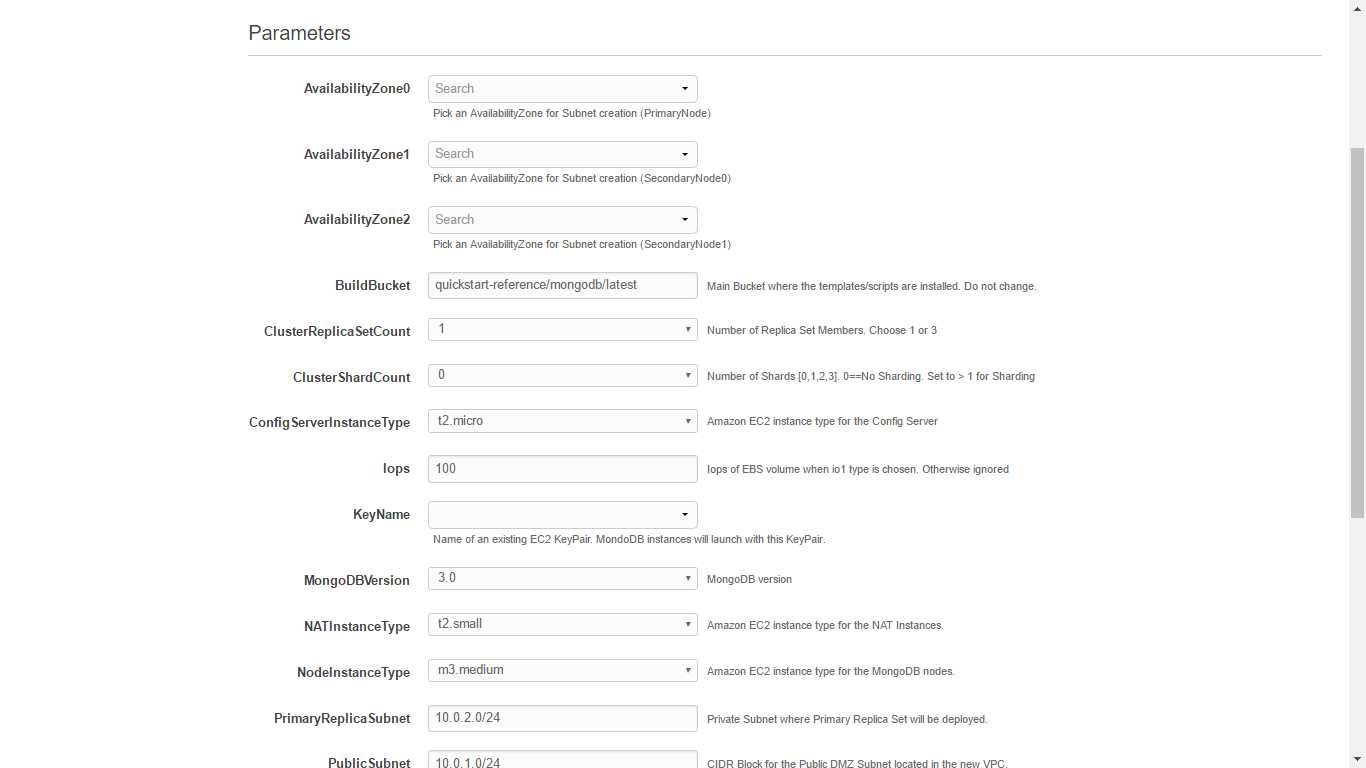

Ok, uh, what else is left? CloudFormation?

- CloudFormation allows you to define infrastructure and services as a configuration template.

- Provision servers, storage, networks, NATs, relationships, run scripts, etc. all from a JSON config.

- Rapid deployment of an entire stack, extremely powerful and _extremely_ complicated.

CloudFormation

- I used the MongoDB CloudFormation template provided by Amazon (only on github, not via the CloudFormation interface).

- Over time I abandoned it as the scripts they used for deployment contained a race condition that would cause deployment to fail about 50% of the time.

- For my purposes, this was overkill.

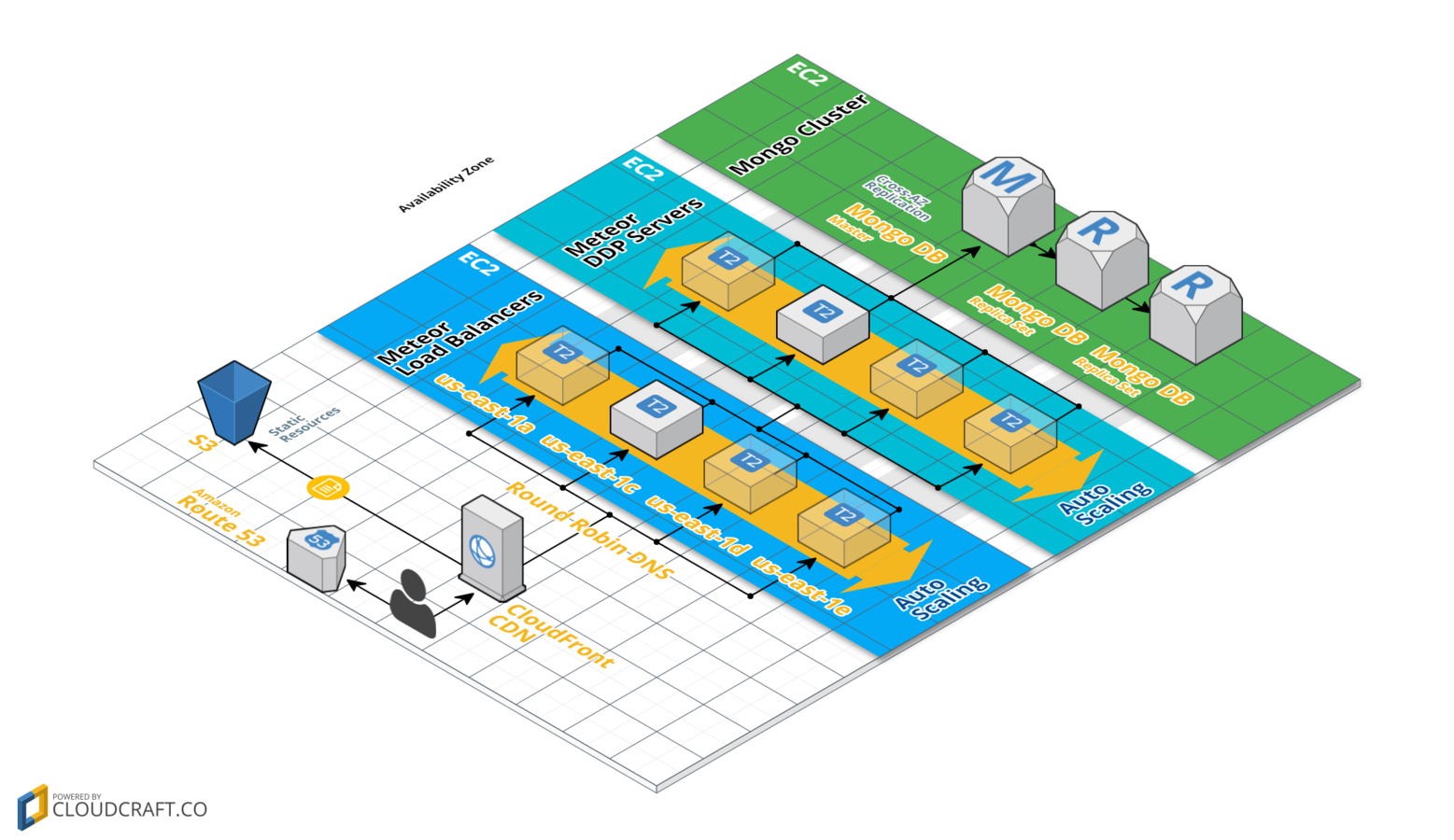

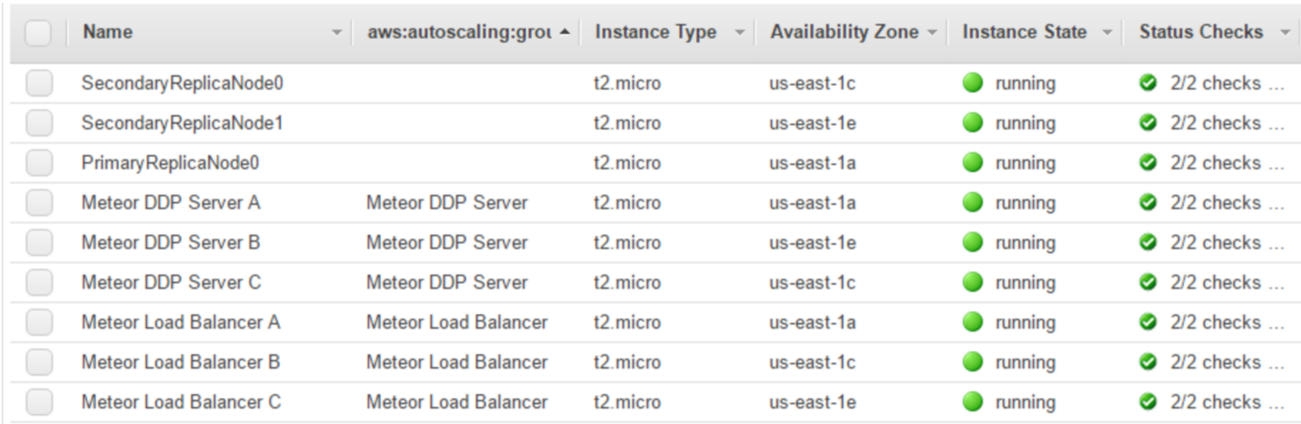

So, EC2 then?

- Set up 3 layers: (1) Load Balancers, (1) DDP Servers, (3) Mongo Replica Sets (no shards).

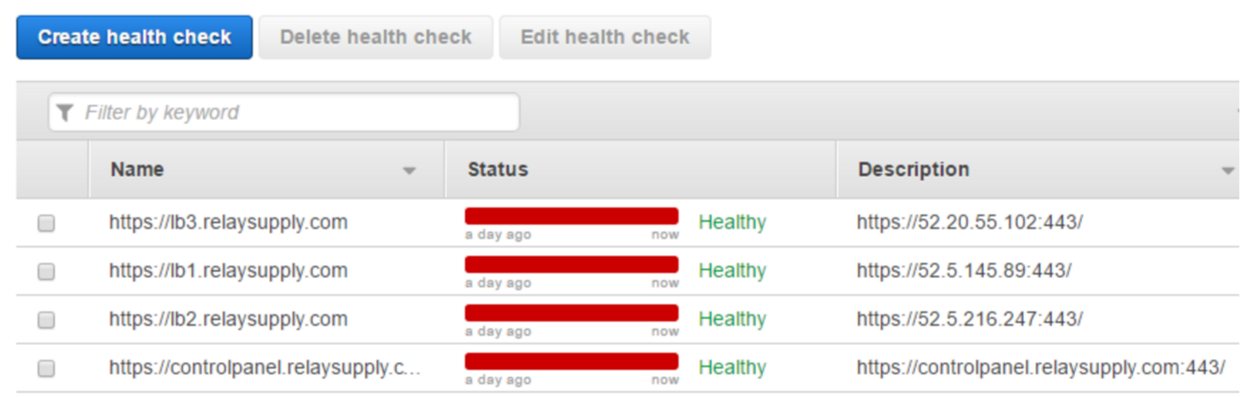

- Created 5 DNS records for the load balancers to do round robin DNS.

- Used Route 53 latency weighted routing to fail over from load balancers that are offline to load balancers that are online.

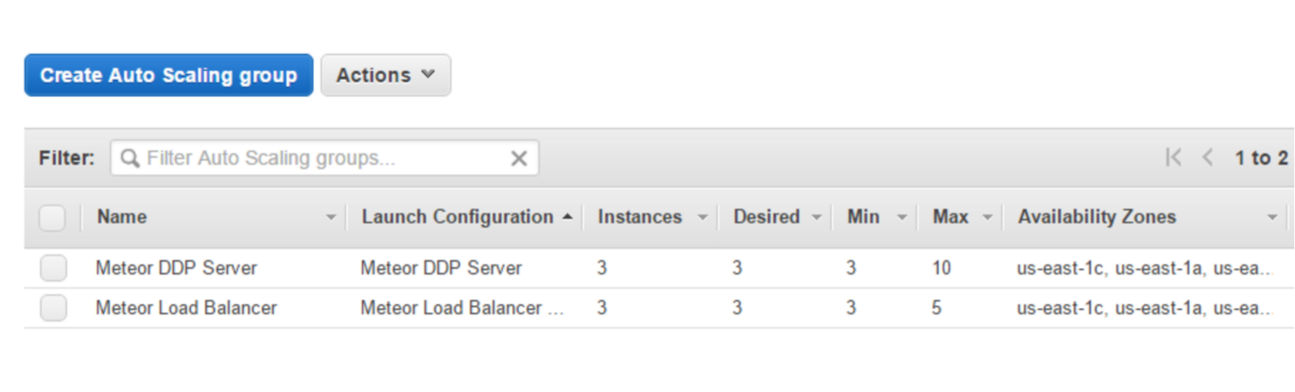

- 2 auto scaling groups, one for load balancers, one for DDP servers.

- Let's Encrypt for SSL/TLS.

Route 53

- Route 53 is a highly available and scalable DNS.

- Routing policies are:

- Simple Routing

- Weighted Routing

- Latency Routing

- Failover Routing
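As a rough illustration of how weighted routing combined with health checks behaves, here is a toy model in plain JavaScript. It is not the Route 53 API: the record shape, the `pickWeighted` name, and the IP addresses (from a documentation range) are hypothetical. The idea it shows is that healthy records are chosen in proportion to their weight and unhealthy ones are skipped.

```javascript
// Toy model of weighted DNS answers with health checks (not the Route 53 API).
// The record fields and the function name are hypothetical.
// `random` is injected so the choice can be made deterministic in tests.
function pickWeighted(records, random) {
  const healthy = records.filter((r) => r.healthy && r.weight > 0);
  if (healthy.length === 0) return null; // nothing healthy to answer with

  const total = healthy.reduce((sum, r) => sum + r.weight, 0);
  let threshold = random() * total;
  for (const record of healthy) {
    threshold -= record.weight;
    if (threshold < 0) return record.ip;
  }
  return healthy[healthy.length - 1].ip; // guard against rounding at the top edge
}

const records = [
  { ip: "203.0.113.10", weight: 1, healthy: true },
  { ip: "203.0.113.11", weight: 1, healthy: false }, // offline balancer, never returned
  { ip: "203.0.113.12", weight: 2, healthy: true },
];

console.log(pickWeighted(records, Math.random));
```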

Down the rabbit hole...

- Auto scaling occurs when average CPU > 70% for 2 minutes.

- When auto scaling occurs in either auto scaling group, it creates a new instance using the same image.

- That image runs a script on boot...
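The scaling trigger above can be read as a simple check over recent CPU samples. The sketch below is a minimal model in plain JavaScript, assuming one sample per instance per minute and that "for 2 minutes" means two consecutive one-minute periods. The function names and the sample data are hypothetical, and the real evaluation is done by AWS rather than by application code.

```javascript
// Minimal model of "average CPU > 70% for 2 minutes" (evaluation assumed, not AWS code).
// cpuByMinute: array of minutes, each an array of per-instance CPU percentages.
function average(values) {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

function shouldScaleOut(cpuByMinute, thresholdPercent, minutes) {
  if (cpuByMinute.length < minutes) return false; // not enough history yet
  // Every one of the most recent `minutes` periods must exceed the threshold.
  return cpuByMinute
    .slice(-minutes)
    .every((instances) => average(instances) > thresholdPercent);
}

// Hypothetical samples for a two-instance group over three minutes.
const history = [
  [50, 60],
  [80, 75],
  [90, 65],
];
console.log(shouldScaleOut(history, 70, 2));
```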

do_all_the_things.sh

- Step 1: git pull to get latest code.

- Step 2: Determine if an elastic IP address is already assigned (if not, then this is a fresh instance).

- Step 3a: If fresh instance, and DDP server, use auto-assigned public IP.

- Step 3b: If fresh instance, and load balancer, check to see which DNS elastic IPs are unused and assign one.

- Step 4: Deploy to self (build code and deploy to localhost).

- Step 5: Done!
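The address decision in steps 2 through 3b can be sketched as a pure function. The actual script is a shell script that is not shown in the talk, so everything here (the `chooseAddress` name, the field names, the example addresses) is a hypothetical illustration of the branching, written in JavaScript for consistency with the rest of the stack.

```javascript
// Sketch of the boot-time address decision (steps 2 to 3b); not the real script.
// instance: { role: "ddp" | "balancer", elasticIp: string|null, autoPublicIp: string }
// dnsElasticIps: elastic IPs that the DNS records point at
// assignedIps: elastic IPs already attached to running balancers
function chooseAddress(instance, dnsElasticIps, assignedIps) {
  // Step 2: an elastic IP is already attached, so this is not a fresh instance.
  if (instance.elasticIp) {
    return { fresh: false, address: instance.elasticIp };
  }
  // Step 3a: fresh DDP server keeps its auto-assigned public IP.
  if (instance.role === "ddp") {
    return { fresh: true, address: instance.autoPublicIp };
  }
  // Step 3b: fresh load balancer claims the first unused DNS elastic IP, if any.
  const unused = dnsElasticIps.find((ip) => !assignedIps.includes(ip));
  return { fresh: true, address: unused || null };
}

const dnsIps = ["198.51.100.1", "198.51.100.2", "198.51.100.3"];
const inUse = ["198.51.100.1", "198.51.100.3"];
console.log(
  chooseAddress({ role: "balancer", elasticIp: null, autoPublicIp: "192.0.2.7" }, dnsIps, inUse)
);
```

A `null` address for a fresh load balancer means every DNS elastic IP is already taken; how the real script handles that case is not stated in the slides.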

Testing Methodology

- Used MeteorDown for load testing. It connects to an endpoint, opens up a DDP connection, and completes some action (in this case a 200 KB document transfer) before closing the connection.

- Scale up connections incrementally and monitor when and how fast instances are created.

- Determine total capability of 5 load balancers and 10 DDP servers (each instance is t2.micro, 1 vCPU, 512 MB RAM).

Testing Results

- First auto scaling event: provisioning of instance, boot, git pull, build, and deploy is 2 minutes 30 seconds.

- Second+ auto scaling event: 1 minute 10 seconds.

- Concurrent subscriptions achieved: 38,000

- Maximum subscriptions per instance is reduced by approximately 1% per added instance.
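To get a feel for what a 1% per-instance reduction means for total capacity, here is a small worked calculation in JavaScript. It assumes the reduction is linear (each added instance lowers every instance's maximum by 1% of the single-instance figure); the slides do not say whether it is linear or compounding. The base figure of 1,000 subscriptions per instance is a made-up round number, not a measurement from the talk.

```javascript
// Worked arithmetic for "per-instance maximum drops ~1% per added instance".
// Linear model assumed: perInstance(n) = base * (1 - 0.01 * (n - 1)).
function perInstanceCapacity(base, instances, lossPerAdded = 0.01) {
  const factor = Math.max(0, 1 - lossPerAdded * (instances - 1));
  return base * factor;
}

function totalCapacity(base, instances, lossPerAdded = 0.01) {
  return instances * perInstanceCapacity(base, instances, lossPerAdded);
}

// Hypothetical base of 1,000 subscriptions on a single instance.
for (const n of [1, 5, 10, 15]) {
  console.log(n, Math.round(totalCapacity(1000, n)));
}
```

Under this model the total still grows with each added instance at these sizes, just by a little less each time.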

What I learned...

- Meteor has very powerful tools out of the box, however these tools do not lend themselves well to solutions such as Elastic Beanstalk or Google App Engine.

- DNS is a finicky mistress.

- Scaling load balancers is hard; scaling a web app using sticky websockets is even harder!

- Diving in head first has some serious drawbacks - it's difficult to navigate this space without someone to guide you.

What did I end up with?

- One line command to do rolling deployments to all my servers.

- 10 second downtime when deploying.

- Highly available (across AZs), scalable architecture (auto-scaling + MongoDB sharding/replicas).

- 38,000 concurrent connections constantly re-establishing websockets and sending data.

- Great support for microservices.

- Reactive data and UI, hot code push, mobile deployment.

What was the point of this?

- Hopefully you understand that it IS possible to scale MeteorJS applications, and that it isn't that hard (I just made it hard for myself).

- High level understanding of a few of Amazon's infrastructure and deployment services.
