SPHN Workflow Interoperability Workshop

Johannes Köster

2018

https://koesterlab.github.io

Data analysis

"Let me do that by hand..."

Reproducible data analysis

automation

From raw data to final figures:

- document parameters, tools, versions

- execute without manual intervention

scalability

Handle parallelization:

- execute for tens to thousands of datasets

- efficiently use any computing platform

portability

Handle deployment:

- be able to easily execute analyses on a different system/platform/infrastructure

Define workflows in terms of rules

rule mytask:
    input:
        "path/to/{dataset}.txt"
    output:
        "result/{dataset}.txt"
    script:
        "scripts/myscript.R"

rule myfiltration:
    input:
        "result/{dataset}.txt"
    output:
        "result/{dataset}.filtered.txt"
    shell:
        "mycommand {input} > {output}"

rule aggregate:
    input:
        "result/dataset1.filtered.txt",
        "result/dataset2.filtered.txt"
    output:
        "plots/myplot.pdf"
    script:
        "scripts/myplot.R"
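As a rough illustration of how rules like the ones above can determine what needs to run, the sketch below works backwards from a requested target file through the output patterns. It is not Snakemake's implementation: `RULES`, `match`, and `plan` are hypothetical names, wildcards are restricted to characters other than `/` and `.` to keep matching unambiguous, and all paths use a single `result/` prefix so that the outputs of one rule line up with the inputs of the next.

```python
import re

# Hypothetical, simplified rule table: name -> (input patterns, output pattern).
RULES = {
    "mytask": (["path/to/{dataset}.txt"], "result/{dataset}.txt"),
    "myfiltration": (["result/{dataset}.txt"], "result/{dataset}.filtered.txt"),
    "aggregate": (
        ["result/dataset1.filtered.txt", "result/dataset2.filtered.txt"],
        "plots/myplot.pdf",
    ),
}


def match(pattern, path):
    """Return the wildcard values if path fits pattern, else None."""
    regex = re.escape(pattern)
    # re.escape turns "{dataset}" into "\{dataset\}"; swap it for a named group.
    regex = re.sub(r"\\\{(\w+)\\\}", r"(?P<\1>[^/.]+)", regex)
    m = re.fullmatch(regex, path)
    return m.groupdict() if m else None


def plan(target, existing, jobs=None):
    """Collect the (rule, wildcards) jobs needed for target, in execution order."""
    jobs = [] if jobs is None else jobs
    if target in existing:
        return jobs  # file is already present, nothing to do
    for name, (inputs, output) in RULES.items():
        wildcards = match(output, target)
        if wildcards is None:
            continue
        # First plan everything this job depends on.
        for pattern in inputs:
            plan(pattern.format(**wildcards), existing, jobs)
        job = (name, wildcards)
        if job not in jobs:
            jobs.append(job)
        return jobs
    raise ValueError(f"no rule produces {target}")


existing = {"path/to/dataset1.txt", "path/to/dataset2.txt"}
for rule, wildcards in plan("plots/myplot.pdf", existing):
    print(rule, wildcards)
```

Requesting only the final plot is enough: the per-dataset jobs follow from the file names, which is why the workflow definition never has to enumerate them by hand.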

rule mytask:
    input:
        "data/{sample}.txt"
    output:
        "result/{sample}.txt"
    shell:
        "some-tool {input} > {output}"

rule name

how to create output from input

define

- input

- output

- log files

- parameters

- resources
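To make the role of these fields more concrete, the following sketch fills a command template with the values of one job using plain Python string formatting. It only mimics the placeholder style of a shell directive; the `job` dictionary, the log path, the `min_length` parameter, and the `--threads` and `--min-length` flags of `some-tool` are all hypothetical.

```python
from types import SimpleNamespace

# Hypothetical values for a single job of a rule (sample "a").
job = {
    "input": "data/a.txt",
    "output": "result/a.txt",
    "log": "logs/mytask/a.log",
    "params": SimpleNamespace(min_length=20),
    "threads": 4,
}

# Command template with placeholders for the fields defined above.
template = (
    "some-tool --threads {threads} --min-length {params.min_length} "
    "{input} > {output} 2> {log}"
)

# str.format resolves both plain names and attribute access like params.min_length.
command = template.format(**job)
print(command)
```

Keeping inputs, outputs, logs, and parameters as declared fields rather than hard-coding them in the command means the same template can be reused for every sample.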

rule mytask:
    input:
        "data/{sample}.txt"
    output:
        "result/{sample}.txt"
    script:
        "scripts/myscript.py"

reusable Python/R scripts

rule map_reads:
    input:
        "{sample}.bam"
    output:
        "{sample}.sorted.bam"
    wrapper:
        "0.22.0/bio/samtools/sort"

reusable wrappers from central repository

rule map_reads:
    input:
        "{sample}.bam"
    output:
        "{sample}.sorted.bam"
    cwl:
        "https://github.com/common-workflow-language/"
        "workflows/blob/fb406c95/tools/samtools-sort.cwl"

use CWL tool definitions

Scheduling

Paradigm:

Workflow definition shall be independent of computing platform and available resources

Rules:

define resource usage (threads, memory, ...)

Scheduler:

- solves multidimensional knapsack problem

- schedules independent jobs in parallel

- passes resource requirements to any backend
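The scheduling idea above can be sketched as a small multidimensional knapsack problem: pick the set of ready jobs with the highest total priority whose summed resource demands fit the available capacity in every dimension. The example below solves a tiny instance by brute force over all subsets. This is an illustration only, not Snakemake's scheduler; the job names, priorities, and resource figures are hypothetical.

```python
from itertools import combinations

# Hypothetical ready jobs: name -> (priority, resource demands).
JOBS = {
    "map_a": (1, {"threads": 8, "mem_gb": 16}),
    "map_b": (1, {"threads": 8, "mem_gb": 16}),
    "sort_a": (1, {"threads": 4, "mem_gb": 8}),
    "plot": (1, {"threads": 1, "mem_gb": 2}),
}


def fits(names, capacity):
    """Check that the summed demand stays within capacity in every dimension."""
    return all(
        sum(JOBS[n][1].get(res, 0) for n in names) <= limit
        for res, limit in capacity.items()
    )


def select(capacity):
    """Return a feasible job subset with the highest total priority."""
    best, best_value = (), 0
    for size in range(1, len(JOBS) + 1):
        for names in combinations(JOBS, size):
            value = sum(JOBS[n][0] for n in names)
            if value > best_value and fits(names, capacity):
                best, best_value = names, value
    return set(best)


# The same job list is scheduled differently depending on the machine.
print(sorted(select({"threads": 16, "mem_gb": 24})))
print(sorted(select({"threads": 32, "mem_gb": 64})))
```

Because the jobs only declare what they need, the same workflow can be packed onto a workstation or a large server without changing its definition. Exhaustive search is exponential in the number of jobs, so a real scheduler would need a heuristic or a dedicated solver.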

Scalable to any platform

workstation

compute server

cluster

grid computing

cloud computing

Command-line interface

# execute workflow locally with 16 CPU cores
snakemake --cores 16

# execute on cluster
snakemake --cluster qsub --jobs 100

# execute in the cloud
snakemake --kubernetes --jobs 1000 --default-remote-provider GS --default-remote-prefix mybucket

Configuration profiles

snakemake --profile slurm --jobs 1000

$HOME/.config/snakemake/slurm
├── config.yaml
├── slurm-jobscript.sh
├── slurm-status.py
└── slurm-submit.py

Full reproducibility:

install required software and all dependencies in exact versions

Software installation is a pain

source("https://bioconductor.org/biocLite.R")
biocLite("DESeq2")

easy_install snakemake

./configure --prefix=/usr/local
make
make install

cp lib/amd64/jli/*.so lib
cp lib/amd64/*.so lib
cp * $PREFIX

cpan -i bioperl

cmake ../../my_project \
    -DCMAKE_MODULE_PATH=~/devel/seqan/util/cmake \
    -DSEQAN_INCLUDE_PATH=~/devel/seqan/include
make
make install

apt-get install bwa

yum install python-h5py

install.packages("matrixpls")

Package management with Conda

Idea: normalize installation via recipes

package:
  name: seqtk
  version: 1.2

source:
  fn: v1.2.tar.gz
  url: https://github.com/lh3/seqtk/archive/v1.2.tar.gz

requirements:
  build:
    - gcc
    - zlib
  run:
    - zlib

about:
  home: https://github.com/lh3/seqtk
  license: MIT License
  summary: Seqtk is a fast and lightweight tool for processing sequences

test:
  commands:
    - seqtk seq

#!/bin/bash
export C_INCLUDE_PATH=${PREFIX}/include
export LIBRARY_PATH=${PREFIX}/lib
make all
mkdir -p $PREFIX/bin
cp seqtk $PREFIX/bin

- source or binary

- recipe and build script

- package

rule mytask:

input:

"path/to/{dataset}.txt"

output:

"result/{dataset}.txt"

conda:

"envs/mycommand.yaml"

shell:

"mycommand {input} > {output}"Integration with Snakemake

channels:

- conda-forge

- defaults

dependencies:

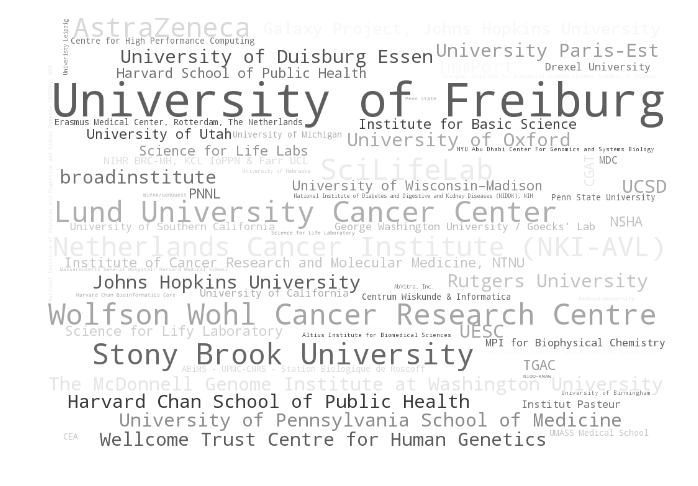

- mycommand ==2.3.1Over 3000 bioinformatics related packages

Over 200 contributors

Singularity

rule mytask:
    input:
        "path/to/{dataset}.txt"
    output:
        "result/{dataset}.txt"
    singularity:
        "docker://biocontainers/mycommand:2.3.1"
    shell:
        "mycommand {input} > {output}"

Singularity + Conda

singularity:
    "docker://continuumio/miniconda3:4.4.1"

rule mytask:
    input:
        "path/to/{dataset}.txt"
    output:
        "result/{dataset}.txt"
    conda:
        "envs/mycommand.yaml"
    shell:
        "mycommand {input} > {output}"

- singularity: define OS

- conda: define tools/libs

Sustainable publishing

Author:

# archive workflow (including Conda packages)
snakemake --archive myworkflow.tar.gz

- Upload to Zenodo and acquire DOI.

- Cite DOI in paper.

Reader:

- Download and unpack workflow archive from DOI.

# execute workflow (Conda packages are deployed automatically)
snakemake --use-conda --cores 16

Conclusion

With

- the rule-based DSL

- modularization via wrappers and CWL integration

- seamless execution on all platforms without adaptation of the workflow definition

- integrated package management and containerization

Snakemake covers all three dimensions of fully reproducible data analysis.

portability

scalability

automation
