Some high-level take-aways from the MLSS Africa 2019

Topics & "editor's choice"

- Kernel Methods (Arthur Gretton)

- Reinforcement Learning (Benjamin Rosman)

- Ethical AI (Bettina Berendt)

- Variational Inference (David Blei)

- Causal Inference (Bernhard Schölkopf & Ferenc Huszar)

- How to write a great research paper (Panel)

- Monte Carlo Inference (Iain Murray)

- Big Spaces (John Skilling)

- Optimal Transport (Marco Cuturi)

- Big Data & Astronomy (Michelle Lochner)

- Data Science in Practice (McElory Hoffmann)

- Automatic Differentiation for ML (Niko Brümmer)

- Probabilistic Thinking (Shakir Mohamed)

- NLP: necessary for ML? (Sharon Goldwater)

- Data Compression (Christian Steinruecken)

Causal Inference

Schölkopf & Huszar

Schölkopf

- Theoretical background

- ways of (wrongly) doing causal inference

- structural causal models

Huszar

- practical considerations

- using causal inference to solve ML problems

- estimating the relevant quantities from data

Causal Inference

- causality complements probability theory (cf. Simpson's paradox, kidney-stone example):

treatment A better for small kidney stones

treatment A better for large kidney stones

treatment B better overall (!!!)

- Reichenbach's Principle:

"If X and Y are statistically dependent, then there exists Z causally influencing both (Z may coincide with X or Y). X and Y are conditionally independent given Z."

- Structural Causal Model:

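- DAG encoding causal influences (edges) between observables (vertices)

- structural assignments X_i := f_i(PA_i, U_i), where PA_i are the parents of X_i in the DAG and the noise terms U_i are jointly independent

- local causal Markov condition:

each X_i is independent of its non-descendants given its parents; any structural causal model with jointly independent noise terms satisfies this condition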
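The structural assignments, the local Markov condition and Reichenbach's common-cause picture can be illustrated together with a small simulation. The sketch below assumes a hypothetical linear-Gaussian SCM with graph X <- Z -> Y and made-up coefficients; none of it comes from the slides. X and Y come out clearly correlated, yet once the common cause Z is regressed out their correlation vanishes, as the Markov condition predicts (X is a non-descendant of Y, and Z is Y's only parent). Partial correlation is a valid conditional-independence test only in this linear-Gaussian setting.

```python
import numpy as np


def sample_fork(n: int, rng: np.random.Generator):
    """Sample from a hypothetical linear SCM with graph X <- Z -> Y.

    Structural assignments (coefficients chosen only for illustration):
        Z := U_Z,  X := 2 Z + U_X,  Y := -Z + U_Y,  with independent N(0, 1) noises.
    """
    u_z, u_x, u_y = rng.standard_normal((3, n))
    z = u_z
    x = 2.0 * z + u_x
    y = -z + u_y
    return x, y, z


def residual(target: np.ndarray, given: np.ndarray) -> np.ndarray:
    """Residual of an ordinary least-squares fit of target on given (with intercept)."""
    design = np.column_stack([np.ones_like(given), given])
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    return target - design @ coef


def partial_corr(x: np.ndarray, y: np.ndarray, z: np.ndarray) -> float:
    """Correlation of x and y after linearly regressing out z."""
    return float(np.corrcoef(residual(x, z), residual(y, z))[0, 1])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x, y, z = sample_fork(100_000, rng)
    print(np.corrcoef(x, y)[0, 1])  # about -2 / sqrt(10), i.e. -0.63: X and Y are dependent
    print(partial_corr(x, y, z))    # about 0: independent given the common cause Z
```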

Causal Inference Schölkopf
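The kidney-stone reversal from the Schölkopf slide can be checked with a few fractions. The sketch assumes the widely quoted figures from the kidney-stone study of Charig et al. (1986); the slides do not give the numbers. Treatment A wins within each stone-size stratum but loses after pooling, because A was mostly given to large stones (263 of its 350 patients), which have lower success rates under either treatment.

```python
from fractions import Fraction

# (successes, patients) per treatment and stone size, as widely quoted from
# Charig et al. (1986); these figures are not taken from the slides.
KIDNEY_STONES = {
    "A": {"small": (81, 87), "large": (192, 263)},
    "B": {"small": (234, 270), "large": (55, 80)},
}


def stratum_rates(data):
    """Success rate of each treatment within each stone-size stratum."""
    return {
        treatment: {size: Fraction(s, n) for size, (s, n) in strata.items()}
        for treatment, strata in data.items()
    }


def pooled_rates(data):
    """Success rate of each treatment after pooling small and large stones."""
    pooled = {}
    for treatment, strata in data.items():
        successes = sum(s for s, _ in strata.values())
        patients = sum(n for _, n in strata.values())
        pooled[treatment] = Fraction(successes, patients)
    return pooled


if __name__ == "__main__":
    for treatment, rates in stratum_rates(KIDNEY_STONES).items():
        print(treatment, {size: round(float(r), 3) for size, r in rates.items()})
    print({t: round(float(r), 3) for t, r in pooled_rates(KIDNEY_STONES).items()})
```

Using exact fractions keeps the comparisons free of rounding; floats are used only for printing.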

Taken from Ferenc Huszar's Causal Inference in Everyday Machine Learning Slides from MLSS Africa 2019

Causal Inference Ferenc Huszar

Kernel Methods

Arthur Gretton

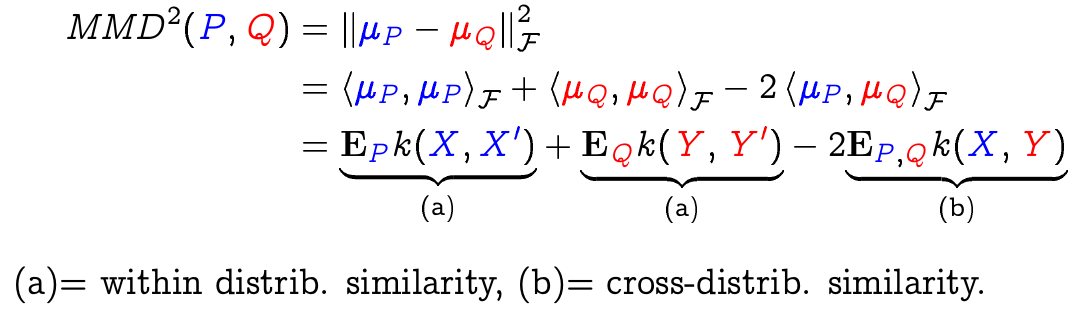

IPMs, MMD, Kernel Trick

- use features to distinguish distributions

- use MMD as a divergence measure, computed with the kernel trick

- IPM view:

- find a "witness" (discriminative) function whose expected values under the two distributions differ maximally

- witness functions from the unit ball of an RKHS -> MMD

- witness functions with Lipschitz constant at most 1 -> Wasserstein-1 (Kantorovich-Rubinstein duality)
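With the kernel trick, MMD^2 = E k(x, x') + E k(y, y') - 2 E k(x, y), so it can be estimated from Gram matrices alone. The sketch below uses the standard unbiased estimator with a Gaussian kernel; the kernel choice, the bandwidth of 1 and the toy samples are assumptions for illustration, not taken from the slides.

```python
import numpy as np


def gaussian_kernel(a: np.ndarray, b: np.ndarray, bandwidth: float) -> np.ndarray:
    """Gram matrix k(a_i, b_j) = exp(-||a_i - b_j||^2 / (2 * bandwidth^2))."""
    sq_dists = (
        np.sum(a**2, axis=1)[:, None]
        + np.sum(b**2, axis=1)[None, :]
        - 2.0 * a @ b.T
    )
    # Clip tiny negative values caused by floating-point cancellation.
    return np.exp(-np.maximum(sq_dists, 0.0) / (2.0 * bandwidth**2))


def mmd2_unbiased(x: np.ndarray, y: np.ndarray, bandwidth: float = 1.0) -> float:
    """Unbiased estimate of MMD^2 = E k(x,x') + E k(y,y') - 2 E k(x,y)."""
    m, n = len(x), len(y)
    k_xx = gaussian_kernel(x, x, bandwidth)
    k_yy = gaussian_kernel(y, y, bandwidth)
    k_xy = gaussian_kernel(x, y, bandwidth)
    # Drop the diagonal terms k(x_i, x_i) so the within-sample averages are unbiased.
    term_xx = (k_xx.sum() - np.trace(k_xx)) / (m * (m - 1))
    term_yy = (k_yy.sum() - np.trace(k_yy)) / (n * (n - 1))
    term_xy = k_xy.mean()
    return float(term_xx + term_yy - 2.0 * term_xy)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(0.0, 1.0, size=(500, 1))
    y_same = rng.normal(0.0, 1.0, size=(500, 1))
    y_shift = rng.normal(1.0, 1.0, size=(500, 1))
    print(mmd2_unbiased(x, y_same))   # near 0 (the unbiased estimate can be slightly negative)
    print(mmd2_unbiased(x, y_shift))  # clearly positive
```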

Kernel Methods Arthur Gretton

Taken from Arthur Gretton's Kernel Methods Part 1 Slides from MLSS Africa 2019

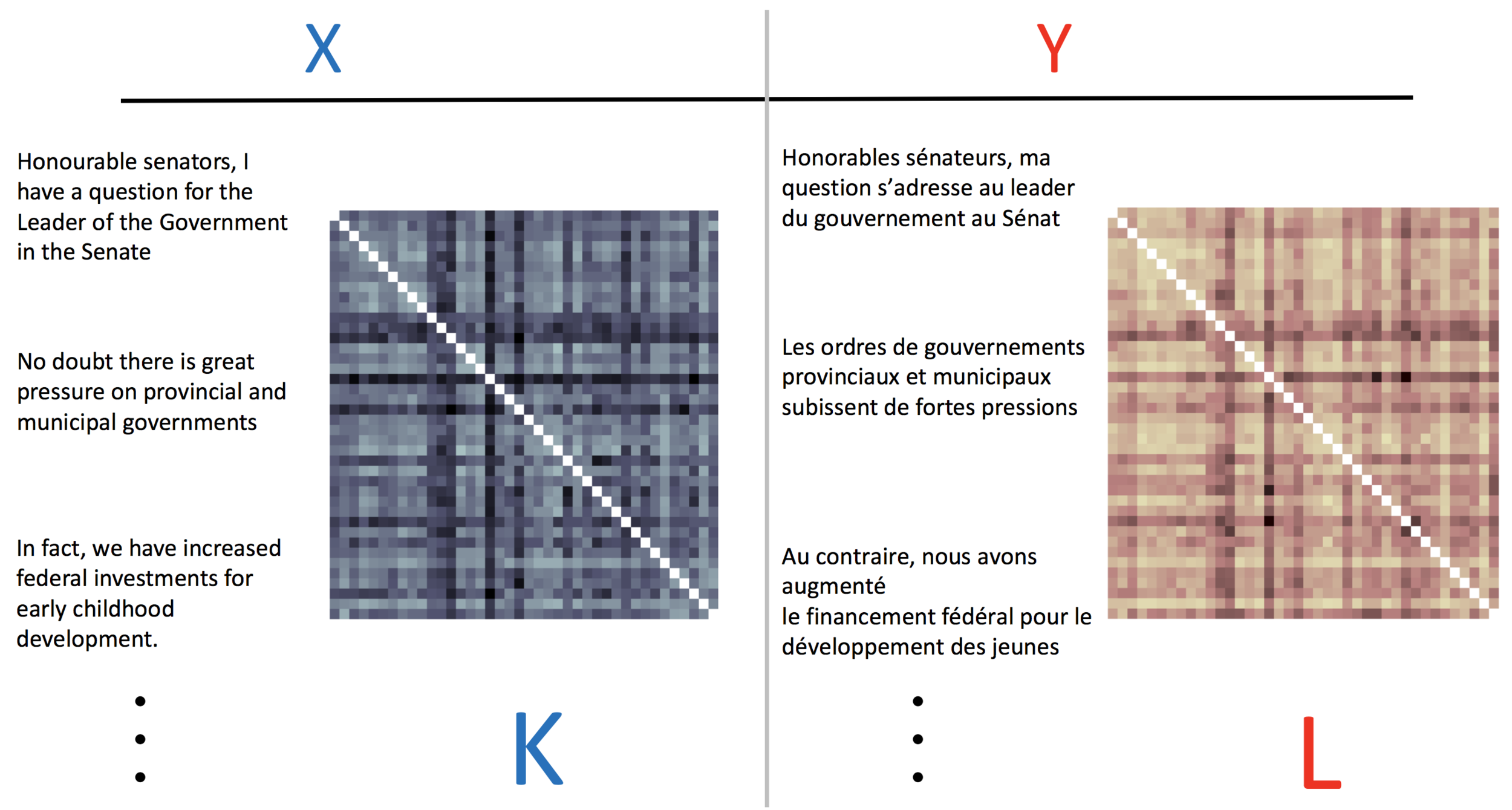

Taken from Arthur Gretton's Kernel Methods for Hypothesis Testing and Sample Generation Slides from MLSS Africa 2019

Dependence Detection

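COCO (constrained covariance): largest singular value of the feature-space cross-covariance operator

HSIC: sum of squared singular values (squared Hilbert-Schmidt norm of the cross-covariance operator) = MMD^2(P_XY, P_X P_Y) with a product kernel

biased estimate: HSIC = (1/n^2) trace(KHLH), with Gram matrices K (on X) and L (on Y) and centering matrix H = I - (1/n) 11^T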
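The biased HSIC estimate is a one-liner once the two Gram matrices are available. The sketch below assumes Gaussian kernels with bandwidth 1 on both variables (an illustrative choice). The toy example uses y = x^2 plus noise, which is almost uncorrelated with x but strongly dependent on it, so HSIC should be much larger than for an independent y.

```python
import numpy as np


def gaussian_gram(a: np.ndarray, bandwidth: float = 1.0) -> np.ndarray:
    """Gaussian-kernel Gram matrix of the samples in a (shape (n,) or (n, d))."""
    a = a.reshape(len(a), -1)
    sq_norms = np.sum(a**2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * a @ a.T
    return np.exp(-np.maximum(sq_dists, 0.0) / (2.0 * bandwidth**2))


def hsic_biased(x: np.ndarray, y: np.ndarray, bandwidth: float = 1.0) -> float:
    """Biased HSIC estimate (1/n^2) trace(K H L H)."""
    n = len(x)
    k_x = gaussian_gram(x, bandwidth)
    l_y = gaussian_gram(y, bandwidth)
    h = np.eye(n) - np.full((n, n), 1.0 / n)  # centering matrix H = I - (1/n) 11^T
    return float(np.trace(k_x @ h @ l_y @ h) / n**2)


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    x = rng.normal(size=300)
    y_dep = x**2 + 0.1 * rng.normal(size=300)  # nonlinear dependence, near-zero correlation
    y_ind = rng.normal(size=300)               # independent of x
    print(np.corrcoef(x, y_dep)[0, 1])
    print(hsic_biased(x, y_dep), hsic_biased(x, y_ind))
```

In practice the statistic is compared against a null distribution (e.g. obtained by permuting y) rather than read off directly; the sketch only computes the statistic.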

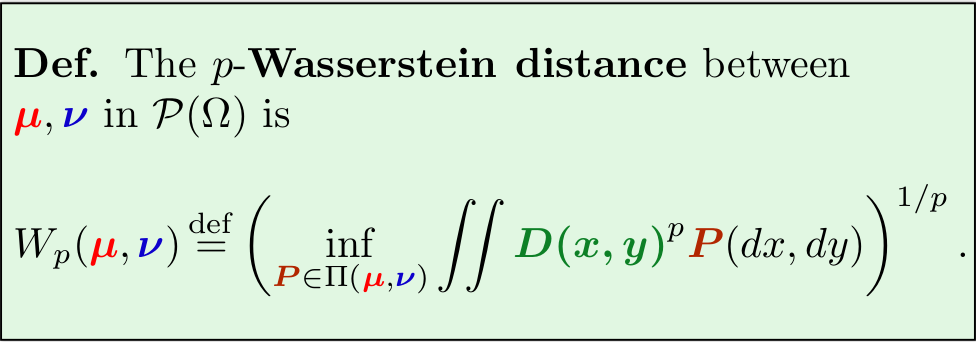

Optimal Transport

Cuturi

Monge

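- "transport" one (typically continuous) probability mass distribution onto another, minimizing a cost function defined on the space

- solution is a deterministic mapping in space: all mass at a point goes to a single destination, so a solution need not exist (e.g. a single point mass cannot be split between two targets)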
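A case where the Monge problem is easy to solve exactly is two equal-size, uniformly weighted point clouds on the real line: deterministic maps are then permutations, and for a convex cost |x - y|^p (p >= 1) the sorted (monotone) matching is optimal. The sketch below assumes that setting; the function names are hypothetical, and the brute-force search over all permutations is included only to make the "optimize over maps" view explicit for tiny inputs.

```python
from itertools import permutations


def monge_1d(xs: list[float], ys: list[float], p: float = 2.0) -> tuple[list[int], float]:
    """Optimal matching of two equal-size, uniformly weighted point clouds on R.

    For a convex cost |x - y|^p (p >= 1) the monotone (sorted) matching is optimal:
    the k-th smallest x is sent to the k-th smallest y.
    """
    if len(xs) != len(ys):
        raise ValueError("a Monge matching needs equal numbers of equally weighted points")
    n = len(xs)
    order_x = sorted(range(n), key=lambda i: xs[i])
    order_y = sorted(range(n), key=lambda j: ys[j])
    assignment = [0] * n  # assignment[i] = index of the y that x_i is sent to
    for i, j in zip(order_x, order_y):
        assignment[i] = j
    cost = sum(abs(xs[i] - ys[assignment[i]]) ** p for i in range(n)) / n
    return assignment, cost


def brute_force_cost(xs: list[float], ys: list[float], p: float = 2.0) -> float:
    """Minimum average cost over all n! deterministic matchings (small n only)."""
    n = len(xs)
    return min(
        sum(abs(xs[i] - ys[perm[i]]) ** p for i in range(n)) / n
        for perm in permutations(range(n))
    )


if __name__ == "__main__":
    xs, ys = [3.0, 0.0, 1.5], [2.0, -1.0, 4.0]
    print(monge_1d(xs, ys))          # ([2, 1, 0], 0.75)
    print(brute_force_cost(xs, ys))  # 0.75
```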

Kantorovich

- distribute one (e.g. discrete) mass distribution onto another, subject to a cost function (in space), allowing the mass at a point to be split

- solution is a probabilistic mapping, i.e. a joint distribution (coupling) whose marginals are the two distributions (Kantorovich relaxation)
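For discrete distributions the Kantorovich problem is a linear program over coupling matrices. The sketch below instead solves its entropy-regularized version with Sinkhorn iterations (the approach popularized for OT by Cuturi in 2013); this is a swapped-in approximation, and the regularization strength eps = 0.05, the iteration count and the toy example are assumptions. In the example, two sources of mass 1/2 at 0 and 1 are sent to three targets of mass 1/3 at 0, 0.5 and 1 with squared-distance cost; the middle target's mass has to be split between both sources, which no deterministic Monge map can do.

```python
import numpy as np


def sinkhorn(a, b, cost, eps=0.05, n_iter=1000):
    """Entropy-regularized Kantorovich problem solved with Sinkhorn iterations.

    Approximately minimizes <P, cost> - eps * H(P) over couplings P whose row sums
    are a and whose column sums are b.
    """
    kernel = np.exp(-cost / eps)  # Gibbs kernel K = exp(-C / eps)
    u = np.ones_like(a)
    v = np.ones_like(b)
    for _ in range(n_iter):
        u = a / (kernel @ v)      # rescale rows to match a
        v = b / (kernel.T @ u)    # rescale columns to match b
    plan = u[:, None] * kernel * v[None, :]  # P = diag(u) K diag(v)
    return plan, float(np.sum(plan * cost))


if __name__ == "__main__":
    x, a = np.array([0.0, 1.0]), np.array([0.5, 0.5])
    y, b = np.array([0.0, 0.5, 1.0]), np.full(3, 1.0 / 3.0)
    cost = (x[:, None] - y[None, :]) ** 2
    plan, total = sinkhorn(a, b, cost)
    print(np.round(plan, 3))  # the middle column's mass is shared by both sources
    print(total)              # close to the unregularized optimum 1/12
```

Smaller eps brings the plan closer to the unregularized optimum but makes exp(-C / eps) underflow sooner; log-domain implementations are the usual remedy.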

Taken from Marco Cuturi's A Primer on Optimal Transport Slides from MLSS Africa 2019

Optimal Transport Marco Cuturi

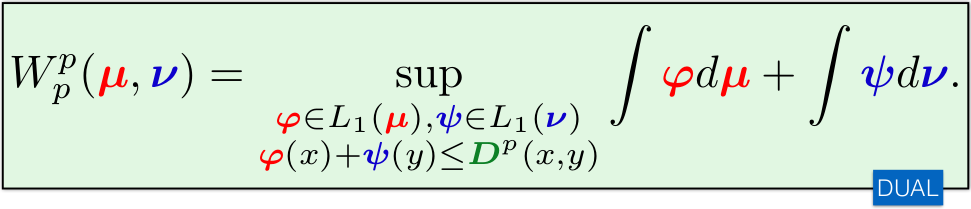

MLSS Africa

By Johannes Leugering