Levenshtein Transformer

Introduction

- Levenshtein distance: insertion, deletion, substitution

- Imitation learning

- Two policies executed alternately (insertion, deletion)

- Results comparable to or better than the standard Transformer on machine translation (MT) and summarization, while decoding remains parallelisable

- One model for text generation and text post-editing

- Prominent component: learning algorithm

- Dual policy learning -> When training one policy, we use the output from its adversary at the previous iteration as input

Contributions

- Up to 5x speed-up compared to the standard Transformer

- Handles insertion and deletion operations

- Learning algorithm with an imitation learning framework, tackling the complementary and adversarial nature of the dual policies

- Unifies sequence generation and refinement: a machine translation model can be applied directly (without fine-tuning) to translation post-editing

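Problem Formulation Sequence generation and refinement

Markov decision process defined by the tuple $(\mathcal{Y}, \mathcal{A}, \mathcal{E}, \mathcal{R}, y_0)$:

- Possible sequences $\mathcal{Y} = \mathcal{V}^{N_{\max}}$: vocabulary $\mathcal{V}$, max seq length $N_{\max}$

- Actions $a \in \mathcal{A}$: insert or delete

- Environment $\mathcal{E}$: receives the editing actions and returns the modified sequence

- Reward function $\mathcal{R}$: reward $r = \mathcal{R}(y) = -\mathcal{D}(y, y^*)$, with $\mathcal{D}$ a distance to the ground truth $y^*$ (e.g. Levenshtein distance)

- Input sequence $y_0 \in \mathcal{Y}$ (empty or incomplete)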
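To make the reward concrete, here is a minimal sketch that scores a generated sequence by the negative Levenshtein distance to the ground truth. The function names `levenshtein` and `reward` are illustrative, and the classic distance with substitution is only one possible choice of distance.

```python
def levenshtein(a, b):
    """Classic edit distance (insertion, deletion, substitution) between two sequences."""
    prev = list(range(len(b) + 1))  # distances from the empty prefix of a
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            cost = 0 if x == y else 1
            curr.append(min(prev[j] + 1,          # delete x
                            curr[j - 1] + 1,      # insert y
                            prev[j - 1] + cost))  # match or substitute
        prev = curr
    return prev[-1]


def reward(y, y_star):
    """Reward of a generation y: negative distance to the ground truth y_star."""
    return -levenshtein(y, y_star)


if __name__ == "__main__":
    y = ["<s>", "the", "cat", "</s>"]
    y_star = ["<s>", "the", "cat", "sat", "</s>"]
    print(reward(y, y_star))  # one missing token, so the reward is -1
```

A perfect generation gets reward 0; every missing, extra or wrong token lowers it.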

Problem Formulation Sequence generation and refinement

The agent is modelled by a policy $\pi$ that maps the current sequence to a probability distribution over actions: $\pi : \mathcal{Y} \to P(\mathcal{A})$

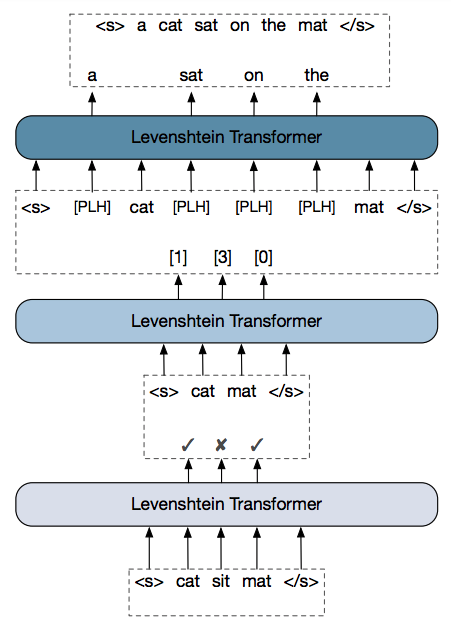

Problem Formulation Actions: Deletion & Insertion

Sequence $y = (y_1, \dots, y_n)$, where $y_1$ and $y_n$ are the boundary tokens `<s>` and `</s>`

Problem Formulation Actions: Deletion & Insertion

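Deletion policy: $\pi^{\mathrm{del}}(d \mid i, y)$

For each token $y_i$, $d$ is a binary decision: 1 (delete) or 0 (keep)

Can't delete start or end tokens: $\pi^{\mathrm{del}}(0 \mid 1, y) = \pi^{\mathrm{del}}(0 \mid n, y) = 1$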

Problem Formulation Actions: Deletion & Insertion

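Insertion is a two-phase process:

- Phase 1, placeholder policy $\pi^{\mathrm{plh}}(p \mid i, y)$: predict the number of placeholders to insert between each pair of consecutive tokens $(y_i, y_{i+1})$

- Phase 2, token policy $\pi^{\mathrm{tok}}(t \mid i, y)$: predict the token that fills each placeholder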

Problem Formulation Actions: Deletion & Insertion

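Alternating combination of the policies: each iteration deletes tokens, then inserts placeholders, then replaces the placeholders with tokens; within each phase, all positions are edited in parallel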

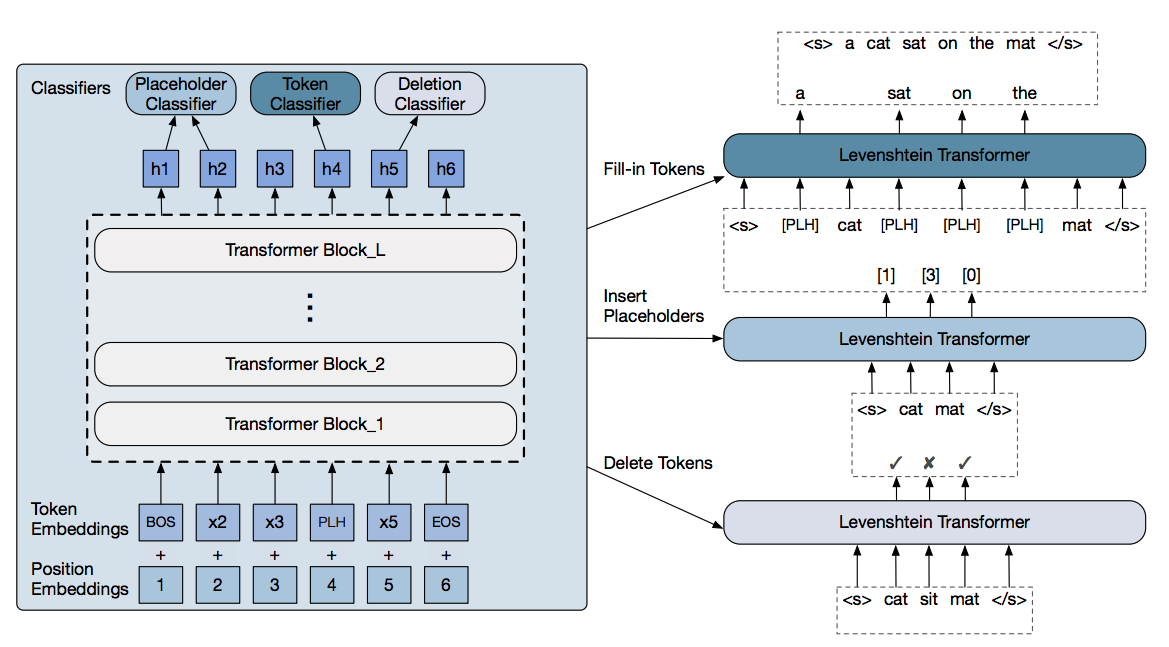
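The three phases of one iteration can be sketched on plain token lists. This is a toy illustration, not the model: the decisions `d`, `p` and `t` are passed in by hand, whereas in the Levenshtein Transformer each would be predicted from the sequence produced by the previous phase. The placeholder symbol `<plh>` and all function names are assumptions made for the example.

```python
PLH = "<plh>"  # hypothetical placeholder symbol


def apply_deletions(y, d):
    """Drop tokens flagged with 1; the two boundary tokens are always kept."""
    assert len(d) == len(y)
    last = len(y) - 1
    return [tok for i, (tok, flag) in enumerate(zip(y, d))
            if i in (0, last) or flag == 0]


def insert_placeholders(y, p):
    """p[i] is the number of placeholders to insert between y[i] and y[i + 1]."""
    assert len(p) == len(y) - 1
    out = []
    for tok, count in zip(y, p):  # zip stops before the final token
        out.append(tok)
        out.extend([PLH] * count)
    out.append(y[-1])
    return out


def fill_placeholders(y, t):
    """Replace the placeholders, left to right, with the tokens in t."""
    assert y.count(PLH) == len(t)
    tokens = iter(t)
    return [next(tokens) if tok == PLH else tok for tok in y]


def refine_once(y, d, p, t):
    """One iteration: delete, insert placeholders, fill placeholders."""
    y = apply_deletions(y, d)
    y = insert_placeholders(y, p)
    return fill_placeholders(y, t)


if __name__ == "__main__":
    y = ["<s>", "a", "x", "c", "</s>"]
    print(refine_once(y, d=[0, 0, 1, 0, 0], p=[0, 1, 0], t=["b"]))
```

In the example, `x` is deleted and `b` is inserted between `a` and `c`. Each phase edits every position in a single pass, which is what makes the procedure parallelisable.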

Levenshtein Transformer Model

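States from the l-th block:

$$
h_0^{(l+1)}, \dots, h_n^{(l+1)} =
\begin{cases}
E_{y_0} + P_0, \dots, E_{y_n} + P_n & l = 0 \\
\mathrm{TransformerBlock}_l\big(h_0^{(l)}, \dots, h_n^{(l)}\big) & l > 0
\end{cases}
$$

$E \in \mathbb{R}^{|\mathcal{V}| \times d_{\text{model}}}$: token embedding

$P \in \mathbb{R}^{N_{\max} \times d_{\text{model}}}$: position embedding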

Levenshtein Transformer Model

- Deletion classifier: for each token except the boundaries, outputs 0 (keep) or 1 (delete)

- Placeholder classifier: for each pair of consecutive tokens, outputs the number of tokens to insert

- Token classifier: for each placeholder, predicts the likelihood of vocabulary tokens

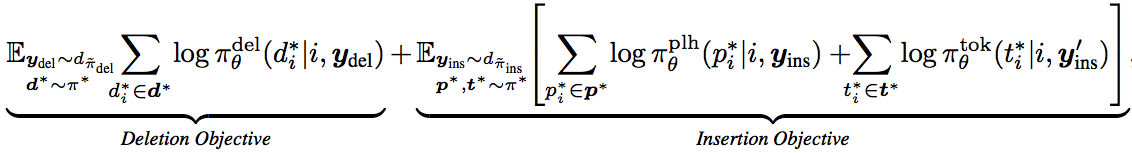

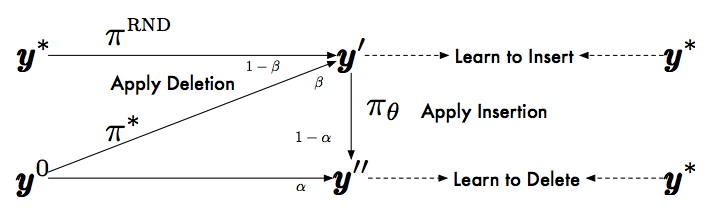

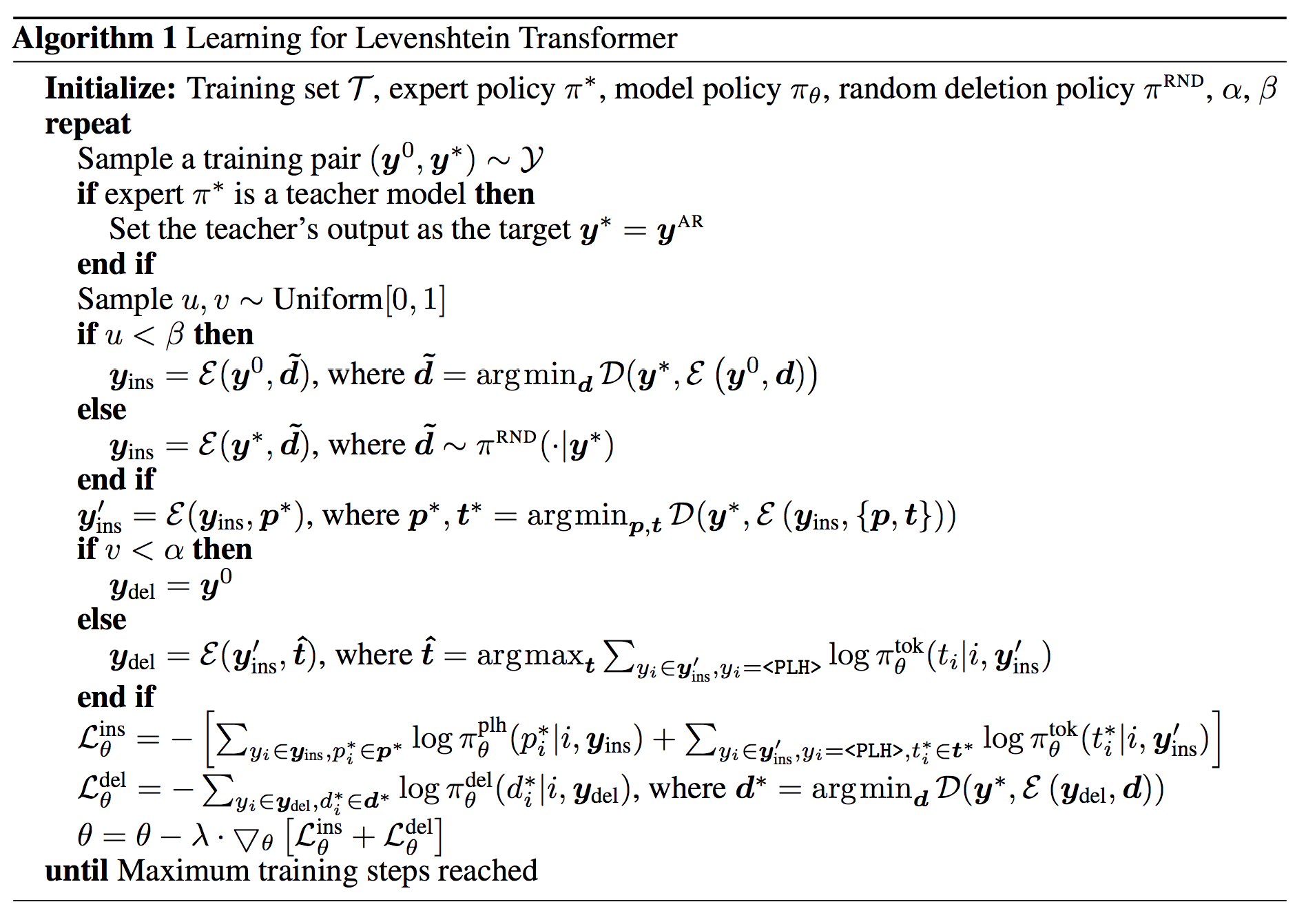
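The three classifiers can be sketched as small output heads on top of the decoder states. This is a minimal sketch in pure Python, assuming each head is a single linear layer followed by a softmax and that the placeholder head reads the concatenated states of two neighbouring positions. The weight names `A`, `B`, `C` and the helper functions are illustrative.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def linear(h, W):
    """Dot product of the vector h with each row of W."""
    return [sum(x * w for x, w in zip(h, row)) for row in W]


def deletion_head(h, A):
    """Keep/delete distribution for every non-boundary position. A has 2 rows."""
    return [softmax(linear(h[i], A)) for i in range(1, len(h) - 1)]


def placeholder_head(h, B):
    """Distribution over 0..K placeholders for each adjacent pair.
    B has K + 1 rows, each as wide as two concatenated states."""
    return [softmax(linear(h[i] + h[i + 1], B)) for i in range(len(h) - 1)]


def token_head(h, C, placeholder_positions):
    """Distribution over the vocabulary at each placeholder position. C has |V| rows."""
    return [softmax(linear(h[i], C)) for i in placeholder_positions]


if __name__ == "__main__":
    h = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy states for 3 positions
    A = [[1.0, 0.0], [0.0, 1.0]]
    print(deletion_head(h, A))  # one distribution, for the middle position
```

All three heads read the same states, so the deletion and insertion policies share one decoder and differ only in their final layer.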

Levenshtein Transformer Dual-policy Learning

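Learn to imitate an expert policy $\pi^*$

Objective: maximise

$$
\mathbb{E}_{y_{\mathrm{del}} \sim d_{\tilde\pi_{\mathrm{del}}},\; d^* \sim \pi^*} \sum_{d_i^* \in d^*} \log \pi_\theta^{\mathrm{del}}(d_i^* \mid i, y_{\mathrm{del}})
+ \mathbb{E}_{y_{\mathrm{ins}} \sim d_{\tilde\pi_{\mathrm{ins}}},\; p^*, t^* \sim \pi^*} \Big[ \sum_{p_i^* \in p^*} \log \pi_\theta^{\mathrm{plh}}(p_i^* \mid i, y_{\mathrm{ins}}) + \sum_{t_i^* \in t^*} \log \pi_\theta^{\mathrm{tok}}(t_i^* \mid i, y'_{\mathrm{ins}}) \Big]
$$

where $y'_{\mathrm{ins}}$ is $y_{\mathrm{ins}}$ after inserting the placeholders $p^*$

$d_{\tilde\pi_{\mathrm{del}}}$ and $d_{\tilde\pi_{\mathrm{ins}}}$ are state distributions

States are drawn from these distributions, the expert suggests actions for them, then we optimise the log-likelihood of these actions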

Levenshtein Transformer Dual-policy Learning

Learn to delete

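$$
d_{\tilde\pi_{\mathrm{del}}} =
\begin{cases}
y_0 & \text{if } u < \alpha \\
\mathcal{E}(\mathcal{E}(y', p^*), \tilde t), \quad p^* \sim \pi^*, \ \tilde t \sim \pi_\theta & \text{else}
\end{cases}
$$

with $u \sim \mathrm{Uniform}[0, 1]$, mixture factor $\alpha \in [0, 1]$, and $y'$ any sequence ready for token insertion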

Levenshtein Transformer Dual-policy Learning

Learn to insert

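$$
d_{\tilde\pi_{\mathrm{ins}}} =
\begin{cases}
\mathcal{E}(y_0, d^*), \quad d^* \sim \pi^* & \text{if } u < \beta \\
\mathcal{E}(y^*, \tilde d), \quad \tilde d \sim \pi^{\mathrm{RND}} & \text{else}
\end{cases}
$$

with $u \sim \mathrm{Uniform}[0, 1]$, mixture factor $\beta \in [0, 1]$, and $\pi^{\mathrm{RND}}$ randomly dropping tokens from the ground truth $y^*$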

Levenshtein Transformer Dual-policy Learning

Expert policy

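Oracle: $a^* = \arg\min_a \mathcal{D}(y^*, \mathcal{E}(y, a))$

$\mathcal{D}$: Levenshtein distance without substitution (insertions and deletions only)

Distillation: teacher model as expert policy, the ground truth $y^*$ is replaced by the teacher's beam-search results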
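With insertions and deletions only, optimal edits can be read off a longest-common-subsequence table: tokens outside the common subsequence are deleted from the current sequence, and the missing target tokens are inserted. The sketch below is one way to compute such oracle actions, not necessarily the implementation used by the authors. It assumes both sequences start with `<s>` and end with `</s>`, and that these symbols appear nowhere else. The name `oracle_edits` is hypothetical.

```python
def oracle_edits(y, y_star):
    """Return (d, p, t): deletion flags for y, then placeholder counts and
    tokens to insert into the sequence that remains after deletion."""
    assert y[0] == y_star[0] and y[-1] == y_star[-1], "boundary tokens must match"
    n, m = len(y), len(y_star)
    # lcs[i][j] = length of the longest common subsequence of y[i:] and y_star[j:]
    lcs = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n - 1, -1, -1):
        for j in range(m - 1, -1, -1):
            if y[i] == y_star[j]:
                lcs[i][j] = 1 + lcs[i + 1][j + 1]
            else:
                lcs[i][j] = max(lcs[i + 1][j], lcs[i][j + 1])

    d, p, t = [], [], []
    i = j = 0
    gap = 0          # tokens inserted since the last kept token
    kept_any = False
    while i < n and j < m:
        if y[i] == y_star[j]:                # keep the token
            d.append(0)
            if kept_any:
                p.append(gap)
            gap, kept_any = 0, True
            i, j = i + 1, j + 1
        elif lcs[i + 1][j] >= lcs[i][j + 1]:  # delete y[i]
            d.append(1)
            i += 1
        else:                                 # insert y_star[j]
            t.append(y_star[j])
            gap += 1
            j += 1
    return d, p, t


if __name__ == "__main__":
    y = ["<s>", "a", "x", "c", "</s>"]
    y_star = ["<s>", "a", "b", "c", "</s>"]
    print(oracle_edits(y, y_star))  # delete "x", insert "b" between "a" and "c"
```

The total number of edits, `sum(d) + len(t)`, equals the insertion/deletion-only distance between the two sequences.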

Levenshtein Transformer Dual-policy Learning

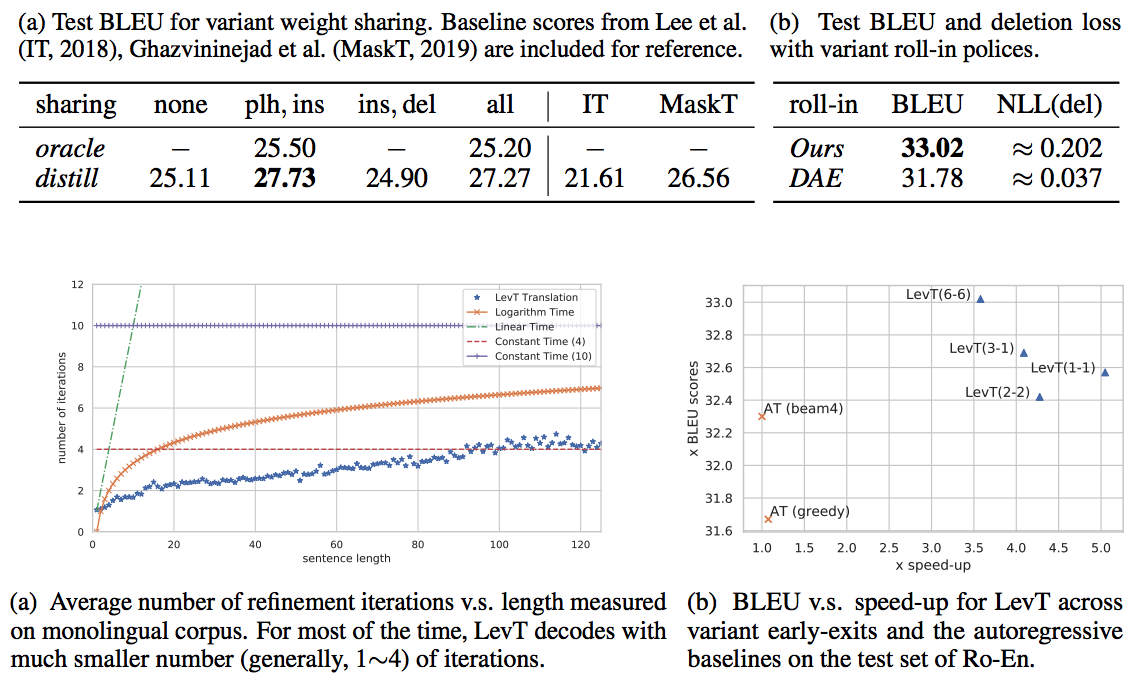

Levenshtein Transformer Inference

Greedy decoding

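Termination condition:

- Looping: two consecutive iterations return the same result

- Timeout: max number of iterations reached

Penalty for empty placeholders: a term $\gamma \in [0, 3]$ is subtracted from the logit of predicting 0 placeholders, to avoid overly short outputs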

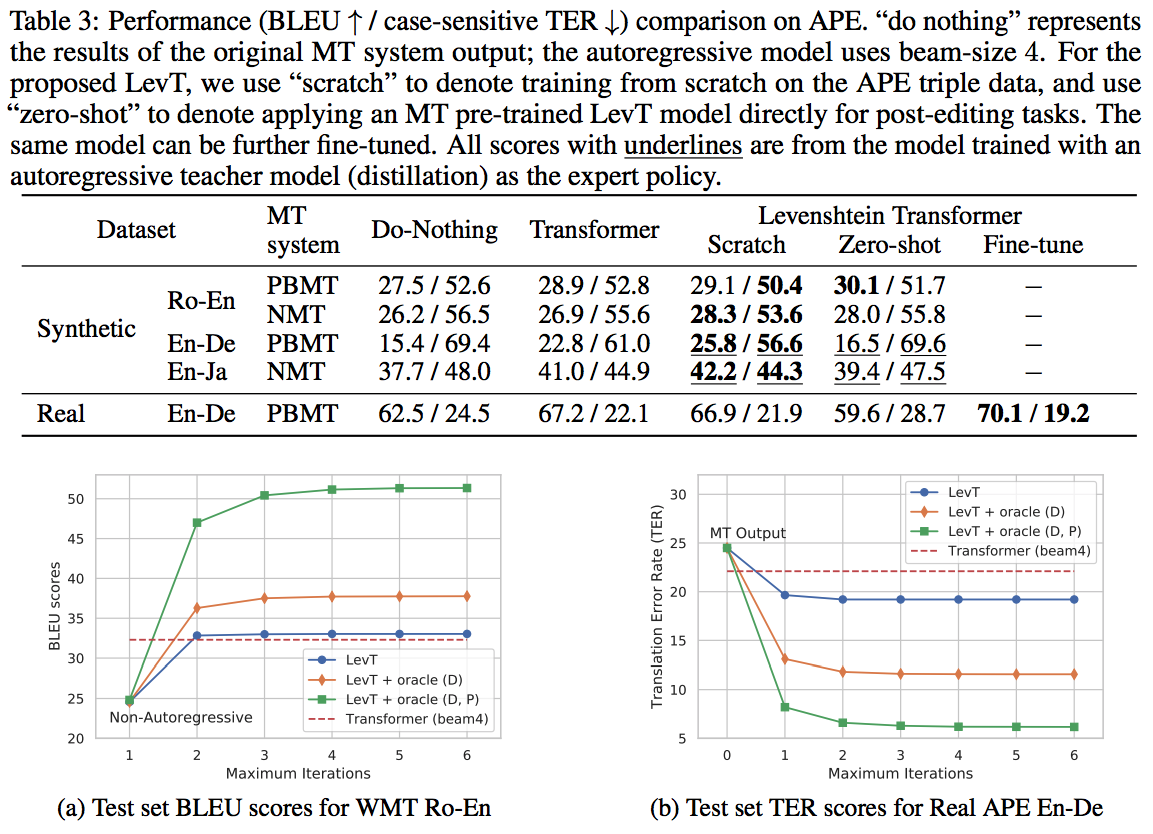
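The decoding loop and the placeholder penalty can be sketched as follows. This is a toy version under stated assumptions: `refine` stands for one full delete/insert iteration of a trained model and is supplied by the caller, and the names `decode`, `penalised_placeholder_count` and `max_iters` are illustrative.

```python
def decode(y0, refine, max_iters=10):
    """Apply `refine` until the output stops changing or the budget runs out.
    Returns (sequence, iterations used, reason for stopping)."""
    y = list(y0)
    for step in range(1, max_iters + 1):
        y_next = refine(y)
        if y_next == y:  # two consecutive iterations agree
            return y, step, "looping"
        y = y_next
    return y, max_iters, "timeout"


def penalised_placeholder_count(logits, gamma):
    """Greedy number of placeholders after subtracting gamma from the logit of 0."""
    adjusted = [logits[0] - gamma] + list(logits[1:])
    return max(range(len(adjusted)), key=adjusted.__getitem__)


if __name__ == "__main__":
    target = ["<s>", "a", "b", "</s>"]

    def toy_refine(y):
        # Stand-in for the model: reveals one more target token per iteration.
        if len(y) < len(target):
            return target[:len(y)] + [target[-1]]
        return y

    print(decode(["<s>", "</s>"], toy_refine))
    print(penalised_placeholder_count([1.0, 0.5, 0.2], gamma=1.0))
```

With `gamma = 0` the greedy choice in the example is 0 placeholders; with `gamma = 1` it becomes 1, which shows how the penalty pushes the model towards inserting more tokens.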

Experiments

Translation meeting, 15/11/2019

By mb1475963
