Generalization Dynamics of Linear Diffusion Models

NeurIPS Blitz talks, Claudia Merger, Sebastian Goldt

06.05.2025

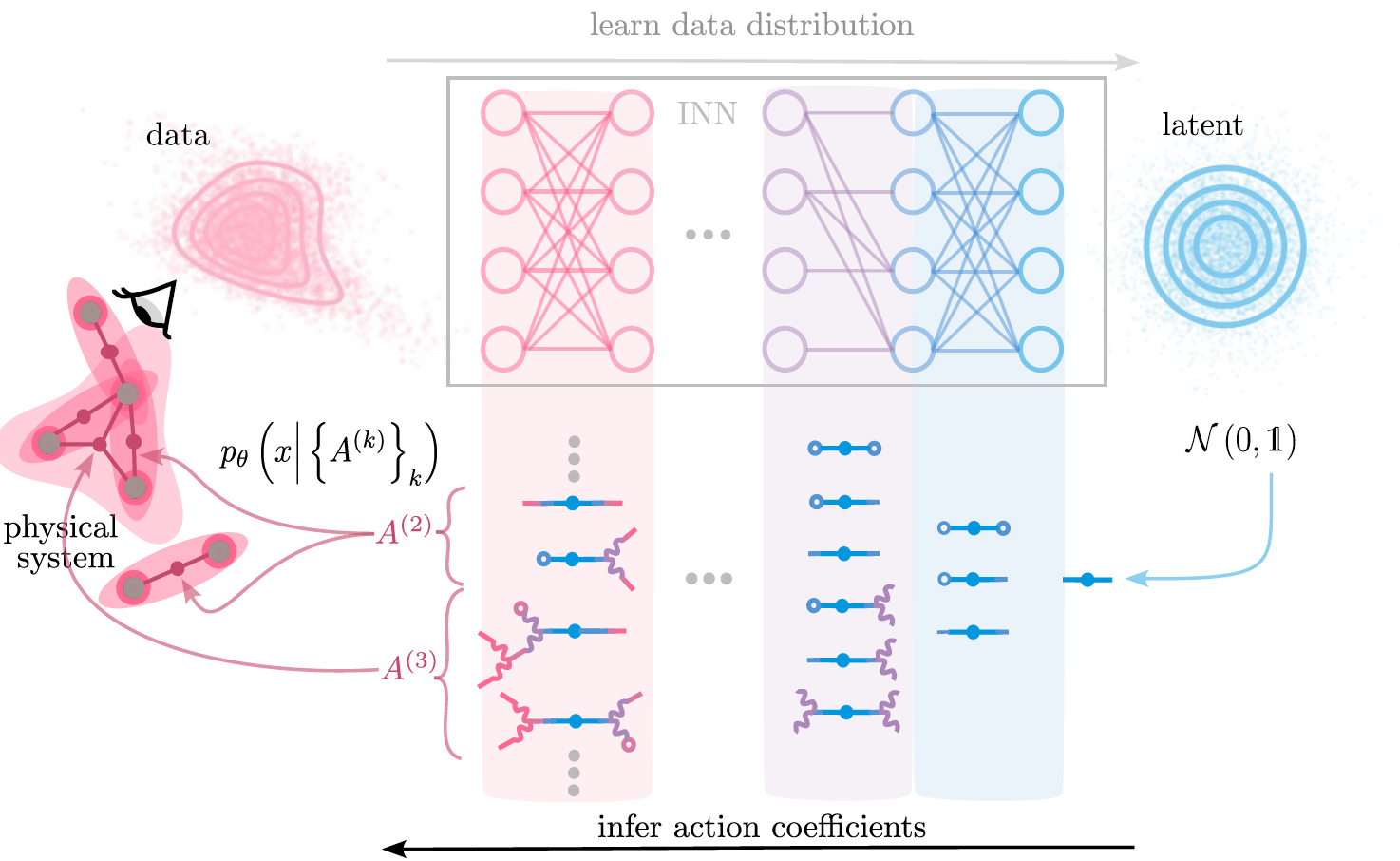

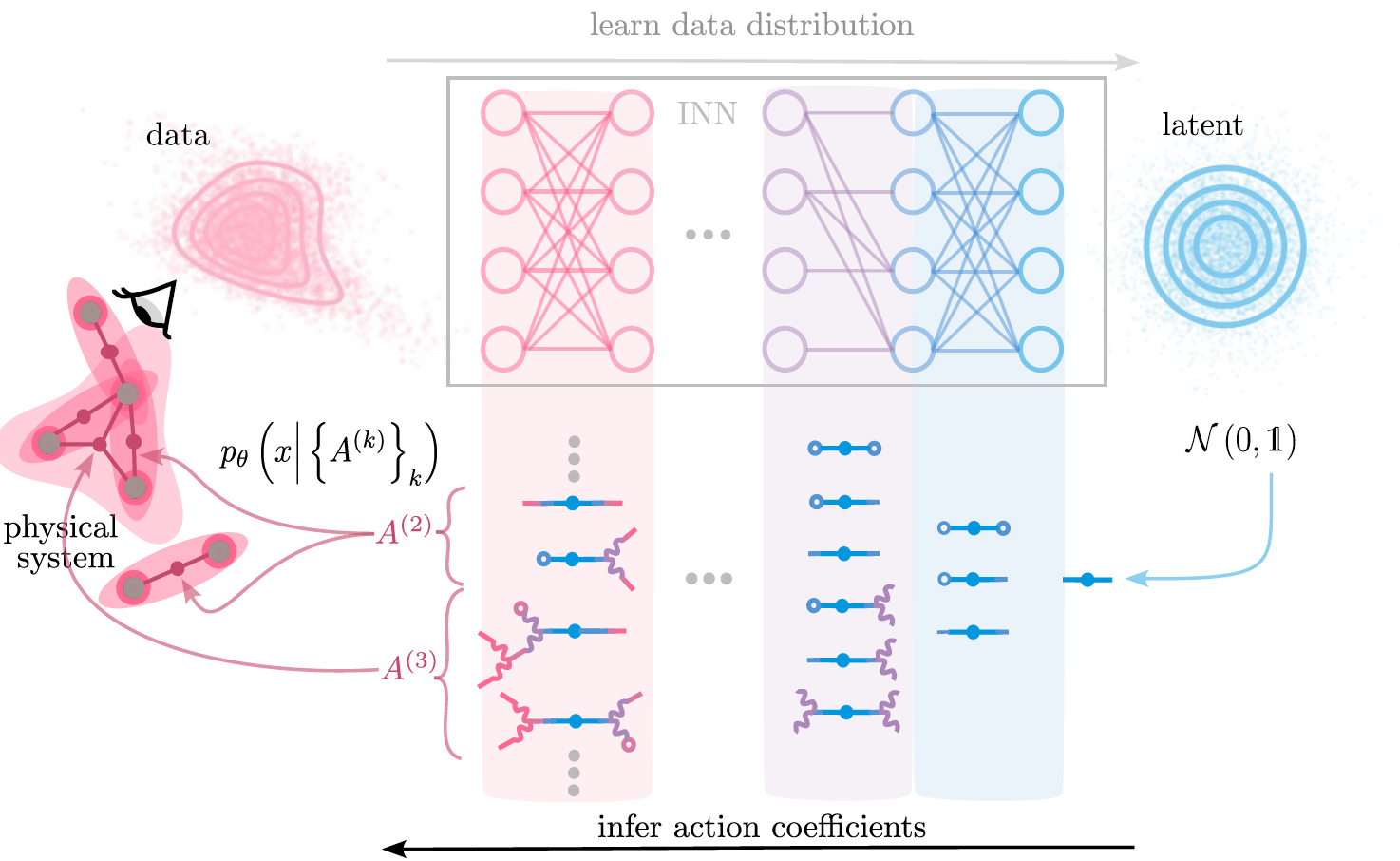

Diffusion models\(^1\)

[1] Ho, Jonathan, Ajay Jain, and Pieter Abbeel. ‘Denoising Diffusion Probabilistic Models’, 2020

Reverse an iterative noising process by predicting the noise \( \epsilon_t = \epsilon_{\theta}(x_t,t) \) at each step
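To make the noise-prediction step concrete, here is a minimal NumPy sketch of the training objective from [1]. It uses the closed-form forward noising \( x_t = \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon \) and the squared error between the true noise \( \epsilon \) and the prediction \( \epsilon_\theta(x_t,t) \). The function names, the toy data and the zero "model" are hypothetical placeholders. The linear schedule from \( \beta_1 = 10^{-4} \) to \( \beta_T = 0.02 \) with \( T = 1000 \) follows [1].

```python
import numpy as np

def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    # Linear variance schedule beta_1, ..., beta_T (the values used in [1]).
    return np.linspace(beta_start, beta_end, T)

def add_noise(x0, t, alpha_bar, rng):
    # Closed-form forward process q(x_t | x_0):
    #   x_t = sqrt(abar_t) * x_0 + sqrt(1 - abar_t) * eps,  eps ~ N(0, Id)
    a = alpha_bar[t][:, None]                # one noise level per example
    eps = rng.standard_normal(x0.shape)
    return np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps, eps

def denoising_loss(eps_model, x0, alpha_bar, rng):
    # Simplified objective: batch mean of ||eps - eps_theta(x_t, t)||^2,
    # with t drawn uniformly for each example.
    t = rng.integers(len(alpha_bar), size=x0.shape[0])
    x_t, eps = add_noise(x0, t, alpha_bar, rng)
    return np.mean(np.sum((eps - eps_model(x_t, t)) ** 2, axis=1))

betas = linear_beta_schedule()
alpha_bar = np.cumprod(1.0 - betas)          # abar_t = prod_{s <= t} (1 - beta_s)

rng = np.random.default_rng(0)
x0 = rng.standard_normal((256, 8))           # hypothetical toy data, d = 8

def zero_model(x_t, t):
    # Hypothetical placeholder network that always predicts zero noise;
    # its expected loss equals d = 8.
    return np.zeros_like(x_t)

print("loss of the zero predictor:", denoising_loss(zero_model, x0, alpha_bar, rng))
```

A trained network would replace `zero_model`; generation then runs the learned reverse process from \( x_T \sim \mathcal{N}(0, \text{Id}) \) back towards \( x_0 \).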

Diffusion models\(^1\) first memorize, then generalize\(^2\)

[2] Kadkhodaie, Z., et al. ‘Generalization in Diffusion Models Arises from Geometry-Adaptive Harmonic Representations’, April 2024

\(\rightarrow\) Empirically, generalization occurs when the number of training examples \(N\) is "large enough"

How much data do diffusion models need?

\(\rightarrow\) Fully tractable model: Linear diffusion models

\(\rightarrow\) Empirically, generalization occurs around \(N \sim d\) training examples, where \(d\) is the data dimension

Assume the data are distributed as \(\rho = \mathcal{N} \left( \mu,\Sigma \right) \) in \(d\) dimensions

Linear models learn: \(\rho_{N} \approx \mathcal{N} \left( \mu_0,\Sigma_0 +c\,\text{Id}\right) \), with a constant \(c > 0\)

where \( \mu_0,\Sigma_0 \) are the empirical mean and covariance of the \(N\) training samples, which differ from \( \mu, \Sigma \)
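A minimal sketch of this setup follows. It assumes a hypothetical diagonal \( \Sigma \) with decaying spectrum, \( d = 50 \), \( N = 20 \), \( c = 0.01 \), and NumPy's default \( 1/(N-1) \) normalization for the covariance, which the slides do not specify. The code estimates \( \mu_0, \Sigma_0 \) from \(N\) samples and then samples from \( \mathcal{N}(\mu_0, \Sigma_0 + c\,\text{Id}) \). With \( N < d \), \( \Sigma_0 \) alone is singular, and the shift \( c\,\text{Id} \) keeps the learned covariance invertible.

```python
import numpy as np

rng = np.random.default_rng(0)
d, N, c = 50, 20, 1e-2                       # hypothetical sizes, here N < d

# True data distribution rho = N(mu, Sigma); the decaying spectrum is an assumption.
mu = np.zeros(d)
Sigma = np.diag(1.0 / np.arange(1, d + 1))

# N training samples and their empirical mean and covariance.
X = rng.multivariate_normal(mu, Sigma, size=N)
mu0 = X.mean(axis=0)
Sigma0 = np.cov(X, rowvar=False)             # (d, d), normalized by N - 1

# Distribution learned by the linear model: rho_N = N(mu_0, Sigma_0 + c Id).
Sigma_N = Sigma0 + c * np.eye(d)
samples = rng.multivariate_normal(mu0, Sigma_N, size=1000)

print("||mu_0 - mu||    =", np.linalg.norm(mu0 - mu))
print("rank(Sigma_0)    =", np.linalg.matrix_rank(Sigma0), "out of d =", d)
print("min eig(Sigma_N) =", np.linalg.eigvalsh(Sigma_N).min())
```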

How large should \(N\) be?

test loss \( \sim \text{Tr}\left[ \left( \Sigma -\Sigma_0 \right) \left(\Sigma_0 + c\,\text{Id} \right)^{-2} \right] + \text{const.} \)
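The test-loss expression can be evaluated directly. The sketch below drops the additive constant and any prefactor hidden in "\( \sim \)", and sweeps \(N\) using nested training sets. The helper `linear_test_loss`, the spectrum \( \Sigma = \text{diag}(1/k) \), \( d = 50 \) and \( c = 0.1 \) are illustrative assumptions, not the authors' settings.

```python
import numpy as np

def linear_test_loss(Sigma, Sigma0, c):
    # Slide formula without the additive constant:
    #   Tr[(Sigma - Sigma_0) (Sigma_0 + c Id)^{-2}]
    A = np.linalg.inv(Sigma0 + c * np.eye(Sigma.shape[0]))
    return np.trace((Sigma - Sigma0) @ A @ A)

rng = np.random.default_rng(0)
d, c = 50, 0.1                               # hypothetical dimension and constant c
Sigma = np.diag(1.0 / np.arange(1, d + 1))   # hypothetical true covariance
X_all = rng.multivariate_normal(np.zeros(d), Sigma, size=10 * d)

losses = {}
for N in (10, 25, 50, 100, 250, 500):
    Sigma0 = np.cov(X_all[:N], rowvar=False)  # empirical covariance of the first N samples
    losses[N] = linear_test_loss(Sigma, Sigma0, c)
    print(f"N = {N:4d}   N/d = {N / d:4.1f}   loss - const = {losses[N]:10.2f}")
```

If \( \Sigma_0 = \Sigma \) exactly, the expression vanishes, so the remaining value measures how far the empirical covariance is from the true one, weighted by \( (\Sigma_0 + c\,\text{Id})^{-2} \).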

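When \(N < d\), the empirical covariance \( \Sigma_0 \) is rank-deficient:

there are at least \(d-N\) directions \( e_{\nu} \) with \( \Sigma_0 e_{\nu} = 0\)

\(\Rightarrow \) test loss \( \gtrsim \sum_{\nu} \frac{ \Sigma_{\nu,\nu}}{c^2} \), where \( \Sigma_{\nu,\nu} = e_{\nu}^{\top} \Sigma e_{\nu} \) is the true variance along \( e_{\nu} \)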

\(\rightarrow\) \(N\) must be large enough to "fill up" all relevant directions in \( \Sigma_0 \), i.e. those with large \( \Sigma_{\nu,\nu} \)
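The null-direction argument can be checked numerically. This sketch assumes a hypothetical setup (\( d = 50 \), \( N = 20 \), \( c = 0.1 \), \( \Sigma = \text{diag}(1/k) \)). It finds the eigenvectors of \( \Sigma_0 \) with numerically zero eigenvalue, using a relative threshold of `1e-10` that is itself an assumption, sums \( \Sigma_{\nu,\nu}/c^2 \) over them, and compares the result with the full trace expression. Because the empirical covariance subtracts the empirical mean, its rank is at most \(N-1\), so one typically finds \(d-N+1\) null directions.

```python
import numpy as np

rng = np.random.default_rng(1)
d, N, c = 50, 20, 0.1                        # hypothetical sizes, N < d
Sigma = np.diag(1.0 / np.arange(1, d + 1))   # hypothetical true covariance
X = rng.multivariate_normal(np.zeros(d), Sigma, size=N)
Sigma0 = np.cov(X, rowvar=False)             # empirical covariance, rank <= N - 1

# Directions e_nu with Sigma_0 e_nu = 0: eigenvectors with (numerically) zero eigenvalue.
evals, evecs = np.linalg.eigh(Sigma0)
null = evals < 1e-10 * evals.max()
E_null = evecs[:, null]

# True variance along each null direction: Sigma_{nu,nu} = e_nu^T Sigma e_nu.
Sigma_nunu = np.einsum("ik,ij,jk->k", E_null, Sigma, E_null)
bound = Sigma_nunu.sum() / c**2

# Full expression Tr[(Sigma - Sigma_0)(Sigma_0 + c Id)^{-2}] for comparison.
A = np.linalg.inv(Sigma0 + c * np.eye(d))
full = np.trace((Sigma - Sigma0) @ A @ A)

print(f"null directions: {null.sum()} (d - N = {d - N})")
print(f"sum over null directions: {bound:.1f}   full trace: {full:.1f}")
```

In the eigenbasis of \( \Sigma_0 \), each non-null direction with eigenvalue \( \lambda_k \) contributes \( (\Sigma_{kk} - \lambda_k)/(\lambda_k + c)^2 \ge -1/(4c) \). The null-direction sum, which scales as \( 1/c^2 \), therefore dominates when \(c\) is small.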

Beyond the test loss?

test loss \( = \) … \( +\, \text{const.} \)

\( \rightarrow \) the test loss measures the difference from the best model

Kullback-Leibler divergence

\( D_{\text{KL}} (\rho_N \,\|\, \rho) \sim \ln \frac{\left| \Sigma \right|}{\left| \Sigma_0 + c\, \text{Id} \right|} \), where \( |\cdot| \) denotes the determinant

\( \rightarrow \) distributions align when relevant directions in \(\Sigma \) are also present in \( \Sigma_0\)

\( \rightarrow \) this happens for \( N \sim d\)
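The slide keeps only the log-determinant term of the divergence. For reference, the full closed form for two Gaussians with \( S = \Sigma_0 + c\,\text{Id} \) is \( D_{\text{KL}}(\mathcal{N}(\mu_0, S) \,\|\, \mathcal{N}(\mu, \Sigma)) = \tfrac{1}{2}\left[ \text{Tr}(\Sigma^{-1} S) + (\mu - \mu_0)^{\top} \Sigma^{-1} (\mu - \mu_0) - d + \ln \frac{|\Sigma|}{|S|} \right] \). The sketch below evaluates both the log-determinant term and the full divergence while sweeping \( N/d \). The helper `gaussian_kl`, the spectrum \( \Sigma = \text{diag}(1/k) \), \( d = 20 \) and \( c = 0.01 \) are hypothetical choices.

```python
import numpy as np

def gaussian_kl(mu_p, S_p, mu_q, S_q):
    # Closed form of D_KL(N(mu_p, S_p) || N(mu_q, S_q)).
    d = mu_p.shape[0]
    S_q_inv = np.linalg.inv(S_q)
    diff = mu_q - mu_p
    logdet_p = np.linalg.slogdet(S_p)[1]
    logdet_q = np.linalg.slogdet(S_q)[1]
    return 0.5 * (np.trace(S_q_inv @ S_p) + diff @ S_q_inv @ diff - d
                  + logdet_q - logdet_p)

rng = np.random.default_rng(0)
d, c = 20, 1e-2                              # hypothetical dimension and constant c
mu = np.zeros(d)
Sigma = np.diag(1.0 / np.arange(1, d + 1))   # hypothetical true covariance
X_all = rng.multivariate_normal(mu, Sigma, size=10 * d)

kl = {}
for N in (d // 4, d // 2, d, 2 * d, 5 * d, 10 * d):
    X = X_all[:N]                            # nested training sets
    mu0, Sigma0 = X.mean(axis=0), np.cov(X, rowvar=False)
    S_N = Sigma0 + c * np.eye(d)             # covariance of rho_N
    # Log-determinant term from the slide: ln |Sigma| / |Sigma_0 + c Id|
    logdet_term = np.linalg.slogdet(Sigma)[1] - np.linalg.slogdet(S_N)[1]
    kl[N] = gaussian_kl(mu0, S_N, mu, Sigma)  # D_KL(rho_N || rho)
    print(f"N/d = {N / d:5.2f}   log-det term = {logdet_term:8.2f}   KL = {kl[N]:8.2f}")
```

For \( N < d \), every null direction of \( \Sigma_0 \) contributes a factor \(c\) to \( |\Sigma_0 + c\,\text{Id}| \), so the log-determinant term grows like \( \ln(1/c) \) per missing direction until \(N\) approaches \(d\).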

Stay tuned for...

...the spectrum of \(\Sigma\)?

...regularization?

...early stopping?

...the difference between linear and non-linear diffusion models?

Diffusion models blitz talks

By merger