Generalization Dynamics of Linear Diffusion Models

Claudia Merger, Sebastian Goldt

first Workshop on Principles of Generative Models

07.12.2025

What are diffusion models?

Task: Given some data \( \mathcal{D} \) from an unknown distribution \( p \)

Generate \( x \sim p \)

"happy data scientist"

"summer in Trieste"

"intelligent bamboo"

Sohl-Dickstein, et al., ‘Deep Unsupervised Learning Using Nonequilibrium Thermodynamics’.

Song, Yang, and Stefano Ermon. ‘Generative Modeling by Estimating Gradients of the Data Distribution’,2020

Ho, et al. ‘Denoising Diffusion Probabilistic Models’, 2020

Diffusion models reverse the noising process.

train model \( \epsilon_{\theta} \) to predict noise:

\(L= \sum_t \bigg\langle||\epsilon -\epsilon_{\theta} \left(x_t,t\right) ||^2 \bigg\rangle_{\epsilon, x_0} \)

How do diffusion models learn to sample?

Sohl-Dickstein, et al., ‘Deep Unsupervised Learning Using Nonequilibrium Thermodynamics’.

Song, Yang, and Stefano Ermon. ‘Generative Modeling by Estimating Gradients of the Data Distribution’,2020

Ho, et al. ‘Denoising Diffusion Probabilistic Models’, 2020

How much data, \(N\)?

Conventionally: \( N\sim \exp(\alpha d) \)

Dynamic regularization (sampling) and early stopping (training) bias

"Generalization"

"Memorization"

Kadkhodaie, Z. et. al. Generalization in Diffusion Models Arises from Geometry-Adaptive Harmonic Representations’. April 2024.

= Global minimum of the training loss

increasing \(N\)

Biroli et al. 2024. Nature Communications,

George et. al. 2025. arXiv.2502.04339.

George et al. 2025 arXiv.2502.00336.

Bonnaire et. al. 2025. arXiv.2505.17638.

Biroli, Mezard, 2023, Journal of Statistical Mechanics, Theory and Experiment

How much data, \(N\)?

Conventionally: \( N\sim \exp(\alpha d) \)

data structure?

"Generalization"

"Memorization"

= Global minimum of the training loss

increasing \(N\)

Can data structure help us learn?

Eigenvalues of \( \Sigma \): \(\lambda_{\nu}\propto \nu^{-k}, \quad \text{Tr}\Sigma=const. \)

\(\rightarrow \, k\) controls hierachy

Population loss: Wang & Pehlevan, NeurIPS 2025

\(\rightarrow \) What happens at finite \(N\)?

Can data structure help us learn?

Data drawn from

\(\rho = \mathcal{N} \left( \mu,\Sigma \right) \)

\( \mu_0,\Sigma_0 = \) empirical mean and covariance of training data \( \neq \mu, \Sigma \)

\(\rightarrow\) Fully tractable model: Linear diffusion models*

Linear models learn:

\(\rho_{N} \approx \mathcal{N} \left( \mu_0,\Sigma_0 +c\text{Id}\right) \)

\(L= \sum_t \bigg\langle||\epsilon -\epsilon_{\theta} \left(x_t,t\right) ||^2 \bigg\rangle_{\epsilon, x_0} \)

\(\epsilon_{\theta} \left(x_t,t\right)=W_t(x_t+b_t) \)

Eigenvalues of \( \Sigma \): \(\lambda_{\nu}\propto \nu^{-k}, \quad \text{Tr}\Sigma=const. \)

Linear models: Generalization with \(N\)

test loss \( \sim \text{Tr}\frac{ \Sigma -\Sigma_0}{\left(\Sigma_0 + c\text{Id} \right)^2} +const. \)

find \(d-N\) directions \(\nu\) where \( \Sigma_0 e_{\nu} = 0\)

\(\Rightarrow \) test loss \( \gtrsim \sum_{\nu} \frac{ \Sigma_{\nu,\nu}}{c^2} \)

\( \rightarrow \) nullspace of \( \Sigma_0 \) drives overfitting

Kullback-Leibler divergence

\( \text{DKL} (\rho_N| \rho) \sim \ln \frac{\left| \Sigma \right|}{\left| \Sigma_0 + c \text{Id} \right|} \)

Linear models: Generalization with \(N\)

\( \rightarrow \) nullspace of \( \Sigma_0 \) drives overfitting

\( \rightarrow \) Stronger hierarchy in data improves performance at fixed \(N\)

test loss \( \sim \text{Tr}\frac{ \Sigma -\Sigma_0}{\left(\Sigma_0 + c\text{Id} \right)^2} +const. \)

Impact of data structure

Eigenvalues of \( \Sigma \): \(\lambda_{\nu}\propto \nu^{-k}, \quad \text{Tr}\Sigma=const. \)

Kullback-Leibler divergence \( \text{DKL} (\rho_N| \rho) \sim \ln \frac{\left| \Sigma \right|}{\left| \Sigma_0 + c \text{Id} \right|} \)

Learning dynamics

Weights exponentially relax with rate \( \propto \bar{\alpha_{t}}\lambda_{\nu}^{0}+1-\bar{\alpha}_{t}\)

\( \lambda^0_{\nu} =0 \rightarrow \) slower learning

More hierarchical \( \Sigma \rightarrow \) slower learning, less severe overfitting

regularization \(c\):

minimal scale of variability

Linear models learn:

\(\rho_{N} \approx \mathcal{N} \left( \mu_0,\Sigma_0 +c\text{Id}\right) \)

\(\rightarrow \) mitigates overfitting

Conclusion

Assumption: Linear models & Gaussian Data

Two regimes:

\( N <d \): Nullspace of \(\Sigma_0 \) drives overfitting, data hierarchy is beneficial

\(N>d\): \(\text{DKL}\) decays with \(d/(4N)\) irrespective of structure

Effective measures to prevent overfitting when, \( N \ll d \)?

\(\rightarrow \) Early stopping & regularization

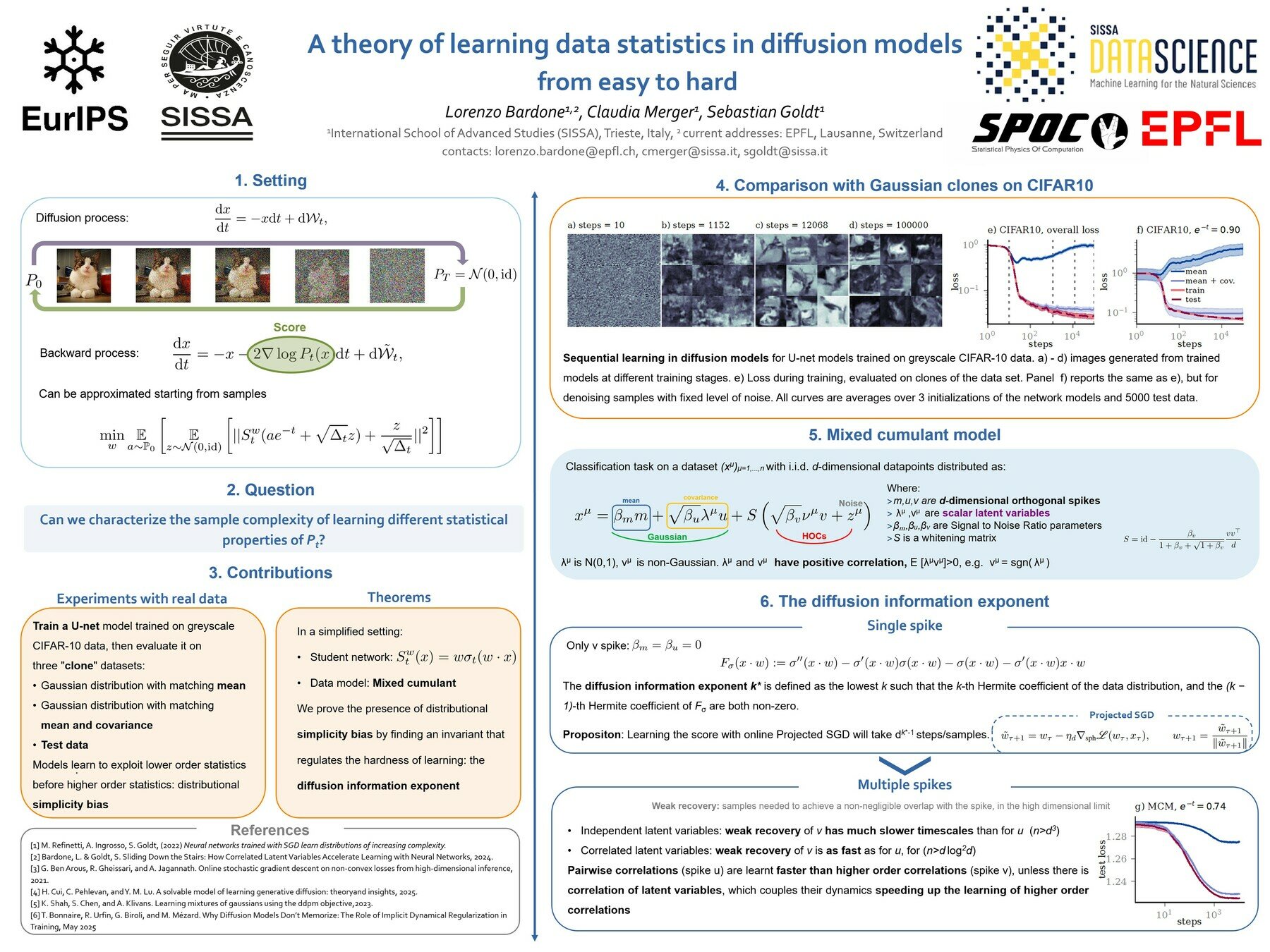

Higher order statistics? Check out Lorenzo's poster!

Learning dynamics?

Comparison to reference

Non-linear vs. Linear

CIFAR-10

CelebA

Images have a highly structured covariance

Not enough to span the space of faces!

naive solution: \( \frac{||\Sigma - \Sigma_0||_F^2}{||\Sigma||_F^2}\rightarrow \frac{d_{\text{effective}}}{N}\)

CelebA: \( d_{\text{effective}}\approx 7 \)

MNIST: \( d_{\text{effective}}\approx 25 \)

EURIPS talk 2025

By merger

EURIPS talk 2025

- 73