Data Science

Theory of Neural Networks

Claudia Merger

13.12.2024

Generative Models

Example use cases:

- image generators

- physical observables (replacing costly scientific simulations)

Task: Given some data \( \mathcal{D} \) from an unknown distribution \( p \)

Generate \( x \sim p \)

Task is solved by learning \( \, p_{\theta} \approx p\)
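Here is a minimal sketch of the task. It uses a multivariate Gaussian as the model family, which is an illustrative stand-in and not one of the networks discussed in this talk. \( \theta \) = (mean, covariance) is fitted to \( \mathcal{D} \) by maximum likelihood, and new samples are then drawn from \( p_{\theta} \). The "unknown" \( p \) used to create \( \mathcal{D} \) is hypothetical.

```python
import numpy as np


def fit_gaussian(data: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Maximum-likelihood fit of theta = (mean, covariance) to data of shape (N, d)."""
    mean = data.mean(axis=0)
    # bias=True gives the maximum-likelihood (1/N) covariance estimate
    cov = np.cov(data, rowvar=False, bias=True)
    return mean, cov


def sample_gaussian(mean: np.ndarray, cov: np.ndarray, n: int,
                    rng: np.random.Generator) -> np.ndarray:
    """Generate n samples x ~ p_theta."""
    return rng.multivariate_normal(mean, cov, size=n)


rng = np.random.default_rng(0)
# Hypothetical "unknown" distribution p, used only to create the data set D
true_mean = np.array([1.0, -2.0])
true_cov = np.array([[2.0, 0.8], [0.8, 1.0]])
data = rng.multivariate_normal(true_mean, true_cov, size=5000)

# Learn p_theta ~ p, then generate new x ~ p_theta
mean_hat, cov_hat = fit_gaussian(data)
new_samples = sample_gaussian(mean_hat, cov_hat, 1000, rng)
print(mean_hat.round(2), new_samples.shape)
```

The generative networks in this talk replace the Gaussian with a far more flexible \( p_{\theta} \), but the two steps (fit to \( \mathcal{D} \), then sample) stay the same.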

"happy data scientist"

"summer in Trieste"

"intelligent bamboo"

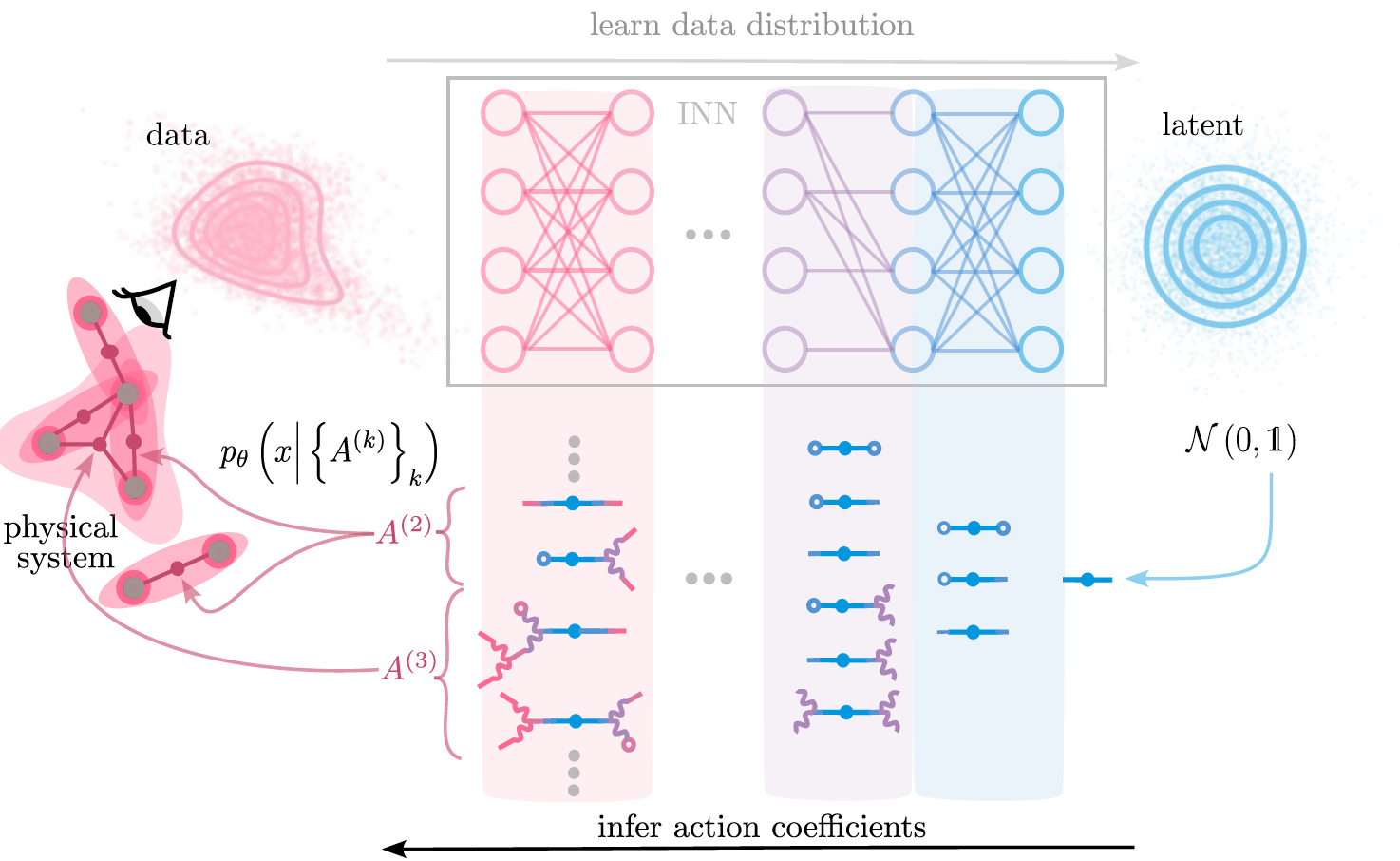

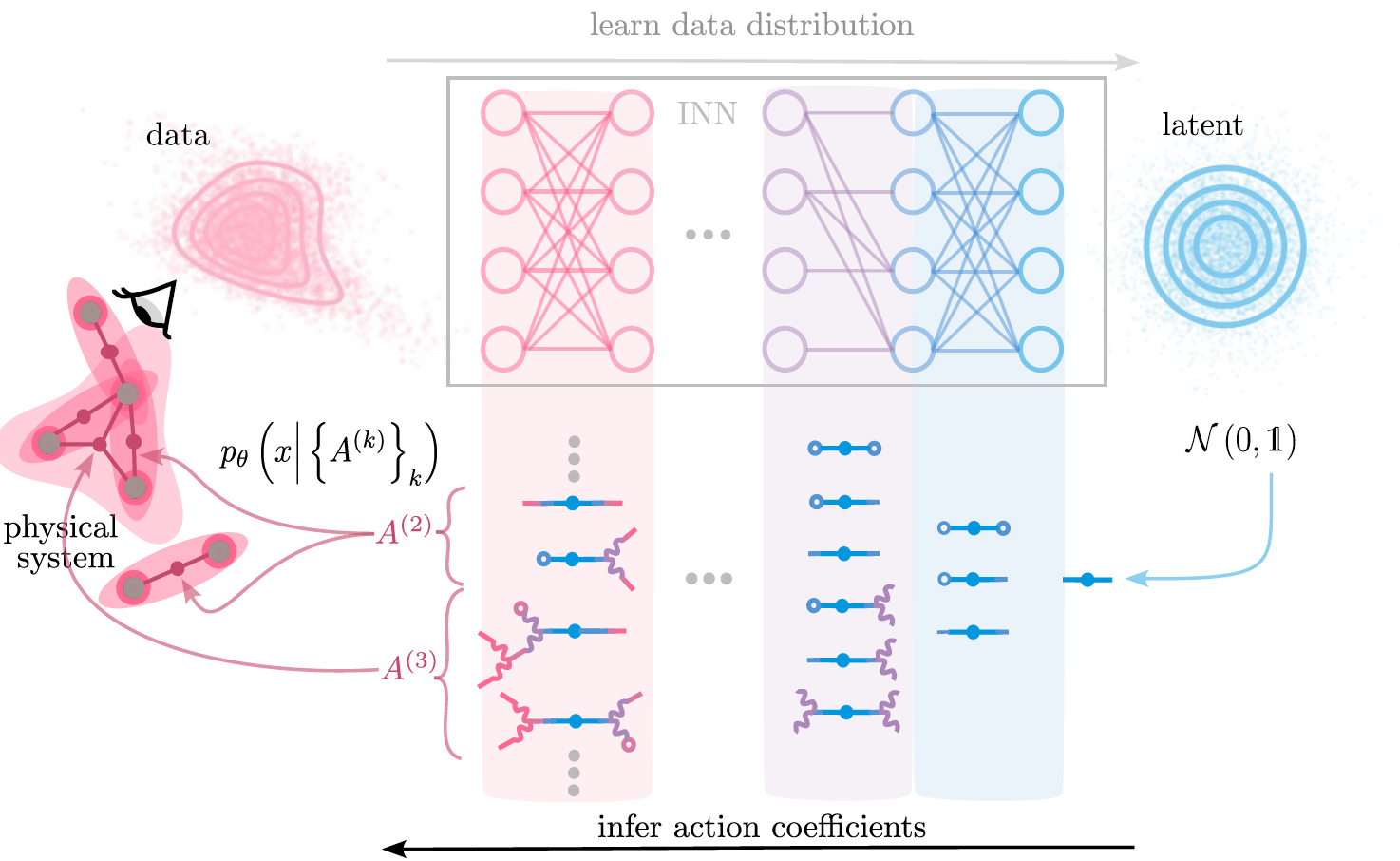

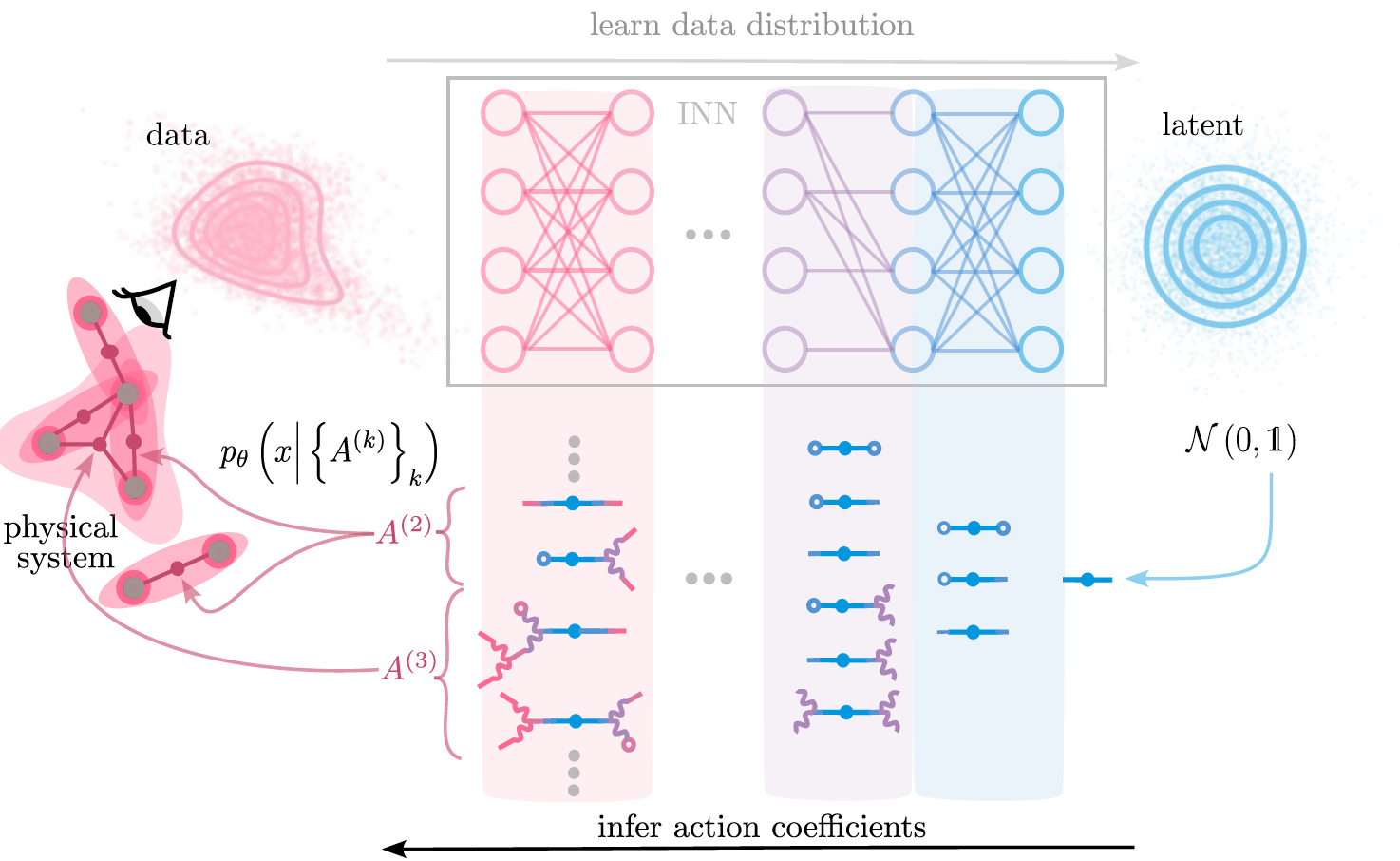

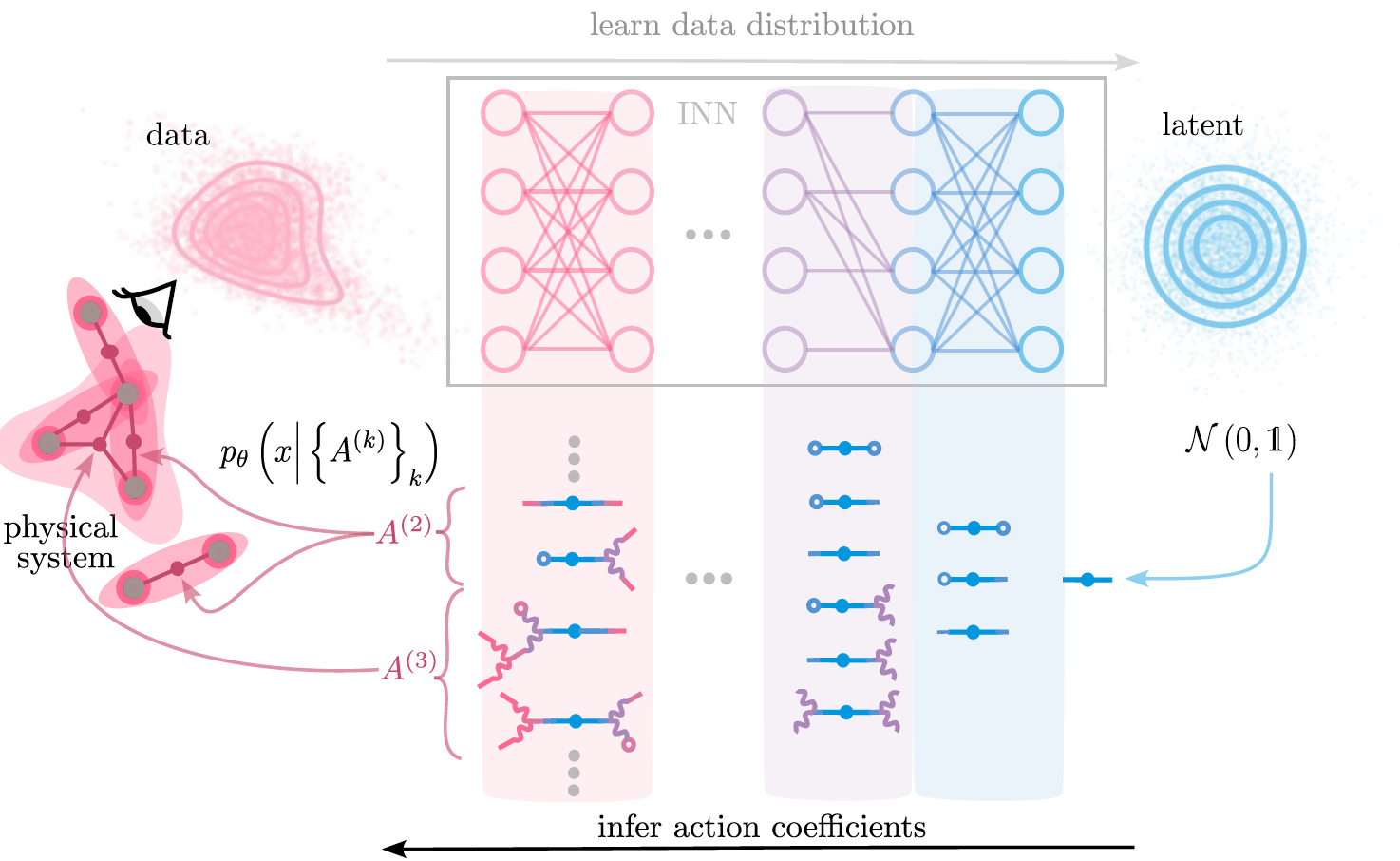

Example: Invertible neural networks

NICE (Dinh et al., 2015), RealNVP (Dinh et al., 2017), GLOW (Kingma and Dhariwal, 2018)
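NICE and RealNVP are built from coupling layers. One half of the input passes through unchanged and parameterizes an invertible transformation of the other half, which makes both the inverse and the log-determinant of the Jacobian cheap to compute. Below is a minimal NumPy sketch of a RealNVP-style affine coupling layer. The small random networks for the scale \( s \) and shift \( t \) are hypothetical placeholders for trained networks. The `tanh` bound on \( s \) is a design choice made here for numerical stability and is not taken from the papers.

```python
import numpy as np


class AffineCoupling:
    """RealNVP-style affine coupling layer: y1 = x1, y2 = x2 * exp(s(x1)) + t(x1)."""

    def __init__(self, d: int, hidden: int, rng: np.random.Generator):
        self.k = d // 2
        # Hypothetical untrained one-hidden-layer networks for scale s and shift t
        self.W1 = rng.normal(scale=0.5, size=(self.k, hidden))
        self.Ws = rng.normal(scale=0.1, size=(hidden, d - self.k))
        self.Wt = rng.normal(scale=0.5, size=(hidden, d - self.k))

    def _s_t(self, x1):
        h = np.tanh(x1 @ self.W1)
        return np.tanh(h @ self.Ws), h @ self.Wt  # bounded log-scale, free shift

    def forward(self, x):
        x1, x2 = x[:, :self.k], x[:, self.k:]
        s, t = self._s_t(x1)
        y2 = x2 * np.exp(s) + t
        log_det = s.sum(axis=1)  # log|det dy/dx|, one value per sample
        return np.concatenate([x1, y2], axis=1), log_det

    def inverse(self, y):
        y1, y2 = y[:, :self.k], y[:, self.k:]
        s, t = self._s_t(y1)  # y1 == x1, so s and t are recomputed exactly
        x2 = (y2 - t) * np.exp(-s)
        return np.concatenate([y1, x2], axis=1)


rng = np.random.default_rng(1)
layer = AffineCoupling(d=4, hidden=8, rng=rng)
x = rng.normal(size=(5, 4))
y, log_det = layer.forward(x)
x_rec = layer.inverse(y)  # recovers x up to floating-point error
```

In a full flow, several such layers are stacked with the roles of the two halves alternating, and their log-determinants add up.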

Learning through simplification

What do Invertible Neural Networks learn?

generate samples

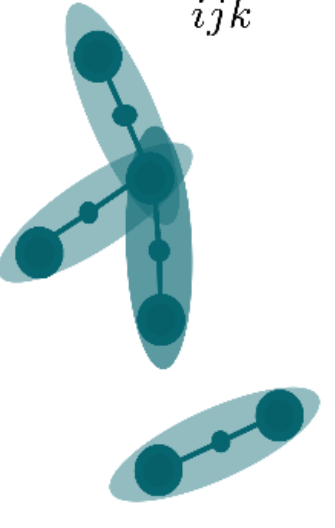

Idea: describe system via interactions between its constituents
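One common way to make "interactions" precise is to expand the log-density of \( x \in \mathbb{R}^d \) in powers of its components. This is a sketch and not necessarily the exact convention of the cited work. The coefficient tensors \( A^{(k)} \) then act as \( k \)-point interactions between the constituents \( x_{i_1}, \dots, x_{i_k} \):

```latex
\ln p(x) = A^{(0)} + \sum_{i} A^{(1)}_{i} x_i
  + \frac{1}{2!} \sum_{i,j} A^{(2)}_{ij} x_i x_j
  + \frac{1}{3!} \sum_{i,j,k} A^{(3)}_{ijk} x_i x_j x_k + \dots,
\qquad
A^{(k)}_{i_1 \dots i_k}
  = \left. \frac{\partial^k \ln p(x)}{\partial x_{i_1} \cdots \partial x_{i_k}} \right|_{x=0}
```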


Infer interactions from trained neural network

Merger, René, Fischer, et al. ‘Learning Interacting Theories from Data’. PRX, 2023
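For an invertible network \( x = f(z) \) with a standard normal latent \( z \), the change-of-variables formula makes \( p_{\theta} \) explicit: \( \ln p_{\theta}(x) = \ln \mathcal{N}(f^{-1}(x); 0, I) + \ln \lvert \det \partial f^{-1}(x)/\partial x \rvert \). Interaction coefficients can then be read off as derivatives of \( \ln p_{\theta} \). The sketch below illustrates this under simplifying assumptions and is not the procedure of the cited paper. It computes the pairwise (second-order) coefficients by finite differences for a hypothetical affine "network" \( f(z) = Mz + b \), because for that case the exact answer \( -(MM^{\top})^{-1} \) is known.

```python
import numpy as np


def log_p_theta(x, f_inv, log_det_inv):
    """Change of variables: ln p(x) = ln N(f^{-1}(x); 0, I) + ln|det d f^{-1}/dx|."""
    z = f_inv(x)
    d = z.size
    log_normal = -0.5 * z @ z - 0.5 * d * np.log(2 * np.pi)
    return log_normal + log_det_inv(x)


def pairwise_interactions(log_p, x0, eps=1e-3):
    """A2_ij = d^2 ln p / dx_i dx_j at x0, via central finite differences."""
    d = x0.size
    A2 = np.zeros((d, d))
    step = np.eye(d) * eps
    for i in range(d):
        for j in range(d):
            A2[i, j] = (log_p(x0 + step[i] + step[j]) - log_p(x0 + step[i] - step[j])
                        - log_p(x0 - step[i] + step[j]) + log_p(x0 - step[i] - step[j])
                        ) / (4 * eps**2)
    return A2


# Hypothetical "trained" invertible network: an affine map x = M z + b
rng = np.random.default_rng(2)
M = rng.normal(size=(3, 3)) + 3 * np.eye(3)
b = rng.normal(size=3)
M_inv = np.linalg.inv(M)


def f_inv(x):
    return M_inv @ (x - b)


def log_det_inv(x):
    # The Jacobian of f^{-1} is M^{-1}, constant in x
    return -np.log(abs(np.linalg.det(M)))


def log_p(x):
    return log_p_theta(x, f_inv, log_det_inv)


A2 = pairwise_interactions(log_p, x0=np.zeros(3))
print(np.allclose(A2, -np.linalg.inv(M @ M.T), atol=1e-4))
```

For a nonlinear network, the same derivatives (including third and higher orders) generally depend on the expansion point and are no longer fixed by \( M \) alone.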

Example 2: Diffusion

Ho, Jonathan, Ajay Jain, and Pieter Abbeel. ‘Denoising Diffusion Probabilistic Models’. 2020


Diffusion models reverse the noising process by predicting the noise vector.
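In the DDPM formulation of Ho et al., a clean sample \( x_0 \) is noised in closed form as \( x_t = \sqrt{\bar\alpha_t}\, x_0 + \sqrt{1-\bar\alpha_t}\, \epsilon \) with \( \epsilon \sim \mathcal{N}(0, I) \). A network \( \epsilon_{\theta}(x_t, t) \) is then trained to predict \( \epsilon \). The NumPy sketch below shows the noising step, the simplified mean-squared-error loss, and one reverse (denoising) step. The linear \( \beta \) schedule from \( 10^{-4} \) to \( 0.02 \) with \( T = 1000 \) follows the paper. `eps_model` is a hypothetical placeholder for a trained network.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)   # linear noise schedule
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)      # \bar{alpha}_t, decreasing towards ~0


def q_sample(x0, t, eps):
    """Forward (noising) process: draw x_t ~ q(x_t | x_0) in closed form."""
    return np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps


def loss(eps_model, x0, rng):
    """Simplified DDPM objective: mean of (eps - eps_model(x_t, t))^2 at a random t."""
    t = rng.integers(T)
    eps = rng.standard_normal(x0.shape)
    x_t = q_sample(x0, t, eps)
    return np.mean((eps - eps_model(x_t, t)) ** 2)


def p_sample_step(eps_model, x_t, t, rng):
    """One reverse step x_t -> x_{t-1} from the predicted noise, with sigma_t^2 = beta_t."""
    eps_hat = eps_model(x_t, t)
    mean = (x_t - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_hat) / np.sqrt(alphas[t])
    if t == 0:
        return mean  # no noise is added at the final step
    return mean + np.sqrt(betas[t]) * rng.standard_normal(x_t.shape)
```

Generation starts from \( x_{T} \sim \mathcal{N}(0, I) \) and applies `p_sample_step` for \( t = T-1, \dots, 0 \). Every step uses only the predicted noise vector.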

How much data do diffusion models need?

Can we predict when generalization happens?

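split data into disjoint subsets \( \mathcal{D}_A, \mathcal{D}_B \)

- use \( \mathcal{D}_A \) to train \( \epsilon_A \)

- use \( \mathcal{D}_B \) to train \( \epsilon_B \)

- denoise the same initial noise with both models: if \( \epsilon_A \) and \( \epsilon_B \) generate nearly identical samples, the models generalize; if each reproduces its own training data, they memorize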

Kadkhodaie, Z., et al. ‘Generalization in Diffusion Models Arises from Geometry-Adaptive Harmonic Representations’. April 2024.
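The split-data test can be mimicked in a few lines. This sketch rests on one assumption: each "model" is a Gaussian fitted to its own half of the data instead of a trained diffusion network. Both models map the same latent noise \( z \) to a sample via \( x = \mu + L z \), where \( L \) is the Cholesky factor of the fitted covariance. The mean distance between paired samples from models A and B should shrink as the training-set size \( N \) grows. The ground-truth \( p \) is hypothetical.

```python
import numpy as np


def fit(data):
    """Hypothetical stand-in model: ML mean and Cholesky factor of the sample covariance."""
    mean = data.mean(axis=0)
    cov = np.cov(data, rowvar=False)
    return mean, np.linalg.cholesky(cov)


def generate(model, z):
    """Deterministic map from shared latent noise z of shape (n, d) to samples."""
    mean, L = model
    return mean + z @ L.T


def split_test(sample_p, N, d, rng, n_gen=500):
    """Train on disjoint sets D_A, D_B of size N each; compare outputs on shared noise."""
    data = sample_p(2 * N, rng)
    perm = rng.permutation(2 * N)
    D_A, D_B = data[perm[:N]], data[perm[N:]]
    model_A, model_B = fit(D_A), fit(D_B)
    z = rng.standard_normal((n_gen, d))  # the same noise is fed to both models
    diff = generate(model_A, z) - generate(model_B, z)
    return np.linalg.norm(diff, axis=1).mean()


d = 5
rng = np.random.default_rng(3)
A = rng.normal(size=(d, d))
true_cov = A @ A.T + np.eye(d)  # hypothetical ground truth p = N(0, true_cov)


def sample_p(n, rng):
    return rng.multivariate_normal(np.zeros(d), true_cov, size=n)


for N in [10, 100, 1000, 10000]:
    print(N, round(split_test(sample_p, N, d, rng), 3))
```

A Gaussian cannot memorize individual training points, so this toy version only shows the convergence side of the test, not the memorization regime seen for diffusion networks trained on few samples.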


Ansatz: Pairwise Interactions in the data?

\( \rightarrow \) covariance

[Figure: \( p \) vs. \( p_{\theta} \); legend: diffusion, prediction]
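Keeping only pairwise interactions amounts to modelling \( p \) as a Gaussian \( \mathcal{N}(\mu, \Sigma) \). Assume the DDPM noising \( x_t = \sqrt{\bar\alpha_t}\, x_0 + \sqrt{1-\bar\alpha_t}\, \epsilon \) from Ho et al.; this is not necessarily the setup behind the plot. Under that assumption, the noised data stay Gaussian, and the mean-squared-error-optimal noise predictor is linear in \( x_t \). It depends on the data only through \( \mu \) and \( \Sigma \):

```latex
x_t \sim \mathcal{N}\!\left(\sqrt{\bar\alpha_t}\,\mu,\; C_t\right),
\qquad C_t = \bar\alpha_t\,\Sigma + (1-\bar\alpha_t)\, I,
\\[4pt]
\epsilon^{*}(x_t, t) = \mathbb{E}[\epsilon \mid x_t]
  = \sqrt{1-\bar\alpha_t}\; C_t^{-1}\left(x_t - \sqrt{\bar\alpha_t}\,\mu\right)
```

The second line follows because \( \epsilon \) and \( x_t \) are jointly Gaussian with \( \mathrm{Cov}(\epsilon, x_t) = \sqrt{1-\bar\alpha_t}\, I \). A covariance-based prediction for the learned model can therefore be written down without training a network, though how closely a trained diffusion model follows it is an empirical question.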

Summary: Understanding Generative Neural Networks

Task: Given some data \( \mathcal{D} \) from an unknown distribution \( p \)

Generate \( x \sim p \)

Task is solved by learning \( \, p_{\theta} \approx p\)

Questions:

- What can we learn from \(p_{\theta} \) about data?

- How close are \( p \) and \( p_{\theta} \)?

[Figure: \( p \) and \( p_{\theta} \)]
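One way to quantify how close \( p \) and \( p_{\theta} \) are is the Kullback-Leibler divergence. Under the pairwise (Gaussian) ansatz it has a closed form:

\( D_{\mathrm{KL}}(p \,\|\, p_{\theta}) = \tfrac{1}{2}\left[\operatorname{tr}(\Sigma_{\theta}^{-1}\Sigma) + (\mu_{\theta}-\mu)^{\top}\Sigma_{\theta}^{-1}(\mu_{\theta}-\mu) - d + \ln\frac{\det\Sigma_{\theta}}{\det\Sigma}\right] \)

The sketch below implements this formula. Treating both distributions as Gaussian is an assumption made for illustration.

```python
import numpy as np


def kl_gaussian(mu_p, cov_p, mu_q, cov_q):
    """D_KL( N(mu_p, cov_p) || N(mu_q, cov_q) ) in nats (Gaussian assumption)."""
    d = mu_p.size
    cov_q_inv = np.linalg.inv(cov_q)
    diff = mu_q - mu_p
    # slogdet is numerically safer than log(det(...)) for larger d
    _, logdet_p = np.linalg.slogdet(cov_p)
    _, logdet_q = np.linalg.slogdet(cov_q)
    return 0.5 * (np.trace(cov_q_inv @ cov_p) + diff @ cov_q_inv @ diff
                  - d + logdet_q - logdet_p)


mu = np.zeros(2)
cov = np.array([[1.0, 0.5], [0.5, 1.0]])
print(kl_gaussian(mu, cov, mu, cov))  # zero up to floating-point error
print(kl_gaussian(np.zeros(1), np.eye(1), np.ones(1), np.eye(1)))  # prints 0.5
```

The divergence is not symmetric, so \( D_{\mathrm{KL}}(p \,\|\, p_{\theta}) \) and \( D_{\mathrm{KL}}(p_{\theta} \,\|\, p) \) generally differ.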

\( \Rightarrow \) Interactions as a language to span model space.

\( \Rightarrow \) Representative of the group's approach to understanding neural networks and learning

Theory of Neural Networks Group

- Neural Architectures

- Learning

- Algorithms

- Data statistics

- computation in biological systems

(and friends)

Physics Day Data Science

By merger
